🤗 Hugging Face • 🤖 ModelScope • ✡️ WiseModel

👋 Join us 💬 WeChat (Chinese) !

[ Back to top ⬆️ ]

## 🎉 News

- Yi-VL-34B and Yi-VL-6B are open-sourced and available to the public. Yi-VL-34B has ranked first among all existing open-source models in the latest benchmarks, including MMMU and CMMMU (based on data available up to January 2024).
- Yi-6B-200K and Yi-34B-200K are open-sourced and available to the public.
- Yi-6B and Yi-34B are open-sourced and available to the public.

## 🎯 Models

Yi models come in multiple sizes and cater to different use cases. You can also fine-tune Yi models to meet your specific requirements.

If you want to deploy Yi models, make sure you meet the [software and hardware requirements](#deployment).

### Chat models

| Model | Download |
|---|---|
| Yi-34B-Chat | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B-Chat) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B-Chat/summary) |
| Yi-34B-Chat-4bits | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B-Chat-4bits) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B-Chat-4bits/summary) |
| Yi-34B-Chat-8bits | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B-Chat-8bits) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B-Chat-8bits/summary) |
| Yi-6B-Chat | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B-Chat) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B-Chat/summary) |
| Yi-6B-Chat-4bits | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B-Chat-4bits) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B-Chat-4bits/summary) |
| Yi-6B-Chat-8bits | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B-Chat-8bits) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B-Chat-8bits/summary) |

- 4-bit series models are quantized by AWQ.
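As a rough illustration of how the table maps to a download, the sketch below builds the Hugging Face repository ID for a listed model and fetches it with `huggingface_hub.snapshot_download`. The `01-ai/<model>` pattern is taken from the links above; the helper names `hf_repo_id` and `download_model` and the `./models` target directory are illustrative assumptions, not part of the Yi repo.

```python
from pathlib import Path

# Model names as listed in the chat models table.
CHAT_MODELS = (
    "Yi-34B-Chat", "Yi-34B-Chat-4bits", "Yi-34B-Chat-8bits",
    "Yi-6B-Chat", "Yi-6B-Chat-4bits", "Yi-6B-Chat-8bits",
)


def hf_repo_id(model_name: str) -> str:
    """Return the Hugging Face repo ID for a listed model (hypothetical helper)."""
    if model_name not in CHAT_MODELS:
        raise ValueError(f"unknown chat model: {model_name}")
    return f"01-ai/{model_name}"


def download_model(model_name: str, target_dir: str = "./models") -> str:
    """Download weights and tokenizer; requires `pip install huggingface_hub`."""
    # Imported lazily so hf_repo_id works without the package installed.
    from huggingface_hub import snapshot_download

    local_dir = Path(target_dir) / model_name  # assumed layout: ./models/<model>
    return snapshot_download(repo_id=hf_repo_id(model_name), local_dir=str(local_dir))
```

Calling `download_model("Yi-6B-Chat")` should place the files under `./models/Yi-6B-Chat`; pick a 4-bit or 8-bit variant if disk space or VRAM is tight.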

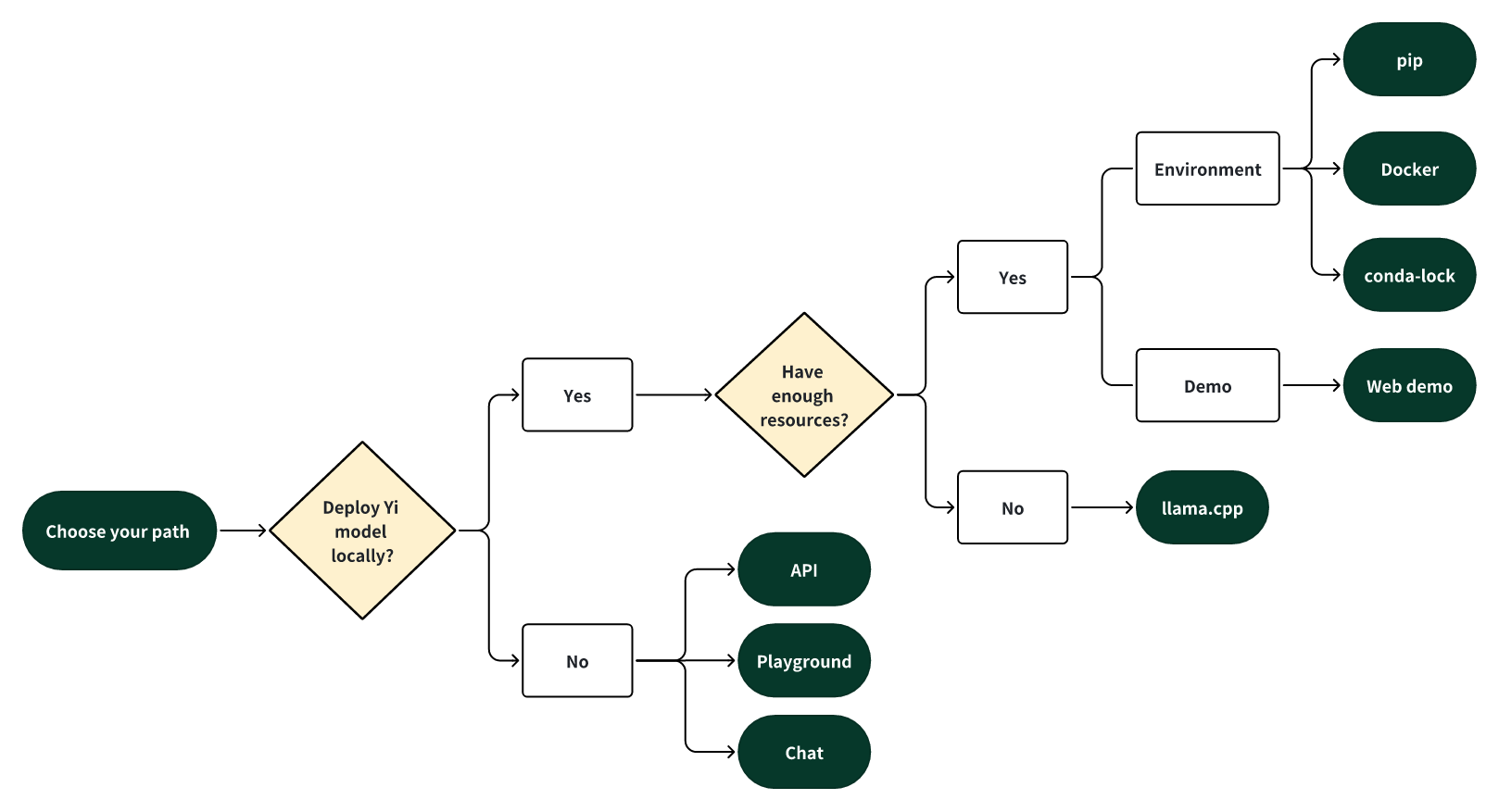

# 🟢 How to use Yi?

- [Quick start](#quick-start)
  - [Choose your path](#choose-your-path)
  - [pip](#quick-start---pip)
  - [docker](#quick-start---docker)
  - [conda-lock](#quick-start---conda-lock)
  - [llama.cpp](#quick-start---llamacpp)
- [Web demo](#web-demo)
- [Fine-tuning](#finetuning)
- [Quantization](#quantization)
- [Deployment](#deployment)
- [Learning hub](#learning-hub)

## Quick start

Getting up and running with Yi models is simple with multiple choices available.

### Choose your path

Select one of the following paths to begin your journey with Yi!

#### 🎯 Deploy Yi locally

If you prefer to deploy Yi models locally,

- 🙋♀️ and you have **sufficient** resources (for example, NVIDIA A800 80GB), you can choose one of the following methods:
  - [pip](#quick-start---pip)
  - [Docker](#quick-start---docker)
  - [conda-lock](#quick-start---conda-lock)
- 🙋♀️ and you have **limited** resources (for example, a MacBook Pro), you can use [llama.cpp](#quick-start---llamacpp).

#### 🎯 Not to deploy Yi locally

If you prefer not to deploy Yi models locally, you can explore Yi's capabilities using any of the following options.

##### 🙋♀️ Run Yi with APIs

If you want to explore more features of Yi, you can adopt one of these methods:

- Yi APIs (Yi official)
  - [Early access has been granted](https://x.com/01AI_Yi/status/1735728934560600536?s=20) to some applicants. Stay tuned for the next round of access!
- [Yi APIs](https://replicate.com/01-ai/yi-34b-chat/api?tab=nodejs) (Replicate)

##### 🙋♀️ Run Yi in playground

If you want to chat with Yi with more customizable options (e.g., system prompt, temperature, repetition penalty), you can try one of the following options:

- [Yi-34B-Chat-Playground](https://platform.lingyiwanwu.com/prompt/playground) (Yi official)
  - Access is available through a whitelist. Welcome to apply (fill out a form in [English](https://cn.mikecrm.com/l91ODJf) or [Chinese](https://cn.mikecrm.com/gnEZjiQ)).
- [Yi-34B-Chat-Playground](https://replicate.com/01-ai/yi-34b-chat) (Replicate)

##### 🙋♀️ Chat with Yi

If you want to chat with Yi, you can use one of these online services, which offer a similar user experience:

- [Yi-34B-Chat](https://huggingface.co/spaces/01-ai/Yi-34B-Chat) (Yi official on Hugging Face)
  - No registration is required.
- [Yi-34B-Chat](https://platform.lingyiwanwu.com/) (Yi official beta)
  - Access is available through a whitelist. Welcome to apply (fill out a form in [English](https://cn.mikecrm.com/l91ODJf) or [Chinese](https://cn.mikecrm.com/gnEZjiQ)).

### Quick start - pip

This tutorial guides you through every step of running **Yi-34B-Chat locally on an A800 (80G)** and then performing inference.

#### Step 0: Prerequisites

- Make sure Python 3.10 or a later version is installed.
- If you want to run other Yi models, see [software and hardware requirements](#deployment).

#### Step 1: Prepare your environment

To set up the environment and install the required packages, execute the following commands.

```bash
git clone https://github.com/01-ai/Yi.git
cd Yi
pip install -r requirements.txt
```

#### Step 2: Download the Yi model

You can download the weights and tokenizer of Yi models from the following sources:

- [Hugging Face](https://huggingface.co/01-ai)
- [ModelScope](https://www.modelscope.cn/organization/01ai/)
- [WiseModel](https://wisemodel.cn/organization/01.AI)

#### Step 3: Perform inference

You can perform inference with Yi chat or base models as below.

##### Perform inference with Yi chat model

1. Create a file named `quick_start.py` and copy the following content to it.

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_path = '<your-model-path>'
```

### Quick start - docker

Make sure you've installed Docker and nvidia-container-toolkit.

```bash
docker run -it --gpus all \
-v <your-model-path>:/models \
ghcr.io/01-ai/yi:latest
```

Alternatively, you can pull the Yi Docker image from registry.lingyiwanwu.com/ci/01-ai/yi:latest.
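If you launch the container from a script, the same command can be assembled programmatically. The following is a minimal sketch, assuming the image names and the `/models` mount point from this section; the function name `docker_run_args` and the example host path `/data/Yi-34B-Chat` are hypothetical.

```python
import shlex


def docker_run_args(model_path, image="ghcr.io/01-ai/yi:latest", mount_point="/models"):
    """Build the `docker run` argument list that mounts a local model directory."""
    return [
        "docker", "run", "-it", "--gpus", "all",
        "-v", f"{model_path}:{mount_point}",  # host path:container path, no spaces
        image,
    ]


args = docker_run_args("/data/Yi-34B-Chat")  # example host path (assumption)
print(shlex.join(args))  # shows the command line that would be executed
# To actually start the container: subprocess.run(args, check=True)
```

Passing `image="registry.lingyiwanwu.com/ci/01-ai/yi:latest"` switches to the alternative registry without changing the rest of the command.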

You can perform inference with Yi chat or base models as below.

The steps are similar to pip - Perform inference with Yi chat model.

Note that the only difference is to set `model_path = '<your-model-mount-path>'` instead of `model_path = '<your-model-path>'`.
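For orientation, a chat-inference script that uses the mounted path might look like the sketch below. It relies on the standard `transformers` calls `AutoTokenizer.from_pretrained`, `AutoModelForCausalLM.from_pretrained`, `apply_chat_template`, and `generate`, and assumes the model ships a chat template and that `accelerate` is installed for `device_map="auto"`. It is not the repo's `quick_start.py`; the function names and generation settings are illustrative.

```python
MODEL_PATH = "<your-model-mount-path>"  # placeholder: the mount point inside the container


def build_messages(prompt):
    """Wrap a user prompt in the role/content format used by chat templates."""
    return [{"role": "user", "content": prompt}]


def chat(prompt, model_path=MODEL_PATH, max_new_tokens=128):
    """Generate one reply; needs `transformers`, `torch`, and enough GPU memory."""
    # Imported lazily so build_messages can be used without these packages.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_path, use_fast=False)
    model = AutoModelForCausalLM.from_pretrained(
        model_path, device_map="auto", torch_dtype="auto"
    ).eval()

    # Tokenize the conversation and append the assistant turn marker.
    inputs = tokenizer.apply_chat_template(
        build_messages(prompt),
        tokenize=True,
        add_generation_prompt=True,
        return_tensors="pt",
        return_dict=True,
    ).to(model.device)

    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Decode only the newly generated tokens, skipping the prompt.
    prompt_len = inputs["input_ids"].shape[1]
    return tokenizer.decode(output_ids[0][prompt_len:], skip_special_tokens=True)
```

Inside the container you would call something like `print(chat("hi"))` after replacing the placeholder path.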

The steps are similar to pip - Perform inference with Yi base model.

Note that the only difference is to set `--model <your-model-mount-path>` instead of `--model <your-model-path>`.

### Quick start - conda-lock

You can use conda-lock to generate fully reproducible lock files for conda environments, and micromamba to install these dependencies.

Run `micromamba install -y -n yi -f conda-lock.yml` to create a conda environment named `yi` and install the necessary dependencies.


### Deployment

If you want to deploy Yi models, make sure you meet the software and hardware requirements.

#### Software requirements

Before using Yi quantized models, make sure you've installed the correct software listed below.

| Model | Software |
|---|---|
| Yi 4-bit quantized models | [AWQ and CUDA](https://github.com/casper-hansen/AutoAWQ?tab=readme-ov-file#install-from-pypi) |
| Yi 8-bit quantized models | [GPTQ and CUDA](https://github.com/PanQiWei/AutoGPTQ?tab=readme-ov-file#quick-installation) |

#### Hardware requirements

Before deploying Yi in your environment, make sure your hardware meets the following requirements.

##### Chat models

| Model | Minimum VRAM | Recommended GPU Example |
|----------------------|--------------|:-------------------------------------:|
| Yi-6B-Chat | 15 GB | RTX 3090 |
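The requirements above can be turned into a quick pre-flight check. The sketch below is only an illustration: the lookup covers just the rows shown in the tables (quantization software inferred from the `-4bits`/`-8bits` model-name suffix, and the 15 GB minimum for Yi-6B-Chat), and all helper names are hypothetical.

```python
# Minimum VRAM in GB, taken from the hardware table (only the row shown there).
MIN_VRAM_GB = {"Yi-6B-Chat": 15}


def required_software(model_name):
    """Return the extra software a quantized model needs, per the software table."""
    if model_name.endswith("-4bits"):
        return "AWQ and CUDA"
    if model_name.endswith("-8bits"):
        return "GPTQ and CUDA"
    return None  # the table lists nothing extra for non-quantized models


def has_enough_vram(model_name, available_gb):
    """Compare available GPU memory with the documented minimum."""
    return available_gb >= MIN_VRAM_GB[model_name]


def detected_vram_gb(device_index=0):
    """Total memory of one CUDA device in GiB; requires PyTorch with CUDA."""
    import torch  # lazy import: only needed for the live check

    return torch.cuda.get_device_properties(device_index).total_memory / 1024**3


print(required_software("Yi-34B-Chat-4bits"))
print(has_enough_vram("Yi-6B-Chat", available_gb=24))  # 24 GB, as on an RTX 3090
```

On a machine with a GPU, `has_enough_vram("Yi-6B-Chat", detected_vram_gb())` performs the same comparison against the installed card.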

## 📌 Benchmarks

- [📊 Chat model performance](#-chat-model-performance)
- [📊 Base model performance](#-base-model-performance)

### 📊 Chat model performance

The Yi-34B-Chat model demonstrates exceptional performance, ranking first among all existing open-source models in benchmarks including MMLU, CMMLU, BBH, GSM8k, and more.

# 🟢 Misc.

### Acknowledgments

A heartfelt thank you to each of you who have made contributions to the Yi community! You have helped make Yi not just a project, but a vibrant, growing home for innovation.

[Yi contributors](https://github.com/01-ai/yi/graphs/contributors)

### 📡 Disclaimer

We use data compliance checking algorithms during the training process to ensure the compliance of the trained model to the best of our ability. Due to complex data and the diversity of language model usage scenarios, we cannot guarantee that the model will generate correct and reasonable output in all scenarios. Please be aware that there is still a risk of the model producing problematic outputs. We will not be responsible for any risks and issues resulting from misuse, misguidance, illegal usage, and related misinformation, as well as any associated data security concerns.

### 🪪 License

The source code in this repo is licensed under the [Apache 2.0 license](https://github.com/01-ai/Yi/blob/main/LICENSE). The Yi series models are fully open for academic research and free for commercial use, with automatic permission granted upon application. All usage must adhere to the [Yi Series Models Community License Agreement 2.1](https://github.com/01-ai/Yi/blob/main/MODEL_LICENSE_AGREEMENT.txt). For free commercial use, you only need to send an email to [get official commercial permission](https://www.lingyiwanwu.com/yi-license).