Update README.md

Browse files- README.md +85 -36

- config.json +30 -0

- configuration_intern_vit.py +119 -0

- flash_attention.py +76 -0

- model.safetensors +3 -0

- modeling_intern_vit.py +363 -0

- preprocessor_config.json +19 -0

README.md

CHANGED

|

@@ -1,42 +1,89 @@

|

|

| 1 |

---

|

| 2 |

license: mit

|

| 3 |

-

datasets:

|

| 4 |

-

- laion/laion2B-en

|

| 5 |

-

- laion/laion-coco

|

| 6 |

-

- laion/laion2B-multi

|

| 7 |

-

- kakaobrain/coyo-700m

|

| 8 |

-

- conceptual_captions

|

| 9 |

-

- wanng/wukong100m

|

| 10 |

pipeline_tag: image-feature-extraction

|

|

|

|

|

|

|

| 11 |

---

|

| 12 |

|

| 13 |

# InternViT-300M-448px-V2_5

|

| 14 |

|

| 15 |

-

[\[📂 GitHub\]](https://github.com/OpenGVLab/InternVL) [\[🆕 Blog\]](https://internvl.github.io/blog/) [\[📜 InternVL 1.0

|

| 16 |

|

| 17 |

-

[\[🗨️ Chat Demo\]](https://internvl.opengvlab.com/) [\[🤗 HF Demo\]](https://huggingface.co/spaces/OpenGVLab/InternVL) [\[🚀 Quick Start\]](#quick-start)

|

| 18 |

-

|

| 19 |

-

We further refined the InternViT-300M by incrementally pre-training the previous weights [InternViT-6B-448px-V1-5](https://huggingface.co/OpenGVLab/InternViT-6B-448px-V1-5) on a more diverse data mixture using the NTP loss, leading to the enhanced InternViT-300M-448px-V2.5. This update enhances the foundational capabilities of edge-side MLLMs.

|

| 20 |

|

| 21 |

<div align="center">

|

|

|

|

|

|

|

| 22 |

|

| 23 |

-

|

| 24 |

-

| :---------------------: | :----------------------------------------: |

|

| 25 |

-

|InternViT-300M-448px-V2_5|[🤗 link](https://huggingface.co/OpenGVLab/InternViT-300M-448px-V2_5) |

|

| 26 |

-

|InternViT-6B-448px-V2_5|[🤗 link](https://huggingface.co/OpenGVLab/InternViT-6B-448px-V2_5)|

|

| 27 |

|

| 28 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 29 |

|

| 30 |

-

|

| 31 |

|

| 32 |

-

|

| 33 |

-

- **Model Stats:**

|

| 34 |

-

- Params (M): 304

|

| 35 |

-

- Image size: 448 x 448, training with 1 - 12 tiles

|

| 36 |

-

- **Pretrain Dataset:** LAION-en, LAION-zh, COYO, GRIT, COCO, TextCaps, Objects365, OpenImages, All-Seeing, Wukong-OCR, LaionCOCO-OCR, and other OCR-related datasets.

|

| 37 |

-

To enhance the OCR capability of the model, we have incorporated additional OCR data alongside the general caption datasets. Specifically, we utilized PaddleOCR to perform Chinese OCR on images from Wukong and English OCR on images from LAION-COCO.

|

| 38 |

|

| 39 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 40 |

|

| 41 |

```python

|

| 42 |

import torch

|

|

@@ -59,17 +106,20 @@ pixel_values = pixel_values.to(torch.bfloat16).cuda()

|

|

| 59 |

outputs = model(pixel_values)

|

| 60 |

```

|

| 61 |

|

|

|

|

|

|

|

|

|

|

|

|

|

| 62 |

## Citation

|

| 63 |

|

| 64 |

If you find this project useful in your research, please consider citing:

|

| 65 |

|

| 66 |

```BibTeX

|

| 67 |

-

|

| 68 |

-

|

| 69 |

-

|

| 70 |

-

|

| 71 |

-

|

| 72 |

-

year={2023}

|

| 73 |

}

|

| 74 |

@article{chen2024far,

|

| 75 |

title={How Far Are We to GPT-4V? Closing the Gap to Commercial Multimodal Models with Open-Source Suites},

|

|

@@ -77,11 +127,10 @@ If you find this project useful in your research, please consider citing:

|

|

| 77 |

journal={arXiv preprint arXiv:2404.16821},

|

| 78 |

year={2024}

|

| 79 |

}

|

| 80 |

-

@article{

|

| 81 |

-

title={

|

| 82 |

-

author={

|

| 83 |

-

journal={arXiv preprint arXiv:

|

| 84 |

-

year={

|

| 85 |

}

|

| 86 |

-

|

| 87 |

```

|

|

|

|

| 1 |

---

|

| 2 |

license: mit

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 3 |

pipeline_tag: image-feature-extraction

|

| 4 |

+

base_model: OpenGVLab/InternViT-300M-448px

|

| 5 |

+

base_model_relation: finetune

|

| 6 |

---

|

| 7 |

|

| 8 |

# InternViT-300M-448px-V2_5

|

| 9 |

|

| 10 |

+

[\[📂 GitHub\]](https://github.com/OpenGVLab/InternVL) [\[🆕 Blog\]](https://internvl.github.io/blog/) [\[📜 InternVL 1.0\]](https://arxiv.org/abs/2312.14238) [\[📜 InternVL 1.5\]](https://arxiv.org/abs/2404.16821) [\[📜 InternVL 2.5\]](https://github.com/OpenGVLab/InternVL/blob/main/InternVL2_5_report.pdf)

|

| 11 |

|

| 12 |

+

[\[🗨️ Chat Demo\]](https://internvl.opengvlab.com/) [\[🤗 HF Demo\]](https://huggingface.co/spaces/OpenGVLab/InternVL) [\[🚀 Quick Start\]](#quick-start) [\[📖 Documents\]](https://internvl.readthedocs.io/en/latest/)

|

|

|

|

|

|

|

| 13 |

|

| 14 |

<div align="center">

|

| 15 |

+

<img width="500" alt="image" src="https://cdn-uploads.huggingface.co/production/uploads/64006c09330a45b03605bba3/zJsd2hqd3EevgXo6fNgC-.png">

|

| 16 |

+

</div>

|

| 17 |

|

| 18 |

+

## Introduction

|

|

|

|

|

|

|

|

|

|

| 19 |

|

| 20 |

+

We are excited to announce the release of `InternViT-300M-448px-V2_5`, a significant enhancement built on the foundation of `InternViT-300M-448px`. By employing **ViT incremental learning** with NTP loss (Stage 1.5), the vision encoder has improved its ability to extract visual features, enabling it to capture more comprehensive information. This improvement is particularly noticeable in domains that are underrepresented in large-scale web datasets such as LAION-5B, including multilingual OCR data and mathematical charts, among others.

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

## InternViT 2.5 Family

|

| 25 |

+

|

| 26 |

+

In the following table, we provide an overview of the InternViT 2.5 series.

|

| 27 |

+

|

| 28 |

+

| Model Name | HF Link |

|

| 29 |

+

| :-----------------------: | :-------------------------------------------------------------------: |

|

| 30 |

+

| InternViT-300M-448px-V2_5 | [🤗 link](https://huggingface.co/OpenGVLab/InternViT-300M-448px-V2_5) |

|

| 31 |

+

| InternViT-6B-448px-V2_5 | [🤗 link](https://huggingface.co/OpenGVLab/InternViT-6B-448px-V2_5) |

|

| 32 |

+

|

| 33 |

+

## Model Architecture

|

| 34 |

+

|

| 35 |

+

As shown in the following figure, InternVL 2.5 retains the same model architecture as its predecessors, InternVL 1.5 and 2.0, following the "ViT-MLP-LLM" paradigm. In this new version, we integrate a newly incrementally pre-trained InternViT with various pre-trained LLMs, including InternLM 2.5 and Qwen 2.5, using a randomly initialized MLP projector.

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

As in the previous version, we applied a pixel unshuffle operation, reducing the number of visual tokens to one-quarter of the original. Besides, we adopted a similar dynamic resolution strategy as InternVL 1.5, dividing images into tiles of 448×448 pixels. The key difference, starting from InternVL 2.0, is that we additionally introduced support for multi-image and video data.

|

| 40 |

+

|

| 41 |

+

## Training Strategy

|

| 42 |

+

|

| 43 |

+

### Dynamic High-Resolution for Multimodal Data

|

| 44 |

+

|

| 45 |

+

In InternVL 2.0 and 2.5, we extend the dynamic high-resolution training approach, enhancing its capabilities to handle multi-image and video datasets.

|

| 46 |

+

|

| 47 |

+

|

| 48 |

+

|

| 49 |

+

- For single-image datasets, the total number of tiles `n_max` are allocated to a single image for maximum resolution. Visual tokens are enclosed in `<img>` and `</img>` tags.

|

| 50 |

+

|

| 51 |

+

- For multi-image datasets, the total number of tiles `n_max` are distributed across all images in a sample. Each image is labeled with auxiliary tags like `Image-1` and enclosed in `<img>` and `</img>` tags.

|

| 52 |

+

|

| 53 |

+

- For videos, each frame is resized to 448×448. Frames are labeled with tags like `Frame-1` and enclosed in `<img>` and `</img>` tags, similar to images.

|

| 54 |

+

|

| 55 |

+

### Single Model Training Pipeline

|

| 56 |

+

|

| 57 |

+

The training pipeline for a single model in InternVL 2.5 is structured across three stages, designed to enhance the model's visual perception and multimodal capabilities.

|

| 58 |

+

|

| 59 |

+

|

| 60 |

+

|

| 61 |

+

- **Stage 1: MLP Warmup.** In this stage, only the MLP projector is trained while the vision encoder and language model are frozen. A dynamic high-resolution training strategy is applied for better performance, despite increased cost. This phase ensures robust cross-modal alignment and prepares the model for stable multimodal training.

|

| 62 |

+

|

| 63 |

+

- **Stage 1.5: ViT Incremental Learning (Optional).** This stage allows incremental training of the vision encoder and MLP projector using the same data as Stage 1. It enhances the encoder’s ability to handle rare domains like multilingual OCR and mathematical charts. Once trained, the encoder can be reused across LLMs without retraining, making this stage optional unless new domains are introduced.

|

| 64 |

+

|

| 65 |

+

- **Stage 2: Full Model Instruction Tuning.** The entire model is trained on high-quality multimodal instruction datasets. Strict data quality controls are enforced to prevent degradation of the LLM, as noisy data can cause issues like repetitive or incorrect outputs. After this stage, the training process is complete.

|

| 66 |

+

|

| 67 |

+

## Evaluation on Vision Capability

|

| 68 |

+

|

| 69 |

+

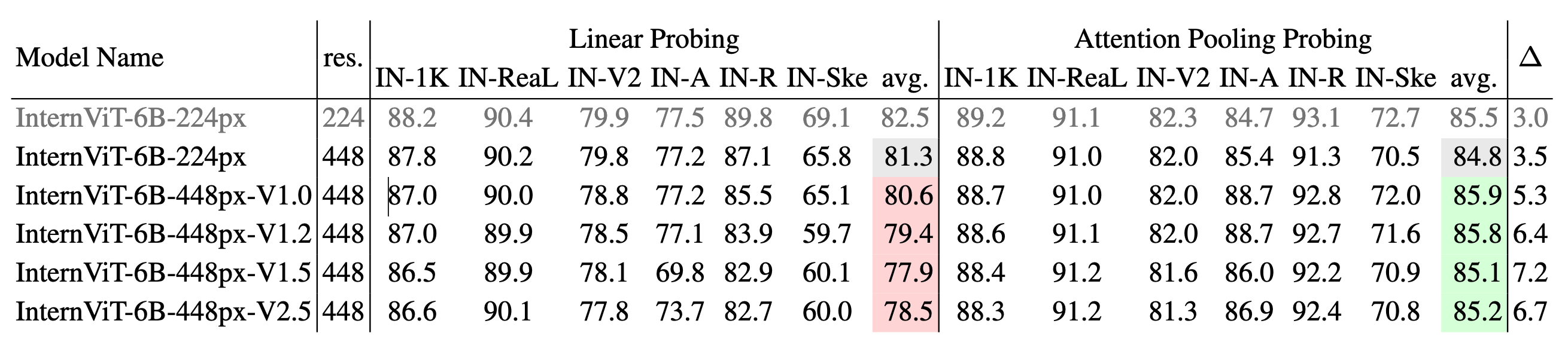

We present a comprehensive evaluation of the vision encoder’s performance across various domains and tasks. The evaluation is divided into two key categories: (1) image classification, representing global-view semantic quality, and (2) semantic segmentation, capturing local-view semantic quality. This approach allows us to assess the representation quality of InternViT across its successive version updates. Please refer to our technical report for more details.

|

| 70 |

+

|

| 71 |

+

## Image Classification

|

| 72 |

+

|

| 73 |

+

|

| 74 |

|

| 75 |

+

**Image classification performance across different versions of InternViT.** We use IN-1K for training and evaluate on the IN-1K validation set as well as multiple ImageNet variants, including IN-ReaL, IN-V2, IN-A, IN-R, and IN-Sketch. Results are reported for both linear probing and attention pooling probing methods, with average accuracy for each method. ∆ represents the performance gap between attention pooling probing and linear probing, where a larger ∆ suggests a shift from learning simple linear features to capturing more complex, nonlinear semantic representations.

|

| 76 |

|

| 77 |

+

## Semantic Segmentation Performance

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 78 |

|

| 79 |

+

|

| 80 |

+

|

| 81 |

+

**Semantic segmentation performance across different versions of InternViT.** The models are evaluated on ADE20K and COCO-Stuff-164K using three configurations: linear probing, head tuning, and full tuning. The table shows the mIoU scores for each configuration and their averages. ∆1 represents the gap between head tuning and linear probing, while ∆2 shows the gap between full tuning and linear probing. A larger ∆ value indicates a shift from simple linear features to more complex, nonlinear representations.

|

| 82 |

+

|

| 83 |

+

## Quick Start

|

| 84 |

+

|

| 85 |

+

> \[!Warning\]

|

| 86 |

+

> 🚨 Note: In our experience, the InternViT V2.5 series is better suited for building MLLMs than traditional computer vision tasks.

|

| 87 |

|

| 88 |

```python

|

| 89 |

import torch

|

|

|

|

| 106 |

outputs = model(pixel_values)

|

| 107 |

```

|

| 108 |

|

| 109 |

+

## License

|

| 110 |

+

|

| 111 |

+

This project is released under the MIT License.

|

| 112 |

+

|

| 113 |

## Citation

|

| 114 |

|

| 115 |

If you find this project useful in your research, please consider citing:

|

| 116 |

|

| 117 |

```BibTeX

|

| 118 |

+

@article{gao2024mini,

|

| 119 |

+

title={Mini-internvl: A flexible-transfer pocket multimodal model with 5\% parameters and 90\% performance},

|

| 120 |

+

author={Gao, Zhangwei and Chen, Zhe and Cui, Erfei and Ren, Yiming and Wang, Weiyun and Zhu, Jinguo and Tian, Hao and Ye, Shenglong and He, Junjun and Zhu, Xizhou and others},

|

| 121 |

+

journal={arXiv preprint arXiv:2410.16261},

|

| 122 |

+

year={2024}

|

|

|

|

| 123 |

}

|

| 124 |

@article{chen2024far,

|

| 125 |

title={How Far Are We to GPT-4V? Closing the Gap to Commercial Multimodal Models with Open-Source Suites},

|

|

|

|

| 127 |

journal={arXiv preprint arXiv:2404.16821},

|

| 128 |

year={2024}

|

| 129 |

}

|

| 130 |

+

@article{chen2023internvl,

|

| 131 |

+

title={InternVL: Scaling up Vision Foundation Models and Aligning for Generic Visual-Linguistic Tasks},

|

| 132 |

+

author={Chen, Zhe and Wu, Jiannan and Wang, Wenhai and Su, Weijie and Chen, Guo and Xing, Sen and Zhong, Muyan and Zhang, Qinglong and Zhu, Xizhou and Lu, Lewei and Li, Bin and Luo, Ping and Lu, Tong and Qiao, Yu and Dai, Jifeng},

|

| 133 |

+

journal={arXiv preprint arXiv:2312.14238},

|

| 134 |

+

year={2023}

|

| 135 |

}

|

|

|

|

| 136 |

```

|

config.json

ADDED

|

@@ -0,0 +1,30 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"InternVisionModel"

|

| 4 |

+

],

|

| 5 |

+

"auto_map": {

|

| 6 |

+

"AutoConfig": "configuration_intern_vit.InternVisionConfig",

|

| 7 |

+

"AutoModel": "modeling_intern_vit.InternVisionModel"

|

| 8 |

+

},

|

| 9 |

+

"attention_dropout": 0.0,

|

| 10 |

+

"drop_path_rate": 0.1,

|

| 11 |

+

"dropout": 0.0,

|

| 12 |

+

"hidden_act": "gelu",

|

| 13 |

+

"hidden_size": 1024,

|

| 14 |

+

"image_size": 448,

|

| 15 |

+

"initializer_factor": 1.0,

|

| 16 |

+

"initializer_range": 0.02,

|

| 17 |

+

"intermediate_size": 4096,

|

| 18 |

+

"layer_norm_eps": 1e-06,

|

| 19 |

+

"model_type": "intern_vit_6b",

|

| 20 |

+

"norm_type": "layer_norm",

|

| 21 |

+

"num_attention_heads": 16,

|

| 22 |

+

"num_channels": 3,

|

| 23 |

+

"num_hidden_layers": 24,

|

| 24 |

+

"patch_size": 14,

|

| 25 |

+

"qk_normalization": false,

|

| 26 |

+

"qkv_bias": true,

|

| 27 |

+

"torch_dtype": "bfloat16",

|

| 28 |

+

"transformers_version": "4.37.2",

|

| 29 |

+

"use_flash_attn": true

|

| 30 |

+

}

|

configuration_intern_vit.py

ADDED

|

@@ -0,0 +1,119 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# --------------------------------------------------------

|

| 2 |

+

# InternVL

|

| 3 |

+

# Copyright (c) 2023 OpenGVLab

|

| 4 |

+

# Licensed under The MIT License [see LICENSE for details]

|

| 5 |

+

# --------------------------------------------------------

|

| 6 |

+

import os

|

| 7 |

+

from typing import Union

|

| 8 |

+

|

| 9 |

+

from transformers.configuration_utils import PretrainedConfig

|

| 10 |

+

from transformers.utils import logging

|

| 11 |

+

|

| 12 |

+

logger = logging.get_logger(__name__)

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

class InternVisionConfig(PretrainedConfig):

|

| 16 |

+

r"""

|

| 17 |

+

This is the configuration class to store the configuration of a [`InternVisionModel`]. It is used to

|

| 18 |

+

instantiate a vision encoder according to the specified arguments, defining the model architecture.

|

| 19 |

+

|

| 20 |

+

Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

|

| 21 |

+

documentation from [`PretrainedConfig`] for more information.

|

| 22 |

+

|

| 23 |

+

Args:

|

| 24 |

+

num_channels (`int`, *optional*, defaults to 3):

|

| 25 |

+

Number of color channels in the input images (e.g., 3 for RGB).

|

| 26 |

+

patch_size (`int`, *optional*, defaults to 14):

|

| 27 |

+

The size (resolution) of each patch.

|

| 28 |

+

image_size (`int`, *optional*, defaults to 224):

|

| 29 |

+

The size (resolution) of each image.

|

| 30 |

+

qkv_bias (`bool`, *optional*, defaults to `False`):

|

| 31 |

+

Whether to add a bias to the queries and values in the self-attention layers.

|

| 32 |

+

hidden_size (`int`, *optional*, defaults to 3200):

|

| 33 |

+

Dimensionality of the encoder layers and the pooler layer.

|

| 34 |

+

num_attention_heads (`int`, *optional*, defaults to 25):

|

| 35 |

+

Number of attention heads for each attention layer in the Transformer encoder.

|

| 36 |

+

intermediate_size (`int`, *optional*, defaults to 12800):

|

| 37 |

+

Dimensionality of the "intermediate" (i.e., feed-forward) layer in the Transformer encoder.

|

| 38 |

+

qk_normalization (`bool`, *optional*, defaults to `True`):

|

| 39 |

+

Whether to normalize the queries and keys in the self-attention layers.

|

| 40 |

+

num_hidden_layers (`int`, *optional*, defaults to 48):

|

| 41 |

+

Number of hidden layers in the Transformer encoder.

|

| 42 |

+

use_flash_attn (`bool`, *optional*, defaults to `True`):

|

| 43 |

+

Whether to use flash attention mechanism.

|

| 44 |

+

hidden_act (`str` or `function`, *optional*, defaults to `"gelu"`):

|

| 45 |

+

The non-linear activation function (function or string) in the encoder and pooler. If string, `"gelu"`,

|

| 46 |

+

`"relu"`, `"selu"` and `"gelu_new"` ``"gelu"` are supported.

|

| 47 |

+

layer_norm_eps (`float`, *optional*, defaults to 1e-6):

|

| 48 |

+

The epsilon used by the layer normalization layers.

|

| 49 |

+

dropout (`float`, *optional*, defaults to 0.0):

|

| 50 |

+

The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.

|

| 51 |

+

drop_path_rate (`float`, *optional*, defaults to 0.0):

|

| 52 |

+

Dropout rate for stochastic depth.

|

| 53 |

+

attention_dropout (`float`, *optional*, defaults to 0.0):

|

| 54 |

+

The dropout ratio for the attention probabilities.

|

| 55 |

+

initializer_range (`float`, *optional*, defaults to 0.02):

|

| 56 |

+

The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

|

| 57 |

+

initializer_factor (`float`, *optional*, defaults to 0.1):

|

| 58 |

+

A factor for layer scale.

|

| 59 |

+

"""

|

| 60 |

+

|

| 61 |

+

model_type = 'intern_vit_6b'

|

| 62 |

+

|

| 63 |

+

def __init__(

|

| 64 |

+

self,

|

| 65 |

+

num_channels=3,

|

| 66 |

+

patch_size=14,

|

| 67 |

+

image_size=224,

|

| 68 |

+

qkv_bias=False,

|

| 69 |

+

hidden_size=3200,

|

| 70 |

+

num_attention_heads=25,

|

| 71 |

+

intermediate_size=12800,

|

| 72 |

+

qk_normalization=True,

|

| 73 |

+

num_hidden_layers=48,

|

| 74 |

+

use_flash_attn=True,

|

| 75 |

+

hidden_act='gelu',

|

| 76 |

+

norm_type='rms_norm',

|

| 77 |

+

layer_norm_eps=1e-6,

|

| 78 |

+

dropout=0.0,

|

| 79 |

+

drop_path_rate=0.0,

|

| 80 |

+

attention_dropout=0.0,

|

| 81 |

+

initializer_range=0.02,

|

| 82 |

+

initializer_factor=0.1,

|

| 83 |

+

**kwargs,

|

| 84 |

+

):

|

| 85 |

+

super().__init__(**kwargs)

|

| 86 |

+

|

| 87 |

+

self.hidden_size = hidden_size

|

| 88 |

+

self.intermediate_size = intermediate_size

|

| 89 |

+

self.dropout = dropout

|

| 90 |

+

self.drop_path_rate = drop_path_rate

|

| 91 |

+

self.num_hidden_layers = num_hidden_layers

|

| 92 |

+

self.num_attention_heads = num_attention_heads

|

| 93 |

+

self.num_channels = num_channels

|

| 94 |

+

self.patch_size = patch_size

|

| 95 |

+

self.image_size = image_size

|

| 96 |

+

self.initializer_range = initializer_range

|

| 97 |

+

self.initializer_factor = initializer_factor

|

| 98 |

+

self.attention_dropout = attention_dropout

|

| 99 |

+

self.layer_norm_eps = layer_norm_eps

|

| 100 |

+

self.hidden_act = hidden_act

|

| 101 |

+

self.norm_type = norm_type

|

| 102 |

+

self.qkv_bias = qkv_bias

|

| 103 |

+

self.qk_normalization = qk_normalization

|

| 104 |

+

self.use_flash_attn = use_flash_attn

|

| 105 |

+

|

| 106 |

+

@classmethod

|

| 107 |

+

def from_pretrained(cls, pretrained_model_name_or_path: Union[str, os.PathLike], **kwargs) -> 'PretrainedConfig':

|

| 108 |

+

config_dict, kwargs = cls.get_config_dict(pretrained_model_name_or_path, **kwargs)

|

| 109 |

+

|

| 110 |

+

if 'vision_config' in config_dict:

|

| 111 |

+

config_dict = config_dict['vision_config']

|

| 112 |

+

|

| 113 |

+

if 'model_type' in config_dict and hasattr(cls, 'model_type') and config_dict['model_type'] != cls.model_type:

|

| 114 |

+

logger.warning(

|

| 115 |

+

f"You are using a model of type {config_dict['model_type']} to instantiate a model of type "

|

| 116 |

+

f'{cls.model_type}. This is not supported for all configurations of models and can yield errors.'

|

| 117 |

+

)

|

| 118 |

+

|

| 119 |

+

return cls.from_dict(config_dict, **kwargs)

|

flash_attention.py

ADDED

|

@@ -0,0 +1,76 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# https://github.com/Dao-AILab/flash-attention/blob/v0.2.8/flash_attn/flash_attention.py

|

| 2 |

+

import torch

|

| 3 |

+

import torch.nn as nn

|

| 4 |

+

from einops import rearrange

|

| 5 |

+

|

| 6 |

+

try: # v1

|

| 7 |

+

from flash_attn.flash_attn_interface import \

|

| 8 |

+

flash_attn_unpadded_qkvpacked_func

|

| 9 |

+

except: # v2

|

| 10 |

+

from flash_attn.flash_attn_interface import flash_attn_varlen_qkvpacked_func as flash_attn_unpadded_qkvpacked_func

|

| 11 |

+

|

| 12 |

+

from flash_attn.bert_padding import pad_input, unpad_input

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

class FlashAttention(nn.Module):

|

| 16 |

+

"""Implement the scaled dot product attention with softmax.

|

| 17 |

+

Arguments

|

| 18 |

+

---------

|

| 19 |

+

softmax_scale: The temperature to use for the softmax attention.

|

| 20 |

+

(default: 1/sqrt(d_keys) where d_keys is computed at

|

| 21 |

+

runtime)

|

| 22 |

+

attention_dropout: The dropout rate to apply to the attention

|

| 23 |

+

(default: 0.0)

|

| 24 |

+

"""

|

| 25 |

+

|

| 26 |

+

def __init__(self, softmax_scale=None, attention_dropout=0.0, device=None, dtype=None):

|

| 27 |

+

super().__init__()

|

| 28 |

+

self.softmax_scale = softmax_scale

|

| 29 |

+

self.dropout_p = attention_dropout

|

| 30 |

+

|

| 31 |

+

def forward(self, qkv, key_padding_mask=None, causal=False, cu_seqlens=None,

|

| 32 |

+

max_s=None, need_weights=False):

|

| 33 |

+

"""Implements the multihead softmax attention.

|

| 34 |

+

Arguments

|

| 35 |

+

---------

|

| 36 |

+

qkv: The tensor containing the query, key, and value. (B, S, 3, H, D) if key_padding_mask is None

|

| 37 |

+

if unpadded: (nnz, 3, h, d)

|

| 38 |

+

key_padding_mask: a bool tensor of shape (B, S)

|

| 39 |

+

"""

|

| 40 |

+

assert not need_weights

|

| 41 |

+

assert qkv.dtype in [torch.float16, torch.bfloat16]

|

| 42 |

+

assert qkv.is_cuda

|

| 43 |

+

|

| 44 |

+

if cu_seqlens is None:

|

| 45 |

+

batch_size = qkv.shape[0]

|

| 46 |

+

seqlen = qkv.shape[1]

|

| 47 |

+

if key_padding_mask is None:

|

| 48 |

+

qkv = rearrange(qkv, 'b s ... -> (b s) ...')

|

| 49 |

+

max_s = seqlen

|

| 50 |

+

cu_seqlens = torch.arange(0, (batch_size + 1) * seqlen, step=seqlen, dtype=torch.int32,

|

| 51 |

+

device=qkv.device)

|

| 52 |

+

output = flash_attn_unpadded_qkvpacked_func(

|

| 53 |

+

qkv, cu_seqlens, max_s, self.dropout_p if self.training else 0.0,

|

| 54 |

+

softmax_scale=self.softmax_scale, causal=causal

|

| 55 |

+

)

|

| 56 |

+

output = rearrange(output, '(b s) ... -> b s ...', b=batch_size)

|

| 57 |

+

else:

|

| 58 |

+

nheads = qkv.shape[-2]

|

| 59 |

+

x = rearrange(qkv, 'b s three h d -> b s (three h d)')

|

| 60 |

+

x_unpad, indices, cu_seqlens, max_s = unpad_input(x, key_padding_mask)

|

| 61 |

+

x_unpad = rearrange(x_unpad, 'nnz (three h d) -> nnz three h d', three=3, h=nheads)

|

| 62 |

+

output_unpad = flash_attn_unpadded_qkvpacked_func(

|

| 63 |

+

x_unpad, cu_seqlens, max_s, self.dropout_p if self.training else 0.0,

|

| 64 |

+

softmax_scale=self.softmax_scale, causal=causal

|

| 65 |

+

)

|

| 66 |

+

output = rearrange(pad_input(rearrange(output_unpad, 'nnz h d -> nnz (h d)'),

|

| 67 |

+

indices, batch_size, seqlen),

|

| 68 |

+

'b s (h d) -> b s h d', h=nheads)

|

| 69 |

+

else:

|

| 70 |

+

assert max_s is not None

|

| 71 |

+

output = flash_attn_unpadded_qkvpacked_func(

|

| 72 |

+

qkv, cu_seqlens, max_s, self.dropout_p if self.training else 0.0,

|

| 73 |

+

softmax_scale=self.softmax_scale, causal=causal

|

| 74 |

+

)

|

| 75 |

+

|

| 76 |

+

return output, None

|

model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e88b49b25b61cf1874f4b9eb1289ab16fffcaa2f0e0766daceaaf3ec1471a9a3

|

| 3 |

+

size 608059320

|

modeling_intern_vit.py

ADDED

|

@@ -0,0 +1,363 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# --------------------------------------------------------

|

| 2 |

+

# InternVL

|

| 3 |

+

# Copyright (c) 2023 OpenGVLab

|

| 4 |

+

# Licensed under The MIT License [see LICENSE for details]

|

| 5 |

+

# --------------------------------------------------------

|

| 6 |

+

from typing import Optional, Tuple, Union

|

| 7 |

+

|

| 8 |

+

import torch

|

| 9 |

+

import torch.nn.functional as F

|

| 10 |

+

import torch.utils.checkpoint

|

| 11 |

+

from einops import rearrange

|

| 12 |

+

from timm.models.layers import DropPath

|

| 13 |

+

from torch import nn

|

| 14 |

+

from transformers.activations import ACT2FN

|

| 15 |

+

from transformers.modeling_outputs import (BaseModelOutput,

|

| 16 |

+

BaseModelOutputWithPooling)

|

| 17 |

+

from transformers.modeling_utils import PreTrainedModel

|

| 18 |

+

from transformers.utils import logging

|

| 19 |

+

|

| 20 |

+

from .configuration_intern_vit import InternVisionConfig

|

| 21 |

+

|

| 22 |

+

try:

|

| 23 |

+

from .flash_attention import FlashAttention

|

| 24 |

+

has_flash_attn = True

|

| 25 |

+

except:

|

| 26 |

+

print('FlashAttention is not installed.')

|

| 27 |

+

has_flash_attn = False

|

| 28 |

+

|

| 29 |

+

|

| 30 |

+

logger = logging.get_logger(__name__)

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

class InternRMSNorm(nn.Module):

|

| 34 |

+

def __init__(self, hidden_size, eps=1e-6):

|

| 35 |

+

super().__init__()

|

| 36 |

+

self.weight = nn.Parameter(torch.ones(hidden_size))

|

| 37 |

+

self.variance_epsilon = eps

|

| 38 |

+

|

| 39 |

+

def forward(self, hidden_states):

|

| 40 |

+

input_dtype = hidden_states.dtype

|

| 41 |

+

hidden_states = hidden_states.to(torch.float32)

|

| 42 |

+

variance = hidden_states.pow(2).mean(-1, keepdim=True)

|

| 43 |

+

hidden_states = hidden_states * torch.rsqrt(variance + self.variance_epsilon)

|

| 44 |

+

return self.weight * hidden_states.to(input_dtype)

|

| 45 |

+

|

| 46 |

+

|

| 47 |

+

try:

|

| 48 |

+

from apex.normalization import FusedRMSNorm

|

| 49 |

+

|

| 50 |

+

InternRMSNorm = FusedRMSNorm # noqa

|

| 51 |

+

|

| 52 |

+

logger.info('Discovered apex.normalization.FusedRMSNorm - will use it instead of InternRMSNorm')

|

| 53 |

+

except ImportError:

|

| 54 |

+

# using the normal InternRMSNorm

|

| 55 |

+

pass

|

| 56 |

+

except Exception:

|

| 57 |

+

logger.warning('discovered apex but it failed to load, falling back to InternRMSNorm')

|

| 58 |

+

pass

|

| 59 |

+

|

| 60 |

+

|

| 61 |

+

NORM2FN = {

|

| 62 |

+

'rms_norm': InternRMSNorm,

|

| 63 |

+

'layer_norm': nn.LayerNorm,

|

| 64 |

+

}

|

| 65 |

+

|

| 66 |

+

|

| 67 |

+

class InternVisionEmbeddings(nn.Module):

|

| 68 |

+

def __init__(self, config: InternVisionConfig):

|

| 69 |

+

super().__init__()

|

| 70 |

+

self.config = config

|

| 71 |

+

self.embed_dim = config.hidden_size

|

| 72 |

+

self.image_size = config.image_size

|

| 73 |

+

self.patch_size = config.patch_size

|

| 74 |

+

|

| 75 |

+

self.class_embedding = nn.Parameter(

|

| 76 |

+

torch.randn(1, 1, self.embed_dim),

|

| 77 |

+

)

|

| 78 |

+

|

| 79 |

+

self.patch_embedding = nn.Conv2d(

|

| 80 |

+

in_channels=3, out_channels=self.embed_dim, kernel_size=self.patch_size, stride=self.patch_size

|

| 81 |

+

)

|

| 82 |

+

|

| 83 |

+

self.num_patches = (self.image_size // self.patch_size) ** 2

|

| 84 |

+

self.num_positions = self.num_patches + 1

|

| 85 |

+

|

| 86 |

+

self.position_embedding = nn.Parameter(torch.randn(1, self.num_positions, self.embed_dim))

|

| 87 |

+

|

| 88 |

+

def _get_pos_embed(self, pos_embed, H, W):

|

| 89 |

+

target_dtype = pos_embed.dtype

|

| 90 |

+

pos_embed = pos_embed.float().reshape(

|

| 91 |

+

1, self.image_size // self.patch_size, self.image_size // self.patch_size, -1).permute(0, 3, 1, 2)

|

| 92 |

+

pos_embed = F.interpolate(pos_embed, size=(H, W), mode='bicubic', align_corners=False).\

|

| 93 |

+

reshape(1, -1, H * W).permute(0, 2, 1).to(target_dtype)

|

| 94 |

+

return pos_embed

|

| 95 |

+

|

| 96 |

+

def forward(self, pixel_values: torch.FloatTensor) -> torch.Tensor:

|

| 97 |

+

target_dtype = self.patch_embedding.weight.dtype

|

| 98 |

+

patch_embeds = self.patch_embedding(pixel_values) # shape = [*, channel, width, height]

|

| 99 |

+

batch_size, _, height, width = patch_embeds.shape

|

| 100 |

+

patch_embeds = patch_embeds.flatten(2).transpose(1, 2)

|

| 101 |

+

class_embeds = self.class_embedding.expand(batch_size, 1, -1).to(target_dtype)

|

| 102 |

+

embeddings = torch.cat([class_embeds, patch_embeds], dim=1)

|

| 103 |

+

position_embedding = torch.cat([

|

| 104 |

+

self.position_embedding[:, :1, :],

|

| 105 |

+

self._get_pos_embed(self.position_embedding[:, 1:, :], height, width)

|

| 106 |

+

], dim=1)

|

| 107 |

+

embeddings = embeddings + position_embedding.to(target_dtype)

|

| 108 |

+

return embeddings

|

| 109 |

+

|

| 110 |

+

|

| 111 |

+

class InternAttention(nn.Module):

|

| 112 |

+

"""Multi-headed attention from 'Attention Is All You Need' paper"""

|

| 113 |

+

|

| 114 |

+

def __init__(self, config: InternVisionConfig):

|

| 115 |

+

super().__init__()

|

| 116 |

+

self.config = config

|

| 117 |

+

self.embed_dim = config.hidden_size

|

| 118 |

+

self.num_heads = config.num_attention_heads

|

| 119 |

+

self.use_flash_attn = config.use_flash_attn and has_flash_attn

|

| 120 |

+

if config.use_flash_attn and not has_flash_attn:

|

| 121 |

+

print('Warning: Flash Attention is not available, use_flash_attn is set to False.')

|

| 122 |

+

self.head_dim = self.embed_dim // self.num_heads

|

| 123 |

+

if self.head_dim * self.num_heads != self.embed_dim:

|

| 124 |

+

raise ValueError(

|

| 125 |

+

f'embed_dim must be divisible by num_heads (got `embed_dim`: {self.embed_dim} and `num_heads`:'

|

| 126 |

+

f' {self.num_heads}).'

|

| 127 |

+

)

|

| 128 |

+

|

| 129 |

+

self.scale = self.head_dim ** -0.5

|

| 130 |

+

self.qkv = nn.Linear(self.embed_dim, 3 * self.embed_dim, bias=config.qkv_bias)

|

| 131 |

+

self.attn_drop = nn.Dropout(config.attention_dropout)

|

| 132 |

+

self.proj_drop = nn.Dropout(config.dropout)

|

| 133 |

+

|

| 134 |

+

self.qk_normalization = config.qk_normalization

|

| 135 |

+

|

| 136 |

+

if self.qk_normalization:

|

| 137 |

+

self.q_norm = InternRMSNorm(self.embed_dim, eps=config.layer_norm_eps)

|

| 138 |

+

self.k_norm = InternRMSNorm(self.embed_dim, eps=config.layer_norm_eps)

|

| 139 |

+

|

| 140 |

+

if self.use_flash_attn:

|

| 141 |

+

self.inner_attn = FlashAttention(attention_dropout=config.attention_dropout)

|

| 142 |

+

self.proj = nn.Linear(self.embed_dim, self.embed_dim)

|

| 143 |

+

|

| 144 |

+

def _naive_attn(self, x):

|

| 145 |

+

B, N, C = x.shape

|

| 146 |

+

qkv = self.qkv(x).reshape(B, N, 3, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)

|

| 147 |

+

q, k, v = qkv.unbind(0) # make torchscript happy (cannot use tensor as tuple)

|

| 148 |

+

|

| 149 |

+

if self.qk_normalization:

|

| 150 |

+

B_, H_, N_, D_ = q.shape

|

| 151 |

+

q = self.q_norm(q.transpose(1, 2).flatten(-2, -1)).view(B_, N_, H_, D_).transpose(1, 2)

|

| 152 |

+

k = self.k_norm(k.transpose(1, 2).flatten(-2, -1)).view(B_, N_, H_, D_).transpose(1, 2)

|

| 153 |

+

|

| 154 |

+

attn = ((q * self.scale) @ k.transpose(-2, -1))

|

| 155 |

+

attn = attn.softmax(dim=-1)

|

| 156 |

+

attn = self.attn_drop(attn)

|

| 157 |

+

|

| 158 |

+

x = (attn @ v).transpose(1, 2).reshape(B, N, C)

|

| 159 |

+

x = self.proj(x)

|

| 160 |

+

x = self.proj_drop(x)

|

| 161 |

+

return x

|

| 162 |

+

|

| 163 |

+

def _flash_attn(self, x, key_padding_mask=None, need_weights=False):

|

| 164 |

+

qkv = self.qkv(x)

|

| 165 |

+

qkv = rearrange(qkv, 'b s (three h d) -> b s three h d', three=3, h=self.num_heads)

|

| 166 |

+

|

| 167 |

+

if self.qk_normalization:

|

| 168 |

+

q, k, v = qkv.unbind(2)

|

| 169 |

+

q = self.q_norm(q.flatten(-2, -1)).view(q.shape)

|

| 170 |

+

k = self.k_norm(k.flatten(-2, -1)).view(k.shape)

|

| 171 |

+

qkv = torch.stack([q, k, v], dim=2)

|

| 172 |

+

|

| 173 |

+

context, _ = self.inner_attn(

|

| 174 |

+

qkv, key_padding_mask=key_padding_mask, need_weights=need_weights, causal=False

|

| 175 |

+

)

|

| 176 |

+

outs = self.proj(rearrange(context, 'b s h d -> b s (h d)'))

|

| 177 |

+

outs = self.proj_drop(outs)

|

| 178 |

+

return outs

|

| 179 |

+

|

| 180 |

+

def forward(self, hidden_states: torch.Tensor) -> torch.Tensor:

|

| 181 |

+

x = self._naive_attn(hidden_states) if not self.use_flash_attn else self._flash_attn(hidden_states)

|

| 182 |

+

return x

|

| 183 |

+

|

| 184 |

+

|

| 185 |

+

class InternMLP(nn.Module):

|

| 186 |

+

def __init__(self, config: InternVisionConfig):

|

| 187 |

+

super().__init__()

|

| 188 |

+

self.config = config

|

| 189 |

+

self.act = ACT2FN[config.hidden_act]

|

| 190 |

+

self.fc1 = nn.Linear(config.hidden_size, config.intermediate_size)

|

| 191 |

+

self.fc2 = nn.Linear(config.intermediate_size, config.hidden_size)

|

| 192 |

+

|

| 193 |

+

def forward(self, hidden_states: torch.Tensor) -> torch.Tensor:

|

| 194 |

+

hidden_states = self.fc1(hidden_states)

|

| 195 |

+

hidden_states = self.act(hidden_states)

|

| 196 |

+

hidden_states = self.fc2(hidden_states)

|

| 197 |

+

return hidden_states

|

| 198 |

+

|

| 199 |

+

|

| 200 |

+

class InternVisionEncoderLayer(nn.Module):

|

| 201 |

+

def __init__(self, config: InternVisionConfig, drop_path_rate: float):

|

| 202 |

+

super().__init__()

|

| 203 |

+

self.embed_dim = config.hidden_size

|

| 204 |

+

self.intermediate_size = config.intermediate_size

|

| 205 |

+

self.norm_type = config.norm_type

|

| 206 |

+

|

| 207 |

+

self.attn = InternAttention(config)

|

| 208 |

+

self.mlp = InternMLP(config)

|

| 209 |

+

self.norm1 = NORM2FN[self.norm_type](self.embed_dim, eps=config.layer_norm_eps)

|

| 210 |

+

self.norm2 = NORM2FN[self.norm_type](self.embed_dim, eps=config.layer_norm_eps)

|

| 211 |

+

|

| 212 |

+

self.ls1 = nn.Parameter(config.initializer_factor * torch.ones(self.embed_dim))

|

| 213 |

+

self.ls2 = nn.Parameter(config.initializer_factor * torch.ones(self.embed_dim))

|

| 214 |

+

self.drop_path1 = DropPath(drop_path_rate) if drop_path_rate > 0. else nn.Identity()

|

| 215 |

+

self.drop_path2 = DropPath(drop_path_rate) if drop_path_rate > 0. else nn.Identity()

|

| 216 |

+

|

| 217 |

+

def forward(

|

| 218 |

+

self,

|

| 219 |

+

hidden_states: torch.Tensor,

|

| 220 |

+

) -> Tuple[torch.FloatTensor, Optional[torch.FloatTensor], Optional[Tuple[torch.FloatTensor]]]:

|

| 221 |

+

"""

|

| 222 |

+

Args:

|

| 223 |

+

hidden_states (`Tuple[torch.FloatTensor, Optional[torch.FloatTensor]]`): input to the layer of shape `(batch, seq_len, embed_dim)`

|

| 224 |

+

"""

|

| 225 |

+

hidden_states = hidden_states + self.drop_path1(self.attn(self.norm1(hidden_states)) * self.ls1)

|

| 226 |

+

|

| 227 |

+

hidden_states = hidden_states + self.drop_path2(self.mlp(self.norm2(hidden_states)) * self.ls2)

|

| 228 |

+

|

| 229 |

+

return hidden_states

|

| 230 |

+

|

| 231 |

+

|

| 232 |

+

class InternVisionEncoder(nn.Module):

|

| 233 |

+

"""

|

| 234 |

+

Transformer encoder consisting of `config.num_hidden_layers` self attention layers. Each layer is a

|

| 235 |

+

[`InternEncoderLayer`].

|

| 236 |

+

|

| 237 |

+

Args:

|

| 238 |

+

config (`InternConfig`):

|

| 239 |

+

The corresponding vision configuration for the `InternEncoder`.

|

| 240 |

+

"""

|

| 241 |

+

|

| 242 |

+

def __init__(self, config: InternVisionConfig):

|

| 243 |

+

super().__init__()

|

| 244 |

+

self.config = config

|

| 245 |

+

# stochastic depth decay rule

|

| 246 |

+

dpr = [x.item() for x in torch.linspace(0, config.drop_path_rate, config.num_hidden_layers)]

|

| 247 |

+

self.layers = nn.ModuleList([

|

| 248 |

+

InternVisionEncoderLayer(config, dpr[idx]) for idx in range(config.num_hidden_layers)])

|

| 249 |

+

self.gradient_checkpointing = True

|

| 250 |

+

|

| 251 |

+

def forward(

|

| 252 |

+

self,

|

| 253 |

+

inputs_embeds,

|

| 254 |

+

output_hidden_states: Optional[bool] = None,

|

| 255 |

+

return_dict: Optional[bool] = None,

|

| 256 |

+

) -> Union[Tuple, BaseModelOutput]:

|

| 257 |

+

r"""

|

| 258 |

+

Args:

|

| 259 |

+

inputs_embeds (`torch.FloatTensor` of shape `(batch_size, sequence_length, hidden_size)`):

|

| 260 |

+

Embedded representation of the inputs. Should be float, not int tokens.

|

| 261 |

+

output_hidden_states (`bool`, *optional*):

|

| 262 |

+

Whether or not to return the hidden states of all layers. See `hidden_states` under returned tensors

|

| 263 |

+

for more detail.

|

| 264 |

+

return_dict (`bool`, *optional*):

|

| 265 |

+

Whether or not to return a [`~utils.ModelOutput`] instead of a plain tuple.

|

| 266 |

+

"""

|

| 267 |

+

output_hidden_states = (

|

| 268 |

+

output_hidden_states if output_hidden_states is not None else self.config.output_hidden_states

|

| 269 |

+

)

|

| 270 |

+

return_dict = return_dict if return_dict is not None else self.config.use_return_dict

|

| 271 |

+

|

| 272 |

+

encoder_states = () if output_hidden_states else None

|

| 273 |

+

hidden_states = inputs_embeds

|

| 274 |

+

|

| 275 |

+

for idx, encoder_layer in enumerate(self.layers):

|

| 276 |

+

if output_hidden_states:

|

| 277 |

+

encoder_states = encoder_states + (hidden_states,)

|

| 278 |

+

if self.gradient_checkpointing and self.training:

|

| 279 |

+

layer_outputs = torch.utils.checkpoint.checkpoint(

|

| 280 |

+

encoder_layer,

|

| 281 |

+

hidden_states)

|

| 282 |

+

else:

|

| 283 |

+

layer_outputs = encoder_layer(

|

| 284 |

+

hidden_states,

|

| 285 |

+

)

|

| 286 |

+

hidden_states = layer_outputs

|

| 287 |

+

|

| 288 |

+

if output_hidden_states:

|

| 289 |

+

encoder_states = encoder_states + (hidden_states,)

|

| 290 |

+

|

| 291 |

+

if not return_dict:

|

| 292 |

+

return tuple(v for v in [hidden_states, encoder_states] if v is not None)

|

| 293 |

+

return BaseModelOutput(

|

| 294 |

+

last_hidden_state=hidden_states, hidden_states=encoder_states

|

| 295 |

+

)

|

| 296 |

+

|

| 297 |

+

|

| 298 |

+

class InternVisionModel(PreTrainedModel):

|

| 299 |

+

main_input_name = 'pixel_values'

|

| 300 |

+

config_class = InternVisionConfig

|

| 301 |

+

_no_split_modules = ['InternVisionEncoderLayer']

|

| 302 |

+

|

| 303 |

+

def __init__(self, config: InternVisionConfig):

|

| 304 |

+

super().__init__(config)

|

| 305 |

+

self.config = config

|

| 306 |

+

|

| 307 |

+

self.embeddings = InternVisionEmbeddings(config)

|

| 308 |

+

self.encoder = InternVisionEncoder(config)

|

| 309 |

+

|

| 310 |

+

def resize_pos_embeddings(self, old_size, new_size, patch_size):

|

| 311 |

+

pos_emb = self.embeddings.position_embedding

|

| 312 |

+

_, num_positions, embed_dim = pos_emb.shape

|

| 313 |

+

cls_emb = pos_emb[:, :1, :]

|

| 314 |

+

pos_emb = pos_emb[:, 1:, :].reshape(1, old_size // patch_size, old_size // patch_size, -1).permute(0, 3, 1, 2)

|

| 315 |

+

pos_emb = F.interpolate(pos_emb.float(), size=new_size // patch_size, mode='bicubic', align_corners=False)

|

| 316 |

+

pos_emb = pos_emb.to(cls_emb.dtype).reshape(1, embed_dim, -1).permute(0, 2, 1)

|

| 317 |

+

pos_emb = torch.cat([cls_emb, pos_emb], dim=1)

|

| 318 |

+

self.embeddings.position_embedding = nn.Parameter(pos_emb)

|

| 319 |

+

self.embeddings.image_size = new_size

|

| 320 |

+

logger.info('Resized position embeddings from {} to {}'.format(old_size, new_size))

|

| 321 |

+

|

| 322 |

+

def get_input_embeddings(self):

|

| 323 |

+

return self.embeddings

|

| 324 |

+

|

| 325 |

+

def forward(

|

| 326 |

+

self,

|

| 327 |

+

pixel_values: Optional[torch.FloatTensor] = None,

|

| 328 |

+

output_hidden_states: Optional[bool] = None,

|

| 329 |

+

return_dict: Optional[bool] = None,

|

| 330 |

+

pixel_embeds: Optional[torch.FloatTensor] = None,

|

| 331 |

+

) -> Union[Tuple, BaseModelOutputWithPooling]:

|

| 332 |

+

output_hidden_states = (

|

| 333 |

+

output_hidden_states if output_hidden_states is not None else self.config.output_hidden_states

|

| 334 |

+

)

|

| 335 |

+

return_dict = return_dict if return_dict is not None else self.config.use_return_dict

|

| 336 |

+

|

| 337 |

+

if pixel_values is None and pixel_embeds is None:

|

| 338 |

+

raise ValueError('You have to specify pixel_values or pixel_embeds')

|

| 339 |

+

|

| 340 |

+

if pixel_embeds is not None:

|

| 341 |

+

hidden_states = pixel_embeds

|

| 342 |

+

else:

|

| 343 |

+

if len(pixel_values.shape) == 4:

|

| 344 |

+

hidden_states = self.embeddings(pixel_values)

|

| 345 |

+

else:

|

| 346 |

+

raise ValueError(f'wrong pixel_values size: {pixel_values.shape}')

|

| 347 |

+

encoder_outputs = self.encoder(

|

| 348 |

+

inputs_embeds=hidden_states,

|

| 349 |

+

output_hidden_states=output_hidden_states,

|

| 350 |

+

return_dict=return_dict,

|

| 351 |

+

)

|

| 352 |

+

last_hidden_state = encoder_outputs.last_hidden_state

|

| 353 |

+

pooled_output = last_hidden_state[:, 0, :]

|

| 354 |

+

|

| 355 |

+

if not return_dict:

|

| 356 |

+

return (last_hidden_state, pooled_output) + encoder_outputs[1:]

|

| 357 |

+

|

| 358 |

+

return BaseModelOutputWithPooling(

|

| 359 |

+

last_hidden_state=last_hidden_state,

|

| 360 |

+

pooler_output=pooled_output,

|

| 361 |

+

hidden_states=encoder_outputs.hidden_states,

|

| 362 |

+

attentions=encoder_outputs.attentions,

|

| 363 |

+

)

|

preprocessor_config.json

ADDED

|

@@ -0,0 +1,19 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"crop_size": 448,

|

| 3 |

+

"do_center_crop": true,

|

| 4 |

+

"do_normalize": true,

|

| 5 |

+

"do_resize": true,

|

| 6 |

+

"feature_extractor_type": "CLIPFeatureExtractor",

|

| 7 |

+

"image_mean": [

|

| 8 |

+

0.485,

|

| 9 |

+

0.456,

|

| 10 |

+

0.406

|

| 11 |

+

],

|

| 12 |

+

"image_std": [

|

| 13 |

+

0.229,

|

| 14 |

+

0.224,

|

| 15 |

+

0.225

|

| 16 |

+

],

|

| 17 |

+

"resample": 3,

|

| 18 |

+

"size": 448

|

| 19 |

+

}

|