huangyi

committed on

Commit

·

316ed90

1

Parent(s):

9979829

init: Add documents 1st

Signed-off-by: huangyi <[email protected]>

- README.md +245 -0

- README_zh.md +242 -0

- assets/imgs/inf_spd_en.png +0 -0

- assets/imgs/inf_spd_zh.png +0 -0

- assets/imgs/orion_star.PNG +0 -0

- assets/imgs/wechat_group.jpg +0 -0

README.md

ADDED

|

@@ -0,0 +1,245 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

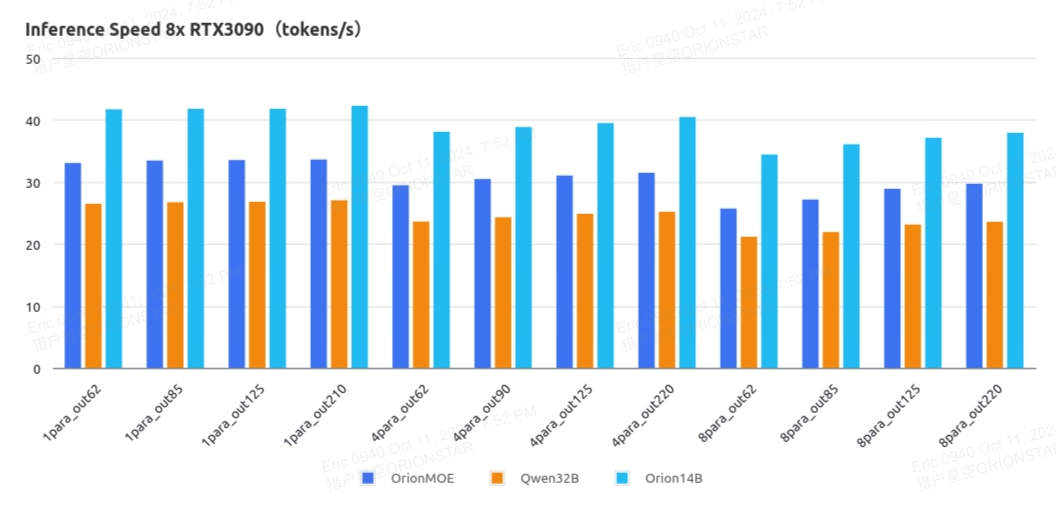

|

|

|

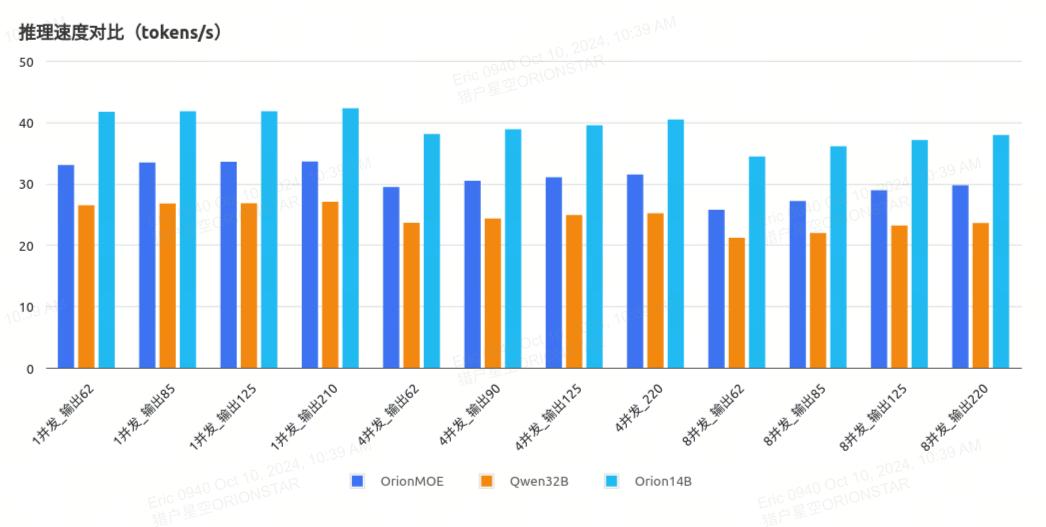

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

language:

|

| 3 |

+

- en

|

| 4 |

+

- zh

|

| 5 |

+

- ja

|

| 6 |

+

- ko

|

| 7 |

+

metrics:

|

| 8 |

+

- accuracy

|

| 9 |

+

pipeline_tag: text-generation

|

| 10 |

+

tags:

|

| 11 |

+

- code

|

| 12 |

+

- model

|

| 13 |

+

- llm

|

| 14 |

+

---

|

| 15 |

+

<!-- markdownlint-disable first-line-h1 -->

|

| 16 |

+

<!-- markdownlint-disable html -->

|

| 17 |

+

<div align="center">

|

| 18 |

+

<img src="./assets/imgs/orion_star.PNG" alt="logo" width="50%" />

|

| 19 |

+

</div>

|

| 20 |

+

|

| 21 |

+

<div align="center">

|

| 22 |

+

<h1>

|

| 23 |

+

Orion-MOE8x7B

|

| 24 |

+

</h1>

|

| 25 |

+

</div>

|

| 26 |

+

|

| 27 |

+

<div align="center">

|

| 28 |

+

|

| 29 |

+

<div align="center">

|

| 30 |

+

<b>🌐English</b> | <a href="https://huggingface.co/OrionStarAI/Orion-MOE8x7B-Base/blob/main/README_zh.md" target="_blank">🇨🇳中文</a>

|

| 31 |

+

</div>

|

| 32 |

+

|

| 33 |

+

|

| 34 |

+

</div>

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

# Table of Contents

|

| 39 |

+

|

| 40 |

+

- [📖 Model Introduction](#model-introduction)

|

| 41 |

+

- [🔗 Model Download](#model-download)

|

| 42 |

+

- [🔖 Model Benchmark](#model-benchmark)

|

| 43 |

+

- [📜 Declarations & License](#declarations-license)

|

| 44 |

+

- [🥇 Company Introduction](#company-introduction)

|

| 45 |

+

|

| 46 |

+

<a name="model-introduction"></a><br>

|

| 47 |

+

# 1. Model Introduction

|

| 48 |

+

|

| 49 |

+

- The Orion-MOE8x7B-Base Large Language Model (LLM) is a pretrained generative sparse Mixture of Experts model, trained from scratch by OrionStarAI. The base model is trained on a multilingual corpus that includes Chinese, English, Japanese, Korean, and other languages, and it performs strongly in these languages.

|

| 50 |

+

|

| 51 |

+

- The Orion-MOE8x7B series models exhibit the following features:

|

| 52 |

+

- The model demonstrates excellent performance in comprehensive evaluations compared to other base models of the same parameter scale.

|

| 53 |

+

- It has strong multilingual capabilities, leading significantly on Japanese and Korean test sets and also scoring higher across the Arabic, German, French, and Spanish test sets.

|

| 54 |

+

|

| 55 |

+

|

| 56 |

+

<a name="model-download"></a><br>

|

| 57 |

+

# 2. Model Download

|

| 58 |

+

|

| 59 |

+

Model release and download links are provided in the table below:

|

| 60 |

+

|

| 61 |

+

| Model Name | HuggingFace Download Links | ModelScope Download Links |

|

| 62 |

+

|----------------------|-----------------------------------------------------------------------------|-------------------------------------------------------------------------------------------|

|

| 63 |

+

| ⚾Orion-MOE8x7B-Base | [Orion-MOE8x7B-Base](https://huggingface.co/OrionStarAI/Orion-MOE8x7B-Base) | [Orion-MOE8x7B-Base](https://modelscope.cn/models/OrionStarAI/Orion-MOE8x7B-Base/summary) |

|

| 64 |

+

|

| 65 |

+

|

| 66 |

+

<a name="model-benchmark"></a><br>

|

| 67 |

+

# 3. Model Benchmarks

|

| 68 |

+

|

| 69 |

+

## 3.1. Base Model Orion-MOE8x7B-Base Benchmarks

|

| 70 |

+

### 3.1.1. LLM evaluation results on examination and professional knowledge

|

| 71 |

+

| Model | ceval | cmmlu | mmlu | mmlu_pro | ARC_c | hellaswag |

|

| 72 |

+

|-------|---------|--------|-------|----------|-------|-----------|

|

| 73 |

+

|Mixtral 8x7B | 54.0861 | 53.21 | 70.4000 | 38.5000 | 85.0847 | 81.9458 |

|

| 74 |

+

|Qwen1.5-32b | 83.5000 | 82.3000 | 73.4000 | 45.2500 | 90.1695 | 81.9757 |

|

| 75 |

+

|Qwen2.5-32b | 87.7414 | 89.0088 | 82.9000 | 58.0100 | 94.2373 | 82.5134 |

|

| 76 |

+

|Orion 14B | 72.8000 | 70.5700 | 69.9400 | 33.9500 | 79.6600 | 78.5300 |

|

| 77 |

+

|<span style="color: red;">Orion 8x7B | <span style="color: red;">89.7400 | <span style="color: red;">89.1555 | <span style="color: red;">85.9000 | <span style="color: red;">58.3100 | <span style="color: red;">91.8644 | <span style="color: red;">89.19 |

|

| 78 |

+

|**Model**|**lambada**|**bbh**|**musr**|**piqa**|**commonsense_qa**|**IFEval**|

|

| 79 |

+

|Mixtral 8x7B | 76.7902 | 50.87 | 43.21 | 83.41 | 69.62 | 24.15 |

|

| 80 |

+

|Qwen1.5-32b | 73.7434 | 57.2800 | 42.6500 | 82.1500 | 74.6900 | 32.9700 |

|

| 81 |

+

|Qwen2.5-32b | 75.3736 | 67.6900 | 49.7800 | 80.0500 | 72.9700 | 41.5900 |

|

| 82 |

+

|Orion 14B | 78.8300 | 50.3500 | 43.6100 | 79.5400 | 66.9100 | 29.0800 |

|

| 83 |

+

|<span style="color: red;">Orion 8x7B |<span style="color: red;">79.7399|<span style="color: red;">55.82 |<span style="color: red;">49.93 |<span style="color: red;">87.32 |<span style="color: red;">73.05 |<span style="color: red;">30.06 |

|

| 84 |

+

|**Model**|**GPQA**|**human-eval**|**MBPP**|**math_lv5**|**gsm8k**|**math**|

|

| 85 |

+

|Mixtral 8x7B | 30.9000 | 33.5366 | 60.7000 | 9.0000 | 47.5000 | 28.4000 |

|

| 86 |

+

|Qwen1.5-32b | 33.4900 | 35.9756 | 49.4000 | 25.0000 | 77.4000 | 36.1000 |

|

| 87 |

+

|Qwen2.5-32b | 49.5000 | 46.9512 | 71.0000 | 31.7200 | 80.3630 | 48.8800 |

|

| 88 |

+

|Orion 14B | 28.5300 | 20.1200 | 30.0000 | 2.5400 | 52.0100 | 7.8400 |

|

| 89 |

+

|<span style="color: red;">Orion 8x7B |<span style="color: red;">52.1700 |<span style="color: red;">44.5122 |<span style="color: red;">43.4 |<span style="color: red;">5.07 |<span style="color: red;">59.8200 |<span style="color: red;">23.6800 |

|

| 90 |

+

|

| 91 |

+

### 3.1.2. Comparison of LLM performance on Japanese test sets

|

| 92 |

+

| Model | jsquad | jcommonsenseqa | jnli | marc_ja | jaqket_v2 | paws_ja | avg |

|

| 93 |

+

|-------|---------|--------|-------|----------|-------|-----------|-----|

|

| 94 |

+

|Mixtral-8x7B | 0.8900 | 0.7873 | 0.3213 | 0.9544 | 0.7886 | 0.4450 | 0.6978 |

|

| 95 |

+

|Qwen1.5-32B | 0.8986 | 0.8454 | 0.5099 | 0.9708 | 0.8214 | 0.4380 | 0.7474 |

|

| 96 |

+

|Qwen2.5-32B | 0.8909 | 0.9383 | 0.7214 | 0.9786 | 0.8927 | 0.4215 | 0.8073 |

|

| 97 |

+

|Orion-14B-Base | 0.7422 | 0.8820 | 0.7285 | 0.9406 | 0.6620 | 0.4990 | 0.7424 |

|

| 98 |

+

|<span style="color: red;">Orion 8x7B |<span style="color: red;">0.9177 |<span style="color: red;">0.9043 |<span style="color: red;">0.9046 |<span style="color: red;">0.9640 |<span style="color: red;">0.8119 |<span style="color: red;">0.4735 |<span style="color: red;">0.8293 |
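The `avg` column appears to be the unweighted mean of the six task scores in each row. This is inferred from the numbers rather than stated in the README, so treat the following as a minimal sketch under that assumption. The `scores` dictionary and the `row_average` helper are illustrative names, not part of any Orion tooling.

```python
from statistics import mean

# Task scores copied from the table above, in column order:
# jsquad, jcommonsenseqa, jnli, marc_ja, jaqket_v2, paws_ja.
# The dictionary layout is illustrative only.
scores = {
    "Orion-14B-Base": [0.7422, 0.8820, 0.7285, 0.9406, 0.6620, 0.4990],
    "Orion 8x7B": [0.9177, 0.9043, 0.9046, 0.9640, 0.8119, 0.4735],
}


def row_average(values):
    """Return the unweighted mean of the task scores, rounded to four decimals."""
    return round(mean(values), 4)


for name, values in scores.items():
    print(name, row_average(values))
```

Because the published per-task scores are already rounded, a recomputed mean can differ from the table in the last digit for some rows.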

|

| 99 |

+

|

| 100 |

+

### 3.1.3. Comparison of LLM performance on Korean test sets

|

| 101 |

+

|Model | haerae | kobest boolq | kobest copa | kobest hellaswag | kobest sentineg | kobest wic | paws_ko | avg |

|

| 102 |

+

|--------|----|----|----|----|----|----|----|----|

|

| 103 |

+

|Mixtral-8x7B | 53.16 | 78.56 | 66.2 | 56.6 | 77.08 | 49.37 | 44.05 | 60.71714286 |

|

| 104 |

+

|Qwen1.5-32B | 46.38 | 76.28 | 60.4 | 53 | 78.34 | 52.14 | 43.4 | 58.56285714 |

|

| 105 |

+

|Qwen2.5-32B | 70.67 | 80.27 | 76.7 | 61.2 | 96.47 | 77.22 | 37.05 | 71.36857143 |

|

| 106 |

+

|Orion-14B-Base | 69.66 | 80.63 | 77.1 | 58.2 | 92.44 | 51.19 | 44.55 | 67.68142857 |

|

| 107 |

+

|<span style="color: red;">Orion 8x7B |<span style="color: red;">65.17 |<span style="color: red;">85.4 |<span style="color: red;">80.4 |<span style="color: red;">56 |<span style="color: red;">96.98 |<span style="color: red;">73.57 |<span style="color: red;">46.35 |<span style="color: red;">71.98142857 |

|

| 108 |

+

|

| 109 |

+

### 3.1.4. Comparison of LLM performance on Arabic, German, French, and Spanish test sets

|

| 110 |

+

| Lang | ar | | de | | fr | | es | |

|

| 111 |

+

|----|----|----|----|----|----|----|----|----|

|

| 112 |

+

|**model**|**hellaswag**|**arc**|**hellaswag**|**arc**|**hellaswag**|**arc**|**hellaswag**|**arc**|

|

| 113 |

+

|Mixtral-8x7B | 47.93 | 36.27 | 69.17 | 52.35 | 73.9 | 55.86 | 74.25 | 54.79 |

|

| 114 |

+

|Qwen1.5-32B | 50.07 | 39.95 | 63.77 | 50.81 | 68.86 | 55.95 | 70.5 | 55.13 |

|

| 115 |

+

|Qwen2.5-32B | 59.76 | 52.87 | 69.82 | 61.76 | 74.15 | 62.7 | 75.04 | 65.3 |

|

| 116 |

+

|Orion-14B-Base | 42.26 | 33.88 | 54.65 | 38.92 | 60.21 | 42.34 | 62 | 44.62 |

|

| 117 |

+

|<span style="color: red;">Orion 8x7B |<span style="color: red;">69.39 |<span style="color: red;">54.32 |<span style="color: red;">80.6 |<span style="color: red;">63.47 |<span style="color: red;">85.56 |<span style="color: red;">68.78 |<span style="color: red;">87.41 |<span style="color: red;">70.09 |

|

| 118 |

+

|

| 119 |

+

### 3.1.5. Leakage Detection Benchmark

|

| 120 |

+

The proportion of leaked data (from various evaluation benchmarks) in the pretraining corpus; a higher proportion indicates more leakage.

|

| 121 |

+

- Code: https://github.com/nishiwen1214/Benchmark-leakage-detection

|

| 122 |

+

- Paper: https://web3.arxiv.org/pdf/2409.01790

|

| 123 |

+

- Blog: https://mp.weixin.qq.com/s/BtcJmDEUyzAYG-fqCal2lA

|

| 124 |

+

- English Test: mmlu

|

| 125 |

+

- Chinese Test: ceval, cmmlu

|

| 126 |

+

|

| 127 |

+

|Threshold 0.2 | qwen2.5 32b | qwen1.5 32b | orion 8x7b | orion 14b | mixtral 8x7b |

|

| 128 |

+

|----|----|----|----|----|----|

|

| 129 |

+

|mmlu | 0.3 | 0.27 |<span style="color: red;">0.22 | 0.28 | 0.25 |

|

| 130 |

+

|ceval | 0.39 | 0.38 |<span style="color: red;">0.27 | 0.26 | 0.26 |

|

| 131 |

+

|cmmlu | 0.38 | 0.39 |<span style="color: red;">0.23 | 0.27 | 0.22 |

|

| 132 |

+

|

| 133 |

+

### 3.1.6. Inference speed

|

| 134 |

+

Measured on 8x NVIDIA RTX 3090 GPUs, in tokens per second.

|

| 135 |

+

|OrionLLM_V2.4.6.1 | 1para_out62 | 1para_out85 | 1para_out125 | 1para_out210 |

|

| 136 |

+

|----|----|----|----|----|

|

| 137 |

+

|OrionMOE | 33.03544296 | 33.43113606 | 33.53014102 | 33.58693529 |

|

| 138 |

+

|Qwen32B | 26.46267188 | 26.72846906 | 26.80413838 | 27.03123611 |

|

| 139 |

+

|Orion14B | 41.69121312 | 41.77423491 | 41.76050902 | 42.26096669 |

|

| 140 |

+

|

| 141 |

+

|OrionLLM_V2.4.6.1 | 4para_out62 | 4para_out90 | 4para_out125 | 4para_out220 |

|

| 142 |

+

|----|----|----|----|----|

|

| 143 |

+

|OrionMOE | 29.45015743 | 30.4472947 | 31.03748516 | 31.45783599 |

|

| 144 |

+

|Qwen32B | 23.60912215 | 24.30431956 | 24.86132023 | 25.16827535 |

|

| 145 |

+

|Orion14B | 38.08240373 | 38.8572788 | 39.50040645 | 40.44875947 |

|

| 146 |

+

|

| 147 |

+

|OrionLLM_V2.4.6.1 | 8para_out62 | 8para_out85 | 8para_out125 | 8para_out220 |

|

| 148 |

+

|----|----|----|----|----|

|

| 149 |

+

|OrionMOE | 25.71006327 | 27.13446743 | 28.89463226 | 29.70440167 |

|

| 150 |

+

|Qwen32B | 21.15920951 | 21.92001035 | 23.13867947 | 23.5649106 |

|

| 151 |

+

|Orion14B | 34.4151923 | 36.05635893 | 37.0874908 | 37.91705944 |

|

| 152 |

+

|

| 153 |

+

<div align="center">

|

| 154 |

+

<img src="./assets/imgs/inf_spd_en.png" alt="inf_speed" width="100%" />

|

| 155 |

+

</div>
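To compare the throughput numbers directly, the ratio between two models can be computed per column. The sketch below uses the concurrency-1 rows for OrionMOE and Qwen32B. It assumes that the `out62` style suffix in the column names denotes the output length, which the README does not spell out, and `relative_throughput` is a hypothetical helper name.

```python
# Tokens per second at concurrency 1, copied from the first table above.
# Keys are the numbers in the column names (assumed to be output lengths).
orion_moe = {62: 33.03544296, 85: 33.43113606, 125: 33.53014102, 210: 33.58693529}
qwen_32b = {62: 26.46267188, 85: 26.72846906, 125: 26.80413838, 210: 27.03123611}


def relative_throughput(a, b):
    """Return the ratio a/b for every column present in both mappings."""
    return {k: a[k] / b[k] for k in a if k in b}


ratios = relative_throughput(orion_moe, qwen_32b)
for length, ratio in sorted(ratios.items()):
    print(f"out{length}: {ratio:.2f}x")
```

The same helper works for the concurrency-4 and concurrency-8 tables once their rows are entered in the same way.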

|

| 156 |

+

|

| 157 |

+

|

| 158 |

+

|

| 159 |

+

|

| 160 |

+

<a name="model-inference"></a><br>

|

| 161 |

+

# 4. Model Inference

|

| 162 |

+

|

| 163 |

+

Model weights, source code, and configuration needed for inference are published on Hugging Face, and the download link

|

| 164 |

+

is available in the table at the beginning of this document. We demonstrate various inference methods here, and the

|

| 165 |

+

program will automatically download the necessary resources from Hugging Face.

|

| 166 |

+

|

| 167 |

+

## 4.1. Python Code

|

| 168 |

+

|

| 169 |

+

```python

|

| 170 |

+

import torch

|

| 171 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 172 |

+

from transformers.generation.utils import GenerationConfig

|

| 173 |

+

|

| 174 |

+

tokenizer = AutoTokenizer.from_pretrained("OrionStarAI/Orion-MOE8x7B-Base", use_fast=False, trust_remote_code=True)

|

| 175 |

+

model = AutoModelForCausalLM.from_pretrained("OrionStarAI/Orion-MOE8x7B-Base", device_map="auto",

|

| 176 |

+

torch_dtype=torch.bfloat16, trust_remote_code=True)

|

| 177 |

+

|

| 178 |

+

model.generation_config = GenerationConfig.from_pretrained("OrionStarAI/Orion-MOE8x7B-Base")

|

| 179 |

+

messages = [{"role": "user", "content": "Hello, what is your name? "}]

|

| 180 |

+

response = model.chat(tokenizer, messages, streaming=False)

|

| 181 |

+

print(response)

|

| 182 |

+

|

| 183 |

+

```

|

| 184 |

+

|

| 185 |

+

In the above Python code, the model is loaded with `device_map='auto'` to utilize all available GPUs. To specify the

|

| 186 |

+

device, you can use something like `export CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7` (using GPUs 0,1,2,3,4,5,6,7).
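The same restriction can be applied from inside a Python script instead of the shell. The sketch below is one way to do it, assuming the variable is set before CUDA is initialized in the process (in practice, before importing `torch` or `transformers`). The helper name `select_gpus` is hypothetical.

```python
import os


def select_gpus(gpu_ids):
    """Restrict CUDA to the given device indices via CUDA_VISIBLE_DEVICES."""
    value = ",".join(str(i) for i in gpu_ids)
    os.environ["CUDA_VISIBLE_DEVICES"] = value
    return value


# Call this before importing torch or transformers, so that CUDA has not
# been initialized yet when the variable is set.
select_gpus([0, 1, 2, 3])
```

With `device_map="auto"`, the model would then be spread only across the GPUs listed here.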

|

| 187 |

+

|

| 188 |

+

## 4.2. Direct Script Inference

|

| 189 |

+

|

| 190 |

+

```shell

|

| 191 |

+

|

| 192 |

+

# base model

|

| 193 |

+

CUDA_VISIBLE_DEVICES=0 python demo/text_generation_base.py --model OrionStarAI/Orion-MOE8x7B-Base --tokenizer OrionStarAI/Orion-MOE8x7B-Base --prompt hello

|

| 194 |

+

|

| 195 |

+

# chat model

|

| 196 |

+

CUDA_VISIBLE_DEVICES=0 python demo/text_generation.py --model OrionStarAI/Orion-MOE8x7B-Chat --tokenizer OrionStarAI/Orion-MOE8x7B-Chat --prompt hi

|

| 197 |

+

|

| 198 |

+

```

|

| 199 |

+

|

| 200 |

+

<a name="declarations-license"></a><br>

|

| 201 |

+

# 5. Declarations, License

|

| 202 |

+

|

| 203 |

+

## 5.1. Declarations

|

| 204 |

+

|

| 205 |

+

We strongly urge all users not to use the Orion-MOE8x7B model for any activities that may harm national or social security or violate the law.

|

| 206 |

+

Additionally, we request users not to use the Orion-MOE8x7B model for internet services without proper security review and filing.

|

| 207 |

+

We hope all users abide by this principle to ensure that technological development takes place in a regulated and legal environment.

|

| 208 |

+

We have done our best to ensure the compliance of the data used in the model training process. However, despite our

|

| 209 |

+

significant efforts, unforeseen issues may still arise due to the complexity of the model and data. Therefore, if any

|

| 210 |

+

problems arise due to the use of the Orion-MOE8x7B open-source model, including but not limited to data security

|

| 211 |

+

issues, public opinion risks, or any risks and issues arising from the model being misled, abused, disseminated, or

|

| 212 |

+

improperly utilized, we will not assume any responsibility.

|

| 213 |

+

|

| 214 |

+

## 5.2. License

|

| 215 |

+

|

| 216 |

+

Community use of the Orion-MOE8x7B series models

|

| 217 |

+

- For code, please comply with [Apache License Version 2.0](./LICENSE)<br>

|

| 218 |

+

- For model, please comply with [【Orion-MOE8x7B Series】 Models Community License Agreement](./ModelsCommunityLicenseAgreement)

|

| 219 |

+

|

| 220 |

+

|

| 221 |

+

<a name="company-introduction"></a><br>

|

| 222 |

+

# 6. Company Introduction

|

| 223 |

+

|

| 224 |

+

OrionStar is a leading global service robot solutions company, founded in September 2016. OrionStar is dedicated to

|

| 225 |

+

using artificial intelligence technology to create the next generation of revolutionary robots, allowing people to break

|

| 226 |

+

free from repetitive physical labor and making human work and life more intelligent and enjoyable. Through technology,

|

| 227 |

+

OrionStar aims to make society and the world a better place.

|

| 228 |

+

|

| 229 |

+

OrionStar possesses fully self-developed end-to-end artificial intelligence technologies, such as voice interaction and

|

| 230 |

+

visual navigation. It integrates product development capabilities and technological application capabilities. Based on

|

| 231 |

+

the Orion robotic arm platform, it has launched products such as OrionStar AI Robot Greeting, AI Robot Greeting Mini,

|

| 232 |

+

Lucki, Coffee Master, and established the open platform OrionOS for Orion robots. Following the philosophy of "Born for

|

| 233 |

+

Truly Useful Robots", OrionStar empowers more people through AI technology.

|

| 234 |

+

|

| 235 |

+

**The core strength of OrionStar lies in its end-to-end AI application capabilities,** including big data preprocessing, large model pretraining, fine-tuning, prompt engineering, and agents. With comprehensive end-to-end model training capabilities, including systematic data processing workflows and parallel model training across hundreds of GPUs, OrionStar has successfully applied its technology in various industry scenarios such as government affairs, cloud services, international e-commerce, and fast-moving consumer goods.

|

| 236 |

+

|

| 237 |

+

Companies with demands for deploying large-scale model applications are welcome to contact us.<br>

|

| 238 |

+

**Enquiry Hotline: 400-898-7779**<br>

|

| 239 |

+

**E-mail: [email protected]**<br>

|

| 240 |

+

**Discord Link: https://discord.gg/zumjDWgdAs**

|

| 241 |

+

|

| 242 |

+

|

| 243 |

+

<div align="center">

|

| 244 |

+

<img src="./assets/imgs/wechat_group.jpg" alt="wechat" width="40%" />

|

| 245 |

+

</div>

|

README_zh.md

ADDED

|

@@ -0,0 +1,242 @@


|

| 1 |

+

|

| 2 |

+

<!-- markdownlint-disable first-line-h1 -->

|

| 3 |

+

<!-- markdownlint-disable html -->

|

| 4 |

+

<div align="center">

|

| 5 |

+

<img src="./assets/imgs/orion_star.PNG" alt="logo" width="50%" />

|

| 6 |

+

</div>

|

| 7 |

+

|

| 8 |

+

<div align="center">

|

| 9 |

+

<h1>

|

| 10 |

+

Orion-MOE8x7B

|

| 11 |

+

</h1>

|

| 12 |

+

</div>

|

| 13 |

+

|

| 14 |

+

<div align="center">

|

| 15 |

+

|

| 16 |

+

<div align="center">

|

| 17 |

+

<b>🇨🇳中文</b> | <a href="./README.md">🌐English</a>

|

| 18 |

+

</div>

|

| 19 |

+

|

| 20 |

+

<h4 align="center">

|

| 21 |

+

<p>

|

| 22 |

+

🤗 <a href="https://huggingface.co/OrionStarAI" target="_blank">HuggingFace Mainpage</a> | 🤖 <a href="https://modelscope.cn/organization/OrionStarAI" target="_blank">ModelScope Mainpage</a><br>

|

| 23 |

+

<p>

|

| 24 |

+

</h4>

|

| 25 |

+

|

| 26 |

+

</div>

|

| 27 |

+

|

| 28 |

+

|

| 29 |

+

# Table of Contents

|

| 30 |

+

|

| 31 |

+

|

| 32 |

+

- [📖 Model Introduction](#zh_model-introduction)

|

| 33 |

+

- [🔗 Model Download](#zh_model-download)

|

| 34 |

+

- [🔖 Model Benchmark](#zh_model-benchmark)

|

| 35 |

+

- [📜 Declarations & License](#zh_declarations-license)

|

| 36 |

+

- [🥇 Company Introduction](#zh_company-introduction)

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

<a name="zh_model-introduction"></a><br>

|

| 40 |

+

# 1. Model Introduction

|

| 41 |

+

|

| 42 |

+

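- Orion-MOE8x7B-Base is a generative sparse Mixture of Experts large language model with 8x7 billion parameters. Its training data covers Chinese, English, Japanese, Korean, and other languages, and it performs strongly across a range of multilingual tasks. On mainstream public benchmarks, Orion-MOE8x7B-Base performs well, with several metrics significantly ahead of other models of the same parameter scale.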

|

| 43 |

+

|

| 44 |

+

- The Orion-MOE8x7B-Base model has the following features:

|

| 45 |

+

- It performs well in comprehensive evaluations compared with other base models of the same parameter scale.

|

| 46 |

+

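- It has strong multilingual capabilities, leading significantly on Japanese and Korean test sets and also leading across the Arabic, German, French, and Spanish test sets.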

|

| 47 |

+

|

| 48 |

+

|

| 49 |

+

|

| 50 |

+

|

| 51 |

+

|

| 52 |

+

<a name="zh_model-download"></a><br>

|

| 53 |

+

# 2. Model Download

|

| 54 |

+

|

| 55 |

+

Released models and download links are listed in the table below:

|

| 56 |

+

|

| 57 |

+

| Model Name | HuggingFace Download Link | ModelScope Download Link |

|

| 58 |

+

|---------------------|-----------------------------------------------------------------------------------|------------------------------------------------------------------------------------------------|

|

| 59 |

+

| ⚾ Base Model | [Orion-MOE8x7B-Base](https://huggingface.co/OrionStarAI/Orion-MOE8x7B-Base) | [Orion-MOE8x7B-Base](https://modelscope.cn/models/OrionStarAI/Orion-MOE8x7B-Base/summary) |

|

| 60 |

+

|

| 61 |

+

|

| 62 |

+

|

| 63 |

+

<a name="zh_model-benchmark"></a><br>

|

| 64 |

+

# 3. Model Benchmarks

|

| 65 |

+

|

| 66 |

+

## 3.1. Base Model Orion-MOE8x7B-Base Benchmarks

|

| 67 |

+

|

| 68 |

+

### 3.1.1. Base model benchmark comparison

|

| 69 |

+

| Model | ceval | cmmlu | mmlu | mmlu_pro | ARC_c | hellaswag |

|

| 70 |

+

|-------|---------|--------|-------|----------|-------|-----------|

|

| 71 |

+

|Mixtral 8x7B | 54.0861 | 53.21 | 70.4000 | 38.5000 | 85.0847 | 81.9458 |

|

| 72 |

+

|Qwen1.5-32b | 83.5000 | 82.3000 | 73.4000 | 45.2500 | 90.1695 | 81.9757 |

|

| 73 |

+

|Qwen2.5-32b | 87.7414 | 89.0088 | 82.9000 | 58.0100 | 94.2373 | 82.5134 |

|

| 74 |

+

|Orion 14B | 72.8000 | 70.5700 | 69.9400 | 33.9500 | 79.6600 | 78.5300 |

|

| 75 |

+

|<span style="color: red;">Orion 8x7B | <span style="color: red;">89.7400 | <span style="color: red;">89.1555 | <span style="color: red;">85.9000 | <span style="color: red;">58.3100 | <span style="color: red;">91.8644 | <span style="color: red;">89.19 |

|

| 76 |

+

|**Model**|**lambada**|**bbh**|**musr**|**piqa**|**commonsense_qa**|**IFEval**|

|

| 77 |

+

|Mixtral 8x7B | 76.7902 | 50.87 | 43.21 | 83.41 | 69.62 | 24.15 |

|

| 78 |

+

|Qwen1.5-32b | 73.7434 | 57.2800 | 42.6500 | 82.1500 | 74.6900 | 32.9700 |

|

| 79 |

+

|Qwen2.5-32b | 75.3736 | 67.6900 | 49.7800 | 80.0500 | 72.9700 | 41.5900 |

|

| 80 |

+

|Orion 14B | 78.8300 | 50.3500 | 43.6100 | 79.5400 | 66.9100 | 29.0800 |

|

| 81 |

+

|<span style="color: red;">Orion 8x7B |<span style="color: red;">79.7399|<span style="color: red;">55.82 |<span style="color: red;">49.93 |<span style="color: red;">87.32 |<span style="color: red;">73.05 |<span style="color: red;">30.06 |

|

| 82 |

+

|**Model**|**GPQA**|**human-eval**|**MBPP**|**math_lv5**|**gsm8k**|**math**|

|

| 83 |

+

|Mixtral 8x7B | 30.9000 | 33.5366 | 60.7000 | 9.0000 | 47.5000 | 28.4000 |

|

| 84 |

+

|Qwen1.5-32b | 33.4900 | 35.9756 | 49.4000 | 25.0000 | 77.4000 | 36.1000 |

|

| 85 |

+

|Qwen2.5-32b | 49.5000 | 46.9512 | 71.0000 | 31.7200 | 80.3630 | 48.8800 |

|

| 86 |

+

|Orion 14B | 28.5300 | 20.1200 | 30.0000 | 2.5400 | 52.0100 | 7.8400 |

|

| 87 |

+

|<span style="color: red;">Orion 8x7B |<span style="color: red;">52.1700 |<span style="color: red;">44.5122 |<span style="color: red;">43.4 |<span style="color: red;">5.07 |<span style="color: red;">59.8200 |<span style="color: red;">23.6800 |

|

| 88 |

+

|

| 89 |

+

|

| 90 |

+

### 3.1.2. Other languages: Japanese

|

| 91 |

+

| Model | jsquad | jcommonsenseqa | jnli | marc_ja | jaqket_v2 | paws_ja | avg |

|

| 92 |

+

|-------|---------|--------|-------|----------|-------|-----------|-----|

|

| 93 |

+

|Mixtral-8x7B | 0.8900 | 0.7873 | 0.3213 | 0.9544 | 0.7886 | 0.4450 | 0.6978 |

|

| 94 |

+

|Qwen1.5-32B | 0.8986 | 0.8454 | 0.5099 | 0.9708 | 0.8214 | 0.4380 | 0.7474 |

|

| 95 |

+

|Qwen2.5-32B | 0.8909 | 0.9383 | 0.7214 | 0.9786 | 0.8927 | 0.4215 | 0.8073 |

|

| 96 |

+

|Orion-14B-Base | 0.7422 | 0.8820 | 0.7285 | 0.9406 | 0.6620 | 0.4990 | 0.7424 |

|

| 97 |

+

|<span style="color: red;">Orion 8x7B |<span style="color: red;">0.9177 |<span style="color: red;">0.9043 |<span style="color: red;">0.9046 |<span style="color: red;">0.9640 |<span style="color: red;">0.8119 |<span style="color: red;">0.4735 |<span style="color: red;">0.8293 |

|

| 98 |

+

|

| 99 |

+

|

| 100 |

+

### 3.1.3. Other languages: Korean

|

| 101 |

+

|Model | haerae | kobest boolq | kobest copa | kobest hellaswag | kobest sentineg | kobest wic | paws_ko | avg |

|

| 102 |

+

|--------|----|----|----|----|----|----|----|----|

|

| 103 |

+

|Mixtral-8x7B | 53.16 | 78.56 | 66.2 | 56.6 | 77.08 | 49.37 | 44.05 | 60.71714286 |

|

| 104 |

+

|Qwen1.5-32B | 46.38 | 76.28 | 60.4 | 53 | 78.34 | 52.14 | 43.4 | 58.56285714 |

|

| 105 |

+

|Qwen2.5-32B | 70.67 | 80.27 | 76.7 | 61.2 | 96.47 | 77.22 | 37.05 | 71.36857143 |

|

| 106 |

+

|Orion-14B-Base | 69.66 | 80.63 | 77.1 | 58.2 | 92.44 | 51.19 | 44.55 | 67.68142857 |

|

| 107 |

+

|<span style="color: red;">Orion 8x7B |<span style="color: red;">65.17 |<span style="color: red;">85.4 |<span style="color: red;">80.4 |<span style="color: red;">56 |<span style="color: red;">96.98 |<span style="color: red;">73.57 |<span style="color: red;">46.35 |<span style="color: red;">71.98142857 |

|

| 108 |

+

|

| 109 |

+

|

| 110 |

+

### 3.1.4. Other languages: Arabic, German, French, and Spanish

|

| 111 |

+

| Lang | ar | | de | | fr | | es | |

|

| 112 |

+

|----|----|----|----|----|----|----|----|----|

|

| 113 |

+

|**model**|**hellaswag**|**arc**|**hellaswag**|**arc**|**hellaswag**|**arc**|**hellaswag**|**arc**|

|

| 114 |

+

|Mixtral-8x7B | 47.93 | 36.27 | 69.17 | 52.35 | 73.9 | 55.86 | 74.25 | 54.79 |

|

| 115 |

+

|Qwen1.5-32B | 50.07 | 39.95 | 63.77 | 50.81 | 68.86 | 55.95 | 70.5 | 55.13 |

|

| 116 |

+

|Qwen2.5-32B | 59.76 | 52.87 | 69.82 | 61.76 | 74.15 | 62.7 | 75.04 | 65.3 |

|

| 117 |

+

|Orion-14B-Base | 42.26 | 33.88 | 54.65 | 38.92 | 60.21 | 42.34 | 62 | 44.62 |

|

| 118 |

+

|<span style="color: red;">Orion 8x7B |<span style="color: red;">69.39 |<span style="color: red;">54.32 |<span style="color: red;">80.6 |<span style="color: red;">63.47 |<span style="color: red;">85.56 |<span style="color: red;">68.78 |<span style="color: red;">87.41 |<span style="color: red;">70.09 |

|

| 119 |

+

|

| 120 |

+

|

| 121 |

+

### 3.1.5. Leakage detection results

|

| 122 |

+

Measures how far the benchmark test questions have leaked; a higher value indicates more severe leakage.

|

| 123 |

+

- Detection code: https://github.com/nishiwen1214/Benchmark-leakage-detection

|

| 124 |

+

- Paper: https://web3.arxiv.org/pdf/2409.01790

|

| 125 |

+

- Blog: https://mp.weixin.qq.com/s/BtcJmDEUyzAYG-fqCal2lA

|

| 126 |

+

- English test: mmlu

|

| 127 |

+

- Chinese test: ceval, cmmlu

|

| 128 |

+

|

| 129 |

+

|Threshold 0.2 | qwen2.5 32b | qwen1.5 32b | orion 8x7b | orion 14b | mixtral 8x7b |

|

| 130 |

+

|----|----|----|----|----|----|

|

| 131 |

+

|mmlu | 0.3 | 0.27 |<span style="color: red;">0.22 | 0.28 | 0.25 |

|

| 132 |

+

|ceval | 0.39 | 0.38 |<span style="color: red;">0.27 | 0.26 | 0.26 |

|

| 133 |

+

|cmmlu | 0.38 | 0.39 |<span style="color: red;">0.23 | 0.27 | 0.22 |

|

| 134 |

+

|

| 135 |

+

|

| 136 |

+

### 3.1.6. Inference speed

|

| 137 |

+

Measured on 8x NVIDIA RTX 3090 GPUs, in tokens per second.

|

| 138 |

+

|OrionLLM_V2.4.6.1 | 1para_out62 | 1para_out85 | 1para_out125 | 1para_out210 |

|

| 139 |

+

|----|----|----|----|----|

|

| 140 |

+

|OrionMOE | 33.03544296 | 33.43113606 | 33.53014102 | 33.58693529 |

|

| 141 |

+

|Qwen32B | 26.46267188 | 26.72846906 | 26.80413838 | 27.03123611 |

|

| 142 |

+

|Orion14B | 41.69121312 | 41.77423491 | 41.76050902 | 42.26096669 |

|

| 143 |

+

|

| 144 |

+

|OrionLLM_V2.4.6.1 | 4para_out62 | 4para_out90 | 4para_out125 | 4para_out220 |

|

| 145 |

+

|----|----|----|----|----|

|

| 146 |

+

|OrionMOE | 29.45015743 | 30.4472947 | 31.03748516 | 31.45783599 |

|

| 147 |

+

|Qwen32B | 23.60912215 | 24.30431956 | 24.86132023 | 25.16827535 |

|

| 148 |

+

|Orion14B | 38.08240373 | 38.8572788 | 39.50040645 | 40.44875947 |

|

| 149 |

+

|

| 150 |

+

|OrionLLM_V2.4.6.1 | 8para_out62 | 8para_out85 | 8para_out125 | 8para_out220 |

|

| 151 |

+

|----|----|----|----|----|

|

| 152 |

+

|OrionMOE | 25.71006327 | 27.13446743 | 28.89463226 | 29.70440167 |

|

| 153 |

+

|Qwen32B | 21.15920951 | 21.92001035 | 23.13867947 | 23.5649106 |

|

| 154 |

+

|Orion14B | 34.4151923 | 36.05635893 | 37.0874908 | 37.91705944 |

|

| 155 |

+

|

| 156 |

+

<div align="center">

|

| 157 |

+

<img src="./assets/imgs/inf_spd_zh.png" alt="inf_speed" width="100%" />

|

| 158 |

+

</div>

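# 4. Model Inference

The model weights, source code, and configuration needed for inference are published on Hugging Face; the download links are in the table at the beginning of this document. Below we demonstrate several inference methods. The program automatically downloads the required resources from Hugging Face.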

## 4.1. Python Code

```python
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer
from transformers.generation.utils import GenerationConfig

# Load the tokenizer and model; trust_remote_code=True runs the model code shipped in the repo.
tokenizer = AutoTokenizer.from_pretrained("OrionStarAI/Orion-MOE8x7B-Base", use_fast=False, trust_remote_code=True)
model = AutoModelForCausalLM.from_pretrained("OrionStarAI/Orion-MOE8x7B-Base", device_map="auto",
                                             torch_dtype=torch.bfloat16, trust_remote_code=True)

# Use the generation settings published with the model.
model.generation_config = GenerationConfig.from_pretrained("OrionStarAI/Orion-MOE8x7B-Base")
messages = [{"role": "user", "content": "Hello! What is your name?"}]
response = model.chat(tokenizer, messages, streaming=False)
print(response)
```

In the code above, the model is loaded with `device_map='auto'`, which uses all available GPUs. To restrict which devices are used, set an environment variable such as `export CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7` (this uses GPUs 0 through 7).
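
The same restriction can be applied from inside Python, provided the variable is set before any CUDA-using library initializes. The following is a minimal sketch and not part of the Orion codebase; the helper name `restrict_visible_gpus` and the chosen GPU indices are assumptions for illustration.

```python
import os


def restrict_visible_gpus(gpu_ids):
    """Set CUDA_VISIBLE_DEVICES for this process and return the value that was set.

    Hypothetical helper for illustration. Call it before torch (or any other
    CUDA library) initializes CUDA, otherwise the setting has no effect.
    """
    value = ",".join(str(i) for i in gpu_ids)
    os.environ["CUDA_VISIBLE_DEVICES"] = value
    return value


# Example: expose only GPUs 0 and 1 to this process.
visible = restrict_visible_gpus([0, 1])
print(visible)
```

With only two GPUs visible, `device_map="auto"` would spread the model across just those devices, which the process then sees as devices 0 and 1.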

## 4.2. Direct Script Inference

```shell
# base model
CUDA_VISIBLE_DEVICES=0 python demo/text_generation_base.py --model OrionStarAI/Orion-14B --tokenizer OrionStarAI/Orion-14B --prompt "Hello, what is your name?"

# chat model
CUDA_VISIBLE_DEVICES=0 python demo/text_generation.py --model OrionStarAI/Orion-14B-Chat --tokenizer OrionStarAI/Orion-14B-Chat --prompt "Hello, what is your name?"
```

<a name="zh_declarations-license"></a><br>
# 5. Declarations and License

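## 5.1. Declarations

We strongly urge all users not to use the Orion-MOE8x7B model for any activity that endangers national or social security or violates the law. We also ask users not to use the Orion-MOE8x7B model in internet services that have not undergone appropriate security review and filing.

We hope all users will abide by this principle so that technology develops in a regulated and lawful environment.
We have done our best to ensure the compliance of the data used to train the model. However, despite these efforts, unforeseen issues may still exist because of the complexity of the model and the data. Therefore, we assume no responsibility for any problems arising from the use of the Orion-14B open-source model, including but not limited to data security issues, public opinion risks, or any risks and problems caused by the model being misled, abused, disseminated, or improperly exploited.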

## 5.2. License

Community use of the Orion-MOE8x7B series models
- For code, please comply with the [Apache License Version 2.0](./LICENSE)<br>
- For models, please comply with the [Orion-14B Series Models Community License Agreement](./ModelsCommunityLicenseAgreement)

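<a name="zh_company-introduction"></a><br>
# 6. Company Introduction

OrionStar is a leading global service robot solutions company, founded in September 2016. OrionStar is dedicated to using AI technology to create the next generation of revolutionary robots, freeing people from repetitive physical labor, making work and life more intelligent and enjoyable, and making society and the world better through technology.

OrionStar has fully self-developed, end-to-end AI technologies such as voice interaction and visual navigation, and combines product development with technology application. Based on the Orion robotic arm platform, it has launched products such as ORION STAR AI Robot Greeting, AI Robot Greeting Mini, Lucki, and Coffee Master, and has established OrionOS, the open platform for Orion robots. Following the philosophy of being **born for truly useful robots**, it empowers more people through AI technology.

Drawing on seven years of AI experience, OrionStar has launched "Juyan" (聚言), an in-depth large model application, and is progressively offering industry customers customized AI large model consulting and service solutions that help them lead their peers in operating efficiency.

**OrionStar's core advantage is its full-chain capability for large model applications**, with experience spanning massive data processing, large model pre-training, continued pre-training, fine-tuning, prompt engineering, and agent development. It has complete end-to-end model training capability, including systematic data processing pipelines and parallel model training on hundreds of GPUs, and its solutions are already deployed in industries such as government affairs, cloud services, cross-border e-commerce, and fast-moving consumer goods.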

***Companies that need to deploy large model applications are welcome to contact us about business cooperation***<br>
**Inquiry hotline:** 400-898-7779<br>
**Email:** [email protected]<br>
**Discord community link: https://discord.gg/zumjDWgdAs**

<div align="center">
<img src="./assets/imgs/wechat_group.jpg" alt="wechat" width="40%" />
</div>

|

assets/imgs/inf_spd_en.png

ADDED

|

assets/imgs/inf_spd_zh.png

ADDED

|

assets/imgs/orion_star.PNG

ADDED

|

|

assets/imgs/wechat_group.jpg

ADDED

|