---

language:

- en

- zh

- ja

- ko

metrics:

- accuracy

pipeline_tag: text-generation

tags:

- code

- model

- llm

---

Orion-MOE8x7B

# Table of Contents

- [📖 Model Introduction](#model-introduction)
- [🔗 Model Download](#model-download)
- [🔖 Model Benchmarks](#model-benchmark)
- [📊 Model Inference](#model-inference)
- [📜 Declarations & License](#declarations-license)
- [🥇 Company Introduction](#company-introduction)

# 1. Model Introduction

- Orion-MOE8x7B-Base is a pretrained generative Sparse Mixture of Experts (MoE) large language model (LLM), trained from scratch by OrionStarAI. The base model is trained on a multilingual corpus that includes Chinese, English, Japanese, and Korean, among other languages, and it performs strongly in these languages.

- The Orion-MOE8x7B series models exhibit the following features:
  - In comprehensive evaluations, the model performs well against other base models of the same parameter scale.
  - It has strong multilingual capabilities: it achieves the highest average scores on the Japanese and Korean test sets, and it scores highest on every Arabic, German, French, and Spanish test set reported in Section 3.1.4.

# 2. Model Download

Model release and download links are provided in the table below:

| Model Name | HuggingFace Download Links | ModelScope Download Links |
|----------------------|-----------------------------------------------------------------------------|-------------------------------------------------------------------------------------------|
| ⚾Orion-MOE8x7B-Base | [Orion-MOE8x7B-Base](https://huggingface.co/OrionStarAI/Orion-MOE8x7B-Base) | [Orion-MOE8x7B-Base](https://modelscope.cn/models/OrionStarAI/Orion-MOE8x7B-Base/summary) |
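As a minimal sketch, the weights can also be fetched ahead of time with the `snapshot_download` function from the `huggingface_hub` library, assuming that package is installed. The helper name `download_orion` and its `local_dir` argument are illustrative choices, not part of the official instructions.

```python
from typing import Optional

# Repository ID taken from the download table above.
REPO_ID = "OrionStarAI/Orion-MOE8x7B-Base"


def download_orion(local_dir: Optional[str] = None) -> str:
    """Download the full model snapshot and return its local path.

    Hypothetical helper: assumes `pip install huggingface_hub` has been run.
    """
    # Imported lazily so that defining the helper does not require the package.
    from huggingface_hub import snapshot_download

    # With local_dir=None the files go to the default Hugging Face cache.
    return snapshot_download(repo_id=REPO_ID, local_dir=local_dir)


# Usage (downloads a large checkpoint, so it is left commented out):
# path = download_orion("./Orion-MOE8x7B-Base")
```

Downloading in advance is optional: the inference examples later in this document fetch the same files automatically on first use.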

# 3. Model Benchmarks

## 3.1. Base Model Orion-MOE8x7B-Base Benchmarks

### 3.1.1. LLM evaluation results on examination and professional knowledge

| TestSet | Mixtral 8x7B | Qwen1.5-32B | Qwen2.5-32B | Orion 14B | Orion 8x7B |
| -- | -- | -- | -- | -- | -- |
| ceval | 54.0861 | 83.5 | 87.7414 | 72.8 | 89.74 |
| cmmlu | 53.21 | 82.3 | 89.0088 | 70.57 | 89.1555 |
| mmlu | 70.4 | 73.4 | 82.9 | 69.94 | 85.9 |
| mmlu_pro | 38.5 | 45.25 | 58.01 | 33.95 | 58.31 |
| ARC_c | 85.0847 | 90.1695 | 94.2373 | 79.66 | 91.8644 |
| hellaswag | 81.9458 | 81.9757 | 82.5134 | 78.53 | 89.19 |
| lambada | 76.7902 | 73.7434 | 75.3736 | 78.83 | 79.7399 |
| bbh | 50.87 | 57.28 | 67.69 | 50.35 | 55.82 |
| musr | 43.21 | 42.65 | 49.78 | 43.61 | 49.93 |
| piqa | 83.41 | 82.15 | 80.05 | 79.54 | 87.32 |
| commonsense_qa | 69.62 | 74.69 | 72.97 | 66.91 | 73.05 |
| IFEval | 24.15 | 32.97 | 41.59 | 29.08 | 30.06 |
| GPQA | 30.9 | 33.49 | 49.5 | 28.53 | 52.17 |
| human-eval | 33.5366 | 35.9756 | 46.9512 | 20.12 | 44.5122 |
| MBPP | 60.7 | 49.4 | 71 | 30 | 43.4 |
| math lv5 | 9 | 25 | 31.72 | 2.54 | 5.07 |
| gsm8k | 47.5 | 77.4 | 80.363 | 52.01 | 59.82 |
| math | 28.4 | 36.1 | 48.88 | 7.84 | 23.68 |

### 3.1.2. Comparison of LLM performance on Japanese test sets

| Model | jsquad | jcommonsenseqa | jnli | marc_ja | jaqket_v2 | paws_ja | avg |
|-------|--------|----------------|------|---------|-----------|---------|-----|
| Mixtral-8x7B | 0.8900 | 0.7873 | 0.3213 | 0.9544 | 0.7886 | 0.4450 | 0.6978 |
| Qwen1.5-32B | 0.8986 | 0.8454 | 0.5099 | 0.9708 | 0.8214 | 0.4380 | 0.7474 |
| Qwen2.5-32B | 0.8909 | 0.9383 | 0.7214 | 0.9786 | 0.8927 | 0.4215 | 0.8073 |
| Orion-14B-Base | 0.7422 | 0.8820 | 0.7285 | 0.9406 | 0.6620 | 0.4990 | 0.7424 |
| Orion 8x7B | 0.9177 | 0.9043 | 0.9046 | 0.9640 | 0.8119 | 0.4735 | 0.8293 |
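The `avg` column appears to be the unweighted mean of the six task scores in each row. The short check below is a sketch under that assumption; it reproduces the Orion 8x7B average from that row's values. The function name `row_average` is illustrative.

```python
from statistics import mean

# Task scores copied from the Orion 8x7B row of the Japanese table.
orion_8x7b = {
    "jsquad": 0.9177,
    "jcommonsenseqa": 0.9043,
    "jnli": 0.9046,
    "marc_ja": 0.9640,
    "jaqket_v2": 0.8119,
    "paws_ja": 0.4735,
}


def row_average(task_scores: dict) -> float:
    """Unweighted mean of the task scores, rounded to four decimals."""
    return round(mean(task_scores.values()), 4)


orion_avg = row_average(orion_8x7b)
print(orion_avg)
```

The same helper can be applied to any other row to confirm that all scores in the row are on the same 0 to 1 scale before they are averaged.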

### 3.1.3. Comparison of LLM performance on Korean test sets

| Model | haerae | kobest boolq | kobest copa | kobest hellaswag | kobest sentineg | kobest wic | paws_ko | avg |
|--------|----|----|----|----|----|----|----|----|
| Mixtral-8x7B | 53.16 | 78.56 | 66.2 | 56.6 | 77.08 | 49.37 | 44.05 | 60.72 |
| Qwen1.5-32B | 46.38 | 76.28 | 60.4 | 53 | 78.34 | 52.14 | 43.4 | 58.56 |
| Qwen2.5-32B | 70.67 | 80.27 | 76.7 | 61.2 | 96.47 | 77.22 | 37.05 | 71.37 |
| Orion-14B-Base | 69.66 | 80.63 | 77.1 | 58.2 | 92.44 | 51.19 | 44.55 | 67.68 |
| Orion 8x7B | 65.17 | 85.4 | 80.4 | 56 | 96.98 | 73.57 | 46.35 | 71.98 |

### 3.1.4. Comparison of LLM performance on Arabic, German, French, and Spanish test sets

| Lang | ar | | de | | fr | | es | |
|----|----|----|----|----|----|----|----|----|
| **model** | **hellaswag** | **arc** | **hellaswag** | **arc** | **hellaswag** | **arc** | **hellaswag** | **arc** |
| Mixtral-8x7B | 47.93 | 36.27 | 69.17 | 52.35 | 73.9 | 55.86 | 74.25 | 54.79 |
| Qwen1.5-32B | 50.07 | 39.95 | 63.77 | 50.81 | 68.86 | 55.95 | 70.5 | 55.13 |
| Qwen2.5-32B | 59.76 | 52.87 | 69.82 | 61.76 | 74.15 | 62.7 | 75.04 | 65.3 |
| Orion-14B-Base | 42.26 | 33.88 | 54.65 | 38.92 | 60.21 | 42.34 | 62 | 44.62 |
| Orion 8x7B | 69.39 | 54.32 | 80.6 | 63.47 | 85.56 | 68.78 | 87.41 | 70.09 |

### 3.1.5. Leakage Detection Benchmark

The table below reports the proportion of leaked data (from various evaluation benchmarks) in each model's pretraining corpus; a higher proportion indicates more leakage.

- Code: https://github.com/nishiwen1214/Benchmark-leakage-detection

- Paper: https://web3.arxiv.org/pdf/2409.01790

- English Test: mmlu

- Chinese Test: ceval, cmmlu

| Threshold 0.2 | Qwen2.5-32B | Qwen1.5-32B | Orion 8x7B | Orion 14B | Mixtral 8x7B |
|------|------|------|------|------|------|
| mmlu | 0.3 | 0.27 | 0.22 | 0.28 | 0.25 |
| ceval | 0.39 | 0.38 | 0.27 | 0.26 | 0.26 |
| cmmlu | 0.38 | 0.39 | 0.23 | 0.27 | 0.22 |

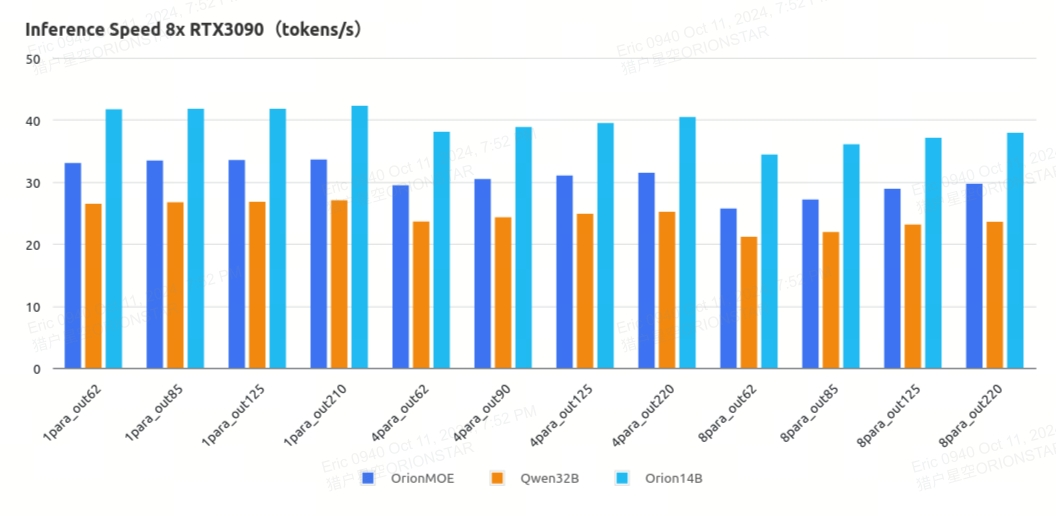

### 3.1.6. Inference speed

Measured on 8x NVIDIA RTX 3090 GPUs; all values are in tokens per second.

| OrionLLM_V2.4.6.1 | 1para_out62 | 1para_out85 | 1para_out125 | 1para_out210 |
|----|----|----|----|----|
| OrionMOE | 33.04 | 33.43 | 33.53 | 33.59 |
| Qwen32B | 26.46 | 26.73 | 26.80 | 27.03 |
| Orion14B | 41.69 | 41.77 | 41.76 | 42.26 |

| OrionLLM_V2.4.6.1 | 4para_out62 | 4para_out90 | 4para_out125 | 4para_out220 |
|----|----|----|----|----|
| OrionMOE | 29.45 | 30.45 | 31.04 | 31.46 |
| Qwen32B | 23.61 | 24.30 | 24.86 | 25.17 |
| Orion14B | 38.08 | 38.86 | 39.50 | 40.45 |

| OrionLLM_V2.4.6.1 | 8para_out62 | 8para_out85 | 8para_out125 | 8para_out220 |
|----|----|----|----|----|
| OrionMOE | 25.71 | 27.13 | 28.89 | 29.70 |
| Qwen32B | 21.16 | 21.92 | 23.14 | 23.56 |
| Orion14B | 34.42 | 36.06 | 37.09 | 37.92 |
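To read these tables as relative speed instead of raw throughput, one option is to divide one model's tokens per second by another's. The snippet below is an illustrative sketch using the `1para_out62` column, with values rounded to two decimals; the function name `relative_speed` is an assumption made for this example.

```python
# Tokens per second from the 1para_out62 column, rounded to two decimals.
throughput = {
    "OrionMOE": 33.04,
    "Qwen32B": 26.46,
    "Orion14B": 41.69,
}


def relative_speed(model: str, baseline: str, table: dict) -> float:
    """Return how many times faster `model` is than `baseline`."""
    return table[model] / table[baseline]


ratio = relative_speed("OrionMOE", "Qwen32B", throughput)
print(f"OrionMOE vs Qwen32B: {ratio:.2f}x")
```

A ratio above 1 means the first model generates more tokens per second than the baseline in that column.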

# 4. Model Inference

Model weights, source code, and the configuration needed for inference are published on Hugging Face; the download links are listed in the table in Section 2. The examples below demonstrate several inference methods, and the program will automatically download the necessary resources from Hugging Face.

## 4.1. Python Code

```python
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer, GenerationConfig

model_id = "OrionStarAI/Orion-MOE8x7B-Base"

# trust_remote_code=True loads the custom code shipped in the model repository.
tokenizer = AutoTokenizer.from_pretrained(model_id, use_fast=False, trust_remote_code=True)

# device_map="auto" spreads the weights across all visible GPUs.
model = AutoModelForCausalLM.from_pretrained(
    model_id,
    device_map="auto",
    torch_dtype=torch.bfloat16,
    trust_remote_code=True,
)
model.generation_config = GenerationConfig.from_pretrained(model_id)

messages = [{"role": "user", "content": "Hello, what is your name?"}]
response = model.chat(tokenizer, messages, streaming=False)
print(response)
```

In the Python code above, the model is loaded with `device_map="auto"` to use all available GPUs. To restrict it to specific devices, set the `CUDA_VISIBLE_DEVICES` environment variable before launching, for example `export CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7` (which selects GPUs 0 through 7).
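The same restriction can be applied from inside Python, as a sketch, by setting the environment variable before CUDA is initialized (most safely, before `torch` is imported). The helper name `select_gpus` is hypothetical and used only for illustration.

```python
import os


def select_gpus(gpu_ids) -> str:
    """Restrict this process to the given GPU indices and return the value set."""
    value = ",".join(str(i) for i in gpu_ids)
    os.environ["CUDA_VISIBLE_DEVICES"] = value
    return value


# Call this before importing torch or loading the model, so that CUDA
# only ever sees the selected devices.
visible = select_gpus([0, 1, 2, 3])
print(visible)
```

With this in place, `device_map="auto"` distributes the model only across the GPUs listed in the variable.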

## 4.2. Direct Script Inference

```shell
# base model
CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 python demo/text_generation_base.py --model OrionStarAI/Orion-MOE8x7B-Base --tokenizer OrionStarAI/Orion-MOE8x7B-Base --prompt hello
```
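The contents of `demo/text_generation_base.py` are not shown here. The following is a hypothetical sketch of how a script with the same three flags could be structured, using standard `argparse` and `transformers` calls; the `--max-new-tokens` flag and the function names are assumptions for illustration and may not match the actual script.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(description="Base-model text generation (sketch)")
    # These three flags mirror the command shown above.
    parser.add_argument("--model", required=True)
    parser.add_argument("--tokenizer", required=True)
    parser.add_argument("--prompt", required=True)
    # Hypothetical extra flag, not taken from the original script.
    parser.add_argument("--max-new-tokens", type=int, default=64)
    return parser


def generate(args: argparse.Namespace) -> str:
    """Load the model and return the completion for args.prompt."""
    # Heavy imports are deferred so the parser can be used without them.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(args.tokenizer, trust_remote_code=True)
    model = AutoModelForCausalLM.from_pretrained(
        args.model,
        device_map="auto",
        torch_dtype=torch.bfloat16,
        trust_remote_code=True,
    )
    inputs = tokenizer(args.prompt, return_tensors="pt").to(model.device)
    output_ids = model.generate(**inputs, max_new_tokens=args.max_new_tokens)
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)


# Parsing only; call generate(args) to actually load the model and run it.
args = build_parser().parse_args([
    "--model", "OrionStarAI/Orion-MOE8x7B-Base",
    "--tokenizer", "OrionStarAI/Orion-MOE8x7B-Base",
    "--prompt", "hello",
])
print(args.prompt)
```

Because this is a base model, the sketch treats the prompt as plain text to be continued and does not wrap it in a chat format.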

# 5. Declarations & License

## 5.1. Declarations

We strongly urge all users not to use the Orion-MOE8x7B model for any activities that may harm national or social security or violate the law.

Additionally, we request users not to use the Orion-MOE8x7B model for internet services without proper security review and filing.

We hope all users abide by this principle to ensure that technological development takes place in a regulated and legal environment.

We have done our best to ensure the compliance of the data used in the model training process. However, despite our significant efforts, unforeseen issues may still arise due to the complexity of the model and data. Therefore, if any problems arise due to the use of the Orion-MOE8x7B open-source model, including but not limited to data security issues, public opinion risks, or any risks and issues arising from the model being misled, abused, disseminated, or improperly utilized, we will not assume any responsibility.

## 5.2. License

Community use of the Orion-MOE8x7B series models is subject to the following licenses:

- For code, please comply with [Apache License Version 2.0](./LICENSE)

- For model, please comply with [【Orion Series】 Models Community License Agreement](./ModelsCommunityLicenseAgreement)

# 6. Company Introduction

OrionStar is a leading global service robot solutions company, founded in September 2016. OrionStar is dedicated to using artificial intelligence technology to create the next generation of revolutionary robots, allowing people to break free from repetitive physical labor and making human work and life more intelligent and enjoyable. Through technology, OrionStar aims to make society and the world a better place.

OrionStar possesses fully self-developed end-to-end artificial intelligence technologies, such as voice interaction and visual navigation. It integrates product development capabilities and technological application capabilities. Based on the Orion robotic arm platform, it has launched products such as OrionStar AI Robot Greeting, AI Robot Greeting Mini, Lucki, and Coffee Master, and established the open platform OrionOS for Orion robots. Following the philosophy of "Born for Truly Useful Robots", OrionStar empowers more people through AI technology.

**The core strength of OrionStar lies in its end-to-end AI application capabilities,** including big data preprocessing, large model pretraining, fine-tuning, prompt engineering, and agents. Its comprehensive end-to-end model training capabilities, including systematic data processing workflows and parallel model training across hundreds of GPUs, have been successfully applied in various industry scenarios such as government affairs, cloud services, international e-commerce, and fast-moving consumer goods.

Companies with demands for deploying large-scale model applications are welcome to contact us.

**Enquiry Hotline: 400-898-7779**

**E-mail: ai@orionstar.com**

**Discord Link: https://discord.gg/zumjDWgdAs**