Commit · c448da8

1 Parent(s): 785a186

Upload 12 files

- MODEL_LICENSE.txt +65 -0

- config.json +47 -0

- configuration_chatglm.py +61 -0

- flowchart V2.png +0 -0

- generation_config.json +6 -0

- modeling_chatglm.py +1281 -0

- pytorch_model.bin.index.json +208 -0

- quantization.py +188 -0

- special_tokens_map.json +1 -0

- tokenizer.model +3 -0

- tokenizer_config.json +14 -0

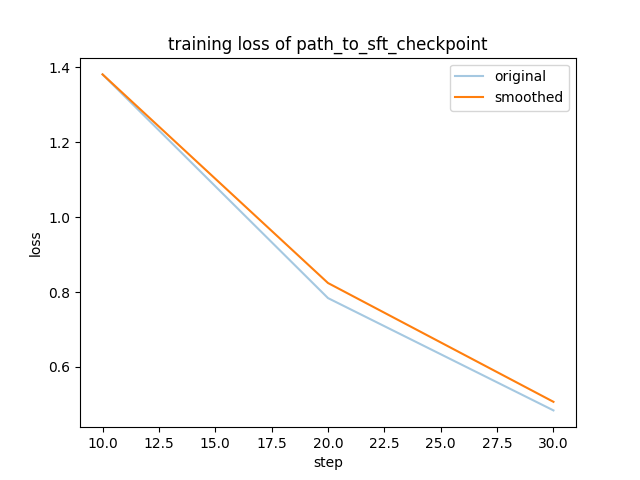

- training_loss.png +0 -0

MODEL_LICENSE.txt

ADDED

@@ -0,0 +1,65 @@
+The ChatGLM2-6B License
+
+1. 定义
+
+“许可方”是指分发其软件的 ChatGLM2-6B 模型团队。
+
+“软件”是指根据本许可提供的 ChatGLM2-6B 模型参数。
+
+2. 许可授予
+
+根据本许可的条款和条件,许可方特此授予您非排他性、全球性、不可转让、不可再许可、可撤销、免版税的版权许可。
+
+上述版权声明和本许可声明应包含在本软件的所有副本或重要部分中。
+
+3.限制
+
+您不得出于任何军事或非法目的使用、复制、修改、合并、发布、分发、复制或创建本软件的全部或部分衍生作品。
+
+您不得利用本软件从事任何危害国家安全和国家统一、危害社会公共利益、侵犯人身权益的行为。
+
+4.免责声明
+
+本软件“按原样”提供,不提供任何明示或暗示的保证,包括但不限于对适销性、特定用途的适用性和非侵权性的保证。 在任何情况下,作者或版权持有人均不对任何索赔、损害或其他责任负责,无论是在合同诉讼、侵权行为还是其他方面,由软件或软件的使用或其他交易引起、由软件引起或与之相关 软件。
+
+5. 责任限制
+
+除适用法律禁止的范围外,在任何情况下且根据任何法律理论,无论是基于侵权行为、疏忽、合同、责任或其他原因,任何许可方均不对您承担任何直接、间接、特殊、偶然、示范性、 或间接损害,或任何其他商业损失,即使许可人已被告知此类损害的可能性。
+
+6.争议解决
+
+本许可受中华人民共和国法律管辖并按其解释。 因本许可引起的或与本许可有关的任何争议应提交北京市海淀区人民法院。
+
+请注意,许可证可能会更新到更全面的版本。 有关许可和版权的任何问题,请通过 [email protected] 与我们联系。
+
+1. Definitions
+
+“Licensor” means the ChatGLM2-6B Model Team that distributes its Software.
+
+“Software” means the ChatGLM2-6B model parameters made available under this license.
+
+2. License Grant
+
+Subject to the terms and conditions of this License, the Licensor hereby grants to you a non-exclusive, worldwide, non-transferable, non-sublicensable, revocable, royalty-free copyright license to use the Software.
+
+The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.
+
+3. Restriction
+
+You will not use, copy, modify, merge, publish, distribute, reproduce, or create derivative works of the Software, in whole or in part, for any military, or illegal purposes.
+
+You will not use the Software for any act that may undermine China's national security and national unity, harm the public interest of society, or infringe upon the rights and interests of human beings.
+
+4. Disclaimer
+
+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.
+
+5. Limitation of Liability
+
+EXCEPT TO THE EXTENT PROHIBITED BY APPLICABLE LAW, IN NO EVENT AND UNDER NO LEGAL THEORY, WHETHER BASED IN TORT, NEGLIGENCE, CONTRACT, LIABILITY, OR OTHERWISE WILL ANY LICENSOR BE LIABLE TO YOU FOR ANY DIRECT, INDIRECT, SPECIAL, INCIDENTAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES, OR ANY OTHER COMMERCIAL LOSSES, EVEN IF THE LICENSOR HAS BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.
+
+6. Dispute Resolution
+
+This license shall be governed and construed in accordance with the laws of People’s Republic of China. Any dispute arising from or in connection with this License shall be submitted to Haidian District People's Court in Beijing.
+
+Note that the license is subject to update to a more comprehensive version. For any questions related to the license and copyright, please contact us at [email protected].


config.json

ADDED

@@ -0,0 +1,47 @@
+{
+  "_name_or_path": "THUDM/chatglm2-6b",
+  "add_bias_linear": false,
+  "add_qkv_bias": true,
+  "apply_query_key_layer_scaling": true,
+  "apply_residual_connection_post_layernorm": false,
+  "architectures": [
+    "ChatGLMForConditionalGeneration"
+  ],
+  "attention_dropout": 0.0,
+  "attention_softmax_in_fp32": true,
+  "auto_map": {
+    "AutoConfig": "configuration_chatglm.ChatGLMConfig",
+    "AutoModel": "modeling_chatglm.ChatGLMForConditionalGeneration",
+    "AutoModelForCausalLM": "THUDM/chatglm2-6b--modeling_chatglm.ChatGLMForConditionalGeneration",
+    "AutoModelForSeq2SeqLM": "THUDM/chatglm2-6b--modeling_chatglm.ChatGLMForConditionalGeneration",
+    "AutoModelForSequenceClassification": "THUDM/chatglm2-6b--modeling_chatglm.ChatGLMForSequenceClassification"
+  },
+  "bias_dropout_fusion": true,
+  "classifier_dropout": null,
+  "eos_token_id": 2,
+  "ffn_hidden_size": 13696,
+  "fp32_residual_connection": false,
+  "hidden_dropout": 0.0,
+  "hidden_size": 4096,
+  "kv_channels": 128,
+  "layernorm_epsilon": 1e-05,
+  "model_type": "chatglm",
+  "multi_query_attention": true,
+  "multi_query_group_num": 2,
+  "num_attention_heads": 32,
+  "num_layers": 28,
+  "original_rope": true,
+  "pad_token_id": 0,
+  "padded_vocab_size": 65024,
+  "post_layer_norm": true,
+  "pre_seq_len": null,
+  "prefix_projection": false,
+  "quantization_bit": 0,
+  "rmsnorm": true,
+  "seq_length": 32768,
+  "tie_word_embeddings": false,
+  "torch_dtype": "float32",
+  "transformers_version": "4.33.2",
+  "use_cache": true,
+  "vocab_size": 65024
+}

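
The shape-related fields in config.json fit together arithmetically. The sketch below is only an illustration: the helper name `attention_shapes` is hypothetical and not part of this commit, and the fused query/key/value width it computes assumes the usual grouped-query layout (full-width queries plus one key and one value projection per group), which is not visible in the portion of the diff shown here.

```python
# Values copied from the config.json diff above.
config = {
    "hidden_size": 4096,
    "kv_channels": 128,
    "num_attention_heads": 32,
    "multi_query_attention": True,
    "multi_query_group_num": 2,
}


def attention_shapes(cfg):
    """Hypothetical helper: derive projection widths from the config fields."""
    head_dim = cfg["kv_channels"]
    query_width = head_dim * cfg["num_attention_heads"]
    # With multi-query attention, keys/values use a small number of shared groups.
    if cfg["multi_query_attention"]:
        kv_heads = cfg["multi_query_group_num"]
    else:
        kv_heads = cfg["num_attention_heads"]
    kv_width = head_dim * kv_heads
    return {
        "head_dim": head_dim,
        "query_width": query_width,
        "kv_heads": kv_heads,
        "kv_width": kv_width,
        # Assumed layout: queries followed by one key and one value block.
        "fused_qkv_width": query_width + 2 * kv_width,
    }


shapes = attention_shapes(config)
print(shapes)
```

Under these assumptions the query projection width equals `hidden_size`, while keys and values are each only 256 wide, which is where the memory saving of the two-group setting would come from.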

configuration_chatglm.py

ADDED

@@ -0,0 +1,61 @@
+from transformers import PretrainedConfig
+
+
+class ChatGLMConfig(PretrainedConfig):
+    model_type = "chatglm"
+    def __init__(
+        self,
+        num_layers=28,
+        padded_vocab_size=65024,
+        hidden_size=4096,
+        ffn_hidden_size=13696,
+        kv_channels=128,
+        num_attention_heads=32,
+        seq_length=2048,
+        hidden_dropout=0.0,
+        classifier_dropout=None,
+        attention_dropout=0.0,
+        layernorm_epsilon=1e-5,
+        rmsnorm=True,
+        apply_residual_connection_post_layernorm=False,
+        post_layer_norm=True,
+        add_bias_linear=False,
+        add_qkv_bias=False,
+        bias_dropout_fusion=True,
+        multi_query_attention=False,
+        multi_query_group_num=1,
+        apply_query_key_layer_scaling=True,
+        attention_softmax_in_fp32=True,
+        fp32_residual_connection=False,
+        quantization_bit=0,
+        pre_seq_len=None,
+        prefix_projection=False,
+        **kwargs
+    ):
+        self.num_layers = num_layers
+        self.vocab_size = padded_vocab_size
+        self.padded_vocab_size = padded_vocab_size
+        self.hidden_size = hidden_size
+        self.ffn_hidden_size = ffn_hidden_size
+        self.kv_channels = kv_channels
+        self.num_attention_heads = num_attention_heads
+        self.seq_length = seq_length
+        self.hidden_dropout = hidden_dropout
+        self.classifier_dropout = classifier_dropout
+        self.attention_dropout = attention_dropout
+        self.layernorm_epsilon = layernorm_epsilon
+        self.rmsnorm = rmsnorm
+        self.apply_residual_connection_post_layernorm = apply_residual_connection_post_layernorm
+        self.post_layer_norm = post_layer_norm
+        self.add_bias_linear = add_bias_linear
+        self.add_qkv_bias = add_qkv_bias
+        self.bias_dropout_fusion = bias_dropout_fusion
+        self.multi_query_attention = multi_query_attention
+        self.multi_query_group_num = multi_query_group_num
+        self.apply_query_key_layer_scaling = apply_query_key_layer_scaling
+        self.attention_softmax_in_fp32 = attention_softmax_in_fp32
+        self.fp32_residual_connection = fp32_residual_connection
+        self.quantization_bit = quantization_bit
+        self.pre_seq_len = pre_seq_len
+        self.prefix_projection = prefix_projection
+        super().__init__(**kwargs)

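
Several constructor defaults in `ChatGLMConfig` differ from the values stored in this commit's config.json, so the effective settings depend on which source wins. The sketch below compares a subset of the two using plain dictionaries; it assumes the common pattern in which values loaded from config.json are passed as keyword arguments and therefore override the defaults. The helper name `overridden` is hypothetical.

```python
# Constructor defaults from configuration_chatglm.py (subset).
defaults = {
    "seq_length": 2048,
    "add_qkv_bias": False,
    "multi_query_attention": False,
    "multi_query_group_num": 1,
}

# Values for the same fields from config.json in this commit.
checkpoint = {
    "seq_length": 32768,
    "add_qkv_bias": True,
    "multi_query_attention": True,
    "multi_query_group_num": 2,
}


def overridden(default_values, loaded_values):
    """Hypothetical helper: map each changed field to (default, loaded)."""
    return {
        key: (default_values[key], value)
        for key, value in loaded_values.items()
        if key in default_values and default_values[key] != value
    }


diff = overridden(defaults, checkpoint)
# Assumed precedence: loaded values replace defaults field by field.
effective = {**defaults, **checkpoint}

for name, (old, new) in diff.items():
    print(f"{name}: default {old!r} -> config.json {new!r}")
```

Instantiating the class with no arguments would therefore describe a different model (2048-token context, no QKV bias, no multi-query attention) than the one this checkpoint's config.json describes.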

flowchart V2.png

ADDED

generation_config.json

ADDED

@@ -0,0 +1,6 @@
+{
+  "_from_model_config": true,
+  "eos_token_id": 2,
+  "pad_token_id": 0,
+  "transformers_version": "4.33.2"
+}


modeling_chatglm.py

ADDED

@@ -0,0 +1,1281 @@
+""" PyTorch ChatGLM model. """
+
+import math
+import copy
+import warnings
+import re
+import sys
+
+import torch
+import torch.utils.checkpoint
+import torch.nn.functional as F
+from torch import nn
+from torch.nn import CrossEntropyLoss, LayerNorm
+from torch.nn import CrossEntropyLoss, LayerNorm, MSELoss, BCEWithLogitsLoss
+from torch.nn.utils import skip_init
+from typing import Optional, Tuple, Union, List, Callable, Dict, Any
+
+from transformers.modeling_outputs import (
+    BaseModelOutputWithPast,
+    CausalLMOutputWithPast,
+    SequenceClassifierOutputWithPast,
+)
+from transformers.modeling_utils import PreTrainedModel
+from transformers.utils import logging
+from transformers.generation.logits_process import LogitsProcessor
+from transformers.generation.utils import LogitsProcessorList, StoppingCriteriaList, GenerationConfig, ModelOutput
+
+from .configuration_chatglm import ChatGLMConfig
+
+# flags required to enable jit fusion kernels
+
+if sys.platform != 'darwin':
+    torch._C._jit_set_profiling_mode(False)
+    torch._C._jit_set_profiling_executor(False)
+    torch._C._jit_override_can_fuse_on_cpu(True)
+    torch._C._jit_override_can_fuse_on_gpu(True)
+
+logger = logging.get_logger(__name__)
+
+_CHECKPOINT_FOR_DOC = "THUDM/ChatGLM2-6B"
+_CONFIG_FOR_DOC = "ChatGLM6BConfig"
+
+CHATGLM_6B_PRETRAINED_MODEL_ARCHIVE_LIST = [
+    "THUDM/chatglm2-6b",
+    # See all ChatGLM models at https://huggingface.co/models?filter=chatglm
+]
+
+
+def default_init(cls, *args, **kwargs):
+    return cls(*args, **kwargs)
+
+
+class InvalidScoreLogitsProcessor(LogitsProcessor):
+    def __call__(self, input_ids: torch.LongTensor, scores: torch.FloatTensor) -> torch.FloatTensor:
+        if torch.isnan(scores).any() or torch.isinf(scores).any():
+            scores.zero_()
+            scores[..., 5] = 5e4
+        return scores
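
`InvalidScoreLogitsProcessor` guards generation against NaN or infinite logits: if any score is invalid, it zeroes the whole tensor and puts a large value at index 5 so that sampling still yields a token. The following is a dependency-free sketch of that fallback for a single row of scores; the function name `sanitize_scores` and its parameters are hypothetical, and unlike the original it returns a new list instead of modifying a tensor in place.

```python
import math


def sanitize_scores(scores, fallback_index=5, fallback_value=5e4):
    """If any score is NaN or infinite, zero the row and force `fallback_index`.

    Mirrors the shape of the check in the diff above for one row only;
    the original applies the reset to every row of the batch at once.
    """
    if any(math.isnan(s) or math.isinf(s) for s in scores):
        cleaned = [0.0] * len(scores)
        cleaned[fallback_index] = fallback_value
        return cleaned
    return list(scores)


good = sanitize_scores([0.1, 0.2, 0.3, 0.4, 0.5, 0.6])
bad = sanitize_scores([0.1, float("nan"), 0.3, 0.4, 0.5, 0.6])
print(good)
print(bad)
```

Valid rows pass through unchanged, while a row containing a single NaN collapses to a distribution that is overwhelmingly concentrated on the fallback index.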

+
+
+class PrefixEncoder(torch.nn.Module):
+    """
+    The torch.nn model to encode the prefix
+    Input shape: (batch-size, prefix-length)
+    Output shape: (batch-size, prefix-length, 2*layers*hidden)
+    """
+
+    def __init__(self, config: ChatGLMConfig):
+        super().__init__()
+        self.prefix_projection = config.prefix_projection
+        if self.prefix_projection:
+            # Use a two-layer MLP to encode the prefix
+            kv_size = config.num_layers * config.kv_channels * config.multi_query_group_num * 2
+            self.embedding = torch.nn.Embedding(config.pre_seq_len, kv_size)
+            self.trans = torch.nn.Sequential(
+                torch.nn.Linear(kv_size, config.hidden_size),
+                torch.nn.Tanh(),
+                torch.nn.Linear(config.hidden_size, kv_size)
+            )
+        else:
+            self.embedding = torch.nn.Embedding(config.pre_seq_len,
+                                                config.num_layers * config.kv_channels * config.multi_query_group_num * 2)
+
+    def forward(self, prefix: torch.Tensor):
+        if self.prefix_projection:
+            prefix_tokens = self.embedding(prefix)
+            past_key_values = self.trans(prefix_tokens)
+        else:
+            past_key_values = self.embedding(prefix)
+        return past_key_values
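
The width of each prefix embedding in `PrefixEncoder` follows directly from the config: one key and one value vector per layer and per multi-query group. A small worked calculation with the config.json values from this commit is sketched below; `pre_seq_len` is null in config.json, so the value 128 used here is purely a hypothetical example, as is the helper name `prefix_kv_size`.

```python
def prefix_kv_size(num_layers, kv_channels, multi_query_group_num):
    """Width of one prefix embedding: keys and values for every layer and group."""
    return num_layers * kv_channels * multi_query_group_num * 2


# Values from config.json in this commit.
num_layers, kv_channels, groups = 28, 128, 2
kv_size = prefix_kv_size(num_layers, kv_channels, groups)

# Per-layer slice: 2 (key, value) * groups * kv_channels.
per_layer = kv_size // num_layers

# Hypothetical prefix length, chosen only for illustration.
pre_seq_len = 128
embedding_params = pre_seq_len * kv_size

print(kv_size, per_layer, embedding_params)
```

With `prefix_projection` disabled, the embedding table alone holds the trainable prefix parameters, so its size scales linearly with the chosen prefix length.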

+
+
+def split_tensor_along_last_dim(
+        tensor: torch.Tensor,
+        num_partitions: int,
+        contiguous_split_chunks: bool = False,
+) -> List[torch.Tensor]:
+    """Split a tensor along its last dimension.
+
+    Arguments:
+        tensor: input tensor.
+        num_partitions: number of partitions to split the tensor
+        contiguous_split_chunks: If True, make each chunk contiguous
+                                 in memory.
+
+    Returns:
+        A list of Tensors
+    """
+    # Get the size and dimension.
+    last_dim = tensor.dim() - 1
+    last_dim_size = tensor.size()[last_dim] // num_partitions
+    # Split.
+    tensor_list = torch.split(tensor, last_dim_size, dim=last_dim)
+    # Note: torch.split does not create contiguous tensors by default.
+    if contiguous_split_chunks:
+        return tuple(chunk.contiguous() for chunk in tensor_list)
+
+    return tensor_list
+
+
+class RotaryEmbedding(nn.Module):
+    def __init__(self, dim, original_impl=False, device=None, dtype=None):
+        super().__init__()
+        inv_freq = 1.0 / (10000 ** (torch.arange(0, dim, 2, device=device).to(dtype=dtype) / dim))
+        self.register_buffer("inv_freq", inv_freq)
+        self.dim = dim
+        self.original_impl = original_impl
+
+    def forward_impl(
+            self, seq_len: int, n_elem: int, dtype: torch.dtype, device: torch.device, base: int = 10000
+    ):
+        """Enhanced Transformer with Rotary Position Embedding.
+
+        Derived from: https://github.com/labmlai/annotated_deep_learning_paper_implementations/blob/master/labml_nn/
+        transformers/rope/__init__.py. MIT License:
+        https://github.com/labmlai/annotated_deep_learning_paper_implementations/blob/master/license.
+        """
+        # $\Theta = {\theta_i = 10000^{\frac{2(i-1)}{d}}, i \in [1, 2, ..., \frac{d}{2}]}$
+        theta = 1.0 / (base ** (torch.arange(0, n_elem, 2, dtype=dtype, device=device) / n_elem))
+
+        # Create position indexes `[0, 1, ..., seq_len - 1]`
+        seq_idx = torch.arange(seq_len, dtype=dtype, device=device)
+
+        # Calculate the product of position index and $\theta_i$
+        idx_theta = torch.outer(seq_idx, theta).float()
+
+        cache = torch.stack([torch.cos(idx_theta), torch.sin(idx_theta)], dim=-1)
+
+        # this is to mimic the behaviour of complex32, else we will get different results
+        if dtype in (torch.float16, torch.bfloat16, torch.int8):
+            cache = cache.bfloat16() if dtype == torch.bfloat16 else cache.half()
+        return cache
+
+    def forward(self, max_seq_len, offset=0):
+        return self.forward_impl(
+            max_seq_len, self.dim, dtype=self.inv_freq.dtype, device=self.inv_freq.device
+        )
+
+
+@torch.jit.script
+def apply_rotary_pos_emb(x: torch.Tensor, rope_cache: torch.Tensor) -> torch.Tensor:
+    # x: [sq, b, np, hn]
+    sq, b, np, hn = x.size(0), x.size(1), x.size(2), x.size(3)
+    rot_dim = rope_cache.shape[-2] * 2
+    x, x_pass = x[..., :rot_dim], x[..., rot_dim:]
+    # truncate to support variable sizes
+    rope_cache = rope_cache[:sq]
+    xshaped = x.reshape(sq, -1, np, rot_dim // 2, 2)
+    rope_cache = rope_cache.view(sq, -1, 1, xshaped.size(3), 2)
+    x_out2 = torch.stack(
+        [
+            xshaped[..., 0] * rope_cache[..., 0] - xshaped[..., 1] * rope_cache[..., 1],
+            xshaped[..., 1] * rope_cache[..., 0] + xshaped[..., 0] * rope_cache[..., 1],
+        ],
+        -1,
+    )
+    x_out2 = x_out2.flatten(3)
+    return torch.cat((x_out2, x_pass), dim=-1)
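
The rotary embedding code above builds a table of cosine and sine values per position and then rotates consecutive pairs of the first `rot_dim` features, leaving the remaining features untouched. The same arithmetic can be sketched for a single vector with only the standard library. The names `rope_cache` and `apply_rope` here are hypothetical stand-ins, and the sketch ignores the batch and head dimensions as well as the half-precision cast.

```python
import math


def rope_cache(seq_len, n_elem, base=10000):
    """cache[pos][i] = (cos(pos * theta_i), sin(pos * theta_i))."""
    thetas = [1.0 / (base ** (i / n_elem)) for i in range(0, n_elem, 2)]
    return [
        [(math.cos(pos * t), math.sin(pos * t)) for t in thetas]
        for pos in range(seq_len)
    ]


def apply_rope(vec, cache_row):
    """Rotate consecutive pairs of `vec`; pass the tail through unchanged."""
    rot_dim = 2 * len(cache_row)
    out = []
    for i, (cos_v, sin_v) in enumerate(cache_row):
        x0, x1 = vec[2 * i], vec[2 * i + 1]
        out.extend([x0 * cos_v - x1 * sin_v, x1 * cos_v + x0 * sin_v])
    return out + list(vec[rot_dim:])


cache = rope_cache(seq_len=4, n_elem=4)
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]  # first 4 features rotate, last 2 pass through
rotated = [apply_rope(x, row) for row in cache]

for pos, row in enumerate(rotated):
    print(pos, [round(v, 4) for v in row])
```

Because each pair undergoes a plain 2D rotation, position 0 leaves the vector unchanged and every position preserves the vector's norm; only the angle between query and key pairs carries positional information.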

+
+
+class RMSNorm(torch.nn.Module):
+    def __init__(self, normalized_shape, eps=1e-5, device=None, dtype=None, **kwargs):
+        super().__init__()
+        self.weight = torch.nn.Parameter(torch.empty(normalized_shape, device=device, dtype=dtype))
+        self.eps = eps
+
+    def forward(self, hidden_states: torch.Tensor):
+        input_dtype = hidden_states.dtype
+        variance = hidden_states.to(torch.float32).pow(2).mean(-1, keepdim=True)
+        hidden_states = hidden_states * torch.rsqrt(variance + self.eps)
+
+        return (self.weight * hidden_states).to(input_dtype)

| 193 |

+

|

| 194 |

+

|

| 195 |

+

class CoreAttention(torch.nn.Module):

|

| 196 |

+

def __init__(self, config: ChatGLMConfig, layer_number):

|

| 197 |

+

super(CoreAttention, self).__init__()

|

| 198 |

+

|

| 199 |

+

self.apply_query_key_layer_scaling = config.apply_query_key_layer_scaling

|

| 200 |

+

self.attention_softmax_in_fp32 = config.attention_softmax_in_fp32

|

| 201 |

+

if self.apply_query_key_layer_scaling:

|

| 202 |

+

self.attention_softmax_in_fp32 = True

|

| 203 |

+

self.layer_number = max(1, layer_number)

|

| 204 |

+

|

| 205 |

+

projection_size = config.kv_channels * config.num_attention_heads

|

| 206 |

+

|

| 207 |

+

# Per attention head and per partition values.

|

| 208 |

+

self.hidden_size_per_partition = projection_size

|

| 209 |

+

self.hidden_size_per_attention_head = projection_size // config.num_attention_heads

|

| 210 |

+

self.num_attention_heads_per_partition = config.num_attention_heads

|

| 211 |

+

|

| 212 |

+

coeff = None

|

| 213 |

+

self.norm_factor = math.sqrt(self.hidden_size_per_attention_head)

|

| 214 |

+

if self.apply_query_key_layer_scaling:

|

| 215 |

+

coeff = self.layer_number

|

| 216 |

+

self.norm_factor *= coeff

|

| 217 |

+

self.coeff = coeff

|

| 218 |

+

|

| 219 |

+

self.attention_dropout = torch.nn.Dropout(config.attention_dropout)

|

| 220 |

+

|

| 221 |

+

def forward(self, query_layer, key_layer, value_layer, attention_mask):

|

| 222 |

+

pytorch_major_version = int(torch.__version__.split('.')[0])

|

| 223 |

+

if pytorch_major_version >= 2:

|

| 224 |

+

query_layer, key_layer, value_layer = [k.permute(1, 2, 0, 3) for k in [query_layer, key_layer, value_layer]]

|

| 225 |

+

if attention_mask is None and query_layer.shape[2] == key_layer.shape[2]:

|

| 226 |

+

context_layer = torch.nn.functional.scaled_dot_product_attention(query_layer, key_layer, value_layer,

|

| 227 |

+

is_causal=True)

|

| 228 |

+

else:

|

| 229 |

+

if attention_mask is not None:

|

| 230 |

+

attention_mask = ~attention_mask

|

| 231 |

+

context_layer = torch.nn.functional.scaled_dot_product_attention(query_layer, key_layer, value_layer,

|

| 232 |

+

attention_mask)

|

| 233 |

+

context_layer = context_layer.permute(2, 0, 1, 3)

|

| 234 |

+

new_context_layer_shape = context_layer.size()[:-2] + (self.hidden_size_per_partition,)

|

| 235 |

+

context_layer = context_layer.reshape(*new_context_layer_shape)

|

| 236 |

+

else:

|

| 237 |

+

# Raw attention scores

|

| 238 |

+

|

| 239 |

+

# [b, np, sq, sk]

|

| 240 |

+

output_size = (query_layer.size(1), query_layer.size(2), query_layer.size(0), key_layer.size(0))

|

| 241 |

+

|

| 242 |

+

# [sq, b, np, hn] -> [sq, b * np, hn]

|

| 243 |

+

query_layer = query_layer.view(output_size[2], output_size[0] * output_size[1], -1)

|

| 244 |

+

# [sk, b, np, hn] -> [sk, b * np, hn]

|

| 245 |

+

key_layer = key_layer.view(output_size[3], output_size[0] * output_size[1], -1)

|

| 246 |

+

|

| 247 |

+

# preallocating input tensor: [b * np, sq, sk]

|

| 248 |

+

matmul_input_buffer = torch.empty(

|

| 249 |

+

output_size[0] * output_size[1], output_size[2], output_size[3], dtype=query_layer.dtype,

|

| 250 |

+

device=query_layer.device

|

| 251 |

+

)

|

| 252 |

+

|

| 253 |

+

# Raw attention scores. [b * np, sq, sk]

|

| 254 |

+

matmul_result = torch.baddbmm(

|

| 255 |

+

matmul_input_buffer,

|

| 256 |

+

query_layer.transpose(0, 1), # [b * np, sq, hn]

|

| 257 |

+

key_layer.transpose(0, 1).transpose(1, 2), # [b * np, hn, sk]

|

| 258 |

+

beta=0.0,

|

| 259 |

+

alpha=(1.0 / self.norm_factor),

|

| 260 |

+

)

|

| 261 |

+

|

| 262 |

+

# change view to [b, np, sq, sk]

|

| 263 |

+

attention_scores = matmul_result.view(*output_size)

|

| 264 |

+

|

| 265 |

+

# ===========================

|

| 266 |

+

# Attention probs and dropout

|

| 267 |

+

# ===========================

|

| 268 |

+

|

| 269 |

+

# attention scores and attention mask [b, np, sq, sk]

|

| 270 |

+

if self.attention_softmax_in_fp32:

|

| 271 |

+

attention_scores = attention_scores.float()

|

| 272 |

+

if self.coeff is not None:

|

| 273 |

+

attention_scores = attention_scores * self.coeff

|

| 274 |

+

if attention_mask is None and attention_scores.shape[2] == attention_scores.shape[3]:

|

| 275 |

+

attention_mask = torch.ones(output_size[0], 1, output_size[2], output_size[3],

|

| 276 |

+

device=attention_scores.device, dtype=torch.bool)

|

| 277 |

+

attention_mask.tril_()

|

| 278 |

+

attention_mask = ~attention_mask

|

| 279 |

+

if attention_mask is not None:

|

| 280 |

+

attention_scores = attention_scores.masked_fill(attention_mask, float("-inf"))

|

| 281 |

+

attention_probs = F.softmax(attention_scores, dim=-1)

|

| 282 |

+

attention_probs = attention_probs.type_as(value_layer)

|

| 283 |

+

|

| 284 |

+

# This is actually dropping out entire tokens to attend to, which might

|

| 285 |

+

# seem a bit unusual, but is taken from the original Transformer paper.

|

| 286 |

+

attention_probs = self.attention_dropout(attention_probs)

|

| 287 |

+

# =========================

|

| 288 |

+

# Context layer. [sq, b, hp]

|

| 289 |

+

# =========================

|

| 290 |

+

|

| 291 |

+

# value_layer -> context layer.

|

| 292 |

+

# [sk, b, np, hn] --> [b, np, sq, hn]

|

| 293 |

+

|

| 294 |

+

# context layer shape: [b, np, sq, hn]

|

| 295 |

+

output_size = (value_layer.size(1), value_layer.size(2), query_layer.size(0), value_layer.size(3))

|

| 296 |

+

# change view [sk, b * np, hn]

|

| 297 |

+

value_layer = value_layer.view(value_layer.size(0), output_size[0] * output_size[1], -1)

|

| 298 |

+

# change view [b * np, sq, sk]

|

| 299 |

+

attention_probs = attention_probs.view(output_size[0] * output_size[1], output_size[2], -1)

|

| 300 |

+

# matmul: [b * np, sq, hn]

|

| 301 |

+

context_layer = torch.bmm(attention_probs, value_layer.transpose(0, 1))

|

| 302 |

+

# change view [b, np, sq, hn]

|

| 303 |

+

context_layer = context_layer.view(*output_size)

|

| 304 |

+

# [b, np, sq, hn] --> [sq, b, np, hn]

|

| 305 |

+

context_layer = context_layer.permute(2, 0, 1, 3).contiguous()

|

| 306 |

+

# [sq, b, np, hn] --> [sq, b, hp]

|

| 307 |

+

new_context_layer_shape = context_layer.size()[:-2] + (self.hidden_size_per_partition,)

|

| 308 |

+

context_layer = context_layer.view(*new_context_layer_shape)

|

| 309 |

+

|

| 310 |

+

return context_layer
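Note on the mask convention in `CoreAttention.forward`: in the fallback branch a `True` entry marks a position to be filled with `-inf` before the softmax, and the default causal mask is the complement of a lower-triangular matrix. The plain-Python sketch below illustrates that convention on a 3x3 example; `causal_mask` and `masked_softmax_row` are hypothetical helper names, and scaling and dropout are omitted.

```python
import math


def causal_mask(size):
    """True above the diagonal: query i may not attend to key j > i."""
    return [[j > i for j in range(size)] for i in range(size)]


def masked_softmax_row(scores, masked):
    """Softmax over one row; positions with masked[i] == True get probability 0.

    Assumes at least one position in the row is left unmasked.
    """
    filled = [float("-inf") if m else s for s, m in zip(scores, masked)]
    peak = max(filled)                         # subtract the max for stability
    exps = [math.exp(v - peak) for v in filled]
    total = sum(exps)
    return [e / total for e in exps]


mask = causal_mask(3)
# Equal raw scores, so each query spreads its weight evenly over allowed keys.
probs = [masked_softmax_row([0.0, 0.0, 0.0], row) for row in mask]
```

The first query attends only to itself, the second splits its weight over two keys, and the third over all three. The PyTorch 2 branch inverts the mask before calling `scaled_dot_product_attention` because that function treats a boolean `True` as "may attend".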

|

| 311 |

+

|

| 312 |

+

|

| 313 |

+

class SelfAttention(torch.nn.Module):

|

| 314 |

+

"""Parallel self-attention layer abstract class.

|

| 315 |

+

|

| 316 |

+

Self-attention layer takes input with size [s, b, h]

|

| 317 |

+

and returns output of the same size.

|

| 318 |

+

"""

|

| 319 |

+

|

| 320 |

+

def __init__(self, config: ChatGLMConfig, layer_number, device=None):

|

| 321 |

+

super(SelfAttention, self).__init__()

|

| 322 |

+

self.layer_number = max(1, layer_number)

|

| 323 |

+

|

| 324 |

+

self.projection_size = config.kv_channels * config.num_attention_heads

|

| 325 |

+

|

| 326 |

+

# Per attention head and per partition values.

|

| 327 |

+

self.hidden_size_per_attention_head = self.projection_size // config.num_attention_heads

|

| 328 |

+

self.num_attention_heads_per_partition = config.num_attention_heads

|

| 329 |

+

|

| 330 |

+

self.multi_query_attention = config.multi_query_attention

|

| 331 |

+

self.qkv_hidden_size = 3 * self.projection_size

|

| 332 |

+

if self.multi_query_attention:

|

| 333 |

+

self.num_multi_query_groups_per_partition = config.multi_query_group_num

|

| 334 |

+

self.qkv_hidden_size = (

|

| 335 |

+

self.projection_size + 2 * self.hidden_size_per_attention_head * config.multi_query_group_num

|

| 336 |

+

)

|

| 337 |

+

self.query_key_value = nn.Linear(config.hidden_size, self.qkv_hidden_size,

|

| 338 |

+

bias=config.add_bias_linear or config.add_qkv_bias,

|

| 339 |

+

device=device, **_config_to_kwargs(config)

|

| 340 |

+

)

|

| 341 |

+

|

| 342 |

+

self.core_attention = CoreAttention(config, self.layer_number)

|

| 343 |

+

|

| 344 |

+

# Output.

|

| 345 |

+

self.dense = nn.Linear(self.projection_size, config.hidden_size, bias=config.add_bias_linear,

|

| 346 |

+

device=device, **_config_to_kwargs(config)

|

| 347 |

+

)
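Note on `qkv_hidden_size`: with multi-query attention enabled, the fused projection keeps a full-width query but only `multi_query_group_num` key and value heads, which shrinks the output width of `query_key_value`. The sketch below reproduces that arithmetic in plain Python. The numbers (32 heads, 128 channels per head, 2 groups) are illustrative assumptions, not values read from this commit's `config.json`, and the function name is hypothetical.

```python
def qkv_hidden_size(num_heads, kv_channels, multi_query, group_num=0):
    """Output width of the fused QKV projection, following SelfAttention.__init__.

    Hypothetical helper; argument values used below are assumptions.
    """
    projection_size = kv_channels * num_heads
    head_dim = projection_size // num_heads
    if multi_query:
        # Full-width query plus `group_num` key heads and `group_num` value heads.
        return projection_size + 2 * head_dim * group_num
    # Standard multi-head attention: query, key and value are all full width.
    return 3 * projection_size


full = qkv_hidden_size(32, 128, multi_query=False)
grouped = qkv_hidden_size(32, 128, multi_query=True, group_num=2)
```

Under these assumed sizes the projection width drops from 12288 to 4608, and the key/value cache allocated by `_allocate_memory` shrinks by the same head ratio (2 heads instead of 32).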

|

| 348 |

+

|

| 349 |

+

def _allocate_memory(self, inference_max_sequence_len, batch_size, device=None, dtype=None):

|

| 350 |

+

if self.multi_query_attention:

|

| 351 |

+

num_attention_heads = self.num_multi_query_groups_per_partition

|

| 352 |

+

else:

|

| 353 |

+

num_attention_heads = self.num_attention_heads_per_partition

|

| 354 |

+

return torch.empty(

|

| 355 |

+

inference_max_sequence_len,

|

| 356 |

+

batch_size,

|

| 357 |

+

num_attention_heads,

|

| 358 |

+

self.hidden_size_per_attention_head,

|

| 359 |

+

dtype=dtype,

|

| 360 |

+

device=device,

|

| 361 |

+

)

|

| 362 |

+

|

| 363 |

+

def forward(

|

| 364 |

+

self, hidden_states, attention_mask, rotary_pos_emb, kv_cache=None, use_cache=True

|

| 365 |

+

):

|

| 366 |

+

# hidden_states: [sq, b, h]

|

| 367 |

+

|

| 368 |

+

# =================================================

|

| 369 |

+

# Pre-allocate memory for key-values for inference.

|

| 370 |

+

# =================================================

|

| 371 |

+

# =====================

|

| 372 |

+

# Query, Key, and Value

|

| 373 |

+

# =====================

|

| 374 |

+

|

| 375 |

+