End of training

Browse files- README.md +3 -2

- all_results.json +22 -0

- eval_results.json +17 -0

- train_results.json +8 -0

- trainer_state.json +0 -0

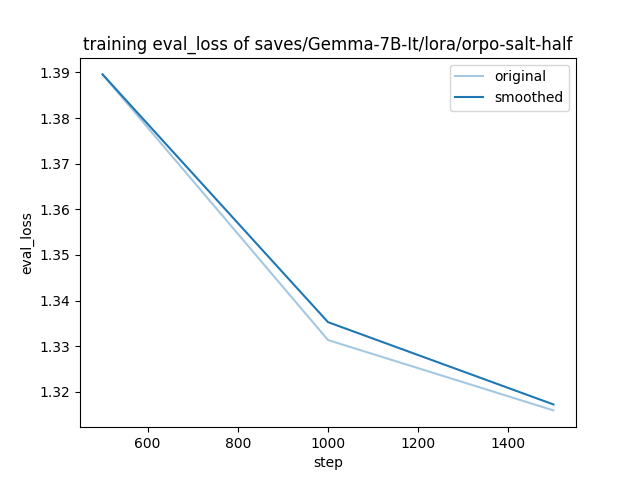

- training_eval_loss.png +0 -0

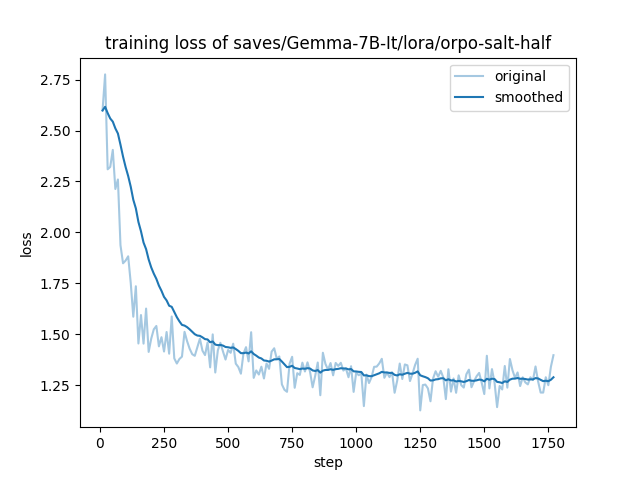

- training_loss.png +0 -0

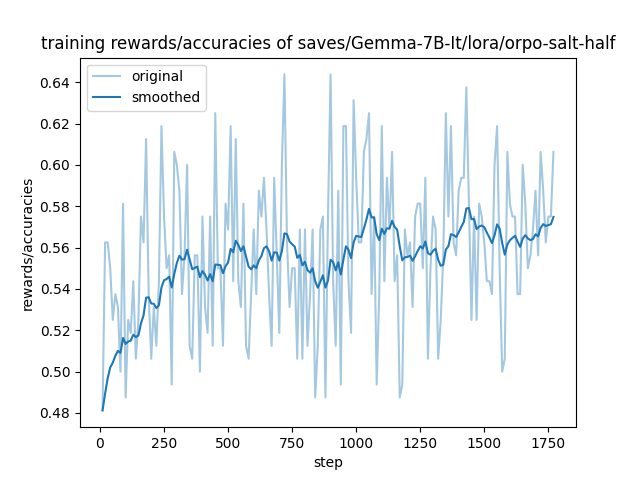

- training_rewards_accuracies.png +0 -0

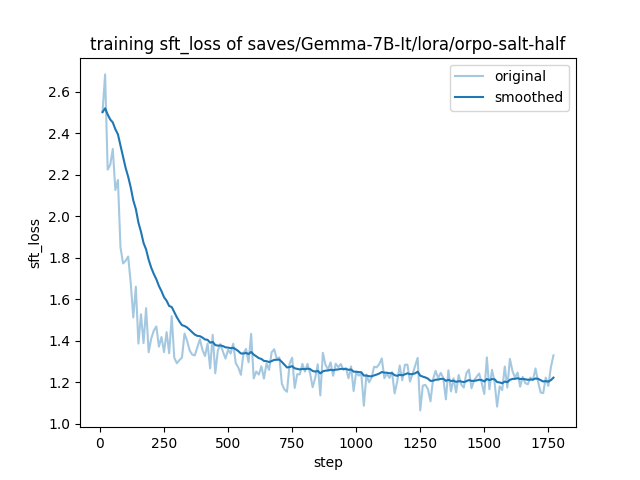

- training_sft_loss.png +0 -0

README.md

CHANGED

    @@ -2,9 +2,10 @@
     license: gemma
     library_name: peft
     tags:
    +- llama-factory
    +- lora
     - trl
     - dpo
    -- llama-factory
     - generated_from_trainer
     base_model: google/gemma-7b-it
     model-index:
    @@ -17,7 +18,7 @@ should probably proofread and complete it, then remove this comment. -->
     
     # Gemma-7B-It-ORPO-SALT-HALF
     
    -This model is a fine-tuned version of [google/gemma-7b-it](https://huggingface.co/google/gemma-7b-it) on the
    +This model is a fine-tuned version of [google/gemma-7b-it](https://huggingface.co/google/gemma-7b-it) on the dpo_mix_en and the bct_non_cot_dpo_500 datasets.
     It achieves the following results on the evaluation set:
     - Loss: 1.3159
     - Rewards/chosen: -0.1249

all_results.json

ADDED

    @@ -0,0 +1,22 @@
    +{
    +    "epoch": 2.9974597798475866,
    +    "eval_logits/chosen": 253.5438995361328,
    +    "eval_logits/rejected": 253.86448669433594,
    +    "eval_logps/chosen": -1.2487919330596924,
    +    "eval_logps/rejected": -1.470909833908081,
    +    "eval_loss": 1.3159173727035522,
    +    "eval_odds_ratio_loss": 0.6712530851364136,
    +    "eval_rewards/accuracies": 0.561904788017273,
    +    "eval_rewards/chosen": -0.12487921118736267,
    +    "eval_rewards/margins": 0.02221176214516163,
    +    "eval_rewards/rejected": -0.14709095656871796,
    +    "eval_runtime": 222.5011,
    +    "eval_samples_per_second": 4.719,
    +    "eval_sft_loss": 1.2487919330596924,
    +    "eval_steps_per_second": 2.36,
    +    "total_flos": 2.135641671600046e+18,
    +    "train_loss": 1.3953795637788071,
    +    "train_runtime": 23493.5825,
    +    "train_samples_per_second": 1.206,
    +    "train_steps_per_second": 0.075
    +}
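
The evaluation figures in all_results.json can be cross-checked against each other. The sketch below is a reviewer's sanity check, not part of the commit. It assumes the total loss is the SFT loss plus a weighted odds-ratio term, and that each reward is the same weight times the matching log-probability. The weight `ASSUMED_BETA = 0.1` is inferred from the numbers themselves; the commit does not state it, and `check_consistency` is a hypothetical helper name.

```python
import math

# Values copied from all_results.json in this commit.
metrics = {
    "eval_logps/chosen": -1.2487919330596924,
    "eval_logps/rejected": -1.470909833908081,
    "eval_loss": 1.3159173727035522,
    "eval_odds_ratio_loss": 0.6712530851364136,
    "eval_rewards/chosen": -0.12487921118736267,
    "eval_rewards/margins": 0.02221176214516163,
    "eval_rewards/rejected": -0.14709095656871796,
    "eval_sft_loss": 1.2487919330596924,
}

# Assumption: this weight is inferred from the figures, not stated in the commit.
ASSUMED_BETA = 0.1


def check_consistency(m, beta=ASSUMED_BETA, tol=1e-6):
    """Return a dict of named checks, each True when the relation holds within tol."""

    def close(a, b):
        return math.isclose(a, b, abs_tol=tol)

    return {
        # margins = rewards/chosen - rewards/rejected
        "margin": close(
            m["eval_rewards/chosen"] - m["eval_rewards/rejected"],
            m["eval_rewards/margins"],
        ),
        # assumed: loss = sft_loss + beta * odds_ratio_loss
        "loss": close(
            m["eval_sft_loss"] + beta * m["eval_odds_ratio_loss"],
            m["eval_loss"],
        ),
        # assumed: reward = beta * log-probability
        "reward_chosen": close(beta * m["eval_logps/chosen"], m["eval_rewards/chosen"]),
        "reward_rejected": close(
            beta * m["eval_logps/rejected"], m["eval_rewards/rejected"]
        ),
        # sft_loss is the negated chosen log-probability
        "sft": close(-m["eval_logps/chosen"], m["eval_sft_loss"]),
    }


if __name__ == "__main__":
    for name, ok in check_consistency(metrics).items():
        print(f"{name}: {'ok' if ok else 'MISMATCH'}")
```

If any check reports a mismatch after a later retrain, the assumed weight or the metric definitions have probably changed and the assumptions above should be revisited.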

eval_results.json

ADDED

    @@ -0,0 +1,17 @@
    +{
    +    "epoch": 2.9974597798475866,
    +    "eval_logits/chosen": 253.5438995361328,
    +    "eval_logits/rejected": 253.86448669433594,
    +    "eval_logps/chosen": -1.2487919330596924,
    +    "eval_logps/rejected": -1.470909833908081,
    +    "eval_loss": 1.3159173727035522,
    +    "eval_odds_ratio_loss": 0.6712530851364136,
    +    "eval_rewards/accuracies": 0.561904788017273,
    +    "eval_rewards/chosen": -0.12487921118736267,
    +    "eval_rewards/margins": 0.02221176214516163,
    +    "eval_rewards/rejected": -0.14709095656871796,
    +    "eval_runtime": 222.5011,
    +    "eval_samples_per_second": 4.719,
    +    "eval_sft_loss": 1.2487919330596924,
    +    "eval_steps_per_second": 2.36
    +}
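
The throughput fields in eval_results.json allow a rough estimate of the evaluation set size. The sketch below multiplies the runtime by the two logged rates and rounds. Because the rates are logged to three decimals, the counts are estimates rather than figures stated in the commit, and `estimate_eval_counts` is a hypothetical helper name.

```python
# Values copied from eval_results.json in this commit.
EVAL_RUNTIME_S = 222.5011
EVAL_SAMPLES_PER_SECOND = 4.719
EVAL_STEPS_PER_SECOND = 2.36
EVAL_REWARD_ACCURACY = 0.561904788017273


def estimate_eval_counts(runtime_s, samples_per_s, steps_per_s):
    """Estimate (samples, steps) from runtime and the rounded logged rates."""
    samples = round(runtime_s * samples_per_s)
    steps = round(runtime_s * steps_per_s)
    return samples, steps


if __name__ == "__main__":
    samples, steps = estimate_eval_counts(
        EVAL_RUNTIME_S, EVAL_SAMPLES_PER_SECOND, EVAL_STEPS_PER_SECOND
    )
    print(f"estimated eval samples: {samples}")
    print(f"estimated eval steps:   {steps}")
    print(f"estimated samples/step: {samples / steps:.2f}")
    # Number of pairs where the chosen response scored higher, under the estimate.
    print(f"estimated correct pairs: {EVAL_REWARD_ACCURACY * samples:.1f}")
```

Under these estimates, the reported accuracy lands very close to a whole number of correctly ranked pairs, which suggests the sample estimate is about right.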

train_results.json

ADDED

    @@ -0,0 +1,8 @@
    +{
    +    "epoch": 2.9974597798475866,
    +    "total_flos": 2.135641671600046e+18,
    +    "train_loss": 1.3953795637788071,
    +    "train_runtime": 23493.5825,
    +    "train_samples_per_second": 1.206,
    +    "train_steps_per_second": 0.075
    +}
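
For a quick read of train_results.json, the runtime and rate fields can be turned into wall-clock hours and approximate totals. This is a rough sketch: the step rate is logged to three decimals, so the derived step count and samples-per-step ratio are approximate, and `summarize_training` is a hypothetical helper name rather than anything from the training code.

```python
# Values copied from train_results.json in this commit.
train_results = {
    "epoch": 2.9974597798475866,
    "total_flos": 2.135641671600046e18,
    "train_loss": 1.3953795637788071,
    "train_runtime": 23493.5825,
    "train_samples_per_second": 1.206,
    "train_steps_per_second": 0.075,
}


def summarize_training(r):
    """Derive hours, approximate totals, and samples per optimizer step."""
    runtime_s = r["train_runtime"]
    samples = runtime_s * r["train_samples_per_second"]
    steps = runtime_s * r["train_steps_per_second"]
    return {
        "hours": runtime_s / 3600,
        "approx_samples": samples,
        "approx_steps": steps,
        # Ratio of the two logged rates; a proxy for the effective batch size.
        "approx_samples_per_step": samples / steps,
    }


if __name__ == "__main__":
    for key, value in summarize_training(train_results).items():
        print(f"{key}: {value:.2f}")
```

The samples-per-step ratio is only a proxy for the effective batch size; the actual batch and gradient-accumulation settings would need to be read from the training configuration, which is not part of this commit view.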

trainer_state.json

ADDED

The diff for this file is too large to render.

training_eval_loss.png

ADDED

training_loss.png

ADDED

training_rewards_accuracies.png

ADDED

training_sft_loss.png

ADDED
