Eugene Siow

committed on

Commit

·

7490281

1

Parent(s):

25c0a21

Initial commit.

- README.md +135 -0

- config.json +6 -0

- images/a2n_2_4_compare.png +0 -0

- images/a2n_4_4_compare.png +0 -0

- pytorch_model_4x.pt +3 -0

README.md

ADDED


@@ -0,0 +1,135 @@


---
license: apache-2.0
tags:
- super-image
- image-super-resolution
datasets:
- div2k
metrics:
- psnr
- ssim
---

|

| 12 |

+

# Attention in Attention Network for Image Super-Resolution (A2N)

|

| 13 |

+

A2N model pre-trained on DIV2K (800 images training, augmented to 4000 images, 100 images validation) for 2x, 3x and 4x image super resolution. It was introduced in the paper [Attention in Attention Network for Image Super-Resolution](https://arxiv.org/abs/2104.09497) by Chen et al. (2021) and first released in [this repository](https://github.com/haoyuc/A2N).

|

| 14 |

+

|

| 15 |

+

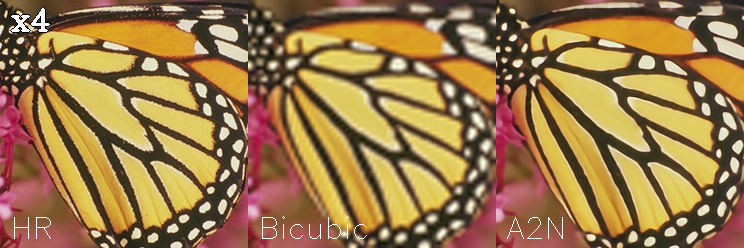

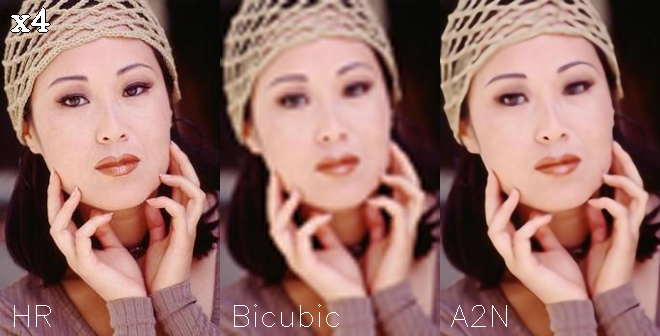

The goal of image super resolution is to restore a high resolution (HR) image from a single low resolution (LR) image. The image below shows the ground truth (HR), the bicubic upscaling x2 and model upscaling x2.

|

| 16 |

+

|

| 17 |

+

|

| 18 |

+

## Model description

|

| 19 |

+

The A2N model proposes an attention in attention network (A2N) for highly accurate image SR. Specifically, the A2N consists of a non-attention branch and a coupling attention branch. Attention dropout module is proposed to generate dynamic attention weights for these two branches based on input features that can suppress unwanted attention adjustments. This allows attention modules to specialize to beneficial examples without otherwise penalties and thus greatly improve the capacity of the attention network with little parameter overhead.

|

| 20 |

+

|

| 21 |

+

More importantly the model is lightweight and fast to train (~1.5m parameters, ~4mb).

## Intended uses & limitations
You can use the pre-trained models to upscale your images 2x, 3x and 4x. You can also use the trainer to train a model on your own dataset.
### How to use
The model can be used with the [super_image](https://github.com/eugenesiow/super-image) library:
```bash
pip install super-image
```
Here is how to use a pre-trained model to upscale your image:

```python
from super_image import A2nModel, ImageLoader
from PIL import Image
import requests

# download a sample low resolution image
url = 'https://paperswithcode.com/media/datasets/Set5-0000002728-07a9793f_zA3bDjj.jpg'
image = Image.open(requests.get(url, stream=True).raw)

model = A2nModel.from_pretrained('eugenesiow/a2n', scale=2)      # scale 2, 3 and 4 models available
inputs = ImageLoader.load_image(image)
preds = model(inputs)

ImageLoader.save_image(preds, './scaled_2x.png')                        # save the output 2x scaled image to `./scaled_2x.png`
ImageLoader.save_compare(inputs, preds, './scaled_2x_compare.png')      # save an output comparing the super-image with a bicubic scaling
```

## Training data
The models for 2x, 3x and 4x image super resolution were pretrained on [DIV2K](https://data.vision.ee.ethz.ch/cvl/DIV2K/), a dataset of 800 high-quality (2K resolution) training images, augmented to 4000 images, with a dev set of 100 validation images (images numbered 801 to 900).
## Training procedure
### Preprocessing
We follow the pre-processing and training method of [Wang et al.](https://arxiv.org/abs/2104.07566).
Low Resolution (LR) images are created by using bicubic interpolation as the resizing method to reduce the size of the High Resolution (HR) images by factors of 2, 3 and 4.
During training, RGB patches of size 64×64 from the LR input are used together with their corresponding HR patches.
Data augmentation is applied to the training set in the pre-processing stage, where five images are created from the four corners and center of each original image (800 × 5 = 4000 images).

The following code provides some helper functions to preprocess the data.

```python
from super_image.data import EvalDataset, TrainAugmentDataset, DatasetBuilder

# build the augmented 4x training set from the DIV2K HR training images
DatasetBuilder.prepare(
    base_path='./DIV2K/DIV2K_train_HR',
    output_path='./div2k_4x_train.h5',
    scale=4,
    do_augmentation=True
)
# build the 4x validation set (no augmentation)
DatasetBuilder.prepare(
    base_path='./DIV2K/DIV2K_val_HR',
    output_path='./div2k_4x_val.h5',
    scale=4,
    do_augmentation=False
)
train_dataset = TrainAugmentDataset('./div2k_4x_train.h5', scale=4)
val_dataset = EvalDataset('./div2k_4x_val.h5')
```
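
The corner-and-center cropping and the LR/HR patch pairing described in the preprocessing steps can be sketched with plain coordinate arithmetic. This is a minimal illustration, not the `super_image` implementation: `five_crop_boxes` and `hr_patch_box` are hypothetical helper names, and the crop size and example image size are assumptions, since the text does not specify them.

```python
def five_crop_boxes(width, height, crop_w, crop_h):
    """Return (left, upper, right, lower) boxes for the four corners and the center."""
    if crop_w > width or crop_h > height:
        raise ValueError("crop size must fit inside the image")
    max_x = width - crop_w    # left edge of the right-hand crops
    max_y = height - crop_h   # top edge of the bottom crops
    origins = [
        (0, 0),                    # top-left corner
        (max_x, 0),                # top-right corner
        (0, max_y),                # bottom-left corner
        (max_x, max_y),            # bottom-right corner
        (max_x // 2, max_y // 2),  # center
    ]
    return [(x, y, x + crop_w, y + crop_h) for x, y in origins]


def hr_patch_box(lr_x, lr_y, scale, lr_patch=64):
    """Map the origin of an LR patch to the box of its corresponding HR patch."""
    return (
        lr_x * scale,
        lr_y * scale,
        (lr_x + lr_patch) * scale,
        (lr_y + lr_patch) * scale,
    )


# Assumed example: a 100x80 image cropped into five 50x40 pieces.
boxes = five_crop_boxes(100, 80, 50, 40)

# Five crops per training image turns 800 images into 4000.
augmented_count = 800 * len(boxes)

# A 64x64 LR patch at (10, 20) pairs with a 256x256 HR patch at 4x.
hr_box = hr_patch_box(10, 20, scale=4)
```

The boxes use the `(left, upper, right, lower)` convention, so they could be passed to an image library's crop call if one is available.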

### Pretraining
The model was trained on GPU. The training code is provided below:

```python
from super_image import Trainer, TrainingArguments, A2nModel, A2nConfig

training_args = TrainingArguments(
    output_dir='./results',                 # output directory
    num_train_epochs=1000,                  # total number of training epochs
)

config = A2nConfig(
    scale=4,                                # train a model to upscale 4x
)
model = A2nModel(config)

# train_dataset and val_dataset are the datasets built in the preprocessing step
trainer = Trainer(
    model=model,                         # the instantiated model to be trained
    args=training_args,                  # training arguments, defined above
    train_dataset=train_dataset,         # training dataset
    eval_dataset=val_dataset             # evaluation dataset
)

trainer.train()
```

## Evaluation results
The evaluation metrics include [PSNR](https://en.wikipedia.org/wiki/Peak_signal-to-noise_ratio#Quality_estimation_with_PSNR) and [SSIM](https://en.wikipedia.org/wiki/Structural_similarity#Algorithm).
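
For reference, PSNR follows directly from the mean squared error between two images: PSNR = 10 · log10(MAX² / MSE). The sketch below computes it over flat lists of pixel values. `psnr` is an illustrative helper, not a `super_image` function, and it assumes 8-bit pixels (`max_value=255`). Benchmark numbers in super resolution work are often computed on the luminance channel after cropping image borders, which this sketch does not do.

```python
import math


def psnr(reference, estimate, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(reference) != len(estimate) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and the same length")
    # mean squared error over all pixels
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# Every pixel off by 10 gives MSE = 100.
value = psnr([100, 100, 100, 100], [110, 110, 110, 110])
print(f"{value:.2f} dB")
```

Higher values mean the estimate is closer to the reference, and identical inputs give infinity.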

Evaluation datasets include:
- Set5 - [Bevilacqua et al. (2012)](http://people.rennes.inria.fr/Aline.Roumy/results/SR_BMVC12.html)
- Set14 - [Zeyde et al. (2010)](https://sites.google.com/site/romanzeyde/research-interests)
- BSD100 - [Martin et al. (2001)](https://www.eecs.berkeley.edu/Research/Projects/CS/vision/bsds/)
- Urban100 - [Huang et al. (2015)](https://sites.google.com/site/jbhuang0604/publications/struct_sr)

The result columns below are formatted as `PSNR/SSIM` and are compared against a bicubic baseline.

|Dataset |Scale |Bicubic |a2n |
|--- |--- |--- |--- |
|Set5 |2x |33.64/0.9292 | |
|Set5 |3x |30.39/0.8678 | |
|Set5 |4x |28.42/0.8101 |**32.07/0.8933** |
|Set14 |2x |30.22/0.8683 | |
|Set14 |3x |27.53/0.7737 | |
|Set14 |4x |25.99/0.7023 |**28.56/0.7801** |
|BSD100 |2x |29.55/0.8425 | |
|BSD100 |3x |27.20/0.7382 | |
|BSD100 |4x |25.96/0.6672 |**27.54/0.7342** |
|Urban100 |2x |26.66/0.8408 | |
|Urban100 |3x | | |
|Urban100 |4x |23.14/0.6573 |**25.89/0.7787** |
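
To read the table as an improvement over the baseline, the `PSNR/SSIM` cells can be split and subtracted. The sketch below does this for the 4x rows, the only rows with model results. The values are copied from the table; `parse_cell` and `psnr_gain` are hypothetical helper names used only for this illustration.

```python
# PSNR/SSIM cells copied from the 4x rows of the results table
results_4x = {
    "Set5": {"bicubic": "28.42/0.8101", "model": "32.07/0.8933"},
    "Set14": {"bicubic": "25.99/0.7023", "model": "28.56/0.7801"},
    "BSD100": {"bicubic": "25.96/0.6672", "model": "27.54/0.7342"},
    "Urban100": {"bicubic": "23.14/0.6573", "model": "25.89/0.7787"},
}


def parse_cell(cell):
    """Split a 'PSNR/SSIM' cell into a (psnr, ssim) pair of floats."""
    psnr_text, ssim_text = cell.split("/")
    return float(psnr_text), float(ssim_text)


def psnr_gain(row):
    """PSNR improvement of the model over bicubic, in dB, rounded to 2 places."""
    model_psnr, _ = parse_cell(row["model"])
    bicubic_psnr, _ = parse_cell(row["bicubic"])
    return round(model_psnr - bicubic_psnr, 2)


gains = {name: psnr_gain(row) for name, row in results_4x.items()}
for name, gain in gains.items():
    print(f"{name}: +{gain:.2f} dB PSNR over bicubic")
```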


## BibTeX entry and citation info
```bibtex
@misc{chen2021attention,
      title={Attention in Attention Network for Image Super-Resolution},
      author={Haoyu Chen and Jinjin Gu and Zhi Zhang},
      year={2021},
      eprint={2104.09497},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}
```

config.json

ADDED


@@ -0,0 +1,6 @@


{
  "_name_or_path": "eugenesiow/a2n",
  "data_parallel": false,
  "model_type": "A2N",
  "supported_scales": [2, 3, 4]
}
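
As an illustration of how a field such as `supported_scales` might be consumed, the sketch below loads the JSON above and rejects a scale that is not listed. `check_scale` is a hypothetical helper. How `super_image` itself reads this file is not shown in this commit, so treat this only as one plausible use.

```python
import json

# Contents of config.json as added in this commit
CONFIG_TEXT = """
{
  "_name_or_path": "eugenesiow/a2n",
  "data_parallel": false,
  "model_type": "A2N",
  "supported_scales": [2, 3, 4]
}
"""


def check_scale(config, scale):
    """Return the scale if the config lists it, otherwise raise ValueError."""
    supported = config.get("supported_scales", [])
    if scale not in supported:
        raise ValueError(f"scale {scale} is not one of {supported}")
    return scale


config = json.loads(CONFIG_TEXT)
chosen = check_scale(config, 4)  # accepted: 4 is listed
```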

images/a2n_2_4_compare.png

ADDED


images/a2n_4_4_compare.png

ADDED


pytorch_model_4x.pt

ADDED


@@ -0,0 +1,3 @@


version https://git-lfs.github.com/spec/v1
oid sha256:a46036b232ed1afa303d176a374d73779c3dad2966ba1463ed6a2426081d42b4
size 4258525
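
The three lines above are a Git LFS pointer, not the weights themselves: each line is a key followed by a space and a value. A small sketch of reading such a pointer and converting the recorded size to megabytes follows. `parse_lfs_pointer` is a hypothetical helper written for this example, and the megabyte figure uses 1 MB = 1,000,000 bytes.

```python
# Pointer file contents as added in this commit
POINTER_TEXT = """version https://git-lfs.github.com/spec/v1
oid sha256:a46036b232ed1afa303d176a374d73779c3dad2966ba1463ed6a2426081d42b4
size 4258525
"""


def parse_lfs_pointer(text):
    """Parse 'key value' lines of a Git LFS pointer into a dict."""
    fields = {}
    for line in text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        key, _, value = line.partition(" ")
        fields[key] = value
    fields["size"] = int(fields["size"])  # size is recorded in bytes
    return fields


pointer = parse_lfs_pointer(POINTER_TEXT)
size_mb = pointer["size"] / 1_000_000
print(f"{size_mb:.2f} MB")
```

The recorded size is in line with the roughly 4 MB figure quoted in the model description.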