Create README.md

README.md

ADDED

@@ -0,0 +1,86 @@

---
license: apache-2.0
tags:
- object-detection
- vision
datasets:
- coco
widget:
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/savanna.jpg
  example_title: Savanna
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/football-match.jpg
  example_title: Football Match
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/airport.jpg
  example_title: Airport
---

# Deformable DETR model trained using the Detic method on LVIS

|

| 18 |

+

|

| 19 |

+

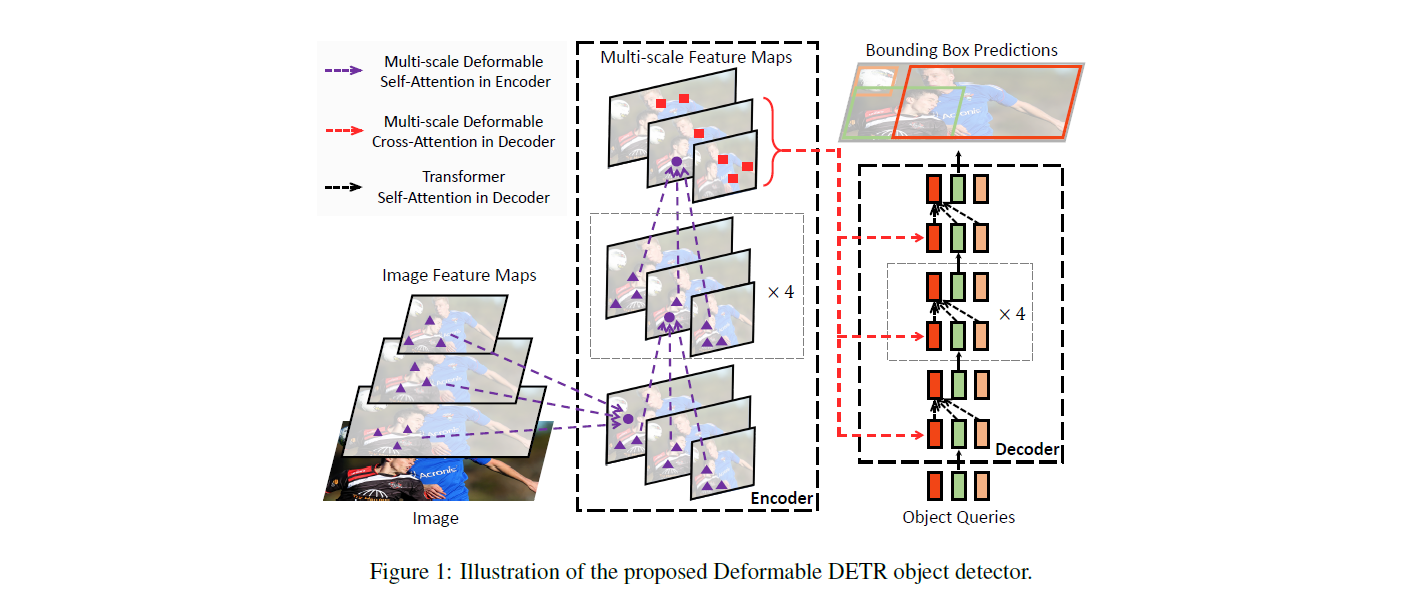

Deformable DEtection TRansformer (DETR) model trained on LVIS, which covers 1203 classes. It was introduced in the paper [Detecting Twenty-thousand Classes using Image-level Supervision](https://arxiv.org/abs/2201.02605) by Zhou et al. and first released in [this repository](https://github.com/facebookresearch/Detic).

This model corresponds to the "Detic_DeformDETR_R50_4x" checkpoint released in the original repository.

Disclaimer: The team releasing Detic did not write a model card for this model, so this model card has been written by the Hugging Face team.

## Model description

The DETR model is an encoder-decoder transformer with a convolutional backbone. Two heads are added on top of the decoder outputs to perform object detection: a linear layer for the class labels and an MLP (multi-layer perceptron) for the bounding boxes. The model uses so-called object queries to detect objects in an image. Each object query looks for a particular object in the image. For COCO, the number of object queries is set to 100.

The model is trained using a "bipartite matching loss": the predicted classes and bounding boxes of each of the N = 100 object queries are compared to the ground truth annotations, padded up to the same length N (so if an image contains only 4 objects, the remaining 96 annotations have "no object" as class and "no bounding box" as bounding box). The Hungarian matching algorithm is used to create an optimal one-to-one mapping between the N queries and the N annotations. Next, standard cross-entropy (for the classes) and a linear combination of the L1 and generalized IoU losses (for the bounding boxes) are used to optimize the parameters of the model.
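
The padding and one-to-one matching step can be illustrated with a minimal sketch in plain Python. It is not the training code of this model: a brute-force search over permutations stands in for the Hungarian algorithm (it finds the same optimum but is only practical for a handful of queries), and the cost function, the `NO_OBJECT` marker, and the example labels are assumptions made for illustration.

```python
from itertools import permutations

NO_OBJECT = None  # hypothetical marker for a padded "no object" annotation


def l1(box_a, box_b):
    """L1 distance between two boxes given as (cx, cy, w, h) tuples."""
    return sum(abs(a - b) for a, b in zip(box_a, box_b))


def pair_cost(pred, target):
    """Illustrative cost of assigning one prediction to one annotation."""
    if target is NO_OBJECT:
        return 0.0  # padded slots cost the same for every query
    label, box = target
    # Reward a high probability for the true class, penalise box distance.
    return -pred["probs"][label] + l1(pred["box"], box)


def match(preds, targets):
    """Return the one-to-one assignment with the lowest total cost.

    Assumes there are at least as many queries as annotated objects.
    """
    # Pad the annotations with "no object" up to the number of queries.
    padded = list(targets) + [NO_OBJECT] * (len(preds) - len(targets))
    best_cost, best_perm = float("inf"), None
    for perm in permutations(range(len(padded))):
        cost = sum(pair_cost(preds[q], padded[t]) for q, t in enumerate(perm))
        if cost < best_cost:
            best_cost, best_perm = cost, perm
    # Map each query index to its matched annotation (or NO_OBJECT).
    return {q: padded[t] for q, t in enumerate(best_perm)}


# Three queries, two annotated objects: one query must match "no object".
preds = [
    {"probs": {"cat": 0.1, "remote": 0.8}, "box": (0.2, 0.2, 0.1, 0.1)},
    {"probs": {"cat": 0.9, "remote": 0.05}, "box": (0.5, 0.5, 0.4, 0.4)},
    {"probs": {"cat": 0.3, "remote": 0.3}, "box": (0.9, 0.9, 0.1, 0.1)},
]
targets = [
    ("cat", (0.5, 0.5, 0.4, 0.4)),
    ("remote", (0.2, 0.2, 0.1, 0.1)),
]

assignment = match(preds, targets)
for query, target in assignment.items():
    print(f"query {query} -> {target}")
```

Once every query has a matched annotation, the classification and box losses described above are computed per matched pair. A polynomial-time solver for the assignment problem would replace the permutation loop when N is as large as 100.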

## Intended uses & limitations

You can use the raw model for object detection. See the [model hub](https://huggingface.co/models?search=sensetime/deformable-detr) to look for all available Deformable DETR models.

### How to use

Here is how to use this model:

```python
from transformers import AutoImageProcessor, DeformableDetrForObjectDetection
import torch
from PIL import Image
import requests

url = "http://images.cocodataset.org/val2017/000000039769.jpg"
image = Image.open(requests.get(url, stream=True).raw)

processor = AutoImageProcessor.from_pretrained("facebook/deformable-detr-detic")
model = DeformableDetrForObjectDetection.from_pretrained("facebook/deformable-detr-detic")

inputs = processor(images=image, return_tensors="pt")
outputs = model(**inputs)

# convert outputs (bounding boxes and class logits) to COCO API
# let's only keep detections with score > 0.7
target_sizes = torch.tensor([image.size[::-1]])
results = processor.post_process_object_detection(outputs, target_sizes=target_sizes, threshold=0.7)[0]

for score, label, box in zip(results["scores"], results["labels"], results["boxes"]):
    box = [round(i, 2) for i in box.tolist()]
    print(
        f"Detected {model.config.id2label[label.item()]} with confidence "
        f"{round(score.item(), 3)} at location {box}"
    )
```
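
In the snippet above, `target_sizes` is built from `image.size[::-1]` because PIL reports `(width, height)` while the post-processing step expects `(height, width)`. The sketch below shows, in plain Python, the kind of box rescaling that step performs, assuming the model predicts boxes as normalized `(center_x, center_y, width, height)`. The helper `to_absolute_corners` is hypothetical and not part of the `transformers` API.

```python
def to_absolute_corners(box, image_height, image_width):
    """Convert a normalized (cx, cy, w, h) box to absolute pixel corners.

    Returns (x_min, y_min, x_max, y_max) in pixels.
    """
    cx, cy, w, h = box
    return (
        (cx - w / 2) * image_width,
        (cy - h / 2) * image_height,
        (cx + w / 2) * image_width,
        (cy + h / 2) * image_height,
    )


# A box centred in a 640x480 image, covering half of each dimension.
corners = to_absolute_corners((0.5, 0.5, 0.5, 0.5), image_height=480, image_width=640)
print(corners)
```

Passing the sizes in the wrong order would swap the horizontal and vertical scale factors and distort every box on a non-square image.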

## Evaluation results

This model achieves 32.5 box mAP and 26.2 mAP (rare classes) on LVIS.

### BibTeX entry and citation info

```bibtex
@misc{https://doi.org/10.48550/arxiv.2010.04159,
  doi = {10.48550/ARXIV.2010.04159},
  url = {https://arxiv.org/abs/2010.04159},
  author = {Zhu, Xizhou and Su, Weijie and Lu, Lewei and Li, Bin and Wang, Xiaogang and Dai, Jifeng},
  keywords = {Computer Vision and Pattern Recognition (cs.CV), FOS: Computer and information sciences},
  title = {Deformable DETR: Deformable Transformers for End-to-End Object Detection},
  publisher = {arXiv},
  year = {2020},
  copyright = {arXiv.org perpetual, non-exclusive license}
}
```
