Update README.md

README.md

CHANGED

|

@@ -1,199 +1,180 @@

|

|

| 1 |

---

|

| 2 |

-

|

| 3 |

-

|

| 4 |

---

|

| 5 |

|

| 6 |

-

# Model Card for Model ID

|

| 7 |

-

|

| 8 |

-

<!-- Provide a quick summary of what the model is/does. -->

|

| 9 |

-

|

| 10 |

-

|

| 11 |

-

|

| 12 |

## Model Details

|

| 13 |

|

| 14 |

-

|

| 15 |

-

|

| 16 |

-

<!-- Provide a longer summary of what this model is. -->

|

| 17 |

-

|

| 18 |

-

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

|

| 19 |

-

|

| 20 |

-

- **Developed by:** [More Information Needed]

|

| 21 |

-

- **Funded by [optional]:** [More Information Needed]

|

| 22 |

-

- **Shared by [optional]:** [More Information Needed]

|

| 23 |

-

- **Model type:** [More Information Needed]

|

| 24 |

-

- **Language(s) (NLP):** [More Information Needed]

|

| 25 |

-

- **License:** [More Information Needed]

|

| 26 |

-

- **Finetuned from model [optional]:** [More Information Needed]

|

| 27 |

-

|

| 28 |

-

### Model Sources [optional]

|

| 29 |

-

|

| 30 |

-

<!-- Provide the basic links for the model. -->

|

| 31 |

-

|

| 32 |

-

- **Repository:** [More Information Needed]

|

| 33 |

-

- **Paper [optional]:** [More Information Needed]

|

| 34 |

-

- **Demo [optional]:** [More Information Needed]

|

| 35 |

-

|

| 36 |

-

## Uses

|

| 37 |

-

|

| 38 |

-

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

|

| 39 |

-

|

| 40 |

-

### Direct Use

|

| 41 |

-

|

| 42 |

-

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

|

| 43 |

-

|

| 44 |

-

[More Information Needed]

|

| 45 |

|

| 46 |

-

|

| 47 |

|

| 48 |

-

|

| 49 |

|

| 50 |

-

|

| 51 |

|

| 52 |

-

|

| 53 |

|

| 54 |

-

|

| 55 |

|

| 56 |

-

|

| 57 |

|

| 58 |

-

##

|

| 59 |

|

| 60 |

-

|

| 61 |

|

| 62 |

-

|

| 63 |

-

|

| 64 |

-

### Recommendations

|

| 65 |

-

|

| 66 |

-

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

|

| 67 |

-

|

| 68 |

-

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

|

| 69 |

|

| 70 |

## How to Get Started with the Model

|

| 71 |

|

| 72 |

-

|

| 73 |

-

|

| 74 |

-

|

| 75 |

-

|

| 76 |

-

|

| 77 |

-

|

| 78 |

-

|

| 79 |

-

|

| 80 |

-

|

| 81 |

-

|

| 82 |

-

|

| 83 |

-

|

| 84 |

-

|

| 85 |

-

|

| 86 |

-

|

| 87 |

-

|

| 88 |

-

|

| 89 |

-

|

| 90 |

-

|

| 91 |

-

|

| 92 |

-

|

| 93 |

-

|

| 94 |

-

|

| 95 |

-

|

| 96 |

|

| 97 |

-

|

| 98 |

|

| 99 |

-

|

| 100 |

|

| 101 |

-

|

| 102 |

|

| 103 |

-

|

| 104 |

|

| 105 |

-

|

| 106 |

|

| 107 |

-

|

| 108 |

|

| 109 |

-

|

| 110 |

|

| 111 |

-

|

| 112 |

|

| 113 |

-

|

| 114 |

|

| 115 |

-

|

| 116 |

|

| 117 |

-

|

| 118 |

|

| 119 |

-

|

| 120 |

|

| 121 |

-

|

| 122 |

|

| 123 |

-

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

|

| 124 |

|

| 125 |

-

|

| 126 |

-

|

| 127 |

-

### Results

|

| 128 |

-

|

| 129 |

-

[More Information Needed]

|

| 130 |

-

|

| 131 |

-

#### Summary

|

| 132 |

-

|

| 133 |

-

|

| 134 |

-

|

| 135 |

-

## Model Examination [optional]

|

| 136 |

-

|

| 137 |

-

<!-- Relevant interpretability work for the model goes here -->

|

| 138 |

-

|

| 139 |

-

[More Information Needed]

|

| 140 |

-

|

| 141 |

-

## Environmental Impact

|

| 142 |

-

|

| 143 |

-

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

|

| 144 |

-

|

| 145 |

-

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

|

| 146 |

-

|

| 147 |

-

- **Hardware Type:** [More Information Needed]

|

| 148 |

-

- **Hours used:** [More Information Needed]

|

| 149 |

-

- **Cloud Provider:** [More Information Needed]

|

| 150 |

-

- **Compute Region:** [More Information Needed]

|

| 151 |

-

- **Carbon Emitted:** [More Information Needed]

|

| 152 |

-

|

| 153 |

-

## Technical Specifications [optional]

|

| 154 |

-

|

| 155 |

-

### Model Architecture and Objective

|

| 156 |

-

|

| 157 |

-

[More Information Needed]

|

| 158 |

-

|

| 159 |

-

### Compute Infrastructure

|

| 160 |

-

|

| 161 |

-

[More Information Needed]

|

| 162 |

-

|

| 163 |

-

#### Hardware

|

| 164 |

-

|

| 165 |

-

[More Information Needed]

|

| 166 |

-

|

| 167 |

-

#### Software

|

| 168 |

-

|

| 169 |

-

[More Information Needed]

|

| 170 |

-

|

| 171 |

-

## Citation [optional]

|

| 172 |

-

|

| 173 |

-

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

|

| 174 |

|

| 175 |

**BibTeX:**

|

| 176 |

|

| 177 |

-

|

| 178 |

-

|

| 179 |

-

|

| 180 |

-

|

| 181 |

-

|

| 182 |

-

|

| 183 |

-

|

| 184 |

-

|

| 185 |

-

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

|

| 186 |

-

|

| 187 |

-

[More Information Needed]

|

| 188 |

-

|

| 189 |

-

## More Information [optional]

|

| 190 |

-

|

| 191 |

-

[More Information Needed]

|

| 192 |

|

| 193 |

-

##

|

| 194 |

|

| 195 |

-

[

|

| 196 |

|

| 197 |

-

|

| 198 |

|

| 199 |

-

[

|

| 1 |

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

datasets:

|

| 4 |

+

- jan-hq/instruction-speech-v1

|

| 5 |

+

language:

|

| 6 |

+

- en

|

| 7 |

+

tags:

|

| 8 |

+

- sound language model

|

| 9 |

---

|

| 10 |

|

| 11 |

## Model Details

|

| 12 |

|

| 13 |

+

We have developed and released the Llama-3-8B-Sound family of models. The family natively understands audio and text input.

|

| 14 |

|

| 15 |

+

We extend [Meta-Llama-3-8B-Instruct](https://huggingface.co/meta-llama/Meta-Llama-3-8B-Instruct) with sound understanding capabilities by continuing its training on the 700M-token [Instruction Speech v1](https://huggingface.co/datasets/Vi-VLM/Vista) dataset.

|

| 16 |

|

| 17 |

+

**Model developers** Homebrew Research.

|

| 18 |

|

| 19 |

+

**Input** Text and sound.

|

| 20 |

|

| 21 |

+

**Output** Text.

|

| 22 |

|

| 23 |

+

**Model Architecture** Llama-3.

|

| 24 |

|

| 25 |

+

**Language(s):** English.

|

| 26 |

|

| 27 |

+

## Intended Use

|

| 28 |

|

| 29 |

+

**Intended Use Cases** This family is primarily intended for research applications. This version aims to further improve the LLM's sound understanding capabilities.

|

| 30 |

|

| 31 |

+

**Out-of-scope** The use of Llama-3-Sound in any manner that violates applicable laws or regulations is strictly prohibited.

|

| 32 |

|

| 33 |

## How to Get Started with the Model

|

| 34 |

|

| 35 |

+

> TODO

|

| 36 |

+

|

| 37 |

+

## Training process

|

| 38 |

+

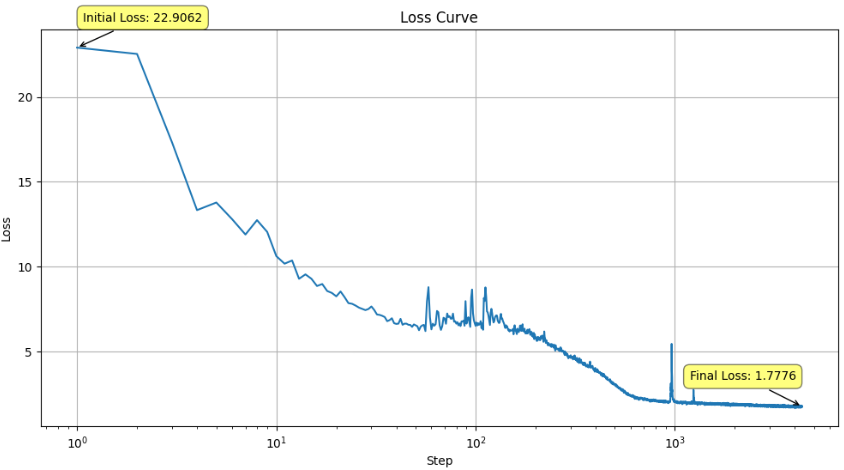

**Training Metrics Image**: Below is a snapshot of the training loss curve visualized.

|

| 39 |

+

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

### Hardware

|

| 43 |

+

|

| 44 |

+

**GPU Configuration**: Cluster of 8x NVIDIA H100-SXM-80GB.

|

| 45 |

+

**GPU Usage**:

|

| 46 |

+

- **Continual Training**: 8 hours.
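The hardware figures above allow a rough throughput estimate. The sketch below assumes that the full 700M-token dataset was consumed exactly once during the 8 hours of continual training and that all 8 GPUs were busy the whole time; neither assumption is confirmed by this card, so treat the result as an order-of-magnitude figure only.

```python
# Rough throughput estimate from the numbers quoted in this card.
# Assumption (not confirmed by the card): all 700M tokens were processed
# exactly once within the 8 hours, on all 8 GPUs.

TOTAL_TOKENS = 700_000_000  # "700M tokens" dataset size
NUM_GPUS = 8                # 8x NVIDIA H100-SXM-80GB
TRAINING_HOURS = 8          # continual training wall-clock time


def tokens_per_second(total_tokens: int, hours: float, num_gpus: int) -> tuple[float, float]:
    """Return (cluster-wide tokens/s, per-GPU tokens/s)."""
    seconds = hours * 3600
    cluster = total_tokens / seconds
    return cluster, cluster / num_gpus


cluster_tps, per_gpu_tps = tokens_per_second(TOTAL_TOKENS, TRAINING_HOURS, NUM_GPUS)
print(f"cluster: {cluster_tps:,.0f} tokens/s, per GPU: {per_gpu_tps:,.0f} tokens/s")
```

Under these assumptions the cluster processes roughly 24,300 tokens per second, or about 3,000 tokens per second per GPU.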

|

| 47 |

+

|

| 48 |

+

### Training Arguments

|

| 49 |

+

|

| 50 |

+

| Parameter | Continual Training |

|

| 51 |

+

|----------------------------|-------------------------|

|

| 52 |

+

| **Epoch** | 1 |

|

| 53 |

+

| **Global batch size** | 128 |

|

| 54 |

+

| **Learning Rate** | 5e-5 |

|

| 55 |

+

| **Learning Rate Scheduler** | Cosine with warmup |

|

| 56 |

+

| **Optimizer** | [Adam-mini](https://arxiv.org/abs/2406.16793) |

|

| 57 |

+

| **Warmup Ratio** | 0.1 |

|

| 58 |

+

| **Weight Decay** | 0.01 |

|

| 59 |

+

| **beta1** | 0.9 |

|

| 60 |

+

| **beta2** | 0.98 |

|

| 61 |

+

| **epsilon** | 1e-6 |

|

| 62 |

+

| **Gradient Clipping** | 1.0 |
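
To make the "Cosine with warmup" row concrete, here is a minimal sketch of such a schedule using the learning rate and warmup ratio from the table. The linear warmup shape, the decay to zero, and the `total_steps` value are assumptions for illustration; the card does not state them.

```python
import math

PEAK_LR = 5e-5       # "Learning Rate" from the table
WARMUP_RATIO = 0.1   # "Warmup Ratio" from the table


def lr_at_step(step: int, total_steps: int) -> float:
    """Linear warmup to PEAK_LR, then cosine decay to zero (assumed shape)."""
    warmup_steps = max(1, int(total_steps * WARMUP_RATIO))
    if step < warmup_steps:
        # Warmup phase: scale linearly from 0 up to the peak learning rate.
        return PEAK_LR * step / warmup_steps
    # Decay phase: half a cosine period from the peak down to zero.
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return PEAK_LR * 0.5 * (1.0 + math.cos(math.pi * progress))


# total_steps is a hypothetical value chosen only to show the curve's shape.
total_steps = 1000
for step in (0, 50, 100, 550, 1000):
    print(step, lr_at_step(step, total_steps))
```

With a warmup ratio of 0.1, the first 10% of optimizer steps ramp the learning rate up, and the remaining 90% follow the cosine decay.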

|

| 63 |

|

| 64 |

+

### Accelerate FSDP Config

|

| 65 |

|

| 66 |

+

```

|

| 67 |

+

compute_environment: LOCAL_MACHINE

|

| 68 |

+

debug: false

|

| 69 |

+

distributed_type: FSDP

|

| 70 |

+

downcast_bf16: 'no'

|

| 71 |

+

enable_cpu_affinity: true

|

| 72 |

+

fsdp_config:

|

| 73 |

+

  fsdp_activation_checkpointing: true

|

| 74 |

+

  fsdp_auto_wrap_policy: TRANSFORMER_BASED_WRAP

|

| 75 |

+

  fsdp_backward_prefetch: BACKWARD_PRE

|

| 76 |

+

  fsdp_cpu_ram_efficient_loading: true

|

| 77 |

+

  fsdp_forward_prefetch: false

|

| 78 |

+

  fsdp_offload_params: false

|

| 79 |

+

  fsdp_sharding_strategy: FULL_SHARD

|

| 80 |

+

  fsdp_state_dict_type: SHARDED_STATE_DICT

|

| 81 |

+

  fsdp_sync_module_states: true

|

| 82 |

+

  fsdp_use_orig_params: false

|

| 83 |

+

machine_rank: 0

|

| 84 |

+

main_training_function: main

|

| 85 |

+

mixed_precision: bf16

|

| 86 |

+

num_machines: 1

|

| 87 |

+

num_processes: 8

|

| 88 |

+

rdzv_backend: static

|

| 89 |

+

same_network: true

|

| 90 |

+

tpu_env: []

|

| 91 |

+

tpu_use_cluster: false

|

| 92 |

+

tpu_use_sudo: false

|

| 93 |

+

use_cpu: false

|

| 94 |

+

```
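The config above launches 8 processes (`num_processes: 8`), and the training arguments list a global batch size of 128. The sketch below works out what that implies per process. The split between micro-batch size and gradient accumulation steps is not stated in this card, so the function only enumerates the combinations that would be consistent with those two numbers.

```python
from __future__ import annotations


def accumulation_options(global_batch: int, num_processes: int) -> list[tuple[int, int]]:
    """List (micro_batch_size, grad_accum_steps) pairs that yield the global batch.

    global_batch = micro_batch_size * grad_accum_steps * num_processes
    """
    per_process, remainder = divmod(global_batch, num_processes)
    if remainder:
        raise ValueError("global batch must be divisible by the number of processes")
    return [
        (micro, per_process // micro)
        for micro in range(1, per_process + 1)
        if per_process % micro == 0
    ]


# 128 and 8 come from the card; which pair was actually used is unknown.
options = accumulation_options(global_batch=128, num_processes=8)
print(options)
```

Each process therefore contributes 16 samples per optimizer step, in whichever micro-batch and accumulation split fits in GPU memory.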

|

| 95 |

|

| 96 |

+

## Examples

|

| 97 |

|

| 98 |

+

1. Good example:

|

| 99 |

+

```

|

| 100 |

+

# Question:

|

| 101 |

+

Does the following review have a positive or negative opinion of the movie?

|

| 102 |

|

| 103 |

+

i thought the relationships were wonderful ,

|

| 104 |

+

Options: A). negative; B). positive;

|

| 105 |

|

| 106 |

+

# Expected Output:

|

| 107 |

+

The review has a B). positive opinion about the movie because they think the relationships were wonderful.

|

| 108 |

|

| 109 |

+

# Model's Output:

|

| 110 |

+

The review is positive.

|

| 111 |

+

```

|

| 112 |

|

| 113 |

+

2. Misunderstanding example:

|

| 114 |

+

```

|

| 115 |

+

# Question:

|

| 116 |

+

Translate the following sentence to Russian:

|

| 117 |

+

6 Work in JOBS and careers after 40

|

| 118 |

|

| 119 |

+

Russian:

|

| 120 |

|

| 121 |

+

# Expected Output:

|

| 122 |

+

6 Работать в РАБОТАХ и карьере после 40

|

| 123 |

|

| 124 |

+

Russian (Cyrillic): 6 Работать в РАБОТАХ и карьере после 40

|

| 125 |

|

| 126 |

+

Let me explain this to you like you're five years old: This sentence means that people can still work and have careers, even if they're older than 40 years old. The words are in a different language, called Russian.

|

| 127 |

+

# Model's Output:

|

| 128 |

+

В случае, когда вы хотите перевести фразу "The first step is to identify the language of the text." на русский язык, вы можете использовать следующую фразу: "Первый шаг - определить язык текста."

|

| 129 |

+

```

|

| 130 |

|

| 131 |

+

3. Off-track example:

|

| 132 |

+

```

|

| 133 |

+

# Question:

|

| 134 |

+

Answer the following question: - The roots of the growing plants start to break up the rock - The plant acids dissolve the rock - The rock is broken down into smaller pieces - Erosion begins. What might be the first step of the process?

|

| 135 |

+

Answer:

|

| 136 |

+

|

| 137 |

+

# Expected Output:

|

| 138 |

+

The first step of the process might be: The roots of the growing plants start to break up the rock.

|

| 139 |

+

|

| 140 |

+

# Model's Output:

|

| 141 |

+

Step 1: Identify the key elements in the premise and hypothesis.

|

| 142 |

+

|

| 143 |

+

Premise: "A man in a blue shirt and a red hat is holding a sign."

|

| 144 |

+

Key elements: man, blue shirt, red hat, holding, sign

|

| 145 |

+

|

| 146 |

+

Hypothesis: "A man is holding a sign."

|

| 147 |

+

Key elements: man, holding, sign

|

| 148 |

+

|

| 149 |

+

Step 2: Compare the key elements in the premise and hypothesis.

|

| 150 |

+

|

| 151 |

+

In the premise, we have a man wearing a blue shirt and a red hat, while in the hypothesis, we only have a man. The premise also mentions that the man is holding a sign, which is also mentioned in the hypothesis.

|

| 152 |

+

|

| 153 |

+

Step 3: Determine if the hypothesis is entailed by the premise.

|

| 154 |

+

|

| 155 |

+

Since the hypothesis states that a man is holding a sign, and the premise confirms that a man is holding a sign, we can conclude that the hypothesis is entailed by the premise. The additional information about the man's clothing in the premise does not contradict or negate the

|

| 156 |

+

```

|

| 157 |

+

|

| 158 |

+

Despite being undertrained, the model demonstrates an emerging grasp of sound-text semantics.

|

| 159 |

|

| 160 |

|

| 161 |

+

## Citation Information

|

| 162 |

|

| 163 |

**BibTeX:**

|

| 164 |

|

| 165 |

+

```

|

| 166 |

+

@article{Llama-3-Sound-2024,

|

| 167 |

+

  title={Llama-3-Sound},

|

| 168 |

+

  author={JanAI},

|

| 169 |

+

  year={2024},

|

| 170 |

+

  month={July},

|

| 171 |

+

  url={https://huggingface.co/jan-hq/llama-3-sound-init-checkpoint-4340}

}

|

| 172 |

+

```

|

| 173 |

|

| 174 |

+

## Acknowledgement

|

| 175 |

|

| 176 |

+

- **[WhisperSpeech]**

|

| 177 |

|

| 178 |

+

- **[Encodec]**

|

| 179 |

|

| 180 |

+

- **[Meta-Llama-3-8B-Instruct]**

|