---

pipeline_tag: object-detection

tags:

- bioinformatics

- metadata

- image analysis

- applied machine learning

- contrast enhancement

- detectron2

- fish

- images

- museum specimens

- metadata generation

- object detection

license: mit

---

# Model Card for Drexel Metadata Generator

This model was designed to generate metadata for images of museum fish specimens. In addition to the metadata and quality metrics produced by our initial model (detailed in [Automatic Metadata Generation for Fish Specimen Image Collections](https://ieeexplore.ieee.org/document/9651834), [bioRxiv](https://doi.org/10.1101/2021.10.04.463070)), this updated model also generates various geometric and statistical properties of the mask generated over the biological specimen presented. Some examples of the new analytical features include convex area, eccentricity, perimeter, and skew. The updates to our model further improve accuracy and reduce time and labor costs relative to manual metadata generation.

<!--

This modelcard aims to be a base template for new models. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/modelcard_template.md?plain=1). -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

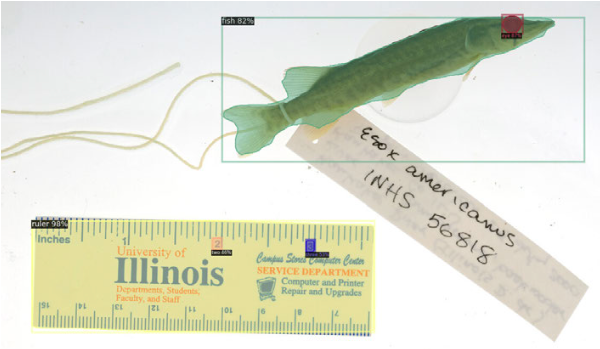

This model is based on [Facebook AI Research’s (FAIR) Detectron2](https://github.com/facebookresearch/detectron2), which provides an implementation of the Mask R-CNN architecture. The model identifies five object classes: fish, fish eyes, rulers, and the numbers two and three on rulers, as shown in Fig. 2, below. Of note is its capability to return pixel-by-pixel masks over detected objects.

||

|:--|

|**Figure 2.**|

Furthermore, Detectron2 places no restriction on the number of object classes, can classify an arbitrary number of objects within a given image, and is relatively straightforward to train on COCO-format datasets.

Objects that have a confidence score of at least 30% are maintained for analysis.
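As a minimal sketch of this filtering step, the snippet below keeps only detections that score at least 0.30. The `Detection` tuple and the sample values are hypothetical and are not taken from the project's code; in Detectron2 itself, a test-time threshold of this kind is usually configured through `cfg.MODEL.ROI_HEADS.SCORE_THRESH_TEST`.

```python
from typing import List, NamedTuple

# Hypothetical container for one detection; the real pipeline works on
# Detectron2 output objects rather than on this tuple.
class Detection(NamedTuple):
    label: str    # one of: fish, ruler, eye, two, three
    score: float  # confidence in [0, 1]

SCORE_THRESHOLD = 0.30  # "at least 30%" from the text above

def keep_confident(detections: List[Detection],
                   threshold: float = SCORE_THRESHOLD) -> List[Detection]:
    """Return only the detections whose score meets the threshold."""
    return [d for d in detections if d.score >= threshold]

sample = [Detection("fish", 0.98), Detection("eye", 0.29), Detection("ruler", 0.30)]
kept = keep_confident(sample)
print([d.label for d in kept])
```

The comparison is inclusive, so a detection scoring exactly 0.30 is retained.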

See the tables below for the number of class instances in the full (aggregate), INHS, and UWZM training datasets.

| **Table 1:** Aggregate training dataset | | | **Table 2:** INHS training dataset | | | **Table 3:** UWZM training dataset | |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Class | Number of Instances | | Class | Number of Instances | | Class | Number of Instances |
| Fish | 391 | | Fish | 312 | | Fish | 79 |
| Ruler | 1095 | | Ruler | 1016 | | Ruler | 79 |
| Eye | 550 | | Eye | 471 | | Eye | 79 |
| Two | 194 | | Two | 115 | | Two | 79 |
| Three | 194 | | Three | 115 | | Three | 79 |

See the [Glossary](#glossary) below for a detailed list of the properties generated by the model.

- **Developed by:** Joel Pepper and Kevin Karnani

<!--- **Shared by [optional]:** [More Information Needed]-->

- **Model type:** PyTorch pickle file (.pth)

<!--- **Language(s) (NLP):** [More Information Needed]-->

- **License:** MIT <!-- As listed on the repo -->

- **Finetuned from model:** [detectron2 v0.6](https://github.com/facebookresearch/detectron2)

### Model Sources

<!-- Provide the basic links for the model. -->

- **Repository:** [hdr-bgnn/drexel_metadata](https://github.com/hdr-bgnn/drexel_metadata/)

- **Paper:** [Computational metadata generation methods for biological specimen image collections](https://doi.org/10.1007/s00799-022-00342-1) ([preprint](https://doi.org/10.21203/rs.3.rs-1506561/v1))

<!-- - **Demo [optional]:** [More Information Needed]-->

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

Object detection is currently performed on five classes (fish, fish eyes, rulers, and the twos and threes found on rulers). The current setup is run on the INHS and UWZM biological specimen image repositories.

#### Current Criteria

1. Image must contain a fish species (no eels, seashells, butterflies, seahorses, snakes, etc).

2. Image must contain only 1 of each class (except eyes).

3. Specimen body must lie along the image plane from a side view.

4. Ruler must be consistent (only two ruler types, INHS + UWZM, were used in training set).

5. Fish must not be obscured by another object (petri dish for example).

6. Whole body of fish must be present (no heads, tails, or standalone features).

7. Fish body must not be folded and should have no curvature.

These criteria do not need to be adhered to if the model is properly set up or modified for a specific use case.

<!--### Downstream Use [optional]

[More Information Needed]

### Out-of-Scope Use

[More Information Needed]-->

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

- This model was trained solely for use on fish specimens.

- The model can detect and process multiple fish within a single image, although the capability is not extensively tested.

- The model was only trained on rectangular, machine-printed tags that are aligned with the image (i.e., tags placed at an angle may not be handled correctly).

<!-- [More Information Needed] -->

The authors have declared that no conflict of interest exists.

<!--### Recommendations-->

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

<!-- Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations. -->

## How to Get Started with the Model

<!-- Use the code below to get started with the model. -->

### Dependencies

Every dependency is stored in a Pipfile. To set this up, run the following commands:

```bash

pip install pipenv

pipenv install

```

Additional OS-dependent installation steps may be required.

### Running

To generate the metadata, run the following command:

```bash

pipenv run python3 gen_metadata.py [file_or_dir_name]

```

Usage:

```

gen_metadata.py [-h] [--device {cpu,cuda}] [--outfname OUTFNAME] [--maskfname MASKFNAME] [--visfname VISFNAME]

file_or_directory [limit]

```

The `limit` positional argument limits the number of files processed. It only applies when a directory is passed.

#### Device Configuration

By default `gen_metadata.py` requires a GPU (cuda).

To use a CPU instead pass the `--device cpu` argument to `gen_metadata.py`.

#### Single File Usage

The following three arguments are only supported when processing a single image file:

- `--outfname <filename>` - When passed the script will save the output metadata JSON to `<filename>` instead of printing to the console (the default behavior when processing one file).

- `--maskfname <filename>` - Enables logic to save an output mask to `<filename>` for the single input file.

- `--visfname <filename>` - Changes the script to save the output visualization to `<filename>` instead of the hard coded location.

These arguments are meant to simplify adding `gen_metadata.py` to a workflow that processes files individually.
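For a workflow that processes files one at a time, the command line for each image can be assembled programmatically. The sketch below only builds the argument list from the flags documented above; the helper name `build_command`, the output directory, and the output file naming scheme (`_mask.png`, `_vis.png`) are hypothetical choices, not conventions of the repository.

```python
import shlex
from pathlib import Path
from typing import List

def build_command(image: Path, out_dir: Path, device: str = "cuda") -> List[str]:
    """Build the gen_metadata.py argument list for one image file."""
    stem = image.stem
    return [
        "pipenv", "run", "python3", "gen_metadata.py",
        "--device", device,                                 # "cpu" or "cuda"
        "--outfname", str(out_dir / f"{stem}.json"),        # metadata JSON
        "--maskfname", str(out_dir / f"{stem}_mask.png"),   # hypothetical name
        "--visfname", str(out_dir / f"{stem}_vis.png"),     # hypothetical name
        str(image),
    ]

cmd = build_command(Path("images/specimen_001.jpg"), Path("out"), device="cpu")
print(shlex.join(cmd))
# To actually run it from the repository root:
#   import subprocess; subprocess.run(cmd, check=True)
```

Passing the command as a list (rather than a single shell string) avoids quoting problems with file names that contain spaces.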

### Running with Singularity

A Docker image is automatically built for each **drexel\_metadata** release. This image has the requirements installed and includes the model file.

To run the container with Singularity for a specific version, follow this pattern:

```

singularity run docker://ghcr.io/hdr-bgnn/drexel_metadata:<release> gen_metadata.py ...

```

## Training Details

### Training Data

<!-- This should link to a Data Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

- University of Wisconsin Zoological Museum: [Fishes Collection](https://uwzm.integrativebiology.wisc.edu/fishes-collection/) (2022)

- Illinois Natural History Survey: [INHS Fish Collection](https://fish.inhs.illinois.edu/) (2022)

Labeled by hand using [makesense.ai](https://github.com/SkalskiP/make-sense/) (Skalski, P.: Make Sense. https://github.com/SkalskiP/make-sense/ (2019)).

Initially, we had 64 examples of each class from the UWZM collection in the training set. One issue that we encountered was the lack of catfish (genus _Noturus_) in the training set, which led to a high count of undetected eyes in the testing set. Visually, it is difficult even for humans to determine the location of catfish eyes, given that they are either very close to the color of the skin or do not look like normal fish eyes. Thus, 15 catfish images from each image dataset were added to the training set.

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

Setup:

1. Create a COCO JSON training set using the images and labels.
   1. This is currently done using [makesense](https://makesense.ai) in its polygon object detection mode.
   2. The labels currently used are `fish, ruler, eye, two, three`, in that exact order.
   3. After performing manual segmentation and labeling, save the result as a COCO JSON file and place it in [datasets](datasets/).
2. In the [config](config/) directory, create a JSON file whose key is the name of the image directory on your local system and whose value is an array of dataset names from the [datasets](datasets/) folder.
   1. For multiple image collections, use multiple keys.
   2. For multiple datasets for the same collection, append to the respective value array.
   3. For example: `{"image_folder_name": ["dataset_name.json"]}`.
3. In the [train](train_model.py) script, set the `conf` variable at the top of the `main()` function to load the JSON file created in the previous step.
4. Create a text file named `overall_prefix.txt` in the [config](config/) folder. This file should contain the absolute path to the directory in which all the image repositories are stored.
   1. Currently it is `/usr/local/bgnn/`, which contains image folders such as `tulane`, `inhs_filtered`, and `uwzm_filtered`.
5. To edit the learning rate, batch size, or any other base training configuration, edit the [base training configurations](config/Base-RCNN-FPN.yaml) file.
6. To edit the number of iterations, dropoffs, or any model-specific configurations, edit the [model training configurations](config/mask_rcnn_R_50_FPN_3x.yaml) file.
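The file layout described in steps 1, 2, and 4 can be sketched as follows. This is an illustration under stated assumptions, not the project's tooling: the helper names, the config file name `my_collection.json`, and category ids starting at 1 are hypothetical, and the COCO skeleton is left empty because real annotations come from the labeling tool. The example writes into a temporary directory rather than the repository.

```python
import json
import tempfile
from pathlib import Path

# Label order stated in the setup steps above.
LABELS = ["fish", "ruler", "eye", "two", "three"]

def minimal_coco(labels):
    """Return an empty COCO-style dataset with categories in the given order."""
    return {
        "images": [],       # entries such as {"id", "file_name", "width", "height"}
        "annotations": [],  # entries such as {"id", "image_id", "category_id", "segmentation", "bbox"}
        # Assumption: ids start at 1; check what your labeling tool exports.
        "categories": [{"id": i, "name": name} for i, name in enumerate(labels, start=1)],
    }

def write_setup(root: Path, image_folder: str, dataset_name: str, prefix: str) -> Path:
    """Write the dataset file, the config JSON, and overall_prefix.txt under root."""
    (root / "datasets").mkdir(parents=True, exist_ok=True)
    (root / "config").mkdir(parents=True, exist_ok=True)
    (root / "datasets" / dataset_name).write_text(json.dumps(minimal_coco(LABELS), indent=2))
    config_path = root / "config" / "my_collection.json"  # hypothetical file name
    config_path.write_text(json.dumps({image_folder: [dataset_name]}, indent=2))
    (root / "config" / "overall_prefix.txt").write_text(prefix)
    return config_path

with tempfile.TemporaryDirectory() as tmp:
    cfg_path = write_setup(Path(tmp), "image_folder_name", "dataset_name.json", "/usr/local/bgnn/")
    config = json.loads(cfg_path.read_text())
    prefix_text = (Path(tmp) / "config" / "overall_prefix.txt").read_text()
    print(config)
```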

Finally, to train the model, run the following command:

```bash

pipenv run python3 train_model.py

```

#### Preprocessing

- Manual image preprocessing is not necessary. Some versions of the code do, however, apply contrast enhancement to the images internally (see [Citation](#citation)).

#### Training Hyperparameters

- See [Citation](#citation)/source code.

## Evaluation

- See [Citation](#citation)

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Data Card if possible. -->

- See [Citation](#citation)

#### Factors

- See [Citation](#citation)

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

#### Metrics

- See [Citation](#citation)

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

### Results

- See [Citation](#citation)

#### Summary

### Goal

To develop a tool that checks the validity of metadata associated with an image and generates metadata that is missing. The tool also computes various geometric and statistical properties of the mask generated over the biological specimen presented.

### Metadata Generation

The metadata generated is extremely specific to our use case. In addition, we perform additional image processing techniques to improve our accuracies that may not work for other use cases. These include:

1. Image scaling when a fish is detected but not an eye, in an attempt to reduce the number of missed eyes.

2. Selection of highest confidence fish bounding box given our criterion of single fish in an image.

3. Contrast enhancement (CLAHE)
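The second technique in the list above, keeping only the highest-confidence fish detection, can be illustrated with plain Python. The dictionary layout and the sample values are hypothetical stand-ins for the detector's output; this is a sketch of the selection idea, not the repository's implementation.

```python
from typing import Dict, List, Optional

def best_fish(detections: List[Dict]) -> Optional[Dict]:
    """Return the highest-scoring 'fish' detection, or None if there is none."""
    fish = [d for d in detections if d["label"] == "fish"]
    return max(fish, key=lambda d: d["score"], default=None)

# Hypothetical detections for a single image.
detections = [
    {"label": "fish", "score": 0.62, "bbox": (10, 20, 300, 140)},
    {"label": "fish", "score": 0.97, "bbox": (12, 18, 310, 150)},
    {"label": "ruler", "score": 0.99, "bbox": (0, 200, 400, 230)},
]
chosen = best_fish(detections)
print(chosen["bbox"])
```

The ruler scores higher than either fish, but it is excluded because only detections labeled `fish` are candidates.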

The metadata generated produces various statistical and geometric properties of a biological specimen image or collection in a JSON format. When a single file is passed, the data is yielded to the console (stdout). When a directory is passed, the data is stored in a JSON file.

## Environmental Impact

Minimal, as a regular workstation computer was used for the work described in this paper.

## Technical Specifications

### Model Architecture and Objective

- See [Citation](#citation)

### Compute Infrastructure

- Desktop computer with an Intel(R) Xeon(R) W-2175 CPU and an Nvidia Quadro RTX 4000 GPU.

## Citation

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

Karnani, K., Pepper, J., Bakiş, Y. et al. Computational metadata generation methods for biological specimen image collections. Int J Digit Libr (2022). https://doi.org/10.1007/s00799-022-00342-1

### Associated Publication

J. Pepper, J. Greenberg, Y. Bakiş, X. Wang, H. Bart and D. Breen, "Automatic Metadata Generation for Fish Specimen Image Collections," 2021 ACM/IEEE Joint Conference on Digital Libraries (JCDL), 2021, pp. 31-40, doi: [10.1109/JCDL52503.2021.00015](https://doi.org/10.1109/JCDL52503.2021.00015).

**BibTeX:**

```

@article{KPB2022,

title = "Computational metadata generation methods for biological specimen image collections",

author = "Karnani, Kevin and Pepper, Joel and Bak{\i}{\c s}, Yasin and Wang, Xiaojun and Bart, Jr, Henry and

Breen, David E and Greenberg, Jane",

journal = "International Journal on Digital Libraries",

year = 2022,

url = "https://doi.org/10.1007/s00799-022-00342-1",

doi = "10.1007/s00799-022-00342-1"

}

```

<!--

**APA:**

[More Information Needed] -->

## Glossary

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

### Properties Generated

| **Property** | **Association** | **Type** | **Explanation** |

|----------------------------------|--------------------------|-------------------|-------------------------------------------------------------------------------------------------------------------------------------------------------------|

| has\_fish | Overall Image | Boolean | Whether a fish was found in the image. |

| fish\_count | Overall Image | Integer | The quantity of fish present. |

| has\_ruler | Overall Image | Boolean | Whether a ruler was found in the image. |

| ruler\_bbox | Overall Image | 4 Tuple | The bounding box of the ruler (if found). |

| scale* | Overall Image | Float | The scale of the image in \\(\frac{\mathrm{pixels}}{\mathrm{cm}}\\). |

| bbox | Per Fish | 4 Tuple | The top left and bottom right coordinates of the bounding box for a fish. |

| background.mean | Per Fish | Float | The mean intensity of the background within a given fish's bounding box. |

| background.std | Per Fish | Float | The standard deviation of the background within a given fish's bounding box. |

| foreground.mean | Per Fish | Float | The mean intensity of the foreground within a given fish's bounding box. |

| foreground.std | Per Fish | Float | The standard deviation of the foreground within a given fish's bounding box. |

| contrast* | Per Fish | Float | The contrast between foreground and background intensities within a given fish's bounding box. |

| centroid | Per Fish | 4 Tuple | The centroid of a given fish's bitmask. |

| primary\_axis* | Per Fish | 2D Vector | The unit length primary axis (eigenvector) for the bitmask of a given fish. |

| clock\_value* | Per Fish | Integer | Fish's primary axis converted into an integer "clock value" between 1 and 12. |

| oriented\_length* | Per Fish | Float | The length of the fish bounding box in centimeters. |

| mask | Per Fish | 2D Matrix | The bitmask of a fish in 0's and 1's. |

| pixel\_analysis\_failed | Per Fish | Boolean | Whether the pixel analysis process failed for a given fish. If true, detectron's mask and bounding box were used for metadata generation. |

| score | Per Fish | Float | The percent confidence score output by detectron for a given fish. |

| has\_eye | Per Fish | Boolean | Whether an eye was found for a given fish. |

| eye\_center | Per Fish | 2 Tuple | The centroid of a fish's eye. |

| side* | Per Fish | String | The side (i.e. 'left' or 'right') of the fish that is facing the camera (dependent on finding its eye). |

| area | Per Fish | Float | Area of fish in \\(\mathrm{cm^2}\\). |

| cont\_length | Per Fish | Float | The longest contiguous length of the fish in centimeters. |

| cont\_width | Per Fish | Float | The longest contiguous width of the fish in centimeters. |

| convex\_area | Per Fish | Float | Area of convex hull image (smallest convex polygon that encloses the fish) in \\(\mathrm{cm^2}\\). |

| eccentricity | Per Fish | Float | Ratio of the focal distance over the major axis length of the ellipse that has the same second-moments as the fish. |

| extent | Per Fish | Float | Ratio of pixels of fish to pixels in the total bounding box. Computed as \\(\frac{\mathrm{area}}{\mathrm{rows} * \mathrm{cols}}\\) |

| feret\_diameter\_max | Per Fish | Float | The longest distance between points around the fish’s convex hull contour. |

| kurtosis | Per Fish | 2D Vector | The sharpness of the peaks of the frequency-distribution curve of mask pixel coordinates. |

| major\_axis\_length | Per Fish | Float | The length of the major axis of the ellipse that has the same normalized second central moments as the fish. |

| mask.encoding | Per Fish | String | The 8-way Freeman Encoding of the outline of the fish. |

| mask.start\_coord | Per Fish | 2D Vector | The starting coordinate of the Freeman encoded mask. |

| minor\_axis\_length | Per Fish | Float | The length of the minor axis of the ellipse that has the same normalized second central moments as the fish. |

| oriented\_width | Per Fish | Float | The width of the fish bounding box in centimeters. |

| perimeter | Per Fish | Float | The approximation of the contour in centimeters as a line through the centers of border pixels using 8-connectivity. |

| skew | Per Fish | 2D Vector | The measure of asymmetry of the frequency-distribution curve of mask pixel coordinates. |

| solidity | Per Fish | Float | The ratio of pixels in the fish to pixels of the convex hull image. |

| std | Per Fish | Float | The standard deviation of the mask pixel coordinate distribution. |
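To make a few of these definitions concrete, the sketch below computes `extent` and a pixel-to-centimeter area conversion from a small binary mask, using only the formulas given in the table. The helper names and the toy mask are hypothetical. Several property names (for example `extent`, `solidity`, and `eccentricity`) coincide with those of scikit-image's `regionprops`, but whether the project computes them that way is an assumption that should be checked against the source code.

```python
from typing import List, Tuple

Mask = List[List[int]]  # rows of 0/1 values, as in the `mask` property

def bounding_box(mask: Mask) -> Tuple[int, int, int, int]:
    """Return (top, left, bottom, right) of the nonzero pixels, inclusive.

    Assumes the mask contains at least one nonzero pixel.
    """
    rows = [r for r, row in enumerate(mask) if any(row)]
    cols = [c for row in mask for c, v in enumerate(row) if v]
    return min(rows), min(cols), max(rows), max(cols)

def extent(mask: Mask) -> float:
    """Fish pixels divided by bounding-box pixels: area / (rows * cols)."""
    top, left, bottom, right = bounding_box(mask)
    area_px = sum(sum(row) for row in mask)
    return area_px / ((bottom - top + 1) * (right - left + 1))

def area_cm2(mask: Mask, scale_px_per_cm: float) -> float:
    """Convert the pixel count to cm^2 using a scale in pixels per cm."""
    return sum(sum(row) for row in mask) / scale_px_per_cm ** 2

# Toy mask: 5 fish pixels inside a 2 x 3 bounding box.
mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 0, 0],
    [0, 0, 0, 0, 0],
]
print(extent(mask))
print(area_cm2(mask, scale_px_per_cm=2.0))
```

Because `scale` is expressed in pixels per centimeter, lengths are divided by the scale and areas by its square.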

## More Information

Research supported by NSF Office of Advanced Cyberinfrastructure (OAC) [#1940233](https://nsf.gov/awardsearch/showAward?AWD_ID=1940233&HistoricalAwards=false) and [#1940322](https://www.nsf.gov/awardsearch/showAward?AWD_ID=1940322&HistoricalAwards=false), with additional support from NSF [Award #2118240](https://www.nsf.gov/awardsearch/showAward?AWD_ID=2118240).

Any opinions, findings and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.

### Authors and Affiliations:

**Computer Science Department, Drexel University, Philadelphia, PA, USA**

Kevin Karnani, Joel Pepper (corresponding author) & David E. Breen

**Biodiversity Research Institute, Tulane University, New Orleans, LA, USA**

Yasin Bakiş, Xiaojun Wang & Henry Bart Jr.

**Information Science Department, Drexel University, Philadelphia, PA, USA**

Jane Greenberg

## Model Card Authors

Elizabeth Campolongo and Joel Pepper

<!--## Model Card Contact

[More Information Needed]-->