Create README.md

README.md

ADDED

@@ -0,0 +1,28 @@

| 1 |

+

---

|

| 2 |

+

base_model:

|

| 3 |

+

- GSAI-ML/LLaDA-8B-Instruct

|

| 4 |

+

language:

|

| 5 |

+

- en

|

| 6 |

+

library_name: transformers

|

| 7 |

+

datasets:

|

| 8 |

+

- KodCode/KodCode-V1-SFT-R1

|

| 9 |

+

---

|

| 10 |

+

|

| 11 |

+

# Large Language Diffusion with Ordered Unmasking (LLaDOU)

|

| 12 |

+

<a href="https://arxiv.org/abs/2505.10446"><img src="https://img.shields.io/badge/arXiv-2505.10446-b31b1b.svg" alt="ArXiv"></a>

|

| 13 |

+

<a href="https://github.com/maple-research-lab/LLaDOU"><img src="https://img.shields.io/badge/GitHub-LLaDOU-777777.svg" alt="GitHub"></a>

|

| 14 |

+

|

| 15 |

+

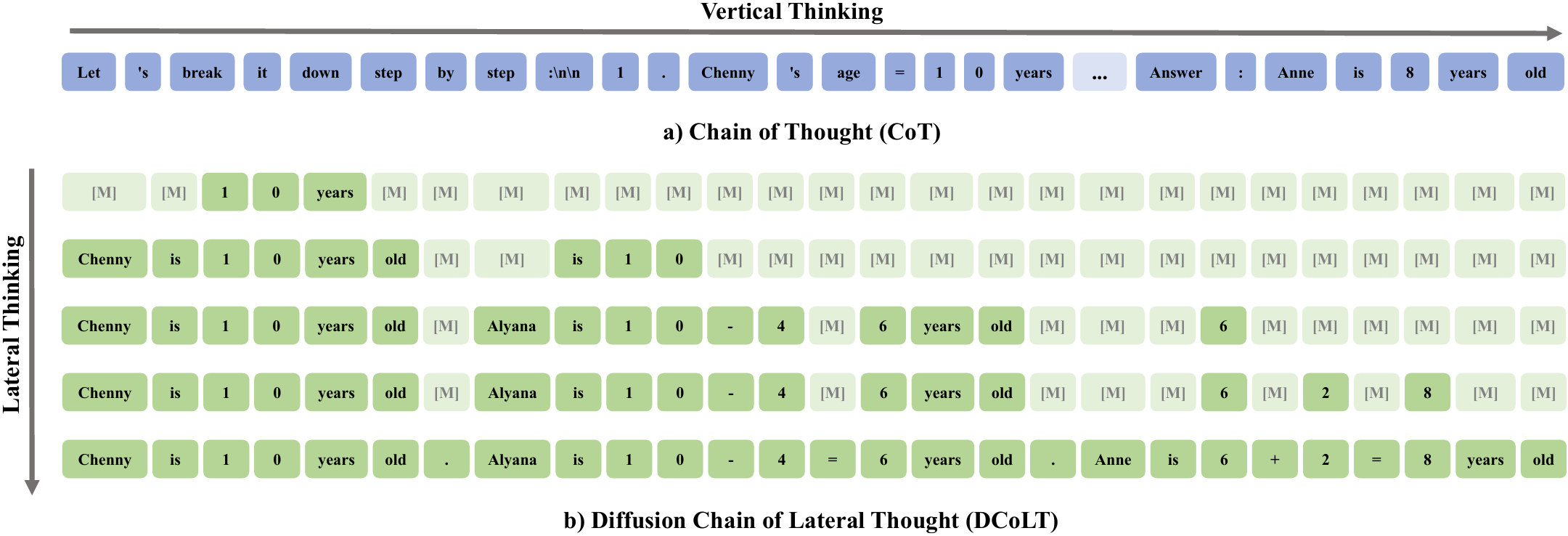

We introduce the **L**arge **La**nguage **D**iffusion with **O**rdered **U**nmasking (**LLaDOU**), which is trained by reinforcing a new reasoning paradigm named the **D**iffusion **C**hain **o**f **L**ateral **T**hought (**DCoLT**) for diffusion language models.

|

| 16 |

+

|

| 17 |

+

Compared to standard CoT, DCoLT is distinguished by several notable features:

|

| 18 |

+

- **Bidirectional Reasoning**: Allowing global refinement throughout generation with bidirectional self-attention masks.

|

| 19 |

+

- **Format-Free Reasoning**: Imposing no strict rule of grammatical correctness on intermediate steps of thought.

|

| 20 |

+

- **Nonlinear Generation**: Generating tokens at various positions in different steps.

|
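
The nonlinear generation described in the list above can be illustrated with a toy sketch. This is not the authors' implementation: `unmask_in_order`, the fixed `scores`, and the `target` tokens are hypothetical stand-ins for what a trained model would supply (a learned unmasking order and predicted tokens). It only shows tokens being revealed at scattered positions over several steps rather than left to right.

```python
MASK = "<mask>"


def unmask_in_order(target, scores, per_step):
    """Reveal `per_step` masked positions per step, highest score first.

    `target` stands in for the tokens a model would predict and `scores`
    for a hypothetical per-position ranking; both are toy inputs.
    """
    seq = [MASK] * len(target)
    history = []
    while MASK in seq:
        masked = [i for i, tok in enumerate(seq) if tok == MASK]
        # Pick the highest-scoring masked positions, wherever they sit.
        chosen = sorted(masked, key=lambda i: scores[i], reverse=True)[:per_step]
        for i in chosen:
            seq[i] = target[i]
        history.append(list(seq))  # snapshot of the sequence after this step
    return history


target = ["def", "add", "(a, b):", "return", "a + b"]
scores = [0.2, 0.9, 0.1, 0.8, 0.5]  # assumed values, for illustration only
steps = unmask_in_order(target, scores, per_step=2)
for step in steps:
    print(step)
```

With these toy scores, the first step fills positions 1 and 3 while the positions around them stay masked, and the sequence is completed in three steps.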

| 21 |

+

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

## Instructions

|

| 25 |

+

|

| 26 |

+

**LLaDOU-v0-Code** is a code-specific model trained on a subset of [KodCode-V1-SFT-R1](https://huggingface.co/datasets/KodCode/KodCode-V1-SFT-R1).

|

| 27 |

+

|

| 28 |

+

For inference code and detailed instructions, please refer to our GitHub page: [maple-research-lab/LLaDOU](https://github.com/maple-research-lab/LLaDOU).

|