add non-deterministic note

- README.md +15 -26

- configs/metadata.json +2 -1

- docs/README.md +15 -26

README.md

CHANGED

|

@@ -6,23 +6,20 @@ library_name: monai

|

|

| 6 |

license: apache-2.0

|

| 7 |

---

|

| 8 |

# Model Overview

|

| 9 |

-

|

| 10 |

A pre-trained model for simultaneous segmentation and classification of nuclei within multi-tissue histology images based on CoNSeP data. The details of the model can be found in [1].

|

| 11 |

|

| 12 |

## Workflow

|

|

|

|

| 13 |

|

| 14 |

-

|

| 15 |

-

|

| 16 |

-

|

| 17 |

-

|

| 18 |

-

- The original author's repo also has pre-trained weights which is for non-commercial use. Each user is responsible for checking the content of models/datasets and the applicable licenses and determining if suitable for the intended use. The license for the pre-trained model is different than MONAI license. Please check the source where these weights are obtained from: <https://github.com/vqdang/hover_net#data-format>

|

| 19 |

|

| 20 |

`PRETRAIN_MODEL_URL` is "https://drive.google.com/u/1/uc?id=1KntZge40tAHgyXmHYVqZZ5d2p_4Qr2l5&export=download" which can be used in bash code below.

|

| 21 |

|

| 22 |

|

| 23 |

|

| 24 |

## Data

|

| 25 |

-

|

| 26 |

The training data is from <https://warwick.ac.uk/fac/cross_fac/tia/data/hovernet/>.

|

| 27 |

|

| 28 |

- Target: segment instance-level nuclei and classify the nuclei type

|

|

@@ -39,7 +36,6 @@ python scripts/prepare_patches.py -root your-concep-dataset-path

|

|

| 39 |

```

|

| 40 |

|

| 41 |

## Training configuration

|

| 42 |

-

|

| 43 |

This model utilized a two-stage approach. The training was performed with the following:

|

| 44 |

|

| 45 |

- GPU: At least 24GB of GPU memory.

|

|

@@ -50,19 +46,16 @@ This model utilized a two-stage approach. The training was performed with the fo

|

|

| 50 |

- Loss: HoVerNetLoss

|

| 51 |

|

| 52 |

## Input

|

| 53 |

-

|

| 54 |

Input: RGB images

|

| 55 |

|

| 56 |

## Output

|

| 57 |

-

|

| 58 |

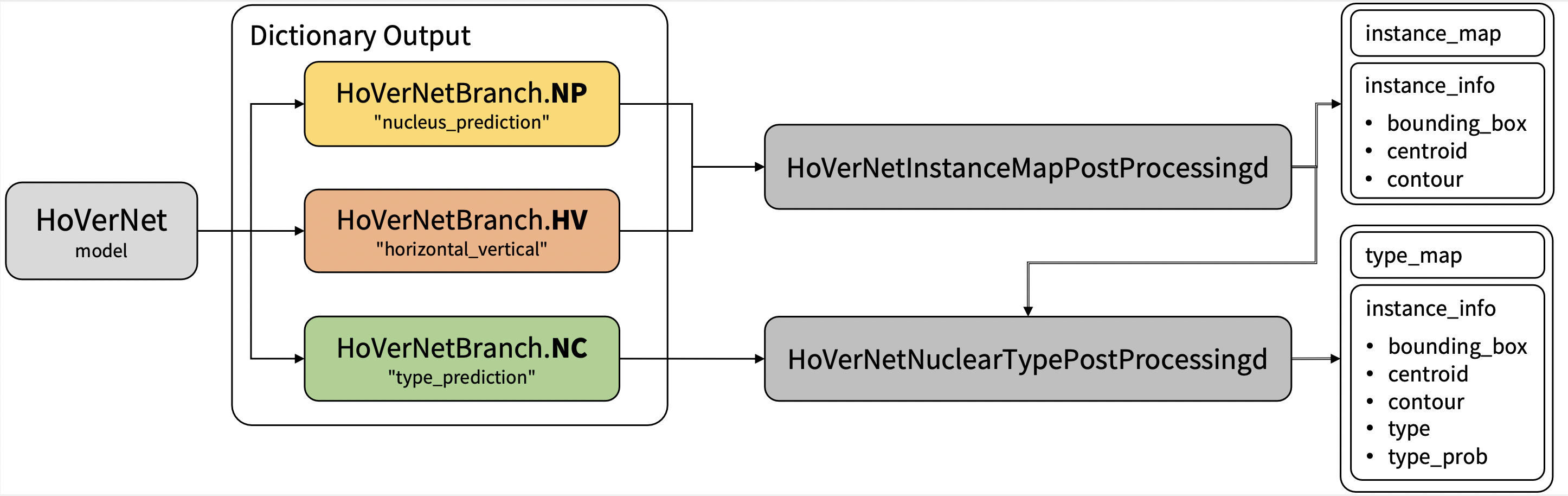

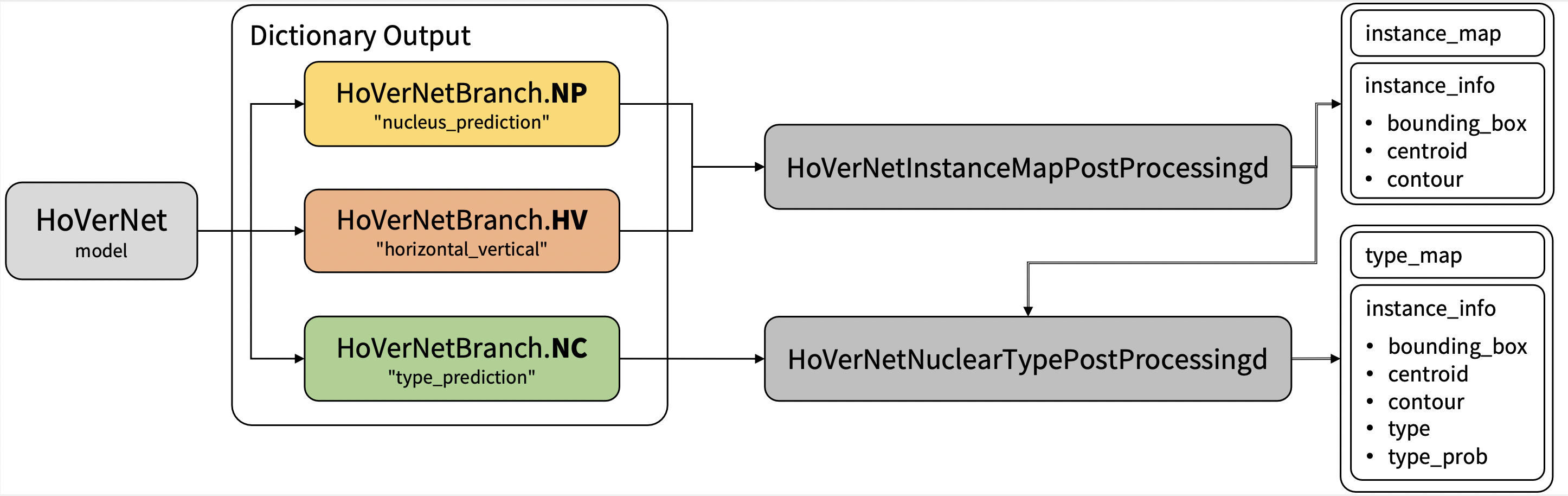

Output: a dictionary with the following keys:

|

| 59 |

|

| 60 |

1. nucleus_prediction: predict whether or not a pixel belongs to the nuclei or background

|

| 61 |

2. horizontal_vertical: predict the horizontal and vertical distances of nuclear pixels to their centres of mass

|

| 62 |

3. type_prediction: predict the type of nucleus for each pixel

|

| 63 |

|

| 64 |

-

##

|

| 65 |

-

|

| 66 |

The achieved metrics on the validation data are:

|

| 67 |

|

| 68 |

Fast mode:

|

|

@@ -72,10 +65,10 @@ Fast mode:

|

|

| 72 |

|

| 73 |

Note: Binary Dice is calculated based on the whole input. PQ and F1d were calculated from https://github.com/vqdang/hover_net#inference.

|

| 74 |

|

| 75 |

-

|

|

|

|

| 76 |

|

| 77 |

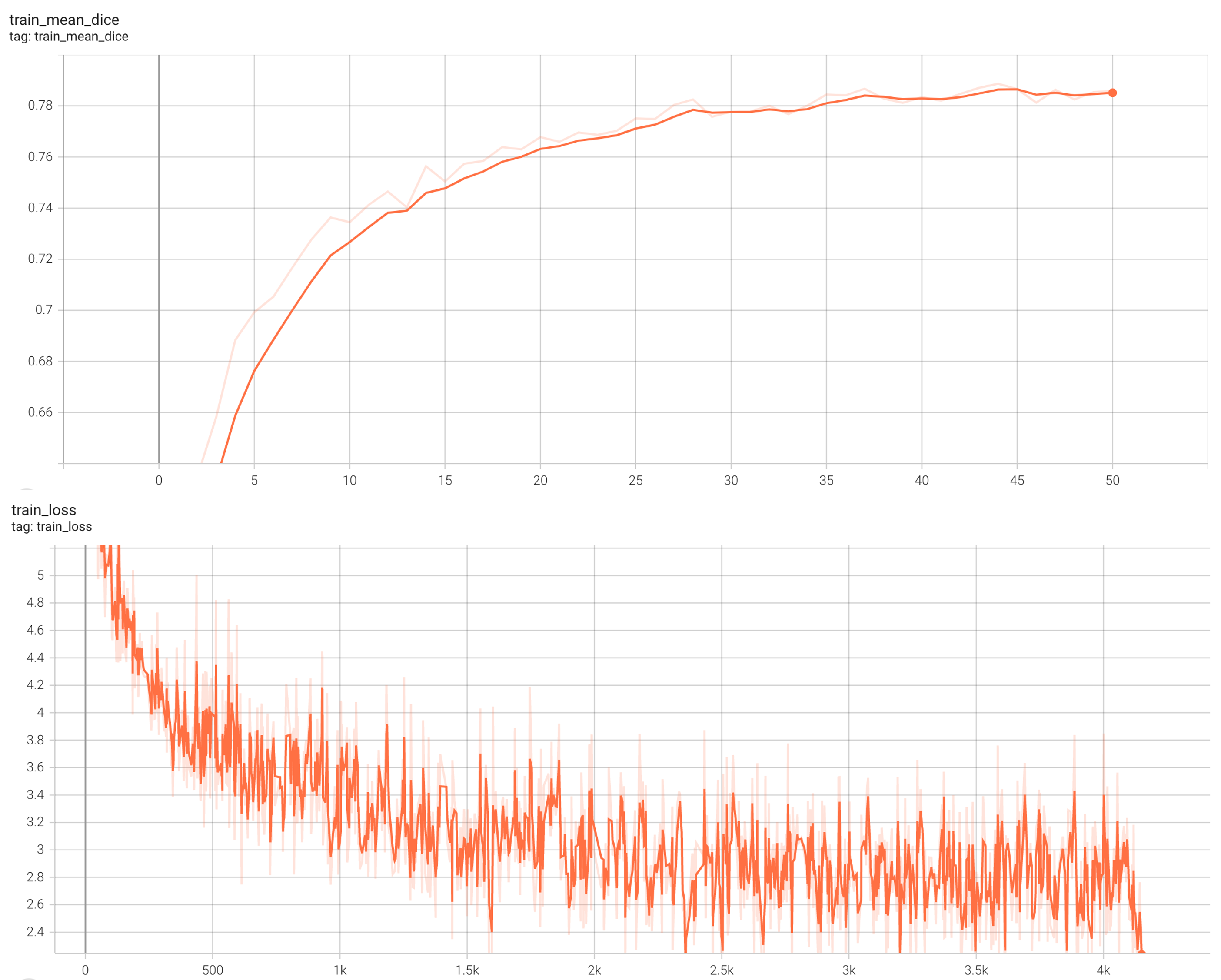

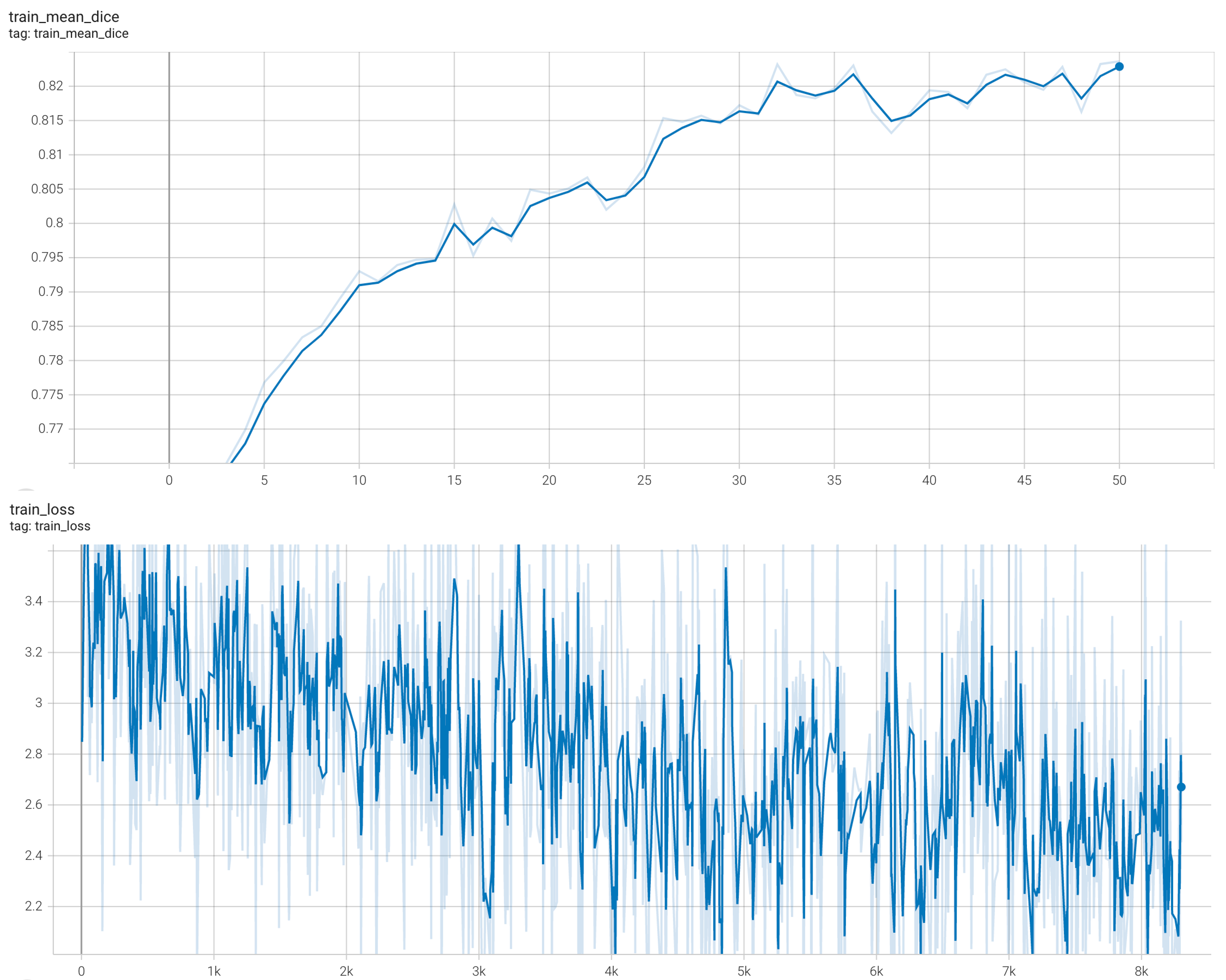

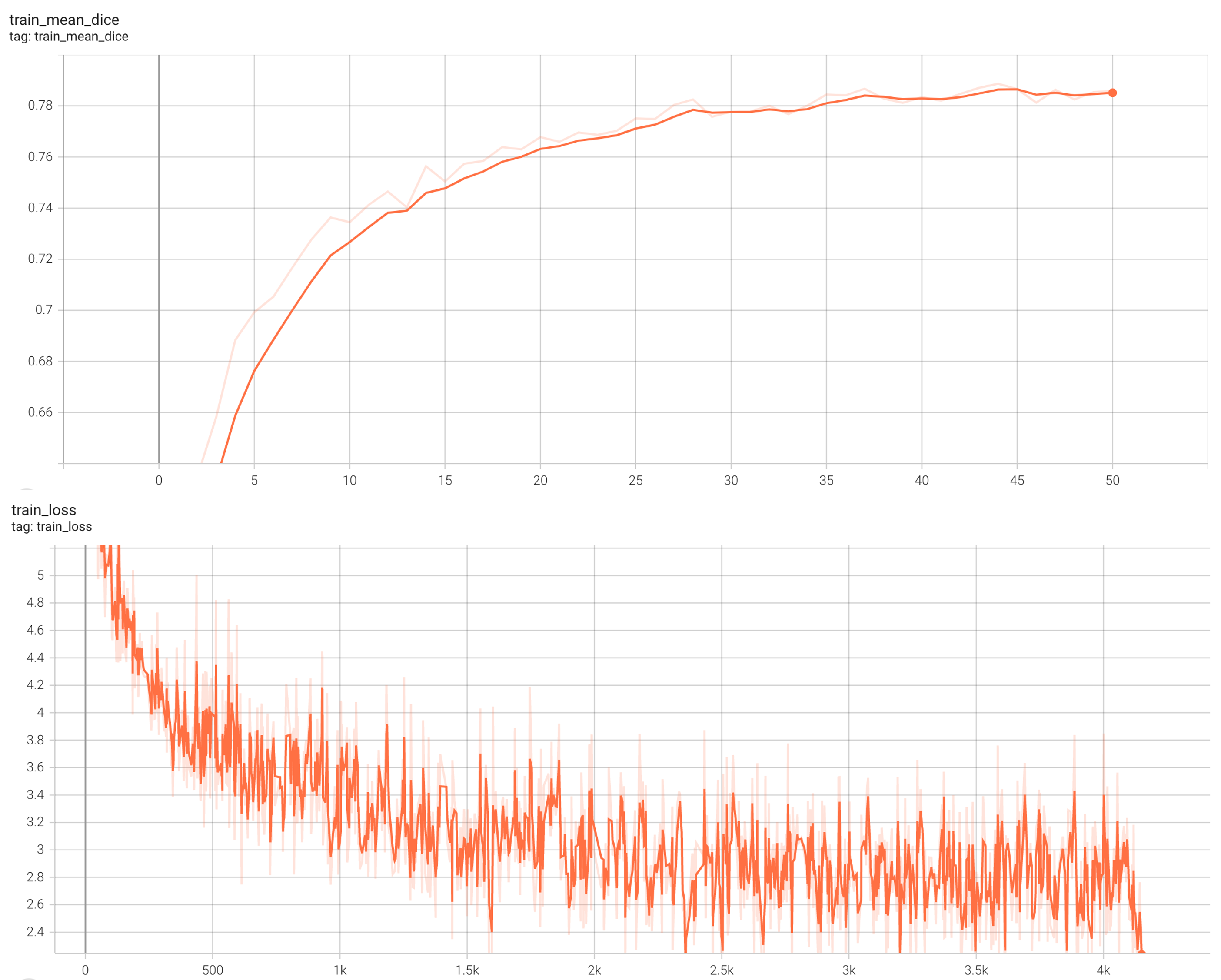

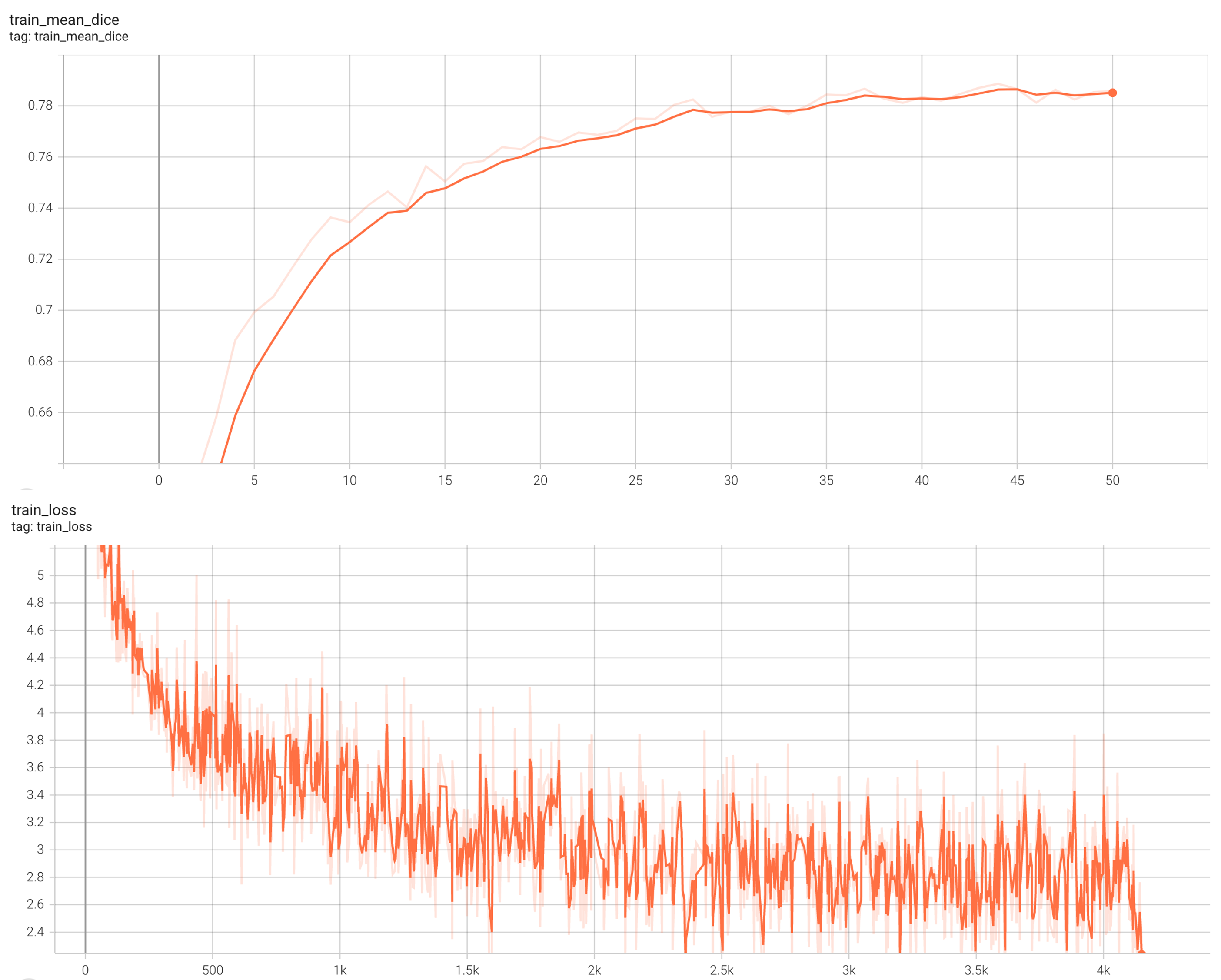

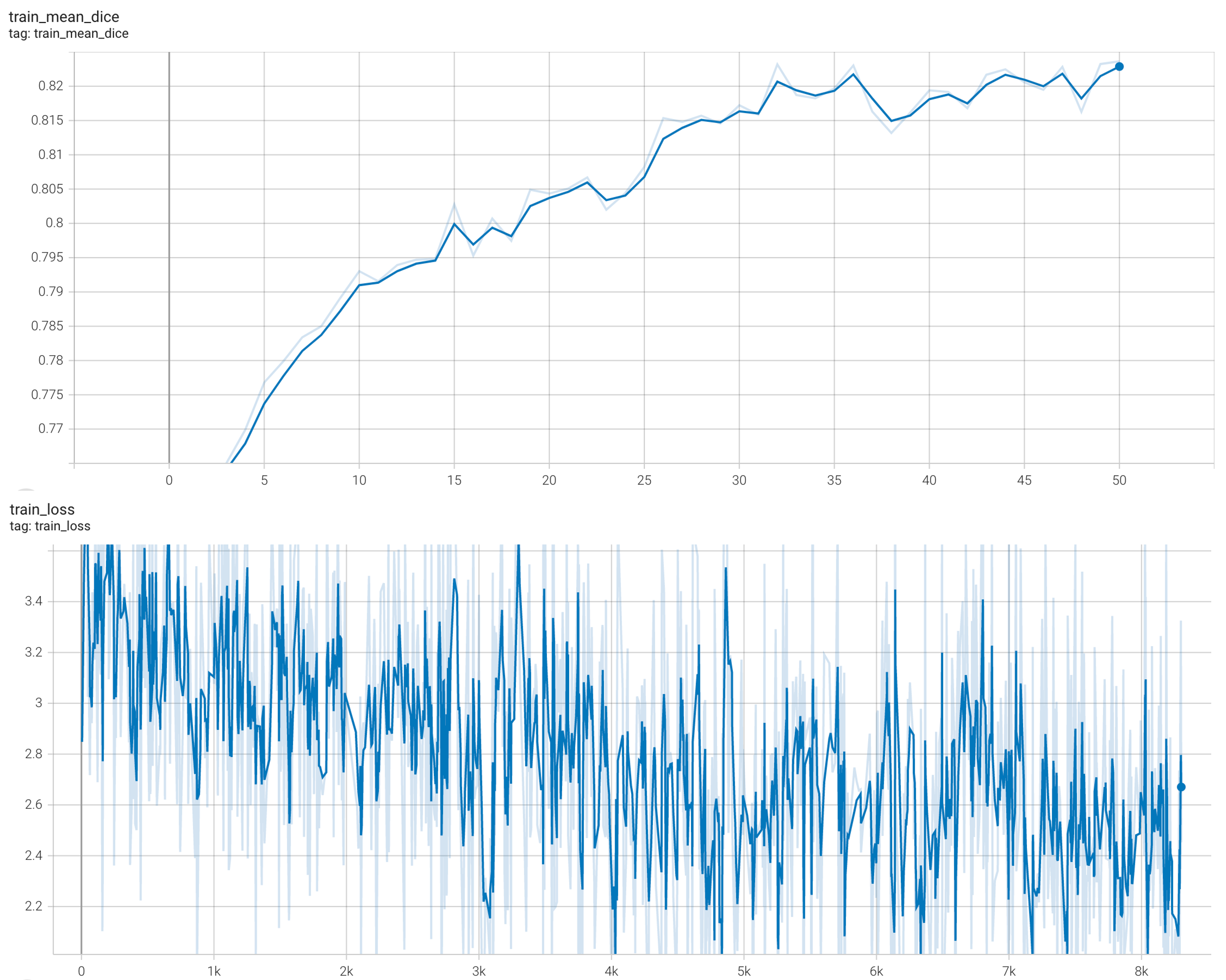

#### Training Loss and Dice

|

| 78 |

-

|

| 79 |

stage1:

|

| 80 |

|

| 81 |

|

|

@@ -83,7 +76,6 @@ stage2:

|

|

| 83 |

|

| 84 |

|

| 85 |

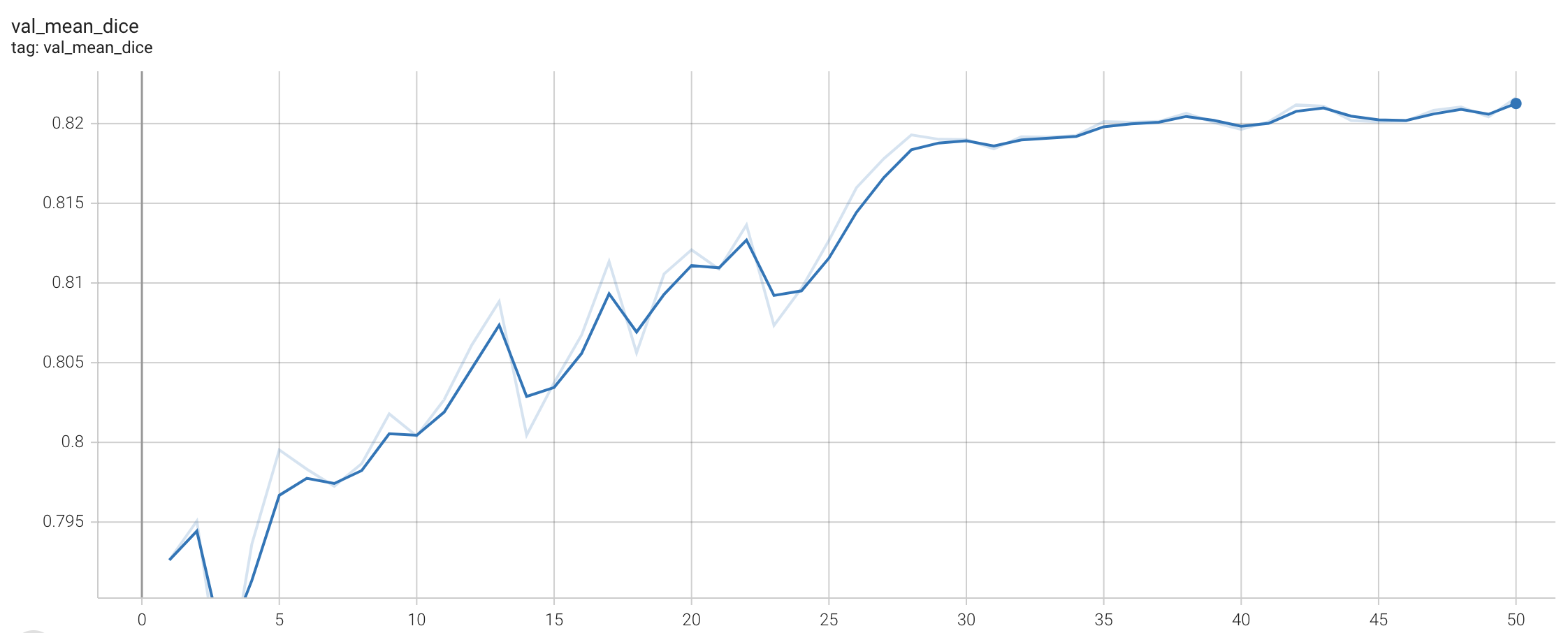

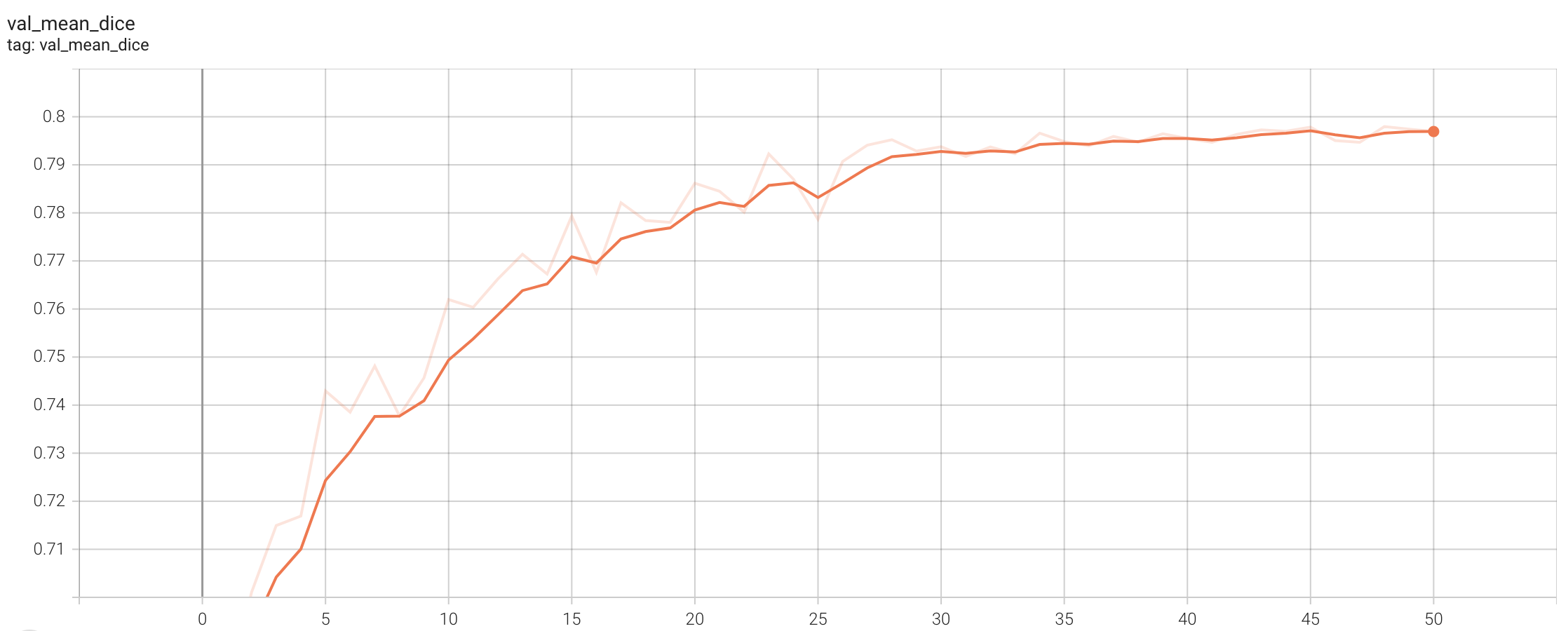

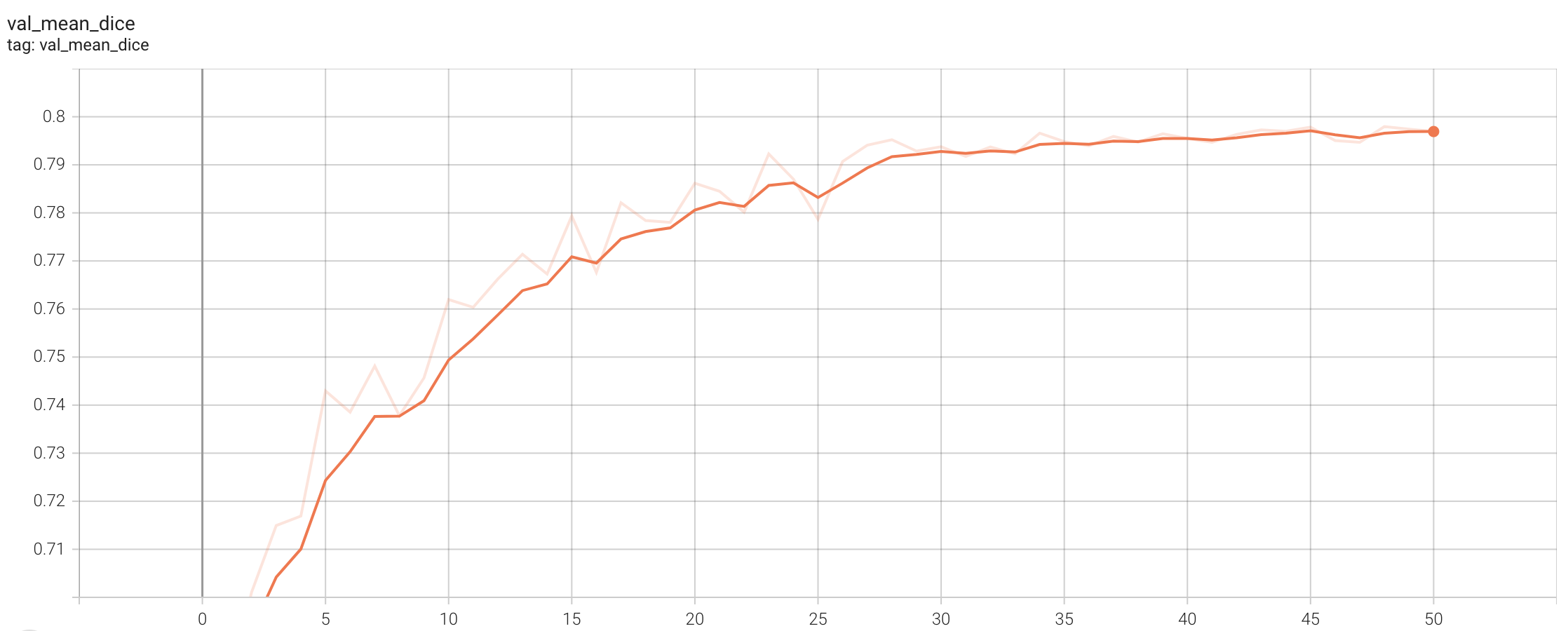

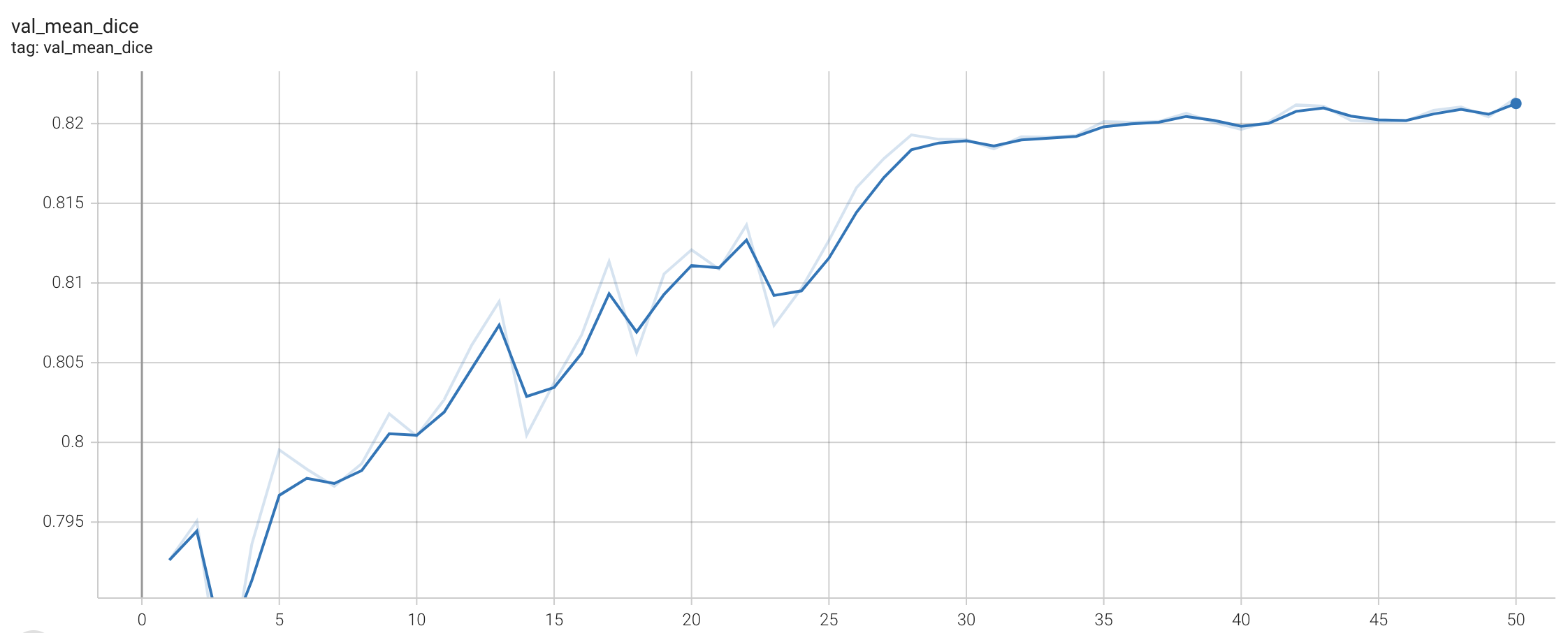

#### Validation Dice

|

| 86 |

-

|

| 87 |

stage1:

|

| 88 |

|

| 89 |

|

|

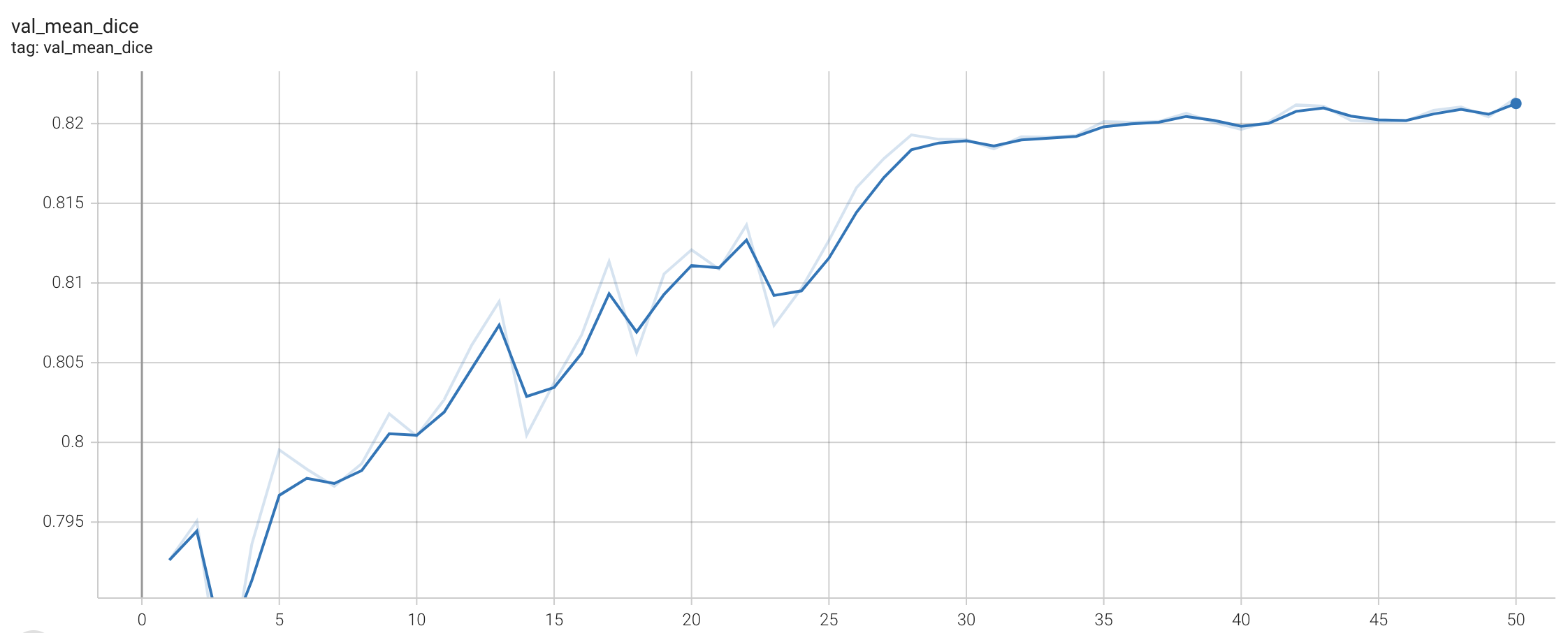

@@ -92,54 +84,51 @@ stage2:

|

|

| 92 |

|

| 93 |

|

| 94 |

|

| 95 |

-

##

|

|

|

|

| 96 |

|

| 97 |

-

|

| 98 |

|

| 99 |

-

|

| 100 |

|

|

|

|

| 101 |

```

|

| 102 |

python -m monai.bundle run --config_file configs/train.json --network_def#pretrained_url `PRETRAIN_MODEL_URL` --stage 0

|

| 103 |

```

|

| 104 |

|

| 105 |

- Run second stage

|

| 106 |

-

|

| 107 |

```

|

| 108 |

python -m monai.bundle run --config_file configs/train.json --network_def#freeze_encoder False --network_def#pretrained_url None --stage 1

|

| 109 |

```

|

| 110 |

|

| 111 |

-

Override the `train` config to execute multi-GPU training:

|

| 112 |

|

| 113 |

- Run first stage

|

| 114 |

-

|

| 115 |

```

|

| 116 |

torchrun --standalone --nnodes=1 --nproc_per_node=2 -m monai.bundle run --config_file "['configs/train.json','configs/multi_gpu_train.json']" --batch_size 8 --network_def#freeze_encoder True --network_def#pretrained_url `PRETRAIN_MODEL_URL` --stage 0

|

| 117 |

```

|

| 118 |

|

| 119 |

- Run second stage

|

| 120 |

-

|

| 121 |

```

|

| 122 |

torchrun --standalone --nnodes=1 --nproc_per_node=2 -m monai.bundle run --config_file "['configs/train.json','configs/multi_gpu_train.json']" --batch_size 4 --network_def#freeze_encoder False --network_def#pretrained_url None --stage 1

|

| 123 |

```

|

| 124 |

|

| 125 |

-

Override the `train` config to execute evaluation with the trained model, here we evaluated dice from the whole input instead of the patches:

|

| 126 |

|

| 127 |

```

|

| 128 |

python -m monai.bundle run --config_file "['configs/train.json','configs/evaluate.json']"

|

| 129 |

```

|

| 130 |

|

| 131 |

-

|

| 132 |

|

| 133 |

```

|

| 134 |

python -m monai.bundle run --config_file configs/inference.json

|

| 135 |

```

|

| 136 |

|

| 137 |

# Disclaimer

|

| 138 |

-

|

| 139 |

This is an example, not to be used for diagnostic purposes.

|

| 140 |

|

| 141 |

# References

|

| 142 |

-

|

| 143 |

[1] Simon Graham, Quoc Dang Vu, Shan E Ahmed Raza, Ayesha Azam, Yee Wah Tsang, Jin Tae Kwak, Nasir Rajpoot, Hover-Net: Simultaneous segmentation and classification of nuclei in multi-tissue histology images, Medical Image Analysis, 2019 https://doi.org/10.1016/j.media.2019.101563

|

| 144 |

|

| 145 |

# License

|

|

|

|

| 6 |

license: apache-2.0

|

| 7 |

---

|

| 8 |

# Model Overview

|

|

|

|

| 9 |

A pre-trained model for simultaneous segmentation and classification of nuclei within multi-tissue histology images based on CoNSeP data. The details of the model can be found in [1].

|

| 10 |

|

| 11 |

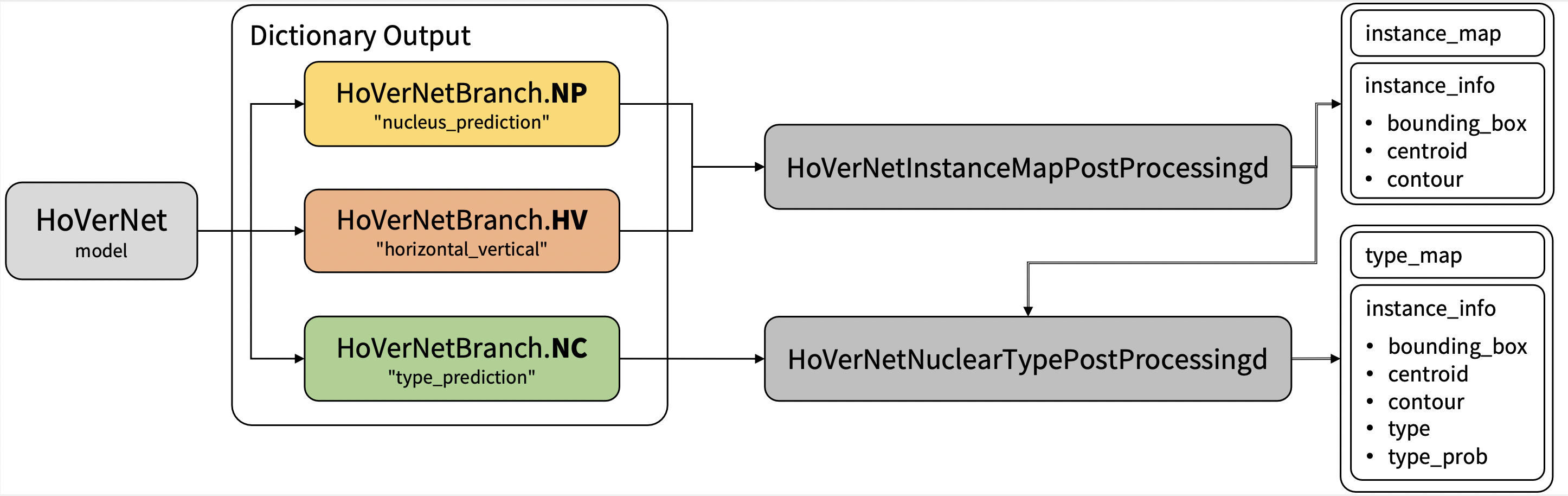

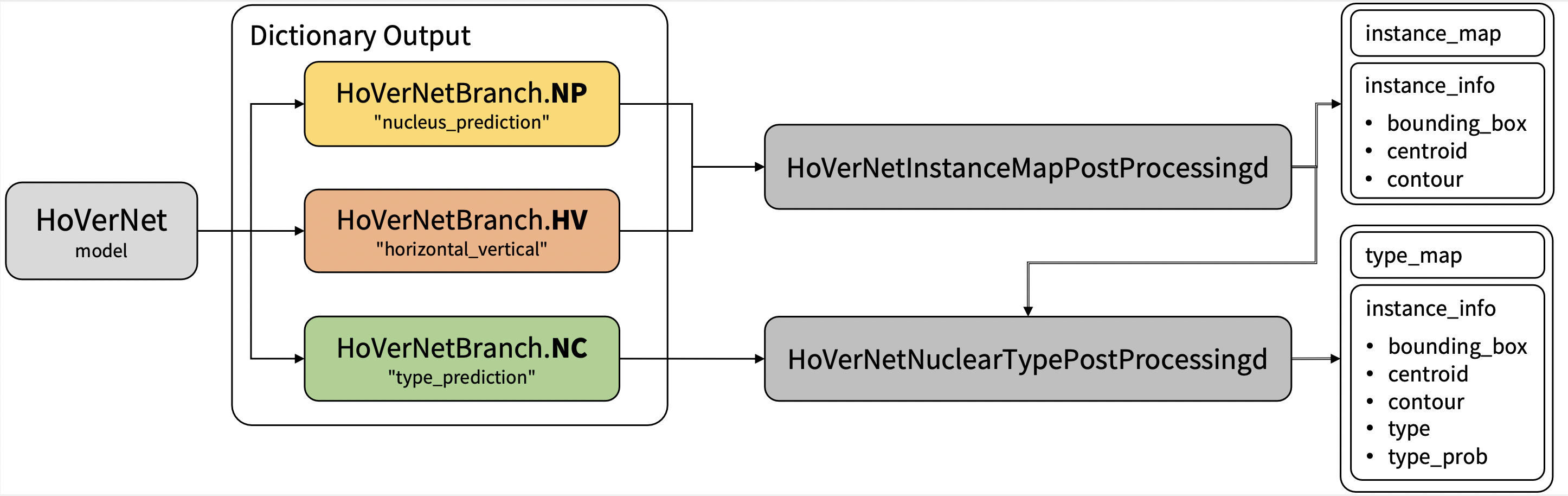

## Workflow

|

| 12 |

+

The model is trained to simultaneously segment and classify nuclei. Training follows a two-stage approach: the model is first initialized with weights pre-trained on the [ImageNet dataset](https://ieeexplore.ieee.org/document/5206848) and only the decoders are trained for the first 50 epochs; then all layers are fine-tuned for another 50 epochs. There are two training modes. If "original" mode is specified, [270, 270] and [80, 80] are used for `patch_size` and `out_size` respectively. If "fast" mode is specified, [256, 256] and [164, 164] are used for `patch_size` and `out_size` respectively. The results shown below are based on the "fast" mode.
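To make the two modes concrete, here is a minimal shell sketch that maps a mode name to the `patch_size` and `out_size` values quoted above and computes how much smaller the output is than the input on each side. The `MODE`, `PATCH_SIZE`, `OUT_SIZE`, and `MARGIN` variables are hypothetical names used only for illustration; they are not options of the bundle.

```shell
# MODE is a hypothetical variable for this sketch; the sizes come from the Workflow paragraph.
MODE="fast"

case "$MODE" in
  original) PATCH_SIZE=270; OUT_SIZE=80 ;;
  fast)     PATCH_SIZE=256; OUT_SIZE=164 ;;
  *)        echo "unknown mode: $MODE" >&2 ;;
esac

# Per-side difference between the input patch and the output map, in pixels.
MARGIN=$(( (PATCH_SIZE - OUT_SIZE) / 2 ))
echo "mode=$MODE patch_size=[$PATCH_SIZE, $PATCH_SIZE] out_size=[$OUT_SIZE, $OUT_SIZE] margin=$MARGIN"
```

The arithmetic only restates the numbers in the paragraph: the output map is smaller than the input patch in both modes, and the gap is much larger in "original" mode than in "fast" mode.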

|

| 13 |

|

| 14 |

+

### Pre-trained weights

|

| 15 |

+

The first stage is trained with pre-trained weights from some internal data. The [original author's repo](https://github.com/vqdang/hover_net#data-format) also provides pre-trained weights, but these are for non-commercial use.

|

| 16 |

+

Each user is responsible for checking the content of models/datasets and the applicable licenses, and for determining whether they are suitable for the intended use.

|

|

|

|

|

|

|

| 17 |

|

| 18 |

`PRETRAIN_MODEL_URL` is "https://drive.google.com/u/1/uc?id=1KntZge40tAHgyXmHYVqZZ5d2p_4Qr2l5&export=download" which can be used in bash code below.
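The URL contains an `&`, which an unquoted shell would treat as a control operator, so it needs quoting when passed on the command line. The sketch below stores it in a shell variable and prints the first-stage command rather than running it. It assumes the backticks around `PRETRAIN_MODEL_URL` in the commands mark a placeholder to substitute, not shell command substitution.

```shell
# Quote the URL: it contains '&', which the shell would otherwise interpret.
PRETRAIN_MODEL_URL="https://drive.google.com/u/1/uc?id=1KntZge40tAHgyXmHYVqZZ5d2p_4Qr2l5&export=download"

# Print the first-stage command instead of running it (remove 'echo' to execute).
echo python -m monai.bundle run --config_file configs/train.json \
  --network_def#pretrained_url "$PRETRAIN_MODEL_URL" --stage 0
```

Keeping the variable expansion in double quotes means the full URL reaches the bundle as a single argument.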

|

| 19 |

|

| 20 |

|

| 21 |

|

| 22 |

## Data

|

|

|

|

| 23 |

The training data is from <https://warwick.ac.uk/fac/cross_fac/tia/data/hovernet/>.

|

| 24 |

|

| 25 |

- Target: segment instance-level nuclei and classify the nuclei type

|

|

|

|

| 36 |

```

|

| 37 |

|

| 38 |

## Training configuration

|

|

|

|

| 39 |

This model utilized a two-stage approach. The training was performed with the following:

|

| 40 |

|

| 41 |

- GPU: At least 24GB of GPU memory.

|

|

|

|

| 46 |

- Loss: HoVerNetLoss

|

| 47 |

|

| 48 |

## Input

|

|

|

|

| 49 |

Input: RGB images

|

| 50 |

|

| 51 |

## Output

|

|

|

|

| 52 |

Output: a dictionary with the following keys:

|

| 53 |

|

| 54 |

1. nucleus_prediction: predict whether or not a pixel belongs to the nuclei or background

|

| 55 |

2. horizontal_vertical: predict the horizontal and vertical distances of nuclear pixels to their centres of mass

|

| 56 |

3. type_prediction: predict the type of nucleus for each pixel

|

| 57 |

|

| 58 |

+

## Performance

|

|

|

|

| 59 |

The achieved metrics on the validation data are:

|

| 60 |

|

| 61 |

Fast mode:

|

|

|

|

| 65 |

|

| 66 |

Note: Binary Dice is calculated based on the whole input. PQ and F1d were calculated from https://github.com/vqdang/hover_net#inference.

|

| 67 |

|

| 68 |

+

Please note that this bundle is non-deterministic because of the bilinear interpolation used in the network. Therefore, reproducing the training process may not yield exactly the same performance.

|

| 69 |

+

Please refer to https://pytorch.org/docs/stable/notes/randomness.html#reproducibility for more details about reproducibility.
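As a hedged sketch, the environment variables below can be set before training to narrow other sources of run-to-run variation. `PYTHONHASHSEED` is a standard Python setting and `CUBLAS_WORKSPACE_CONFIG` is described in the PyTorch reproducibility notes; neither is mentioned by this bundle, and they are not expected to make the bilinear interpolation itself deterministic.

```shell
# Fix Python's hash seed so hash-based ordering is repeatable across runs.
export PYTHONHASHSEED=0

# Workspace setting that PyTorch documents for deterministic cuBLAS behavior on CUDA 10.2 or later.
export CUBLAS_WORKSPACE_CONFIG=:4096:8

echo "PYTHONHASHSEED=$PYTHONHASHSEED CUBLAS_WORKSPACE_CONFIG=$CUBLAS_WORKSPACE_CONFIG"
```

Even with these set, small differences between training runs should still be expected for this network.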

|

| 70 |

|

| 71 |

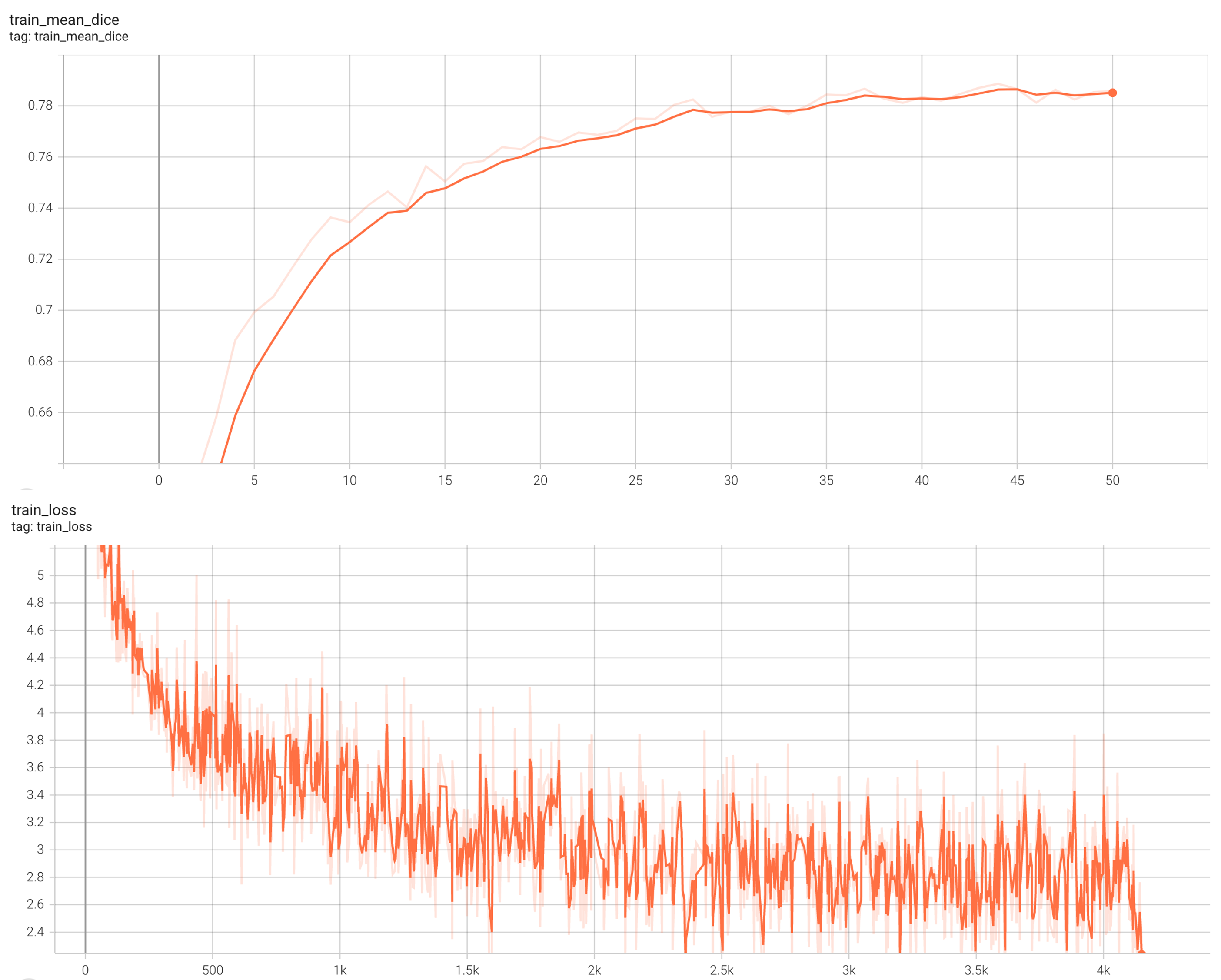

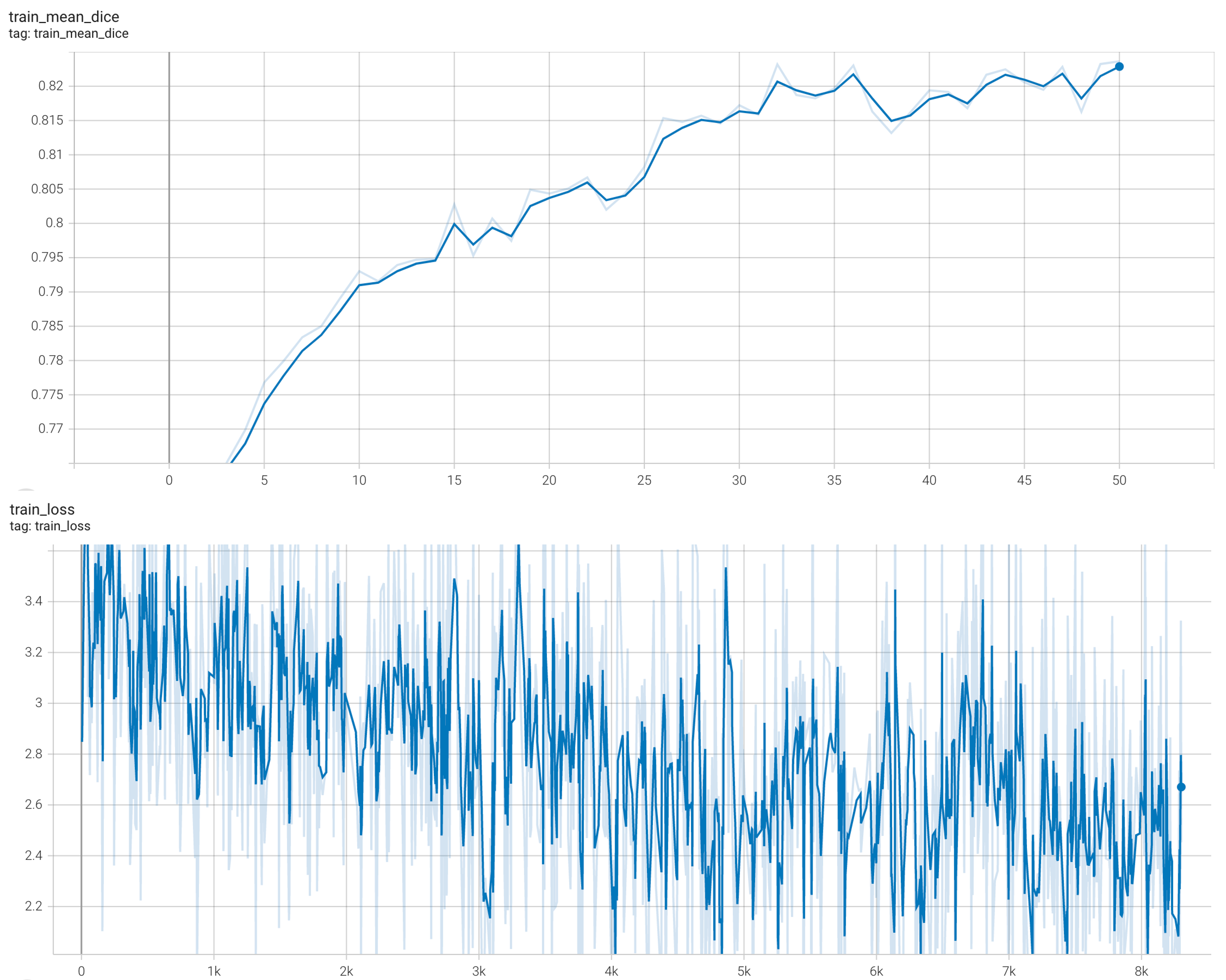

#### Training Loss and Dice

|

|

|

|

| 72 |

stage1:

|

| 73 |

|

| 74 |

|

|

|

|

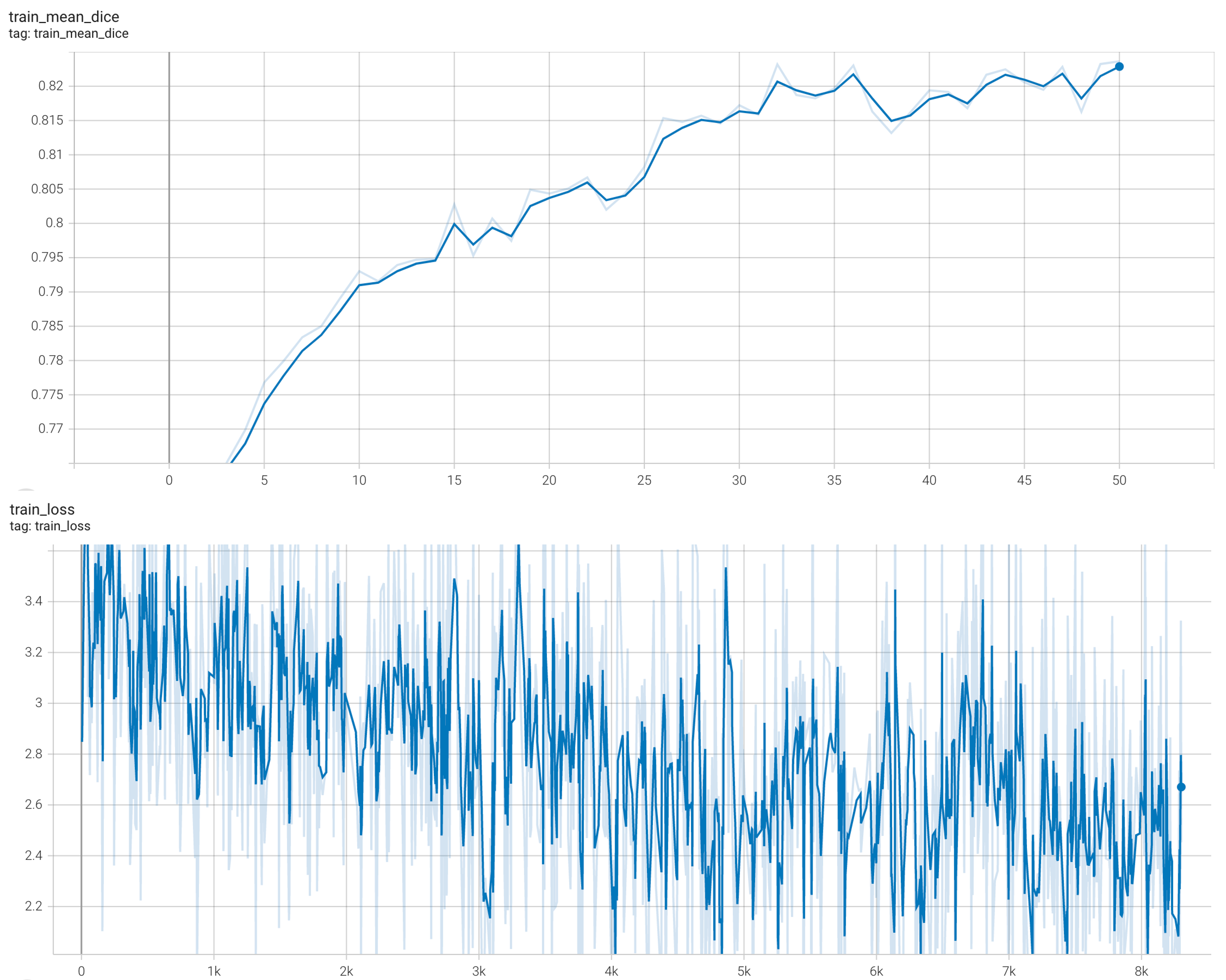

| 76 |

|

| 77 |

|

| 78 |

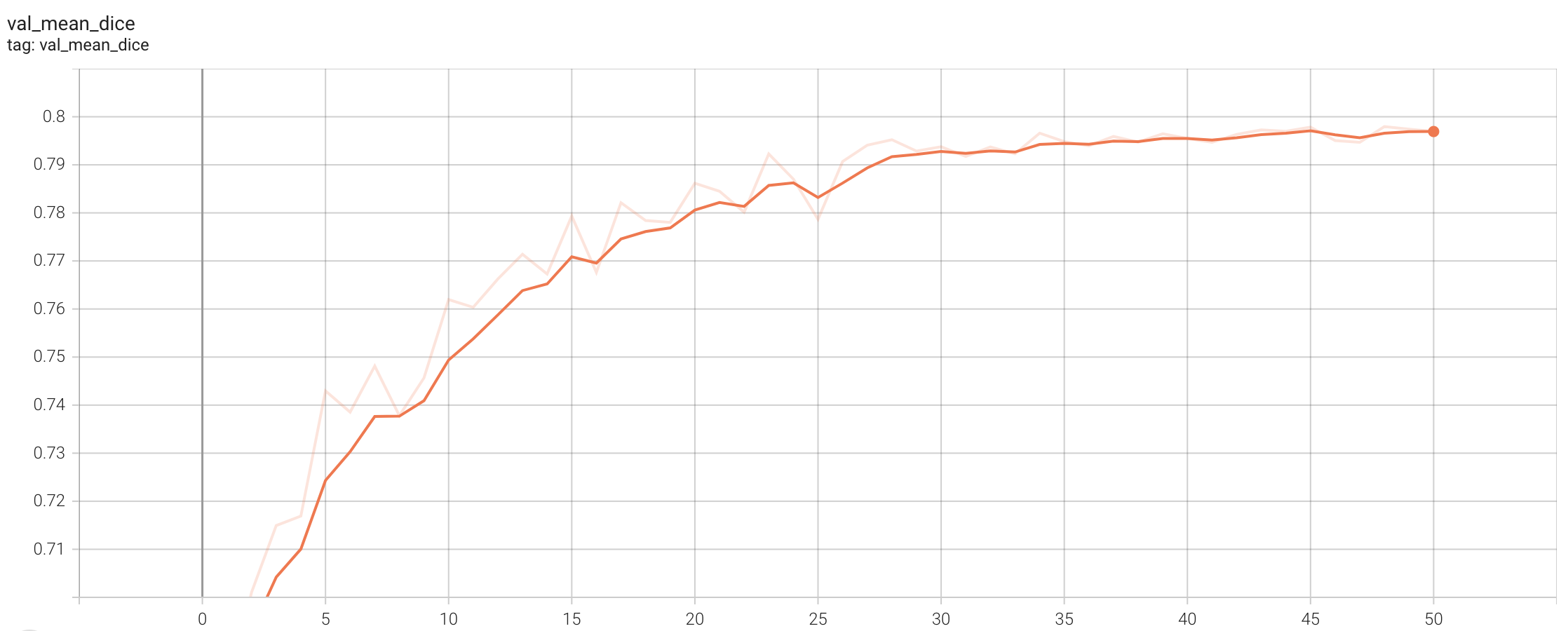

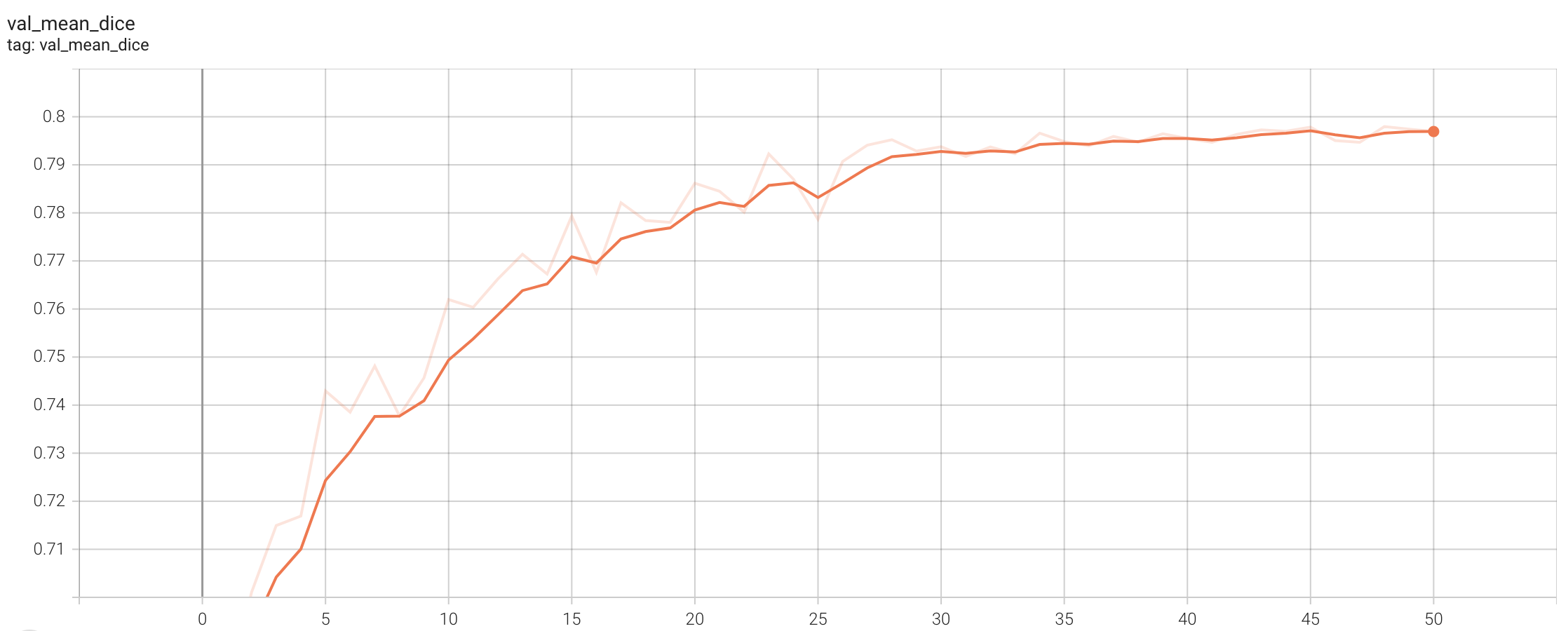

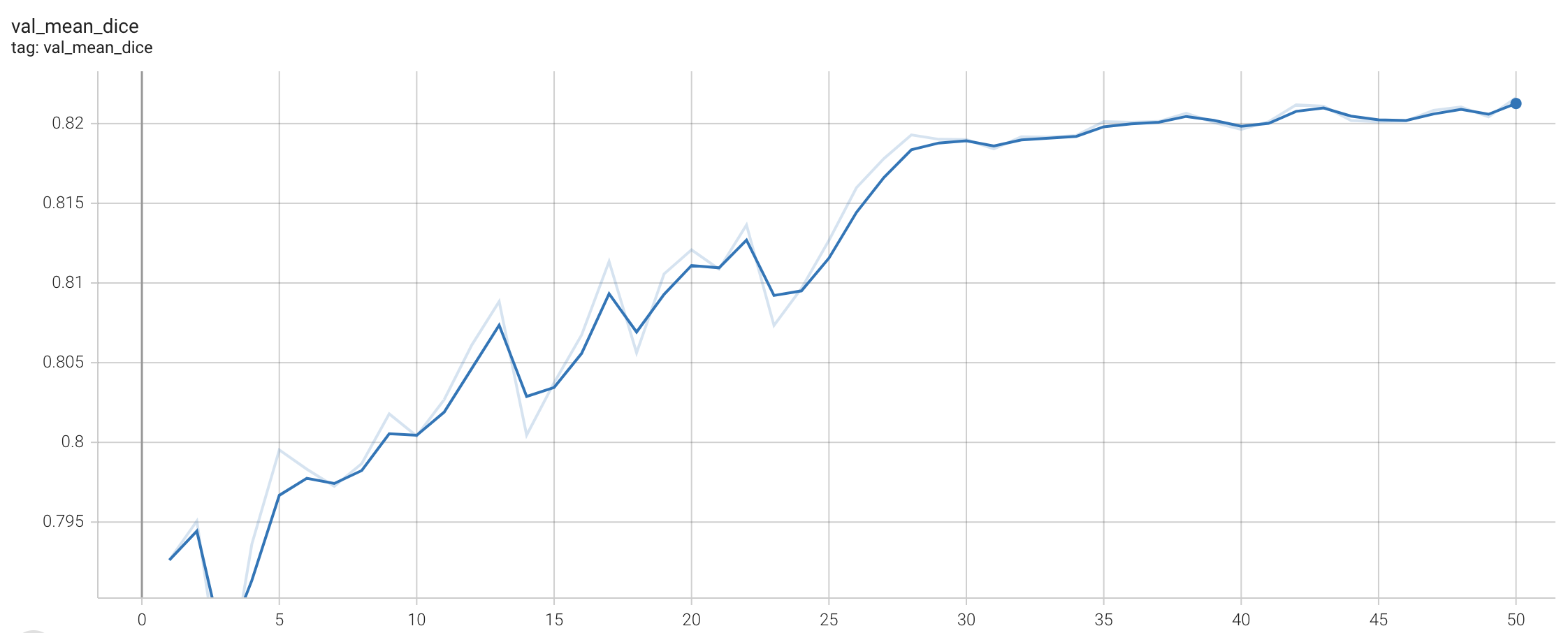

#### Validation Dice

|

|

|

|

| 79 |

stage1:

|

| 80 |

|

| 81 |

|

|

|

|

| 84 |

|

| 85 |

|

| 86 |

|

| 87 |

+

## MONAI Bundle Commands

|

| 88 |

+

In addition to the Pythonic APIs, a few command line interfaces (CLI) are provided to interact with the bundle. The CLI supports flexible use cases, such as overriding configs at runtime and predefining arguments in a file.

|

| 89 |

|

| 90 |

+

For more detailed usage instructions, visit the [MONAI Bundle Configuration Page](https://docs.monai.io/en/latest/config_syntax.html).

|

| 91 |

|

| 92 |

+

#### Execute training (evaluation during training is performed on patches):

|

| 93 |

|

| 94 |

+

- Run first stage

|

| 95 |

```

|

| 96 |

python -m monai.bundle run --config_file configs/train.json --network_def#pretrained_url `PRETRAIN_MODEL_URL` --stage 0

|

| 97 |

```

|

| 98 |

|

| 99 |

- Run second stage

|

|

|

|

| 100 |

```

|

| 101 |

python -m monai.bundle run --config_file configs/train.json --network_def#freeze_encoder False --network_def#pretrained_url None --stage 1

|

| 102 |

```
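The two stages can be chained so that the second only starts if the first succeeds. The sketch below is one way to do that; `DRY_RUN` and the `run` helper are hypothetical names introduced for illustration and are not part of the bundle. With `DRY_RUN=1` the commands are printed instead of executed.

```shell
# DRY_RUN and run() are hypothetical helpers for this sketch, not part of the bundle.
DRY_RUN=1
PRETRAIN_MODEL_URL="https://drive.google.com/u/1/uc?id=1KntZge40tAHgyXmHYVqZZ5d2p_4Qr2l5&export=download"

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$@"   # print the command only
  else
    "$@"        # execute the command
  fi
}

# Stage 0 first; stage 1 runs only if stage 0 exits successfully.
run python -m monai.bundle run --config_file configs/train.json \
    --network_def#pretrained_url "$PRETRAIN_MODEL_URL" --stage 0 &&
run python -m monai.bundle run --config_file configs/train.json \
    --network_def#freeze_encoder False --network_def#pretrained_url None --stage 1
```

Setting `DRY_RUN=0` would execute both commands as written in the README.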

|

| 103 |

|

| 104 |

+

#### Override the `train` config to execute multi-GPU training:

|

| 105 |

|

| 106 |

- Run first stage

|

|

|

|

| 107 |

```

|

| 108 |

torchrun --standalone --nnodes=1 --nproc_per_node=2 -m monai.bundle run --config_file "['configs/train.json','configs/multi_gpu_train.json']" --batch_size 8 --network_def#freeze_encoder True --network_def#pretrained_url `PRETRAIN_MODEL_URL` --stage 0

|

| 109 |

```

|

| 110 |

|

| 111 |

- Run second stage

|

|

|

|

| 112 |

```

|

| 113 |

torchrun --standalone --nnodes=1 --nproc_per_node=2 -m monai.bundle run --config_file "['configs/train.json','configs/multi_gpu_train.json']" --batch_size 4 --network_def#freeze_encoder False --network_def#pretrained_url None --stage 1

|

| 114 |

```

|

| 115 |

|

| 116 |

+

#### Override the `train` config to execute evaluation with the trained model (here, Dice is evaluated on the whole input instead of on patches):

|

| 117 |

|

| 118 |

```

|

| 119 |

python -m monai.bundle run --config_file "['configs/train.json','configs/evaluate.json']"

|

| 120 |

```

|

| 121 |

|

| 122 |

+

#### Execute inference:

|

| 123 |

|

| 124 |

```

|

| 125 |

python -m monai.bundle run --config_file configs/inference.json

|

| 126 |

```

|

| 127 |

|

| 128 |

# Disclaimer

|

|

|

|

| 129 |

This is an example, not to be used for diagnostic purposes.

|

| 130 |

|

| 131 |

# References

|

|

|

|

| 132 |

[1] Simon Graham, Quoc Dang Vu, Shan E Ahmed Raza, Ayesha Azam, Yee Wah Tsang, Jin Tae Kwak, Nasir Rajpoot, Hover-Net: Simultaneous segmentation and classification of nuclei in multi-tissue histology images, Medical Image Analysis, 2019 https://doi.org/10.1016/j.media.2019.101563

|

| 133 |

|

| 134 |

# License

|

configs/metadata.json

CHANGED

|

@@ -1,7 +1,8 @@

|

|

| 1 |

{

|

| 2 |

"schema": "https://github.com/Project-MONAI/MONAI-extra-test-data/releases/download/0.8.1/meta_schema_hovernet_20221124.json",

|

| 3 |

-

"version": "0.1.

|

| 4 |

"changelog": {

|

|

|

|

| 5 |

"0.1.5": "update benchmark on A100",

|

| 6 |

"0.1.4": "adapt to BundleWorkflow interface",

|

| 7 |

"0.1.3": "add name tag",

|

|

|

|

| 1 |

{

|

| 2 |

"schema": "https://github.com/Project-MONAI/MONAI-extra-test-data/releases/download/0.8.1/meta_schema_hovernet_20221124.json",

|

| 3 |

+

"version": "0.1.6",

|

| 4 |

"changelog": {

|

| 5 |

+

"0.1.6": "add non-deterministic note",

|

| 6 |

"0.1.5": "update benchmark on A100",

|

| 7 |

"0.1.4": "adapt to BundleWorkflow interface",

|

| 8 |

"0.1.3": "add name tag",

|

docs/README.md

CHANGED

|

@@ -1,21 +1,18 @@

|

|

| 1 |

# Model Overview

|

| 2 |

-

|

| 3 |

A pre-trained model for simultaneous segmentation and classification of nuclei within multi-tissue histology images based on CoNSeP data. The details of the model can be found in [1].

|

| 4 |

|

| 5 |

## Workflow

|

|

|

|

| 6 |

|

| 7 |

-

|

| 8 |

-

|

| 9 |

-

|

| 10 |

-

|

| 11 |

-

- The original author's repo also has pre-trained weights which is for non-commercial use. Each user is responsible for checking the content of models/datasets and the applicable licenses and determining if suitable for the intended use. The license for the pre-trained model is different than MONAI license. Please check the source where these weights are obtained from: <https://github.com/vqdang/hover_net#data-format>

|

| 12 |

|

| 13 |

`PRETRAIN_MODEL_URL` is "https://drive.google.com/u/1/uc?id=1KntZge40tAHgyXmHYVqZZ5d2p_4Qr2l5&export=download" which can be used in bash code below.

|

| 14 |

|

| 15 |

|

| 16 |

|

| 17 |

## Data

|

| 18 |

-

|

| 19 |

The training data is from <https://warwick.ac.uk/fac/cross_fac/tia/data/hovernet/>.

|

| 20 |

|

| 21 |

- Target: segment instance-level nuclei and classify the nuclei type

|

|

@@ -32,7 +29,6 @@ python scripts/prepare_patches.py -root your-concep-dataset-path

|

|

| 32 |

```

|

| 33 |

|

| 34 |

## Training configuration

|

| 35 |

-

|

| 36 |

This model utilized a two-stage approach. The training was performed with the following:

|

| 37 |

|

| 38 |

- GPU: At least 24GB of GPU memory.

|

|

@@ -43,19 +39,16 @@ This model utilized a two-stage approach. The training was performed with the fo

|

|

| 43 |

- Loss: HoVerNetLoss

|

| 44 |

|

| 45 |

## Input

|

| 46 |

-

|

| 47 |

Input: RGB images

|

| 48 |

|

| 49 |

## Output

|

| 50 |

-

|

| 51 |

Output: a dictionary with the following keys:

|

| 52 |

|

| 53 |

1. nucleus_prediction: predict whether or not a pixel belongs to the nuclei or background

|

| 54 |

2. horizontal_vertical: predict the horizontal and vertical distances of nuclear pixels to their centres of mass

|

| 55 |

3. type_prediction: predict the type of nucleus for each pixel

|

| 56 |

|

| 57 |

-

##

|

| 58 |

-

|

| 59 |

The achieved metrics on the validation data are:

|

| 60 |

|

| 61 |

Fast mode:

|

|

@@ -65,10 +58,10 @@ Fast mode:

|

|

| 65 |

|

| 66 |

Note: Binary Dice is calculated based on the whole input. PQ and F1d were calculated from https://github.com/vqdang/hover_net#inference.

|

| 67 |

|

| 68 |

-

|

|

|

|

| 69 |

|

| 70 |

#### Training Loss and Dice

|

| 71 |

-

|

| 72 |

stage1:

|

| 73 |

|

| 74 |

|

|

@@ -76,7 +69,6 @@ stage2:

|

|

| 76 |

|

| 77 |

|

| 78 |

#### Validation Dice

|

| 79 |

-

|

| 80 |

stage1:

|

| 81 |

|

| 82 |

|

|

@@ -85,54 +77,51 @@ stage2:

|

|

| 85 |

|

| 86 |

|

| 87 |

|

| 88 |

-

##

|

|

|

|

| 89 |

|

| 90 |

-

|

| 91 |

|

| 92 |

-

|

| 93 |

|

|

|

|

| 94 |

```

|

| 95 |

python -m monai.bundle run --config_file configs/train.json --network_def#pretrained_url `PRETRAIN_MODEL_URL` --stage 0

|

| 96 |

```

|

| 97 |

|

| 98 |

- Run second stage

|

| 99 |

-

|

| 100 |

```

|

| 101 |

python -m monai.bundle run --config_file configs/train.json --network_def#freeze_encoder False --network_def#pretrained_url None --stage 1

|

| 102 |

```

|

| 103 |

|

| 104 |

-

Override the `train` config to execute multi-GPU training:

|

| 105 |

|

| 106 |

- Run first stage

|

| 107 |

-

|

| 108 |

```

|

| 109 |

torchrun --standalone --nnodes=1 --nproc_per_node=2 -m monai.bundle run --config_file "['configs/train.json','configs/multi_gpu_train.json']" --batch_size 8 --network_def#freeze_encoder True --network_def#pretrained_url `PRETRAIN_MODEL_URL` --stage 0

|

| 110 |

```

|

| 111 |

|

| 112 |

- Run second stage

|

| 113 |

-

|

| 114 |

```

|

| 115 |

torchrun --standalone --nnodes=1 --nproc_per_node=2 -m monai.bundle run --config_file "['configs/train.json','configs/multi_gpu_train.json']" --batch_size 4 --network_def#freeze_encoder False --network_def#pretrained_url None --stage 1

|

| 116 |

```

|

| 117 |

|

| 118 |

-

Override the `train` config to execute evaluation with the trained model, here we evaluated dice from the whole input instead of the patches:

|

| 119 |

|

| 120 |

```

|

| 121 |

python -m monai.bundle run --config_file "['configs/train.json','configs/evaluate.json']"

|

| 122 |

```

|

| 123 |

|

| 124 |

-

|

| 125 |

|

| 126 |

```

|

| 127 |

python -m monai.bundle run --config_file configs/inference.json

|

| 128 |

```

|

| 129 |

|

| 130 |

# Disclaimer

|

| 131 |

-

|

| 132 |

This is an example, not to be used for diagnostic purposes.

|

| 133 |

|

| 134 |

# References

|

| 135 |

-

|

| 136 |

[1] Simon Graham, Quoc Dang Vu, Shan E Ahmed Raza, Ayesha Azam, Yee Wah Tsang, Jin Tae Kwak, Nasir Rajpoot, Hover-Net: Simultaneous segmentation and classification of nuclei in multi-tissue histology images, Medical Image Analysis, 2019 https://doi.org/10.1016/j.media.2019.101563

|

| 137 |

|

| 138 |

# License

|

|

|

|

| 1 |

# Model Overview

|

|

|

|

| 2 |

A pre-trained model for simultaneous segmentation and classification of nuclei within multi-tissue histology images based on CoNSeP data. The details of the model can be found in [1].

|

| 3 |

|

| 4 |

## Workflow

|

| 5 |

+

The model is trained to simultaneously segment and classify nuclei. Training follows a two-stage approach: the model is first initialized with weights pre-trained on the [ImageNet dataset](https://ieeexplore.ieee.org/document/5206848) and only the decoders are trained for the first 50 epochs; then all layers are fine-tuned for another 50 epochs. There are two training modes. If "original" mode is specified, [270, 270] and [80, 80] are used for `patch_size` and `out_size` respectively. If "fast" mode is specified, [256, 256] and [164, 164] are used for `patch_size` and `out_size` respectively. The results shown below are based on the "fast" mode.

|

| 6 |

|

| 7 |

+

### Pre-trained weights

|

| 8 |

+

The first stage is trained with pre-trained weights from some internal data. The [original author's repo](https://github.com/vqdang/hover_net#data-format) also provides pre-trained weights, but these are for non-commercial use.

|

| 9 |

+

Each user is responsible for checking the content of models/datasets and the applicable licenses, and for determining whether they are suitable for the intended use.

|

|

|

|

|

|

|

| 10 |

|

| 11 |

`PRETRAIN_MODEL_URL` is "https://drive.google.com/u/1/uc?id=1KntZge40tAHgyXmHYVqZZ5d2p_4Qr2l5&export=download" which can be used in bash code below.

|

| 12 |

|

| 13 |

|

| 14 |

|

| 15 |

## Data

|

|

|

|

| 16 |

The training data is from <https://warwick.ac.uk/fac/cross_fac/tia/data/hovernet/>.

|

| 17 |

|

| 18 |

- Target: segment instance-level nuclei and classify the nuclei type

|

|

|

|

| 29 |

```

|

| 30 |

|

| 31 |

## Training configuration

|

|

|

|

| 32 |

This model utilized a two-stage approach. The training was performed with the following:

|

| 33 |

|

| 34 |

- GPU: At least 24GB of GPU memory.

|

|

|

|

| 39 |

- Loss: HoVerNetLoss

|

| 40 |

|

| 41 |

## Input

|

|

|

|

| 42 |

Input: RGB images

|

| 43 |

|

| 44 |

## Output

|

|

|

|

| 45 |

Output: a dictionary with the following keys:

|

| 46 |

|

| 47 |

1. nucleus_prediction: predict whether or not a pixel belongs to the nuclei or background

|

| 48 |

2. horizontal_vertical: predict the horizontal and vertical distances of nuclear pixels to their centres of mass

|

| 49 |

3. type_prediction: predict the type of nucleus for each pixel

|

| 50 |

|

| 51 |

+

## Performance

|

|

|

|

| 52 |

The achieved metrics on the validation data are:

|

| 53 |

|

| 54 |

Fast mode:

|

|

|

|

| 58 |

|

| 59 |

Note: Binary Dice is calculated based on the whole input. PQ and F1d were calculated from https://github.com/vqdang/hover_net#inference.

|

| 60 |

|

| 61 |

+

Please note that this bundle is non-deterministic because of the bilinear interpolation used in the network. Therefore, reproducing the training process may not yield exactly the same performance.

|

| 62 |

+

Please refer to https://pytorch.org/docs/stable/notes/randomness.html#reproducibility for more details about reproducibility.

|

| 63 |

|

| 64 |

#### Training Loss and Dice

|

|

|

|

| 65 |

stage1:

|

| 66 |

|

| 67 |

|

|

|

|

| 69 |

|

| 70 |

|

| 71 |

#### Validation Dice

|

|

|

|

| 72 |

stage1:

|

| 73 |

|

| 74 |

|

|

|

|

| 77 |

|

| 78 |

|

| 79 |

|

| 80 |

+

## MONAI Bundle Commands

|

| 81 |

+

In addition to the Pythonic APIs, a few command line interfaces (CLI) are provided to interact with the bundle. The CLI supports flexible use cases, such as overriding configs at runtime and predefining arguments in a file.

|

| 82 |

|

| 83 |

+

For more detailed usage instructions, visit the [MONAI Bundle Configuration Page](https://docs.monai.io/en/latest/config_syntax.html).

|

| 84 |

|

| 85 |

+

#### Execute training (evaluation during training is performed on patches):

|

| 86 |

|

| 87 |

+

- Run first stage

|

| 88 |

```

|

| 89 |

python -m monai.bundle run --config_file configs/train.json --network_def#pretrained_url `PRETRAIN_MODEL_URL` --stage 0

|

| 90 |

```

|

| 91 |

|

| 92 |

- Run second stage

|

|

|

|

| 93 |

```

|

| 94 |

python -m monai.bundle run --config_file configs/train.json --network_def#freeze_encoder False --network_def#pretrained_url None --stage 1

|

| 95 |

```

|

| 96 |

|

| 97 |

+

#### Override the `train` config to execute multi-GPU training:

|

| 98 |

|

| 99 |

- Run first stage

|

|

|

|

| 100 |

```

|

| 101 |

torchrun --standalone --nnodes=1 --nproc_per_node=2 -m monai.bundle run --config_file "['configs/train.json','configs/multi_gpu_train.json']" --batch_size 8 --network_def#freeze_encoder True --network_def#pretrained_url `PRETRAIN_MODEL_URL` --stage 0

|

| 102 |

```

|

| 103 |

|

| 104 |

- Run second stage

|

|

|

|

| 105 |

```

|

| 106 |

torchrun --standalone --nnodes=1 --nproc_per_node=2 -m monai.bundle run --config_file "['configs/train.json','configs/multi_gpu_train.json']" --batch_size 4 --network_def#freeze_encoder False --network_def#pretrained_url None --stage 1

|

| 107 |

```

|

| 108 |

|

| 109 |

+

#### Override the `train` config to execute evaluation with the trained model (here, Dice is evaluated on the whole input instead of on patches):

|

| 110 |

|

| 111 |

```

|

| 112 |

python -m monai.bundle run --config_file "['configs/train.json','configs/evaluate.json']"

|

| 113 |

```

|

| 114 |

|

| 115 |

+

#### Execute inference:

|

| 116 |

|

| 117 |

```

|

| 118 |

python -m monai.bundle run --config_file configs/inference.json

|

| 119 |

```

|

| 120 |

|

| 121 |

# Disclaimer

|

|

|

|

| 122 |

This is an example, not to be used for diagnostic purposes.

|

| 123 |

|

| 124 |

# References

|

|

|

|

| 125 |

[1] Simon Graham, Quoc Dang Vu, Shan E Ahmed Raza, Ayesha Azam, Yee Wah Tsang, Jin Tae Kwak, Nasir Rajpoot, Hover-Net: Simultaneous segmentation and classification of nuclei in multi-tissue histology images, Medical Image Analysis, 2019 https://doi.org/10.1016/j.media.2019.101563

|

| 126 |

|

| 127 |

# License

|