Update README.md

README.md

CHANGED

|

@@ -20,7 +20,7 @@ metrics:

|

|

| 20 |

|

| 21 |

---

|

| 22 |

|

| 23 |

-

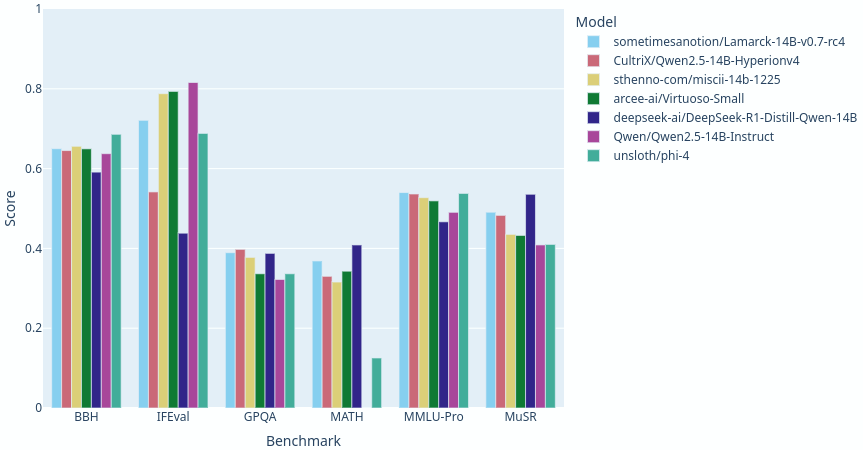

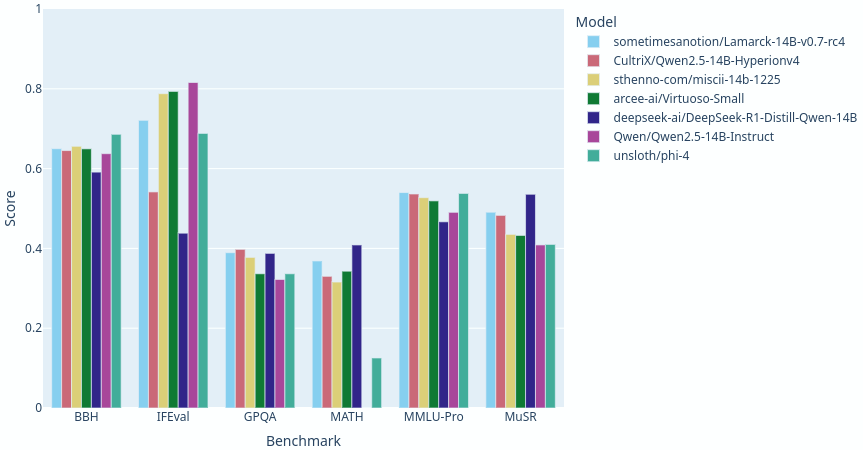

> [!TIP] With no benchmark regressions, mostly gains over the previous release, this version of Lamarck has [broken the 41.0 average](https://shorturl.at/jUqEk) maximum for 14B parameter models. Those providing feedback, thank you!

|

| 24 |

|

| 25 |

Lamarck 14B v0.7: A generalist merge with emphasis on multi-step reasoning, prose, and multi-language ability. The 14B parameter model class has a lot of strong performers, and Lamarck strives to be well-rounded and solid:

|

| 26 |

|

|

|

|

| 20 |

|

| 21 |

---

|

| 22 |

|

| 23 |

+

> [!TIP] With no benchmark regressions, mostly gains over the previous release, this version of Lamarck has [broken the 41.0 average](https://shorturl.at/jUqEk) maximum for 14B parameter models. Those providing feedback, thank you!

|

| 24 |

|

| 25 |

Lamarck 14B v0.7: A generalist merge with emphasis on multi-step reasoning, prose, and multi-language ability. The 14B parameter model class has a lot of strong performers, and Lamarck strives to be well-rounded and solid:

|

| 26 |

|