Update app.py

app.py

CHANGED

|

@@ -1,12 +1,8 @@

|

|

| 1 |

import gradio as gr

|

| 2 |

from base64 import b64encode

|

| 3 |

-

|

| 4 |

import numpy

|

| 5 |

import torch

|

| 6 |

from diffusers import AutoencoderKL, LMSDiscreteScheduler, UNet2DConditionModel

|

| 7 |

-

|

| 8 |

-

|

| 9 |

-

|

| 10 |

from PIL import Image

|

| 11 |

from torch import autocast

|

| 12 |

from torchvision import transforms as tfms

|

|

@@ -15,15 +11,9 @@ from transformers import CLIPTextModel, CLIPTokenizer, logging

|

|

| 15 |

import torchvision.transforms as T

|

| 16 |

|

| 17 |

torch.manual_seed(1)

|

| 18 |

-

|

| 19 |

-

|

| 20 |

-

# Suppress some unnecessary warnings when loading the CLIPTextModel

|

| 21 |

logging.set_verbosity_error()

|

| 22 |

-

|

| 23 |

torch_device = "cpu"

|

| 24 |

|

| 25 |

-

|

| 26 |

-

# Load the autoencoder model which will be used to decode the latents into image space.

|

| 27 |

vae = AutoencoderKL.from_pretrained("CompVis/stable-diffusion-v1-4", subfolder="vae")

|

| 28 |

|

| 29 |

# Load the tokenizer and text encoder to tokenize and encode the text.

|

|

@@ -36,35 +26,14 @@ unet = UNet2DConditionModel.from_pretrained("CompVis/stable-diffusion-v1-4", sub

|

|

| 36 |

# The noise scheduler

|

| 37 |

scheduler = LMSDiscreteScheduler(beta_start=0.00085, beta_end=0.012, beta_schedule="scaled_linear", num_train_timesteps=1000)

|

| 38 |

|

| 39 |

-

# To the GPU we go!

|

| 40 |

vae = vae.to(torch_device)

|

| 41 |

text_encoder = text_encoder.to(torch_device)

|

| 42 |

unet = unet.to(torch_device);

|

| 43 |

|

| 44 |

-

|

| 45 |

-

|

| 46 |

-

|

| 47 |

-

|

| 48 |

-

What we want to do in this notebook is dig a little deeper into how this works, so we'll start by checking that the example code runs. Again, this is adapted from the [HF notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_diffusion.ipynb) and looks very similar to what you'll find if you inspect [the `__call__()` method of the stable diffusion pipeline](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/pipeline_stable_diffusion.py#L200).

|

| 49 |

-

"""

|

| 50 |

-

|

| 51 |

-

|

| 52 |

-

# Prep Scheduler

|

| 53 |

-

def set_timesteps(scheduler, num_inference_steps):

|

| 54 |

-

scheduler.set_timesteps(num_inference_steps)

|

| 55 |

-

scheduler.timesteps = scheduler.timesteps.to(torch.float32) # minor fix to ensure MPS compatibility, fixed in diffusers PR 3925

|

| 56 |

-

|

| 57 |

-

|

| 58 |

-

# Prep latents

|

| 59 |

-

latents = torch.randn(

|

| 60 |

-

(batch_size, unet.in_channels, height // 8, width // 8),

|

| 61 |

-

generator=generator,

|

| 62 |

-

)

|

| 63 |

-

latents = latents.to(torch_device)

|

| 64 |

-

latents = latents * scheduler.init_noise_sigma # Scaling (previous versions did latents = latents * self.scheduler.sigmas[0])

|

| 65 |

-

|

| 66 |

-

|

| 67 |

-

|

| 68 |

|

| 69 |

def pil_to_latent(input_im):

|

| 70 |

# Single image -> single latent in a batch (so size 1, 4, 64, 64)

|

|

@@ -83,147 +52,6 @@ def latents_to_pil(latents):

|

|

| 83 |

pil_images = [Image.fromarray(image) for image in images]

|

| 84 |

return pil_images

|

| 85 |

|

| 86 |

-

|

| 87 |

-

"""What does this look like at different timesteps? Experiment and see for yourself!

|

| 88 |

-

|

| 89 |

-

If you uncomment the cell below you'll see that in this case the `scheduler.add_noise` function literally just adds noise scaled by sigma: `noisy_samples = original_samples + noise * sigmas`

|

| 90 |

-

"""

|
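As a rough illustration of the `noisy_samples = original_samples + noise * sigmas` rule quoted above, here is a minimal sketch in plain Python. It is not the scheduler's implementation: plain lists stand in for tensors, and the helper name `add_noise` and the example values are assumptions made for illustration.

```python
import random


def add_noise(original_samples, noise, sigma):
    """Variance-exploding noising: each value becomes x + noise * sigma."""
    return [x + n * sigma for x, n in zip(original_samples, noise)]


random.seed(0)
latent = [0.5, -1.0, 0.25, 2.0]  # hypothetical stand-in for a flattened latent
noise = [random.gauss(0.0, 1.0) for _ in latent]

# Larger sigma means the noise term dominates the original signal.
for sigma in (0.0, 1.0, 14.0):
    noisy = add_noise(latent, noise, sigma)
    print(sigma, [round(v, 3) for v in noisy])
```

With `sigma = 0` the latent comes back unchanged, and as sigma grows the result looks more and more like scaled noise.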

| 91 |

-

#encoded = pil_to_latent(input_image)

|

| 92 |

-

#encoded.shape

|

| 93 |

-

#decoded = latents_to_pil(encoded)[0]

|

| 94 |

-

#decoded

|

| 95 |

-

# ??scheduler.add_noise

|

| 96 |

-

|

| 97 |

-

"""Other diffusion models may be trained with different noising and scheduling approaches, some of which keep the variance fairly constant across noise levels ('variance preserving') with different scaling and mixing tricks instead of having noisy latents with higher and higher variance as more noise is added ('variance exploding').

|

| 98 |

-

|

| 99 |

-

If we want to start from random noise instead of a noised image, we need to scale it by the largest sigma value used during training, ~14 in this case. And before these noisy latents are fed to the model they are scaled again in the so-called pre-conditioning step:

|

| 100 |

-

`latent_model_input = latent_model_input / ((sigma**2 + 1) ** 0.5)` (now handled by `latent_model_input = scheduler.scale_model_input(latent_model_input, t)`).

|

| 101 |

-

|

| 102 |

-

Again, this scaling/pre-conditioning differs between papers and implementations, so keep an eye out for this if you work with a different type of diffusion model.

|

| 103 |

-

|

| 104 |

-

## Loop starting from noised version of input (AKA image2image)

|

| 105 |

-

|

| 106 |

-

Let's see what happens when we use our image as a starting point, adding some noise and then doing the final few denoising steps in the loop with a new prompt.

|

| 107 |

-

|

| 108 |

-

We'll use a similar loop to the first demo, but we'll skip the first `start_step` steps.

|

| 109 |

-

|

| 110 |

-

To noise our image we'll use code like that shown above, using the scheduler to noise it to a level equivalent to step 10 (`start_step`).

|

| 111 |

-

"""

|
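To make the pre-conditioning step described above concrete, the sketch below applies `latent / ((sigma**2 + 1) ** 0.5)` to synthetic data. It assumes the clean latents have roughly unit variance, which is an assumption for illustration rather than something measured here, and it uses plain Python lists rather than tensors. The helper names are hypothetical.

```python
import math
import random


def scale_model_input(latents, sigma):
    """Divide by sqrt(sigma^2 + 1) so the scaled input has roughly unit variance."""
    return [x / math.sqrt(sigma ** 2 + 1) for x in latents]


def variance(values):
    """Population variance of a list of floats."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


random.seed(0)
sigma_max = 14.0  # approximate largest sigma mentioned in the text
n = 20000
clean = [random.gauss(0.0, 1.0) for _ in range(n)]  # assumed unit-variance latents
noise = [random.gauss(0.0, 1.0) for _ in range(n)]

noisy = [x + sigma_max * e for x, e in zip(clean, noise)]
scaled = scale_model_input(noisy, sigma_max)

print("variance before scaling:", round(variance(noisy), 1))
print("variance after scaling: ", round(variance(scaled), 2))
```

Under these assumptions the noisy values have variance near `1 + sigma**2`, and the scaled values land near 1, which is the point of the pre-conditioning.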

| 112 |

-

|

| 113 |

-

# Settings (same as before except for the new prompt)

|

| 114 |

-

|

| 115 |

-

"""You can see that some colours and structure from the image are kept, but we now have a new picture! The more noise you add and the more steps you do, the further away it gets from the input image.

|

| 116 |

-

|

| 117 |

-

This is how the popular img2img pipeline works. Again, if this is your end goal there are tools to make this easy!

|

| 118 |

-

|

| 119 |

-

But you can see that under the hood this is the same as the generation loop just skipping the first few steps and starting from a noised image rather than pure noise.

|

| 120 |

-

|

| 121 |

-

Explore changing how many steps are skipped and see how this affects the amount the image changes from the input.

|

| 122 |

-

|

| 123 |

-

## Exploring the text -> embedding pipeline

|

| 124 |

-

|

| 125 |

-

We use a text encoder model to turn our text into a set of 'embeddings' which are fed to the diffusion model as conditioning. Let's follow a piece of text through this process and see how it works.

|

| 126 |

-

"""

|

| 127 |

-

|

| 128 |

-

# Our text prompt

|

| 129 |

-

prompt = 'A picture of a puppy'

|

| 130 |

-

|

| 131 |

-

|

| 132 |

-

"""We begin with tokenization:"""

|

| 133 |

-

|

| 134 |

-

# Turn the text into a sequence of tokens:

|

| 135 |

-

text_input = tokenizer(prompt, padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 136 |

-

text_input['input_ids'][0] # View the tokens

|

| 137 |

-

|

| 138 |

-

# See the individual tokens

|

| 139 |

-

for t in text_input['input_ids'][0][:8]: # We'll just look at the first 8 to save you from a wall of '<|endoftext|>'

|

| 140 |

-

print(t, tokenizer.decoder.get(int(t)))

|

| 141 |

-

|

| 142 |

-

# TODO call out that 6829 is puppy

|

| 143 |

-

|

| 144 |

-

"""We can jump straight to the final (output) embeddings like so:"""

|

| 145 |

-

|

| 146 |

-

# Grab the output embeddings

|

| 147 |

-

output_embeddings = text_encoder(text_input.input_ids.to(torch_device))[0]

|

| 148 |

-

print('Shape:', output_embeddings.shape)

|

| 149 |

-

output_embeddings

|

| 150 |

-

|

| 151 |

-

"""We pass our tokens through the text_encoder and we magically get some numbers we can feed to the model.

|

| 152 |

-

|

| 153 |

-

How are these generated? The tokens are transformed into a set of input embeddings, which are then fed through the transformer model to get the final output embeddings.

|

| 154 |

-

|

| 155 |

-

To get these input embeddings, there are actually two steps - as revealed by inspecting `text_encoder.text_model.embeddings`:

|

| 156 |

-

"""

|

| 157 |

-

|

| 158 |

-

text_encoder.text_model.embeddings

|

| 159 |

-

|

| 160 |

-

"""### Token embeddings

|

| 161 |

-

|

| 162 |

-

The token is fed to the `token_embedding` to transform it into a vector. The function name `get_input_embeddings` here is misleading since these token embeddings need to be combined with the position embeddings before they are actually used as inputs to the model! Anyway, let's look at just the token embedding part first.

|

| 163 |

-

|

| 164 |

-

We can look at the embedding layer:

|

| 165 |

-

"""

|

| 166 |

-

|

| 167 |

-

# Access the embedding layer

|

| 168 |

-

token_emb_layer = text_encoder.text_model.embeddings.token_embedding

|

| 169 |

-

token_emb_layer # Vocab size 49408, emb_dim 768

|

| 170 |

-

|

| 171 |

-

"""And embed a token like so:"""

|

| 172 |

-

|

| 173 |

-

# Embed a token - in this case the one for 'puppy'

|

| 174 |

-

embedding = token_emb_layer(torch.tensor(6829, device=torch_device))

|

| 175 |

-

embedding.shape # 768-dim representation

|

| 176 |

-

|

| 177 |

-

"""This single token has been mapped to a 768-dimensional vector - the token embedding.

|

| 178 |

-

|

| 179 |

-

We can do the same with all of the tokens in the prompt to get all the token embeddings:

|

| 180 |

-

"""

|

| 181 |

-

|

| 182 |

-

token_embeddings = token_emb_layer(text_input.input_ids.to(torch_device))

|

| 183 |

-

print(token_embeddings.shape) # batch size 1, 77 tokens, 768 values for each

|

| 184 |

-

token_embeddings

|

| 185 |

-

|

| 186 |

-

"""### Positional Embeddings

|

| 187 |

-

|

| 188 |

-

Positional embeddings tell the model where in a sequence a token is. Much like the token embedding, this is a set of (optionally learnable) parameters. But now instead of dealing with ~50k tokens we just need one for each position (77 total):

|

| 189 |

-

"""

|

| 190 |

-

|

| 191 |

-

pos_emb_layer = text_encoder.text_model.embeddings.position_embedding

|

| 192 |

-

pos_emb_layer

|

| 193 |

-

|

| 194 |

-

"""We can get the positional embedding for each position:"""

|

| 195 |

-

|

| 196 |

-

position_ids = text_encoder.text_model.embeddings.position_ids[:, :77]

|

| 197 |

-

position_embeddings = pos_emb_layer(position_ids)

|

| 198 |

-

print(position_embeddings.shape)

|

| 199 |

-

position_embeddings

|

| 200 |

-

|

| 201 |

-

"""### Combining token and position embeddings

|

| 202 |

-

|

| 203 |

-

Time to combine the two. How do we do this? Just add them! Other approaches are possible but for this model this is how it is done.

|

| 204 |

-

|

| 205 |

-

Combining them in this way gives us the final input embeddings ready to feed through the transformer model:

|

| 206 |

-

"""

|
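The addition described above can be sketched with toy embedding tables. Everything here is hypothetical and tiny (a 5-token vocabulary, 3 positions, 2 dimensions) compared with the 49408 x 768 and 77 x 768 tables mentioned in the text, and lists stand in for tensors.

```python
def embed(table, ids):
    """Look up one row of `table` per id, which is what an embedding layer does."""
    return [table[i] for i in ids]


def add_embeddings(token_embs, position_embs):
    """Element-wise sum of token and position embeddings, position by position."""
    return [
        [t + p for t, p in zip(tok, pos)]
        for tok, pos in zip(token_embs, position_embs)
    ]


# Hypothetical tables for illustration only.
token_table = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 2.0]]
position_table = [[0.1, 0.1], [0.2, 0.2], [0.3, 0.3]]

token_ids = [4, 1, 4]  # the same token (id 4) appears at positions 0 and 2
position_ids = [0, 1, 2]

input_embeddings = add_embeddings(
    embed(token_table, token_ids), embed(position_table, position_ids)
)
print(input_embeddings)
```

The same token id yields different input embeddings at positions 0 and 2, which is how the model can tell where in the sequence a token sits.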

| 207 |

-

|

| 208 |

-

# And combining them we get the final input embeddings

|

| 209 |

-

input_embeddings = token_embeddings + position_embeddings

|

| 210 |

-

print(input_embeddings.shape)

|

| 211 |

-

input_embeddings

|

| 212 |

-

|

| 213 |

-

"""We can check that these are the same as the result we'd get from `text_encoder.text_model.embeddings`:"""

|

| 214 |

-

|

| 215 |

-

# The following combines all the above steps (but doesn't let us fiddle with them!)

|

| 216 |

-

text_encoder.text_model.embeddings(text_input.input_ids.to(torch_device))

|

| 217 |

-

|

| 218 |

-

"""### Feeding these through the transformer model

|

| 219 |

-

|

| 220 |

-

|

| 221 |

-

|

| 222 |

-

We want to mess with these input embeddings (specifically the token embeddings) before we send them through the rest of the model, but first we should check that we know how to do that. I read the code of the text_encoder's `forward` method, and based on that the code for the `forward` method of the text_model that the text_encoder wraps. To inspect it yourself, type `??text_encoder.text_model.forward` and you'll get the function info and source code - a useful debugging trick!

|

| 223 |

-

|

| 224 |

-

Anyway, based on that we can copy in the bits we need to get the so-called 'last hidden state' and thus generate our final embeddings:

|

| 225 |

-

"""

|

| 226 |

-

|

| 227 |

def get_output_embeds(input_embeddings):

|

| 228 |

# CLIP's text model uses causal mask, so we prepare it here:

|

| 229 |

bsz, seq_len = input_embeddings.shape[:2]

|

|

@@ -249,114 +77,15 @@ def get_output_embeds(input_embeddings):

|

|

| 249 |

# And now they're ready!

|

| 250 |

return output

|

| 251 |

|

| 252 |

-

|

| 253 |

-

print(out_embs_test.shape) # Check the output shape

|

| 254 |

-

out_embs_test # Inspect the output

|

| 255 |

-

|

| 256 |

-

"""Note that these match the `output_embeddings` we saw near the start - we've figured out how to split up that one step ("get the text embeddings") into multiple sub-steps ready for us to modify.

|

| 257 |

-

|

| 258 |

-

Now that we have this process in place, we can replace the input embedding of a token with a new one of our choice - which in our final use-case will be something we learn. To demonstrate the concept though, let's replace the input embedding for 'puppy' in the prompt we've been playing with with the embedding for token 2368, get a new set of output embeddings based on this, and use these to generate an image to see what we get:

|

| 259 |

-

"""

|

| 260 |

-

|

| 261 |

-

prompt = 'A picture of a puppy'

|

| 262 |

-

|

| 263 |

-

# Tokenize

|

| 264 |

-

text_input = tokenizer(prompt, padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 265 |

-

input_ids = text_input.input_ids.to(torch_device)

|

| 266 |

-

|

| 267 |

-

# Get token embeddings

|

| 268 |

-

token_embeddings = token_emb_layer(input_ids)

|

| 269 |

-

|

| 270 |

-

# The new embedding. In this case just the input embedding of token 2368...

|

| 271 |

-

replacement_token_embedding = text_encoder.get_input_embeddings()(torch.tensor(2368, device=torch_device))

|

| 272 |

-

|

| 273 |

-

# Insert this into the token embeddings (at the position of the 'puppy' token)

|

| 274 |

-

token_embeddings[0, torch.where(input_ids[0]==6829)] = replacement_token_embedding.to(torch_device)

|

| 275 |

-

|

| 276 |

-

# Combine with pos embs

|

| 277 |

-

input_embeddings = token_embeddings + position_embeddings

|

| 278 |

-

|

| 279 |

-

# Feed through to get final output embs

|

| 280 |

-

modified_output_embeddings = get_output_embeds(input_embeddings)

|

| 281 |

-

|

| 282 |

-

print(modified_output_embeddings.shape)

|

| 283 |

-

modified_output_embeddings

|

| 284 |

-

|

| 285 |

-

"""The first few are the same, the last aren't. Everything at and after the position of the token we're replacing will be affected.

|

| 286 |

-

|

| 287 |

-

If all went well, we should see something other than a puppy when we use these to generate an image. And sure enough, we do!

|

| 288 |

-

"""

|
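The observation that only positions at and after the replaced token change follows from the causal mask that `get_output_embeds` prepares. The sketch below is a simplified illustration in plain Python, not the CLIP implementation: the helper names are hypothetical, and the mask is a nested list instead of a tensor.

```python
def causal_mask(seq_len):
    """mask[i][j] is 0.0 where position i may attend to position j, -inf otherwise."""
    neg_inf = float("-inf")
    return [
        [0.0 if j <= i else neg_inf for j in range(seq_len)]
        for i in range(seq_len)
    ]


def affected_positions(seq_len, changed):
    """Positions whose output can depend on the input at index `changed`."""
    mask = causal_mask(seq_len)
    return [i for i in range(seq_len) if mask[i][changed] == 0.0]


# Replacing the token at index 5 in an 8-token sequence:
print(affected_positions(8, 5))  # → [5, 6, 7]
```

Positions 0 to 4 never attend to index 5, so their outputs stay identical no matter what embedding is placed there.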

| 289 |

-

|

| 290 |

-

#Generating an image with these modified embeddings

|

| 291 |

-

|

| 292 |

-

def generate_with_embs(text_embeddings):

|

| 293 |

-

height = 512 # default height of Stable Diffusion

|

| 294 |

-

width = 512 # default width of Stable Diffusion

|

| 295 |

-

num_inference_steps = 30 # Number of denoising steps

|

| 296 |

-

guidance_scale = 7.5 # Scale for classifier-free guidance

|

| 297 |

-

generator = torch.manual_seed(32) # Seed generator to create the initial latent noise

|

| 298 |

-

batch_size = 1

|

| 299 |

-

|

| 300 |

-

max_length = text_input.input_ids.shape[-1]

|

| 301 |

-

uncond_input = tokenizer(

|

| 302 |

-

[""] * batch_size, padding="max_length", max_length=max_length, return_tensors="pt"

|

| 303 |

-

)

|

| 304 |

-

with torch.no_grad():

|

| 305 |

-

uncond_embeddings = text_encoder(uncond_input.input_ids.to(torch_device))[0]

|

| 306 |

-

text_embeddings = torch.cat([uncond_embeddings, text_embeddings])

|

| 307 |

-

|

| 308 |

-

# Prep Scheduler

|

| 309 |

-

set_timesteps(scheduler, num_inference_steps)

|

| 310 |

-

|

| 311 |

-

# Prep latents

|

| 312 |

-

latents = torch.randn(

|

| 313 |

-

(batch_size, unet.in_channels, height // 8, width // 8),

|

| 314 |

-

generator=generator,

|

| 315 |

-

)

|

| 316 |

-

latents = latents.to(torch_device)

|

| 317 |

-

latents = latents * scheduler.init_noise_sigma

|

| 318 |

-

|

| 319 |

-

# Loop

|

| 320 |

-

for i, t in tqdm(enumerate(scheduler.timesteps), total=len(scheduler.timesteps)):

|

| 321 |

-

# expand the latents if we are doing classifier-free guidance to avoid doing two forward passes.

|

| 322 |

-

latent_model_input = torch.cat([latents] * 2)

|

| 323 |

-

sigma = scheduler.sigmas[i]

|

| 324 |

-

latent_model_input = scheduler.scale_model_input(latent_model_input, t)

|

| 325 |

-

|

| 326 |

-

# predict the noise residual

|

| 327 |

-

with torch.no_grad():

|

| 328 |

-

noise_pred = unet(latent_model_input, t, encoder_hidden_states=text_embeddings)["sample"]

|

| 329 |

-

|

| 330 |

-

# perform guidance

|

| 331 |

-

noise_pred_uncond, noise_pred_text = noise_pred.chunk(2)

|

| 332 |

-

noise_pred = noise_pred_uncond + guidance_scale * (noise_pred_text - noise_pred_uncond)

|

| 333 |

-

|

| 334 |

-

# compute the previous noisy sample x_t -> x_t-1

|

| 335 |

-

latents = scheduler.step(noise_pred, t, latents).prev_sample

|

| 336 |

-

|

| 337 |

-

return latents_to_pil(latents)[0]

|

| 338 |

-

|

| 339 |

-

#Generating an image with these modified embeddings

|

| 340 |

-

|

| 341 |

-

def generate_with_embs_seed(text_embeddings, seed, max_length):

|

| 342 |

-

"""

|

| 343 |

-

|

| 344 |

-

Args:

|

| 345 |

-

text_embeddings:

|

| 346 |

-

seed:

|

| 347 |

-

max_length:

|

| 348 |

-

|

| 349 |

-

Returns:

|

| 350 |

-

|

| 351 |

-

"""

|

| 352 |

height = 512 # default height of Stable Diffusion

|

| 353 |

width = 512 # default width of Stable Diffusion

|

| 354 |

-

num_inference_steps =

|

| 355 |

guidance_scale = 7.5 # Scale for classifier-free guidance

|

| 356 |

-

generator = torch.manual_seed(

|

| 357 |

batch_size = 1

|

| 358 |

|

| 359 |

-

|

| 360 |

uncond_input = tokenizer(

|

| 361 |

[""] * batch_size, padding="max_length", max_length=max_length, return_tensors="pt"

|

| 362 |

)

|

|

@@ -395,420 +124,109 @@ def generate_with_embs_seed(text_embeddings, seed, max_length):

|

|

| 395 |

|

| 396 |

return latents_to_pil(latents)[0]

|

| 397 |

|

| 398 |

-

generate_with_embs(modified_output_embeddings)

|

| 399 |

-

|

| 400 |

-

"""Surprise! Now you know what token 2368 means ;)

|

| 401 |

-

|

| 402 |

-

**What can we do with this?** Why did we go to all of this trouble? Well, we'll see a more compelling use-case shortly but the tl;dr is that once we can access and modify the token embeddings we can do tricks like replacing them with something else. In the example we just did, that was just another token embedding from the model's vocabulary, equivalent to just editing the prompt. But we can also mix tokens - for example, here's a half-puppy-half-skunk:

|

| 403 |

-

"""

|
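Mixing two token embeddings, as described above, is a weighted average taken element by element. The following is a minimal sketch with short made-up vectors standing in for the 768-dimensional embeddings; the helper name `mix_embeddings` is hypothetical.

```python
def mix_embeddings(emb_a, emb_b, weight_a=0.5):
    """Return weight_a * emb_a + (1 - weight_a) * emb_b, element by element."""
    if len(emb_a) != len(emb_b):
        raise ValueError("embeddings must have the same length")
    return [weight_a * a + (1.0 - weight_a) * b for a, b in zip(emb_a, emb_b)]


# Hypothetical 4-dimensional stand-ins for two token embeddings.
first = [0.0, 2.0, -1.0, 4.0]
second = [4.0, 2.0, 1.0, 0.0]

print(mix_embeddings(first, second))        # equal mix
print(mix_embeddings(first, second, 0.25))  # leans towards `second`
```

A weight of 1.0 returns the first embedding unchanged and 0.0 returns the second, so the weight controls how far the result leans towards either concept.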

| 404 |

-

|

| 405 |

-

# In case you're wondering how to get the token for a word, or the embedding for a token:

|

| 406 |

-

prompt = 'skunk'

|

| 407 |

-

print('tokenizer(prompt):', tokenizer(prompt))

|

| 408 |

-

print('token_emb_layer([token_id]) shape:', token_emb_layer(torch.tensor([8797], device=torch_device)).shape)

|

| 409 |

-

|

| 410 |

-

prompt = 'A picture of a puppy'

|

| 411 |

-

|

| 412 |

-

# Tokenize

|

| 413 |

-

text_input = tokenizer(prompt, padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 414 |

-

input_ids = text_input.input_ids.to(torch_device)

|

| 415 |

-

|

| 416 |

-

# Get token embeddings

|

| 417 |

-

token_embeddings = token_emb_layer(input_ids)

|

| 418 |

-

|

| 419 |

-

# The new embedding. Which is now a mixture of the token embeddings for 'puppy' and 'skunk'

|

| 420 |

-

puppy_token_embedding = token_emb_layer(torch.tensor(6829, device=torch_device))

|

| 421 |

-

skunk_token_embedding = token_emb_layer(torch.tensor(42194, device=torch_device))

|

| 422 |

-

replacement_token_embedding = 0.5*puppy_token_embedding + 0.5*skunk_token_embedding

|

| 423 |

-

|

| 424 |

-

# Insert this into the token embeddings (at the position of the 'puppy' token)

|

| 425 |

-

token_embeddings[0, torch.where(input_ids[0]==6829)] = replacement_token_embedding.to(torch_device)

|

| 426 |

-

|

| 427 |

-

# Combine with pos embs

|

| 428 |

-

input_embeddings = token_embeddings + position_embeddings

|

| 429 |

-

|

| 430 |

-

# Feed through to get final output embs

|

| 431 |

-

modified_output_embeddings = get_output_embeds(input_embeddings)

|

| 432 |

-

|

| 433 |

-

# Generate an image with these

|

| 434 |

-

generate_with_embs(modified_output_embeddings)

|

| 435 |

-

|

| 436 |

-

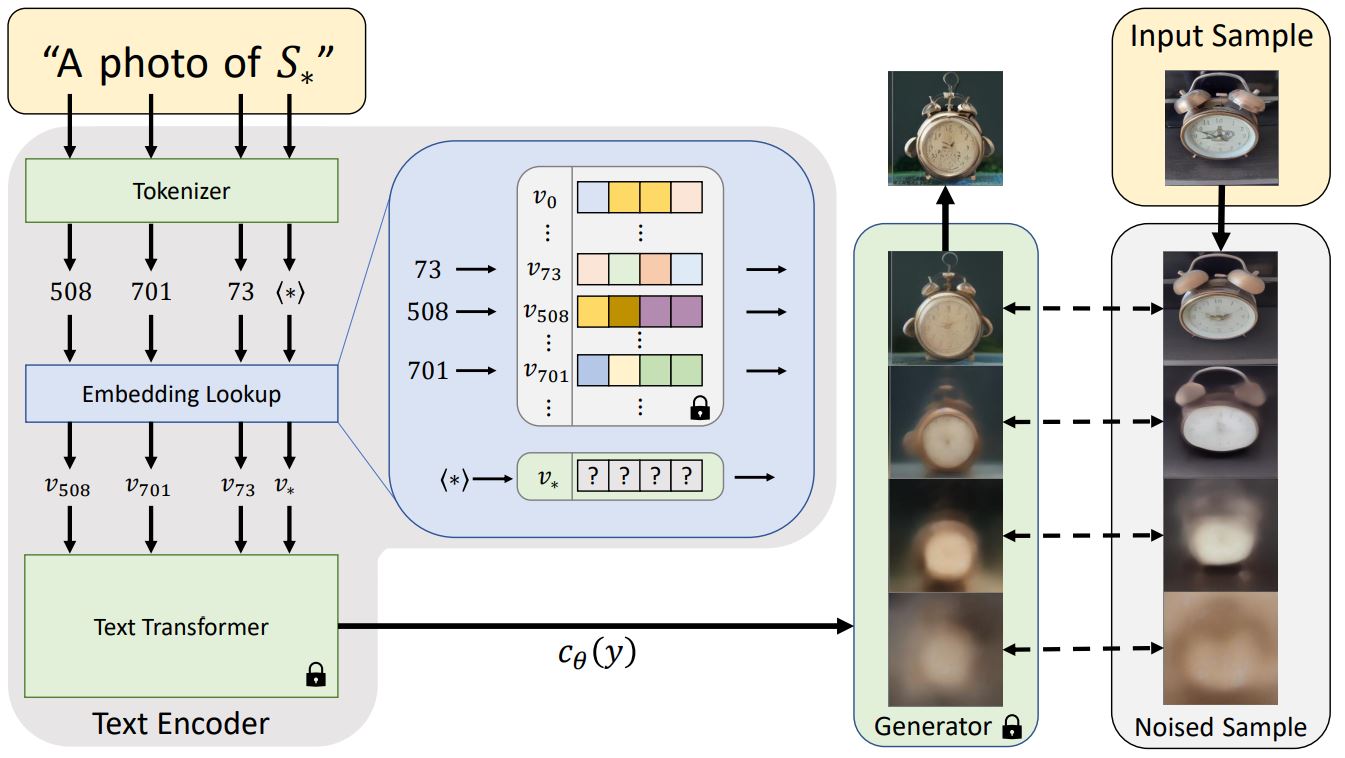

"""### Textual Inversion

|

| 437 |

-

|

| 438 |

-

OK, so we can slip in a modified token embedding and use it to generate an image. We used the token embedding for 'cat' in the above example, but what if we could instead 'learn' a new token embedding for a specific concept? This is the idea behind 'Textual Inversion', in which a few example images are used to create a new token embedding:

|

| 439 |

-

|

| 440 |

-

|

| 441 |

-

_Diagram from the [textual inversion blog post](https://textual-inversion.github.io/static/images/training/training.JPG) - note it doesn't show the positional embeddings step for simplicity_

|

| 442 |

-

|

| 443 |

-

We won't cover how this training works, but we can try loading one of these new 'concepts' from the [community-created SD concepts library](https://huggingface.co/sd-concepts-library) and see how it fits in with our example above. I'll use https://huggingface.co/sd-concepts-library/birb-style since it was the first one I made :) Download the learned_embeds.bin file from there and upload the file to wherever this notebook is before running this next cell:

|

| 444 |

-

"""

|

| 445 |

-

|

| 446 |

-

birb_embed = torch.load('learned_embeds.bin')

|

| 447 |

-

birb_embed.keys(), birb_embed['<birb-style>'].shape

|

| 448 |

-

|

| 449 |

-

"""We get a dictionary with a key (the special placeholder I used, <birb-style>) and the corresponding token embedding. As in the previous example, let's replace the 'puppy' token embedding with this and see what happens:"""

|

| 450 |

-

|

| 451 |

-

prompt = 'A mouse in the style of puppy'

|

| 452 |

-

|

| 453 |

-

# Tokenize

|

| 454 |

-

text_input = tokenizer(prompt, padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 455 |

-

input_ids = text_input.input_ids.to(torch_device)

|

| 456 |

-

|

| 457 |

-

# Get token embeddings

|

| 458 |

-

token_embeddings = token_emb_layer(input_ids)

|

| 459 |

-

|

| 460 |

-

# The new embedding - our special birb word

|

| 461 |

-

replacement_token_embedding = birb_embed['<birb-style>'].to(torch_device)

|

| 462 |

-

|

| 463 |

-

# Insert this into the token embeddings

|

| 464 |

-

token_embeddings[0, torch.where(input_ids[0]==6829)] = replacement_token_embedding.to(torch_device)

|

| 465 |

-

|

| 466 |

-

# Combine with pos embs

|

| 467 |

-

input_embeddings = token_embeddings + position_embeddings

|

| 468 |

-

|

| 469 |

-

# Feed through to get final output embs

|

| 470 |

-

modified_output_embeddings = get_output_embeds(input_embeddings)

|

| 471 |

-

|

| 472 |

-

# And generate an image with this:

|

| 473 |

-

generate_with_embs(modified_output_embeddings)

|

| 474 |

-

|

| 475 |

-

"""The token for 'puppy' was replaced with one that captures a particular style of painting, but it could just as easily represent a specific object or class of objects.

|

| 476 |

-

|

| 477 |

-

Again, there is [a nice inference notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) from Hugging Face that makes it easy to use the different concepts and properly handles using the names in prompts ("A \<cat-toy> in the style of \<birb-style>") without worrying about all this manual stuff. The goal of this notebook is to pull back the curtain a bit so you know what is going on behind the scenes :)

|

| 478 |

-

|

| 479 |

-

## Messing with Embeddings

|

| 480 |

-

|

| 481 |

-

Besides just replacing the token embedding of a single word, there are various other tricks we can try. For example, what if we create a 'chimera' by averaging the embeddings of two different prompts?

|

| 482 |

-

"""

|

| 483 |

-

|

| 484 |

-

# Embed two prompts

|

| 485 |

-

text_input1 = tokenizer(["A mouse"], padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 486 |

-

text_input2 = tokenizer(["A leopard"], padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 487 |

-

with torch.no_grad():

|

| 488 |

-

text_embeddings1 = text_encoder(text_input1.input_ids.to(torch_device))[0]

|

| 489 |

-

text_embeddings2 = text_encoder(text_input2.input_ids.to(torch_device))[0]

|

| 490 |

-

|

| 491 |

-

# Mix them together

|

| 492 |

-

mix_factor = 0.35

|

| 493 |

-

mixed_embeddings = (text_embeddings1*mix_factor + \

|

| 494 |

-

text_embeddings2*(1-mix_factor))

|

| 495 |

-

|

| 496 |

-

# Generate!

|

| 497 |

-

generate_with_embs(mixed_embeddings)

|

| 498 |

-

|

| 499 |

-

"""## The UNET and CFG

|

| 500 |

-

|

| 501 |

-

Now it's time we looked at the actual diffusion model. This is typically a Unet that takes in the noisy latents (x) and predicts the noise. We use a conditional model that also takes in the timestep (t) and our text embedding (aka encoder_hidden_states) as conditioning. Feeding all of these into the model looks like this:

|

| 502 |

-

`noise_pred = unet(latents, t, encoder_hidden_states=text_embeddings)["sample"]`

|

| 503 |

-

|

| 504 |

-

We can try it out and see what the output looks like:

|

| 505 |

-

"""

|
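The "CFG" in this section's title is classifier-free guidance, which the generation loops in this file apply as `noise_pred_uncond + guidance_scale * (noise_pred_text - noise_pred_uncond)`. The sketch below isolates that one formula in plain Python. The helper name `apply_guidance` and the example numbers are assumptions for illustration, and lists stand in for the noise-prediction tensors.

```python
def apply_guidance(noise_uncond, noise_text, guidance_scale):
    """Push the prediction away from the unconditional one, towards the text one."""
    return [
        u + guidance_scale * (t - u)
        for u, t in zip(noise_uncond, noise_text)
    ]


# Hypothetical noise predictions for two latent values.
noise_uncond = [1.0, -2.0]
noise_text = [3.0, -1.0]

for scale in (0.0, 1.0, 7.5):
    print(scale, apply_guidance(noise_uncond, noise_text, scale))
```

A scale of 0 ignores the prompt, a scale of 1 reproduces the text-conditioned prediction, and larger values extrapolate past it, which is why values such as 7.5 or 8 make the image follow the prompt more strongly.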

| 506 |

-

|

| 507 |

-

# Prep Scheduler

|

| 508 |

-

set_timesteps(scheduler, num_inference_steps)

|

| 509 |

-

|

| 510 |

-

# What is our timestep

|

| 511 |

-

t = scheduler.timesteps[0]

|

| 512 |

-

sigma = scheduler.sigmas[0]

|

| 513 |

-

|

| 514 |

-

# A noisy latent

|

| 515 |

-

latents = torch.randn(

|

| 516 |

-

(batch_size, unet.in_channels, height // 8, width // 8),

|

| 517 |

-

generator=generator,

|

| 518 |

-

)

|

| 519 |

-

latents = latents.to(torch_device)

|

| 520 |

-

latents = latents * scheduler.init_noise_sigma

|

| 521 |

-

|

| 522 |

-

# Text embedding

|

| 523 |

-

text_input = tokenizer(['A macaw'], padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 524 |

-

with torch.no_grad():

|

| 525 |

-

text_embeddings = text_encoder(text_input.input_ids.to(torch_device))[0]

|

| 526 |

-

|

| 527 |

-

# Run this through the unet to predict the noise residual

|

| 528 |

-

with torch.no_grad():

|

| 529 |

-

noise_pred = unet(latents, t, encoder_hidden_states=text_embeddings)["sample"]

|

| 530 |

-

|

| 531 |

-

latents.shape, noise_pred.shape # We get preds in the same shape as the input

|

| 532 |

-

|

| 533 |

-

"""Given a set of noisy latents, the model predicts the noise component. We can remove this noise from the noisy latents to see what the output image looks like (`latents_x0 = latents - sigma * noise_pred`). And we can add most of the noise back to this predicted output to get the (slightly less noisy hopefully) input for the next diffusion step. To visualize this let's generate another image, saving both the predicted output (x0) and the next step (xt-1) after every step:"""

|
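The two operations described above (removing the predicted noise to estimate x0, then adding most of it back for the next step) can be sketched as follows. This is a simple Euler-style update chosen for illustration, not the LMS scheduler this file actually uses, and the helper names and numbers are hypothetical.

```python
def predict_x0(latents, noise_pred, sigma):
    """Estimate the denoised latents: x0 = x - sigma * predicted_noise."""
    return [x - sigma * e for x, e in zip(latents, noise_pred)]


def renoise(x0, noise_pred, sigma_next):
    """Add the noise back at the lower level sigma_next to get the next latents."""
    return [x + sigma_next * e for x, e in zip(x0, noise_pred)]


# Hypothetical example: a known clean latent, noised at sigma = 4.
x0_true = [1.0, -2.0]
eps = [0.5, 0.25]
sigma, sigma_next = 4.0, 2.0
latents = [x + sigma * e for x, e in zip(x0_true, eps)]

x0_pred = predict_x0(latents, eps, sigma)       # perfect prediction recovers x0_true
next_latents = renoise(x0_pred, eps, sigma_next)
print(x0_pred, next_latents)
```

With a perfect noise prediction the x0 estimate equals the clean latent. In practice the prediction is imperfect, which is why the loop takes many small steps instead of jumping straight to x0.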

| 534 |

-

|

| 535 |

-

prompt = 'Oil painting of an otter in a top hat'

|

| 536 |

-

height = 512

|

| 537 |

-

width = 512

|

| 538 |

-

num_inference_steps = 50

|

| 539 |

-

guidance_scale = 8

|

| 540 |

-

generator = torch.manual_seed(32)

|

| 541 |

-

batch_size = 1

|

| 542 |

-

|

| 543 |

-

# Make a folder to store results

|

| 544 |

-

#!rm -rf steps/

|

| 545 |

-

#!mkdir -p steps/

|

| 546 |

-

|

| 547 |

-

# Prep text

|

| 548 |

-

text_input = tokenizer([prompt], padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 549 |

-

with torch.no_grad():

|

| 550 |

-

text_embeddings = text_encoder(text_input.input_ids.to(torch_device))[0]

|

| 551 |

-

max_length = text_input.input_ids.shape[-1]

|

| 552 |

-

uncond_input = tokenizer(

|

| 553 |

-

[""] * batch_size, padding="max_length", max_length=max_length, return_tensors="pt"

|

| 554 |

-

)

|

| 555 |

-

with torch.no_grad():

|

| 556 |

-

uncond_embeddings = text_encoder(uncond_input.input_ids.to(torch_device))[0]

|

| 557 |

-

text_embeddings = torch.cat([uncond_embeddings, text_embeddings])

|

| 558 |

-

|

| 559 |

# Prep Scheduler

|

| 560 |

-

set_timesteps(scheduler, num_inference_steps)

|

| 561 |

-

|

| 562 |

-

#

|

| 563 |

-

latents = torch.randn(

|

| 564 |

-

(batch_size, unet.in_channels, height // 8, width // 8),

|

| 565 |

-

generator=generator,

|

| 566 |

-

)

|

| 567 |

-

latents = latents.to(torch_device)

|

| 568 |

-

latents = latents * scheduler.init_noise_sigma

|

| 569 |

-

|

| 570 |

-

# Loop

|

| 571 |

-

for i, t in tqdm(enumerate(scheduler.timesteps), total=len(scheduler.timesteps)):

|

| 572 |

-

# expand the latents if we are doing classifier-free guidance to avoid doing two forward passes.

|

| 573 |

-

latent_model_input = torch.cat([latents] * 2)

|

| 574 |

-

sigma = scheduler.sigmas[i]

|

| 575 |

-

latent_model_input = scheduler.scale_model_input(latent_model_input, t)

|

| 576 |

-

|

| 577 |

-

# predict the noise residual

|

| 578 |

-

with torch.no_grad():

|

| 579 |

-

noise_pred = unet(latent_model_input, t, encoder_hidden_states=text_embeddings)["sample"]

|

| 580 |

-

|

| 581 |

-

# perform guidance

|

| 582 |

-

noise_pred_uncond, noise_pred_text = noise_pred.chunk(2)

|

| 583 |

-

noise_pred = noise_pred_uncond + guidance_scale * (noise_pred_text - noise_pred_uncond)

|

| 584 |

-

|

| 585 |

-

# Get the predicted x0:

|

| 586 |

-

# latents_x0 = latents - sigma * noise_pred # Calculating ourselves

|

| 587 |

-

scheduler_step = scheduler.step(noise_pred, t, latents)

|

| 588 |

-

latents_x0 = scheduler_step.pred_original_sample # Using the scheduler (Diffusers 0.4 and above)

|

| 589 |

-

|

| 590 |

-

# compute the previous noisy sample x_t -> x_t-1

|

| 591 |

-

latents = scheduler_step.prev_sample

|

| 592 |

-

|

| 593 |

-

# To PIL Images

|

| 594 |

-

im_t0 = latents_to_pil(latents_x0)[0]

|

| 595 |

-

im_next = latents_to_pil(latents)[0]

|

| 596 |

-

|

| 597 |

-

# Combine the two images and save for later viewing

|

| 598 |

-

im = Image.new('RGB', (1024, 512))

|

| 599 |

-

im.paste(im_next, (0, 0))

|

| 600 |

-

im.paste(im_t0, (512, 0))

|

| 601 |

-

im.save(f'steps/{i:04}.jpeg')

|

| 602 |

-

|

| 603 |

-

# Make and show the progress video (change width to 1024 for full res)

|

| 604 |

-

#!ffmpeg -v 1 -y -f image2 -framerate 12 -i steps/%04d.jpeg -c:v libx264 -preset slow -qp 18 -pix_fmt yuv420p out.mp4

|

| 605 |

-

#mp4 = open('out.mp4','rb').read()

|

| 606 |

-

#data_url = "data:video/mp4;base64," + b64encode(mp4).decode()

|

| 607 |

-

#HTML("""

|

| 608 |

-

#<video width=600 controls>

|

| 609 |

-

# <source src="%s" type="video/mp4">

|

| 610 |

-

#</video>

|

| 611 |

-

#""" % data_url)

|

| 612 |

|

| 613 |

-

|

| 614 |

|

| 615 |

|

| 616 |

|

| 617 |

-

#

|

| 618 |

|

| 619 |

|

| 620 |

|

| 621 |

|

| 622 |

-

|

| 623 |

-

|

| 624 |

-

|

| 625 |

-

return error

|

| 626 |

|

| 627 |

-

|

| 628 |

-

|

| 629 |

-

|

| 630 |

|

| 631 |

-

|

| 632 |

-

|

| 633 |

-

The images are assumed to be in RGB format.

|

| 634 |

|

| 635 |

-

|

| 636 |

-

|

| 637 |

-

"""

|

| 638 |

-

# Define the target RGB values for the color orange

|

| 639 |

-

target_orange = torch.tensor([255/255, 200/255, 0/255]).view(1, 3, 1, 1).to(images.device) # (R, G, B)

|

| 640 |

|

| 641 |

-

#

|

| 642 |

-

|

| 643 |

|

| 644 |

-

# Calculate the mean absolute error between the RGB values and the target orange values

|

| 645 |

-

error = torch.abs(images - target_orange).mean()

|

| 646 |

|

| 647 |

return error

|
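The removed loss above is a mean absolute error between every pixel and a fixed orange target. A minimal, dependency-free sketch of the same arithmetic follows; it assumes a single channel-first image stored as nested lists with values in 0..1, whereas the diff operates on a batched `torch` tensor. The names `orange_loss`, `solid`, and `TARGET_ORANGE` are hypothetical and exist only for this illustration.

```python
# Target colour taken from the diff above, scaled to the 0..1 range: (R, G, B).
TARGET_ORANGE = (255 / 255, 200 / 255, 0 / 255)


def orange_loss(image):
    """Mean absolute error between every pixel of `image` and TARGET_ORANGE.

    `image` is assumed to be a channel-first nested list shaped [3][H][W].
    """
    total = 0.0
    count = 0
    # Each channel is compared against its own target value, which mirrors
    # broadcasting a (1, 3, 1, 1) target over a (B, 3, H, W) tensor.
    for channel, target in zip(image, TARGET_ORANGE):
        for row in channel:
            for value in row:
                total += abs(value - target)
                count += 1
    return total / count


def solid(rgb, height=2, width=2):
    """Build a hypothetical solid-colour test image shaped [3][height][width]."""
    return [[[c] * width for _ in range(height)] for c in rgb]


pure_orange = solid(TARGET_ORANGE)
pure_blue = solid((0.0, 0.0, 1.0))
print(orange_loss(pure_orange), orange_loss(pure_blue))
```

An image that already matches the target scores zero, and the score grows as pixels drift away from orange, which is why the sampling loop can use its gradient to nudge the latents.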

| 648 |

|

| 649 |

-

|

| 650 |

-

|

| 651 |

-

prompt = 'A campfire (oil on canvas)' #@param

|

| 652 |

-

height = 512 # default height of Stable Diffusion

|

| 653 |

-

width = 512 # default width of Stable Diffusion

|

| 654 |

-

num_inference_steps = 50 #@param # Number of denoising steps

|

| 655 |

-

guidance_scale = 8 #@param # Scale for classifier-free guidance

|

| 656 |

-

generator = torch.manual_seed(32) # Seed generator to create the initial latent noise

|

| 657 |

-

batch_size = 1

|

| 658 |

-

orange_loss_scale = 200 #@param

|

| 659 |

-

|

| 660 |

-

# Prep text

|

| 661 |

-

text_input = tokenizer([prompt], padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 662 |

-

with torch.no_grad():

|

| 663 |

-

text_embeddings = text_encoder(text_input.input_ids.to(torch_device))[0]

|

| 664 |

-

|

| 665 |

-

# And the uncond. input as before:

|

| 666 |

-

max_length = text_input.input_ids.shape[-1]

|

| 667 |

-

uncond_input = tokenizer(

|

| 668 |

-

[""] * batch_size, padding="max_length", max_length=max_length, return_tensors="pt"

|

| 669 |

-

)

|

| 670 |

-

with torch.no_grad():

|

| 671 |

-

uncond_embeddings = text_encoder(uncond_input.input_ids.to(torch_device))[0]

|

| 672 |

-

text_embeddings = torch.cat([uncond_embeddings, text_embeddings])

|

| 673 |

-

|

| 674 |

-

# Prep Scheduler

|

| 675 |

-

set_timesteps(scheduler, num_inference_steps)

|

| 676 |

-

|

| 677 |

-

# Prep latents

|

| 678 |

-

latents = torch.randn(

|

| 679 |

-

(batch_size, unet.in_channels, height // 8, width // 8),

|

| 680 |

-

generator=generator,

|

| 681 |

-

)

|

| 682 |

-

latents = latents.to(torch_device)

|

| 683 |

-

latents = latents * scheduler.init_noise_sigma

|

| 684 |

-

|

| 685 |

-

# Loop

|

| 686 |

-

for i, t in tqdm(enumerate(scheduler.timesteps), total=len(scheduler.timesteps)):

|

| 687 |

-

# expand the latents if we are doing classifier-free guidance to avoid doing two forward passes.

|

| 688 |

-

latent_model_input = torch.cat([latents] * 2)

|

| 689 |

-

sigma = scheduler.sigmas[i]

|

| 690 |

-

latent_model_input = scheduler.scale_model_input(latent_model_input, t)

|

| 691 |

-

|

| 692 |

-

# predict the noise residual

|

| 693 |

-

with torch.no_grad():

|

| 694 |

-

noise_pred = unet(latent_model_input, t, encoder_hidden_states=text_embeddings)["sample"]

|

| 695 |

-

|

| 696 |

-

# perform CFG

|

| 697 |

-

noise_pred_uncond, noise_pred_text = noise_pred.chunk(2)

|

| 698 |

-

noise_pred = noise_pred_uncond + guidance_scale * (noise_pred_text - noise_pred_uncond)

|

| 699 |

-

|

| 700 |

-

#### ADDITIONAL GUIDANCE ###

|

| 701 |

-

if i%5 == 0:

|

| 702 |

-

# Requires grad on the latents

|

| 703 |

-

latents = latents.detach().requires_grad_()

|

| 704 |

-

|

| 705 |

-

# Get the predicted x0:

|

| 706 |

-

latents_x0 = latents - sigma * noise_pred

|

| 707 |

-

# latents_x0 = scheduler.step(noise_pred, t, latents).pred_original_sample

|

| 708 |

-

|

| 709 |

-

# Decode to image space

|

| 710 |

-

denoised_images = vae.decode((1 / 0.18215) * latents_x0).sample / 2 + 0.5 # range (0, 1)

|

| 711 |

-

|

| 712 |

-

# Calculate loss

|

| 713 |

-

loss = blue_loss(denoised_images) * orange_loss_scale

|

| 714 |

-

|

| 715 |

-

# Occasionally print it out

|

| 716 |

-

if i%10==0:

|

| 717 |

-

print(i, 'loss:', loss.item())

|

| 718 |

-

|

| 719 |

-

# Get gradient

|

| 720 |

-

cond_grad = torch.autograd.grad(loss, latents)[0]

|

| 721 |

-

|

| 722 |

-

# Modify the latents based on this gradient

|

| 723 |

-

latents = latents.detach() - cond_grad * sigma**2

|

| 724 |

-

|

| 725 |

-

# Now step with scheduler

|

| 726 |

-

latents = scheduler.step(noise_pred, t, latents).prev_sample

|
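The classifier-free guidance line in the loop above combines the unconditional and text-conditioned noise predictions into one. The sketch below restates that formula on plain Python lists so the effect of `guidance_scale` is easy to check by hand; the function name `classifier_free_guidance` and the flat-list inputs are assumptions made for illustration, not part of the app.

```python
def classifier_free_guidance(noise_uncond, noise_text, guidance_scale):
    """Return uncond + scale * (text - uncond), element by element."""
    return [
        u + guidance_scale * (t - u)
        for u, t in zip(noise_uncond, noise_text)
    ]


uncond = [0.0, 1.0]
text = [1.0, 1.0]

# A scale of 0 keeps the unconditional prediction, a scale of 1 keeps the
# text-conditioned one, and larger scales extrapolate past it.
print(classifier_free_guidance(uncond, text, 0))
print(classifier_free_guidance(uncond, text, 1))
print(classifier_free_guidance(uncond, text, 8))
```

Where the two predictions agree (the second element here), the scale has no effect; it only amplifies the direction in which the prompt changes the prediction.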

| 727 |

-

|

| 728 |

|

| 729 |

-

|

| 730 |

-

|

| 731 |

-

|

| 732 |

-

|

| 733 |

-

|

| 734 |

-

text_input = tokenizer(prompt, padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 735 |

-

text_input

|

| 736 |

-

input_ids = text_input.input_ids.to(torch_device)

|

| 737 |

-

|

| 738 |

-

# Get token embeddings

|

| 739 |

-

token_embeddings = token_emb_layer(input_ids)

|

| 740 |

-

|

| 741 |

-

# The new embedding - our special birb word

|

| 742 |

-

replacement_token_embedding = birb_embed['<birb-style>'].to(torch_device)

|

| 743 |

-

|

| 744 |

-

# Insert this into the token embeddings

|

| 745 |

-

token_embeddings[0, torch.where(input_ids[0]==6829)] = replacement_token_embedding.to(torch_device)

|

| 746 |

-

|

| 747 |

-

# Combine with pos embs

|

| 748 |

-

input_embeddings = token_embeddings + position_embeddings

|

| 749 |

-

|

| 750 |

-

# Feed through to get final output embs

|

| 751 |

-

modified_output_embeddings = get_output_embeds(input_embeddings)

|

| 752 |

-

|

| 753 |

-

# And generate an image with this:

|

| 754 |

-

generate_with_embs(modified_output_embeddings)

|

| 755 |

-

|

| 756 |

-

text_input, input_ids,token_embeddings

|

| 757 |

-

|

| 758 |

-

def generate_loss(modified_output_embeddings, seed, max_length):

|

| 759 |

-

# prompt = 'A campfire (oil on canvas)' #@param

|

| 760 |

-

height = 512 # default height of Stable Diffusion

|

| 761 |

-

width = 512 # default width of Stable Diffusion

|

| 762 |

-

num_inference_steps = 50 #@param # Number of denoising steps

|

| 763 |

-

guidance_scale = 8 #@param # Scale for classifier-free guidance

|

| 764 |

-

generator = torch.manual_seed(32) # Seed generator to create the initial latent noise

|

| 765 |

batch_size = 1

|

| 766 |

-

|

| 767 |

-

|

| 768 |

-

# Prep text

|

| 769 |

-

# text_input = tokenizer([""] * batch_size, padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 770 |

-

|

| 771 |

-

#input_ids = text_input.input_ids.to(torch_device)

|

| 772 |

-

# Get token embeddings

|

| 773 |

-

#token_embeddings = token_emb_layer(input_ids)

|

| 774 |

-

|

| 775 |

-

# The new embedding - our special birb word

|

| 776 |

-

#replacement_token_embedding = birb_embed['<birb-style>'].to(torch_device)

|

| 777 |

-

# Insert this into the token embeddings

|

| 778 |

-

#indices = torch.where(input_ids[0] == 6829)[0]

|

| 779 |

-

#token_embeddings[0, indices] = replacement_token_embedding.expand_as(token_embeddings[0, indices])

|

| 780 |

-

|

| 781 |

-

# Combine with pos embs

|

| 782 |

-

#input_embeddings = token_embeddings + position_embeddings

|

| 783 |

-

|

| 784 |

-

# Pass the modified embeddings to the text encoder

|

| 785 |

-

#with torch.no_grad():

|

| 786 |

-

# text_embeddings = text_encoder(inputs_embeds=input_embeddings)[0]

|

| 787 |

|

| 788 |

-

# And the uncond. input as before:

|

| 789 |

-

# max_length = input_ids.shape[-1]

|

| 790 |

uncond_input = tokenizer(

|

| 791 |

[""] * batch_size, padding="max_length", max_length=max_length, return_tensors="pt"

|

| 792 |

)

|

| 793 |

with torch.no_grad():

|

| 794 |

uncond_embeddings = text_encoder(uncond_input.input_ids.to(torch_device))[0]

|

| 795 |

-

|

| 796 |

-

if uncond_embeddings.shape != modified_output_embeddings.shape:

|

| 797 |

-

raise ValueError(f"Shape mismatch: uncond_embeddings {uncond_embeddings.shape} vs modified_output_embeddings {modified_output_embeddings.shape}")

|

| 798 |

-

|

| 799 |

-

text_embeddings = torch.cat([uncond_embeddings, modified_output_embeddings])

|

| 800 |

|

| 801 |

# Prep Scheduler

|

| 802 |

-

set_timesteps(scheduler, num_inference_steps)

|

| 803 |

|

| 804 |

# Prep latents

|

| 805 |

latents = torch.randn(

|

| 806 |

-

|

| 807 |

-

|

| 808 |

)

|

| 809 |

latents = latents.to(torch_device)

|

| 810 |

latents = latents * scheduler.init_noise_sigma

|

| 811 |

|

| 812 |

# Loop

|

| 813 |

for i, t in tqdm(enumerate(scheduler.timesteps), total=len(scheduler.timesteps)):

|

| 814 |

# expand the latents if we are doing classifier-free guidance to avoid doing two forward passes.

|

|

@@ -824,143 +242,67 @@ def generate_loss(modified_output_embeddings, seed, max_length):

|

|

| 824 |

noise_pred_uncond, noise_pred_text = noise_pred.chunk(2)

|

| 825 |

noise_pred = noise_pred_uncond + guidance_scale * (noise_pred_text - noise_pred_uncond)

|

| 826 |

|

| 827 |

-

|

| 828 |

-

if i

|

| 829 |

# Requires grad on the latents

|

| 830 |

latents = latents.detach().requires_grad_()

|

| 831 |

|

| 832 |

# Get the predicted x0:

|

| 833 |

-

|

| 834 |

-

|

| 835 |

|

| 836 |

# Decode to image space

|

| 837 |

denoised_images = vae.decode((1 / 0.18215) * latents_x0).sample / 2 + 0.5 # range (0, 1)

|

| 838 |

|

| 839 |

# Calculate loss

|

| 840 |

-

loss =

|

| 841 |

|

| 842 |

# Occasionally print it out

|

| 843 |

-

if i

|

| 844 |

-

|

| 845 |

|

| 846 |

# Get gradient

|

| 847 |

cond_grad = torch.autograd.grad(loss, latents)[0]

|

| 848 |

|

| 849 |

# Modify the latents based on this gradient

|

| 850 |

-

latents = latents.detach() - cond_grad * sigma

|

| 851 |

|

| 852 |

# Now step with scheduler

|

| 853 |

latents = scheduler.step(noise_pred, t, latents).prev_sample

|

| 854 |

-

|

| 855 |

-

|

| 856 |

-

image = latents_to_pil(latents)[0]

|

| 857 |

-

image.show()

|

| 858 |

-

return image

|

| 859 |

-

|

| 860 |

-

def generate_loss_style(prompt, style_embed, style_seed):

|

| 861 |

-

# Tokenize

|

| 862 |

-

text_input = tokenizer(prompt, padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 863 |

-

input_ids = text_input.input_ids.to(torch_device)

|

| 864 |

-

|

| 865 |

-

# Get token embeddings

|

| 866 |

-

token_embeddings = token_emb_layer(input_ids)

|

| 867 |

-

if isinstance(style_embed, dict):

|

| 868 |

-

style_embed = style_embed['<gartic-phone>']

|

| 869 |

-

|

| 870 |

-

# The new embedding - our special birb word

|

| 871 |

-

replacement_token_embedding = style_embed.to(torch_device)

|

| 872 |

-

# Assuming token_embeddings has shape [batch_size, seq_length, embedding_dim]

|

| 873 |

-

replacement_token_embedding = replacement_token_embedding[:768] # Adjust the size

|

| 874 |

-

replacement_token_embedding = replacement_token_embedding.unsqueeze(0) # Make it [1, 768] if necessary

|

| 875 |

-

indices = torch.where(input_ids[0] == 6829)[0] # Extract indices where the condition is True

|

| 876 |

-

print(f"indices: {indices}") # Debug print

|

| 877 |

-

for index in indices:

|

| 878 |

-

print(f"index: {index}") # Debug print

|

| 879 |

-

token_embeddings[0, index] = replacement_token_embedding.to(torch_device) # Update each index

|

| 880 |

-

|

| 881 |

-

# Insert this into the token embeddings

|

| 882 |

-

# token_embeddings[0, torch.where(input_ids[0]==6829)] = replacement_token_embedding.to(torch_device)

|

| 883 |

-

|

| 884 |

-

# Combine with pos embs

|

| 885 |

-

input_embeddings = token_embeddings + position_embeddings

|

| 886 |

-

|

| 887 |

-

# Feed through to get final output embs

|

| 888 |

-

modified_output_embeddings = get_output_embeds(input_embeddings)

|

| 889 |

-

|

| 890 |

-

# And generate an image with this:

|

| 891 |

-

max_length = text_input.input_ids.shape[-1]

|

| 892 |

-

return generate_loss(modified_output_embeddings, style_seed,max_length)

|
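The removed function above swaps the embedding of one placeholder token (id `6829` in the diff) for a learned style embedding before the text encoder runs. A minimal sketch of that replacement step on plain lists is shown below; `replace_token_embedding` and the toy two-dimensional embeddings are hypothetical, and the real code works on `torch` tensors with 768-dimensional rows.

```python
def replace_token_embedding(input_ids, token_embeddings, placeholder_id, replacement):
    """Return a copy of `token_embeddings` with every placeholder row replaced.

    Also returns the positions that were replaced, so a caller can confirm
    that the placeholder actually occurred in the prompt.
    """
    indices = [i for i, tok in enumerate(input_ids) if tok == placeholder_id]
    updated = [list(row) for row in token_embeddings]  # copy, leave input untouched
    for i in indices:
        updated[i] = list(replacement)
    return updated, indices


# Toy prompt: start token, one ordinary token, the placeholder, end token.
ids = [49406, 320, 6829, 49407]
embeddings = [[0.0, 0.0], [0.1, 0.2], [0.3, 0.4], [0.5, 0.6]]
style = [9.0, 9.0]

updated, hits = replace_token_embedding(ids, embeddings, 6829, style)
print(hits, updated[2])
```

Returning the matched positions makes the silent failure mode visible: if the prompt does not contain the placeholder token, `hits` is empty and the style embedding is never applied.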

| 893 |

-

|

| 894 |

-

def generate_embed_style(prompt, learned_style, seed):

|

| 895 |

-

# prompt = 'A campfire (oil on canvas)' #@param

|

| 896 |

-

height = 512 # default height of Stable Diffusion

|

| 897 |

-

width = 512 # default width of Stable Diffusion

|

| 898 |

-

num_inference_steps = 50 #@param # Number of denoising steps

|

| 899 |

-

guidance_scale = 8 #@param # Scale for classifier-free guidance

|

| 900 |

-

generator = torch.manual_seed(32) # Seed generator to create the initial latent noise

|

| 901 |

-

batch_size = 1

|

| 902 |

-

blue_loss_scale = 200 #@param

|

| 903 |

-

if isinstance(learned_style, dict):

|

| 904 |

-

learned_style = learned_style['<gartic-phone>']

|

| 905 |

-

|

| 906 |

-

# Prep text

|

| 907 |

-

text_input = tokenizer([prompt], padding="max_length", max_length=tokenizer.model_max_length, truncation=True, return_tensors="pt")

|

| 908 |

-

|

| 909 |

-

input_ids = text_input.input_ids.to(torch_device)

|

| 910 |

-

# Get token embeddings

|

| 911 |

-

token_embeddings = text_encoder.get_input_embeddings()(input_ids)

|

| 912 |

-

|

| 913 |

-

# The new embedding - our special birb word

|

| 914 |

-

replacement_token_embedding = learned_style.to(torch_device)

|

| 915 |

-

replacement_token_embedding = replacement_token_embedding[:768] # Adjust the size

|

| 916 |

-

replacement_token_embedding = replacement_token_embedding.unsqueeze(0) # Make it [1, 768] if necessary

|

| 917 |

-

# Insert this into the token embeddings

|

| 918 |

-

indices = torch.where(input_ids[0] == 6829)[0]

|

| 919 |

-

for index in indices:

|

| 920 |

-

token_embeddings[0, index] = replacement_token_embedding.to(torch_device)

|

| 921 |

-

# Combine with pos embs

|

| 922 |

-

position_ids = torch.arange(token_embeddings.shape[1], dtype=torch.long, device=torch_device)

|

| 923 |

-

position_ids = position_ids.unsqueeze(0).expand_as(input_ids)

|

| 924 |

-

position_ids = text_encoder.text_model.embeddings.position_ids[:, :77]

|

| 925 |

-

position_embeddings = pos_emb_layer(position_ids)

|

| 926 |

-

#position_embeddings = text_encoder.get_position_embeddings()(position_ids)

|

| 927 |

-

input_embeddings = token_embeddings + position_embeddings

|

| 928 |

-

# Feed through to get final output embs

|

| 929 |

-

modified_output_embeddings = get_output_embeds(input_embeddings)

|

| 930 |

-

# And generate an image with this:

|

| 931 |

-

max_length = text_input.input_ids.shape[-1]

|

| 932 |

-

emb_seed = generate_with_embs_seed(modified_output_embeddings, seed, max_length)

|

| 933 |

-

#generate_loss_details = generate_loss(modified_output_embeddings, seed, max_length)

|

| 934 |

-

return emb_seed

|

| 935 |

-

# And generate an image with this:

|

| 936 |

-

|

| 937 |

|

| 938 |

|

| 939 |

def generate_image_from_prompt(text_in, style_in):

|

| 940 |

|

| 941 |

-

|

| 942 |

-

|

| 943 |

-

dict_styles = {'<gartic-phone>':'learned_embeds_gartic-phone.bin',

|

| 944 |

-

'<hawaiian shirt>':'learned_embeds_hawaiian-shirt.bin',

|

| 945 |

-

'<gp>': 'learned_embeds_phone01.bin',

|

| 946 |

-

'<style-spdmn>':'learned_embeds_style-spdmn.bin',

|

| 947 |

-

'<yvmqznrm>': 'learned_embedssd_yvmqznrm.bin'}

|

| 948 |

|

| 949 |

-

|

| 950 |

-

|

| 951 |

-

|

| 952 |

-

|

| 953 |

-

|

| 954 |

-

|

| 955 |

-

|

| 956 |

-

|

| 957 |

-

|

| 958 |

-

|

| 959 |

|

| 960 |

-

|

| 961 |

|

| 962 |

-

|

| 963 |

|

| 964 |

|

| 965 |

# Define Interface

|

| 966 |

title = 'Stable Diffusion Art Generator'

|

|

@@ -989,4 +331,3 @@ demo = gr.Interface(generate_image_from_prompt,

|

|

| 989 |

)

|

| 990 |

|

| 991 |

demo.launch(debug=True)

|

| 992 |

-

|

|

|

|

| 1 |

import gradio as gr

|

| 2 |

from base64 import b64encode

|

| 3 |

import numpy

|

| 4 |

import torch

|

| 5 |

from diffusers import AutoencoderKL, LMSDiscreteScheduler, UNet2DConditionModel

|

| 6 |

from PIL import Image

|

| 7 |

from torch import autocast

|

| 8 |

from torchvision import transforms as tfms

|

|

|

|

| 11 |

import torchvision.transforms as T

|

| 12 |

|

| 13 |

torch.manual_seed(1)

|

| 14 |

logging.set_verbosity_error()

|

| 15 |

torch_device = "cpu"

|

| 16 |

|

| 17 |

vae = AutoencoderKL.from_pretrained("CompVis/stable-diffusion-v1-4", subfolder="vae")

|

| 18 |

|

| 19 |

# Load the tokenizer and text encoder to tokenize and encode the text.

|

|

|

|

| 26 |

# The noise scheduler

|

| 27 |

scheduler = LMSDiscreteScheduler(beta_start=0.00085, beta_end=0.012, beta_schedule="scaled_linear", num_train_timesteps=1000)

|

| 28 |

|

| 29 |

vae = vae.to(torch_device)

|

| 30 |

text_encoder = text_encoder.to(torch_device)

|

| 31 |

unet = unet.to(torch_device);

|

| 32 |

|

| 33 |

+

token_emb_layer = text_encoder.text_model.embeddings.token_embedding

|

| 34 |

+

pos_emb_layer = text_encoder.text_model.embeddings.position_embedding

|

| 35 |

+

position_ids = text_encoder.text_model.embeddings.position_ids[:, :77]

|

| 36 |

+

position_embeddings = pos_emb_layer(position_ids)

|

| 37 |

|

| 38 |

def pil_to_latent(input_im):

|

| 39 |

# Single image -> single latent in a batch (so size 1, 4, 64, 64)

|

|

|

|

| 52 |

pil_images = [Image.fromarray(image) for image in images]

|

| 53 |

return pil_images

|

| 54 |

|

| 55 |

def get_output_embeds(input_embeddings):

|

| 56 |

# CLIP's text model uses causal mask, so we prepare it here:

|

| 57 |

bsz, seq_len = input_embeddings.shape[:2]

|

|

|

|

| 77 |

# And now they're ready!

|

| 78 |

return output

|

| 79 |

|

| 80 |

+

def generate_with_embs(text_embeddings, seed, max_length):

|

| 81 |

height = 512 # default height of Stable Diffusion

|

| 82 |

width = 512 # default width of Stable Diffusion

|

| 83 |

+

num_inference_steps = 10 # Number of denoising steps

|

| 84 |

guidance_scale = 7.5 # Scale for classifier-free guidance

|

| 85 |

+

generator = torch.manual_seed(seed) # Seed generator to create the initial latent noise

|

| 86 |

batch_size = 1

|

| 87 |

|

| 88 |

+

# max_length = text_input.input_ids.shape[-1]

|

| 89 |

uncond_input = tokenizer(

|

| 90 |

[""] * batch_size, padding="max_length", max_length=max_length, return_tensors="pt"

|

| 91 |

)

|

|

|

|

| 124 |

|

| 125 |

return latents_to_pil(latents)[0]

|

| 126 |

|
