diff --git a/.gitattributes b/.gitattributes

new file mode 100644

index 0000000000000000000000000000000000000000..ec4a626fbb7799f6a25b45fb86344b2bf7b37e64

--- /dev/null

+++ b/.gitattributes

@@ -0,0 +1 @@

+*.pth filter=lfs diff=lfs merge=lfs -text

diff --git a/.gitignore b/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..42783cfc4c6e2cb42013158f42feb68ac3331ea2

--- /dev/null

+++ b/.gitignore

@@ -0,0 +1,141 @@

+# ignored folders

+venv/*

+datasets/*

+experiments/*

+results/*

+tb_logger/*

+wandb/*

+tmp/*

+

+

+version.py

+

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+

+# C extensions

+*.so

+

+# Distribution / packaging

+.Python

+build/

+develop-eggs/

+dist/

+downloads/

+eggs/

+.eggs/

+lib/

+lib64/

+parts/

+sdist/

+var/

+wheels/

+pip-wheel-metadata/

+share/python-wheels/

+*.egg-info/

+.installed.cfg

+*.egg

+MANIFEST

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.nox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+*.py,cover

+.hypothesis/

+.pytest_cache/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+db.sqlite3

+db.sqlite3-journal

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/_build/

+

+# PyBuilder

+target/

+

+# Jupyter Notebook

+.ipynb_checkpoints

+

+# IPython

+profile_default/

+ipython_config.py

+

+# pyenv

+.python-version

+

+# pipenv

+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

+# However, in case of collaboration, if having platform-specific dependencies or dependencies

+# having no cross-platform support, pipenv may install dependencies that don't work, or not

+# install all needed dependencies.

+#Pipfile.lock

+

+# PEP 582; used by e.g. github.com/David-OConnor/pyflow

+__pypackages__/

+

+# Celery stuff

+celerybeat-schedule

+celerybeat.pid

+

+# SageMath parsed files

+*.sage.py

+

+# Environments

+.env

+.venv

+env/

+venv/

+ENV/

+env.bak/

+venv.bak/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+.dmypy.json

+dmypy.json

+

+# Pyre type checker

+.pyre/

diff --git a/.pre-commit-config.yaml b/.pre-commit-config.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..d221d29fbaac74bef1c0cd910ce8d8b6526181b8

--- /dev/null

+++ b/.pre-commit-config.yaml

@@ -0,0 +1,46 @@

+repos:

+  # flake8

+  - repo: https://github.com/PyCQA/flake8

+    rev: 3.8.3

+    hooks:

+      - id: flake8

+        args: ["--config=setup.cfg", "--ignore=W504, W503"]

+

+  # modify known_third_party

+  - repo: https://github.com/asottile/seed-isort-config

+    rev: v2.2.0

+    hooks:

+      - id: seed-isort-config

+

+  # isort

+  - repo: https://github.com/timothycrosley/isort

+    rev: 5.2.2

+    hooks:

+      - id: isort

+

+  # yapf

+  - repo: https://github.com/pre-commit/mirrors-yapf

+    rev: v0.30.0

+    hooks:

+      - id: yapf

+

+  # codespell

+  - repo: https://github.com/codespell-project/codespell

+    rev: v2.1.0

+    hooks:

+      - id: codespell

+

+  # pre-commit-hooks

+  - repo: https://github.com/pre-commit/pre-commit-hooks

+    rev: v3.2.0

+    hooks:

+      - id: trailing-whitespace  # Trim trailing whitespace

+      - id: check-yaml  # Attempt to load all yaml files to verify syntax

+      - id: check-merge-conflict  # Check for files that contain merge conflict strings

+      - id: double-quote-string-fixer  # Replace double quoted strings with single quoted strings

+      - id: end-of-file-fixer  # Make sure files end in a newline and only a newline

+      - id: requirements-txt-fixer  # Sort entries in requirements.txt and remove incorrect entry for pkg-resources==0.0.0

+      - id: fix-encoding-pragma  # Remove the coding pragma: # -*- coding: utf-8 -*-

+        args: ["--remove"]

+      - id: mixed-line-ending  # Replace or check mixed line ending

+        args: ["--fix=lf"]

diff --git a/.vscode/settings.json b/.vscode/settings.json

new file mode 100644

index 0000000000000000000000000000000000000000..b12635534688a8a8c69033d81fad96ef734ea6bb

--- /dev/null

+++ b/.vscode/settings.json

@@ -0,0 +1,19 @@

+{

+ "files.trimTrailingWhitespace": true,

+ "editor.wordWrap": "on",

+ "editor.rulers": [

+ 80,

+ 120

+ ],

+ "editor.renderWhitespace": "all",

+ "editor.renderControlCharacters": true,

+ "python.formatting.provider": "yapf",

+ "python.formatting.yapfArgs": [

+ "--style",

+ "{BASED_ON_STYLE = pep8, BLANK_LINE_BEFORE_NESTED_CLASS_OR_DEF = true, SPLIT_BEFORE_EXPRESSION_AFTER_OPENING_PAREN = true, COLUMN_LIMIT = 120}"

+ ],

+ "python.linting.flake8Enabled": true,

+ "python.linting.flake8Args": [

+ "--max-line-length=120"

+ ],

+}

diff --git a/LICENSE b/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..552a1eeaf01f4e7077013ed3496600c608f35202

--- /dev/null

+++ b/LICENSE

@@ -0,0 +1,29 @@

+BSD 3-Clause License

+

+Copyright (c) 2021, Xintao Wang

+All rights reserved.

+

+Redistribution and use in source and binary forms, with or without

+modification, are permitted provided that the following conditions are met:

+

+1. Redistributions of source code must retain the above copyright notice, this

+ list of conditions and the following disclaimer.

+

+2. Redistributions in binary form must reproduce the above copyright notice,

+ this list of conditions and the following disclaimer in the documentation

+ and/or other materials provided with the distribution.

+

+3. Neither the name of the copyright holder nor the names of its

+ contributors may be used to endorse or promote products derived from

+ this software without specific prior written permission.

+

+THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS"

+AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE

+IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE

+DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT HOLDER OR CONTRIBUTORS BE LIABLE

+FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL

+DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR

+SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER

+CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY,

+OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE

+OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

diff --git a/MANIFEST.in b/MANIFEST.in

new file mode 100644

index 0000000000000000000000000000000000000000..b87c827c894c82b5530c1267ea1d57e86c5f515b

--- /dev/null

+++ b/MANIFEST.in

@@ -0,0 +1,8 @@

+include assets/*

+include inputs/*

+include scripts/*.py

+include inference_realesrgan.py

+include VERSION

+include LICENSE

+include requirements.txt

+include weights/README.md

diff --git a/README.md b/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..f2fa0c1aeba686bded41c11e006bc5960d16e176

--- /dev/null

+++ b/README.md

@@ -0,0 +1,272 @@

+

+

+

+##

+

+

+

+👀[**Demos**](#-demos-videos) **|** 🚩[**Updates**](#-updates) **|** ⚡[**Usage**](#-quick-inference) **|** 🏰[**Model Zoo**](docs/model_zoo.md) **|** 🔧[Install](#-dependencies-and-installation) **|** 💻[Train](docs/Training.md) **|** ❓[FAQ](docs/FAQ.md) **|** 🎨[Contribution](docs/CONTRIBUTING.md)

+

+[](https://github.com/xinntao/Real-ESRGAN/releases)

+[](https://pypi.org/project/realesrgan/)

+[](https://github.com/xinntao/Real-ESRGAN/issues)

+[](https://github.com/xinntao/Real-ESRGAN/issues)

+[](https://github.com/xinntao/Real-ESRGAN/blob/master/LICENSE)

+[](https://github.com/xinntao/Real-ESRGAN/blob/master/.github/workflows/pylint.yml)

+[](https://github.com/xinntao/Real-ESRGAN/blob/master/.github/workflows/publish-pip.yml)

+

+

+

+🔥 **AnimeVideo-v3 model (small model for anime videos)**. Please see [[*anime video models*](docs/anime_video_model.md)] and [[*comparisons*](docs/anime_comparisons.md)].

+🔥 **RealESRGAN_x4plus_anime_6B** for anime images **(anime illustration model)**. Please see [[*anime_model*](docs/anime_model.md)].

+

+

+1. :boom: **Update** online Replicate demo: [](https://replicate.com/xinntao/realesrgan)

+1. Online Colab demo for Real-ESRGAN: [](https://colab.research.google.com/drive/1k2Zod6kSHEvraybHl50Lys0LerhyTMCo?usp=sharing) **|** Online Colab demo for Real-ESRGAN (**anime videos**): [](https://colab.research.google.com/drive/1yNl9ORUxxlL4N0keJa2SEPB61imPQd1B?usp=sharing)

+1. Portable [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [MacOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip) **executable files for Intel/AMD/Nvidia GPU**. You can find more information [here](#portable-executable-files-ncnn). The ncnn implementation is in [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan)

+

+

+Real-ESRGAN aims to develop **Practical Algorithms for General Image/Video Restoration**.

+We extend the powerful ESRGAN to a practical restoration application (namely, Real-ESRGAN), which is trained with pure synthetic data.

+

+🌌 Thanks for your valuable feedback and suggestions. All feedback is collected in [feedback.md](docs/feedback.md).

+

+---

+

+If Real-ESRGAN is helpful, please help to ⭐ this repo or recommend it to your friends 😊

+Other recommended projects:

+▶️ [GFPGAN](https://github.com/TencentARC/GFPGAN): A practical algorithm for real-world face restoration

+▶️ [BasicSR](https://github.com/xinntao/BasicSR): An open-source image and video restoration toolbox

+▶️ [facexlib](https://github.com/xinntao/facexlib): A collection that provides useful face-related functions.

+▶️ [HandyView](https://github.com/xinntao/HandyView): A PyQt5-based image viewer that is handy for viewing and comparison

+▶️ [HandyFigure](https://github.com/xinntao/HandyFigure): Open source of paper figures

+

+---

+

+### 📖 Real-ESRGAN: Training Real-World Blind Super-Resolution with Pure Synthetic Data

+

+> [[Paper](https://arxiv.org/abs/2107.10833)] [[YouTube Video](https://www.youtube.com/watch?v=fxHWoDSSvSc)] [[Bilibili explanation](https://www.bilibili.com/video/BV1H34y1m7sS/)] [[Poster](https://xinntao.github.io/projects/RealESRGAN_src/RealESRGAN_poster.pdf)] [[PPT slides](https://docs.google.com/presentation/d/1QtW6Iy8rm8rGLsJ0Ldti6kP-7Qyzy6XL/edit?usp=sharing&ouid=109799856763657548160&rtpof=true&sd=true)]

+> [Xintao Wang](https://xinntao.github.io/), Liangbin Xie, [Chao Dong](https://scholar.google.com.hk/citations?user=OSDCB0UAAAAJ), [Ying Shan](https://scholar.google.com/citations?user=4oXBp9UAAAAJ&hl=en)

+> [Tencent ARC Lab](https://arc.tencent.com/en/ai-demos/imgRestore); Shenzhen Institutes of Advanced Technology, Chinese Academy of Sciences

+

+

+

+

+---

+

+

+## 🚩 Updates

+

+- ✅ Add the **realesr-general-x4v3** model, a tiny model for general scenes. It also supports the **-dn** option to balance the noise (avoiding over-smooth results). **-dn** is short for denoising strength.

+- ✅ Update the **RealESRGAN AnimeVideo-v3** model. Please see [anime video models](docs/anime_video_model.md) and [comparisons](docs/anime_comparisons.md) for more details.

+- ✅ Add small models for anime videos. More details are in [anime video models](docs/anime_video_model.md).

+- ✅ Add the ncnn implementation [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).

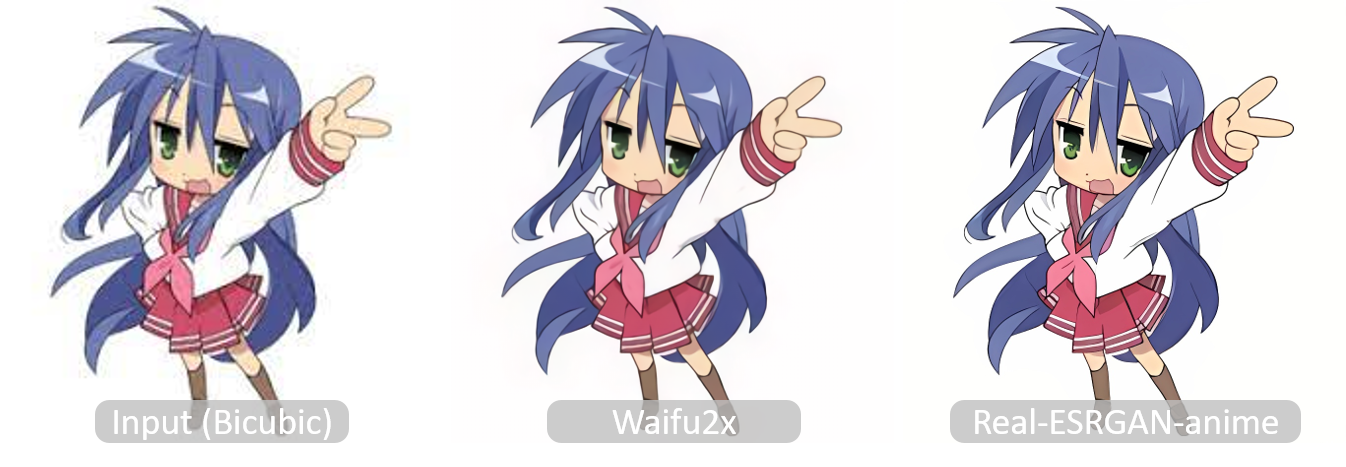

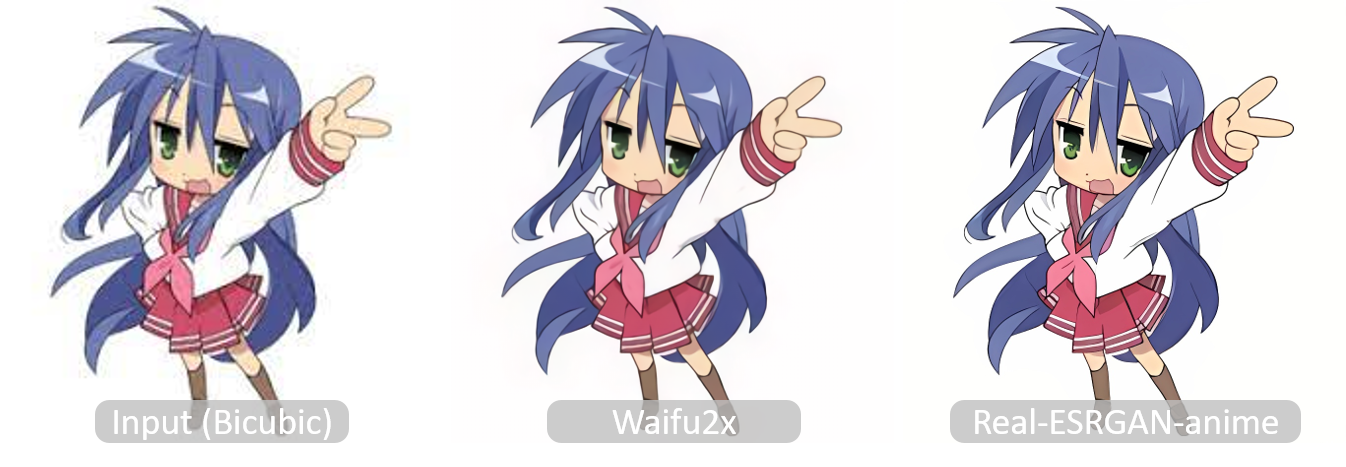

+- ✅ Add [*RealESRGAN_x4plus_anime_6B.pth*](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth), which is optimized for **anime** images with much smaller model size. More details and comparisons with [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan) are in [**anime_model.md**](docs/anime_model.md)

+- ✅ Support finetuning on your own data or paired data (*i.e.*, finetuning ESRGAN). See [here](docs/Training.md#Finetune-Real-ESRGAN-on-your-own-dataset)

+- ✅ Integrate [GFPGAN](https://github.com/TencentARC/GFPGAN) to support **face enhancement**.

+- ✅ Integrated into [Huggingface Spaces](https://huggingface.co/spaces) with [Gradio](https://github.com/gradio-app/gradio). See [Gradio Web Demo](https://huggingface.co/spaces/akhaliq/Real-ESRGAN). Thanks [@AK391](https://github.com/AK391)

+- ✅ Support arbitrary scale with `--outscale` (It actually further resizes outputs with `LANCZOS4`). Add *RealESRGAN_x2plus.pth* model.

+- ✅ [The inference code](inference_realesrgan.py) supports: 1) **tile** options; 2) images with **alpha channel**; 3) **gray** images; 4) **16-bit** images.

+- ✅ The training code has been released. A detailed guide can be found in [Training.md](docs/Training.md).
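The **-dn** (denoising strength) option can be pictured as a knob that blends two models. A minimal sketch, assuming it works by linear network interpolation: `dn` weights a general model and `1 - dn` weights a weaker-denoising counterpart, parameter by parameter. The helper `interpolate_weights` and the use of plain float lists instead of real tensors are hypothetical, for illustration only.

```python
from typing import Dict, List

# Stand-in for a checkpoint: parameter name -> flat list of float values.
Params = Dict[str, List[float]]


def interpolate_weights(params_a: Params, params_b: Params, dn: float) -> Params:
    """Blend two checkpoints parameter by parameter: dn * a + (1 - dn) * b.

    Assumption: dn = 1 keeps model A unchanged, dn = 0 keeps model B.
    """
    if not 0.0 <= dn <= 1.0:
        raise ValueError("dn must be in [0, 1]")
    if params_a.keys() != params_b.keys():
        raise KeyError("checkpoints must share the same parameter names")
    blended: Params = {}
    for name in params_a:
        a_vals, b_vals = params_a[name], params_b[name]
        if len(a_vals) != len(b_vals):
            raise ValueError(f"shape mismatch for {name!r}")
        blended[name] = [dn * a + (1.0 - dn) * b for a, b in zip(a_vals, b_vals)]
    return blended


# Usage: halfway between two toy "checkpoints".
print(interpolate_weights({"conv.weight": [1.0, 2.0]}, {"conv.weight": [3.0, 4.0]}, 0.5))
# {'conv.weight': [2.0, 3.0]}
```

Interpolating weights (rather than output images) means only one forward pass is needed at inference time, whatever value of `dn` is chosen.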

+

+---

+

+

+## 👀 Demos Videos

+

+#### Bilibili

+

+- [Havoc in Heaven clip](https://www.bilibili.com/video/BV1ja41117zb)

+- [Anime dance cut](https://www.bilibili.com/video/BV1wY4y1L7hT/)

+- [One Piece clip](https://www.bilibili.com/video/BV1i3411L7Gy/)

+

+#### YouTube

+

+## 🔧 Dependencies and Installation

+

+- Python >= 3.7 ([Anaconda](https://www.anaconda.com/download/#linux) or [Miniconda](https://docs.conda.io/en/latest/miniconda.html) is recommended)

+- [PyTorch >= 1.7](https://pytorch.org/)

+

+### Installation

+

+1. Clone repo

+

+ ```bash

+ git clone https://github.com/xinntao/Real-ESRGAN.git

+ cd Real-ESRGAN

+ ```

+

+1. Install dependent packages

+

+ ```bash

+ # Install basicsr - https://github.com/xinntao/BasicSR

+ # We use BasicSR for both training and inference

+ pip install basicsr

+ # facexlib and gfpgan are for face enhancement

+ pip install facexlib

+ pip install gfpgan

+ pip install -r requirements.txt

+ python setup.py develop

+ ```

+

+---

+

+## ⚡ Quick Inference

+

+There are three ways to run Real-ESRGAN inference:

+

+1. [Online inference](#online-inference)

+1. [Portable executable files (NCNN)](#portable-executable-files-ncnn)

+1. [Python script](#python-script)

+

+### Online inference

+

+1. You can try it on our website: [ARC Demo](https://arc.tencent.com/en/ai-demos/imgRestore) (currently it only supports RealESRGAN_x4plus_anime_6B)

+1. [Colab Demo](https://colab.research.google.com/drive/1k2Zod6kSHEvraybHl50Lys0LerhyTMCo?usp=sharing) for Real-ESRGAN **|** [Colab Demo](https://colab.research.google.com/drive/1yNl9ORUxxlL4N0keJa2SEPB61imPQd1B?usp=sharing) for Real-ESRGAN (**anime videos**).

+

+### Portable executable files (NCNN)

+

+You can download [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [MacOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip) **executable files for Intel/AMD/Nvidia GPU**.

+

+This executable file is **portable** and includes all the binaries and models required. No CUDA or PyTorch environment is needed.

+

+You can run the following command (Windows example; see the README.md bundled with each executable package for more information):

+

+```bash

+./realesrgan-ncnn-vulkan.exe -i input.jpg -o output.png -n model_name

+```
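If you call the portable executable from a Python workflow, a thin wrapper that assembles the documented `-i`, `-o`, `-n` and `-t` flags is enough. This is a minimal sketch; the helper names `build_ncnn_command` and `run_ncnn` and the default executable path and model name are assumptions, not part of the release.

```python
import subprocess
from typing import List, Optional


def build_ncnn_command(input_path: str, output_path: str,
                       model_name: str = "realesrgan-x4plus",
                       exe: str = "./realesrgan-ncnn-vulkan.exe",
                       tile_size: Optional[int] = None) -> List[str]:
    """Assemble an argv list using only flags listed in the executable's usage text."""
    cmd = [exe, "-i", input_path, "-o", output_path, "-n", model_name]
    if tile_size is not None:
        # Usage text: tile size must be >= 32, or 0 for auto.
        if tile_size != 0 and tile_size < 32:
            raise ValueError("tile_size must be 0 (auto) or >= 32")
        cmd += ["-t", str(tile_size)]
    return cmd


def run_ncnn(cmd: List[str]) -> None:
    """Run the executable; raises CalledProcessError on a non-zero exit code."""
    subprocess.run(cmd, check=True)


print(build_ncnn_command("input.jpg", "output.png", model_name="realesrnet-x4plus"))
```

Passing an argument list (instead of a shell string) to `subprocess.run` avoids quoting problems with file names that contain spaces.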

+

+We provide four models:

+

+1. realesrgan-x4plus (default)

+2. realesrnet-x4plus

+3. realesrgan-x4plus-anime (optimized for anime images, small model size)

+4. realesr-animevideov3 (animation video)

+

+You can use the `-n` argument for other models, for example, `./realesrgan-ncnn-vulkan.exe -i input.jpg -o output.png -n realesrnet-x4plus`

+

+#### Usage of portable executable files

+

+1. Please refer to [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan#computer-usages) for more details.

+1. Note that it does not support all the functions of the Python script `inference_realesrgan.py` (such as `outscale`).

+

+```console

+Usage: realesrgan-ncnn-vulkan.exe -i infile -o outfile [options]...

+

+ -h show this help

+ -i input-path input image path (jpg/png/webp) or directory

+ -o output-path output image path (jpg/png/webp) or directory

+ -s scale upscale ratio (can be 2, 3, 4. default=4)

+ -t tile-size tile size (>=32/0=auto, default=0) can be 0,0,0 for multi-gpu

+ -m model-path folder path to the pre-trained models. default=models

+ -n model-name model name (default=realesr-animevideov3, can be realesr-animevideov3 | realesrgan-x4plus | realesrgan-x4plus-anime | realesrnet-x4plus)

+ -g gpu-id gpu device to use (default=auto) can be 0,1,2 for multi-gpu

+ -j load:proc:save thread count for load/proc/save (default=1:2:2) can be 1:2,2,2:2 for multi-gpu

+ -x enable tta mode

+ -f format output image format (jpg/png/webp, default=ext/png)

+ -v verbose output

+```

+

+Note that the executable may introduce block inconsistencies (and produce results slightly different from the PyTorch implementation), because it first crops the input image into several tiles, processes them separately, and finally stitches them together.
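The tile-and-stitch idea behind this note can be sketched as follows: each tile is enlarged by a few pixels of context (padding), and after upscaling only the un-padded core of each tile would be kept, which is intended to reduce visible seams. This is an illustrative sketch with assumed parameters `tile` and `pad` and a hypothetical helper `tile_boxes`; it is not the ncnn or PyTorch tiling code.

```python
import math
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (x0, y0, x1, y1), end-exclusive pixel coordinates


def tile_boxes(width: int, height: int, tile: int, pad: int) -> List[Tuple[Box, Box]]:
    """Return (core_box, padded_box) pairs covering a width x height image, row by row."""
    if tile <= 0 or pad < 0:
        raise ValueError("tile must be positive and pad non-negative")
    boxes = []
    for ty in range(math.ceil(height / tile)):
        for tx in range(math.ceil(width / tile)):
            # Core region: the pixels this tile is responsible for in the final output.
            x0, y0 = tx * tile, ty * tile
            x1, y1 = min(x0 + tile, width), min(y0 + tile, height)
            # Padded region: extra context fed to the network, clamped to the image.
            padded = (max(x0 - pad, 0), max(y0 - pad, 0),
                      min(x1 + pad, width), min(y1 + pad, height))
            boxes.append(((x0, y0, x1, y1), padded))
    return boxes


for core, padded in tile_boxes(100, 60, tile=50, pad=10):
    print(core, padded)
```

The cores tile the image exactly once, so stitching the upscaled cores back together reproduces a full-size output; the padding only supplies context at tile borders.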

+

+### Python script

+

+#### Usage of python script

+

+1. You can use the X4 model for **arbitrary output sizes** with the `outscale` argument. The program further performs a cheap resize operation on the Real-ESRGAN output.

+

+```console

+Usage: python inference_realesrgan.py -n RealESRGAN_x4plus -i infile -o outfile [options]...

+

+A common command: python inference_realesrgan.py -n RealESRGAN_x4plus -i infile --outscale 3.5 --face_enhance

+

+ -h show this help

+ -i --input Input image or folder. Default: inputs

+ -o --output Output folder. Default: results

+ -n --model_name Model name. Default: RealESRGAN_x4plus

+ -s, --outscale The final upsampling scale of the image. Default: 4

+ --suffix Suffix of the restored image. Default: out

+ -t, --tile Tile size, 0 for no tile during testing. Default: 0

+ --face_enhance Whether to use GFPGAN to enhance face. Default: False

+ --fp32 Use fp32 precision during inference. Default: fp16 (half precision).

+ --ext Image extension. Options: auto | jpg | png, auto means using the same extension as inputs. Default: auto

+```
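The `--outscale` arithmetic can be sketched as follows: the network always upsamples by its native factor (4 for the X4 models), and a cheap resize then maps the result to `outscale` times the input size. The function below assumes the final size is truncated with `int()`; the name `output_size` is hypothetical.

```python
from typing import Tuple


def output_size(width: int, height: int, outscale: float,
                netscale: int = 4) -> Tuple[int, int, int, int]:
    """Return (network_w, network_h, final_w, final_h) for a width x height input."""
    if width <= 0 or height <= 0 or outscale <= 0:
        raise ValueError("sizes and outscale must be positive")
    net_w, net_h = width * netscale, height * netscale
    if outscale == netscale:
        # No extra resize needed: the network output is already the target size.
        return net_w, net_h, net_w, net_h
    # Assumed: the cheap (e.g. Lanczos) resize targets int(input_size * outscale).
    return net_w, net_h, int(width * outscale), int(height * outscale)


print(output_size(100, 50, 3.5))  # (400, 200, 350, 175)
```

Because the model itself always runs at its native scale, a fractional `outscale` such as 3.5 costs the same network time as 4; only the final resize differs.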

+

+#### Inference general images

+

+Download pre-trained models: [RealESRGAN_x4plus.pth](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth)

+

+```bash

+wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth -P weights

+```

+

+Inference!

+

+```bash

+python inference_realesrgan.py -n RealESRGAN_x4plus -i inputs --face_enhance

+```

+

+Results are in the `results` folder
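A sketch of how output file names might be derived from the options above (`--output`, `--suffix`, `--ext`). It assumes the pattern `{name}_{suffix}.{ext}`, that `auto` reuses the input extension, and that images with an alpha channel are saved as PNG to keep transparency; `output_path` is a hypothetical helper, not a function from `inference_realesrgan.py`.

```python
import os


def output_path(input_path: str, output_dir: str = "results", suffix: str = "out",
                ext: str = "auto", has_alpha: bool = False) -> str:
    """Build the save path for one restored image (hypothetical helper)."""
    imgname, extension = os.path.splitext(os.path.basename(input_path))
    # 'auto' reuses the input extension (without the leading dot).
    extension = extension[1:] if ext == "auto" else ext
    if has_alpha:
        extension = "png"  # assumed: PNG keeps the alpha channel
    filename = f"{imgname}_{suffix}.{extension}" if suffix else f"{imgname}.{extension}"
    return os.path.join(output_dir, filename)


print(output_path("inputs/0014.jpg"))  # results/0014_out.jpg on POSIX systems
```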

+

+#### Inference anime images

+

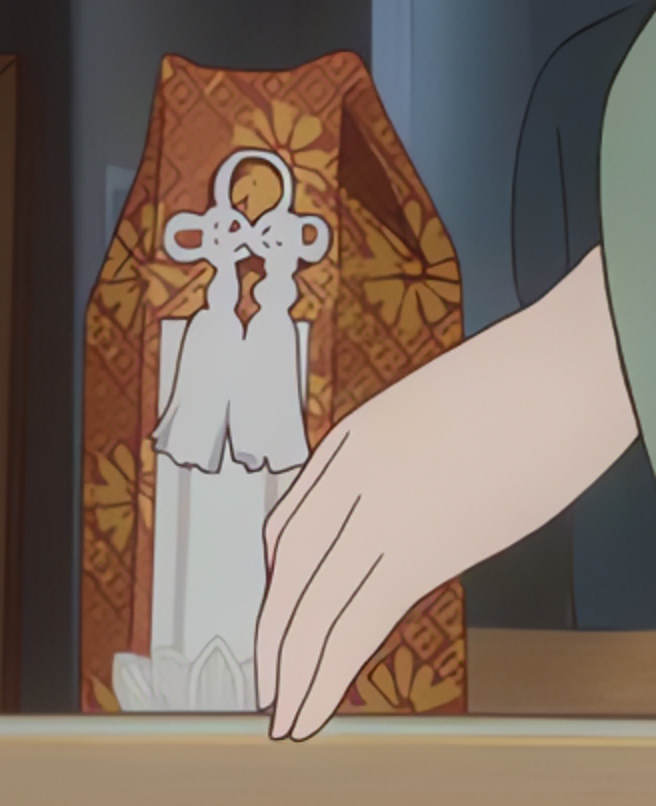

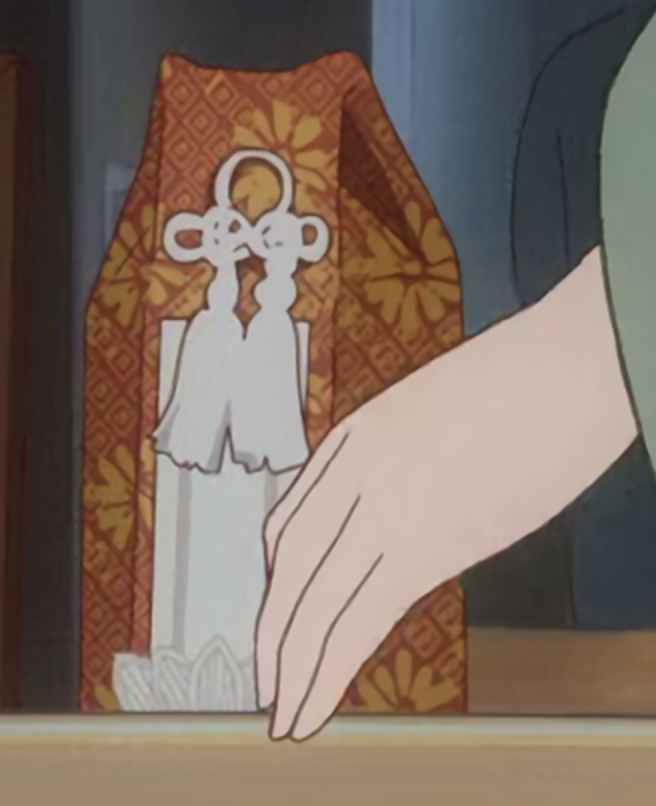

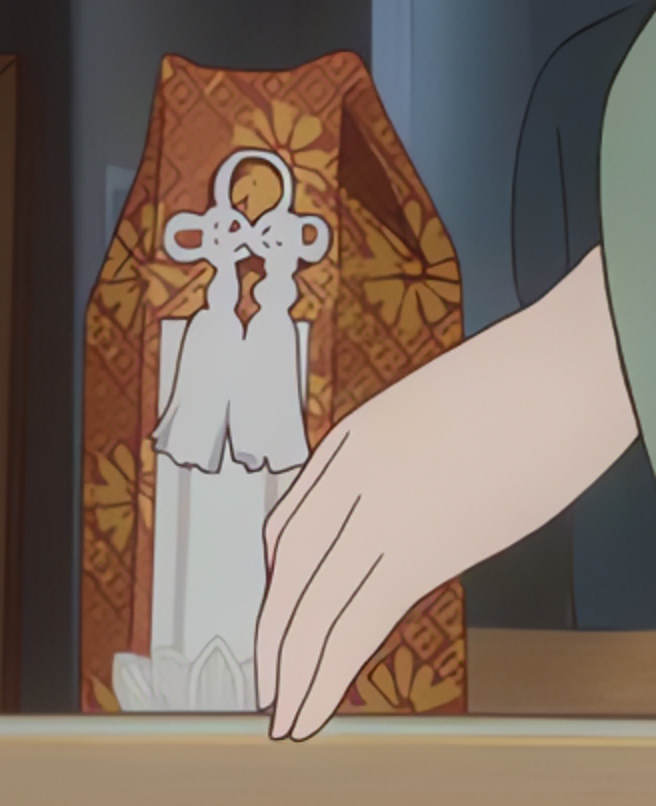

+

+

+

+Pre-trained models: [RealESRGAN_x4plus_anime_6B](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth)

+ More details and comparisons with [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan) are in [**anime_model.md**](docs/anime_model.md)

+

+```bash

+# download model

+wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth -P weights

+# inference

+python inference_realesrgan.py -n RealESRGAN_x4plus_anime_6B -i inputs

+```

+

+Results are in the `results` folder

+

+---

+

+## BibTeX

+

+ @InProceedings{wang2021realesrgan,

+ author = {Xintao Wang and Liangbin Xie and Chao Dong and Ying Shan},

+ title = {Real-ESRGAN: Training Real-World Blind Super-Resolution with Pure Synthetic Data},

+ booktitle = {International Conference on Computer Vision Workshops (ICCVW)},

+ date = {2021}

+ }

+

+## 📧 Contact

+

+If you have any questions, please email `xintao.wang@outlook.com` or `xintaowang@tencent.com`.

+

+

+## 🧩 Projects that use Real-ESRGAN

+

+If you develop/use Real-ESRGAN in your projects, you are welcome to let me know.

+

+- NCNN-Android: [RealSR-NCNN-Android](https://github.com/tumuyan/RealSR-NCNN-Android) by [tumuyan](https://github.com/tumuyan)

+- VapourSynth: [vs-realesrgan](https://github.com/HolyWu/vs-realesrgan) by [HolyWu](https://github.com/HolyWu)

+- NCNN: [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan)

+

+ **GUI**

+

+- [Waifu2x-Extension-GUI](https://github.com/AaronFeng753/Waifu2x-Extension-GUI) by [AaronFeng753](https://github.com/AaronFeng753)

+- [Squirrel-RIFE](https://github.com/Justin62628/Squirrel-RIFE) by [Justin62628](https://github.com/Justin62628)

+- [Real-GUI](https://github.com/scifx/Real-GUI) by [scifx](https://github.com/scifx)

+- [Real-ESRGAN_GUI](https://github.com/net2cn/Real-ESRGAN_GUI) by [net2cn](https://github.com/net2cn)

+- [Real-ESRGAN-EGUI](https://github.com/WGzeyu/Real-ESRGAN-EGUI) by [WGzeyu](https://github.com/WGzeyu)

+- [anime_upscaler](https://github.com/shangar21/anime_upscaler) by [shangar21](https://github.com/shangar21)

+- [Upscayl](https://github.com/upscayl/upscayl) by [Nayam Amarshe](https://github.com/NayamAmarshe) and [TGS963](https://github.com/TGS963)

+

+## 🤗 Acknowledgement

+

+Thanks to all the contributors.

+

+- [AK391](https://github.com/AK391): Integrate RealESRGAN to [Huggingface Spaces](https://huggingface.co/spaces) with [Gradio](https://github.com/gradio-app/gradio). See [Gradio Web Demo](https://huggingface.co/spaces/akhaliq/Real-ESRGAN).

+- [Asiimoviet](https://github.com/Asiimoviet): Translate the README.md into Chinese.

+- [2ji3150](https://github.com/2ji3150): Thanks for the [detailed and valuable feedback and suggestions](https://github.com/xinntao/Real-ESRGAN/issues/131).

+- [Jared-02](https://github.com/Jared-02): Translate the Training.md into Chinese.

diff --git a/README_CN.md b/README_CN.md

new file mode 100644

index 0000000000000000000000000000000000000000..fda1217bec600c5dcea72624c13533be6b71453e

--- /dev/null

+++ b/README_CN.md

@@ -0,0 +1,276 @@

+

+

+

+##

+

+[](https://github.com/xinntao/Real-ESRGAN/releases)

+[](https://pypi.org/project/realesrgan/)

+[](https://github.com/xinntao/Real-ESRGAN/issues)

+[](https://github.com/xinntao/Real-ESRGAN/issues)

+[](https://github.com/xinntao/Real-ESRGAN/blob/master/LICENSE)

+[](https://github.com/xinntao/Real-ESRGAN/blob/master/.github/workflows/pylint.yml)

+[](https://github.com/xinntao/Real-ESRGAN/blob/master/.github/workflows/publish-pip.yml)

+

+:fire: Updated the small model for anime videos, **RealESRGAN AnimeVideo-v3**. More information is in [[anime video model introduction](docs/anime_video_model.md)] and [[comparisons](docs/anime_comparisons_CN.md)].

+

+1. [Colab Demo](https://colab.research.google.com/drive/1k2Zod6kSHEvraybHl50Lys0LerhyTMCo?usp=sharing) for Real-ESRGAN | [Colab Demo](https://colab.research.google.com/drive/1yNl9ORUxxlL4N0keJa2SEPB61imPQd1B?usp=sharing) for Real-ESRGAN (**anime videos**)

+2. Portable executable files **for Intel/AMD/Nvidia GPUs**: [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [macOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip). See [here](#便携版(绿色版)可执行文件) for details. The NCNN implementation is in [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).

+

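+Real-ESRGAN aims to develop **practical algorithms for image/video restoration**.

+Building on ESRGAN, we train with purely synthetic data so that the model can be applied to real-world image restoration scenarios (hence the name: Real-ESRGAN).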

+

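+:art: Real-ESRGAN needs, and welcomes, your contributions, such as new features, models, bug fixes, suggestions, and maintenance. See [CONTRIBUTING.md](docs/CONTRIBUTING.md) for details; all contributors will be listed [here](README_CN.md#hugs-感谢).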

+

+:milky_way: Thanks to everyone for the valuable feedback. It is gradually being collected in [this document](docs/feedback.md).

+

+:question: Answers to common questions can be found in [FAQ.md](docs/FAQ.md). (Well, it is still empty for now =-=||)

+

+---

+

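+If Real-ESRGAN is helpful to you, please give this project a Star :star: or recommend it to your friends. Thanks! :blush:

+Other recommended projects:

+:arrow_forward: [GFPGAN](https://github.com/TencentARC/GFPGAN): A practical face restoration algorithm

+:arrow_forward: [BasicSR](https://github.com/xinntao/BasicSR): An open-source image and video toolbox

+:arrow_forward: [facexlib](https://github.com/xinntao/facexlib): A toolbox providing face-related functions

+:arrow_forward: [HandyView](https://github.com/xinntao/HandyView): A PyQt5-based image viewer, handy for viewing and comparison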

+

+---

+

+

+

+🚩 Updates

+

+- ✅ Updated the small model for anime videos, **RealESRGAN AnimeVideo-v3**. More information is in [anime video models](docs/anime_video_model.md) and [comparisons](docs/anime_comparisons.md).

+- ✅ Added small models for anime videos. More information is in [anime video models](docs/anime_video_model.md).

+- ✅ Added the ncnn implementation: [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).

+- ✅ Added [*RealESRGAN_x4plus_anime_6B.pth*](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth), which is optimized for anime images and has a smaller model size. For details and comparisons with [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan), see [**anime_model.md**](docs/anime_model.md)

+- ✅ Support finetuning on your own data: [details](docs/Training.md#Finetune-Real-ESRGAN-on-your-own-dataset)

+- ✅ Support **face enhancement** with [GFPGAN](https://github.com/TencentARC/GFPGAN)

+- ✅ Added to [Huggingface Spaces](https://huggingface.co/spaces) (an online platform for machine learning apps) via [Gradio](https://github.com/gradio-app/gradio): [Gradio online demo](https://huggingface.co/spaces/akhaliq/Real-ESRGAN). Thanks [@AK391](https://github.com/AK391)

+- ✅ Support arbitrary scaling with `--outscale` (it actually further resizes the output image with `LANCZOS4`). Added the *RealESRGAN_x2plus.pth* model

+- ✅ The [inference script](inference_realesrgan.py) supports: 1) **tile** processing; 2) images with an **alpha channel**; 3) **gray** images; 4) **16-bit** images.

+- ✅ The training code has been released. See [Training.md](docs/Training.md) for details.

+

+

+

+

+

+🧩 Projects that use Real-ESRGAN

+

+ 👋 If you develop/use/integrate Real-ESRGAN, feel free to contact me to have your project added.

+

+- NCNN-Android: [RealSR-NCNN-Android](https://github.com/tumuyan/RealSR-NCNN-Android) by [tumuyan](https://github.com/tumuyan)

+- VapourSynth: [vs-realesrgan](https://github.com/HolyWu/vs-realesrgan) by [HolyWu](https://github.com/HolyWu)

+- NCNN: [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan)

+

+ **Easy-to-use GUIs**

+

+- [Waifu2x-Extension-GUI](https://github.com/AaronFeng753/Waifu2x-Extension-GUI) by [AaronFeng753](https://github.com/AaronFeng753)

+- [Squirrel-RIFE](https://github.com/Justin62628/Squirrel-RIFE) by [Justin62628](https://github.com/Justin62628)

+- [Real-GUI](https://github.com/scifx/Real-GUI) by [scifx](https://github.com/scifx)

+- [Real-ESRGAN_GUI](https://github.com/net2cn/Real-ESRGAN_GUI) by [net2cn](https://github.com/net2cn)

+- [Real-ESRGAN-EGUI](https://github.com/WGzeyu/Real-ESRGAN-EGUI) by [WGzeyu](https://github.com/WGzeyu)

+- [anime_upscaler](https://github.com/shangar21/anime_upscaler) by [shangar21](https://github.com/shangar21)

+- [RealESRGAN-GUI](https://github.com/Baiyuetribe/paper2gui/blob/main/Video%20Super%20Resolution/RealESRGAN-GUI.md) by [Baiyuetribe](https://github.com/Baiyuetribe)

+

+

+

+

+👀 Demo videos (Bilibili)

+

+- [Havoc in Heaven clip](https://www.bilibili.com/video/BV1ja41117zb)

+

+

+

+### :book: Real-ESRGAN: Training Real-World Blind Super-Resolution with Pure Synthetic Data

+

+> [[Paper](https://arxiv.org/abs/2107.10833)] [Project Page] [[YouTube Video](https://www.youtube.com/watch?v=fxHWoDSSvSc)] [[Bilibili Video](https://www.bilibili.com/video/BV1H34y1m7sS/)] [[Poster](https://xinntao.github.io/projects/RealESRGAN_src/RealESRGAN_poster.pdf)] [[PPT](https://docs.google.com/presentation/d/1QtW6Iy8rm8rGLsJ0Ldti6kP-7Qyzy6XL/edit?usp=sharing&ouid=109799856763657548160&rtpof=true&sd=true)]

+> [Xintao Wang](https://xinntao.github.io/), Liangbin Xie, [Chao Dong](https://scholar.google.com.hk/citations?user=OSDCB0UAAAAJ), [Ying Shan](https://scholar.google.com/citations?user=4oXBp9UAAAAJ&hl=en)

+> Tencent ARC Lab; Shenzhen Institutes of Advanced Technology, Chinese Academy of Sciences

+

+

+

+

+---

+

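+We provide a trained model (*RealESRGAN_x4plus.pth*) that performs 4x super-resolution.

+**Real-ESRGAN can still fail at times, because real-world degradation processes are quite complex.**

+In addition, results on **faces and text** are not yet very good, but we will keep improving them.

+

+Real-ESRGAN will be supported in the long term; I will keep maintaining and updating it in my spare time.

+

+Here are several new features planned for the future:

+

+- [ ] Improve faces

+- [ ] Improve text

+- [x] Improve animation images

+- [ ] Support more super-resolution scales

+- [ ] Adjustable restoration

+

+If you have good ideas or requests, feel free to raise them in an issue or discussion.

+If you have photos on which Real-ESRGAN performs poorly, you can also post them in an issue or discussion. I will take a look (but may not be able to solve them :stuck_out_tongue:). If necessary, I will create a dedicated page to record those unresolved images.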

+

+---

+

+### 便携版(绿色版)可执行文件

+

+You can download portable executable files **for Intel/AMD/Nvidia GPUs**: [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [macOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip).

+

+"Portable" means you can run these executables directly (even copying them onto a USB drive works), because they already contain the required files and models. No CUDA or PyTorch environment is needed.

+

+You can run the following command (Windows example; see the README.md of the corresponding version for more information):

+

+```bash

+./realesrgan-ncnn-vulkan.exe -i input.jpg -o output.png -n model_name

+```

+

+We provide four models:

+

+1. realesrgan-x4plus (default)

+2. realesrnet-x4plus

+3. realesrgan-x4plus-anime (optimized for anime illustrations, smaller model size)

+4. realesr-animevideov3 (for anime videos)

+

+You can use the `-n` argument for other models, for example, `./realesrgan-ncnn-vulkan.exe -i anime_input.jpg -o anime_output.png -n realesrgan-x4plus-anime`

+

+### Usage of executable files

+

+1. For more details, please refer to [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan#computer-usages).

+2. Note: the executables do not support all the functions of the Python script `inference_realesrgan.py`, such as the `outscale` option.

+

+```console

+Usage: realesrgan-ncnn-vulkan.exe -i infile -o outfile [options]...

+

+ -h show this help

+ -i input-path input image path (jpg/png/webp) or directory

+ -o output-path output image path (jpg/png/webp) or directory

+ -s scale upscale ratio (can be 2, 3, 4. default=4)

+ -t tile-size tile size (>=32/0=auto, default=0) can be 0,0,0 for multi-gpu

+ -m model-path folder path to the pre-trained models. default=models

+ -n model-name model name (default=realesr-animevideov3, can be realesr-animevideov3 | realesrgan-x4plus | realesrgan-x4plus-anime | realesrnet-x4plus)

+ -g gpu-id gpu device to use (default=auto) can be 0,1,2 for multi-gpu

+ -j load:proc:save thread count for load/proc/save (default=1:2:2) can be 1:2,2,2:2 for multi-gpu

+ -x enable tta mode

+ -f format output image format (jpg/png/webp, default=ext/png)

+ -v verbose output

+```

+

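+Because these executables split the image into several tiles, process them separately, and then stitch them together, the output image may show slight block inconsistencies (and may differ slightly from the PyTorch output).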

+

+---

+

+## :wrench: Dependencies and Installation

+

+- Python >= 3.7 ([Anaconda](https://www.anaconda.com/download/#linux) or [Miniconda](https://docs.conda.io/en/latest/miniconda.html) is recommended)

+- [PyTorch >= 1.7](https://pytorch.org/)

+

+#### Installation

+

+1. Clone the repo

+

+ ```bash

+ git clone https://github.com/xinntao/Real-ESRGAN.git

+ cd Real-ESRGAN

+ ```

+

+2. Install dependent packages

+

+ ```bash

+ # Install basicsr - https://github.com/xinntao/BasicSR

+ # We use BasicSR for both training and inference

+ pip install basicsr

+ # facexlib and gfpgan are for face enhancement

+ pip install facexlib

+ pip install gfpgan

+ pip install -r requirements.txt

+ python setup.py develop

+ ```

+

+## :zap: Quick Inference

+

+### General images

+

+Download our pre-trained model: [RealESRGAN_x4plus.pth](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth)

+

+```bash

+wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth -P weights

+```

+

+Inference!

+

+```bash

+python inference_realesrgan.py -n RealESRGAN_x4plus -i inputs --face_enhance

+```

+

+Results are in the `results` folder.

+

+### Anime images

+

+

+

+

+Pre-trained model: [RealESRGAN_x4plus_anime_6B](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth)

+More details and comparisons with [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan) are in [**anime_model.md**](docs/anime_model.md).

+

+```bash

+# download model

+wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth -P weights

+# inference

+python inference_realesrgan.py -n RealESRGAN_x4plus_anime_6B -i inputs

+```

+

+Results are in the `results` folder.

+

+### Usage of the Python script

+

+1. Although you use an X4 model, you can **output images at any scale ratio** by setting the `outscale` argument; the program further resizes the model's output to that scale.

+

+```console

+Usage: python inference_realesrgan.py -n RealESRGAN_x4plus -i infile -o outfile [options]...

+

+A common command: python inference_realesrgan.py -n RealESRGAN_x4plus -i infile --outscale 3.5 --face_enhance

+

+ -h show this help

+ -i --input Input image or folder. Default: inputs

+ -o --output Output folder. Default: results

+ -n --model_name Model name. Default: RealESRGAN_x4plus

+ -s, --outscale The final upsampling scale of the image. Default: 4

+ --suffix Suffix of the restored image. Default: out

+ -t, --tile Tile size, 0 for no tile during testing. Default: 0

+ --face_enhance Whether to use GFPGAN to enhance face. Default: False

+ --fp32 Use fp32 precision during inference. Default: fp16 (half precision)

+ --ext Image extension. Options: auto | jpg | png, auto means using the same extension as inputs. Default: auto

+```
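With an X4 model the network always produces a 4x image first; `--outscale` then resizes that result to the requested ratio. A minimal sketch of the size arithmetic, assuming the final size is truncated with `int()` (the helper name `output_size` is hypothetical):

```python
def output_size(width, height, outscale, netscale=4):
    """Return (model_size, final_size) as (width, height) pairs.

    The network always upsamples by `netscale`; the program then resizes
    that output so the final image is `outscale` times the input.
    """
    model_size = (width * netscale, height * netscale)
    final_size = (int(width * outscale), int(height * outscale))
    return model_size, final_size


print(output_size(100, 50, 3.5))  # ((400, 200), (350, 175))
```

Because the extra resize is applied after the model, a non-integer scale such as 3.5 costs the same model time as 4.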

+

+## :european_castle: 模型库

+

+Please see [docs/model_zoo.md](docs/model_zoo.md).

+

+## :computer: Training and Fine-tuning on Your Own Data

+

+A detailed guide is available in [Training.md](docs/Training.md).

+

+## BibTeX

+

+    @Article{wang2021realesrgan,
+        title={Real-ESRGAN: Training Real-World Blind Super-Resolution with Pure Synthetic Data},
+        author={Xintao Wang and Liangbin Xie and Chao Dong and Ying Shan},
+        journal={arXiv:2107.10833},
+        year={2021}
+    }

+

+## :e-mail: Contact

+

+If you have any questions, please email `xintao.wang@outlook.com` or `xintaowang@tencent.com`.

+

+## :hugs: Acknowledgement

+

+Thanks to all the contributors!

+

+- [AK391](https://github.com/AK391): Added Real-ESRGAN to [Huggingface Spaces](https://huggingface.co/spaces) (an online platform for machine learning apps) with [Gradio](https://github.com/gradio-app/gradio). See the [Gradio web demo](https://huggingface.co/spaces/akhaliq/Real-ESRGAN).

+- [Asiimoviet](https://github.com/Asiimoviet): Translated README.md into Chinese.

+- [2ji3150](https://github.com/2ji3150): Thanks for the detailed and valuable [feedback and suggestions](https://github.com/xinntao/Real-ESRGAN/issues/131).

+- [Jared-02](https://github.com/Jared-02): Translated Training.md into Chinese.

diff --git a/VERSION b/VERSION

new file mode 100644

index 0000000000000000000000000000000000000000..0d91a54c7d439e84e3dd17d3594f1b2b6737f430

--- /dev/null

+++ b/VERSION

@@ -0,0 +1 @@

+0.3.0

diff --git a/app.py b/app.py

new file mode 100644

index 0000000000000000000000000000000000000000..17eb16b7dd42273328d2edf4dd2dc1b23d94043f

--- /dev/null

+++ b/app.py

@@ -0,0 +1,30 @@

+import os
+
+import streamlit as st
+from PIL import Image
+import inference_realesrgan as ir
+
+st.set_page_config(
+    page_title="Image Enhancer",
+    page_icon="🧊",
+    layout="wide",
+    initial_sidebar_state="expanded",
+)
+
+st.header('Image Optimization Using Real-ESRGAN')
+upload_img = st.file_uploader(label="Upload Your Image", type=['jpg', 'png', 'jpeg'])
+
+if upload_img is not None:
+    image = Image.open(upload_img)
+
+    # Save the upload to disk so the inference script processes this image
+    # instead of a hard-coded local path
+    os.makedirs('inputs', exist_ok=True)
+    input_path = os.path.join('inputs', os.path.basename(upload_img.name))
+    with open(input_path, 'wb') as f:
+        f.write(upload_img.getvalue())
+
+    col1, col2 = st.columns([0.5, 0.5])
+    with col1:
+        st.markdown('Before', unsafe_allow_html=True)
+        st.image(image, width=300)
+
+    with col2:
+        with st.spinner('Processing... Sit Back And Relax.'):
+            st.markdown('After', unsafe_allow_html=True)
+            result = ir.main(input=input_path, outscale=3.5, fp32='--fp32', face_enhance='True', ext='auto', output='results')
+
+        st.image(result, width=300)

diff --git a/assets/realesrgan_logo.png b/assets/realesrgan_logo.png

new file mode 100644

index 0000000000000000000000000000000000000000..88cd1ad6170794c2becb95006edffa0655d9372a

Binary files /dev/null and b/assets/realesrgan_logo.png differ

diff --git a/assets/realesrgan_logo_ai.png b/assets/realesrgan_logo_ai.png

new file mode 100644

index 0000000000000000000000000000000000000000..b0f595cf2535de7e69393384d8d056300f1cdddc

Binary files /dev/null and b/assets/realesrgan_logo_ai.png differ

diff --git a/assets/realesrgan_logo_av.png b/assets/realesrgan_logo_av.png

new file mode 100644

index 0000000000000000000000000000000000000000..501ac8e81292d9369122a69ec2dd56a3ae8beca6

Binary files /dev/null and b/assets/realesrgan_logo_av.png differ

diff --git a/assets/realesrgan_logo_gi.png b/assets/realesrgan_logo_gi.png

new file mode 100644

index 0000000000000000000000000000000000000000..cdb0a1a74e0b54a1c684141324c6635acf2f60f8

Binary files /dev/null and b/assets/realesrgan_logo_gi.png differ

diff --git a/assets/realesrgan_logo_gv.png b/assets/realesrgan_logo_gv.png

new file mode 100644

index 0000000000000000000000000000000000000000..21dfba05f3855f1d9740e6d2cbe2a8ac736f4508

Binary files /dev/null and b/assets/realesrgan_logo_gv.png differ

diff --git a/assets/teaser-text.png b/assets/teaser-text.png

new file mode 100644

index 0000000000000000000000000000000000000000..af9b424e390bf454838d962f049db9bb5ef1064d

Binary files /dev/null and b/assets/teaser-text.png differ

diff --git a/assets/teaser.jpg b/assets/teaser.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..dc9b7ccdf78e3c816b0b6ca567433b53253b2e1e

Binary files /dev/null and b/assets/teaser.jpg differ

diff --git a/cog.yaml b/cog.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..daa6983934b6e186ecd0cf1d4e038acdb9910cbc

--- /dev/null

+++ b/cog.yaml

@@ -0,0 +1,22 @@

+# This file is used for constructing replicate env

+image: "r8.im/tencentarc/realesrgan"

+

+build:

+ gpu: true

+ python_version: "3.8"

+ system_packages:

+ - "libgl1-mesa-glx"

+ - "libglib2.0-0"

+ python_packages:

+ - "torch==1.7.1"

+ - "torchvision==0.8.2"

+ - "numpy==1.21.1"

+ - "lmdb==1.2.1"

+ - "opencv-python==4.5.3.56"

+ - "PyYAML==5.4.1"

+ - "tqdm==4.62.2"

+ - "yapf==0.31.0"

+ - "basicsr==1.4.2"

+ - "facexlib==0.2.5"

+

+predict: "cog_predict.py:Predictor"

diff --git a/cog_predict.py b/cog_predict.py

new file mode 100644

index 0000000000000000000000000000000000000000..fa0f89dfda8e3ff14afd7b3b8544f04d86e96562

--- /dev/null

+++ b/cog_predict.py

@@ -0,0 +1,148 @@

+# flake8: noqa

+# This file is used for deploying replicate models

+# running: cog predict -i img=@inputs/00017_gray.png -i version='General - v3' -i scale=2 -i face_enhance=True -i tile=0

+# push: cog push r8.im/xinntao/realesrgan

+

+import os

+

+os.system('pip install gfpgan')

+os.system('python setup.py develop')

+

+import cv2

+import shutil

+import tempfile

+import torch

+from basicsr.archs.rrdbnet_arch import RRDBNet

+from basicsr.archs.srvgg_arch import SRVGGNetCompact

+

+from realesrgan.utils import RealESRGANer

+

+try:
+    from cog import BasePredictor, Input, Path
+    from gfpgan import GFPGANer
+except Exception:
+    print('please install cog and gfpgan package')

+

+

+class Predictor(BasePredictor):
+
+    def setup(self):
+        os.makedirs('output', exist_ok=True)
+        # download weights
+        if not os.path.exists('weights/realesr-general-x4v3.pth'):
+            os.system(
+                'wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesr-general-x4v3.pth -P ./weights'
+            )
+        if not os.path.exists('weights/GFPGANv1.4.pth'):
+            os.system('wget https://github.com/TencentARC/GFPGAN/releases/download/v1.3.0/GFPGANv1.4.pth -P ./weights')
+        if not os.path.exists('weights/RealESRGAN_x4plus.pth'):
+            os.system(
+                'wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth -P ./weights'
+            )
+        if not os.path.exists('weights/RealESRGAN_x4plus_anime_6B.pth'):
+            os.system(
+                'wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth -P ./weights'
+            )
+        if not os.path.exists('weights/realesr-animevideov3.pth'):
+            os.system(
+                'wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesr-animevideov3.pth -P ./weights'
+            )

+

+    def choose_model(self, scale, version, tile=0):
+        half = torch.cuda.is_available()
+        if version == 'General - RealESRGANplus':
+            model = RRDBNet(num_in_ch=3, num_out_ch=3, num_feat=64, num_block=23, num_grow_ch=32, scale=4)
+            model_path = 'weights/RealESRGAN_x4plus.pth'
+            self.upsampler = RealESRGANer(
+                scale=4, model_path=model_path, model=model, tile=tile, tile_pad=10, pre_pad=0, half=half)
+        elif version == 'General - v3':
+            model = SRVGGNetCompact(num_in_ch=3, num_out_ch=3, num_feat=64, num_conv=32, upscale=4, act_type='prelu')
+            model_path = 'weights/realesr-general-x4v3.pth'
+            self.upsampler = RealESRGANer(
+                scale=4, model_path=model_path, model=model, tile=tile, tile_pad=10, pre_pad=0, half=half)
+        elif version == 'Anime - anime6B':
+            model = RRDBNet(num_in_ch=3, num_out_ch=3, num_feat=64, num_block=6, num_grow_ch=32, scale=4)
+            model_path = 'weights/RealESRGAN_x4plus_anime_6B.pth'
+            self.upsampler = RealESRGANer(
+                scale=4, model_path=model_path, model=model, tile=tile, tile_pad=10, pre_pad=0, half=half)
+        elif version == 'AnimeVideo - v3':
+            model = SRVGGNetCompact(num_in_ch=3, num_out_ch=3, num_feat=64, num_conv=16, upscale=4, act_type='prelu')
+            model_path = 'weights/realesr-animevideov3.pth'
+            self.upsampler = RealESRGANer(
+                scale=4, model_path=model_path, model=model, tile=tile, tile_pad=10, pre_pad=0, half=half)
+
+        self.face_enhancer = GFPGANer(
+            model_path='weights/GFPGANv1.4.pth',
+            upscale=scale,
+            arch='clean',
+            channel_multiplier=2,
+            bg_upsampler=self.upsampler)

+

+    def predict(
+        self,
+        img: Path = Input(description='Input'),
+        version: str = Input(
+            description='RealESRGAN version. Please see [Readme] below for more descriptions',
+            choices=['General - RealESRGANplus', 'General - v3', 'Anime - anime6B', 'AnimeVideo - v3'],
+            default='General - v3'),
+        scale: float = Input(description='Rescaling factor', default=2),
+        face_enhance: bool = Input(
+            description='Enhance faces with GFPGAN. Note that it does not work for anime images/videos', default=False),
+        tile: int = Input(
+            description=
+            'Tile size. Default is 0, that is no tile. When encountering the out-of-GPU-memory issue, please specify it, e.g., 400 or 200',
+            default=0)
+    ) -> Path:
+        # Check for None first: comparing None with 100 would raise a TypeError
+        if tile is None or tile <= 100:
+            tile = 0
+        print(f'img: {img}. version: {version}. scale: {scale}. face_enhance: {face_enhance}. tile: {tile}.')
+        try:
+            # splitext keeps the leading dot ('.png'); strip it so the file is 'out.png', not 'out..png'
+            extension = os.path.splitext(os.path.basename(str(img)))[1].lstrip('.')
+            img = cv2.imread(str(img), cv2.IMREAD_UNCHANGED)
+            if len(img.shape) == 3 and img.shape[2] == 4:
+                img_mode = 'RGBA'
+            elif len(img.shape) == 2:
+                img_mode = None
+                img = cv2.cvtColor(img, cv2.COLOR_GRAY2BGR)
+            else:
+                img_mode = None
+
+            h, w = img.shape[0:2]
+            if h < 300:
+                img = cv2.resize(img, (w * 2, h * 2), interpolation=cv2.INTER_LANCZOS4)
+
+            self.choose_model(scale, version, tile)
+
+            try:
+                if face_enhance:
+                    _, _, output = self.face_enhancer.enhance(
+                        img, has_aligned=False, only_center_face=False, paste_back=True)
+                else:
+                    output, _ = self.upsampler.enhance(img, outscale=scale)
+            except RuntimeError as error:
+                print('Error', error)
+                print('If you encounter CUDA out of memory, try to set "tile" to a smaller size, e.g., 400.')
+                raise  # without an output there is nothing to save
+
+            if img_mode == 'RGBA':  # RGBA images should be saved in png format
+                extension = 'png'
+            # save_path = f'output/out.{extension}'
+            # cv2.imwrite(save_path, output)
+            out_path = Path(tempfile.mkdtemp()) / f'out.{extension}'
+            cv2.imwrite(str(out_path), output)
+        except Exception as error:
+            print('global exception: ', error)
+            raise  # re-raise instead of returning an undefined out_path
+        finally:
+            clean_folder('output')
+        return out_path

+

+

+def clean_folder(folder):
+    for filename in os.listdir(folder):
+        file_path = os.path.join(folder, filename)
+        try:
+            if os.path.isfile(file_path) or os.path.islink(file_path):
+                os.unlink(file_path)
+            elif os.path.isdir(file_path):
+                shutil.rmtree(file_path)
+        except Exception as e:
+            print(f'Failed to delete {file_path}. Reason: {e}')
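Two input checks in `predict` are easy to get wrong: a missing tile size must be tested for `None` before it is compared with 100, and `os.path.splitext` returns the extension with its leading dot (`'.png'`), which has to be stripped before building `out.{extension}`. A minimal sketch of both rules as pure, testable helpers (hypothetical names, not part of the repository):

```python
import os


def normalize_tile(tile):
    """Treat a missing or small tile size (<= 100) as 'no tiling' (0)."""
    if tile is None or tile <= 100:  # check None first; None <= 100 raises TypeError
        return 0
    return tile


def output_extension(input_path, img_mode=None):
    """Extension for the output file, without the leading dot.

    RGBA images are always written as png, since jpg cannot store alpha.
    """
    if img_mode == 'RGBA':
        return 'png'
    return os.path.splitext(os.path.basename(input_path))[1].lstrip('.')


print(f'out.{output_extension("inputs/00017_gray.png")}')  # out.png
```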

diff --git a/docs/CONTRIBUTING.md b/docs/CONTRIBUTING.md

new file mode 100644

index 0000000000000000000000000000000000000000..75990c2ce7545b72fb6ebad8295ca4895f437205

--- /dev/null

+++ b/docs/CONTRIBUTING.md

@@ -0,0 +1,44 @@

+# Contributing to Real-ESRGAN

+

+:art: Real-ESRGAN needs your contributions. Any contributions are welcome, such as new features/models/typo fixes/suggestions/maintenance, *etc*. See [CONTRIBUTING.md](docs/CONTRIBUTING.md). All contributors are listed [here](README.md#hugs-acknowledgement).

+

+We like open source and want to develop practical algorithms for general image restoration. However, individual capacity is limited, so contributions of any kind are welcome, such as:

+

+- New features

+- New models (your fine-tuned models)

+- Bug fixes

+- Typo fixes

+- Suggestions

+- Maintenance

+- Documents

+- *etc*

+

+## Workflow

+

+1. Fork and pull the latest Real-ESRGAN repository

+1. Check out a new branch (do not use the master branch for PRs)

+1. Commit your changes

+1. Create a PR

+

+**Note**:

+

+1. Please check the code style and linting

+ 1. The style configuration is specified in [setup.cfg](setup.cfg)

+ 1. If you use VSCode, the settings are configured in [.vscode/settings.json](.vscode/settings.json)

+1. We strongly recommend using the `pre-commit` hook. It checks your code style and linting before each commit.

+ 1. In the root path of project folder, run `pre-commit install`

+ 1. The pre-commit configuration is listed in [.pre-commit-config.yaml](.pre-commit-config.yaml)

+1. Please [open a discussion](https://github.com/xinntao/Real-ESRGAN/discussions) before making large changes.

+ 1. Discussions are welcome :sunglasses:. I will do my best to join them.

+

+## TODO List

+

+:zero: The most straightforward way of improving model performance is to fine-tune on some specific datasets.

+

+Here are some TODOs:

+

+- [ ] optimize for human faces

+- [ ] optimize for texts

+- [ ] support controllable restoration strength

+

+:one: There are also [several issues](https://github.com/xinntao/Real-ESRGAN/issues) that need help. If you can help, please let me know :smile:

diff --git a/docs/FAQ.md b/docs/FAQ.md

new file mode 100644

index 0000000000000000000000000000000000000000..843f4dd847487066a1c7c105c7292e2de0bd5f1a

--- /dev/null

+++ b/docs/FAQ.md

@@ -0,0 +1,10 @@

+# FAQ

+

+1. **Q: How to select models?**

+A: Please refer to [docs/model_zoo.md](docs/model_zoo.md)

+

+1. **Q: Can `face_enhance` be used for anime images/animation videos?**

+A: No, it can only be used for real faces. It is recommended not to use this option for anime images/animation videos to save GPU memory.

+

+1. **Q: Error "slow_conv2d_cpu" not implemented for 'Half'**

+A: To reduce GPU memory consumption and speed up inference, Real-ESRGAN uses half precision (fp16) during inference by default. However, some half-precision operators are not implemented in CPU mode, so you need to add the **`--fp32` option** to the command. For example, `python inference_realesrgan.py -n RealESRGAN_x4plus -i inputs --fp32`.
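If you call the upsampler from your own code, you can avoid this error by deriving the precision from the device instead of relying on a flag. A minimal sketch (the helper name `use_half_precision` is hypothetical; `cog_predict.py` follows a similar rule by enabling half precision only when `torch.cuda.is_available()`):

```python
def use_half_precision(fp32_flag: bool, device: str) -> bool:
    """Return True if inference should run in fp16.

    An explicit fp32 request always wins; non-CUDA devices fall back to fp32
    because some half-precision operators (e.g. slow_conv2d_cpu) are missing
    on CPU.
    """
    if fp32_flag:
        return False
    return device.startswith('cuda')
```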

diff --git a/docs/Training.md b/docs/Training.md

new file mode 100644

index 0000000000000000000000000000000000000000..77da5ea5763f7a6ab291ebc28afb13be37df3f50

--- /dev/null

+++ b/docs/Training.md

@@ -0,0 +1,271 @@

+# :computer: How to Train/Finetune Real-ESRGAN

+

+- [Train Real-ESRGAN](#train-real-esrgan)

+ - [Overview](#overview)

+ - [Dataset Preparation](#dataset-preparation)

+ - [Train Real-ESRNet](#Train-Real-ESRNet)

+ - [Train Real-ESRGAN](#Train-Real-ESRGAN)

+- [Finetune Real-ESRGAN on your own dataset](#Finetune-Real-ESRGAN-on-your-own-dataset)

+ - [Generate degraded images on the fly](#Generate-degraded-images-on-the-fly)

+ - [Use paired training data](#use-your-own-paired-data)

+

+[English](Training.md) **|** [简体中文](Training_CN.md)

+

+## Train Real-ESRGAN

+

+### Overview

+

+The training has been divided into two stages. These two stages have the same data synthesis process and training pipeline, except for the loss functions. Specifically,

+

+1. We first train Real-ESRNet with L1 loss, initializing it from the pre-trained ESRGAN model.
+1. We then use the trained Real-ESRNet model to initialize the generator, and train Real-ESRGAN with a combination of L1 loss, perceptual loss and GAN loss.
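The two stages differ only in which loss terms the generator optimizes. A toy sketch on plain Python lists: `l1_loss` is a real mean absolute error, while the perceptual and GAN terms are taken as precomputed scalars. The weights (1.0 for L1 and perceptual, 0.1 for GAN) and the helper names are illustrative assumptions; take the real weights from your option file.

```python
def l1_loss(pred, target):
    """Mean absolute error between two equal-length sequences of pixel values."""
    if len(pred) != len(target):
        raise ValueError('pred and target must have the same length')
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(pred)


def generator_loss(stage, l1, perceptual=0.0, gan=0.0,
                   w_pix=1.0, w_percep=1.0, w_gan=0.1):
    """Combine per-term losses for the two training stages.

    Stage 1 (Real-ESRNet) optimizes L1 only; stage 2 (Real-ESRGAN) adds the
    perceptual and GAN terms. Default weights are illustrative assumptions.
    """
    if stage == 1:
        return w_pix * l1
    return w_pix * l1 + w_percep * perceptual + w_gan * gan
```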

+

+### Dataset Preparation

+

+We use DF2K (DIV2K and Flickr2K) + OST datasets for our training. Only HR images are required.

+You can download them from:

+

+1. DIV2K: http://data.vision.ee.ethz.ch/cvl/DIV2K/DIV2K_train_HR.zip

+2. Flickr2K: https://cv.snu.ac.kr/research/EDSR/Flickr2K.tar

+3. OST: https://openmmlab.oss-cn-hangzhou.aliyuncs.com/datasets/OST_dataset.zip

+

+Here are steps for data preparation.

+

+#### Step 1: [Optional] Generate multi-scale images

+

+For the DF2K dataset, we use a multi-scale strategy, *i.e.*, we downsample HR images to obtain several Ground-Truth images with different scales.

+You can use the [scripts/generate_multiscale_DF2K.py](scripts/generate_multiscale_DF2K.py) script to generate multi-scale images.

+Note that this step can be omitted if you just want a quick try.

+

+```bash

+python scripts/generate_multiscale_DF2K.py --input datasets/DF2K/DF2K_HR --output datasets/DF2K/DF2K_multiscale

+```
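The resulting ground-truth sizes can be worked out ahead of time. A minimal sketch, assuming downscale factors of 0.75, 0.5 and 1/3 plus one extra copy whose shorter side is 400 pixels; these values and the helper name are our assumptions, so check `scripts/generate_multiscale_DF2K.py` for the ones it actually uses:

```python
def multiscale_sizes(width, height, scales=(0.75, 0.5, 1 / 3), shortest_edge=400):
    """List (width, height) targets for multi-scale ground-truth images.

    One downscaled copy per factor in `scales`, plus one copy whose shorter
    side equals `shortest_edge`, with the aspect ratio preserved.
    """
    sizes = [(int(width * s), int(height * s)) for s in scales]
    if width < height:
        sizes.append((shortest_edge, int(shortest_edge * height / width)))
    else:
        sizes.append((int(shortest_edge * width / height), shortest_edge))
    return sizes
```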

+

+#### Step 2: [Optional] Crop to sub-images

+

+We then crop DF2K images into sub-images for faster IO and processing.

+This step is optional if your IO is fast enough or your disk space is limited.

+

+You can use the [scripts/extract_subimages.py](scripts/extract_subimages.py) script. Here is an example:

+

+```bash

+ python scripts/extract_subimages.py --input datasets/DF2K/DF2K_multiscale --output datasets/DF2K/DF2K_multiscale_sub --crop_size 400 --step 200

+```
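With `--crop_size 400 --step 200`, neighboring crops overlap by 200 pixels. A minimal sketch of how sliding-window start offsets can be computed along one axis, including one extra crop flush with the border so that no pixels are dropped (the border rule and the helper names are assumptions, not taken from the script):

```python
def crop_starts(length, crop_size=400, step=200, thresh_size=0):
    """Start offsets of sliding-window crops along one image axis.

    Windows advance by `step`; if more than `thresh_size` pixels would be
    left uncovered at the end, one extra window is aligned to the border.
    """
    if length < crop_size:
        raise ValueError('image is smaller than crop_size')
    starts = list(range(0, length - crop_size + 1, step))
    if length - (starts[-1] + crop_size) > thresh_size:
        starts.append(length - crop_size)
    return starts


def crop_boxes(height, width, crop_size=400, step=200, thresh_size=0):
    """All (y, x, crop_size) boxes covering a height x width image."""
    return [(y, x, crop_size)
            for y in crop_starts(height, crop_size, step, thresh_size)
            for x in crop_starts(width, crop_size, step, thresh_size)]
```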

+

+#### Step 3: Prepare a txt for meta information

+

+You need to prepare a txt file containing the image paths. The following are some examples from `meta_info_DF2Kmultiscale+OST_sub.txt` (since different users may partition sub-images differently, this file will not fit your data and you need to prepare your own txt file):

+

+```txt

+DF2K_HR_sub/000001_s001.png

+DF2K_HR_sub/000001_s002.png

+DF2K_HR_sub/000001_s003.png

+...

+```

+

+You can use the [scripts/generate_meta_info.py](scripts/generate_meta_info.py) script to generate the txt file.

+You can merge several folders into one meta_info txt. Here is an example:

+

+```bash

+ python scripts/generate_meta_info.py --input datasets/DF2K/DF2K_HR datasets/DF2K/DF2K_multiscale --root datasets/DF2K datasets/DF2K --meta_info datasets/DF2K/meta_info/meta_info_DF2Kmultiscale.txt

+```
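Conceptually, each meta_info line is an image path written relative to its root folder, and each `--input` folder is paired with the `--root` at the same position. A minimal sketch of that idea (hypothetical helper names; the real script may perform extra checks):

```python
import glob
import os


def meta_info_lines(folders, roots):
    """Relative image paths for a meta_info txt, one entry per image.

    Each image in `folders[i]` is written relative to `roots[i]`.
    """
    lines = []
    for folder, root in zip(folders, roots):
        for img_path in sorted(glob.glob(os.path.join(folder, '*'))):
            lines.append(os.path.relpath(img_path, root))
    return lines


def write_meta_info(folders, roots, meta_info_path):
    """Write the lines to `meta_info_path`, creating its folder if needed."""
    os.makedirs(os.path.dirname(meta_info_path) or '.', exist_ok=True)
    with open(meta_info_path, 'w') as f:
        f.write('\n'.join(meta_info_lines(folders, roots)) + '\n')
```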

+

+### Train Real-ESRNet

+

+1. Download pre-trained model [ESRGAN](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.1/ESRGAN_SRx4_DF2KOST_official-ff704c30.pth) into `experiments/pretrained_models`.

+ ```bash

+ wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.1/ESRGAN_SRx4_DF2KOST_official-ff704c30.pth -P experiments/pretrained_models

+ ```

+1. Modify the content in the option file `options/train_realesrnet_x4plus.yml` accordingly:

+ ```yml
+ train:
+     name: DF2K+OST
+     type: RealESRGANDataset
+     dataroot_gt: datasets/DF2K  # modify to the root path of your folder
+     meta_info: realesrgan/meta_info/meta_info_DF2Kmultiscale+OST_sub.txt  # modify to your own generated meta info txt
+     io_backend:
+         type: disk
+ ```

+1. If you want to perform validation during training, uncomment those lines and modify accordingly:

+ ```yml
+ # Uncomment these for validation
+ # val:
+ #   name: validation
+ #   type: PairedImageDataset
+ #   dataroot_gt: path_to_gt
+ #   dataroot_lq: path_to_lq
+ #   io_backend:
+ #     type: disk
+
+ ...
+
+ # Uncomment these for validation
+ # validation settings
+ # val:
+ #   val_freq: !!float 5e3
+ #   save_img: True
+
+ #   metrics:
+ #     psnr: # metric name, can be arbitrary
+ #       type: calculate_psnr
+ #       crop_border: 4
+ #       test_y_channel: false
+ ```

+1. Before the formal training, you may run in the `--debug` mode to see whether everything is OK. We use four GPUs for training:

+ ```bash

+ CUDA_VISIBLE_DEVICES=0,1,2,3 \

+ python -m torch.distributed.launch --nproc_per_node=4 --master_port=4321 realesrgan/train.py -opt options/train_realesrnet_x4plus.yml --launcher pytorch --debug

+ ```

+

+ Train with **a single GPU** in the *debug* mode:

+ ```bash

+ python realesrgan/train.py -opt options/train_realesrnet_x4plus.yml --debug

+ ```

+1. Start the formal training. We use four GPUs for training. We use the `--auto_resume` argument to automatically resume the training if necessary.

+ ```bash

+ CUDA_VISIBLE_DEVICES=0,1,2,3 \

+ python -m torch.distributed.launch --nproc_per_node=4 --master_port=4321 realesrgan/train.py -opt options/train_realesrnet_x4plus.yml --launcher pytorch --auto_resume

+ ```

+

+ Train with **a single GPU**:

+ ```bash

+ python realesrgan/train.py -opt options/train_realesrnet_x4plus.yml --auto_resume

+ ```
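`--auto_resume` spares you from passing a checkpoint by hand: conceptually, it looks for the most recent training state saved under the experiment folder and continues from there. A minimal sketch of that lookup, assuming (this is not confirmed here) that states are saved as `training_states/<iteration>.state` inside the experiment folder; the helper name is hypothetical:

```python
import os


def latest_training_state(experiment_dir):
    """Path of the highest-iteration '<iter>.state' file, or None if there is none.

    Assumes states live in '<experiment_dir>/training_states'.
    """
    state_dir = os.path.join(experiment_dir, 'training_states')
    if not os.path.isdir(state_dir):
        return None
    iters = []
    for name in os.listdir(state_dir):
        stem, ext = os.path.splitext(name)
        if ext == '.state' and stem.isdigit():
            iters.append(int(stem))
    if not iters:
        return None
    # Compare numerically: as strings, '5000' would sort after '10000'
    return os.path.join(state_dir, f'{max(iters)}.state')
```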

+

+### Train Real-ESRGAN

+

+1. After training Real-ESRNet, you now have the file `experiments/train_RealESRNetx4plus_1000k_B12G4_fromESRGAN/model/net_g_1000000.pth`. If you want to use a different pre-trained file, modify the `pretrain_network_g` value in the option file `train_realesrgan_x4plus.yml`.

+1. Modify the option file `train_realesrgan_x4plus.yml` accordingly. Most modifications are similar to those listed above.

+1. Before the formal training, you may run in the `--debug` mode to see whether everything is OK. We use four GPUs for training:

+ ```bash

+ CUDA_VISIBLE_DEVICES=0,1,2,3 \

+ python -m torch.distributed.launch --nproc_per_node=4 --master_port=4321 realesrgan/train.py -opt options/train_realesrgan_x4plus.yml --launcher pytorch --debug

+ ```

+

+ Train with **a single GPU** in the *debug* mode:

+ ```bash

+ python realesrgan/train.py -opt options/train_realesrgan_x4plus.yml --debug

+ ```

+1. Start the formal training. We use four GPUs for training. We use the `--auto_resume` argument to automatically resume the training if necessary.

+ ```bash

+ CUDA_VISIBLE_DEVICES=0,1,2,3 \

+ python -m torch.distributed.launch --nproc_per_node=4 --master_port=4321 realesrgan/train.py -opt options/train_realesrgan_x4plus.yml --launcher pytorch --auto_resume

+ ```

+

+ Train with **a single GPU**:

+ ```bash

+ python realesrgan/train.py -opt options/train_realesrgan_x4plus.yml --auto_resume

+ ```

+

+## Finetune Real-ESRGAN on your own dataset

+

+You can finetune Real-ESRGAN on your own dataset. Typically, the fine-tuning process can be divided into two cases:

+

+1. [Generate degraded images on the fly](#Generate-degraded-images-on-the-fly)

+1. [Use your own **paired** data](#use-your-own-paired-data)

+

+### Generate degraded images on the fly

+

+Only high-resolution images are required. The low-quality images are generated with the degradation process described in Real-ESRGAN during training.
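Real-ESRGAN's training data comes from a high-order degradation model: the same kind of blur/resize/noise/JPEG pipeline is applied more than once, and each round draws its own random parameters. The sketch below only samples parameters for two rounds (it does not touch pixels and leaves blur out). The probability and range values, and the helper names, are illustrative assumptions rather than values confirmed by this document.

```python
import random

# Illustrative ranges only (assumptions): compare them with the resize, noise
# and JPEG settings in the option file you actually train with.
FIRST_ORDER = {'resize_prob': [0.2, 0.7, 0.1], 'resize_range': (0.15, 1.5),
               'noise_range': (1, 30), 'jpeg_range': (30, 95)}
SECOND_ORDER = {'resize_prob': [0.3, 0.4, 0.3], 'resize_range': (0.3, 1.2),
                'noise_range': (1, 25), 'jpeg_range': (30, 95)}


def sample_degradation(cfg, rng):
    """Draw one round of resize / Gaussian-noise / JPEG parameters (blur omitted)."""
    mode = rng.choices(['up', 'down', 'keep'], weights=cfg['resize_prob'])[0]
    if mode == 'up':
        scale = rng.uniform(1, cfg['resize_range'][1])
    elif mode == 'down':
        scale = rng.uniform(cfg['resize_range'][0], 1)
    else:
        scale = 1.0
    return {
        'scale': scale,
        'noise_sigma': rng.uniform(*cfg['noise_range']),
        'jpeg_quality': rng.randint(*cfg['jpeg_range']),
    }


def sample_second_order(seed=None):
    """Second-order degradation: two rounds, each with its own parameter ranges."""
    rng = random.Random(seed)
    return [sample_degradation(FIRST_ORDER, rng), sample_degradation(SECOND_ORDER, rng)]
```

Sampling fresh parameters for every training pair is what lets the model see a wide variety of degradations from the same HR images.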

+

+**1. Prepare dataset**

+

+See [this section](#dataset-preparation) for more details.

+

+**2. Download pre-trained models**

+

+Download pre-trained models into `experiments/pretrained_models`.

+

+- *RealESRGAN_x4plus.pth*:

+ ```bash

+ wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth -P experiments/pretrained_models

+ ```

+

+- *RealESRGAN_x4plus_netD.pth*:

+ ```bash

+ wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.3/RealESRGAN_x4plus_netD.pth -P experiments/pretrained_models

+ ```

+

+**3. Finetune**

+

+Modify [options/finetune_realesrgan_x4plus.yml](options/finetune_realesrgan_x4plus.yml) accordingly, especially the `datasets` part:

+

+```yml
+train:
+    name: DF2K+OST
+    type: RealESRGANDataset
+    dataroot_gt: datasets/DF2K  # modify to the root path of your folder
+    meta_info: realesrgan/meta_info/meta_info_DF2Kmultiscale+OST_sub.txt  # modify to your own generated meta info txt
+    io_backend:
+        type: disk
+```

+

+We use four GPUs for training. We use the `--auto_resume` argument to automatically resume the training if necessary.

+

+```bash

+CUDA_VISIBLE_DEVICES=0,1,2,3 \

+python -m torch.distributed.launch --nproc_per_node=4 --master_port=4321 realesrgan/train.py -opt options/finetune_realesrgan_x4plus.yml --launcher pytorch --auto_resume

+```

+

+Finetune with **a single GPU**:

+```bash

+python realesrgan/train.py -opt options/finetune_realesrgan_x4plus.yml --auto_resume

+```

+

+### Use your own paired data

+

+You can also finetune RealESRGAN with your own paired data. The process is closer to fine-tuning ESRGAN.

+

+**1. Prepare dataset**

+

+Assume that you already have two folders:

+

+- **gt folder** (Ground-truth, high-resolution images): *datasets/DF2K/DIV2K_train_HR_sub*

+- **lq folder** (Low quality, low-resolution images): *datasets/DF2K/DIV2K_train_LR_bicubic_X4_sub*

+

+Then, you can prepare the meta_info txt file using the script [scripts/generate_meta_info_pairdata.py](scripts/generate_meta_info_pairdata.py):

+

+```bash

+python scripts/generate_meta_info_pairdata.py --input datasets/DF2K/DIV2K_train_HR_sub datasets/DF2K/DIV2K_train_LR_bicubic_X4_sub --meta_info datasets/DF2K/meta_info/meta_info_DIV2K_sub_pair.txt

+```
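For paired data, each meta_info line has to name a GT image and its LQ counterpart. A minimal sketch, assuming that the two folders contain matching file names (so sorting both lists lines them up) and that each line has the form `gt_path, lq_path` with paths relative to each folder's parent. Both assumptions, and the helper name, are ours; check the output of `scripts/generate_meta_info_pairdata.py` before relying on them.

```python
import glob
import os


def pair_meta_info_lines(gt_folder, lq_folder, gt_root=None, lq_root=None):
    """Build 'gt_path, lq_path' lines, pairing images by sorted file name.

    Roots default to each folder's parent directory (an assumption), so
    entries look like 'DIV2K_train_HR_sub/0001_s001.png'.
    """
    gt_folder, lq_folder = os.path.normpath(gt_folder), os.path.normpath(lq_folder)
    gt_root = gt_root or os.path.dirname(gt_folder)
    lq_root = lq_root or os.path.dirname(lq_folder)
    gt_paths = sorted(glob.glob(os.path.join(gt_folder, '*')))
    lq_paths = sorted(glob.glob(os.path.join(lq_folder, '*')))
    if len(gt_paths) != len(lq_paths):
        raise ValueError(f'{len(gt_paths)} GT images but {len(lq_paths)} LQ images')
    return [f'{os.path.relpath(gt, gt_root)}, {os.path.relpath(lq, lq_root)}'
            for gt, lq in zip(gt_paths, lq_paths)]
```

Raising on a count mismatch catches the most common pairing mistake early, before training silently uses misaligned pairs.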

+

+**2. Download pre-trained models**

+

+Download pre-trained models into `experiments/pretrained_models`.

+

+- *RealESRGAN_x4plus.pth*

+ ```bash

+ wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth -P experiments/pretrained_models

+ ```

+

+- *RealESRGAN_x4plus_netD.pth*

+ ```bash

+ wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.3/RealESRGAN_x4plus_netD.pth -P experiments/pretrained_models

+ ```

+

+**3. Finetune**

+

+Modify [options/finetune_realesrgan_x4plus_pairdata.yml](options/finetune_realesrgan_x4plus_pairdata.yml) accordingly, especially the `datasets` part:

+

+```yml
+train:
+    name: DIV2K
+    type: RealESRGANPairedDataset
+    dataroot_gt: datasets/DF2K  # modify to the root path of your folder
+    dataroot_lq: datasets/DF2K  # modify to the root path of your folder
+    meta_info: datasets/DF2K/meta_info/meta_info_DIV2K_sub_pair.txt  # modify to your own generate meta info txt
+    io_backend:
+        type: disk
+```

+

+We use four GPUs for training. We use the `--auto_resume` argument to automatically resume the training if necessary.

+

+```bash

+CUDA_VISIBLE_DEVICES=0,1,2,3 \

+python -m torch.distributed.launch --nproc_per_node=4 --master_port=4321 realesrgan/train.py -opt options/finetune_realesrgan_x4plus_pairdata.yml --launcher pytorch --auto_resume

+```

+

+Finetune with **a single GPU**:

+```bash

+python realesrgan/train.py -opt options/finetune_realesrgan_x4plus_pairdata.yml --auto_resume

+```

diff --git a/docs/Training_CN.md b/docs/Training_CN.md

new file mode 100644

index 0000000000000000000000000000000000000000..dabc3c5d97e134a2d551157c2dd03a629ec661bc

--- /dev/null

+++ b/docs/Training_CN.md

@@ -0,0 +1,271 @@

+# :computer: How to Train/Finetune Real-ESRGAN

+

+- [Train Real-ESRGAN](#训练-real-esrgan)
+ - [Overview](#概述)
+ - [Dataset Preparation](#准备数据集)
+ - [Train Real-ESRNet](#训练-real-esrnet-模型)
+ - [Train Real-ESRGAN](#训练-real-esrgan-模型)
+- [Finetune Real-ESRGAN on your own dataset](#用自己的数据集微调-real-esrgan)
+ - [Generate degraded images on the fly](#动态生成降级图像)
+ - [Use paired training data](#使用已配对的数据)

+

+[English](Training.md) **|** [简体中文](Training_CN.md)

+

+## 训练 Real-ESRGAN

+

+### 概述

+

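+The training is divided into two stages. The two stages share the same data synthesis process and training pipeline, and differ only in their loss functions. Specifically: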

+

+1. We first train Real-ESRNet with L1 loss, initializing it from the pre-trained ESRGAN model.

+

+2. We then use the trained Real-ESRNet model to initialize the generator, and train Real-ESRGAN with a combination of L1 loss, perceptual loss and GAN loss.

+

+### 准备数据集

+

+We use the DF2K (DIV2K and Flickr2K) + OST datasets for training. Only HR images are required.

+You can download them from:

+1. DIV2K: http://data.vision.ee.ethz.ch/cvl/DIV2K/DIV2K_train_HR.zip

+2. Flickr2K: https://cv.snu.ac.kr/research/EDSR/Flickr2K.tar

+3. OST: https://openmmlab.oss-cn-hangzhou.aliyuncs.com/datasets/OST_dataset.zip

+

+Here are the steps for data preparation.

+

+#### Step 1: [Optional] Generate multi-scale images

+

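+For the DF2K dataset, we use a multi-scale strategy, *i.e.*, we downsample HR images to obtain several Ground-Truth images at different scales.
+You can use the [scripts/generate_multiscale_DF2K.py](scripts/generate_multiscale_DF2K.py) script to generate multi-scale images.
+Note that you can skip this step if you just want a quick try.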

+

+```bash

+python scripts/generate_multiscale_DF2K.py --input datasets/DF2K/DF2K_HR --output datasets/DF2K/DF2K_multiscale

+```

+

+#### Step 2: [Optional] Crop to sub-images

+

+We then crop DF2K images into sub-images for faster IO and processing.
+This step is optional if your IO is fast enough or your disk space is limited.

+

+You can use the [scripts/extract_subimages.py](scripts/extract_subimages.py) script. Here is an example:

+

+```bash

+ python scripts/extract_subimages.py --input datasets/DF2K/DF2K_multiscale --output datasets/DF2K/DF2K_multiscale_sub --crop_size 400 --step 200

+```

+

+#### Step 3: Prepare a txt for meta information

+

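+You need to prepare a txt file containing the image paths. The following are some examples from `meta_info_DF2Kmultiscale+OST_sub.txt` (since different users may partition sub-images differently, this file will not fit your data and you need to prepare your own txt file):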

+

+```txt

+DF2K_HR_sub/000001_s001.png

+DF2K_HR_sub/000001_s002.png

+DF2K_HR_sub/000001_s003.png

+...

+```

+

+You can use the [scripts/generate_meta_info.py](scripts/generate_meta_info.py) script to generate the txt file.
+You can also merge several folders into one meta_info txt. Here is an example:

+

+```bash

+ python scripts/generate_meta_info.py --input datasets/DF2K/DF2K_HR datasets/DF2K/DF2K_multiscale --root datasets/DF2K datasets/DF2K --meta_info datasets/DF2K/meta_info/meta_info_DF2Kmultiscale.txt

+```

+

+### 训练 Real-ESRNet 模型

+

+1. Download the pre-trained model [ESRGAN](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.1/ESRGAN_SRx4_DF2KOST_official-ff704c30.pth) into `experiments/pretrained_models`.

+ ```bash

+ wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.1/ESRGAN_SRx4_DF2KOST_official-ff704c30.pth -P experiments/pretrained_models

+ ```

+2. Modify the content in the option file `options/train_realesrnet_x4plus.yml` accordingly:

+ ```yml
+ train:
+     name: DF2K+OST
+     type: RealESRGANDataset
+     dataroot_gt: datasets/DF2K  # modify to the root path of your dataset folder
+     meta_info: realesrgan/meta_info/meta_info_DF2Kmultiscale+OST_sub.txt  # modify to your own generated meta info txt
+     io_backend:
+         type: disk
+ ```

+3. If you want to perform validation during training, uncomment those lines and modify them accordingly:

+ ```yml
+ # Uncomment these for validation
+ # val:
+ #   name: validation
+ #   type: PairedImageDataset
+ #   dataroot_gt: path_to_gt
+ #   dataroot_lq: path_to_lq
+ #   io_backend:
+ #     type: disk
+
+ ...
+
+ # Uncomment these for validation
+ # validation settings
+ # val:
+ #   val_freq: !!float 5e3
+ #   save_img: True
+
+ #   metrics:
+ #     psnr: # metric name, can be arbitrary
+ #       type: calculate_psnr
+ #       crop_border: 4
+ #       test_y_channel: false
+ ```

+4. Before the formal training, you may run in `--debug` mode to check whether everything works. We use four GPUs for training:

+ ```bash

+ CUDA_VISIBLE_DEVICES=0,1,2,3 \

+ python -m torch.distributed.launch --nproc_per_node=4 --master_port=4321 realesrgan/train.py -opt options/train_realesrnet_x4plus.yml --launcher pytorch --debug

+ ```

+

+ Train with **a single GPU** in *debug* mode:

+ ```bash

+ python realesrgan/train.py -opt options/train_realesrnet_x4plus.yml --debug

+ ```

+5. Start the formal training. We use four GPUs for training. You can use the `--auto_resume` argument to automatically resume training if necessary.

+ ```bash

+ CUDA_VISIBLE_DEVICES=0,1,2,3 \

+ python -m torch.distributed.launch --nproc_per_node=4 --master_port=4321 realesrgan/train.py -opt options/train_realesrnet_x4plus.yml --launcher pytorch --auto_resume

+ ```

+

+ Train with **a single GPU**:

+ ```bash

+ python realesrgan/train.py -opt options/train_realesrnet_x4plus.yml --auto_resume

+ ```

+

+### 训练 Real-ESRGAN 模型

+

+1. After training Real-ESRNet, you now have the file `experiments/train_RealESRNetx4plus_1000k_B12G4_fromESRGAN/model/net_g_1000000.pth`. If you want to use a different pre-trained file, modify the `pretrain_network_g` value in the option file `train_realesrgan_x4plus.yml`.

+1. Modify the option file `train_realesrgan_x4plus.yml` accordingly. Most modifications are similar to those listed in the previous section.

+1. Before the formal training, you may run in `--debug` mode to check whether everything works. We use four GPUs for training:

+ ```bash

+ CUDA_VISIBLE_DEVICES=0,1,2,3 \

+ python -m torch.distributed.launch --nproc_per_node=4 --master_port=4321 realesrgan/train.py -opt options/train_realesrgan_x4plus.yml --launcher pytorch --debug

+ ```

+

+ Train with **a single GPU** in *debug* mode:

+ ```bash

+ python realesrgan/train.py -opt options/train_realesrgan_x4plus.yml --debug

+ ```

+1. Start the formal training. We use four GPUs for training. You can use the `--auto_resume` argument to automatically resume training if necessary.

+ ```bash

+ CUDA_VISIBLE_DEVICES=0,1,2,3 \

+ python -m torch.distributed.launch --nproc_per_node=4 --master_port=4321 realesrgan/train.py -opt options/train_realesrgan_x4plus.yml --launcher pytorch --auto_resume

+ ```

+

+ Training with **one GPU**:

+ ```bash

+ python realesrgan/train.py -opt options/train_realesrgan_x4plus.yml --auto_resume

+ ```
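`--auto_resume` continues from the most recent saved training state. As a sketch of how such a lookup could work, assume that BasicSR-style training code stores states as `experiments/<name>/training_states/<iteration>.state` (an assumption about the layout; check your own `experiments` folder). The helper `find_latest_state` is hypothetical and is not part of the repository.

```python
import os
from typing import Optional


def find_latest_state(exp_name: str, root: str = "experiments") -> Optional[str]:
    """Return the path of the newest '<iteration>.state' file, or None.

    Assumed (hypothetical) layout: <root>/<exp_name>/training_states/<iteration>.state
    """
    state_dir = os.path.join(root, exp_name, "training_states")
    if not os.path.isdir(state_dir):
        return None
    iterations = []
    for fname in os.listdir(state_dir):
        stem, ext = os.path.splitext(fname)
        # Only consider files named like '5000.state'
        if ext == ".state" and stem.isdigit():
            iterations.append(int(stem))
    if not iterations:
        return None
    # Compare numerically: as strings, '10000' would sort before '5000'
    return os.path.join(state_dir, f"{max(iterations)}.state")
```

Comparing iteration numbers as integers rather than file names is the important detail here.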

+

+## Fine-tune Real-ESRGAN on your own dataset

+

+You can fine-tune Real-ESRGAN on your own dataset. In general, fine-tuning can be done in one of two ways:

+

+1. [Generate degraded images on the fly](#generate-degraded-images-on-the-fly)

+2. [Use **paired** data](#use-paired-data)

+

+### Generate degraded images on the fly

+

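+Only high-resolution images are required. During training, low-quality images are generated on the fly with the degradation model described in Real-ESRGAN.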

+
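To make "generating low-quality images on the fly" concrete, here is a minimal numpy sketch of a single, first-order degradation: Gaussian blur, 4x downsampling, and additive Gaussian noise. This is a simplified stand-in, not the Real-ESRGAN pipeline, which uses a second-order process with randomized kernels, resize modes, noise types, and JPEG compression. Area averaging replaces the usual resize methods, and all function names are hypothetical.

```python
import numpy as np


def gaussian_kernel1d(sigma: float, radius: int) -> np.ndarray:
    """Normalized 1-D Gaussian kernel of length 2 * radius + 1."""
    x = np.arange(-radius, radius + 1, dtype=np.float64)
    k = np.exp(-(x ** 2) / (2 * sigma ** 2))
    return k / k.sum()


def blur(img: np.ndarray, sigma: float) -> np.ndarray:
    """Separable Gaussian blur of an HxWxC float image with reflect padding."""
    radius = max(1, int(3 * sigma))
    k = gaussian_kernel1d(sigma, radius)
    padded = np.pad(img, ((radius, radius), (radius, radius), (0, 0)), mode="reflect")
    # Filter along rows (axis 0), then along columns (axis 1)
    tmp = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, tmp)


def downsample(img: np.ndarray, scale: int) -> np.ndarray:
    """Area-average downsampling by an integer factor (crops any remainder)."""
    h, w, c = img.shape
    h, w = h - h % scale, w - w % scale
    img = img[:h, :w]
    return img.reshape(h // scale, scale, w // scale, scale, c).mean(axis=(1, 3))


def degrade(hr: np.ndarray, scale: int = 4, sigma: float = 1.5,
            noise_std: float = 0.02, rng=None) -> np.ndarray:
    """Blur -> downsample -> add noise -> clip, for an HR image in [0, 1]."""
    rng = np.random.default_rng() if rng is None else rng
    lq = downsample(blur(hr, sigma), scale)
    lq = lq + rng.normal(0.0, noise_std, size=lq.shape)
    return np.clip(lq, 0.0, 1.0)
```

Because the degradation is applied during training, each epoch can see a different low-quality version of the same high-resolution patch.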

+**1. Prepare the dataset**

+

+See [this section](#准备数据集) for details.

+

+**2. Download the pretrained models**

+

+Download the pretrained models into the `experiments/pretrained_models` directory.

+

+- *RealESRGAN_x4plus.pth*:

+ ```bash

+ wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth -P experiments/pretrained_models

+ ```

+

+- *RealESRGAN_x4plus_netD.pth*:

+ ```bash

+ wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.3/RealESRGAN_x4plus_netD.pth -P experiments/pretrained_models

+ ```

+

+**3. Fine-tune**

+

+Modify the option file [options/finetune_realesrgan_x4plus.yml](options/finetune_realesrgan_x4plus.yml), especially the `datasets` part:

+

+```yml

+train:

+  name: DF2K+OST

+  type: RealESRGANDataset

+  dataroot_gt: datasets/DF2K  # change to the root folder of your dataset

+  meta_info: realesrgan/meta_info/meta_info_DF2Kmultiscale+OST_sub.txt  # change to the meta info txt you generated

+  io_backend:

+    type: disk

+```
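The `meta_info` entry points to a text file that lists the training images. Assuming the format is one image path per line, relative to `dataroot_gt` (an assumption; compare with the bundled `realesrgan/meta_info/meta_info_DF2Kmultiscale+OST_sub.txt` before relying on it), a minimal sketch for generating such a file from your own folders could look like this. The function name `write_meta_info` and the extension list are hypothetical.

```python
import os
from typing import List, Sequence

# Hypothetical set of image extensions to include
IMG_EXTS = (".png", ".jpg", ".jpeg", ".bmp", ".tif", ".tiff", ".webp")


def write_meta_info(root: str, folders: Sequence[str], out_path: str) -> List[str]:
    """Write one image path per line, relative to `root` (assumed format)."""
    lines = []
    for folder in folders:
        for dirpath, _, filenames in os.walk(os.path.join(root, folder)):
            for fname in filenames:
                if fname.lower().endswith(IMG_EXTS):
                    full = os.path.join(dirpath, fname)
                    # Forward slashes keep the file portable across platforms
                    lines.append(os.path.relpath(full, root).replace(os.sep, "/"))
    lines.sort()  # os.walk order is not guaranteed
    os.makedirs(os.path.dirname(out_path) or ".", exist_ok=True)
    with open(out_path, "w", encoding="utf-8") as f:
        f.write("\n".join(lines) + ("\n" if lines else ""))
    return lines
```

For example, `write_meta_info("datasets/DF2K", ["DF2K_HR_sub"], "datasets/DF2K/meta_info/meta_info_custom.txt")` would list every image under a hypothetical `DF2K_HR_sub` folder.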

+

+We use four GPUs for training. You can also pass the `--auto_resume` argument to resume training automatically when necessary.

+

+```bash

+CUDA_VISIBLE_DEVICES=0,1,2,3 \

+python -m torch.distributed.launch --nproc_per_node=4 --master_port=4321 realesrgan/train.py -opt options/finetune_realesrgan_x4plus.yml --launcher pytorch --auto_resume

+```

+

+Training with **one GPU**:

+```bash

+python realesrgan/train.py -opt options/finetune_realesrgan_x4plus.yml --auto_resume

+```
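The multi-GPU commands start one process per GPU listed in `CUDA_VISIBLE_DEVICES`, so `--nproc_per_node` should match that count. Assuming the option file sets the batch size per GPU (a `batch_size_per_gpu`-style key, as in BasicSR option files), the effective batch per optimization step scales with the number of GPUs. The helpers below are a hypothetical sketch of that arithmetic, and 12 is only an example value.

```python
import os
from typing import Optional


def visible_gpu_count(env_value: Optional[str]) -> int:
    """Count the IDs in a CUDA_VISIBLE_DEVICES-style string such as '0,1,2,3'.

    Returns 0 for an empty or unset value. An unset variable usually means
    all GPUs are visible, which this helper cannot detect.
    """
    if not env_value:
        return 0
    return len([x for x in env_value.split(",") if x.strip()])


def effective_batch_size(batch_size_per_gpu: int, num_gpus: int) -> int:
    """Samples per optimization step when one process runs on each GPU."""
    # Each process loads batch_size_per_gpu samples and DDP averages the
    # gradients across processes, so a step covers the product.
    return batch_size_per_gpu * max(num_gpus, 1)


if __name__ == "__main__":
    n = visible_gpu_count(os.environ.get("CUDA_VISIBLE_DEVICES"))
    print(f"visible GPUs: {n}, effective batch with 12 per GPU: {effective_batch_size(12, n)}")
```

When switching from four GPUs to one, keep this in mind: the same per-GPU setting gives a quarter of the effective batch size.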

+

+### Use paired data

+

+You can also fine-tune Real-ESRGAN with your own paired data. This process is closer to fine-tuning ESRGAN.

+

+**1. Prepare the dataset**

+

+Assume that you already have two folders:

+

+- **gt folder** (ground truth, high-resolution images): *datasets/DF2K/DIV2K_train_HR_sub*

+- **lq folder** (low-quality, low-resolution images): *datasets/DF2K/DIV2K_train_LR_bicubic_X4_sub*

+

+Then you can generate the meta info txt file with the script [scripts/generate_meta_info_pairdata.py](scripts/generate_meta_info_pairdata.py).

+

+```bash

+python scripts/generate_meta_info_pairdata.py --input datasets/DF2K/DIV2K_train_HR_sub datasets/DF2K/DIV2K_train_LR_bicubic_X4_sub --meta_info datasets/DF2K/meta_info/meta_info_DIV2K_sub_pair.txt

+```
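The script above is the tool to use. The sketch below only illustrates the idea, assuming each line of the paired meta info file is `gt_path, lq_path`, with each path relative to the parent directory of its folder (an assumption about the file format; check the script's output before relying on it). It pairs files by sorted name and rejects folders of different sizes. The function name `pair_meta_lines` is hypothetical.

```python
import os
from typing import List


def pair_meta_lines(gt_dir: str, lq_dir: str) -> List[str]:
    """Pair GT/LQ files by sorted name; paths relative to each folder's parent."""
    gt_files = sorted(f for f in os.listdir(gt_dir) if os.path.isfile(os.path.join(gt_dir, f)))
    lq_files = sorted(f for f in os.listdir(lq_dir) if os.path.isfile(os.path.join(lq_dir, f)))
    if len(gt_files) != len(lq_files):
        raise ValueError(f"GT and LQ folders differ in size: {len(gt_files)} vs {len(lq_files)}")
    gt_root = os.path.dirname(os.path.normpath(gt_dir))
    lq_root = os.path.dirname(os.path.normpath(lq_dir))
    lines = []
    for g, q in zip(gt_files, lq_files):
        g_rel = os.path.relpath(os.path.join(gt_dir, g), gt_root).replace(os.sep, "/")
        q_rel = os.path.relpath(os.path.join(lq_dir, q), lq_root).replace(os.sep, "/")
        lines.append(f"{g_rel}, {q_rel}")
    return lines
```

Pairing by sorted order only works if corresponding GT and LQ images sort identically, for example when they share file names.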

+

+**2. Download the pretrained models**

+

+Download the pretrained models into the `experiments/pretrained_models` directory.

+

+- *RealESRGAN_x4plus.pth*:

+ ```bash

+ wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth -P experiments/pretrained_models

+ ```

+

+- *RealESRGAN_x4plus_netD.pth*:

+ ```bash

+ wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.3/RealESRGAN_x4plus_netD.pth -P experiments/pretrained_models

+ ```

+

+**3. Fine-tune**

+

+Modify the option file [options/finetune_realesrgan_x4plus_pairdata.yml](options/finetune_realesrgan_x4plus_pairdata.yml), especially the `datasets` part:

+

+```yml

+train:

+  name: DIV2K

+  type: RealESRGANPairedDataset

+  dataroot_gt: datasets/DF2K  # change to the root directory of your gt folder

+  dataroot_lq: datasets/DF2K  # change to the root directory of your lq folder

+  meta_info: datasets/DF2K/meta_info/meta_info_DIV2K_sub_pair.txt  # change to the meta info txt you generated

+  io_backend:

+    type: disk

+```
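Before launching a long run, it can help to confirm that every entry in the meta info file resolves under `dataroot_gt` and `dataroot_lq`. This is a hypothetical pre-flight check, not part of the repository. It assumes each line reads `gt_rel_path, lq_rel_path`, relative to `dataroot_gt` and `dataroot_lq` respectively (an assumption about the file format).

```python
import os
from typing import List, Tuple


def check_pair_meta(meta_path: str, dataroot_gt: str, dataroot_lq: str) -> List[Tuple[int, str]]:
    """Return (line_number, problem) for meta info lines that don't resolve to files."""
    problems = []
    with open(meta_path, encoding="utf-8") as f:
        for lineno, line in enumerate(f, start=1):
            line = line.strip()
            if not line:
                continue  # ignore blank lines
            parts = [p.strip() for p in line.split(",")]
            if len(parts) != 2:
                problems.append((lineno, "expected 'gt, lq'"))
                continue
            gt_rel, lq_rel = parts
            if not os.path.isfile(os.path.join(dataroot_gt, gt_rel)):
                problems.append((lineno, f"missing GT: {gt_rel}"))
            if not os.path.isfile(os.path.join(dataroot_lq, lq_rel)):
                problems.append((lineno, f"missing LQ: {lq_rel}"))
    return problems
```

With the option values above, you could call `check_pair_meta("datasets/DF2K/meta_info/meta_info_DIV2K_sub_pair.txt", "datasets/DF2K", "datasets/DF2K")` and expect an empty list.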

+

+We use four GPUs for training. You can also pass the `--auto_resume` argument to resume training automatically when necessary.

+

+```bash

+CUDA_VISIBLE_DEVICES=0,1,2,3 \

+python -m torch.distributed.launch --nproc_per_node=4 --master_port=4321 realesrgan/train.py -opt options/finetune_realesrgan_x4plus_pairdata.yml --launcher pytorch --auto_resume

+```

+

+Training with **one GPU**:

+```bash

+python realesrgan/train.py -opt options/finetune_realesrgan_x4plus_pairdata.yml --auto_resume

+```

diff --git a/docs/anime_comparisons.md b/docs/anime_comparisons.md

new file mode 100644

index 0000000000000000000000000000000000000000..09603bdc989bbf68b1f9f466acac5d8e442b8a01

--- /dev/null

+++ b/docs/anime_comparisons.md

@@ -0,0 +1,66 @@

+# Comparisons among different anime models

+

+[English](anime_comparisons.md) **|** [简体中文](anime_comparisons_CN.md)

+

+## Update News

+

+- 2022/04/24: Released **AnimeVideo-v3**. We have made the following improvements:

+  - **Better naturalness**

+  - **Fewer artifacts**

+  - **More faithful to the original colors**

+  - **Better texture restoration**

+  - **Better background restoration**

+

+## Comparisons

+

+We compare our RealESRGAN-AnimeVideo-v3 with the following methods.

+Our RealESRGAN-AnimeVideo-v3 achieves better results with faster inference.

+

+- [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan) with the hyperparameters: `tile=0`, `noiselevel=2`

+- [Real-CUGAN](https://github.com/bilibili/ailab/tree/main/Real-CUGAN): we use the [20220227](https://github.com/bilibili/ailab/releases/tag/Real-CUGAN-add-faster-low-memory-mode) version with the hyperparameters `cache_mode=0`, `tile=0`, `alpha=1`.

+- our RealESRGAN-AnimeVideo-v3

+

+## Results

+

+You may need to **zoom in** to compare details, or **click an image** to view it at full size. Note that the images in the tables below are resized and cropped patches of the original images. You can download the original inputs and outputs from [Google Drive](https://drive.google.com/drive/folders/1bc_Hje1Nqop9NDkUvci2VACSjL7HZMRp?usp=sharing).

+

+**More natural results, better background restoration**

+| Input | waifu2x | Real-CUGAN | RealESRGAN-AnimeVideo-v3 |

+| :---: | :---: | :---: | :---: |

+| |  |  |  |

+| |  |  |  |

+| |  |  |  |

+

+**Fewer artifacts, better detailed textures**

+| Input | waifu2x | Real-CUGAN | RealESRGAN-AnimeVideo-v3 |

+| :---: | :---: | :---: | :---: |

+| |  |  |  |

+| |  |  |  |

+| |  |  |  |

+| |  |  |  |

+

+**Other better results**

+| Input | waifu2x | Real-CUGAN | RealESRGAN-AnimeVideo-v3 |

+| :---: | :---: | :---: | :---: |

+| |  |  |  |

+| |  |  |  |

+|  |   |   |   |

+| |  |  |  |

+| |  |  |  |

+

+## Inference Speed

+

+### PyTorch

+

+Note that we report only the **model** inference time and exclude I/O time.

+

+| GPU | Input Resolution | waifu2x | Real-CUGAN | RealESRGAN-AnimeVideo-v3 |

+| :---: | :---: | :---: | :---: | :---: |

+| V100 | 1920 x 1080 | - | 3.4 fps | **10.0** fps |

+| V100 | 1280 x 720 | - | 7.2 fps | **22.6** fps |

+| V100 | 640 x 480 | - | 24.4 fps | **65.9** fps |
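The table counts model time only. One generic way to measure a number of that kind is sketched below. It is not the authors' benchmarking script, and the function name and warm-up count are assumptions. The sketch runs a few warm-up calls and then times only the `model(frame)` calls over preloaded frames, so decoding and writing frames are excluded.

```python
import time
from typing import Any, Callable, Sequence


def model_fps(model: Callable[[Any], Any], frames: Sequence[Any], warmup: int = 3,
              sync: Callable[[], None] = lambda: None) -> float:
    """Frames per second counting only `model(frame)` calls.

    `sync` should block until queued GPU work has finished (for PyTorch on CUDA,
    torch.cuda.synchronize); the default no-op is only valid for synchronous models.
    """
    if not frames:
        raise ValueError("need at least one frame")
    for frame in frames[:warmup]:
        model(frame)  # warm-up: kernel selection, memory allocation, caches
    sync()
    start = time.perf_counter()
    for frame in frames:
        model(frame)
    sync()
    elapsed = time.perf_counter() - start
    return len(frames) / elapsed
```

Without the `sync` call, asynchronous GPU execution would make the measured time cover only kernel launches, which overstates fps.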

+

+### ncnn

+

+- [ ] TODO

diff --git a/docs/anime_comparisons_CN.md b/docs/anime_comparisons_CN.md

new file mode 100644

index 0000000000000000000000000000000000000000..43ba58344ed9554d5b30e2815d1b7d4ab8bc503f

--- /dev/null