+

+ This program is free software: you can redistribute it and/or modify

+ it under the terms of the GNU Affero General Public License as published

+ by the Free Software Foundation, either version 3 of the License, or

+ (at your option) any later version.

+

+ This program is distributed in the hope that it will be useful,

+ but WITHOUT ANY WARRANTY; without even the implied warranty of

+ MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

+ GNU Affero General Public License for more details.

+

+ You should have received a copy of the GNU Affero General Public License

+ along with this program. If not, see .

+

+Also add information on how to contact you by electronic and paper mail.

+

+ If your software can interact with users remotely through a computer

+network, you should also make sure that it provides a way for users to

+get its source. For example, if your program is a web application, its

+interface could display a "Source" link that leads users to an archive

+of the code. There are many ways you could offer source, and different

+solutions will be better for different programs; see section 13 for the

+specific requirements.

+

+ You should also get your employer (if you work as a programmer) or school,

+if any, to sign a "copyright disclaimer" for the program, if necessary.

+For more information on this, and how to apply and follow the GNU AGPL, see

+.

diff --git a/README.md b/README.md

index ffdbe63f08fbe333ee373f78666574eeed514517..7a5c8aec33f74b88f8356ff0c8eab8996224d5d3 100644

--- a/README.md

+++ b/README.md

@@ -1,13 +1,199 @@

----

-title: RVC UI

-emoji: 🏢

-colorFrom: red

-colorTo: gray

-sdk: gradio

-sdk_version: 4.39.0

-app_file: app.py

-pinned: false

-license: mit

----

-

-Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference

+

+

+# Retrieval-based-Voice-Conversion-WebUI

+An easy-to-use voice conversion framework based on VITS.

+

+

+

+[](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI)

+

+

+

+[](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/blob/main/LICENSE)

+[](https://huggingface.co/lj1995/VoiceConversionWebUI/tree/main/)

+

+[](https://discord.gg/HcsmBBGyVk)

+

+[**FAQ (Frequently Asked Questions)**](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/wiki/FAQ-(Frequently-Asked-Questions))

+

+[**English**](./README.md) | [**中文简体**](./docs/cn/README.cn.md) | [**日本語**](./docs/jp/README.ja.md) | [**한국어**](./docs/kr/README.ko.md) ([**韓國語**](./docs/kr/README.ko.han.md)) | [**Français**](./docs/fr/README.fr.md) | [**Türkçe**](./docs/tr/README.tr.md) | [**Português**](./docs/pt/README.pt.md)

+

+

+

+> The base model is trained using nearly 50 hours of high-quality open-source VCTK training set. Therefore, there are no copyright concerns, please feel free to use.

+

+> Please look forward to the base model of RVCv3 with larger parameters, larger dataset, better effects, basically flat inference speed, and less training data required.

+

+> There's a [one-click downloader](https://github.com/fumiama/RVC-Models-Downloader) for models/integration packages/tools. Welcome to try.

+

+| Training and inference Webui |

+| :--------: |

+|  |

+

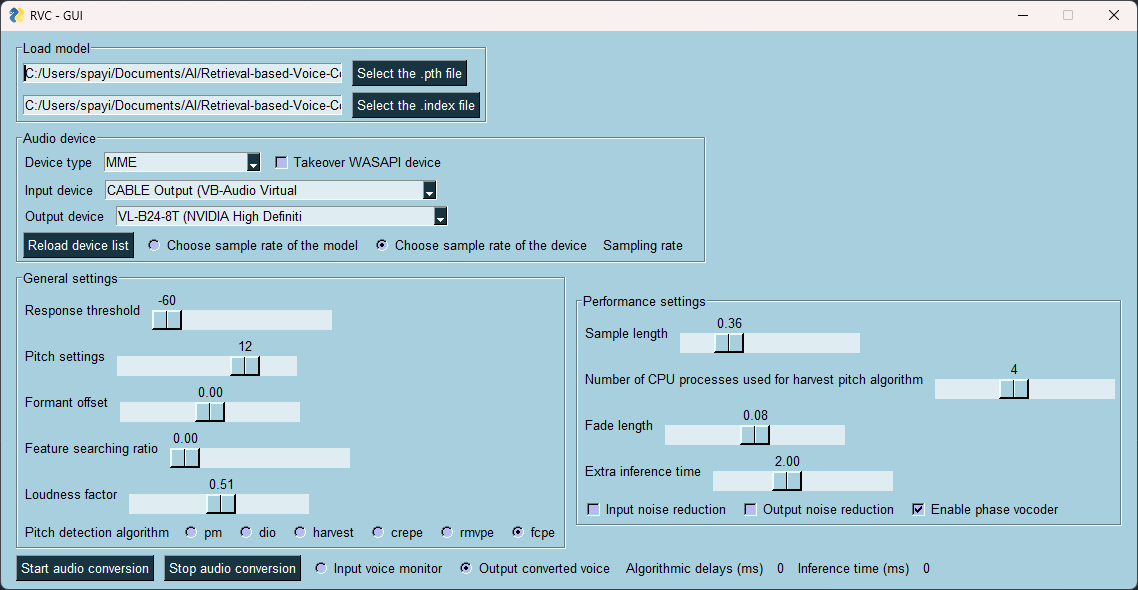

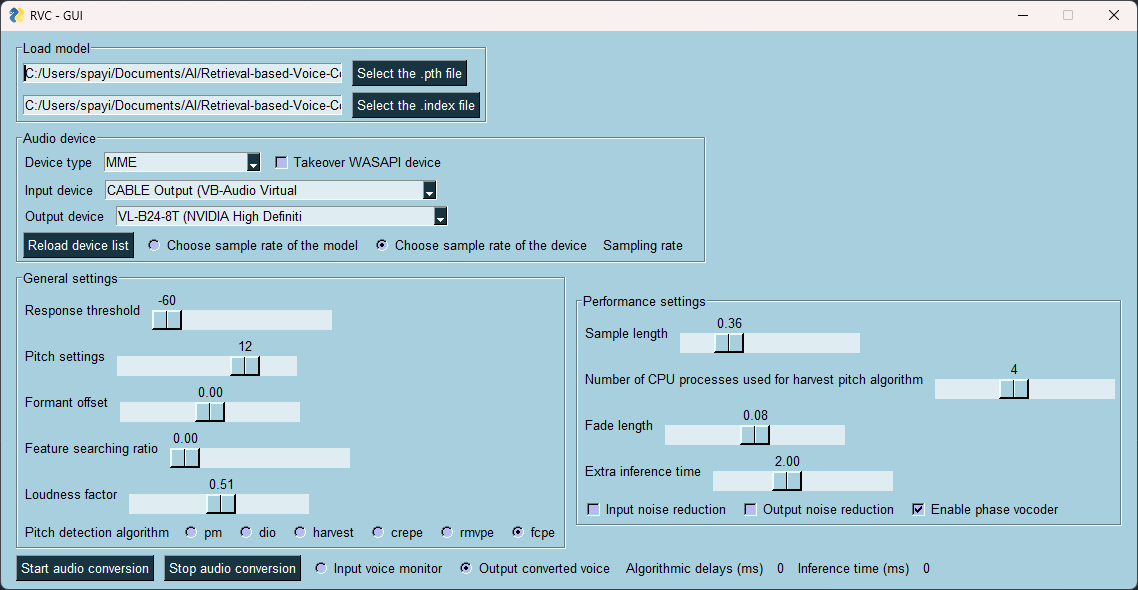

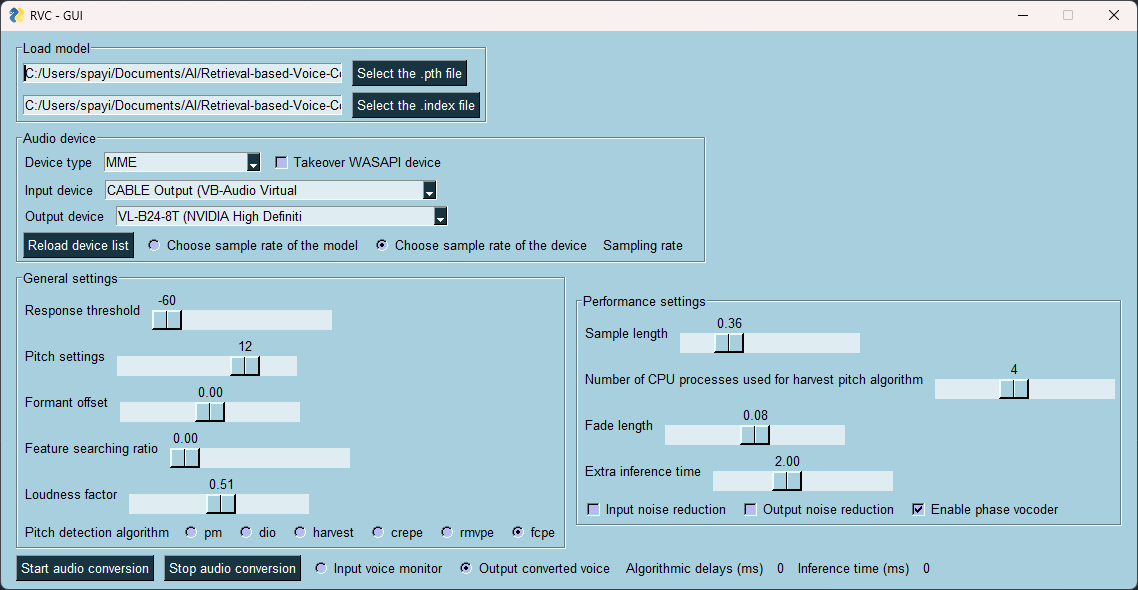

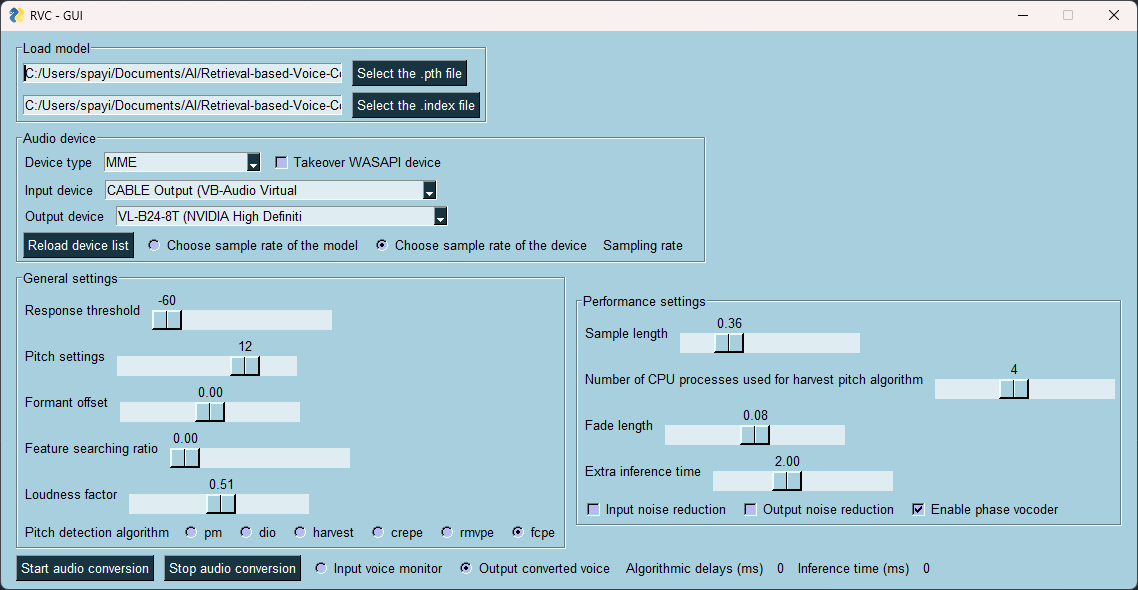

+| Real-time voice changing GUI |

+| :---------: |

+|  |

+

+## Features:

++ Reduce tone leakage by replacing the source feature to training-set feature using top1 retrieval;

++ Easy + fast training, even on poor graphics cards;

++ Training with a small amounts of data (>=10min low noise speech recommended);

++ Model fusion to change timbres (using ckpt processing tab->ckpt merge);

++ Easy-to-use WebUI;

++ UVR5 model to quickly separate vocals and instruments;

++ High-pitch Voice Extraction Algorithm [InterSpeech2023-RMVPE](#Credits) to prevent a muted sound problem. Provides the best results (significantly) and is faster with lower resource consumption than Crepe_full;

++ AMD/Intel graphics cards acceleration supported;

++ Intel ARC graphics cards acceleration with IPEX supported.

+

+Check out our [Demo Video](https://www.bilibili.com/video/BV1pm4y1z7Gm/) here!

+

+## Environment Configuration

+### Python Version Limitation

+> It is recommended to use conda to manage the Python environment.

+

+> For the reason of the version limitation, please refer to this [bug](https://github.com/facebookresearch/fairseq/issues/5012).

+

+```bash

+python --version # 3.8 <= Python < 3.11

+```

+

+### Linux/MacOS One-click Dependency Installation & Startup Script

+By executing `run.sh` in the project root directory, you can configure the `venv` virtual environment, automatically install the required dependencies, and start the main program with one click.

+```bash

+sh ./run.sh

+```

+

+### Manual Installation of Dependencies

+1. Install `pytorch` and its core dependencies, skip if already installed. Refer to: https://pytorch.org/get-started/locally/

+ ```bash

+ pip install torch torchvision torchaudio

+ ```

+2. If you are using Nvidia Ampere architecture (RTX30xx) in Windows, according to the experience of #21, you need to specify the cuda version corresponding to pytorch.

+ ```bash

+ pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu117

+ ```

+3. Install the corresponding dependencies according to your own graphics card.

+- Nvidia GPU

+ ```bash

+ pip install -r requirements/main.txt

+ ```

+- AMD/Intel GPU

+ ```bash

+ pip install -r requirements/dml.txt

+ ```

+- AMD ROCM (Linux)

+ ```bash

+ pip install -r requirements/amd.txt

+ ```

+- Intel IPEX (Linux)

+ ```bash

+ pip install -r requirements/ipex.txt

+ ```

+

+## Preparation of Other Files

+### 1. Assets

+> RVC requires some models located in the `assets` folder for inference and training.

+#### Check/Download Automatically (Default)

+> By default, RVC can automatically check the integrity of the required resources when the main program starts.

+

+> Even if the resources are not complete, the program will continue to start.

+

+- If you want to download all resources, please add the `--update` parameter.

+- If you want to skip the resource integrity check at startup, please add the `--nocheck` parameter.

+

+#### Download Manually

+> All resource files are located in [Hugging Face space](https://huggingface.co/lj1995/VoiceConversionWebUI/tree/main/)

+

+> You can find some scripts to download them in the `tools` folder

+

+> You can also use the [one-click downloader](https://github.com/fumiama/RVC-Models-Downloader) for models/integration packages/tools

+

+Below is a list that includes the names of all pre-models and other files required by RVC.

+

+- ./assets/hubert/hubert_base.pt

+ ```bash

+ rvcmd assets/hubert # RVC-Models-Downloader command

+ ```

+- ./assets/pretrained

+ ```bash

+ rvcmd assets/v1 # RVC-Models-Downloader command

+ ```

+- ./assets/uvr5_weights

+ ```bash

+ rvcmd assets/uvr5 # RVC-Models-Downloader command

+ ```

+If you want to use the v2 version of the model, you need to download additional resources in

+

+- ./assets/pretrained_v2

+ ```bash

+ rvcmd assets/v2 # RVC-Models-Downloader command

+ ```

+

+### 2. Download the required files for the rmvpe vocal pitch extraction algorithm

+

+If you want to use the latest RMVPE vocal pitch extraction algorithm, you need to download the pitch extraction model parameters and place them in `assets/rmvpe`.

+

+- [rmvpe.pt](https://huggingface.co/lj1995/VoiceConversionWebUI/blob/main/rmvpe.pt)

+ ```bash

+ rvcmd assets/rmvpe # RVC-Models-Downloader command

+ ```

+

+#### Download DML environment of RMVPE (optional, for AMD/Intel GPU)

+

+- [rmvpe.onnx](https://huggingface.co/lj1995/VoiceConversionWebUI/blob/main/rmvpe.onnx)

+ ```bash

+ rvcmd assets/rmvpe # RVC-Models-Downloader command

+ ```

+

+### 3. AMD ROCM (optional, Linux only)

+

+If you want to run RVC on a Linux system based on AMD's ROCM technology, please first install the required drivers [here](https://rocm.docs.amd.com/en/latest/deploy/linux/os-native/install.html).

+

+If you are using Arch Linux, you can use pacman to install the required drivers.

+````

+pacman -S rocm-hip-sdk rocm-opencl-sdk

+````

+For some models of graphics cards, you may need to configure the following environment variables (such as: RX6700XT).

+````

+export ROCM_PATH=/opt/rocm

+export HSA_OVERRIDE_GFX_VERSION=10.3.0

+````

+Also, make sure your current user is in the `render` and `video` user groups.

+````

+sudo usermod -aG render $USERNAME

+sudo usermod -aG video $USERNAME

+````

+## Getting Started

+### Direct Launch

+Use the following command to start the WebUI.

+```bash

+python web.py

+```

+### Linux/MacOS

+```bash

+./run.sh

+```

+### For I-card users who need to use IPEX technology (Linux only)

+```bash

+source /opt/intel/oneapi/setvars.sh

+./run.sh

+```

+### Using the Integration Package (Windows Users)

+Download and unzip `RVC-beta.7z`. After unzipping, double-click `go-web.bat` to start the program with one click.

+```bash

+rvcmd packs/general/latest # RVC-Models-Downloader command

+```

+

+## Credits

++ [ContentVec](https://github.com/auspicious3000/contentvec/)

++ [VITS](https://github.com/jaywalnut310/vits)

++ [HIFIGAN](https://github.com/jik876/hifi-gan)

++ [Gradio](https://github.com/gradio-app/gradio)

++ [Ultimate Vocal Remover](https://github.com/Anjok07/ultimatevocalremovergui)

++ [audio-slicer](https://github.com/openvpi/audio-slicer)

++ [Vocal pitch extraction:RMVPE](https://github.com/Dream-High/RMVPE)

+ + The pretrained model is trained and tested by [yxlllc](https://github.com/yxlllc/RMVPE) and [RVC-Boss](https://github.com/RVC-Boss).

+

+## Thanks to all contributors for their efforts

+[](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/graphs/contributors)

diff --git a/assets/hubert/.gitignore b/assets/hubert/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..d6b7ef32c8478a48c3994dcadc86837f4371184d

--- /dev/null

+++ b/assets/hubert/.gitignore

@@ -0,0 +1,2 @@

+*

+!.gitignore

diff --git a/assets/hubert/hubert_base.pt b/assets/hubert/hubert_base.pt

new file mode 100644

index 0000000000000000000000000000000000000000..72f47ab58564f01d5cc8b05c63bdf96d944551ff

--- /dev/null

+++ b/assets/hubert/hubert_base.pt

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:f54b40fd2802423a5643779c4861af1e9ee9c1564dc9d32f54f20b5ffba7db96

+size 189507909

diff --git a/assets/indices/.gitignore b/assets/indices/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..d6b7ef32c8478a48c3994dcadc86837f4371184d

--- /dev/null

+++ b/assets/indices/.gitignore

@@ -0,0 +1,2 @@

+*

+!.gitignore

diff --git a/assets/pretrained/.gitignore b/assets/pretrained/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..d6b7ef32c8478a48c3994dcadc86837f4371184d

--- /dev/null

+++ b/assets/pretrained/.gitignore

@@ -0,0 +1,2 @@

+*

+!.gitignore

diff --git a/assets/pretrained_v2/.gitignore b/assets/pretrained_v2/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..d6b7ef32c8478a48c3994dcadc86837f4371184d

--- /dev/null

+++ b/assets/pretrained_v2/.gitignore

@@ -0,0 +1,2 @@

+*

+!.gitignore

diff --git a/assets/rmvpe/.gitignore b/assets/rmvpe/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..d6b7ef32c8478a48c3994dcadc86837f4371184d

--- /dev/null

+++ b/assets/rmvpe/.gitignore

@@ -0,0 +1,2 @@

+*

+!.gitignore

diff --git a/assets/rmvpe/rmvpe.pt b/assets/rmvpe/rmvpe.pt

new file mode 100644

index 0000000000000000000000000000000000000000..6362f060846875c3b5d7012adea5f97e47305e7e

--- /dev/null

+++ b/assets/rmvpe/rmvpe.pt

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:6d62215f4306e3ca278246188607209f09af3dc77ed4232efdd069798c4ec193

+size 181184272

diff --git a/assets/uvr5_weights/.gitignore b/assets/uvr5_weights/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..d6b7ef32c8478a48c3994dcadc86837f4371184d

--- /dev/null

+++ b/assets/uvr5_weights/.gitignore

@@ -0,0 +1,2 @@

+*

+!.gitignore

diff --git a/configs/__init__.py b/configs/__init__.py

new file mode 100644

index 0000000000000000000000000000000000000000..e9ab5e6d85936c4ff37838b5d66bcd4c4ce2855b

--- /dev/null

+++ b/configs/__init__.py

@@ -0,0 +1 @@

+from .config import singleton_variable, Config, CPUConfig

diff --git a/configs/config.json b/configs/config.json

new file mode 100644

index 0000000000000000000000000000000000000000..1aaa63fd3b7253a1b4271b0ca4e2dedafded559a

--- /dev/null

+++ b/configs/config.json

@@ -0,0 +1,21 @@

+{

+ "pth_path": "",

+ "index_path": "",

+ "sg_hostapi": "MME",

+ "sg_wasapi_exclusive": false,

+ "sg_input_device": "",

+ "sg_output_device": "",

+ "sr_type": "sr_device",

+ "threhold": -60.0,

+ "pitch": 12.0,

+ "formant": 0.0,

+ "rms_mix_rate": 0.5,

+ "index_rate": 0.0,

+ "block_time": 0.15,

+ "crossfade_length": 0.08,

+ "extra_time": 2.0,

+ "n_cpu": 4.0,

+ "use_jit": false,

+ "use_pv": false,

+ "f0method": "fcpe"

+}

\ No newline at end of file

diff --git a/configs/config.py b/configs/config.py

new file mode 100644

index 0000000000000000000000000000000000000000..0e8057c8b2495865c9d607c1e1114da7e57f9480

--- /dev/null

+++ b/configs/config.py

@@ -0,0 +1,259 @@

+import argparse

+import os

+import sys

+import json

+import shutil

+from multiprocessing import cpu_count

+

+import torch

+

+# TODO: move device selection into rvc

+import logging

+

+logger = logging.getLogger(__name__)

+

+

+version_config_list = [

+ "v1/32k.json",

+ "v1/40k.json",

+ "v1/48k.json",

+ "v2/48k.json",

+ "v2/32k.json",

+]

+

+

+def singleton_variable(func):

+ def wrapper(*args, **kwargs):

+ if wrapper.instance is None:

+ wrapper.instance = func(*args, **kwargs)

+ return wrapper.instance

+

+ wrapper.instance = None

+ return wrapper

+

+

+@singleton_variable

+class Config:

+ def __init__(self):

+ self.device = "cuda:0"

+ self.is_half = True

+ self.use_jit = False

+ self.n_cpu = 0

+ self.gpu_name = None

+ self.json_config = self.load_config_json()

+ self.gpu_mem = None

+ (

+ self.python_cmd,

+ self.listen_port,

+ self.global_link,

+ self.noparallel,

+ self.noautoopen,

+ self.dml,

+ self.nocheck,

+ self.update,

+ ) = self.arg_parse()

+ self.instead = ""

+ self.preprocess_per = 3.7

+ self.x_pad, self.x_query, self.x_center, self.x_max = self.device_config()

+

+ @staticmethod

+ def load_config_json() -> dict:

+ d = {}

+ for config_file in version_config_list:

+ p = f"configs/inuse/{config_file}"

+ if not os.path.exists(p):

+ shutil.copy(f"configs/{config_file}", p)

+ with open(f"configs/inuse/{config_file}", "r") as f:

+ d[config_file] = json.load(f)

+ return d

+

+ @staticmethod

+ def arg_parse() -> tuple:

+ exe = sys.executable or "python"

+ parser = argparse.ArgumentParser()

+ parser.add_argument("--port", type=int, default=7865, help="Listen port")

+ parser.add_argument("--pycmd", type=str, default=exe, help="Python command")

+ parser.add_argument(

+ "--global_link", action="store_true", help="Generate a global proxy link"

+ )

+ parser.add_argument(

+ "--noparallel", action="store_true", help="Disable parallel processing"

+ )

+ parser.add_argument(

+ "--noautoopen",

+ action="store_true",

+ help="Do not open in browser automatically",

+ )

+ parser.add_argument(

+ "--dml",

+ action="store_true",

+ help="torch_dml",

+ )

+ parser.add_argument(

+ "--nocheck", action="store_true", help="Run without checking assets"

+ )

+ parser.add_argument(

+ "--update", action="store_true", help="Update to latest assets"

+ )

+ cmd_opts = parser.parse_args()

+

+ cmd_opts.port = cmd_opts.port if 0 <= cmd_opts.port <= 65535 else 7865

+

+ return (

+ cmd_opts.pycmd,

+ cmd_opts.port,

+ cmd_opts.global_link,

+ cmd_opts.noparallel,

+ cmd_opts.noautoopen,

+ cmd_opts.dml,

+ cmd_opts.nocheck,

+ cmd_opts.update,

+ )

+

+ # has_mps is only available in nightly pytorch (for now) and MasOS 12.3+.

+ # check `getattr` and try it for compatibility

+ @staticmethod

+ def has_mps() -> bool:

+ if not torch.backends.mps.is_available():

+ return False

+ try:

+ torch.zeros(1).to(torch.device("mps"))

+ return True

+ except Exception:

+ return False

+

+ @staticmethod

+ def has_xpu() -> bool:

+ if hasattr(torch, "xpu") and torch.xpu.is_available():

+ return True

+ else:

+ return False

+

+ def use_fp32_config(self):

+ for config_file in version_config_list:

+ self.json_config[config_file]["train"]["fp16_run"] = False

+ with open(f"configs/inuse/{config_file}", "r") as f:

+ strr = f.read().replace("true", "false")

+ with open(f"configs/inuse/{config_file}", "w") as f:

+ f.write(strr)

+ logger.info("overwrite " + config_file)

+ self.preprocess_per = 3.0

+ logger.info("overwrite preprocess_per to %d" % (self.preprocess_per))

+

+ def device_config(self):

+ if torch.cuda.is_available():

+ if self.has_xpu():

+ self.device = self.instead = "xpu:0"

+ self.is_half = True

+ i_device = int(self.device.split(":")[-1])

+ self.gpu_name = torch.cuda.get_device_name(i_device)

+ if (

+ ("16" in self.gpu_name and "V100" not in self.gpu_name.upper())

+ or "P40" in self.gpu_name.upper()

+ or "P10" in self.gpu_name.upper()

+ or "1060" in self.gpu_name

+ or "1070" in self.gpu_name

+ or "1080" in self.gpu_name

+ ):

+ logger.info("Found GPU %s, force to fp32", self.gpu_name)

+ self.is_half = False

+ self.use_fp32_config()

+ else:

+ logger.info("Found GPU %s", self.gpu_name)

+ self.gpu_mem = int(

+ torch.cuda.get_device_properties(i_device).total_memory

+ / 1024

+ / 1024

+ / 1024

+ + 0.4

+ )

+ if self.gpu_mem <= 4:

+ self.preprocess_per = 3.0

+ elif self.has_mps():

+ logger.info("No supported Nvidia GPU found")

+ self.device = self.instead = "mps"

+ self.is_half = False

+ self.use_fp32_config()

+ else:

+ logger.info("No supported Nvidia GPU found")

+ self.device = self.instead = "cpu"

+ self.is_half = False

+ self.use_fp32_config()

+

+ if self.n_cpu == 0:

+ self.n_cpu = cpu_count()

+

+ if self.is_half:

+ # 6G显存配置

+ x_pad = 3

+ x_query = 10

+ x_center = 60

+ x_max = 65

+ else:

+ # 5G显存配置

+ x_pad = 1

+ x_query = 6

+ x_center = 38

+ x_max = 41

+

+ if self.gpu_mem is not None and self.gpu_mem <= 4:

+ x_pad = 1

+ x_query = 5

+ x_center = 30

+ x_max = 32

+ if self.dml:

+ logger.info("Use DirectML instead")

+ import torch_directml

+

+ self.device = torch_directml.device(torch_directml.default_device())

+ self.is_half = False

+ else:

+ if self.instead:

+ logger.info(f"Use {self.instead} instead")

+ logger.info(

+ "Half-precision floating-point: %s, device: %s"

+ % (self.is_half, self.device)

+ )

+ return x_pad, x_query, x_center, x_max

+

+

+@singleton_variable

+class CPUConfig:

+ def __init__(self):

+ self.device = "cpu"

+ self.is_half = False

+ self.use_jit = False

+ self.n_cpu = 1

+ self.gpu_name = None

+ self.json_config = self.load_config_json()

+ self.gpu_mem = None

+ self.instead = "cpu"

+ self.preprocess_per = 3.7

+ self.x_pad, self.x_query, self.x_center, self.x_max = self.device_config()

+

+ @staticmethod

+ def load_config_json() -> dict:

+ d = {}

+ for config_file in version_config_list:

+ with open(f"configs/{config_file}", "r") as f:

+ d[config_file] = json.load(f)

+ return d

+

+ def use_fp32_config(self):

+ for config_file in version_config_list:

+ self.json_config[config_file]["train"]["fp16_run"] = False

+ self.preprocess_per = 3.0

+

+ def device_config(self):

+ self.use_fp32_config()

+

+ if self.n_cpu == 0:

+ self.n_cpu = cpu_count()

+

+ # 5G显存配置

+ x_pad = 1

+ x_query = 6

+ x_center = 38

+ x_max = 41

+

+ return x_pad, x_query, x_center, x_max

diff --git a/configs/inuse/.gitignore b/configs/inuse/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..419423674c957a466a985a929c6b846494643f57

--- /dev/null

+++ b/configs/inuse/.gitignore

@@ -0,0 +1,4 @@

+*

+!.gitignore

+!v1

+!v2

diff --git a/configs/inuse/v1/.gitignore b/configs/inuse/v1/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..d6b7ef32c8478a48c3994dcadc86837f4371184d

--- /dev/null

+++ b/configs/inuse/v1/.gitignore

@@ -0,0 +1,2 @@

+*

+!.gitignore

diff --git a/configs/inuse/v2/.gitignore b/configs/inuse/v2/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..d6b7ef32c8478a48c3994dcadc86837f4371184d

--- /dev/null

+++ b/configs/inuse/v2/.gitignore

@@ -0,0 +1,2 @@

+*

+!.gitignore

diff --git a/configs/v1/32k.json b/configs/v1/32k.json

new file mode 100644

index 0000000000000000000000000000000000000000..d5f16d691ed798f4c974b431167c36269b2ce7d2

--- /dev/null

+++ b/configs/v1/32k.json

@@ -0,0 +1,46 @@

+{

+ "train": {

+ "log_interval": 200,

+ "seed": 1234,

+ "epochs": 20000,

+ "learning_rate": 1e-4,

+ "betas": [0.8, 0.99],

+ "eps": 1e-9,

+ "batch_size": 4,

+ "fp16_run": true,

+ "lr_decay": 0.999875,

+ "segment_size": 12800,

+ "init_lr_ratio": 1,

+ "warmup_epochs": 0,

+ "c_mel": 45,

+ "c_kl": 1.0

+ },

+ "data": {

+ "max_wav_value": 32768.0,

+ "sampling_rate": 32000,

+ "filter_length": 1024,

+ "hop_length": 320,

+ "win_length": 1024,

+ "n_mel_channels": 80,

+ "mel_fmin": 0.0,

+ "mel_fmax": null

+ },

+ "model": {

+ "inter_channels": 192,

+ "hidden_channels": 192,

+ "filter_channels": 768,

+ "n_heads": 2,

+ "n_layers": 6,

+ "kernel_size": 3,

+ "p_dropout": 0,

+ "resblock": "1",

+ "resblock_kernel_sizes": [3,7,11],

+ "resblock_dilation_sizes": [[1,3,5], [1,3,5], [1,3,5]],

+ "upsample_rates": [10,4,2,2,2],

+ "upsample_initial_channel": 512,

+ "upsample_kernel_sizes": [16,16,4,4,4],

+ "use_spectral_norm": false,

+ "gin_channels": 256,

+ "spk_embed_dim": 109

+ }

+}

diff --git a/configs/v1/40k.json b/configs/v1/40k.json

new file mode 100644

index 0000000000000000000000000000000000000000..4ffc87b9e9725fcd59d81a68d41a61962213b777

--- /dev/null

+++ b/configs/v1/40k.json

@@ -0,0 +1,46 @@

+{

+ "train": {

+ "log_interval": 200,

+ "seed": 1234,

+ "epochs": 20000,

+ "learning_rate": 1e-4,

+ "betas": [0.8, 0.99],

+ "eps": 1e-9,

+ "batch_size": 4,

+ "fp16_run": true,

+ "lr_decay": 0.999875,

+ "segment_size": 12800,

+ "init_lr_ratio": 1,

+ "warmup_epochs": 0,

+ "c_mel": 45,

+ "c_kl": 1.0

+ },

+ "data": {

+ "max_wav_value": 32768.0,

+ "sampling_rate": 40000,

+ "filter_length": 2048,

+ "hop_length": 400,

+ "win_length": 2048,

+ "n_mel_channels": 125,

+ "mel_fmin": 0.0,

+ "mel_fmax": null

+ },

+ "model": {

+ "inter_channels": 192,

+ "hidden_channels": 192,

+ "filter_channels": 768,

+ "n_heads": 2,

+ "n_layers": 6,

+ "kernel_size": 3,

+ "p_dropout": 0,

+ "resblock": "1",

+ "resblock_kernel_sizes": [3,7,11],

+ "resblock_dilation_sizes": [[1,3,5], [1,3,5], [1,3,5]],

+ "upsample_rates": [10,10,2,2],

+ "upsample_initial_channel": 512,

+ "upsample_kernel_sizes": [16,16,4,4],

+ "use_spectral_norm": false,

+ "gin_channels": 256,

+ "spk_embed_dim": 109

+ }

+}

diff --git a/configs/v1/48k.json b/configs/v1/48k.json

new file mode 100644

index 0000000000000000000000000000000000000000..2d0e05beb794f6f61b769b48c7ae728bf59e6335

--- /dev/null

+++ b/configs/v1/48k.json

@@ -0,0 +1,46 @@

+{

+ "train": {

+ "log_interval": 200,

+ "seed": 1234,

+ "epochs": 20000,

+ "learning_rate": 1e-4,

+ "betas": [0.8, 0.99],

+ "eps": 1e-9,

+ "batch_size": 4,

+ "fp16_run": true,

+ "lr_decay": 0.999875,

+ "segment_size": 11520,

+ "init_lr_ratio": 1,

+ "warmup_epochs": 0,

+ "c_mel": 45,

+ "c_kl": 1.0

+ },

+ "data": {

+ "max_wav_value": 32768.0,

+ "sampling_rate": 48000,

+ "filter_length": 2048,

+ "hop_length": 480,

+ "win_length": 2048,

+ "n_mel_channels": 128,

+ "mel_fmin": 0.0,

+ "mel_fmax": null

+ },

+ "model": {

+ "inter_channels": 192,

+ "hidden_channels": 192,

+ "filter_channels": 768,

+ "n_heads": 2,

+ "n_layers": 6,

+ "kernel_size": 3,

+ "p_dropout": 0,

+ "resblock": "1",

+ "resblock_kernel_sizes": [3,7,11],

+ "resblock_dilation_sizes": [[1,3,5], [1,3,5], [1,3,5]],

+ "upsample_rates": [10,6,2,2,2],

+ "upsample_initial_channel": 512,

+ "upsample_kernel_sizes": [16,16,4,4,4],

+ "use_spectral_norm": false,

+ "gin_channels": 256,

+ "spk_embed_dim": 109

+ }

+}

diff --git a/configs/v2/32k.json b/configs/v2/32k.json

new file mode 100644

index 0000000000000000000000000000000000000000..70e534f4c641a5a2c8e5c1e172f61398ee97e6e0

--- /dev/null

+++ b/configs/v2/32k.json

@@ -0,0 +1,46 @@

+{

+ "train": {

+ "log_interval": 200,

+ "seed": 1234,

+ "epochs": 20000,

+ "learning_rate": 1e-4,

+ "betas": [0.8, 0.99],

+ "eps": 1e-9,

+ "batch_size": 4,

+ "fp16_run": true,

+ "lr_decay": 0.999875,

+ "segment_size": 12800,

+ "init_lr_ratio": 1,

+ "warmup_epochs": 0,

+ "c_mel": 45,

+ "c_kl": 1.0

+ },

+ "data": {

+ "max_wav_value": 32768.0,

+ "sampling_rate": 32000,

+ "filter_length": 1024,

+ "hop_length": 320,

+ "win_length": 1024,

+ "n_mel_channels": 80,

+ "mel_fmin": 0.0,

+ "mel_fmax": null

+ },

+ "model": {

+ "inter_channels": 192,

+ "hidden_channels": 192,

+ "filter_channels": 768,

+ "n_heads": 2,

+ "n_layers": 6,

+ "kernel_size": 3,

+ "p_dropout": 0,

+ "resblock": "1",

+ "resblock_kernel_sizes": [3,7,11],

+ "resblock_dilation_sizes": [[1,3,5], [1,3,5], [1,3,5]],

+ "upsample_rates": [10,8,2,2],

+ "upsample_initial_channel": 512,

+ "upsample_kernel_sizes": [20,16,4,4],

+ "use_spectral_norm": false,

+ "gin_channels": 256,

+ "spk_embed_dim": 109

+ }

+}

diff --git a/configs/v2/48k.json b/configs/v2/48k.json

new file mode 100644

index 0000000000000000000000000000000000000000..75f770cdacff3467e9e925ed2393b480881d0303

--- /dev/null

+++ b/configs/v2/48k.json

@@ -0,0 +1,46 @@

+{

+ "train": {

+ "log_interval": 200,

+ "seed": 1234,

+ "epochs": 20000,

+ "learning_rate": 1e-4,

+ "betas": [0.8, 0.99],

+ "eps": 1e-9,

+ "batch_size": 4,

+ "fp16_run": true,

+ "lr_decay": 0.999875,

+ "segment_size": 17280,

+ "init_lr_ratio": 1,

+ "warmup_epochs": 0,

+ "c_mel": 45,

+ "c_kl": 1.0

+ },

+ "data": {

+ "max_wav_value": 32768.0,

+ "sampling_rate": 48000,

+ "filter_length": 2048,

+ "hop_length": 480,

+ "win_length": 2048,

+ "n_mel_channels": 128,

+ "mel_fmin": 0.0,

+ "mel_fmax": null

+ },

+ "model": {

+ "inter_channels": 192,

+ "hidden_channels": 192,

+ "filter_channels": 768,

+ "n_heads": 2,

+ "n_layers": 6,

+ "kernel_size": 3,

+ "p_dropout": 0,

+ "resblock": "1",

+ "resblock_kernel_sizes": [3,7,11],

+ "resblock_dilation_sizes": [[1,3,5], [1,3,5], [1,3,5]],

+ "upsample_rates": [12,10,2,2],

+ "upsample_initial_channel": 512,

+ "upsample_kernel_sizes": [24,20,4,4],

+ "use_spectral_norm": false,

+ "gin_channels": 256,

+ "spk_embed_dim": 109

+ }

+}

diff --git a/docker-compose.yml b/docker-compose.yml

new file mode 100644

index 0000000000000000000000000000000000000000..0768b30333350f9f80afa4870b95e7e472d02256

--- /dev/null

+++ b/docker-compose.yml

@@ -0,0 +1,20 @@

+version: "3.8"

+services:

+ rvc:

+ build:

+ context: .

+ dockerfile: Dockerfile

+ container_name: rvc

+ volumes:

+ - ./weights:/app/assets/weights

+ - ./opt:/app/opt

+ # - ./dataset:/app/dataset # you can use this folder in order to provide your dataset for model training

+ ports:

+ - 7865:7865

+ deploy:

+ resources:

+ reservations:

+ devices:

+ - driver: nvidia

+ count: 1

+ capabilities: [gpu]

\ No newline at end of file

diff --git a/docs/cn/README.cn.md b/docs/cn/README.cn.md

new file mode 100644

index 0000000000000000000000000000000000000000..bf70a4ad53a14087f2fbefa22b1b6a772dd8045d

--- /dev/null

+++ b/docs/cn/README.cn.md

@@ -0,0 +1,198 @@

+

+

+# Retrieval-based-Voice-Conversion-WebUI

+一个基于VITS的简单易用的变声框架

+

+[](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI)

+

+

+

+[](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/blob/main/LICENSE)

+[](https://huggingface.co/lj1995/VoiceConversionWebUI/tree/main/)

+

+[](https://discord.gg/HcsmBBGyVk)

+

+[**常见问题解答**](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/wiki/%E5%B8%B8%E8%A7%81%E9%97%AE%E9%A2%98%E8%A7%A3%E7%AD%94) | [**AutoDL·5毛钱训练AI歌手**](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/wiki/Autodl%E8%AE%AD%E7%BB%83RVC%C2%B7AI%E6%AD%8C%E6%89%8B%E6%95%99%E7%A8%8B) | [**对照实验记录**](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/wiki/Autodl%E8%AE%AD%E7%BB%83RVC%C2%B7AI%E6%AD%8C%E6%89%8B%E6%95%99%E7%A8%8B](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/wiki/%E5%AF%B9%E7%85%A7%E5%AE%9E%E9%AA%8C%C2%B7%E5%AE%9E%E9%AA%8C%E8%AE%B0%E5%BD%95)) | [**在线演示**](https://modelscope.cn/studios/FlowerCry/RVCv2demo)

+

+[**English**](../../README.md) | [**中文简体**](../cn/README.cn.md) | [**日本語**](../jp/README.ja.md) | [**한국어**](../kr/README.ko.md) ([**韓國語**](../kr/README.ko.han.md)) | [**Français**](../fr/README.fr.md) | [**Türkçe**](../tr/README.tr.md) | [**Português**](../pt/README.pt.md)

+

+

+

+> 底模使用接近50小时的开源高质量VCTK训练集训练,无版权方面的顾虑,请大家放心使用

+

+> 请期待RVCv3的底模,参数更大,数据集更大,效果更好,基本持平的推理速度,需要训练数据量更少。

+

+> 由于某些地区无法直连Hugging Face,即使设法成功访问,速度也十分缓慢,特推出模型/整合包/工具的一键下载器,欢迎试用:[RVC-Models-Downloader](https://github.com/fumiama/RVC-Models-Downloader)

+

+| 训练推理界面 |

+| :--------: |

+|  |

+

+| 实时变声界面 |

+| :---------: |

+|  |

+

+## 简介

+本仓库具有以下特点

++ 使用top1检索替换输入源特征为训练集特征来杜绝音色泄漏

++ 即便在相对较差的显卡上也能快速训练

++ 使用少量数据进行训练也能得到较好结果(推荐至少收集10分钟低底噪语音数据)

++ 可以通过模型融合来改变音色(借助ckpt处理选项卡中的ckpt-merge)

++ 简单易用的网页界面

++ 可调用UVR5模型来快速分离人声和伴奏

++ 使用最先进的[人声音高提取算法InterSpeech2023-RMVPE](#参考项目)根绝哑音问题,效果更好,运行更快,资源占用更少

++ A卡I卡加速支持

+

+点此查看我们的[演示视频](https://www.bilibili.com/video/BV1pm4y1z7Gm/) !

+

+## 环境配置

+### Python 版本限制

+> 建议使用 conda 管理 Python 环境

+

+> 版本限制原因参见此[bug](https://github.com/facebookresearch/fairseq/issues/5012)

+

+```bash

+python --version # 3.8 <= Python < 3.11

+```

+

+### Linux/MacOS 一键依赖安装启动脚本

+执行项目根目录下`run.sh`即可一键配置`venv`虚拟环境、自动安装所需依赖并启动主程序。

+```bash

+sh ./run.sh

+```

+

+### 手动安装依赖

+1. 安装`pytorch`及其核心依赖,若已安装则跳过。参考自: https://pytorch.org/get-started/locally/

+ ```bash

+ pip install torch torchvision torchaudio

+ ```

+2. 如果是 win 系统 + Nvidia Ampere 架构(RTX30xx),根据 #21 的经验,需要指定 pytorch 对应的 CUDA 版本

+ ```bash

+ pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu117

+ ```

+3. 根据自己的显卡安装对应依赖

+- N卡

+ ```bash

+ pip install -r requirements/main.txt

+ ```

+- A卡/I卡

+ ```bash

+ pip install -r requirements/dml.txt

+ ```

+- A卡ROCM(Linux)

+ ```bash

+ pip install -r requirements/amd.txt

+ ```

+- I卡IPEX(Linux)

+ ```bash

+ pip install -r requirements/ipex.txt

+ ```

+

+## 其他资源准备

+### 1. assets

+> RVC需要位于`assets`文件夹下的一些模型资源进行推理和训练。

+#### 自动检查/下载资源(默认)

+> 默认情况下,RVC可在主程序启动时自动检查所需资源的完整性。

+

+> 即使资源不完整,程序也将继续启动。

+

+- 如果您希望下载所有资源,请添加`--update`参数

+- 如果您希望跳过启动时的资源完整性检查,请添加`--nocheck`参数

+

+#### 手动下载资源

+> 所有资源文件均位于[Hugging Face space](https://huggingface.co/lj1995/VoiceConversionWebUI/tree/main/)

+

+> 你可以在`tools`文件夹找到下载它们的脚本

+

+> 你也可以使用模型/整合包/工具的一键下载器:[RVC-Models-Downloader](https://github.com/fumiama/RVC-Models-Downloader)

+

+以下是一份清单,包括了所有RVC所需的预模型和其他文件的名称。

+

+- ./assets/hubert/hubert_base.pt

+ ```bash

+ rvcmd assets/hubert # RVC-Models-Downloader command

+ ```

+- ./assets/pretrained

+ ```bash

+ rvcmd assets/v1 # RVC-Models-Downloader command

+ ```

+- ./assets/uvr5_weights

+ ```bash

+ rvcmd assets/uvr5 # RVC-Models-Downloader command

+ ```

+想使用v2版本模型的话,需要额外下载

+

+- ./assets/pretrained_v2

+ ```bash

+ rvcmd assets/v2 # RVC-Models-Downloader command

+ ```

+

+### 2. 下载 rmvpe 人声音高提取算法所需文件

+

+如果你想使用最新的RMVPE人声音高提取算法,则你需要下载音高提取模型参数并放置于`assets/rmvpe`。

+

+- 下载[rmvpe.pt](https://huggingface.co/lj1995/VoiceConversionWebUI/blob/main/rmvpe.pt)

+ ```bash

+ rvcmd assets/rmvpe # RVC-Models-Downloader command

+ ```

+

+#### 下载 rmvpe 的 dml 环境(可选, A卡/I卡用户)

+

+- 下载[rmvpe.onnx](https://huggingface.co/lj1995/VoiceConversionWebUI/blob/main/rmvpe.onnx)

+ ```bash

+ rvcmd assets/rmvpe # RVC-Models-Downloader command

+ ```

+

+### 3. AMD显卡Rocm(可选, 仅Linux)

+

+如果你想基于AMD的Rocm技术在Linux系统上运行RVC,请先在[这里](https://rocm.docs.amd.com/en/latest/deploy/linux/os-native/install.html)安装所需的驱动。

+

+若你使用的是Arch Linux,可以使用pacman来安装所需驱动:

+````

+pacman -S rocm-hip-sdk rocm-opencl-sdk

+````

+对于某些型号的显卡,你可能需要额外配置如下的环境变量(如:RX6700XT):

+````

+export ROCM_PATH=/opt/rocm

+export HSA_OVERRIDE_GFX_VERSION=10.3.0

+````

+同时确保你的当前用户处于`render`与`video`用户组内:

+````

+sudo usermod -aG render $USERNAME

+sudo usermod -aG video $USERNAME

+````

+

+## 开始使用

+### 直接启动

+使用以下指令来启动 WebUI

+```bash

+python web.py

+```

+### Linux/MacOS 用户

+```bash

+./run.sh

+```

+### 对于需要使用IPEX技术的I卡用户(仅Linux)

+```bash

+source /opt/intel/oneapi/setvars.sh

+./run.sh

+```

+### 使用整合包 (Windows 用户)

+下载并解压`RVC-beta.7z`,解压后双击`go-web.bat`即可一键启动。

+```bash

+rvcmd packs/general/latest # RVC-Models-Downloader command

+```

+

+## 参考项目

++ [ContentVec](https://github.com/auspicious3000/contentvec/)

++ [VITS](https://github.com/jaywalnut310/vits)

++ [HIFIGAN](https://github.com/jik876/hifi-gan)

++ [Gradio](https://github.com/gradio-app/gradio)

++ [Ultimate Vocal Remover](https://github.com/Anjok07/ultimatevocalremovergui)

++ [audio-slicer](https://github.com/openvpi/audio-slicer)

++ [Vocal pitch extraction:RMVPE](https://github.com/Dream-High/RMVPE)

+ + The pretrained model is trained and tested by [yxlllc](https://github.com/yxlllc/RMVPE) and [RVC-Boss](https://github.com/RVC-Boss).

+

+## 感谢所有贡献者作出的努力

+[](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/graphs/contributors)

diff --git a/docs/cn/faq.md b/docs/cn/faq.md

new file mode 100644

index 0000000000000000000000000000000000000000..77d9d2aff6de243910512334ce4e7b08cdf632f5

--- /dev/null

+++ b/docs/cn/faq.md

@@ -0,0 +1,150 @@

+## Q1:一键训练结束没有索引

+

+显示"Training is done. The program is closed."则模型训练成功,后续紧邻的报错是假的;

+

+

+一键训练结束完成没有added开头的索引文件,可能是因为训练集太大卡住了添加索引的步骤;已通过批处理add索引解决内存add索引对内存需求过大的问题。临时可尝试再次点击"训练索引"按钮。

+

+

+## Q2:训练结束推理没看到训练集的音色

+点刷新音色再看看,如果还没有看看训练有没有报错,控制台和webui的截图,logs/实验名下的log,都可以发给开发者看看。

+

+

+## Q3:如何分享模型

+ rvc_root/logs/实验名 下面存储的pth不是用来分享模型用来推理的,而是为了存储实验状态供复现,以及继续训练用的。用来分享的模型应该是weights文件夹下大小为60+MB的pth文件;

+

+ 后续将把weights/exp_name.pth和logs/exp_name/added_xxx.index合并打包成weights/exp_name.zip省去填写index的步骤,那么zip文件用来分享,不要分享pth文件,除非是想换机器继续训练;

+

+ 如果你把logs文件夹下的几百MB的pth文件复制/分享到weights文件夹下强行用于推理,可能会出现f0,tgt_sr等各种key不存在的报错。你需要用ckpt选项卡最下面,手工或自动(本地logs下如果能找到相关信息则会自动)选择是否携带音高、目标音频采样率的选项后进行ckpt小模型提取(输入路径填G开头的那个),提取完在weights文件夹下会出现60+MB的pth文件,刷新音色后可以选择使用。

+

+

+## Q4:Connection Error.

+也许你关闭了控制台(黑色窗口)。

+

+

+## Q5:WebUI弹出Expecting value: line 1 column 1 (char 0).

+请关闭系统局域网代理/全局代理。

+

+

+这个不仅是客户端的代理,也包括服务端的代理(例如你使用autodl设置了http_proxy和https_proxy学术加速,使用时也需要unset关掉)

+

+

+## Q6:不用WebUI如何通过命令训练推理

+训练脚本:

+

+可先跑通WebUI,消息窗内会显示数据集处理和训练用命令行;

+

+

+推理脚本:

+

+https://huggingface.co/lj1995/VoiceConversionWebUI/blob/main/myinfer.py

+

+

+例子:

+

+

+runtime\python.exe myinfer.py 0 "E:\codes\py39\RVC-beta\todo-songs\1111.wav" "E:\codes\py39\logs\mi-test\added_IVF677_Flat_nprobe_7.index" harvest "test.wav" "weights/mi-test.pth" 0.6 cuda:0 True

+

+

+f0up_key=sys.argv[1]

+

+input_path=sys.argv[2]

+

+index_path=sys.argv[3]

+

+f0method=sys.argv[4]#harvest or pm

+

+opt_path=sys.argv[5]

+

+model_path=sys.argv[6]

+

+index_rate=float(sys.argv[7])

+

+device=sys.argv[8]

+

+is_half=bool(sys.argv[9])

+

+

+## Q7:Cuda error/Cuda out of memory.

+小概率是cuda配置问题、设备不支持;大概率是显存不够(out of memory);

+

+

+训练的话缩小batch size(如果缩小到1还不够只能更换显卡训练),推理的话酌情缩小config.py结尾的x_pad,x_query,x_center,x_max。4G以下显存(例如1060(3G)和各种2G显卡)可以直接放弃,4G显存显卡还有救。

+

+

+## Q8:total_epoch调多少比较好

+

+如果训练集音质差底噪大,20~30足够了,调太高,底模音质无法带高你的低音质训练集

+

+如果训练集音质高底噪低时长多,可以调高,200是ok的(训练速度很快,既然你有条件准备高音质训练集,显卡想必条件也不错,肯定不在乎多一些训练时间)

+

+

+## Q9:需要多少训练集时长

+ 推荐10min至50min

+

+ 保证音质高底噪低的情况下,如果有个人特色的音色统一,则多多益善

+

+ 高水平的训练集(精简+音色有特色),5min至10min也是ok的,仓库作者本人就经常这么玩

+

+ 也有人拿1min至2min的数据来训练并且训练成功的,但是成功经验是其他人不可复现的,不太具备参考价值。这要求训练集音色特色非常明显(比如说高频气声较明显的萝莉少女音),且音质高;

+

+ 1min以下时长数据目前没见有人尝试(成功)过。不建议进行这种鬼畜行为。

+

+

+## Q10:index rate干嘛用的,怎么调(科普)

+ 如果底模和推理源的音质高于训练集的音质,他们可以带高推理结果的音质,但代价可能是音色往底模/推理源的音色靠,这种现象叫做"音色泄露";

+

+ index rate用来削减/解决音色泄露问题。调到1,则理论上不存在推理源的音色泄露问题,但音质更倾向于训练集。如果训练集音质比推理源低,则index rate调高可能降低音质。调到0,则不具备利用检索混合来保护训练集音色的效果;

+

+ 如果训练集优质时长多,可调高total_epoch,此时模型本身不太会引用推理源和底模的音色,很少存在"音色泄露"问题,此时index_rate不重要,你甚至可以不建立/分享index索引文件。

+

+

+## Q11:推理怎么选gpu

+config.py文件里device cuda:后面选择卡号;

+

+卡号和显卡的映射关系,在训练选项卡的显卡信息栏里能看到。

+

+

+## Q12:如何推理训练中间保存的pth

+通过ckpt选项卡最下面提取小模型。

+

+

+

+## Q13:如何中断和继续训练

+现阶段只能关闭WebUI控制台双击go-web.bat重启程序。网页参数也要刷新重新填写;

+

+继续训练:相同网页参数点训练模型,就会接着上次的checkpoint继续训练。

+

+

+## Q14:训练时出现文件页面/内存error

+进程开太多了,内存炸了。你可能可以通过如下方式解决

+

+1、"提取音高和处理数据使用的CPU进程数" 酌情拉低;

+

+2、训练集音频手工切一下,不要太长。

+

+

+

+## Q15:如何中途加数据训练

+1、所有数据新建一个实验名;

+

+2、拷贝上一次的最新的那个G和D文件(或者你想基于哪个中间ckpt训练,也可以拷贝中间的)到新实验名;下

+

+3、一键训练新实验名,他会继续上一次的最新进度训练。

+

+

+## Q16: error about llvmlite.dll

+

+OSError: Could not load shared object file: llvmlite.dll

+

+FileNotFoundError: Could not find module lib\site-packages\llvmlite\binding\llvmlite.dll (or one of its dependencies). Try using the full path with constructor syntax.

+

+win平台会报这个错,装上https://aka.ms/vs/17/release/vc_redist.x64.exe这个再重启WebUI就好了。

+

+## Q17: RuntimeError: The expanded size of the tensor (17280) must match the existing size (0) at non-singleton dimension 1. Target sizes: [1, 17280]. Tensor sizes: [0]

+

+wavs16k文件夹下,找到文件大小显著比其他都小的一些音频文件,删掉,点击训练模型,就不会报错了,不过由于一键流程中断了你训练完模型还要点训练索引。

+

+## Q18: RuntimeError: The size of tensor a (24) must match the size of tensor b (16) at non-singleton dimension 2

+

+不要中途变更采样率继续训练。如果一定要变更,应更换实验名从头训练。当然你也可以把上次提取的音高和特征(0/1/2/2b folders)拷贝过去加速训练流程。

diff --git a/docs/en/faiss_tips_en.md b/docs/en/faiss_tips_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..aafad6ed67f70ee1ea3a2a21ee0b5066ab1dcfa8

--- /dev/null

+++ b/docs/en/faiss_tips_en.md

@@ -0,0 +1,102 @@

+faiss tuning TIPS

+==================

+# about faiss

+faiss is a library of neighborhood searches for dense vectors, developed by facebook research, which efficiently implements many approximate neighborhood search methods.

+Approximate Neighbor Search finds similar vectors quickly while sacrificing some accuracy.

+

+## faiss in RVC

+In RVC, for the embedding of features converted by HuBERT, we search for embeddings similar to the embedding generated from the training data and mix them to achieve a conversion that is closer to the original speech. However, since this search takes time if performed naively, high-speed conversion is realized by using approximate neighborhood search.

+

+# implementation overview

+In '/logs/your-experiment/3_feature256' where the model is located, features extracted by HuBERT from each voice data are located.

+From here we read the npy files in order sorted by filename and concatenate the vectors to create big_npy. (This vector has shape [N, 256].)

+After saving big_npy as /logs/your-experiment/total_fea.npy, train it with faiss.

+

+In this article, I will explain the meaning of these parameters.

+

+# Explanation of the method

+## index factory

+An index factory is a unique faiss notation that expresses a pipeline that connects multiple approximate neighborhood search methods as a string.

+This allows you to try various approximate neighborhood search methods simply by changing the index factory string.

+In RVC it is used like this:

+

+```python

+index = faiss.index_factory(256, "IVF%s,Flat" % n_ivf)

+```

+Among the arguments of index_factory, the first is the number of dimensions of the vector, the second is the index factory string, and the third is the distance to use.

+

+For more detailed notation

+https://github.com/facebookresearch/faiss/wiki/The-index-factory

+

+## index for distance

+There are two typical indexes used as similarity of embedding as follows.

+

+- Euclidean distance (METRIC_L2)

+- inner product (METRIC_INNER_PRODUCT)

+

+Euclidean distance takes the squared difference in each dimension, sums the differences in all dimensions, and then takes the square root. This is the same as the distance in 2D and 3D that we use on a daily basis.

+The inner product is not used as an index of similarity as it is, and the cosine similarity that takes the inner product after being normalized by the L2 norm is generally used.

+

+Which is better depends on the case, but cosine similarity is often used in embedding obtained by word2vec and similar image retrieval models learned by ArcFace. If you want to do l2 normalization on vector X with numpy, you can do it with the following code with eps small enough to avoid 0 division.

+

+```python

+X_normed = X / np.maximum(eps, np.linalg.norm(X, ord=2, axis=-1, keepdims=True))

+```

+

+Also, for the index factory, you can change the distance index used for calculation by choosing the value to pass as the third argument.

+

+```python

+index = faiss.index_factory(dimention, text, faiss.METRIC_INNER_PRODUCT)

+```

+

+## IVF

+IVF (Inverted file indexes) is an algorithm similar to the inverted index in full-text search.

+During learning, the search target is clustered with kmeans, and Voronoi partitioning is performed using the cluster center. Each data point is assigned a cluster, so we create a dictionary that looks up the data points from the clusters.

+

+For example, if clusters are assigned as follows

+|index|Cluster|

+|-----|-------|

+|1|A|

+|2|B|

+|3|A|

+|4|C|

+|5|B|

+

+The resulting inverted index looks like this:

+

+|cluster|index|

+|-------|-----|

+|A|1, 3|

+|B|2, 5|

+|C|4|

+

+When searching, we first search n_probe clusters from the clusters, and then calculate the distances for the data points belonging to each cluster.

+

+# recommend parameter

+There are official guidelines on how to choose an index, so I will explain accordingly.

+https://github.com/facebookresearch/faiss/wiki/Guidelines-to-choose-an-index

+

+For datasets below 1M, 4bit-PQ is the most efficient method available in faiss as of April 2023.

+Combining this with IVF, narrowing down the candidates with 4bit-PQ, and finally recalculating the distance with an accurate index can be described by using the following index factory.

+

+```python

+index = faiss.index_factory(256, "IVF1024,PQ128x4fs,RFlat")

+```

+

+## Recommended parameters for IVF

+Consider the case of too many IVFs. For example, if coarse quantization by IVF is performed for the number of data, this is the same as a naive exhaustive search and is inefficient.

+For 1M or less, IVF values are recommended between 4*sqrt(N) ~ 16*sqrt(N) for N number of data points.

+

+Since the calculation time increases in proportion to the number of n_probes, please consult with the accuracy and choose appropriately. Personally, I don't think RVC needs that much accuracy, so n_probe = 1 is fine.

+

+## FastScan

+FastScan is a method that enables high-speed approximation of distances by Cartesian product quantization by performing them in registers.

+Cartesian product quantization performs clustering independently for each d dimension (usually d = 2) during learning, calculates the distance between clusters in advance, and creates a lookup table. At the time of prediction, the distance of each dimension can be calculated in O(1) by looking at the lookup table.

+So the number you specify after PQ usually specifies half the dimension of the vector.

+

+For a more detailed description of FastScan, please refer to the official documentation.

+https://github.com/facebookresearch/faiss/wiki/Fast-accumulation-of-PQ-and-AQ-codes-(FastScan)

+

+## RFlat

+RFlat is an instruction to recalculate the rough distance calculated by FastScan with the exact distance specified by the third argument of index factory.

+When getting k neighbors, k*k_factor points are recalculated.

diff --git a/docs/en/faq_en.md b/docs/en/faq_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..23e325c2474ffce7fb998c32e99fc6139a55757b

--- /dev/null

+++ b/docs/en/faq_en.md

@@ -0,0 +1,114 @@

+## Q1:Cannot find index file after "One-click Training".

+If it displays "Training is done. The program is closed," then the model has been trained successfully, and the subsequent errors are fake;

+

+The lack of an 'added' index file after One-click training may be due to the training set being too large, causing the addition of the index to get stuck; this has been resolved by using batch processing to add the index, which solves the problem of memory overload when adding the index. As a temporary solution, try clicking the "Train Index" button again.

+

+## Q2:Cannot find the model in “Inferencing timbre” after training

+Click “Refresh timbre list” and check again; if still not visible, check if there are any errors during training and send screenshots of the console, web UI, and logs/experiment_name/*.log to the developers for further analysis.

+

+## Q3:How to share a model/How to use others' models?

+The pth files stored in rvc_root/logs/experiment_name are not meant for sharing or inference, but for storing the experiment checkpoits for reproducibility and further training. The model to be shared should be the 60+MB pth file in the weights folder;

+

+In the future, weights/exp_name.pth and logs/exp_name/added_xxx.index will be merged into a single weights/exp_name.zip file to eliminate the need for manual index input; so share the zip file, not the pth file, unless you want to continue training on a different machine;

+

+Copying/sharing the several hundred MB pth files from the logs folder to the weights folder for forced inference may result in errors such as missing f0, tgt_sr, or other keys. You need to use the ckpt tab at the bottom to manually or automatically (if the information is found in the logs/exp_name), select whether to include pitch infomation and target audio sampling rate options and then extract the smaller model. After extraction, there will be a 60+ MB pth file in the weights folder, and you can refresh the voices to use it.

+

+## Q4:Connection Error.

+You may have closed the console (black command line window).

+

+## Q5:WebUI popup 'Expecting value: line 1 column 1 (char 0)'.

+Please disable system LAN proxy/global proxy and then refresh.

+

+## Q6:How to train and infer without the WebUI?

+Training script:

+You can run training in WebUI first, and the command-line versions of dataset preprocessing and training will be displayed in the message window.

+

+Inference script:

+https://huggingface.co/lj1995/VoiceConversionWebUI/blob/main/myinfer.py

+

+

+e.g.

+

+runtime\python.exe myinfer.py 0 "E:\codes\py39\RVC-beta\todo-songs\1111.wav" "E:\codes\py39\logs\mi-test\added_IVF677_Flat_nprobe_7.index" harvest "test.wav" "weights/mi-test.pth" 0.6 cuda:0 True

+

+

+f0up_key=sys.argv[1]

+input_path=sys.argv[2]

+index_path=sys.argv[3]

+f0method=sys.argv[4]#harvest or pm

+opt_path=sys.argv[5]

+model_path=sys.argv[6]

+index_rate=float(sys.argv[7])

+device=sys.argv[8]

+is_half=bool(sys.argv[9])

+

+## Q7:Cuda error/Cuda out of memory.

+There is a small chance that there is a problem with the CUDA configuration or the device is not supported; more likely, there is not enough memory (out of memory).

+

+For training, reduce the batch size (if reducing to 1 is still not enough, you may need to change the graphics card); for inference, adjust the x_pad, x_query, x_center, and x_max settings in the config.py file as needed. 4G or lower memory cards (e.g. 1060(3G) and various 2G cards) can be abandoned, while 4G memory cards still have a chance.

+

+## Q8:How many total_epoch are optimal?

+If the training dataset's audio quality is poor and the noise floor is high, 20-30 epochs are sufficient. Setting it too high won't improve the audio quality of your low-quality training set.

+

+If the training set audio quality is high, the noise floor is low, and there is sufficient duration, you can increase it. 200 is acceptable (since training is fast, and if you're able to prepare a high-quality training set, your GPU likely can handle a longer training duration without issue).

+

+## Q9:How much training set duration is needed?

+

+A dataset of around 10min to 50min is recommended.

+

+With guaranteed high sound quality and low bottom noise, more can be added if the dataset's timbre is uniform.

+

+For a high-level training set (lean + distinctive tone), 5min to 10min is fine.

+

+There are some people who have trained successfully with 1min to 2min data, but the success is not reproducible by others and is not very informative.

This requires that the training set has a very distinctive timbre (e.g. a high-frequency airy anime girl sound) and the quality of the audio is high;

+Data of less than 1min duration has not been successfully attempted so far. This is not recommended.

+

+

+## Q10:What is the index rate for and how to adjust it?

+If the tone quality of the pre-trained model and inference source is higher than that of the training set, they can bring up the tone quality of the inference result, but at the cost of a possible tone bias towards the tone of the underlying model/inference source rather than the tone of the training set, which is generally referred to as "tone leakage".

+

+The index rate is used to reduce/resolve the timbre leakage problem. If the index rate is set to 1, theoretically there is no timbre leakage from the inference source and the timbre quality is more biased towards the training set. If the training set has a lower sound quality than the inference source, then a higher index rate may reduce the sound quality. Turning it down to 0 does not have the effect of using retrieval blending to protect the training set tones.

+

+If the training set has good audio quality and long duration, turn up the total_epoch, when the model itself is less likely to refer to the inferred source and the pretrained underlying model, and there is little "tone leakage", the index_rate is not important and you can even not create/share the index file.

+

+## Q11:How to choose the gpu when inferring?

+In the config.py file, select the card number after "device cuda:".

+

+The mapping between card number and graphics card can be seen in the graphics card information section of the training tab.

+

+## Q12:How to use the model saved in the middle of training?

+Save via model extraction at the bottom of the ckpt processing tab.

+

+## Q13:File/memory error(when training)?

+Too many processes and your memory is not enough. You may fix it by:

+

+1、decrease the input in field "Threads of CPU".

+

+2、pre-cut trainset to shorter audio files.

+

+## Q14: How to continue training using more data

+

+step1: put all wav data to path2.

+

+step2: exp_name2+path2 -> process dataset and extract feature.

+

+step3: copy the latest G and D file of exp_name1 (your previous experiment) into exp_name2 folder.

+

+step4: click "train the model", and it will continue training from the beginning of your previous exp model epoch.

+

+## Q15: error about llvmlite.dll

+

+OSError: Could not load shared object file: llvmlite.dll

+

+FileNotFoundError: Could not find module lib\site-packages\llvmlite\binding\llvmlite.dll (or one of its dependencies). Try using the full path with constructor syntax.

+

+The issue will happen in windows, install https://aka.ms/vs/17/release/vc_redist.x64.exe and it will be fixed.

+

+## Q16: RuntimeError: The expanded size of the tensor (17280) must match the existing size (0) at non-singleton dimension 1. Target sizes: [1, 17280]. Tensor sizes: [0]

+

+Delete the wav files whose size is significantly smaller than others, and that won't happen again. Than click "train the model"and "train the index".

+

+## Q17: RuntimeError: The size of tensor a (24) must match the size of tensor b (16) at non-singleton dimension 2

+

+Do not change the sampling rate and then continue training. If it is necessary to change, the exp name should be changed and the model will be trained from scratch. You can also copy the pitch and features (0/1/2/2b folders) extracted last time to accelerate the training process.

+

diff --git a/docs/en/training_tips_en.md b/docs/en/training_tips_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..2aca8a77a864298a890b8b926505a6eef5f27319

--- /dev/null

+++ b/docs/en/training_tips_en.md

@@ -0,0 +1,65 @@

+Instructions and tips for RVC training

+======================================

+This TIPS explains how data training is done.

+

+# Training flow

+I will explain along the steps in the training tab of the GUI.

+

+## step1

+Set the experiment name here.

+

+You can also set here whether the model should take pitch into account.

+If the model doesn't consider pitch, the model will be lighter, but not suitable for singing.

+

+Data for each experiment is placed in `/logs/your-experiment-name/`.

+

+## step2a

+Loads and preprocesses audio.

+

+### load audio

+If you specify a folder with audio, the audio files in that folder will be read automatically.

+For example, if you specify `C:Users\hoge\voices`, `C:Users\hoge\voices\voice.mp3` will be loaded, but `C:Users\hoge\voices\dir\voice.mp3` will Not loaded.

+

+Since ffmpeg is used internally for reading audio, if the extension is supported by ffmpeg, it will be read automatically.

+After converting to int16 with ffmpeg, convert to float32 and normalize between -1 to 1.

+

+### denoising

+The audio is smoothed by scipy's filtfilt.

+

+### Audio Split

+First, the input audio is divided by detecting parts of silence that last longer than a certain period (max_sil_kept=5 seconds?). After splitting the audio on silence, split the audio every 4 seconds with an overlap of 0.3 seconds. For audio separated within 4 seconds, after normalizing the volume, convert the wav file to `/logs/your-experiment-name/0_gt_wavs` and then convert it to 16k sampling rate to `/logs/your-experiment-name/1_16k_wavs ` as a wav file.

+

+## step2b

+### Extract pitch

+Extract pitch information from wav files. Extract the pitch information (=f0) using the method built into parselmouth or pyworld and save it in `/logs/your-experiment-name/2a_f0`. Then logarithmically convert the pitch information to an integer between 1 and 255 and save it in `/logs/your-experiment-name/2b-f0nsf`.

+

+### Extract feature_print

+Convert the wav file to embedding in advance using HuBERT. Read the wav file saved in `/logs/your-experiment-name/1_16k_wavs`, convert the wav file to 256-dimensional features with HuBERT, and save in npy format in `/logs/your-experiment-name/3_feature256`.

+

+## step3

+train the model.

+### Glossary for Beginners

+In deep learning, the data set is divided and the learning proceeds little by little. In one model update (step), batch_size data are retrieved and predictions and error corrections are performed. Doing this once for a dataset counts as one epoch.

+

+Therefore, the learning time is the learning time per step x (the number of data in the dataset / batch size) x the number of epochs. In general, the larger the batch size, the more stable the learning becomes (learning time per step ÷ batch size) becomes smaller, but it uses more GPU memory. GPU RAM can be checked with the nvidia-smi command. Learning can be done in a short time by increasing the batch size as much as possible according to the machine of the execution environment.

+

+### Specify pretrained model

+RVC starts training the model from pretrained weights instead of from 0, so it can be trained with a small dataset.

+

+By default

+

+- If you consider pitch, it loads `rvc-location/pretrained/f0G40k.pth` and `rvc-location/pretrained/f0D40k.pth`.

+- If you don't consider pitch, it loads `rvc-location/pretrained/G40k.pth` and `rvc-location/pretrained/D40k.pth`.

+

+When learning, model parameters are saved in `logs/your-experiment-name/G_{}.pth` and `logs/your-experiment-name/D_{}.pth` for each save_every_epoch, but by specifying this path, you can start learning. You can restart or start training from model weights learned in a different experiment.

+

+### learning index

+RVC saves the HuBERT feature values used during training, and during inference, searches for feature values that are similar to the feature values used during learning to perform inference. In order to perform this search at high speed, the index is learned in advance.

+For index learning, we use the approximate neighborhood search library faiss. Read the feature value of `logs/your-experiment-name/3_feature256` and use it to learn the index, and save it as `logs/your-experiment-name/add_XXX.index`.

+

+(From the 20230428update version, it is read from the index, and saving / specifying is no longer necessary.)

+

+### Button description

+- Train model: After executing step2b, press this button to train the model.

+- Train feature index: After training the model, perform index learning.

+- One-click training: step2b, model training and feature index training all at once.

\ No newline at end of file

diff --git a/docs/fr/README.fr.md b/docs/fr/README.fr.md

new file mode 100644

index 0000000000000000000000000000000000000000..18699232a30bd47dbb3ca2d8d5c15ba62ae537b6

--- /dev/null

+++ b/docs/fr/README.fr.md

@@ -0,0 +1,166 @@

+

+

+# Retrieval-based-Voice-Conversion-WebUI

+Un framework simple et facile à utiliser pour la conversion vocale (modificateur de voix) basé sur VITS

+

+

+

+[](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI)

+

+

+

+[](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/blob/main/LICENSE)

+[](https://huggingface.co/lj1995/VoiceConversionWebUI/tree/main/)

+

+[](https://discord.gg/HcsmBBGyVk)

+

+[**FAQ**](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/wiki/%E5%B8%B8%E8%A7%81%E9%97%AE%E9%A2%98%E8%A7%A3%E7%AD%94) | [**AutoDL·Formation d'un chanteur AI pour 5 centimes**](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/wiki/Autodl%E8%AE%AD%E7%BB%83RVC%C2%B7AI%E6%AD%8C%E6%89%8B%E6%95%99%E7%A8%8B) | [**Enregistrement des expériences comparatives**](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/wiki/%E5%AF%B9%E7%85%A7%E5%AE%9E%E9%AA%8C%C2%B7%E5%AE%9E%E9%AA%8C%E8%AE%B0%E5%BD%95) | [**Démonstration en ligne**](https://huggingface.co/spaces/Ricecake123/RVC-demo)

+

+

+

+------

+

+[**English**](../en/README.en.md) | [ **中文简体**](../../README.md) | [**日本語**](../jp/README.ja.md) | [**한국어**](../kr/README.ko.md) ([**韓國語**](../kr/README.ko.han.md)) | [**Français**](../fr/README.fr.md) | [**Turc**](../tr/README.tr.md) | [**Português**](../pt/README.pt.md)

+

+Cliquez ici pour voir notre [vidéo de démonstration](https://www.bilibili.com/video/BV1pm4y1z7Gm/) !

+

+> Conversion vocale en temps réel avec RVC : [w-okada/voice-changer](https://github.com/w-okada/voice-changer)

+

+> Le modèle de base est formé avec près de 50 heures de données VCTK de haute qualité et open source. Aucun souci concernant les droits d'auteur, n'hésitez pas à l'utiliser.

+

+> Attendez-vous au modèle de base RVCv3 : plus de paramètres, plus de données, de meilleurs résultats, une vitesse d'inférence presque identique, et nécessite moins de données pour la formation.

+

+## Introduction

+Ce dépôt a les caractéristiques suivantes :

++ Utilise le top1 pour remplacer les caractéristiques de la source d'entrée par les caractéristiques de l'ensemble d'entraînement pour éliminer les fuites de timbre vocal.

++ Peut être formé rapidement même sur une carte graphique relativement moins performante.

++ Obtient de bons résultats même avec peu de données pour la formation (il est recommandé de collecter au moins 10 minutes de données vocales avec un faible bruit de fond).

++ Peut changer le timbre vocal en fusionnant des modèles (avec l'aide de l'onglet ckpt-merge).

++ Interface web simple et facile à utiliser.

++ Peut appeler le modèle UVR5 pour séparer rapidement la voix et l'accompagnement.

++ Utilise l'algorithme de pitch vocal le plus avancé [InterSpeech2023-RMVPE](#projets-référencés) pour éliminer les problèmes de voix muette. Meilleurs résultats, plus rapide que crepe_full, et moins gourmand en ressources.

++ Support d'accélération pour les cartes AMD et Intel.

+

+## Configuration de l'environnement

+Exécutez les commandes suivantes dans un environnement Python de version 3.8 ou supérieure.

+

+(Windows/Linux)

+Installez d'abord les dépendances principales via pip :

+```bash

+# Installez Pytorch et ses dépendances essentielles, sautez si déjà installé.

+# Voir : https://pytorch.org/get-started/locally/

+pip install torch torchvision torchaudio

+

+# Pour les utilisateurs de Windows avec une architecture Nvidia Ampere (RTX30xx), en se basant sur l'expérience #21, spécifiez la version CUDA correspondante pour Pytorch.

+pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu117

+

+# Pour Linux + carte AMD, utilisez cette version de Pytorch:

+pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/rocm5.4.2

+```

+

+Vous pouvez utiliser poetry pour installer les dépendances :

+```bash

+# Installez l'outil de gestion des dépendances Poetry, sautez si déjà installé.

+# Voir : https://python-poetry.org/docs/#installation

+curl -sSL https://install.python-poetry.org | python3 -

+

+# Installez les dépendances avec poetry.

+poetry install

+```

+

+Ou vous pouvez utiliser pip pour installer les dépendances :

+```bash

+# Cartes Nvidia :

+pip install -r requirements/main.txt

+

+# Cartes AMD/Intel :

+pip install -r requirements/dml.txt

+

+# Cartes Intel avec IPEX

+pip install -r requirements/ipex.txt

+

+# Cartes AMD sur Linux (ROCm)

+pip install -r requirements/amd.txt

+```

+

+------

+Les utilisateurs de Mac peuvent exécuter `run.sh` pour installer les dépendances :

+```bash

+sh ./run.sh

+```

+

+## Préparation d'autres modèles pré-entraînés

+RVC nécessite d'autres modèles pré-entraînés pour l'inférence et la formation.

+

+```bash

+#Télécharger tous les modèles depuis https://huggingface.co/lj1995/VoiceConversionWebUI/tree/main/

+python tools/download_models.py

+```

+

+Ou vous pouvez télécharger ces modèles depuis notre [espace Hugging Face](https://huggingface.co/lj1995/VoiceConversionWebUI/tree/main/).

+

+Voici une liste des modèles et autres fichiers requis par RVC :

+```bash

+./assets/hubert/hubert_base.pt

+

+./assets/pretrained

+

+./assets/uvr5_weights

+

+# Pour tester la version v2 du modèle, téléchargez également :

+

+./assets/pretrained_v2

+

+# Si vous souhaitez utiliser le dernier algorithme RMVPE de pitch vocal, téléchargez les paramètres du modèle de pitch et placez-les dans le répertoire racine de RVC.

+

+https://huggingface.co/lj1995/VoiceConversionWebUI/blob/main/rmvpe.pt

+

+ # Les utilisateurs de cartes AMD/Intel nécessitant l'environnement DML doivent télécharger :

+

+ https://huggingface.co/lj1995/VoiceConversionWebUI/blob/main/rmvpe.onnx

+

+```

+Pour les utilisateurs d'Intel ARC avec IPEX, exécutez d'abord `source /opt/intel/oneapi/setvars.sh`.

+Ensuite, exécutez la commande suivante pour démarrer WebUI :

+```bash

+python web.py

+```

+

+Si vous utilisez Windows ou macOS, vous pouvez télécharger et extraire `RVC-beta.7z`. Les utilisateurs de Windows peuvent exécuter `go-web.bat` pour démarrer WebUI, tandis que les utilisateurs de macOS peuvent exécuter `sh ./run.sh`.

+

+## Compatibilité ROCm pour les cartes AMD (seulement Linux)

+Installez tous les pilotes décrits [ici](https://rocm.docs.amd.com/en/latest/deploy/linux/os-native/install.html).

+

+Sur Arch utilisez pacman pour installer le pilote:

+````

+pacman -S rocm-hip-sdk rocm-opencl-sdk

+````

+

+Vous devrez peut-être créer ces variables d'environnement (par exemple avec RX6700XT):

+````

+export ROCM_PATH=/opt/rocm

+export HSA_OVERRIDE_GFX_VERSION=10.3.0

+````

+Assurez-vous que votre utilisateur est dans les groupes `render` et `video`:

+````

+sudo usermod -aG render $USERNAME

+sudo usermod -aG video $USERNAME

+````

+Enfin vous pouvez exécuter WebUI:

+```bash

+python web.py

+```

+

+## Crédits

++ [ContentVec](https://github.com/auspicious3000/contentvec/)

++ [VITS](https://github.com/jaywalnut310/vits)

++ [HIFIGAN](https://github.com/jik876/hifi-gan)

++ [Gradio](https://github.com/gradio-app/gradio)

++ [Ultimate Vocal Remover](https://github.com/Anjok07/ultimatevocalremovergui)

++ [audio-slicer](https://github.com/openvpi/audio-slicer)

++ [Extraction de la hauteur vocale : RMVPE](https://github.com/Dream-High/RMVPE)

+ + Le modèle pré-entraîné a été formé et testé par [yxlllc](https://github.com/yxlllc/RMVPE) et [RVC-Boss](https://github.com/RVC-Boss).

+

+## Remerciements à tous les contributeurs pour leurs efforts

+[](https://github.com/fumiama/Retrieval-based-Voice-Conversion-WebUI/graphs/contributors)

diff --git a/docs/fr/faiss_tips_fr.md b/docs/fr/faiss_tips_fr.md

new file mode 100644

index 0000000000000000000000000000000000000000..7fde76a46022e9a5f6ae5fd7315176dabbd375d4

--- /dev/null

+++ b/docs/fr/faiss_tips_fr.md

@@ -0,0 +1,105 @@

+Conseils de réglage pour faiss

+==================

+# À propos de faiss

+faiss est une bibliothèque de recherches de voisins pour les vecteurs denses, développée par Facebook Research, qui implémente efficacement de nombreuses méthodes de recherche de voisins approximatifs.

+La recherche de voisins approximatifs trouve rapidement des vecteurs similaires tout en sacrifiant une certaine précision.

+

+## faiss dans RVC

+Dans RVC, pour l'incorporation des caractéristiques converties par HuBERT, nous recherchons des incorporations similaires à l'incorporation générée à partir des données d'entraînement et les mixons pour obtenir une conversion plus proche de la parole originale. Cependant, cette recherche serait longue si elle était effectuée de manière naïve, donc une conversion à haute vitesse est réalisée en utilisant une recherche de voisinage approximatif.

+

+# Vue d'ensemble de la mise en œuvre

+Dans '/logs/votre-expérience/3_feature256' où le modèle est situé, les caractéristiques extraites par HuBERT de chaque donnée vocale sont situées.

+À partir de là, nous lisons les fichiers npy dans un ordre trié par nom de fichier et concaténons les vecteurs pour créer big_npy. (Ce vecteur a la forme [N, 256].)

+Après avoir sauvegardé big_npy comme /logs/votre-expérience/total_fea.npy, nous l'entraînons avec faiss.

+

+Dans cet article, j'expliquerai la signification de ces paramètres.

+

+# Explication de la méthode

+## Usine d'index

+Une usine d'index est une notation unique de faiss qui exprime un pipeline qui relie plusieurs méthodes de recherche de voisinage approximatif sous forme de chaîne.

+Cela vous permet d'essayer diverses méthodes de recherche de voisinage approximatif simplement en changeant la chaîne de l'usine d'index.

+Dans RVC, elle est utilisée comme ceci :

+

+```python

+index = faiss.index_factory(256, "IVF%s,Flat" % n_ivf)

+```

+

+Parmi les arguments de index_factory, le premier est le nombre de dimensions du vecteur, le second est la chaîne de l'usine d'index, et le troisième est la distance à utiliser.

+

+Pour une notation plus détaillée :

+https://github.com/facebookresearch/faiss/wiki/The-index-factory

+

+## Index pour la distance

+Il existe deux index typiques utilisés comme similarité de l'incorporation comme suit :

+

+- Distance euclidienne (METRIC_L2)

+- Produit intérieur (METRIC_INNER_PRODUCT)

+

+La distance euclidienne prend la différence au carré dans chaque dimension, somme les différences dans toutes les dimensions, puis prend la racine carrée. C'est la même chose que la distance en 2D et 3D que nous utilisons au quotidien.

+Le produit intérieur n'est pas utilisé comme index de similarité tel quel, et la similarité cosinus qui prend le produit intérieur après avoir été normalisé par la norme L2 est généralement utilisée.

+

+Lequel est le mieux dépend du cas, mais la similarité cosinus est souvent utilisée dans l'incorporation obtenue par word2vec et des modèles de récupération d'images similaires appris par ArcFace. Si vous voulez faire une normalisation l2 sur le vecteur X avec numpy, vous pouvez le faire avec le code suivant avec eps suffisamment petit pour éviter une division par 0.

+

+```python

+X_normed = X / np.maximum(eps, np.linalg.norm(X, ord=2, axis=-1, keepdims=True))

+```

+

+De plus, pour l'usine d'index, vous pouvez changer l'index de distance utilisé pour le calcul en choisissant la valeur à passer comme troisième argument.

+

+```python

+index = faiss.index_factory(dimention, texte, faiss.METRIC_INNER_PRODUCT)

+```

+

+## IVF

+IVF (Inverted file indexes) est un algorithme similaire à l'index inversé dans la recherche en texte intégral.

+Lors de l'apprentissage, la cible de recherche est regroupée avec kmeans, et une partition de Voronoi est effectuée en utilisant le centre du cluster. Chaque point de données est attribué à un cluster, nous créons donc un dictionnaire qui permet de rechercher les points de données à partir des clusters.

+

+Par exemple, si des clusters sont attribués comme suit :

+|index|Cluster|

+|-----|-------|

+|1|A|

+|2|B|

+|3|A|

+|4|C|

+|5|B|

+

+L'index inversé résultant ressemble à ceci :

+

+|cluster|index|

+|-------|-----|

+|A|1, 3|

+|B|2, 5|

+|C|4|

+

+Lors de la recherche, nous recherchons d'abord n_probe clusters parmi les clusters, puis nous calculons les distances pour les points de données appartenant à chaque cluster.

+