Runtime error

Upload 6 files

- README.md +5 -6
- app.py +49 -0
- code.jpg +0 -0
- gitattributes.txt +34 -0
- output.jpg +0 -0
- requirements.txt +3 -0

README.md

CHANGED

@@ -1,13 +1,12 @@

```diff
 ---
-title:
-emoji:
-colorFrom:
-colorTo:
+title: CodeExplainerPython
+emoji: 🐨
+colorFrom: gray
+colorTo: red
 sdk: gradio
-sdk_version: 3.
+sdk_version: 3.19.1
 app_file: app.py
 pinned: false
-license: mit
 ---
 
 Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference
```
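The README header is the YAML block between the two `---` lines, which Spaces reads as the app's configuration. As a rough illustration of what that block contains, the sketch below extracts the `key: value` pairs using only the standard library. It assumes flat, single-line entries like the ones in this commit and is not a YAML parser; `read_front_matter` is a hypothetical helper, not part of any Hugging Face library.

```python
from __future__ import annotations


def read_front_matter(readme_text: str) -> dict[str, str]:
    """Return the key/value pairs between the leading '---' fences.

    Assumes flat 'key: value' lines; nested YAML is not handled.
    """
    lines = readme_text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}  # no front matter block at the top of the file
    config: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break  # closing fence reached; ignore the Markdown body
        key, sep, value = line.partition(":")
        if sep:  # skip lines that contain no colon
            config[key.strip()] = value.strip()
    return config


README = """---
title: CodeExplainerPython
emoji: 🐨
colorFrom: gray
colorTo: red
sdk: gradio
sdk_version: 3.19.1
app_file: app.py
pinned: false
---

Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference
"""

if __name__ == "__main__":
    meta = read_front_matter(README)
    print(meta["sdk"], meta["sdk_version"])
```

Run against the updated header, this prints `gradio 3.19.1`. Splitting on the first colon only keeps values that themselves contain colons intact.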

app.py

ADDED

|

@@ -0,0 +1,49 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import gradio as gr

|

| 2 |

+

|

| 3 |

+

from transformers import (

|

| 4 |

+

AutoModelForSeq2SeqLM,

|

| 5 |

+

AutoTokenizer,

|

| 6 |

+

AutoConfig,

|

| 7 |

+

pipeline,

|

| 8 |

+

)

|

| 9 |

+

|

| 10 |

+

model_name = "sagard21/python-code-explainer"

|

| 11 |

+

|

| 12 |

+

tokenizer = AutoTokenizer.from_pretrained(model_name, padding=True)

|

| 13 |

+

|

| 14 |

+

model = AutoModelForSeq2SeqLM.from_pretrained(model_name)

|

| 15 |

+

|

| 16 |

+

config = AutoConfig.from_pretrained(model_name)

|

| 17 |

+

|

| 18 |

+

model.eval()

|

| 19 |

+

|

| 20 |

+

pipe = pipeline("summarization", model=model_name, config=config, tokenizer=tokenizer)

|

| 21 |

+

|

| 22 |

+

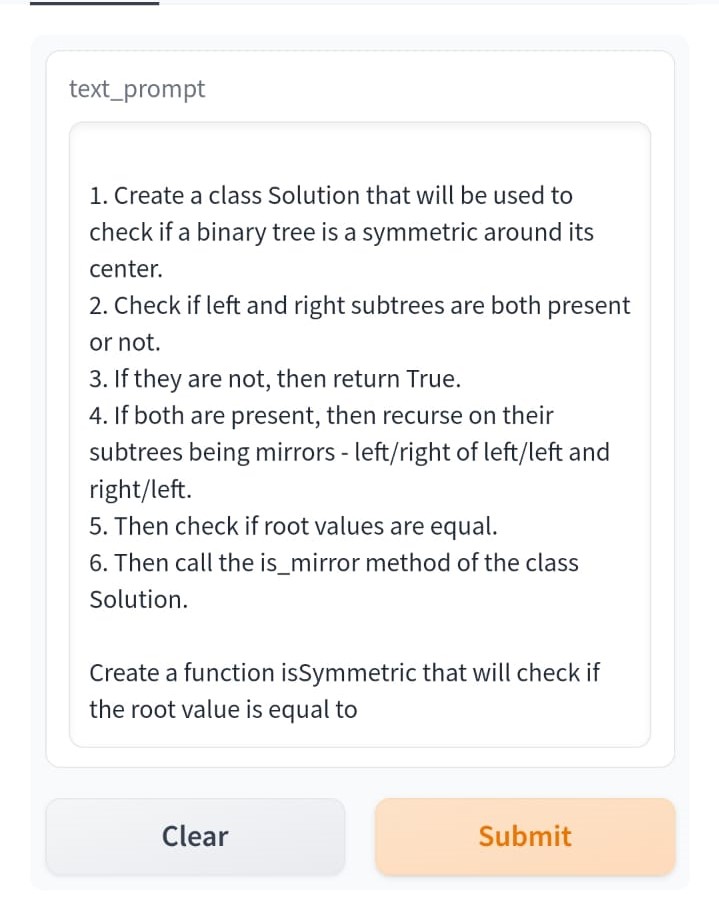

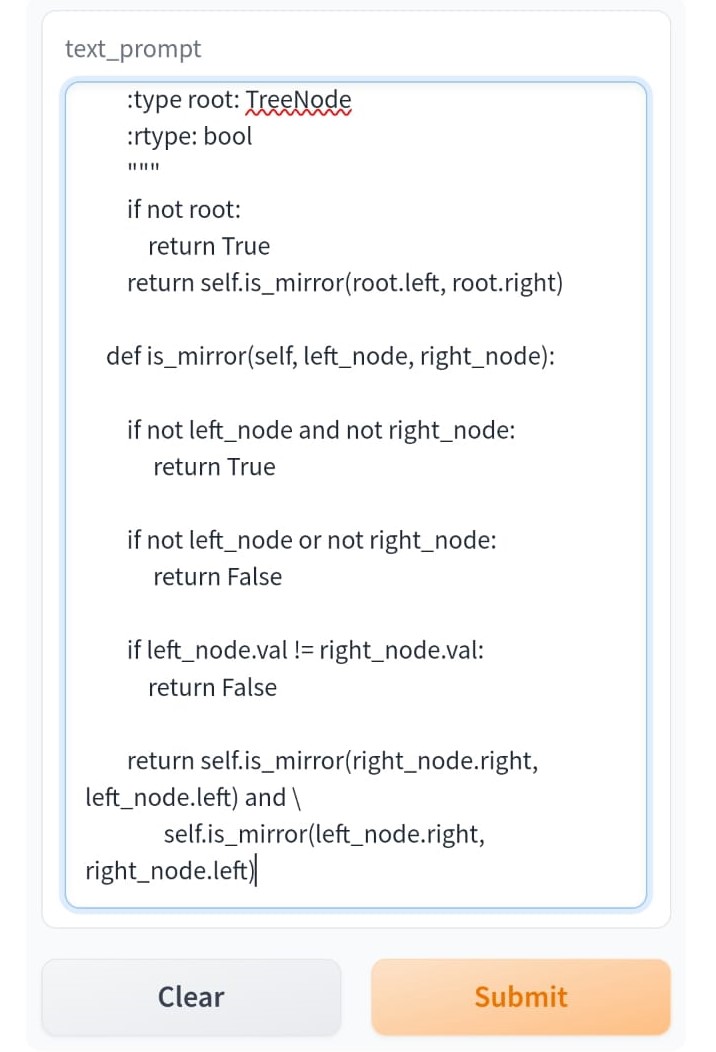

def generate_text(text_prompt):

|

| 23 |

+

response = pipe(text_prompt)

|

| 24 |

+

return response[0]['summary_text']

|

| 25 |

+

|

| 26 |

+

textbox1 = gr.Textbox(value = """

|

| 27 |

+

class Solution(object):

|

| 28 |

+

def isValid(self, s):

|

| 29 |

+

stack = []

|

| 30 |

+

mapping = {")": "(", "}": "{", "]": "["}

|

| 31 |

+

for char in s:

|

| 32 |

+

if char in mapping:

|

| 33 |

+

top_element = stack.pop() if stack else '#'

|

| 34 |

+

if mapping[char] != top_element:

|

| 35 |

+

return False

|

| 36 |

+

else:

|

| 37 |

+

stack.append(char)

|

| 38 |

+

return not stack""")

|

| 39 |

+

|

| 40 |

+

textbox2 = gr.Textbox()

|

| 41 |

+

|

| 42 |

+

if __name__ == "__main__":

|

| 43 |

+

gr.Textbox("The Inference Takes about 1 min 30 seconds")

|

| 44 |

+

with gr.Blocks() as demo:

|

| 45 |

+

gr.Interface(fn = generate_text, inputs = textbox1, outputs = textbox2)

|

| 46 |

+

with gr.Row():

|

| 47 |

+

gr.Image(value = "output.jpg", label = "Sample Explaination in Natural Language")

|

| 48 |

+

gr.Image(value = "code.jpg", label = "Sample Code for Checking if a Binary Tree is Mirrored")

|

| 49 |

+

demo.launch()

|
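`generate_text` depends on the Transformers summarization pipeline returning a list with one dict per input, with the generated text under the `summary_text` key. The sketch below isolates that step so it can be exercised without downloading the model: the summarizer is injected as any callable, and `make_generate_text` and `fake_summarizer` are hypothetical names. The fake only mimics the output shape and does not explain code; in the Space, the injected callable would be `pipe`.

```python
from typing import Callable, Dict, List

# Output shape of the summarization pipeline: one dict per input text.
Summarizer = Callable[[str], List[Dict[str, str]]]


def make_generate_text(summarizer: Summarizer) -> Callable[[str], str]:
    """Wrap a summarizer so the UI callback returns plain text."""

    def generate_text(text_prompt: str) -> str:
        response = summarizer(text_prompt)
        if not response:
            return ""  # defensive guard; the real pipeline returns one entry per input
        return response[0]["summary_text"]

    return generate_text


def fake_summarizer(text: str) -> List[Dict[str, str]]:
    """Hypothetical stand-in for pipe(); mimics only the output structure."""
    stripped = text.strip()
    first_line = stripped.splitlines()[0] if stripped else ""
    return [{"summary_text": f"Explains code starting with: {first_line}"}]


if __name__ == "__main__":
    generate_text = make_generate_text(fake_summarizer)
    print(generate_text("class Solution(object):\n    def isValid(self, s): ..."))
```

Injecting the summarizer keeps the UI callback testable on its own. Separately, passing the already-loaded `model` object to `pipeline(...)` instead of `model_name` would likely avoid loading the weights a second time.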

code.jpg

ADDED


gitattributes.txt

ADDED

@@ -0,0 +1,34 @@

```text
*.7z filter=lfs diff=lfs merge=lfs -text
*.arrow filter=lfs diff=lfs merge=lfs -text
*.bin filter=lfs diff=lfs merge=lfs -text
*.bz2 filter=lfs diff=lfs merge=lfs -text
*.ckpt filter=lfs diff=lfs merge=lfs -text
*.ftz filter=lfs diff=lfs merge=lfs -text
*.gz filter=lfs diff=lfs merge=lfs -text
*.h5 filter=lfs diff=lfs merge=lfs -text
*.joblib filter=lfs diff=lfs merge=lfs -text
*.lfs.* filter=lfs diff=lfs merge=lfs -text
*.mlmodel filter=lfs diff=lfs merge=lfs -text
*.model filter=lfs diff=lfs merge=lfs -text
*.msgpack filter=lfs diff=lfs merge=lfs -text
*.npy filter=lfs diff=lfs merge=lfs -text
*.npz filter=lfs diff=lfs merge=lfs -text
*.onnx filter=lfs diff=lfs merge=lfs -text
*.ot filter=lfs diff=lfs merge=lfs -text
*.parquet filter=lfs diff=lfs merge=lfs -text
*.pb filter=lfs diff=lfs merge=lfs -text
*.pickle filter=lfs diff=lfs merge=lfs -text
*.pkl filter=lfs diff=lfs merge=lfs -text
*.pt filter=lfs diff=lfs merge=lfs -text
*.pth filter=lfs diff=lfs merge=lfs -text
*.rar filter=lfs diff=lfs merge=lfs -text
*.safetensors filter=lfs diff=lfs merge=lfs -text
saved_model/**/* filter=lfs diff=lfs merge=lfs -text
*.tar.* filter=lfs diff=lfs merge=lfs -text
*.tflite filter=lfs diff=lfs merge=lfs -text
*.tgz filter=lfs diff=lfs merge=lfs -text
*.wasm filter=lfs diff=lfs merge=lfs -text
*.xz filter=lfs diff=lfs merge=lfs -text
*.zip filter=lfs diff=lfs merge=lfs -text
*.zst filter=lfs diff=lfs merge=lfs -text
*tfevents* filter=lfs diff=lfs merge=lfs -text
```
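Each rule above routes files that match its pattern through Git LFS. The sketch below approximates which paths those patterns would catch. It assumes the common gitattributes convention that a pattern without a slash matches the file name in any directory, while a pattern with a slash matches the repo-relative path. Python's `fnmatch` is only a stand-in for Git's own matcher: its `*` can cross `/`, and `**` is not treated specially, so edge cases differ. `is_lfs_tracked` is a hypothetical helper. Git reads these rules only from a file named `.gitattributes`, so under the uploaded name `gitattributes.txt` they likely have no effect.

```python
import fnmatch
import posixpath

# Patterns copied from the gitattributes file in this commit (all routed to LFS).
LFS_PATTERNS = [
    "*.7z", "*.arrow", "*.bin", "*.bz2", "*.ckpt", "*.ftz", "*.gz", "*.h5",
    "*.joblib", "*.lfs.*", "*.mlmodel", "*.model", "*.msgpack", "*.npy",
    "*.npz", "*.onnx", "*.ot", "*.parquet", "*.pb", "*.pickle", "*.pkl",
    "*.pt", "*.pth", "*.rar", "*.safetensors", "saved_model/**/*", "*.tar.*",
    "*.tflite", "*.tgz", "*.wasm", "*.xz", "*.zip", "*.zst", "*tfevents*",
]


def is_lfs_tracked(path: str, patterns=LFS_PATTERNS) -> bool:
    """Approximate gitattributes matching for a repo-relative POSIX path.

    Slash-free patterns are compared with the file name only; patterns
    containing a slash are compared with the whole path. This is an
    approximation, not Git's exact wildmatch semantics.
    """
    name = posixpath.basename(path)
    for pattern in patterns:
        target = path if "/" in pattern else name
        if fnmatch.fnmatchcase(target, pattern):
            return True
    return False


if __name__ == "__main__":
    for f in ["app.py", "code.jpg", "pytorch_model.bin", "saved_model/variables/data"]:
        print(f, is_lfs_tracked(f))
```

By this approximation, model weights such as `*.bin` or `*.safetensors` would go to LFS, while `app.py` and the two `.jpg` images in this commit match none of the patterns.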

output.jpg

ADDED


requirements.txt

ADDED

@@ -0,0 +1,3 @@

```text
torch
transformers
gradio
```
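`requirements.txt` lists the three packages without version pins, while the README pins the Gradio SDK to 3.19.1. To check which versions actually resolved in a given environment, a standard-library check along these lines could help. `requirement_names` and `installed_versions` are hypothetical helpers; the name parsing assumes simple one-package-per-line entries and is not a full PEP 508 parser.

```python
import re
from importlib.metadata import PackageNotFoundError, version
from typing import Dict, List, Optional

# Contents of the requirements.txt added in this commit.
REQUIREMENTS = """torch
transformers
gradio
"""


def requirement_names(text: str) -> List[str]:
    """Extract bare package names, ignoring blank lines, comments and specifiers."""
    names = []
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop trailing comments
        if line:
            # Cut at the first whitespace, extra marker, or version operator.
            names.append(re.split(r"[\s\[<>=!~;]", line, maxsplit=1)[0])
    return names


def installed_versions(names: List[str]) -> Dict[str, Optional[str]]:
    """Map each distribution name to its installed version, or None if absent."""
    found: Dict[str, Optional[str]] = {}
    for name in names:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None  # not installed in this environment
    return found


if __name__ == "__main__":
    for name, ver in installed_versions(requirement_names(REQUIREMENTS)).items():
        print(f"{name}: {ver or 'not installed'}")
```

Because the file leaves `torch` and `transformers` unpinned, the reported versions can change between rebuilds of the Space.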