Upload 8 files

- .gitignore +162 -0

- LICENSE +201 -0

- README.md +258 -9

- briarmbg.py +462 -0

- db_examples.py +217 -0

- gradio_demo.py +433 -0

- gradio_demo_bg.py +465 -0

- requirements.txt +6 -10

.gitignore

ADDED

|

@@ -0,0 +1,162 @@

| 1 |

+

*.safetensors

|

| 2 |

+

|

| 3 |

+

# Byte-compiled / optimized / DLL files

|

| 4 |

+

__pycache__/

|

| 5 |

+

*.py[cod]

|

| 6 |

+

*$py.class

|

| 7 |

+

|

| 8 |

+

# C extensions

|

| 9 |

+

*.so

|

| 10 |

+

|

| 11 |

+

# Distribution / packaging

|

| 12 |

+

.Python

|

| 13 |

+

build/

|

| 14 |

+

develop-eggs/

|

| 15 |

+

dist/

|

| 16 |

+

downloads/

|

| 17 |

+

eggs/

|

| 18 |

+

.eggs/

|

| 19 |

+

lib/

|

| 20 |

+

lib64/

|

| 21 |

+

parts/

|

| 22 |

+

sdist/

|

| 23 |

+

var/

|

| 24 |

+

wheels/

|

| 25 |

+

share/python-wheels/

|

| 26 |

+

*.egg-info/

|

| 27 |

+

.installed.cfg

|

| 28 |

+

*.egg

|

| 29 |

+

MANIFEST

|

| 30 |

+

|

| 31 |

+

# PyInstaller

|

| 32 |

+

# Usually these files are written by a python script from a template

|

| 33 |

+

# before PyInstaller builds the exe, so as to inject date/other infos into it.

|

| 34 |

+

*.manifest

|

| 35 |

+

*.spec

|

| 36 |

+

|

| 37 |

+

# Installer logs

|

| 38 |

+

pip-log.txt

|

| 39 |

+

pip-delete-this-directory.txt

|

| 40 |

+

|

| 41 |

+

# Unit test / coverage reports

|

| 42 |

+

htmlcov/

|

| 43 |

+

.tox/

|

| 44 |

+

.nox/

|

| 45 |

+

.coverage

|

| 46 |

+

.coverage.*

|

| 47 |

+

.cache

|

| 48 |

+

nosetests.xml

|

| 49 |

+

coverage.xml

|

| 50 |

+

*.cover

|

| 51 |

+

*.py,cover

|

| 52 |

+

.hypothesis/

|

| 53 |

+

.pytest_cache/

|

| 54 |

+

cover/

|

| 55 |

+

|

| 56 |

+

# Translations

|

| 57 |

+

*.mo

|

| 58 |

+

*.pot

|

| 59 |

+

|

| 60 |

+

# Django stuff:

|

| 61 |

+

*.log

|

| 62 |

+

local_settings.py

|

| 63 |

+

db.sqlite3

|

| 64 |

+

db.sqlite3-journal

|

| 65 |

+

|

| 66 |

+

# Flask stuff:

|

| 67 |

+

instance/

|

| 68 |

+

.webassets-cache

|

| 69 |

+

|

| 70 |

+

# Scrapy stuff:

|

| 71 |

+

.scrapy

|

| 72 |

+

|

| 73 |

+

# Sphinx documentation

|

| 74 |

+

docs/_build/

|

| 75 |

+

|

| 76 |

+

# PyBuilder

|

| 77 |

+

.pybuilder/

|

| 78 |

+

target/

|

| 79 |

+

|

| 80 |

+

# Jupyter Notebook

|

| 81 |

+

.ipynb_checkpoints

|

| 82 |

+

|

| 83 |

+

# IPython

|

| 84 |

+

profile_default/

|

| 85 |

+

ipython_config.py

|

| 86 |

+

|

| 87 |

+

# pyenv

|

| 88 |

+

# For a library or package, you might want to ignore these files since the code is

|

| 89 |

+

# intended to run in multiple environments; otherwise, check them in:

|

| 90 |

+

# .python-version

|

| 91 |

+

|

| 92 |

+

# pipenv

|

| 93 |

+

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

|

| 94 |

+

# However, in case of collaboration, if having platform-specific dependencies or dependencies

|

| 95 |

+

# having no cross-platform support, pipenv may install dependencies that don't work, or not

|

| 96 |

+

# install all needed dependencies.

|

| 97 |

+

#Pipfile.lock

|

| 98 |

+

|

| 99 |

+

# poetry

|

| 100 |

+

# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.

|

| 101 |

+

# This is especially recommended for binary packages to ensure reproducibility, and is more

|

| 102 |

+

# commonly ignored for libraries.

|

| 103 |

+

# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control

|

| 104 |

+

#poetry.lock

|

| 105 |

+

|

| 106 |

+

# pdm

|

| 107 |

+

# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.

|

| 108 |

+

#pdm.lock

|

| 109 |

+

# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it

|

| 110 |

+

# in version control.

|

| 111 |

+

# https://pdm.fming.dev/#use-with-ide

|

| 112 |

+

.pdm.toml

|

| 113 |

+

|

| 114 |

+

# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm

|

| 115 |

+

__pypackages__/

|

| 116 |

+

|

| 117 |

+

# Celery stuff

|

| 118 |

+

celerybeat-schedule

|

| 119 |

+

celerybeat.pid

|

| 120 |

+

|

| 121 |

+

# SageMath parsed files

|

| 122 |

+

*.sage.py

|

| 123 |

+

|

| 124 |

+

# Environments

|

| 125 |

+

.env

|

| 126 |

+

.venv

|

| 127 |

+

env/

|

| 128 |

+

venv/

|

| 129 |

+

ENV/

|

| 130 |

+

env.bak/

|

| 131 |

+

venv.bak/

|

| 132 |

+

|

| 133 |

+

# Spyder project settings

|

| 134 |

+

.spyderproject

|

| 135 |

+

.spyproject

|

| 136 |

+

|

| 137 |

+

# Rope project settings

|

| 138 |

+

.ropeproject

|

| 139 |

+

|

| 140 |

+

# mkdocs documentation

|

| 141 |

+

/site

|

| 142 |

+

|

| 143 |

+

# mypy

|

| 144 |

+

.mypy_cache/

|

| 145 |

+

.dmypy.json

|

| 146 |

+

dmypy.json

|

| 147 |

+

|

| 148 |

+

# Pyre type checker

|

| 149 |

+

.pyre/

|

| 150 |

+

|

| 151 |

+

# pytype static type analyzer

|

| 152 |

+

.pytype/

|

| 153 |

+

|

| 154 |

+

# Cython debug symbols

|

| 155 |

+

cython_debug/

|

| 156 |

+

|

| 157 |

+

# PyCharm

|

| 158 |

+

# JetBrains specific template is maintained in a separate JetBrains.gitignore that can

|

| 159 |

+

# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore

|

| 160 |

+

# and can be added to the global gitignore or merged into this file. For a more nuclear

|

| 161 |

+

# option (not recommended) you can uncomment the following to ignore the entire idea folder.

|

| 162 |

+

.idea/

|

LICENSE

ADDED

|

@@ -0,0 +1,201 @@

| 1 |

+

Apache License

|

| 2 |

+

Version 2.0, January 2004

|

| 3 |

+

http://www.apache.org/licenses/

|

| 4 |

+

|

| 5 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 6 |

+

|

| 7 |

+

1. Definitions.

|

| 8 |

+

|

| 9 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 10 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 11 |

+

|

| 12 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 13 |

+

the copyright owner that is granting the License.

|

| 14 |

+

|

| 15 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 16 |

+

other entities that control, are controlled by, or are under common

|

| 17 |

+

control with that entity. For the purposes of this definition,

|

| 18 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 19 |

+

direction or management of such entity, whether by contract or

|

| 20 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 21 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 22 |

+

|

| 23 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 24 |

+

exercising permissions granted by this License.

|

| 25 |

+

|

| 26 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 27 |

+

including but not limited to software source code, documentation

|

| 28 |

+

source, and configuration files.

|

| 29 |

+

|

| 30 |

+

"Object" form shall mean any form resulting from mechanical

|

| 31 |

+

transformation or translation of a Source form, including but

|

| 32 |

+

not limited to compiled object code, generated documentation,

|

| 33 |

+

and conversions to other media types.

|

| 34 |

+

|

| 35 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 36 |

+

Object form, made available under the License, as indicated by a

|

| 37 |

+

copyright notice that is included in or attached to the work

|

| 38 |

+

(an example is provided in the Appendix below).

|

| 39 |

+

|

| 40 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 41 |

+

form, that is based on (or derived from) the Work and for which the

|

| 42 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 43 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 44 |

+

of this License, Derivative Works shall not include works that remain

|

| 45 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 46 |

+

the Work and Derivative Works thereof.

|

| 47 |

+

|

| 48 |

+

"Contribution" shall mean any work of authorship, including

|

| 49 |

+

the original version of the Work and any modifications or additions

|

| 50 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 51 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 52 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 53 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 54 |

+

means any form of electronic, verbal, or written communication sent

|

| 55 |

+

to the Licensor or its representatives, including but not limited to

|

| 56 |

+

communication on electronic mailing lists, source code control systems,

|

| 57 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 58 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 59 |

+

excluding communication that is conspicuously marked or otherwise

|

| 60 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 61 |

+

|

| 62 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 63 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 64 |

+

subsequently incorporated within the Work.

|

| 65 |

+

|

| 66 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 67 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 68 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 69 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 70 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 71 |

+

Work and such Derivative Works in Source or Object form.

|

| 72 |

+

|

| 73 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 74 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 75 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 76 |

+

(except as stated in this section) patent license to make, have made,

|

| 77 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 78 |

+

where such license applies only to those patent claims licensable

|

| 79 |

+

by such Contributor that are necessarily infringed by their

|

| 80 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 81 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 82 |

+

institute patent litigation against any entity (including a

|

| 83 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 84 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 85 |

+

or contributory patent infringement, then any patent licenses

|

| 86 |

+

granted to You under this License for that Work shall terminate

|

| 87 |

+

as of the date such litigation is filed.

|

| 88 |

+

|

| 89 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 90 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 91 |

+

modifications, and in Source or Object form, provided that You

|

| 92 |

+

meet the following conditions:

|

| 93 |

+

|

| 94 |

+

(a) You must give any other recipients of the Work or

|

| 95 |

+

Derivative Works a copy of this License; and

|

| 96 |

+

|

| 97 |

+

(b) You must cause any modified files to carry prominent notices

|

| 98 |

+

stating that You changed the files; and

|

| 99 |

+

|

| 100 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 101 |

+

that You distribute, all copyright, patent, trademark, and

|

| 102 |

+

attribution notices from the Source form of the Work,

|

| 103 |

+

excluding those notices that do not pertain to any part of

|

| 104 |

+

the Derivative Works; and

|

| 105 |

+

|

| 106 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 107 |

+

distribution, then any Derivative Works that You distribute must

|

| 108 |

+

include a readable copy of the attribution notices contained

|

| 109 |

+

within such NOTICE file, excluding those notices that do not

|

| 110 |

+

pertain to any part of the Derivative Works, in at least one

|

| 111 |

+

of the following places: within a NOTICE text file distributed

|

| 112 |

+

as part of the Derivative Works; within the Source form or

|

| 113 |

+

documentation, if provided along with the Derivative Works; or,

|

| 114 |

+

within a display generated by the Derivative Works, if and

|

| 115 |

+

wherever such third-party notices normally appear. The contents

|

| 116 |

+

of the NOTICE file are for informational purposes only and

|

| 117 |

+

do not modify the License. You may add Your own attribution

|

| 118 |

+

notices within Derivative Works that You distribute, alongside

|

| 119 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 120 |

+

that such additional attribution notices cannot be construed

|

| 121 |

+

as modifying the License.

|

| 122 |

+

|

| 123 |

+

You may add Your own copyright statement to Your modifications and

|

| 124 |

+

may provide additional or different license terms and conditions

|

| 125 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 126 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 127 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 128 |

+

the conditions stated in this License.

|

| 129 |

+

|

| 130 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 131 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 132 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 133 |

+

this License, without any additional terms or conditions.

|

| 134 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 135 |

+

the terms of any separate license agreement you may have executed

|

| 136 |

+

with Licensor regarding such Contributions.

|

| 137 |

+

|

| 138 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 139 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 140 |

+

except as required for reasonable and customary use in describing the

|

| 141 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 142 |

+

|

| 143 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 144 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 145 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 146 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 147 |

+

implied, including, without limitation, any warranties or conditions

|

| 148 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 149 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 150 |

+

appropriateness of using or redistributing the Work and assume any

|

| 151 |

+

risks associated with Your exercise of permissions under this License.

|

| 152 |

+

|

| 153 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 154 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 155 |

+

unless required by applicable law (such as deliberate and grossly

|

| 156 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 157 |

+

liable to You for damages, including any direct, indirect, special,

|

| 158 |

+

incidental, or consequential damages of any character arising as a

|

| 159 |

+

result of this License or out of the use or inability to use the

|

| 160 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 161 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 162 |

+

other commercial damages or losses), even if such Contributor

|

| 163 |

+

has been advised of the possibility of such damages.

|

| 164 |

+

|

| 165 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 166 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 167 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 168 |

+

or other liability obligations and/or rights consistent with this

|

| 169 |

+

License. However, in accepting such obligations, You may act only

|

| 170 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 171 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 172 |

+

defend, and hold each Contributor harmless for any liability

|

| 173 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 174 |

+

of your accepting any such warranty or additional liability.

|

| 175 |

+

|

| 176 |

+

END OF TERMS AND CONDITIONS

|

| 177 |

+

|

| 178 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 179 |

+

|

| 180 |

+

To apply the Apache License to your work, attach the following

|

| 181 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 182 |

+

replaced with your own identifying information. (Don't include

|

| 183 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 184 |

+

comment syntax for the file format. We also recommend that a

|

| 185 |

+

file or class name and description of purpose be included on the

|

| 186 |

+

same "printed page" as the copyright notice for easier

|

| 187 |

+

identification within third-party archives.

|

| 188 |

+

|

| 189 |

+

Copyright [yyyy] [name of copyright owner]

|

| 190 |

+

|

| 191 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 192 |

+

you may not use this file except in compliance with the License.

|

| 193 |

+

You may obtain a copy of the License at

|

| 194 |

+

|

| 195 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 196 |

+

|

| 197 |

+

Unless required by applicable law or agreed to in writing, software

|

| 198 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 199 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 200 |

+

See the License for the specific language governing permissions and

|

| 201 |

+

limitations under the License.

|

README.md

CHANGED

|

@@ -1,12 +1,261 @@

| 1 |

---

|

| 2 |

-

|

| 3 |

-

|

| 4 |

-

|

| 5 |

-

|

| 6 |

-

|

| 7 |

-

|

| 8 |

-

|

| 9 |

-

| 10 |

---

|

| 11 |

|

| 12 |

-

| 1 |

+

# IC-Light

|

| 2 |

+

|

| 3 |

+

IC-Light is a project to manipulate the illumination of images.

|

| 4 |

+

|

| 5 |

+

The name "IC-Light" stands for **"Imposing Consistent Light"** (we will briefly describe this at the end of this page).

|

| 6 |

+

|

| 7 |

+

Currently, we release two types of models: a text-conditioned relighting model and a background-conditioned model. Both types take foreground images as inputs.

|

| 8 |

+

|

| 9 |

+

**Note that "iclightai dot com" is a scam website. They have no relationship with us. Do not give scam websites money! This GitHub repo is the only official IC-Light.**

|

| 10 |

+

|

| 11 |

+

# News

|

| 12 |

+

|

| 13 |

+

[Alternative model](https://github.com/lllyasviel/IC-Light/discussions/109) for stronger illumination modifications.

|

| 14 |

+

|

| 15 |

+

Some news about flux is [here](https://github.com/lllyasviel/IC-Light/discussions/98). (A fix [update](https://github.com/lllyasviel/IC-Light/discussions/98#discussioncomment-11370266) was added on Nov 25; more demos will be uploaded soon.)

|

| 16 |

+

|

| 17 |

+

# Get Started

|

| 18 |

+

|

| 19 |

+

The script below will run the text-conditioned relighting model:

|

| 20 |

+

|

| 21 |

+

git clone https://github.com/lllyasviel/IC-Light.git

|

| 22 |

+

cd IC-Light

|

| 23 |

+

conda create -n iclight python=3.10

|

| 24 |

+

conda activate iclight

|

| 25 |

+

pip install torch torchvision --index-url https://download.pytorch.org/whl/cu121

|

| 26 |

+

pip install -r requirements.txt

|

| 27 |

+

python gradio_demo.py

|

| 28 |

+

|

| 29 |

+

Or, to use the background-conditioned demo:

|

| 30 |

+

|

| 31 |

+

python gradio_demo_bg.py

|

| 32 |

+

|

| 33 |

+

Model downloading is automatic.

|

| 34 |

+

|

| 35 |

+

Note that "gradio_demo.py" has an official [Hugging Face Space here](https://huggingface.co/spaces/lllyasviel/IC-Light).

|

| 36 |

+

|

| 37 |

+

# Screenshot

|

| 38 |

+

|

| 39 |

+

### Text-Conditioned Model

|

| 40 |

+

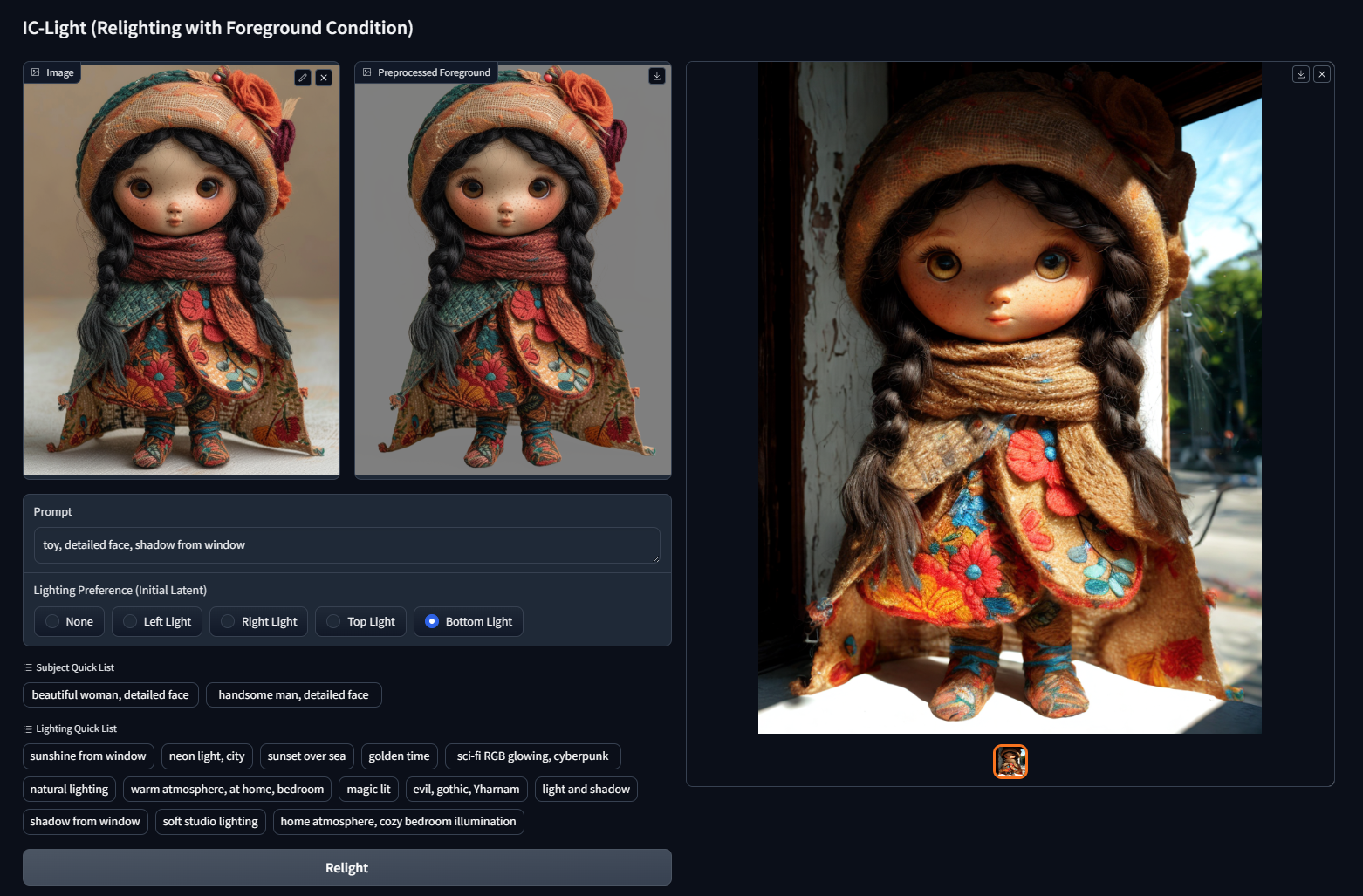

|

| 41 |

+

(Note that the "Lighting Preference" options are just initial latents, e.g., if the Lighting Preference is "Left", then the initial latent is white on the left and black on the right.)
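
To make the note above concrete, here is a minimal NumPy sketch of how such a "left white, right black" starting image could be built for each preference. The function name `make_lighting_gradient` and its parameters are hypothetical and only for illustration; they are not taken from `gradio_demo.py`, and the step that turns this image into an actual latent is not shown.

```python
import numpy as np


def make_lighting_gradient(preference: str, height: int, width: int) -> np.ndarray:
    """Return an (H, W, 3) uint8 image: white on the lit side, fading to black.

    Hypothetical helper for illustration only.
    """
    if preference == "Left":
        ramp = np.tile(np.linspace(255, 0, width), (height, 1))
    elif preference == "Right":
        ramp = np.tile(np.linspace(0, 255, width), (height, 1))
    elif preference == "Top":
        ramp = np.tile(np.linspace(255, 0, height)[:, None], (1, width))
    elif preference == "Bottom":
        ramp = np.tile(np.linspace(0, 255, height)[:, None], (1, width))
    else:
        raise ValueError(f"unknown lighting preference: {preference}")
    # Repeat the single-channel ramp across RGB.
    return np.stack([ramp] * 3, axis=-1).astype(np.uint8)


left = make_lighting_gradient("Left", 4, 8)
print(left.shape, left[0, 0, 0], left[0, -1, 0])
```

A linear ramp is just one simple choice here; any image that is bright on the preferred side would express the same idea.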

|

| 42 |

+

|

| 43 |

---

|

| 44 |

+

|

| 45 |

+

**Prompt: beautiful woman, detailed face, warm atmosphere, at home, bedroom**

|

| 46 |

+

|

| 47 |

+

Lighting Preference: Left

|

| 48 |

+

|

| 49 |

+

|

| 50 |

+

|

| 51 |

+

---

|

| 52 |

+

|

| 53 |

+

**Prompt: beautiful woman, detailed face, sunshine from window**

|

| 54 |

+

|

| 55 |

+

Lighting Preference: Left

|

| 56 |

+

|

| 57 |

+

|

| 58 |

+

|

| 59 |

---

|

| 60 |

|

| 61 |

+

**Prompt: beautiful woman, detailed face, neon, Wong Kar-wai, warm**

|

| 62 |

+

|

| 63 |

+

Lighting Preference: Left

|

| 64 |

+

|

| 65 |

+

|

| 66 |

+

|

| 67 |

+

---

|

| 68 |

+

|

| 69 |

+

**Prompt: beautiful woman, detailed face, sunshine, outdoor, warm atmosphere**

|

| 70 |

+

|

| 71 |

+

Lighting Preference: Right

|

| 72 |

+

|

| 73 |

+

|

| 74 |

+

|

| 75 |

+

---

|

| 76 |

+

|

| 77 |

+

**Prompt: beautiful woman, detailed face, sunshine, outdoor, warm atmosphere**

|

| 78 |

+

|

| 79 |

+

Lighting Preference: Left

|

| 80 |

+

|

| 81 |

+

|

| 82 |

+

|

| 83 |

+

---

|

| 84 |

+

|

| 85 |

+

**Prompt: beautiful woman, detailed face, sunshine from window**

|

| 86 |

+

|

| 87 |

+

Lighting Preference: Right

|

| 88 |

+

|

| 89 |

+

|

| 90 |

+

|

| 91 |

+

---

|

| 92 |

+

|

| 93 |

+

**Prompt: beautiful woman, detailed face, shadow from window**

|

| 94 |

+

|

| 95 |

+

Lighting Preference: Left

|

| 96 |

+

|

| 97 |

+

|

| 98 |

+

|

| 99 |

+

---

|

| 100 |

+

|

| 101 |

+

**Prompt: beautiful woman, detailed face, sunset over sea**

|

| 102 |

+

|

| 103 |

+

Lighting Preference: Right

|

| 104 |

+

|

| 105 |

+

|

| 106 |

+

|

| 107 |

+

---

|

| 108 |

+

|

| 109 |

+

**Prompt: handsome boy, detailed face, neon light, city**

|

| 110 |

+

|

| 111 |

+

Lighting Preference: Left

|

| 112 |

+

|

| 113 |

+

|

| 114 |

+

|

| 115 |

+

---

|

| 116 |

+

|

| 117 |

+

**Prompt: beautiful woman, detailed face, light and shadow**

|

| 118 |

+

|

| 119 |

+

Lighting Preference: Left

|

| 120 |

+

|

| 121 |

+

|

| 122 |

+

|

| 123 |

+

(beautiful woman, detailed face, soft studio lighting)

|

| 124 |

+

|

| 125 |

+

|

| 126 |

+

|

| 127 |

+

---

|

| 128 |

+

|

| 129 |

+

**Prompt: Buddha, detailed face, sci-fi RGB glowing, cyberpunk**

|

| 130 |

+

|

| 131 |

+

Lighting Preference: Left

|

| 132 |

+

|

| 133 |

+

|

| 134 |

+

|

| 135 |

+

---

|

| 136 |

+

|

| 137 |

+

**Prompt: Buddha, detailed face, natural lighting**

|

| 138 |

+

|

| 139 |

+

Lighting Preference: Left

|

| 140 |

+

|

| 141 |

+

|

| 142 |

+

|

| 143 |

+

---

|

| 144 |

+

|

| 145 |

+

**Prompt: toy, detailed face, shadow from window**

|

| 146 |

+

|

| 147 |

+

Lighting Preference: Bottom

|

| 148 |

+

|

| 149 |

+

|

| 150 |

+

|

| 151 |

+

---

|

| 152 |

+

|

| 153 |

+

**Prompt: toy, detailed face, sunset over sea**

|

| 154 |

+

|

| 155 |

+

Lighting Preference: Right

|

| 156 |

+

|

| 157 |

+

|

| 158 |

+

|

| 159 |

+

---

|

| 160 |

+

|

| 161 |

+

**Prompt: dog, magic lit, sci-fi RGB glowing, studio lighting**

|

| 162 |

+

|

| 163 |

+

Lighting Preference: Bottom

|

| 164 |

+

|

| 165 |

+

|

| 166 |

+

|

| 167 |

+

---

|

| 168 |

+

|

| 169 |

+

**Prompt: mysteriou human, warm atmosphere, warm atmosphere, at home, bedroom**

|

| 170 |

+

|

| 171 |

+

Lighting Preference: Right

|

| 172 |

+

|

| 173 |

+

|

| 174 |

+

|

| 175 |

+

---

|

| 176 |

+

|

| 177 |

+

### Background-Conditioned Model

|

| 178 |

+

|

| 179 |

+

The background-conditioned model does not require careful prompting. One can just use simple prompts like "handsome man, cinematic lighting".

|

| 180 |

+

|

| 181 |

+

---

|

| 182 |

+

|

| 183 |

+

|

| 184 |

+

|

| 185 |

+

|

| 186 |

+

|

| 187 |

+

|

| 188 |

+

|

| 189 |

+

|

| 190 |

+

|

| 191 |

+

---

|

| 192 |

+

|

| 193 |

+

A more structured visualization:

|

| 194 |

+

|

| 195 |

+

|

| 196 |

+

|

| 197 |

+

# Imposing Consistent Light

|

| 198 |

+

|

| 199 |

+

In HDR space, illumination has a property that all light transports are independent.

|

| 200 |

+

|

| 201 |

+

As a result, the blending of appearances of different light sources is equivalent to the appearance with mixed light sources:

|

| 202 |

+

|

| 203 |

+

|

| 204 |

+

|

| 205 |

+

Using the above [light stage](https://www.pauldebevec.com/Research/LS/) as an example, the two images from the "appearance mixture" and "light source mixture" are consistent (mathematically equivalent in HDR space, ideally).
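
The equivalence can be checked numerically on a toy example. The sketch below assumes light transport is a linear map from light intensities to pixel values; the matrix `transport` and the two light vectors are made-up values for illustration, not data from the project.

```python
import numpy as np

rng = np.random.default_rng(0)
num_pixels, num_lights = 6, 4

# Hypothetical linear light transport: each column is the scene's
# appearance under one unit-intensity light source (HDR, no clipping).
transport = rng.random((num_pixels, num_lights))

light_a = np.array([1.0, 0.0, 0.5, 0.0])
light_b = np.array([0.0, 2.0, 0.0, 0.3])

# "Appearance mixture": render under each light, then add the images.
appearance_mixture = transport @ light_a + transport @ light_b

# "Light source mixture": add the lights, then render once.
light_source_mixture = transport @ (light_a + light_b)

print(np.allclose(appearance_mixture, light_source_mixture))
```

The two results agree up to floating-point error only because nothing is clipped or tone-mapped, which is why the statement is made for HDR space.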

|

| 206 |

+

|

| 207 |

+

We imposed such consistency (using MLPs in latent space) when training the relighting models.

|

| 208 |

+

|

| 209 |

+

As a result, the model is able to produce highly consistent relighting - **so** consistent that different relightings can even be merged as normal maps, despite the fact that the models are latent diffusion models.

|

| 210 |

+

|

| 211 |

+

|

| 212 |

+

|

| 213 |

+

From left to right are inputs, model outputs relighting, devided shadow image, and merged normal maps. Note that the model is not trained with any normal map data. This normal estimation comes from the consistency of relighting.
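One plausible way to merge four directional relightings into a normal is sketched below for a single pixel. It assumes that the "divided shadow image" is each relit image divided by the average of the four, and that the left/right and top/bottom differences approximate the x and y components of the normal. Both assumptions, and the helper name `normal_from_relights`, are illustrative and not necessarily what the demo does.

```python
import math


def normal_from_relights(left, right, top, bottom, eps=1e-5):
    """Estimate a unit normal (x, y, z) for one pixel from four relit intensities.

    Each argument is the pixel's brightness when lit from that direction.
    """
    ambient = (left + right + top + bottom) / 4.0

    def shade(value):
        # Dividing by the ambient term cancels albedo and keeps only shading.
        return (value + eps) / (ambient + eps) - 1.0

    # Brighter when lit from the right means the surface tilts to the right.
    x = 0.5 * (shade(right) - shade(left))
    y = 0.5 * (shade(top) - shade(bottom))
    # Recover z from the unit-length constraint, clamping at zero.
    z = math.sqrt(max(0.0, 1.0 - x * x - y * y))
    length = math.sqrt(x * x + y * y + z * z)
    return (x / length, y / length, z / length)


flat = normal_from_relights(0.5, 0.5, 0.5, 0.5)
tilted = normal_from_relights(left=0.2, right=0.8, top=0.5, bottom=0.5)
```

A pixel that looks the same under all four lights yields a normal pointing straight at the camera, while a pixel that is brighter under the right-hand light yields a normal tilted toward positive x.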

You can reproduce this experiment using this button (it is 4x slower because it relights the image 4 times).

Below are bigger images (feel free to try it yourself to get more results!)

For reference, [geowizard](https://fuxiao0719.github.io/projects/geowizard/) (geowizard is a really great work!):

And, [switchlight](https://arxiv.org/pdf/2402.18848) (switchlight is another great work!):

# Model Notes

* **iclight_sd15_fc.safetensors** - The default relighting model, conditioned on text and foreground. You can use an initial latent to influence the relighting.

* **iclight_sd15_fcon.safetensors** - Same as "iclight_sd15_fc.safetensors" but trained with offset noise. Note that the default "iclight_sd15_fc.safetensors" slightly outperforms this model in a user study, which is why the default model is the one without offset noise.

* **iclight_sd15_fbc.safetensors** - Relighting model conditioned on text, foreground, and background.
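The list above can be summarized as a small lookup. This is a minimal sketch: the file names and conditioning inputs come from the notes, while the `CHECKPOINTS` table and the `pick_checkpoint` helper are hypothetical and not part of the repository.

```python
# File name -> conditioning inputs, as listed in the model notes.
CHECKPOINTS = {
    "iclight_sd15_fc.safetensors": ("text", "foreground"),
    "iclight_sd15_fcon.safetensors": ("text", "foreground"),  # offset-noise variant
    "iclight_sd15_fbc.safetensors": ("text", "foreground", "background"),
}


def pick_checkpoint(has_background, offset_noise=False):
    """Return the checkpoint file name that matches the available inputs."""
    if has_background:
        if offset_noise:
            # The notes list no background-conditioned offset-noise model.
            raise ValueError("no offset-noise variant with background conditioning")
        return "iclight_sd15_fbc.safetensors"
    if offset_noise:
        return "iclight_sd15_fcon.safetensors"
    return "iclight_sd15_fc.safetensors"


default_name = pick_checkpoint(has_background=False)
background_name = pick_checkpoint(has_background=True)
```

With no background image the helper returns the default text-and-foreground model; passing `has_background=True` selects the background-conditioned one.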

Also, note that the original [BRIA RMBG 1.4](https://huggingface.co/briaai/RMBG-1.4) is for non-commercial use. If you use IC-Light in commercial projects, replace it with another background remover such as [BiRefNet](https://github.com/ZhengPeng7/BiRefNet).

# Cite

    @Misc{iclight,
      author = {Lvmin Zhang and Anyi Rao and Maneesh Agrawala},
      title  = {IC-Light GitHub Page},
      year   = {2024},
    }

# Related Work

Also read ...

[Total Relighting: Learning to Relight Portraits for Background Replacement](https://augmentedperception.github.io/total_relighting/)

[Relightful Harmonization: Lighting-aware Portrait Background Replacement](https://arxiv.org/abs/2312.06886)

[SwitchLight: Co-design of Physics-driven Architecture and Pre-training Framework for Human Portrait Relighting](https://arxiv.org/pdf/2402.18848)

briarmbg.py

ADDED

|

@@ -0,0 +1,462 @@


# RMBG1.4 (diffusers implementation)
# Found on huggingface space of several projects
# Not sure which project is the source of this file

import torch
import torch.nn as nn
import torch.nn.functional as F
from huggingface_hub import PyTorchModelHubMixin


class REBNCONV(nn.Module):
    """3x3 convolution followed by BatchNorm and ReLU.

    Padding equals the dilation rate, so spatial size is preserved at stride 1.
    """

    def __init__(self, in_ch=3, out_ch=3, dirate=1, stride=1):
        super(REBNCONV, self).__init__()

        self.conv_s1 = nn.Conv2d(
            in_ch, out_ch, 3, padding=1 * dirate, dilation=1 * dirate, stride=stride
        )
        self.bn_s1 = nn.BatchNorm2d(out_ch)
        self.relu_s1 = nn.ReLU(inplace=True)

    def forward(self, x):
        hx = x
        xout = self.relu_s1(self.bn_s1(self.conv_s1(hx)))

        return xout


def _upsample_like(src, tar):
    """Bilinearly resize `src` to the spatial size (H, W) of `tar`."""
    src = F.interpolate(src, size=tar.shape[2:], mode="bilinear")
    return src


### RSU-7 ###
class RSU7(nn.Module):
    """Residual U-block with seven levels (encoder, dilated bottleneck, decoder)."""

    def __init__(self, in_ch=3, mid_ch=12, out_ch=3, img_size=512):
        super(RSU7, self).__init__()

        self.in_ch = in_ch
        self.mid_ch = mid_ch
        self.out_ch = out_ch

        self.rebnconvin = REBNCONV(in_ch, out_ch, dirate=1)  ## 1 -> 1/2

        # Encoder: conv blocks with 2x max-pooling between levels.
        self.rebnconv1 = REBNCONV(out_ch, mid_ch, dirate=1)
        self.pool1 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv2 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool2 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv3 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool3 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv4 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool4 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv5 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool5 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv6 = REBNCONV(mid_ch, mid_ch, dirate=1)

        # Bottleneck: a dilated conv instead of another pooling step.
        self.rebnconv7 = REBNCONV(mid_ch, mid_ch, dirate=2)

        # Decoder: each block takes the concatenation of two feature maps.
        self.rebnconv6d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv5d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv4d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv3d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv2d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv1d = REBNCONV(mid_ch * 2, out_ch, dirate=1)

    def forward(self, x):
        b, c, h, w = x.shape

        hx = x
        hxin = self.rebnconvin(hx)

        hx1 = self.rebnconv1(hxin)
        hx = self.pool1(hx1)

        hx2 = self.rebnconv2(hx)
        hx = self.pool2(hx2)

        hx3 = self.rebnconv3(hx)
        hx = self.pool3(hx3)

        hx4 = self.rebnconv4(hx)
        hx = self.pool4(hx4)

        hx5 = self.rebnconv5(hx)
        hx = self.pool5(hx5)

        hx6 = self.rebnconv6(hx)

        hx7 = self.rebnconv7(hx6)

        # Decode: fuse with the matching encoder feature, then upsample to the next level.
        hx6d = self.rebnconv6d(torch.cat((hx7, hx6), 1))
        hx6dup = _upsample_like(hx6d, hx5)

        hx5d = self.rebnconv5d(torch.cat((hx6dup, hx5), 1))
        hx5dup = _upsample_like(hx5d, hx4)

        hx4d = self.rebnconv4d(torch.cat((hx5dup, hx4), 1))
        hx4dup = _upsample_like(hx4d, hx3)

        hx3d = self.rebnconv3d(torch.cat((hx4dup, hx3), 1))
        hx3dup = _upsample_like(hx3d, hx2)

        hx2d = self.rebnconv2d(torch.cat((hx3dup, hx2), 1))
        hx2dup = _upsample_like(hx2d, hx1)

        hx1d = self.rebnconv1d(torch.cat((hx2dup, hx1), 1))

        # Residual connection around the whole U-block.
        return hx1d + hxin


### RSU-6 ###
class RSU6(nn.Module):
    def __init__(self, in_ch=3, mid_ch=12, out_ch=3):
        super(RSU6, self).__init__()

        self.rebnconvin = REBNCONV(in_ch, out_ch, dirate=1)

        self.rebnconv1 = REBNCONV(out_ch, mid_ch, dirate=1)
        self.pool1 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv2 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool2 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv3 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool3 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv4 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool4 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv5 = REBNCONV(mid_ch, mid_ch, dirate=1)

|

| 135 |

+

|

| 136 |

+