{

"cells": [

{

"cell_type": "markdown",

"id": "220db57c-608d-4ebd-8e5d-97486477bc8e",

"metadata": {},

"source": [

"# Infinite Zoom Stable Diffusion v2 and OpenVINO™\n",

"\n",

"Stable Diffusion v2 is the next generation of Stable Diffusion model a Text-to-Image latent diffusion model created by the researchers and engineers from [Stability AI](https://stability.ai/) and [LAION](https://laion.ai/). \n",

"\n",

"General diffusion models are machine learning systems that are trained to denoise random gaussian noise step by step, to get to a sample of interest, such as an image.\n",

"Diffusion models have shown to achieve state-of-the-art results for generating image data. But one downside of diffusion models is that the reverse denoising process is slow. In addition, these models consume a lot of memory because they operate in pixel space, which becomes unreasonably expensive when generating high-resolution images. Therefore, it is challenging to train these models and also use them for inference. OpenVINO brings capabilities to run model inference on Intel hardware and opens the door to the fantastic world of diffusion models for everyone!\n",

"\n",

"In previous notebooks, we already discussed how to run [Text-to-Image generation and Image-to-Image generation using Stable Diffusion v1](../stable-diffusion-text-to-image/stable-diffusion-text-to-image.ipynb) and [controlling its generation process using ControlNet](./controlnet-stable-diffusion/controlnet-stable-diffusion.ipynb). Now is turn of Stable Diffusion v2.\n",

"\n",

"## Stable Diffusion v2: What’s new?\n",

"\n",

"The new stable diffusion model offers a bunch of new features inspired by the other models that have emerged since the introduction of the first iteration. Some of the features that can be found in the new model are:\n",

"\n",

"* The model comes with a new robust encoder, OpenCLIP, created by LAION and aided by Stability AI; this version v2 significantly enhances the produced photos over the V1 versions. \n",

"* The model can now generate images in a 768x768 resolution, offering more information to be shown in the generated images.\n",

"* The model finetuned with [v-objective](https://arxiv.org/abs/2202.00512). The v-parameterization is particularly useful for numerical stability throughout the diffusion process to enable progressive distillation for models. For models that operate at higher resolution, it is also discovered that the v-parameterization avoids color shifting artifacts that are known to affect high resolution diffusion models, and in the video setting it avoids temporal color shifting that sometimes appears with epsilon-prediction used in Stable Diffusion v1. \n",

"* The model also comes with a new diffusion model capable of running upscaling on the images generated. Upscaled images can be adjusted up to 4 times the original image. Provided as separated model, for more details please check [stable-diffusion-x4-upscaler](https://huggingface.co/stabilityai/stable-diffusion-x4-upscaler)\n",

"* The model comes with a new refined depth architecture capable of preserving context from prior generation layers in an image-to-image setting. This structure preservation helps generate images that preserving forms and shadow of objects, but with different content.\n",

"* The model comes with an updated inpainting module built upon the previous model. This text-guided inpainting makes switching out parts in the image easier than before.\n",

"\n",

"This notebook demonstrates how to convert and run Stable Diffusion v2 model using OpenVINO.\n",

"\n",

"Notebook contains the following steps:\n",

"\n",

"1. Create pipeline with PyTorch models using Diffusers library.\n",

"2. Convert models to OpenVINO IR format, using model conversion API.\n",

"3. Run Stable Diffusion v2 inpainting pipeline for generation infinity zoom video\n"

]

},

{

"cell_type": "markdown",

"id": "377238ed",

"metadata": {},

"source": [

"\n",

"#### Table of contents:\n",

"\n",

"- [Stable Diffusion v2 Infinite Zoom Showcase](#Stable-Diffusion-v2-Infinite-Zoom-Showcase)\n",

" - [Stable Diffusion Text guided Inpainting](#Stable-Diffusion-Text-guided-Inpainting)\n",

"- [Prerequisites](#Prerequisites)\n",

" - [Stable Diffusion in Diffusers library](#Stable-Diffusion-in-Diffusers-library)\n",

" - [Convert models to OpenVINO Intermediate representation (IR) format](#Convert-models-to-OpenVINO-Intermediate-representation-(IR)-format)\n",

" - [Prepare Inference pipeline](#Prepare-Inference-pipeline)\n",

" - [Zoom Video Generation](#Zoom-Video-Generation)\n",

" - [Configure Inference Pipeline](#Configure-Inference-Pipeline)\n",

" - [Select inference device](#Select-inference-device)\n",

" - [Run Infinite Zoom video generation](#Run-Infinite-Zoom-video-generation)\n",

"\n"

]

},

{

"cell_type": "markdown",

"id": "9ad7780c",

"metadata": {},

"source": [

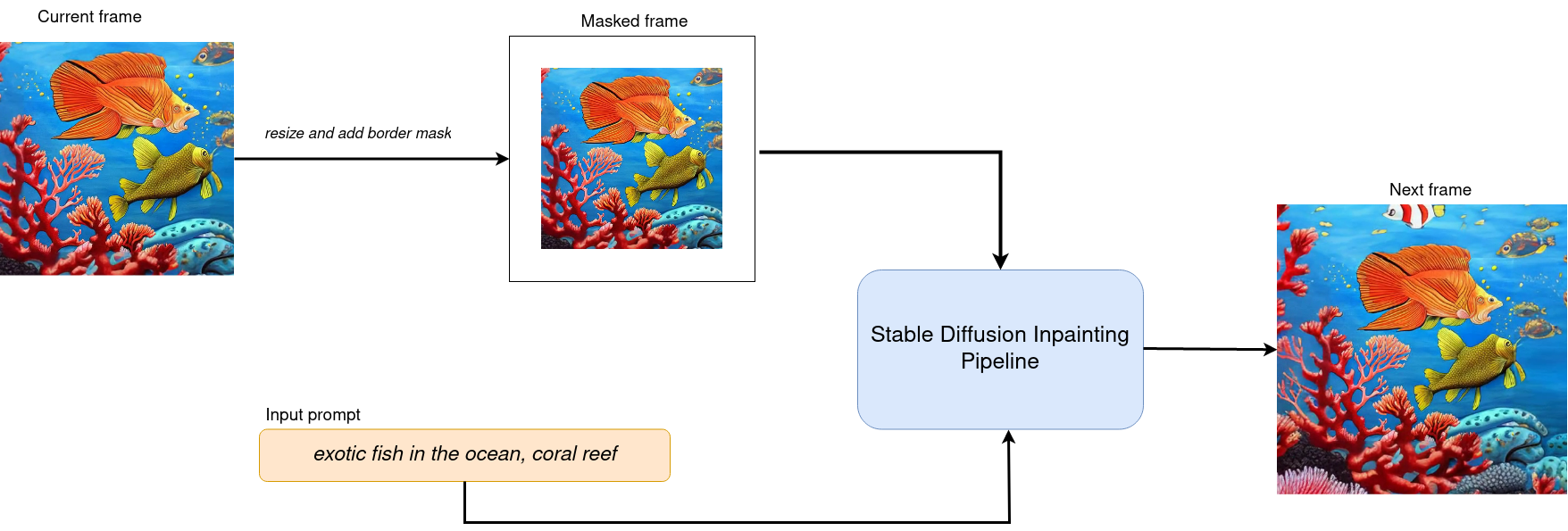

"## Stable Diffusion v2 Infinite Zoom Showcase\n",

"[back to top ⬆️](#Table-of-contents:)\n",

"\n",

"In this tutorial we consider how to use Stable Diffusion v2 model for generation sequence of images for infinite zoom video effect.\n",

"To do this, we will need [`stabilityai/stable-diffusion-2-inpainting`](https://huggingface.co/stabilityai/stable-diffusion-2-inpainting) model.\n",

"\n",

"### Stable Diffusion Text guided Inpainting\n",

"[back to top ⬆️](#Table-of-contents:)\n",

"\n",

"In image editing, inpainting is a process of restoring missing parts of pictures. Most commonly applied to reconstructing old deteriorated images, removing cracks, scratches, dust spots, or red-eyes from photographs.\n",

"\n",

"But with the power of AI and the Stable Diffusion model, inpainting can be used to achieve more than that. For example, instead of just restoring missing parts of an image, it can be used to render something entirely new in any part of an existing picture. Only your imagination limits it.\n",

"\n",

"The workflow diagram explains how Stable Diffusion inpainting pipeline for inpainting works:\n",

"\n",

"\n",

"\n",

"The pipeline has a lot of common with Text-to-Image generation pipeline discussed in previous section. Additionally to text prompt, pipeline accepts input source image and mask which provides an area of image which should be modified. Masked image encoded by VAE encoder into latent diffusion space and concatenated with randomly generated (on initial step only) or produced by U-Net latent generated image representation and used as input for next step denoising.\n",

"\n",

"Using this inpainting feature, decreasing image by certain margin and masking this border for every new frame we can create interesting Zoom Out video based on our prompt."

]

},

{

"cell_type": "markdown",

"id": "ee430286",

"metadata": {},

"source": [

"## Prerequisites\n",

"[back to top ⬆️](#Table-of-contents:)\n",

"\n",

"install required packages"

]

},

{

"cell_type": "code",

"execution_count": 1,

"id": "ceaa943d",

"metadata": {},

"outputs": [],

"source": [

"%pip install -q \"diffusers>=0.14.0\" \"transformers>=4.25.1\" \"gradio>=4.19\" \"openvino>=2023.1.0\" \"torch>=2.1\" Pillow opencv-python --extra-index-url https://download.pytorch.org/whl/cpu"

]

},

{

"cell_type": "markdown",

"id": "994be0f4",

"metadata": {},

"source": [

"### Stable Diffusion in Diffusers library\n",

"[back to top ⬆️](#Table-of-contents:)\n",

"\n",

"To work with Stable Diffusion v2, we will use Hugging Face [Diffusers](https://github.com/huggingface/diffusers) library. To experiment with Stable Diffusion models for Inpainting use case, Diffusers exposes the [`StableDiffusionInpaintPipeline`](https://huggingface.co/docs/diffusers/using-diffusers/conditional_image_generation) similar to the [other Diffusers pipelines](https://huggingface.co/docs/diffusers/api/pipelines/overview). The code below demonstrates how to create `StableDiffusionInpaintPipeline` using `stable-diffusion-2-inpainting`:"

]

},

{

"cell_type": "code",

"execution_count": 2,

"id": "9f3f6d26",

"metadata": {},

"outputs": [

{

"name": "stderr",

"output_type": "stream",

"text": [

"2023-09-25 12:14:32.810031: I tensorflow/core/util/port.cc:110] oneDNN custom operations are on. You may see slightly different numerical results due to floating-point round-off errors from different computation orders. To turn them off, set the environment variable `TF_ENABLE_ONEDNN_OPTS=0`.\n",

"2023-09-25 12:14:32.851215: I tensorflow/core/platform/cpu_feature_guard.cc:182] This TensorFlow binary is optimized to use available CPU instructions in performance-critical operations.\n",

"To enable the following instructions: AVX2 AVX512F AVX512_VNNI FMA, in other operations, rebuild TensorFlow with the appropriate compiler flags.\n",

"2023-09-25 12:14:33.562760: W tensorflow/compiler/tf2tensorrt/utils/py_utils.cc:38] TF-TRT Warning: Could not find TensorRT\n"

]

},

{

"data": {

"application/vnd.jupyter.widget-view+json": {

"model_id": "b5c9fad21adf40e183d233af4faa0b89",

"version_major": 2,

"version_minor": 0

},

"text/plain": [

"Loading pipeline components...: 0%| | 0/6 [00:00 0.5``) and cast to ``np.float32`` too.\n",

"\n",

" Args:\n",

" image (Union[np.array, PIL.Image]): The image to inpaint.\n",

" It can be a ``PIL.Image``, or a ``height x width x 3`` ``np.array``\n",

" mask (_type_): The mask to apply to the image, i.e. regions to inpaint.\n",

" It can be a ``PIL.Image``, or a ``height x width`` ``np.array``.\n",

"\n",

" Returns:\n",

" tuple[np.array]: The pair (mask, masked_image) as ``torch.Tensor`` with 4\n",

" dimensions: ``batch x channels x height x width``.\n",

" \"\"\"\n",

" if isinstance(image, (PIL.Image.Image, np.ndarray)):\n",

" image = [image]\n",

"\n",

" if isinstance(image, list) and isinstance(image[0], PIL.Image.Image):\n",

" image = [np.array(i.convert(\"RGB\"))[None, :] for i in image]\n",

" image = np.concatenate(image, axis=0)\n",

" elif isinstance(image, list) and isinstance(image[0], np.ndarray):\n",

" image = np.concatenate([i[None, :] for i in image], axis=0)\n",

"\n",

" image = image.transpose(0, 3, 1, 2)\n",

" image = image.astype(np.float32) / 127.5 - 1.0\n",

"\n",

" # preprocess mask\n",

" if isinstance(mask, (PIL.Image.Image, np.ndarray)):\n",

" mask = [mask]\n",

"\n",

" if isinstance(mask, list) and isinstance(mask[0], PIL.Image.Image):\n",

" mask = np.concatenate([np.array(m.convert(\"L\"))[None, None, :] for m in mask], axis=0)\n",

" mask = mask.astype(np.float32) / 255.0\n",

" elif isinstance(mask, list) and isinstance(mask[0], np.ndarray):\n",

" mask = np.concatenate([m[None, None, :] for m in mask], axis=0)\n",

"\n",

" mask[mask < 0.5] = 0\n",

" mask[mask >= 0.5] = 1\n",

"\n",

" masked_image = image * (mask < 0.5)\n",

"\n",

" return mask, masked_image"

]

},

{

"cell_type": "code",

"execution_count": 10,

"id": "486f0a6f",

"metadata": {},

"outputs": [],

"source": [

"class OVStableDiffusionInpaintingPipeline(DiffusionPipeline):\n",

" def __init__(\n",

" self,\n",

" vae_decoder: ov.Model,\n",

" text_encoder: ov.Model,\n",

" tokenizer: CLIPTokenizer,\n",

" unet: ov.Model,\n",

" scheduler: Union[DDIMScheduler, PNDMScheduler, LMSDiscreteScheduler],\n",

" vae_encoder: ov.Model = None,\n",

" ):\n",

" \"\"\"\n",

" Pipeline for text-to-image generation using Stable Diffusion.\n",

" Parameters:\n",

" vae_decoder (Model):\n",

" Variational Auto-Encoder (VAE) Model to decode images to and from latent representations.\n",

" text_encoder (Model):\n",

" Frozen text-encoder. Stable Diffusion uses the text portion of\n",

" [CLIP](https://huggingface.co/docs/transformers/model_doc/clip#transformers.CLIPTextModel), specifically\n",

" the clip-vit-large-patch14(https://huggingface.co/openai/clip-vit-large-patch14) variant.\n",

" tokenizer (CLIPTokenizer):\n",

" Tokenizer of class CLIPTokenizer(https://huggingface.co/docs/transformers/v4.21.0/en/model_doc/clip#transformers.CLIPTokenizer).\n",

" unet (Model): Conditional U-Net architecture to denoise the encoded image latents.\n",

" vae_encoder (Model):\n",

" Variational Auto-Encoder (VAE) Model to encode images to latent representation.\n",

" scheduler (SchedulerMixin):\n",

" A scheduler to be used in combination with unet to denoise the encoded image latents. Can be one of\n",

" DDIMScheduler, LMSDiscreteScheduler, or PNDMScheduler.\n",

" \"\"\"\n",

" super().__init__()\n",

" self.scheduler = scheduler\n",

" self.vae_decoder = vae_decoder\n",

" self.vae_encoder = vae_encoder\n",

" self.text_encoder = text_encoder\n",

" self.unet = unet\n",

" self._text_encoder_output = text_encoder.output(0)\n",

" self._unet_output = unet.output(0)\n",

" self._vae_d_output = vae_decoder.output(0)\n",

" self._vae_e_output = vae_encoder.output(0) if vae_encoder is not None else None\n",

" self.height = self.unet.input(0).shape[2] * 8\n",

" self.width = self.unet.input(0).shape[3] * 8\n",

" self.tokenizer = tokenizer\n",

" self.register_to_config(_progress_bar_config={})\n",

"\n",

" def prepare_mask_latents(\n",

" self,\n",

" mask,\n",

" masked_image,\n",

" height=512,\n",

" width=512,\n",

" do_classifier_free_guidance=True,\n",

" ):\n",

" \"\"\"\n",

" Prepare mask as Unet nput and encode input masked image to latent space using vae encoder\n",

"\n",

" Parameters:\n",

" mask (np.array): input mask array\n",

" masked_image (np.array): masked input image tensor\n",

" heigh (int, *optional*, 512): generated image height\n",

" width (int, *optional*, 512): generated image width\n",

" do_classifier_free_guidance (bool, *optional*, True): whether to use classifier free guidance or not\n",

" Returns:\n",

" mask (np.array): resized mask tensor\n",

" masked_image_latents (np.array): masked image encoded into latent space using VAE\n",

" \"\"\"\n",

" mask = torch.nn.functional.interpolate(torch.from_numpy(mask), size=(height // 8, width // 8))\n",

" mask = mask.numpy()\n",

"\n",

" # encode the mask image into latents space so we can concatenate it to the latents\n",

" latents = self.vae_encoder(masked_image)[self._vae_e_output]\n",

" masked_image_latents = latents * 0.18215\n",

"\n",

" mask = np.concatenate([mask] * 2) if do_classifier_free_guidance else mask\n",

" masked_image_latents = np.concatenate([masked_image_latents] * 2) if do_classifier_free_guidance else masked_image_latents\n",

" return mask, masked_image_latents\n",

"\n",

" def __call__(\n",

" self,\n",

" prompt: Union[str, List[str]],\n",

" image: PIL.Image.Image,\n",

" mask_image: PIL.Image.Image,\n",

" negative_prompt: Union[str, List[str]] = None,\n",

" num_inference_steps: Optional[int] = 50,\n",

" guidance_scale: Optional[float] = 7.5,\n",

" eta: Optional[float] = 0,\n",

" output_type: Optional[str] = \"pil\",\n",

" seed: Optional[int] = None,\n",

" ):\n",

" \"\"\"\n",

" Function invoked when calling the pipeline for generation.\n",

" Parameters:\n",

" prompt (str or List[str]):\n",

" The prompt or prompts to guide the image generation.\n",

" image (PIL.Image.Image):\n",

" Source image for inpainting.\n",

" mask_image (PIL.Image.Image):\n",

" Mask area for inpainting\n",

" negative_prompt (str or List[str]):\n",

" The negative prompt or prompts to guide the image generation.\n",

" num_inference_steps (int, *optional*, defaults to 50):\n",

" The number of denoising steps. More denoising steps usually lead to a higher quality image at the\n",

" expense of slower inference.\n",

" guidance_scale (float, *optional*, defaults to 7.5):\n",

" Guidance scale as defined in Classifier-Free Diffusion Guidance(https://arxiv.org/abs/2207.12598).\n",

" guidance_scale is defined as `w` of equation 2.\n",

" Higher guidance scale encourages to generate images that are closely linked to the text prompt,\n",

" usually at the expense of lower image quality.\n",

" eta (float, *optional*, defaults to 0.0):\n",

" Corresponds to parameter eta (η) in the DDIM paper: https://arxiv.org/abs/2010.02502. Only applies to\n",

" [DDIMScheduler], will be ignored for others.\n",

" output_type (`str`, *optional*, defaults to \"pil\"):\n",

" The output format of the generate image. Choose between\n",

" [PIL](https://pillow.readthedocs.io/en/stable/): PIL.Image.Image or np.array.\n",

" seed (int, *optional*, None):\n",

" Seed for random generator state initialization.\n",

" Returns:\n",

" Dictionary with keys:\n",

" sample - the last generated image PIL.Image.Image or np.array\n",

" \"\"\"\n",

" if seed is not None:\n",

" np.random.seed(seed)\n",

" # here `guidance_scale` is defined analog to the guidance weight `w` of equation (2)\n",

" # of the Imagen paper: https://arxiv.org/pdf/2205.11487.pdf . `guidance_scale = 1`\n",

" # corresponds to doing no classifier free guidance.\n",

" do_classifier_free_guidance = guidance_scale > 1.0\n",

" # get prompt text embeddings\n",

" text_embeddings = self._encode_prompt(\n",

" prompt,\n",

" do_classifier_free_guidance=do_classifier_free_guidance,\n",

" negative_prompt=negative_prompt,\n",

" )\n",

" # prepare mask\n",

" mask, masked_image = prepare_mask_and_masked_image(image, mask_image)\n",

" # set timesteps\n",

" accepts_offset = \"offset\" in set(inspect.signature(self.scheduler.set_timesteps).parameters.keys())\n",

" extra_set_kwargs = {}\n",

" if accepts_offset:\n",

" extra_set_kwargs[\"offset\"] = 1\n",

"\n",

" self.scheduler.set_timesteps(num_inference_steps, **extra_set_kwargs)\n",

" timesteps, num_inference_steps = self.get_timesteps(num_inference_steps, 1)\n",

" latent_timestep = timesteps[:1]\n",

"\n",

" # get the initial random noise unless the user supplied it\n",

" latents, meta = self.prepare_latents(latent_timestep)\n",

" mask, masked_image_latents = self.prepare_mask_latents(\n",

" mask,\n",

" masked_image,\n",

" do_classifier_free_guidance=do_classifier_free_guidance,\n",

" )\n",

"\n",

" # prepare extra kwargs for the scheduler step, since not all schedulers have the same signature\n",

" # eta (η) is only used with the DDIMScheduler, it will be ignored for other schedulers.\n",

" # eta corresponds to η in DDIM paper: https://arxiv.org/abs/2010.02502\n",

" # and should be between [0, 1]\n",

" accepts_eta = \"eta\" in set(inspect.signature(self.scheduler.step).parameters.keys())\n",

" extra_step_kwargs = {}\n",

" if accepts_eta:\n",

" extra_step_kwargs[\"eta\"] = eta\n",

"\n",

" for t in self.progress_bar(timesteps):\n",

" # expand the latents if we are doing classifier free guidance\n",

" latent_model_input = np.concatenate([latents] * 2) if do_classifier_free_guidance else latents\n",

" latent_model_input = self.scheduler.scale_model_input(latent_model_input, t)\n",

" latent_model_input = np.concatenate([latent_model_input, mask, masked_image_latents], axis=1)\n",

" # predict the noise residual\n",

" noise_pred = self.unet([latent_model_input, np.array(t, dtype=np.float32), text_embeddings])[self._unet_output]\n",

" # perform guidance\n",

" if do_classifier_free_guidance:\n",

" noise_pred_uncond, noise_pred_text = noise_pred[0], noise_pred[1]\n",

" noise_pred = noise_pred_uncond + guidance_scale * (noise_pred_text - noise_pred_uncond)\n",

"\n",

" # compute the previous noisy sample x_t -> x_t-1\n",

" latents = self.scheduler.step(\n",

" torch.from_numpy(noise_pred),\n",

" t,\n",

" torch.from_numpy(latents),\n",

" **extra_step_kwargs,\n",

" )[\"prev_sample\"].numpy()\n",

" # scale and decode the image latents with vae\n",

" image = self.vae_decoder(latents * (1 / 0.18215))[self._vae_d_output]\n",

"\n",

" image = self.postprocess_image(image, meta, output_type)\n",

" return {\"sample\": image}\n",

"\n",

" def _encode_prompt(\n",

" self,\n",

" prompt: Union[str, List[str]],\n",

" num_images_per_prompt: int = 1,\n",

" do_classifier_free_guidance: bool = True,\n",

" negative_prompt: Union[str, List[str]] = None,\n",

" ):\n",

" \"\"\"\n",

" Encodes the prompt into text encoder hidden states.\n",

"\n",

" Parameters:\n",

" prompt (str or list(str)): prompt to be encoded\n",

" num_images_per_prompt (int): number of images that should be generated per prompt\n",

" do_classifier_free_guidance (bool): whether to use classifier free guidance or not\n",

" negative_prompt (str or list(str)): negative prompt to be encoded\n",

" Returns:\n",

" text_embeddings (np.ndarray): text encoder hidden states\n",

" \"\"\"\n",

" batch_size = len(prompt) if isinstance(prompt, list) else 1\n",

"\n",

" # tokenize input prompts\n",

" text_inputs = self.tokenizer(\n",

" prompt,\n",

" padding=\"max_length\",\n",

" max_length=self.tokenizer.model_max_length,\n",

" truncation=True,\n",

" return_tensors=\"np\",\n",

" )\n",

" text_input_ids = text_inputs.input_ids\n",

"\n",

" text_embeddings = self.text_encoder(text_input_ids)[self._text_encoder_output]\n",

"\n",

" # duplicate text embeddings for each generation per prompt\n",

" if num_images_per_prompt != 1:\n",

" bs_embed, seq_len, _ = text_embeddings.shape\n",

" text_embeddings = np.tile(text_embeddings, (1, num_images_per_prompt, 1))\n",

" text_embeddings = np.reshape(text_embeddings, (bs_embed * num_images_per_prompt, seq_len, -1))\n",

"\n",

" # get unconditional embeddings for classifier free guidance\n",

" if do_classifier_free_guidance:\n",

" uncond_tokens: List[str]\n",

" max_length = text_input_ids.shape[-1]\n",

" if negative_prompt is None:\n",

" uncond_tokens = [\"\"] * batch_size\n",

" elif isinstance(negative_prompt, str):\n",

" uncond_tokens = [negative_prompt]\n",

" else:\n",

" uncond_tokens = negative_prompt\n",

" uncond_input = self.tokenizer(\n",

" uncond_tokens,\n",

" padding=\"max_length\",\n",

" max_length=max_length,\n",

" truncation=True,\n",

" return_tensors=\"np\",\n",

" )\n",

"\n",

" uncond_embeddings = self.text_encoder(uncond_input.input_ids)[self._text_encoder_output]\n",

"\n",

" # duplicate unconditional embeddings for each generation per prompt, using mps friendly method\n",

" seq_len = uncond_embeddings.shape[1]\n",

" uncond_embeddings = np.tile(uncond_embeddings, (1, num_images_per_prompt, 1))\n",

" uncond_embeddings = np.reshape(uncond_embeddings, (batch_size * num_images_per_prompt, seq_len, -1))\n",

"\n",

" # For classifier free guidance, we need to do two forward passes.\n",

" # Here we concatenate the unconditional and text embeddings into a single batch\n",

" # to avoid doing two forward passes\n",

" text_embeddings = np.concatenate([uncond_embeddings, text_embeddings])\n",

"\n",

" return text_embeddings\n",

"\n",

" def prepare_latents(self, latent_timestep: torch.Tensor = None):\n",

" \"\"\"\n",

" Function for getting initial latents for starting generation\n",

"\n",

" Parameters:\n",

" latent_timestep (torch.Tensor, *optional*, None):\n",

" Predicted by scheduler initial step for image generation, required for latent image mixing with nosie\n",

" Returns:\n",

" latents (np.ndarray):\n",

" Image encoded in latent space\n",

" \"\"\"\n",

" latents_shape = (1, 4, self.height // 8, self.width // 8)\n",

" noise = np.random.randn(*latents_shape).astype(np.float32)\n",

" # if we use LMSDiscreteScheduler, let's make sure latents are mulitplied by sigmas\n",

" if isinstance(self.scheduler, LMSDiscreteScheduler):\n",

" noise = noise * self.scheduler.sigmas[0].numpy()\n",

" return noise, {}\n",

"\n",

" def postprocess_image(self, image: np.ndarray, meta: Dict, output_type: str = \"pil\"):\n",

" \"\"\"\n",

" Postprocessing for decoded image. Takes generated image decoded by VAE decoder, unpad it to initila image size (if required),\n",

" normalize and convert to [0, 255] pixels range. Optionally, convertes it from np.ndarray to PIL.Image format\n",

"\n",

" Parameters:\n",

" image (np.ndarray):\n",

" Generated image\n",

" meta (Dict):\n",

" Metadata obtained on latents preparing step, can be empty\n",

" output_type (str, *optional*, pil):\n",

" Output format for result, can be pil or numpy\n",

" Returns:\n",

" image (List of np.ndarray or PIL.Image.Image):\n",

" Postprocessed images\n",

" \"\"\"\n",

" if \"padding\" in meta:\n",

" pad = meta[\"padding\"]\n",

" (_, end_h), (_, end_w) = pad[1:3]\n",

" h, w = image.shape[2:]\n",

" unpad_h = h - end_h\n",

" unpad_w = w - end_w\n",

" image = image[:, :, :unpad_h, :unpad_w]\n",

" image = np.clip(image / 2 + 0.5, 0, 1)\n",

" image = np.transpose(image, (0, 2, 3, 1))\n",

" # 9. Convert to PIL\n",

" if output_type == \"pil\":\n",

" image = self.numpy_to_pil(image)\n",

" if \"src_height\" in meta:\n",

" orig_height, orig_width = meta[\"src_height\"], meta[\"src_width\"]\n",

" image = [img.resize((orig_width, orig_height), PIL.Image.Resampling.LANCZOS) for img in image]\n",

" else:\n",

" if \"src_height\" in meta:\n",

" orig_height, orig_width = meta[\"src_height\"], meta[\"src_width\"]\n",

" image = [cv2.resize(img, (orig_width, orig_width)) for img in image]\n",

" return image\n",

"\n",

" def get_timesteps(self, num_inference_steps: int, strength: float):\n",

" \"\"\"\n",

" Helper function for getting scheduler timesteps for generation\n",

" In case of image-to-image generation, it updates number of steps according to strength\n",

"\n",

" Parameters:\n",

" num_inference_steps (int):\n",

" number of inference steps for generation\n",

" strength (float):\n",

" value between 0.0 and 1.0, that controls the amount of noise that is added to the input image.\n",

" Values that approach 1.0 allow for lots of variations but will also produce images that are not semantically consistent with the input.\n",

" \"\"\"\n",

" # get the original timestep using init_timestep\n",

" init_timestep = min(int(num_inference_steps * strength), num_inference_steps)\n",

"\n",

" t_start = max(num_inference_steps - init_timestep, 0)\n",

" timesteps = self.scheduler.timesteps[t_start:]\n",

"\n",

" return timesteps, num_inference_steps - t_start"

]

},

{

"cell_type": "markdown",

"id": "a0a54ba0",

"metadata": {},

"source": [

"### Zoom Video Generation\n",

"[back to top ⬆️](#Table-of-contents:)\n",

"\n",

"For achieving zoom effect, we will use inpainting to expand images beyond their original borders.\n",

"We run our `OVStableDiffusionInpaintingPipeline` in the loop, where each next frame will add edges to previous. The frame generation process illustrated on diagram below:\n",

"\n",

"\n",

"\n",

"After processing current frame, we decrease size of current image by mask size pixels from each side and use it as input for next step. Changing size of mask we can influence the size of painting area and image scaling.\n",

"\n",

"There are 2 zooming directions:\n",

"\n",

"* Zoom Out - move away from object\n",

"* Zoom In - move closer to object\n",

"\n",

"Zoom In will be processed in the same way as Zoom Out, but after generation is finished, we record frames in reversed order."

]

},

{

"cell_type": "code",

"execution_count": 11,

"id": "051acf7f",

"metadata": {},

"outputs": [],

"source": [

"from tqdm import trange\n",

"\n",

"\n",

"def generate_video(\n",

" pipe: OVStableDiffusionInpaintingPipeline,\n",

" prompt: Union[str, List[str]],\n",

" negative_prompt: Union[str, List[str]],\n",

" guidance_scale: float = 7.5,\n",

" num_inference_steps: int = 20,\n",

" num_frames: int = 20,\n",

" mask_width: int = 128,\n",

" seed: int = 9999,\n",

" zoom_in: bool = False,\n",

"):\n",

" \"\"\"\n",

" Zoom video generation function\n",

"\n",

" Parameters:\n",

" pipe (OVStableDiffusionInpaintingPipeline): inpainting pipeline.\n",

" prompt (str or List[str]): The prompt or prompts to guide the image generation.\n",

" negative_prompt (str or List[str]): The negative prompt or prompts to guide the image generation.\n",

" guidance_scale (float, *optional*, defaults to 7.5):\n",

" Guidance scale as defined in Classifier-Free Diffusion Guidance(https://arxiv.org/abs/2207.12598).\n",

" guidance_scale is defined as `w` of equation 2.\n",

" Higher guidance scale encourages to generate images that are closely linked to the text prompt,\n",

" usually at the expense of lower image quality.\n",

" num_inference_steps (int, *optional*, defaults to 50): The number of denoising steps for each frame. More denoising steps usually lead to a higher quality image at the expense of slower inference.\n",

" num_frames (int, *optional*, 20): number frames for video.\n",

" mask_width (int, *optional*, 128): size of border mask for inpainting on each step.\n",

" seed (int, *optional*, None): Seed for random generator state initialization.\n",

" zoom_in (bool, *optional*, False): zoom mode Zoom In or Zoom Out.\n",

" Returns:\n",

" output_path (str): Path where generated video loacated.\n",

" \"\"\"\n",

"\n",

" height = 512\n",

" width = height\n",

"\n",

" current_image = PIL.Image.new(mode=\"RGBA\", size=(height, width))\n",

" mask_image = np.array(current_image)[:, :, 3]\n",

" mask_image = PIL.Image.fromarray(255 - mask_image).convert(\"RGB\")\n",

" current_image = current_image.convert(\"RGB\")\n",

" pipe.set_progress_bar_config(desc=\"Generating initial image...\")\n",

" init_images = pipe(\n",

" prompt=prompt,\n",

" negative_prompt=negative_prompt,\n",

" image=current_image,\n",

" guidance_scale=guidance_scale,\n",

" mask_image=mask_image,\n",

" seed=seed,\n",

" num_inference_steps=num_inference_steps,\n",

" )[\"sample\"]\n",

" pipe.set_progress_bar_config()\n",

"\n",

" image_grid(init_images, rows=1, cols=1)\n",

"\n",

" num_outpainting_steps = num_frames\n",

" num_interpol_frames = 30\n",

"\n",

" current_image = init_images[0]\n",

" all_frames = []\n",

" all_frames.append(current_image)\n",

" for i in trange(\n",

" num_outpainting_steps,\n",

" desc=f\"Generating {num_outpainting_steps} additional images...\",\n",

" ):\n",

" prev_image_fix = current_image\n",

"\n",

" prev_image = shrink_and_paste_on_blank(current_image, mask_width)\n",

"\n",

" current_image = prev_image\n",

"\n",

" # create mask (black image with white mask_width width edges)\n",

" mask_image = np.array(current_image)[:, :, 3]\n",

" mask_image = PIL.Image.fromarray(255 - mask_image).convert(\"RGB\")\n",

"\n",

" # inpainting step\n",

" current_image = current_image.convert(\"RGB\")\n",

" images = pipe(\n",

" prompt=prompt,\n",

" negative_prompt=negative_prompt,\n",

" image=current_image,\n",

" guidance_scale=guidance_scale,\n",

" mask_image=mask_image,\n",

" seed=seed,\n",

" num_inference_steps=num_inference_steps,\n",

" )[\"sample\"]\n",

" current_image = images[0]\n",

" current_image.paste(prev_image, mask=prev_image)\n",

"\n",

" # interpolation steps bewteen 2 inpainted images (=sequential zoom and crop)\n",

" for j in range(num_interpol_frames - 1):\n",

" interpol_image = current_image\n",

" interpol_width = round((1 - (1 - 2 * mask_width / height) ** (1 - (j + 1) / num_interpol_frames)) * height / 2)\n",

" interpol_image = interpol_image.crop(\n",

" (\n",

" interpol_width,\n",

" interpol_width,\n",

" width - interpol_width,\n",

" height - interpol_width,\n",

" )\n",

" )\n",

"\n",

" interpol_image = interpol_image.resize((height, width))\n",

"\n",

" # paste the higher resolution previous image in the middle to avoid drop in quality caused by zooming\n",

" interpol_width2 = round((1 - (height - 2 * mask_width) / (height - 2 * interpol_width)) / 2 * height)\n",

" prev_image_fix_crop = shrink_and_paste_on_blank(prev_image_fix, interpol_width2)\n",

" interpol_image.paste(prev_image_fix_crop, mask=prev_image_fix_crop)\n",

" all_frames.append(interpol_image)\n",

" all_frames.append(current_image)\n",

"\n",

" video_file_name = f\"infinite_zoom_{'in' if zoom_in else 'out'}\"\n",

" fps = 30\n",

" save_path = video_file_name + \".mp4\"\n",

" write_video(save_path, all_frames, fps, reversed_order=zoom_in)\n",

" return save_path"

]

},

{
"cell_type": "code",
"execution_count": 12,
"id": "d9666757",
"metadata": {},
"outputs": [],
"source": [
"def shrink_and_paste_on_blank(current_image: PIL.Image.Image, mask_width: int):\n",
"    \"\"\"\n",
"    Decreases size of current_image by mask_width pixels from each side,\n",
"    then adds a transparent frame mask_width pixels wide,\n",
"    so that the image the function returns is the same size as the input.\n",
"\n",
"    Parameters:\n",
"        current_image (PIL.Image): input image to transform\n",
"        mask_width (int): width in pixels to shrink from each side\n",
"    Returns:\n",
"        prev_image (PIL.Image): resized image with extended borders\n",
"    \"\"\"\n",
"\n",
"    height = current_image.height\n",
"    width = current_image.width\n",
"\n",
"    # shrink down by mask_width (PIL expects the size as (width, height))\n",
"    prev_image = current_image.resize((width - 2 * mask_width, height - 2 * mask_width))\n",
"    prev_image = prev_image.convert(\"RGBA\")\n",
"    prev_image = np.array(prev_image)\n",
"\n",
"    # create blank image with alpha set to 1 (almost fully transparent)\n",
"    blank_image = np.array(current_image.convert(\"RGBA\")) * 0\n",
"    blank_image[:, :, 3] = 1\n",
"\n",
"    # paste the shrunken image onto the blank one\n",
"    blank_image[mask_width : height - mask_width, mask_width : width - mask_width, :] = prev_image\n",
"    prev_image = PIL.Image.fromarray(blank_image)\n",
"\n",
"    return prev_image\n",
"\n",
"\n",
"def image_grid(imgs: List[PIL.Image.Image], rows: int, cols: int):\n",
"    \"\"\"\n",
"    Arrange images in a grid\n",
"\n",
"    Parameters:\n",
"        imgs (List[PIL.Image.Image]): list of images for making grid\n",
"        rows (int): number of rows in grid\n",
"        cols (int): number of columns in grid\n",
"    Returns:\n",
"        grid (PIL.Image): image with input images collage\n",
"    \"\"\"\n",
"    assert len(imgs) == rows * cols\n",
"\n",
"    w, h = imgs[0].size\n",
"    grid = PIL.Image.new(\"RGB\", size=(cols * w, rows * h))\n",
"\n",
"    for i, img in enumerate(imgs):\n",
"        grid.paste(img, box=(i % cols * w, i // cols * h))\n",
"    return grid\n",
"\n",
"\n",
"def write_video(\n",
"    file_path: str,\n",
"    frames: List[PIL.Image.Image],\n",
"    fps: float,\n",
"    reversed_order: bool = True,\n",
"    gif: bool = True,\n",
"):\n",
"    \"\"\"\n",
"    Writes frames to an mp4 video file and optionally to gif\n",
"\n",
"    Parameters:\n",
"        file_path (str): Path to output video, must end with .mp4\n",
"        frames (List of PIL.Image): list of frames\n",
"        fps (float): Desired frame rate\n",
"        reversed_order (bool): whether to reverse the order of the frames (default = True)\n",
"        gif (bool): save frames to gif format (default = True)\n",
"    Returns:\n",
"        None\n",
"    \"\"\"\n",
"    if reversed_order:\n",
"        frames.reverse()\n",
"\n",
"    w, h = frames[0].size\n",
"    fourcc = cv2.VideoWriter_fourcc(\"m\", \"p\", \"4\", \"v\")\n",
"    # fourcc = cv2.VideoWriter_fourcc(*'avc1')\n",
"    writer = cv2.VideoWriter(file_path, fourcc, fps, (w, h))\n",
"\n",
"    for frame in frames:\n",
"        np_frame = np.array(frame.convert(\"RGB\"))\n",
"        cv_frame = cv2.cvtColor(np_frame, cv2.COLOR_RGB2BGR)\n",
"        writer.write(cv_frame)\n",
"\n",
"    writer.release()\n",
"    if gif:\n",
"        # PIL takes the GIF frame duration in milliseconds per frame\n",
"        frames[0].save(\n",
"            file_path.replace(\".mp4\", \".gif\"),\n",
"            save_all=True,\n",
"            append_images=frames[1:],\n",
"            duration=1000 / fps,\n",
"            loop=0,\n",
"        )"
]
},

{

"cell_type": "markdown",

"id": "e96e2c00",

"metadata": {},

"source": [

"### Configure Inference Pipeline\n",

"[back to top ⬆️](#Table-of-contents:)\n",

"\n",

"Configuration steps:\n",

"1. Load models on device\n",

"2. Configure tokenizer and scheduler\n",

"3. Create instance of `OVStableDiffusionInpaintingPipeline` class"

]

},

{

"cell_type": "code",

"execution_count": 13,

"id": "c5470d37",

"metadata": {},

"outputs": [],

"source": [

"core = ov.Core()\n",

"\n",

"tokenizer = CLIPTokenizer.from_pretrained(\"openai/clip-vit-large-patch14\")"

]

},

{
"cell_type": "markdown",
"id": "3f5c1ceb-11bb-4de8-ad55-804cfc0e153c",
"metadata": {},
"source": [
"### Select inference device\n",
"[back to top ⬆️](#Table-of-contents:)\n",
"\n",
"Select the device for running inference with OpenVINO from the dropdown list."
]
},

{

"cell_type": "code",

"execution_count": 14,

"id": "d7459a0f-29b9-484c-9e1a-5c6e64f337a8",

"metadata": {},

"outputs": [

{

"data": {

"application/vnd.jupyter.widget-view+json": {

"model_id": "2d13dd4487bf44fa968be2bdc66a60bb",

"version_major": 2,

"version_minor": 0

},

"text/plain": [

"Dropdown(description='Device:', index=2, options=('CPU', 'GNA', 'AUTO'), value='AUTO')"

]

},

"execution_count": 14,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"import ipywidgets as widgets\n",
"\n",
"device = widgets.Dropdown(\n",
"    options=core.available_devices + [\"AUTO\"],\n",
"    value=\"AUTO\",\n",
"    description=\"Device:\",\n",
"    disabled=False,\n",
")\n",
"\n",
"device"

]

},

{

"cell_type": "code",

"execution_count": 15,

"id": "67ac7373-35be-492f-8564-297b37e07642",

"metadata": {},

"outputs": [],

"source": [

"ov_config = {\"INFERENCE_PRECISION_HINT\": \"f32\"} if device.value != \"CPU\" else {}\n",
"\n",
"\n",
"text_enc_inpaint = core.compile_model(TEXT_ENCODER_OV_PATH_INPAINT, device.value)\n",
"unet_model_inpaint = core.compile_model(UNET_OV_PATH_INPAINT, device.value)\n",
"vae_decoder_inpaint = core.compile_model(VAE_DECODER_OV_PATH_INPAINT, device.value, ov_config)\n",
"vae_encoder_inpaint = core.compile_model(VAE_ENCODER_OV_PATH_INPAINT, device.value, ov_config)\n",
"\n",
"ov_pipe_inpaint = OVStableDiffusionInpaintingPipeline(\n",
"    tokenizer=tokenizer,\n",
"    text_encoder=text_enc_inpaint,\n",
"    unet=unet_model_inpaint,\n",
"    vae_encoder=vae_encoder_inpaint,\n",
"    vae_decoder=vae_decoder_inpaint,\n",
"    scheduler=scheduler_inpaint,\n",
")"

]

},

{

"cell_type": "markdown",

"id": "66fd066a",

"metadata": {},

"source": [

"### Run Infinite Zoom video generation\n",

"[back to top ⬆️](#Table-of-contents:)\n"

]

},

{

"cell_type": "code",

"execution_count": 16,

"id": "eeb67824-7d1c-4680-8f3c-e55edc5b36eb",

"metadata": {

"tags": []

},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"Running on local URL: http://127.0.0.1:7860\n",

"Running on public URL: https://372deef95f8b1d0168.gradio.live\n",

"\n",

"This share link expires in 72 hours. For free permanent hosting and GPU upgrades, run `gradio deploy` from Terminal to deploy to Spaces (https://huggingface.co/spaces)\n"

]

},

{

"data": {

"text/html": [

""

],

"text/plain": [

""

]

},

"metadata": {},

"output_type": "display_data"

},

{

"data": {

"text/plain": []

},

"execution_count": 16,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"import gradio as gr\n",
"from socket import gethostbyname, gethostname\n",
"\n",
"\n",
"def generate(\n",
"    prompt,\n",
"    negative_prompt,\n",
"    seed,\n",
"    steps,\n",
"    frames,\n",
"    edge_size,\n",
"    zoom_in,\n",
"    progress=gr.Progress(track_tqdm=True),\n",
"):\n",
"    video_path = generate_video(\n",
"        ov_pipe_inpaint,\n",
"        prompt,\n",
"        negative_prompt,\n",
"        num_inference_steps=steps,\n",
"        num_frames=frames,\n",
"        mask_width=edge_size,\n",
"        seed=seed,\n",
"        zoom_in=zoom_in,\n",
"    )\n",
"    # write_video also saves a GIF next to the mp4; return it for display\n",
"    return video_path.replace(\".mp4\", \".gif\")\n",
"\n",
"\n",
"gr.close_all()\n",
"demo = gr.Interface(\n",
"    generate,\n",
"    [\n",
"        gr.Textbox(\n",
"            \"valley in the Alps at sunset, epic vista, beautiful landscape, 4k, 8k\",\n",
"            label=\"Prompt\",\n",
"        ),\n",
"        gr.Textbox(\"blurry, bad art, blurred, text, watermark\", label=\"Negative prompt\"),\n",
"        gr.Slider(value=9999, label=\"Seed\", maximum=10000000),\n",
"        gr.Slider(value=20, label=\"Steps\", minimum=1, maximum=50),\n",
"        gr.Slider(value=3, label=\"Frames\", minimum=1, maximum=50),\n",
"        gr.Slider(value=128, label=\"Edge size\", minimum=32, maximum=256),\n",
"        gr.Checkbox(label=\"Zoom in\"),\n",
"    ],\n",
"    \"image\",\n",
")\n",
"ipaddr = gethostbyname(gethostname())\n",
"demo.queue().launch(share=True)"

]

}

],

"metadata": {

"kernelspec": {

"display_name": "Python 3 (ipykernel)",

"language": "python",

"name": "python3"

},

"language_info": {

"codemirror_mode": {

"name": "ipython",

"version": 3

},

"file_extension": ".py",

"mimetype": "text/x-python",

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

"version": "3.8.10"

},

"openvino_notebooks": {

"imageUrl": "https://github.com/openvinotoolkit/openvino_notebooks/blob/latest/notebooks/stable-diffusion-v2/stable-diffusion-v2-infinite-zoom.gif?raw=true",

"tags": {

"categories": [

"Model Demos",

"AI Trends"

],

"libraries": [],

"other": [

"Stable Diffusion"

],

"tasks": [

"Text-to-Image",

"Image Inpainting",

"Text-to-Video"

]

}

},

"widgets": {

"application/vnd.jupyter.widget-state+json": {

"state": {},

"version_major": 2,

"version_minor": 0

}

}

},

"nbformat": 4,

"nbformat_minor": 5

}