ControlNet

Adding Conditional Control to Text-to-Image Diffusion Models by Lvmin Zhang and Maneesh Agrawala.

Using a pretrained model, we can provide control images (for example, a depth map) to control Stable Diffusion text-to-image generation so that it follows the structure of the depth image and fills in the details.

The abstract from the paper is:

We present a neural network structure, ControlNet, to control pretrained large diffusion models to support additional input conditions. The ControlNet learns task-specific conditions in an end-to-end way, and the learning is robust even when the training dataset is small (< 50k). Moreover, training a ControlNet is as fast as fine-tuning a diffusion model, and the model can be trained on a personal device. Alternatively, if powerful computation clusters are available, the model can scale to large amounts (millions to billions) of data. We report that large diffusion models like Stable Diffusion can be augmented with ControlNets to enable conditional inputs like edge maps, segmentation maps, keypoints, etc. This may enrich the methods to control large diffusion models and further facilitate related applications.

This model was contributed by takuma104. ❤️

The original codebase can be found at lllyasviel/ControlNet.

Usage example

In the following, we give a simple example of how to use a ControlNet checkpoint with Diffusers for inference. The inference workflow is the same for all checkpoints:

- Take an image and run it through a pre-conditioning processor.

- Run the pre-processed image through the [StableDiffusionControlNetPipeline].

Let's have a look at a simple example using the Canny Edge ControlNet.

from diffusers import StableDiffusionControlNetPipeline

from diffusers.utils import load_image

# Let's load the popular Vermeer image

image = load_image(

"https://hf.co/datasets/huggingface/documentation-images/resolve/main/diffusers/input_image_vermeer.png"

)

Next, we process the image to get the Canny edge image. This is step 1: running the pre-conditioning processor. The pre-conditioning processor is different for every ControlNet. See the model cards of the official checkpoints for more information about other models.

First, we need to install opencv:

pip install opencv-contrib-python

Next, let's also install all required Hugging Face libraries:

pip install diffusers transformers git+https://github.com/huggingface/accelerate.git

Then we can retrieve the canny edges of the image.

import cv2

from PIL import Image

import numpy as np

image = np.array(image)

low_threshold = 100

high_threshold = 200

image = cv2.Canny(image, low_threshold, high_threshold)

image = image[:, :, None]

image = np.concatenate([image, image, image], axis=2)

canny_image = Image.fromarray(image)
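The last lines of the snippet above turn the single-channel edge map returned by `cv2.Canny` into a three-channel image before wrapping it in a PIL image. A minimal sketch of that step on a tiny synthetic edge map follows; the array values and the helper name `to_three_channels` are made up for illustration and only NumPy is assumed:

```python
import numpy as np


def to_three_channels(edges: np.ndarray) -> np.ndarray:
    """Replicate a (H, W) uint8 edge map into a (H, W, 3) array."""
    edges = edges[:, :, None]  # (H, W) -> (H, W, 1)
    return np.concatenate([edges, edges, edges], axis=2)  # (H, W, 3)


# Hypothetical 2x3 edge map: 255 marks an edge pixel, 0 is background.
edges = np.array([[0, 255, 0], [255, 0, 255]], dtype=np.uint8)
rgb = to_three_channels(edges)
print(rgb.shape)  # (2, 3, 3)
```

All three channels hold the same values, so the result is still a white-on-black edge image, just stored in RGB layout.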

Let's take a look at the processed image.

Now, we load the official Stable Diffusion 1.5 Model as well as the ControlNet for canny edges.

from diffusers import StableDiffusionControlNetPipeline, ControlNetModel

import torch

controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny", torch_dtype=torch.float16)

pipe = StableDiffusionControlNetPipeline.from_pretrained(

"runwayml/stable-diffusion-v1-5", controlnet=controlnet, torch_dtype=torch.float16

)

To speed things up and reduce memory usage, let's enable model offloading and use the fast [UniPCMultistepScheduler].

from diffusers import UniPCMultistepScheduler

pipe.scheduler = UniPCMultistepScheduler.from_config(pipe.scheduler.config)

# this command loads the individual model components on GPU on-demand.

pipe.enable_model_cpu_offload()

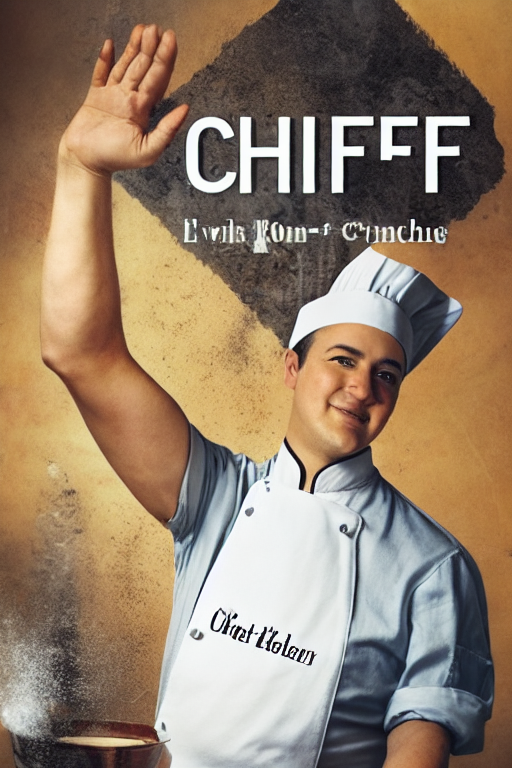

Finally, we can run the pipeline:

generator = torch.manual_seed(0)

out_image = pipe(

"disco dancer with colorful lights", num_inference_steps=20, generator=generator, image=canny_image

).images[0]

This should take only around 3-4 seconds on GPU (depending on hardware). The output image then looks as follows:

Note: To see how to run all other ControlNet checkpoints, please have a look at ControlNet with Stable Diffusion 1.5.

Combining multiple conditionings

Multiple ControlNet conditionings can be combined for a single image generation. Pass a list of ControlNets to the pipeline's constructor and a corresponding list of conditionings to __call__.

When combining conditionings, it is helpful to mask conditionings such that they do not overlap. In the example, we mask the middle of the canny map where the pose conditioning is located.

It can also be helpful to vary the controlnet_conditioning_scale values to emphasize one conditioning over the other.
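As a rough mental model (an assumption about the mechanism, not a copy of the Diffusers implementation), each ControlNet produces a set of residuals that are multiplied by that ControlNet's conditioning scale and then summed. The sketch below uses plain Python numbers in place of tensors; `combine_residuals` and the toy values are hypothetical:

```python
from typing import List


def combine_residuals(residuals: List[List[float]], scales: List[float]) -> List[float]:
    """Scale each ControlNet's residuals and sum them position by position."""
    if len(residuals) != len(scales):
        raise ValueError("Expected one conditioning scale per ControlNet.")
    combined = [0.0] * len(residuals[0])
    for per_net, scale in zip(residuals, scales):
        for i, value in enumerate(per_net):
            combined[i] += scale * value
    return combined


# Toy residuals for two ControlNets (for example pose and canny), three blocks each.
pose_residuals = [1.0, 2.0, 3.0]
canny_residuals = [10.0, 20.0, 30.0]
combined = combine_residuals([pose_residuals, canny_residuals], scales=[1.0, 0.5])
print(combined)  # [6.0, 12.0, 18.0]
```

Lowering one scale shrinks that conditioning's contribution to the sum, which is why a smaller value de-emphasizes it relative to the others.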

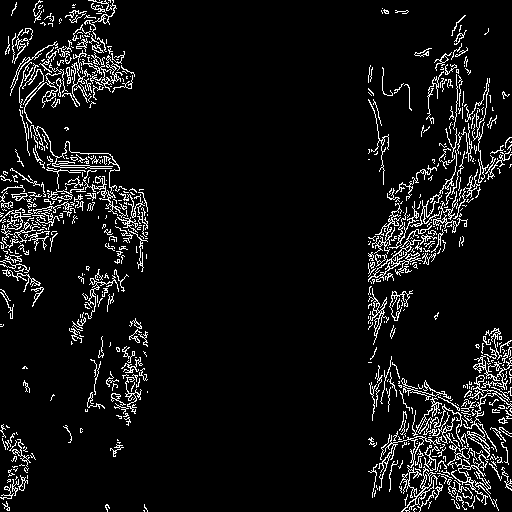

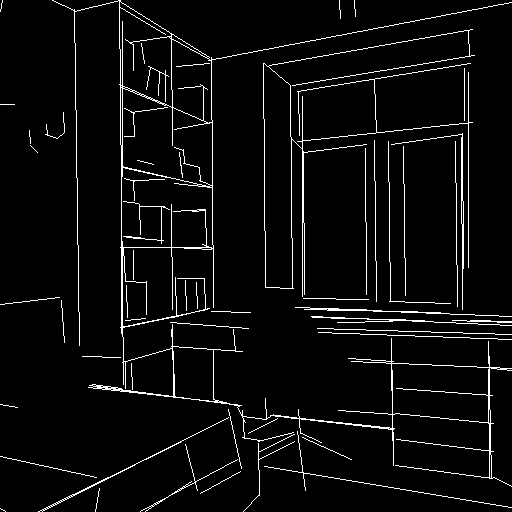

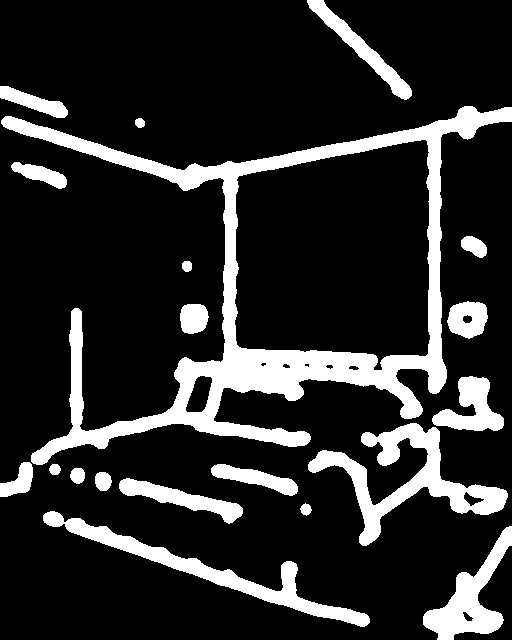

Canny conditioning

The original image:

Prepare the conditioning:

from diffusers.utils import load_image

from PIL import Image

import cv2

import numpy as np

canny_image = load_image(

"https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/landscape.png"

)

canny_image = np.array(canny_image)

low_threshold = 100

high_threshold = 200

canny_image = cv2.Canny(canny_image, low_threshold, high_threshold)

# zero out the middle columns of the image where the pose will be overlaid

zero_start = canny_image.shape[1] // 4

zero_end = zero_start + canny_image.shape[1] // 2

canny_image[:, zero_start:zero_end] = 0

canny_image = canny_image[:, :, None]

canny_image = np.concatenate([canny_image, canny_image, canny_image], axis=2)

canny_image = Image.fromarray(canny_image)
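To make the masking step concrete, the same column arithmetic can be applied to a small synthetic edge map. This sketch assumes only NumPy, and the 2x8 array is made up for illustration:

```python
import numpy as np

# Hypothetical edge map: 2 rows, 8 columns, every pixel marked as an edge.
edges = np.full((2, 8), 255, dtype=np.uint8)

# Same arithmetic as above: blank out the middle half of the columns.
zero_start = edges.shape[1] // 4             # 8 // 4 = 2
zero_end = zero_start + edges.shape[1] // 2  # 2 + 4 = 6
edges[:, zero_start:zero_end] = 0

print(edges[0].tolist())  # [255, 255, 0, 0, 0, 0, 255, 255]
```

The outer quarters keep their edges, while the middle half is left empty for the pose conditioning.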

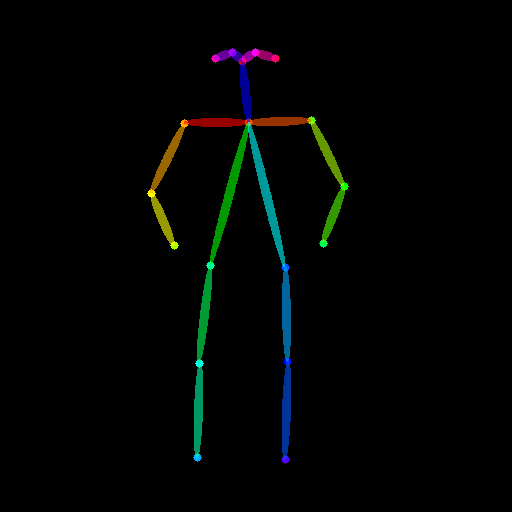

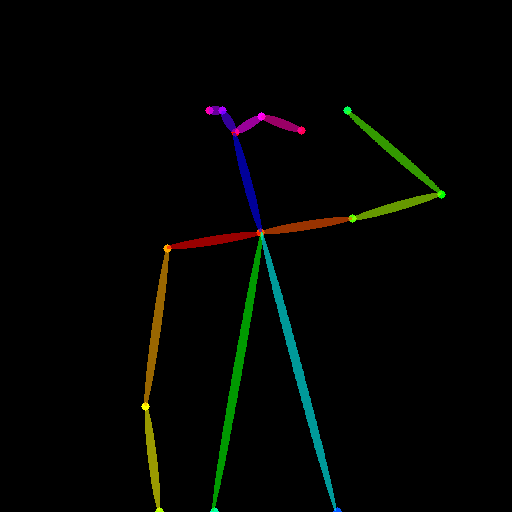

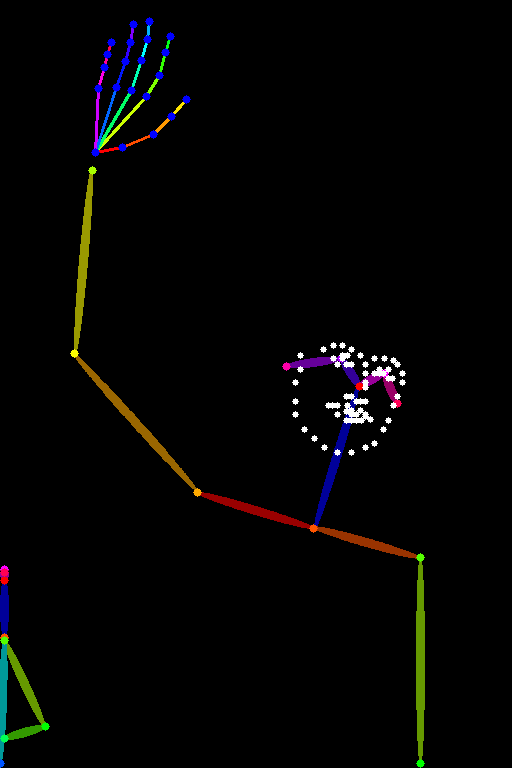

Openpose conditioning

The original image:

Prepare the conditioning:

from controlnet_aux import OpenposeDetector

from diffusers.utils import load_image

openpose = OpenposeDetector.from_pretrained("lllyasviel/ControlNet")

openpose_image = load_image(

"https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/person.png"

)

openpose_image = openpose(openpose_image)

Running ControlNet with multiple conditionings

from diffusers import StableDiffusionControlNetPipeline, ControlNetModel, UniPCMultistepScheduler

import torch

controlnet = [

ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-openpose", torch_dtype=torch.float16),

ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny", torch_dtype=torch.float16),

]

pipe = StableDiffusionControlNetPipeline.from_pretrained(

"runwayml/stable-diffusion-v1-5", controlnet=controlnet, torch_dtype=torch.float16

)

pipe.scheduler = UniPCMultistepScheduler.from_config(pipe.scheduler.config)

pipe.enable_xformers_memory_efficient_attention()

pipe.enable_model_cpu_offload()

prompt = "a giant standing in a fantasy landscape, best quality"

negative_prompt = "monochrome, lowres, bad anatomy, worst quality, low quality"

generator = torch.Generator(device="cpu").manual_seed(1)

images = [openpose_image, canny_image]

image = pipe(

prompt,

images,

num_inference_steps=20,

generator=generator,

negative_prompt=negative_prompt,

controlnet_conditioning_scale=[1.0, 0.8],

).images[0]

image.save("./multi_controlnet_output.png")

Guess Mode

Guess Mode is a ControlNet feature that was implemented after the publication of the paper. The description states:

In this mode, the ControlNet encoder will try best to recognize the content of the input control map, like depth map, edge map, scribbles, etc, even if you remove all prompts.

The core implementation:

It adjusts the scale of the output residuals from ControlNet by a fixed ratio depending on the block depth. The shallowest DownBlock corresponds to 0.1. As the blocks get deeper, the scale increases exponentially, and the scale for the output of the MidBlock becomes 1.0.

Since the core implementation is just this, it does not have any impact on prompt conditioning. While it is common to use it without specifying any prompts, it is also possible to provide prompts if desired.
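The depth-dependent scaling described above can be sketched as a log-spaced schedule from 0.1 to 1.0. The count of 12 down-block residuals plus one mid-block residual is an assumption made here for a Stable Diffusion 1.5 style UNet, and `guess_mode_scales` is a hypothetical helper, not a Diffusers function:

```python
def guess_mode_scales(num_down_residuals: int = 12) -> list:
    """Return log-spaced scales from 0.1 (shallowest) to 1.0 (mid block)."""
    steps = num_down_residuals  # the mid block adds one more entry
    return [10 ** (-1 + i / steps) for i in range(steps + 1)]


scales = guess_mode_scales()
print(len(scales))          # 13
print(round(scales[0], 3))  # 0.1
print(scales[-1])           # 1.0
```

Each scale is a constant multiple of the previous one, which is what makes the growth exponential in the block depth.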

Usage:

Pass guess_mode=True when calling the pipeline. A guidance_scale between 3.0 and 5.0 is recommended.

from diffusers import StableDiffusionControlNetPipeline, ControlNetModel

import torch

controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny")

pipe = StableDiffusionControlNetPipeline.from_pretrained("runwayml/stable-diffusion-v1-5", controlnet=controlnet).to(

"cuda"

)

image = pipe("", image=canny_image, guess_mode=True, guidance_scale=3.0).images[0]

image.save("guess_mode_generated.png")

Output image comparison:

Canny Control Example

Available checkpoints

ControlNet requires a control image in addition to the text-to-image prompt. Each pretrained model is trained using a different conditioning method that requires different images for conditioning the generated outputs. For example, Canny edge conditioning requires the control image to be the output of a Canny filter, while depth conditioning requires the control image to be a depth map. See the overview and image examples below to learn more.

All checkpoints can be found under the authors' namespace lllyasviel.

13.04.2024 Update: The author has released improved ControlNet v1.1 checkpoints; see the ControlNet v1.1 section below.
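Because each checkpoint expects its own kind of control image, it can help to keep the pairing explicit in code. The sketch below uses three entries from the ControlNet v1.0 overview that follows; the dictionary and the `expected_control_image` helper are illustrative and not part of Diffusers:

```python
# Descriptions copied from the ControlNet v1.0 overview on this page.
CONTROL_IMAGE_BY_CHECKPOINT = {
    "lllyasviel/sd-controlnet-canny": (
        "A monochrome image with white edges on a black background."
    ),
    "lllyasviel/sd-controlnet-depth": (
        "A grayscale image with black representing deep areas "
        "and white representing shallow areas."
    ),
    "lllyasviel/sd-controlnet-mlsd": (
        "A monochrome image composed only of white straight lines "
        "on a black background."
    ),
}


def expected_control_image(checkpoint: str) -> str:
    """Look up what kind of control image a checkpoint expects."""
    try:
        return CONTROL_IMAGE_BY_CHECKPOINT[checkpoint]
    except KeyError:
        raise ValueError(
            f"No control image description recorded for {checkpoint!r}"
        ) from None


print(expected_control_image("lllyasviel/sd-controlnet-canny"))
```

A check like this can run before the pre-conditioning step to catch a mismatch between the chosen checkpoint and the prepared control image.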

ControlNet v1.0

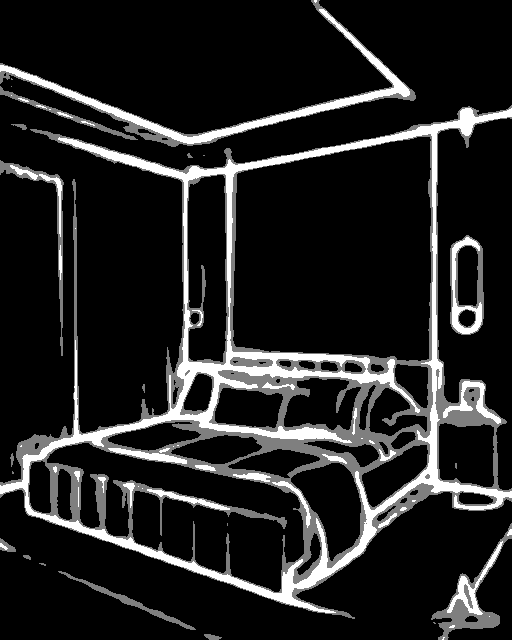

| Model Name | Control Image Overview | Control Image Example | Generated Image Example |

|---|---|---|---|

| lllyasviel/sd-controlnet-canny Trained with canny edge detection |

A monochrome image with white edges on a black background. |  |

|

| lllyasviel/sd-controlnet-depth Trained with Midas depth estimation |

A grayscale image with black representing deep areas and white representing shallow areas. |  |

|

| lllyasviel/sd-controlnet-hed Trained with HED edge detection (soft edge) |

A monochrome image with white soft edges on a black background. |  |

|

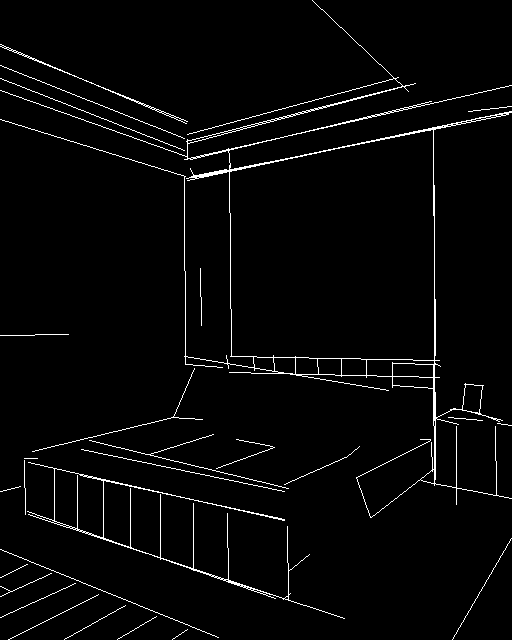

| lllyasviel/sd-controlnet-mlsd Trained with M-LSD line detection |

A monochrome image composed only of white straight lines on a black background. |  |

|

| lllyasviel/sd-controlnet-normal Trained with normal map |

A normal mapped image. |  |

|

| lllyasviel/sd-controlnet-openpose Trained with OpenPose bone image |

A OpenPose bone image. |  |

|

| lllyasviel/sd-controlnet-scribble Trained with human scribbles |

A hand-drawn monochrome image with white outlines on a black background. |  |

|

| lllyasviel/sd-controlnet-seg Trained with semantic segmentation |

An ADE20K's segmentation protocol image. |  |

|

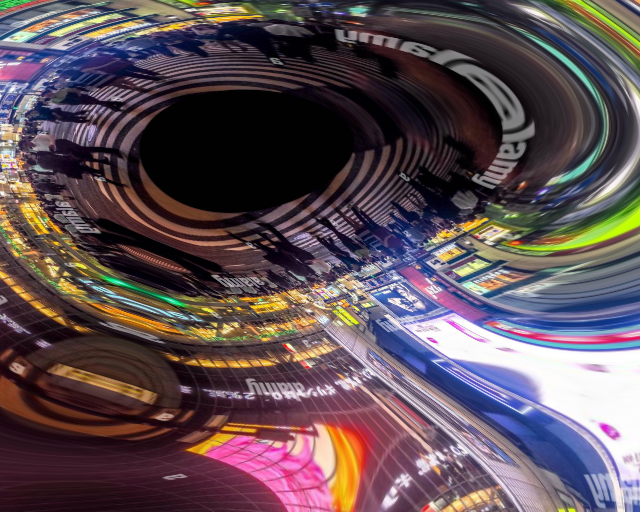

ControlNet v1.1

| Model Name | Control Image Overview | Condition Image | Control Image Example | Generated Image Example |
|---|---|---|---|---|
| lllyasviel/control_v11p_sd15_canny | Trained with canny edge detection | A monochrome image with white edges on a black background. | | |
| lllyasviel/control_v11e_sd15_ip2p | Trained with pixel to pixel instruction | No condition. | | |
| lllyasviel/control_v11p_sd15_inpaint | Trained with image inpainting | No condition. | | |
| lllyasviel/control_v11p_sd15_mlsd | Trained with multi-level line segment detection | An image with annotated line segments. | | |
| lllyasviel/control_v11f1p_sd15_depth | Trained with depth estimation | An image with depth information, usually represented as a grayscale image. | | |
| lllyasviel/control_v11p_sd15_normalbae | Trained with surface normal estimation | An image with surface normal information, usually represented as a color-coded image. | | |
| lllyasviel/control_v11p_sd15_seg | Trained with image segmentation | An image with segmented regions, usually represented as a color-coded image. | | |
| lllyasviel/control_v11p_sd15_lineart | Trained with line art generation | An image with line art, usually black lines on a white background. | | |
| lllyasviel/control_v11p_sd15s2_lineart_anime | Trained with anime line art generation | An image with anime-style line art. | | |
| lllyasviel/control_v11p_sd15_openpose | Trained with human pose estimation | An image with human poses, usually represented as a set of keypoints or skeletons. | | |
| lllyasviel/control_v11p_sd15_scribble | Trained with scribble-based image generation | An image with scribbles, usually random or user-drawn strokes. | | |
| lllyasviel/control_v11p_sd15_softedge | Trained with soft edge image generation | An image with soft edges, usually to create a more painterly or artistic effect. | | |
| lllyasviel/control_v11e_sd15_shuffle | Trained with image shuffling | An image with shuffled patches or regions. | | |
| lllyasviel/control_v11f1e_sd15_tile | Trained with image tiling | A blurry image or part of an image. | | |

StableDiffusionControlNetPipeline

[[autodoc]] StableDiffusionControlNetPipeline
	- all
	- __call__
	- enable_attention_slicing
	- disable_attention_slicing
	- enable_vae_slicing
	- disable_vae_slicing
	- enable_xformers_memory_efficient_attention
	- disable_xformers_memory_efficient_attention
	- load_textual_inversion

StableDiffusionControlNetImg2ImgPipeline

[[autodoc]] StableDiffusionControlNetImg2ImgPipeline
	- all
	- __call__
	- enable_attention_slicing
	- disable_attention_slicing
	- enable_vae_slicing
	- disable_vae_slicing
	- enable_xformers_memory_efficient_attention
	- disable_xformers_memory_efficient_attention
	- load_textual_inversion

StableDiffusionControlNetInpaintPipeline

[[autodoc]] StableDiffusionControlNetInpaintPipeline
	- all
	- __call__
	- enable_attention_slicing
	- disable_attention_slicing
	- enable_vae_slicing
	- disable_vae_slicing
	- enable_xformers_memory_efficient_attention
	- disable_xformers_memory_efficient_attention
	- load_textual_inversion

FlaxStableDiffusionControlNetPipeline

[[autodoc]] FlaxStableDiffusionControlNetPipeline
	- all
	- __call__