license: apache-2.0

Introduction

Eval

Development-set evaluation on CS-HellaSwag (an automatically translated version of the HellaSwag benchmark).

| Model | CS-HellaSwag Accuracy |
|---|---|
| mistral7b | 0.4992 |
| csmpt@130k steps [released] | 0.5004 |
| csmpt@100k steps | 0.4959 |
| csmpt@75k steps | 0.4895 |
| csmpt@50k steps | 0.4755 |
| csmpt@26.5k steps | 0.4524 |

However, we ran validation on CS-HellaSwag throughout training, and after 100k steps the improvements, if any, were very noisy. The improvement over mistral7b is not significant.
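To give a rough sense of why a gap of 0.0012 accuracy is not significant, here is a minimal sketch of a two-proportion z-test applied to the two best rows of the table. The number of evaluation examples is an assumption: we take the size of the original HellaSwag validation split (10,042), which may differ from the CS-HellaSwag set actually used. The test also treats the two models as independent samples, which is a simplification; a paired test over per-example predictions would be more appropriate if those were available.

```python
import math


def two_proportion_z_test(acc_a, acc_b, n_a, n_b):
    """Two-sided z-test for the difference between two independent accuracies."""
    # Pooled accuracy under the null hypothesis that both models are equally good.
    pooled = (acc_a * n_a + acc_b * n_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1.0 - pooled) * (1.0 / n_a + 1.0 / n_b))
    z = (acc_a - acc_b) / std_err
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    return z, p_value


# ASSUMPTION: evaluation set size taken from the original HellaSwag validation split.
N_EXAMPLES = 10_042

# Accuracies from the table: csmpt@130k steps vs. mistral7b.
z, p = two_proportion_z_test(0.5004, 0.4992, N_EXAMPLES, N_EXAMPLES)
print(f"z = {z:.3f}, p = {p:.3f}")
```

Under these assumptions the z statistic is far below the usual 1.96 threshold, which is consistent with the statement that the difference is not significant.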

More evaluation details teaser.

Loss

We encountered loss spikes during training. Because the model always recovered, and our budget for training a 7B model was very constrained, we kept training. We had observed such loss spikes before in our ablations. In these ablations (with GPT-2 small), we found that the spikes:

- (a) are influenced by the learning rate: the lower the learning rate, the less often they appear; as it gets higher, they start to appear; and with too high a learning rate, training may diverge on such a spike.

- (b) appear, in preliminary ablations, only for continuously pretrained models. While we do not know why they appear, we hypothesize that this might be linked to the theory of Adam instability related to time-domain correlation of update vectors. However, such instabilities were previously observed only for much larger models (larger than 65B).
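For readers who want to locate such spikes in their own training logs, the following is a minimal sketch of a trailing-window detector. It is not the procedure used for this model; the function name, window size, and threshold are all illustrative assumptions, and the loss trace is synthetic.

```python
from collections import deque
import statistics


def find_loss_spikes(losses, window=50, threshold=3.0):
    """Return the steps whose loss exceeds the trailing-window mean
    by more than `threshold` standard deviations."""
    history = deque(maxlen=window)
    spikes = []
    for step, loss in enumerate(losses):
        if len(history) == window:
            mean = statistics.fmean(history)
            std = statistics.pstdev(history)
            if std > 0 and loss > mean + threshold * std:
                spikes.append(step)
                continue  # keep the spike out of the baseline window
        history.append(loss)
    return spikes


# Synthetic, slowly decreasing loss with small periodic noise (illustration only).
synthetic = [2.0 - 0.001 * i + 0.01 * ((i % 5) - 2) for i in range(200)]
synthetic[120] = 5.0  # inject one artificial spike
print(find_loss_spikes(synthetic))
```

Because flagged steps are excluded from the baseline, a permanent jump in loss (rather than a transient spike) would be flagged at every subsequent step; that is acceptable for a model that recovers, as described above, but would need handling otherwise.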

Corpora

The model was trained on three corpora, which were hot-swapped during training. The corpora were collected and filtered while training was in progress.

- Corpus #1 is the same corpus we used for our Czech GPT-2 training (15,621,685,248 tokens).

- Corpus #2 contains 67,981,934,592 tokens, coming mostly from the HPLT and CulturaX corpora.

- Corpus #3 is Corpus #2 after we used a linear classifier to remove a portion of the inappropriate content that had slipped past our other checks.
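The filtering step behind Corpus #3 can be pictured with a toy example. The sketch below is not the classifier that was actually used: the bag-of-words features, the weights, the bias, and the decision threshold are all hypothetical and chosen only to show how a linear score can decide which documents to drop.

```python
def linear_score(text, weights, bias):
    """Score of a linear classifier over bag-of-words token counts."""
    score = bias
    for token in text.lower().split():
        score += weights.get(token, 0.0)
    return score


def filter_corpus(documents, weights, bias, threshold=0.0):
    """Keep only the documents whose linear score is below `threshold`."""
    return [doc for doc in documents
            if linear_score(doc, weights, bias) < threshold]


# HYPOTHETICAL toy parameters, for illustration only.
toy_weights = {"spam": 3.0, "casino": 2.5}
toy_bias = -2.0

docs = ["praha je hlavní město", "casino spam casino bonus"]
kept = filter_corpus(docs, toy_weights, toy_bias)
print(kept)
```

In practice such a classifier would be trained on labeled examples and applied to richer features; the point here is only that a single thresholded score per document is enough to implement the removal.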

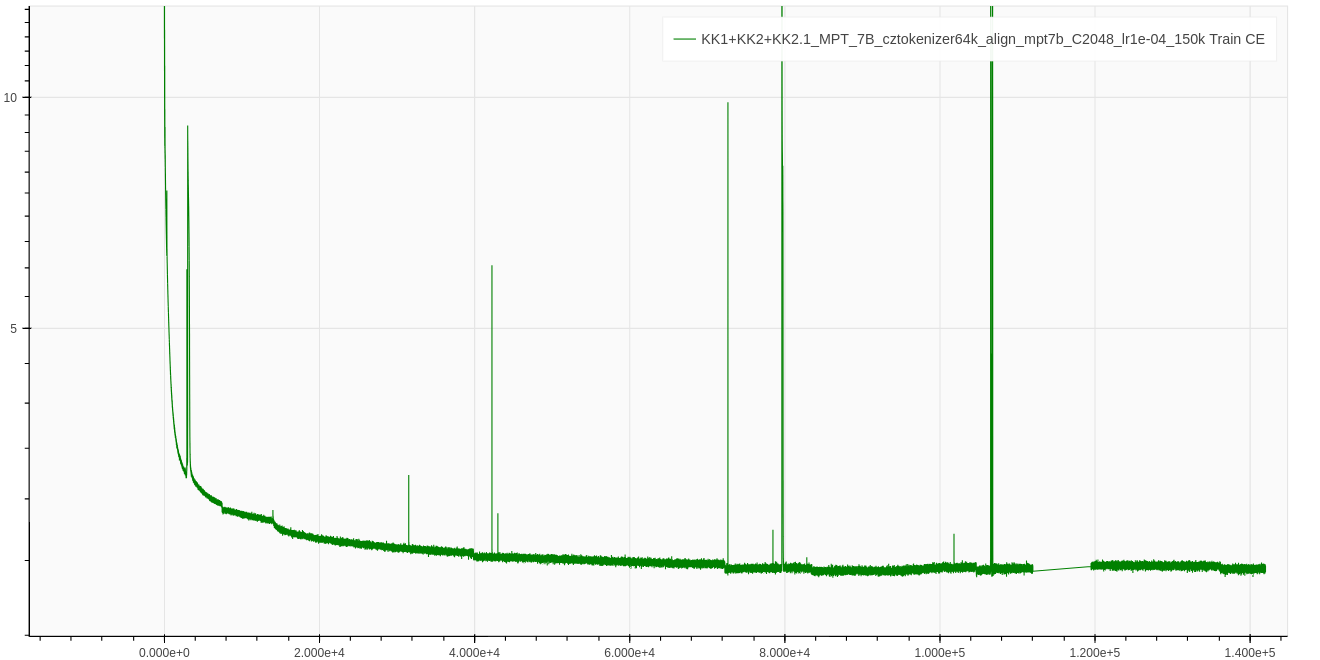

Figure 1: Training loss.

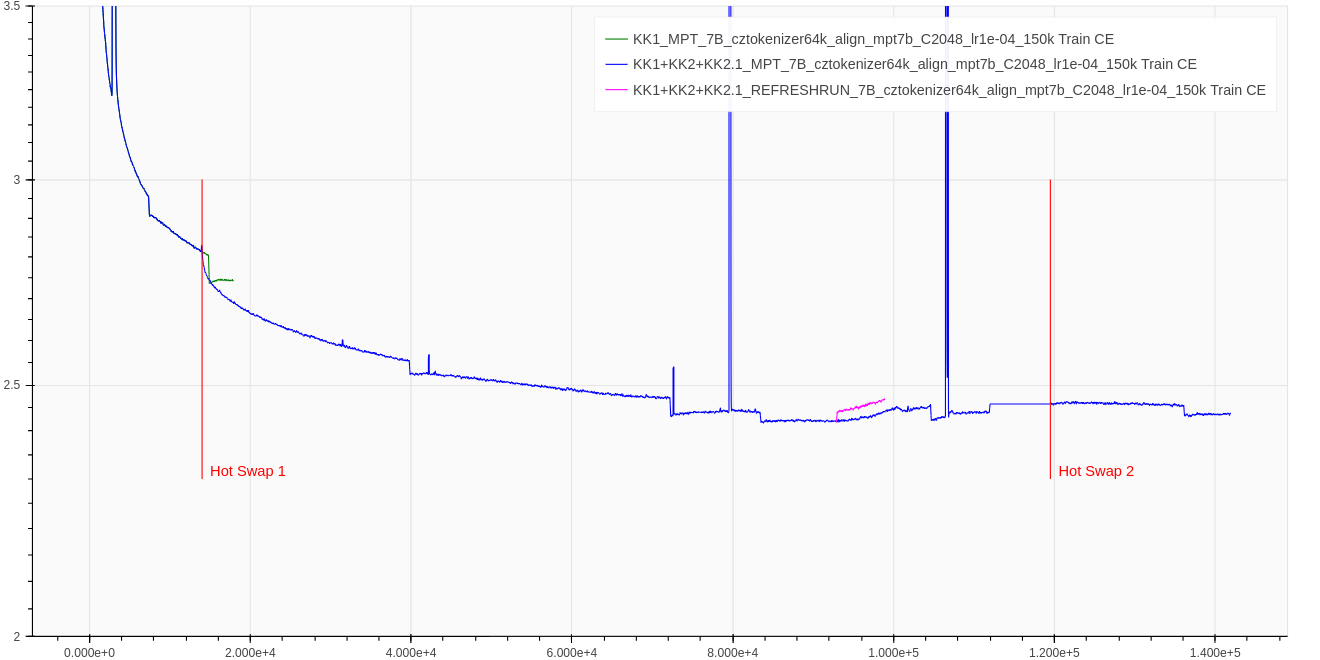

Figure 2: Training loss closeup. We mark the two hot-swap points, where the training corpus was switched from corpus #1 to internal-corpus #2 and then to internal-corpus #2.1, respectively.

Additionally, we performed two ablations:

- (a) After the first hot swap, we continued training on corpus #1 for a while.

- (b) At step 94,000, the training loss stopped decreasing and began to increase; around step 120,000 (near hot swap #2) it started decreasing again. To test whether this was an effect of the hot swap, we resumed training from step 93,000 using corpus #3, with the optimizer states reinitialized.

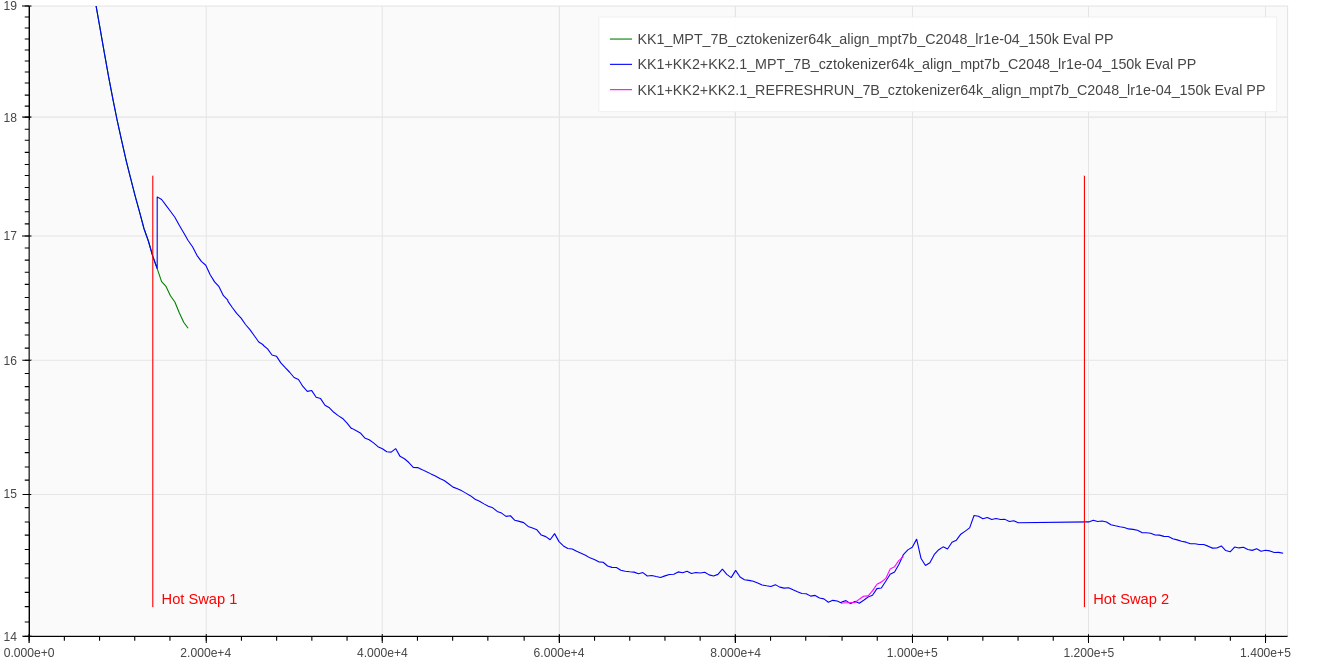

Figure 3: Test loss closeup; testing was performed on a split of internal-corpus #1. See the Figure 2 description for an explanation of the ablations.

Training Method

tbd.

Usage

How to Setup Environment

pip install transformers==4.37.2 torch==2.1.2 einops==0.7.0
# Be sure to install the flash-attn build that matches your setup; we use torch compiled with CUDA 12.1, no cxx11 ABI, Python 3.9, Linux x86_64 architecture
pip install https://github.com/Dao-AILab/flash-attention/releases/download/v2.5.3/flash_attn-2.5.3+cu122torch2.1cxx11abiFALSE-cp39-cp39-linux_x86_64.whl

Running the Code

import torch
import transformers
from transformers import pipeline

name = 'BUT-FIT/csmpt7b'

config = transformers.AutoConfig.from_pretrained(name, trust_remote_code=True)
config.init_device = 'cuda:0'  # For fast initialization directly on GPU!

model = transformers.AutoModelForCausalLM.from_pretrained(
    name,
    config=config,
    torch_dtype=torch.bfloat16,  # Load model weights in bfloat16
    trust_remote_code=True
)

tokenizer = transformers.AutoTokenizer.from_pretrained(name, trust_remote_code=True)

pipe = pipeline('text-generation', model=model, tokenizer=tokenizer, device='cuda:0')

with torch.autocast('cuda', dtype=torch.bfloat16):
    print(
        pipe('Nejznámějším českým spisovatelem ',
             max_new_tokens=100,
             top_p=0.95,
             repetition_penalty=1.0,
             do_sample=True,
             use_cache=True))

Training Data

We release most (95.79%) of our training data corpus as the BUT-Large Czech Collection.

Our Release Plan

| Stage | Description | Date |
|---|---|---|
| 1 | 'Best' model + training data | 11.03.2024 |
| 2 | All checkpoints + training code | |
| 3 | BenCzechMark, a collection of Czech datasets for few-shot LLM evaluation. Get in touch if you want to contribute! | |
| 4 | Preprint publication | |

Getting in Touch

For further questions, email [email protected].

Disclaimer

This is a probabilistic model, so its outputs are stochastic. The authors are not responsible for the model outputs. Use at your own risk.

Acknowledgement

This work was supported by the NAKI III program of the Ministry of Culture of the Czech Republic, project semANT ("Sémantický průzkumník textového kulturního dědictví"), grant no. DH23P03OVV060, and by the Ministry of Education, Youth and Sports of the Czech Republic through e-INFRA CZ (ID:90254).

Citation

@article{benczechmark,
  author = {Martin Fajčík and Martin Dočekal and Jan Doležal and Karel Beneš and Michal Hradiš},
  title = {BenCzechMark: Machine Language Understanding Benchmark for Czech Language},
  journal = {arXiv preprint arXiv:insert-arxiv-number-here},
  year = {2024},
  month = {March},
  eprint = {insert-arxiv-number-here},
  archivePrefix = {arXiv},
  primaryClass = {cs.CL},
}