---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- EMBO/BLURB

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: bert-large-cased-lora-finetuned-ner-EMBO-SourceData
  results: []

language:

- en

pipeline_tag: token-classification

---

# bert-large-cased-lora-finetuned-ner-EMBO-SourceData

This model is a LoRA fine-tuned version of [bert-large-cased](https://huggingface.co/bert-large-cased) for named entity recognition (token classification).

It achieves the following results on the evaluation set:

- Loss: 0.1282

- Precision: 0.7999

- Recall: 0.8278

- F1: 0.8136

- Accuracy: 0.9584
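
As a quick sanity check, F1 is the harmonic mean of precision and recall, so the values reported above should agree up to rounding. The snippet below is a minimal sketch in plain Python; the helper name `f1_from` is illustrative and not part of any library used for this model.

```python
def f1_from(precision: float, recall: float) -> float:
    """Return the harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values reported above for the evaluation set.
reported_precision = 0.7999
reported_recall = 0.8278
reported_f1 = 0.8136

computed_f1 = f1_from(reported_precision, reported_recall)
print(f"computed F1 = {computed_f1:.4f}, reported F1 = {reported_f1:.4f}")
```

Small differences in the last digit are expected, because the reported precision and recall are themselves rounded to four decimals.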

## Model description

For more information on how it was created, check out the following link: [https://github.com/DunnBC22/NLP_Projects/blob/main/Token%20Classification/Monolingual/EMBO-SourceData%20with%20LoRA/NER%20Project%20Using%20EMBO-SourceData%20with%20LoRA.ipynb](https://github.com/DunnBC22/NLP_Projects/blob/main/Token%20Classification/Monolingual/EMBO-SourceData%20with%20LoRA/NER%20Project%20Using%20EMBO-SourceData%20with%20LoRA.ipynb)

## Intended uses & limitations

This model is intended to demonstrate my ability to solve a complex problem using technology.

## Training and evaluation data

Dataset Source: [https://huggingface.co/datasets/EMBO/BLURB](https://huggingface.co/datasets/EMBO/BLURB)

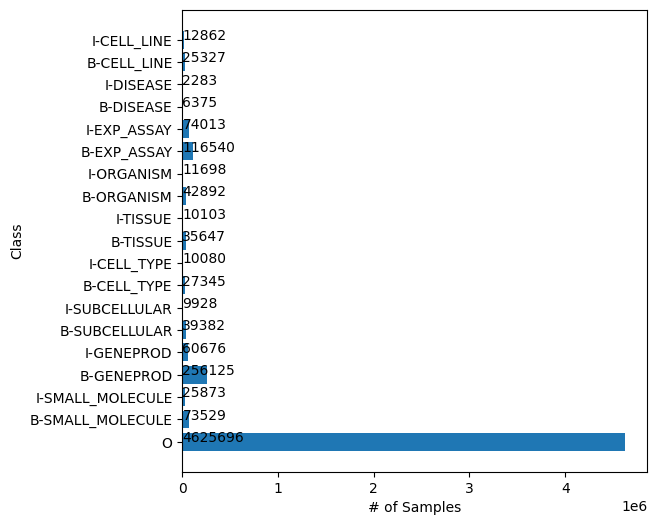

**Token Distribution**

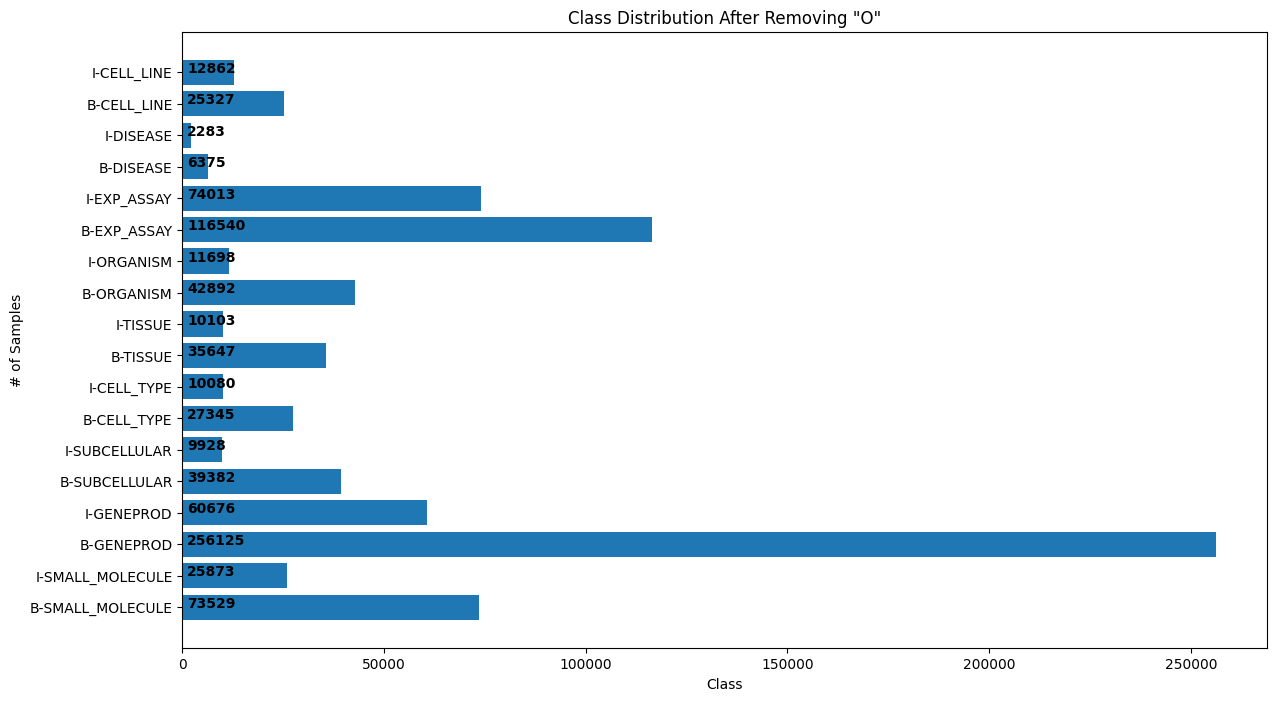

**Token Distribution After Removing 'O' Tokens**

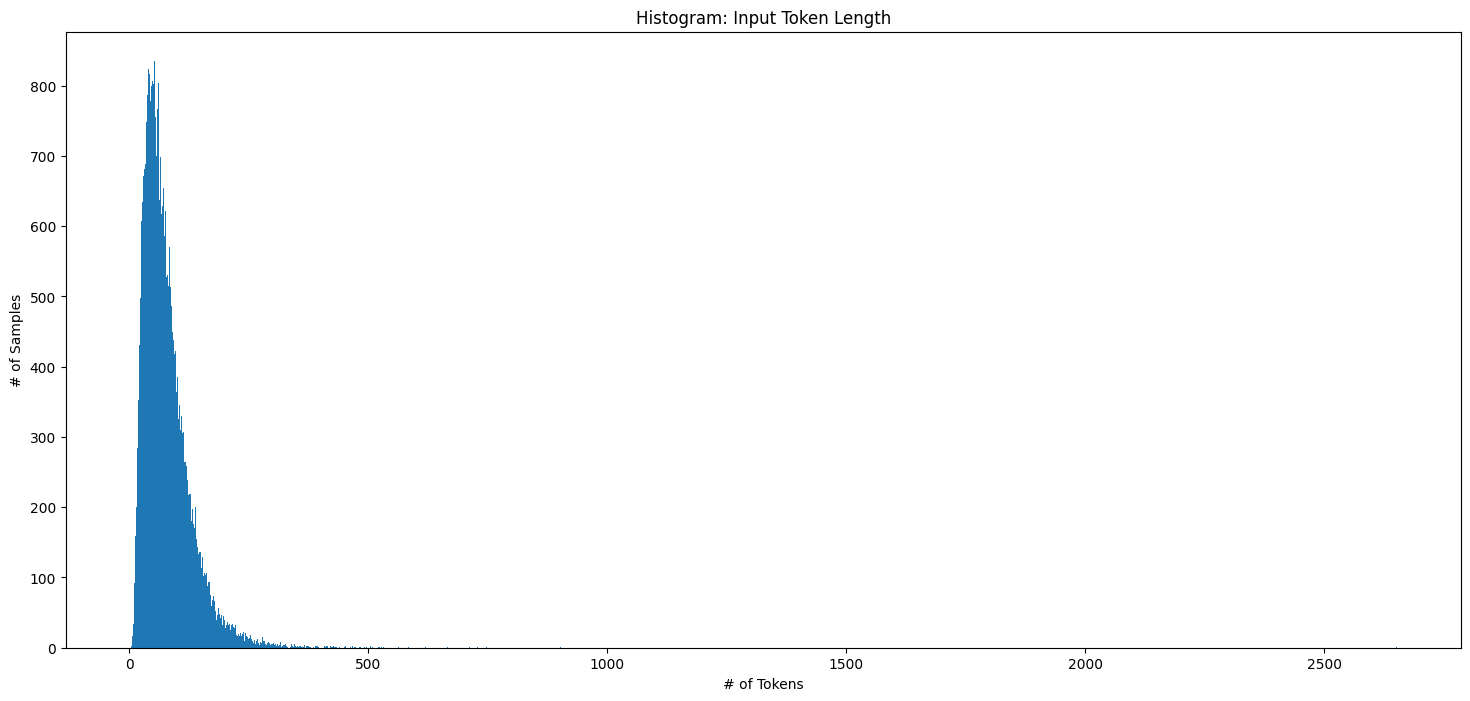

**Histogram of Tokenized Input Lengths**

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.001

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3
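
To make the `linear` schedule concrete, the sketch below computes the learning rate at the end of each epoch. It assumes no warmup (this card does not list any warmup steps) and 10,362 total optimizer steps, the final step count in the training results table. `linear_lr` is a hypothetical helper written for illustration, not the Trainer's own code.

```python
def linear_lr(step: int, total_steps: int, base_lr: float, warmup_steps: int = 0) -> float:
    """Optional linear warmup, then linear decay to zero at total_steps."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


BASE_LR = 0.001       # learning_rate from the list above
TOTAL_STEPS = 10362   # final step in the training results table (3 epochs)

# Learning rate at the start of training and at the end of each epoch.
for step in (0, 3454, 6908, 10362):
    print(step, linear_lr(step, TOTAL_STEPS, BASE_LR))
```

Under these assumptions the rate starts at 0.001, falls to two thirds of that after the first epoch, and reaches zero at the last step.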

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |
|:-------------:|:-----:|:-----:|:---------------:|:---------:|:------:|:------:|:--------:|
| 0.1552 | 1.0 | 3454 | 0.1499 | 0.7569 | 0.7968 | 0.7763 | 0.9516 |
| 0.1179 | 2.0 | 6908 | 0.1328 | 0.7910 | 0.8120 | 0.8013 | 0.9564 |
| 0.0998 | 3.0 | 10362 | 0.1282 | 0.7999 | 0.8278 | 0.8136 | 0.9584 |
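
The step counts in the table also give a rough bound on the training set size. The sketch below is only an estimate: it assumes a single device, no gradient accumulation, and that the last, possibly partial, batch of each epoch is kept. None of these settings are stated in this card.

```python
TRAIN_BATCH_SIZE = 16                     # train_batch_size from the hyperparameters
steps_at_epoch_end = [3454, 6908, 10362]  # "Step" column of the table above

# Steps taken within each epoch: difference between consecutive epoch ends.
epoch_starts = [0] + steps_at_epoch_end[:-1]
steps_per_epoch = [end - start for start, end in zip(epoch_starts, steps_at_epoch_end)]

# Assumption: one device, no gradient accumulation, last partial batch kept.
per_epoch = steps_per_epoch[0]
max_examples = per_epoch * TRAIN_BATCH_SIZE
min_examples = (per_epoch - 1) * TRAIN_BATCH_SIZE + 1

print(steps_per_epoch)
print(f"training examples: between {min_examples} and {max_examples}")
```

If training used several devices or gradient accumulation, the effective batch size, and therefore this estimate, would scale accordingly.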

### Framework versions

- Transformers 4.26.1

- PyTorch 2.0.1

- Datasets 2.13.1

- Tokenizers 0.13.3