Upload folder using huggingface_hub

#1 opened by sharpenb

- README.md +93 -0

- model/optimized_model.pkl +3 -0

- model/smash_config.json +32 -0

- plots.png +0 -0

README.md

ADDED

|

@@ -0,0 +1,93 @@

| 1 |

+

---

|

| 2 |

+

library_name: pruna-engine

|

| 3 |

+

thumbnail: "https://assets-global.website-files.com/646b351987a8d8ce158d1940/64ec9e96b4334c0e1ac41504_Logo%20with%20white%20text.svg"

|

| 4 |

+

metrics:

|

| 5 |

+

- memory_disk

|

| 6 |

+

- memory_inference

|

| 7 |

+

- inference_latency

|

| 8 |

+

- inference_throughput

|

| 9 |

+

- inference_CO2_emissions

|

| 10 |

+

- inference_energy_consumption

|

| 11 |

+

---

|

| 12 |

+

<!-- header start -->

|

| 13 |

+

<!-- 200823 -->

|

| 14 |

+

<div style="width: auto; margin-left: auto; margin-right: auto">

|

| 15 |

+

<a href="https://www.pruna.ai/" target="_blank" rel="noopener noreferrer">

|

| 16 |

+

<img src="https://i.imgur.com/eDAlcgk.png" alt="PrunaAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

|

| 17 |

+

</a>

|

| 18 |

+

</div>

|

| 19 |

+

<!-- header end -->

|

| 20 |

+

|

| 21 |

+

[Twitter](https://twitter.com/PrunaAI)

|

| 22 |

+

[GitHub](https://github.com/PrunaAI)

|

| 23 |

+

[LinkedIn](https://www.linkedin.com/company/93832878/admin/feed/posts/?feedType=following)

|

| 24 |

+

[Discord](https://discord.gg/CP4VSgck)

|

| 25 |

+

|

| 26 |

+

# Simply make AI models cheaper, smaller, faster, and greener!

|

| 27 |

+

|

| 28 |

+

- Give a thumbs up if you like this model!

|

| 29 |

+

- Contact us and tell us which model to compress next [here](https://www.pruna.ai/contact).

|

| 30 |

+

- Request access to easily compress your *own* AI models [here](https://z0halsaff74.typeform.com/pruna-access?typeform-source=www.pruna.ai).

|

| 31 |

+

- Read the documentation to learn more [here](https://pruna-ai-pruna.readthedocs-hosted.com/en/latest/).

|

| 32 |

+

- Join the Pruna AI community on Discord [here](https://discord.gg/CP4VSgck) to share feedback and suggestions or to get help.

|

| 33 |

+

|

| 34 |

+

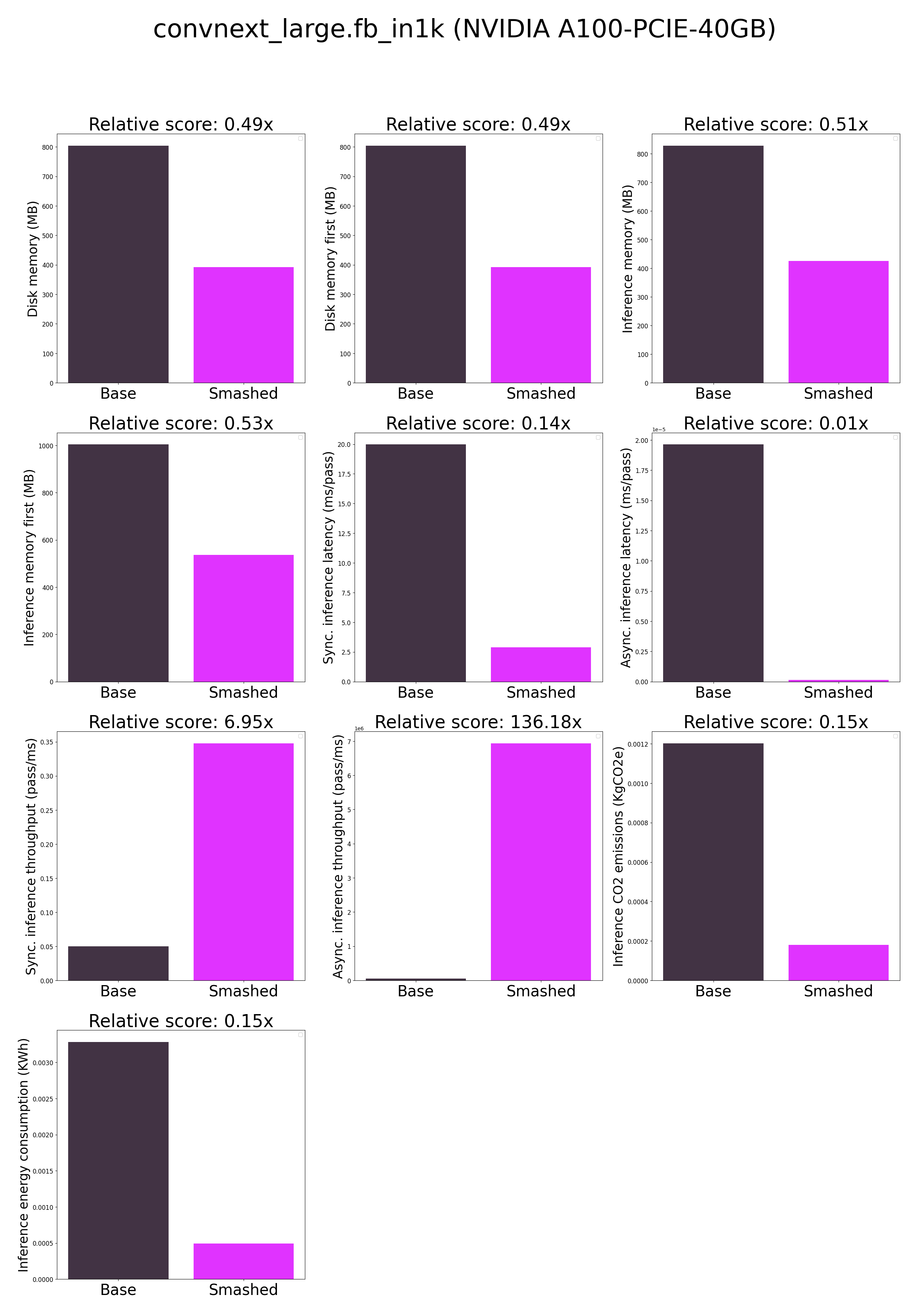

## Results

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

**Frequently Asked Questions**

|

| 39 |

+

- ***How does the compression work?*** The model is compressed by combining quantization, JIT compilation, and CUDA graphs.

|

| 40 |

+

- ***How does the model quality change?*** The quality of the model output might vary slightly compared to the base model.

|

| 41 |

+

- ***How is the model efficiency evaluated?*** These results were obtained on an NVIDIA A100-PCIE-40GB with the configuration described in `model/smash_config.json`, after a hardware warmup. The smashed model is compared directly to the original base model. Efficiency results may vary in other settings (e.g. other hardware, image size, batch size, ...). We recommend running the model under your own use-case conditions to check whether the smashed model benefits you.

|

| 42 |

+

- ***What is the model format?*** We use a custom Pruna model format based on pickle to make models compatible with the compression methods. If needed, the documentation [here](https://pruna-ai-pruna.readthedocs-hosted.com/en/latest/) includes a tutorial on running models in Docker.

|

| 43 |

+

- ***What is the naming convention for Pruna Hugging Face models?*** We take the original model name and append "turbo", "tiny", or "green" if the smashed model's measured inference speed, inference memory, or inference energy consumption, respectively, is less than 90% of that of the original base model.

|

| 44 |

+

- ***How can I compress my own models?*** You can request premium access to more compression methods and tech support for your specific use-cases [here](https://z0halsaff74.typeform.com/pruna-access?typeform-source=www.pruna.ai).

|

| 45 |

+

- ***What are "first" metrics?*** Results mentioning "first" are obtained after the first run of the model. The first run might take more memory or be slower than subsequent runs due to CUDA overheads.

|

| 46 |

+

- ***What are "Sync" and "Async" metrics?*** "Sync" metrics are obtained by syncing all GPU processes and stopping the measurement once all of them have finished executing. "Async" metrics are obtained without syncing all GPU processes; the measurement stops as soon as the model output can be used by the CPU. We provide both metrics since either could be relevant depending on the use-case. We recommend testing the efficiency gains directly in your use-cases.
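The naming convention described in the FAQ above can be sketched in a few lines of Python. This is only an illustration, not Pruna's actual tooling. The metric keys reuse names from the README metadata, the mapping of "turbo" to latency assumes that inference speed is measured as a lower-is-better latency, and the trailing `-smashed` suffix is inferred from this repository's name rather than stated in the FAQ.

```python
# Suffix -> metric it reflects, in the order the suffixes appear in the model name.
# Assumption: "turbo" is judged on latency (lower is better).
SUFFIX_METRICS = {
    "turbo": "inference_latency",
    "tiny": "memory_inference",
    "green": "inference_energy_consumption",
}
THRESHOLD = 0.9  # the smashed metric must be below 90% of the base metric


def smashed_name(base_name: str, base: dict, smashed: dict) -> str:
    """Append every suffix whose metric dropped below the threshold."""
    suffixes = [
        suffix
        for suffix, metric in SUFFIX_METRICS.items()
        if smashed[metric] < THRESHOLD * base[metric]
    ]
    # Assumption: the "-smashed" ending is taken from the repository name.
    return "-".join([base_name, *suffixes, "smashed"])


if __name__ == "__main__":
    # Made-up numbers for illustration only; these are not measured results.
    base = {"inference_latency": 10.0, "memory_inference": 1000.0,
            "inference_energy_consumption": 5.0}
    smashed = {"inference_latency": 4.0, "memory_inference": 600.0,
               "inference_energy_consumption": 2.0}
    print(smashed_name("convnext_large.fb_in1k", base, smashed))
```

With metrics that all fall below the threshold, the sketch reproduces the `turbo-tiny-green` pattern seen in this repository's name.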

|

| 47 |

+

|

| 48 |

+

## Setup

|

| 49 |

+

|

| 50 |

+

You can run the smashed model with these steps:

|

| 51 |

+

|

| 52 |

+

0. Check that you have Linux, Python 3.10, and CUDA 12.1.0 installed. For CUDA, check with `nvcc --version` and install with `conda install nvidia/label/cuda-12.1.0::cuda`.

|

| 53 |

+

1. Install the `pruna-engine` package, available [here](https://pypi.org/project/pruna-engine/) on PyPI. Installation might take up to 15 minutes.

|

| 54 |

+

```bash

|

| 55 |

+

pip install pruna-engine[gpu]==0.7.1 --extra-index-url https://pypi.nvidia.com --extra-index-url https://pypi.ngc.nvidia.com --extra-index-url https://prunaai.pythonanywhere.com/

|

| 56 |

+

```

|

| 57 |

+

2. Download the model files using one of these three options.

|

| 58 |

+

- Option 1 - Use the command line interface (CLI):

|

| 59 |

+

```bash

|

| 60 |

+

mkdir convnext_large.fb_in1k-turbo-tiny-green-smashed

|

| 61 |

+

huggingface-cli download PrunaAI/convnext_large.fb_in1k-turbo-tiny-green-smashed --local-dir convnext_large.fb_in1k-turbo-tiny-green-smashed --local-dir-use-symlinks False

|

| 62 |

+

```

|

| 63 |

+

- Option 2 - Use Python:

|

| 64 |

+

```python

|

| 65 |

+

import subprocess

|

| 66 |

+

repo_name = "convnext_large.fb_in1k-turbo-tiny-green-smashed"

|

| 67 |

+

subprocess.run(["mkdir", repo_name])

|

| 68 |

+

subprocess.run(["huggingface-cli", "download", 'PrunaAI/'+ repo_name, "--local-dir", repo_name, "--local-dir-use-symlinks", "False"])

|

| 69 |

+

```

|

| 70 |

+

- Option 3 - Download them manually from the Hugging Face model page.

|

| 71 |

+

3. Load & run the model.

|

| 72 |

+

```python

|

| 73 |

+

from pruna_engine.PrunaModel import PrunaModel

|

| 74 |

+

|

| 75 |

+

model_path = "convnext_large.fb_in1k-turbo-tiny-green-smashed/model" # Specify the downloaded model path.

|

| 76 |

+

smashed_model = PrunaModel.load_model(model_path) # Load the model.

|

| 77 |

+

|

| 78 |

+

import torch; image = torch.rand(1, 3, 224, 224).to('cuda')

|

| 79 |

+

smashed_model(image)

|

| 80 |

+

```
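The requirements listed in step 0 can be checked programmatically before installing anything. The following is a minimal sketch using only the standard library. The helper names are hypothetical, and the parser assumes that `nvcc --version` prints a line containing `release <major>.<minor>`.

```python
import platform
import re
import shutil
import subprocess
import sys
from typing import Optional


def parse_nvcc_release(output: str) -> Optional[str]:
    """Extract the CUDA release (e.g. '12.1') from `nvcc --version` output."""
    match = re.search(r"release (\d+\.\d+)", output)
    return match.group(1) if match else None


def check_environment() -> dict:
    """Report whether the OS, Python, and CUDA toolkit match the stated requirements."""
    report = {
        "linux": platform.system() == "Linux",
        "python_3_10": sys.version_info[:2] == (3, 10),
        "cuda_12_1": False,
    }
    if shutil.which("nvcc"):  # nvcc is only on PATH when the CUDA toolkit is installed
        result = subprocess.run(["nvcc", "--version"], capture_output=True, text=True)
        report["cuda_12_1"] = parse_nvcc_release(result.stdout) == "12.1"
    return report


if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(f"{name}: {'ok' if ok else 'missing or different version'}")
```

A `False` entry only means the environment differs from the one the README was written for; it does not by itself prove that the model cannot run.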

|

| 81 |

+

|

| 82 |

+

## Configurations

|

| 83 |

+

|

| 84 |

+

The configuration details are in `model/smash_config.json`.

|

| 85 |

+

|

| 86 |

+

## Credits & License

|

| 87 |

+

|

| 88 |

+

The license of the smashed model follows the license of the original model. Please check the license of the original model, convnext_large.fb_in1k, which provided the base model, before using this model. The license of the `pruna-engine` is [here](https://pypi.org/project/pruna-engine/) on PyPI.

|

| 89 |

+

|

| 90 |

+

## Want to compress other models?

|

| 91 |

+

|

| 92 |

+

- Contact us and tell us which model to compress next [here](https://www.pruna.ai/contact).

|

| 93 |

+

- Request access to easily compress your own AI models [here](https://z0halsaff74.typeform.com/pruna-access?typeform-source=www.pruna.ai).

|

model/optimized_model.pkl

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e0a003bb4163ca003069171fb77cbd9f73191e49b4b3fc4a8471db451b6017b2

|

| 3 |

+

size 395705763
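The three lines above form a Git LFS pointer: the real `optimized_model.pkl` is stored separately and identified by its SHA-256 digest and byte size. As a hedged sketch, a downloaded copy could be checked against the pointer as follows. The function names are hypothetical and only the standard library is used.

```python
import hashlib
import os


def parse_lfs_pointer(text: str) -> dict:
    """Parse a Git LFS pointer into its version, hash algorithm, digest, and size."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(path: str, pointer: dict, chunk_size: int = 1 << 20) -> bool:
    """Return True if the file at `path` has the size and digest named by the pointer."""
    if os.path.getsize(path) != pointer["size"]:
        return False  # cheap check first, avoids hashing a truncated download
    hasher = hashlib.new(pointer["algo"])
    with open(path, "rb") as f:
        # Read in chunks so a file of several hundred megabytes is never fully in memory.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest() == pointer["digest"]
```

Checking the size before hashing is a small design choice: an incomplete download is rejected immediately without reading the file.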

|

model/smash_config.json

ADDED

|

@@ -0,0 +1,32 @@

| 1 |

+

{

|

| 2 |

+

"api_key": null,

|

| 3 |

+

"verify_url": "http://johnrachwan.pythonanywhere.com",

|

| 4 |

+

"smash_config": {

|

| 5 |

+

"pruners": "None",

|

| 6 |

+

"pruning_ratio": 0.0,

|

| 7 |

+

"factorizers": "None",

|

| 8 |

+

"quantizers": "['half']",

|

| 9 |

+

"n_quantization_bits": 32,

|

| 10 |

+

"output_deviation": 0.01,

|

| 11 |

+

"compilers": "['x-fast']",

|

| 12 |

+

"static_batch": true,

|

| 13 |

+

"static_shape": true,

|

| 14 |

+

"controlnet": "None",

|

| 15 |

+

"unet_dim": 4,

|

| 16 |

+

"device": "cuda",

|

| 17 |

+

"cache_dir": "/ceph/hdd/staff/charpent/.cache/models46txzlxr",

|

| 18 |

+

"batch_size": 1,

|

| 19 |

+

"model_name": "convnext_large.fb_in1k",

|

| 20 |

+

"max_batch_size": 1,

|

| 21 |

+

"qtype_weight": "torch.qint8",

|

| 22 |

+

"qtype_activation": "torch.quint8",

|

| 23 |

+

"qobserver": "<class 'torch.ao.quantization.observer.MinMaxObserver'>",

|

| 24 |

+

"qscheme": "torch.per_tensor_symmetric",

|

| 25 |

+

"qconfig": "x86",

|

| 26 |

+

"group_size": 128,

|

| 27 |

+

"damp_percent": 0.1,

|

| 28 |

+

"save_dir": ".models/optimized_model",

|

| 29 |

+

"fn_to_compile": "forward",

|

| 30 |

+

"save_load_fn": "x-fast"

|

| 31 |

+

}

|

| 32 |

+

}
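Several values in this config are stringified Python literals, for example `"['half']"` and `"None"`, rather than native JSON lists or nulls. A minimal sketch for reading the file into usable Python values is shown below. The helper names are hypothetical, and this is not necessarily how `pruna-engine` itself reads the file.

```python
import ast
import json


def decode_field(value):
    """Turn stringified Python literals such as "['half']" or "None" into real values."""
    if not isinstance(value, str):
        return value  # numbers and booleans are already native JSON types
    try:
        return ast.literal_eval(value)
    except (ValueError, SyntaxError):
        return value  # plain strings such as "cuda" or "x-fast" stay unchanged


def load_smash_config(path: str) -> dict:
    """Load the nested `smash_config` section and decode its stringified fields."""
    with open(path) as f:
        raw = json.load(f)
    return {key: decode_field(value) for key, value in raw["smash_config"].items()}
```

`ast.literal_eval` is used instead of `eval` because it only accepts literals, so a value in the config cannot execute arbitrary code.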

|

plots.png

ADDED

|