[Homepage] | [Paper] | [Github] | [Discord] | [Blog] | [Community Demo]

Welcome to the xLAM model family! Large Action Models (LAMs) are advanced large language models designed to enhance decision-making and translate user intentions into executable actions that interact with the world. LAMs autonomously plan and execute tasks to achieve specific goals, serving as the brains of AI agents. They have the potential to automate workflow processes across various domains, making them invaluable for a wide range of applications. The model release is exclusively for research purposes. A new and enhanced version of xLAM will soon be available exclusively to customers on our Platform.

Table of Contents

Model Series

We provide a series of xLAMs in different sizes to cater to various applications, including those optimized for function-calling and general agent applications:

| Model | # Total Params | Context Length | Release Date | Category | Download Model | Download GGUF files |

|---|---|---|---|---|---|---|

| xLAM-7b-r | 7.24B | 32k | Sep. 5, 2024 | General, Function-calling | 🤗 Link | -- |

| xLAM-8x7b-r | 46.7B | 32k | Sep. 5, 2024 | General, Function-calling | 🤗 Link | -- |

| xLAM-8x22b-r | 141B | 64k | Sep. 5, 2024 | General, Function-calling | 🤗 Link | -- |

| xLAM-1b-fc-r | 1.35B | 16k | July 17, 2024 | Function-calling | 🤗 Link | 🤗 Link |

| xLAM-7b-fc-r | 6.91B | 4k | July 17, 2024 | Function-calling | 🤗 Link | 🤗 Link |

| xLAM-v0.1-r | 46.7B | 32k | Mar. 18, 2024 | General, Function-calling | 🤗 Link | -- |

For our Function-calling series (more details are included at here), we also provide their quantized GGUF files for efficient deployment and execution. GGUF is a file format designed to efficiently store and load large language models, making GGUF ideal for running AI models on local devices with limited resources, enabling offline functionality and enhanced privacy.

For more details, check our GitHub and paper.

Check Latest Examples on Interaction with xLAM

Here is the latest examples and tokenizer on interacting with xLAM models.

Repository Overview

This repository is about the general tool use series. For more specialized function calling models, please take a look into our fc series here.

The instructions will guide you through the setup, usage, and integration of our model series with HuggingFace.

Framework Versions

- Transformers 4.41.0

- Pytorch 2.3.0+cu121

- Datasets 2.19.1

- Tokenizers 0.19.1

Usage

Basic Usage with Huggingface

To use the model from Huggingface, please first install the transformers library:

pip install transformers>=4.41.0

Please note that, our model works best with our provided prompt format. It allows us to extract JSON output that is similar to the function-calling mode of ChatGPT.

We use the following example to illustrate how to use our model for 1) single-turn use case, and 2) multi-turn use case

1. Single-turn use case

import json

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer

torch.random.manual_seed(0)

model_name = "Salesforce/xLAM-7b-r"

model = AutoModelForCausalLM.from_pretrained(model_name, device_map="auto", torch_dtype="auto", trust_remote_code=True)

tokenizer = AutoTokenizer.from_pretrained(model_name)

# Please use our provided instruction prompt for best performance

task_instruction = """

Based on the previous context and API request history, generate an API request or a response as an AI assistant.""".strip()

format_instruction = """

The output should be of the JSON format, which specifies a list of generated function calls. The example format is as follows, please make sure the parameter type is correct. If no function call is needed, please make

tool_calls an empty list "[]".

```

{"thought": "the thought process, or an empty string", "tool_calls": [{"name": "api_name1", "arguments": {"argument1": "value1", "argument2": "value2"}}]}

```

""".strip()

# Define the input query and available tools

query = "What's the weather like in New York in fahrenheit?"

get_weather_api = {

"name": "get_weather",

"description": "Get the current weather for a location",

"parameters": {

"type": "object",

"properties": {

"location": {

"type": "string",

"description": "The city and state, e.g. San Francisco, New York"

},

"unit": {

"type": "string",

"enum": ["celsius", "fahrenheit"],

"description": "The unit of temperature to return"

}

},

"required": ["location"]

}

}

search_api = {

"name": "search",

"description": "Search for information on the internet",

"parameters": {

"type": "object",

"properties": {

"query": {

"type": "string",

"description": "The search query, e.g. 'latest news on AI'"

}

},

"required": ["query"]

}

}

openai_format_tools = [get_weather_api, search_api]

# Helper function to convert openai format tools to our more concise xLAM format

def convert_to_xlam_tool(tools):

''''''

if isinstance(tools, dict):

return {

"name": tools["name"],

"description": tools["description"],

"parameters": {k: v for k, v in tools["parameters"].get("properties", {}).items()}

}

elif isinstance(tools, list):

return [convert_to_xlam_tool(tool) for tool in tools]

else:

return tools

def build_conversation_history_prompt(conversation_history: str):

parsed_history = []

for step_data in conversation_history:

parsed_history.append({

"step_id": step_data["step_id"],

"thought": step_data["thought"],

"tool_calls": step_data["tool_calls"],

"next_observation": step_data["next_observation"],

"user_input": step_data['user_input']

})

history_string = json.dumps(parsed_history)

return f"\n[BEGIN OF HISTORY STEPS]\n{history_string}\n[END OF HISTORY STEPS]\n"

# Helper function to build the input prompt for our model

def build_prompt(task_instruction: str, format_instruction: str, tools: list, query: str, conversation_history: list):

prompt = f"[BEGIN OF TASK INSTRUCTION]\n{task_instruction}\n[END OF TASK INSTRUCTION]\n\n"

prompt += f"[BEGIN OF AVAILABLE TOOLS]\n{json.dumps(xlam_format_tools)}\n[END OF AVAILABLE TOOLS]\n\n"

prompt += f"[BEGIN OF FORMAT INSTRUCTION]\n{format_instruction}\n[END OF FORMAT INSTRUCTION]\n\n"

prompt += f"[BEGIN OF QUERY]\n{query}\n[END OF QUERY]\n\n"

if len(conversation_history) > 0: prompt += build_conversation_history_prompt(conversation_history)

return prompt

# Build the input and start the inference

xlam_format_tools = convert_to_xlam_tool(openai_format_tools)

conversation_history = []

content = build_prompt(task_instruction, format_instruction, xlam_format_tools, query, conversation_history)

messages=[

{ 'role': 'user', 'content': content}

]

inputs = tokenizer.apply_chat_template(messages, add_generation_prompt=True, return_tensors="pt").to(model.device)

# tokenizer.eos_token_id is the id of <|EOT|> token

outputs = model.generate(inputs, max_new_tokens=512, do_sample=False, num_return_sequences=1, eos_token_id=tokenizer.eos_token_id)

agent_action = tokenizer.decode(outputs[0][len(inputs[0]):], skip_special_tokens=True)

Then you should be able to see the following output string in JSON format:

{"thought": "I need to get the current weather for New York in fahrenheit.", "tool_calls": [{"name": "get_weather", "arguments": {"location": "New York", "unit": "fahrenheit"}}]}

2. Multi-turn use case

We also support multi-turn interaction with our model series. Here is the example of next round of interaction from the above example:

def parse_agent_action(agent_action: str):

"""

Given an agent's action, parse it to add to conversation history

"""

try: parsed_agent_action_json = json.loads(agent_action)

except: return "", []

if "thought" not in parsed_agent_action_json.keys(): thought = ""

else: thought = parsed_agent_action_json["thought"]

if "tool_calls" not in parsed_agent_action_json.keys(): tool_calls = []

else: tool_calls = parsed_agent_action_json["tool_calls"]

return thought, tool_calls

def update_conversation_history(conversation_history: list, agent_action: str, environment_response: str, user_input: str):

"""

Update the conversation history list based on the new agent_action, environment_response, and/or user_input

"""

thought, tool_calls = parse_agent_action(agent_action)

new_step_data = {

"step_id": len(conversation_history) + 1,

"thought": thought,

"tool_calls": tool_calls,

"step_id": len(conversation_history),

"next_observation": environment_response,

"user_input": user_input,

}

conversation_history.append(new_step_data)

def get_environment_response(agent_action: str):

"""

Get the environment response for the agent_action

"""

# TODO: add custom implementation here

error_message, response_message = "", ""

return {"error": error_message, "response": response_message}

# ------------- before here are the steps to get agent_response from the example above ----------

# 1. get the next state after agent's response:

# The next 2 lines are examples of getting environment response and user_input.

# It is depended on particular usage, we can have either one or both of those.

environment_response = get_environment_response(agent_action)

user_input = "Now, search on the Internet for cute puppies"

# 2. after we got environment_response and (or) user_input, we want to add to our conversation history

update_conversation_history(conversation_history, agent_action, environment_response, user_input)

# 3. we now can build the prompt

content = build_prompt(task_instruction, format_instruction, xlam_format_tools, query, conversation_history)

# 4. Now, we just retrieve the inputs for the LLM

messages=[

{ 'role': 'user', 'content': content}

]

inputs = tokenizer.apply_chat_template(messages, add_generation_prompt=True, return_tensors="pt").to(model.device)

# 5. Generate the outputs & decode

# tokenizer.eos_token_id is the id of <|EOT|> token

outputs = model.generate(inputs, max_new_tokens=512, do_sample=False, num_return_sequences=1, eos_token_id=tokenizer.eos_token_id)

agent_action = tokenizer.decode(outputs[0][len(inputs[0]):], skip_special_tokens=True)

This would be the corresponding output:

{"thought": "I need to get the current weather for New York in fahrenheit.", "tool_calls": [{"name": "get_weather", "arguments": {"location": "New York", "unit": "fahrenheit"}}]}

We highly recommend to use our provided prompt format and helper functions to yield the best function-calling performance of our model.

Example multi-turn prompt and output

Prompt:

[BEGIN OF TASK INSTRUCTION]

Based on the previous context and API request history, generate an API request or a response as an AI assistant.

[END OF TASK INSTRUCTION]

[BEGIN OF AVAILABLE TOOLS]

[

{

"name": "get_fire_info",

"description": "Query the latest wildfire information",

"parameters": {

"location": {

"type": "string",

"description": "Location of the wildfire, for example: 'California'",

"required": true,

"format": "free"

},

"radius": {

"type": "number",

"description": "The radius (in miles) around the location where the wildfire is occurring, for example: 10",

"required": false,

"format": "free"

}

}

},

{

"name": "get_hurricane_info",

"description": "Query the latest hurricane information",

"parameters": {

"name": {

"type": "string",

"description": "Name of the hurricane, for example: 'Irma'",

"required": true,

"format": "free"

}

}

},

{

"name": "get_earthquake_info",

"description": "Query the latest earthquake information",

"parameters": {

"magnitude": {

"type": "number",

"description": "The minimum magnitude of the earthquake that needs to be queried.",

"required": false,

"format": "free"

},

"location": {

"type": "string",

"description": "Location of the earthquake, for example: 'California'",

"required": false,

"format": "free"

}

}

}

]

[END OF AVAILABLE TOOLS]

[BEGIN OF FORMAT INSTRUCTION]

Your output should be in the JSON format, which specifies a list of function calls. The example format is as follows. Please make sure the parameter type is correct. If no function call is needed, please make tool_calls an empty list '[]'.

```{"thought": "the thought process, or an empty string", "tool_calls": [{"name": "api_name1", "arguments": {"argument1": "value1", "argument2": "value2"}}]}```

[END OF FORMAT INSTRUCTION]

[BEGIN OF QUERY]

User: Can you give me the latest information on the wildfires occurring in California?

[END OF QUERY]

[BEGIN OF HISTORY STEPS]

[

{

"thought": "Sure, what is the radius (in miles) around the location of the wildfire?",

"tool_calls": [],

"step_id": 1,

"next_observation": "",

"user_input": "User: Let me think... 50 miles."

},

{

"thought": "",

"tool_calls": [

{

"name": "get_fire_info",

"arguments": {

"location": "California",

"radius": 50

}

}

],

"step_id": 2,

"next_observation": [

{

"location": "Los Angeles",

"acres_burned": 1500,

"status": "contained"

},

{

"location": "San Diego",

"acres_burned": 12000,

"status": "active"

}

]

},

{

"thought": "Based on the latest information, there are wildfires in Los Angeles and San Diego. The wildfire in Los Angeles has burned 1,500 acres and is contained, while the wildfire in San Diego has burned 12,000 acres and is still active.",

"tool_calls": [],

"step_id": 3,

"next_observation": "",

"user_input": "User: Can you tell me about the latest earthquake?"

}

]

[END OF HISTORY STEPS]

Output:

{"thought": "", "tool_calls": [{"name": "get_earthquake_info", "arguments": {"location": "California"}}]}

Benchmark Results

Note: Bold and Underline results denote the best result and the second best result for Success Rate, respectively.

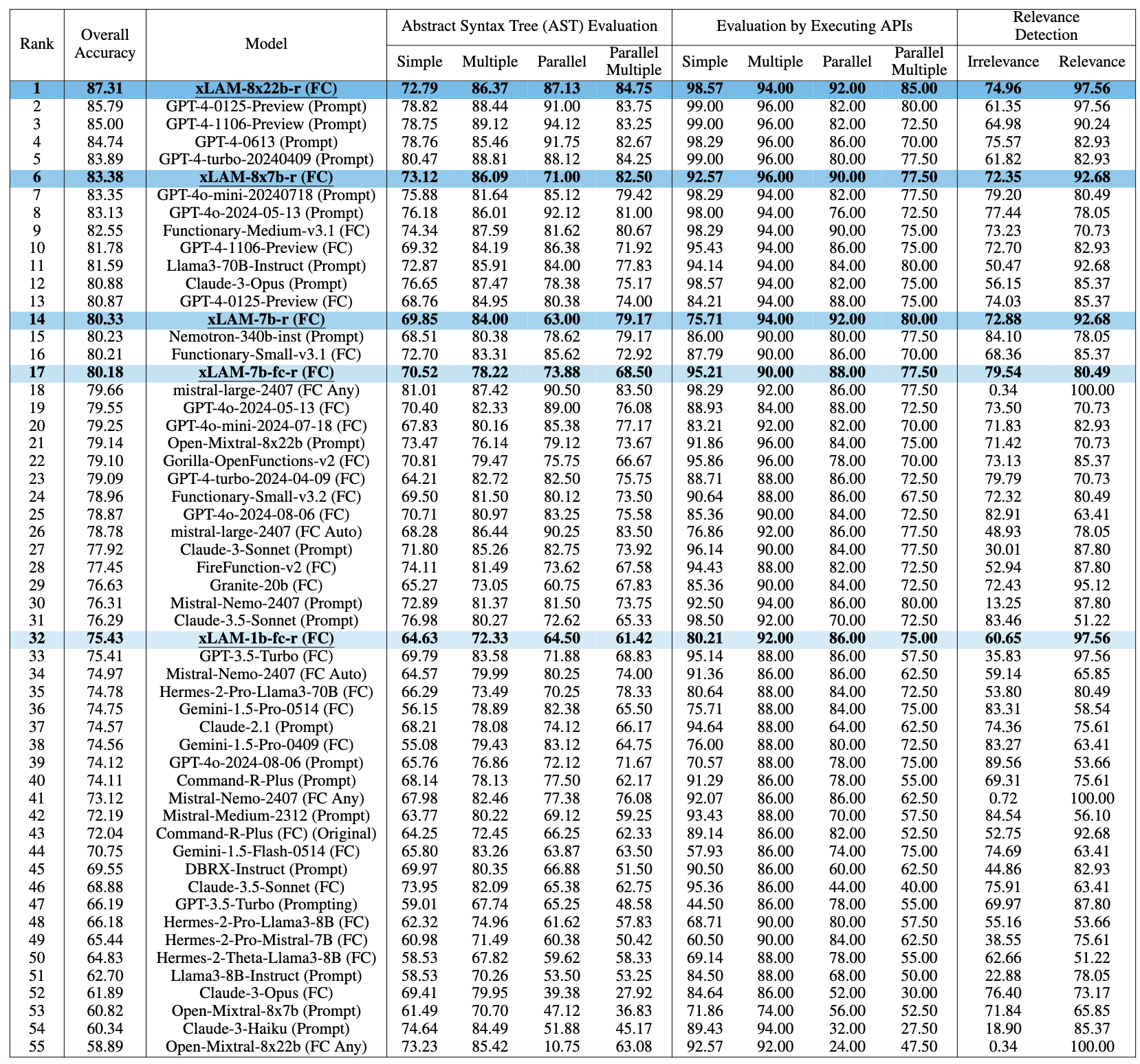

Berkeley Function-Calling Leaderboard (BFCL)

Table 1: Performance comparison on BFCL-v2 leaderboard (cutoff date 09/03/2024). The rank is based on the overall accuracy, which is a weighted average of different evaluation categories. "FC" stands for function-calling mode in contrast to using a customized "prompt" to extract the function calls.

Table 1: Performance comparison on BFCL-v2 leaderboard (cutoff date 09/03/2024). The rank is based on the overall accuracy, which is a weighted average of different evaluation categories. "FC" stands for function-calling mode in contrast to using a customized "prompt" to extract the function calls.

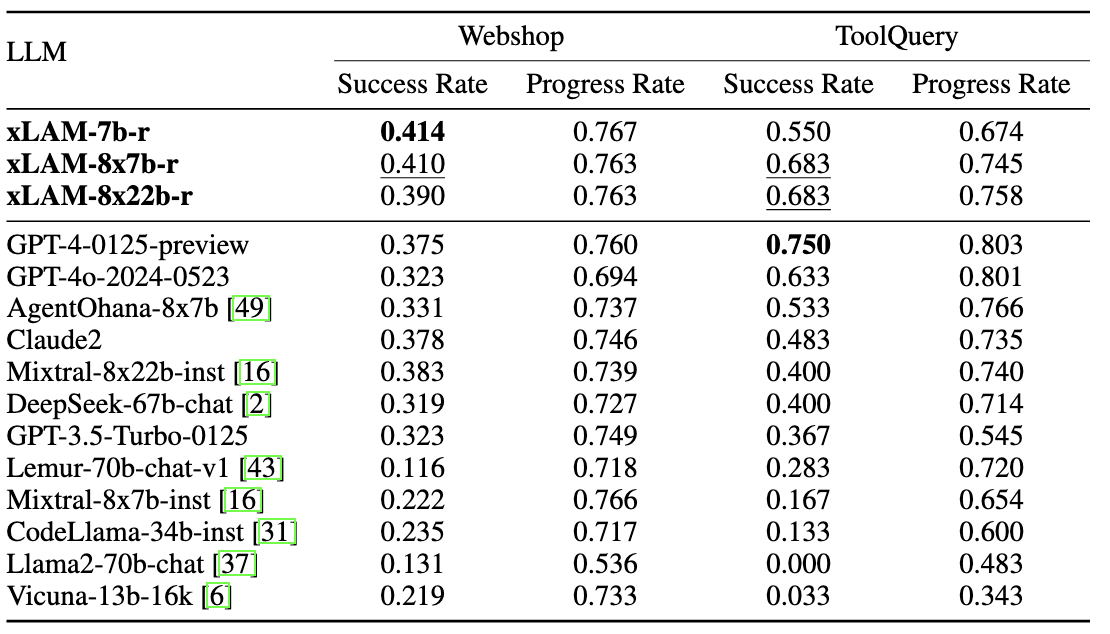

Webshop and ToolQuery

Table 2: Testing results on Webshop and ToolQuery. Bold and Underline results denote the best result and the second best result for Success Rate, respectively.

Table 2: Testing results on Webshop and ToolQuery. Bold and Underline results denote the best result and the second best result for Success Rate, respectively.

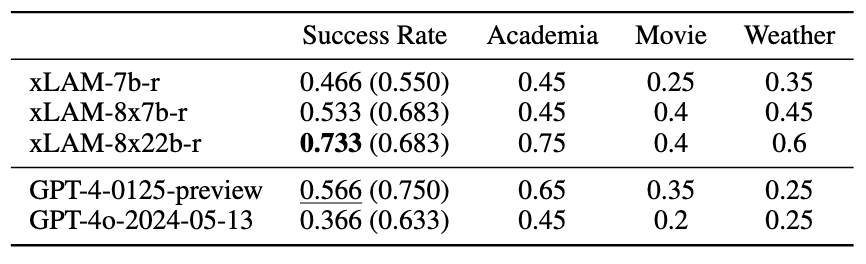

Unified ToolQuery

Table 3: Testing results on ToolQuery-Unified. Bold and Underline results denote the best result and the second best result for Success Rate, respectively. Values in brackets indicate corresponding performance on ToolQuery

Table 3: Testing results on ToolQuery-Unified. Bold and Underline results denote the best result and the second best result for Success Rate, respectively. Values in brackets indicate corresponding performance on ToolQuery

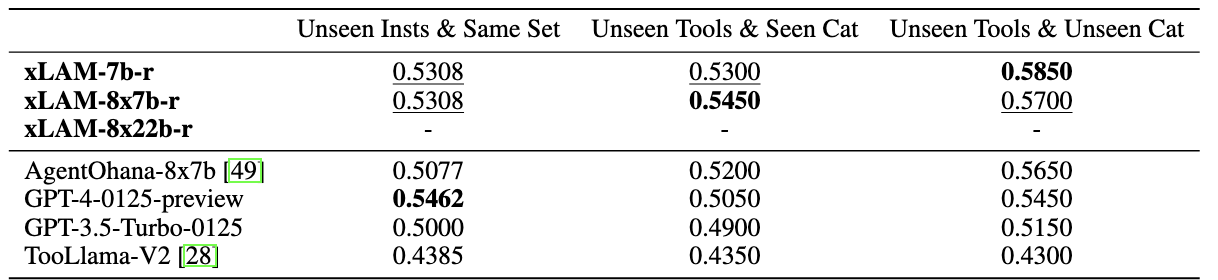

ToolBench

Table 4: Pass Rate on ToolBench on three distinct scenarios. Bold and Underline results denote the best result and the second best result for each setting, respectively. The results for xLAM-8x22b-r are unavailable due to the ToolBench server being down between 07/28/2024 and our evaluation cutoff date 09/03/2024.

Table 4: Pass Rate on ToolBench on three distinct scenarios. Bold and Underline results denote the best result and the second best result for each setting, respectively. The results for xLAM-8x22b-r are unavailable due to the ToolBench server being down between 07/28/2024 and our evaluation cutoff date 09/03/2024.

License

The model is distributed under the CC-BY-NC-4.0 license.

Ethical Considerations

This release is for research purposes only in support of an academic paper. Our models, datasets, and code are not specifically designed or evaluated for all downstream purposes. We strongly recommend users evaluate and address potential concerns related to accuracy, safety, and fairness before deploying this model. We encourage users to consider the common limitations of AI, comply with applicable laws, and leverage best practices when selecting use cases, particularly for high-risk scenarios where errors or misuse could significantly impact people’s lives, rights, or safety. For further guidance on use cases, refer to our AUP and AI AUP.

Citation

If you find this repo helpful, please consider to cite our papers:

@article{zhang2024xlam,

title={xLAM: A Family of Large Action Models to Empower AI Agent Systems},

author={Zhang, Jianguo and Lan, Tian and Zhu, Ming and Liu, Zuxin and Hoang, Thai and Kokane, Shirley and Yao, Weiran and Tan, Juntao and Prabhakar, Akshara and Chen, Haolin and others},

journal={arXiv preprint arXiv:2409.03215},

year={2024}

}

@article{liu2024apigen,

title={Apigen: Automated pipeline for generating verifiable and diverse function-calling datasets},

author={Liu, Zuxin and Hoang, Thai and Zhang, Jianguo and Zhu, Ming and Lan, Tian and Kokane, Shirley and Tan, Juntao and Yao, Weiran and Liu, Zhiwei and Feng, Yihao and others},

journal={arXiv preprint arXiv:2406.18518},

year={2024}

}

@article{zhang2024agentohana,

title={AgentOhana: Design Unified Data and Training Pipeline for Effective Agent Learning},

author={Zhang, Jianguo and Lan, Tian and Murthy, Rithesh and Liu, Zhiwei and Yao, Weiran and Tan, Juntao and Hoang, Thai and Yang, Liangwei and Feng, Yihao and Liu, Zuxin and others},

journal={arXiv preprint arXiv:2402.15506},

year={2024}

}

- Downloads last month

- 327