metadata

license: cc-by-nc-4.0

base_model: athirdpath/BigMistral-11b

tags:

- generated_from_trainer

model-index:

- name: qlora

results: []

language:

- en

pipeline_tag: text-generation

MAJOR regret: Should have not targeted Q, V, K, O; as those are less impactful for "healing" but more impactful on performance otherwise.

qlora

This model is a fine-tuned version of athirdpath/BigMistral-11b on the athirdpath/Merge_Glue dataset. It achieves the following results on the evaluation set:

- Loss: 0.9174

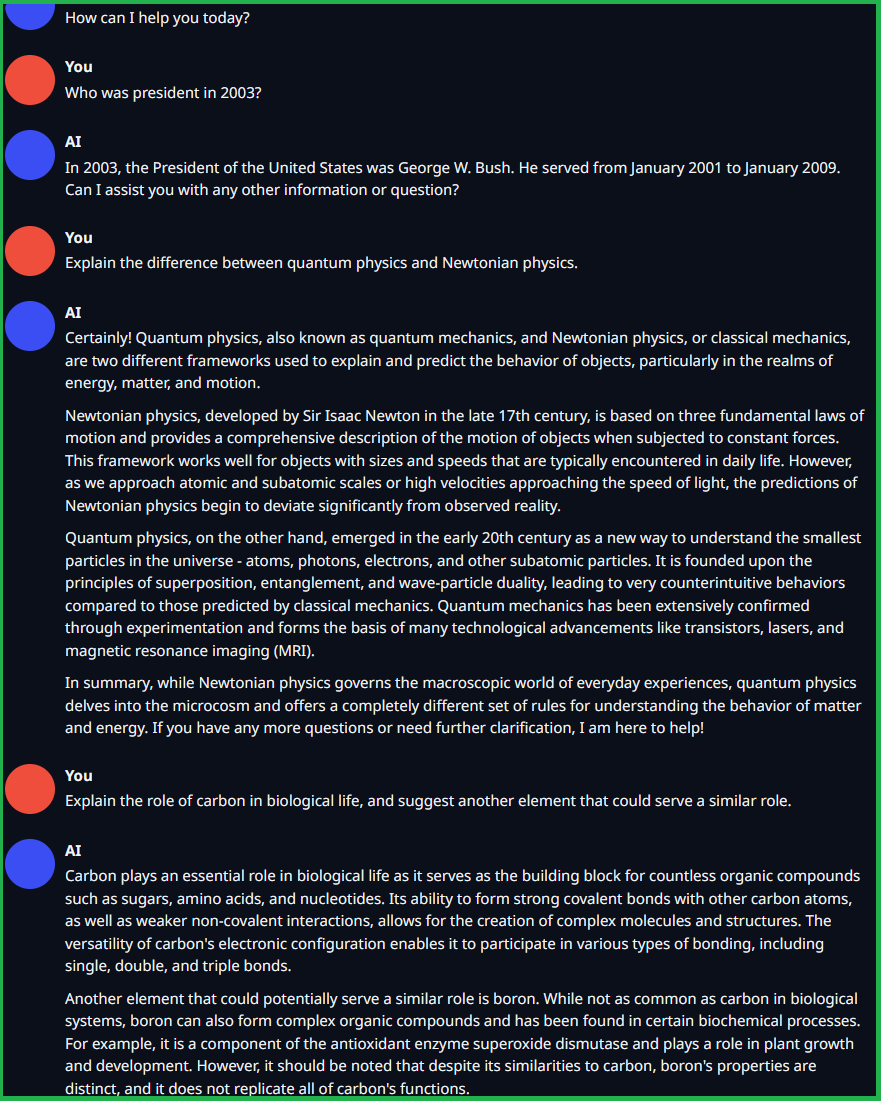

Before and After Example

Example model is athirdpath/CleverMage-11b

Examples with LoRA (min_p, alpaca)

Examples without LoRA (min_p, chatML)

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0005

- train_batch_size: 10

- eval_batch_size: 10

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 40

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 10

- num_epochs: 3

Training results

| Training Loss | Epoch | Step | Validation Loss |

|---|---|---|---|

| 1.2198 | 0.63 | 30 | 0.9055 |

| 1.1206 | 1.26 | 60 | 0.8951 |

| 1.1319 | 1.89 | 90 | 0.8904 |

| 1.0031 | 2.51 | 120 | 0.9174 |

Framework versions

- Transformers 4.35.2

- Pytorch 2.0.1+cu118

- Datasets 2.15.0

- Tokenizers 0.15.0