---
license: apache-2.0
datasets:
- augmxnt/ultra-orca-boros-en-ja-v1
language:
- ja
- en
---

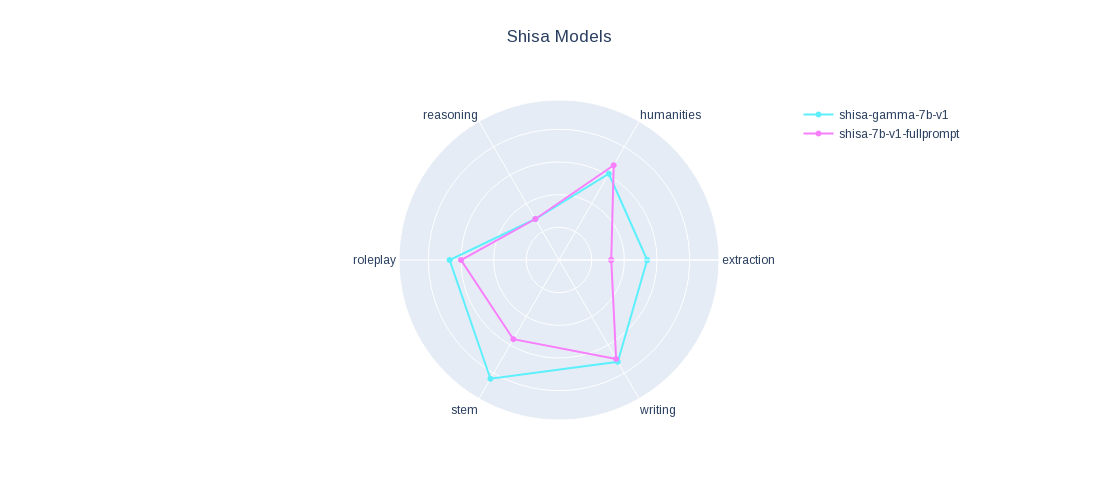

# shisa-gamma-7b-v1

For more information, see our main Shisa 7B model.

We applied a version of our fine-tuning dataset to Japanese Stable LM Base Gamma 7B, and it performed pretty well. We are sharing the result since it might be of interest.

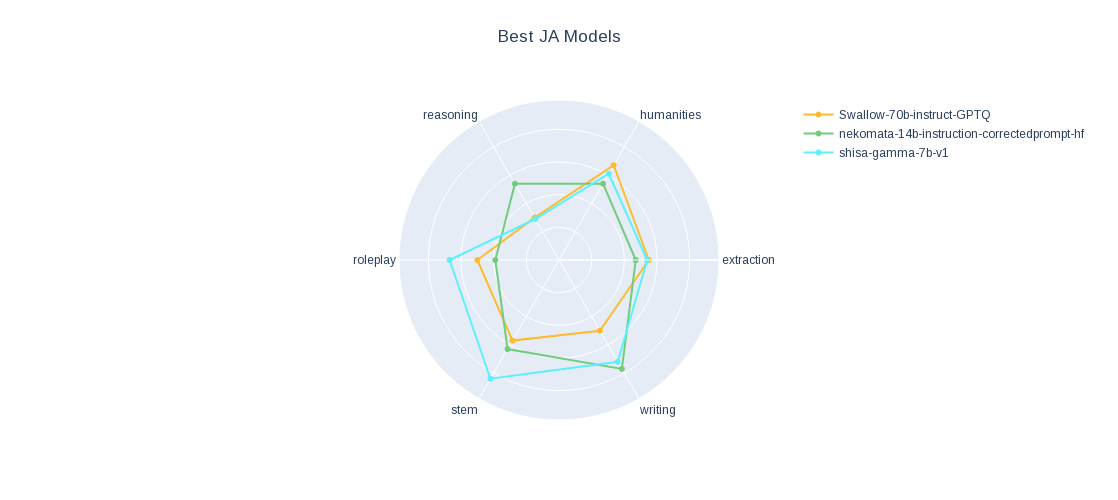

Check out our JA MT-Bench results.