language:

- en

- zh

license: other

tasks:

- text-generation

Baichuan 2 13B Chat - Int4

描述

该repo包含Baichuan 2 7B Chat的Int4 GPTQ模型文件。

GPTQ参数

该GPTQ文件都是用AutoGPTQ生成的。

- Bits: 4/8

- GS: 32/128

- Act Order: True

- Damp %: 0.1

- GPTQ dataset: 中文、英文混合数据集

- Sequence Length: 4096

模型版本 agieval ceval cmmlu size 推理速度(A100-40G) Baichuan2-13B-Chat 40.25 56.33 58.44 27.79g 31.55 tokens/s Baichuan2-13B-Chat-4bits ~ ~ ~ 9.08g 18.45 tokens/s GPTQ-4bit-32g 38.64 57.18 57.47 9.87g 27.35(hf) \ 38.28(autogptq) tokens/s GPTQ-4bit-128g 38.78 56.42 57.78 9.14g 28.74(hf) \ 39.24(autogptq) tokens/s

如何在Python代码中使用此GPTQ模型

安装必要的依赖

必须: Transformers 4.32.0以上、Optimum 1.12.0以上、AutoGPTQ 0.4.2以上

pip3 install transformers>=4.32.0 optimum>=1.12.0

pip3 install auto-gptq --extra-index-url https://huggingface.github.io/autogptq-index/whl/cu118/ # Use cu117 if on CUDA 11.7

如果您在使用预构建的pip包安装AutoGPTQ时遇到问题,请改为从源代码安装:

pip3 uninstall -y auto-gptq

git clone https://github.com/PanQiWei/AutoGPTQ

cd AutoGPTQ

pip3 install .

然后可以使用以下代码

from transformers import AutoModelForCausalLM, AutoTokenizer

from transformers.generation.utils import GenerationConfig

model_name_or_path = "csdc-atl/Baichuan2-13B-Chat-Int4"

model = AutoModelForCausalLM.from_pretrained(model_name_or_path,

torch_dtype=torch.float16,

device_map="auto",

trust_remote_code=True)

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, use_fast=True, trust_remote_code=True)

model.generation_config = GenerationConfig.from_pretrained("baichuan-inc/Baichuan2-7B-Chat")

messages = []

messages.append({"role": "user", "content": "解释一下“温故而知新”"})

response = model.chat(tokenizer, messages)

print(response)

"温故而知新"是一句中国古代的成语,出自《论语·为政》篇。这句话的意思是:通过回顾过去,我们可以发现新的知识和理解。换句话说,学习历史和经验可以让我们更好地理解现在和未来。

这句话鼓励我们在学习和生活中不断地回顾和反思过去的经验,从而获得新的启示和成长。通过重温旧的知识和经历,我们可以发现新的观点和理解,从而更好地应对不断变化的世界和挑战。

Baichuan 2

目录/Table of Contents

- 📖 模型介绍/Introduction

- ⚙️ 快速开始/Quick Start

- 📊 Benchmark评估/Benchmark Evaluation

- 📜 声明与协议/Terms and Conditions

模型介绍/Introduction

Baichuan 2 是百川智能推出的新一代开源大语言模型,采用 2.6 万亿 Tokens 的高质量语料训练,在权威的中文和英文 benchmark 上均取得同尺寸最好的效果。本次发布包含有 7B、13B 的 Base 和 Chat 版本,并提供了 Chat 版本的 4bits 量化,所有版本不仅对学术研究完全开放,开发者也仅需邮件申请并获得官方商用许可后,即可以免费商用。具体发布版本和下载见下表:

Baichuan 2 is the new generation of large-scale open-source language models launched by Baichuan Intelligence inc.. It is trained on a high-quality corpus with 2.6 trillion tokens and has achieved the best performance in authoritative Chinese and English benchmarks of the same size. This release includes 7B and 13B versions for both Base and Chat models, along with a 4bits quantized version for the Chat model. All versions are fully open to academic research, and developers can also use them for free in commercial applications after obtaining an official commercial license through email request. The specific release versions and download links are listed in the table below:

| Base Model | Chat Model | 4bits Quantized Chat Model | |

|---|---|---|---|

| 7B | Baichuan2-7B-Base | Baichuan2-7B-Chat | Baichuan2-7B-Chat-4bits |

| 13B | Baichuan2-13B-Base | Baichuan2-13B-Chat | Baichuan2-13B-Chat-4bits |

快速开始/Quick Start

在Baichuan2系列模型中,我们为了加快推理速度使用了Pytorch2.0加入的新功能F.scaled_dot_product_attention,因此模型需要在Pytorch2.0环境下运行。

In the Baichuan 2 series models, we have utilized the new feature F.scaled_dot_product_attention introduced in PyTorch 2.0 to accelerate inference speed. Therefore, the model needs to be run in a PyTorch 2.0 environment.

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer

from transformers.generation.utils import GenerationConfig

tokenizer = AutoTokenizer.from_pretrained("baichuan-inc/Baichuan2-13B-Chat", use_fast=False, trust_remote_code=True)

model = AutoModelForCausalLM.from_pretrained("baichuan-inc/Baichuan2-13B-Chat", device_map="auto", torch_dtype=torch.bfloat16, trust_remote_code=True)

model.generation_config = GenerationConfig.from_pretrained("baichuan-inc/Baichuan2-13B-Chat")

messages = []

messages.append({"role": "user", "content": "解释一下“温故而知新”"})

response = model.chat(tokenizer, messages)

print(response)

"温故而知新"是一句中国古代的成语,出自《论语·为政》篇。这句话的意思是:通过回顾过去,我们可以发现新的知识和理解。换句话说,学习历史和经验可以让我们更好地理解现在和未来。

这句话鼓励我们在学习和生活中不断地回顾和反思过去的经验,从而获得新的启示和成长。通过重温旧的知识和经历,我们可以发现新的观点和理解,从而更好地应对不断变化的世界和挑战。

Benchmark 结果/Benchmark Evaluation

我们在通用、法律、医疗、数学、代码和多语言翻译六个领域的中英文权威数据集上对模型进行了广泛测试,更多详细测评结果可查看GitHub。

We have extensively tested the model on authoritative Chinese-English datasets across six domains: General, Legal, Medical, Mathematics, Code, and Multilingual Translation. For more detailed evaluation results, please refer to GitHub.

7B Model Results

| C-Eval | MMLU | CMMLU | Gaokao | AGIEval | BBH | |

|---|---|---|---|---|---|---|

| 5-shot | 5-shot | 5-shot | 5-shot | 5-shot | 3-shot | |

| GPT-4 | 68.40 | 83.93 | 70.33 | 66.15 | 63.27 | 75.12 |

| GPT-3.5 Turbo | 51.10 | 68.54 | 54.06 | 47.07 | 46.13 | 61.59 |

| LLaMA-7B | 27.10 | 35.10 | 26.75 | 27.81 | 28.17 | 32.38 |

| LLaMA2-7B | 28.90 | 45.73 | 31.38 | 25.97 | 26.53 | 39.16 |

| MPT-7B | 27.15 | 27.93 | 26.00 | 26.54 | 24.83 | 35.20 |

| Falcon-7B | 24.23 | 26.03 | 25.66 | 24.24 | 24.10 | 28.77 |

| ChatGLM2-6B | 50.20 | 45.90 | 49.00 | 49.44 | 45.28 | 31.65 |

| Baichuan-7B | 42.80 | 42.30 | 44.02 | 36.34 | 34.44 | 32.48 |

| Baichuan2-7B-Base | 54.00 | 54.16 | 57.07 | 47.47 | 42.73 | 41.56 |

13B Model Results

| C-Eval | MMLU | CMMLU | Gaokao | AGIEval | BBH | |

|---|---|---|---|---|---|---|

| 5-shot | 5-shot | 5-shot | 5-shot | 5-shot | 3-shot | |

| GPT-4 | 68.40 | 83.93 | 70.33 | 66.15 | 63.27 | 75.12 |

| GPT-3.5 Turbo | 51.10 | 68.54 | 54.06 | 47.07 | 46.13 | 61.59 |

| LLaMA-13B | 28.50 | 46.30 | 31.15 | 28.23 | 28.22 | 37.89 |

| LLaMA2-13B | 35.80 | 55.09 | 37.99 | 30.83 | 32.29 | 46.98 |

| Vicuna-13B | 32.80 | 52.00 | 36.28 | 30.11 | 31.55 | 43.04 |

| Chinese-Alpaca-Plus-13B | 38.80 | 43.90 | 33.43 | 34.78 | 35.46 | 28.94 |

| XVERSE-13B | 53.70 | 55.21 | 58.44 | 44.69 | 42.54 | 38.06 |

| Baichuan-13B-Base | 52.40 | 51.60 | 55.30 | 49.69 | 43.20 | 43.01 |

| Baichuan2-13B-Base | 58.10 | 59.17 | 61.97 | 54.33 | 48.17 | 48.78 |

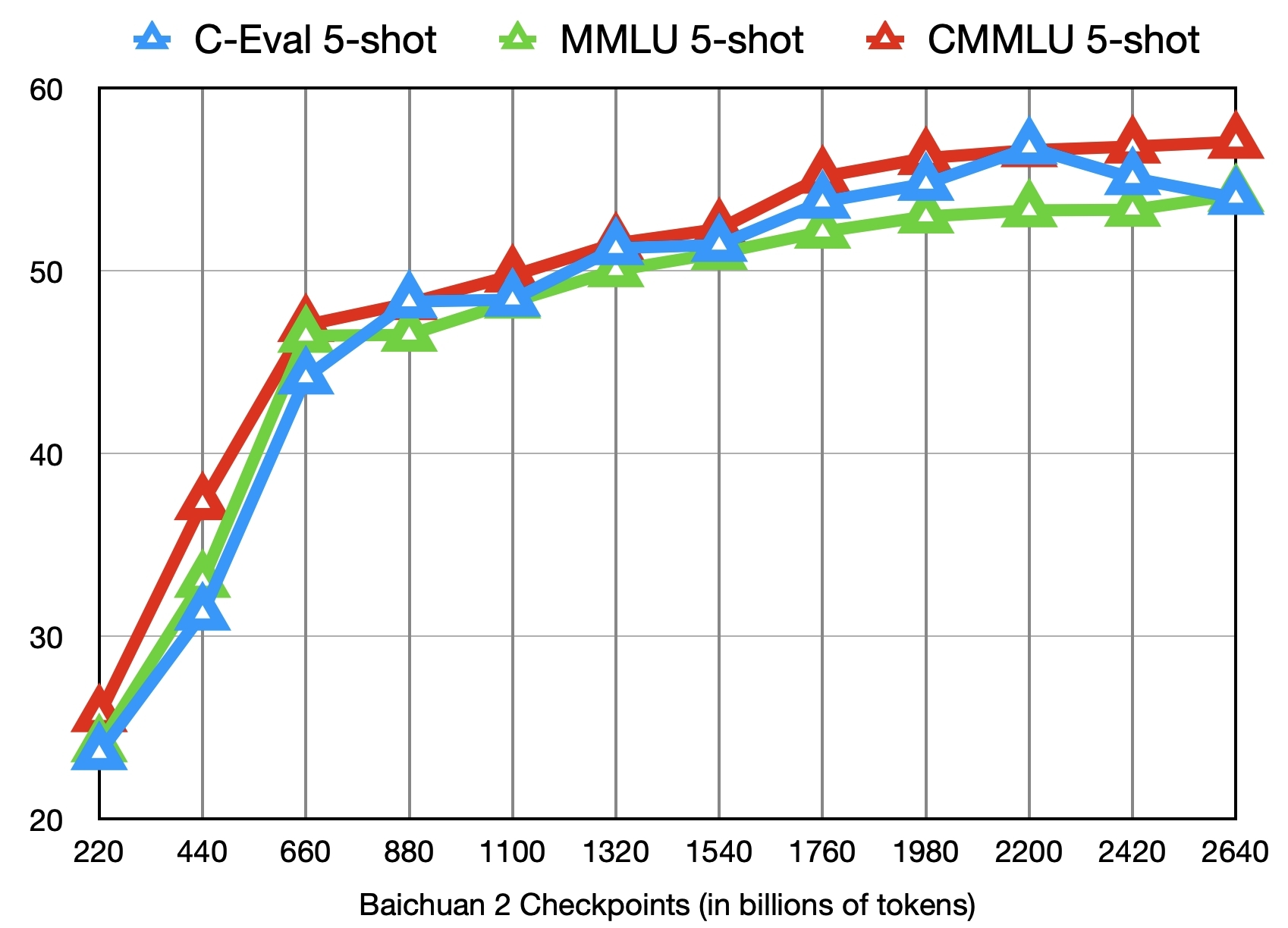

训练过程模型/Training Dynamics

除了训练了 2.6 万亿 Tokens 的 Baichuan2-7B-Base 模型,我们还提供了在此之前的另外 11 个中间过程的模型(分别对应训练了约 0.2 ~ 2.4 万亿 Tokens)供社区研究使用 (训练过程checkpoint下载)。下图给出了这些 checkpoints 在 C-Eval、MMLU、CMMLU 三个 benchmark 上的效果变化:

In addition to the Baichuan2-7B-Base model trained on 2.6 trillion tokens, we also offer 11 additional intermediate-stage models for community research, corresponding to training on approximately 0.2 to 2.4 trillion tokens each (Intermediate Checkpoints Download). The graph below shows the performance changes of these checkpoints on three benchmarks: C-Eval, MMLU, and CMMLU.

声明与协议/Terms and Conditions

声明

我们在此声明,我们的开发团队并未基于 Baichuan 2 模型开发任何应用,无论是在 iOS、Android、网页或任何其他平台。我们强烈呼吁所有使用者,不要利用 Baichuan 2 模型进行任何危害国家社会安全或违法的活动。另外,我们也要求使用者不要将 Baichuan 2 模型用于未经适当安全审查和备案的互联网服务。我们希望所有的使用者都能遵守这个原则,确保科技的发展能在规范和合法的环境下进行。

我们已经尽我们所能,来确保模型训练过程中使用的数据的合规性。然而,尽管我们已经做出了巨大的努力,但由于模型和数据的复杂性,仍有可能存在一些无法预见的问题。因此,如果由于使用 Baichuan 2 开源模型而导致的任何问题,包括但不限于数据安全问题、公共舆论风险,或模型被误导、滥用、传播或不当利用所带来的任何风险和问题,我们将不承担任何责任。

We hereby declare that our team has not developed any applications based on Baichuan 2 models, not on iOS, Android, the web, or any other platform. We strongly call on all users not to use Baichuan 2 models for any activities that harm national / social security or violate the law. Also, we ask users not to use Baichuan 2 models for Internet services that have not undergone appropriate security reviews and filings. We hope that all users can abide by this principle and ensure that the development of technology proceeds in a regulated and legal environment.

We have done our best to ensure the compliance of the data used in the model training process. However, despite our considerable efforts, there may still be some unforeseeable issues due to the complexity of the model and data. Therefore, if any problems arise due to the use of Baichuan 2 open-source models, including but not limited to data security issues, public opinion risks, or any risks and problems brought about by the model being misled, abused, spread or improperly exploited, we will not assume any responsibility.

协议

Baichuan 2 模型的社区使用需遵循《Baichuan 2 模型社区许可协议》。Baichuan 2 支持商用。如果将 Baichuan 2 模型或其衍生品用作商业用途,请您按照如下方式联系许可方,以进行登记并向许可方申请书面授权:联系邮箱 [email protected]。

The use of the source code in this repository follows the open-source license Apache 2.0. Community use of the Baichuan 2 model must adhere to the Community License for Baichuan 2 Model. Baichuan 2 supports commercial use. If you are using the Baichuan 2 models or their derivatives for commercial purposes, please contact the licensor in the following manner for registration and to apply for written authorization: Email [email protected].