| pipeline_tag | library_name | text | metadata | id | last_modified | tags | sha | created_at |

|---|---|---|---|---|---|---|---|---|

null | peft | ## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16
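For readers who want to reproduce this setup, the listed values can be written as keyword arguments for `transformers.BitsAndBytesConfig`. This is a minimal sketch, not the author's training script: the helper names `bnb_config_kwargs` and `make_bnb_config` are hypothetical, and passing the compute dtype as the string `"bfloat16"` assumes a `transformers` version that resolves dtype names from strings.

```python
def bnb_config_kwargs():
    """Keyword arguments mirroring the quantization config listed above."""
    return {
        "load_in_8bit": False,
        "load_in_4bit": True,
        "llm_int8_threshold": 6.0,
        "llm_int8_skip_modules": None,
        "llm_int8_enable_fp32_cpu_offload": False,
        "llm_int8_has_fp16_weight": False,
        "bnb_4bit_quant_type": "nf4",
        "bnb_4bit_use_double_quant": True,
        "bnb_4bit_compute_dtype": "bfloat16",
    }


def make_bnb_config():
    # Imported lazily so the kwargs can be inspected without transformers installed.
    from transformers import BitsAndBytesConfig

    return BitsAndBytesConfig(**bnb_config_kwargs())
```

The object returned by `make_bnb_config()` would typically be passed as `quantization_config=` when loading the base model.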

### Framework versions

- PEFT 0.4.0

| {"library_name": "peft"} | chakkakrishna/llamareqa | null | [

"peft",

"safetensors",

"llama",

"region:us"

]

| null | 2024-04-25T18:07:59+00:00 |

text-generation | transformers |

# Uploaded model

- **Developed by:** 1024m

- **License:** apache-2.0

- **Finetuned from model :** unsloth/mistral-7b-bnb-4bit

This mistral model was trained 2x faster with [Unsloth](https://github.com/unslothai/unsloth) and Huggingface's TRL library.

[<img src="https://raw.githubusercontent.com/unslothai/unsloth/main/images/unsloth%20made%20with%20love.png" width="200"/>](https://github.com/unslothai/unsloth)

| {"language": ["en"], "license": "apache-2.0", "tags": ["text-generation-inference", "transformers", "unsloth", "mistral", "trl", "sft"], "base_model": "unsloth/mistral-7b-bnb-4bit"} | 1024m/MISTRAL7B-01-EXALT1A-16bit | null | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"text-generation-inference",

"unsloth",

"trl",

"sft",

"en",

"base_model:unsloth/mistral-7b-bnb-4bit",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2024-04-25T18:08:16+00:00 |

null | null | https://civitai.com/models/417259/alisa-mikhailovna-kujou-ayra-san-or-my-deskmate-alya-sometimes-hides-her-feelings-in-russian-or-tokidoki-bosotto-roshia-go-de-dereru-tonari-no-arya-san | {"license": "creativeml-openrail-m"} | LarryAIDraw/Arya-06 | null | [

"license:creativeml-openrail-m",

"region:us"

]

| null | 2024-04-25T18:09:37+00:00 |

null | null | {} | Umbrosov/Orihime | null | [

"region:us"

]

| null | 2024-04-25T18:09:56+00:00 |

|

text-generation | null |

# Mistral 7B Instruct v0.2 - GGUF

- Model creator: [Mistral AI_](https://huggingface.co/mistralai)

- Original model: [Mistral 7B Instruct v0.2](https://huggingface.co/mistralai/Mistral-7B-Instruct-v0.2)

<!-- description start -->

## Description

This repo contains GGUF format model files for [Mistral AI_'s Mistral 7B Instruct v0.2](https://huggingface.co/mistralai/Mistral-7B-Instruct-v0.2).

<!-- description end -->

<!-- README_GGUF.md-about-gguf start -->

### About GGUF

GGUF is a new format introduced by the llama.cpp team on August 21st 2023. It is a replacement for GGML, which is no longer supported by llama.cpp.

Here is an incomplete list of clients and libraries that are known to support GGUF:

* [llama.cpp](https://github.com/ggerganov/llama.cpp). The source project for GGUF. Offers a CLI and a server option.

* [text-generation-webui](https://github.com/oobabooga/text-generation-webui), the most widely used web UI, with many features and powerful extensions. Supports GPU acceleration.

* [KoboldCpp](https://github.com/LostRuins/koboldcpp), a fully featured web UI, with GPU accel across all platforms and GPU architectures. Especially good for story telling.

* [GPT4All](https://gpt4all.io/index.html), a free and open source local running GUI, supporting Windows, Linux and macOS with full GPU accel.

* [LM Studio](https://lmstudio.ai/), an easy-to-use and powerful local GUI for Windows and macOS (Silicon), with GPU acceleration. Linux available, in beta as of 27/11/2023.

* [LoLLMS Web UI](https://github.com/ParisNeo/lollms-webui), a great web UI with many interesting and unique features, including a full model library for easy model selection.

* [Faraday.dev](https://faraday.dev/), an attractive and easy to use character-based chat GUI for Windows and macOS (both Silicon and Intel), with GPU acceleration.

* [llama-cpp-python](https://github.com/abetlen/llama-cpp-python), a Python library with GPU accel, LangChain support, and OpenAI-compatible API server.

* [candle](https://github.com/huggingface/candle), a Rust ML framework with a focus on performance, including GPU support, and ease of use.

* [ctransformers](https://github.com/marella/ctransformers), a Python library with GPU accel, LangChain support, and OpenAI-compatible API server. Note, as of time of writing (November 27th 2023), ctransformers has not been updated in a long time and does not support many recent models.

<!-- README_GGUF.md-about-gguf end -->

<!-- prompt-template start -->

## Prompt template: Mistral

```

<s>[INST] {prompt} [/INST]

```
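As a small illustration, the template can be filled in from Python before being passed to a client. The names `MISTRAL_TEMPLATE` and `fill_template` are hypothetical helpers, not part of any library.

```python
MISTRAL_TEMPLATE = "<s>[INST] {prompt} [/INST]"


def fill_template(user_prompt: str) -> str:
    # str.replace (rather than str.format) leaves any braces in the user text untouched.
    return MISTRAL_TEMPLATE.replace("{prompt}", user_prompt.strip())


print(fill_template("Name three clients that support GGUF."))
```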

<!-- prompt-template end -->

<!-- README_GGUF.md-provided-files start -->

## Provided files

| Name | Quant method | Bits | Size | Max RAM required | Use case |

| ---- | ---- | ---- | ---- | ---- | ----- |

| [mistral-7b-instruct-v0.2.Q2_K.gguf](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF/blob/main/mistral-7b-instruct-v0.2.Q2_K.gguf) | Q2_K | 2 | 3.08 GB| 5.58 GB | smallest, significant quality loss - not recommended for most purposes |

| [mistral-7b-instruct-v0.2.Q3_K_S.gguf](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF/blob/main/mistral-7b-instruct-v0.2.Q3_K_S.gguf) | Q3_K_S | 3 | 3.16 GB| 5.66 GB | very small, high quality loss |

| [mistral-7b-instruct-v0.2.Q3_K_M.gguf](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF/blob/main/mistral-7b-instruct-v0.2.Q3_K_M.gguf) | Q3_K_M | 3 | 3.52 GB| 6.02 GB | very small, high quality loss |

| [mistral-7b-instruct-v0.2.Q3_K_L.gguf](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF/blob/main/mistral-7b-instruct-v0.2.Q3_K_L.gguf) | Q3_K_L | 3 | 3.82 GB| 6.32 GB | small, substantial quality loss |

| [mistral-7b-instruct-v0.2.Q4_0.gguf](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF/blob/main/mistral-7b-instruct-v0.2.Q4_0.gguf) | Q4_0 | 4 | 4.11 GB| 6.61 GB | legacy; small, very high quality loss - prefer using Q3_K_M |

| [mistral-7b-instruct-v0.2.Q4_K_S.gguf](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF/blob/main/mistral-7b-instruct-v0.2.Q4_K_S.gguf) | Q4_K_S | 4 | 4.14 GB| 6.64 GB | small, greater quality loss |

| [mistral-7b-instruct-v0.2.Q4_K_M.gguf](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF/blob/main/mistral-7b-instruct-v0.2.Q4_K_M.gguf) | Q4_K_M | 4 | 4.37 GB| 6.87 GB | medium, balanced quality - recommended |

| [mistral-7b-instruct-v0.2.Q5_0.gguf](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF/blob/main/mistral-7b-instruct-v0.2.Q5_0.gguf) | Q5_0 | 5 | 5.00 GB| 7.50 GB | legacy; medium, balanced quality - prefer using Q4_K_M |

| [mistral-7b-instruct-v0.2.Q5_K_S.gguf](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF/blob/main/mistral-7b-instruct-v0.2.Q5_K_S.gguf) | Q5_K_S | 5 | 5.00 GB| 7.50 GB | large, low quality loss - recommended |

| [mistral-7b-instruct-v0.2.Q5_K_M.gguf](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF/blob/main/mistral-7b-instruct-v0.2.Q5_K_M.gguf) | Q5_K_M | 5 | 5.13 GB| 7.63 GB | large, very low quality loss - recommended |

| [mistral-7b-instruct-v0.2.Q6_K.gguf](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF/blob/main/mistral-7b-instruct-v0.2.Q6_K.gguf) | Q6_K | 6 | 5.94 GB| 8.44 GB | very large, extremely low quality loss |

| [mistral-7b-instruct-v0.2.Q8_0.gguf](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF/blob/main/mistral-7b-instruct-v0.2.Q8_0.gguf) | Q8_0 | 8 | 7.70 GB| 10.20 GB | very large, extremely low quality loss - not recommended |

**Note**: the above RAM figures assume no GPU offloading. If layers are offloaded to the GPU, this will reduce RAM usage and use VRAM instead.
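In the table, every "Max RAM required" figure is 2.50 GB above the file size. The sketch below copies the table's figures and picks the largest file that fits a given RAM budget, assuming no GPU offloading; the function name `largest_quant_that_fits` is hypothetical.

```python
# (quant method, file size in GB, max RAM required in GB), copied from the table above
QUANTS = [
    ("Q2_K", 3.08, 5.58),
    ("Q3_K_S", 3.16, 5.66),
    ("Q3_K_M", 3.52, 6.02),
    ("Q3_K_L", 3.82, 6.32),
    ("Q4_0", 4.11, 6.61),
    ("Q4_K_S", 4.14, 6.64),
    ("Q4_K_M", 4.37, 6.87),
    ("Q5_0", 5.00, 7.50),
    ("Q5_K_S", 5.00, 7.50),
    ("Q5_K_M", 5.13, 7.63),
    ("Q6_K", 5.94, 8.44),
    ("Q8_0", 7.70, 10.20),
]


def largest_quant_that_fits(ram_budget_gb):
    """Return the largest quant whose listed max RAM fits the budget, or None."""
    best = None
    for name, size_gb, max_ram_gb in QUANTS:
        # ">=" prefers the later entry on a size tie (e.g. Q5_K_S over legacy Q5_0).
        if max_ram_gb <= ram_budget_gb and (best is None or size_gb >= best[1]):
            best = (name, size_gb)
    return best[0] if best else None


print(largest_quant_that_fits(7.0))
```

Size is only one criterion; the "Use case" column still applies when choosing between candidates.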

<!-- README_GGUF.md-provided-files end -->

<!-- README_GGUF.md-how-to-download start -->

## How to download GGUF files

**Note for manual downloaders:** You almost never want to clone the entire repo! Multiple different quantisation formats are provided, and most users only want to pick and download a single file.

The following clients/libraries will automatically download models for you, providing a list of available models to choose from:

* LM Studio

* LoLLMS Web UI

* Faraday.dev

### In `text-generation-webui`

Under Download Model, you can enter the model repo: TheBloke/Mistral-7B-Instruct-v0.2-GGUF and below it, a specific filename to download, such as: mistral-7b-instruct-v0.2.Q4_K_M.gguf.

Then click Download.

### On the command line, including multiple files at once

I recommend using the `huggingface-hub` Python library:

```shell

pip3 install huggingface-hub

```

Then you can download any individual model file to the current directory, at high speed, with a command like this:

```shell

huggingface-cli download TheBloke/Mistral-7B-Instruct-v0.2-GGUF mistral-7b-instruct-v0.2.Q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

```

<details>

<summary>More advanced huggingface-cli download usage (click to read)</summary>

You can also download multiple files at once with a pattern:

```shell

huggingface-cli download TheBloke/Mistral-7B-Instruct-v0.2-GGUF --local-dir . --local-dir-use-symlinks False --include='*Q4_K*gguf'

```
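To preview which files a pattern such as `*Q4_K*gguf` would select, the same shell-style matching can be tried locally with Python's `fnmatch`. This is a sketch under the assumption that the CLI's `--include` uses comparable wildcard semantics; the file list is copied from the table of provided files.

```python
from fnmatch import fnmatch

QUANT_SUFFIXES = [
    "Q2_K", "Q3_K_S", "Q3_K_M", "Q3_K_L", "Q4_0", "Q4_K_S",
    "Q4_K_M", "Q5_0", "Q5_K_S", "Q5_K_M", "Q6_K", "Q8_0",
]
FILES = [f"mistral-7b-instruct-v0.2.{q}.gguf" for q in QUANT_SUFFIXES]


def select(files, pattern):
    """Return the file names matching a shell-style wildcard pattern."""
    return [name for name in files if fnmatch(name, pattern)]


for name in select(FILES, "*Q4_K*gguf"):
    print(name)
```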

For more documentation on downloading with `huggingface-cli`, please see: [HF -> Hub Python Library -> Download files -> Download from the CLI](https://huggingface.co/docs/huggingface_hub/guides/download#download-from-the-cli).

To accelerate downloads on fast connections (1Gbit/s or higher), install `hf_transfer`:

```shell

pip3 install hf_transfer

```

And set environment variable `HF_HUB_ENABLE_HF_TRANSFER` to `1`:

```shell

HF_HUB_ENABLE_HF_TRANSFER=1 huggingface-cli download TheBloke/Mistral-7B-Instruct-v0.2-GGUF mistral-7b-instruct-v0.2.Q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

```

Windows Command Line users: You can set the environment variable by running `set HF_HUB_ENABLE_HF_TRANSFER=1` before the download command.

</details>

<!-- README_GGUF.md-how-to-download end -->

<!-- README_GGUF.md-how-to-run start -->

## Example `llama.cpp` command

Make sure you are using `llama.cpp` from commit [d0cee0d](https://github.com/ggerganov/llama.cpp/commit/d0cee0d36d5be95a0d9088b674dbb27354107221) or later.

```shell

./main -ngl 35 -m mistral-7b-instruct-v0.2.Q4_K_M.gguf --color -c 32768 --temp 0.7 --repeat_penalty 1.1 -n -1 -p "<s>[INST] {prompt} [/INST]"

```

Change `-ngl 35` to the number of layers to offload to GPU. Remove it if you don't have GPU acceleration.

Change `-c 32768` to the desired sequence length. For extended sequence models - eg 8K, 16K, 32K - the necessary RoPE scaling parameters are read from the GGUF file and set by llama.cpp automatically. Note that longer sequence lengths require much more resources, so you may need to reduce this value.

If you want to have a chat-style conversation, replace the `-p <PROMPT>` argument with `-i -ins`.

For other parameters and how to use them, please refer to [the llama.cpp documentation](https://github.com/ggerganov/llama.cpp/blob/master/examples/main/README.md).

## How to run in `text-generation-webui`

Further instructions can be found in the text-generation-webui documentation, here: [text-generation-webui/docs/04 ‐ Model Tab.md](https://github.com/oobabooga/text-generation-webui/blob/main/docs/04%20%E2%80%90%20Model%20Tab.md#llamacpp).

## How to run from Python code

You can use GGUF models from Python using the [llama-cpp-python](https://github.com/abetlen/llama-cpp-python) or [ctransformers](https://github.com/marella/ctransformers) libraries. Note that at the time of writing (Nov 27th 2023), ctransformers has not been updated for some time and is not compatible with some recent models. Therefore I recommend you use llama-cpp-python.

### How to load this model in Python code, using llama-cpp-python

For full documentation, please see: [llama-cpp-python docs](https://abetlen.github.io/llama-cpp-python/).

#### First install the package

Run one of the following commands, according to your system:

```shell

# Base llama-cpp-python with no GPU acceleration

pip install llama-cpp-python

# With NVidia CUDA acceleration

CMAKE_ARGS="-DLLAMA_CUBLAS=on" pip install llama-cpp-python

# Or with OpenBLAS acceleration

CMAKE_ARGS="-DLLAMA_BLAS=ON -DLLAMA_BLAS_VENDOR=OpenBLAS" pip install llama-cpp-python

# Or with CLBLast acceleration

CMAKE_ARGS="-DLLAMA_CLBLAST=on" pip install llama-cpp-python

# Or with AMD ROCm GPU acceleration (Linux only)

CMAKE_ARGS="-DLLAMA_HIPBLAS=on" pip install llama-cpp-python

# Or with Metal GPU acceleration for macOS systems only

CMAKE_ARGS="-DLLAMA_METAL=on" pip install llama-cpp-python

# In Windows, to set the variable CMAKE_ARGS in PowerShell, follow this format; e.g. for NVidia CUDA:

$env:CMAKE_ARGS = "-DLLAMA_CUBLAS=on"

pip install llama-cpp-python

```

#### Simple llama-cpp-python example code

```python

from llama_cpp import Llama

# Set n_gpu_layers to the number of layers to offload to GPU. Set to 0 if no GPU acceleration is available on your system.

llm = Llama(

model_path="./mistral-7b-instruct-v0.2.Q4_K_M.gguf", # Download the model file first

n_ctx=32768, # The max sequence length to use - note that longer sequence lengths require much more resources

n_threads=8, # The number of CPU threads to use, tailor to your system and the resulting performance

n_gpu_layers=35 # The number of layers to offload to GPU, if you have GPU acceleration available

)

# Simple inference example

output = llm(

"<s>[INST] {prompt} [/INST]", # Prompt

max_tokens=512, # Generate up to 512 tokens

stop=["</s>"], # Example stop token - not necessarily correct for this specific model! Please check before using.

echo=True # Whether to echo the prompt

)

# Chat Completion API

llm = Llama(model_path="./mistral-7b-instruct-v0.2.Q4_K_M.gguf", chat_format="llama-2") # Set chat_format according to the model you are using

llm.create_chat_completion(

messages = [

{"role": "system", "content": "You are a story writing assistant."},

{

"role": "user",

"content": "Write a story about llamas."

}

]

)

```

## How to use with LangChain

Here are guides on using llama-cpp-python and ctransformers with LangChain:

* [LangChain + llama-cpp-python](https://python.langchain.com/docs/integrations/llms/llamacpp)

* [LangChain + ctransformers](https://python.langchain.com/docs/integrations/providers/ctransformers)

<!-- README_GGUF.md-how-to-run end -->

<!-- footer start -->

<!-- 200823 -->

<!-- original-model-card start -->

# Original model card: Mistral AI_'s Mistral 7B Instruct v0.2

# Model Card for Mistral-7B-Instruct-v0.2

The Mistral-7B-Instruct-v0.2 Large Language Model (LLM) is an improved instruct fine-tuned version of [Mistral-7B-Instruct-v0.1](https://huggingface.co/mistralai/Mistral-7B-Instruct-v0.1).

For full details of this model please read our [paper](https://arxiv.org/abs/2310.06825) and [release blog post](https://mistral.ai/news/la-plateforme/).

## Instruction format

To leverage instruction fine-tuning, surround your prompt with `[INST]` and `[/INST]` tokens. The very first instruction should begin with a beginning-of-sentence id (`<s>`); subsequent instructions should not. The assistant's generation is terminated by the end-of-sentence token id (`</s>`).

E.g.

```

text = "<s>[INST] What is your favourite condiment? [/INST]"

"Well, I'm quite partial to a good squeeze of fresh lemon juice. It adds just the right amount of zesty flavour to whatever I'm cooking up in the kitchen!</s> "

"[INST] Do you have mayonnaise recipes? [/INST]"

```
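The multi-turn layout in the example above can be sketched as a small helper that concatenates turns by hand. `build_mistral_chat` is a hypothetical name, and the function only mirrors the string shown in the example; it is not the tokenizer's own chat template.

```python
def build_mistral_chat(messages):
    """Render alternating user/assistant messages in the [INST] format."""
    text = "<s>"  # beginning-of-sentence marker, emitted once at the start
    for message in messages:
        if message["role"] == "user":
            text += f"[INST] {message['content']} [/INST]"
        elif message["role"] == "assistant":
            # Each assistant turn is closed by the end-of-sentence token.
            text += f"{message['content']}</s> "
        else:
            raise ValueError(f"unsupported role: {message['role']}")
    return text


print(build_mistral_chat([
    {"role": "user", "content": "What is your favourite condiment?"},
    {"role": "assistant", "content": "Fresh lemon juice."},
    {"role": "user", "content": "Do you have mayonnaise recipes?"},
]))
```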

This format is available as a [chat template](https://huggingface.co/docs/transformers/main/chat_templating) via the `apply_chat_template()` method:

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

device = "cuda" # the device to load the model onto

model = AutoModelForCausalLM.from_pretrained("mistralai/Mistral-7B-Instruct-v0.2")

tokenizer = AutoTokenizer.from_pretrained("mistralai/Mistral-7B-Instruct-v0.2")

messages = [

{"role": "user", "content": "What is your favourite condiment?"},

{"role": "assistant", "content": "Well, I'm quite partial to a good squeeze of fresh lemon juice. It adds just the right amount of zesty flavour to whatever I'm cooking up in the kitchen!"},

{"role": "user", "content": "Do you have mayonnaise recipes?"}

]

encodeds = tokenizer.apply_chat_template(messages, return_tensors="pt")

model_inputs = encodeds.to(device)

model.to(device)

generated_ids = model.generate(model_inputs, max_new_tokens=1000, do_sample=True)

decoded = tokenizer.batch_decode(generated_ids)

print(decoded[0])

```

## Model Architecture

This instruction model is based on Mistral-7B-v0.1, a transformer model with the following architecture choices:

- Grouped-Query Attention

- Sliding-Window Attention

- Byte-fallback BPE tokenizer

## Troubleshooting

- If you see the following error:

```

Traceback (most recent call last):

File "", line 1, in

File "/transformers/models/auto/auto_factory.py", line 482, in from_pretrained

config, kwargs = AutoConfig.from_pretrained(

File "/transformers/models/auto/configuration_auto.py", line 1022, in from_pretrained

config_class = CONFIG_MAPPING[config_dict["model_type"]]

File "/transformers/models/auto/configuration_auto.py", line 723, in getitem

raise KeyError(key)

KeyError: 'mistral'

```

Installing transformers from source should solve the issue:

`pip install git+https://github.com/huggingface/transformers`

This should not be required after transformers-v4.33.4.
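A quick way to tell whether the workaround applies is to compare the installed version against 4.33.4. The helpers below are a hypothetical sketch: `mistral_supported` looks only at the leading numeric components of the version string, and `installed_transformers_version` returns `None` when the package is absent.

```python
from importlib import metadata


def mistral_supported(version: str) -> bool:
    """True if the version is newer than 4.33.4, e.g. "4.34.0.dev0" -> (4, 34, 0)."""
    parts = []
    for piece in version.split("."):
        if not piece.isdigit():
            break  # stop at suffixes such as "dev0"
        parts.append(int(piece))
    return tuple(parts) > (4, 33, 4)


def installed_transformers_version():
    try:
        return metadata.version("transformers")
    except metadata.PackageNotFoundError:
        return None


version = installed_transformers_version()
if version is None:
    print("transformers is not installed")
else:
    print(version, "ok" if mistral_supported(version) else "too old for 'mistral'")
```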

## Limitations

The Mistral 7B Instruct model is a quick demonstration that the base model can be easily fine-tuned to achieve compelling performance.

It does not have any moderation mechanisms. We're looking forward to engaging with the community on ways to make the model finely respect guardrails, allowing for deployment in environments requiring moderated outputs.

## The Mistral AI Team

Albert Jiang, Alexandre Sablayrolles, Arthur Mensch, Blanche Savary, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Emma Bou Hanna, Florian Bressand, Gianna Lengyel, Guillaume Bour, Guillaume Lample, Lélio Renard Lavaud, Louis Ternon, Lucile Saulnier, Marie-Anne Lachaux, Pierre Stock, Teven Le Scao, Théophile Gervet, Thibaut Lavril, Thomas Wang, Timothée Lacroix, William El Sayed.

<!-- original-model-card end -->

| {"license": "apache-2.0", "tags": ["finetuned"], "model_name": "Mistral 7B Instruct v0.2", "base_model": "mistralai/Mistral-7B-Instruct-v0.2", "inference": false, "model_creator": "Mistral AI_", "model_type": "mistral", "pipeline_tag": "text-generation", "prompt_template": "<s>[INST] {prompt} [/INST]\n", "quantized_by": "VesperAI"} | VesperAI/Mistral-7B-Instruct-v0.2-gguf | null | [

"gguf",

"finetuned",

"text-generation",

"arxiv:2310.06825",

"base_model:mistralai/Mistral-7B-Instruct-v0.2",

"license:apache-2.0",

"region:us"

]

| null | 2024-04-25T18:10:05+00:00 |

text-generation | transformers |

# KangalKhan-Alpha-Sapphiroid-7B-Fixed

KangalKhan-Alpha-Sapphiroid-7B-Fixed is a merge of the following models using [LazyMergekit](https://colab.research.google.com/drive/1obulZ1ROXHjYLn6PPZJwRR6GzgQogxxb?usp=sharing):

* [kaist-ai/mistral-orpo-capybara-7k](https://huggingface.co/kaist-ai/mistral-orpo-capybara-7k)

* [argilla/CapybaraHermes-2.5-Mistral-7B](https://huggingface.co/argilla/CapybaraHermes-2.5-Mistral-7B)

## 🧩 Configuration

```yaml

slices:

- sources:

- model: kaist-ai/mistral-orpo-capybara-7k

layer_range: [0, 32]

- model: argilla/CapybaraHermes-2.5-Mistral-7B

layer_range: [0, 32]

merge_method: slerp

base_model: kaist-ai/mistral-orpo-capybara-7k

parameters:

t:

- filter: self_attn

value: [0, 0.5, 0.3, 0.7, 1]

- filter: mlp

value: [1, 0.5, 0.7, 0.3, 0]

- value: 0.5

dtype: bfloat16

```
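To make the `slerp` merge method and the `t` lists more concrete, here is a pure-Python sketch of spherical linear interpolation between two weight vectors, plus one plausible way a list such as `[0, 0.5, 0.3, 0.7, 1]` could be spread across 32 layers. It is an illustration of the standard formula, not mergekit's actual code; `slerp` and `layer_t` are hypothetical helpers, and the even spacing with linear interpolation in `layer_t` is an assumption.

```python
import math


def slerp(t, a, b, eps=1e-8):
    """Spherically interpolate from vector a (t=0) to vector b (t=1)."""
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a < eps or norm_b < eps:
        return [(1 - t) * x + t * y for x, y in zip(a, b)]
    cos_theta = sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)
    theta = math.acos(max(-1.0, min(1.0, cos_theta)))
    sin_theta = math.sin(theta)
    if abs(sin_theta) < eps:
        # Nearly colinear vectors: fall back to plain linear interpolation.
        return [(1 - t) * x + t * y for x, y in zip(a, b)]
    w_a = math.sin((1 - t) * theta) / sin_theta
    w_b = math.sin(t * theta) / sin_theta
    return [w_a * x + w_b * y for x, y in zip(a, b)]


def layer_t(values, layer_index, num_layers):
    """Linearly interpolate a list of anchor values over layer depth (assumed scheme)."""
    position = layer_index / (num_layers - 1) * (len(values) - 1)
    low = min(int(position), len(values) - 2)
    fraction = position - low
    return values[low] + fraction * (values[low + 1] - values[low])


self_attn_t = [0, 0.5, 0.3, 0.7, 1]
print(layer_t(self_attn_t, 0, 32), layer_t(self_attn_t, 31, 32))
print(slerp(0.5, [1.0, 0.0], [0.0, 1.0]))
```

With `t = 0` the result equals the base model's tensor and with `t = 1` the other model's, so under this reading the `self_attn` list moves from the first model in early layers to the second in late layers, while the `mlp` list runs the other way.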

## 💻 Usage

```python

!pip install -qU transformers accelerate

from transformers import AutoTokenizer

import transformers

import torch

model = "Yuma42/KangalKhan-Alpha-Sapphiroid-7B-Fixed"

messages = [{"role": "user", "content": "What is a large language model?"}]

tokenizer = AutoTokenizer.from_pretrained(model)

prompt = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

pipeline = transformers.pipeline(

"text-generation",

model=model,

torch_dtype=torch.float16,

device_map="auto",

)

outputs = pipeline(prompt, max_new_tokens=256, do_sample=True, temperature=0.7, top_k=50, top_p=0.95)

print(outputs[0]["generated_text"])

``` | {"language": ["en"], "license": "apache-2.0", "tags": ["merge", "mergekit", "lazymergekit", "kaist-ai/mistral-orpo-capybara-7k", "argilla/CapybaraHermes-2.5-Mistral-7B"], "base_model": ["kaist-ai/mistral-orpo-capybara-7k", "argilla/CapybaraHermes-2.5-Mistral-7B"]} | Yuma42/KangalKhan-Alpha-Sapphiroid-7B-Fixed | null | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"merge",

"mergekit",

"lazymergekit",

"kaist-ai/mistral-orpo-capybara-7k",

"argilla/CapybaraHermes-2.5-Mistral-7B",

"conversational",

"en",

"base_model:kaist-ai/mistral-orpo-capybara-7k",

"base_model:argilla/CapybaraHermes-2.5-Mistral-7B",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2024-04-25T18:10:06+00:00 |

null | null | https://civitai.com/models/418957/lawine-sousou-no-frieren | {"license": "creativeml-openrail-m"} | LarryAIDraw/Lawine_snf_ | null | [

"license:creativeml-openrail-m",

"region:us"

]

| null | 2024-04-25T18:10:08+00:00 |

text-generation | transformers |

# Model Card for alokabhishek/Meta-Llama-3-8B-Instruct-GGUF

<!-- Provide a quick summary of what the model is/does. -->

This repo contains a GGUF quantized version of Meta's meta-llama/Meta-Llama-3-8B-Instruct model, created using llama.cpp.

## Model Details

- Model creator: [Meta](https://huggingface.co/meta-llama)

- Original model: [Meta-Llama-3-8B-Instruct](https://huggingface.co/meta-llama/Meta-Llama-3-8B-Instruct)

### About GGUF quantization using llama.cpp

- llama.cpp github repo: [llama.cpp github repo](https://github.com/ggerganov/llama.cpp)

- llama-cpp-python github repo: [llama-cpp-python](https://github.com/abetlen/llama-cpp-python)

# How to Get Started with the Model

Use the code below to get started with the model. This code uses llama-cpp-python.

```python

from llama_cpp import Llama  # GGUF inference via llama-cpp-python

from transformers import AutoTokenizer  # tokenizer loaded from the same repo

prompt_instruction = "You are a helpful, and fun loving assistant. Always answer as jestfully as possible."

user_question = "Why is Hulk always Angry?"

chat_messages = [

{"role": "system", "content": str(prompt_instruction)},

{"role": "user", "content": str(user_question)},

]

model_id = "alokabhishek/Meta-Llama-3-8B-Instruct-GGUF"

model_file = "meta-llama-3-8b-instruct.Q4_K_M.gguf"

tokenizer = AutoTokenizer.from_pretrained(model_id, use_fast=True)

model_name = Llama.from_pretrained(

repo_id=model_id,

filename=model_file,

verbose=False,

)

terminators = [

"<|end_of_text|>",

"<|eot_id|>",

"assistant\n\n",

]

llm_response = model_name.create_chat_completion(

messages=chat_messages,

max_tokens=1024,

temperature=1,

top_k=50,

top_p=1,

stop=terminators,

)

print("\nllm_response: ", llm_response)

llm_answer = llm_response["choices"][0]["message"]["content"]

print("\nllm_answer: ", llm_answer)

```

## Original Meta's Llama-3 Model Card:

Meta developed and released the Meta Llama 3 family of large language models (LLMs), a collection of pretrained and instruction tuned generative text models in 8 and 70B sizes. The Llama 3 instruction tuned models are optimized for dialogue use cases and outperform many of the available open source chat models on common industry benchmarks. Further, in developing these models, we took great care to optimize helpfulness and safety.

**Model developers** Meta

**Variations** Llama 3 comes in two sizes — 8B and 70B parameters — in pre-trained and instruction tuned variants.

**Input** Models input text only.

**Output** Models generate text and code only.

**Model Architecture** Llama 3 is an auto-regressive language model that uses an optimized transformer architecture. The tuned versions use supervised fine-tuning (SFT) and reinforcement learning with human feedback (RLHF) to align with human preferences for helpfulness and safety.

<table>

<tr>

<td>

</td>

<td><strong>Training Data</strong>

</td>

<td><strong>Params</strong>

</td>

<td><strong>Context length</strong>

</td>

<td><strong>GQA</strong>

</td>

<td><strong>Token count</strong>

</td>

<td><strong>Knowledge cutoff</strong>

</td>

</tr>

<tr>

<td rowspan="2" >Llama 3

</td>

<td rowspan="2" >A new mix of publicly available online data.

</td>

<td>8B

</td>

<td>8k

</td>

<td>Yes

</td>

<td rowspan="2" >15T+

</td>

<td>March, 2023

</td>

</tr>

<tr>

<td>70B

</td>

<td>8k

</td>

<td>Yes

</td>

<td>December, 2023

</td>

</tr>

</table>

**Llama 3 family of models**. Token counts refer to pretraining data only. Both the 8 and 70B versions use Grouped-Query Attention (GQA) for improved inference scalability.

**Model Release Date** April 18, 2024.

**Status** This is a static model trained on an offline dataset. Future versions of the tuned models will be released as we improve model safety with community feedback.

**License** A custom commercial license is available at: [https://llama.meta.com/llama3/license](https://llama.meta.com/llama3/license)

**Where to send questions or comments about the model** Instructions on how to provide feedback or comments on the model can be found in the model [README](https://github.com/meta-llama/llama3). For more technical information about generation parameters and recipes for how to use Llama 3 in applications, please go [here](https://github.com/meta-llama/llama-recipes).

## Intended Use

**Intended Use Cases** Llama 3 is intended for commercial and research use in English. Instruction tuned models are intended for assistant-like chat, whereas pretrained models can be adapted for a variety of natural language generation tasks.

**Out-of-scope** Use in any manner that violates applicable laws or regulations (including trade compliance laws). Use in any other way that is prohibited by the Acceptable Use Policy and Llama 3 Community License. Use in languages other than English**.

**Note: Developers may fine-tune Llama 3 models for languages beyond English provided they comply with the Llama 3 Community License and the Acceptable Use Policy.

## How to use

This repository contains two versions of Meta-Llama-3-8B-Instruct, for use with transformers and with the original `llama3` codebase.

### Use with transformers

You can run conversational inference using the Transformers pipeline abstraction, or by leveraging the Auto classes with the `generate()` function. Let's see examples of both.

#### Transformers pipeline

```python

import transformers

import torch

model_id = "meta-llama/Meta-Llama-3-8B-Instruct"

pipeline = transformers.pipeline(

"text-generation",

model=model_id,

model_kwargs={"torch_dtype": torch.bfloat16},

device_map="auto",

)

messages = [

{"role": "system", "content": "You are a pirate chatbot who always responds in pirate speak!"},

{"role": "user", "content": "Who are you?"},

]

prompt = pipeline.tokenizer.apply_chat_template(

messages,

tokenize=False,

add_generation_prompt=True

)

terminators = [

pipeline.tokenizer.eos_token_id,

pipeline.tokenizer.convert_tokens_to_ids("<|eot_id|>")

]

outputs = pipeline(

prompt,

max_new_tokens=256,

eos_token_id=terminators,

do_sample=True,

temperature=0.6,

top_p=0.9,

)

print(outputs[0]["generated_text"][len(prompt):])

```
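For orientation, the string that `apply_chat_template` produces for Llama 3 Instruct can be approximated by hand. This is a sketch of the published prompt format, not the tokenizer's implementation: `build_llama3_prompt` is a hypothetical helper, and the header tokens `<|begin_of_text|>`, `<|start_header_id|>` and `<|end_header_id|>` do not appear elsewhere in this card.

```python
def build_llama3_prompt(messages, add_generation_prompt=True):
    """Render chat messages in the Llama 3 Instruct prompt layout."""
    prompt = "<|begin_of_text|>"
    for message in messages:
        prompt += (
            f"<|start_header_id|>{message['role']}<|end_header_id|>\n\n"
            f"{message['content'].strip()}<|eot_id|>"
        )
    if add_generation_prompt:
        # Open an assistant turn so the model continues from here.
        prompt += "<|start_header_id|>assistant<|end_header_id|>\n\n"
    return prompt


print(build_llama3_prompt([
    {"role": "system", "content": "You are a pirate chatbot who always responds in pirate speak!"},
    {"role": "user", "content": "Who are you?"},
]))
```

Because each turn ends with `<|eot_id|>`, that token is listed among the terminators alongside the tokenizer's EOS token.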

#### Transformers AutoModelForCausalLM

```python

from transformers import AutoTokenizer, AutoModelForCausalLM

import torch

model_id = "meta-llama/Meta-Llama-3-8B-Instruct"

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForCausalLM.from_pretrained(

model_id,

torch_dtype=torch.bfloat16,

device_map="auto",

)

messages = [

{"role": "system", "content": "You are a pirate chatbot who always responds in pirate speak!"},

{"role": "user", "content": "Who are you?"},

]

input_ids = tokenizer.apply_chat_template(

messages,

add_generation_prompt=True,

return_tensors="pt"

).to(model.device)

terminators = [

tokenizer.eos_token_id,

tokenizer.convert_tokens_to_ids("<|eot_id|>")

]

outputs = model.generate(

input_ids,

max_new_tokens=256,

eos_token_id=terminators,

do_sample=True,

temperature=0.6,

top_p=0.9,

)

response = outputs[0][input_ids.shape[-1]:]

print(tokenizer.decode(response, skip_special_tokens=True))

```

### Use with `llama3`

Please follow the instructions in the [repository](https://github.com/meta-llama/llama3).

To download the original checkpoints, see the example command below leveraging `huggingface-cli`:

```

huggingface-cli download meta-llama/Meta-Llama-3-8B-Instruct --include "original/*" --local-dir Meta-Llama-3-8B-Instruct

```

For Hugging Face support, we recommend using transformers or TGI, but a similar command works.

## Hardware and Software

**Training Factors** We used custom training libraries, Meta's Research SuperCluster, and production clusters for pretraining. Fine-tuning, annotation, and evaluation were also performed on third-party cloud compute.

**Carbon Footprint** Pretraining utilized a cumulative 7.7M GPU hours of computation on hardware of type H100-80GB (TDP of 700W). Estimated total emissions were 2290 tCO2eq, 100% of which were offset by Meta’s sustainability program.

<table>

<tr>

<td>

</td>

<td><strong>Time (GPU hours)</strong>

</td>

<td><strong>Power Consumption (W)</strong>

</td>

<td><strong>Carbon Emitted(tCO2eq)</strong>

</td>

</tr>

<tr>

<td>Llama 3 8B

</td>

<td>1.3M

</td>

<td>700

</td>

<td>390

</td>

</tr>

<tr>

<td>Llama 3 70B

</td>

<td>6.4M

</td>

<td>700

</td>

<td>1900

</td>

</tr>

<tr>

<td>Total

</td>

<td>7.7M

</td>

<td>

</td>

<td>2290

</td>

</tr>

</table>

**CO2 emissions during pre-training**. Time: total GPU time required for training each model. Power Consumption: peak power capacity per GPU device for the GPUs used adjusted for power usage efficiency. 100% of the emissions are directly offset by Meta's sustainability program, and because we are openly releasing these models, the pretraining costs do not need to be incurred by others.
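The totals in the table can be cross-checked with a few lines of arithmetic. The sketch below uses only the table's figures; the energy and per-MWh values are derived by treating the listed 700 W as constant draw per GPU, which is a simplifying assumption rather than Meta's stated methodology.

```python
# Figures copied from the table above
RUNS = {
    "Llama 3 8B": {"gpu_hours": 1_300_000, "watts": 700, "tco2eq": 390},
    "Llama 3 70B": {"gpu_hours": 6_400_000, "watts": 700, "tco2eq": 1900},
}


def energy_mwh(gpu_hours, watts):
    # watt-hours -> megawatt-hours
    return gpu_hours * watts / 1_000_000


total_hours = sum(run["gpu_hours"] for run in RUNS.values())
total_tco2eq = sum(run["tco2eq"] for run in RUNS.values())
total_mwh = sum(energy_mwh(run["gpu_hours"], run["watts"]) for run in RUNS.values())

print(total_hours, total_tco2eq, total_mwh)
for name, run in RUNS.items():
    mwh = energy_mwh(run["gpu_hours"], run["watts"])
    print(name, round(run["tco2eq"] / mwh, 3), "tCO2eq per MWh (implied)")
```

The summed GPU hours and emissions match the table's "Total" row (7.7M hours, 2290 tCO2eq).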

## Training Data

**Overview** Llama 3 was pretrained on over 15 trillion tokens of data from publicly available sources. The fine-tuning data includes publicly available instruction datasets, as well as over 10M human-annotated examples. Neither the pretraining nor the fine-tuning datasets include Meta user data.

**Data Freshness** The pretraining data has a cutoff of March 2023 for the 8B and December 2023 for the 70B models respectively.

## Benchmarks

In this section, we report the results for Llama 3 models on standard automatic benchmarks. For all the evaluations, we use our internal evaluations library. For details on the methodology see [here](https://github.com/meta-llama/llama3/blob/main/eval_methodology.md).

### Base pretrained models

<table>

<tr>

<td><strong>Category</strong>

</td>

<td><strong>Benchmark</strong>

</td>

<td><strong>Llama 3 8B</strong>

</td>

<td><strong>Llama2 7B</strong>

</td>

<td><strong>Llama2 13B</strong>

</td>

<td><strong>Llama 3 70B</strong>

</td>

<td><strong>Llama 2 70B</strong>

</td>

</tr>

<tr>

<td rowspan="6" >General

</td>

<td>MMLU (5-shot)

</td>

<td>66.6

</td>

<td>45.7

</td>

<td>53.8

</td>

<td>79.5

</td>

<td>69.7

</td>

</tr>

<tr>

<td>AGIEval English (3-5 shot)

</td>

<td>45.9

</td>

<td>28.8

</td>

<td>38.7

</td>

<td>63.0

</td>

<td>54.8

</td>

</tr>

<tr>

<td>CommonSenseQA (7-shot)

</td>

<td>72.6

</td>

<td>57.6

</td>

<td>67.6

</td>

<td>83.8

</td>

<td>78.7

</td>

</tr>

<tr>

<td>Winogrande (5-shot)

</td>

<td>76.1

</td>

<td>73.3

</td>

<td>75.4

</td>

<td>83.1

</td>

<td>81.8

</td>

</tr>

<tr>

<td>BIG-Bench Hard (3-shot, CoT)

</td>

<td>61.1

</td>

<td>38.1

</td>

<td>47.0

</td>

<td>81.3

</td>

<td>65.7

</td>

</tr>

<tr>

<td>ARC-Challenge (25-shot)

</td>

<td>78.6

</td>

<td>53.7

</td>

<td>67.6

</td>

<td>93.0

</td>

<td>85.3

</td>

</tr>

<tr>

<td>Knowledge reasoning

</td>

<td>TriviaQA-Wiki (5-shot)

</td>

<td>78.5

</td>

<td>72.1

</td>

<td>79.6

</td>

<td>89.7

</td>

<td>87.5

</td>

</tr>

<tr>

<td rowspan="4" >Reading comprehension

</td>

<td>SQuAD (1-shot)

</td>

<td>76.4

</td>

<td>72.2

</td>

<td>72.1

</td>

<td>85.6

</td>

<td>82.6

</td>

</tr>

<tr>

<td>QuAC (1-shot, F1)

</td>

<td>44.4

</td>

<td>39.6

</td>

<td>44.9

</td>

<td>51.1

</td>

<td>49.4

</td>

</tr>

<tr>

<td>BoolQ (0-shot)

</td>

<td>75.7

</td>

<td>65.5

</td>

<td>66.9

</td>

<td>79.0

</td>

<td>73.1

</td>

</tr>

<tr>

<td>DROP (3-shot, F1)

</td>

<td>58.4

</td>

<td>37.9

</td>

<td>49.8

</td>

<td>79.7

</td>

<td>70.2

</td>

</tr>

</table>

### Instruction tuned models

<table>

<tr>

<td><strong>Benchmark</strong>

</td>

<td><strong>Llama 3 8B</strong>

</td>

<td><strong>Llama 2 7B</strong>

</td>

<td><strong>Llama 2 13B</strong>

</td>

<td><strong>Llama 3 70B</strong>

</td>

<td><strong>Llama 2 70B</strong>

</td>

</tr>

<tr>

<td>MMLU (5-shot)

</td>

<td>68.4

</td>

<td>34.1

</td>

<td>47.8

</td>

<td>82.0

</td>

<td>52.9

</td>

</tr>

<tr>

<td>GPQA (0-shot)

</td>

<td>34.2

</td>

<td>21.7

</td>

<td>22.3

</td>

<td>39.5

</td>

<td>21.0

</td>

</tr>

<tr>

<td>HumanEval (0-shot)

</td>

<td>62.2

</td>

<td>7.9

</td>

<td>14.0

</td>

<td>81.7

</td>

<td>25.6

</td>

</tr>

<tr>

<td>GSM-8K (8-shot, CoT)

</td>

<td>79.6

</td>

<td>25.7

</td>

<td>77.4

</td>

<td>93.0

</td>

<td>57.5

</td>

</tr>

<tr>

<td>MATH (4-shot, CoT)

</td>

<td>30.0

</td>

<td>3.8

</td>

<td>6.7

</td>

<td>50.4

</td>

<td>11.6

</td>

</tr>

</table>

### Responsibility & Safety

We believe that an open approach to AI leads to better, safer products, faster innovation, and a bigger overall market. We are committed to Responsible AI development and took a series of steps to limit misuse and harm and support the open source community.

Foundation models are widely capable technologies that are built to be used for a diverse range of applications. They are not designed to meet every developer preference on safety levels for all use cases, out-of-the-box, as those by their nature will differ across different applications.

Rather, responsible LLM-application deployment is achieved by implementing a series of safety best practices throughout the development of such applications, from model pre-training and fine-tuning through to the deployment of systems composed of safeguards that tailor safety to the specific use case and audience.

As part of the Llama 3 release, we updated our [Responsible Use Guide](https://llama.meta.com/responsible-use-guide/) to outline the steps and best practices for developers to implement model and system level safety for their application. We also provide a set of resources including [Meta Llama Guard 2](https://llama.meta.com/purple-llama/) and [Code Shield](https://llama.meta.com/purple-llama/) safeguards. These tools have proven to drastically reduce residual risks of LLM Systems, while maintaining a high level of helpfulness. We encourage developers to tune and deploy these safeguards according to their needs and we provide a [reference implementation](https://github.com/meta-llama/llama-recipes/tree/main/recipes/responsible_ai) to get you started.

#### Llama 3-Instruct

As outlined in the Responsible Use Guide, some trade-off between model helpfulness and model alignment is likely unavoidable. Developers should exercise discretion about how to weigh the benefits of alignment and helpfulness for their specific use case and audience. Developers should be mindful of residual risks when using Llama models and leverage additional safety tools as needed to reach the right safety bar for their use case.

<span style="text-decoration:underline;">Safety</span>

For our instruction tuned model, we conducted extensive red teaming exercises, performed adversarial evaluations and implemented safety mitigation techniques to lower residual risks. As with any Large Language Model, residual risks will likely remain and we recommend that developers assess these risks in the context of their use case. In parallel, we are working with the community to make AI safety benchmark standards transparent, rigorous and interpretable.

<span style="text-decoration:underline;">Refusals</span>

In addition to residual risks, we put a great emphasis on model refusals to benign prompts. Over-refusing not only can impact the user experience but could even be harmful in certain contexts as well. We’ve heard the feedback from the developer community and improved our fine tuning to ensure that Llama 3 is significantly less likely to falsely refuse to answer prompts than Llama 2.

We built internal benchmarks and developed mitigations to limit false refusals making Llama 3 our most helpful model to date.

#### Responsible release

In addition to responsible use considerations outlined above, we followed a rigorous process that requires us to take extra measures against misuse and critical risks before we make our release decision.

Misuse

If you access or use Llama 3, you agree to the Acceptable Use Policy. The most recent copy of this policy can be found at [https://llama.meta.com/llama3/use-policy/](https://llama.meta.com/llama3/use-policy/).

#### Critical risks

<span style="text-decoration:underline;">CBRNE</span> (Chemical, Biological, Radiological, Nuclear, and high yield Explosives)

We have conducted a twofold assessment of the safety of the model in this area:

* Iterative testing during model training to assess the safety of responses related to CBRNE threats and other adversarial risks.

* Involving external CBRNE experts to conduct an uplift test assessing the ability of the model to accurately provide expert knowledge and reduce barriers to potential CBRNE misuse, by reference to what can be achieved using web search (without the model).

<span style="text-decoration:underline;">Cyber Security</span>

We have evaluated Llama 3 with CyberSecEval, Meta’s cybersecurity safety eval suite, measuring Llama 3’s propensity to suggest insecure code when used as a coding assistant, and Llama 3’s propensity to comply with requests to help carry out cyber attacks, where attacks are defined by the industry standard MITRE ATT&CK cyber attack ontology. On our insecure coding and cyber attacker helpfulness tests, Llama 3 behaved in the same range as, or safer than, models of [equivalent coding capability](https://huggingface.co/spaces/facebook/CyberSecEval).

<span style="text-decoration:underline;">Child Safety</span>

Child Safety risk assessments were conducted using a team of experts, to assess the model’s capability to produce outputs that could result in Child Safety risks and inform on any necessary and appropriate risk mitigations via fine tuning. We leveraged those expert red teaming sessions to expand the coverage of our evaluation benchmarks through Llama 3 model development. For Llama 3, we conducted new in-depth sessions using objective based methodologies to assess the model risks along multiple attack vectors. We also partnered with content specialists to perform red teaming exercises assessing potentially violating content while taking account of market specific nuances or experiences.

### Community

Generative AI safety requires expertise and tooling, and we believe in the strength of the open community to accelerate its progress. We are active members of open consortiums, including the AI Alliance, Partnership on AI and MLCommons, actively contributing to safety standardization and transparency. We encourage the community to adopt taxonomies like the MLCommons Proof of Concept evaluation to facilitate collaboration and transparency on safety and content evaluations. Our Purple Llama tools are open sourced for the community to use and widely distributed across ecosystem partners including cloud service providers. We encourage community contributions to our [GitHub repository](https://github.com/meta-llama/PurpleLlama).

Finally, we put in place a set of resources including an [output reporting mechanism](https://developers.facebook.com/llama_output_feedback) and [bug bounty program](https://www.facebook.com/whitehat) to continuously improve the Llama technology with the help of the community.

## Ethical Considerations and Limitations

The core values of Llama 3 are openness, inclusivity and helpfulness. It is meant to serve everyone, and to work for a wide range of use cases. It is thus designed to be accessible to people across many different backgrounds, experiences and perspectives. Llama 3 addresses users and their needs as they are, without inserting unnecessary judgment or normativity, while reflecting the understanding that even content that may appear problematic in some cases can serve valuable purposes in others. It respects the dignity and autonomy of all users, especially in terms of the values of free thought and expression that power innovation and progress.

But Llama 3 is a new technology, and like any new technology, there are risks associated with its use. Testing conducted to date has been in English, and has not covered, nor could it cover, all scenarios. For these reasons, as with all LLMs, Llama 3’s potential outputs cannot be predicted in advance, and the model may in some instances produce inaccurate, biased or other objectionable responses to user prompts. Therefore, before deploying any applications of Llama 3 models, developers should perform safety testing and tuning tailored to their specific applications of the model. As outlined in the Responsible Use Guide, we recommend incorporating [Purple Llama](https://github.com/facebookresearch/PurpleLlama) solutions into your workflows and specifically [Llama Guard](https://ai.meta.com/research/publications/llama-guard-llm-based-input-output-safeguard-for-human-ai-conversations/) which provides a base model to filter input and output prompts to layer system-level safety on top of model-level safety.

Please see the Responsible Use Guide available at [http://llama.meta.com/responsible-use-guide](http://llama.meta.com/responsible-use-guide)

## Citation instructions

@article{llama3modelcard,

title={Llama 3 Model Card},

author={AI@Meta},

year={2024},

url = {https://github.com/meta-llama/llama3/blob/main/MODEL_CARD.md}

}

## Contributors

Aaditya Singh; Aaron Grattafiori; Abhimanyu Dubey; Abhinav Jauhri; Abhinav Pandey; Abhishek Kadian; Adam Kelsey; Adi Gangidi; Ahmad Al-Dahle; Ahuva Goldstand; Aiesha Letman; Ajay Menon; Akhil Mathur; Alan Schelten; Alex Vaughan; Amy Yang; Andrei Lupu; Andres Alvarado; Andrew Gallagher; Andrew Gu; Andrew Ho; Andrew Poulton; Andrew Ryan; Angela Fan; Ankit Ramchandani; Anthony Hartshorn; Archi Mitra; Archie Sravankumar; Artem Korenev; Arun Rao; Ashley Gabriel; Ashwin Bharambe; Assaf Eisenman; Aston Zhang; Aurelien Rodriguez; Austen Gregerson; Ava Spataru; Baptiste Roziere; Ben Maurer; Benjamin Leonhardi; Bernie Huang; Bhargavi Paranjape; Bing Liu; Binh Tang; Bobbie Chern; Brani Stojkovic; Brian Fuller; Catalina Mejia Arenas; Chao Zhou; Charlotte Caucheteux; Chaya Nayak; Ching-Hsiang Chu; Chloe Bi; Chris Cai; Chris Cox; Chris Marra; Chris McConnell; Christian Keller; Christoph Feichtenhofer; Christophe Touret; Chunyang Wu; Corinne Wong; Cristian Canton Ferrer; Damien Allonsius; Daniel Kreymer; Daniel Haziza; Daniel Li; Danielle Pintz; Danny Livshits; Danny Wyatt; David Adkins; David Esiobu; David Xu; Davide Testuggine; Delia David; Devi Parikh; Dhruv Choudhary; Dhruv Mahajan; Diana Liskovich; Diego Garcia-Olano; Diego Perino; Dieuwke Hupkes; Dingkang Wang; Dustin Holland; Egor Lakomkin; Elina Lobanova; Xiaoqing Ellen Tan; Emily Dinan; Eric Smith; Erik Brinkman; Esteban Arcaute; Filip Radenovic; Firat Ozgenel; Francesco Caggioni; Frank Seide; Frank Zhang; Gabriel Synnaeve; Gabriella Schwarz; Gabrielle Lee; Gada Badeer; Georgia Anderson; Graeme Nail; Gregoire Mialon; Guan Pang; Guillem Cucurell; Hailey Nguyen; Hannah Korevaar; Hannah Wang; Haroun Habeeb; Harrison Rudolph; Henry Aspegren; Hu Xu; Hugo Touvron; Iga Kozlowska; Igor Molybog; Igor Tufanov; Iliyan Zarov; Imanol Arrieta Ibarra; Irina-Elena Veliche; Isabel Kloumann; Ishan Misra; Ivan Evtimov; Jacob Xu; Jade Copet; Jake Weissman; Jan Geffert; Jana Vranes; Japhet Asher; Jason Park; Jay Mahadeokar; Jean-Baptiste 
Gaya; Jeet Shah; Jelmer van der Linde; Jennifer Chan; Jenny Hong; Jenya Lee; Jeremy Fu; Jeremy Teboul; Jianfeng Chi; Jianyu Huang; Jie Wang; Jiecao Yu; Joanna Bitton; Joe Spisak; Joelle Pineau; Jon Carvill; Jongsoo Park; Joseph Rocca; Joshua Johnstun; Junteng Jia; Kalyan Vasuden Alwala; Kam Hou U; Kate Plawiak; Kartikeya Upasani; Kaushik Veeraraghavan; Ke Li; Kenneth Heafield; Kevin Stone; Khalid El-Arini; Krithika Iyer; Kshitiz Malik; Kuenley Chiu; Kunal Bhalla; Kyle Huang; Lakshya Garg; Lauren Rantala-Yeary; Laurens van der Maaten; Lawrence Chen; Leandro Silva; Lee Bell; Lei Zhang; Liang Tan; Louis Martin; Lovish Madaan; Luca Wehrstedt; Lukas Blecher; Luke de Oliveira; Madeline Muzzi; Madian Khabsa; Manav Avlani; Mannat Singh; Manohar Paluri; Mark Zuckerberg; Marcin Kardas; Martynas Mankus; Mathew Oldham; Mathieu Rita; Matthew Lennie; Maya Pavlova; Meghan Keneally; Melanie Kambadur; Mihir Patel; Mikayel Samvelyan; Mike Clark; Mike Lewis; Min Si; Mitesh Kumar Singh; Mo Metanat; Mona Hassan; Naman Goyal; Narjes Torabi; Nicolas Usunier; Nikolay Bashlykov; Nikolay Bogoychev; Niladri Chatterji; Ning Dong; Oliver Aobo Yang; Olivier Duchenne; Onur Celebi; Parth Parekh; Patrick Alrassy; Paul Saab; Pavan Balaji; Pedro Rittner; Pengchuan Zhang; Pengwei Li; Petar Vasic; Peter Weng; Polina Zvyagina; Prajjwal Bhargava; Pratik Dubal; Praveen Krishnan; Punit Singh Koura; Qing He; Rachel Rodriguez; Ragavan Srinivasan; Rahul Mitra; Ramon Calderer; Raymond Li; Robert Stojnic; Roberta Raileanu; Robin Battey; Rocky Wang; Rohit Girdhar; Rohit Patel; Romain Sauvestre; Ronnie Polidoro; Roshan Sumbaly; Ross Taylor; Ruan Silva; Rui Hou; Rui Wang; Russ Howes; Ruty Rinott; Saghar Hosseini; Sai Jayesh Bondu; Samyak Datta; Sanjay Singh; Sara Chugh; Sargun Dhillon; Satadru Pan; Sean Bell; Sergey Edunov; Shaoliang Nie; Sharan Narang; Sharath Raparthy; Shaun Lindsay; Sheng Feng; Sheng Shen; Shenghao Lin; Shiva Shankar; Shruti Bhosale; Shun Zhang; Simon Vandenhende; Sinong Wang; Seohyun Sonia 
Kim; Soumya Batra; Sten Sootla; Steve Kehoe; Suchin Gururangan; Sumit Gupta; Sunny Virk; Sydney Borodinsky; Tamar Glaser; Tamar Herman; Tamara Best; Tara Fowler; Thomas Georgiou; Thomas Scialom; Tianhe Li; Todor Mihaylov; Tong Xiao; Ujjwal Karn; Vedanuj Goswami; Vibhor Gupta; Vignesh Ramanathan; Viktor Kerkez; Vinay Satish Kumar; Vincent Gonguet; Vish Vogeti; Vlad Poenaru; Vlad Tiberiu Mihailescu; Vladan Petrovic; Vladimir Ivanov; Wei Li; Weiwei Chu; Wenhan Xiong; Wenyin Fu; Wes Bouaziz; Whitney Meers; Will Constable; Xavier Martinet; Xiaojian Wu; Xinbo Gao; Xinfeng Xie; Xuchao Jia; Yaelle Goldschlag; Yann LeCun; Yashesh Gaur; Yasmine Babaei; Ye Qi; Yenda Li; Yi Wen; Yiwen Song; Youngjin Nam; Yuchen Hao; Yuchen Zhang; Yun Wang; Yuning Mao; Yuzi He; Zacharie Delpierre Coudert; Zachary DeVito; Zahra Hankir; Zhaoduo Wen; Zheng Yan; Zhengxing Chen; Zhenyu Yang; Zoe Papakipos

| {"license": "other", "library_name": "transformers", "tags": ["GGUF", "llama-3", "llama", "Q4_K_M", "Q5_K_M", "meta", "facebook", "quantized", "8b"], "license_name": "llama3", "license_link": "LICENSE", "pipeline_tag": "text-generation"} | alokabhishek/Meta-Llama-3-8B-Instruct-GGUF | null | [

"transformers",

"safetensors",

"gguf",

"llama",

"text-generation",

"GGUF",

"llama-3",

"Q4_K_M",

"Q5_K_M",

"meta",

"facebook",

"quantized",

"8b",

"conversational",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2024-04-25T18:10:14+00:00 |

null | null | https://civitai.com/models/150021/aqua-konosuba-lora | {"license": "creativeml-openrail-m"} | LarryAIDraw/aqua-10 | null | [

"license:creativeml-openrail-m",

"region:us"

]

| null | 2024-04-25T18:10:33+00:00 |

null | null | https://civitai.com/models/150383/megumin-konosuba-lora | {"license": "creativeml-openrail-m"} | LarryAIDraw/megumin-10 | null | [

"license:creativeml-openrail-m",

"region:us"

]

| null | 2024-04-25T18:10:53+00:00 |

null | null | https://civitai.com/models/132043/aqua-konosuba-anime-character | {"license": "creativeml-openrail-m"} | LarryAIDraw/Aqua_Konosuba | null | [

"license:creativeml-openrail-m",

"region:us"

]

| null | 2024-04-25T18:11:13+00:00 |

null | peft |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# saiga_task_double_lora350

This model is a fine-tuned version of [TheBloke/Llama-2-7B-fp16](https://huggingface.co/TheBloke/Llama-2-7B-fp16) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 3.6634

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 2

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 10

- total_train_batch_size: 20

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 350
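The relationship between the per-device batch size, gradient accumulation and the reported total batch size can be checked with a few lines of arithmetic. This is a minimal sketch using the values listed above; the single-device setting is an assumption, since the card does not state a device count.

```python
# Values copied from the hyperparameter list above.
train_batch_size = 2
gradient_accumulation_steps = 10
training_steps = 350

# Assumption: one device, because the card lists no distributed settings.
num_devices = 1

# One optimizer step consumes this many training examples.
total_train_batch_size = train_batch_size * gradient_accumulation_steps * num_devices

# Total examples processed over the whole run (with repetition across epochs).
samples_seen = training_steps * total_train_batch_size

print(total_train_batch_size, samples_seen)
```

With these numbers the product reproduces the reported `total_train_batch_size` of 20.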

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 1.5302 | 9.26 | 50 | 2.1777 |

| 1.0061 | 18.52 | 100 | 2.4897 |

| 0.5505 | 27.78 | 150 | 2.8614 |

| 0.2832 | 37.04 | 200 | 3.2451 |

| 0.1525 | 46.3 | 250 | 3.5339 |

| 0.1026 | 55.56 | 300 | 3.6407 |

| 0.0902 | 64.81 | 350 | 3.6634 |
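The table shows training loss falling while validation loss rises at every evaluation, which is the usual signature of overfitting. The sketch below, with illustrative helper names, picks the evaluation with the lowest validation loss and estimates the training-set size from the step-to-epoch ratio. The size estimate assumes the total batch size of 20 listed in the hyperparameters and is only approximate.

```python
# (step, epoch, training_loss, validation_loss) copied from the table above.
RESULTS = [
    (50, 9.26, 1.5302, 2.1777),
    (100, 18.52, 1.0061, 2.4897),
    (150, 27.78, 0.5505, 2.8614),
    (200, 37.04, 0.2832, 3.2451),
    (250, 46.3, 0.1525, 3.5339),
    (300, 55.56, 0.1026, 3.6407),
    (350, 64.81, 0.0902, 3.6634),
]


def best_checkpoint(rows):
    """Return the row with the lowest validation loss."""
    return min(rows, key=lambda r: r[3])


def first_overfit_step(rows):
    """First step where validation loss rises while training loss still falls."""
    for prev, cur in zip(rows, rows[1:]):
        if cur[3] > prev[3] and cur[2] < prev[2]:
            return cur[0]
    return None


def estimated_dataset_size(rows, total_batch_size=20):
    """Rough training-set size: steps per epoch times examples per step."""
    step, epoch, _, _ = rows[-1]
    return round(step / epoch * total_batch_size)


print(best_checkpoint(RESULTS)[0], first_overfit_step(RESULTS), estimated_dataset_size(RESULTS))
```

On these numbers the earliest evaluation (step 50) already has the best validation loss, and the ratio of steps to epochs suggests a training set on the order of a hundred examples.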

### Framework versions

- PEFT 0.10.0

- Transformers 4.36.2

- Pytorch 2.2.2+cu121

- Datasets 2.19.0

- Tokenizers 0.15.2 | {"library_name": "peft", "tags": ["generated_from_trainer"], "base_model": "TheBloke/Llama-2-7B-fp16", "model-index": [{"name": "saiga_task_double_lora350", "results": []}]} | marcus2000/saiga_task_double_lora350 | null | [

"peft",

"safetensors",

"generated_from_trainer",

"base_model:TheBloke/Llama-2-7B-fp16",

"region:us"

]

| null | 2024-04-25T18:12:14+00:00 |

null | null | {} | ee111/anuvjain11 | null | [

"region:us"

]

| null | 2024-04-25T18:12:36+00:00 |

|

text-generation | transformers | {} | laitrongduc/llama-2-7b-miniguanaco | null | [

"transformers",

"pytorch",

"llama",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2024-04-25T18:13:22+00:00 |

|

null | null | {} | ashishp-wiai/Rice_LoRA_80-2024-04-25 | null | [

"safetensors",

"region:us"

]

| null | 2024-04-25T18:13:29+00:00 |

|

text-generation | transformers |

# Uploaded model

- **Developed by:** 1024m

- **License:** apache-2.0

- **Task:** WASSA Shared Task 1A 2024

- **Finetuned from model :** unsloth/mistral-7b-bnb-4bit

This Mistral model was trained 2x faster with [Unsloth](https://github.com/unslothai/unsloth) and Hugging Face's TRL library.

[<img src="https://raw.githubusercontent.com/unslothai/unsloth/main/images/unsloth%20made%20with%20love.png" width="200"/>](https://github.com/unslothai/unsloth)

prompt format :

-"""Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.

-### Instruction:

-{Given the input text , classify it based on what emotion is being exibited among the following : Joy/Neutral/Anger/Love/Sadness/Fear. Respond with only one emotion only among the options given. Respond with ONLY ONE word and nothing else. }

-### Input:

-{} ( add input text here and remove this text )

-### Response:

-{} ( leave blank and remove this text ) """
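The prompt format above can be assembled programmatically. The following is a minimal sketch, not the author's code: it assumes the leading dashes and the braces around the instruction are formatting residue rather than part of the trained prompt, and the exact whitespace is a guess. The instruction text is kept verbatim, including its spelling, because the model was presumably fine-tuned on it. `build_prompt` and `parse_label` are hypothetical helper names.

```python
# Instruction text copied verbatim from the card (spelling left untouched on purpose).
INSTRUCTION = (
    "Given the input text , classify it based on what emotion is being exibited "
    "among the following : Joy/Neutral/Anger/Love/Sadness/Fear. "
    "Respond with only one emotion only among the options given. "
    "Respond with ONLY ONE word and nothing else. "
)

# Alpaca-style template; blank-line placement is an assumption.
TEMPLATE = """Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.

### Instruction:
{instruction}

### Input:
{input_text}

### Response:
{response}"""

LABELS = {"Joy", "Neutral", "Anger", "Love", "Sadness", "Fear"}


def build_prompt(text):
    """Fill the template for inference: the response slot is left empty."""
    return TEMPLATE.format(instruction=INSTRUCTION, input_text=text, response="")


def parse_label(generated):
    """Map raw model output to one of the six labels, or None if it does not match."""
    words = generated.strip().split()
    if not words:
        return None
    word = words[0].strip(".,!?:;\"'").capitalize()
    return word if word in LABELS else None


prompt = build_prompt("I finally got the job!")
```

The resulting string would be passed to the tokenizer and generation call of whichever inference stack is in use; `parse_label` guards against outputs that are not one of the six listed emotions.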

| {"language": ["en"], "license": "apache-2.0", "tags": ["text-generation-inference", "transformers", "unsloth", "mistral", "trl", "sft"], "base_model": "unsloth/mistral-7b-bnb-4bit"} | 1024m/MISTRAL7B-01-EXALT1A-4bit | null | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"text-generation-inference",

"unsloth",

"trl",

"sft",

"en",

"base_model:unsloth/mistral-7b-bnb-4bit",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"4-bit",

"region:us"

]

| null | 2024-04-25T18:14:23+00:00 |

reinforcement-learning | stable-baselines3 |

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

| {"library_name": "stable-baselines3", "tags": ["LunarLander-v2", "deep-reinforcement-learning", "reinforcement-learning", "stable-baselines3"], "model-index": [{"name": "PPO", "results": [{"task": {"type": "reinforcement-learning", "name": "reinforcement-learning"}, "dataset": {"name": "LunarLander-v2", "type": "LunarLander-v2"}, "metrics": [{"type": "mean_reward", "value": "284.80 +/- 18.80", "name": "mean_reward", "verified": false}]}]}]} | Ishan009/LunarLander-v2 | null | [

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

]

| null | 2024-04-25T18:15:38+00:00 |

null | peft |

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

### Framework versions

- PEFT 0.10.0 | {"library_name": "peft", "base_model": "tiiuae/falcon-7b"} | ClaudiaIoana550/nou_try7 | null | [

"peft",

"arxiv:1910.09700",

"base_model:tiiuae/falcon-7b",

"region:us"

]

| null | 2024-04-25T18:15:52+00:00 |

null | fastai |

# Amazing!

🥳 Congratulations on hosting your fastai model on the Hugging Face Hub!

# Some next steps

1. Fill out this model card with more information (see the template below and the [documentation here](https://huggingface.co/docs/hub/model-repos))!

2. Create a demo in Gradio or Streamlit using 🤗 Spaces ([documentation here](https://huggingface.co/docs/hub/spaces)).

3. Join the fastai community on the [Fastai Discord](https://discord.com/invite/YKrxeNn)!

Greetings fellow fastlearner 🤝! Don't forget to delete this content from your model card.

---

# Model card

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

| {"tags": ["fastai"]} | PablitoGil14/ModelCalzados | null | [

"fastai",

"has_space",

"region:us"

]

| null | 2024-04-25T18:16:09+00:00 |

automatic-speech-recognition | transformers | {} | xeon0618/indic_gujarati | null | [

"transformers",

"safetensors",

"wav2vec2",

"automatic-speech-recognition",

"endpoints_compatible",

"region:us"

]

| null | 2024-04-25T18:16:17+00:00 |

|

null | peft |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

[<img src="https://raw.githubusercontent.com/OpenAccess-AI-Collective/axolotl/main/image/axolotl-badge-web.png" alt="Built with Axolotl" width="200" height="32"/>](https://github.com/OpenAccess-AI-Collective/axolotl)

<details><summary>See axolotl config</summary>

axolotl version: `0.4.0`

```yaml

base_model: NousResearch/Meta-Llama-3-70B

model_type: LlamaForCausalLM

tokenizer_type: PreTrainedTokenizerFast

#overrides_of_model_config:

# rope_scaling:

# type: linear

# factor: 4

special_tokens:

  pad_token: "<|end_of_text|>"

gptq: false

gptq_disable_exllama: true

load_in_8bit: false

load_in_4bit: true

strict: false

datasets:

  - path: /workspace/axolotl/output.jsonl

    ds_type: json

    type: completion

    data_files:

      - /workspace/axolotl/output.jsonl

output_dir: ./2-qlora-out-l3-10

adapter: qlora

lora_model_dir:

sequence_len: 2048

sample_packing: true

eval_sample_packing: true

pad_to_sequence_len: true

lora_r: 32

lora_alpha: 90

lora_dropout: 0.10

lora_target_linear: true

lora_target_modules:

- gate_proj

- down_proj

- up_proj

- q_proj

- v_proj

- k_proj

- o_proj

peft_use_dora: true

wandb_project: kalomaze-model

wandb_entity:

wandb_watch:

wandb_name:

wandb_log_model:

gradient_accumulation_steps: 1

micro_batch_size: 2

num_epochs: 4

# optimizer: paged_adamw_8bit

# optimizer: adamw_bnb_8bit

optimizer: adamw_bnb_8bit

lr_scheduler: cosine

learning_rate: 0.000015

cosine_min_lr_ratio: 0.2

max_grad_norm: 1.0

train_on_inputs: true

group_by_length: false

bf16: true

fp16: false

tf32: false

gradient_checkpointing: true

early_stopping_patience:

resume_from_checkpoint:

local_rank:

logging_steps: 1

xformers_attention:

flash_attention: true

warmup_steps: 0

saves_per_epoch: 2

save_total_limit: 7

debug:

weight_decay: 0.0

# fsdp:

# - full_shard

# - auto_wrap

# fsdp_config:

# fsdp_limit_all_gathers: true

# fsdp_sync_module_states: true

# fsdp_offload_params: false

# fsdp_use_orig_params: false

# fsdp_cpu_ram_efficient_loading: false

# fsdp_auto_wrap_policy: TRANSFORMER_BASED_WRAP

# fsdp_transformer_layer_cls_to_wrap: LlamaDecoderLayer

# fsdp_state_dict_type: FULL_STATE_DICT

seed: 246

```

</details><br>

# 2-qlora-out-l3-10

This model is a fine-tuned version of [NousResearch/Meta-Llama-3-70B](https://huggingface.co/NousResearch/Meta-Llama-3-70B) on a local JSONL dataset (`/workspace/axolotl/output.jsonl` in the config above); no public dataset name is recorded.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1.5e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 246

- distributed_type: multi-GPU

- num_devices: 8

- total_train_batch_size: 16

- total_eval_batch_size: 16

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- num_epochs: 4
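Some of the values listed above are derived from the axolotl config rather than set directly. As a rough sanity check, the sketch below recomputes the total batch size from `micro_batch_size`, `gradient_accumulation_steps`, and the device count, the usual LoRA scaling factor `lora_alpha / lora_r`, and the learning-rate floor implied by `cosine_min_lr_ratio`. The schedule function assumes the common cosine-with-floor form and no warmup; the trainer's exact implementation may differ, and the function names are illustrative only.

```python
import math

# Values copied from the config and the hyperparameter list above.
MICRO_BATCH_SIZE = 2
GRADIENT_ACCUMULATION_STEPS = 1
NUM_DEVICES = 8
LORA_R = 32
LORA_ALPHA = 90
LEARNING_RATE = 1.5e-5
COSINE_MIN_LR_RATIO = 0.2


def total_batch_size(micro_batch, grad_accum, devices):
    """Samples consumed per optimizer step across all devices."""
    return micro_batch * grad_accum * devices


def cosine_lr(step, total_steps, peak_lr, min_ratio):
    """Cosine decay from peak_lr down to min_ratio * peak_lr.

    Assumed form (no warmup); not taken from the trainer's source.
    """
    progress = min(step / max(total_steps, 1), 1.0)
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
    return peak_lr * (min_ratio + (1.0 - min_ratio) * cosine)


print(total_batch_size(MICRO_BATCH_SIZE, GRADIENT_ACCUMULATION_STEPS, NUM_DEVICES))  # 16
print(LORA_ALPHA / LORA_R)  # 2.8125
print(cosine_lr(1000, 1000, LEARNING_RATE, COSINE_MIN_LR_RATIO))  # floor: 0.2 * 1.5e-05
```

Under these assumptions the learning rate never drops below one fifth of its peak, so the later epochs still train at a non-trivial rate.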

### Training results

### Framework versions

- PEFT 0.10.0

- Transformers 4.40.0.dev0

- Pytorch 2.2.1

- Datasets 2.15.0

- Tokenizers 0.15.0 | {"license": "other", "library_name": "peft", "tags": ["generated_from_trainer"], "base_model": "NousResearch/Meta-Llama-3-70B", "model-index": [{"name": "2-qlora-out-l3-10", "results": []}]} | wave-on-discord/llama-3-70b-llc-3 | null | [

"peft",

"llama",

"generated_from_trainer",

"base_model:NousResearch/Meta-Llama-3-70B",

"license:other",

"4-bit",

"region:us"

]

| null | 2024-04-25T18:17:39+00:00 |

null | null |

4-bit [OmniQuant](https://arxiv.org/abs/2308.13137) quantized version of [Phi-3-mini-4k-instruct](https://huggingface.co/microsoft/Phi-3-mini-4k-instruct) with an unquantized embedding layer.
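The `w4a16g128asym` suffix in the repository name most likely denotes 4-bit weights, 16-bit activations, a group size of 128, and asymmetric quantization; that reading is an assumption based on common naming, not something stated in this card. The sketch below illustrates plain asymmetric min-max quantization per group, storing a scale and an offset for each group. It is not OmniQuant itself, which learns its clipping and transformation parameters, and all function names are hypothetical.

```python
def quantize_group(weights, bits=4):
    """Asymmetric min-max quantization of one group of weights.

    Returns (codes, scale, offset) with integer codes in [0, 2**bits - 1].
    """
    qmax = (1 << bits) - 1
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / qmax or 1.0  # fall back to 1.0 for a constant group
    codes = [min(max(round((w - lo) / scale), 0), qmax) for w in weights]
    return codes, scale, lo


def dequantize_group(codes, scale, offset):
    """Map integer codes back to approximate float weights."""
    return [offset + c * scale for c in codes]


def quantize_row(row, group_size=128, bits=4):
    """Quantize a row of weights in independent groups of `group_size`."""
    return [quantize_group(row[i:i + group_size], bits)
            for i in range(0, len(row), group_size)]


# Illustrative data only: 256 synthetic weights in [-1, 1].
row = [((i * 37) % 101) / 50.0 - 1.0 for i in range(256)]
groups = quantize_row(row)
print(len(groups))  # 2
```

Smaller groups track the local weight range more closely at the cost of storing more scale and offset pairs.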

| {"license": "mit"} | numen-tech/Phi-3-mini-4k-instruct-w4a16g128asym_1 | null | [

"arxiv:2308.13137",

"license:mit",

"region:us"

]

| null | 2024-04-25T18:20:22+00:00 |

sentence-similarity | sentence-transformers |

# nomic-embed-text-v1.5: Resizable Production Embeddings with Matryoshka Representation Learning

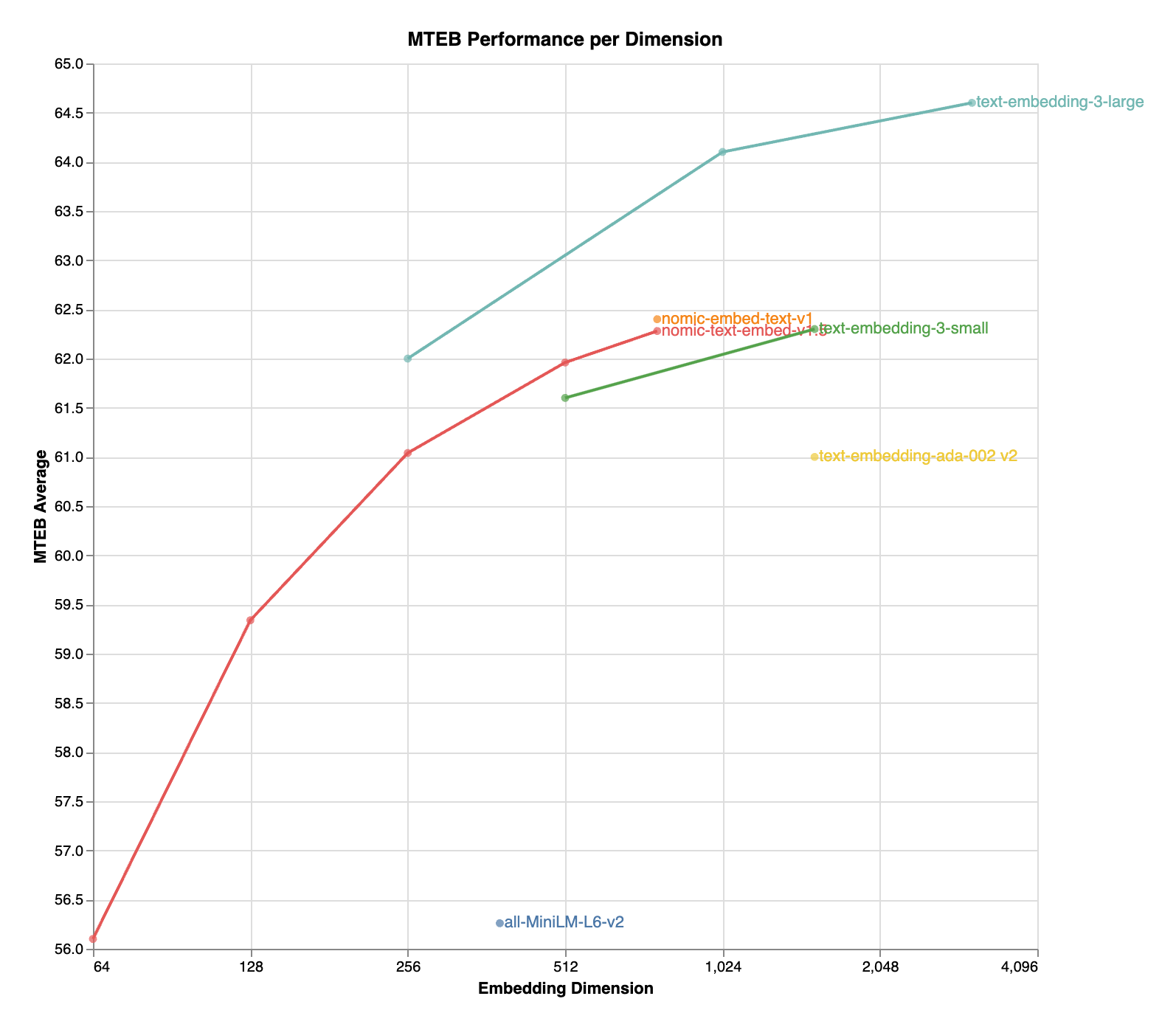

`nomic-embed-text-v1.5` is an improvement upon [Nomic Embed](https://huggingface.co/nomic-ai/nomic-embed-text-v1) that utilizes [Matryoshka Representation Learning](https://arxiv.org/abs/2205.13147) which gives developers the flexibility to trade off the embedding size for a negligible reduction in performance.

| Name | SeqLen | Dimension | MTEB |
| :-------------------------------:| :----- | :-------- | :------: |
| nomic-embed-text-v1 | 8192 | 768 | **62.39** |
| nomic-embed-text-v1.5 | 8192 | 768 | 62.28 |
| nomic-embed-text-v1.5 | 8192 | 512 | 61.96 |
| nomic-embed-text-v1.5 | 8192 | 256 | 61.04 |
| nomic-embed-text-v1.5 | 8192 | 128 | 59.34 |
| nomic-embed-text-v1.5 | 8192 | 64 | 56.10 |
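To put the trade-off in the table into numbers, the following sketch computes the storage per vector and the MTEB points lost at each dimension relative to the full 768-dimensional v1.5 embedding. It assumes embeddings are stored as float32, which the card does not state; the helper name is illustrative.

```python
# (dimension -> MTEB score) for nomic-embed-text-v1.5, copied from the table above.
MTEB_BY_DIM = {768: 62.28, 512: 61.96, 256: 61.04, 128: 59.34, 64: 56.10}

FULL_DIM = 768
BYTES_PER_FLOAT32 = 4  # assumption: embeddings are stored as float32


def tradeoff(dim):
    """Return (bytes per vector, fraction of full size, MTEB points lost)."""
    size = dim * BYTES_PER_FLOAT32
    fraction = dim / FULL_DIM
    drop = round(MTEB_BY_DIM[FULL_DIM] - MTEB_BY_DIM[dim], 2)
    return size, fraction, drop


for dim in sorted(MTEB_BY_DIM, reverse=True):
    size, fraction, drop = tradeoff(dim)
    print(f"{dim:>4} dims: {size:>5} B/vector ({fraction:.0%} of full), {drop:.2f} MTEB points lost")
```

For example, truncating to 256 dimensions stores one third of the data per vector while giving up 1.24 MTEB points.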

## Hosted Inference API

The easiest way to get started with Nomic Embed is through the Nomic Embedding API.

Generating embeddings with the `nomic` Python client takes only a few lines:

```python

from nomic import embed

output = embed.text(
    texts=['Nomic Embedding API', '#keepAIOpen'],
    model='nomic-embed-text-v1.5',
    task_type='search_document',
    dimensionality=256,
)

print(output)

```

For more information, see the [API reference](https://docs.nomic.ai/reference/endpoints/nomic-embed-text).

## Data Visualization

Explore a 5M sample of our contrastive pretraining data in the [Nomic Atlas map](https://atlas.nomic.ai/map/nomic-text-embed-v1-5m-sample).

## Training Details

We train our embedder using a multi-stage training pipeline. Starting from a long-context [BERT model](https://huggingface.co/nomic-ai/nomic-bert-2048), the first unsupervised contrastive stage trains on a dataset generated from weakly related text pairs, such as question-answer pairs from forums like StackExchange and Quora, title-body pairs from Amazon reviews, and summarizations from news articles.

In the second finetuning stage, higher-quality labeled datasets such as search queries and answers from web searches are leveraged. Data curation and hard-example mining are crucial in this stage.

For more details, see the Nomic Embed [Technical Report](https://static.nomic.ai/reports/2024_Nomic_Embed_Text_Technical_Report.pdf) and corresponding [blog post](https://blog.nomic.ai/posts/nomic-embed-matryoshka).

The training data for the models is released in its entirety. For more details, see the `contrastors` [repository](https://github.com/nomic-ai/contrastors).

## Usage

Note that `nomic-embed-text` requires task prefixes! We support the prefixes `[search_query, search_document, classification, clustering]`.

For retrieval applications, prepend `search_document` to all your documents and `search_query` to your queries, each followed by a colon and a space (for example, `search_query: What is TSNE?`).
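A small helper can make the prefixing harder to forget. This is a minimal sketch, assuming the `<prefix>: <text>` format used in the examples in this card; `add_prefix` and `TASK_PREFIXES` are illustrative names, not part of any Nomic library.

```python
# Supported task prefixes, as listed above.
TASK_PREFIXES = ("search_query", "search_document", "classification", "clustering")


def add_prefix(texts, task):
    """Prepend '<task>: ' to each text, rejecting unknown task names."""
    if task not in TASK_PREFIXES:
        raise ValueError(f"unknown task prefix: {task!r}")
    return [f"{task}: {text}" for text in texts]


documents = add_prefix(["TSNE is a dimensionality reduction technique."], "search_document")
queries = add_prefix(["What is TSNE?"], "search_query")
print(queries)  # ['search_query: What is TSNE?']
```

The prefixed strings can then be passed to any of the encoding examples in this section.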

### Sentence Transformers

```python

import torch.nn.functional as F
from sentence_transformers import SentenceTransformer

matryoshka_dim = 512

model = SentenceTransformer("nomic-ai/nomic-embed-text-v1.5", trust_remote_code=True)
sentences = ['search_query: What is TSNE?', 'search_query: Who is Laurens van der Maaten?']
embeddings = model.encode(sentences, convert_to_tensor=True)
embeddings = F.layer_norm(embeddings, normalized_shape=(embeddings.shape[1],))
embeddings = embeddings[:, :matryoshka_dim]
embeddings = F.normalize(embeddings, p=2, dim=1)
print(embeddings)

```
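The three post-processing steps in the example above (layer normalization, truncation to `matryoshka_dim`, L2 normalization) can be spelled out without downloading the model. The following is a dependency-free sketch on a toy vector, not the library's implementation; `resize_embedding` is a hypothetical name, and the `eps` default mirrors the usual layer-norm default as an assumption.

```python
import math


def resize_embedding(vector, dim, eps=1e-5):
    """Layer-normalize, keep the first `dim` values, then L2-normalize."""
    mean = sum(vector) / len(vector)
    # Biased variance over the full vector, as layer normalization uses.
    var = sum((x - mean) ** 2 for x in vector) / len(vector)
    normed = [(x - mean) / math.sqrt(var + eps) for x in vector]
    truncated = normed[:dim]
    norm = math.sqrt(sum(x * x for x in truncated)) or 1.0
    return [x / norm for x in truncated]


def cosine_similarity(a, b):
    """For unit-length vectors the dot product is the cosine similarity."""
    return sum(x * y for x, y in zip(a, b))


toy = [0.1 * i for i in range(8)]  # stand-in for a real 768-dimensional embedding
small = resize_embedding(toy, dim=4)
print(len(small), cosine_similarity(small, small))
```

Because the truncated vectors are renormalized to unit length, similarities computed at any chosen dimension stay on the same scale.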

### Transformers

```diff

import torch
import torch.nn.functional as F
from transformers import AutoTokenizer, AutoModel

def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

sentences = ['search_query: What is TSNE?', 'search_query: Who is Laurens van der Maaten?']

tokenizer = AutoTokenizer.from_pretrained('bert-base-uncased')
model = AutoModel.from_pretrained('nomic-ai/nomic-embed-text-v1.5', trust_remote_code=True, safe_serialization=True)
model.eval()

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

+ matryoshka_dim = 512

with torch.no_grad():
    model_output = model(**encoded_input)

embeddings = mean_pooling(model_output, encoded_input['attention_mask'])
+ embeddings = F.layer_norm(embeddings, normalized_shape=(embeddings.shape[1],))
+ embeddings = embeddings[:, :matryoshka_dim]
embeddings = F.normalize(embeddings, p=2, dim=1)
print(embeddings)

```

The model natively supports scaling of the sequence length past 2048 tokens. To do so, change the tokenizer and model loading as follows:

```diff

- tokenizer = AutoTokenizer.from_pretrained('bert-base-uncased')
+ tokenizer = AutoTokenizer.from_pretrained('bert-base-uncased', model_max_length=8192)

- model = AutoModel.from_pretrained('nomic-ai/nomic-embed-text-v1.5', trust_remote_code=True)
+ model = AutoModel.from_pretrained('nomic-ai/nomic-embed-text-v1.5', trust_remote_code=True, rotary_scaling_factor=2)

```

### Transformers.js

```js

import { pipeline, layer_norm } from '@xenova/transformers';

// Create a feature extraction pipeline
const extractor = await pipeline('feature-extraction', 'nomic-ai/nomic-embed-text-v1.5', {
    quantized: false, // Comment out this line to use the quantized version
});

// Define sentences
const texts = ['search_query: What is TSNE?', 'search_query: Who is Laurens van der Maaten?'];

// Compute sentence embeddings
let embeddings = await extractor(texts, { pooling: 'mean' });
console.log(embeddings); // Tensor of shape [2, 768]

const matryoshka_dim = 512;
embeddings = layer_norm(embeddings, [embeddings.dims[1]])
    .slice(null, [0, matryoshka_dim])
    .normalize(2, -1);
console.log(embeddings.tolist());

```

# Join the Nomic Community

- Nomic: [https://nomic.ai](https://nomic.ai)

- Discord: [https://discord.gg/myY5YDR8z8](https://discord.gg/myY5YDR8z8)

- Twitter: [https://twitter.com/nomic_ai](https://twitter.com/nomic_ai)

# Citation

If you find the model, dataset, or training code useful, please cite our work:

```bibtex

@misc{nussbaum2024nomic,

title={Nomic Embed: Training a Reproducible Long Context Text Embedder},

author={Zach Nussbaum and John X. Morris and Brandon Duderstadt and Andriy Mulyar},

year={2024},

eprint={2402.01613},

archivePrefix={arXiv},

primaryClass={cs.CL}

}