anchor

stringlengths 159

16.8k

| positive

stringlengths 184

16.2k

| negative

stringlengths 167

16.2k

| anchor_status

stringclasses 3

values |

|---|---|---|---|

## TLDR

We are a developer toolkit which provides a decentralized database as a service where users can use our endpoints as well as NoCode tool where users can upload their data in a decentralised manner.

## Inspiration

👋🏻 With the advent of Web3, there are now decentralized **ways to store data that are a hundred times cheaper than Web2 solutions**. Such methods are equally, if not more secure, dependable and scalable.

😩 However, **using them remains vastly more technically challenging** and time consuming than traditional alternatives.

🤓 As Web3 developers, even as we've sought to push the envelope with frontier blockchain technology, we've **run into limitations** with Web2 solutions *time and time again*.

## What it does

🫡 ControlDB’s mission is to **bridge this gap between cost and performance**, allowing developers to use exponentially cheaper IPFS distributed file storage, while **circumventing much of the complexity and limitations** that normally come with it.

🚀 IPFS, the InterPlanetary File System, is a peer-to-peer file sharing network. Files are sharded across multiple nodes. Building on this protocol allows ControlDB to fundamentally **ensure user data remains decentralized, preventing a single source of failure because of IPFS' distributed nature.**

❌ A limitation of IPFS, however, is that shards of files are made public.

✅ To overcome this, ControlDB adds an additional layer of encryption to further increase user privacy. In the event that a user's IPFS hash is intercepted, an attacker will still have to circumvent the additional layer of advanced encryption, keeping users' data safe.

❌ IPFS also has no inherent read or write controls, necessary for handling sensitive data across multiple agents, for example in healthcare.

✅ Here ControlDB introduces a permission layer onto files, allowing admins to designate different levels of file access across multiple stakeholders with varied roles.

❌ IPFS is also accessed through command line interface, making it hard to debug and understand.

✅ In contrast ControlDB adds a user-friendly GUI on top of IPFS’ command line based function, allowing non-technical users to access its features. A simplified API makes it easy and quick for developers to implement ControlDB’s file storage into their programs. Doing so circumvents the need for developers to build a new IPFS pipeline, reducing the barrier to entry for smaller and/or less experienced teams.

✅ Our architecture is designed to be plug-and-play, which means that you can easily switch between different storage endpoints, such as IPFS or Estuary or any other option where you just need to add a configuration file, depending on your needs. This provides you with the flexibility to use the database of your choice, while still taking advantage of our platform's powerful features.

🔥🔥🔥 Altogether ControlDB will **save developers significant amounts of time, money and technical headache,** allowing them to scale usage from simple to complex use cases as their software grows.

😌😌😌 It will also **improve the availability of files, reduce privacy concerns, and grant users increased control over their data.**

## Demo

[](http://www.youtube.com/watch?v=WxG2gmMB57M)

## How we built it

🛠️ ControlDB was built with TypeScript and Golang. Its MVP consists of a **fully functional backend with full encryption, decryption and sharding across multiple nodes implemented, as well as a fully functional frontend with login, file upload and retrieval.** We even built a working user interface.

🤝🏻 Yes, everything is *fully working*. 🫡🫡🫡

### Technical Architecture

**Overall Architecture**

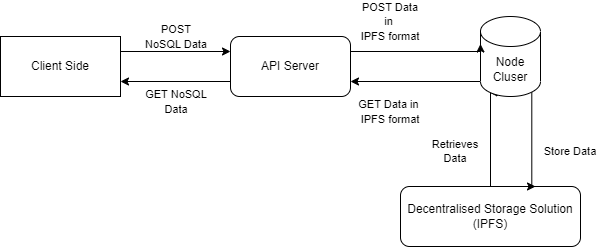

We have an API Server which interacts with the Node Cluster which is a wrapper to any decentralised storage system. We spun up 3 IPFS nodes locally which are used as the decentralised storage engine.

**No code frontend tool**

Our front end allows users to easily upload their data onto decentralised file storage quickly without any hassle or code.

**API Server**

API Server: This layer accepts user requests via our Frontend as well as the HTTP API requests. The permissions are fetched out of payload which user sent and then the main data is used for the further part.

**Middleware Node Cluster**

Middleware Node: A search engine is developed in this layer using B+ Trees for faster retrieval of data and lookup. As the data is received from the user, we encrypt it using AES-256 and generate the encryption key. A unique id is also generated in this node which will be used as a key for insertion in the B+ Tree. The package of permissions, encryption key and IPFS Hash is generated for the insertion in B+ Trees.

**IPFS Nodes**

We have started 3 IPFS nodes which interacts with our middleware node.

Firstly, IPFS is installed on the operating system by following the official IPFS documentation. This step provides the necessary software to create and manage the IPFS cluster.

Next, IPFS is initialized on each node using the ipfs init command, which creates configuration files for ipfs-cluster-ctl to interact with the nodes. This step is essential to ensure that the IPFS nodes are ready to be managed by the cluster.

A configuration file is created using the default docker-compose.yml file, or a custom configuration file can be used to set up the cluster. The configuration file specifies how many IPFS cluster nodes and Kubo nodes are to be created, which determines the cluster's capacity and the method of interaction with the nodes.

The Kubo nodes sit on top of the IPFS cluster nodes and provide a way for users to interact with the cluster via HTTP API or IPFS client. This interaction enables users to manage and control the IPFS cluster by creating endpoints that allow various actions to be performed on the nodes.

Once the cluster is set up, users can create endpoints to perform various actions on the cluster nodes. These endpoints can be migrated as necessary, enabling the cluster to be flexible and adaptable to changing requirements.

## Challenges we ran into

🧐 At first, the team struggled with ideation, and considered a broad spectrum of potential pathways. There was a struggle to find consensus. We also had little to no experience with IPFS, and no idea how to architect or scope it down. This was overcome with much discussion and research.

Challenges we ran into:

1. Developing the ACL and integrating it with the IPFS.

2. Starting the local IPFS architecture.

3. As we were running the local IPFS server, we faced the CORS issue in the IPFS nodes which prevented us to access from different origins.

4. Developing B+ search tree as per our use case of adding multiple values.

5. Integration of different components we built.

6. Integration with Estuary APIs for using them as the storage engine along with IPFS.

## Accomplishments that we're proud of

🙏🏻 We are incredibly proud to achieve a fully functional MVP within 36 hours. Integrating IPFS from zero experience, as well as permission and encryption layers, ensuring everything runs stably, has been a huge accomplishment within the short time we've had.

## What we learned

💯 This was a great deep dive into the world of decentralized data storage, IPFS and building permission layers. We also learnt to build synergy as a team, and how to build a strong team together, leveraging each individual's unique strengths.

## What's next for ControlDB

🥳 We'd like to change encryption keys over time, reducing vulnerabilities to attacks.

|

## Inspiration

In traditional finance, banks often swap cash flows from their assets for a fixed period of time. They do this because they want to hold onto their assets long-term, but believe their counter-party's assets will outperform their own in the short-term. We decided to port this over to DeFi, specifically Uniswap.

## What it does

Our platform allows for the lending and renting of Uniswap v3 liquidity positions. Liquidity providers can lend out their positions for a short amount of time to renters, who are able to collect fees from the position for the duration of the rental. Lenders are able to both hold their positions long term AND receive short term cash flow in the form of a lump sum ETH which is paid upfront by the renter. Our platform handles the listing, selling and transferring of these NFTs, and uses a smart contract to encode the lease agreements.

## How we built it

We used solidity and hardhat to develop and deploy the smart contract to the Rinkeby testnet. The frontend was done using web3.js and Angular.

## Challenges we ran into

It was very difficult to lower our gas fees. We had to condense our smart contract and optimize our backend code for memory efficiency. Debugging was difficult as well, because EVM Error messages are less than clear. In order to test our code, we had to figure out how to deploy our contracts successfully, as well as how to interface with existing contracts on the network. This proved to be very challenging.

## Accomplishments that we're proud of

We are proud that in the end after 16 hours of coding, we created a working application with a functional end-to-end full-stack renting experience. We allow users to connect their MetaMask wallet, list their assets for rent, remove unrented listings, rent assets from others, and collect fees from rented assets. To achieve this, we had to power through many bugs and unclear docs.

## What we learned

We learned that Solidity is very hard. No wonder blockchain developers are in high demand.

## What's next for UniLend

We hope to use funding from the Uniswap grants to accelerate product development and add more features in the future. These features would allow liquidity providers to swap yields from liquidity positions directly in addition to our current model of liquidity for lump-sums of ETH as well as a bidding system where listings can become auctions and lenders rent their liquidity to the highest bidder. We want to add different variable-yield assets to the renting platform. We also want to further optimize our code and increase security so that we can eventually go live on Ethereum Mainnet. We also want to map NFTs to real-world assets and enable the swapping and lending of those assets on our platform.

|

## 🪐 Inspiration 🪐

As four female developers, we wanted to create a hack that could make a noticeable difference in the world and still be enticing enough to use. We all felt drawn to the concept of helping women gain financial independence, so Plan It Girlboss was born.

Specifically, we wanted to target younger women who might be curious about starting their entrepreneurship journey. Throughout the project we’ve used ‘young people’ terms, with our most widely used term being the word ‘Girlboss”. The term ‘Girlboss’ has been an empowering term for female CEOs in the past and has recently become re-popularized with the millennial and gen z generations.

Our name, “Plan It Girlboss”, is a play on the words ‘planet’ and ‘plan it’; with planet/space being our theme and ‘plan it’ referencing planning your future business or financial plans.

We were heavily inspired by the following statistics:

* [Women live paycheck to paycheck five times more often than men](https://www.cnbc.com/2019/10/14/women-live-paycheck-to-paycheck-roughly-5-times-as-often-as-men.html). We want to give women the opportunity to learn how to make money for themselves and create a solid budget to help reduce this statistic.

* [In 2020, women made 80.4% of what men earned, and have NEVER earned more than 83%](https://www.fool.com/the-ascent/research/gender-pay-gap-statistics/). It's time for women to overcome the very real wage gap. We want resources to be available to women who are ready to take the step towards profitable entrepreneurship in their financial journey.

* [Companies that have women in executive leadership roles are statistically more profitable](https://hbr.org/2016/02/study-firms-with-more-women-in-the-c-suite-are-more-profitable). Women **can be** and **should be** leaders. We want to give women the opportunity to put themselves in these positions by starting their own companies.

## 🌟 What it does 🌟

Plan It Girlboss is a women-targeted website that has 3 main focuses: budgeting, connecting, and learning, specifically for young entrepreneurs. Additionally, enjoy a randomly generated quote or question on the home page to inspire you to keep progressing towards your goals.

###### **BUDGETING BABE**

With the budgeting portion of the website, you currently can select one of 3 years (2021, 2020, and 2019). Once selected, you’ll be taken to the physical budget where you can input your budget for the year and your expenses and can also remove previous expenses if needed. It also clearly demonstrates what you’ve spent out of the budget you’ve set and how much money you have remaining to spend.

###### **CONNECTION QUEEN**

With the connection portion of the website, you currently can input a specific city/location/business into the search bar and have the map instantly take you there. This is to help you seamlessly connect with fellow Girlbosses in your area.

###### **LEARNING LADY**

With the learning portion of the website, you currently can select one of four articles written by our development team to read to inform yourself about relevant topics that could impact your business, your day to day life, or are socially relevant. The articles are all tagged to make it easy to visually filter through what you’d like to read today so that you’re making the most out of your day and your time.

## 🌎 How we built it 🌎

This application was built with React, HTML, CSS, JavaScript using Glitch. All code obtained from public open-source materials has been cited within the project's README.

All of the art assets were created using Canva.

## 💫 Challenges we ran into 💫

* It was difficult for us to use React at first as it is a relatively new framework for all of us.

* A lot of time was spent on persisting the data while navigating between different routes.

* Because of the 36 hours time limit, we were unable to implement more complex features such as allowing users to log in and save their budgeting summaries and allowing users to connect with actual mentors.

* Styling elements proved to be a challenge as most members are unfamiliar with CSS.

## 🌗 Accomplishments that we're proud of 🌗

* This was most of our developer’s first hackathon! We’re happy we accomplished it within the timeframe and submitted something we were proud of.

* We got the budget page to work with different years!

* We had fun and got to learn more about each other, as well as have many laughs along the way.

## 🌠 What we learned 🌠

* How to use the State Hook to change the state of components.

* How to use props to allow data persistence between different routes.

* How to install and import npm packages that allow us to program with more ease.

* How to fetch data from an API.

* How to work together in a virtual hackathon.

## 🌌 What's next for Plan It Girlboss 🌌

We are excited for the future! There are quite a few features that could be added, but the main ones would be:

* A working login page

* A month-to-month budget system

* A chatting system to chat with fellow Girlbosses

* More articles in the ‘Learn’ section

If any investors are interested in this project, there are quite a few features that could be implemented so that you can make money:

* Top-quality, paid lessons for the learn page

* Paid mentors Girlbosses can connect with

* A monthly or annually billed subscription service to access all paid features at once

Thank you for reviewing our hack. We hope you have a great rest of your day!

|

winning

|

## 💡 Inspiration 💡

So many people around the world are fatally injured and require admission to multiple hospitals in order to receive life-changing surgery/procedures. When patients are transferred from one hospital to another, it is crucial for their medical information to be safely transferred as well.

## ❓ What it does ❓

Hermes is a secure, HIPAA-compliant app that allows hospital admin to transfer vital patient data to other domestic and/or international hospitals. The user inputs patient data, uploads patient files, and sending them securely to a hospital.

## ⚙️ How we built it ⚙️

We used the React.JS framework to build the web application. We used Javascript for the backend and HTML/CSS/JS for the frontend. We called the Auth0 API for authentication and Botdoc API for encrypted file sending.

## 🚧 Challenges we ran into 🚧

Figuring out how to send encrypted files through Botdoc was challenging but also critical to our project.

## ✨Accomplishments that we're proud of ✨

We’re proud to have built a dashboard-like functionality within 24 hours.

## 👩🏻💻 What we learned 👩🏻💻

We learned that authentication on every page is critical for an app like this that would require uploaded patient information from hospital admins. Learning to use Botdoc was also fruitful when it comes to sending encrypted messages/files.

|

## Inspiration

So, like every Hackathon we’ve done in the past, we wanted to build a solution based on the pain points of actual, everyday people. So when we decided to pursue the Healthtech track, we called the nurses and healthcare professionals in our lives. To our surprise, they all seemed to have the same gripe – that there was no centralized system for overviewing the procedures, files, and information about specific patients in a hospital or medical practice setting. Even a quick look through google showed that there wasn’t any new technology that was really addressing this particular issue. So, we created UniMed - united medical - to offer an innovate alternative to the outdated software that exists – or for some practices, pen and paper.

While this isn’t necessarily the sexiest idea, it’s probably one of the most important issues to address for healthcare professionals. Looking over the challenge criteria, we couldn’t come up with a more fitting solution – what comes to mind immediately is the criterion about increasing practitioner efficiency. The ability to have a true CMS – not client management software, but CARE management software – eliminates any need for annoying patients with a barrage of questions they’ve answered a hundred times, and allows nurses and doctors to leave observations and notes in a system where they can be viewed from other care workers going forward.

## What it does

From a technical, data-flow perspective, this is the gist of how UniMed works: Solace connects our React-based front end to our database. While we normally would have a built a SQL database or perhaps gone the noSQL route and leveraged mongoDB, due to time constraints we’re using JSON for simplicities sake. So while JSON is acting, typically, like a REST API, we’re pulling real-time data with Solace’s functionality. Any time an event-based subscription is called – for example, a nurse updates a patient’s records reporting that their post-op check-up went well and they should continue on their current dosage of medication – that value, in this case a comment value, is passed to that event (updating our React app by populating the comments section of a patient’s record with a new comment).

## How we built it

We all learned a lot at this hackathon – Jackson had some Python experience but learned some HTML5 to design the basic template of our log-in page. I had never used React before, but spent several hours watching youtube videos (the React workshop was also very helpful!) and Manny mentored me through some of the React app creation. Augustine is a marketing student but it turns out he has a really good eye for design, and he was super helpful in mockups and wireframes!

## What's next for UniMed

There are plenty of cool ideas we have for integrating new features - the ability to give patients a smartwatch that monitors their vital signs and pushes that bio-information to their patient "card" in real time would be super cool. It would be great to also integrate scheduling functionality so that practitioners can use our program as the ONLY program they need while they're at work - a complete hub for all of their information and duties!

|

## Inspiration 💡

**HERMES** was inspired by the urgent need to address multiple critical issues plaguing the healthcare industry, with a primary focus on improving the lives of individuals and communities. The following key factors served as driving forces:

1. **Inequity in Healthcare Access:** Across the world, disparities in healthcare access persist, with marginalized communities and underserved populations facing significant hurdles in receiving quality care. *HERMES* aims to bridge this gap by providing a universally accessible and interconnected healthcare system. By leveraging technology and digital records, it ensures that every individual, regardless of their socioeconomic background or geographic location, has access to essential health services.

2. **Fragmented Health Records:** Traditional health records are often fragmented and scattered across different healthcare providers, making it challenging for patients and doctors to access a comprehensive view of a patient's medical history. *HERMES* offers a unified platform where all medical information is consolidated as NFTs, ensuring that health records are complete, up-to-date, and easily accessible to authorized parties. This eliminates the inefficiencies and potential risks associated with incomplete records.

3. **Lack of Doctor-Patient Communication:** Effective communication between patients and healthcare providers is essential for improving health outcomes. The absence of convenient and real-time communication can lead to miscommunication, delayed diagnoses, and suboptimal care. *HERMES* provides a secure channel for seamless communication, enabling patients and doctors to connect, share information, and make informed decisions collaboratively.

4. **Data Security:** Data security and privacy in healthcare are paramount. Breaches in health data can have severe consequences for individuals, leading to identity theft and other potential risks. *HERMES* incorporates blockchain technology to establish a robust security framework, ensuring that personal health data remains confidential and immutable. This inspires trust in the system, making patients more comfortable sharing their information.

5. **Economic Barrier/Divide:** The cost of quality healthcare and medication is a significant economic barrier for many individuals. *HERMES* tackles this issue by fostering connections with pharmacies and specialists, facilitating online consultations, and enabling efficient management of health records. By lowering barriers to access and providing more cost-effective healthcare solutions, *HERMES* contributes to reducing economic disparities in healthcare.

## What it does 🏥

**HERMES** is an innovative digital healthcare platform designed to provide a wide range of services and address the complex issues in healthcare. It does the following:

* **Data Storage:** It stores various medical data types, including eye tests, X-rays, vaccines, blood tests, doctor's office notes, wellness checkup records, past appointments, prescription history, and health digital credentials as non-fungible tokens (NFTs) on the XRP Ledger. An off-chain Cockroach DB is used to efficiently manage and retrieve this data.

* **Data Security:** Data is secured on the blockchain with hash links to the Cockroach DB, ensuring fast access while prioritizing data safety.

* **General Medical Advice and Queries:** Patients can seek general medical advice and ask health-related questions. An AI system powered by OpenAI, with integration through Minds DB, assists in providing accurate and personalized responses.

* **AI Report Summarization and Recommendation:** The platform uses Vectara AI for summarizing medical reports and offering recommendations.

* **Image Disease Diagnosis:** It provides the capability to diagnose diseases from X-rays, scans, and other medical images, along with treatment recommendations.

* **Doctor-Patient Communication:** A video chat system and text communication are integrated, allowing for real-time interaction between doctors and patients. Hume AI is used for analyzing sentiment and tone in the communication.

* **Patient Services Recommendation:** The platform offers recommendations for hospitals, pharmacies, and treatment centers based on the patient's condition and needs.

## Tech: 🛠️

**HERMES** is built by combining several key technologies:

* XRP Ledger for NFT storage.

* Cockroach DB for off-chain database management.

* OpenAI and Minds DB for AI-powered medical advice and responses.

* Vectara AI for medical report summarization and recommendations.

* Integration of video chat and text communication systems for doctor-patient interaction.

## Challenges: 🌍

Building *HERMES* presented several challenges, including:

* Ensuring the security of medical data on the blockchain while maintaining fast accessibility.

* Developing and training AI models for accurate medical advice and diagnosis.

* Integrating various AI models and databases to work seamlessly together.

* Ensuring compliance with healthcare regulations and data privacy standards.

## Accomplishments: 🏥

We're proud of the following accomplishments:

* Successful integration of a wide range of cutting-edge technologies to create a comprehensive healthcare platform.

* Building a secure, efficient, and accessible system for storing and managing medical data.

* Developing AI systems for medical advice, report summarization, and sentiment analysis.

* Facilitating doctor-patient communication through video chat and text communication.

## Learnings: 📚

During the development of *HERMES*, we learned the following:

* The critical importance of data security in healthcare and how blockchain technology can enhance it.

* The potential of AI in transforming healthcare services and improving patient-doctor interactions.

* The challenges and complexities of integrating multiple technologies to create a unified healthcare platform.

## Next Steps: 🚀

The future of *HERMES* involves:

* Making the application integrated and connected with different features and services.

* Deployment of a private blockchain built on zero-knowledge proofs.

* Expanding the user base to include more patients, healthcare providers, and medical facilities.

* Continuous improvement of AI models to enhance accuracy and expand capabilities.

* Adhering to evolving healthcare regulations and data privacy standards.

* Enhancing the user experience with additional features and functionalities.

## Notes: 📝

* PowerPoint: <https://1drv.ms/p/s!ApHB9V1j-TOThIEu3RTnZ6RjM4BPQg?e=UMGVQQ>

* Demo(No Audio): <https://youtu.be/8BKdTKyvJEA>

* Pitch Video:<https://youtu.be/lZiH-TwsqcU>

* NFT-XRP is code to create NFTs using XRP Ledgers. Click Get Standby Account, wait until Seed, Account and Amount are filled. Then input link for medical data storage as NFT URI. Click MInt NFT, Get NFT.

* Cockroach, MindsDB, and Vectara Connection:

|

partial

|

## Inspiration

At many public places, recycling is rarely a priority. Recyclables are disposed of incorrectly and thrown out like garbage. Even here at QHacks2017, we found lots of paper and cans in the [garbage](http://i.imgur.com/0CpEUtd.jpg).

## What it does

The Green Waste Bin is a waste bin that can sort the items that it is given. The current of the version of the bin can categorize the waste as garbage, plastics, or paper.

## How we built it

The physical parts of the waste bin are the Lego, 2 stepper motors, a raspberry pi, and a webcam. The software of the Green Waste Bin was entirely python. The web app was done in html and javascript.

## How it works

When garbage is placed on the bin, a picture of it is taken by the web cam. The picture is then sent to Indico and labeled based on a collection that we trained. The raspberry pi then controls the stepper motors to drop the garbage in the right spot. All of the images that were taken are stored in AWS buckets and displayed on a web app. On the web app, images can be relabeled and the Indico collection is retrained.

## Challenges we ran into

AWS was a new experience and any mistakes were made. There were some challenges with adjusting hardware to the optimal positions.

## Accomplishments that we're proud of

Able to implement machine learning and using the Indico api

Able to implement AWS

## What we learned

Indico - never done machine learning before

AWS

## What's next for Green Waste Bin

Bringing the project to a larger scale and handling more garbage at a time.

|

## Inspiration

Every year roughly 25% of recyclable material is not able to be recycled due to contamination. We set out to reduce the amount of things that are needlessly sent to the landfill by reducing how much people put the wrong things into recycling bins (i.e. no coffee cups).

## What it does

This project is a lid for a recycling bin that uses sensors, microcontrollers, servos, and ML/AI to determine if something should be recycled or not and physically does it.

To do this it follows the following process:

1. Waits for object to be placed on lid

2. Take picture of object using webcam

3. Does image processing to normalize image

4. Sends image to Tensorflow model

5. Model predicts material type and confidence ratings

6. If material isn't recyclable, it sends a *YEET* signal and if it is it sends a *drop* signal to the Arduino

7. Arduino performs the motion sent to it it (aka. slaps it *Happy Gilmore* style or drops it)

8. System resets and waits to run again

## How we built it

We used an Arduino Uno with an Ultrasonic sensor to detect the proximity of an object, and once it meets the threshold, the Arduino sends information to the pre-trained TensorFlow ML Model to detect whether the object is recyclable or not. Once the processing is complete, information is sent from the Python script to the Arduino to determine whether to yeet or drop the object in the recycling bin.

## Challenges we ran into

A main challenge we ran into was integrating both the individual hardware and software components together, as it was difficult to send information from the Arduino to the Python scripts we wanted to run. Additionally, we debugged a lot in terms of the servo not working and many issues when working with the ML model.

## Accomplishments that we're proud of

We are proud of successfully integrating both software and hardware components together to create a whole project. Additionally, it was all of our first times experimenting with new technology such as TensorFlow/Machine Learning, and working with an Arduino.

## What we learned

* TensorFlow

* Arduino Development

* Jupyter

* Debugging

## What's next for Happy RecycleMore

Currently the model tries to predict everything in the picture which leads to inaccuracies since it detects things in the backgrounds like people's clothes which aren't recyclable causing it to yeet the object when it should drop it. To fix this we'd like to only use the object in the centre of the image in the prediction model or reorient the camera to not be able to see anything else.

|

## Inspiration

* Manual sorting of garbage is a difficult and expensive process and the global waste for recyclable materials has significantly increased over the years.

* It is projected by the World Bank that in 2050, the world will generate 3.4 billion tons of waste per year which is a 70% increase compared to 2023.

* Our strong desire to reduce the negative impact of waste and maintain a sustainable environment in the next 100 years brought us together, brainstorming methods for automated garbage sorting to improve the recycling process.

* We shared knowledge about machine learning, deep learning, Google Cloud, and full-stack development skills and we decided to design a mobile app that can automatically quick-sort the garbage with the support of machine learning models and lead the user to the nearest recycling places on Google Maps.

## What it does

* Users can scan everyday objects with the scanner in QuickSort and will immediately sort things into various categories with the support of the Machine Learning Model.

* The Maps feature will lead the users to the nearest garbage recycling places on Google Maps to improve the recycling process.

* With the support of Google Cloud, QuickSort will record the total amount of garbage recycled by the users in the past year/month/week and display the data on line charts and pie charts.

## How we built it:

* Machine Learning: PyTorch, Deep Learning

* Hosting: Google Cloud, CockroachDB

* Frontend: React Native

* Backend: Node.js, Express.js, TypeScript, PostgreSQL

* UI Design: Figma

## Challenges we ran into

* Flask server setup to connect with MongoDB and Google Cloud SQL.

* Implementation of authentication with Google.

* Speed of classification using our models.

## Accomplishments that we're proud of

* Trained numerous models using PyTorch with over 20k+ images.

* Set up the Node.js server to connect with CockroachDB hosted on Google Cloud.

## What we learned

* Mobile development using React Native for device cross-compatibility.

* Learned the process of connecting to various database services (Google Cloud SQL, MongoDB, CockroachDB)

* The process of setting up a server in Flask and Express.js.

## What's next for QuickSort

* Live-feed detection for rapid detection speed to improve user experience.

* Global recycling management system to track total garbage recycled on a global scale for further research.

* Implementation of a bonus system based on the amount of garbage a user recycles to encourage them to reduce waste production everyday.

|

winning

|

## Off The Grid

Super awesome offline, peer-to-peer, real-time canvas collaboration iOS app

# Inspiration

Most people around the world will experience limited or no Internet access at times during their daily lives. We could be underground (on the subway), flying on an airplane, or simply be living in areas where Internet access is scarce and expensive. However, so much of our work and regular lives depend on being connected to the Internet. I believe that working with others should not be affected by Internet access, especially knowing that most of our smart devices are peer-to-peer Wifi and Bluetooth capable. This inspired me to come up with Off The Grid, which allows up to 7 people to collaborate on a single canvas in real-time to share work and discuss ideas without needing to connect to Internet. I believe that it would inspire more future innovations to help the vast offline population and make their lives better.

# Technology Used

Off The Grid is a Swift-based iOS application that uses Apple's Multi-Peer Connectivity Framework to allow nearby iOS devices to communicate securely with each other without requiring Internet access.

# Challenges

Integrating the Multi-Peer Connectivity Framework into our application was definitely challenging, along with managing memory of the bit-maps and designing an easy-to-use and beautiful canvas

# Team Members

Thanks to Sharon Lee, ShuangShuang Zhao and David Rusu for helping out with the project!

|

## Inspiration

We are a team of goofy engineers and we love making people laugh. As Western students (and a stray Waterloo engineer), we believe it's important to have a good time. We wanted to make this game to give people a reason to make funny faces more often.

## What it does

We use OpenCV to analyze webcam input and initiate signals using winks and blinks. These signals control a game that we coded using PyGame.

See it in action here: <https://youtu.be/3ye2gEP1TIc>

## How to get set up

##### Prerequisites

* Python 2.7

* A webcam

* OpenCV

1. [Clone this repository on Github](https://github.com/sarwhelan/hack-the-6ix)

2. Open command line

3. Navigate to working directory

4. Run `python maybe-a-game.py`

## How to play

**SHOW ME WHAT YOU GOT**

You are playing as Mr. Poopybutthole who is trying to tame some wild GMO pineapples. Dodge the island fruit and get the heck out of there!

##### Controls

* Wink left to move left

* Wink right to move right

* Blink to jump

**It's time to get SssSSsssSSSssshwinky!!!**

## How we built it

Used haar cascades to detect faces and eyes. When users' eyes disappear, we can detect a wink or blink and use this to control Mr. Poopybutthole movements.

## Challenges we ran into

* This was the first game any of us have ever built, and it was our first time using Pygame! Inveitably, we ran into some pretty hilarious mistakes which you can see in the gallery.

* Merging the different pieces of code was by-far the biggest challenge. Perhaps merging shorter segments more frequently could have alleviated this.

## Accomplishments that we're proud of

* We had a "pineapple breakthrough" where we realized how much more fun we could make our game by including this fun fruit.

## What we learned

* It takes a lot of thought, time and patience to make a game look half decent. We have a lot more respect for game developers now.

## What's next for ShwinkySwhink

We want to get better at recognizing movements. It would be cool to expand our game to be a stand-up dance game! We are also looking forward to making more hacky hackeronis to hack some smiles in the future.

|

## Background

Collaboration is the heart of humanity. From contributing to the rises and falls of great civilizations to helping five sleep deprived hackers communicate over 36 hours, it has become a required dependency in the [[email protected]](mailto:[email protected]):**hacker/life**.

Plowing through the weekend, we found ourselves shortchanged by the current available tools for collaborating within a cluster of devices. Every service requires:

1. *Authentication* Users must sign up and register to use a service.

2. *Contact* People trying to share information must establish a prior point of contact(share e-mails, phone numbers, etc).

3. *Unlinked Output* Shared content is not deep-linked with mobile or web applications.

This is where Grasshopper jumps in. Built as a **streamlined cross-platform contextual collaboration service**, it uses our on-prem installation of Magnet on Microsoft Azure and Google Cloud Messaging to integrate deep-linked commands and execute real-time applications between all mobile and web platforms located in a cluster near each other. It completely gets rid of the overhead of authenticating users and sharing contacts - all sharing is done locally through gps-enabled devices.

## Use Cases

Grasshopper lets you collaborate locally between friends/colleagues. We account for sharing application information at the deepest contextual level, launching instances with accurately prepopulated information where necessary. As this data is compatible with all third-party applications, the use cases can shoot through the sky. Here are some applications that we accounted for to demonstrate the power of our platform:

1. Share a video within a team through their mobile's native YouTube app **in seconds**.

2. Instantly play said video on a bigger screen by hopping the video over to Chrome on your computer.

3. Share locations on Google maps between nearby mobile devices and computers with a single swipe.

4. Remotely hop important links while surfing your smartphone over to your computer's web browser.

5. Rick Roll your team.

## What's Next?

Sleep.

|

winning

|

## The Problem:

Inspired by our teammates' lack of knowledge in first aid administration, we address a gap in emergency medical response time for remote and underserviced locations. Currently, it takes 7 minutes for emergency medical services (EMS) to reach the scene, with the time in rural areas doubling to 14 minutes. For critical medical emergencies, such as cardiac arrest, every minute the **chances of survival decrease by 10%** making immediate intervention necessary. This is where the most crucial element to save lives comes in: the bystander. In fact, the intervention of bystanders can triple the chances of survival in instances of cardiac arrest.

Here is where another issue arises. Only 6 out of 10 people feel comfortable even attempting to perform CPR for someone in cardiac arrest. This stems from **only 3.5%** of people in the United States being trained in first-aid procedures such as cardiac arrest, and an irrational fear the bystander will inflict further damage to the victim.

## The Solution:

***The report below follows the assumption that in the next 5-10 years VR technology will be readily adopted into everyday life, by everyone. Strides in hardware will make the technology as sleek as a pair of glasses, and as common as a smartphone.***

Our novel VR and AI-based pipeline equips bystanders with the knowledge and visual guidance to perform life-saving procedures with confidence and precision. At Treehacks, we focused on creating guidance for a situation where a bystander witnesses someone seizing, which eventually escalates into cardiac arrest. Using Good Samaritan, the device passively detects a medical emergency and guides the bystander on how to care for the victim until emergency medical services arrive on the scene in order to give the victim the best chances of survival.

We provide in-depth, real-time visuals that detail:

* Real-time data streamed from a Fitbit of the victim to monitor vitals (TerraAPI)

* Facilitate proper “log-roll technique” to move an injured person

* The correct safe orientation for someone having a seizure so they don’t choke on their saliva

* The proper supporting of the head and neck to prevent paralysis

* Instruction for proper CPR with visuals on the victim’s body for where to place hands, what pace to perform compressions at, and when to give mouth-to-mouth breaths

* A live progress bar displaying the time until emergency medical services arrive on the scene

This immersive experience ensures that, without immediate professional help, the affected individuals receive the best possible care, increasing their chances of survival and recovery.

## What we are proud of, and how we built it:

Wow, we created a novel dynamic application that has the potential to save millions of lives in the future.

We programmed computer vision-based models using MediaPipe and OpenCV to perform pose detection and joint detection. We then performed linear transformations in a 3D vector space to identify and anchor the points in the Apple Vision Pro’s virtual space using real-time video from our computer vision script built onto VisionOS.

## Business Model:

$14.6 Billion Market Cap

-Work with public health departments to include the app in rural or underserved areas. The government could fund the deployment of the app as part of their mandate to improve public health infrastructure.

-Work with emergency services to integrate the app into their response protocols, providing first responders with additional information or assisting in situations where they can't reach the scene immediately.

-Apply for government grants aimed at technological innovations that improve public safety and health.

-Educational Programs: Integrate the app into educational programs, such as school safety initiatives or community health workshops, funded or supported by local or national government agencies.

## The team (4 people, 4 schools represented):

Ray- Stanford, specializes in AI and product structure

Shutaro- Columbia, specializes in immersive technologies

Shloak- UCLA, specializes in vision

Yash- Georgia Tech, specializes in medicine

## Next Steps:

This is one tangible application for Good Samaritan, though in the future we plan to have similar guiding procedures for:

-Anaphylactic shock

-Lacerations where bleeding must be managed

-Stroke

-AED’s

-Other emergency medical complications that benefit from the interference of a bystander

## Challenges we ran into:

Our expertise lay in Unity; however, Apple Vision Pro was only accessible with Unity Pro ($2,000), so we pivoted to and learned Swift. We ran into errors while translating CV’s 2D data into a 3D environment; we made use of anchoring techniques to pin the z-dimension while using the xy-dimension from CV.

## What we learned:

A ton! Applying CV’s 2D data into a 3D space, programming VR and AR on the Apple Vision Pro, and using Swift UI to develop VisionOS applications! Our pipeline is built ground up and novel—we figured it out along the way with little documentation to lean on!

|

# **MedKnight**

#### Professional medical care in seconds, when the seconds matter

## Inspiration

Natural disasters often put emergency medical responders (EMTs, paramedics, combat medics, etc.) in positions where they must assume responsibilities beyond the scope of their day-to-day job. Inspired by this reality, we created MedKnight, an AR solution designed to empower first responders. By leveraging cutting-edge computer vision and AR technology, MedKnight bridges the gap in medical expertise, providing first responders with life-saving guidance when every second counts.

## What it does

MedKnight helps first responders perform critical, time-sensitive medical procedures on the scene by offering personalized, step-by-step assistance. The system ensures that even "out-of-scope" operations can be executed with greater confidence. MedKnight also integrates safety protocols to warn users if they deviate from the correct procedure and includes a streamlined dashboard that streams the responder’s field of view (FOV) to offsite medical professionals for additional support and oversight.

## How we built it

We built MedKnight using a combination of AR and AI technologies to create a seamless, real-time assistant:

* **Meta Quest 3**: Provides live video feed from the first responder’s FOV using a Meta SDK within Unity for an integrated environment.

* **OpenAI (GPT models)**: Handles real-time response generation, offering dynamic, contextual assistance throughout procedures.

* **Dall-E**: Generates visual references and instructions to guide first responders through complex tasks.

* **Deepgram**: Enables speech-to-text and text-to-speech conversion, creating an emotional and human-like interaction with the user during critical moments.

* **Fetch.ai**: Manages our system with LLM-based agents, facilitating task automation and improving system performance through iterative feedback.

* **Flask (Python)**: Manages the backend, connecting all systems with a custom-built API.

* **SingleStore**: Powers our database for efficient and scalable data storage.

## SingleStore

We used SingleStore as our database solution for efficient storage and retrieval of critical information. It allowed us to store chat logs between the user and the assistant, as well as performance logs that analyzed the user’s actions and determined whether they were about to deviate from the medical procedure. This data was then used to render the medical dashboard, providing real-time insights, and for internal API logic to ensure smooth interactions within our system.

## Fetch.ai

Fetch.ai provided the framework that powered the agents driving our entire system design. With Fetch.ai, we developed an agent capable of dynamically responding to any situation the user presented. Their technology allowed us to easily integrate robust endpoints and REST APIs for seamless server interaction. One of the most valuable aspects of Fetch.ai was its ability to let us create and test performance-driven agents. We built two types of agents: one that automatically followed the entire procedure and another that responded based on manual input from the user. The flexibility of Fetch.ai’s framework enabled us to continuously refine and improve our agents with ease.

## Deepgram

Deepgram gave us powerful, easy-to-use functionality for both text-to-speech and speech-to-text conversion. Their API was extremely user-friendly, and we were even able to integrate the speech-to-text feature directly into our Unity application. It was a smooth and efficient experience, allowing us to incorporate new, cutting-edge speech technologies that enhanced user interaction and made the process more intuitive.

## Challenges we ran into

One major challenge was the limitation on accessing AR video streams from Meta devices due to privacy restrictions. To work around this, we used an external phone camera attached to the headset to capture the field of view. We also encountered microphone rendering issues, where data could be picked up in sandbox modes but not in the actual Virtual Development Environment, leading us to scale back our Meta integration. Additionally, managing REST API endpoints within Fetch.ai posed difficulties that we overcame through testing, and configuring SingleStore's firewall settings was tricky but eventually resolved. Despite these obstacles, we showcased our solutions as proof of concept.

## Accomplishments that we're proud of

We’re proud of integrating multiple technologies into a cohesive solution that can genuinely assist first responders in life-or-death situations. Our use of cutting-edge AR, AI, and speech technologies allows MedKnight to provide real-time support while maintaining accuracy and safety. Successfully creating a prototype despite the hardware and API challenges was a significant achievement for the team, and was a grind till the last minute. We are also proud of developing an AR product as our team has never worked with AR/VR.

## What we learned

Throughout this project, we learned how to efficiently combine multiple AI and AR technologies into a single, scalable solution. We also gained valuable insights into handling privacy restrictions and hardware limitations. Additionally, we learned about the importance of testing and refining agent-based systems using Fetch.ai to create robust and responsive automation. Our greatest learning take away however was how to manage such a robust backend with a lot of internal API calls.

## What's next for MedKnight

Our next step is to expand MedKnight’s VR environment to include detailed 3D renderings of procedures, allowing users to actively visualize each step. We also plan to extend MedKnight’s capabilities to cover more medical applications and eventually explore other domains, such as cooking or automotive repair, where real-time procedural guidance can be similarly impactful.

|

## Inspiration

Last year we did a project with our university looking to optimize the implementation of renewable energy sources for residential homes. Specifically, we determined the best designs for home turbines given different environments. In this project, we decided to take this idea of optimizing the implementation of home power further.

## What it does

A web application allows users to enter an address and determine if installing a backyard wind turbine or solar panel is more profitable/productive for their location.

## How we built it

Using an HTML front-end we send the user's address to a python flask back end where we use a combination of external APIs, web scraping, researched equations, and our own logic and math to predict how the selected piece of technology will perform.

## Challenges we ran into

We were hoping to use Google's Earth Engine to gather climate data, but were never approved fro the $25 credit so we had to find alternatives. There aren't alot of good options to gather the nessesary solar and wind data, so we had to use a combination of API's and web scraping to gather the required data which ended up being a bit more convulted than we hoped. Also integrating the back-end with the front-end was very difficult because we don't have much experience with full-stack development working end to end.

## Accomplishments that we're proud of

We spent a lot of time coming up with idea for EcoEnergy and we really think it has potential. Home renewable energy sources are quite an investment, so having a tool like this really highlights the benefits and should incentivize people to buy them. We also think it's a great way to try to popularize at-home wind turbine systems by directly comparing them to the output of a solar panel because depending on the location it can be a better investment.

## What we learned

During this project we learned how to predict the power output of solar panels and wind turbines based on windspeed and sunlight duration. We learned how to combine a back-end built in python to a front-end built in HTML using flask. We learned even more random stuff about optimizing wind turbine placement so we could recommend different turbines depending on location.

## What's next for EcoEnergy

The next step for EcoEnergy would be to improve the integration between the front and back end. As well as find ways to gather more location based climate data which would allow EcoEnergy to predict power generation with greater accuracy.

|

partial

|

## Inspiration

The classroom experience has *drastically* changed over the years. Today, most students and professors prefer to conduct their course organization and lecture notes electronically. Although there are applications that enabled a connected classroom, none of them are centered around measuring students' understanding during lectures.

The inspiration behind Enrich was driven by the need to create a user-friendly platform that expands the possibilities of electronic in-class course lectures: for both the students and the professors. We wanted to create a way for professors to better understand the student's viewpoint, recognize when their students need further help with a concept, and lead a lecture that would best provide value to students.

## What it does

Enrich is an interactive course organization platform. The essential idea of the app is that professor can create "classrooms" to which students can add themselves using a unique key provided by the professor. The professor has the ability to create multiple such classrooms for any class that he/she teaches. For each classroom, we provide a wide suite of services to enable a productive lecture.

An important feature in our app is a "learning ratio" statistic, which lets the professor know how well he/she is teaching the topics. As the teacher is going through the material, students can anonymously give real-time feedback on how they are responding to the lecture.The aggregation of this data is used to determine a color gradient from red (the lecture is going poorly) to green (the lecture is very clear and understandable). This allows the teacher to slow down if she recognizes that students are getting lost.

We also have a speech-to-text translation service that transcribes the lecture as it is going, providing students with the ability to read what the teacher is saying. This not only provides accessibility to those who can't hear, but also allows students to go back over what the teacher has said in the lecture.

Lastly, we have a messaging service that connects the students to Teaching Assistants during the lecture. This allows them to ask questions to clarify their understanding without disrupting the class.

## How we built it

Our platform consists of two sides to it: Learners and Educators. We used React.js as the front-end for both the Learner-side and Educator-side of our application. The whole project revolves around a effectively organized Firebase RealTime database, which stores the hierarchy of professor-class-student relationships. The React Components interface with Firebase to update students as and when they enter and leave a classroom. We also used Pusher to develop the chat service on the classrooms.

For the speech-to-text detection, we used the Google Speech-to-Text API to detect speech from the Educator's computer, transcribe this, and update the Firebase RealTime database with the transcript. The web application then updates user-facing site with the transcript.

## Challenges we ran into

The database structure on Firebase is quite intricate

Figuring out the best design for the Firebase database was challenging, because we wanted a seamless way to structure classes, students, their responses, and recordings. The speech-to-text transcription was also very challenging. We worked through using various APIs for the service, before finally settling on the Google Speech-to-Text API. Once we got the transcription service to work, it was hard to integrate it into the web application.

## Accomplishments that we're proud of

We were proud of getting the speech-to-text transcription service to work, as it took a while to connect to the API, get the transcription, and then transfer that over to our web application.

## What we learned

Despite using React for previous projects, we utilized new ways of state management through Redux that made things much simpler than before. We have also learned to integrate different services within our React application, such as the Chatbox in our application.

## What's next for Enrich - an education platform to increase collaboration

The great thing about Enrich is that it has a massive scope to expand! We had so many ideas to implement, but only such little time. We could have added a camera that tracks the expressions of students to analyze how they are reacting to lectures. This would have been a hands-off approach to getting feedback. We could also have added a progress bar for how far the lecture is going, a screen-sharing capability, and interactive whiteboard.

|

## Inspiration

The inspiration for this project stems from the well-established effectiveness of focusing on one task at a time, as opposed to multitasking. In today's era of online learning, students often find themselves navigating through various sources like lectures, articles, and notes, all while striving to absorb information effectively. Juggling these resources can lead to inefficiency, reduced retention, and increased distraction. To address this challenge, our platform consolidates these diverse learning materials into one accessible space.

## What it does

A seamless learning experience where you can upload and read PDFs while having instant access to a chatbot for quick clarifications, a built-in YouTube player for supplementary explanations, and a Google Search integration for in-depth research, all in one platform. But that's not all - with a click of a button, effortlessly create and sync notes to your Notion account for organized, accessible study materials. It's designed to be the ultimate tool for efficient, personalized learning.

## How we built it

Our project is a culmination of diverse programming languages and frameworks. We employed HTML, CSS, and JavaScript for the frontend, while leveraging Node.js for the backend. Python played a pivotal role in extracting data from PDFs. In addition, we integrated APIs from Google, YouTube, Notion, and ChatGPT, weaving together a dynamic and comprehensive learning platform

## Challenges we ran into

None of us were experienced in frontend frameworks. It took a lot of time to align various divs and also struggled working with data (from fetching it to using it to display on the frontend). Also our 4th teammate couldn't be present so we were left with the challenge of working as a 3 person team.

## Accomplishments that we're proud of

We take immense pride in not only completing this project, but also in realizing the results we envisioned from the outset. Despite limited frontend experience, we've managed to create a user-friendly interface that integrates all features successfully.

## What we learned

We gained valuable experience in full-stack web app development, along with honing our skills in collaborative teamwork. We learnt a lot about using APIs. Also a lot of prompt engineering was required to get the desired output from the chatgpt apu

## What's next for Study Flash

In the future, we envision expanding our platform by incorporating additional supplementary resources, with a laser focus on a specific subject matter

|

## Inspiration

Many of us had class sessions in which the teacher pulled out whiteboards or chalkboards and used them as a tool for teaching. These made classes very interactive and engaging. With the switch to virtual teaching, class interactivity has been harder. Usually a teacher just shares their screen and talks, and students can ask questions in the chat. We wanted to build something to bring back this childhood memory for students now and help classes be more engaging and encourage more students to attend, especially in the younger grades.

## What it does

Our application creates an environment where teachers can engage students through the use of virtual whiteboards. There will be two available views, the teacher’s view and the student’s view. Each view will have a canvas that the corresponding user can draw on. The difference between the views is that the teacher’s view contains a list of all the students’ canvases while the students can only view the teacher’s canvas in addition to their own.

An example use case for our application would be in a math class where the teacher can put a math problem on their canvas and students could show their work and solution on their own canvas. The teacher can then verify that the students are reaching the solution properly and can help students if they see that they are struggling.

Students can follow along and when they want the teacher’s attention, click on the I’m Done button to notify the teacher. Teachers can see their boards and mark up anything they would want to. Teachers can also put students in groups and those students can share a whiteboard together to collaborate.

## How we built it

* **Backend:** We used Socket.IO to handle the real-time update of the whiteboard. We also have a Firebase database to store the user accounts and details.

* **Frontend:** We used React to create the application and Socket.IO to connect it to the backend.

* **DevOps:** The server is hosted on Google App Engine and the frontend website is hosted on Firebase and redirected to Domain.com.

## Challenges we ran into

Understanding and planning an architecture for the application. We went back and forth about if we needed a database or could handle all the information through Socket.IO. Displaying multiple canvases while maintaining the functionality was also an issue we faced.

## Accomplishments that we're proud of

We successfully were able to display multiple canvases while maintaining the drawing functionality. This was also the first time we used Socket.IO and were successfully able to use it in our project.

## What we learned

This was the first time we used Socket.IO to handle realtime database connections. We also learned how to create mouse strokes on a canvas in React.

## What's next for Lecturely

This product can be useful even past digital schooling as it can save schools money as they would not have to purchase supplies. Thus it could benefit from building out more features.

Currently, Lecturely doesn’t support audio but it would be on our roadmap. Thus, classes would still need to have another software also running to handle the audio communication.

|

losing

|

# Inspiration

Meet one of our teammates, Lainey! Over the past three years, she has spent over 2,000 hours volunteering with youth who attend under-resourced schools in Washington state. During the sudden onset of the pandemic, the rapid school closures ended the state’s Free and Reduced Lunch program for thousands of children across the state, pushing the burden of purchasing healthy foods onto parents. It became apparent that many families she worked with heavily relied on government-provided benefits such as SNAP (Supplemental Nutrition Assistance Program) to purchase the bare necessities. Research shows that SNAP is associated with alleviating food insecurity. Receiving SNAP in early life can lead to improved outcomes in adulthood. Low-income families under SNAP are provided with an EBT (Electronic Benefit Transfer) card and are able to load a monthly balance and use it like a debit card to purchase food and other daily essentials.

However, the EBT system still has its limitations: to qualify to accept food stamps, stores must sell food in each of the staple food categories. Oftentimes, the only stores that possess the quantities of scale to achieve this are a small set of large chain grocery stores, which lack diverse healthy food options in favor of highly-processed goods. Not only does this hurt consumers with limited healthy options, it also prevents small, local producers from selling their ethically and sustainably sourced produce to those most in need. Studies have repeatedly shown a direct link between sustainable food production and food health quality.

The primary grocery sellers who have the means and scale to qualify to accept food stamps are large chain grocery stores, which often have varying qualities of produce (correlated with income in that area) that pale compared to output from smaller farms. Additionally, grocery stores often supplement their fresh food options with a large selection of cheaper, highly-processed items that are high in sodium, cholesterol, and sugar. On average, unhealthy foods are about $1.50 cheaper per day than healthy foods, making it both less expensive and less effort to choose those options. Studies have shown that lower income individuals “consume fewer fruits and vegetables, more sugar-sweetened beverages, and have lower overall diet quality”. This leads to deteriorated health, inadequate nutrition, and elevated risk for disease. In addition, groceries stores with healthier, higher quality products are often concentrated in wealthy areas and target a higher income group, making distance another barrier to entry when it comes to getting better quality foods.

Meanwhile, small, local farmers and stores are unable to accept food stamp payments. Along with being higher quality and supporting the community, buying local foods are also better for the environment. Local foods travel a shorter distance, and the structure of events like farmers markets takes away a customer’s dependency on harmful monocrop farming techniques. However, these benefits come with their own barriers as well. While farmers markets accept SNAP benefits, they (and similar events) aren’t as widespread: there are only 8600 markets registered in the USDA directory, compared to the over 62,000 grocery stores that exist in the USA. And the higher quality foods have their own reputation of higher prices.

Locl works to alleviate these challenges, offering a platform that supports EBT card purchases to allow SNAP benefit users to purchase healthy food options from local markets.

# What does Locl do?

Locl works to bridge the gap between EBT cardholders and fresh homegrown produce. Namely, it offers a platform where multiple local producers can list their produce online for shoppers to purchase with their EBT card. This provides a convenient and accessible way for EBT cardholders to access healthy meals, while also promoting better eating habits and supporting local markets and farmers. It works like a virtual farmers market, combining the quality of small farms with the ease and reach of online shopping. It makes it easier for a consumer to buy better quality foods with their EBT card, while also allowing a greater range of farms and businesses to accept these benefits. This provides a convenient and accessible way for EBT cardholders to access healthy meals, while also promoting better eating habits and supporting local markets and farmers.

When designing our product, some of our top concerns were the technological barrier of entry for consumers and ensuring an ethical and sustainable approach to listing produce online. To use Locl, users are required to have an electronic device and internet connection, ultimately limiting access within our target audience. Beyond this, we recognized that certain produce items or markets could be displayed disproportionally in comparison to others, which could create imbalances and inequities between all the stakeholders involved. We aim to address this issue by crafting a refined algorithm that balances the search appearance frequency from a certain product based on how many similar products like such are posted.

# Key Features

## EBT Support

Shoppers can convert their EBT balance into Locl credits. From there, they can spend their credits buying produce from our set of carefully curated suppliers. To prevent fraud, each vendor is carefully evaluated to ensure they sell ethically sourced produce. Thus, shoppers can only spend their Locl credits on produce, adhering to government regulation on SNAP benefits.

## Bank-less payment

Because low-income shoppers may not have access to a bank account, we've used Checkbook.io's virtual credit cards and direct deposit to facilitate payments between shoppers and vendors.

## Producer accessibility

By listing multiple vendors on one platform, Locl is able to circumvent the initial problems of scale. Rather than each vendor being its own store, we consolidate them all into one large store, thereby increasing accessibility for consumers to purchase products from smaller vendors.

## Recognizable marketplace

To improve the ease of use, Locl's interface is carefully crafted to emulate other popular marketplace applications such as Facebook Marketplace and Craigslist. Because shoppers will already be accustomed to our app, it'll far improve the overall user experience.

# How we built it

Locl revolves around a web app interface to allow shoppers and vendors to buy and sell produce.

## Flask

The crux of Locl centers on our Flask server. From there, we use requests and render\_templates() to populate our website with GET and POST requests.

## Supabase

We use Supabase and PostgreSQL to store our product, market, virtual credit card, and user information. Because Flask is a Python library, we use Supabase's community managed Python library to insert and update data.

## Checkbook.io

We use Checkbook.io's Payfac API to create transactions between shoppers and vendors. When people create an account on Locl, they are automatically added as a user in Checkbook with the `POST /v3/user` endpoint. Meanwhile, to onboard both local farmers and shoppers painlessly, we offer a bankless solution with Checkbook’s virtual credit card using the `POST /v3/account/vcc` endpoint.

First, shoppers deposit credits into their Locl account from the EBT card. The EBT funds are later redeemed with the state government by Locl. Whenever a user buys an item, we use the `POST /v3/check/digital` endpoint to create a transaction between them and the stores to pay for the goods. From there, vendors can also spend their funds as if it were a prepaid debit card. By using Checkbook’s API, we’re able to break down the financial barrier of having a bank account for low-income shoppers to buy fresh produce from local suppliers, when they otherwise wouldn’t have been able to.

# Challenges we encountered

Because we were all new to using these APIs, we were initially unclear about what actions they could support. For example, we wanted to use You.com API to build our marketplace. However, it soon became apparent that we couldn't embed their API into our static HTML page as we'd assume. Thus, we had to pivot to creating our own cards with Jinja.

# Looking forward

In the future, we hope to advance our API services to provide a wider breadth of services which would include more than just produce from local farmers markets. Given a longer timeframe, a few features we'd like to implement include:

* a search and filtering system to show shoppers their preferred goods.

* an automated redemption system with the state government for EBT.

* improved security and encryption for all API calls and database queries.

# Ethics

SNAP (Supplemental Nutrition Assistance Program), otherwise known as food stamps, is a government program that aids low-income families and individuals to purchase food. The inaccessibility of healthy foods is a pressing problem because there is a small number of grocery stores that accept food stamps, which are often limited to large, chain grocery stores that are not always accessible. Beyond this, these grocery stores often lack healthy food options in favor of highly-processed goods.

When doing further research into this issue, we were fortunate to have a team member who has knowledge about SNAP benefits through firsthand experience in classroom settings and at food banks. Through this, we learned about EBT (Electronic Benefit Transfer) cards, as well as their limitations. The only stores that can support EBT payments must offer a selection for each of the staple food categories, which prevents local markets and farmers from accepting food stamps as payment.

To tackle this issue of the limited accessibility of healthy foods for SNAP benefit users, we came up with Locl, an online platform that allows local markets and farmers to list fresh produce for EBT cardholders to purchase with food stamps. When creating Locl, we adhered to our goal of connecting food stamp users with healthy, ethically sourced foods in a sustainable manner. However, there are still many ethical challenges that must be explored further.

First, to use Locl, users would require a portable electronic device and an internet connection due to it being an online platform. The Pew Research center states that 29% of adults with incomes below $30,000/year do not have access to a smartphone and 44% do not have portable internet access. This would greatly lessen the range of individuals that we aim to serve.

Second, though Locl aims to serve SNAP beneficiaries, we also hope to aid local markets and farmers by increasing the number of potential customers. However, Locl runs the risk of displaying certain produce items or marketplaces disproportionately in comparison to others, which could create imbalances and inequities between all stakeholders involved. Furthermore, this display imbalance could limit user knowledge about certain marketplaces.

Third, Locl aims to increase ethical consumerism by connecting its users with sustainable markets and farmers. However, there arises the issue of selecting which markets and farmers to support on our platform. While considering baselines that we would expect marketplaces to meet to be displayed on Locl, we recognized that sustainability can be measured through a wide number of factors- labor, resources used, pollution levels, and began wondering whether we prioritize sustainability of items we market or the health of users. One example of this is meat, a popular food product which is known for its high health benefits, but similarly high water consumption and greenhouse gas levels. Narrowing these down could greatly limit the display of certain products.

Fourth, Locl does not have an option for users to filter the results that are displayed to them. Many EBT cardholders say that they do not use their benefits to make online purchases due to the difficulty of finding items on online store pages that qualify for their benefits as well as their dietary needs. Thus, our lack of a filter option would cause certain users to have increased difficulty in finding food options for themselves.

Our next step for Locl is to address the ethical concerns above, as well as explore ways to make it more accessible and well-known. However, there are still many components to consider from a sociotechnical lens. Currently, only 4% of SNAP beneficiaries make online purchases with their EBT cards. This small percentage may stem from reasons that range from lack of internet access, to not being aware that online options are available. We hope that with Locl, food stamp users will have increased access to healthy food options and local markets and farmers will have an increased customer-base.

# References

<https://ajph.aphapublications.org/doi/full/10.2105/AJPH.2019.305325>

<https://bmcpublichealth.biomedcentral.com/articles/10.1186/s12889-019-6546-2>

<https://www.ibisworld.com/industry-statistics/number-of-businesses/supermarkets-grocery-stores-united-states/> <https://www.masslive.com/food/2022/01/these-are-the-top-10-unhealthiest-grocery-items-you-can-buy-in-the-united-states-according-to-moneywise.html>

<https://farmersmarketcoalition.org/education/qanda/>

<https://bmcpublichealth.biomedcentral.com/articles/10.1186/s12889-019-6546-2>

<https://news.climate.columbia.edu/2019/08/09/farmers-market-week-2019/>

|

## Inspiration