anchor | positive | negative | anchor_status |

|---|---|---|---|

Record your happy, sad and motivational moments with our web-app. We hope to help you with your mental health during this time. When things get hard, remind yourself of a happy moment that has happened in your life, and motivate yourself with the goals you have set for yourself! We plan to further develop this site and increase customizability in the future.

|

## 💡 Inspiration

We got inspiration from our back-end developer Minh. He mentioned that he was interested in the idea of an app that helped people record their positive progress and showcase their accomplishments there. This then led our product/UX designer Jenny to think about what problem this app would target and what kind of solution it would offer. From our research, we came to the conclusion that quantity-over-quality social media use resulted in people feeling less accomplished and more anxious. As a solution, we wanted to focus on an app that helps people stay focused on their own goals and accomplishments.

## ⚙ What it does

Our app is a journalling app that has the user enter two journal entries a day: one in the morning and one in the evening. During the first entry, it asks the user about their mood at the moment, generates an appropriate response based on that mood, and then asks questions that get the user to think about topics such as gratitude, their plans for the day, and what advice they would give themselves. Our questions follow many of the common journalling practices. The second journal entry follows a similar format of mood and questions, with a different set of questions to finish off the user's day. These help them reflect and look forward to the upcoming future. Our most powerful feature would be the AI that takes data such as emotions and keywords from answers and helps users generate journal summaries across weeks, months, and years. These summaries would then provide actionable steps the user could take to make self-improvements.

## 🔧 How we built it

### Product & UX

* Online research, user interviews, stakeholder and competitor analysis, affinity mapping, and user flows.

* Doing the research gave our group a unified understanding of the app.

### 👩💻 Frontend

* Used React.JS to design the website

* Used Figma for prototyping the website

### 🔚 Backend

* Flask, CockroachDB, and Cohere for ChatAI function.

## 💪 Challenges we ran into

The main challenge we ran into was the time limit. For this project, we invested most of our time in understanding the pain points in a very sensitive topic area, mental health and psychology. Because we truly wanted to identify and solve a meaningful challenge, we had to sacrifice some portions of the project, such as front-end code implementation. Some team members were also working with developers for the first time, and it was a good learning experience for everyone to see how different roles come together and how we could improve for next time.

## 🙌 Accomplishments that we're proud of

Jenny, our team designer, did tons of research on problem space such as competitive analysis, research on similar products, and user interviews. We produced a high-fidelity prototype and were able to show the feasibility of the technology we built for this project. (Jenny: I am also very proud of everyone else who had the patience to listen to my views as a designer and be open-minded about what a final solution may look like. I think I'm very proud that we were able to build a good team together although the experience was relatively short over the weekend. I had personally never met the other two team members and the way we were able to have a vision together is something I think we should be proud of.)

## 📚 What we learned

We learned that preparing some plans ahead of time would make it easier for developers and designers to get started. However, the experience of starting from nothing and making a full project over two and a half days was great for learning. We learned a lot about how we think and approach work, not only as developers and designers, but as team members.

## 💭 What's next for budEjournal

Next, we would like to test out budEjournal on some real users and make adjustments based on our findings. We would also like to spend more time building out the front-end.

|

## Inspiration

Some things can only be understood through experience, and Virtual Reality is the perfect medium for providing new experiences. VR allows for complete control over vision, hearing, and perception in a virtual world, allowing our team to effectively alter the senses of immersed users. We wanted to manipulate vision and hearing in order to allow players to view life from the perspective of those with various disorders such as colorblindness, prosopagnosia, deafness, and other conditions that are difficult to accurately simulate in the real world. Our goal is to educate and expose users to the various names, effects, and natures of conditions that are difficult to fully comprehend without first-hand experience. Doing so can allow individuals to empathize with and learn from various different disorders.

## What it does

Sensory is an HTC Vive Virtual Reality experience that allows users to experiment with different disorders from Visual, Cognitive, or Auditory disorder categories. Upon selecting a specific impairment, the user is subjected to what someone with that disorder may experience, and can view more information on the disorder. Some examples include achromatopsia, a rare form of complete colorblindness, and prosopagnosia, the inability to recognize faces. Users can combine these effects, view their surroundings from new perspectives, and educate themselves on how various disorders work.

## How we built it

We built Sensory using the Unity Game Engine, the C# Programming Language, and the HTC Vive. We imported a rare few models from the Unity Asset Store (All free!)

## Challenges we ran into

We chose this project because we hadn't experimented much with visual and audio effects in Unity and in VR before. Our team has done tons of VR, but never really dealt with any camera effects or postprocessing. As a result, there are many paths we attempted that ultimately led to failure (and lots of wasted time). For example, we wanted to make it so that users could only hear out of one ear - but after enough searching, we discovered it's very difficult to do this in Unity, and would've been much easier in a custom engine. As a result, we explored many aspects of Unity we'd never previously encountered in an attempt to change lots of effects.

## What's next for Sensory

There are still many more disorders we want to implement, and many categories we could potentially add. We envision this being a central hub for users, doctors, professionals, or patients to experience different disorders. Right now, it's primarily a tool for experimentation, but in the future it could be used for empathy, awareness, education and health.

|

losing

|

[project video demo](https://github.com/R1tzG/SignSensei/assets/86858242/40b4d428-f614-4800-8151-0d3d9c74f5af)

## Inspiration

In an increasingly interconnected world, one of the most important skills we can acquire is the ability to communicate effectively with people from diverse backgrounds and abilities. American Sign Language (ASL) is a language used by millions of deaf and hard-of-hearing individuals around the world. However, there are still significant barriers preventing many from learning and using ASL. Our project, SignSensei, aims to break down those barriers, making it easier and faster for anyone to learn ASL, as well as other sign languages. We hope to promote inclusivity through communication for all.

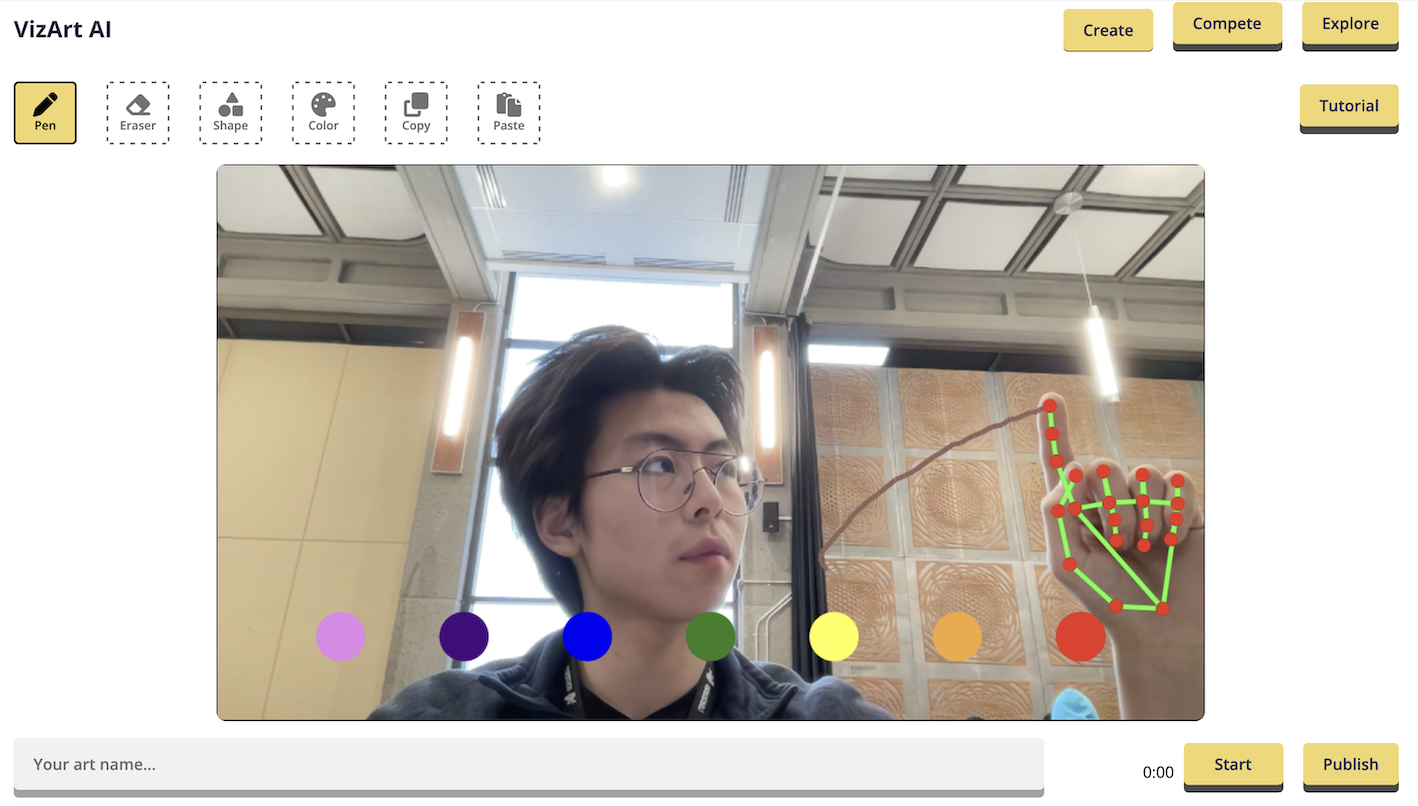

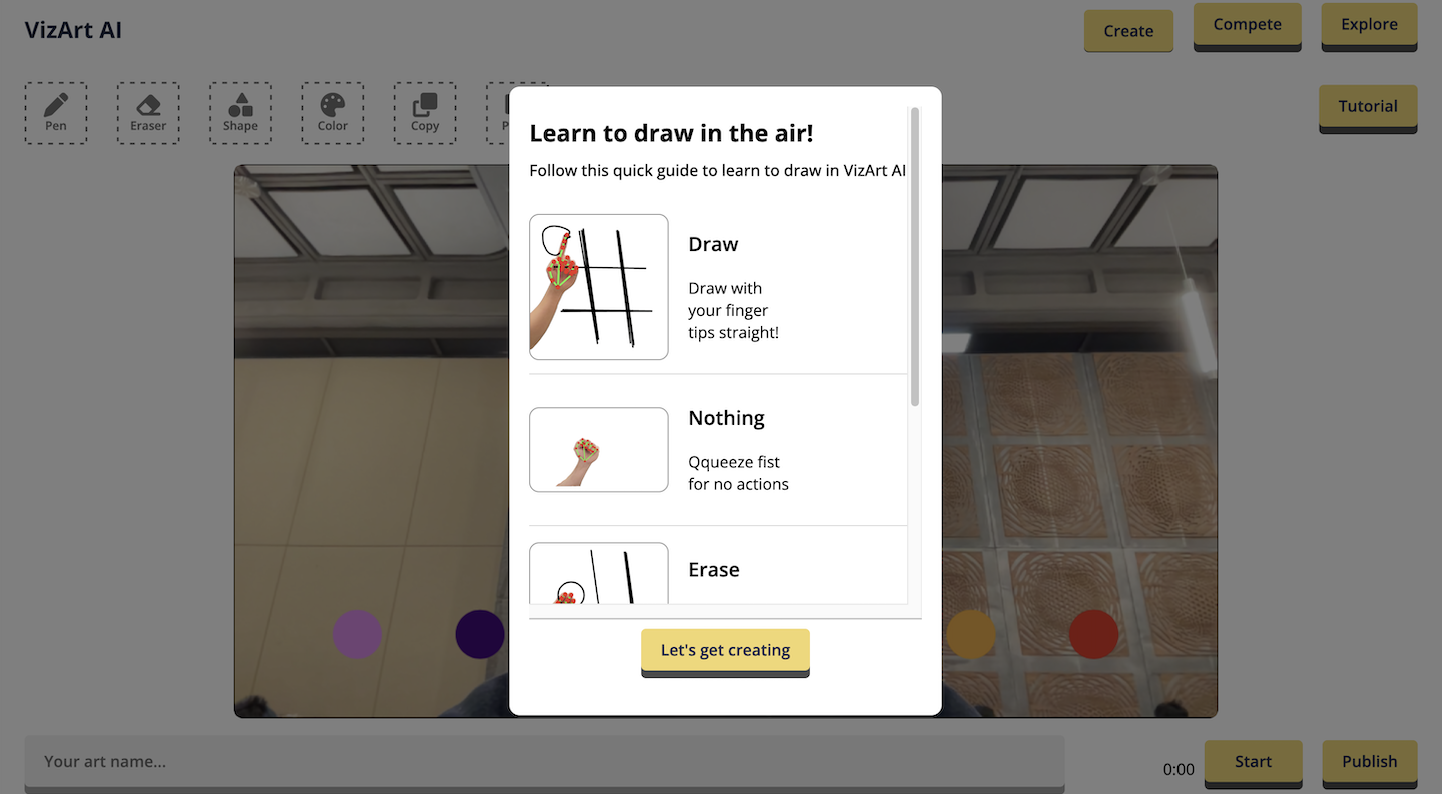

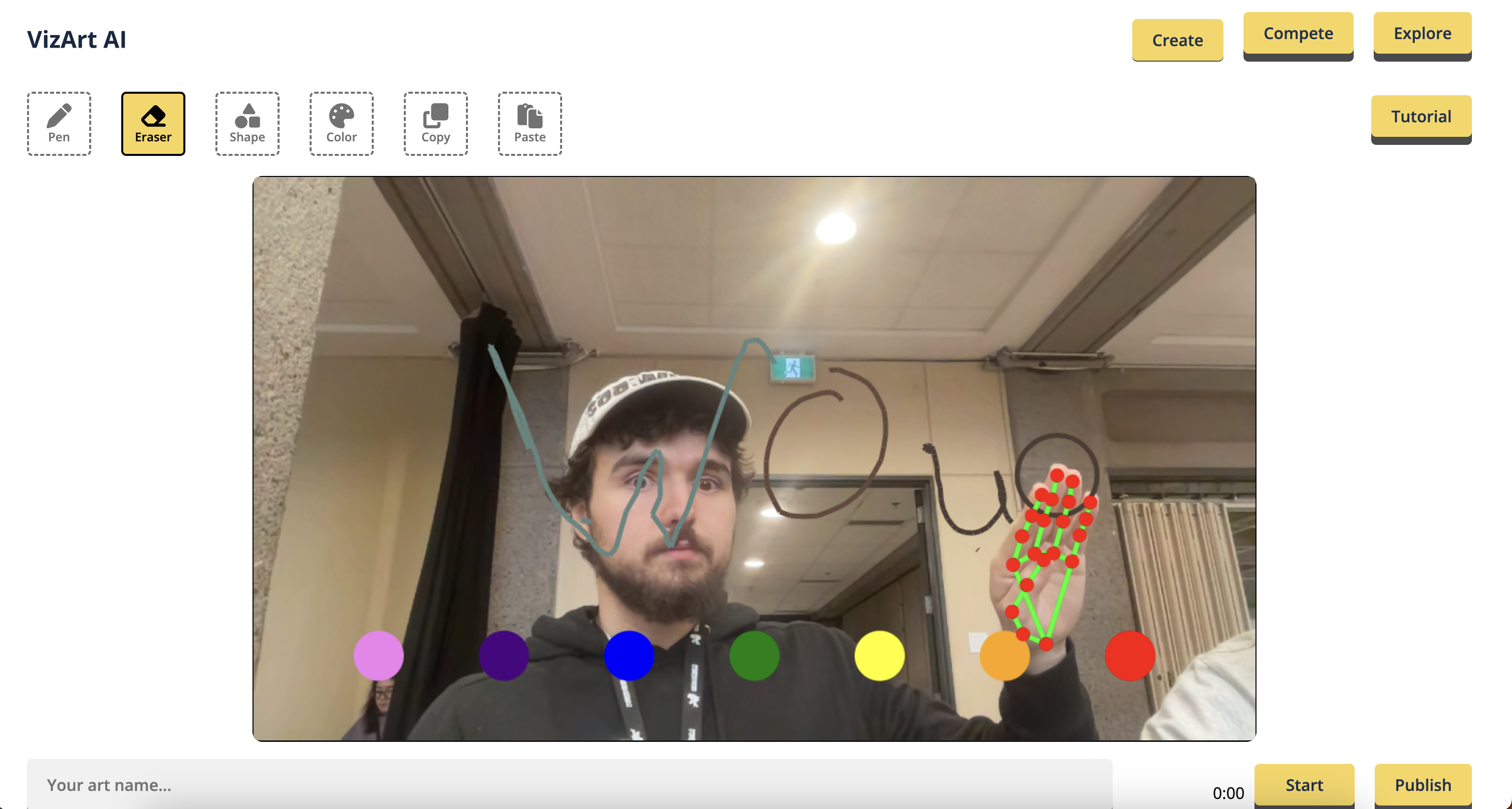

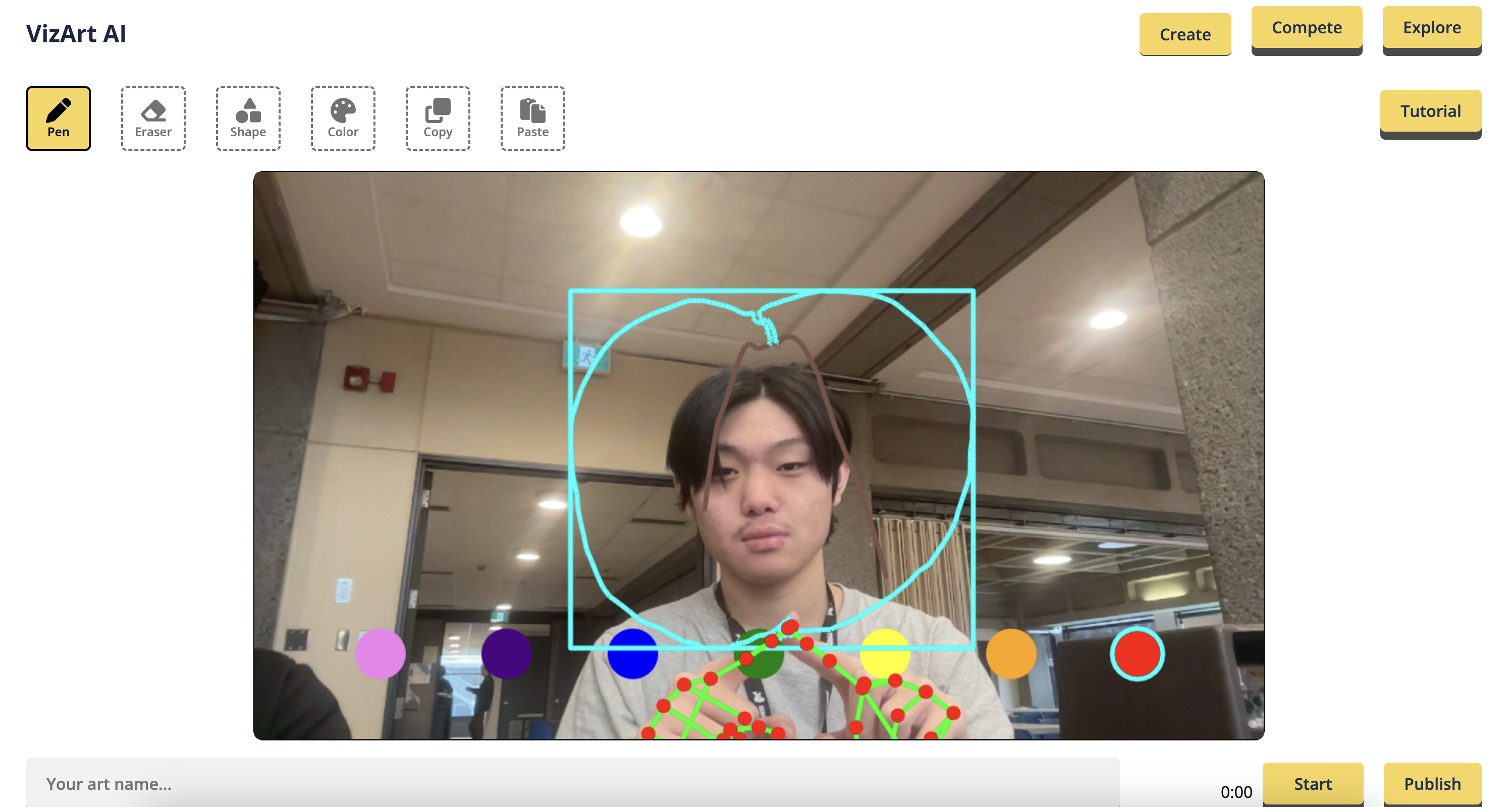

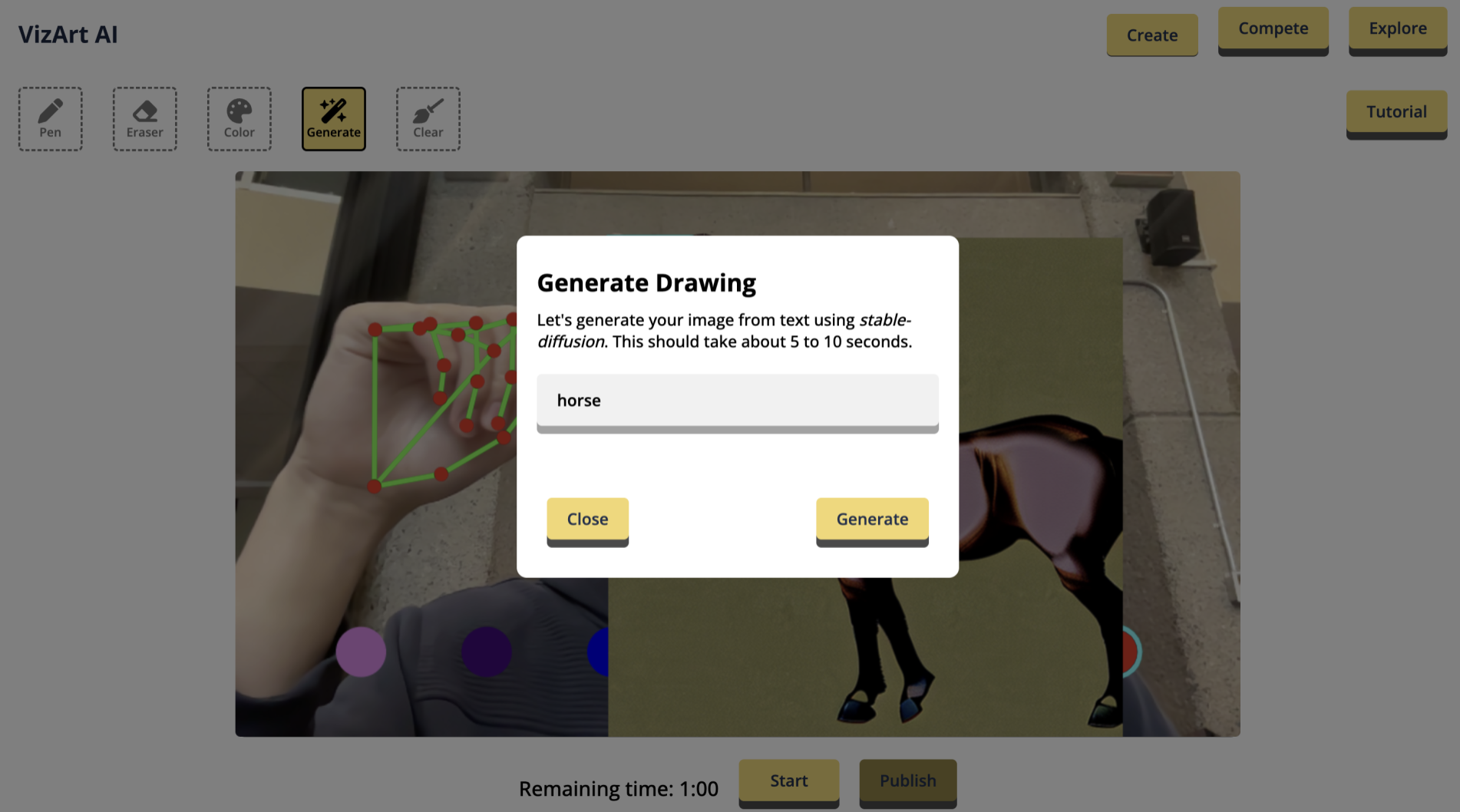

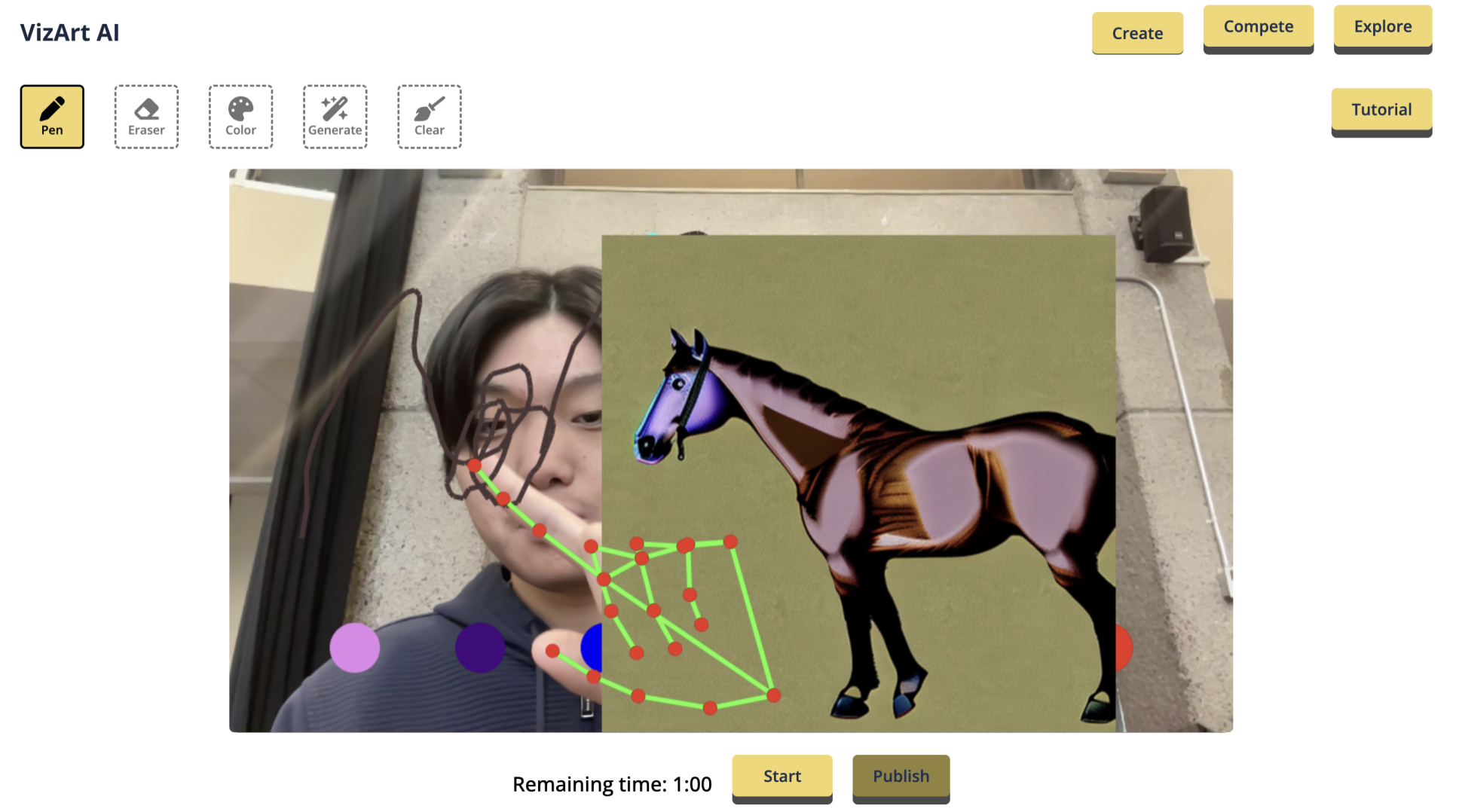

## What it does

SignSensei is a web application that gamifies the process of learning sign language. Using the webcam on your laptop (or front-facing camera on your phone), our app can detect the sign you are putting up with your hand, and tell you whether it is correct. You will be able to see yourself on the screen, as well as a lattice representation of your hand. This makes it easy to monitor your hands to make sure you are getting the signs right. The demo lesson (see video) teaches you the ASL alphabet.

## How we built it

Our sign language detection system is built in two parts. First we collect hand landmark coordinates using the Mediapipe machine learning library. We then pass the extracted coordinates through a custom fully connected neural network that we trained on a dataset of ASL signs. This approach allows us to detect signs from the webcam feed with high precision and accuracy (97% test accuracy on the custom model).

The sign detection system outlined above forms the backbone of our app. We also developed an interactive front-end with Streamlit, which serves lessons to users.
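The two-stage pipeline described above can be sketched in plain Python. This is only an illustration and not the team's code: the real model was trained with Tensorflow, while the wrist-relative normalization, the function names, and the toy weights below are all assumptions made for the example. The one fixed fact is that MediaPipe Hands reports 21 landmarks per hand.

```python
import math

NUM_LANDMARKS = 21  # MediaPipe Hands reports 21 landmarks per detected hand


def landmarks_to_features(landmarks):
    """Flatten (x, y) landmarks into a wrist-relative, scale-normalized vector.

    The normalization scheme is an assumption for illustration; the write-up
    does not say how the coordinates were preprocessed.
    """
    if len(landmarks) != NUM_LANDMARKS:
        raise ValueError(f"expected {NUM_LANDMARKS} landmarks, got {len(landmarks)}")
    wrist_x, wrist_y = landmarks[0]  # landmark 0 is the wrist
    shifted = [(x - wrist_x, y - wrist_y) for x, y in landmarks]
    # Divide by the largest absolute coordinate so hand size does not matter.
    scale = max(max(abs(x), abs(y)) for x, y in shifted) or 1.0
    return [coord / scale for point in shifted for coord in point]


def dense(inputs, weights, biases):
    """One fully connected layer: out[j] = sum_i inputs[i] * weights[i][j] + biases[j]."""
    return [
        sum(value * row[j] for value, row in zip(inputs, weights)) + biases[j]
        for j in range(len(biases))
    ]


def softmax(logits):
    """Turn raw layer outputs into class probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]
```

In a real classifier the weights come from training and there is one output per sign; chaining `landmarks_to_features`, one or more `dense` layers, and `softmax` gives a probability for each candidate letter.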

## Challenges we ran into

We were significantly challenged with developing an accurate detection model. Our first few attempts fell short in accuracy. We were eventually able to train a fast and accurate model for the task. Our final model is very simple but performant, made up primarily of Dense layers.

Another challenge we ran into was developing the user interface. At first, we looked at using React, but found it difficult to integrate Tensorflow and OpenCV seamlessly. We decided to switch gears and develop our front-end with Streamlit, leveraging the power of the Python programming language.

## Accomplishments that we're proud of

We are very proud of the powerful sign detection algorithm that we developed. Along with the use case that we found for ASL, the algorithm can easily be expanded to other sign languages, as well as applications in gesture recognition and VR gaming.

## What we learned

Through this project, we learned how to use Tensorflow to train machine learning models, as well as how they can be implemented in Javascript (even if this part didn't make it into the final application). We also learnt about different ways to make a front-end, from vanilla JS and React to solutions such as Flask.

## What's next for SignSensei

We're not done yet! We plan to add more interactive lessons to the app as well as add support for more sign languages.

View our slideshow [here](https://www.canva.com/design/DAFuAQrskMQ/y0TeL7Q-odr6c6klXBmfXA/view?utm_content=DAFuAQrskMQ&utm_campaign=designshare&utm_medium=link&utm_source=publishsharelink)

|

## Inspiration

We wanted to promote an easy learning system to introduce verbal individuals to the basics of American Sign Language. Often people in the non-verbal community are restricted by the lack of understanding outside of the community. Our team wants to break down these barriers and create a fun, interactive, and visual environment for users. In addition, our team wanted to replicate a 3D model of how to position the hand as videos often do not convey sufficient information.

## What it does

**Step 1** Create a Machine Learning Model To Interpret the Hand Gestures

This step provides the foundation for the project. Using OpenCV, our team was able to create datasets for each of the ASL alphabet hand positions. Based on the model trained using Tensorflow and Google Cloud Storage, a video datastream is started, interpreted and the letter is identified.

**Step 2** 3D Model of the Hand

The Arduino UNO starts a series of servo motors to activate the 3D hand model. The user can input the desired letter and the 3D printed robotic hand can then interpret this (using the model from step 1) to display the desired hand position. Data is transferred through the SPI Bus, and the hand is powered by a 9V battery for ease of transportation.

## How I built it

Languages: Python, C++

Platforms: TensorFlow, Fusion 360, OpenCV, UiPath

Hardware: 4 servo motors, Arduino UNO

Parts: 3D-printed

## Challenges I ran into

1. The Raspberry Pi Camera would overheat and not connect, leading us to remove the Telus IoT connectivity from our final project

2. Issues with incompatibilities with Mac and OpenCV and UiPath

3. Issues with lighting and lack of variety in training data leading to less accurate results.

## Accomplishments that I'm proud of

* Able to design and integrate the hardware with software and apply it to a mechanical application.

* Create data, train and deploy a working machine learning model

## What I learned

How to integrate simple low resource hardware systems with complex Machine Learning Algorithms.

## What's next for ASL Hand Bot

* expand beyond letters into words

* create a more dynamic user interface

* expand the dataset and models to incorporate more

|

### 🌟 Inspiration

We're inspired by the idea that emotions run deeper than a simple 'sad' or 'uplifting.' Our project was born from the realization that personalization is the key to managing emotional states effectively.

### 🤯🔍 What it does?

Our solution is an innovative platform that harnesses the power of AI and emotion recognition to create personalized Spotify playlists. It begins by analyzing a user's emotions, both from facial expressions and text input, to understand their current state of mind. We then use this emotional data, along with the user's music preferences, to curate a Spotify playlist that's tailored to their unique emotional needs.

What sets our solution apart is its ability to go beyond simplistic mood categorizations like 'happy' or 'sad.' We understand that emotions are nuanced, and our deep-thought algorithms ensure that the playlist doesn't worsen the user's emotional state but, rather, optimizes it. This means the music is not just a random collection; it's a therapeutic selection that can help users manage their emotions more effectively.

It's music therapy reimagined for the digital age, offering a new and more profound dimension in emotional support.

### 💡🛠💎 How we built it?

We crafted our project by combining advanced technologies and teamwork. We used Flask, Python, React, and TypeScript for the backend and frontend, alongside the Spotify and OpenAI APIs.

Our biggest challenge was integrating the Spotify API. When we faced issues with an existing wrapper, we created a custom solution to overcome the hurdle.

Throughout the process, our close collaboration allowed us to seamlessly blend emotion recognition, music curation, and user-friendly design, resulting in a platform that enhances emotional well-being through personalized music.

### 🧩🤔💡 Challenges we ran into

🔌 API Integration Complexities: We grappled with integrating and harmonizing multiple APIs.

🎭 Emotion Recognition Precision: Achieving high accuracy in emotion recognition was demanding.

📚 Algorithm Development: Crafting deep-thought algorithms required continuous refinement.

🌐 Cross-Platform Compatibility: Ensuring seamless functionality across devices was a technical challenge.

🔑 Custom Authorization Wrapper: Building a custom solution for Spotify API's authorization proved to be a major hurdle.

### 🏆🥇🎉 Accomplishments that we're proud of

#### Competition Win: 🥇

```

Our victory validates the effectiveness of our innovative project.

```

#### Functional Success: ✔️

```

The platform works seamlessly, delivering on its promise.

```

#### Overcoming Challenges: 🚀

```

Resilience in tackling API complexities and refining algorithms.

```

#### Cross-Platform Success: 🌐

```

Ensured a consistent experience across diverse devices.

```

#### Innovative Solutions: 🚧

```

Developed custom solutions, showcasing adaptability.

```

#### Positive User Impact: 🌟

```

Affirmed our platform's genuine enhancement of emotional well-being.

```

### 🧐📈🔎 What we learned

🛠 Tech Skills: We deepened our technical proficiency.

🤝 Teamwork: Collaboration and communication were key.

🚧 Problem Solving: Challenges pushed us to find innovative solutions.

🌟 User Focus: User feedback guided our development.

🚀 Innovation: We embraced creative thinking.

🌐 Global Impact: Technology can positively impact lives worldwide.

### 🌟👥🚀 What's next for Look 'n Listen

🚀 Scaling Up: Making our platform accessible to more users.

🔄 User Feedback: Continuous improvement based on user input.

🧠 Advanced AI: Integrating more advanced AI for better emotion understanding.

🎵 Enhanced Personalization: Tailoring the music therapy experience even more.

🤝 Partnerships: Collaborating with mental health professionals.

💻 Accessibility: Extending our platform to various devices and platforms.

|

partial

|

## Inspiration

At this stage of our lives, a lot of students haven’t yet developed the financial discipline to save money and tend to be wasteful with their spending. With this app, we hope to design an interface that focuses on minimalism. The app is easy to use and provides users with a visual breakdown of where their money comes from and where it goes. This gives users a better idea of what their day-to-day spending habits look like and helps them develop the money-saving skills that will be beneficial in the future.

## What it does

BreadBook enables users to input their expenses and income and view them chronologically from daily, weekly, monthly, and yearly perspectives. BreadBook also helps you visualize these finances across different time periods and assists you in budgeting properly throughout them.

## How we built it

This project was built using a simple web stack of Angular, Node.js and various Node libraries and packages. The back-end is a simple REST API running on a Node.js Express server that handles requests and transmits data to the front-end. Our front-end was built using Angular and a few visualization packages such as Chart.js.

## Accomplishments that we're proud of

Being able to implement various libraries of Angular and Node greatly helped us better understand our weaknesses and strengths as team members, and expanded our knowledge greatly regarding these technologies. Implementing chart.js to graphically show our data was a huge achievement given our limited experience with Angular modules.

## What we learned

Throughout the two-day development process of our application, we all gained experience in using Angular and what it allowed us to do in the creation of our web application. As a result, we all definitely became more comfortable with this framework, along with web development overall.

Our team decided to focus on the app functionalities right off the bat, as we all saw the potential and usefulness in our project idea and believed it should be our primary focus in the app’s development. As things progressed, we began to implement a cleaner UI and presentation aspect of the app as well, which was an entirely different realm of development. As a result, we all developed a better understanding of what to prioritize in the process of development as time is limited, as well as the importance in deciding whether or not to implement certain ideas based on their effort, required work and value to the project.

Finally one of the greatest parts about our participation in this event and being part of this project is the collaboration aspect. We can definitely all say we had an amazing experience from simply getting together, being creative and working in a group. This is especially different to us, as during this event, we created this project not as a school requirement, but through our own interests. It is when we work on projects like this that we are reminded of why we enjoy programming and the process of developing our ideas into something we can all use.

## What's next for BreadBook

The current state of BreadBook tracks all the day-to-day and recurring purchases that the user has made throughout daily, monthly or annual time periods. In the future, we would like to implement ways to identify or cut out unneeded spending. We would give estimates of how much money could be saved daily/monthly/annually if this spending was reduced. We would also like to add a monthly spending plan that would allow you to allocate different amounts of money to different spending categories. When the spending limit of one or more of these categories is being approached, a warning would be given to the user so that they realize they are near their limit.
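The planned spending-limit warning could be sketched roughly as follows. BreadBook itself runs on Node.js and Angular, so this Python snippet is only an illustration; the function name, the dictionary layout, and the 90% warning threshold are assumptions, not part of the project.

```python
def budget_warnings(spent, limits, warn_ratio=0.9):
    """Return (category, fraction_used) for categories close to their limit.

    `spent` and `limits` map category names to amounts. `warn_ratio` is a
    hypothetical threshold: 0.9 means "warn once 90% of the limit is used".
    """
    warnings = []
    for category, limit in limits.items():
        if limit <= 0:
            continue  # no usable limit set for this category
        used = spent.get(category, 0.0) / limit
        if used >= warn_ratio:
            warnings.append((category, used))
    return warnings
```

Calling it with the month's totals per category returns only the categories that need a warning, which the interface could then show to the user.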

|

Welcome to our demo video for our hack “Retro Readers”. This is a game created by our two-man team: myself, Shakir Alam, and my friend Jacob Cardoso. We are both heading into our senior year at Dr. Frank J. Hayden Secondary School and tremendously enjoyed participating in our first hackathon ever, Hack The 6ix.

We spent over a week brainstorming ideas for our first hackathon project and because we are both very comfortable with the idea of making, programming and designing with pygame, we decided to take it to the next level using modules that work with APIs and complex arrays.

Retro Readers was inspired by a social media post pertaining to another text font that was proven to help mitigate reading errors made by dyslexic readers. Jacob found OpenDyslexic which is an open-source text font that does exactly that.

The game consists of two overall gamemodes. These gamemodes are aimed mainly at children, especially young children with dyslexia, who want to become better readers. We know that reading books is becoming less popular among the younger generation and so we decided to incentivize readers by providing them with a satisfying retro-style arcade reading game.

The first gamemode is a read and research style gamemode where the reader or player can press a key on their keyboard which leads to a python module calling a database of semi-sorted words from Wordnik API. The game then displays the word back to the reader and reads it aloud using a TTS module.

As for the second gamemode, we decided to incorporate a point system. Using the points the players can purchase unique customizables and visual modifications such as characters and backgrounds. This provides a little dopamine rush for the players for participating in a tougher gamemode.

The gamemode itself is a spelling type game where a random word is selected using the same python modules and API. Then a TTS module reads the selected word out loud for readers. The reader then must correctly spell the word to attain 5 points without seeing the word.

The task we found the most challenging was working with APIs as a lot of them were not deemed fit for our game. We had to scratch a few APIs off the list for incompatibility reasons. A few of these APIs include: Oxford Dictionary, WordsAPI and more.

Overall we found the game to be challenging in all the right places and we are highly satisfied with our final product. As for the future, we’d like to implement more reliable APIs, and for future hackathons (this being our first) we’d like to spend more time researching viable APIs for our project. As far as business practicality goes, we see it as feasible to sell our game at a low price, including ads and/or paid cosmetics. We’d like to give a special shoutout to our friend Simon Orr for allowing us to use 2 original music pieces for our game. Thank you for your time and thank you for this amazing opportunity.

|

# BananaExpress

A self-writing journal of your life, with superpowers!

We make journaling easier and more engaging than ever before by leveraging **home-grown, cutting edge, CNN + LSTM** models to do **novel text generation** to prompt our users to practice active reflection by journaling!

Features:

* User photo --> unique question about that photo based on 3 creative techniques

  + Real time question generation based on (real-time) user journaling (and the rest of their writing)!

  + Ad-lib style questions - we extract location and analyze the user's activity to generate a fun question!

  + Question-corpus matching - we search for good questions about the user's current topics

* NLP on previous journal entries for sentiment analysis

I love our front end - we've re-imagined how easy and futuristic journaling can be :)

And, honestly, SO much more! Please come see!

♥️ from the Lotus team,

Theint, Henry, Jason, Kastan

|

losing

|

## Inspiration

We found that smart doors currently on the market are incredibly expensive. We wanted to improve on the current technology of smart doors at a fraction of the price. In addition, smart locks are not usually hands-free, requiring either the press of a button or a trip to the user's phone. We wanted to make it as easy and fast as possible for users to securely unlock their door while blocking intruders.

## What it does

Our product acts as a smart door with two-factor authentication to allow entry. A camera cross-matches your face with an internal database and also uses voice recognition to confirm your identity. Furthermore, the smart door provides useful information for your departure such as weather, temperature and even control of the lights in your home. This way, you can decide how much to put on at the door even if you forgot to check, and you won't forget to turn off the lights when you leave the house.

## How we built it

For the facial recognition portion, we used a Python script & OpenCV through the Qualcomm Dragonboard 410c, where we trained the algorithm to recognize correct and wrong individuals. For the user interaction, we used the Google Home to talk to the User and allow for the vocal confirmation as well as control over all other actions. We then used an Arduino to control a motor that would open and close the door.

## Challenges we ran into

OpenCV was incredibly difficult to work with. We found that the setup on the Qualcomm board was not well documented and we ran into several errors.

## Accomplishments that we're proud of

We are proud of getting OpenCV to work flawlessly and providing a seamless integration between the Google Home, the Qualcomm board and the Arduino. Each part was well designed to work on its own, and allowed for relatively easy integration together.

## What we learned

We learned a lot about working with the Google Home and the Qualcomm board. More specifically, we learned about all the steps required to set up a Google Home, the processes needed to communicate with hardware, and many challenges when developing computer vision algorithms.

## What's next for Eye Lock

We plan to market this product extensively and see it in stores in the future!

|

## Inspiration

We wanted to learn about machine learning. There are thousands of sliding doors made by Black & Decker and they're all capable of sending data about the door. With this much data, the natural thing to consider is a machine learning algorithm that can figure out ahead of time when a door is broken, and how it can be fixed. This way, we can use an app to send a technician a notification when a door is predicted to be broken. Since technicians are very expensive for large corporations, something like this can save a lot of time, and money that would otherwise be spent with the technician figuring out if a door is broken, and what's wrong with it.

## What it does

DoorHero takes attributes (e.g. motor speed) from sliding doors and determines if there is a problem with the door. If it detects a problem, DoorHero will suggest a fix for the problem.

## How we built it

DoorHero uses a Tensorflow Classification Neural Network to determine fixes for doors. Since we didn't have actual sliding doors at the hackathon, we simulated data and fixes. For example, we'd assign high motor speed to one row of data, and label it as a door with a problem with the motor, or we'd assign normal attributes for a row of data and label it as a working door.

The server is built using Flask and runs on [Floydhub](https://floydhub.com). It has a Tensorflow Neural Network that was trained with the simulated data. The data is simulated in an Android app. The app generates the mock data, then sends it to the server. The server evaluates the data based on what it was trained with, adds the new data to its logs and training data, then responds with the fix it has predicted.

The android app takes the response, and displays it, along with the mock data it sent.

In short, an Android app simulates the opening and closing of a door and generates mock data about the door, which it sends every time the door "opens" to a server using a Flask REST API. The server has a trained Tensorflow Neural Network, which evaluates the data and responds with either "No Problems" if it finds the data to be normal, or a fix suggestion if it finds that the door has an issue.
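The data simulation described above (high motor speed labeled as a motor problem, normal readings labeled as a working door) might look something like this sketch. The real mock data was generated inside the Android app; the value ranges, the field names, and the "motor problem" label are hypothetical and chosen only for illustration.

```python
import random

# Hypothetical value ranges; the write-up only says that "high motor speed"
# rows were labeled as a motor problem and normal rows as a working door.
NORMAL_MOTOR_SPEED = (0.8, 1.2)
FAULTY_MOTOR_SPEED = (1.8, 2.5)


def simulate_reading(rng, faulty):
    """Build one labeled row of mock door data."""
    low, high = FAULTY_MOTOR_SPEED if faulty else NORMAL_MOTOR_SPEED
    return {
        "motor_speed": round(rng.uniform(low, high), 3),
        "label": "motor problem" if faulty else "No Problems",
    }


def simulate_dataset(size, fault_rate=0.2, seed=0):
    """Generate `size` rows, roughly `fault_rate` of them labeled as faulty."""
    rng = random.Random(seed)  # seeded so the mock data is reproducible
    return [simulate_reading(rng, rng.random() < fault_rate) for _ in range(size)]
```

Rows like these could serve as training examples for a classifier, and the same generator can stand in for a door when testing the server end to end.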

## Challenges we ran into

The hardest parts were:

* Simulating data (with no background in sliding doors, the concept of sliding doors sending data was pretty abstract).

* Learning how to use machine learning (turns out this isn't so easy) and implement tensorflow

* Running tensorflow on a live server.

## Accomplishments that we're proud of

## What we learned

* A lot about modern day sliding doors

* The basics of machine learning with tensorflow

* Discovered floydhub

## What we could have improved on

There are several things we could've done (and wanted to do) but either didn't have time or didn't have enough data to. For example:

* Instead of predicting a fix and returning it, the server can predict a set of potential fixes in order of likelihood, then send them to the technician who can look into each suggestion, and select the suggestion that worked. This way, the neural network could've learned a lot faster over time. (Currently, it adds the predicted fix to its training data, which would make for bad results.)

* Instead of having a fixed set of door "problems" for the door, we could have built the app so that in the beginning, when the neural network hasn't learned yet, it asks the technician for input every time they fix the door (so it can learn without the data we simulated, as this is what would have to happen in a real environment)

* We could have made a much better interface for the app

* We could have added support for a wider variety of doors (eg. different models of sliding doors)

* We could have had a more secure (encrypted) data transfer method

* We could have had a larger set of attributes for the door

* We could have factored more into decisions (for example, detecting a problem if a door opens, but never closes).
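The first improvement above, returning several candidate fixes in order of likelihood instead of a single prediction, can be sketched like this. It assumes the classifier exposes one raw score per fix; the function name and the example fix names are hypothetical.

```python
import math


def rank_fixes(scores):
    """Return (fix, probability) pairs sorted from most to least likely.

    `scores` maps each candidate fix to a raw classifier score. A softmax
    converts the scores into probabilities that sum to 1.
    """
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {fix: math.exp(score - peak) for fix, score in scores.items()}
    total = sum(exps.values())
    ranked = [(fix, value / total) for fix, value in exps.items()]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)
```

A technician would work down the returned list, and the fix that actually worked could then be stored as the training label in place of the model's own guess.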

|

## Inspiration

Tinder but Volunteering

## What it does

Connects people to volunteering organizations. Makes volunteering fun, easy and social

## How we built it

react for web and react native

## Challenges we ran into

So MANY

## Accomplishments that we're proud of

Getting a really solid idea and a decent UI

## What we learned

SO MUCH

## What's next for hackMIT

|

partial

|

## 📖✈️Inspiration

For the past year and a half our team has been quarantined in our homes, dreaming about the day when we would get to travel the world and explore. We made the most of our new lives by studying, working, starting new workout routines, cooking and of course watching A LOT of TikTok.

Believe it or not though, when all those things got too boring, we actually picked up a book and read. In fact, according to Global English Editing, 35% of individuals worldwide have been reading more during the pandemic.

So our team wanted to find a way to capitalize on both the incoming travel industry boom and the recent upward trends in reading. Something we can do by helping our users pick the perfect next travel destination based on their recent reading list.

Not only will this push individuals to travel to new places, but it can help remove the stress and anxiety around choosing a travel destination. With the world's most popular destinations likely to rise in price and congestion, new suggestions will be ever so important.

So join the movement, and **livre abroad**!

## 🏗️What it does

LivreAbroad is a web platform that allows users to record a list of books and receive a personalized list of the top travel destinations for them based on their reading. Not only will users receive amazing travel recommendations, but our application will also auto-populate a Pinterest board with pictures of the locations we recommend for them to visit.

## 🔨 How we built it

* **Frontend:** built in React using Material UI and deployed on Microsoft Cloud Services (Azure)

* **Backend:** Python backend

* **Database:** CockroachDB

* **API:** Hootsuite API

* **Machine Learning:** TensorFlow

* **Branding and Design:** Figma

LivreAbroad is integrated with the Hootsuite API to take advantage of its unique and easy-to-use features to make our application seamless and fully integrated into our user's life by connecting to their social media accounts. After our application makes a recommendation it adds a picture of our location to a unique Pinterest board automatically. This way the user can see all the places they are recommended. We can further expand our integrations with Hootsuite by sending Facebook messages to friends of the user to invite them on these trips. As you can see on the Hootsuite dashboard our application has scheduled to post these travel locations to my Pinterest Board.

LivreAbroad uses NLTK’s natural language processing library as well as Tensorflow Machine Learning to generate travel destinations based on your recent reads. We used CockroachDB to store each user’s books, as well as our list of cities mapped to adjectives and genres that match that city. Storing the genres and cities with CockroachDB made it really easy to get a list of city recommendations based on the genres of the books inputted. And we stored the cities in Cockroach along with their adjectives so that we could use our Machine Learning Algorithm to determine genres for cities without them.
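As a rough illustration of the genre-to-city lookup described above, here is a minimal sketch. It assumes the mapping is a simple in-memory dictionary; the sample cities and genres and the function name `recommend_cities` are hypothetical, and the real project kept this data in CockroachDB and used NLTK and TensorFlow for the genre inference.

```python
from collections import Counter

# Hypothetical sample data; the project stored its city/genre mapping in CockroachDB.
CITY_GENRES = {
    "Paris": {"romance", "historical"},
    "Reykjavik": {"fantasy", "mystery"},
    "Tokyo": {"science fiction", "mystery"},
}


def recommend_cities(book_genres, city_genres=CITY_GENRES, top_n=3):
    """Rank cities by how many of the reader's book genres they match."""
    counts = Counter(g.lower() for g in book_genres)
    scores = {}
    for city, genres in city_genres.items():
        # A Counter returns 0 for genres the reader has not read.
        score = sum(counts[g] for g in genres)
        if score > 0:
            scores[city] = score
    # Highest score first; ties are broken alphabetically for a stable order.
    ranked = sorted(scores, key=lambda c: (-scores[c], c))
    return ranked[:top_n]
```

Calling `recommend_cities(["Mystery", "mystery", "Romance"])` ranks the two mystery-tagged cities ahead of Paris, and a reading list with no matching genre returns an empty list.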

## 🚫 Challenges we ran into

* working with the Hootsuite API to post images on Pinterest

* building out the logic for location recommendation modelling

* scraping book information

## 🏆Accomplishments that we're proud of

* the way we work together, and the fun we had as a team

* creating a working product that we might even use ourselves

## 🧠 What we learned

* Sleep and balance is important

* To check university class deadlines prior to the weekend

## 🔮What's next for LivreAbroad

It's in your hands! Next time you're looking for a new travel location, try LivreAbroad and see what amazing adventures will sprout from our recommendations.

With that in mind, we'd love to build in the ability for users to input the places they have travelled and then receive recommendations of books they can read. Pictures of these books would also be automatically posted to a Pinterest board for the user. We would also provide links to each book's Goodreads summary, which could be done by integrating with Pinterest's API and the Goodreads API. We can also further use Hootsuite to message friends about the user's travel plans and invite them, to facilitate group trips.

|

## Inspiration

Traveling can often be stressful, with countless variables to consider such as budget, destinations, activities, and personal interests. We aimed to create an app that simplifies travel planning, making it more enjoyable and personalized. Marco.ai was inspired by the need for a comprehensive travel companion that not only provides an engaging way to 'match' with various trip components but also offers real-time, personalized recommendations based on your location, ensuring users can make the most out of their trips.

## What it does

Marco.AI uses your geolocation on a trip to find food and activities that might be of interest to you. In comparison to competitors like Expedia and Google Search, Marco.AI takes your personalized data and your present location as input and provides live recommendations based on your past preferences and adventures! We use an initial survey to store a profile with your preferences, and after each experience we ask for a 1-10 rating.

## How we built it

To build Marco.ai, we integrated You.com, Groq Llama3 8b, and GPT-4o APIs. For the backend, we utilized python to handle user data, travel plans, and interactions with third-party APIs in a JSON format. For each experience, our model generates keywords relating to a 1-10 rating of the experience. Ex: a 10/10 for beach would have keywords like "ocean, calm, relaxing." For the mobile app frontend, we chose React Native to connect to our model output and present an easy to use interface. Python's standard libraries for handling JSON data were employed to parse and save AI-generated recommendations. Additionally, we implemented functionalities to dynamically update user ratings and preferences, ensuring the app remains relevant and personalized.
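To make the rating-and-keyword idea more concrete, here is a minimal sketch of how a rated experience might be folded into a stored preference profile using Python's standard `json` module. The JSON field names (`rating`, `keywords`) and both function names are assumptions for illustration, not the project's actual schema.

```python
import json


def update_profile(profile, experience_json):
    """Fold one rated experience into a keyword -> (rating total, count) profile."""
    experience = json.loads(experience_json)
    rating = experience["rating"]
    if not 1 <= rating <= 10:
        raise ValueError("rating must be between 1 and 10")
    for keyword in experience["keywords"]:
        total, count = profile.get(keyword, (0, 0))
        profile[keyword] = (total + rating, count + 1)
    return profile


def keyword_score(profile, keyword):
    """Average rating for a keyword, or None if the user never rated it."""
    total, count = profile.get(keyword, (0, 0))
    return total / count if count else None
```

A keyword that appears in several rated experiences ends up with the average of those ratings, which could then be used to rank new suggestions.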

## Challenges we ran into

As first-time hackers, we definitely ran into a few obstacles. Combining multiple APIs and learning how they work took a while to figure out and implement into our model. We started off by using MindsDB and trying to utilize a RAG model with You.com. However, we realized that for our purpose, we didn't need a large-scale model management platform and decided to move towards prompt engineering. Engineering our prompts for GPT-4o was a back-and-forth process of learning how to properly utilize the AI to give us our output in a formatted way, making it easier to parse.

The most challenging aspect for our team was frontend design. This was our first experience with app development, and the learning curve was steep. We are happy that we are able to provide a functional prototype that can already be used by people while they plan a trip!

## What's next for marco.ai

In the future we hope to bring this model to life with payment integration and a feature to be able to swipe through and save different elements of your trip: hotel, flights, food based on your interests and budgets then pay at the end. Additionally, we aspire to transform Marco.ai into a social platform where users can share their past vacation experiences, likes, dislikes, and recommendations, creating a vibrant community of travel enthusiasts! Marco aims to pioneer a social app focused on encompassing travel experiences, filling a gap that has yet to be explored in the social media landscape.

|

## Inspiration

As a startup founder, it is often difficult to raise money, but the amount of equity that is given up can be alarming for people who are unsure if they want the gasoline of traditional venture capital. With VentureBits, startup founders take a royalty deal and dictate exactly the amount of money they are comfortable raising. Also, everyone can take risks on startups as there are virtually no starting minimums to invest.

## What it does

VentureBits allows consumers to browse a plethora of early stage startups that are looking for funding. In exchange for giving them money anonymously, the investors will gain access to a royalty deal proportional to the amount of money they've put into a company's fund. Investors can support their favorite founders every month with a subscription, or they can stop giving money to less promising companies at any time. VentureBits also allows startup founders who feel competent to raise just enough money to sustain them and work full-time as well as their teams without losing a lot of long term value via an equity deal.

## How we built it

We drew out the schematics on the whiteboards after coming up with the idea at YHack. We thought about our own experiences as founders and used that to guide the UX design.

## Challenges we ran into

We ran into challenges with finance APIs as we were not familiar with them. A lot of finance APIs require approval to use in any official capacity outside of pure testing.

## Accomplishments that we're proud of

We're proud that we were able to create flows for our app and even get a lot of our app implemented in React Native. We also began to work on structuring the data for all of the companies on the network in Firebase.

## What we learned

We learned that finance backends and logic to manage small payments and crypto payments can take a lot of time and a lot of fees. It is a hot space to be in, but ultimately one that requires a lot of research and careful study.

## What's next for VentureBits

We plan to see where the project takes us if we run it by some people in the community who may be our target demographic.

|

losing

|

## Project Purpose:

Everyone has been in a situation where they need to talk to someone, whether to unload a burden or ask for trusted advice. But does everyone have someone they trust enough to always talk to? Unfortunately, it doesn’t seem like it.

### Let the Data Speak:

* **Fig 1:** Current generation reports the highest levels of loneliness (79%)

* **Fig 2:** Number of close friendships significantly declined from 1990 to 2021

* **Fig 3:** The most alarming trend: the number of suicides has been rising since the 2000s

## Project Motivation:

I am an international student. Making friends was always hard, and I often felt isolated. I had no one to talk to about it, except my ex-girlfriend, but the long-distance relationship didn’t survive due to the distance. This added to my plate. I didn’t have close friends, and therapy was either expensive or had a long waitlist when provided by school. I found myself talking to ChatGPT about my feelings. I noticed it really helped me get things off my chest and shift from a dramatic point of view to a more realistic one. ChatGPT helped me overcome moments of isolation and emotional overloads.

However, there were a couple of issues. Every time I started talking to it, it had no idea who I was. It gave me generic responses until I shared more details deeper into the conversation. But looking back on these crisis moments, I believe ChatGPT helped me avoid having more worries and anxiety today.

## Conceptual Key Features:

I identified four main issues from my experience interacting with it in crisis moments, which I aim to solve in this project:

1. Lack of long-term memory.

2. Lack of short-term relevance.

3. Its communication style being more "chat-botish" instead of "humanish."

4. It never checked in on me.

These four factors are crucial to make it feel less like a generic chatbot and more like a caring friend.

## Technical Key Features:

1. **Long-Term Memory:**

* **Dynamic Categorical Memory:** After each interaction, tailored GPT agents update a set of summaries related to aspects of life:

```text
core_values_and_beliefs, mental_and_emotional_well_being, family_relationships, health_issues, personal_background,
aspirations_and_fears, profile_summary, strengths_and_weaknesses, romantic_relationships, social_circle_dynamics,
daily_routines, work_environment_and_dynamics, most_recent_challenges, most_recent_accomplishments,
emotional_triggers, communication_style, hobbies_and_interests, personal_development_and_skills,
financial_situation_and_goals, academic_performance_and_experiences, social_life_and_friendships,
physical_health_and_lifestyle, personal_interests_and_hobbies, past_traumas
```

* The existing summary in each category is updated from the current interaction. Once updated, a master summary is generated, which guides the next interaction.

2. **Short-Term Relevance:**

* **Targeted Retrieval:** During conversation, a specialized GPT agent decides whether pulling up any categorical summaries is relevant. It can return an empty list or a list of three most relevant summaries. If not empty, a summarizing agent integrates them into the conversation seamlessly, providing GPT with relevant information.

3. **Chatbot Style Improvement:**

* **Three Layers of Humanization:**

1. **Master Prompt:** Directs GPT to respond in a human-like, “SMS-chat-with-friend” style.

2. **Personal User Communication Preference:** Pulled from the "communication\_style" category and dynamically updated after each interaction.

3. **Humanize Filter:** This top-layer filter ensures the response style is relevant to the last ten messages, splits long answers into multiple messages, and removes unnecessary periods to emulate human SMS texting.

4. **Check-In Feature:**

* **Check-In Scheduler:** After each interaction, this agent evaluates if a check-in message would be beneficial. It generates a check-in message and schedules a follow-up (within 1-24 hours) based on the context. The check-in message is sent to the user at the scheduled time.
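The "Humanize Filter" step above (splitting long answers into several messages and dropping unnecessary periods) can be sketched roughly as follows. This is only an illustration under assumptions: the function name `humanize`, the `max_len` threshold, and the sentence-splitting rule are hypothetical, and the project's actual filter is prompt-based rather than rule-based.

```python
import re


def humanize(reply, max_len=80):
    """Split a reply into SMS-sized messages and drop trailing periods."""
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", reply.strip()) if s]
    messages, current = [], ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_len:
            # The message would get too long, so start a new one.
            messages.append(current)
            current = sentence
        else:
            current = candidate
    if current:
        messages.append(current)
    # Remove a single trailing period, but keep "...", "?" and "!".
    return [re.sub(r"(?<!\.)\.$", "", m) for m in messages]
```

With a small `max_len`, a two-sentence reply comes back as two separate messages, and the first one loses its final period the way a casual text message would.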

## Goals:

The COVID vaccine does not guarantee that you will never get COVID. Similarly, this bot does not promise to completely eliminate the issues of loneliness and isolation.

Just as the vaccine lowers the risk of severe complications from the virus, the goal of this project is to lower the levels of loneliness and isolation, potentially preventing the tragedy of loneliness-related suicides.

## Challenges:

* Prompt engineering was a huge one. The project contains 35 prompts for 10 tailored agents. Writing them so that the agents produced the desired outcome was not easy.

* The biggest one was to make GPT talk in a human-like SMS conversation style. It took me multiple layers (3) and many failed prompts to craft a style that feels human.

* It was hard to ensure that the LLM agents return the output in the expected format, which could further be used in standard algorithms.
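One way to guard against malformed agent output, sketched here under assumptions, is to validate the response before handing it to ordinary code. The example takes the targeted-retrieval agent, which should return either an empty list or up to three category names. It assumes the agent is asked to reply with a JSON array; the function name `parse_retrieval_output` is hypothetical, and `CATEGORIES` is only a subset of the memory categories listed earlier.

```python
import json

# A subset of the memory categories, used here only for illustration.
CATEGORIES = {
    "family_relationships",
    "health_issues",
    "communication_style",
    "daily_routines",
    "past_traumas",
}


def parse_retrieval_output(raw, allowed=CATEGORIES, max_items=3):
    """Return the valid category names from an agent reply, or [] if malformed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return []
    if not isinstance(data, list):
        return []
    valid = []
    for item in data:
        # Keep only known categories, without duplicates, in the agent's order.
        if isinstance(item, str) and item in allowed and item not in valid:
            valid.append(item)
    return valid[:max_items]
```

Falling back to an empty list means a badly formatted reply simply skips retrieval for that turn instead of crashing the conversation.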

## Accomplishments That I'm Proud Of:

* I think it talks like a caring friend. Many prompts were tested to ensure that. As of right now, the style is nice and mellow.

* Targeted retrieval is analyzing what aspects of memory will be beneficial for the current convo, pulling, summarizing, and injecting them. Pretty sick.

* Check-in scheduling is a nice and needed feature. Analyze the convo and schedule a check-in, almost like a doctor.

## What I Learned:

* Prompt engineering 101.

* How to make AI LLM agents and ensure that the output from them is in a required specific format.

* How to make 10 agents work together cohesively: generate summaries, and based on generated summaries, schedule and insert check-ins into the database table.

## Future Vectors:

* Send memes to the user.

* Improve targeted retrieval call logic.

* Add scheduled user suggestions for deeper communication style personalization, such as: "How is my conversation style so far? Is it too optimal/dull? I can adjust."

* Check-in scheduling: Improve the time scheduling mechanism.

|

## Inspiration🎓

To make the context clear, we are three students who believe that meaningful work facilitates self-content, inspiration and projects that either help others or ourselves. Since we joined university(we are all first-years), we did not find what we did so meaningful anymore. We had great expectations since we were all enrolled in a top 100 university. Still, we were surprised to see how little they cared about our creativity, ideas, and overall potential. Things were too theoretical; some answers in different disciplines, which turned out to be correct, **were dismissed because they were not based on ”the old ways”**.

**Also, there was no easy way to cooperate with other disciplines.** Unless you go out partying, and by some luck, you are not an introvert, there is no easy way to meet someone. For example, maybe as a business student, you cannot really meet someone from computer science or design so easily. Unless you go out of your way, there is no simple way to meet like-minded people that could help you create something meaningful.

Obviously, there are apps such as Instagram, Facebook, LinkedIn, Reddit and Fiverr. However, given the way they are created and marketed, there is not much chance you will find what you need. Almost no one answers messages from strangers on Instagram or Facebook. On LinkedIn, people are there for internships and money, pretending to be some fancy intellectual. Reddit is not really used by that many students, not as a way to meet students anyway, and if you need a specialist on Fiverr for anything, then unless you are rich, good luck with paying them.

We want to create a safe place for us, young individuals, who want something more than base our happiness on exam grades, work for a corporation for our entire life after 3-5 years of grinding for good marks, for subjects that don’t necessarily help you at anything or where we can meet students from all the disciplines, not only our own.

**“Everybody is a genius. But if you judge a fish by its ability to climb a tree, it will live its whole life believing that it is stupid” - actually not by Albert Einstein.**

We strongly advise people to pursue a university education; we are not against it, though we advise not basing your life upon it; it’s great as a safety net and learning place. We don’t like the old premise that you will do nothing with your life unless you finish your degree. We want to bring the youth to their true potential, not inhibit it. Each year, the crisis of meaning expands, especially in young individuals. If we cannot give rebirth to it, we would at least love to contribute by providing students with another chance to create, develop and meet other great colleagues.

## What it does🔧

**StudHub** is an app with different features that facilitate flow in terms of building projects, meeting open-minded individuals and discussing with and helping fellow students.

**The main features** will be the main page, creating a post(with a specific template) and a search bar. The main page will consist of projects posted based on a template that doesn’t reveal too much, though enough to make people get the idea. For example, you are a computer science student with a great app idea. You are a great back-end developer though you know nothing in UI/UX design; respectively, you are pretty introverted and don’t know how to actually create a brand out of it as well. By posting your idea succinctly and selecting the skills you need for your projects, people with those specific skills, if they like your idea, can send you a request to get in touch with you. On the other hand, you can also search for these people in the search bar.

As stated above, if you are a creative individual, you can post your ideas and find the people you need for projects. On the other hand, if you want to do something meaningful with your free time but you are sick of volunteering work that just gives you a diploma and a line in your cv, this is a place for you too!

**Other Features** consist of:

1. “Reddit-style” thread (we are still developing/designing our own style for this), where you can ask questions, discuss different topics, and search for answers to whatever questions you have. It can be mostly based around student problems, passions, anything really! We want to create a safe space for meeting other students too!

2. Messages

3. Notifications

4. Profile (where you can select your skills and write about yourself; we want the real you! We’ll try to make sure no prospective employer can judge you based on that!)

5. Learning environment; in the future, here, we will create courses on practical topics that are important but not so often taught in university. (public speaking, leadership, etc.)

We initially viewed it as a website. We have started development on Firebase. Though during the hackathon we realized that our main target demographic(Students and young individuals) uses mostly their phones, which meant an app would be significantly better and preferred. Then we started working on Flutter.

## How we built it👨🎨

Using the Flutter framework, we built a cross-platform app (Android/IOS/Web). For the backend, we used Firebase as a provider, its Authentication module, and also Firebase Firestore for our NoSQL database.

## Challenges we ran into⚖️

The post feature is the greatest challenge, and it is still under discussion. We want to build a template and guideline that is as straightforward as possible but does not disclose so much of an idea that someone could just take it. We are thinking about how to implement NDAs for when you discuss it with interested people, but right now, the focus is to create a working MVP.

The overall concept is pretty hard to explain without looking like we are against universities. We genuinely find them very important to one's individual development. We are actively working to find a way to tell our story and create a brand that supports creativity and the individual while not sounding too much against the traditional model.

Also, due to the many features we want to add, it is a constant struggle to make everything as efficient, aesthetic and easy as possible. We are pretty much in an age of attention deficit, so we want to build something neat and user-friendly.

## Accomplishments that we're proud of🧐

We are genuinely surprised by the fact that our app constantly worked almost without any problems throughout the whole 36h.

We never fought over any subject; we constantly built on each other's ideas and realized that we work well together as a team. We discussed any problems we had calmly and found a middle way.

## What we learned 👩🏫

A new programming language: we had never worked with Dart before the start of the Hackathon!

A new framework: it was also our first time using Flutter!

How to listen to each other more without interrupting. And most importantly, working without sleep for 36 hours and a lot of caffeine! :D

## What's next for StudHub🚀

We have discussed this thoroughly, and after the Hackathon, we need to find an affordable UX/UI designer (ironically enough, our app would have been so helpful for this) to either join our project or at least build a starting page where we can have a subscription list, where we could make the "proof of concept" for our idea.

Eventually, we will send emails with updates on the project. During this Hackathon, we realized how in love we are with this idea, and we want to continue building on it and eventually launching it.

For now, our focus will be to make it efficient, aesthetic, user-friendly and functional. We want to go through what we built during the Hackathon to analyze what we did in a rush and what we want to keep. There are many things that need more thought.

We also want to add "Meet your Mentor"! In an even further future, we want to implement a feature where our dear users can talk with experienced people from a diverse array of subjects and ask for advice on making a project, opening a business, starting research, etc.

We hope that our concept will catch on and become a big trend, eventually leading many young individuals with great potential to meet each other and build beautiful things! I personally feel lucky to have met the people I have worked with in this Hackathon because they became some of my best friends that I spend much time with. We hope to take the luck element out and make it easier for people to meet such beautiful people, so that they will not only create cool projects and businesses but maybe also become friends for life.

Do not forget, creating stuff is cool but meeting great people is sometimes even cooler!

|

## Inspiration

There is a need for an electronic health record (EHR) system that is secure, accessible, and user-friendly. Currently, hundreds of EHRs exist and different clinical practices may use different systems. If a patient requires an emergency visit to a certain physician, the physician may be unable to access important records and patient information efficiently, requiring extra time and resources that strain the healthcare system. This is especially true for patients traveling abroad, where doctors in one country may be unable to access a centralized healthcare database in another.

In addition, there is a strong potential to utilize the data available for improved analytics. In a clinical consultation, patient description of symptoms may be ambiguous and doctors often want to monitor the patient's symptoms for an extended period. With limited resources, this is impossible outside of an acute care unit in a hospital. As access to the internet is becoming increasingly widespread, patients may be able to self-report certain symptoms through a web portal if such an EHR exists. With a large amount of patient data, artificial intelligence techniques can be used to analyze the similarity of patients to predict certain outcomes before adverse events happen such that intervention can occur timely.

## What it does

myHealthTech is a block-chain EHR system that has a user-friendly interface for patients and health care providers to record patient information such as clinical visitation history, lab test results, and self-reporting records from the patient. The system is a web application that is accessible from any end user that is approved by the patient. Thus, doctors in different clinics can access essential information in an efficient manner. With the block-chain architecture compared to traditional databases, patient data is stored securely and anonymously in a decentralized manner such that third parties cannot access the encrypted information.

Artificial intelligence methods are used to analyze patient data for prognostication of adverse events. For instance, a patient's reported mood scores are compared to a database of similar patients that have resulted in self-harm, and myHealthTech will compute a probability that the patient will trend towards a self-harm event. This allows healthcare providers to monitor and intervene if an adverse event is predicted.
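The comparison against similar patients could be sketched, purely as an assumption about one possible approach, with a small nearest-neighbour estimate: find the reference patients whose reported scores are closest and report the share of them who had an adverse outcome. The function name, the use of Euclidean distance, and the value of `k` are hypothetical; the write-up does not specify the actual model.

```python
def adverse_event_probability(patient_scores, reference, k=3):
    """Estimate risk as the share of adverse outcomes among the k most similar patients.

    `reference` is a list of (scores, had_adverse_event) pairs, where each
    `scores` list has the same length as `patient_scores`.
    """
    def distance(a, b):
        # Euclidean distance between two score vectors.
        return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

    nearest = sorted(reference, key=lambda rec: distance(patient_scores, rec[0]))[:k]
    if not nearest:
        raise ValueError("reference set is empty")
    return sum(1 for _, adverse in nearest if adverse) / len(nearest)
```

The result is only a rough frequency among neighbours, not a calibrated clinical probability, so it would serve as a prompt for a provider to look closer rather than as a diagnosis.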

## How we built it

The block-chain EHR architecture was written in Solidity, using Truffle, testRPC, and Remix. The web interface was written in HTML5, CSS3, and JavaScript. The artificial intelligence predictive behavior engine was written in Python.

## Challenges we ran into

The greatest challenge was integrating the back-end and front-end components. We had challenges linking smart contracts to the web UI and executing the artificial intelligence engine from a web interface. Several of these challenges require compatibility troubleshooting and running a centralized python server, which will be implemented in a consistent environment when this project is developed further.

## Accomplishments that we're proud of

We are proud of working with novel architecture and technology, providing a solution to solve common EHR problems in design, functionality, and implementation of data.

## What we learned

We learned the value of leveraging the strengths of different team members from design to programming and math in order to advance the technology of EHRs.

## What's next for myHealthTech?

Next is the addition of more self-reporting fields to increase the robustness of the artificial intelligence engine. In the case of depression, there are clinical standards from the Diagnostic and Statistical Manual that identify markers of depression such as mood level, confidence, energy, and feelings of guilt. By monitoring these values for individuals who have recovered, are depressed, or have inflicted self-harm, the AI engine can predict the behavior of new individuals much more reliably, using logistic regression on the data and a deep learning approach.

There is an issue with the inconvenience of reporting symptoms. Hence, a logical next step would be to implement smart home technology, such as an Amazon Echo, for the patient to interact with for self reporting. For instance, when the patient is at home, the Amazon Echo will prompt the patient and ask "What would you rate your mood today? What would you rate your energy today?" and record the data in the patient's self reporting records on myHealthTech.

These improvements would further the capability of myHealthTech as a highly dynamic EHR with strong analytical capabilities to understand and predict patient outcomes and improve treatment options.

|

losing

|

## Inspiration

dwarf fortress and stardew valley

## What it does

simulates farming

## How we built it

quickly

## Challenges we ran into

learning how to farm

## Accomplishments that we're proud of

making a frickin gaem

## What we learned

games are hard

farming is harder

## What's next for soilio

make it better

|

# CourseAI: AI-Powered Personalized Learning Paths

## Inspiration

CourseAI was born from the challenges of self-directed learning in our information-rich world. We recognized that the issue isn't a lack of resources, but rather how to effectively navigate and utilize them. This inspired us to leverage AI to create personalized learning experiences, making quality education accessible to everyone.

## What it does

CourseAI is an innovative platform that creates personalized course schedules on any topic, tailored to the user's time frame and desired depth of study. Users input what they want to learn, their available time, and preferred level of complexity. Our AI then curates the best online resources into a structured, adaptable learning path. Key features include:

* AI-driven content curation from across the web

* Personalized scheduling based on user preferences

* Interactive course customization through an intuitive button-based interface

* Multi-format content integration (articles, videos, interactive exercises)

* Progress tracking with checkboxes for completed topics

* Adaptive learning paths that evolve based on user progress

## How we built it

We developed CourseAI using a modern, scalable tech stack:

* Frontend: React.js for a responsive and interactive user interface

* Backend Server: Node.js to handle API requests and serve the frontend

* AI Model Backend: Python for its robust machine learning libraries and natural language processing capabilities

* Database: MongoDB for flexible, document-based storage of user data and course structures

* APIs: Integration with various educational content providers and web scraping for resource curation

The AI model uses advanced NLP techniques to curate relevant content, and generate optimized learning schedules. We implemented machine learning algorithms for content quality assessment and personalized recommendations.

## Challenges we ran into

1. API Cost Management: Optimizing API usage for content curation while maintaining cost-effectiveness.

2. Complex Scheduling Logic: Creating nested schedules that accommodate various learning styles and content types.

3. Integration Complexity: Seamlessly integrating diverse content types into a cohesive learning experience.

4. Resource Scoring: Developing an effective system to evaluate and rank educational resources.

5. User Interface Design: Creating an intuitive, button-based interface for course customization that balances simplicity with functionality.

## Accomplishments that we're proud of

1. High Accuracy: Achieving a 95+% accuracy rate in content relevance and schedule optimization.

2. Elegant User Experience: Designing a clean, intuitive interface with easy-to-use buttons for course customization.

3. Premium Content Curation: Consistently sourcing high-quality learning materials through our AI.

4. Scalable Architecture: Building a robust system capable of handling a growing user base and expanding content library.

5. Adaptive Learning: Implementing a flexible system that allows users to easily modify their learning path as they progress.

## What we learned

This project provided valuable insights into:

* The intricacies of AI-driven content curation and scheduling

* Balancing user preferences with optimal learning strategies

* The importance of UX design in educational technology

* Challenges in integrating diverse content types into a cohesive learning experience

* The complexities of building adaptive learning systems

* The value of user-friendly interfaces in promoting engagement and learning efficiency

## What's next for CourseAI

Our future plans include:

1. NFT Certification: Implementing blockchain-based certificates for completed courses.

2. Adaptive Scheduling: Developing a system for managing backlogs and automatically adjusting schedules when users miss sessions.

3. Enterprise Solutions: Creating a customizable version of CourseAI for company-specific training.

4. Advanced Personalization: Implementing more sophisticated AI models for further personalization of learning paths.

5. Mobile App Development: Creating native mobile apps for iOS and Android.

6. Gamification: Introducing game-like elements to increase motivation and engagement.

7. Peer Learning Features: Developing functionality for users to connect with others studying similar topics.

With these enhancements, we aim to make CourseAI the go-to platform for personalized, AI-driven learning experiences, revolutionizing education and personal growth.

|

## What it does

"ImpromPPTX" uses your computer microphone to listen while you talk. Based on what you're speaking about, it generates content to appear on your screen in a presentation in real time. It can retrieve images and graphs, as well as making relevant titles, and summarizing your words into bullet points.

## How We built it

Our project is composed of many interconnected components, which we detail below:

#### Formatting Engine

To know how to adjust the slide content when a new bullet point or image needs to be added, we had to build a formatting engine. This engine uses flex-boxes to distribute space between text and images, and has custom JavaScript to resize images based on aspect ratio and fit, and to switch between the multiple slide types (Title slide, Image only, Text only, Image and Text, Big Number) when required.
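The aspect-ratio resizing step can be illustrated with a minimal sketch. It is written in Python for consistency with the other examples here, whereas the engine described above runs as JavaScript in the browser. The function name `fit_image` and the "contain" fit policy are assumptions for illustration, not the project's actual code.

```python
def fit_image(img_w, img_h, box_w, box_h):
    """Scale an image to fit inside a box while preserving its aspect ratio.

    Hypothetical helper: the largest scale factor that keeps both
    dimensions inside the box is the smaller of the two axis ratios.
    """
    if img_w <= 0 or img_h <= 0:
        raise ValueError("image dimensions must be positive")
    scale = min(box_w / img_w, box_h / img_h)
    return round(img_w * scale), round(img_h * scale)


# A wide image is limited by the box width, a tall one by the box height.
print(fit_image(1600, 900, 800, 600))   # (800, 450)
print(fit_image(500, 1000, 800, 600))   # (300, 600)
```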

#### Speech-to-text

We use Google’s speech-to-text API to process audio from the laptop’s microphone. Mobile phones currently do not support the continuous audio implementation of the spec, so we process audio on the presenter’s laptop instead. Speech is transcribed whenever the user holds down their clicker button, and when they let go, the aggregated text is sent to the server over WebSockets to be processed.
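The hold-to-talk behaviour (collect transcript fragments while the button is held, send one aggregated message on release) can be sketched as follows. This is an illustrative Python model only: the real logic lives in browser JavaScript, and the class name `HoldToTalkBuffer` and its `send` callback are hypothetical stand-ins for the WebSocket send.

```python
class HoldToTalkBuffer:
    """Collects transcript fragments between a button press and release."""

    def __init__(self, send):
        self._send = send      # callback standing in for a WebSocket send
        self._parts = []
        self._held = False

    def press(self):
        """Button pressed: start a fresh aggregation."""
        self._held = True
        self._parts = []

    def on_transcript(self, text):
        """Called for each recognized fragment; ignored unless the button is held."""
        if self._held and text.strip():
            self._parts.append(text.strip())

    def release(self):
        """Button released: send the aggregated text once and reset."""
        self._held = False
        message = " ".join(self._parts)
        self._parts = []
        if message:
            self._send(message)
        return message


sent = []
buffer = HoldToTalkBuffer(sent.append)
buffer.press()
buffer.on_transcript("here is a picture ")
buffer.on_transcript("of a golden retriever")
buffer.release()
print(sent)  # ['here is a picture of a golden retriever']
```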

#### Topic Analysis

Fundamentally, we needed a way to determine whether a given sentence included a request for an image or not. So we gathered a repository of sample sentences from BBC news articles for “no” examples, and manually curated a list of “yes” examples. We then used Facebook’s deep learning text classification library, fastText, to train a custom NN that could perform text classification.

#### Image Scraping

Once we have a sentence that the NN classifies as a request for an image, such as “and here you can see a picture of a golden retriever”, we use part-of-speech tagging and some tree theory rules to extract the subject, “golden retriever”, and scrape Bing for pictures of the golden animal. These image URLs are then sent over WebSockets to be rendered on screen.

#### Graph Generation

Once the backend detects that the user specifically wants a graph which demonstrates their point, we employ matplotlib code to programmatically generate graphs that align with the user’s expectations. These graphs are then added to the presentation in real-time.

#### Sentence Segmentation

When we receive text back from the Google speech-to-text API, it doesn’t naturally add periods when we pause in our speech. This can give more conventional NLP analysis (like part-of-speech analysis) some trouble, because the text is grammatically incorrect. We took a sequence-to-sequence transformer architecture, *seq2seq*, and used transfer learning to train a new head capable of classifying the borders between sentences. This let us add punctuation back into the text before the rest of the processing pipeline.

#### Text Title-ification

Using part-of-speech analysis, we determine which parts of a sentence (or sentences) would best serve as a title to a new slide. We do this by searching through sentence dependency trees to find short sub-phrases (1-5 words optimally) which contain important words and verbs. If the user signals with the clicker that they need a new slide, this function is run on their text until a suitable sub-phrase is found. When it is, a new slide is created using that sub-phrase as a title.
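As a rough illustration of searching a dependency tree for a short title phrase, the sketch below scores every subtree of at most five words by how many content words it contains and prefers the shorter phrase on ties. The `Token` structure, the hand-written parse, and the scoring rule are all assumptions made for this example; the project's actual rules are not described in detail.

```python
from dataclasses import dataclass


@dataclass
class Token:
    text: str
    pos: str    # coarse part-of-speech tag
    head: int   # index of the parent token, -1 for the root


CONTENT_POS = {"NOUN", "PROPN", "VERB", "ADJ"}  # assumed "important" tags


def subtree(tokens, i):
    """Indices of token i and all of its descendants, in sentence order."""
    found = {i}
    changed = True
    while changed:
        changed = False
        for j, tok in enumerate(tokens):
            if tok.head in found and j not in found:
                found.add(j)
                changed = True
    return sorted(found)


def pick_title(tokens, max_words=5):
    """Return the short subtree with the most content words, or None."""
    best, best_key = None, (0, 0)
    for i in range(len(tokens)):
        span = subtree(tokens, i)
        if len(span) > max_words:
            continue
        score = sum(tokens[j].pos in CONTENT_POS for j in span)
        key = (score, -len(span))  # more content words first, then shorter
        if key > best_key:
            best, best_key = span, key
    if best is None:
        return None
    return " ".join(tokens[j].text for j in best)


# Hand-built parse of "next let us talk about renewable energy storage".
SENTENCE = [
    Token("next", "ADV", 1), Token("let", "VERB", -1), Token("us", "PRON", 3),
    Token("talk", "VERB", 1), Token("about", "ADP", 3),
    Token("renewable", "ADJ", 7), Token("energy", "NOUN", 7),
    Token("storage", "NOUN", 4),
]
print(pick_title(SENTENCE))  # renewable energy storage
```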

#### Text Summarization

When the user is talking “normally,” and not signalling for a new slide, image, or graph, we attempt to summarize their speech into bullet points which can be displayed on screen. This summarization is performed using custom Part-of-speech analysis, which starts at verbs with many dependencies and works its way outward in the dependency tree, pruning branches of the sentence that are superfluous.
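The pruning idea can be sketched on a hand-built dependency tree: start at the verb with the most dependents, walk outward, and keep only branches whose dependency label is on an allow-list. The `Token` structure, the `KEEP_DEPS` set, and the example parse are assumptions for illustration; in practice the tree would come from a parser, and the project's actual pruning rules may differ.

```python
from dataclasses import dataclass


@dataclass
class Token:
    text: str
    pos: str    # coarse part-of-speech tag
    head: int   # index of the parent token, -1 for the root
    dep: str    # dependency label relative to the parent


# Assumed allow-list: branches with other labels are treated as superfluous.
KEEP_DEPS = {"nsubj", "dobj", "amod", "compound", "poss", "neg"}


def children(tokens, i):
    """Indices of the direct dependents of token i."""
    return [j for j, tok in enumerate(tokens) if tok.head == i]


def to_bullet(tokens):
    """Prune a parsed sentence down to a short bullet point."""
    verbs = [i for i, tok in enumerate(tokens) if tok.pos == "VERB"]
    if not verbs:
        return " ".join(tok.text for tok in tokens)
    # Start at the verb with the most dependents and work outward.
    start = max(verbs, key=lambda i: len(children(tokens, i)))
    kept, stack = set(), [start]
    while stack:
        i = stack.pop()
        kept.add(i)
        stack.extend(j for j in children(tokens, i) if tokens[j].dep in KEEP_DEPS)
    return " ".join(tokens[i].text for i in sorted(kept))


# "so basically our team quickly built a working prototype over the weekend"
EXAMPLE = [
    Token("so", "ADV", 5, "advmod"), Token("basically", "ADV", 5, "advmod"),
    Token("our", "PRON", 3, "poss"), Token("team", "NOUN", 5, "nsubj"),
    Token("quickly", "ADV", 5, "advmod"), Token("built", "VERB", -1, "ROOT"),
    Token("a", "DET", 8, "det"), Token("working", "ADJ", 8, "amod"),
    Token("prototype", "NOUN", 5, "dobj"), Token("over", "ADP", 5, "prep"),
    Token("the", "DET", 11, "det"), Token("weekend", "NOUN", 9, "pobj"),
]
print(to_bullet(EXAMPLE))  # our team built working prototype
```

Dropping the whole `prep` branch removes "over the weekend" in one step, which is the kind of subtree-level pruning the paragraph above describes.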

#### Mobile Clicker

Since it is really convenient to have a clicker device that you can use while moving around during your presentation, we decided to integrate it into your mobile device. After logging into the website on your phone, we send you to a clicker page that communicates with the server when you click the “New Slide” or “New Element” buttons. Pressing and holding these buttons activates the microphone on your laptop; the server then analyzes the transcribed text and sends the resulting information back in real time. This real-time communication is accomplished using WebSockets.

#### Internal Socket Communication

In addition to the websockets portion of our project, we had to use internal socket communications to do the actual text analysis. Unfortunately, the machine learning prediction could not be run within the web app itself, so we had to put it into its own process and thread and send the information over regular sockets so that the website would work. When the server receives a relevant websockets message, it creates a connection to our socket server running the machine learning model and sends information about what the user has been saying to the model. Once it receives the details back from the model, it broadcasts the new elements that need to be added to the slides and the front-end JavaScript adds the content to the slides.
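The hand-off between the web app and a separate model process can be sketched with plain TCP sockets from the standard library. Everything specific here is an assumption for illustration: the newline-delimited JSON message format, the function names, and `fake_model`, which stands in for the real machine learning model. The server runs in a background thread only to keep the example self-contained.

```python
import json
import socket
import threading


def fake_model(text):
    """Stand-in for the ML model: decides which slide element to add."""
    kind = "image" if "picture of" in text else "bullet"
    return {"element": kind, "text": text}


def serve(server_sock):
    """Handle one newline-delimited JSON request per connection."""
    while True:
        try:
            conn, _ = server_sock.accept()
        except OSError:
            return  # listening socket was closed
        with conn, conn.makefile("r", encoding="utf-8") as reader:
            request = json.loads(reader.readline())
            reply = json.dumps(fake_model(request["text"])) + "\n"
            conn.sendall(reply.encode("utf-8"))


def start_model_server():
    """Start the model server on a free local port; returns (socket, port)."""
    server_sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server_sock.bind(("127.0.0.1", 0))
    server_sock.listen()
    threading.Thread(target=serve, args=(server_sock,), daemon=True).start()
    return server_sock, server_sock.getsockname()[1]


def ask_model(port, text):
    """What the web server would do after receiving a WebSocket message."""
    with socket.create_connection(("127.0.0.1", port), timeout=5) as conn:
        conn.sendall((json.dumps({"text": text}) + "\n").encode("utf-8"))
        with conn.makefile("r", encoding="utf-8") as reader:
            return json.loads(reader.readline())


if __name__ == "__main__":
    server, port = start_model_server()
    print(ask_model(port, "here you can see a picture of a golden retriever"))
    server.close()
```

In the architecture described above, the result of `ask_model` would then be broadcast over WebSockets so the front-end can add the new element to the slide.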

## Challenges We ran into

* Text summarization is extremely difficult -- while there are many powerful algorithms for turning articles into paragraph summaries, there is essentially nothing on shortening sentences into bullet points. We ended up having to develop a custom pipeline for bullet-point generation based on Part-of-speech and dependency analysis.

* The Web Speech API is not supported across all browsers, and even though it is "supported" on Android, Android devices are incapable of continuous streaming. Because of this, we had to move the recording segment of our code from the phone to the laptop.

## Accomplishments that we're proud of

* Making a multi-faceted application, with a variety of machine learning and non-machine learning techniques.

* Working on an unsolved machine learning problem (sentence simplification)

* Connecting a mobile device to the laptop browser’s mic using WebSockets

* Real-time text analysis to determine new elements

## What's next for ImpromPPTX

* Predict what the user intends to say next

* Scrape primary sources to automatically add citations and definitions.

* Improve text summarization with word reordering and synonym analysis.

|

partial

|

## Inspiration

As we began to look at the TreeHacks 10 tracks, all team members were immediately drawn to the sustainability track. In a world with increasing temperatures, excessive greenhouse gas emissions, biodiversity loss, and pollution, among numerous other ecological challenges, we know we all have an individual responsibility to help preserve and revitalize our environment. As a result, we began brainstorming how we could individually help contribute to a more sustainable future. Our first thoughts centered around how we could encourage contributions to environmental nonprofits. Still, we struggled to name localized organizations where an individual could make an impact.

With three of us originally from Iowa, we did a quick Google search to find potential organizations whose mission aligned with our goal and found over 20 (including 3 within 20 minutes of our hometown) around the state that could utilize resources from people in various ways. The contributions they were seeking primarily consisted of people volunteering and monetary donations. If this was the case in Iowa, we knew most other states would likely have even more available opportunities. But how could we make people aware of them? Looking at the communities of people we know, it’s clear there is no shortage of people interested in environmental sustainability. But just being passionate about an issue doesn’t lead to improvement. A streamlined way to identify tangible ways to catalyze change, though? That is what’s needed to bridge the gap between someone’s desire to make change and their ability to follow through. We realized our platform’s goal: to allow organizations to make themselves known to those people who already have a planted **spark** and want to help preserve their environment for future generations.

## What it does

**Your spark can create change.**

Spark is a platform that allows environmental organizations to create a campaign outlining their mission, vision, and goals to encourage people with an existing **spark** who don’t know what to do with their desire to make a difference to join their projects. Our platform works in two parts. First, organizations post their campaign, which is then added to a database holding all posted campaigns. Next, contributors can browse available campaigns to find one(s) that resonate with their goals. Once they identify organizations that do so, they can identify which of the organization’s goals they are inspired to contribute to and gain spark points. These spark points work to (1) allow contributors to see the tangible impact they are having as a continuous endeavor and (2) motivate these individuals to continue their contributions with more organizations.

## How we built it

Iterations:

1. We started with a basic outline of listing an organization and its needs and allowing an individual to sign up to help.

2. To explore the broader stakeholders beyond just contributors, we spoke to an Executive Director at a nonprofit local to us (someone who may make a campaign page). We learned what features would make this platform more useful for them:

* “Because it is so hard for nonprofits to receive funding [as the application process is often long and rarely fruitful because of the number of competitors], individual contributions go a long way,” so we made the monetary donation aspect the first built-out type of contribution with future plans to build out a page showing all volunteering opportunities

* Within the organization’s dashboard view, they should be able to view and manage all of their own campaigns, so we added this functionality

* “Nonprofits benefit greatly from being able to receive feedback from participants,” so in a future iteration, we hope to allow some form of communication between participants and the organization (if this proves valuable but is rarely used, it may become a requirement for earning spark points)

3. We then showed our product to a hackathon mentor to gain more feedback on how to address the pain points of a potential user

* She suggested the usefulness of being able to “visually” observe opportunities “physically nearby.” We used this feedback and incorporated the Google Maps API to display the physical locations of the opportunities. She also noted this would remind users “how accessible” it is to make change.

4. After speaking to friends (people who would hold a future contributor role), we added in a few more features that could make our platform better encourage people:

* A link to the non-profit website, if applicable, to allow for deeper learning. To encourage an easy-to-use UI (especially for those more tech-averse), we wanted to avoid a cluttered card and instead redirect contributors to the organization's website.

* The ability to favorite a non-profit for future engagements, which we hold as a goal for a future iteration

* Rotating information on our home page to serve as motivation for contributors (also not yet implemented but planned for next iteration)

Technology Used:

(1) We used Next.js to build and host the front-end portion of our application. This decision allowed us to scale easily with a growing user base. Next.js is a very popular framework with a lot of open-source support, which helped us build the website quickly.

(2) We use Convex for our API and backend. We enjoyed their presentation during the opening ceremony, which convinced us to use its extensive functionality. Its lightweight nature helped us develop much more quickly than what would’ve been required with other software.

(3) Ant Design is a very popular UI framework and made it easy to translate our Figma designs into our final product with a clean, modern interface.

(4) Visual Studio Code with extensions, to make our development environment easier to work in.

## What makes us different

The main features of Spark that set it apart from existing services can be grouped into three main parts:

1. The focus on individual contributions to environmental challenges