| modelId (string, lengths 4 to 81) | tags (list) | pipeline_tag (string, 17 classes) | config (dict) | downloads (int64, 0 to 59.7M) | first_commit (timestamp[ns, tz=UTC]) | card (string, lengths 51 to 438k) |
|---|---|---|---|---|---|---|

Declan/NPR_model_v1

|

[

"pytorch",

"bert",

"fill-mask",

"transformers",

"autotrain_compatible"

] |

fill-mask

|

{

"architectures": [

"BertForMaskedLM"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 3 | null |

---

tags:

- unity-ml-agents

- ml-agents

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-SnowballTarget

library_name: ml-agents

---

# **ppo** Agent playing **SnowballTarget**

This is a trained model of a **ppo** agent playing **SnowballTarget** using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://github.com/huggingface/ml-agents#get-started

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

### Resume the training

```

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. Go to https://huggingface.co/spaces/unity/ML-Agents-SnowballTarget

2. Step 1: Write your model_id: alberto-mate/ppo-SnowballTarget

3. Step 2: Select your `*.nn` / `*.onnx` file

4. Click on Watch the agent play 👀

|

Declan/NPR_model_v2

|

[

"pytorch",

"bert",

"fill-mask",

"transformers",

"autotrain_compatible"

] |

fill-mask

|

{

"architectures": [

"BertForMaskedLM"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 7 | null |

---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

---

# {MODEL_NAME}

This is a [sentence-transformers](https://www.SBERT.net) model: it maps sentences and paragraphs to a 768-dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('{MODEL_NAME}')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: first, pass your input through the transformer model, then apply the right pooling operation on top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel

import torch

#Mean Pooling - Take attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Sentences we want sentence embeddings for

sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('{MODEL_NAME}')

model = AutoModel.from_pretrained('{MODEL_NAME}')

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")

print(sentence_embeddings)

```
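To make the pooling step concrete, here is a minimal dependency-free sketch of what `mean_pooling` computes for a single sentence: the average of the token vectors whose attention-mask entry is 1. The function name `masked_mean` and the toy 2-dimensional vectors are illustrative assumptions, not part of the model or the library.

```python
def masked_mean(token_embeddings, attention_mask):
    """Average the token vectors, skipping positions where the mask is 0."""
    dim = len(token_embeddings[0])
    total = [0.0] * dim
    count = 0
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            total = [t + v for t, v in zip(total, vector)]
            count += 1
    # Mirror the clamp in mean_pooling: never divide by zero for an all-padding row.
    return [t / max(count, 1e-9) for t in total]


# Made-up token vectors; the last position stands in for padding.
tokens = [[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]
mask = [1, 1, 0]
pooled = masked_mean(tokens, mask)
print(pooled)  # [2.0, 3.0]
```

The padded position is ignored entirely, which is why the attention mask has to be passed to the pooling function alongside the model output.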

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name={MODEL_NAME})

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 411 with parameters:

```

{'batch_size': 16, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.BatchHardTripletLoss.BatchHardTripletLoss`

Parameters of the fit()-Method:

```

{

"epochs": 50,

"evaluation_steps": 0,

"evaluator": "NoneType",

"max_grad_norm": 1,

"optimizer_class": "<class 'torch.optim.adamw.AdamW'>",

"optimizer_params": {

"lr": 2e-05

},

"scheduler": "WarmupLinear",

"steps_per_epoch": null,

"warmup_steps": 2051.8,

"weight_decay": 0.01

}

```
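As a rough illustration of the `WarmupLinear` scheduler with these values, the sketch below assumes the usual shape of such a schedule: the learning rate rises linearly from 0 to `lr` over `warmup_steps`, then decays linearly to 0 at the last step. The total step count (DataLoader length times epochs) and the helper name `warmup_linear_factor` are assumptions made for the example; the card does not state them.

```python
def warmup_linear_factor(step, warmup_steps, total_steps):
    """Multiplier applied to the base learning rate at a given optimizer step."""
    if step < warmup_steps:
        # Linear ramp from 0 up to 1 during warmup.
        return step / max(1.0, warmup_steps)
    # Linear decay from 1 down to 0 over the remaining steps.
    return max(0.0, (total_steps - step) / max(1.0, total_steps - warmup_steps))


BASE_LR = 2e-05           # "lr" from optimizer_params
WARMUP_STEPS = 2051.8     # "warmup_steps" from the fit() parameters
TOTAL_STEPS = 411 * 50    # assumption: DataLoader length x epochs

for step in (0, 1000, 2052, 10000, TOTAL_STEPS):
    lr = BASE_LR * warmup_linear_factor(step, WARMUP_STEPS, TOTAL_STEPS)
    print(step, lr)
```

Under these assumptions the warmup covers roughly the first tenth of training, and the learning rate reaches zero exactly at the final step.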

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 512, 'do_lower_case': False}) with Transformer model: BertModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Citing & Authors

<!--- Describe where people can find more information -->

|

Declan/Politico_model_v8

|

[

"pytorch",

"bert",

"fill-mask",

"transformers",

"autotrain_compatible"

] |

fill-mask

|

{

"architectures": [

"BertForMaskedLM"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 7 | null |

---

license: creativeml-openrail-m

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

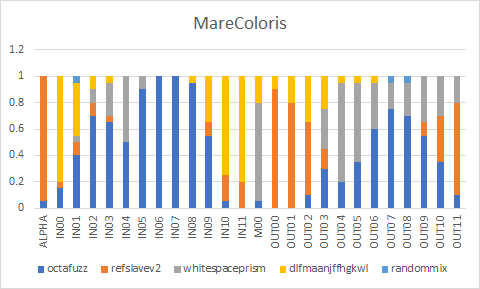

# MareColoris

OctaFuzz - <a href="https://huggingface.co/Lucetepolis/OctaFuzz">Download</a><br/>

RefSlave-V2 - <a href="https://civitai.com/models/11793/refslave-v2">Download</a><br/>

WhiteSpace Prism - <a href="https://civitai.com/models/12933/whitespace-prism">Download</a><br/>

dlfmaanjffhgkwl mix - <a href="https://civitai.com/models/9815/dlfmaanjffhgkwl-mix">Download</a><br/>

randommix - <a href="https://huggingface.co/1q2W3e/randommix">Download</a><br/>

EasyNegative and pastelmix-lora seem to work well with the models.

EasyNegative - <a href="https://huggingface.co/datasets/gsdf/EasyNegative">Download</a><br/>

pastelmix-lora - <a href="https://huggingface.co/andite/pastel-mix">Download</a>

# Formula

Used Merge Block Weighted : Each for this one.

```

model_0 : octafuzz.safetensors [364bdf849d]

model_1 : refslavev2.safetensors [cce9a2d200]

model_Out : or.safetensors

base_alpha : 0.95

output_file: C:\SD-webui\models\Stable-diffusion\or.safetensors

weight_A : 0.15,0.4,0.7,0.65,0.5,0.9,1,1,0.95,0.55,0.05,0,0.05,0,0,0.1,0.3,0.2,0.35,0.6,0.75,0.7,0.55,0.35,0.1

weight_B : 0.05,0.1,0.1,0.05,0,0,0,0,0,0.1,0.2,0.2,0,0.9,0.8,0.55,0.15,0,0,0,0,0,0.1,0.35,0.7

half : False

skip ids : 2 : 0:None, 1:Skip, 2:Reset

model_0 : or.safetensors [c7612d0b35]

model_1 : whitespaceprism.safetensors [59038bf169]

model_Out : orw.safetensors

base_alpha : 0

output_file: C:\SD-webui\models\Stable-diffusion\orw.safetensors

weight_A : 1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1

weight_B : 0,0.05,0.1,0.25,0.5,0.1,0,0,0,0,0,0,0.75,0,0,0,0.3,0.75,0.6,0.35,0.2,0.25,0.35,0.3,0.2

half : False

skip ids : 2 : 0:None, 1:Skip, 2:Reset

model_0 : orw.safetensors [3ca41b67cc]

model_1 : dlfmaanjffhgkwl.safetensors [d596b45d6b]

model_Out : orwd.safetensors

base_alpha : 0

output_file: C:\SD-webui\models\Stable-diffusion\orwd.safetensors

weight_A : 1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1

weight_B : 0.8,0.4,0.1,0.05,0,0,0,0,0.05,0.35,0.75,0.8,0.2,0.1,0.2,0.35,0.25,0.05,0.05,0.05,0,0,0,0,0

half : False

skip ids : 2 : 0:None, 1:Skip, 2:Reset

model_0 : orwd.safetensors [170cb9efc8]

model_1 : randommix.safetensors [8f44a11120]

model_Out : marecoloris.safetensors

base_alpha : 0

output_file: C:\SD-webui\models\Stable-diffusion\marecoloris.safetensors

weight_A : 1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1

weight_B : 0,0.05,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0.05,0.05,0,0,0

half : False

skip ids : 2 : 0:None, 1:Skip, 2:Reset

```

# Converted weights

# Samples

All of the images use the following negatives/settings. EXIF preserved.

```

Negative prompt: (worst quality, low quality:1.4), EasyNegative, bad anatomy, bad hands, error, missing fingers, extra digit, fewer digits

Steps: 28, Sampler: DPM++ 2M Karras, CFG scale: 7, Size: 768x512, Denoising strength: 0.6, Clip skip: 2, ENSD: 31337, Hires upscale: 1.5, Hires steps: 14, Hires upscaler: Latent (nearest-exact)

```

|

Declan/WallStreetJournal_model_v4

|

[

"pytorch",

"bert",

"fill-mask",

"transformers",

"autotrain_compatible"

] |

fill-mask

|

{

"architectures": [

"BertForMaskedLM"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 7 | null |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: lang_adapter_fa_digikala_multilingual_base_cased

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# lang_adapter_fa_digikala_multilingual_base_cased

This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.8074

- Accuracy: 0.6254

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10.0

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 2.7072 | 0.45 | 500 | 2.3782 | 0.5341 |

| 2.4037 | 0.9 | 1000 | 2.2189 | 0.5588 |

| 2.2792 | 1.35 | 1500 | 2.1282 | 0.5720 |

| 2.2017 | 1.8 | 2000 | 2.0641 | 0.5821 |

| 2.1322 | 2.25 | 2500 | 2.0273 | 0.5889 |

| 2.0955 | 2.7 | 3000 | 1.9950 | 0.5945 |

| 2.0673 | 3.15 | 3500 | 1.9655 | 0.6000 |

| 2.0399 | 3.6 | 4000 | 1.9462 | 0.6033 |

| 2.0148 | 4.05 | 4500 | 1.9300 | 0.6056 |

| 1.9987 | 4.5 | 5000 | 1.9113 | 0.6069 |

| 1.9824 | 4.95 | 5500 | 1.8823 | 0.6128 |

| 1.9704 | 5.41 | 6000 | 1.8824 | 0.6119 |

| 1.9502 | 5.86 | 6500 | 1.8701 | 0.6157 |

| 1.9527 | 6.31 | 7000 | 1.8497 | 0.6190 |

| 1.9217 | 6.76 | 7500 | 1.8493 | 0.6174 |

| 1.9091 | 7.21 | 8000 | 1.8341 | 0.6210 |

| 1.9095 | 7.66 | 8500 | 1.8145 | 0.6250 |

| 1.9107 | 8.11 | 9000 | 1.8320 | 0.6190 |

| 1.8929 | 8.56 | 9500 | 1.8051 | 0.6268 |

| 1.8855 | 9.01 | 10000 | 1.8257 | 0.6221 |

| 1.8893 | 9.46 | 10500 | 1.8206 | 0.6231 |

| 1.8899 | 9.91 | 11000 | 1.7972 | 0.6244 |

### Framework versions

- Transformers 4.26.1

- Pytorch 1.13.1+cu116

- Datasets 2.10.1

- Tokenizers 0.12.1

|

DeepChem/ChemBERTa-5M-MTR

|

[

"pytorch",

"roberta",

"transformers"

] | null |

{

"architectures": [

"RobertaForRegression"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 13 | 2023-03-03T10:17:47Z |

---

language:

- multilingual

- en

- de

- fr

- ja

license: mit

tags:

- object-detection

- vision

- generated_from_trainer

- DocLayNet

- COCO

- PDF

- IBM

- Financial-Reports

- Finance

- Manuals

- Scientific-Articles

- Science

- Laws

- Law

- Regulations

- Patents

- Government-Tenders

- object-detection

- image-segmentation

- token-classification

inference: false

datasets:

- pierreguillou/DocLayNet-base

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: layout-xlm-base-finetuned-with-DocLayNet-base-at-linelevel-ml384

results:

- task:

name: Token Classification

type: token-classification

metrics:

- name: f1

type: f1

value: 0.7336

- name: accuracy

type: accuracy

value: 0.9373

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

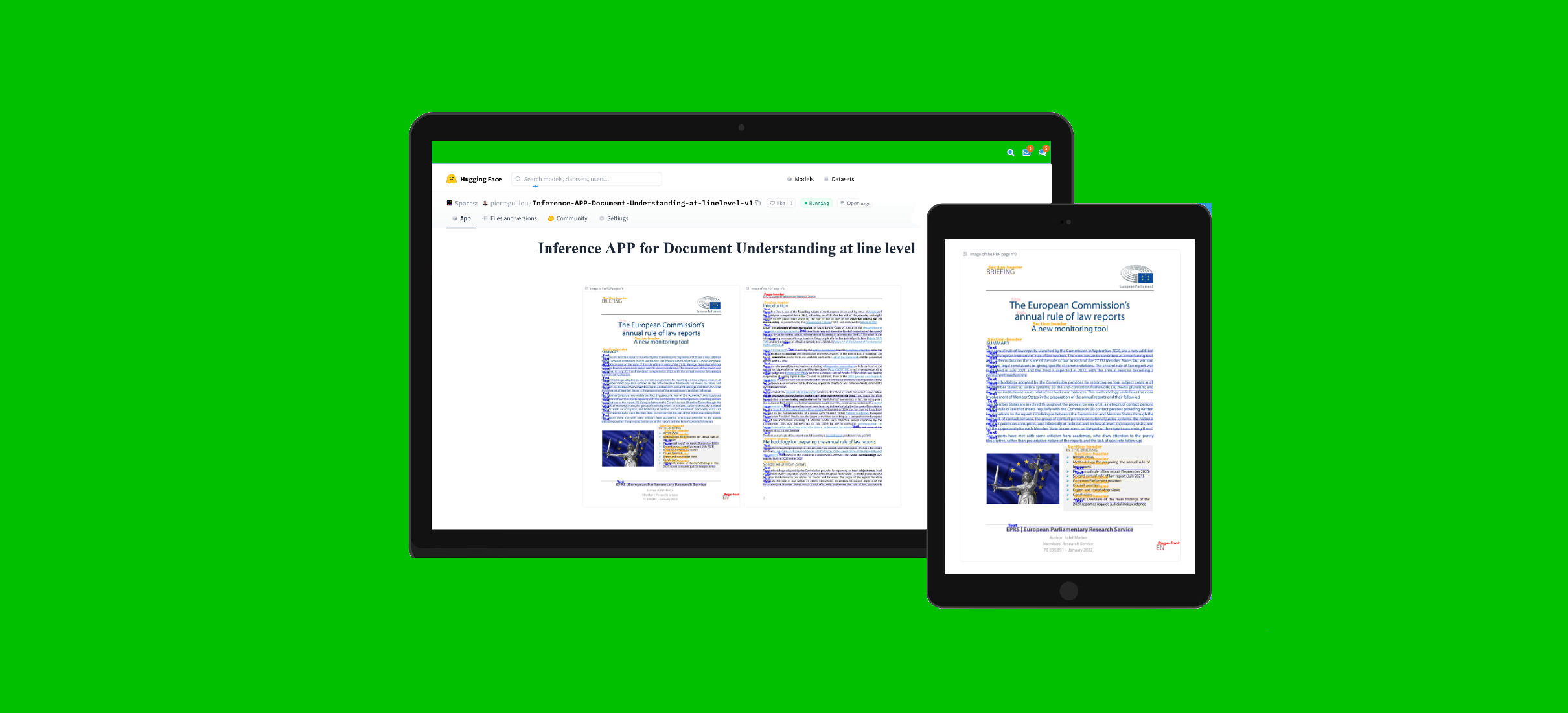

# Document Understanding model (finetuned LayoutXLM base at line level on DocLayNet base)

This model is a fine-tuned version of [microsoft/layoutxlm-base](https://huggingface.co/microsoft/layoutxlm-base) with the [DocLayNet base](https://huggingface.co/datasets/pierreguillou/DocLayNet-base) dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2364

- Precision: 0.7260

- Recall: 0.7415

- F1: 0.7336

- Accuracy: 0.9373
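The F1 score above is the harmonic mean of precision and recall. The short sketch below recomputes it from the rounded precision and recall reported in this list; the function name `f1_score` is just a local helper for illustration, and because the inputs are already rounded to four decimals the result can differ from the reported F1 in the last digit.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


f1 = f1_score(0.7260, 0.7415)  # precision and recall from the list above
print(f1)
```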

## References

### Blog posts

- Layout XLM base

- (03/05/2023) [Document AI | Inference APP and fine-tuning notebook for Document Understanding at line level with LayoutXLM base]()

- LiLT base

- (02/16/2023) [Document AI | Inference APP and fine-tuning notebook for Document Understanding at paragraph level](https://medium.com/@pierre_guillou/document-ai-inference-app-and-fine-tuning-notebook-for-document-understanding-at-paragraph-level-c18d16e53cf8)

- (02/14/2023) [Document AI | Inference APP for Document Understanding at line level](https://medium.com/@pierre_guillou/document-ai-inference-app-for-document-understanding-at-line-level-a35bbfa98893)

- (02/10/2023) [Document AI | Document Understanding model at line level with LiLT, Tesseract and DocLayNet dataset](https://medium.com/@pierre_guillou/document-ai-document-understanding-model-at-line-level-with-lilt-tesseract-and-doclaynet-dataset-347107a643b8)

- (01/31/2023) [Document AI | DocLayNet image viewer APP](https://medium.com/@pierre_guillou/document-ai-doclaynet-image-viewer-app-3ac54c19956)

- (01/27/2023) [Document AI | Processing of DocLayNet dataset to be used by layout models of the Hugging Face hub (finetuning, inference)](https://medium.com/@pierre_guillou/document-ai-processing-of-doclaynet-dataset-to-be-used-by-layout-models-of-the-hugging-face-hub-308d8bd81cdb)

### Notebooks (paragraph level)

- LiLT base

- [Document AI | Inference APP at paragraph level with a Document Understanding model (LiLT fine-tuned on DocLayNet dataset)](https://github.com/piegu/language-models/blob/master/Gradio_inference_on_LiLT_model_finetuned_on_DocLayNet_base_in_any_language_at_levelparagraphs_ml512.ipynb)

- [Document AI | Inference at paragraph level with a Document Understanding model (LiLT fine-tuned on DocLayNet dataset)](https://github.com/piegu/language-models/blob/master/inference_on_LiLT_model_finetuned_on_DocLayNet_base_in_any_language_at_levelparagraphs_ml512.ipynb)

- [Document AI | Fine-tune LiLT on DocLayNet base in any language at paragraph level (chunk of 512 tokens with overlap)](https://github.com/piegu/language-models/blob/master/Fine_tune_LiLT_on_DocLayNet_base_in_any_language_at_paragraphlevel_ml_512.ipynb)

### Notebooks (line level)

- Layout XLM base

- [Document AI | Inference at line level with a Document Understanding model (LayoutXLM base fine-tuned on DocLayNet dataset)](https://github.com/piegu/language-models/blob/master/inference_on_LayoutXLM_base_model_finetuned_on_DocLayNet_base_in_any_language_at_levellines_ml384.ipynb)

- [Document AI | Inference APP at line level with a Document Understanding model (LayoutXLM base fine-tuned on DocLayNet base dataset)](https://github.com/piegu/language-models/blob/master/Gradio_inference_on_LayoutXLM_base_model_finetuned_on_DocLayNet_base_in_any_language_at_levellines_ml384.ipynb)

- [Document AI | Fine-tune LayoutXLM base on DocLayNet base in any language at line level (chunk of 384 tokens with overlap)](https://github.com/piegu/language-models/blob/master/Fine_tune_LayoutXLM_base_on_DocLayNet_base_in_any_language_at_linelevel_ml_384.ipynb)

- LiLT base

- [Document AI | Inference at line level with a Document Understanding model (LiLT fine-tuned on DocLayNet dataset)](https://github.com/piegu/language-models/blob/master/inference_on_LiLT_model_finetuned_on_DocLayNet_base_in_any_language_at_levellines_ml384.ipynb)

- [Document AI | Inference APP at line level with a Document Understanding model (LiLT fine-tuned on DocLayNet dataset)](https://github.com/piegu/language-models/blob/master/Gradio_inference_on_LiLT_model_finetuned_on_DocLayNet_base_in_any_language_at_levellines_ml384.ipynb)

- [Document AI | Fine-tune LiLT on DocLayNet base in any language at line level (chunk of 384 tokens with overlap)](https://github.com/piegu/language-models/blob/master/Fine_tune_LiLT_on_DocLayNet_base_in_any_language_at_linelevel_ml_384.ipynb)

- [DocLayNet image viewer APP](https://github.com/piegu/language-models/blob/master/DocLayNet_image_viewer_APP.ipynb)

- [Processing of DocLayNet dataset to be used by layout models of the Hugging Face hub (finetuning, inference)](processing_DocLayNet_dataset_to_be_used_by_layout_models_of_HF_hub.ipynb)

### APP

You can test this model with this APP in Hugging Face Spaces: [Inference APP for Document Understanding at line level (v2)](https://huggingface.co/spaces/pierreguillou/Inference-APP-Document-Understanding-at-linelevel-v2).

### DocLayNet dataset

[DocLayNet dataset](https://github.com/DS4SD/DocLayNet) (IBM) provides page-by-page layout segmentation ground-truth using bounding-boxes for 11 distinct class labels on 80863 unique pages from 6 document categories.

As of today, the dataset can be downloaded through direct links or as a dataset from Hugging Face datasets:

- direct links: [doclaynet_core.zip](https://codait-cos-dax.s3.us.cloud-object-storage.appdomain.cloud/dax-doclaynet/1.0.0/DocLayNet_core.zip) (28 GiB), [doclaynet_extra.zip](https://codait-cos-dax.s3.us.cloud-object-storage.appdomain.cloud/dax-doclaynet/1.0.0/DocLayNet_extra.zip) (7.5 GiB)

- Hugging Face dataset library: [dataset DocLayNet](https://huggingface.co/datasets/ds4sd/DocLayNet)

Paper: [DocLayNet: A Large Human-Annotated Dataset for Document-Layout Analysis](https://arxiv.org/abs/2206.01062) (06/02/2022)

## Model description

The model was finetuned at **line level on chunks of 384 tokens with an overlap of 128 tokens**. Thus, the model was trained with all layout and text data of all pages of the dataset.

At inference time, a calculation of the best probabilities gives the label of each line bounding box.
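The chunking described above can be sketched as a sliding window. This is a minimal illustration, assuming the window advances by chunk size minus overlap (384 - 128 = 256 tokens) and that the last chunk may be shorter; the function name `chunk_with_overlap` and the integer stand-ins for token ids are hypothetical, and the linked notebooks remain the reference for the actual preprocessing.

```python
def chunk_with_overlap(token_ids, chunk_size=384, overlap=128):
    """Split a token sequence into windows that share `overlap` tokens."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    stride = chunk_size - overlap  # assumed step between window starts
    chunks = []
    start = 0
    while True:
        chunks.append(token_ids[start:start + chunk_size])
        if start + chunk_size >= len(token_ids):
            break  # this window already reaches the end of the page
        start += stride
    return chunks


page = list(range(1000))  # stand-in for the token ids of one page
chunks = chunk_with_overlap(page)
print([len(c) for c in chunks])  # [384, 384, 384, 232]
```

With an overlap, tokens near a chunk boundary are seen in two windows, so a line that straddles a boundary still appears whole in at least one of them when it is shorter than the overlap.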

## Inference

See notebook: [Document AI | Inference at line level with a Document Understanding model (LayoutXLM base fine-tuned on DocLayNet dataset)](https://github.com/piegu/language-models/blob/master/inference_on_LayoutXLM_base_model_finetuned_on_DocLayNet_base_in_any_language_at_levellines_ml384.ipynb)

## Training and evaluation data

See notebook: [Document AI | Fine-tune LayoutXLM base on DocLayNet base in any language at line level (chunk of 384 tokens with overlap)](https://github.com/piegu/language-models/blob/master/Fine_tune_LayoutXLM_base_on_DocLayNet_base_in_any_language_at_linelevel_ml_384.ipynb)

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 3

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Accuracy | F1 | Validation Loss | Precision | Recall |

|:-------------:|:-----:|:----:|:--------:|:------:|:---------------:|:---------:|:------:|

| No log | 0.12 | 300 | 0.8413 | 0.1311 | 0.5185 | 0.1437 | 0.1205 |

| 0.9231 | 0.25 | 600 | 0.8751 | 0.5031 | 0.4108 | 0.4637 | 0.5498 |

| 0.9231 | 0.37 | 900 | 0.8887 | 0.5206 | 0.3911 | 0.5076 | 0.5343 |

| 0.369 | 0.5 | 1200 | 0.8724 | 0.5365 | 0.4118 | 0.5094 | 0.5667 |

| 0.2737 | 0.62 | 1500 | 0.8960 | 0.6033 | 0.3328 | 0.6046 | 0.6020 |

| 0.2737 | 0.75 | 1800 | 0.9186 | 0.6404 | 0.2984 | 0.6062 | 0.6787 |

| 0.2542 | 0.87 | 2100 | 0.9163 | 0.6593 | 0.3115 | 0.6324 | 0.6887 |

| 0.2542 | 1.0 | 2400 | 0.9198 | 0.6537 | 0.2878 | 0.6160 | 0.6962 |

| 0.1938 | 1.12 | 2700 | 0.9165 | 0.6752 | 0.3414 | 0.6673 | 0.6833 |

| 0.1581 | 1.25 | 3000 | 0.9193 | 0.6871 | 0.3611 | 0.6868 | 0.6875 |

| 0.1581 | 1.37 | 3300 | 0.9256 | 0.6822 | 0.2763 | 0.6988 | 0.6663 |

| 0.1428 | 1.5 | 3600 | 0.9287 | 0.7084 | 0.3065 | 0.7246 | 0.6929 |

| 0.1428 | 1.62 | 3900 | 0.9194 | 0.6812 | 0.2942 | 0.6866 | 0.6760 |

| 0.1025 | 1.74 | 4200 | 0.9347 | 0.7223 | 0.2990 | 0.7315 | 0.7133 |

| 0.1225 | 1.87 | 4500 | 0.9360 | 0.7048 | 0.2729 | 0.7249 | 0.6858 |

| 0.1225 | 1.99 | 4800 | 0.9396 | 0.7222 | 0.2826 | 0.7497 | 0.6966 |

| 0.108 | 2.12 | 5100 | 0.9301 | 0.7193 | 0.3071 | 0.7022 | 0.7372 |

| 0.108 | 2.24 | 5400 | 0.9334 | 0.7243 | 0.2999 | 0.7250 | 0.7237 |

| 0.0799 | 2.37 | 5700 | 0.9382 | 0.7254 | 0.2710 | 0.7310 | 0.7198 |

| 0.0793 | 2.49 | 6000 | 0.9329 | 0.7228 | 0.3201 | 0.7352 | 0.7108 |

| 0.0793 | 2.62 | 6300 | 0.9373 | 0.7336 | 0.3035 | 0.7260 | 0.7415 |

| 0.0696 | 2.74 | 6600 | 0.9374 | 0.7275 | 0.3137 | 0.7313 | 0.7237 |

| 0.0696 | 2.87 | 6900 | 0.9381 | 0.7253 | 0.3242 | 0.7369 | 0.7142 |

| 0.0866 | 2.99 | 7200 | 0.9407 | 0.7321 | 0.2473 | 0.7439 | 0.7207 |

### Framework versions

- Transformers 4.26.1

- Pytorch 1.10.0+cu111

- Datasets 2.10.1

- Tokenizers 0.13.2

## Other models

- Line level

- [Document Understanding model (finetuned LiLT base at line level on DocLayNet base)](https://huggingface.co/pierreguillou/lilt-xlm-roberta-base-finetuned-with-DocLayNet-base-at-linelevel-ml384) (accuracy | tokens: 85.84% - lines: 91.97%)

- [Document Understanding model (finetuned LayoutXLM base at line level on DocLayNet base)](https://huggingface.co/pierreguillou/layout-xlm-base-finetuned-with-DocLayNet-base-at-linelevel-ml384) (accuracy | tokens: 93.73% - lines: ...)

- Paragraph level

- [Document Understanding model (finetuned LiLT base at paragraph level on DocLayNet base)](https://huggingface.co/pierreguillou/lilt-xlm-roberta-base-finetuned-with-DocLayNet-base-at-paragraphlevel-ml512) (accuracy | tokens: 86.34% - paragraphs: 68.15%)

- [Document Understanding model (finetuned LayoutXLM base at paragraph level on DocLayNet base)](https://huggingface.co/pierreguillou/layout-xlm-base-finetuned-with-DocLayNet-base-at-paragraphlevel-ml512) (accuracy | tokens: 96.93% - paragraphs: 86.55%)

|

DeepChem/SmilesTokenizer_PubChem_1M

|

[

"pytorch",

"roberta",

"feature-extraction",

"transformers"

] |

feature-extraction

|

{

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 227 | 2023-03-02T12:52:47Z |

---

tags:

- conversational

---

# qqpbksdj DialoGPT Model

|

DeepPavlov/bert-base-cased-conversational

|

[

"pytorch",

"jax",

"bert",

"feature-extraction",

"en",

"transformers"

] |

feature-extraction

|

{

"architectures": [

"BertModel"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 3,009 | null |

---

library_name: stable-baselines3

tags:

- SpaceInvadersNoFrameskip-v4

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: DQN

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: SpaceInvadersNoFrameskip-v4

type: SpaceInvadersNoFrameskip-v4

metrics:

- type: mean_reward

value: 403.00 +/- 148.73

name: mean_reward

verified: false

---

# **DQN** Agent playing **SpaceInvadersNoFrameskip-v4**

This is a trained model of a **DQN** agent playing **SpaceInvadersNoFrameskip-v4**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

Install the RL Zoo (with SB3 and SB3-Contrib):

```bash

pip install rl_zoo3

```

```

# Download model and save it into the logs/ folder

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga jorgelzn -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

If you installed the RL Zoo3 via pip (`pip install rl_zoo3`), from anywhere you can do:

```

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga jorgelzn -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

## Training (with the RL Zoo)

```

python -m rl_zoo3.train --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

# Upload the model and generate video (when possible)

python -m rl_zoo3.push_to_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/ -orga jorgelzn

```

## Hyperparameters

```python

OrderedDict([('batch_size', 32),

('buffer_size', 100000),

('env_wrapper',

['stable_baselines3.common.atari_wrappers.AtariWrapper']),

('exploration_final_eps', 0.01),

('exploration_fraction', 0.1),

('frame_stack', 4),

('gradient_steps', 1),

('learning_rate', 0.0001),

('learning_starts', 100000),

('n_timesteps', 1000000),

('optimize_memory_usage', False),

('policy', 'CnnPolicy'),

('target_update_interval', 1000),

('train_freq', 4),

('normalize', False)])

```
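To show how `exploration_fraction` and `exploration_final_eps` interact, here is a small sketch of a linear epsilon schedule. It assumes exploration starts at an epsilon of 1.0 (the value is not listed in the hyperparameters above, so it is an assumption) and decays linearly over the first `exploration_fraction * n_timesteps` steps; `linear_epsilon` is a hypothetical helper, not a library function.

```python
def linear_epsilon(step, n_timesteps=1_000_000, exploration_fraction=0.1,
                   initial_eps=1.0, final_eps=0.01):
    """Probability of taking a random action at a given environment step."""
    decay_steps = exploration_fraction * n_timesteps
    if step >= decay_steps:
        return final_eps  # decay finished: stay at the final value
    # Linear interpolation between initial_eps and final_eps.
    return initial_eps + (step / decay_steps) * (final_eps - initial_eps)


for step in (0, 50_000, 100_000, 500_000):
    print(step, linear_epsilon(step))
```

Under these assumptions the decay ends at step 100,000, which coincides with `learning_starts`, so gradient updates would begin once epsilon has already reached its final value.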

|

DeepPavlov/distilrubert-tiny-cased-conversational

|

[

"pytorch",

"distilbert",

"ru",

"arxiv:2205.02340",

"transformers"

] | null |

{

"architectures": null,

"model_type": "distilbert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 5,993 | null |

---

library_name: stable-baselines3

tags:

- door-lock-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: door-lock-v2

type: door-lock-v2

metrics:

- type: mean_reward

value: 4548.17 +/- 353.30

name: mean_reward

verified: false

---

# **PPO** Agent playing **door-lock-v2**

This is a trained model of a **PPO** agent playing **door-lock-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

Install the RL Zoo (with SB3 and SB3-Contrib):

```bash

pip install rl_zoo3

```

```

# Download model and save it into the logs/ folder

python -m rl_zoo3.load_from_hub --algo ppo --env door-lock-v2 -orga qgallouedec -f logs/

python -m rl_zoo3.enjoy --algo ppo --env door-lock-v2 -f logs/

```

If you installed the RL Zoo3 via pip (`pip install rl_zoo3`), from anywhere you can do:

```

python -m rl_zoo3.load_from_hub --algo ppo --env door-lock-v2 -orga qgallouedec -f logs/

python -m rl_zoo3.enjoy --algo ppo --env door-lock-v2 -f logs/

```

## Training (with the RL Zoo)

```

python -m rl_zoo3.train --algo ppo --env door-lock-v2 -f logs/

# Upload the model and generate video (when possible)

python -m rl_zoo3.push_to_hub --algo ppo --env door-lock-v2 -f logs/ -orga qgallouedec

```

## Hyperparameters

```python

OrderedDict([('batch_size', 32),

('gamma', 0.99),

('learning_rate', 0.0005),

('n_envs', 4),

('n_steps', 512),

('n_timesteps', 10000000),

('normalize', True),

('policy', 'MlpPolicy'),

('target_kl', 0.04),

('normalize_kwargs', {'norm_obs': True, 'norm_reward': False})])

```
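As a rough sanity check on these settings, the sketch below works out how much data PPO gathers per update. It assumes the usual Stable-Baselines3 convention that one update consumes `n_envs * n_steps` transitions, split into minibatches of `batch_size`; the helper name `rollout_summary` is made up for this illustration and is not part of the RL Zoo.

```python
def rollout_summary(n_envs, n_steps, batch_size, n_timesteps):
    """Derive per-update sizes from the hyperparameters listed above."""
    rollout_size = n_envs * n_steps           # transitions gathered before each update
    minibatches = rollout_size // batch_size  # minibatches per pass over the rollout
    updates = n_timesteps // rollout_size     # full rollouts that fit in the step budget
    return rollout_size, minibatches, updates


# Values copied from the OrderedDict above
print(rollout_summary(n_envs=4, n_steps=512, batch_size=32, n_timesteps=10_000_000))
```

With these values each update sees 2048 transitions in 64 minibatches, which may help when comparing against other runs.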

|

DeltaHub/adapter_t5-3b_cola

|

[

"pytorch",

"transformers"

] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 3 | null |

---

library_name: stable-baselines3

tags:

- PandaReachDense-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: A2C

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: PandaReachDense-v2

type: PandaReachDense-v2

metrics:

- type: mean_reward

value: -2.38 +/- 0.50

name: mean_reward

verified: false

---

# **A2C** Agent playing **PandaReachDense-v2**

This is a trained model of an **A2C** agent playing **PandaReachDense-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

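from stable_baselines3 import A2C

from huggingface_sb3 import load_from_hub

# Placeholders: substitute the real Hub repo id and checkpoint filename.

checkpoint = load_from_hub(repo_id="<user>/<repo>", filename="<checkpoint>.zip")

model = A2C.load(checkpoint)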

```

|

DeltaHub/adapter_t5-3b_qnli

|

[

"pytorch",

"transformers"

] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 3 | null |

---

library_name: stable-baselines3

tags:

- PandaReachDense-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: A2C

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: PandaReachDense-v2

type: PandaReachDense-v2

metrics:

- type: mean_reward

value: -1.15 +/- 0.35

name: mean_reward

verified: false

---

# **A2C** Agent playing **PandaReachDense-v2**

This is a trained model of an **A2C** agent playing **PandaReachDense-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

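from stable_baselines3 import A2C

from huggingface_sb3 import load_from_hub

# Placeholders: substitute the real Hub repo id and checkpoint filename.

checkpoint = load_from_hub(repo_id="<user>/<repo>", filename="<checkpoint>.zip")

model = A2C.load(checkpoint)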

```

|

DemangeJeremy/4-sentiments-with-flaubert

|

[

"pytorch",

"flaubert",

"text-classification",

"fr",

"transformers",

"sentiments",

"french",

"flaubert-large"

] |

text-classification

|

{

"architectures": [

"FlaubertForSequenceClassification"

],

"model_type": "flaubert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 226 | null |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

metrics:

- accuracy

- f1

model-index:

- name: finetuning-sentiment-model-3000-samples

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: imdb

type: imdb

config: plain_text

split: test

args: plain_text

metrics:

- name: Accuracy

type: accuracy

value: 0.8666666666666667

- name: F1

type: f1

value: 0.8666666666666667

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# finetuning-sentiment-model-3000-samples

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3049

- Accuracy: 0.8667

- F1: 0.8667

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2
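To make the `linear` scheduler entry concrete, here is a minimal sketch of a linear decay from the listed learning rate down to zero. It assumes no warmup steps (the card lists none), and the step count in the demo is hypothetical because the card does not state the dataset size; `linear_lr` is an illustrative helper, not a Transformers API.

```python
def linear_lr(step, total_steps, base_lr=2e-05):
    """Learning rate after `step` optimizer steps under linear decay to zero."""
    remaining = max(0, total_steps - step)
    return base_lr * remaining / total_steps


# Hypothetical run of 100 optimizer steps: start, midpoint, end
for step in (0, 50, 100):
    print(step, linear_lr(step, total_steps=100))
```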

### Training results

### Framework versions

- Transformers 4.26.1

- Pytorch 1.13.1+cu116

- Datasets 2.10.1

- Tokenizers 0.13.2

|

Denny29/DialoGPT-medium-asunayuuki

|

[

"pytorch",

"gpt2",

"text-generation",

"transformers",

"conversational"

] |

conversational

|

{

"architectures": [

"GPT2LMHeadModel"

],

"model_type": "gpt2",

"task_specific_params": {

"conversational": {

"max_length": 1000

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 9 | null |

---

license: creativeml-openrail-m

tags:

- text-to-image

---

### Meryl_Stryfe_20230302_1330_rep_old_DS_8000_steps on Stable Diffusion via Dreambooth

#### model by NickKolok

This is the Stable Diffusion model fine-tuned on the Meryl_Stryfe_20230302_1330_rep_old_DS_8000_steps concept, taught to Stable Diffusion with Dreambooth.

It can be used by modifying the `instance_prompt`: **merylstryfetrigun**

You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb).

And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts)

|

DeskDown/MarianMixFT_en-ms

|

[

"pytorch",

"marian",

"text2text-generation",

"transformers",

"autotrain_compatible"

] |

text2text-generation

|

{

"architectures": [

"MarianMTModel"

],

"model_type": "marian",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 5 | 2023-03-02T13:47:09Z |

---

license: creativeml-openrail-m

base_model: runwayml/stable-diffusion-v1-5

instance_prompt: a photo of sks dog

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

- lora

inference: true

---

# LoRA DreamBooth - tsinglin/save_models_0302

These are LoRA adaptation weights for runwayml/stable-diffusion-v1-5. The weights were trained on a photo of sks dog using [DreamBooth](https://dreambooth.github.io/). You can find some example images in the following.

|

Despin89/test

|

[] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | null |

---

license: creativeml-openrail-m

tags:

- text-to-image

---

### Meryl_Stryfe_20230302_1330_rep_old_DS_4000_steps on Stable Diffusion via Dreambooth

#### model by NickKolok

This is the Stable Diffusion model fine-tuned on the Meryl_Stryfe_20230302_1330_rep_old_DS_4000_steps concept, taught to Stable Diffusion with Dreambooth.

It can be used by modifying the `instance_prompt`: **merylstryfetrigun**

You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb).

And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts)

|

DevsIA/Devs_IA

|

[] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | 2023-03-02T14:01:32Z |

---

license: creativeml-openrail-m

tags:

- text-to-image

---

### Meryl_Stryfe_20230302_1330_rep_old_DS_4800_steps on Stable Diffusion via Dreambooth

#### model by NickKolok

This is the Stable Diffusion model fine-tuned on the Meryl_Stryfe_20230302_1330_rep_old_DS_4800_steps concept, taught to Stable Diffusion with Dreambooth.

It can be used by modifying the `instance_prompt`: **merylstryfetrigun**

You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb).

And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts)

|

DimaOrekhov/transformer-method-name

|

[

"pytorch",

"encoder-decoder",

"text2text-generation",

"transformers",

"autotrain_compatible"

] |

text2text-generation

|

{

"architectures": [

"EncoderDecoderModel"

],

"model_type": "encoder-decoder",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 8 | 2023-03-02T14:16:05Z |

---

tags:

- unity-ml-agents

- ml-agents

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

library_name: ml-agents

---

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy** using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://github.com/huggingface/ml-agents#get-started

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

### Resume the training

```

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. Go to https://huggingface.co/spaces/unity/ML-Agents-Huggy

2. Step 1: Write your model_id: EExe/ppo-Huggy

3. Step 2: Select your *.nn /*.onnx file

4. Click on Watch the agent play 👀

|

Doogie/Waynehills-KE-T5-doogie

|

[] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | null |

---

tags:

- unity-ml-agents

- ml-agents

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Pyramids

library_name: ml-agents

---

# **ppo** Agent playing **Pyramids**

This is a trained model of a **ppo** agent playing **Pyramids** using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://github.com/huggingface/ml-agents#get-started

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

### Resume the training

```

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. Go to https://huggingface.co/spaces/unity/ML-Agents-Pyramids

2. Step 1: Write your model_id: CloXD/ppo-Pyramids

3. Step 2: Select your *.nn /*.onnx file

4. Click on Watch the agent play 👀

|

DoyyingFace/bert-asian-hate-tweets-asian-unclean-freeze-8

|

[

"pytorch",

"bert",

"text-classification",

"transformers"

] |

text-classification

|

{

"architectures": [

"BertForSequenceClassification"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 30 | null |

---

license: creativeml-openrail-m

tags:

- stable-diffusion

- text-to-image

---

----

# OrangeMixs

"OrangeMixs" shares various merge models that can be used with StableDiffusionWebui:Automatic1111 and others. Enjoy the drawing AI.

This repository is maintained for the following purposes:

1. To provide easy access to models commonly used in the Japanese community. The Wisdom of the Anons💎

2. As a place to upload my merge models when I feel like it.

<img src="https://i.imgur.com/VZg0LqQ.png" width="1000" height="">

----

# Gradio

We support a [Gradio](https://github.com/gradio-app/gradio) Web UI to run OrangeMixs:

<https://huggingface.co/spaces/akhaliq/webui-orangemixs>

----

# Table of Contents

- [OrangeMixs](#orangemixs)

- [Gradio](#gradio)

- [Table of Contents](#table-of-contents)

- [Reference](#reference)

- [Licence](#licence)

- [Terms of use](#terms-of-use)

- [Disclaimer](#disclaimer)

- [How to download](#how-to-download)

- [Batch Download](#batch-download)

- [Batch Download (Advanced)](#batch-download-advanced)

- [Select and download](#select-and-download)

- [Model Detail \& Merge Recipes](#model-detail--merge-recipes)

- [AbyssOrangeMix3 (AOM3)](#abyssorangemix3-aom3)

- [Update note](#update-note)

- [AOM3](#aom3)

- [AOM3A1](#aom3a1)

- [AOM3A2](#aom3a2)

- [AOM3A3](#aom3a3)

- [AOM3A1B](#aom3a1b)

- [MORE](#more)

- [Sample Gallery](#sample-gallery)

- [Description for enthusiast](#description-for-enthusiast)

- [AbyssOrangeMix2 (AOM2)](#abyssorangemix2-aom2)

- [AbyssOrangeMix2\_sfw (AOM2s)](#abyssorangemix2_sfw-aom2s)

- [AbyssOrangeMix2\_nsfw (AOM2n)](#abyssorangemix2_nsfw-aom2n)

- [AbyssOrangeMix2\_hard (AOM2h)](#abyssorangemix2_hard-aom2h)

- [EerieOrangeMix (EOM)](#eerieorangemix-eom)

- [EerieOrangeMix (EOM1)](#eerieorangemix-eom1)

- [EerieOrangeMix\_base (EOM1b)](#eerieorangemix_base-eom1b)

- [EerieOrangeMix\_Night (EOM1n)](#eerieorangemix_night-eom1n)

- [EerieOrangeMix\_half (EOM1h)](#eerieorangemix_half-eom1h)

- [EerieOrangeMix (EOM1)](#eerieorangemix-eom1-1)

- [EerieOrangeMix2 (EOM2)](#eerieorangemix2-eom2)

- [EerieOrangeMix2\_base (EOM2b)](#eerieorangemix2_base-eom2b)

- [EerieOrangeMix2\_night (EOM2n)](#eerieorangemix2_night-eom2n)

- [EerieOrangeMix2\_half (EOM2h)](#eerieorangemix2_half-eom2h)

- [EerieOrangeMix2 (EOM2)](#eerieorangemix2-eom2-1)

- [Models Comparison](#models-comparison)

- [AbyssOrangeMix (AOM)](#abyssorangemix-aom)

- [AbyssOrangeMix\_base (AOMb)](#abyssorangemix_base-aomb)

- [AbyssOrangeMix\_Night (AOMn)](#abyssorangemix_night-aomn)

- [AbyssOrangeMix\_half (AOMh)](#abyssorangemix_half-aomh)

- [AbyssOrangeMix (AOM)](#abyssorangemix-aom-1)

- [ElyOrangeMix (ELOM)](#elyorangemix-elom)

- [ElyOrangeMix (ELOM)](#elyorangemix-elom-1)

- [ElyOrangeMix\_half (ELOMh)](#elyorangemix_half-elomh)

- [ElyNightOrangeMix (ELOMn)](#elynightorangemix-elomn)

- [BloodOrangeMix (BOM)](#bloodorangemix-bom)

- [BloodOrangeMix (BOM)](#bloodorangemix-bom-1)

- [BloodOrangeMix\_half (BOMh)](#bloodorangemix_half-bomh)

- [BloodNightOrangeMix (BOMn)](#bloodnightorangemix-bomn)

- [ElderOrangeMix](#elderorangemix)

- [Troubleshooting](#troubleshooting)

- [FAQ and Tips (🐈MEME ZONE🦐)](#faq-and-tips-meme-zone)

----

# Reference

+/hdg/ Stable Diffusion Models Cookbook - <https://rentry.org/hdgrecipes#g-anons-unnamed-mix-e93c3bf7>

Model names are named after Cookbook precedents🍊

# Licence

This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage. The CreativeML OpenRAIL License specifies:

1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

2. The authors claim no rights on the outputs you generate, you are free to use them and are accountable for their use which must not go against the provisions set in the license

3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully). Please read the full license here: https://huggingface.co/spaces/CompVis/stable-diffusion-license

# Terms of use

- **Clearly indicate where modifications have been made.**

If you used it for merging, please state what steps you took to do so.

# Disclaimer

```

The user has complete control over whether or not to generate NSFW content, and the user's decision to enjoy either SFW or NSFW is entirely up to the user. The learning model does not contain any obscene visual content that can be viewed with a single click. The posting of the learning model is not intended to display obscene material in a public place.

In publishing examples of the generation of copyrighted characters, I consider the following cases to be exceptional cases in which unauthorised use is permitted.

"when the use is for private use or research purposes; when the work is used as material for merchandising (however, this does not apply when the main use of the work is to be merchandised); when the work is used in criticism, commentary or news reporting; when the work is used as a parody or derivative work to demonstrate originality."

In these cases, use against the will of the copyright holder or use for unjustified gain should still be avoided, and if a complaint is lodged by the copyright holder, it is guaranteed that the publication will be stopped as soon as possible.

```

----

# How to download

## Batch Download

⚠Deprecated: Orange has grown too huge. Doing this will kill your storage.

1. Install Git.

2. Create a folder of your choice, then right-click → "Git Bash Here" to open Git Bash in that folder.

3. Run the following commands in order.

```

git lfs install

git clone https://huggingface.co/WarriorMama777/OrangeMixs

```

4. complete

## Batch Download (Advanced)

Advanced: (When you want to download only selected directories, not the entire repository.)

<details>

<summary>Toggle: How to Batch Download (Advanced)</summary>

1. Run the command `git clone --filter=tree:0 --no-checkout https://huggingface.co/WarriorMama777/OrangeMixs` to clone the huggingface repository. By adding the `--filter=tree:0` and `--no-checkout` options, you can download only the file names without their contents.

```

git clone --filter=tree:0 --no-checkout https://huggingface.co/WarriorMama777/OrangeMixs

```

2. Move to the cloned directory with the command `cd OrangeMixs`.

```

cd OrangeMixs

```

3. Enable sparse-checkout mode with the command `git sparse-checkout init --cone`. By adding the `--cone` option, you can achieve faster performance.

```

git sparse-checkout init --cone

```

4. Specify the directory you want to get with the command `git sparse-checkout add <directory name>`. For example, if you want to get only the `Models/AbyssOrangeMix3` directory, enter `git sparse-checkout add Models/AbyssOrangeMix3`.

```

git sparse-checkout add Models/AbyssOrangeMix3

```

5. Download the contents of the specified directory with the command `git checkout main`.

```

git checkout main

```

This completes how to clone only a specific directory. If you want to add other directories, run `git sparse-checkout add <directory name>` again.
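The five steps above can also be scripted. The following is a minimal sketch that only assembles the same git commands in order and, if you call `run`, executes them with `subprocess`; the function names are hypothetical, and it assumes `git` is on your `PATH` and that the default branch is `main`, as in step 5.

```python
import subprocess

REPO_URL = "https://huggingface.co/WarriorMama777/OrangeMixs"


def sparse_clone_commands(directories, repo_url=REPO_URL, branch="main"):
    """Return the git commands from steps 1-5 as (argv, cwd) pairs, in order."""
    repo_dir = repo_url.rstrip("/").split("/")[-1]  # folder created by git clone
    commands = [
        (["git", "clone", "--filter=tree:0", "--no-checkout", repo_url], None),
        (["git", "sparse-checkout", "init", "--cone"], repo_dir),
    ]
    for directory in directories:
        commands.append((["git", "sparse-checkout", "add", directory], repo_dir))
    commands.append((["git", "checkout", branch], repo_dir))
    return commands


def run(commands):
    """Execute each command in turn, stopping at the first failure."""
    for argv, cwd in commands:
        subprocess.run(argv, cwd=cwd, check=True)


# Dry run: print the commands instead of executing them
for argv, cwd in sparse_clone_commands(["Models/AbyssOrangeMix3"]):
    print(cwd or ".", "$", " ".join(argv))
```

Passing several directory names to `sparse_clone_commands` adds one `git sparse-checkout add` call per directory before the final checkout.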

</details>

## Select and download

1. Go to the Files and versions tab.

2. select the model you want to download

3. download

4. complete

----

----

# Model Detail & Merge Recipes

## AbyssOrangeMix3 (AOM3)

――Everyone has different “ABYSS”!

▼About

The main model, "AOM3 (AbyssOrangeMix3)", is a purely upgraded model that improves on the problems of the previous version, "AOM2". "AOM3" can generate illustrations with very realistic textures and can generate a wide variety of content. There are also three variant models based on the AOM3 that have been adjusted to a unique illustration style. These models will help you to express your ideas more clearly.

▼Links

- [⚠NSFW] Civitai: AbyssOrangeMix3 (AOM3) | Stable Diffusion Checkpoint | https://civitai.com/models/9942/abyssorangemix3-aom3

### Update note

2023-02-27: Add AOM3A1B

### AOM3

Features: high-quality, realistic textured illustrations can be generated.

There are two major changes from AOM2.

1: Models for NSFW such as _nsfw and _hard have been improved: the models after nsfw in AOM2 generated creepy realistic faces, muscles and ribs when using Hires.fix, even though they were animated characters. These have all been improved in AOM3.

e.g.: explanatory diagram by MEME : [GO TO MEME ZONE↓](#MEME_realface)

2: sfw/nsfw merged into one model. Originally, nsfw models were separated because adding NSFW content (models like NAI and gape) would change the face and cause the aforementioned problems. Now that those have been improved, the models can be packed into one.

In addition, thanks to excellent extensions such as [ModelToolkit](https://github.com/arenatemp/stable-diffusion-webui-model-toolkit), the model file size could be reduced (1.98 GB per model).

▼Variations

### AOM3A1

Features: Anime-like illustrations with flat paint. Cute enough as it is, but I really like to apply LoRA of anime characters to this model to generate high-quality anime illustrations like a frame from a theatrical release.

### AOM3A2

Features: Artistic illustrations in an oil-painting-like style, with stylish background depictions. In fact, this is mostly due to the work of Counterfeit 2.5, but the textures are more realistic thanks to the U-Net Blocks Weight Merge.

### AOM3A3

Features: Midpoint of artistic and kawaii. The model has been tuned to combine realistic textures, an artistic style that also feels like an oil colour style, and a cute anime-style face. Can be used to create a wide range of illustrations.

### AOM3A1B

AOM3A1B added. The model was merged by mistakenly selecting 'Add sum' when 'Add differences' should have been selected in the AOM3A3 recipe. It was an unintended merge, but we share it because the illustrations it produces are consistently good.

In my review, this is an illustration style somewhere between AOM3A1 and A3.
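The difference between the two merge modes mentioned above can be sketched on toy weights. This uses the formulas commonly shown in the Stable Diffusion WebUI checkpoint merger (weighted sum: `A*(1-M) + B*M`; add difference: `A + (B-C)*M`). Treating 'Add sum' as the weighted-sum mode is an assumption, and the one-entry dictionaries below merely stand in for real checkpoints.

```python
def weighted_sum(a, b, m):
    """Interpolate between checkpoints A and B: A*(1-m) + B*m."""
    return {k: a[k] * (1 - m) + b[k] * m for k in a}


def add_difference(a, b, c, m):
    """Add what B changed relative to C onto A: A + (B-C)*m."""
    return {k: a[k] + (b[k] - c[k]) * m for k in a}


# Toy one-parameter "checkpoints"
A, B, C = {"w": 1.0}, {"w": 3.0}, {"w": 2.0}
print(weighted_sum(A, B, 0.5))       # {'w': 2.0}
print(add_difference(A, B, C, 0.5))  # {'w': 1.5}
```

Even on this toy input the two modes give different results, which is consistent with AOM3A1B ending up in a different style from AOM3A3.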

### MORE

In addition, these U-Net Blocks Weight Merge models take numerous steps but are carefully merged to ensure that mutual content is not overwritten.

(Of course, all models allow full control over adult content.)

- 🔐 When generating illustrations for the general public: write "nsfw" in the negative prompt field

- 🔞 ~~When generating adult illustrations: "nsfw" in the positive prompt field~~ -> It can be generated without putting it in. If you include it, the atmosphere will be more NSFW.

### Sample Gallery

🚧Editing🚧

▼A1

<details>

<summary>©</summary>

(1)©Yurucamp: Inuyama Aoi, (2)©The Quintessential Quintuplets: Nakano Yotsuba, (3)©Sailor Moon: Mizuno Ami/SailorMercury

</details>

### Description for enthusiast

AOM3 was created with a focus on improving the nsfw version of AOM2, as mentioned above. AOM3 is a merge of the following two models into AOM2sfw using U-Net Blocks Weight Merge, while extracting only the NSFW content part.

(1) NAI: trained on Danbooru

(2) gape: a fine-tune of NAI trained on Danbooru's very hardcore NSFW content.

In other words, if you are looking for something like AOM3sfw, it is AOM2sfw. AOM3 was merged with the NSFW model while removing only the layers that have a negative impact on the face and body. However, the faces and compositions are not an exact match to AOM2sfw. AOM2sfw is sometimes superior when generating SFW content. I recommend choosing according to the intended use of the illustration. See below for a comparison between AOM2sfw and AOM3.

▼A summary of the AOM3 work is as follows

1. investigated the impact of the NAI and gape layers as AOM2 _nsfw onwards is crap.

2. cut face layer: OUT04 because I want realistic faces to stop → Failed. No change.

3. gapeNAI layer investigation:

a. IN05-08 (especially IN07) | Changes the illustration significantly. Noise is applied, natural colours are lost, shadows die, and we can see that the IN deep layer is a layer of light and shade.

b. OUT03-05(?) | Likely the sexual section/NSFW layer. Cutting here will kill the NSFW.

c. OUT03,OUT04|NSFW effects are in(?). e.g.: spoken hearts, trembling, motion lines, etc...

d. OUT05|This is really an NSFW switch. All the "NSFW atmosphere" is in here. Facial expressions, Heavy breaths, etc...

e. OUT10-11|Paint layer. Does not affect detail, but does have an extensive impact.

1. (mass production of rubbish from here...)

2. cut IN05-08 and merge NAIgape with flat parameters → avoided creepy muscles and real faces. Also, merging NSFW models stronger has less impact.

3. so, cut IN05-08, OUT10-11 and merge NAI+gape with all others 0.5.

4. → AOM3
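The final recipe in the list above (cut IN05-08 and OUT10-11, everything else at 0.5) can be written out as a per-block weight table. This is only a sketch: the 26-block naming (`BASE`, `IN00`-`IN11`, `M00`, `OUT00`-`OUT11`) is the convention used by block-weight merge extensions and is assumed here, and whether `BASE` was really set to 0.5 is not stated in the notes.

```python
# Block names as commonly used by U-Net block-weight merge tools (assumed)
BLOCKS = (
    ["BASE"]
    + [f"IN{i:02d}" for i in range(12)]
    + ["M00"]
    + [f"OUT{i:02d}" for i in range(12)]
)

# Blocks the notes say were cut (weight 0)
CUT = {"IN05", "IN06", "IN07", "IN08", "OUT10", "OUT11"}


def block_weights(cut=CUT, default=0.5):
    """Map every block to 0.0 if it is cut, otherwise to the default weight."""
    return {name: (0.0 if name in cut else default) for name in BLOCKS}


weights = block_weights()
# Comma-separated form, in block order
print(",".join(str(weights[name]) for name in BLOCKS))
```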

AOM3 roughly looks like this

----

▼How to use

- Prompts

- Negative prompts: as simple as possible is good.

(worst quality, low quality:1.4)

- Using "3D" as a negative will result in a rough sketch style at the "sketch" level. Use with caution as it is a very strong prompt.

- How to avoid Real Face

(realistic, lip, nose, tooth, rouge, lipstick, eyeshadow:1.0), (abs, muscular, rib:1.0),

- How to avoid Bokeh

(depth of field, bokeh, blurry:1.4)

- How to remove mosaic: `(censored, mosaic censoring, bar censor, convenient censoring, pointless censoring:1.0),`

- How to remove blush: `(blush, embarrassed, nose blush, light blush, full-face blush:1.4), `

- 🔰Basic negative prompts sample for Anime girl ↓

- v1

`nsfw, (worst quality, low quality:1.4), (realistic, lip, nose, tooth, rouge, lipstick, eyeshadow:1.0), (dusty sunbeams:1.0), (abs, muscular, rib:1.0), (depth of field, bokeh, blurry:1.4),(motion lines, motion blur:1.4), (greyscale, monochrome:1.0), text, title, logo, signature`

- v2

`nsfw, (worst quality, low quality:1.4), (lip, nose, tooth, rouge, lipstick, eyeshadow:1.4), (blush:1.2), (jpeg artifacts:1.4), (depth of field, bokeh, blurry, film grain, chromatic aberration, lens flare:1.0), (1boy, abs, muscular, rib:1.0), greyscale, monochrome, dusty sunbeams, trembling, motion lines, motion blur, emphasis lines, text, title, logo, signature, `

- Sampler: ~~“DPM++ SDE Karras” is good~~ Take your pick

- Steps:

- DPM++ SDE Karras: Test: 12~, illustration: 20~

- DPM++ 2M Karras: Test: 20~, illustration: 28~

- Clipskip: 1 or 2

- Upscaler:

- Detailed illustration → Latent (nearest-exact)

Denoise strength: 0.5 (0.5~0.6)

- Simple upscale: SwinIR, ESRGAN, Remacri, etc.

Denoise strength: Can be set low. (0.35~0.6)
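The negative-prompt fragments listed under Prompts above all use the `(term, term:weight)` emphasis syntax. As a minimal sketch, the helper below assembles such a prompt from groups, which can make it easier to switch individual groups on and off while testing. The names `group` and `build_negative` are hypothetical and not part of any tool.

```python
def group(terms, weight=None):
    """Format terms as one prompt fragment; weighted groups become '(a, b:1.4)'."""
    body = ", ".join(terms)
    return f"({body}:{weight})" if weight is not None else body


def build_negative(groups):
    """Join (terms, weight) pairs into a single negative prompt string."""
    return ", ".join(group(terms, weight) for terms, weight in groups)


negative = build_negative([
    (["nsfw"], None),
    (["worst quality", "low quality"], 1.4),
    (["depth of field", "bokeh", "blurry"], 1.4),
    (["text", "title", "logo", "signature"], None),
])
print(negative)
```

The resulting string can be pasted into the negative prompt field as-is.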

---

👩🍳Model details / Recipe

▼Hash

- AOM3.safetensors

D124FC18F0232D7F0A2A70358CDB1288AF9E1EE8596200F50F0936BE59514F6D

- AOM3A1.safetensors

F303D108122DDD43A34C160BD46DBB08CB0E088E979ACDA0BF168A7A1F5820E0

- AOM3A2.safetensors

553398964F9277A104DA840A930794AC5634FC442E6791E5D7E72B82B3BB88C3

- AOM3A3.safetensors

EB4099BA9CD5E69AB526FCA22A2E967F286F8512D9509B735C892FA6468767CF
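The values above are 64 hexadecimal characters long, which matches the length of a SHA-256 digest. Assuming they are SHA-256 hashes of the checkpoint files, a downloaded file could be checked with a sketch like the following. The function names are illustrative.

```python
import hashlib


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches(path, expected_hex):
    """Compare case-insensitively, since the hashes above are in upper case."""
    return sha256_of_file(path).lower() == expected_hex.strip().lower()
```

For example, `matches("AOM3.safetensors", "D124FC18F0232D7F0A2A70358CDB1288AF9E1EE8596200F50F0936BE59514F6D")` should return `True` for an intact download, assuming the file sits in the current directory.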

▼Models used

1. AOM2sfw

「038ba203d8ba3c8af24f14e01fbb870c85bbb8d4b6d9520804828f4193d12ce9」

1. AnythingV3.0 huggingface pruned

[2700c435]「543bcbc21294831c6245cd74c8a7707761e28812c690f946cb81fef930d54b5e」

1. NovelAI animefull-final-pruned

[925997e9]「89d59c3dde4c56c6d5c41da34cc55ce479d93b4007046980934b14db71bdb2a8」

1. NovelAI sfw

[1d4a34af]「22fa233c2dfd7748d534be603345cb9abf994a23244dfdfc1013f4f90322feca」

1. Gape60

[25396b85]「893cca5903ccd0519876f58f4bc188dd8fcc5beb8a69c1a3f1a5fe314bb573f5」

1. BasilMix

「bbf07e3a1c3482c138d096f7dcdb4581a2aa573b74a68ba0906c7b657942f1c2」

1. chilloutmix_fp16.safetensors

「4b3bf0860b7f372481d0b6ac306fed43b0635caf8aa788e28b32377675ce7630」

1. Counterfeit-V2.5_fp16.safetensors

「71e703a0fca0e284dd9868bca3ce63c64084db1f0d68835f0a31e1f4e5b7cca6」

1. kenshi_01_fp16.safetensors

「3b3982f3aaeaa8af3639a19001067905e146179b6cddf2e3b34a474a0acae7fa」

----

▼AOM3

▼**Instructions:**

USE: [https://github.com/hako-mikan/sd-webui-supermerger/](https://github.com/hako-mikan/sd-webui-supermerger/)

(This extension is really great. It turns a month's work into an hour. Thank you)

STEP: 1 | BWM : NAI - NAIsfw & gape - NAI

CUT: IN05-IN08, OUT10-11

| Model: A | Model: B | Model: C | Interpolation Method | Weight | Merge Name |
| -------- | -------- | -------- | -------------------- | ------ | ---------- |
| AOM2sfw | NAI full | NAI sfw | Add Difference @ 1.0 | 0,0.5,0.5,0.5,0.5,0.5,0,0,0,0,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0,0 | temp01 |
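As a rough illustration of what this row asks for: "Add Difference" is commonly defined as A + (B - C) · α, with α taken per block from the weight list. The sketch below applies that formula to toy scalar values standing in for tensors. The key naming, the block lookup, and the function name are assumptions made for illustration and do not reproduce the extension's actual code.

```python
def add_difference(a, b, c, alpha_by_block):
    """Per-key A + (B - C) * alpha, where alpha depends on the key's block."""
    merged = {}
    for key, a_val in a.items():
        block = key.split(".")[0]          # toy keys look like "IN05.weight"
        alpha = alpha_by_block.get(block, 0.0)
        merged[key] = a_val + (b[key] - c[key]) * alpha
    return merged


# Toy values standing in for AOM2sfw (A), NAI full (B) and NAI sfw (C).
a = {"IN04.weight": 1.0, "IN05.weight": 1.0}
b = {"IN04.weight": 3.0, "IN05.weight": 3.0}
c = {"IN04.weight": 2.0, "IN05.weight": 2.0}

# IN04 keeps weight 0.5, IN05 is cut (weight 0), as in the row above.
merged = add_difference(a, b, c, {"IN04": 0.5, "IN05": 0.0})
print(merged)  # IN04 moves halfway along (B - C); IN05 stays equal to A
```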

CUT: IN05-IN08, OUT10-11

| Model: A | Model: B | Model: C | Interpolation Method | Weight | Merge Name |
| -------- | -------- | -------- | -------------------- | ------ | ---------- |
| temp01 | gape60 | NAI full | Add Difference @ 1.0 | 0,0.5,0.5,0.5,0.5,0.5,0,0,0,0,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0.5,0,0 | AOM3 |
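The 26-value weight strings in the two AOM3 steps above can be generated from the CUT line rather than typed by hand. This is a minimal sketch, assuming the usual block order of BASE, IN00 to IN11, M00, OUT00 to OUT11, and assuming BASE is also set to 0 (as the leading 0 in the weight strings suggests). `BLOCKS` and `weights_from_cut` are hypothetical names.

```python
BLOCKS = (
    ["BASE"]
    + [f"IN{i:02d}" for i in range(12)]
    + ["M00"]
    + [f"OUT{i:02d}" for i in range(12)]
)


def weights_from_cut(cut, default=0.5):
    """Return the weight string: `default` everywhere, 0 for the cut blocks."""
    unknown = set(cut) - set(BLOCKS)
    if unknown:
        raise ValueError(f"unknown block names: {sorted(unknown)}")
    return ",".join("0" if name in cut else format(default, "g") for name in BLOCKS)


# CUT: IN05-IN08, OUT10-11 (plus BASE, by the assumption stated above).
aom3_cut = {"BASE", "IN05", "IN06", "IN07", "IN08", "OUT10", "OUT11"}
print(weights_from_cut(aom3_cut))
```

Generating the string this way avoids miscounting commas, which is easy to do with 26 values.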

▼AOM3A1

▼**Instructions:**

STEP: 1 | Change the base photorealistic model of AOM3 from BasilMix to Chilloutmix, then proceed to the gapeNAI merge.

STEP: 2 |

| Step | Interpolation Method | Primary Model | Secondary Model | Tertiary Model | Merge Name |
| ---- | -------------------- | -------------- | --------------- | -------------- | ------------------ |
| 1 | SUM @ 0.5 | Counterfeit2.5 | Kenshi | | Counterfeit+Kenshi |
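For reference, "SUM @ 0.5" is usually read as a plain weighted sum, (1 - α) · A + α · B with α = 0.5, which is an even average of the two checkpoints. A toy sketch under that assumption follows; scalars stand in for tensors, and `weighted_sum` is an illustrative name.

```python
def weighted_sum(a, b, alpha):
    """Per-key (1 - alpha) * A + alpha * B over two dicts with the same keys."""
    if a.keys() != b.keys():
        raise ValueError("both models must have the same keys")
    return {key: (1.0 - alpha) * a[key] + alpha * b[key] for key in a}


counterfeit = {"layer.weight": 2.0}   # toy stand-in for Counterfeit2.5
kenshi = {"layer.weight": 4.0}        # toy stand-in for Kenshi
print(weighted_sum(counterfeit, kenshi, 0.5))  # {'layer.weight': 3.0}
```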

STEP: 3 |

CUT: BASE0, IN00-IN08:0, IN10:0.1, OUT03-05:0, OUT08:0.2

| Model: A | Model: B | Model: C | Interpolation Method | Weight | Merge Name |
| -------- | ------------------ | -------- | -------------------- | ------ | ---------- |
| AOM3 | Counterfeit+Kenshi | | Add SUM @ 1.0 | 0,0,0,0,0,0,0,0,0,0.3,0.1,0.3,0.3,0.3,0.2,0.1,0,0,0,0.3,0.3,0.2,0.3,0.4,0.5 | AOM3A1 |

▼AOM3A2

▼?

CUT: BASE0, IN05:0.3, IN06-IN08:0, IN10:0.1, OUT03:0, OUT04:0.3, OUT05:0, OUT08:0.2

▼**Instructions:**

| Model: A | Model: B | Model: C | Interpolation Method | Weight | Merge Name |
| -------- | -------------- | -------- | -------------------- | ------ | ---------- |
| AOM3 | Counterfeit2.5 | | Add SUM @ 1.0 | 0,1,1,1,1,1,0.3,0,0,0,1,0.1,1,1,1,1,1,0,1,0,1,1,0.2,1,1,1 | AOM3A2 |

▼AOM3A3

▼?

CUT: BASE0, IN05-IN08:0, IN10:0.1, OUT03:0.5, OUT04-05:0.1, OUT08:0.2

| Model: A | Model: B | Model: C | Interpolation Method | Weight | Merge Name |
| -------- | -------------- | -------- | -------------------- | ------ | ---------- |
| AOM3 | Counterfeit2.5 | | Add SUM @ 1.0 | 0,0.6,0.6,0.6,0.6,0.6,0,0,0,0,0.6,0.1,0.6,0.6,0.6,0.6,0.6,0.5,0.1,0.1,0.6,0.6,0.2,0.6,0.6,0.6 | AOM3A3 |
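To check a weight string against its CUT line, it helps to label each value with its block name. This is a small sketch, assuming the 26 values are ordered BASE, IN00 to IN11, M00, OUT00 to OUT11. `BLOCK_NAMES` and `label_weights` are hypothetical names.

```python
BLOCK_NAMES = (
    ["BASE"]
    + [f"IN{i:02d}" for i in range(12)]
    + ["M00"]
    + [f"OUT{i:02d}" for i in range(12)]
)


def label_weights(weight_string):
    """Map each comma-separated weight to its block name; reject wrong lengths."""
    values = [float(v) for v in weight_string.split(",")]
    if len(values) != len(BLOCK_NAMES):
        raise ValueError(f"expected {len(BLOCK_NAMES)} weights, got {len(values)}")
    return dict(zip(BLOCK_NAMES, values))


# Weight string of the AOM3A3 row above.
aom3a3 = label_weights(
    "0,0.6,0.6,0.6,0.6,0.6,0,0,0,0,0.6,0.1,0.6,0.6,0.6,0.6,0.6,"
    "0.5,0.1,0.1,0.6,0.6,0.2,0.6,0.6,0.6"
)
for name in ("BASE", "IN10", "OUT03", "OUT04", "OUT05", "OUT08"):
    print(name, aom3a3[name])
```

Under the assumed block order, the printed values can be compared directly with the entries on the CUT line.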

----

## AbyssOrangeMix2 (AOM2)

――Creating the next generation of illustration with “Abyss”!