| modelId (string, length 4 to 81) | tags (list) | pipeline_tag (string, 17 classes) | config (dict) | downloads (int64, 0 to 59.7M) | first_commit (timestamp[ns, tz=UTC]) | card (string, length 51 to 438k) |
|---|---|---|---|---|---|---|

AnonymousSub/EManuals_BERT_copy_wikiqa

|

[

"pytorch",

"bert",

"text-classification",

"transformers"

] |

text-classification

|

{

"architectures": [

"BertForSequenceClassification"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 29 | 2023-03-06T12:01:11Z |

---

library_name: stable-baselines3

tags:

- SpaceInvadersNoFrameskip-v4

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:
- name: DQN
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: SpaceInvadersNoFrameskip-v4
      type: SpaceInvadersNoFrameskip-v4
    metrics:
    - type: mean_reward
      value: 259.00 +/- 184.58
      name: mean_reward
      verified: false

---

# **DQN** Agent playing **SpaceInvadersNoFrameskip-v4**

This is a trained model of a **DQN** agent playing **SpaceInvadersNoFrameskip-v4**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

Install the RL Zoo (with SB3 and SB3-Contrib):

```bash

pip install rl_zoo3

```

```

# Download model and save it into the logs/ folder

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga kraken2404 -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

If you installed the RL Zoo3 via pip (`pip install rl_zoo3`), from anywhere you can do:

```

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga kraken2404 -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

## Training (with the RL Zoo)

```

python -m rl_zoo3.train --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

# Upload the model and generate video (when possible)

python -m rl_zoo3.push_to_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/ -orga kraken2404

```

## Hyperparameters

```python

OrderedDict([('batch_size', 64),

('buffer_size', 100000),

('env_wrapper',

['stable_baselines3.common.atari_wrappers.AtariWrapper']),

('exploration_final_eps', 0.01),

('exploration_fraction', 0.1),

('frame_stack', 4),

('gamma', 0.995),

('gradient_steps', 1),

('learning_rate', 0.0001),

('learning_starts', 100000),

('n_timesteps', 130000.0),

('optimize_memory_usage', False),

('policy', 'CnnPolicy'),

('target_update_interval', 1000),

('train_freq', 4),

('normalize', False)])

```
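
The exploration and warm-up settings above interact with the short training budget. The following is a minimal sketch of that arithmetic, assuming a single environment and a linear epsilon decay that starts at 1.0 (the initial value is an assumption, since it is not listed in the hyperparameters); the function name `linear_epsilon` is introduced here for illustration only.

```python
def linear_epsilon(step, n_timesteps, exploration_fraction=0.1,
                   initial_eps=1.0, final_eps=0.01):
    """Linearly decay epsilon over the first `exploration_fraction` of training."""
    progress = step / n_timesteps
    if progress >= exploration_fraction:
        return final_eps
    return initial_eps + progress * (final_eps - initial_eps) / exploration_fraction


# Values copied from the hyperparameters listed above.
n_timesteps = 130_000
learning_starts = 100_000
train_freq = 4

# Number of steps over which epsilon is still decaying.
exploration_steps = round(0.1 * n_timesteps)

# Steps that remain once the replay buffer warm-up is over, and the
# approximate number of gradient updates (one update every `train_freq` steps).
steps_after_warmup = max(0, n_timesteps - learning_starts)
approx_updates = steps_after_warmup // train_freq
```

Under these assumptions, epsilon reaches its final value long before learning starts, and only the last 30,000 of the 130,000 steps contribute gradient updates, which may partly explain the modest mean reward.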

|

AnonymousSub/EManuals_RoBERTa_wikiqa

|

[

"pytorch",

"roberta",

"text-classification",

"transformers"

] |

text-classification

|

{

"architectures": [

"RobertaForSequenceClassification"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 29 | 2023-03-06T12:02:33Z |

---

library_name: stable-baselines3

tags:

- PandaReachDense-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:
- name: A2C
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: PandaReachDense-v2
      type: PandaReachDense-v2
    metrics:
    - type: mean_reward
      value: -1.45 +/- 0.39
      name: mean_reward
      verified: false

---

# **A2C** Agent playing **PandaReachDense-v2**

This is a trained model of an **A2C** agent playing **PandaReachDense-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```
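
The card leaves the usage code as a placeholder. One possible shape for it is sketched below, assuming the checkpoint was saved with Stable-Baselines3 and uploaded as a `.zip` file; the helper name `load_a2c_from_hub` is hypothetical, and the repository id and filename are not given in this card, so they stay as arguments.

```python
def load_a2c_from_hub(repo_id, filename):
    """Download an A2C checkpoint from the Hub and load it with Stable-Baselines3."""
    # Imported lazily so that defining this helper does not require the
    # packages to be installed.
    from huggingface_sb3 import load_from_hub
    from stable_baselines3 import A2C

    # `load_from_hub` returns the local path of the downloaded file.
    checkpoint_path = load_from_hub(repo_id=repo_id, filename=filename)
    return A2C.load(checkpoint_path)


# Hypothetical usage; fill in the real repository id and checkpoint filename:
# model = load_a2c_from_hub("<user>/<repo>", "<checkpoint>.zip")
```

This sketch does not recreate the environment or restore any observation normalization statistics, which a full evaluation would likely need.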

|

AnonymousSub/SDR_HF_model_base

|

[

"pytorch",

"roberta",

"feature-extraction",

"transformers"

] |

feature-extraction

|

{

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 1 | null |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:
- name: distilgpt2-finetuned-common-voice
  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilgpt2-finetuned-common-voice

This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 3.0746

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| No log | 1.0 | 223 | 3.1103 |

| No log | 2.0 | 446 | 3.0796 |

| 4.4046 | 3.0 | 669 | 3.0746 |
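
For a causal language model such as distilgpt2, the validation loss is often easier to interpret as perplexity. The sketch below assumes the reported loss is the mean token-level cross-entropy in nats; the helper name `perplexity` is introduced here for illustration.

```python
import math


def perplexity(mean_cross_entropy):
    """Convert a mean cross-entropy loss (in nats) into perplexity."""
    return math.exp(mean_cross_entropy)


# Validation losses per epoch, copied from the table above.
validation_losses = {1: 3.1103, 2: 3.0796, 3: 3.0746}
perplexities = {epoch: perplexity(loss) for epoch, loss in validation_losses.items()}
```

Under that assumption, the final validation loss of 3.0746 corresponds to a perplexity of a little under 22.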

### Framework versions

- Transformers 4.26.1

- Pytorch 1.13.1+cu117

- Datasets 2.10.1

- Tokenizers 0.13.2

|

AnonymousSub/SR_rule_based_roberta_twostage_quadruplet_epochs_1_shard_1

|

[

"pytorch",

"roberta",

"feature-extraction",

"transformers"

] |

feature-extraction

|

{

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 4 | 2023-03-06T12:48:24Z |

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:
- name: Taxi-v3
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: Taxi-v3
      type: Taxi-v3
    metrics:
    - type: mean_reward
      value: 7.56 +/- 2.71
      name: mean_reward
      verified: false

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3**.

## Usage

```python

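import gym

# `load_from_hub` is assumed to be a helper (not shown in this card) that
# downloads the pickled model dictionary from the Hub and unpickles it.
model = load_from_hub(repo_id="JulianZas/Taxi-v3", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)
env = gym.make(model["env_id"])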

```
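
Once the model dictionary is loaded, a Q-learning agent acts by picking the highest-valued action for the current state. The sketch below illustrates that step on a toy table; the function name `greedy_action` is hypothetical, and the assumption that the Q-table is stored in the dictionary under a key such as `"qtable"` is not confirmed by this card.

```python
def greedy_action(qtable, state):
    """Return the index of the action with the highest Q-value in `state`."""
    row = qtable[state]
    return max(range(len(row)), key=lambda action: row[action])


# Toy Q-table with 2 states and 3 actions, for illustration only.
toy_qtable = [
    [0.0, 0.5, 0.1],
    [0.9, 0.2, 0.3],
]
best_in_state_0 = greedy_action(toy_qtable, 0)
best_in_state_1 = greedy_action(toy_qtable, 1)
```

The same function works on a NumPy array, since it only relies on indexing and comparison.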

|

AnonymousSub/SR_rule_based_roberta_twostagetriplet_epochs_1_shard_10

|

[

"pytorch",

"roberta",

"feature-extraction",

"transformers"

] |

feature-extraction

|

{

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 4 | null |

---

library_name: stable-baselines3

tags:

- SpaceInvadersNoFrameskip-v4

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:
- name: DQN
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: SpaceInvadersNoFrameskip-v4
      type: SpaceInvadersNoFrameskip-v4
    metrics:
    - type: mean_reward
      value: 574.00 +/- 157.16
      name: mean_reward
      verified: false

---

# **DQN** Agent playing **SpaceInvadersNoFrameskip-v4**

This is a trained model of a **DQN** agent playing **SpaceInvadersNoFrameskip-v4**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

Install the RL Zoo (with SB3 and SB3-Contrib):

```bash

pip install rl_zoo3

```

```

# Download model and save it into the logs/ folder

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga pastells -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

If you installed the RL Zoo3 via pip (`pip install rl_zoo3`), from anywhere you can do:

```

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga pastells -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

## Training (with the RL Zoo)

```

python -m rl_zoo3.train --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

# Upload the model and generate video (when possible)

python -m rl_zoo3.push_to_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/ -orga pastells

```

## Hyperparameters

```python

OrderedDict([('batch_size', 32),

('buffer_size', 100000),

('env_wrapper',

['stable_baselines3.common.atari_wrappers.AtariWrapper']),

('exploration_final_eps', 0.01),

('exploration_fraction', 0.1),

('frame_stack', 4),

('gradient_steps', 1),

('learning_rate', 0.0001),

('learning_starts', 100000),

('n_timesteps', 1000000.0),

('optimize_memory_usage', False),

('policy', 'CnnPolicy'),

('target_update_interval', 1000),

('train_freq', 4),

('normalize', False)])

```
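
The `buffer_size`, `frame_stack` and `optimize_memory_usage` settings largely determine how much memory the replay buffer needs. A rough estimate is sketched below, assuming 84x84 grayscale frames stored as one byte per pixel and counting only the observation arrays (actions, rewards and done flags are ignored); the function name `replay_buffer_bytes` is introduced here for illustration.

```python
def replay_buffer_bytes(buffer_size, obs_shape, optimize_memory_usage=False,
                        bytes_per_value=1):
    """Estimate the bytes needed to store observations in a replay buffer."""
    obs_bytes = bytes_per_value
    for dim in obs_shape:
        obs_bytes *= dim
    # Without the memory optimization, observations and next observations
    # are kept in two separate arrays.
    copies = 1 if optimize_memory_usage else 2
    return buffer_size * obs_bytes * copies


# 100,000 transitions of 4 stacked 84x84 frames, as in the settings above.
estimate = replay_buffer_bytes(100_000, (4, 84, 84))
estimate_gb = estimate / 1e9
```

Under these assumptions the buffer needs roughly 5.6 GB, and setting `optimize_memory_usage` to `True` would about halve that.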

|

AnonymousSub/bert_triplet_epochs_1_shard_10

|

[

"pytorch",

"bert",

"feature-extraction",

"transformers"

] |

feature-extraction

|

{

"architectures": [

"BertModel"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 1 | null |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:
- name: q-FrozenLake-v1-4x4-noSlippery
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: FrozenLake-v1-4x4-no_slippery
      type: FrozenLake-v1-4x4-no_slippery
    metrics:
    - type: mean_reward
      value: 1.00 +/- 0.00
      name: mean_reward
      verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

```python

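import gym

# `load_from_hub` is assumed to be a helper (not shown in this card) that
# downloads the pickled model dictionary from the Hub and unpickles it.
model = load_from_hub(repo_id="Kittitouch/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)
env = gym.make(model["env_id"])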

```
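
The `mean_reward` value in the metadata has the form "mean +/- standard deviation" over evaluation episodes. The sketch below shows how such a summary could be produced, assuming the population standard deviation is used; the function name `summarize_rewards` and the episode list are illustrative, not taken from the card.

```python
import statistics


def summarize_rewards(episode_rewards):
    """Format episode rewards as 'mean +/- std' with two decimals."""
    mean = statistics.fmean(episode_rewards)
    std = statistics.pstdev(episode_rewards)
    return f"{mean:.2f} +/- {std:.2f}"


# A deterministic environment solved on every episode, as on non-slippery FrozenLake.
summary = summarize_rewards([1.0] * 100)
```

A standard deviation of zero is expected here because the non-slippery map is deterministic, so a greedy policy that reaches the goal once reaches it every time.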

|

AnonymousSub/cline-techqa

|

[

"pytorch",

"roberta",

"question-answering",

"transformers",

"autotrain_compatible"

] |

question-answering

|

{

"architectures": [

"RobertaForQuestionAnswering"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 6 | null |

---

library_name: stable-baselines3

tags:

- SpaceInvadersNoFrameskip-v4

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:
- name: DQN
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: SpaceInvadersNoFrameskip-v4
      type: SpaceInvadersNoFrameskip-v4
    metrics:
    - type: mean_reward
      value: 621.00 +/- 179.57
      name: mean_reward
      verified: false

---

# **DQN** Agent playing **SpaceInvadersNoFrameskip-v4**

This is a trained model of a **DQN** agent playing **SpaceInvadersNoFrameskip-v4**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

Install the RL Zoo (with SB3 and SB3-Contrib):

```bash

pip install rl_zoo3

```

```

# Download model and save it into the logs/ folder

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga rossHuggingMay -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

If you installed the RL Zoo3 via pip (`pip install rl_zoo3`), from anywhere you can do:

```

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga rossHuggingMay -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

## Training (with the RL Zoo)

```

python -m rl_zoo3.train --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

# Upload the model and generate video (when possible)

python -m rl_zoo3.push_to_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/ -orga rossHuggingMay

```

## Hyperparameters

```python

OrderedDict([('batch_size', 32),

('buffer_size', 100000),

('env_wrapper',

['stable_baselines3.common.atari_wrappers.AtariWrapper']),

('exploration_final_eps', 0.01),

('exploration_fraction', 0.1),

('frame_stack', 4),

('gradient_steps', 1),

('learning_rate', 0.0001),

('learning_starts', 100000),

('n_timesteps', 10000000.0),

('optimize_memory_usage', False),

('policy', 'CnnPolicy'),

('target_update_interval', 1000),

('train_freq', 4),

('normalize', False)])

```

|

AnonymousSub/cline

|

[

"pytorch",

"roberta",

"transformers"

] | null |

{

"architectures": [

"LecbertForPreTraining"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 2 | null |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:
- name: distilbert-base-uncased-finetuned-mathQA
  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-mathQA

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0752

- Accuracy: 0.9857

- F1: 0.9857

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.3155 | 1.0 | 1865 | 0.0997 | 0.9727 | 0.9727 |

| 0.0726 | 2.0 | 3730 | 0.0813 | 0.9826 | 0.9825 |

| 0.0292 | 3.0 | 5595 | 0.0752 | 0.9857 | 0.9857 |

### Framework versions

- Transformers 4.26.1

- Pytorch 1.13.1+cu116

- Datasets 2.10.1

- Tokenizers 0.13.2

|

AnonymousSub/cline_wikiqa

|

[

"pytorch",

"roberta",

"text-classification",

"transformers"

] |

text-classification

|

{

"architectures": [

"RobertaForSequenceClassification"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 27 | null |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- cats_vs_dogs

metrics:

- accuracy

model-index:
- name: beit-base
  results:
  - task:
      name: Image Classification
      type: image-classification
    dataset:
      name: cats_vs_dogs
      type: cats_vs_dogs
      config: default
      split: train
      args: default
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.9976505766766339

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# beit-base

This model is a fine-tuned version of [microsoft/beit-base-patch16-224-pt22k-ft22k](https://huggingface.co/microsoft/beit-base-patch16-224-pt22k-ft22k) on the cats_vs_dogs dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0116

- Accuracy: 0.9977

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.0303 | 1.0 | 585 | 0.0186 | 0.9942 |

| 0.0374 | 2.0 | 1170 | 0.0150 | 0.9955 |

| 0.0559 | 3.0 | 1755 | 0.0116 | 0.9977 |
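
The hyperparameters above combine into an effective batch size and a learning-rate schedule with warm-up. A minimal sketch of both follows, assuming the warm-up length is the total step count times the warm-up ratio, rounded up, and that the rate then decays linearly to zero; the function name `linear_warmup_lr` is introduced here for illustration.

```python
import math


def linear_warmup_lr(step, base_lr, total_steps, warmup_ratio):
    """Learning rate at `step` for linear warm-up followed by linear decay."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Values copied from the hyperparameters and the results table above.
effective_batch = 8 * 4                      # train_batch_size * gradient_accumulation_steps
warmup_steps = math.ceil(1755 * 0.1)         # total optimizer steps * warm-up ratio
peak_lr = linear_warmup_lr(warmup_steps, 5e-05, 1755, 0.1)
final_lr = linear_warmup_lr(1755, 5e-05, 1755, 0.1)
```

The effective batch size of 32 matches the `total_train_batch_size` reported in the card.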

### Framework versions

- Transformers 4.26.1

- Pytorch 1.13.1+cu116

- Datasets 2.10.1

- Tokenizers 0.13.2

|

AnonymousSub/declutr-s10-SR

|

[

"pytorch",

"roberta",

"text-classification",

"transformers"

] |

text-classification

|

{

"architectures": [

"RobertaForSequenceClassification"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 36 | null |

---

license: mit

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

model-index:
- name: japanese-roberta-base-finetuned-rinna
  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# japanese-roberta-base-finetuned-rinna

This model is a fine-tuned version of [rinna/japanese-roberta-base](https://huggingface.co/rinna/japanese-roberta-base) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.9637

- Precision: 0.8062

- Recall: 0.8107

- F1: 0.8071

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 4

- eval_batch_size: 4

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|

| 0.7188 | 1.0 | 617 | 0.5825 | 0.7584 | 0.7945 | 0.7724 |

| 0.5951 | 2.0 | 1234 | 0.7466 | 0.7489 | 0.7799 | 0.7628 |

| 0.5206 | 3.0 | 1851 | 0.9570 | 0.7814 | 0.7767 | 0.7747 |

| 0.4061 | 4.0 | 2468 | 0.9637 | 0.8062 | 0.8107 | 0.8071 |

| 0.1879 | 5.0 | 3085 | 1.0516 | 0.8028 | 0.8026 | 0.8021 |
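
The headline metrics of this card match the epoch 4 row, not the last epoch and not the epoch with the lowest validation loss. The sketch below shows how the choice of selection criterion changes which checkpoint is picked; it only assumes that the best checkpoint was selected by F1, which the card does not state explicitly.

```python
# Rows copied from the training results table above.
rows = [
    {"epoch": 1, "val_loss": 0.5825, "f1": 0.7724},
    {"epoch": 2, "val_loss": 0.7466, "f1": 0.7628},
    {"epoch": 3, "val_loss": 0.9570, "f1": 0.7747},
    {"epoch": 4, "val_loss": 0.9637, "f1": 0.8071},
    {"epoch": 5, "val_loss": 1.0516, "f1": 0.8021},
]

# Selecting by highest F1 and by lowest validation loss gives different epochs.
best_by_f1 = max(rows, key=lambda row: row["f1"])
best_by_loss = min(rows, key=lambda row: row["val_loss"])
```

The validation loss rises steadily after the first epoch while F1 still improves, which is a common sign of growing overconfidence on the validation set.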

### Framework versions

- Transformers 4.25.1

- Pytorch 1.13.0

- Datasets 2.8.0

- Tokenizers 0.13.2

|

AnonymousSub/declutr-techqa

|

[

"pytorch",

"roberta",

"question-answering",

"transformers",

"autotrain_compatible"

] |

question-answering

|

{

"architectures": [

"RobertaForQuestionAnswering"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 5 | null |

---

tags:

- unity-ml-agents

- ml-agents

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Pyramids

library_name: ml-agents

---

# **ppo** Agent playing **Pyramids**

This is a trained model of a **ppo** agent playing **Pyramids** using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://github.com/huggingface/ml-agents#get-started

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

### Resume the training

```

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. Go to https://huggingface.co/spaces/unity/ML-Agents-Pyramids

2. Step 1: Write your model_id: FlavienDeseure/ppo-Pyramids

3. Step 2: Select your *.nn /*.onnx file

4. Click on Watch the agent play 👀

|

AnonymousSub/dummy_1

|

[

"pytorch",

"bert",

"text-classification",

"transformers"

] |

text-classification

|

{

"architectures": [

"BertForSequenceClassification"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 33 | 2023-03-06T14:04:13Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- emotion

metrics:

- accuracy

- f1

model-index:
- name: distilbert-base-uncased-finetuned-emotion
  results:
  - task:
      name: Text Classification
      type: text-classification
    dataset:
      name: emotion
      type: emotion
      config: split
      split: validation
      args: split
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.9245
    - name: F1
      type: f1
      value: 0.9245266966207797

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-emotion

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2154

- Accuracy: 0.9245

- F1: 0.9245

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.8219 | 1.0 | 250 | 0.3094 | 0.911 | 0.9073 |

| 0.2496 | 2.0 | 500 | 0.2154 | 0.9245 | 0.9245 |
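
The step counts in the table follow from the dataset size and the batch size. A small sketch of that relationship is given below, assuming the 16,000-example training split of the emotion dataset and no gradient accumulation; the function name `steps_per_epoch` is introduced here for illustration.

```python
import math


def steps_per_epoch(num_examples, batch_size):
    """Number of optimizer steps needed to see every example once."""
    return math.ceil(num_examples / batch_size)


# Assumed training-set size and the batch size listed above.
per_epoch = steps_per_epoch(16_000, 64)
total_steps = per_epoch * 2  # num_epochs: 2
```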

### Framework versions

- Transformers 4.26.1

- Pytorch 1.13.1+cu116

- Datasets 2.10.1

- Tokenizers 0.13.2

|

AnonymousSub/hier_triplet_epochs_1_shard_1

|

[

"pytorch",

"bert",

"feature-extraction",

"transformers"

] |

feature-extraction

|

{

"architectures": [

"BertModel"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 8 | 2023-03-06T14:14:40Z |

---

license: apache-2.0

language:

- en

- es

- it

- fr

metrics:

- f1

---

# Federated Learning Based Multilingual Emoji Prediction

This repository contains code for training and evaluating transformer-based models for unilingual and multilingual emoji prediction in clean and attack scenarios using federated learning. The work is described in the paper "Federated Learning-Based Multilingual Emoji Prediction in Clean and Attack Scenarios."

# Abstract

Federated learning is a growing field in the machine learning community due to its decentralized and private design. Model training in federated learning is distributed over multiple clients giving access to lots of client data while maintaining privacy. Then, a server aggregates the training done on these multiple clients without access to their data, which could be emojis widely used in any social media service and instant messaging platforms to express users' sentiments. This paper proposes federated learning-based multilingual emoji prediction in both clean and attack scenarios. Emoji prediction data have been crawled from both Twitter and SemEval emoji datasets. This data is used to train and evaluate different transformer model sizes including a sparsely activated transformer with either the assumption of clean data in all clients or poisoned data via label flipping attack in some clients. Experimental results on these models show that federated learning in either clean or attacked scenarios performs similarly to centralized training in multilingual emoji prediction on seen and unseen languages under different data sources and distributions. Our trained transformers perform better than other techniques on the SemEval emoji dataset in addition to the privacy as well as distributed benefits of federated learning.
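
The server-side aggregation described in the abstract can be illustrated with weighted parameter averaging. The sketch below uses the common FedAvg rule, where each client's parameters are weighted by its local dataset size; whether the paper uses exactly this rule is not stated here, and the function name `federated_average` and the toy values are hypothetical.

```python
def federated_average(client_weights, client_sizes):
    """Average per-client parameter vectors, weighted by local dataset size."""
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need at least one client and one size per client")
    total = sum(client_sizes)
    averaged = [0.0] * len(client_weights[0])
    for weights, size in zip(client_weights, client_sizes):
        share = size / total
        for i, value in enumerate(weights):
            averaged[i] += share * value
    return averaged


# Two hypothetical clients holding 1 and 3 training examples.
global_weights = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
```

The server only sees the parameter vectors and the dataset sizes, never the clients' raw data, which is the privacy property the abstract refers to.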

# Performance

> * ACC : 44.734 %

> * MAC-F1 : 31.462 %

> * Also see our [GitHub Repo](https://github.com/kareemgamalmahmoud/FEDERATED-LEARNING-BASED-MULTILINGUAL-EMOJI-PREDICTION-IN-CLEAN-AND-ATTACK-SCENARIOS)
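
The gap between the two numbers above is typical when classes are imbalanced: accuracy counts every example equally, while macro-F1 averages the per-class F1 scores, so rarely predicted emojis pull it down. The sketch below shows both computations on a hypothetical three-class toy example; the helper names and the labels are illustrative assumptions, not the evaluation code used for this model.

```python
def accuracy(y_true, y_pred):
    """Fraction of examples whose predicted label matches the gold label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores over the classes present in y_true."""
    classes = sorted(set(y_true))
    f1_scores = []
    for c in classes:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        f1_scores.append(2 * tp / denom if denom else 0.0)
    return sum(f1_scores) / len(f1_scores)


# Hypothetical toy labels (three classes), not taken from the paper's data.
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 0, 1, 2, 2, 2]
print(f"Acc: {accuracy(y_true, y_pred):.3f}, Mac-F1: {macro_f1(y_true, y_pred):.3f}")
```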

# Dependencies

> * Python 3.6+

> * PyTorch 1.7.0+

> * Transformers 4.0.0+

# Usage

> To use the model, first install the `transformers` package from Hugging Face:

```bash

pip install transformers

```

> Then, you can load the model and tokenizer using the following code:

```python

from transformers import AutoModelForSequenceClassification, AutoTokenizer

import numpy as np

import urllib.request

import csv

```

```python

MODEL = "Karim-Gamal/MMiniLM-L12-finetuned-emojis-cen-1"

tokenizer = AutoTokenizer.from_pretrained(MODEL)

model = AutoModelForSequenceClassification.from_pretrained(MODEL)

```

> Once you have the tokenizer and model, you can preprocess your text and pass it to the model for prediction:

```python

# Preprocess text (username and link placeholders)

def preprocess(text):
    new_text = []
    for t in text.split(" "):
        # Replace user mentions and links with generic placeholders
        t = '@user' if t.startswith('@') and len(t) > 1 else t
        t = 'http' if t.startswith('http') else t
        new_text.append(t)
    return " ".join(new_text)

text = "Hello world"
text = preprocess(text)

encoded_input = tokenizer(text, return_tensors='pt')
output = model(**encoded_input)

# output[0] holds the raw logits; softmax converts them to probabilities
scores = output[0][0].softmax(dim=-1).detach().numpy()

```

> The scores variable contains the probabilities for each of the possible emoji labels. To get the top k predictions, you can use the following code:

```python

# download label mapping

labels = []
mapping_link = "https://raw.githubusercontent.com/cardiffnlp/tweeteval/main/datasets/emoji/mapping.txt"
with urllib.request.urlopen(mapping_link) as f:
    html = f.read().decode('utf-8').split("\n")
    csvreader = csv.reader(html, delimiter='\t')
labels = [row[1] for row in csvreader if len(row) > 1]

k = 3  # number of top predictions to show
ranking = np.argsort(scores)
ranking = ranking[::-1]
for i in range(k):
    l = labels[ranking[i]]
    s = scores[ranking[i]]
    print(f"{i+1}) {l} {np.round(float(s), 4)}")

```

## Note: the code above is adapted from [this model card](https://huggingface.co/cardiffnlp/twitter-roberta-base-emoji)

|

AnonymousSub/rule_based_hier_quadruplet_epochs_1_shard_1_wikiqa

|

[

"pytorch",

"bert",

"text-classification",

"transformers"

] |

text-classification

|

{

"architectures": [

"BertForSequenceClassification"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 30 | null |

---

license: apache-2.0

language:

- en

- es

- it

- fr

metrics:

- f1

---

# Federated Learning Based Multilingual Emoji Prediction

This repository contains code for training and evaluating transformer-based models for Uni/multilingual emoji prediction in clean and attack scenarios using Federated Learning. This work is described in the paper "Federated Learning-Based Multilingual Emoji Prediction in Clean and Attack Scenarios."

# Abstract

Federated learning is a growing field in the machine learning community due to its decentralized and private design. Model training in federated learning is distributed over multiple clients giving access to lots of client data while maintaining privacy. Then, a server aggregates the training done on these multiple clients without access to their data, which could be emojis widely used in any social media service and instant messaging platforms to express users' sentiments. This paper proposes federated learning-based multilingual emoji prediction in both clean and attack scenarios. Emoji prediction data have been crawled from both Twitter and SemEval emoji datasets. This data is used to train and evaluate different transformer model sizes including a sparsely activated transformer with either the assumption of clean data in all clients or poisoned data via label flipping attack in some clients. Experimental results on these models show that federated learning in either clean or attacked scenarios performs similarly to centralized training in multilingual emoji prediction on seen and unseen languages under different data sources and distributions. Our trained transformers perform better than other techniques on the SemEval emoji dataset in addition to the privacy as well as distributed benefits of federated learning.
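
The server-side aggregation described above is, for the `FedAvg` variant named in this model's identifier, usually understood as a sample-weighted average of the client parameters. The following is a minimal sketch under that assumption; the function name `fed_avg`, the flat weight lists, and the client sizes are hypothetical and do not come from the paper's code.

```python
def fed_avg(client_weights, client_sizes):
    """Average client parameter vectors, weighting each client by its sample count."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


# Two hypothetical clients: the second holds three times as much data.
global_weights = fed_avg([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(global_weights)
```

The server only ever sees the weight vectors and the sample counts, never the clients' text, which is the privacy property the abstract refers to.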

# Performance

> * Acc : 44.412 %

> * Mac-F1 : 32.311 %

> * Also see our [GitHub Repo](https://github.com/kareemgamalmahmoud/FEDERATED-LEARNING-BASED-MULTILINGUAL-EMOJI-PREDICTION-IN-CLEAN-AND-ATTACK-SCENARIOS)

# Dependencies

> * Python 3.6+

> * PyTorch 1.7.0+

> * Transformers 4.0.0+

# Usage

> To use the model, first install the `transformers` package from Hugging Face:

```bash

pip install transformers

```

> Then, you can load the model and tokenizer using the following code:

```python

from transformers import AutoModelForSequenceClassification, AutoTokenizer

import numpy as np

import urllib.request

import csv

```

```python

MODEL = "Karim-Gamal/MMiniLM-L12-finetuned-emojis-1-client-toxic-FedAvg-IID-Fed"

tokenizer = AutoTokenizer.from_pretrained(MODEL)

model = AutoModelForSequenceClassification.from_pretrained(MODEL)

```

> Once you have the tokenizer and model, you can preprocess your text and pass it to the model for prediction:

```python

# Preprocess text (username and link placeholders)

def preprocess(text):
    new_text = []
    for t in text.split(" "):
        # Replace user mentions and links with generic placeholders
        t = '@user' if t.startswith('@') and len(t) > 1 else t
        t = 'http' if t.startswith('http') else t
        new_text.append(t)
    return " ".join(new_text)

text = "Hello world"
text = preprocess(text)

encoded_input = tokenizer(text, return_tensors='pt')
output = model(**encoded_input)

# output[0] holds the raw logits; softmax converts them to probabilities
scores = output[0][0].softmax(dim=-1).detach().numpy()

```

> The scores variable contains the probabilities for each of the possible emoji labels. To get the top k predictions, you can use the following code:

```python

# download label mapping

labels = []
mapping_link = "https://raw.githubusercontent.com/cardiffnlp/tweeteval/main/datasets/emoji/mapping.txt"
with urllib.request.urlopen(mapping_link) as f:
    html = f.read().decode('utf-8').split("\n")
    csvreader = csv.reader(html, delimiter='\t')
labels = [row[1] for row in csvreader if len(row) > 1]

k = 3  # number of top predictions to show
ranking = np.argsort(scores)
ranking = ranking[::-1]
for i in range(k):
    l = labels[ranking[i]]
    s = scores[ranking[i]]
    print(f"{i+1}) {l} {np.round(float(s), 4)}")

```

## Note: the code above is adapted from [this model card](https://huggingface.co/cardiffnlp/twitter-roberta-base-emoji)

|

AnonymousSub/rule_based_only_classfn_epochs_1_shard_10

|

[

"pytorch",

"bert",

"feature-extraction",

"transformers"

] |

feature-extraction

|

{

"architectures": [

"BertModel"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 7 | null |

Using this Fansly unlock media service allows you to access any Fansly premium account without paying monthly subscription.

You don’t need to create an account or add your card to unlock videos media on this Fansly platform by using that service

To unlock the locked media on Fansly creator`s page, you can merely follow this guide

1. Just visit Fansly unlock media service here https://ujeb.se/oiMifz

2. Simply enter Fansly creator`s username that you want to unlock media contents

3. Choose the device platform that you are using either iOS or Android or Windows

4. Choose photos or videos contents or both contents from that Fansly username

5. Click Unlock button to start the process

|

AnonymousSub/rule_based_roberta_bert_quadruplet_epochs_1_shard_10

|

[

"pytorch",

"roberta",

"feature-extraction",

"transformers"

] |

feature-extraction

|

{

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 8 | null |

---

license: apache-2.0

language:

- en

- es

- it

- fr

metrics:

- f1

---

# Federated Learning Based Multilingual Emoji Prediction

This repository contains code for training and evaluating transformer-based models for Uni/multilingual emoji prediction in clean and attack scenarios using Federated Learning. This work is described in the paper "Federated Learning-Based Multilingual Emoji Prediction in Clean and Attack Scenarios."

# Abstract

Federated learning is a growing field in the machine learning community due to its decentralized and private design. Model training in federated learning is distributed over multiple clients giving access to lots of client data while maintaining privacy. Then, a server aggregates the training done on these multiple clients without access to their data, which could be emojis widely used in any social media service and instant messaging platforms to express users' sentiments. This paper proposes federated learning-based multilingual emoji prediction in both clean and attack scenarios. Emoji prediction data have been crawled from both Twitter and SemEval emoji datasets. This data is used to train and evaluate different transformer model sizes including a sparsely activated transformer with either the assumption of clean data in all clients or poisoned data via label flipping attack in some clients. Experimental results on these models show that federated learning in either clean or attacked scenarios performs similarly to centralized training in multilingual emoji prediction on seen and unseen languages under different data sources and distributions. Our trained transformers perform better than other techniques on the SemEval emoji dataset in addition to the privacy as well as distributed benefits of federated learning.
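
The label flipping attack mentioned above poisons a client by training it on deliberately wrong labels. The exact flipping rule is not stated in this card, so the sketch below assumes a simple "mirror" mapping over 20 emoji classes; both the rule and the class count are illustrative assumptions, and `flip_labels` is a hypothetical helper.

```python
def flip_labels(labels, num_classes=20):
    """Map every class id y to its mirror (num_classes - 1 - y).

    Assumption: this mirror rule is only one possible flipping scheme.
    """
    return [num_classes - 1 - y for y in labels]


clean_labels = [0, 5, 19]                    # hypothetical gold emoji ids
poisoned_labels = flip_labels(clean_labels)  # what a "toxic" client would train on
print(poisoned_labels)
```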

# Performance

> * Acc : 35.198 %

> * Mac-F1 : 25.343 %

> * Also see our [GitHub Repo](https://github.com/kareemgamalmahmoud/FEDERATED-LEARNING-BASED-MULTILINGUAL-EMOJI-PREDICTION-IN-CLEAN-AND-ATTACK-SCENARIOS)

# Dependencies

> * Python 3.6+

> * PyTorch 1.7.0+

> * Transformers 4.0.0+

# Usage

> To use the model, first install the `transformers` package from Hugging Face:

```bash

pip install transformers

```

> Then, you can load the model and tokenizer using the following code:

```python

from transformers import AutoModelForSequenceClassification, AutoTokenizer

import numpy as np

import urllib.request

import csv

```

```python

MODEL = "Karim-Gamal/MMiniLM-L12-finetuned-emojis-2-client-toxic-FedAvg-IID-Fed"

tokenizer = AutoTokenizer.from_pretrained(MODEL)

model = AutoModelForSequenceClassification.from_pretrained(MODEL)

```

> Once you have the tokenizer and model, you can preprocess your text and pass it to the model for prediction:

```python

# Preprocess text (username and link placeholders)

def preprocess(text):
    new_text = []
    for t in text.split(" "):
        # Replace user mentions and links with generic placeholders
        t = '@user' if t.startswith('@') and len(t) > 1 else t
        t = 'http' if t.startswith('http') else t
        new_text.append(t)
    return " ".join(new_text)

text = "Hello world"
text = preprocess(text)

encoded_input = tokenizer(text, return_tensors='pt')
output = model(**encoded_input)

# output[0] holds the raw logits; softmax converts them to probabilities
scores = output[0][0].softmax(dim=-1).detach().numpy()

```

> The scores variable contains the probabilities for each of the possible emoji labels. To get the top k predictions, you can use the following code:

```python

# download label mapping

labels = []
mapping_link = "https://raw.githubusercontent.com/cardiffnlp/tweeteval/main/datasets/emoji/mapping.txt"
with urllib.request.urlopen(mapping_link) as f:
    html = f.read().decode('utf-8').split("\n")
    csvreader = csv.reader(html, delimiter='\t')
labels = [row[1] for row in csvreader if len(row) > 1]

k = 3  # number of top predictions to show
ranking = np.argsort(scores)
ranking = ranking[::-1]
for i in range(k):
    l = labels[ranking[i]]
    s = scores[ranking[i]]
    print(f"{i+1}) {l} {np.round(float(s), 4)}")

```

## Note: the code above is adapted from [this model card](https://huggingface.co/cardiffnlp/twitter-roberta-base-emoji)

|

AnonymousSub/rule_based_roberta_bert_quadruplet_epochs_1_shard_1_squad2.0

|

[

"pytorch",

"roberta",

"question-answering",

"transformers",

"autotrain_compatible"

] |

question-answering

|

{

"architectures": [

"RobertaForQuestionAnswering"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 2 | null |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

model-index:

- name: finetuning-sentiment-model-3000-samples

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# finetuning-sentiment-model-3000-samples

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset.

It achieves the following results on the evaluation set:

- eval_loss: 0.6939

- eval_accuracy: 0.5

- eval_f1: 0.0

- eval_runtime: 8.9447

- eval_samples_per_second: 33.539

- eval_steps_per_second: 2.124

- step: 0
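
These values are what one would expect from a classifier that has not been trained yet (note `step: 0`): a loss close to ln 2 ≈ 0.6931, 50% accuracy, and an F1 of 0 all follow if the model is maximally unsure and labels every example as negative on a balanced evaluation set. The sketch below reproduces that arithmetic. The balanced 300-example set is an assumption (runtime × samples per second is roughly 300), as is the always-negative behaviour; neither is stated in the card.

```python
import math

# Assumption: a balanced binary evaluation set of 300 examples (150 per class).
labels = [0] * 150 + [1] * 150
# Assumption: the model outputs p(positive) = 0.5 and ties resolve to class 0.
probs = [0.5] * len(labels)
preds = [0] * len(labels)

# Binary cross-entropy averaged over the evaluation set.
loss = -sum(math.log(p if y == 1 else 1 - p) for p, y in zip(probs, labels)) / len(labels)
accuracy = sum(p == y for p, y in zip(preds, labels)) / len(labels)

# F1 for the positive class; it is 0 when no positive is ever predicted.
tp = sum(p == 1 and y == 1 for p, y in zip(preds, labels))
fp = sum(p == 1 and y == 0 for p, y in zip(preds, labels))
fn = sum(p == 0 and y == 1 for p, y in zip(preds, labels))
f1 = 2 * tp / (2 * tp + fp + fn) if (2 * tp + fp + fn) else 0.0

print(round(loss, 4), accuracy, f1)
```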

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2
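
With `lr_scheduler_type: linear`, the learning rate decays in a straight line from its initial value to zero over the whole run. A minimal sketch of that schedule follows, assuming no warmup steps and 3000 training samples (the latter is inferred from the model name, not listed among the hyperparameters); `linear_lr` is a hypothetical helper, not a Trainer API.

```python
import math


def linear_lr(step, total_steps, base_lr=2e-5):
    """Learning rate after `step` optimizer steps under linear decay without warmup."""
    return base_lr * max(0.0, (total_steps - step) / total_steps)


# Assumption: 3000 training samples, batch size 16, 2 epochs.
steps_per_epoch = math.ceil(3000 / 16)
total_steps = steps_per_epoch * 2

for step in (0, total_steps // 2, total_steps):
    print(step, linear_lr(step, total_steps))
```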

### Framework versions

- Transformers 4.26.1

- Pytorch 1.13.1+cu116

- Datasets 2.10.1

- Tokenizers 0.13.2

|

AnonymousSub/rule_based_roberta_hier_quadruplet_epochs_1_shard_1_wikiqa

|

[

"pytorch",

"roberta",

"text-classification",

"transformers"

] |

text-classification

|

{

"architectures": [

"RobertaForSequenceClassification"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 24 | null |

---

language: en

tags:

- financial-sentiment-analysis

- sentiment-analysis

widget:

- text: Stocks rallied and the British pound gained.

duplicated_from: ProsusAI/finbert

---

FinBERT is a pre-trained NLP model to analyze sentiment of financial text. It is built by further training the BERT language model in the finance domain, using a large financial corpus and thereby fine-tuning it for financial sentiment classification. [Financial PhraseBank](https://www.researchgate.net/publication/251231107_Good_Debt_or_Bad_Debt_Detecting_Semantic_Orientations_in_Economic_Texts) by Malo et al. (2014) is used for fine-tuning. For more details, please see the paper [FinBERT: Financial Sentiment Analysis with Pre-trained Language Models](https://arxiv.org/abs/1908.10063) and our related [blog post](https://medium.com/prosus-ai-tech-blog/finbert-financial-sentiment-analysis-with-bert-b277a3607101) on Medium.

The model will give softmax outputs for three labels: positive, negative or neutral.
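
As a rough illustration of what "softmax outputs for three labels" means, the sketch below turns three raw scores into probabilities that sum to 1 and picks the most likely label. The logits are made up, and the label order is an assumption; check the model's own configuration for the real index-to-label mapping.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of raw scores."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


labels = ["positive", "negative", "neutral"]  # assumed order, for illustration only
logits = [2.0, -1.0, 0.5]                     # hypothetical model outputs

probs = softmax(logits)
prediction = labels[probs.index(max(probs))]
print(prediction, [round(p, 3) for p in probs])
```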

---

About Prosus

Prosus is a global consumer internet group and one of the largest technology investors in the world. Operating and investing globally in markets with long-term growth potential, Prosus builds leading consumer internet companies that empower people and enrich communities. For more information, please visit www.prosus.com.

Contact information

Please contact Dogu Araci dogu.araci[at]prosus[dot]com and Zulkuf Genc zulkuf.genc[at]prosus[dot]com about any FinBERT related issues and questions.

---

FinBERT in Use (New!)

We are delighted to hear that FinBERT is in use at many other organisations. Please let us know your use case if you have FinBERT deployed, and we will add you to this list:

- Prosus

- Hugging Face

- Moody's Analytics

- ING

API Implementations

- [Multilingual Rapid API](https://rapidapi.com/financial-sentiment-financial-sentiment-default/api/finbert3/)

|

AnonymousSub/rule_based_roberta_hier_triplet_0.1_epochs_1_shard_1_squad2.0

|

[

"pytorch",

"roberta",

"question-answering",

"transformers",

"autotrain_compatible"

] |

question-answering

|

{

"architectures": [

"RobertaForQuestionAnswering"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 2 | null |

---

tags:

- Pixelcopter-PLE-v0

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: Reinforce-PixelCopter

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Pixelcopter-PLE-v0

type: Pixelcopter-PLE-v0

metrics:

- type: mean_reward

value: 18.90 +/- 20.10

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **Pixelcopter-PLE-v0**

This is a trained model of a **Reinforce** agent playing **Pixelcopter-PLE-v0**.

To learn how to use this model and train your own, see Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction
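
Reinforce is a Monte Carlo policy-gradient method: after each episode, the log-probability of every action is weighted by the discounted return that followed it. The sketch below shows only that return computation; the discount factor and the reward sequence are assumptions, and this is not the training code behind this checkpoint.

```python
def discounted_returns(rewards, gamma=0.99):
    """Return G_t = r_t + gamma * G_{t+1} for every time step of one episode."""
    returns = []
    g = 0.0
    for r in reversed(rewards):  # accumulate from the last step backwards
        g = r + gamma * g
        returns.append(g)
    returns.reverse()
    return returns


# Hypothetical three-step episode with a reward of 1 per step.
print(discounted_returns([1.0, 1.0, 1.0], gamma=0.5))
```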

|

AnonymousSub/rule_based_roberta_hier_triplet_epochs_1_shard_1_wikiqa

|

[

"pytorch",

"roberta",

"text-classification",

"transformers"

] |

text-classification

|

{

"architectures": [

"RobertaForSequenceClassification"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 25 | null |

---

license: apache-2.0

language:

- en

- es

- it

- fr

metrics:

- f1

---

# Federated Learning Based Multilingual Emoji Prediction

This repository contains code for training and evaluating transformer-based models for Uni/multilingual emoji prediction in clean and attack scenarios using Federated Learning. This work is described in the paper "Federated Learning-Based Multilingual Emoji Prediction in Clean and Attack Scenarios."

# Abstract

Federated learning is a growing field in the machine learning community due to its decentralized and private design. Model training in federated learning is distributed over multiple clients giving access to lots of client data while maintaining privacy. Then, a server aggregates the training done on these multiple clients without access to their data, which could be emojis widely used in any social media service and instant messaging platforms to express users' sentiments. This paper proposes federated learning-based multilingual emoji prediction in both clean and attack scenarios. Emoji prediction data have been crawled from both Twitter and SemEval emoji datasets. This data is used to train and evaluate different transformer model sizes including a sparsely activated transformer with either the assumption of clean data in all clients or poisoned data via label flipping attack in some clients. Experimental results on these models show that federated learning in either clean or attacked scenarios performs similarly to centralized training in multilingual emoji prediction on seen and unseen languages under different data sources and distributions. Our trained transformers perform better than other techniques on the SemEval emoji dataset in addition to the privacy as well as distributed benefits of federated learning.
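
The `Krum` in this model's identifier presumably refers to the Krum rule for robust aggregation: instead of averaging, the server keeps the single client update that lies closest to its nearest neighbours, so a few poisoned updates cannot drag the result away. The sketch below assumes flat weight lists and a known upper bound on the number of malicious clients; the function name and the toy updates are hypothetical and not taken from the paper's code.

```python
def krum(updates, num_byzantine):
    """Select the update with the smallest summed squared distance
    to its (n - num_byzantine - 2) nearest other updates."""
    n = len(updates)
    num_neighbors = n - num_byzantine - 2
    if num_neighbors < 1:
        raise ValueError("Krum needs at least num_byzantine + 3 client updates")
    scores = []
    for i, u in enumerate(updates):
        dists = sorted(
            sum((a - b) ** 2 for a, b in zip(u, v))
            for j, v in enumerate(updates)
            if j != i
        )
        scores.append(sum(dists[:num_neighbors]))
    best = min(range(n), key=scores.__getitem__)
    return updates[best]


# Four hypothetical honest clients near (1, 1) and one poisoned outlier.
updates = [[1.0, 1.0], [1.1, 0.9], [0.9, 1.1], [1.0, 1.05], [10.0, -10.0]]
selected = krum(updates, num_byzantine=1)
print(selected)
```

Unlike weighted averaging, the outlier here has no influence on the selected update at all, which is the property that matters in the attack scenario.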

# Performance

> * Acc : 43.364 %

> * Mac-F1 : 30.512 %

> * Also see our [GitHub Repo](https://github.com/kareemgamalmahmoud/FEDERATED-LEARNING-BASED-MULTILINGUAL-EMOJI-PREDICTION-IN-CLEAN-AND-ATTACK-SCENARIOS)

# Dependencies

> * Python 3.6+

> * PyTorch 1.7.0+

> * Transformers 4.0.0+

# Usage

> To use the model, first install the `transformers` package from Hugging Face:

```bash

pip install transformers

```

> Then, you can load the model and tokenizer using the following code:

```python

from transformers import AutoModelForSequenceClassification, AutoTokenizer

import numpy as np

import urllib.request

import csv

```

```python

MODEL = "Karim-Gamal/MMiniLM-L12-finetuned-emojis-2-client-toxic-Krum-non-IID-Fed"

tokenizer = AutoTokenizer.from_pretrained(MODEL)

model = AutoModelForSequenceClassification.from_pretrained(MODEL)

```

> Once you have the tokenizer and model, you can preprocess your text and pass it to the model for prediction:

```python

# Preprocess text (username and link placeholders)

def preprocess(text):
    new_text = []
    for t in text.split(" "):
        # Replace user mentions and links with generic placeholders
        t = '@user' if t.startswith('@') and len(t) > 1 else t
        t = 'http' if t.startswith('http') else t
        new_text.append(t)
    return " ".join(new_text)

text = "Hello world"
text = preprocess(text)

encoded_input = tokenizer(text, return_tensors='pt')
output = model(**encoded_input)

# output[0] holds the raw logits; softmax converts them to probabilities
scores = output[0][0].softmax(dim=-1).detach().numpy()

```

> The scores variable contains the probabilities for each of the possible emoji labels. To get the top k predictions, you can use the following code:

```python

# download label mapping

labels = []
mapping_link = "https://raw.githubusercontent.com/cardiffnlp/tweeteval/main/datasets/emoji/mapping.txt"
with urllib.request.urlopen(mapping_link) as f:
    html = f.read().decode('utf-8').split("\n")
    csvreader = csv.reader(html, delimiter='\t')
labels = [row[1] for row in csvreader if len(row) > 1]

k = 3  # number of top predictions to show
ranking = np.argsort(scores)
ranking = ranking[::-1]
for i in range(k):
    l = labels[ranking[i]]
    s = scores[ranking[i]]
    print(f"{i+1}) {l} {np.round(float(s), 4)}")

```

## Note: the code above is adapted from [this model card](https://huggingface.co/cardiffnlp/twitter-roberta-base-emoji)

|

AnonymousSub/rule_based_roberta_twostage_quadruplet_epochs_1_shard_1_wikiqa

|

[

"pytorch",

"roberta",

"text-classification",

"transformers"

] |

text-classification

|

{

"architectures": [

"RobertaForSequenceClassification"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 24 | null |

---

tags:

- CartPole-v1

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: Reinforce-Cartpole-v1

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: CartPole-v1

type: CartPole-v1

metrics:

- type: mean_reward

value: 500.00 +/- 0.00

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **CartPole-v1**

This is a trained model of a **Reinforce** agent playing **CartPole-v1**.

To learn how to use this model and train your own, see Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction
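
The reported `mean_reward` of 500.00 +/- 0.00 is the mean and standard deviation of the total reward over a set of evaluation episodes. CartPole-v1 gives a reward of 1 per step and ends an episode after 500 steps, so a spread of zero means every evaluation episode ran to that limit. A minimal sketch of the statistic, assuming ten evaluation episodes (the actual count is not stated here):

```python
import statistics

# Assumption: ten evaluation episodes, each reaching the 500-step limit.
episode_rewards = [500.0] * 10

mean_reward = statistics.mean(episode_rewards)
std_reward = statistics.pstdev(episode_rewards)  # population standard deviation

print(f"{mean_reward:.2f} +/- {std_reward:.2f}")
```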

|

AnonymousSub/rule_based_roberta_twostagequadruplet_hier_epochs_1_shard_10

|

[

"pytorch",

"roberta",

"feature-extraction",

"transformers"

] |

feature-extraction

|

{

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 2 | null |

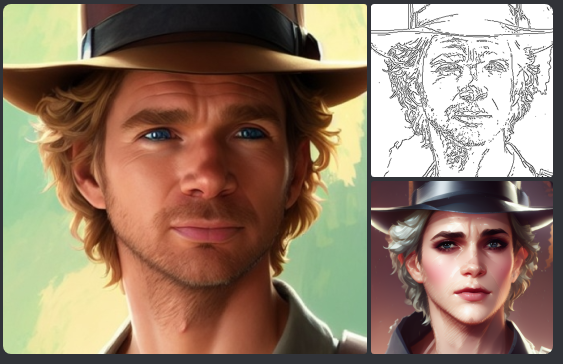

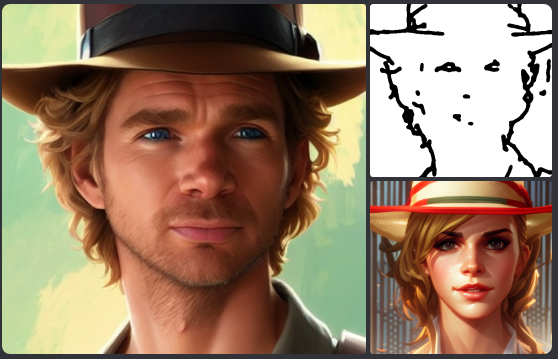

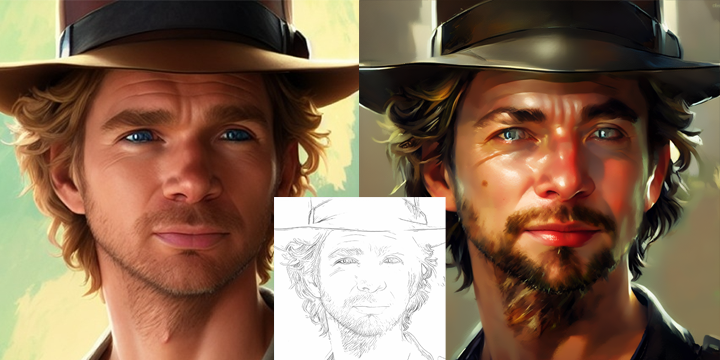

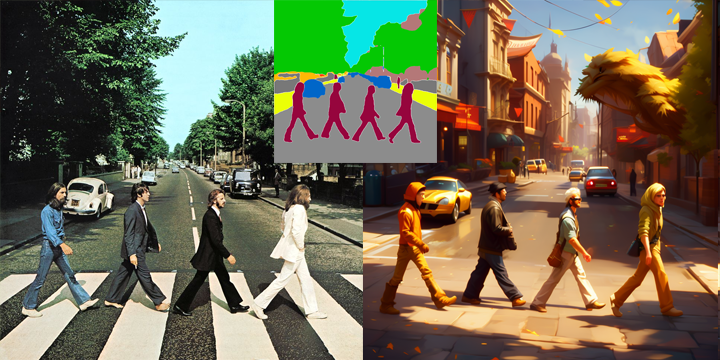

---

language:

- en

license: openrail++

tags:

- art

- diffusers

- stable diffusion

- controlnet

datasets: laion/laion-art

---

Want to support my work? You can buy my artbook: https://thibaud.art

___

Here's the first version of ControlNet for Stable Diffusion 2.1.

Trained on a subset of laion/laion-art.

### Safetensors version uploaded, only 700 MB!

### Canny:

### Depth:

### ZoeDepth:

### Hed:

### Scribble:

### OpenPose:

### Color:

### OpenPose:

### LineArt:

### Ade20K:

### Normal BAE:

### To use with Automatic1111:

* Download the ckpt or safetensors files

* Put them in extensions/sd-webui-controlnet/models

* In settings/controlnet, replace cldm_v15.yaml with cldm_v21.yaml

* Enjoy

### To use ZoeDepth:

You can use it with the depth/le_res annotator, but it works better with the ZoeDepth annotator. My PR has not been accepted yet, but you can use my fork.

My fork: https://github.com/thibaudart/sd-webui-controlnet

The PR: https://github.com/Mikubill/sd-webui-controlnet/pull/655#issuecomment-1481724024

### Misuse, Malicious Use, and Out-of-Scope Use

The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes.

Thanks https://huggingface.co/lllyasviel/ for the implementation and the release of 1.5 models.

Thanks https://huggingface.co/p1atdev/ for the conversion script from ckpt to safetensors pruned & fp16

### Models can't be sold, merged, or distributed without prior written agreement.

|

AnonymousSub/rule_based_roberta_twostagequadruplet_hier_epochs_1_shard_1_squad2.0

|

[

"pytorch",

"roberta",

"question-answering",

"transformers",

"autotrain_compatible"

] |

question-answering

|

{

"architectures": [

"RobertaForQuestionAnswering"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 2 | null |

---

language:

- en

tags:

- pytorch

- causal-lm

- pythia

license: apache-2.0

datasets:

- Dahoas/synthetic-instruct-gptj-pairwise

---

This model was created by fine-tuning [`EleutherAI/pythia-12b-deduped`](https://huggingface.co/EleutherAI/pythia-12b-deduped) on the [`Dahoas/synthetic-instruct-gptj-pairwise`](https://huggingface.co/datasets/Dahoas/synthetic-instruct-gptj-pairwise) dataset.

You can try a [demo](https://cloud.lambdalabs.com/demos/ml/gpt-neox-side-by-side) of the model hosted on [Lambda Cloud](https://lambdalabs.com/service/gpu-cloud).

### Model Details

- Finetuned by: [Lambda](https://lambdalabs.com/)

- Model type: Transformer-based Language Model

- Language: English

- Pre-trained model: [EleutherAI/pythia-12b-deduped](https://huggingface.co/EleutherAI/pythia-12b-deduped)

- Dataset: [Dahoas/synthetic-instruct-gptj-pairwise](https://huggingface.co/datasets/Dahoas/synthetic-instruct-gptj-pairwise)

- Library: [transformers](https://huggingface.co/docs/transformers/index)

- License: Apache 2.0

### Prerequisites

Running inference with the model takes ~24GB of GPU memory.
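
The ~24GB figure is consistent with simply holding the weights in half precision. As a back-of-the-envelope check, assuming a round 12 billion parameters at 2 bytes each and ignoring activations and the attention cache (which add to the total at run time):

```python
num_params = 12e9        # assumption: round parameter count for a "12b" model
bytes_per_param = 2      # float16 stores each weight in 2 bytes

weights_gb = num_params * bytes_per_param / 1e9  # decimal gigabytes
print(f"~{weights_gb:.0f} GB for the weights alone")
```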

### Quick Start

```

import torch
from transformers import AutoTokenizer, pipeline, StoppingCriteria, StoppingCriteriaList

device = torch.device("cuda:0") if torch.cuda.is_available() else torch.device("cpu")

model_name = "lambdalabs/pythia-12b-deduped-synthetic-instruct"
max_new_tokens = 1536
stop_token = "<|stop|>"


class KeywordsStoppingCriteria(StoppingCriteria):
    def __init__(self, keywords_ids: list):
        self.keywords = keywords_ids

    def __call__(
        self, input_ids: torch.LongTensor, scores: torch.FloatTensor, **kwargs
    ) -> bool:
        if input_ids[0][-1] in self.keywords:
            return True
        return False


tokenizer = AutoTokenizer.from_pretrained(
    model_name,
)
tokenizer.pad_token = tokenizer.eos_token
tokenizer.add_tokens([stop_token])

stop_ids = [tokenizer.encode(w)[0] for w in [stop_token]]
stop_criteria = KeywordsStoppingCriteria(stop_ids)

generator = pipeline(
    "text-generation",
    model=model_name,
    device=device,
    max_new_tokens=max_new_tokens,
    torch_dtype=torch.float16,
    stopping_criteria=StoppingCriteriaList([stop_criteria]),
)

example = "How can I make an omelette."
text = "Question: {}\nAnswer:".format(example)

result = generator(
    text,
    num_return_sequences=1,
)

output = result[0]["generated_text"]

print(output)

```

Output:

```

Question: How can I make an omelette.

Answer:To make an omelette, start by cracking two eggs into a bowl and whisking them together with a pinch of salt and pepper. Heat a non-stick pan over medium-high heat and add a tablespoon of butter. Once the butter has melted, pour in the egg mixture and let it cook for a few minutes until the edges start to turn golden. Then, using a spatula, fold the omelette in half and let it cook for another minute or two. Finally, flip the omelette over and cook for another minute or two until the omelette is cooked through. Serve the omelette with your favorite toppings and enjoy.<|stop|>

```
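
As the output above shows, the generated text echoes the prompt and ends with the `<|stop|>` token. If only the answer is wanted, a small post-processing step can strip both. The helper below is a minimal sketch; `extract_answer` is a hypothetical name, not part of `transformers`, and the sample string is an abbreviated stand-in for real model output.

```python
def extract_answer(generated_text: str, prompt: str, stop_token: str = "<|stop|>") -> str:
    """Strip the echoed prompt and everything from the stop token onward."""
    answer = generated_text
    # Remove the prompt only when the text actually starts with it.
    if answer.startswith(prompt):
        answer = answer[len(prompt):]
    # Keep only the text before the first stop token, if one is present.
    answer = answer.split(stop_token, 1)[0]
    return answer.strip()


prompt = "Question: How can I make an omelette.\nAnswer:"
# Abbreviated stand-in for the pipeline's "generated_text" field.
sample = prompt + "To make an omelette, start by cracking two eggs into a bowl.<|stop|>"

answer = extract_answer(sample, prompt)
print(answer)
```

In the Quick Start, the same call would take `output` and `text` as its first two arguments.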

### Training

The model was trained on the [`Dahoas/synthetic-instruct-gptj-pairwise`](https://huggingface.co/datasets/Dahoas/synthetic-instruct-gptj-pairwise) dataset. We split the original dataset into a training subset (the first 32,000 examples) and a validation subset (the remaining 1,144 examples).
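
The split described above is a plain positional cut, which can be sketched as follows. The actual preprocessing script is not shown here, so this is only an illustration: `split_first_n` is a hypothetical helper, and a list of integers stands in for the dataset records.

```python
from typing import Sequence, Tuple


def split_first_n(examples: Sequence, n_train: int) -> Tuple[Sequence, Sequence]:
    """Return (first n_train examples, all remaining examples)."""
    return examples[:n_train], examples[n_train:]


# Stand-in for the dataset: 32000 + 1144 = 33144 placeholder records.
examples = list(range(33144))

train, val = split_first_n(examples, 32000)
print(len(train), len(val))
```

With the Hugging Face `datasets` library, the same cut could be made on a loaded dataset with `Dataset.select(range(...))`, assuming the records are kept in their original order.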