| modelId (string, lengths 4 to 81) | tags (list) | pipeline_tag (string, 17 classes) | config (dict) | downloads (int64, 0 to 59.7M) | first_commit (timestamp[ns, tz=UTC]) | card (string, lengths 51 to 438k) |

|---|---|---|---|---|---|---|

DeepPavlov/xlm-roberta-large-en-ru-mnli

|

[

"pytorch",

"xlm-roberta",

"text-classification",

"en",

"ru",

"dataset:glue",

"dataset:mnli",

"transformers",

"xlm-roberta-large",

"xlm-roberta-large-en-ru",

"xlm-roberta-large-en-ru-mnli",

"has_space"

] |

text-classification

|

{

"architectures": [

"XLMRobertaForSequenceClassification"

],

"model_type": "xlm-roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 227 | null |

---

license: mit

tags:

- feature-extraction

library_name: fasttext

language: ur

widget:

- text: apple

example_title: apple

---

# fastText (Urdu)

fastText is an open-source, free, lightweight library that allows users to learn text representations and text classifiers. It works on standard, generic hardware. Models can later be reduced in size to even fit on mobile devices. It was introduced in [this paper](https://arxiv.org/abs/1607.04606). The official website can be found [here](https://fasttext.cc/).

## Model description

fastText is a library for efficient learning of word representations and sentence classification. fastText is designed to be simple to use for developers, domain experts, and students. It's dedicated to text classification and learning word representations, and was designed to allow for quick model iteration and refinement without specialized hardware. fastText models can be trained on more than a billion words on any multicore CPU in less than a few minutes.

It includes pre-trained models learned on Wikipedia in 157 different languages. fastText can be used as a command-line tool, linked to a C++ application, or used as a library for use cases from experimentation and prototyping to production.

## Intended uses & limitations

You can use pre-trained word vectors for text classification or language identification. See the [tutorials](https://fasttext.cc/docs/en/supervised-tutorial.html) and [resources](https://fasttext.cc/docs/en/english-vectors.html) on its official website to look for tasks that interest you.

### How to use

Here is how to load and use pre-trained vectors:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-ur-vectors", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.words

['the', 'of', 'and', 'to', 'in', 'a', 'that', 'is', ...]

>>> len(model.words)

145940

>>> model['bread']

array([ 4.89417791e-01, 1.60882145e-01, -2.25947708e-01, -2.94273376e-01,

-1.04577184e-01, 1.17962055e-01, 1.34821936e-01, -2.41778508e-01, ...])

```

Here is how to use this model to query nearest neighbors of an English word vector:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-en-nearest-neighbors", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.get_nearest_neighbors("bread", k=5)

[(0.5641006231307983, 'butter'),

(0.48875734210014343, 'loaf'),

(0.4491206705570221, 'eat'),

(0.42444291710853577, 'food'),

(0.4229326844215393, 'cheese')]

```

Here is how to use this model to detect the language of a given text:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-language-identification", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.predict("Hello, world!")

(('__label__eng_Latn',), array([0.81148803]))

>>> model.predict("Hello, world!", k=5)

(('__label__eng_Latn', '__label__vie_Latn', '__label__nld_Latn', '__label__pol_Latn', '__label__deu_Latn'),

array([0.61224753, 0.21323682, 0.09696738, 0.01359863, 0.01319415]))

```

### Limitations and bias

Even if the training data used for this model could be characterized as fairly neutral, this model can have biased predictions.

Cosine similarity can be used to measure the similarity between two different word vectors. If two vectors are identical, the cosine similarity will be 1. For two completely unrelated vectors, the value will be 0. If two vectors have an opposite relationship, the value will be -1.

```python

>>> import numpy as np

>>> def cosine_similarity(word1, word2):
...     return np.dot(model[word1], model[word2]) / (np.linalg.norm(model[word1]) * np.linalg.norm(model[word2]))

>>> cosine_similarity("man", "boy")

0.061653383

>>> cosine_similarity("man", "ceo")

0.11989131

>>> cosine_similarity("woman", "ceo")

-0.08834904

```
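The three reference values mentioned above (1, 0 and -1) can be checked without downloading a model. The following is a minimal sketch on hand-made toy vectors, not on fastText embeddings; the vectors and variable names are illustrative assumptions.

```python
import numpy as np


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors."""
    u = np.asarray(u, dtype=float)
    v = np.asarray(v, dtype=float)
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))


# Toy vectors (assumed for illustration, not taken from any model).
a = np.array([1.0, 2.0, 3.0])

same_direction = cosine_similarity(a, 2 * a)            # close to 1
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])  # close to 0
opposite = cosine_similarity(a, -a)                     # close to -1

print(same_direction, orthogonal, opposite)
```

Note that the score depends only on direction, not on vector length, which is why `a` and `2 * a` count as identical here.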

## Training data

Pre-trained word vectors for 157 languages were trained on [Common Crawl](http://commoncrawl.org/) and [Wikipedia](https://www.wikipedia.org/) using fastText. These models were trained using CBOW with position-weights, in dimension 300, with character n-grams of length 5, a window of size 5 and 10 negatives. We also distribute three new word analogy datasets, for French, Hindi and Polish.
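To make "character n-grams of length 5" concrete, here is a small sketch of how a word could be split into character n-grams using the `<` and `>` boundary markers described in the subword paper. The function name `char_ngrams` is hypothetical, and the sketch only shows the extraction step; it does not reproduce fastText's hashing of n-grams into buckets or any other internal detail.

```python
def char_ngrams(word, n=5):
    """Return the character n-grams of `word` after adding boundary markers."""
    marked = f"<{word}>"  # '<' and '>' mark the start and end of the word
    # Words shorter than n (after marking) yield an empty list in this sketch.
    return [marked[i:i + n] for i in range(len(marked) - n + 1)]


print(char_ngrams("where", n=3))
print(char_ngrams("where", n=5))
```

With `n=3` the word "where" yields `<wh`, `whe`, `her`, `ere` and `re>`, which matches the example given in the subword paper.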

## Training procedure

### Tokenization

We used the [Stanford word segmenter](https://nlp.stanford.edu/software/segmenter.html) for Chinese, [Mecab](http://taku910.github.io/mecab/) for Japanese and [UETsegmenter](https://github.com/phongnt570/UETsegmenter) for Vietnamese. For languages using the Latin, Cyrillic, Hebrew or Greek scripts, we used the tokenizer from the [Europarl](https://www.statmt.org/europarl/) preprocessing tools. For the remaining languages, we used the ICU tokenizer.

More information about the training of these models can be found in the article [Learning Word Vectors for 157 Languages](https://arxiv.org/abs/1802.06893).

### License

The word vectors are distributed under the [*Creative Commons Attribution-Share-Alike License 3.0*](https://creativecommons.org/licenses/by-sa/3.0/).

### Evaluation datasets

The analogy evaluation datasets described in the paper are available here: [French](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-fr.txt), [Hindi](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-hi.txt), [Polish](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-pl.txt).

### BibTeX entry and citation info

Please cite [1] if using this code for learning word representations or [2] if using for text classification.

[1] P. Bojanowski\*, E. Grave\*, A. Joulin, T. Mikolov, [*Enriching Word Vectors with Subword Information*](https://arxiv.org/abs/1607.04606)

```markup

@article{bojanowski2016enriching,

title={Enriching Word Vectors with Subword Information},

author={Bojanowski, Piotr and Grave, Edouard and Joulin, Armand and Mikolov, Tomas},

journal={arXiv preprint arXiv:1607.04606},

year={2016}

}

```

[2] A. Joulin, E. Grave, P. Bojanowski, T. Mikolov, [*Bag of Tricks for Efficient Text Classification*](https://arxiv.org/abs/1607.01759)

```markup

@article{joulin2016bag,

title={Bag of Tricks for Efficient Text Classification},

author={Joulin, Armand and Grave, Edouard and Bojanowski, Piotr and Mikolov, Tomas},

journal={arXiv preprint arXiv:1607.01759},

year={2016}

}

```

[3] A. Joulin, E. Grave, P. Bojanowski, M. Douze, H. Jégou, T. Mikolov, [*FastText.zip: Compressing text classification models*](https://arxiv.org/abs/1612.03651)

```markup

@article{joulin2016fasttext,

title={FastText.zip: Compressing text classification models},

author={Joulin, Armand and Grave, Edouard and Bojanowski, Piotr and Douze, Matthijs and J{\'e}gou, Herv{\'e} and Mikolov, Tomas},

journal={arXiv preprint arXiv:1612.03651},

year={2016}

}

```

If you use these word vectors, please cite the following paper:

[4] E. Grave\*, P. Bojanowski\*, P. Gupta, A. Joulin, T. Mikolov, [*Learning Word Vectors for 157 Languages*](https://arxiv.org/abs/1802.06893)

```markup

@inproceedings{grave2018learning,

title={Learning Word Vectors for 157 Languages},

author={Grave, Edouard and Bojanowski, Piotr and Gupta, Prakhar and Joulin, Armand and Mikolov, Tomas},

booktitle={Proceedings of the International Conference on Language Resources and Evaluation (LREC 2018)},

year={2018}

}

```

(\* These authors contributed equally.)

|

DeltaHub/adapter_t5-3b_cola

|

[

"pytorch",

"transformers"

] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 3 | null |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-4x4-noSlippery

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-4x4-no_slippery

type: FrozenLake-v1-4x4-no_slippery

metrics:

- type: mean_reward

value: 1.00 +/- 0.00

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

```python

model = load_from_hub(repo_id="GillesEverling/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

```
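The usage snippet above calls `load_from_hub` and `gym` without defining or importing them. A minimal sketch of such a helper is shown below, assuming the file is a pickled Python dictionary downloaded with `hf_hub_download`; the helper names and the split into two functions are assumptions, not the author's code. Only unpickle files from repositories you trust, since `pickle.load` can execute arbitrary code.

```python
import pickle


def load_pickle(path):
    """Load a pickled object (here: the saved Q-learning model dict) from disk."""
    with open(path, "rb") as f:
        return pickle.load(f)


def load_from_hub(repo_id, filename):
    """Download `filename` from a Hub repository and unpickle it (hypothetical helper)."""
    # Imported lazily so the rest of this sketch works without the package installed.
    from huggingface_hub import hf_hub_download

    local_path = hf_hub_download(repo_id=repo_id, filename=filename)
    return load_pickle(local_path)
```

With this helper in place and `import gym` added, the two lines from the usage snippet can be run as written; `model["env_id"]` is the only dictionary key the card itself confirms.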

|

Deniskin/essays_small_2000i

|

[] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | 2023-03-24T07:31:58Z |

---

license: mit

tags:

- feature-extraction

library_name: fasttext

language: vec

widget:

- text: apple

example_title: apple

---

# fastText (Venetian)

fastText is an open-source, free, lightweight library that allows users to learn text representations and text classifiers. It works on standard, generic hardware. Models can later be reduced in size to even fit on mobile devices. It was introduced in [this paper](https://arxiv.org/abs/1607.04606). The official website can be found [here](https://fasttext.cc/).

## Model description

fastText is a library for efficient learning of word representations and sentence classification. fastText is designed to be simple to use for developers, domain experts, and students. It's dedicated to text classification and learning word representations, and was designed to allow for quick model iteration and refinement without specialized hardware. fastText models can be trained on more than a billion words on any multicore CPU in less than a few minutes.

It includes pre-trained models learned on Wikipedia in 157 different languages. fastText can be used as a command-line tool, linked to a C++ application, or used as a library for use cases from experimentation and prototyping to production.

## Intended uses & limitations

You can use pre-trained word vectors for text classification or language identification. See the [tutorials](https://fasttext.cc/docs/en/supervised-tutorial.html) and [resources](https://fasttext.cc/docs/en/english-vectors.html) on its official website to look for tasks that interest you.

### How to use

Here is how to load and use pre-trained vectors:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-vec-vectors", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.words

['the', 'of', 'and', 'to', 'in', 'a', 'that', 'is', ...]

>>> len(model.words)

145940

>>> model['bread']

array([ 4.89417791e-01, 1.60882145e-01, -2.25947708e-01, -2.94273376e-01,

-1.04577184e-01, 1.17962055e-01, 1.34821936e-01, -2.41778508e-01, ...])

```

Here is how to use this model to query nearest neighbors of an English word vector:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-en-nearest-neighbors", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.get_nearest_neighbors("bread", k=5)

[(0.5641006231307983, 'butter'),

(0.48875734210014343, 'loaf'),

(0.4491206705570221, 'eat'),

(0.42444291710853577, 'food'),

(0.4229326844215393, 'cheese')]

```

Here is how to use this model to detect the language of a given text:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-language-identification", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.predict("Hello, world!")

(('__label__eng_Latn',), array([0.81148803]))

>>> model.predict("Hello, world!", k=5)

(('__label__eng_Latn', '__label__vie_Latn', '__label__nld_Latn', '__label__pol_Latn', '__label__deu_Latn'),

array([0.61224753, 0.21323682, 0.09696738, 0.01359863, 0.01319415]))

```
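The labels returned by `predict` carry a `__label__` prefix followed by what appears to be a language code and a script code, as in `__label__eng_Latn`. The sketch below shows one way to split such labels and pair them with their probabilities; `parse_label` is a hypothetical helper, and the sample values are copied from the output shown above rather than produced by running the model.

```python
def parse_label(label, prefix="__label__"):
    """Split a label such as '__label__eng_Latn' into ('eng', 'Latn')."""
    code = label[len(prefix):] if label.startswith(prefix) else label
    language, _, script = code.partition("_")
    return language, script


# Values copied from the example output above (not recomputed here).
labels = ("__label__eng_Latn", "__label__vie_Latn", "__label__nld_Latn")
probabilities = [0.61224753, 0.21323682, 0.09696738]

ranked = [(parse_label(label), prob) for label, prob in zip(labels, probabilities)]
for (language, script), prob in ranked:
    print(f"{language} ({script}): {prob:.3f}")
```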

### Limitations and bias

Even if the training data used for this model could be characterized as fairly neutral, this model can have biased predictions.

Cosine similarity can be used to measure the similarity between two different word vectors. If two vectors are identical, the cosine similarity will be 1. For two completely unrelated vectors, the value will be 0. If two vectors have an opposite relationship, the value will be -1.

```python

>>> import numpy as np

>>> def cosine_similarity(word1, word2):
...     return np.dot(model[word1], model[word2]) / (np.linalg.norm(model[word1]) * np.linalg.norm(model[word2]))

>>> cosine_similarity("man", "boy")

0.061653383

>>> cosine_similarity("man", "ceo")

0.11989131

>>> cosine_similarity("woman", "ceo")

-0.08834904

```

## Training data

Pre-trained word vectors for 157 languages were trained on [Common Crawl](http://commoncrawl.org/) and [Wikipedia](https://www.wikipedia.org/) using fastText. These models were trained using CBOW with position-weights, in dimension 300, with character n-grams of length 5, a window of size 5 and 10 negatives. We also distribute three new word analogy datasets, for French, Hindi and Polish.

## Training procedure

### Tokenization

We used the [Stanford word segmenter](https://nlp.stanford.edu/software/segmenter.html) for Chinese, [Mecab](http://taku910.github.io/mecab/) for Japanese and [UETsegmenter](https://github.com/phongnt570/UETsegmenter) for Vietnamese. For languages using the Latin, Cyrillic, Hebrew or Greek scripts, we used the tokenizer from the [Europarl](https://www.statmt.org/europarl/) preprocessing tools. For the remaining languages, we used the ICU tokenizer.

More information about the training of these models can be found in the article [Learning Word Vectors for 157 Languages](https://arxiv.org/abs/1802.06893).

### License

The word vectors are distributed under the [*Creative Commons Attribution-Share-Alike License 3.0*](https://creativecommons.org/licenses/by-sa/3.0/).

### Evaluation datasets

The analogy evaluation datasets described in the paper are available here: [French](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-fr.txt), [Hindi](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-hi.txt), [Polish](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-pl.txt).

### BibTeX entry and citation info

Please cite [1] if using this code for learning word representations or [2] if using for text classification.

[1] P. Bojanowski\*, E. Grave\*, A. Joulin, T. Mikolov, [*Enriching Word Vectors with Subword Information*](https://arxiv.org/abs/1607.04606)

```markup

@article{bojanowski2016enriching,

title={Enriching Word Vectors with Subword Information},

author={Bojanowski, Piotr and Grave, Edouard and Joulin, Armand and Mikolov, Tomas},

journal={arXiv preprint arXiv:1607.04606},

year={2016}

}

```

[2] A. Joulin, E. Grave, P. Bojanowski, T. Mikolov, [*Bag of Tricks for Efficient Text Classification*](https://arxiv.org/abs/1607.01759)

```markup

@article{joulin2016bag,

title={Bag of Tricks for Efficient Text Classification},

author={Joulin, Armand and Grave, Edouard and Bojanowski, Piotr and Mikolov, Tomas},

journal={arXiv preprint arXiv:1607.01759},

year={2016}

}

```

[3] A. Joulin, E. Grave, P. Bojanowski, M. Douze, H. Jégou, T. Mikolov, [*FastText.zip: Compressing text classification models*](https://arxiv.org/abs/1612.03651)

```markup

@article{joulin2016fasttext,

title={FastText.zip: Compressing text classification models},

author={Joulin, Armand and Grave, Edouard and Bojanowski, Piotr and Douze, Matthijs and J{\'e}gou, Herv{\'e} and Mikolov, Tomas},

journal={arXiv preprint arXiv:1612.03651},

year={2016}

}

```

If you use these word vectors, please cite the following paper:

[4] E. Grave\*, P. Bojanowski\*, P. Gupta, A. Joulin, T. Mikolov, [*Learning Word Vectors for 157 Languages*](https://arxiv.org/abs/1802.06893)

```markup

@inproceedings{grave2018learning,

title={Learning Word Vectors for 157 Languages},

author={Grave, Edouard and Bojanowski, Piotr and Gupta, Prakhar and Joulin, Armand and Mikolov, Tomas},

booktitle={Proceedings of the International Conference on Language Resources and Evaluation (LREC 2018)},

year={2018}

}

```

(\* These authors contributed equally.)

|

Denny29/DialoGPT-medium-asunayuuki

|

[

"pytorch",

"gpt2",

"text-generation",

"transformers",

"conversational"

] |

conversational

|

{

"architectures": [

"GPT2LMHeadModel"

],

"model_type": "gpt2",

"task_specific_params": {

"conversational": {

"max_length": 1000

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 9 | 2023-03-24T07:32:35Z |

---

license: cc-by-nc-4.0

---

License for all my models listed here:

https://civitai.com/models/19466/blaest-mix

https://civitai.com/models/23668/blaestive-mix

|

Denver/distilbert-base-uncased-finetuned-squad

|

[] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | 2023-03-24T07:32:56Z |

---

tags:

- FrozenLake-v1-8x8

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-8x8-Slippery

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-8x8

type: FrozenLake-v1-8x8

metrics:

- type: mean_reward

value: 0.53 +/- 0.50

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

```python

model = load_from_hub(repo_id="GillesEverling/q-FrozenLake-v1-8x8-Slippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

```

|

DeskDown/MarianMixFT_en-th

|

[

"pytorch",

"marian",

"text2text-generation",

"transformers",

"autotrain_compatible"

] |

text2text-generation

|

{

"architectures": [

"MarianMTModel"

],

"model_type": "marian",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 3 | null |

---

tags:

- text-classification

- endpoints-template

- optimum

library_name: generic

---

# Optimized and Quantized DistilBERT with a custom pipeline with handler.py

> NOTE: Blog post coming soon

This is a template repository for Text Classification using Optimum and onnxruntime to support generic inference with Hugging Face Hub generic Inference API. There are two required steps:

1. Specify the requirements by defining a `requirements.txt` file.

2. Implement the `handler.py` `__init__` and `__call__` methods. These methods are called by the Inference API. The `__init__` method should load the model and preload the optimum model and tokenizers as well as the `text-classification` pipeline needed for inference. This is only called once. The `__call__` method performs the actual inference. Make sure to follow the same input/output specifications defined in the template for the pipeline to work.

Add

```
library_name: generic
```

to the README.

_Note: the `generic` community image currently supports only `inputs` as a parameter and no additional parameters._
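The second step above can be sketched as a class with an `__init__` that prepares the pipeline once and a `__call__` that runs inference on the `inputs` field. Everything in this sketch is an assumption for illustration: the class name `Handler`, the constructor arguments and the placeholder classifier are hypothetical, and the real template defines the exact class name and input/output specification to follow. A real handler would load the Optimum model, tokenizer and `text-classification` pipeline where the comment indicates.

```python
from typing import Any, Callable, Dict, List, Optional

Prediction = Dict[str, Any]


class Handler:
    """Hypothetical handler skeleton; not the template's actual class."""

    def __init__(
        self,
        path: str = "",
        classifier: Optional[Callable[[str], List[Prediction]]] = None,
    ) -> None:
        # Called once at startup. A real implementation would load the
        # optimized model, tokenizer and pipeline from `path` here.
        self.path = path
        self.classifier = classifier or self._placeholder_classifier

    @staticmethod
    def _placeholder_classifier(text: str) -> List[Prediction]:
        # Stand-in so the sketch runs without any model files.
        return [{"label": "UNKNOWN", "score": 0.0}]

    def __call__(self, data: Dict[str, Any]) -> List[Prediction]:
        # Called for every request; only the `inputs` field is read.
        return self.classifier(data["inputs"])


handler = Handler()
print(handler({"inputs": "This template is easy to adapt."}))
```

Injecting the classifier through the constructor keeps the sketch testable without model weights; in a deployed handler the pipeline would typically be built inside `__init__` instead.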

|

Devrim/prism-default

|

[

"license:mit"

] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | 2023-03-24T07:52:36Z |

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-Taxi-v3

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

metrics:

- type: mean_reward

value: 7.56 +/- 2.71

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3**.

## Usage

```python

model = load_from_hub(repo_id="GillesEverling/q-Taxi-v3", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

```

|

Dhritam/Zova-bot

|

[] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | 2023-03-24T08:00:31Z |

---

tags:

- LunarLander-v2

- ppo

- deep-reinforcement-learning

- reinforcement-learning

- custom-implementation

- deep-rl-course

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: -115.54 +/- 54.00

name: mean_reward

verified: false

---

# PPO Agent Playing LunarLander-v2

This is a trained model of a PPO agent playing LunarLander-v2.

# Hyperparameters

```python

{'exp_name': 'ppo',
 'seed': 1,
 'torch_deterministic': True,
 'cuda': True,
 'track': False,
 'wandb_project_name': 'cleanRL',
 'wandb_entity': None,
 'capture_video': False,
 'env_id': 'LunarLander-v2',
 'total_timesteps': 50000,
 'learning_rate': 0.00025,
 'num_envs': 4,
 'num_steps': 128,
 'anneal_lr': True,
 'gae': True,
 'gamma': 0.99,
 'gae_lambda': 0.95,
 'num_minibatches': 4,
 'update_epochs': 4,
 'norm_adv': True,
 'clip_coef': 0.2,
 'clip_vloss': True,
 'ent_coef': 0.01,
 'vf_coef': 0.5,
 'max_grad_norm': 0.5,
 'target_kl': None,
 'repo_id': 'McCheng/ppo-LunarLander-v2-Unit8',
 'batch_size': 512,
 'minibatch_size': 128}

```
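The last two entries in the hyperparameter list are consistent with being derived from the others rather than chosen independently. The sketch below reproduces that arithmetic, assuming the usual convention in single-file PPO implementations that one rollout collects `num_steps` transitions from each of `num_envs` environments; the `num_updates` line follows the same assumption and is not stated in the card.

```python
# Values copied from the hyperparameter list above.
num_envs = 4
num_steps = 128
num_minibatches = 4
total_timesteps = 50000

# One rollout gathers num_steps transitions from every environment.
batch_size = num_envs * num_steps

# Each rollout is split into equally sized minibatches for the update epochs.
minibatch_size = batch_size // num_minibatches

# Number of rollout/update cycles that fit into the training budget (assumed convention).
num_updates = total_timesteps // batch_size

print(batch_size, minibatch_size, num_updates)
```

With only 50,000 timesteps this amounts to fewer than a hundred policy updates, which may help explain the negative mean reward reported in the metadata.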

|

Dimedrolza/DialoGPT-small-cyberpunk

|

[

"pytorch",

"gpt2",

"text-generation",

"transformers",

"conversational"

] |

conversational

|

{

"architectures": [

"GPT2LMHeadModel"

],

"model_type": "gpt2",

"task_specific_params": {

"conversational": {

"max_length": 1000

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 9 | null |

---

license: mit

tags:

- feature-extraction

library_name: fasttext

language: pnb

widget:

- text: apple

example_title: apple

---

# fastText (Western Punjabi)

fastText is an open-source, free, lightweight library that allows users to learn text representations and text classifiers. It works on standard, generic hardware. Models can later be reduced in size to even fit on mobile devices. It was introduced in [this paper](https://arxiv.org/abs/1607.04606). The official website can be found [here](https://fasttext.cc/).

## Model description

fastText is a library for efficient learning of word representations and sentence classification. fastText is designed to be simple to use for developers, domain experts, and students. It's dedicated to text classification and learning word representations, and was designed to allow for quick model iteration and refinement without specialized hardware. fastText models can be trained on more than a billion words on any multicore CPU in less than a few minutes.

It includes pre-trained models learned on Wikipedia in 157 different languages. fastText can be used as a command-line tool, linked to a C++ application, or used as a library for use cases from experimentation and prototyping to production.

## Intended uses & limitations

You can use pre-trained word vectors for text classification or language identification. See the [tutorials](https://fasttext.cc/docs/en/supervised-tutorial.html) and [resources](https://fasttext.cc/docs/en/english-vectors.html) on its official website to look for tasks that interest you.

### How to use

Here is how to load and use pre-trained vectors:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-pnb-vectors", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.words

['the', 'of', 'and', 'to', 'in', 'a', 'that', 'is', ...]

>>> len(model.words)

145940

>>> model['bread']

array([ 4.89417791e-01, 1.60882145e-01, -2.25947708e-01, -2.94273376e-01,

-1.04577184e-01, 1.17962055e-01, 1.34821936e-01, -2.41778508e-01, ...])

```

Here is how to use this model to query nearest neighbors of an English word vector:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-en-nearest-neighbors", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.get_nearest_neighbors("bread", k=5)

[(0.5641006231307983, 'butter'),

(0.48875734210014343, 'loaf'),

(0.4491206705570221, 'eat'),

(0.42444291710853577, 'food'),

(0.4229326844215393, 'cheese')]

```
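Conceptually, a nearest-neighbor query ranks the vocabulary by similarity to the query word's vector. The following brute-force sketch does this with cosine similarity on a toy vocabulary; it is an illustration under stated assumptions (made-up two-dimensional vectors, a hypothetical `nearest_neighbors` function) and does not claim to match how fastText implements `get_nearest_neighbors` internally.

```python
import numpy as np


def nearest_neighbors(query, vocab, vectors, k=5):
    """Return up to k (score, word) pairs ranked by cosine similarity to `query`."""
    vectors = np.asarray(vectors, dtype=float)
    # Normalize rows so that a dot product equals cosine similarity.
    unit = vectors / np.linalg.norm(vectors, axis=1, keepdims=True)
    scores = unit @ unit[vocab.index(query)]
    order = np.argsort(-scores)  # indices sorted by descending score
    ranked = [(float(scores[i]), vocab[i]) for i in order if vocab[i] != query]
    return ranked[:k]


# Toy vocabulary and vectors (assumed for illustration only).
vocab = ["bread", "butter", "car"]
vectors = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]

print(nearest_neighbors("bread", vocab, vectors, k=2))
```

The result uses the same `(score, word)` tuple layout as the output shown above, with the query word itself excluded from the ranking.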

Here is how to use this model to detect the language of a given text:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-language-identification", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.predict("Hello, world!")

(('__label__eng_Latn',), array([0.81148803]))

>>> model.predict("Hello, world!", k=5)

(('__label__eng_Latn', '__label__vie_Latn', '__label__nld_Latn', '__label__pol_Latn', '__label__deu_Latn'),

array([0.61224753, 0.21323682, 0.09696738, 0.01359863, 0.01319415]))

```

### Limitations and bias

Even if the training data used for this model could be characterized as fairly neutral, this model can have biased predictions.

Cosine similarity can be used to measure the similarity between two different word vectors. If two vectors are identical, the cosine similarity will be 1. For two completely unrelated vectors, the value will be 0. If two vectors have an opposite relationship, the value will be -1.

```python

>>> import numpy as np

>>> def cosine_similarity(word1, word2):
...     return np.dot(model[word1], model[word2]) / (np.linalg.norm(model[word1]) * np.linalg.norm(model[word2]))

>>> cosine_similarity("man", "boy")

0.061653383

>>> cosine_similarity("man", "ceo")

0.11989131

>>> cosine_similarity("woman", "ceo")

-0.08834904

```
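To make the three reference values (1, 0 and -1) concrete without downloading a model, the following sketch applies the same formula to small hand-written vectors. The function name `cosine` and the toy vectors are assumptions chosen for illustration only; they are not taken from the model.

```python
import numpy as np


def cosine(u, v):
    """Cosine similarity between two 1-D vectors."""
    u = np.asarray(u, dtype=float)
    v = np.asarray(v, dtype=float)
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))


same = cosine([1.0, 2.0], [1.0, 2.0])          # same direction
orthogonal = cosine([1.0, 0.0], [0.0, 1.0])    # no shared direction
opposite = cosine([1.0, 2.0], [-1.0, -2.0])    # opposite direction
print(same, orthogonal, opposite)
```

Real word vectors rarely hit these extremes, which is why the values in the example above are small in magnitude.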

## Training data

Pre-trained word vectors for 157 languages were trained on [Common Crawl](http://commoncrawl.org/) and [Wikipedia](https://www.wikipedia.org/) using fastText. These models were trained using CBOW with position-weights, in dimension 300, with character n-grams of length 5, a window of size 5 and 10 negatives. We also distribute three new word analogy datasets, for French, Hindi and Polish.
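As a rough illustration of what "character n-grams of length 5" means, the sketch below extracts them from a single word. It assumes the convention from the subword paper cited in this card, where the word is wrapped in `<` and `>` boundary markers before the n-grams are taken. The function name `char_ngrams` is hypothetical, and the real library additionally hashes n-grams into buckets, which is not shown here.

```python
def char_ngrams(word, n=5):
    """Return the character n-grams of a word wrapped in boundary markers."""
    marked = f"<{word}>"
    # Slide a window of width n over the marked word.
    return [marked[i:i + n] for i in range(len(marked) - n + 1)]


print(char_ngrams("bread"))
```

Words whose marked form is shorter than `n` characters yield no n-grams under this sketch.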

## Training procedure

### Tokenization

We used the [Stanford word segmenter](https://nlp.stanford.edu/software/segmenter.html) for Chinese, [Mecab](http://taku910.github.io/mecab/) for Japanese and [UETsegmenter](https://github.com/phongnt570/UETsegmenter) for Vietnamese. For languages using the Latin, Cyrillic, Hebrew or Greek scripts, we used the tokenizer from the [Europarl](https://www.statmt.org/europarl/) preprocessing tools. For the remaining languages, we used the ICU tokenizer.

More information about the training of these models can be found in the article [Learning Word Vectors for 157 Languages](https://arxiv.org/abs/1802.06893).

### License

The word vectors are distributed under the [*Creative Commons Attribution-Share-Alike License 3.0*](https://creativecommons.org/licenses/by-sa/3.0/).

### Evaluation datasets

The analogy evaluation datasets described in the paper are available here: [French](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-fr.txt), [Hindi](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-hi.txt), [Polish](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-pl.txt).

### BibTeX entry and citation info

Please cite [1] if using this code for learning word representations or [2] if using for text classification.

[1] P. Bojanowski\*, E. Grave\*, A. Joulin, T. Mikolov, [*Enriching Word Vectors with Subword Information*](https://arxiv.org/abs/1607.04606)

```markup

@article{bojanowski2016enriching,

title={Enriching Word Vectors with Subword Information},

author={Bojanowski, Piotr and Grave, Edouard and Joulin, Armand and Mikolov, Tomas},

journal={arXiv preprint arXiv:1607.04606},

year={2016}

}

```

[2] A. Joulin, E. Grave, P. Bojanowski, T. Mikolov, [*Bag of Tricks for Efficient Text Classification*](https://arxiv.org/abs/1607.01759)

```markup

@article{joulin2016bag,

title={Bag of Tricks for Efficient Text Classification},

author={Joulin, Armand and Grave, Edouard and Bojanowski, Piotr and Mikolov, Tomas},

journal={arXiv preprint arXiv:1607.01759},

year={2016}

}

```

[3] A. Joulin, E. Grave, P. Bojanowski, M. Douze, H. Jégou, T. Mikolov, [*FastText.zip: Compressing text classification models*](https://arxiv.org/abs/1612.03651)

```markup

@article{joulin2016fasttext,

title={FastText.zip: Compressing text classification models},

author={Joulin, Armand and Grave, Edouard and Bojanowski, Piotr and Douze, Matthijs and J{\'e}gou, Herv{\'e} and Mikolov, Tomas},

journal={arXiv preprint arXiv:1612.03651},

year={2016}

}

```

If you use these word vectors, please cite the following paper:

[4] E. Grave\*, P. Bojanowski\*, P. Gupta, A. Joulin, T. Mikolov, [*Learning Word Vectors for 157 Languages*](https://arxiv.org/abs/1802.06893)

```markup

@inproceedings{grave2018learning,

title={Learning Word Vectors for 157 Languages},

author={Grave, Edouard and Bojanowski, Piotr and Gupta, Prakhar and Joulin, Armand and Mikolov, Tomas},

booktitle={Proceedings of the International Conference on Language Resources and Evaluation (LREC 2018)},

year={2018}

}

```

(\* These authors contributed equally.)

|

Dkwkk/Da

|

[] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | 2023-03-24T08:19:24Z |

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: 276.44 +/- 18.34

name: mean_reward

verified: false

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2** using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

```python

import gym

from huggingface_sb3 import load_from_hub, package_to_hub, push_to_hub

from huggingface_hub import notebook_login # To log to our Hugging Face account to be able to upload models to the Hub.

from stable_baselines3 import PPO

from stable_baselines3.common.evaluation import evaluate_policy

from stable_baselines3.common.env_util import make_vec_env

# Create the environment

env = make_vec_env('LunarLander-v2', n_envs=16)

# Define the PPO model
model = PPO(
    policy="MlpPolicy",
    env=env,
    n_steps=1024,
    batch_size=64,
    n_epochs=4,
    gamma=0.999,
    gae_lambda=0.98,
    ent_coef=0.01,
    verbose=1,
)

# Train it for 1,000,000 timesteps

model.learn(total_timesteps=1000000)

# Save the model

model_name = "unit1-ppo-LunarLander-v2"

model.save(model_name)

# Evaluate the model

eval_env = gym.make("LunarLander-v2")

mean_reward, std_reward = evaluate_policy(model, eval_env, n_eval_episodes=30, deterministic=True)

print(f"mean_reward={mean_reward:.2f} +/- {std_reward}")

...

```
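The `gamma` and `gae_lambda` arguments above control how PPO estimates advantages. As a rough illustration, and not Stable-Baselines3's actual implementation, generalized advantage estimation can be sketched in NumPy as follows. The function name `compute_gae`, its argument layout, and the convention that `dones[t] = 1` marks the last step of an episode are assumptions made for this example.

```python
import numpy as np


def compute_gae(rewards, values, dones, last_value, gamma=0.999, gae_lambda=0.98):
    """Generalized advantage estimation over one rollout (illustrative sketch).

    rewards[t], values[t]: reward and value estimate at step t.
    dones[t]: 1.0 if the episode ended after step t, else 0.0 (assumed convention).
    last_value: value estimate for the state following the final step.
    """
    rewards = np.asarray(rewards, dtype=float)
    values = np.asarray(values, dtype=float)
    dones = np.asarray(dones, dtype=float)
    advantages = np.zeros_like(rewards)
    next_value = last_value
    next_advantage = 0.0
    # Walk the rollout backwards, accumulating discounted TD errors.
    for t in reversed(range(len(rewards))):
        not_done = 1.0 - dones[t]
        delta = rewards[t] + gamma * next_value * not_done - values[t]
        next_advantage = delta + gamma * gae_lambda * not_done * next_advantage
        advantages[t] = next_advantage
        next_value = values[t]
    return advantages


# Toy rollout: three steps, reward 1 each, zero value estimates.
print(compute_gae([1, 1, 1], [0, 0, 0], [0, 0, 1], last_value=0.0))
```

With `gamma` close to 1, as in the configuration above, rewards far in the future still contribute noticeably to each step's advantage.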

|

Dkwkk/W

|

[] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | null |

---

license: mit

tags:

- feature-extraction

library_name: fasttext

language: yi

widget:

- text: apple

example_title: apple

---

# fastText (Yiddish)

fastText is an open-source, free, lightweight library that allows users to learn text representations and text classifiers. It works on standard, generic hardware. Models can later be reduced in size to even fit on mobile devices. It was introduced in [this paper](https://arxiv.org/abs/1607.04606). The official website can be found [here](https://fasttext.cc/).

## Model description

fastText is a library for efficient learning of word representations and sentence classification. fastText is designed to be simple to use for developers, domain experts, and students. It's dedicated to text classification and learning word representations, and was designed to allow for quick model iteration and refinement without specialized hardware. fastText models can be trained on more than a billion words on any multicore CPU in less than a few minutes.

It includes pre-trained models learned on Wikipedia for 157 languages. fastText can be used as a command-line tool, linked to a C++ application, or used as a library for use cases ranging from experimentation and prototyping to production.

## Intended uses & limitations

You can use pre-trained word vectors for text classification or language identification. See the [tutorials](https://fasttext.cc/docs/en/supervised-tutorial.html) and [resources](https://fasttext.cc/docs/en/english-vectors.html) on its official website to look for tasks that interest you.

### How to use

Here is how to load and use pre-trained vectors:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-yi-vectors", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.words

['the', 'of', 'and', 'to', 'in', 'a', 'that', 'is', ...]

>>> len(model.words)

145940

>>> model['bread']

array([ 4.89417791e-01, 1.60882145e-01, -2.25947708e-01, -2.94273376e-01,

-1.04577184e-01, 1.17962055e-01, 1.34821936e-01, -2.41778508e-01, ...])

```

Here is how to use this model to query nearest neighbors of an English word vector:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-en-nearest-neighbors", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.get_nearest_neighbors("bread", k=5)

[(0.5641006231307983, 'butter'),

(0.48875734210014343, 'loaf'),

(0.4491206705570221, 'eat'),

(0.42444291710853577, 'food'),

(0.4229326844215393, 'cheese')]

```
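Conceptually, a nearest-neighbor query ranks every other word in the vocabulary by cosine similarity to the query word. The sketch below does this by brute force on a tiny hand-written vocabulary. The function name `nearest_neighbors`, the toy words, and the two-dimensional vectors are assumptions for illustration; this is not how the fastText library implements `get_nearest_neighbors` internally.

```python
import numpy as np


def nearest_neighbors(query, vocab, vectors, k=5):
    """Rank vocabulary words by cosine similarity to `query` (brute force)."""
    vectors = np.asarray(vectors, dtype=float)
    # Normalize rows so that dot products equal cosine similarities.
    unit = vectors / np.linalg.norm(vectors, axis=1, keepdims=True)
    scores = unit @ unit[vocab.index(query)]
    order = np.argsort(-scores)
    # Skip the query word itself and keep the top k, as (score, word) pairs.
    return [(float(scores[i]), vocab[i]) for i in order if vocab[i] != query][:k]


toy_vocab = ["bread", "butter", "car"]
toy_vectors = [[1.0, 0.0], [0.8, 0.6], [0.0, 1.0]]
print(nearest_neighbors("bread", toy_vocab, toy_vectors, k=2))
```

The output uses the same `(score, word)` ordering as the example above.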

Here is how to use this model to detect the language of a given text:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-language-identification", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.predict("Hello, world!")

(('__label__eng_Latn',), array([0.81148803]))

>>> model.predict("Hello, world!", k=5)

(('__label__eng_Latn', '__label__vie_Latn', '__label__nld_Latn', '__label__pol_Latn', '__label__deu_Latn'),

array([0.61224753, 0.21323682, 0.09696738, 0.01359863, 0.01319415]))

```

### Limitations and bias

Even if the training data used for this model could be characterized as fairly neutral, this model can have biased predictions.

Cosine similarity can be used to measure the similarity between two word vectors. If two vectors point in the same direction (for example, if they are identical), the cosine similarity is 1. For two orthogonal vectors, which is how completely unrelated words would ideally appear, the value is 0. If two vectors point in opposite directions, the value is -1.

```python

>>> import numpy as np

>>> def cosine_similarity(word1, word2):
...     return np.dot(model[word1], model[word2]) / (np.linalg.norm(model[word1]) * np.linalg.norm(model[word2]))

>>> cosine_similarity("man", "boy")

0.061653383

>>> cosine_similarity("man", "ceo")

0.11989131

>>> cosine_similarity("woman", "ceo")

-0.08834904

```

## Training data

Pre-trained word vectors for 157 languages were trained on [Common Crawl](http://commoncrawl.org/) and [Wikipedia](https://www.wikipedia.org/) using fastText. These models were trained using CBOW with position-weights, in dimension 300, with character n-grams of length 5, a window of size 5 and 10 negatives. We also distribute three new word analogy datasets, for French, Hindi and Polish.

## Training procedure

### Tokenization

We used the [Stanford word segmenter](https://nlp.stanford.edu/software/segmenter.html) for Chinese, [Mecab](http://taku910.github.io/mecab/) for Japanese and [UETsegmenter](https://github.com/phongnt570/UETsegmenter) for Vietnamese. For languages using the Latin, Cyrillic, Hebrew or Greek scripts, we used the tokenizer from the [Europarl](https://www.statmt.org/europarl/) preprocessing tools. For the remaining languages, we used the ICU tokenizer.

More information about the training of these models can be found in the article [Learning Word Vectors for 157 Languages](https://arxiv.org/abs/1802.06893).

### License

The word vectors are distributed under the [*Creative Commons Attribution-Share-Alike License 3.0*](https://creativecommons.org/licenses/by-sa/3.0/).

### Evaluation datasets

The analogy evaluation datasets described in the paper are available here: [French](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-fr.txt), [Hindi](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-hi.txt), [Polish](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-pl.txt).

### BibTeX entry and citation info

Please cite [1] if using this code for learning word representations or [2] if using for text classification.

[1] P. Bojanowski\*, E. Grave\*, A. Joulin, T. Mikolov, [*Enriching Word Vectors with Subword Information*](https://arxiv.org/abs/1607.04606)

```markup

@article{bojanowski2016enriching,

title={Enriching Word Vectors with Subword Information},

author={Bojanowski, Piotr and Grave, Edouard and Joulin, Armand and Mikolov, Tomas},

journal={arXiv preprint arXiv:1607.04606},

year={2016}

}

```

[2] A. Joulin, E. Grave, P. Bojanowski, T. Mikolov, [*Bag of Tricks for Efficient Text Classification*](https://arxiv.org/abs/1607.01759)

```markup

@article{joulin2016bag,

title={Bag of Tricks for Efficient Text Classification},

author={Joulin, Armand and Grave, Edouard and Bojanowski, Piotr and Mikolov, Tomas},

journal={arXiv preprint arXiv:1607.01759},

year={2016}

}

```

[3] A. Joulin, E. Grave, P. Bojanowski, M. Douze, H. Jégou, T. Mikolov, [*FastText.zip: Compressing text classification models*](https://arxiv.org/abs/1612.03651)

```markup

@article{joulin2016fasttext,

title={FastText.zip: Compressing text classification models},

author={Joulin, Armand and Grave, Edouard and Bojanowski, Piotr and Douze, Matthijs and J{\'e}gou, Herv{\'e} and Mikolov, Tomas},

journal={arXiv preprint arXiv:1612.03651},

year={2016}

}

```

If you use these word vectors, please cite the following paper:

[4] E. Grave\*, P. Bojanowski\*, P. Gupta, A. Joulin, T. Mikolov, [*Learning Word Vectors for 157 Languages*](https://arxiv.org/abs/1802.06893)

```markup

@inproceedings{grave2018learning,

title={Learning Word Vectors for 157 Languages},

author={Grave, Edouard and Bojanowski, Piotr and Gupta, Prakhar and Joulin, Armand and Mikolov, Tomas},

booktitle={Proceedings of the International Conference on Language Resources and Evaluation (LREC 2018)},

year={2018}

}

```

(\* These authors contributed equally.)

|

Dmitriiserg/Pxd

|

[] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | null |

---

tags:

- Pixelcopter-PLE-v0

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: Pixelcopter-PLE-v0-1

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Pixelcopter-PLE-v0

type: Pixelcopter-PLE-v0

metrics:

- type: mean_reward

value: 28.80 +/- 29.19

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **Pixelcopter-PLE-v0**

This is a trained model of a **Reinforce** agent playing **Pixelcopter-PLE-v0**.

To learn how to use this model and train your own, see Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction

|

Dmitry12/sber

|

[] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | null |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: vjsyong/my_awesome_model

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# vjsyong/my_awesome_model

This model is a fine-tuned version of [distilbert-base-multilingual-cased](https://huggingface.co/distilbert-base-multilingual-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.0536

- Validation Loss: 0.2951

- Train Accuracy: 0.9169

- Epoch: 3

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 7810, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32
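The `PolynomialDecay` configuration above, with `power` 1.0 and `cycle` False, amounts to a linear ramp from 2e-05 down to 0.0 over 7810 steps. The plain-Python sketch below reproduces that formula for inspection. The function name `polynomial_decay` is an assumption, and the code is a stand-in for the schedule's arithmetic, not a replacement for the Keras class.

```python
def polynomial_decay(step, initial_lr=2e-05, end_lr=0.0, decay_steps=7810, power=1.0):
    """Learning rate at `step` for a non-cycling polynomial decay schedule."""
    # Without cycling, the rate stays at end_lr once decay_steps is reached.
    step = min(step, decay_steps)
    fraction = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * fraction ** power + end_lr


# Learning rate at the start, midpoint, and end of the schedule.
for s in (0, 3905, 7810):
    print(s, polynomial_decay(s))
```

Because the rate reaches zero exactly at step 7810, any training steps beyond that point would leave the weights unchanged.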

### Training results

| Train Loss | Validation Loss | Train Accuracy | Epoch |

|:----------:|:---------------:|:--------------:|:-----:|

| 0.3400 | 0.2347 | 0.9037 | 0 |

| 0.1873 | 0.2533 | 0.9021 | 1 |

| 0.1064 | 0.2473 | 0.9156 | 2 |

| 0.0536 | 0.2951 | 0.9169 | 3 |

### Framework versions

- Transformers 4.27.3

- TensorFlow 2.10.0

- Datasets 2.10.1

- Tokenizers 0.13.2

|

DongHai/DialoGPT-small-rick

|

[

"pytorch",

"gpt2",

"text-generation",

"transformers",

"conversational"

] |

conversational

|

{

"architectures": [

"GPT2LMHeadModel"

],

"model_type": "gpt2",

"task_specific_params": {

"conversational": {

"max_length": 1000

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 9 | null |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

metrics:

- accuracy

- f1

model-index:

- name: finetuning-sentiment-model-3000-samples

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: imdb

type: imdb

config: plain_text

split: test

args: plain_text

metrics:

- name: Accuracy

type: accuracy

value: 0.88

- name: F1

type: f1

value: 0.880794701986755

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# finetuning-sentiment-model-3000-samples

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2904

- Accuracy: 0.88

- F1: 0.8808

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

### Framework versions

- Transformers 4.27.3

- Pytorch 1.13.1+cu116

- Datasets 2.10.1

- Tokenizers 0.13.2

|

DongHyoungLee/kogpt2-base-v2-finetuned-kogpt2_nsmc_single_sentence_classification

|

[] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | null |

---

license: mit

tags:

- feature-extraction

library_name: fasttext

language: yo

widget:

- text: apple

example_title: apple

---

# fastText (Yoruba)

fastText is an open-source, free, lightweight library that allows users to learn text representations and text classifiers. It works on standard, generic hardware. Models can later be reduced in size to even fit on mobile devices. It was introduced in [this paper](https://arxiv.org/abs/1607.04606). The official website can be found [here](https://fasttext.cc/).

## Model description

fastText is a library for efficient learning of word representations and sentence classification. fastText is designed to be simple to use for developers, domain experts, and students. It's dedicated to text classification and learning word representations, and was designed to allow for quick model iteration and refinement without specialized hardware. fastText models can be trained on more than a billion words on any multicore CPU in less than a few minutes.

It includes pre-trained models learned on Wikipedia for 157 languages. fastText can be used as a command-line tool, linked to a C++ application, or used as a library for use cases ranging from experimentation and prototyping to production.

## Intended uses & limitations

You can use pre-trained word vectors for text classification or language identification. See the [tutorials](https://fasttext.cc/docs/en/supervised-tutorial.html) and [resources](https://fasttext.cc/docs/en/english-vectors.html) on its official website to look for tasks that interest you.

### How to use

Here is how to load and use pre-trained vectors:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-yo-vectors", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.words

['the', 'of', 'and', 'to', 'in', 'a', 'that', 'is', ...]

>>> len(model.words)

145940

>>> model['bread']

array([ 4.89417791e-01, 1.60882145e-01, -2.25947708e-01, -2.94273376e-01,

-1.04577184e-01, 1.17962055e-01, 1.34821936e-01, -2.41778508e-01, ...])

```

Here is how to use this model to query nearest neighbors of an English word vector:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-en-nearest-neighbors", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.get_nearest_neighbors("bread", k=5)

[(0.5641006231307983, 'butter'),

(0.48875734210014343, 'loaf'),

(0.4491206705570221, 'eat'),

(0.42444291710853577, 'food'),

(0.4229326844215393, 'cheese')]

```

Here is how to use this model to detect the language of a given text:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-language-identification", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.predict("Hello, world!")

(('__label__eng_Latn',), array([0.81148803]))

>>> model.predict("Hello, world!", k=5)

(('__label__eng_Latn', '__label__vie_Latn', '__label__nld_Latn', '__label__pol_Latn', '__label__deu_Latn'),

array([0.61224753, 0.21323682, 0.09696738, 0.01359863, 0.01319415]))

```

### Limitations and bias

Even if the training data used for this model could be characterized as fairly neutral, this model can have biased predictions.

Cosine similarity can be used to measure the similarity between two word vectors. If two vectors point in the same direction (for example, if they are identical), the cosine similarity is 1. For two orthogonal vectors, which is how completely unrelated words would ideally appear, the value is 0. If two vectors point in opposite directions, the value is -1.

```python

>>> import numpy as np

>>> def cosine_similarity(word1, word2):
...     return np.dot(model[word1], model[word2]) / (np.linalg.norm(model[word1]) * np.linalg.norm(model[word2]))

>>> cosine_similarity("man", "boy")

0.061653383

>>> cosine_similarity("man", "ceo")

0.11989131

>>> cosine_similarity("woman", "ceo")

-0.08834904

```

## Training data

Pre-trained word vectors for 157 languages were trained on [Common Crawl](http://commoncrawl.org/) and [Wikipedia](https://www.wikipedia.org/) using fastText. These models were trained using CBOW with position-weights, in dimension 300, with character n-grams of length 5, a window of size 5 and 10 negatives. We also distribute three new word analogy datasets, for French, Hindi and Polish.

## Training procedure

### Tokenization

We used the [Stanford word segmenter](https://nlp.stanford.edu/software/segmenter.html) for Chinese, [Mecab](http://taku910.github.io/mecab/) for Japanese and [UETsegmenter](https://github.com/phongnt570/UETsegmenter) for Vietnamese. For languages using the Latin, Cyrillic, Hebrew or Greek scripts, we used the tokenizer from the [Europarl](https://www.statmt.org/europarl/) preprocessing tools. For the remaining languages, we used the ICU tokenizer.

More information about the training of these models can be found in the article [Learning Word Vectors for 157 Languages](https://arxiv.org/abs/1802.06893).

### License

The word vectors are distributed under the [*Creative Commons Attribution-Share-Alike License 3.0*](https://creativecommons.org/licenses/by-sa/3.0/).

### Evaluation datasets

The analogy evaluation datasets described in the paper are available here: [French](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-fr.txt), [Hindi](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-hi.txt), [Polish](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-pl.txt).

### BibTeX entry and citation info

Please cite [1] if using this code for learning word representations or [2] if using for text classification.

[1] P. Bojanowski\*, E. Grave\*, A. Joulin, T. Mikolov, [*Enriching Word Vectors with Subword Information*](https://arxiv.org/abs/1607.04606)

```markup

@article{bojanowski2016enriching,

title={Enriching Word Vectors with Subword Information},

author={Bojanowski, Piotr and Grave, Edouard and Joulin, Armand and Mikolov, Tomas},

journal={arXiv preprint arXiv:1607.04606},

year={2016}

}

```

[2] A. Joulin, E. Grave, P. Bojanowski, T. Mikolov, [*Bag of Tricks for Efficient Text Classification*](https://arxiv.org/abs/1607.01759)

```markup

@article{joulin2016bag,

title={Bag of Tricks for Efficient Text Classification},

author={Joulin, Armand and Grave, Edouard and Bojanowski, Piotr and Mikolov, Tomas},

journal={arXiv preprint arXiv:1607.01759},

year={2016}

}

```

[3] A. Joulin, E. Grave, P. Bojanowski, M. Douze, H. Jégou, T. Mikolov, [*FastText.zip: Compressing text classification models*](https://arxiv.org/abs/1612.03651)

```markup

@article{joulin2016fasttext,

title={FastText.zip: Compressing text classification models},

author={Joulin, Armand and Grave, Edouard and Bojanowski, Piotr and Douze, Matthijs and J{\'e}gou, Herv{\'e} and Mikolov, Tomas},

journal={arXiv preprint arXiv:1612.03651},

year={2016}

}

```

If you use these word vectors, please cite the following paper:

[4] E. Grave\*, P. Bojanowski\*, P. Gupta, A. Joulin, T. Mikolov, [*Learning Word Vectors for 157 Languages*](https://arxiv.org/abs/1802.06893)

```markup

@inproceedings{grave2018learning,

title={Learning Word Vectors for 157 Languages},

author={Grave, Edouard and Bojanowski, Piotr and Gupta, Prakhar and Joulin, Armand and Mikolov, Tomas},

booktitle={Proceedings of the International Conference on Language Resources and Evaluation (LREC 2018)},

year={2018}

}

```

(\* These authors contributed equally.)

|

Donghyun/L2_BERT

|

[] | null |

{

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 0 | null |

---

datasets:

- competitions/aiornot

language:

- en

metrics:

- accuracy

library_name: keras

---

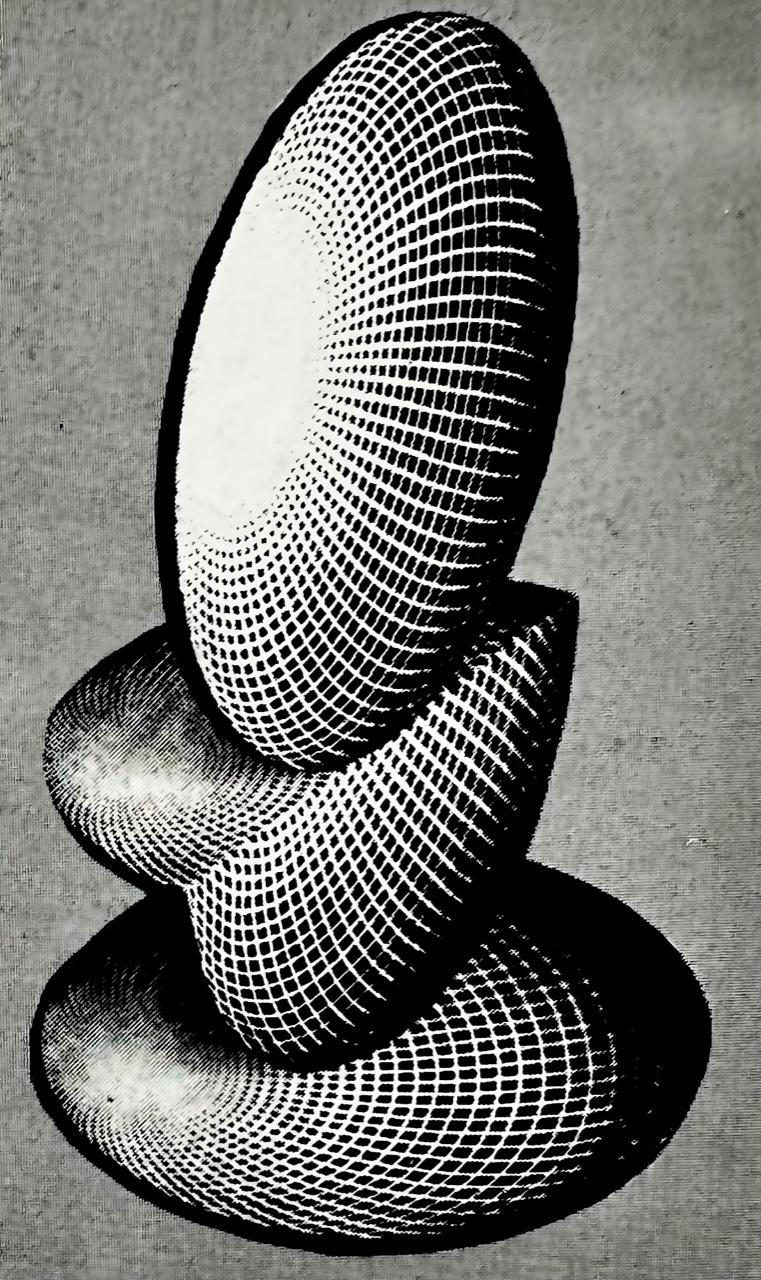

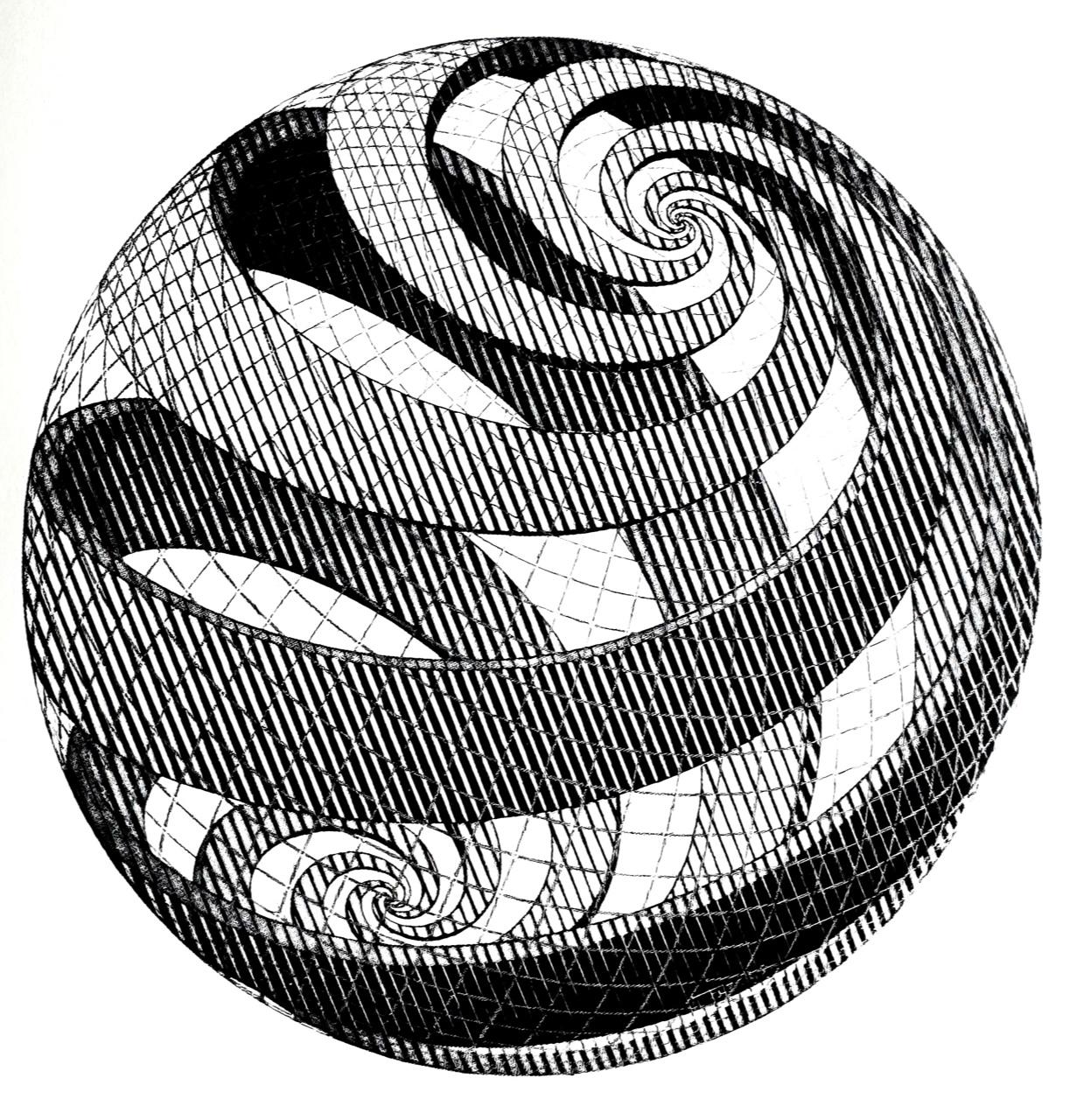

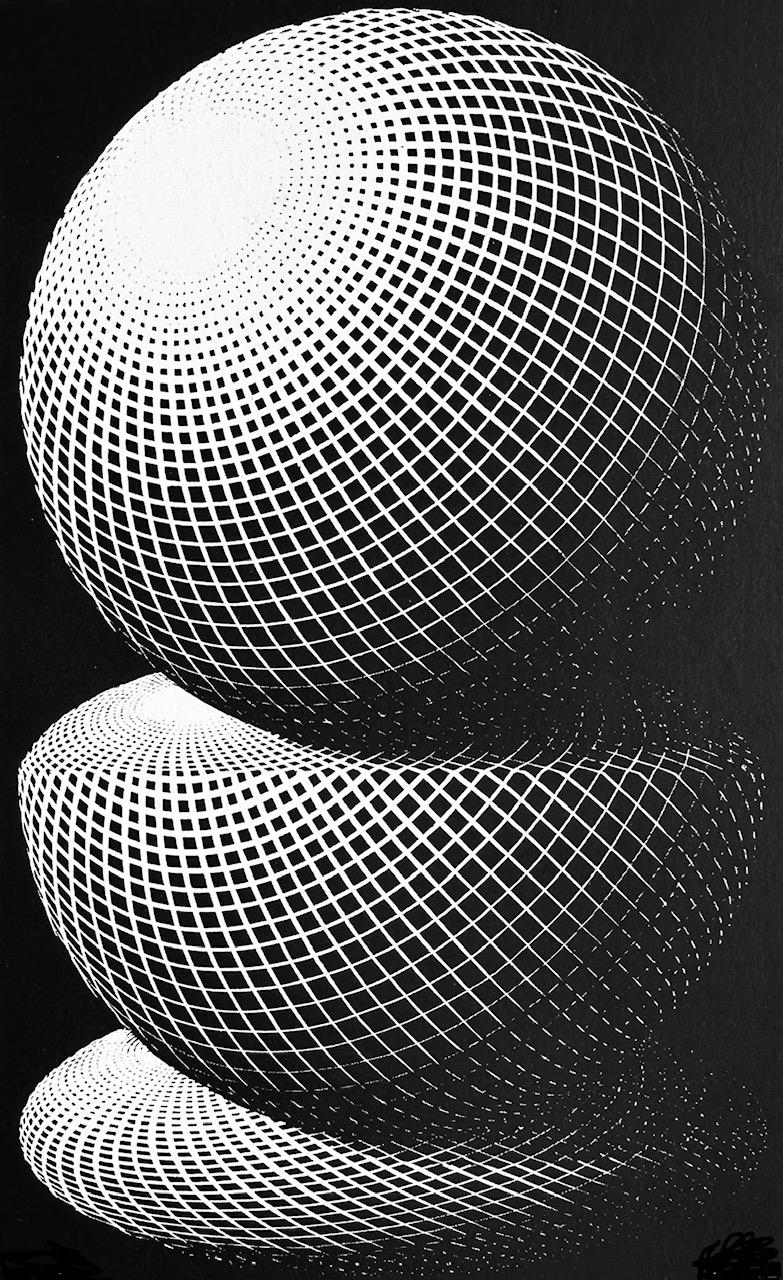

Sequential CNN models were built with Keras on the [competitions/aiornot](https://huggingface.co/datasets/competitions/aiornot) dataset from Hugging Face.

The images attached above were classified incorrectly by the model.

Some AI-generated images were classified as natural images because of their vibrant color combinations and intricate patterns.

Conversely, one painted image that was in fact a natural image was classified as AI-generated because the paint created distortion or aliasing effects that confused the model.

|

Dongjae/mrc2reader

|

[

"pytorch",

"xlm-roberta",

"question-answering",

"transformers",

"autotrain_compatible"

] |

question-answering

|

{

"architectures": [

"XLMRobertaForQuestionAnswering"

],

"model_type": "xlm-roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

}

| 3 | null |

---

license: mit

tags:

- feature-extraction

library_name: fasttext

language: diq

widget:

- text: apple

example_title: apple

---

# fastText (Zazaki)

fastText is an open-source, free, lightweight library that allows users to learn text representations and text classifiers. It works on standard, generic hardware. Models can later be reduced in size to even fit on mobile devices. It was introduced in [this paper](https://arxiv.org/abs/1607.04606). The official website can be found [here](https://fasttext.cc/).

## Model description

fastText is a library for efficient learning of word representations and sentence classification. fastText is designed to be simple to use for developers, domain experts, and students. It's dedicated to text classification and learning word representations, and was designed to allow for quick model iteration and refinement without specialized hardware. fastText models can be trained on more than a billion words on any multicore CPU in less than a few minutes.

It includes pre-trained models learned on Wikipedia in over 157 different languages. fastText can be used from the command line, linked to a C++ application, or used as a library for use cases ranging from experimentation and prototyping to production.

## Intended uses & limitations

You can use pre-trained word vectors for text classification or language identification. See the [tutorials](https://fasttext.cc/docs/en/supervised-tutorial.html) and [resources](https://fasttext.cc/docs/en/english-vectors.html) on its official website to look for tasks that interest you.

### How to use

Here is how to load and use pre-trained vectors:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-diq-vectors", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.words

['the', 'of', 'and', 'to', 'in', 'a', 'that', 'is', ...]

>>> len(model.words)

145940

>>> model['bread']

array([ 4.89417791e-01, 1.60882145e-01, -2.25947708e-01, -2.94273376e-01,

-1.04577184e-01, 1.17962055e-01, 1.34821936e-01, -2.41778508e-01, ...])

```

Here is how to use this model to query nearest neighbors of an English word vector:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-en-nearest-neighbors", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.get_nearest_neighbors("bread", k=5)

[(0.5641006231307983, 'butter'),

(0.48875734210014343, 'loaf'),

(0.4491206705570221, 'eat'),

(0.42444291710853577, 'food'),

(0.4229326844215393, 'cheese')]

```

Here is how to use this model to detect the language of a given text:

```python

>>> import fasttext

>>> from huggingface_hub import hf_hub_download

>>> model_path = hf_hub_download(repo_id="facebook/fasttext-language-identification", filename="model.bin")

>>> model = fasttext.load_model(model_path)

>>> model.predict("Hello, world!")

(('__label__eng_Latn',), array([0.81148803]))

>>> model.predict("Hello, world!", k=5)

(('__label__eng_Latn', '__label__vie_Latn', '__label__nld_Latn', '__label__pol_Latn', '__label__deu_Latn'),

array([0.61224753, 0.21323682, 0.09696738, 0.01359863, 0.01319415]))

```
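The labels returned by `model.predict` carry a `__label__` prefix followed by a language code and a script code, as in the output above. A minimal sketch of how such a result could be unpacked is shown below; the helper name `parse_prediction` is hypothetical and not part of the fastText API, and it assumes every label follows the `__label__<lang>_<script>` pattern seen above.

```python
def parse_prediction(labels, probs, prefix="__label__"):
    """Turn fastText-style (labels, probabilities) into (lang, script, prob) tuples.

    Hypothetical helper: assumes labels look like '__label__eng_Latn'.
    """
    results = []
    for label, prob in zip(labels, probs):
        # Drop the '__label__' prefix if it is present.
        code = label[len(prefix):] if label.startswith(prefix) else label
        # Split 'eng_Latn' into language code and script code.
        lang, _, script = code.partition("_")
        results.append((lang, script, float(prob)))
    return results


# Values copied from the example output above.
parsed = parse_prediction(("__label__eng_Latn",), [0.81148803])
print(parsed)
```

With a loaded model, the same helper could be called as `parse_prediction(*model.predict("Hello, world!", k=5))`.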

### Limitations and bias

Even if the training data used for this model could be characterized as fairly neutral, this model can have biased predictions.

Cosine similarity can be used to measure the similarity between two different word vectors. If two vectors are identical, the cosine similarity will be 1. For two completely unrelated vectors, the value will be 0. If two vectors have an opposite relationship, the value will be -1.

```python

>>> import numpy as np

>>> def cosine_similarity(word1, word2):
...     return np.dot(model[word1], model[word2]) / (np.linalg.norm(model[word1]) * np.linalg.norm(model[word2]))
...

>>> cosine_similarity("man", "boy")

0.061653383

>>> cosine_similarity("man", "ceo")

0.11989131

>>> cosine_similarity("woman", "ceo")

-0.08834904

```
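The three reference values mentioned above (1, 0 and -1) can be checked without loading a model. The following is a minimal sketch using only the standard library and small hand-picked vectors; the function name `cosine` and the toy vectors are illustrative assumptions, not values taken from the pre-trained model.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length sequences of numbers."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        # The similarity is undefined when either vector has zero length.
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


same = cosine([1.0, 2.0, 3.0], [1.0, 2.0, 3.0])    # identical vectors, close to 1
unrelated = cosine([1.0, 0.0], [0.0, 1.0])          # orthogonal vectors, 0
opposite = cosine([1.0, 2.0], [-1.0, -2.0])         # opposite vectors, close to -1
print(same, unrelated, opposite)
```

Because of floating-point rounding, the identical and opposite cases may differ from 1 and -1 in the last digits, so comparisons are best done with a tolerance such as `math.isclose`.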

## Training data

Pre-trained word vectors for 157 languages were trained on [Common Crawl](http://commoncrawl.org/) and [Wikipedia](https://www.wikipedia.org/) using fastText. These models were trained using CBOW with position-weights, in dimension 300, with character n-grams of length 5, a window of size 5 and 10 negatives. We also distribute three new word analogy datasets, for French, Hindi and Polish.
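To illustrate what "character n-grams of length 5" means, here is a minimal sketch that follows the convention described in the subword paper cited in this card, where a word is wrapped in the boundary symbols `<` and `>` before its n-grams are extracted. The function name `char_ngrams` is hypothetical, and this is not the library's internal implementation, which also hashes the n-grams into buckets and keeps the whole word as an additional unit.

```python
def char_ngrams(word, n=5):
    """Return the character n-grams of `word` after adding '<' and '>' boundary markers.

    Illustrative sketch only: words shorter than n - 2 characters yield an empty list.
    """
    wrapped = f"<{word}>"
    return [wrapped[i:i + n] for i in range(len(wrapped) - n + 1)]


print(char_ngrams("where"))       # n-grams of length 5
print(char_ngrams("where", n=3))  # n-grams of length 3, as in the paper's example
```

The boundary markers let the model distinguish prefixes and suffixes from the same character sequence occurring in the middle of a word.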

## Training procedure

### Tokenization

We used the [Stanford word segmenter](https://nlp.stanford.edu/software/segmenter.html) for Chinese, [Mecab](http://taku910.github.io/mecab/) for Japanese and [UETsegmenter](https://github.com/phongnt570/UETsegmenter) for Vietnamese. For languages using the Latin, Cyrillic, Hebrew or Greek scripts, we used the tokenizer from the [Europarl](https://www.statmt.org/europarl/) preprocessing tools. For the remaining languages, we used the ICU tokenizer.

More information about the training of these models can be found in the article [Learning Word Vectors for 157 Languages](https://arxiv.org/abs/1802.06893).

### License

The word vectors are distributed under the [*Creative Commons Attribution-Share-Alike License 3.0*](https://creativecommons.org/licenses/by-sa/3.0/).

### Evaluation datasets

The analogy evaluation datasets described in the paper are available here: [French](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-fr.txt), [Hindi](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-hi.txt), [Polish](https://dl.fbaipublicfiles.com/fasttext/word-analogies/questions-words-pl.txt).

### BibTeX entry and citation info

Please cite [1] if using this code for learning word representations or [2] if using for text classification.

[1] P. Bojanowski\*, E. Grave\*, A. Joulin, T. Mikolov, [*Enriching Word Vectors with Subword Information*](https://arxiv.org/abs/1607.04606)

```markup

@article{bojanowski2016enriching,

title={Enriching Word Vectors with Subword Information},

author={Bojanowski, Piotr and Grave, Edouard and Joulin, Armand and Mikolov, Tomas},