| modelId (string) | author (string) | last_modified (timestamp, UTC) | downloads (int64) | likes (int64) | library_name (string) | tags (list) | pipeline_tag (string) | createdAt (timestamp, UTC) | card (string) |
|---|---|---|---|---|---|---|---|---|---|

kangnichaluo/mnli-1

|

kangnichaluo

| 2021-05-25T11:36:25Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:05Z |

learning rate: 2e-5

training epochs: 3

batch size: 64

seed: 42

model: bert-base-uncased

trained on MNLI which is converted into two-way nli classification (predict entailment or not-entailment class)
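
The two-way conversion described above can be sketched as a simple label mapping. This is a minimal illustration, assuming the standard three MNLI classes (entailment, neutral, contradiction); the function name and the `not_entailment` string are hypothetical, not taken from the model's training code.

```python
# Hypothetical label strings; the model card does not state the exact names used.
ENTAILMENT = "entailment"
NOT_ENTAILMENT = "not_entailment"


def to_two_way(label: str) -> str:
    """Collapse a three-way MNLI label into entailment / not-entailment."""
    if label == "entailment":
        return ENTAILMENT
    if label in ("neutral", "contradiction"):
        return NOT_ENTAILMENT
    raise ValueError(f"unexpected MNLI label: {label!r}")


examples = ["entailment", "neutral", "contradiction"]
print([to_two_way(label) for label in examples])
```

Under this assumption, both `neutral` and `contradiction` examples become negatives for the entailment class.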

|

Intel/bert-base-uncased-mnli-sparse-70-unstructured

|

Intel

| 2021-05-24T17:47:03Z | 16 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"text-classification",

"en",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:04Z |

---

language: en

---

# Sparse BERT base model fine tuned to MNLI (uncased)

A sparse BERT base model fine-tuned on the MNLI (GLUE Benchmark) task, starting from [bert-base-uncased-sparse-70-unstructured](https://huggingface.co/Intel/bert-base-uncased-sparse-70-unstructured).

<br><br>

Note: This model requires `transformers==2.10.0`

## Evaluation Results

Matched: 82.5%

Mismatched: 83.3%

This model can be further fine-tuned to other tasks and achieve the following evaluation results:

| Task | QQP (Acc/F1) | QNLI (Acc) | SST-2 (Acc) | STS-B (Pears/Spear) | SQuADv1.1 (Acc/F1) |

|------|--------------|------------|-------------|---------------------|--------------------|

| | 90.2/86.7 | 90.3 | 91.5 | 88.9/88.6 | 80.5/88.2 |

|

RecordedFuture/Swedish-Sentiment-Violence-Targets

|

RecordedFuture

| 2021-05-24T13:02:37Z | 31 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"jax",

"bert",

"token-classification",

"sv",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-03-02T23:29:04Z |

---

language: sv

license: mit

---

## Swedish BERT models for sentiment analysis, Sentiment targets.

[Recorded Future](https://www.recordedfuture.com/), together with [AI Sweden](https://www.ai.se/en), releases two language models for target/role assignment in Swedish. Both models are based on [KB/bert-base-swedish-cased](https://huggingface.co/KB/bert-base-swedish-cased) and have been fine-tuned to solve a Named Entity Recognition (NER) token classification task.

This is a downstream model to be used in conjunction with the [Swedish violence sentiment classifier](https://huggingface.co/RecordedFuture/Swedish-Sentiment-Violence) or the [Swedish fear sentiment classifier](https://huggingface.co/RecordedFuture/Swedish-Sentiment-Fear). The models are trained to tag parts of sentences that have received a positive classification from the upstream sentiment classifier. The model tags the parts of a sentence that contain the targets the upstream model has activated on.

The NER sentiment target models do work as standalone models, but their recommended application is downstream from a sentence classification model.

The models are trained only on Swedish data and only support inference on Swedish input texts. Inference metrics for non-Swedish inputs are not defined; such inputs are considered out-of-domain data.

The current models are supported with Transformers version >= 4.3.3 and Torch version 1.8.0; compatibility with older versions has not been verified.

### Fear targets

The model can be imported from the transformers library by running

from transformers import BertForTokenClassification, BertTokenizerFast

tokenizer = BertTokenizerFast.from_pretrained("RecordedFuture/Swedish-Sentiment-Fear-Targets")

classifier_fear_targets = BertForTokenClassification.from_pretrained("RecordedFuture/Swedish-Sentiment-Fear-Targets")

When the model and tokenizer are initialized the model can be used for inference.

#### Verification metrics

During training the Fear target model had the following verification metrics when using "any overlap" as the evaluation metric.

| F-score | Precision | Recall |

|:-------:|:---------:|:------:|

| 0.8361 | 0.7903 | 0.8876 |
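
The card does not define "any overlap", so the following is only a sketch of one common reading: a predicted span counts as correct if it overlaps at least one gold span, and a gold span counts as found if at least one prediction overlaps it. The span representation and function names are assumptions made for illustration.

```python
from typing import List, Tuple

Span = Tuple[int, int]  # (start, end) token offsets, end exclusive (assumed convention)


def overlaps(a: Span, b: Span) -> bool:
    """True if the two half-open spans share at least one position."""
    return a[0] < b[1] and b[0] < a[1]


def any_overlap_scores(predicted: List[Span], gold: List[Span]) -> Tuple[float, float, float]:
    """Precision, recall and F-score under an 'any overlap' matching rule."""
    matched_pred = sum(any(overlaps(p, g) for g in gold) for p in predicted)
    matched_gold = sum(any(overlaps(g, p) for p in predicted) for g in gold)
    precision = matched_pred / len(predicted) if predicted else 0.0
    recall = matched_gold / len(gold) if gold else 0.0
    total = precision + recall
    f_score = 2 * precision * recall / total if total else 0.0
    return precision, recall, f_score


gold = [(0, 2), (5, 7)]
pred = [(1, 3), (8, 9)]
print(any_overlap_scores(pred, gold))
```

As a sanity check on the table above, the reported F-score agrees with the harmonic mean of the reported precision and recall: 2 · 0.7903 · 0.8876 / (0.7903 + 0.8876) ≈ 0.8361.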

### Violence targets

The model can be imported from the transformers library by running

from transformers import BertForTokenClassification, BertTokenizerFast

tokenizer = BertTokenizerFast.from_pretrained("RecordedFuture/Swedish-Sentiment-Violence-Targets")

classifier_violence_targets = BertForTokenClassification.from_pretrained("RecordedFuture/Swedish-Sentiment-Violence-Targets")

When the model and tokenizer are initialized the model can be used for inference.

#### Verification metrics

During training the Violence target model had the following verification metrics when using "any overlap" as the evaluation metric.

| F-score | Precision | Recall |

|:-------:|:---------:|:------:|

| 0.7831 | 0.9155 | 0.8442 |

|

Intel/bert-base-uncased-sparse-70-unstructured

|

Intel

| 2021-05-24T12:42:47Z | 11 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"fill-mask",

"en",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:04Z |

---

language: en

---

# Sparse BERT base model (uncased)

Pretrained model pruned to 70% sparsity.

The model is a pruned version of the [BERT base model](https://huggingface.co/bert-base-uncased).

## Intended Use

The model can be used for fine-tuning to downstream tasks with sparsity already embedded in the model.

To preserve the sparsity, a mask should be applied to each sparse weight so that the optimizer cannot update the zeros.
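
The masking idea above can be sketched in plain Python, independent of any training framework. This is a minimal illustration and not Intel's implementation: the helper names are hypothetical, the update rule is plain SGD, and weights are small nested lists rather than real tensors.

```python
def build_mask(weights):
    """Return 1.0 where a weight is non-zero and 0.0 where it was pruned."""
    return [[0.0 if w == 0.0 else 1.0 for w in row] for row in weights]


def masked_sgd_step(weights, grads, mask, lr=0.5):
    """Plain SGD update that keeps pruned (masked-out) positions at exactly zero."""
    return [
        [(w - lr * g) if m else 0.0 for w, g, m in zip(w_row, g_row, m_row)]
        for w_row, g_row, m_row in zip(weights, grads, mask)
    ]


weights = [[1.0, 0.0], [0.0, -2.0]]   # two of the four weights are pruned
grads = [[1.0, 1.0], [1.0, 1.0]]      # every position receives a gradient
mask = build_mask(weights)
updated = masked_sgd_step(weights, grads, mask)
print(updated)
```

The mask is built once from the pruned checkpoint and reused at every step, so the sparsity pattern stays fixed even though the gradients for pruned positions are non-zero.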

|

felflare/bert-restore-punctuation

|

felflare

| 2021-05-24T03:04:47Z | 13,671 | 64 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"token-classification",

"punctuation",

"en",

"dataset:yelp_polarity",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-03-02T23:29:05Z |

---

language:

- en

tags:

- punctuation

license: mit

datasets:

- yelp_polarity

metrics:

- f1

---

# ✨ bert-restore-punctuation


This is a bert-base-uncased model fine-tuned for punctuation restoration on [Yelp Reviews](https://www.tensorflow.org/datasets/catalog/yelp_polarity_reviews).

The model predicts the punctuation and upper-casing of plain, lower-cased text. An example use case is ASR output, or any other text that has lost its punctuation.

This model is intended for direct use as a punctuation restoration model for the general English language. Alternatively, you can use this for further fine-tuning on domain-specific texts for punctuation restoration tasks.

The model restores the following punctuation marks -- **[! ? . , - : ; ' ]**

The model also restores the upper-casing of words.

-----------------------------------------------

## 🚋 Usage

**Below is a quick way to get up and running with the model.**

1. First, install the package.

```bash

pip install rpunct

```

2. Sample python code.

```python

from rpunct import RestorePuncts

# The default language is 'english'

rpunct = RestorePuncts()

rpunct.punctuate("""in 2018 cornell researchers built a high-powered detector that in combination with an algorithm-driven process called ptychography set a world record

by tripling the resolution of a state-of-the-art electron microscope as successful as it was that approach had a weakness it only worked with ultrathin samples that were

a few atoms thick anything thicker would cause the electrons to scatter in ways that could not be disentangled now a team again led by david muller the samuel b eckert

professor of engineering has bested its own record by a factor of two with an electron microscope pixel array detector empad that incorporates even more sophisticated

3d reconstruction algorithms the resolution is so fine-tuned the only blurring that remains is the thermal jiggling of the atoms themselves""")

# Outputs the following:

# In 2018, Cornell researchers built a high-powered detector that, in combination with an algorithm-driven process called Ptychography, set a world record by tripling the

# resolution of a state-of-the-art electron microscope. As successful as it was, that approach had a weakness. It only worked with ultrathin samples that were a few atoms

# thick. Anything thicker would cause the electrons to scatter in ways that could not be disentangled. Now, a team again led by David Muller, the Samuel B.

# Eckert Professor of Engineering, has bested its own record by a factor of two with an Electron microscope pixel array detector empad that incorporates even more

# sophisticated 3d reconstruction algorithms. The resolution is so fine-tuned the only blurring that remains is the thermal jiggling of the atoms themselves.

```

**This model works on arbitrarily large text in the English language and uses a GPU if one is available.**

-----------------------------------------------

## 📡 Training data

Here is the number of product reviews we used for finetuning the model:

| Language | Number of text samples|

| -------- | ----------------- |

| English | 560,000 |

We found the best convergence around _**3 epochs**_, which is the checkpoint presented here and available for download.

-----------------------------------------------

## 🎯 Accuracy

The fine-tuned model obtained the following accuracy on 45,990 held-out text samples:

| Accuracy | Overall F1 | Eval Support |

| -------- | ---------------------- | ------------------- |

| 91% | 90% | 45,990

Below is a breakdown of the performance of the model by each label:

| label | precision | recall | f1-score | support|

| --------- | -------------|-------- | ----------|--------|

| **!** | 0.45 | 0.17 | 0.24 | 424

| **!+Upper** | 0.43 | 0.34 | 0.38 | 98

| **'** | 0.60 | 0.27 | 0.37 | 11

| **,** | 0.59 | 0.51 | 0.55 | 1522

| **,+Upper** | 0.52 | 0.50 | 0.51 | 239

| **-** | 0.00 | 0.00 | 0.00 | 18

| **.** | 0.69 | 0.84 | 0.75 | 2488

| **.+Upper** | 0.65 | 0.52 | 0.57 | 274

| **:** | 0.52 | 0.31 | 0.39 | 39

| **:+Upper** | 0.36 | 0.62 | 0.45 | 16

| **;** | 0.00 | 0.00 | 0.00 | 17

| **?** | 0.54 | 0.48 | 0.51 | 46

| **?+Upper** | 0.40 | 0.50 | 0.44 | 4

| **none** | 0.96 | 0.96 | 0.96 |35352

| **Upper** | 0.84 | 0.82 | 0.83 | 5442
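
The label names in the table suggest that each word receives one label combining an optional punctuation mark and an optional `Upper` flag. The sketch below shows how such labels might be applied to lower-cased words. It assumes the punctuation mark follows the word and that `Upper` capitalises its first letter; the helper is hypothetical and is not the `rpunct` implementation.

```python
def apply_label(word: str, label: str) -> str:
    """Apply one predicted label (e.g. ',', '.+Upper', 'Upper', 'none') to a word."""
    if label == "none":
        return word
    if label == "Upper":
        return word.capitalize()
    # Labels such as ',+Upper' carry a punctuation mark and an optional case flag.
    punct, _, case = label.partition("+")
    if case == "Upper":
        word = word.capitalize()
    return word + punct


words = ["hello", "world", "how", "are", "you"]
labels = ["Upper", ".", "Upper", "none", "?"]
restored = " ".join(apply_label(w, l) for w, l in zip(words, labels))
print(restored)
```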

-----------------------------------------------

## ☕ Contact

Contact [Daulet Nurmanbetov]([email protected]) for questions, feedback and/or requests for similar models.

-----------------------------------------------

|

thingsu/koDPR_context

|

thingsu

| 2021-05-24T02:46:37Z | 3 | 3 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

Fine-tuned the kykim/bert-kor-base model as a dense passage retrieval (DPR) context encoder on the KLUE dataset.

Experiment results are available at https://wandb.ai/thingsu/DenseRetrieval

Corpus : Korean Wikipedia Corpus

Training Strategy :

- Pretrained Model : kykim/bert-kor-base

- Inverse Cloze Task : 16 epochs, on the KorQuAD v1.0 and KLUE MRC datasets

- In-batch Negatives : 12 epochs, on the KLUE MRC dataset, with negatives randomly sampled from the top 100 passages returned by sparse retrieval (TF-IDF) for each query

You must use the Korean Wikipedia corpus.

<pre>

<code>

from transformers import AutoTokenizer, BertPreTrainedModel, BertModel

class BertEncoder(BertPreTrainedModel):

    def __init__(self, config):
        super(BertEncoder, self).__init__(config)
        self.bert = BertModel(config)
        self.init_weights()

    def forward(self, input_ids, attention_mask=None, token_type_ids=None):
        outputs = self.bert(input_ids, attention_mask, token_type_ids)
        # outputs[1] is the pooled [CLS] representation
        pooled_output = outputs[1]
        return pooled_output

model_name = 'kykim/bert-kor-base'

tokenizer = AutoTokenizer.from_pretrained(model_name)

q_encoder = BertEncoder.from_pretrained("thingsu/koDPR_question")

p_encoder = BertEncoder.from_pretrained("thingsu/koDPR_context")

</code>

</pre>
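
The in-batch negatives strategy listed above can be illustrated with a small, framework-free sketch. It assumes dot-product similarity between question and passage embeddings and a softmax cross-entropy loss in which passage `i` is the positive for question `i` and every other passage in the batch acts as a negative. The function names are hypothetical and the embeddings are toy vectors, not encoder outputs.

```python
import math
from typing import List

Vector = List[float]


def dot(u: Vector, v: Vector) -> float:
    return sum(a * b for a, b in zip(u, v))


def in_batch_negative_loss(q_embs: List[Vector], p_embs: List[Vector]) -> float:
    """Mean cross-entropy where passage i is the positive for question i."""
    losses = []
    for i, q in enumerate(q_embs):
        scores = [dot(q, p) for p in p_embs]
        # Numerically stable log-sum-exp over all passages in the batch
        max_s = max(scores)
        log_norm = max_s + math.log(sum(math.exp(s - max_s) for s in scores))
        losses.append(log_norm - scores[i])
    return sum(losses) / len(losses)


questions = [[1.0, 0.0], [0.0, 1.0]]
passages = [[1.0, 0.0], [0.0, 1.0]]
loss = in_batch_negative_loss(questions, passages)
print(round(loss, 4))
```

In practice the question and passage vectors would come from `q_encoder` and `p_encoder`, and the loss would be computed on tensors so that gradients can flow back into both encoders.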

|

uw-hai/polyjuice

|

uw-hai

| 2021-05-24T01:21:24Z | 167 | 3 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"counterfactual generation",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: "en"

tags:

- counterfactual generation

widget:

- text: "It is great for kids. <|perturb|> [negation] It [BLANK] great for kids. [SEP]"

---

# Polyjuice

## Model description

This is a ported version of [Polyjuice](https://homes.cs.washington.edu/~wtshuang/static/papers/2021-arxiv-polyjuice.pdf), the general-purpose counterfactual generator.

For the full code release, please refer to [this github page](https://github.com/tongshuangwu/polyjuice).

#### How to use

```python

import torch

from transformers import pipeline, AutoTokenizer, AutoModelForCausalLM

model_path = "uw-hai/polyjuice"

# Use the first GPU when CUDA is available, otherwise fall back to the CPU
is_cuda = torch.cuda.is_available()

generator = pipeline("text-generation",
    model=AutoModelForCausalLM.from_pretrained(model_path),
    tokenizer=AutoTokenizer.from_pretrained(model_path),
    framework="pt", device=0 if is_cuda else -1)

prompt_text = "A dog is embraced by the woman. <|perturb|> [negation] A dog is [BLANK] the woman."

generator(prompt_text, num_beams=3, num_return_sequences=3)

```
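
The prompt in the example above follows a visible pattern: the original sentence, the `<|perturb|>` separator, a control code in square brackets, and a copy of the sentence with `[BLANK]` marking the part to rewrite. A small helper for assembling such prompts might look as follows; the function and constant names are hypothetical and are not part of the Polyjuice package.

```python
PERTURB_TOKEN = "<|perturb|>"
BLANK_TOKEN = "[BLANK]"


def build_prompt(sentence: str, control_code: str, blanked: str) -> str:
    """Assemble a prompt in the format used by the example above."""
    if BLANK_TOKEN not in blanked:
        raise ValueError("the blanked sentence must contain at least one [BLANK]")
    return f"{sentence} {PERTURB_TOKEN} [{control_code}] {blanked}"


prompt = build_prompt(
    "A dog is embraced by the woman.",
    "negation",
    "A dog is [BLANK] the woman.",
)
print(prompt)
```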

### BibTeX entry and citation info

```bibtex

@inproceedings{polyjuice:acl21,

title = "{P}olyjuice: Generating Counterfactuals for Explaining, Evaluating, and Improving Models",

author = "Tongshuang Wu and Marco Tulio Ribeiro and Jeffrey Heer and Daniel S. Weld",

booktitle = "Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics",

year = "2021",

publisher = "Association for Computational Linguistics"
}

```

|

huggingtweets/heaven_ley

|

huggingtweets

| 2021-05-23T14:18:42Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/heaven_ley/1621532679555/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1391998269430116355/O5NJQwYC_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Ashley 🌻</div>

<div style="text-align: center; font-size: 14px;">@heaven_ley</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Ashley 🌻.

| Data | Ashley 🌻 |

| --- | --- |

| Tweets downloaded | 3084 |

| Retweets | 563 |

| Short tweets | 101 |

| Tweets kept | 2420 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/h9ex5ztp/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @heaven_ley's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/2rr1mtsr) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/2rr1mtsr/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/heaven_ley')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*


For more details, visit the [project repository](https://github.com/borisdayma/huggingtweets).

|

stevhliu/astroGPT

|

stevhliu

| 2021-05-23T12:59:14Z | 139 | 5 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"jax",

"gpt2",

"text-generation",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: "en"

thumbnail: "https://raw.githubusercontent.com/stevhliu/satsuma/master/images/astroGPT-thumbnail.png"

widget:

- text: "Jan 18, 2020"

- text: "Feb 14, 2020"

- text: "Jul 04, 2020"

---

# astroGPT 🪐

## Model description

This is a GPT-2 model fine-tuned on Western zodiac signs. For more information about GPT-2, take a look at 🤗 Hugging Face's GPT-2 [model card](https://huggingface.co/gpt2). You can use astroGPT to generate a daily horoscope by entering the current date.

## How to use

To use this model, simply enter the current date like so `Mon DD, YEAR`:

```python

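import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("stevhliu/astroGPT")
model = AutoModelForCausalLM.from_pretrained("stevhliu/astroGPT")

# Keep the model and the inputs on the same device
device = 'cuda' if torch.cuda.is_available() else 'cpu'
model = model.to(device)
input_ids = tokenizer.encode('Sep 03, 2020', return_tensors='pt').to(device)

sample_output = model.generate(input_ids,
                               do_sample=True,
                               max_length=75,
                               top_k=20,
                               top_p=0.97)

# Decode the generated token IDs back into text
print(tokenizer.decode(sample_output[0], skip_special_tokens=True))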

```
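
To produce a prompt in the `Mon DD, YEAR` format from a date object rather than typing it by hand, the standard library's `strftime` can be used. The helper name below is hypothetical, and the `%b` month abbreviation assumes the default English ("C") locale.

```python
from datetime import date


def horoscope_prompt(day: date) -> str:
    """Format a date as 'Mon DD, YEAR', e.g. 'Sep 03, 2020'."""
    return day.strftime("%b %d, %Y")


print(horoscope_prompt(date(2020, 9, 3)))
print(horoscope_prompt(date.today()))
```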

## Limitations and bias

astroGPT inherits the same biases that affect GPT-2 as a result of training on a lot of non-neutral content on the internet. The model does not currently support zodiac sign-specific generation and only returns a general horoscope. While the generated text may occasionally mention a specific zodiac sign, this is due to how the horoscopes were originally written by their human authors.

## Data

The training data, 4.7MB of text, was scraped from [Horoscope.com](https://www.horoscope.com/us/index.aspx). The text was collected from four categories (daily, love, wellness, career) and spans from 09/01/2019 to 08/01/2020. The archives only store horoscopes dating a year back from the current date.

## Training and results

The text was tokenized using the fast GPT-2 BPE [tokenizer](https://huggingface.co/transformers/model_doc/gpt2.html#gpt2tokenizerfast). It has a vocabulary size of 50,257 and a sequence length of 1024 tokens. The model was trained on one of Google Colaboratory's GPUs for approximately 2.5 hrs with [fastai's](https://docs.fast.ai/) learning rate finder, discriminative learning rates and 1cycle policy. See the table below for a quick summary of the training procedure and results.

| dataset size | epochs | lr | training time | train_loss | valid_loss | perplexity |

|:-------------:|:------:|:-----------------:|:-------------:|:----------:|:----------:|:----------:|

| 5.9MB |32 | slice(1e-7,1e-5) | 2.5 hrs | 2.657170 | 2.642387 | 14.046692 |
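
The perplexity in the table is consistent with the exponential of the validation loss, which is the usual relationship when the loss is a mean cross-entropy in nats. That this is how the figure was computed is an assumption, but the numbers can be checked directly:

```python
import math

valid_loss = 2.642387           # validation loss from the table above
reported_perplexity = 14.046692  # perplexity from the table above

perplexity = math.exp(valid_loss)
print(f"exp(valid_loss) = {perplexity:.4f}")
print(f"difference from reported value = {abs(perplexity - reported_perplexity):.6f}")
```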

|

rjbownes/Magic-The-Generating

|

rjbownes

| 2021-05-23T12:17:20Z | 5 | 1 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

widget:

- text: "Even the Dwarves"

- text: "The secrets of"

---

# Model name

Magic The Generating

## Model description

This is a fine tuned GPT-2 model trained on a corpus of all available English language Magic the Gathering card flavour texts.

## Intended uses & limitations

This model is intended only for generating novel, and sometimes surprising, MtG-like flavour texts.

#### How to use

```python

from transformers import GPT2Tokenizer, GPT2LMHeadModel

tokenizer = GPT2Tokenizer.from_pretrained("rjbownes/Magic-The-Generating")

model = GPT2LMHeadModel.from_pretrained("rjbownes/Magic-The-Generating")

```

#### Limitations and bias

The training corpus was surprisingly small: only ~29000 cards, fewer than I had expected. This may put a real limit on the number of entirely original strings the model can generate.

The model is also based only on the 117M parameter GPT2, so retraining with the medium, large or XL models is an obvious upgrade. Even so, the outputs I tested were very convincing!

## Training data

The data was 29222 MtG card flavour texts. The model was based on the "gpt2" pretrained transformer: https://huggingface.co/gpt2.

## Training procedure

Only English-language MtG flavour texts were scraped from the [Scryfall](https://scryfall.com/) API. Empty strings and any non-UTF-8 encoded tokens were removed, leaving 29222 entries.

The model was trained on Google Colab with a T4 instance for 4 epochs, using the AdamW optimizer with default parameters and a batch size of 32. Token sequences were capped at 98 tokens, the length of the longest string, and an attention mask was added so that the model ignores all padding tokens.
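
The padding and attention-mask step described above can be sketched without any framework. This is an illustrative helper, not the author's training code: the function name and the `pad_id` value are assumptions, and only the 98-token cap comes from the text.

```python
from typing import List, Tuple


def pad_batch(batch: List[List[int]], max_len: int = 98,
              pad_id: int = 0) -> Tuple[List[List[int]], List[List[int]]]:
    """Truncate or pad each sequence to max_len and build a matching attention mask."""
    input_ids, attention_mask = [], []
    for ids in batch:
        ids = ids[:max_len]
        n_pad = max_len - len(ids)
        input_ids.append(ids + [pad_id] * n_pad)
        # 1 marks a real token, 0 marks padding the model should ignore
        attention_mask.append([1] * len(ids) + [0] * n_pad)
    return input_ids, attention_mask


ids, mask = pad_batch([[5, 6, 7], [8]], max_len=4)
print(ids)
print(mask)
```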

## Eval results

Average Training Loss: 0.44866578806635815.

Validation loss: 0.5606984243444775.

Sample model outputs:

1. "Every branch a crossroads, every vine a swift steed."

—Gwendlyn Di Corci

2. "The secrets of this world will tell their masters where to strike if need be."

—Noyan Dar, Tazeem roilmage

3. "The secrets of nature are expensive. You'd be better off just to have more freedom."

4. "Even the Dwarves knew to leave some stones unturned."

5. "The wise always keep an ear open to the whispers of power."

### BibTeX entry and citation info

```bibtex

@article{BownesLM,

title={Fine Tuning GPT-2 for Magic the Gathering flavour text generation.},

author={Richard J. Bownes},

journal={Medium},

year={2020}

}

```

|

rathi/storyGenerator

|

rathi

| 2021-05-23T12:11:32Z | 7 | 2 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

## This is a genre-based Movie plot generator.

For best results, structure the input as follows:

1. Add a `<BOS>` tag at the start.

2. Add a `<genre>` tag, where `genre` is a placeholder for a lowercased genre such as `<action>`, `<romantic>`, `<thriller>` or `<comedy>`.

|

himanshu-dutta/pycoder-gpt2

|

himanshu-dutta

| 2021-05-23T11:56:06Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

<br />

<div align="center">

<img src="https://raw.githubusercontent.com/himanshu-dutta/pycoder/master/docs/pycoder-logo-p.png">

<br/>

<img alt="Made With Python" src="http://ForTheBadge.com/images/badges/made-with-python.svg" height=28 style="display:inline; height:28px;" />

<img alt="Medium" src="https://img.shields.io/badge/Medium-12100E?style=for-the-badge&logo=medium&logoColor=white" height=28 style="display:inline; height:28px;"/>

<a href="https://wandb.ai/himanshu-dutta/pycoder">

<img alt="WandB Dashboard" src="https://raw.githubusercontent.com/wandb/assets/04cfa58cc59fb7807e0423187a18db0c7430bab5/wandb-github-badge-28.svg" height=28 style="display:inline; height:28px;" />

</a>


</div>

<div align="justify">

`PyCoder` is a tool that generates Python code from a few given topics and a description. It uses the GPT-2 language model as its engine. Pycoder frames writing Python code as a conditional causal language modelling (c-CLM) task. It has been trained on millions of lines of Python code written by all of us. At its current stage of training, it produces sensible code from a few lines of description, though there is still considerable room for improvement.

Pycoder has been developed as a Command-Line tool (CLI), an API endpoint, as well as a python package (yet to be deployed to PyPI). This repository acts as a framework for anyone who either wants to try to build Pycoder from scratch or turn Pycoder into maybe a `CPPCoder` or `JSCoder` 😃. A blog post about the development of the project will be released soon.

To use `Pycoder` as a CLI utility, clone the repository as normal, and install the package with:

```console

foo@bar:❯ pip install pycoder

```

After this the package could be verified and accessed as either a native CLI tool or a python package with:

```console

foo@bar:❯ python -m pycoder --version

Or directly as:

foo@bar:❯ pycoder --version

```

On installation the CLI can be used directly, such as:

```console

foo@bar:❯ pycoder -t pytorch -t torch -d "a trainer class to train vision model" -ml 120

```

The API endpoint is deployed using FastAPI. Once all the requirements have been installed for the project, the API can be accessed with:

```console

foo@bar:❯ pycoder --endpoint PORT_NUMBER

Or

foo@bar:❯ pycoder -e PORT_NUMBER

```

</div>

## Tech Stack

<div align="center">

<img alt="Python" src="https://img.shields.io/badge/python-%2314354C.svg?style=for-the-badge&logo=python&logoColor=white" style="display:inline;" />

<img alt="PyTorch" src="https://img.shields.io/badge/PyTorch-%23EE4C2C.svg?style=for-the-badge&logo=PyTorch&logoColor=white" style="display:inline;" />

<img alt="Transformers" src="https://raw.githubusercontent.com/huggingface/transformers/master/docs/source/imgs/transformers_logo_name.png" height=28 width=120 style="display:inline; background-color:white; height:28px; width:120px"/>

<img alt="Docker" src="https://img.shields.io/badge/docker-%230db7ed.svg?style=for-the-badge&logo=docker&logoColor=white" style="display:inline;" />

<img src="https://fastapi.tiangolo.com/img/logo-margin/logo-teal.png" alt="FastAPI" height=28 style="display:inline; background-color:black; height:28px;" />

<img src="https://typer.tiangolo.com/img/logo-margin/logo-margin-vector.svg" height=28 style="display:inline; background-color:teal; height:28px;" />

</div>

## Tested Platforms

<div align="center">

<img alt="Linux" src="https://img.shields.io/badge/Linux-FCC624?style=for-the-badge&logo=linux&logoColor=black" style="display:inline;" />

<img alt="Windows 10" src="https://img.shields.io/badge/Windows-0078D6?style=for-the-badge&logo=windows&logoColor=white" style="display:inline;" />

</div>

## BibTeX

If you want to cite the framework feel free to use this:

```bibtex

@article{dutta2021pycoder,

title={Pycoder},

author={Dutta, H},

journal={GitHub. Note: https://github.com/himanshu-dutta/pycoder},

year={2021}

}

```

<hr />

<div align="center">

<img alt="MIT License" src="https://img.shields.io/github/license/himanshu-dutta/pycoder?style=for-the-badge&logo=appveyor" style="display:inline;" />

<img src="https://img.shields.io/badge/Copyright-Himanshu_Dutta-2ea44f?style=for-the-badge&logo=appveyor" style="display:inline;" />

</div>

|

pranavpsv/gpt2-genre-story-generator

|

pranavpsv

| 2021-05-23T11:02:06Z | 507 | 46 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

# GPT2 Genre Based Story Generator

## Model description

GPT2 fine-tuned on genre-based story generation.

## Intended uses

Used to generate stories based on user inputted genre and starting prompts.

## How to use

#### Supported Genres

superhero, action, drama, horror, thriller, sci_fi

#### Input text format

\<BOS> \<genre> Some optional text...

**Example**: \<BOS> \<sci_fi> After discovering time travel,

```python

# Example of usage

from transformers import pipeline

story_gen = pipeline("text-generation", "pranavpsv/gpt2-genre-story-generator")

print(story_gen("<BOS> <superhero> Batman"))

```
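
A small helper can build prompts in the input format above and reject genres outside the supported list. The helper name is hypothetical; the genre list and the `<BOS> <genre> text` layout come from this card.

```python
SUPPORTED_GENRES = ("superhero", "action", "drama", "horror", "thriller", "sci_fi")


def build_story_prompt(genre: str, text: str = "") -> str:
    """Return '<BOS> <genre> text', validating the genre against the supported list."""
    if genre not in SUPPORTED_GENRES:
        raise ValueError(f"unsupported genre {genre!r}; choose one of {SUPPORTED_GENRES}")
    return f"<BOS> <{genre}> {text}".rstrip()


print(build_story_prompt("sci_fi", "After discovering time travel,"))
print(build_story_prompt("superhero", "Batman"))
```

The resulting string can be passed to `story_gen` in place of the hand-written prompt.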

## Training data

Initialized with pre-trained weights of "gpt2" checkpoint. Fine-tuned the model on stories of various genres.

|

p208p2002/gpt2-squad-qg-hl

|

p208p2002

| 2021-05-23T10:54:57Z | 13 | 3 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"question-generation",

"dataset:squad",

"arxiv:1606.05250",

"arxiv:1705.00106",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

datasets:

- squad

tags:

- question-generation

widget:

- text: "Harry Potter is a series of seven fantasy novels written by British author, [HL]J. K. Rowling[HL]."

---

# Transformer QG on SQuAD

HLQG was proposed by [Ying-Hong Chan & Yao-Chung Fan. (2019). A Re-current BERT-based Model for Question Generation.](https://www.aclweb.org/anthology/D19-5821/)

**This is a reproduction.**

More detail: [p208p2002/Transformer-QG-on-SQuAD](https://github.com/p208p2002/Transformer-QG-on-SQuAD)

## Usage

### Input Format

```

C' = [c1, c2, ..., [HL], a1, ..., a|A|, [HL], ..., c|C|]

```

### Input Example

```

Harry Potter is a series of seven fantasy novels written by British author, [HL]J. K. Rowling[HL].

```

> # Who wrote Harry Potter?
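
The input format above wraps the answer span in `[HL]` tokens inside the context. A minimal helper for producing such inputs from a context and an answer string might look like this; the function name and the optional `start` argument are assumptions made for illustration and are not part of the linked repository.

```python
from typing import Optional

HL = "[HL]"


def highlight_answer(context: str, answer: str, start: Optional[int] = None) -> str:
    """Wrap the answer span in [HL] tokens; locate it by search if no start is given."""
    if start is None:
        start = context.find(answer)
    if start < 0 or context[start:start + len(answer)] != answer:
        raise ValueError("answer not found in context at the given position")
    end = start + len(answer)
    return context[:start] + HL + context[start:end] + HL + context[end:]


context = ("Harry Potter is a series of seven fantasy novels "
           "written by British author, J. K. Rowling.")
print(highlight_answer(context, "J. K. Rowling"))
```

Passing an explicit `start` offset (as SQuAD provides with `answer_start`) avoids highlighting the wrong occurrence when the answer text appears more than once in the context.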

## Data setting

We report results under the following two dataset settings.

### SQuAD

- train: 87599

- validation: 10570

> [SQuAD: 100,000+ Questions for Machine Comprehension of Text](https://arxiv.org/abs/1606.05250)

### SQuAD NQG

- train: 75722

- dev: 10570

- test: 11877

> [Learning to Ask: Neural Question Generation for Reading Comprehension](https://arxiv.org/abs/1705.00106)

## Available models

- BART

- GPT2

- T5

## Experiments

We report scores with the `NQG Scorer`, which is the scorer used in SQuAD NQG.

Unless otherwise noted, the model size defaults to "base".

### SQuAD

Model |Bleu 1|Bleu 2|Bleu 3|Bleu 4|METEOR|ROUGE-L|

---------------------------------|------|------|------|------|------|-------|

BART-HLSQG |54.67 |39.26 |30.34 |24.15 |25.43 |52.64 |

GPT2-HLSQG |49.31 |33.95 |25.41 |19.69 |22.29 |48.82 |

T5-HLSQG |54.29 |39.22 |30.43 |24.26 |25.56 |53.11 |

### SQuAD NQG

Model |Bleu 1|Bleu 2|Bleu 3|Bleu 4|METEOR|ROUGE-L|

---------------------------------|------|------|------|------|------|-------|

BERT-HLSQG (Chan et al.) |49.73 |34.60 |26.13 |20.33 |23.88 |48.23 |

BART-HLSQG |54.12 |38.19 |28.84 |22.35 |24.55 |51.03 |

GPT2-HLSQG |49.82 |33.69 |24.71 |18.63 |21.90 |47.60 |

T5-HLSQG |53.13 |37.60 |28.62 |22.38 |24.48 |51.20 |

|

ncoop57/DiGPTame-medium

|

ncoop57

| 2021-05-23T10:47:17Z | 37 | 2 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"conversational",

"arxiv:1911.00536",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

tags:

- conversational

license: mit

---

## Finetuned DialoGPT model on Spanish Conversations

This model was finetuned from the original [DialoGPT-medium](https://huggingface.co/microsoft/DialoGPT-medium) model on subtitles from Spanish movies and telenovelas from the awesome [OpenSubtitle dataset](https://github.com/PolyAI-LDN/conversational-datasets/tree/master/opensubtitles).

DialoGPT paper: [https://arxiv.org/abs/1911.00536](https://arxiv.org/abs/1911.00536)

Some example dialog from the model:

|Role | Response |

|---------|--------|

|User | ¿Cómo está el clima hoy? |

| Bot | Muy bien. |

|User | Eso es bueno escuchar. ¿Vas a ir a la playa? |

| Bot | No, también puedo regresar. |

|User | Oh bien, bueno, iré a la playa más tarde. |

| Bot | ¡No! |

|User | ¿Qué está mal con eso? |

| Bot | ¡Cómo que no! |

|User | Estoy confundido, ¿por qué no puedo ir a la playa? |

| Bot | ¡Cómo que no! |

|User | Explícamelo por favor. |

| Bot | ¡No! |

## Using the model

Example code for trying out the model (taken directly from the [DialoGPT-medium](https://huggingface.co/microsoft/DialoGPT-medium) model card):

```python

# AutoModelForCausalLM replaces the deprecated AutoModelWithLMHead for GPT-2 style models
from transformers import AutoModelForCausalLM, AutoTokenizer
import torch

tokenizer = AutoTokenizer.from_pretrained("ncoop57/DiGPTame-medium")
model = AutoModelForCausalLM.from_pretrained("ncoop57/DiGPTame-medium")

# Let's chat for 5 lines
for step in range(5):
    # encode the new user input, add the eos_token and return a PyTorch tensor
    new_user_input_ids = tokenizer.encode(input(">> User:") + tokenizer.eos_token, return_tensors='pt')

    # append the new user input tokens to the chat history
    bot_input_ids = torch.cat([chat_history_ids, new_user_input_ids], dim=-1) if step > 0 else new_user_input_ids

    # generate a response while limiting the total chat history to 1000 tokens
    chat_history_ids = model.generate(bot_input_ids, max_length=1000, pad_token_id=tokenizer.eos_token_id)

    # pretty print the last output tokens from the bot
    print("DialoGPT: {}".format(tokenizer.decode(chat_history_ids[:, bot_input_ids.shape[-1]:][0], skip_special_tokens=True)))

```

## Training your own model

If you would like to finetune your own model or finetune this Spanish model further, please check out my blog post on that exact topic:

https://nathancooper.io/i-am-a-nerd/chatbot/deep-learning/gpt2/2020/05/12/chatbot-part-1.html

|

mymusise/CPM-GPT2-FP16

|

mymusise

| 2021-05-23T10:32:23Z | 9 | 2 |

transformers

|

[

"transformers",

"tf",

"gpt2",

"text-generation",

"zh",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: zh

widget:

- text: "今天是下雨天"

- text: "走向森林"

---

<h1 align="center">

CPM

</h1>

CPM (Chinese Pre-trained Language Model) is a 2.6B-parameter language model developed by the research team of the Beijing Zhiyuan Institute of Artificial Intelligence and Tsinghua University (@TsinghuaAI).

[repo: CPM-Generate](https://github.com/TsinghuaAI/CPM-Generate)

Note that this model was not uploaded by the official team. The conversion script is [here](https://github.com/mymusise/CPM-TF2Transformer/blob/main/transfor_CMP.ipynb).

# Overview

- **Language model**: CPM

- **Model size**: 2.6B parameters

- **Language**: Chinese

# How to use

How to use this model directly from the 🤗/transformers library:

```python

from transformers import XLNetTokenizer, TFGPT2LMHeadModel

import jieba

# Add special preprocessing: segment with jieba and map spaces/newlines to placeholder characters
class XLNetTokenizer(XLNetTokenizer):
    translator = str.maketrans(" \n", "\u2582\u2583")

    def _tokenize(self, text, *args, **kwargs):
        text = [x.translate(self.translator) for x in jieba.cut(text, cut_all=False)]
        text = " ".join(text)
        return super()._tokenize(text, *args, **kwargs)

    def _decode(self, *args, **kwargs):
        text = super()._decode(*args, **kwargs)
        # undo the placeholder mapping applied in _tokenize
        text = text.replace(' ', '').replace('\u2582', ' ').replace('\u2583', '\n')
        return text

tokenizer = XLNetTokenizer.from_pretrained('mymusise/CPM-GPT2-FP16')

model = TFGPT2LMHeadModel.from_pretrained("mymusise/CPM-GPT2-FP16")

```

How to generate text

```python

from transformers import TextGenerationPipeline

text_generater = TextGenerationPipeline(model, tokenizer)

texts = [

'今天天气不错',

'天下武功, 唯快不',

"""

我们在火星上发现了大量的神奇物种。有神奇的海星兽,身上是粉色的,有5条腿;有胆小的猫猫兽,橘色,有4条腿;有令人恐惧的蜈蚣兽,全身漆黑,36条腿;有纯洁的天使兽,全身洁白无瑕,有3条腿;有贪吃的汪汪兽,银色的毛发,有5条腿;有蛋蛋兽,紫色,8条腿。

请根据上文,列出一个表格,包含物种名、颜色、腿数量。

|物种名|颜色|腿数量|

|亚古兽|金黄|2|

|海星兽|粉色|5|

|猫猫兽|橘色|4|

|蜈蚣兽|漆黑|36|

"""

]

for text in texts:

    token_len = len(tokenizer._tokenize(text))

    print(text_generater(text, max_length=token_len + 15, top_k=1, use_cache=True, prefix='')[0]['generated_text'])

    print(text_generater(text, max_length=token_len + 15, do_sample=True, top_k=5)[0]['generated_text'])

```

You can try it on [colab](https://colab.research.google.com/github/mymusise/CPM-TF2Transformer/blob/main/demo-fp16.ipynb)

<a href="https://colab.research.google.com/github/mymusise/CPM-TF2Transformer/blob/main/demo-fp16.ipynb">

<img alt="Build" src="https://colab.research.google.com/assets/colab-badge.svg">

</a>

|

mrm8488/gpt2-imdb-neg

|

mrm8488

| 2021-05-23T10:27:14Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

# GPT2-IMDB-neg (LM + RL) 🎞😡✍

All credits to [@lvwerra](https://twitter.com/lvwerra)

## What is it?

A small GPT2 (`lvwerra/gpt2-imdb`) language model fine-tuned to produce **negative** movie reviews based on the [IMDB dataset](https://www.kaggle.com/lakshmi25npathi/imdb-dataset-of-50k-movie-reviews). The model is trained with rewards from a BERT sentiment classifier (`lvwerra/bert-imdb`) via **PPO**.

## Why?

I wanted to reproduce the experiment [lvwerra/gpt2-imdb-pos](https://huggingface.co/lvwerra/gpt2-imdb-pos) but for generating **negative** movie reviews.

## Training setting

The model was trained for `100` optimisation steps with a batch size of `256`, which corresponds to `25600` training samples. The full experiment setup (for positive samples) is available in the [trl repo](https://lvwerra.github.io/trl/04-gpt2-sentiment-ppo-training/).

## Examples

A few examples of the model response to a query before and after optimisation:

| query | response (before) | response (after) | rewards (before) | rewards (after) |

|-------|-------------------|------------------|------------------|-----------------|

|This movie is a fine | attempt as far as live action is concerned, n...|example of how bad Hollywood in theatrics pla...| 2.118391 | -3.31625|

|I have watched 3 episodes |with this guy and he is such a talented actor...| but the show is just plain awful and there ne...| 2.681171| -4.512792|

|We know that firefighters and| police officers are forced to become populari...| other chains have going to get this disaster ...| 1.367811| -3.34017|

## Training logs and metrics <img src="https://gblobscdn.gitbook.com/spaces%2F-Lqya5RvLedGEWPhtkjU%2Favatar.png?alt=media" width="25" height="25">

Watch the whole training logs and metrics on [W&B](https://app.wandb.ai/mrm8488/gpt2-sentiment-negative?workspace=user-mrm8488)

> Created by [Manuel Romero/@mrm8488](https://twitter.com/mrm8488)

> Made with <span style="color: #e25555;">♥</span> in Spain

|

mrm8488/GuaPeTe-2-tiny-finetuned-TED

|

mrm8488

| 2021-05-23T10:14:53Z | 14 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"spanish",

"gpt-2",

"spanish gpt2",

"es",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: es

tags:

- spanish

- gpt-2

- spanish gpt2

widget:

- text: "Ustedes tienen la oportunidad de"

---

# GuaPeTe-2-tiny fine-tuned on TED dataset for CLM

|

mrm8488/GPT-2-finetuned-common_gen

|

mrm8488

| 2021-05-23T10:12:07Z | 135 | 3 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"en",

"dataset:common_gen",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

datasets:

- common_gen

widget:

- text: "<|endoftext|> apple, tree, pick:"

---

# GPT-2 fine-tuned on CommonGen

[GPT-2](https://huggingface.co/gpt2) fine-tuned on [CommonGen](https://inklab.usc.edu/CommonGen/index.html) for *Generative Commonsense Reasoning*.

## Details of GPT-2

GPT-2 is a transformers model pretrained on a very large corpus of English data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data), with an automatic process to generate inputs and labels from those texts. In short, it was trained to guess the next word in sentences.

More precisely, inputs are sequences of continuous text of a certain length and the targets are the same sequence, shifted one token (word or piece of word) to the right. Internally, the model uses a masking mechanism to make sure the predictions for token `i` only use the inputs from `1` to `i` and not the future tokens.

This way, the model learns an inner representation of the English language that can then be used to extract features useful for downstream tasks. However, the model is best at what it was pretrained for, which is generating text from a prompt.
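The shifted targets and the causal mask described above can be illustrated in plain Python. This is a toy sketch, not GPT-2's actual implementation: the token ids are made up, and the helper names `make_lm_pairs` and `causal_mask` are hypothetical.

```python
def make_lm_pairs(token_ids):
    """Return (inputs, targets): targets are the inputs shifted by one position."""
    return token_ids[:-1], token_ids[1:]


def causal_mask(length):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(length)] for i in range(length)]


tokens = [10, 11, 12, 13]  # hypothetical token ids for a 4-token text
inputs, targets = make_lm_pairs(tokens)
mask = causal_mask(len(inputs))

for i, row in enumerate(mask):
    visible = [tok for tok, allowed in zip(inputs, row) if allowed]
    print(f"position {i}: sees {visible}, predicts {targets[i]}")
```

Each position only sees itself and earlier tokens, and its target is the token that follows it in the original sequence.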

## Details of the dataset 📚

CommonGen is a constrained text generation task, associated with a benchmark dataset, that explicitly tests machines for the ability of generative commonsense reasoning. Given a set of common concepts, the task is to generate a coherent sentence describing an everyday scenario using these concepts.

CommonGen is challenging because it inherently requires 1) relational reasoning using background commonsense knowledge, and 2) compositional generalization ability to work on unseen concept combinations. Our dataset, constructed through a combination of crowd-sourcing from AMT and existing caption corpora, consists of 30k concept-sets and 50k sentences in total.

| Dataset | Split | # samples |

| -------- | ----- | --------- |

| common_gen | train | 67389 |

| common_gen | valid | 4018 |

| common_gen | test | 1497 |

## Model fine-tuning 🏋️

You can find the fine-tuning script [here](https://github.com/huggingface/transformers/tree/master/examples/language-modeling)

## Model in Action 🚀

```bash

python ./transformers/examples/text-generation/run_generation.py \

--model_type=gpt2 \

--model_name_or_path="mrm8488/GPT-2-finetuned-common_gen" \

--num_return_sequences 1 \

--prompt "<|endoftext|> kid, room, dance:" \

--stop_token "."

```
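The prompt passed to `--prompt` above follows a simple pattern: the `<|endoftext|>` token, a comma-separated list of concepts, then a colon. A minimal sketch of a helper that builds such prompts is shown below; the function name `build_commongen_prompt` is hypothetical, and the format is inferred only from the example prompt and the widget text in this card.

```python
def build_commongen_prompt(concepts, bos_token="<|endoftext|>"):
    """Join concepts into the '<|endoftext|> a, b, c:' prompt pattern used above."""
    if not concepts:
        raise ValueError("at least one concept is required")
    return f"{bos_token} {', '.join(concepts)}:"


prompt = build_commongen_prompt(["kid", "room", "dance"])
print(prompt)
```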

> Created by [Manuel Romero/@mrm8488](https://twitter.com/mrm8488) | [LinkedIn](https://www.linkedin.com/in/manuel-romero-cs/)

> Made with <span style="color: #e25555;">♥</span> in Spain

|

mrm8488/GPT-2-finetuned-CORD19

|

mrm8488

| 2021-05-23T10:09:38Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail:

---

# GPT-2 + CORD19 dataset : 🦠 ✍ ⚕

**GPT-2** fine-tuned on **biorxiv_medrxiv**, **comm_use_subset** and **custom_license** files from [CORD-19](https://www.kaggle.com/allen-institute-for-ai/CORD-19-research-challenge) dataset.

## Datasets details

| Dataset | # Files |

| ---------------------- | ----- |

| biorxiv_medrxiv | 885 |

| comm_use_subset | 9K |

| custom_license | 20.6K |

## Model training

The model was trained on a Tesla P100 GPU and 25GB of RAM with the following command:

```bash

export TRAIN_FILE=/path/to/dataset/train.txt

python run_language_modeling.py \

--model_type gpt2 \

--model_name_or_path gpt2 \

--do_train \

--train_data_file $TRAIN_FILE \

--num_train_epochs 4 \

--output_dir model_output \

--overwrite_output_dir \

--save_steps 10000 \

--per_gpu_train_batch_size 3

```

<img alt="training loss" src="https://svgshare.com/i/JTf.svg" title="GPT-2-finetuned-CORD19-loss" width="600" height="300" />

## Model in action / Example of usage ✒

You can get the following script [here](https://github.com/huggingface/transformers/blob/master/examples/text-generation/run_generation.py)

```bash

python run_generation.py \

--model_type gpt2 \

--model_name_or_path mrm8488/GPT-2-finetuned-CORD19 \

--length 200

```

```txt

# Input: the effects of COVID-19 on the lungs

# Output: === GENERATED SEQUENCE 1 ===

the effects of COVID-19 on the lungs are currently debated (86). The role of this virus in the pathogenesis of pneumonia and lung cancer is still debated. MERS-CoV is also known to cause acute respiratory distress syndrome (87) and is associated with increased expression of pulmonary fibrosis markers (88). Thus, early airway inflammation may play an important role in the pathogenesis of coronavirus pneumonia and may contribute to the severe disease and/or mortality observed in coronavirus patients.

Pneumonia is an acute, often fatal disease characterized by severe edema, leakage of oxygen and bronchiolar inflammation. Viruses include coronaviruses, and the role of oxygen depletion is complicated by lung injury and fibrosis in the lung, in addition to susceptibility to other lung diseases. The progression of the disease may be variable, depending on the lung injury, pathologic role, prognosis, and the immune status of the patient. Inflammatory responses to respiratory viruses cause various pathologies of the respiratory

```

> Created by [Manuel Romero/@mrm8488](https://twitter.com/mrm8488) | [LinkedIn](https://www.linkedin.com/in/manuel-romero-cs/)

> Made with <span style="color: #e25555;">♥</span> in Spain

|

mofawzy/gpt-2-goodreads-ar

|

mofawzy

| 2021-05-23T09:53:17Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

### Generate Arabic review sentences with GPT-2 Medium

#### Load model

```

from transformers import AutoTokenizer, AutoModelWithLMHead

tokenizer = AutoTokenizer.from_pretrained("mofawzy/gpt-2-medium-ar")

model = AutoModelWithLMHead.from_pretrained("mofawzy/gpt-2-medium-ar")

```

### Eval:

```

***** eval metrics *****

epoch = 20.0

eval_loss = 1.7798

eval_mem_cpu_alloc_delta = 3MB

eval_mem_cpu_peaked_delta = 0MB

eval_mem_gpu_alloc_delta = 0MB

eval_mem_gpu_peaked_delta = 7044MB

eval_runtime = 0:03:03.37

eval_samples = 527

eval_samples_per_second = 2.874

perplexity = 5.9285

```
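The reported perplexity is consistent with the usual definition as the exponential of the evaluation loss, assuming `eval_loss` is the mean cross-entropy in nats. A quick check, using the rounded loss from the log above:

```python
import math

eval_loss = 1.7798  # rounded value copied from the eval metrics above
perplexity = math.exp(eval_loss)

# Small differences from the logged 5.9285 come from rounding of eval_loss.
print(f"perplexity ~ {perplexity:.2f}")
```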

#### Notebook:

https://colab.research.google.com/drive/1P0Raqrq0iBLNH87DyN9j0SwWg4C2HubV?usp=sharing

|

microsoft/DialogRPT-width

|

microsoft

| 2021-05-23T09:20:20Z | 41 | 1 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-classification",

"arxiv:2009.06978",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:05Z |

# Demo

Please try this [➤➤➤ Colab Notebook Demo (click me!)](https://colab.research.google.com/drive/1cAtfkbhqsRsT59y3imjR1APw3MHDMkuV?usp=sharing)

| Context | Response | `width` score |

| :------ | :------- | :------------: |

| I love NLP! | Can anyone recommend a nice review paper? | 0.701 |

| I love NLP! | Me too! | 0.029 |

The `width` score predicts how likely the response is to receive direct replies.

# DialogRPT-width

### Dialog Ranking Pretrained Transformers

> How likely is a dialog response to be upvoted 👍 and/or to get replies 💬?

This is what [**DialogRPT**](https://github.com/golsun/DialogRPT) is trained to predict.

It is a set of dialog response ranking models proposed by the [Microsoft Research NLP Group](https://www.microsoft.com/en-us/research/group/natural-language-processing/) and trained on more than 100 million instances of human feedback data.

It can be used to improve an existing dialog generation model (e.g., [DialoGPT](https://huggingface.co/microsoft/DialoGPT-medium)) by re-ranking the generated response candidates.
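Re-ranking amounts to scoring each candidate response against the context and sorting by score. The sketch below shows only that control flow: `rerank` and `demo_score` are hypothetical names, and the scorer is a stand-in that returns the `width` scores from the demo table above rather than calling the actual model.

```python
from typing import Callable, List, Tuple


def rerank(
    context: str,
    candidates: List[str],
    score_fn: Callable[[str, str], float],
) -> List[Tuple[str, float]]:
    """Score every candidate for the context and return them best-first."""
    scored = [(cand, score_fn(context, cand)) for cand in candidates]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Stand-in scorer: looks up the scores shown in the demo table.
DEMO_SCORES = {
    "Can anyone recommend a nice review paper?": 0.701,
    "Me too!": 0.029,
}


def demo_score(context: str, response: str) -> float:
    return DEMO_SCORES.get(response, 0.0)


ranked = rerank(
    "I love NLP!",
    ["Me too!", "Can anyone recommend a nice review paper?"],
    demo_score,
)
for response, score in ranked:
    print(f"{score:.3f}  {response}")
```

In practice `score_fn` would wrap a forward pass of the ranking model; see the Colab demo and repository linked in this card for the authors' own usage.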

Quick Links:

* [EMNLP'20 Paper](https://arxiv.org/abs/2009.06978/)

* [Dataset, training, and evaluation](https://github.com/golsun/DialogRPT)

* [Colab Notebook Demo](https://colab.research.google.com/drive/1cAtfkbhqsRsT59y3imjR1APw3MHDMkuV?usp=sharing)

We considered the following tasks and provided corresponding pretrained models.

|Task | Description | Pretrained model |

| :------------- | :----------- | :-----------: |

| **Human feedback** | **given a context and its two human responses, predict...**|

| `updown` | ... which gets more upvotes? | [model card](https://huggingface.co/microsoft/DialogRPT-updown) |

| `width`| ... which gets more direct replies? | this model |

| `depth`| ... which gets longer follow-up thread? | [model card](https://huggingface.co/microsoft/DialogRPT-depth) |

| **Human-like** (human vs fake) | **given a context and one human response, distinguish it with...** |

| `human_vs_rand`| ... a random human response | [model card](https://huggingface.co/microsoft/DialogRPT-human-vs-rand) |

| `human_vs_machine`| ... a machine generated response | [model card](https://huggingface.co/microsoft/DialogRPT-human-vs-machine) |

### Contact:

Please create an issue on [our repo](https://github.com/golsun/DialogRPT)

### Citation:

```

@inproceedings{gao2020dialogrpt,

title={Dialogue Response Ranking Training with Large-Scale Human Feedback Data},

author={Xiang Gao and Yizhe Zhang and Michel Galley and Chris Brockett and Bill Dolan},

year={2020},

booktitle={EMNLP}

}

```

|

microsoft/DialogRPT-updown

|

microsoft

| 2021-05-23T09:19:13Z | 2,559 | 10 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-classification",

"arxiv:2009.06978",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:05Z |

# Demo

Please try this [➤➤➤ Colab Notebook Demo (click me!)](https://colab.research.google.com/drive/1cAtfkbhqsRsT59y3imjR1APw3MHDMkuV?usp=sharing)

| Context | Response | `updown` score |

| :------ | :------- | :------------: |

| I love NLP! | Here’s a free textbook (URL) in case anyone needs it. | 0.613 |

| I love NLP! | Me too! | 0.111 |

The `updown` score predicts how likely the response is to be upvoted.

# DialogRPT-updown

### Dialog Ranking Pretrained Transformers

> How likely is a dialog response to be upvoted 👍 and/or to get replies 💬?

This is what [**DialogRPT**](https://github.com/golsun/DialogRPT) is trained to predict.

It is a set of dialog response ranking models proposed by the [Microsoft Research NLP Group](https://www.microsoft.com/en-us/research/group/natural-language-processing/) and trained on more than 100 million instances of human feedback data.

It can be used to improve an existing dialog generation model (e.g., [DialoGPT](https://huggingface.co/microsoft/DialoGPT-medium)) by re-ranking the generated response candidates.

Quick Links:

* [EMNLP'20 Paper](https://arxiv.org/abs/2009.06978/)

* [Dataset, training, and evaluation](https://github.com/golsun/DialogRPT)

* [Colab Notebook Demo](https://colab.research.google.com/drive/1cAtfkbhqsRsT59y3imjR1APw3MHDMkuV?usp=sharing)

We considered the following tasks and provided corresponding pretrained models. This page is for the `updown` task; the other model cards can be found in the table below.

|Task | Description | Pretrained model |

| :------------- | :----------- | :-----------: |

| **Human feedback** | **given a context and its two human responses, predict...**|

| `updown` | ... which gets more upvotes? | this model |

| `width`| ... which gets more direct replies? | [model card](https://huggingface.co/microsoft/DialogRPT-width) |

| `depth`| ... which gets longer follow-up thread? | [model card](https://huggingface.co/microsoft/DialogRPT-depth) |

| **Human-like** (human vs fake) | **given a context and one human response, distinguish it with...** |

| `human_vs_rand`| ... a random human response | [model card](https://huggingface.co/microsoft/DialogRPT-human-vs-rand) |

| `human_vs_machine`| ... a machine generated response | [model card](https://huggingface.co/microsoft/DialogRPT-human-vs-machine) |

### Contact:

Please create an issue on [our repo](https://github.com/golsun/DialogRPT)

### Citation:

```

@inproceedings{gao2020dialogrpt,

title={Dialogue Response Ranking Training with Large-Scale Human Feedback Data},

author={Xiang Gao and Yizhe Zhang and Michel Galley and Chris Brockett and Bill Dolan},

year={2020},

booktitle={EMNLP}

}

```

|

microsoft/DialogRPT-human-vs-rand

|

microsoft

| 2021-05-23T09:18:07Z | 36 | 9 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-classification",

"arxiv:2009.06978",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:05Z |

# Demo

Please try this [➤➤➤ Colab Notebook Demo (click me!)](https://colab.research.google.com/drive/1cAtfkbhqsRsT59y3imjR1APw3MHDMkuV?usp=sharing)

| Context | Response | `human_vs_rand` score |

| :------ | :------- | :------------: |

| I love NLP! | He is a great basketball player. | 0.027 |

| I love NLP! | Can you tell me how it works? | 0.754 |

| I love NLP! | Me too! | 0.631 |

The `human_vs_rand` score predicts how likely the response is to correspond to the given context rather than being a random response.

# DialogRPT-human-vs-rand

### Dialog Ranking Pretrained Transformers

> How likely is a dialog response to be upvoted 👍 and/or to get replies 💬?

This is what [**DialogRPT**](https://github.com/golsun/DialogRPT) is trained to predict.

It is a set of dialog response ranking models proposed by the [Microsoft Research NLP Group](https://www.microsoft.com/en-us/research/group/natural-language-processing/) and trained on more than 100 million instances of human feedback data.

It can be used to improve an existing dialog generation model (e.g., [DialoGPT](https://huggingface.co/microsoft/DialoGPT-medium)) by re-ranking the generated response candidates.

Quick Links:

* [EMNLP'20 Paper](https://arxiv.org/abs/2009.06978/)

* [Dataset, training, and evaluation](https://github.com/golsun/DialogRPT)

* [Colab Notebook Demo](https://colab.research.google.com/drive/1cAtfkbhqsRsT59y3imjR1APw3MHDMkuV?usp=sharing)

We considered the following tasks and provided corresponding pretrained models.

|Task | Description | Pretrained model |

| :------------- | :----------- | :-----------: |

| **Human feedback** | **given a context and its two human responses, predict...**|

| `updown` | ... which gets more upvotes? | [model card](https://huggingface.co/microsoft/DialogRPT-updown) |

| `width`| ... which gets more direct replies? | [model card](https://huggingface.co/microsoft/DialogRPT-width) |

| `depth`| ... which gets longer follow-up thread? | [model card](https://huggingface.co/microsoft/DialogRPT-depth) |

| **Human-like** (human vs fake) | **given a context and one human response, distinguish it with...** |

| `human_vs_rand`| ... a random human response | this model |

| `human_vs_machine`| ... a machine generated response | [model card](https://huggingface.co/microsoft/DialogRPT-human-vs-machine) |

### Contact:

Please create an issue on [our repo](https://github.com/golsun/DialogRPT)

### Citation:

```

@inproceedings{gao2020dialogrpt,

title={Dialogue Response Ranking Training with Large-Scale Human Feedback Data},

author={Xiang Gao and Yizhe Zhang and Michel Galley and Chris Brockett and Bill Dolan},

year={2020},

booktitle={EMNLP}

}

```

|

microsoft/DialogRPT-depth

|

microsoft

| 2021-05-23T09:15:24Z | 57 | 5 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-classification",

"arxiv:2009.06978",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:05Z |

# Demo

Please try this [➤➤➤ Colab Notebook Demo (click me!)](https://colab.research.google.com/drive/1cAtfkbhqsRsT59y3imjR1APw3MHDMkuV?usp=sharing)

| Context | Response | `depth` score |

| :------ | :------- | :------------: |

| I love NLP! | Can anyone recommend a nice review paper? | 0.724 |

| I love NLP! | Me too! | 0.032 |

The `depth` score predicts how likely the response is to get a long follow-up discussion thread.

# DialogRPT-depth

### Dialog Ranking Pretrained Transformers

> How likely is a dialog response to be upvoted 👍 and/or to get replies 💬?

This is what [**DialogRPT**](https://github.com/golsun/DialogRPT) is trained to predict.

It is a set of dialog response ranking models proposed by the [Microsoft Research NLP Group](https://www.microsoft.com/en-us/research/group/natural-language-processing/) and trained on more than 100 million instances of human feedback data.

It can be used to improve an existing dialog generation model (e.g., [DialoGPT](https://huggingface.co/microsoft/DialoGPT-medium)) by re-ranking the generated response candidates.

Quick Links:

* [EMNLP'20 Paper](https://arxiv.org/abs/2009.06978/)

* [Dataset, training, and evaluation](https://github.com/golsun/DialogRPT)

* [Colab Notebook Demo](https://colab.research.google.com/drive/1cAtfkbhqsRsT59y3imjR1APw3MHDMkuV?usp=sharing)

We considered the following tasks and provided corresponding pretrained models.

|Task | Description | Pretrained model |

| :------------- | :----------- | :-----------: |

| **Human feedback** | **given a context and its two human responses, predict...**|

| `updown` | ... which gets more upvotes? | [model card](https://huggingface.co/microsoft/DialogRPT-updown) |

| `width`| ... which gets more direct replies? | [model card](https://huggingface.co/microsoft/DialogRPT-width) |

| `depth`| ... which gets longer follow-up thread? | this model |

| **Human-like** (human vs fake) | **given a context and one human response, distinguish it with...** |

| `human_vs_rand`| ... a random human response | [model card](https://huggingface.co/microsoft/DialogRPT-human-vs-rand) |

| `human_vs_machine`| ... a machine generated response | [model card](https://huggingface.co/microsoft/DialogRPT-human-vs-machine) |

### Contact:

Please create an issue on [our repo](https://github.com/golsun/DialogRPT)

### Citation:

```

@inproceedings{gao2020dialogrpt,

title={Dialogue Response Ranking Training with Large-Scale Human Feedback Data},

author={Xiang Gao and Yizhe Zhang and Michel Galley and Chris Brockett and Bill Dolan},

year={2020},

booktitle={EMNLP}

}

```

|

model-attribution-challenge/DialoGPT-large

|

model-attribution-challenge

| 2021-05-23T09:06:08Z | 3 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"jax",

"gpt2",

"text-generation",

"conversational",

"arxiv:1911.00536",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-07-26T13:35:04Z |

---

thumbnail: https://huggingface.co/front/thumbnails/dialogpt.png

tags:

- conversational

license: mit

---

## A State-of-the-Art Large-scale Pretrained Response generation model (DialoGPT)

DialoGPT is a SOTA large-scale pretrained dialogue response generation model for multiturn conversations.

The [human evaluation results](https://github.com/dreasysnail/Dialogpt_dev#human-evaluation) indicate that the response generated from DialoGPT is comparable to human response quality under a single-turn conversation Turing test.

The model is trained on 147M multi-turn dialogues from Reddit discussion threads.

* Multi-turn generation examples from an interactive environment:

|Role | Response |

|---------|--------|

|User | Does money buy happiness? |

| Bot | Depends how much money you spend on it .|

|User | What is the best way to buy happiness ? |

| Bot | You just have to be a millionaire by your early 20s, then you can be happy . |

|User |This is so difficult ! |

| Bot | You have no idea how hard it is to be a millionaire and happy . There is a reason the rich have a lot of money |

Please find the information about preprocessing, training and full details of the DialoGPT in the [original DialoGPT repository](https://github.com/microsoft/DialoGPT)

ArXiv paper: [https://arxiv.org/abs/1911.00536](https://arxiv.org/abs/1911.00536)

### How to use

Now we are ready to try out how the model works as a chatting partner!

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

import torch

tokenizer = AutoTokenizer.from_pretrained("microsoft/DialoGPT-large")

model = AutoModelForCausalLM.from_pretrained("microsoft/DialoGPT-large")

# Let's chat for 5 lines
for step in range(5):
    # encode the new user input, add the eos_token and return a PyTorch tensor
    new_user_input_ids = tokenizer.encode(input(">> User:") + tokenizer.eos_token, return_tensors='pt')

    # append the new user input tokens to the chat history
    bot_input_ids = torch.cat([chat_history_ids, new_user_input_ids], dim=-1) if step > 0 else new_user_input_ids

    # generate a response while limiting the total chat history to 1000 tokens
    chat_history_ids = model.generate(bot_input_ids, max_length=1000, pad_token_id=tokenizer.eos_token_id)

    # pretty print the last output tokens from the bot
    print("DialoGPT: {}".format(tokenizer.decode(chat_history_ids[:, bot_input_ids.shape[-1]:][0], skip_special_tokens=True)))

```

|

lvwerra/gpt2-imdb

|

lvwerra

| 2021-05-23T08:38:34Z | 44,499 | 16 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

# GPT2-IMDB

## What is it?

A GPT2 (`gpt2`) language model fine-tuned on the [IMDB dataset](https://www.kaggle.com/lakshmi25npathi/imdb-dataset-of-50k-movie-reviews).

## Training setting

The GPT2 language model was fine-tuned for 1 epoch on the IMDB dataset. All comments were joined into a single text file separated by the EOS token:

```

import pandas as pd

df = pd.read_csv("imdb-dataset.csv")

imdb_str = " <|endoftext|> ".join(df['review'].tolist())

with open('imdb.txt', 'w') as f:
    f.write(imdb_str)

```

To train the model, the `run_language_modeling.py` script from the `transformers` library was used:

```

python run_language_modeling.py \
    --train_data_file imdb.txt \
    --output_dir gpt2-imdb \
    --model_type gpt2 \
    --model_name_or_path gpt2

```

|

lvwerra/gpt2-imdb-ctrl

|

lvwerra

| 2021-05-23T08:37:09Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

# GPT2-IMDB-ctrl

## What is it?

A small GPT2 (`lvwerra/gpt2-imdb`) language model fine-tuned to produce controlled movie reviews based on the [IMDB dataset](https://www.kaggle.com/lakshmi25npathi/imdb-dataset-of-50k-movie-reviews). The model is trained with rewards from a BERT sentiment classifier (`lvwerra/bert-imdb`) via PPO.

## Training setting

The model was trained for `200` optimisation steps with a batch size of `256` which corresponds to `51200` training samples. The full experiment setup can be found in the Jupyter notebook in the [trl repo](https://lvwerra.github.io/trl/05-gpt2-sentiment-ppo-training/). The strings `"[negative]"`, `"[neutral]"`, and `"[positive]"` are added at the beginning of the query to control the sentiment.
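As an illustration of the control mechanism, the sketch below prepends one of the three control strings to a query and checks the sample count quoted above. The helper name `add_control` is hypothetical, and joining the control string directly to the query without a separator is an assumption; the linked notebook is the authoritative reference for the exact formatting.

```python
CONTROL_TOKENS = ("[negative]", "[neutral]", "[positive]")


def add_control(query: str, sentiment: str) -> str:
    """Prepend the control string for `sentiment` to the query (no separator assumed)."""
    token = f"[{sentiment}]"
    if token not in CONTROL_TOKENS:
        raise ValueError(f"unknown sentiment: {sentiment!r}")
    return f"{token}{query}"


controlled = add_control("I watched this movie when", "negative")
print(controlled)

# 200 optimisation steps with a batch size of 256
training_samples = 200 * 256
print(training_samples)
```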

## Examples

A few examples of the model's responses to a query under each sentiment control, with the corresponding rewards:

| query | response [negative] | rewards [negative] | response [neutral] | rewards [neutral] | response [positive] | rewards [positive] |
|-------|---------------------|--------------------|--------------------|-------------------|---------------------|--------------------|
|I watched this movie when|it was released and was awful. Little bit of ...|3.130034|it was released and it was the first movie I ...|-1.351991|I was younger it was wonderful. The new play ...|4.232218|
|I can remember seeing this|movie in 2008, and I was so disappointed...yo...|3.428725|in support groups, which I think was not as i...|0.213288|movie, and it is one of my favorite movies ev...|4.168838|
|This 1970 hit film has|little resonance. This movie is bad, not only...|4.241872|a bit of Rocket power.783287. It can be easil...|0.849278|the best formula for comedy and is't just jus...|4.208804|

|

lucone83/deep-metal

|

lucone83

| 2021-05-23T08:36:22Z | 8 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

## Model description

**DeepMetal** is a model capable of generating lyrics tailored for heavy metal songs.

The model is based on [OpenAI GPT-2](https://huggingface.co/gpt2) and has been fine-tuned on a dataset of 141,718 heavy metal song lyrics.

More info about the project can be found in the [official GitHub repository](https://github.com/lucone83/deep-metal) and in the related articles on my blog: [part I](https://blog.lucaballore.com/when-heavy-metal-meets-data-science-2e840897922e), [part II](https://blog.lucaballore.com/when-heavy-metal-meets-data-science-3fc32e9096fa) and [part III](https://blog.lucaballore.com/when-heavy-metal-meets-data-science-episode-iii-9f6e4772847e).

### Legal notes

Due to uncertainty about legal rights, the dataset used for training the model is not provided. I hope you'll understand. The lyrics in question were scraped from the website [DarkLyrics](http://www.darklyrics.com/) using the library [metal-parser](https://github.com/lucone83/metal-parser).

## Intended uses and limitations

The model is released under the [Apache 2.0 license](https://www.apache.org/licenses/LICENSE-2.0). You can use the raw model for lyrics generation or fine-tune it further to a downstream task.

The model is capable of generating **explicit lyrics**. This is a consequence of fine-tuning on a dataset that contained such lyrics, which are part of the discography of many heavy metal bands. Be aware of this before you use the model: the author is **not liable for any emotional response and following consequences**.

## How to use

You can use this model directly with a pipeline for text generation. Since the generation relies on some randomness, it could be good to set a seed for reproducibility:

```python

>>> from transformers import pipeline, set_seed

>>> generator = pipeline('text-generation', model='lucone83/deep-metal', device=-1) # to use GPU, set device=<CUDA_device_ordinal>

>>> set_seed(42)

>>> generator(
...     "End of passion play",
...     num_return_sequences=1,
...     max_length=256,
...     min_length=128,
...     top_p=0.97,
...     top_k=0,
...     temperature=0.90
... )

[{'generated_text': "End of passion play for you\nFrom the spiritual to the mental\nIt is hard to see it all\nBut let's not end up all be\nTill we see the fruits of our deeds\nAnd see the fruits of our suffering\nLet's start a fight for victory\nIt was our birthright\nIt was our call\nIt was our call\nIt was our call\nIt was our call\nIt was our call\nIt was our call\nIt was our call\nIt was our call\nIt was our call\nIt was our call\nIt was our call\nIt was our call \nLike a typhoon upon your doorstep\nYou're a victim of own misery\nAnd the god has made you pay\n\nWe are the wolves\nWe will not stand the pain\nWe are the wolves\nWe will never give up\nWe are the wolves\nWe will not leave\nWe are the wolves\nWe will never grow old\nWe are the wolves\nWe will never grow old "}]

```

Of course, it's possible to play with parameters like `top_k`, `top_p`, `temperature`, `max_length` and all the other parameters included in the `generate` method. Please look at the [documentation](https://huggingface.co/transformers/main_classes/model.html?highlight=generate#transformers.generation_utils.GenerationMixin.generate) for further insights.
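To give a rough intuition for what `temperature` and `top_p` do, here is a simplified, pure-Python sketch of temperature scaling followed by nucleus (top-p) filtering. It is an illustration of the idea only, not the implementation used by `generate`; the function name `top_p_filter` and the toy logits are made up for the example. With `top_k=0`, as in the call above, top-k filtering is disabled, so only these two steps shape the distribution.

```python
import math


def top_p_filter(logits, top_p=0.97, temperature=0.90):
    """Return {token_index: probability} restricted to the nucleus.

    Simplified sketch: scale logits by the temperature, apply softmax,
    keep the most likely tokens until their cumulative probability
    reaches `top_p`, then renormalise over the kept tokens.
    """
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]

    # Rank token indices from most to least likely.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)

    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break

    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}


# Toy logits for a four-token vocabulary (hypothetical values).
nucleus = top_p_filter([2.0, 1.0, 0.5, -3.0])
print(sorted(nucleus))
```

A lower `temperature` sharpens the distribution before filtering, while a lower `top_p` shrinks the set of candidate tokens; sampling then happens only among the tokens that remain.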

Here is how to use this model to get the features of a given text in PyTorch:

```python

from transformers import GPT2Tokenizer, GPT2Model

tokenizer = GPT2Tokenizer.from_pretrained('lucone83/deep-metal')

model = GPT2Model.from_pretrained('lucone83/deep-metal')

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='pt')

output_features = model(**encoded_input)

```

and in TensorFlow:

```python

from transformers import GPT2Tokenizer, TFGPT2Model

tokenizer = GPT2Tokenizer.from_pretrained('lucone83/deep-metal')

model = TFGPT2Model.from_pretrained('lucone83/deep-metal')

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='tf')

output_features = model(encoded_input)

```

## Model training

The dataset used for training this model contained 141,718 heavy metal song lyrics.

The model was trained on an NVIDIA Tesla T4 with 16 GB of memory, using the following command:

```bash

python run_language_modeling.py \
    --output_dir=$OUTPUT_DIR \
    --model_type=gpt2 \
    --model_name_or_path=gpt2 \
    --do_train \
    --train_data_file=$TRAIN_FILE \
    --do_eval \
    --eval_data_file=$VALIDATION_FILE \
    --per_device_train_batch_size=3 \
    --per_device_eval_batch_size=3 \
    --evaluate_during_training \
    --learning_rate=1e-5 \
    --num_train_epochs=20 \
    --logging_steps=3000 \
    --save_steps=3000 \
    --gradient_accumulation_steps=3

```
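As a small worked example of how the batch-size flags in the command combine, the sketch below computes the effective batch size per optimizer update. The value `num_devices = 1` is an assumption based on the single Tesla T4 mentioned above.

```python
# Values taken from the training command.
per_device_train_batch_size = 3
gradient_accumulation_steps = 3

# Assumption: training ran on a single GPU (one Tesla T4).
num_devices = 1

# Gradients are accumulated over several small batches before each
# optimizer update, so the effective batch size is their product.
effective_batch_size = (
    per_device_train_batch_size * gradient_accumulation_steps * num_devices
)
print(effective_batch_size)
```

Gradient accumulation is a common way to reach a larger effective batch size when GPU memory limits the per-device batch.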

To check out the code related to training and testing, please look at the [GitHub repository](https://github.com/lucone83/deep-metal) of the project.

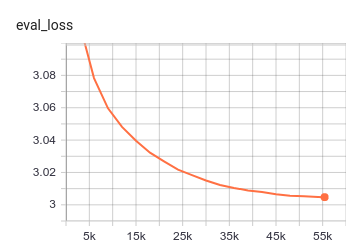

## Evaluation results

The model achieves the following results:

```bash

{

'eval_loss': 3.0047452173826406,

'epoch': 19.99987972095261,

'total_flos': 381377736125448192,

'step': 55420

}

perplexity = 20.18107365414611

```
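The reported perplexity is consistent with the exponential of the evaluation loss, which is the usual relationship when the loss is the mean cross-entropy per token in nats. A minimal check, using only the `eval_loss` value shown above:

```python
import math

# Evaluation loss reported above (assumed to be mean cross-entropy in nats).
eval_loss = 3.0047452173826406

# Perplexity is the exponential of the mean cross-entropy.
perplexity = math.exp(eval_loss)
print(f"perplexity = {perplexity:.2f}")
```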

|

lighteternal/gpt2-finetuned-greek

|

lighteternal

| 2021-05-23T08:33:11Z | 261 | 6 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"causal-lm",

"el",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language:

- el

tags:

- pytorch

- causal-lm

widget:

- text: "Το αγαπημένο μου μέρος είναι"

license: apache-2.0

---

# Greek (el) GPT2 model

<img src="https://huggingface.co/lighteternal/gpt2-finetuned-greek-small/raw/main/GPT2el.png" width="600"/>

### By the Hellenic Army Academy (SSE) and the Technical University of Crete (TUC)

* language: el

* licence: apache-2.0

* dataset: ~23.4 GB of Greek corpora

* model: GPT2 (12-layer, 768-hidden, 12-heads, 117M parameters. OpenAI GPT-2 English model, finetuned for the Greek language)

* pre-processing: tokenization + BPE segmentation

* metrics: perplexity

### Model description