| modelId (string, lengths 5 to 122) | author (string, lengths 2 to 42) | last_modified (timestamp[us, tz=UTC]) | downloads (int64, 0 to 738M) | likes (int64, 0 to 11k) | library_name (string, 245 classes) | tags (sequence, lengths 1 to 4.05k) | pipeline_tag (string, 48 classes) | createdAt (timestamp[us, tz=UTC]) | card (string, lengths 1 to 901k) |

|---|---|---|---|---|---|---|---|---|---|

Sao10K/Zephyrus-L1-33B | Sao10K | 2023-11-18T04:34:17Z | 1,635 | 4 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"en",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-09-25T21:56:56Z | ---

language:

- en

license: other

---

some quants I guess: https://huggingface.co/Sao10K/Zephyrus-L1-33B-GGUF

Zephyrus v1 - A multi-merge of several Llama1 30B models using newer merging methods seen after the release of Llama2, with a sprinkling of an experimental LoRA on top.

The goal is to improve Writing Quality to match those seen in top L2 13B Models, while keeping the improved Logic and Spatial Awareness a 30B Model has. As usual, this is a Roleplay-focused Model, and I cannot test or verify its effectiveness as an Assistant or Tool.

Did I succeed? Partially. It's not the best partner for chatting, but I love it for storywriting and as my writing companion. It's fairly smart and spatially aware, and I've noticed no glaring issues so far.

While it may appear censored with zero input prompts, in actual Roleplay with a Character in SillyTavern, there are no issues even with NSFL topics as long as minimal context is there.

If you face any impersonation / dumb moments, a simple swipe or two fixes things.

It has its issues at times, yes, but this is my... first successful attempt. I'll try to work on more in the future.

SillyTavern Formats: simple-proxy-for-tavern in ST for Instruct Prompt, change to Default for Context Template.

<br>Ooba Presets I'd recommend are Kobold Godlike, NovelAI Best Guess, simple-proxy-for-tavern or Shortwave with 1.22 Temperature, from My Testing. Test them out, different RPs work best with different presets.

**REMEMBER TO SET CONTEXT AT 2048 OR IT WILL BREAK. THIS IS A LLAMA1 MODEL AFTER ALL.**

Most formats could work, but Alpaca works best. In SillyTavern, use simple-proxy-for-tavern instead; it works much better.

```

### Instruction:

Your instruction or question here.

For roleplay purposes, I suggest the following - Write <CHAR NAME>'s next reply in a chat between <YOUR NAME> and <CHAR NAME>. Write a single reply only.

### Response:

```
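As a minimal sketch of the template above, the prompt can be assembled programmatically. The helper names (`build_alpaca_prompt`, `roleplay_instruction`), the example character names, and the exact newline placement are assumptions made for illustration; the card only shows the section headers and the suggested instruction text.

```python
def build_alpaca_prompt(instruction: str) -> str:
    """Wrap an instruction in the Alpaca-style template shown above.

    The exact whitespace between sections is an assumption.
    """
    return f"### Instruction:\n{instruction.strip()}\n\n### Response:\n"


def roleplay_instruction(char_name: str, user_name: str) -> str:
    """Fill in the roleplay instruction suggested in the card."""
    return (
        f"Write {char_name}'s next reply in a chat between "
        f"{user_name} and {char_name}. Write a single reply only."
    )


# "Aria" and "Sam" are hypothetical names used only for this example.
prompt = build_alpaca_prompt(roleplay_instruction("Aria", "Sam"))
print(prompt)
```

Whatever is sent to the model still has to fit, together with the chat history, inside the 2048-token context mentioned above.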

Support me [here](https://ko-fi.com/sao10k) :)

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_Sao10K__Zephyrus-L1-33B)

| Metric | Value |

|-----------------------|---------------------------|

| Avg. | 55.79 |

| ARC (25-shot) | 64.51 |

| HellaSwag (10-shot) | 84.15 |

| MMLU (5-shot) | 57.37 |

| TruthfulQA (0-shot) | 53.87 |

| Winogrande (5-shot) | 80.19 |

| GSM8K (5-shot) | 23.58 |

| DROP (3-shot) | 26.89 |
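The "Avg." row appears to be the plain mean of the seven benchmark scores. A small sketch to check that arithmetic, using only the values from the table above (the variable names are illustrative):

```python
# Benchmark scores copied from the table above.
scores = {
    "ARC (25-shot)": 64.51,
    "HellaSwag (10-shot)": 84.15,
    "MMLU (5-shot)": 57.37,
    "TruthfulQA (0-shot)": 53.87,
    "Winogrande (5-shot)": 80.19,
    "GSM8K (5-shot)": 23.58,
    "DROP (3-shot)": 26.89,
}

# Unweighted mean over all seven benchmarks, rounded to two decimals.
average = round(sum(scores.values()) / len(scores), 2)
print(average)
```

This reproduces the reported 55.79, so the low GSM8K and DROP scores weigh on the average as much as any other benchmark.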

|

Sao10K/BrainDerp2 | Sao10K | 2023-11-18T04:33:26Z | 1,635 | 1 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"en",

"license:llama2",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-09-29T15:26:57Z | ---

license: llama2

language:

- en

---

'We have EnSEmblEd toP RAnKeRs tO mAKE oUr mODeL.'

'This MoDeL hAS BeEN trAINED oN orCa-StyLe DatAsEtS.'

'***trAINed***' KEK

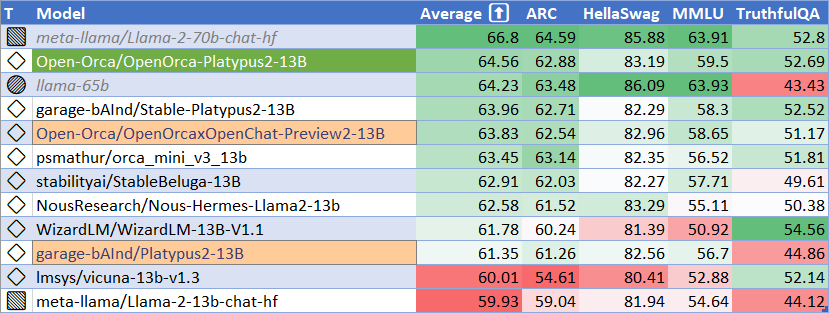

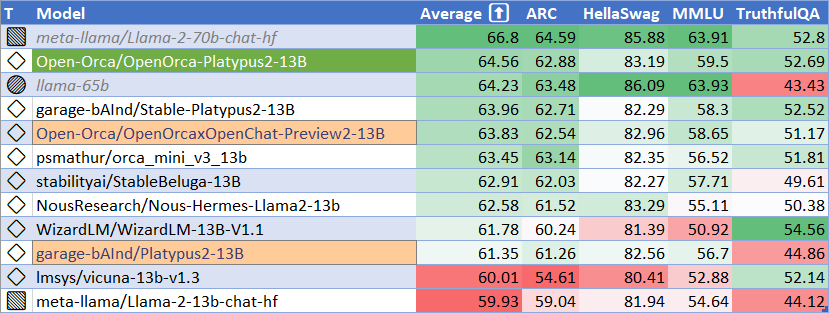

'We hAVE AchIEvEd TOp RAnKeR In thE leAdErBOArDs.'

Lmao it's all bs, you're all running merge scripts like we do, literally trying to game the leaderboards huh? Pathetic lol. Literally 0 card info, copy pasted from llama2 base models, with no other goals than going for leaderboards.

At least merge with a goal, like come on. I'm going for RP, Undi's going for RP. At least make a believable goal.

Brainderp is exposing the frauds who lie about it lol. At least be honest if you're merging like us poor people, which I appreciate some models do.

for BrainDerp, I have 'ensembled' several random models in the leaderboard to create this model.

i can easily steal the top leaderboard spots but meh not worth the effort.

that's it. i didn't bother testing much. ymmv.

<img src="https://blog.cdn.own3d.tv/resize=fit:crop,height:400,width:600/tbv2RYWpReqNtof2dD0U" style="width: 70%; min-width: 300px; display: block; margin: auto;">

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_Sao10K__BrainDerp2)

| Metric | Value |

|-----------------------|---------------------------|

| Avg. | 51.18 |

| ARC (25-shot) | 60.92 |

| HellaSwag (10-shot) | 81.94 |

| MMLU (5-shot) | 58.9 |

| TruthfulQA (0-shot) | 57.19 |

| Winogrande (5-shot) | 75.93 |

| GSM8K (5-shot) | 9.02 |

| DROP (3-shot) | 14.34 |

|

wei123602/Llama-2-13b-FINETUNE4_TEST3 | wei123602 | 2023-09-30T03:02:00Z | 1,635 | 0 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-09-30T02:38:57Z | Entry not found |

migtissera/Tess-M-v1.1 | migtissera | 2023-11-24T18:49:17Z | 1,635 | 7 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-11-22T03:23:34Z | ---

license: other

license_name: yi-34b

license_link: https://huggingface.co/01-ai/Yi-34B/blob/main/LICENSE

---

# Note:

This version is experimental and has been deprecated. Please use the stable release Tess-M-v1.3: https://huggingface.co/migtissera/Tess-M-v1.3

# Tess

Tess, short for Tessoro/Tessoso, is a general purpose Large Language Model series. Tess-M-v1.1 was trained on the Yi-34B-200K base.

# Prompt Format:

```

SYSTEM: <ANY SYSTEM CONTEXT>

USER:

ASSISTANT:

```
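A minimal sketch of filling in this format from Python. The function name `build_tess_prompt` is hypothetical, and placing the user message on the same line as `USER:` is an assumption, since the card leaves that slot empty.

```python
def build_tess_prompt(system: str, user: str) -> str:
    """Assemble a prompt in the SYSTEM / USER / ASSISTANT layout shown above."""
    return f"SYSTEM: {system}\nUSER: {user}\nASSISTANT:"


# Example system and user strings are illustrative only.
prompt = build_tess_prompt(
    "You are a helpful assistant.",
    "Summarize the Yi-34B license in one sentence.",
)
print(prompt)
```

The prompt ends at `ASSISTANT:` so that the model's generation continues as the assistant's turn.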

|

digiplay/majicMIX_lux_v3 | digiplay | 2024-03-12T20:47:33Z | 1,635 | 3 | diffusers | [

"diffusers",

"safetensors",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | 2024-03-12T20:39:49Z | ---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

Model info :

https://civitai.com/models/56967/majicmix-lux

https://civitai.com/models/56967?modelVersionId=286238

|

brazilianslib/Llama-3-8B-Dolphin-Portuguese-v0.3-Q8_0-GGUF | brazilianslib | 2024-06-27T15:27:39Z | 1,635 | 0 | transformers | [

"transformers",

"gguf",

"llama-cpp",

"gguf-my-repo",

"pt",

"dataset:adalbertojunior/dolphin_portuguese_legal",

"base_model:adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3",

"model-index",

"endpoints_compatible",

"region:us"

] | null | 2024-06-27T15:27:01Z | ---

base_model: adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3

datasets:

- adalbertojunior/dolphin_portuguese_legal

language:

- pt

library_name: transformers

tags:

- llama-cpp

- gguf-my-repo

model-index:
- name: Llama-3-8B-Dolphin-Portuguese-v0.3
  results:
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: ENEM Challenge (No Images)
      type: eduagarcia/enem_challenge
      split: train
      args:
        num_few_shot: 3
    metrics:
    - type: acc
      value: 68.86
      name: accuracy
    source:
      url: https://huggingface.co/spaces/eduagarcia/open_pt_llm_leaderboard?query=adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3
      name: Open Portuguese LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: BLUEX (No Images)
      type: eduagarcia-temp/BLUEX_without_images
      split: train
      args:
        num_few_shot: 3
    metrics:
    - type: acc
      value: 57.86
      name: accuracy
    source:
      url: https://huggingface.co/spaces/eduagarcia/open_pt_llm_leaderboard?query=adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3
      name: Open Portuguese LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: OAB Exams
      type: eduagarcia/oab_exams
      split: train
      args:
        num_few_shot: 3
    metrics:
    - type: acc
      value: 61.91
      name: accuracy
    source:
      url: https://huggingface.co/spaces/eduagarcia/open_pt_llm_leaderboard?query=adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3
      name: Open Portuguese LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: Assin2 RTE
      type: assin2
      split: test
      args:
        num_few_shot: 15
    metrics:
    - type: f1_macro
      value: 93.05
      name: f1-macro
    source:
      url: https://huggingface.co/spaces/eduagarcia/open_pt_llm_leaderboard?query=adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3
      name: Open Portuguese LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: Assin2 STS
      type: eduagarcia/portuguese_benchmark
      split: test
      args:
        num_few_shot: 15
    metrics:
    - type: pearson
      value: 76.48
      name: pearson
    source:
      url: https://huggingface.co/spaces/eduagarcia/open_pt_llm_leaderboard?query=adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3
      name: Open Portuguese LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: FaQuAD NLI
      type: ruanchaves/faquad-nli
      split: test
      args:
        num_few_shot: 15
    metrics:
    - type: f1_macro
      value: 76.78
      name: f1-macro
    source:
      url: https://huggingface.co/spaces/eduagarcia/open_pt_llm_leaderboard?query=adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3
      name: Open Portuguese LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: HateBR Binary
      type: ruanchaves/hatebr
      split: test
      args:
        num_few_shot: 25
    metrics:
    - type: f1_macro
      value: 83.25
      name: f1-macro
    source:
      url: https://huggingface.co/spaces/eduagarcia/open_pt_llm_leaderboard?query=adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3
      name: Open Portuguese LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: PT Hate Speech Binary
      type: hate_speech_portuguese
      split: test
      args:
        num_few_shot: 25
    metrics:
    - type: f1_macro
      value: 68.85
      name: f1-macro
    source:
      url: https://huggingface.co/spaces/eduagarcia/open_pt_llm_leaderboard?query=adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3
      name: Open Portuguese LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: tweetSentBR
      type: eduagarcia/tweetsentbr_fewshot
      split: test
      args:
        num_few_shot: 25
    metrics:
    - type: f1_macro
      value: 71.3
      name: f1-macro
    source:
      url: https://huggingface.co/spaces/eduagarcia/open_pt_llm_leaderboard?query=adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3
      name: Open Portuguese LLM Leaderboard

---

# brazilianslib/Llama-3-8B-Dolphin-Portuguese-v0.3-Q8_0-GGUF

This model was converted to GGUF format from [`adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3`](https://huggingface.co/adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3) using llama.cpp via ggml.ai's [GGUF-my-repo](https://huggingface.co/spaces/ggml-org/gguf-my-repo) space.

Refer to the [original model card](https://huggingface.co/adalbertojunior/Llama-3-8B-Dolphin-Portuguese-v0.3) for more details on the model.

## Use with llama.cpp

Install llama.cpp through brew (works on Mac and Linux)

```bash

brew install llama.cpp

```

Invoke the llama.cpp server or the CLI.

### CLI:

```bash

llama-cli --hf-repo brazilianslib/Llama-3-8B-Dolphin-Portuguese-v0.3-Q8_0-GGUF --hf-file llama-3-8b-dolphin-portuguese-v0.3-q8_0.gguf -p "The meaning to life and the universe is"

```

### Server:

```bash

llama-server --hf-repo brazilianslib/Llama-3-8B-Dolphin-Portuguese-v0.3-Q8_0-GGUF --hf-file llama-3-8b-dolphin-portuguese-v0.3-q8_0.gguf -c 2048

```
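Once the server is running, it can be queried over HTTP. The following is a sketch using only the Python standard library; it assumes the server listens on its default address `http://127.0.0.1:8080` and exposes the `/completion` endpoint with `prompt` and `n_predict` fields, so check these against your llama.cpp version. The helper name `build_completion_request` is hypothetical.

```python
import json
import urllib.request


def build_completion_request(
    prompt: str,
    n_predict: int = 64,
    base_url: str = "http://127.0.0.1:8080",  # assumed default host and port
) -> urllib.request.Request:
    """Build a POST request for the llama-server /completion endpoint."""
    payload = json.dumps({"prompt": prompt, "n_predict": n_predict}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/completion",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_completion_request("The meaning to life and the universe is")

# Uncomment once llama-server is running; the generated text is expected
# under the "content" key of the JSON response.
# with urllib.request.urlopen(request) as response:
#     print(json.loads(response.read())["content"])
```

Keep the prompt plus `n_predict` within the `-c 2048` context size passed to the server.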

Note: You can also use this checkpoint directly through the [usage steps](https://github.com/ggerganov/llama.cpp?tab=readme-ov-file#usage) listed in the Llama.cpp repo as well.

Step 1: Clone llama.cpp from GitHub.

```

git clone https://github.com/ggerganov/llama.cpp

```

Step 2: Move into the llama.cpp folder and build it with `LLAMA_CURL=1` flag along with other hardware-specific flags (for ex: LLAMA_CUDA=1 for Nvidia GPUs on Linux).

```

cd llama.cpp && LLAMA_CURL=1 make

```

Step 3: Run inference through the main binary.

```

./llama-cli --hf-repo brazilianslib/Llama-3-8B-Dolphin-Portuguese-v0.3-Q8_0-GGUF --hf-file llama-3-8b-dolphin-portuguese-v0.3-q8_0.gguf -p "The meaning to life and the universe is"

```

or

```

./llama-server --hf-repo brazilianslib/Llama-3-8B-Dolphin-Portuguese-v0.3-Q8_0-GGUF --hf-file llama-3-8b-dolphin-portuguese-v0.3-q8_0.gguf -c 2048

```

|

togethercomputer/GPT-JT-6B-v0 | togethercomputer | 2022-11-24T09:07:01Z | 1,634 | 2 | transformers | [

"transformers",

"pytorch",

"gptj",

"text-generation",

"gpt",

"en",

"dataset:natural_instructions",

"dataset:the_pile",

"dataset:cot",

"dataset:Muennighoff/P3",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-11-22T12:26:50Z | ---

language:

- en

datasets:

- natural_instructions

- the_pile

- cot

- Muennighoff/P3

tags:

- gpt

pipeline_tag: text-generation

inference:
  parameters:
    temperature: 0.1
widget:
- text: "Is this review positive or negative? Review: Best cast iron skillet you will ever buy. Answer:"
  example_title: "Sentiment analysis"
- text: "Where is Zurich? Ans:"
  example_title: "Question Answering"

---

# Quick Start

```python

from transformers import pipeline

pipe = pipeline(model='togethercomputer/GPT-JT-6B-v0')

pipe("Where is Zurich? Ans:")

``` |

kevinpro/Vicuna-13B-CoT | kevinpro | 2023-06-04T08:05:43Z | 1,634 | 4 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"code",

"en",

"dataset:QingyiSi/Alpaca-CoT",

"arxiv:1910.09700",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-06-01T03:12:22Z | ---

datasets:

- QingyiSi/Alpaca-CoT

language:

- en

library_name: transformers

pipeline_tag: text-generation

tags:

- code

---

# Model Card for Model ID

SFT to enhance the CoT capability of Vicuna

If you find the model helpful, please click "like" to support us.

We also welcome feedback on your usage experience and any issues you encounter in the issues section.

Another 7B version: https://huggingface.co/kevinpro/Vicuna-7B-CoT

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Data Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Data Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

Fredithefish/RedPajama-INCITE-Chat-3B-ShareGPT-11K | Fredithefish | 2023-06-06T16:36:59Z | 1,634 | 2 | transformers | [

"transformers",

"pytorch",

"gpt_neox",

"text-generation",

"en",

"dataset:Fredithefish/ShareGPT-Unfiltered-RedPajama-Chat-format",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-06-06T16:24:52Z | ---

license: apache-2.0

datasets:

- Fredithefish/ShareGPT-Unfiltered-RedPajama-Chat-format

language:

- en

inference: false

---

### Model Description

<a href="https://huggingface.co/togethercomputer/RedPajama-INCITE-Chat-3B-v1">RedPajama-INCITE-Chat-3B-v1</a> finetuned on 11K pairs from unfiltered ShareGPT dataset

- **Model type:** language model

- **Language(s) (NLP):** English

- **License:** apache-2.0

- **Finetuned from model:** RedPajama-INCITE-Chat-3B |

CobraMamba/mamba-gpt-3b | CobraMamba | 2023-07-28T06:42:23Z | 1,634 | 4 | transformers | [

"transformers",

"pytorch",

"safetensors",

"llama",

"text-generation",

"gpt",

"llm",

"large language model",

"en",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-06-12T06:08:57Z | ---

language:

- en

library_name: transformers

tags:

- gpt

- llm

- large language model

inference: false

thumbnail: >-
  https://h2o.ai/etc.clientlibs/h2o/clientlibs/clientlib-site/resources/images/favicon.ico

license: apache-2.0

---

# Model Card

## Github

https://github.com/chi2liu/mamba-gpt-3b

| Metric | Value |

|-----------------------|-------|

| MMLU (5-shot) | 25.3 |

| ARC (25-shot) | 40.5 |

| HellaSwag (10-shot) | 64.9 |

| TruthfulQA (0-shot) | 37.1 |

| Avg. | 42.0 |

We use state-of-the-art [Language Model Evaluation Harness](https://github.com/EleutherAI/lm-evaluation-harness) to run the benchmark tests above.

## Summary

We fine-tuned the OpenLLaMA model and surpassed the original model on multiple evaluation subtasks, making it, by our evaluation, currently the best-performing 3B model, with performance comparable to llama-7b.

- Base model: [openlm-research/open_llama_3b](https://huggingface.co/openlm-research/open_llama_3b)

## Usage

To use the model with the `transformers` library on a machine with GPUs, first make sure you have the `transformers`, `accelerate` and `torch` libraries installed.

```bash

pip install transformers==4.29.2

pip install accelerate==0.19.0

pip install torch==2.0.0

```

```python

import torch
from transformers import pipeline

generate_text = pipeline(
    model="CobraMamba/mamba-gpt-3b",
    torch_dtype="auto",
    trust_remote_code=True,
    use_fast=False,
    device_map={"": "cuda:0"},
)

res = generate_text(
    "Why is drinking water so healthy?",
    min_new_tokens=2,
    max_new_tokens=1024,
    do_sample=False,
    num_beams=1,
    temperature=float(0.3),
    repetition_penalty=float(1.2),
    renormalize_logits=True
)
print(res[0]["generated_text"])

```

You can print a sample prompt after the preprocessing step to see how it is fed to the tokenizer:

```python

print(generate_text.preprocess("Why is drinking water so healthy?")["prompt_text"])

```

```bash

<|prompt|>Why is drinking water so healthy?</s><|answer|>

```
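The preprocessed prompt above follows a fixed pattern, so it can also be built by hand when calling the model without the custom pipeline. A minimal sketch; the function name `format_prompt` is an illustrative assumption, while the special tokens are copied from the output above:

```python
def format_prompt(question: str) -> str:
    """Wrap a question in the <|prompt|> ... </s><|answer|> layout shown above."""
    return f"<|prompt|>{question}</s><|answer|>"


prompt = format_prompt("Why is drinking water so healthy?")
print(prompt)
```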

Alternatively, you can download the mamba_gpt_pipeline.py, store it alongside your notebook, and construct the pipeline yourself from the loaded model and tokenizer. If the model and the tokenizer are fully supported in the `transformers` package, this will allow you to set `trust_remote_code=False`.

```python

import torch
from mamba_gpt_pipeline import MambaGPTTextGenerationPipeline
from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained(
    "CobraMamba/mamba-gpt-3b",
    use_fast=False,
    padding_side="left",
    trust_remote_code=False,
)
model = AutoModelForCausalLM.from_pretrained(
    "CobraMamba/mamba-gpt-3b",
    torch_dtype="auto",
    device_map={"": "cuda:0"},
    trust_remote_code=False,
)
generate_text = MambaGPTTextGenerationPipeline(model=model, tokenizer=tokenizer)

res = generate_text(
    "Why is drinking water so healthy?",
    min_new_tokens=2,
    max_new_tokens=1024,
    do_sample=False,
    num_beams=1,
    temperature=float(0.3),
    repetition_penalty=float(1.2),
    renormalize_logits=True
)
print(res[0]["generated_text"])

```

You may also construct the pipeline from the loaded model and tokenizer yourself and consider the preprocessing steps:

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "CobraMamba/mamba-gpt-3b"  # either local folder or huggingface model name

# Important: The prompt needs to be in the same format the model was trained with.
# You can find an example prompt in the experiment logs.
prompt = "<|prompt|>How are you?</s><|answer|>"

tokenizer = AutoTokenizer.from_pretrained(
    model_name,
    use_fast=False,
    trust_remote_code=False,
)
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map={"": "cuda:0"},
    trust_remote_code=False,
)
# device_map already places the model on cuda:0, so only eval mode is set here
model.eval()

inputs = tokenizer(prompt, return_tensors="pt", add_special_tokens=False).to("cuda")

# generate configuration can be modified to your needs
tokens = model.generate(
    **inputs,
    min_new_tokens=2,
    max_new_tokens=1024,
    do_sample=False,
    num_beams=1,
    temperature=float(0.3),
    repetition_penalty=float(1.2),
    renormalize_logits=True
)[0]

# keep only the newly generated tokens, dropping the prompt tokens
tokens = tokens[inputs["input_ids"].shape[1]:]
answer = tokenizer.decode(tokens, skip_special_tokens=True)
print(answer)

```

## Model Architecture

```

LlamaForCausalLM(
  (model): LlamaModel(
    (embed_tokens): Embedding(32000, 4096, padding_idx=0)
    (layers): ModuleList(
      (0-31): 32 x LlamaDecoderLayer(
        (self_attn): LlamaAttention(
          (q_proj): Linear(in_features=4096, out_features=4096, bias=False)
          (k_proj): Linear(in_features=4096, out_features=4096, bias=False)
          (v_proj): Linear(in_features=4096, out_features=4096, bias=False)
          (o_proj): Linear(in_features=4096, out_features=4096, bias=False)
          (rotary_emb): LlamaRotaryEmbedding()
        )
        (mlp): LlamaMLP(
          (gate_proj): Linear(in_features=4096, out_features=11008, bias=False)
          (down_proj): Linear(in_features=11008, out_features=4096, bias=False)
          (up_proj): Linear(in_features=4096, out_features=11008, bias=False)
          (act_fn): SiLUActivation()
        )
        (input_layernorm): LlamaRMSNorm()
        (post_attention_layernorm): LlamaRMSNorm()
      )
    )
    (norm): LlamaRMSNorm()
  )
  (lm_head): Linear(in_features=4096, out_features=32000, bias=False)
)

```
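The module shapes printed above are enough to tally the parameter count implied by this listing. The sketch below does that arithmetic; it assumes each `LlamaRMSNorm` holds one weight vector of the hidden size and that the rotary embedding has no trainable parameters, neither of which is stated in the printout.

```python
# Dimensions read off the architecture printout above.
hidden = 4096
intermediate = 11008
vocab = 32000
num_layers = 32

embed_tokens = vocab * hidden          # Embedding(32000, 4096)
attention = 4 * hidden * hidden        # q_proj, k_proj, v_proj, o_proj (no bias)
mlp = 3 * hidden * intermediate        # gate_proj, down_proj, up_proj (no bias)
layer_norms = 2 * hidden               # assumed: one weight vector per RMSNorm
per_layer = attention + mlp + layer_norms

final_norm = hidden                    # assumed: one weight vector
lm_head = hidden * vocab               # Linear(4096 -> 32000, no bias)

total = embed_tokens + num_layers * per_layer + final_norm + lm_head
print(f"{total:,} parameters")
```

Under these assumptions the listing corresponds to roughly 6.7 billion parameters, which is worth keeping in mind when comparing the printout with the 3B model name.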

## Evaluation

We evaluated OpenLLaMA on a wide range of tasks using [lm-evaluation-harness](https://github.com/EleutherAI/lm-evaluation-harness). The LLaMA results are generated by running the original LLaMA model on the same evaluation metrics. We note that our results for the LLaMA model differ slightly from the original LLaMA paper, which we believe is a result of different evaluation protocols. Similar differences have been reported in [this issue of lm-evaluation-harness](https://github.com/EleutherAI/lm-evaluation-harness/issues/443). Additionally, we present the results of GPT-J, a 6B parameter model trained on the [Pile](https://pile.eleuther.ai/) dataset by [EleutherAI](https://www.eleuther.ai/).

The original LLaMA model was trained for 1 trillion tokens and GPT-J was trained for 500 billion tokens. We present the results in the table below. OpenLLaMA exhibits comparable performance to the original LLaMA and GPT-J across a majority of tasks, and outperforms them in some tasks.

| **Task/Metric** | finetuned-GPT 3B | OpenLLaMA 3B |

| ---------------------- | -------- | ------------ |

| anli_r1/acc | **0.35** | 0.33 |

| anli_r2/acc | **0.33** | 0.32 |

| anli_r3/acc | 0.35 | 0.35 |

| arc_challenge/acc | **0.35** | 0.34 |

| arc_challenge/acc_norm | 0.37 | 0.37 |

| arc_easy/acc | **0.71** | 0.69 |

| arc_easy/acc_norm | 0.65 | 0.65 |

| boolq/acc | **0.72** | 0.66 |

| hellaswag/acc | **0.49** | 0.43 |

| hellaswag/acc_norm | 0.66 | **0.67** |

| openbookqa/acc | 0.26 | **0.27** |

| openbookqa/acc_norm | 0.40 | 0.40 |

| piqa/acc | **0.76** | 0.75 |

| piqa/acc_norm | 0.76 | 0.76 |

| record/em | 0.88 | 0.88 |

| record/f1 | 0.88 | **0.89** |

| rte/acc | 0.55 | **0.58** |

| truthfulqa_mc/mc1 | **0.27** | 0.22 |

| truthfulqa_mc/mc2 | **0.37** | 0.35 |

| wic/acc | **0.49** | 0.48 |

| winogrande/acc | **0.63** | 0.62 |

| Average | **0.53** | 0.52 |
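The "Average" row appears to be the unweighted mean of the 21 task metrics listed above it. A short sketch that recomputes it from the table values, in row order (the variable names are illustrative):

```python
# Scores copied from the table above, in row order (anli_r1 ... winogrande).
finetuned = [0.35, 0.33, 0.35, 0.35, 0.37, 0.71, 0.65, 0.72, 0.49, 0.66, 0.26,
             0.40, 0.76, 0.76, 0.88, 0.88, 0.55, 0.27, 0.37, 0.49, 0.63]
open_llama = [0.33, 0.32, 0.35, 0.34, 0.37, 0.69, 0.65, 0.66, 0.43, 0.67, 0.27,
              0.40, 0.75, 0.76, 0.88, 0.89, 0.58, 0.22, 0.35, 0.48, 0.62]


def mean(values):
    """Unweighted mean rounded to two decimals, as reported in the table."""
    return round(sum(values) / len(values), 2)


finetuned_avg = mean(finetuned)
open_llama_avg = mean(open_llama)
print(finetuned_avg, open_llama_avg)
```

The recomputed means match the reported 0.53 and 0.52, so the gap between the two models on this benchmark set is about one point on average.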

We removed the CB and WSC tasks from our benchmark, as our model performs suspiciously well on these two tasks. We hypothesize that there could be benchmark data contamination in the training set.

## Disclaimer

Please read this disclaimer carefully before using the large language model provided in this repository. Your use of the model signifies your agreement to the following terms and conditions.

- Biases and Offensiveness: The large language model is trained on a diverse range of internet text data, which may contain biased, racist, offensive, or otherwise inappropriate content. By using this model, you acknowledge and accept that the generated content may sometimes exhibit biases or produce content that is offensive or inappropriate. The developers of this repository do not endorse, support, or promote any such content or viewpoints.

- Limitations: The large language model is an AI-based tool and not a human. It may produce incorrect, nonsensical, or irrelevant responses. It is the user's responsibility to critically evaluate the generated content and use it at their discretion.

- Use at Your Own Risk: Users of this large language model must assume full responsibility for any consequences that may arise from their use of the tool. The developers and contributors of this repository shall not be held liable for any damages, losses, or harm resulting from the use or misuse of the provided model.

- Ethical Considerations: Users are encouraged to use the large language model responsibly and ethically. By using this model, you agree not to use it for purposes that promote hate speech, discrimination, harassment, or any form of illegal or harmful activities.

- Reporting Issues: If you encounter any biased, offensive, or otherwise inappropriate content generated by the large language model, please report it to the repository maintainers through the provided channels. Your feedback will help improve the model and mitigate potential issues.

- Changes to this Disclaimer: The developers of this repository reserve the right to modify or update this disclaimer at any time without prior notice. It is the user's responsibility to periodically review the disclaimer to stay informed about any changes.

By using the large language model provided in this repository, you agree to accept and comply with the terms and conditions outlined in this disclaimer. If you do not agree with any part of this disclaimer, you should refrain from using the model and any content generated by it.

|

jondurbin/airoboros-13b-gpt4-1.4-fp16 | jondurbin | 2023-06-25T11:39:17Z | 1,634 | 0 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"dataset:jondurbin/airoboros-gpt4-1.4",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-06-22T10:46:42Z | ---

license: other

datasets:

- jondurbin/airoboros-gpt4-1.4

---

float16 version of https://huggingface.co/jondurbin/airoboros-13b-gpt4-1.4 |

Yntec/Noosphere_v3_CVAE | Yntec | 2024-04-06T07:05:45Z | 1,634 | 2 | diffusers | [

"diffusers",

"safetensors",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"skumerz",

"Rexts",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | 2023-07-24T05:18:51Z | ---

license: creativeml-openrail-m

library_name: diffusers

pipeline_tag: text-to-image

tags:

- stable-diffusion

- stable-diffusion-diffusers

- diffusers

- text-to-image

- skumerz

- Rexts

---

# Noosphere v3 Color 101 VAE

The ultimate version of Noosphere v3 by skumerz! Better definition, better contrast and saturation! Thanks to Rexts's Color 101 VAE! The best 2.5D model just got better. Limited supply, get it while it lasts!

Original pages:

https://civitai.com/models/36538?modelVersionId=107675

https://civitai.com/models/70248/color101-vae |

golaxy/gogpt2-13b | golaxy | 2023-08-12T09:19:39Z | 1,634 | 4 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"llama",

"text-generation",

"llama2",

"chinese-llama2",

"gogpt2-7b",

"zh",

"dataset:c-s-ale/alpaca-gpt4-data-zh",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-07-31T13:32:31Z | ---

license: apache-2.0

datasets:

- c-s-ale/alpaca-gpt4-data-zh

language:

- zh

tags:

- llama2

- chinese-llama2

- gogpt2-7b

---

# GoGPT2-13B: A Chinese-English enhanced large model trained on Llama2-13b

<p align="center">

<img alt="GitHub" src="https://img.shields.io/github/license/ymcui/Chinese-LLaMA-Alpaca.svg?color=blue&style=flat-square">

<img alt="GitHub top language" src="https://img.shields.io/github/languages/top/ymcui/Chinese-LLaMA-Alpaca">

</p>

> ICT Chinese-English enhanced base models: 7 billion and 13 billion parameters

🤗 GoGPT weights are available on Hugging Face; the gogpt-7b and gogpt2-7b weights are currently open.

| Model name | Base model | Model size | Download |
|-------------------------------------------------------------|-----------|------|-------------------------------------------------|
| [golaxy/gogpt-7b](https://huggingface.co/golaxy/gogpt-7b) | Llama-7b | 7B | [Download](https://huggingface.co/golaxy/gogpt-7b) |
| [golaxy/gogpt2-7b](https://huggingface.co/golaxy/gogpt2-7b) | Llama2-7b | 7B | [Download](https://huggingface.co/golaxy/gogpt2-7b) |
| [golaxy/gogpt2-7b-pretrain](https://huggingface.co/golaxy/gogpt2-7b-pretrain) | Llama2-7b | 7B | [Download](https://huggingface.co/golaxy/gogpt2-7b-pretrain) |
| [golaxy/gogpt2-13b-pretrain](https://huggingface.co/golaxy/gogpt2-13b-pretrain) | Llama2-13b | 13B | [Download](https://huggingface.co/golaxy/gogpt2-13b-pretrain) |
| [golaxy/gogpt2-13b](https://huggingface.co/golaxy/gogpt2-13b) | Llama2-13b | 13B | [Download](https://huggingface.co/golaxy/gogpt2-13b) |

[GoGPT-Github](https://github.com/yanqiangmiffy/GoGPT)

## Sample outputs

- How do I learn machine learning? (original prompt: 怎么学习机器学习)

```

好的,以下是一些学习机器学习的方法:

1. 上下线:通过实践项目来学习,例如使用开源机器学习框架(如TensorFlow、Keras、Scikit-Learn等)来实现各种机器学习任务。

2. 课程学习:选择一些在线或线下的机器学习课程,例如Coursera、Udemy、edX等,这些平台都提供了各种机器学习课程。

3. 阅读书籍:选择一些经典的机器学习书籍,例如Andrew Ng的《深度学习》、《机器学习》等,这些书籍可以帮助你深入地学习机器学习基础知识。

4. 加入社区:加入机器学习社区(如Kaggle、Github、Reddit等),与其他机器学习爱好者交流和分享经验,从而不断学习和改进。

5. 参加比赛:参加一些机器学习比赛(如Kaggle等),可以通过解决实际问题来学习并提高自己的技能。

总之,学习机器学习需要不断实践和探索,找到适合自己的学习方法。

```

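- Write a review of a symphony concert, discussing the orchestra's performance and the audience's overall experience. (original prompt: 撰写一篇交响乐音乐会评论,讨论乐团的表演和观众的整体体验。)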

```

交响乐音乐会是音乐爱好者和古典音乐的爱好者的梦想。上周末,我参加了一场交响乐音乐会,我的经历真的很难忘。

首先,乐团的表演非常出色。他们都是专业音乐家,他们的演奏技巧非常娴熟。他们在演奏时,展现出了音乐的真谛,让人感受到音乐的力量和魅力。同时,他们还非常出色地演绎了交响乐中的不同元素,如高音、低音、节奏、调性和变化等。他们的表演非常自然,没有过分的紧张或放松,给人一种平和的、和谐的感觉。

其次,观众的整体体验也非常不错。音乐会现场非常宽敞明亮,座椅间隔非常宽敞。观众可以自由地欣赏音乐的每一个细节,同时还可以享受到音乐的魅力。同时,音乐会现场的氛围也非常友好,观众之间相互交流,享受音乐的共品。此外,音乐会现场还设有各种美食和饮料供观众品尝,增加了观众的舒适度。

总的来说,这次交响乐音乐会是一次非常愉快的音乐体验。乐团的表演非常出色,观众的整体体验也非常不错。我真的非常期待下一场音乐会,再次领略到音乐的魅力。

```

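- Write an engaging review of a recently released science-fiction film, discussing its plot, characters, and special effects. (original prompt: 为一部最近上映的科幻电影撰写一个引人入胜的影评,讨论它的情节,角色和特效。)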

```

《星球大战:新纪元》是一部令人兴奋的科幻电影,它延续了之前的星球大战系列的故事情节和角色,同时也带来了新的挑战和惊喜。

这部电影的情节非常引人入胜,它讲述了一群反抗军与帝国之间的斗争,反抗军试图推翻帝国的统治,而帝国则竭力维持其统治。故事情节的复杂程度和紧张程度让人惊叹,每个角色都有自己独特的动机和经历,这使得整个电影的故事更加生动。

这部电影的主要角色包括雷伊(Rey),一个年轻的女武士,以及她的导师凯诺(Kylo Ren),一名邪恶的武士。这两个角色的互动和冲突是电影中的一个重要焦点,他们的故事情节充满了神秘和悬念,使得观众期待着下一刻会发生什么。此外,电影中还有一些新角色的出现,如米斯·恩德(Maisy Endor),一个新的星球上反抗军的领导者,以及C-3PO和R2-D2等经典的机器人角色,这些角色的回归和表现也都令人兴奋。

这部电影的视觉效果也非常惊人,它使用了最新的技术和特效来营造出逼真的宇宙场景和战斗场面。电影中的星球、建筑物、机械设备和武器的视觉效果都非常逼真,而且动作场面也非常刺激。电影中的视觉效果让人感到惊奇,也使观众更加沉浸在电影中的世界。

总的来说,《星球大战:新纪元》是一部非常值得一看的科幻电影,它讲述了一个引人入胜的故事,有着精彩的角色和特效,使得整个电影令人兴奋和震撼。这部电影的成功,也预示着星球大战系列的未来。

```

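- How many times does the average human blink in a lifetime? Try to explain your answer. Your explanation should walk the reader through your reasoning step by step. (original prompt: 人类平均一生眨眼多少次?请尝试解释你的答案。你的解释应该逐步带领读者理解你的推理过程)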

```

人类平均一生眨眼几次是5600000次左右。这个数字是由许多不同的研究结果和估算得出的。

首先,眨眼频率随年龄的增加而变化。婴儿眨眼频率比成人高,随着年龄的增长,眨眼频率逐渐下降。根据这个规律,我们可以计算出成人眨眼频率约为10次/分钟。

其次,眨眼频率还受到多种因素的影响。例如,当人们感到紧张或疲劳时,眨眼频率会增加。同样地,当人们感到愉悦或放松时,眨眼频率会降低。此外,不同的身体部位的眨眼频率也不同。例如,眼睛内侧的眨眼频率比内侧的眨眼频率高。

最后,由于人们的眼睛健康状况也会影响眨眼频率,例如眼底病变或视力问题。这些因素都可以导致眨眼频率的改变。

综上所述,人类平均一生眨眼几次约为5600000次左右。这个数字是由多个因素影响的结果,包括年龄、身体状况、情感状态等。

```

- Implement the quicksort algorithm in Python. (original prompt: 请用python编程语言实现快速排序算法的代码)

Below is example code for quicksort in Python:

```python

def quick_sort(arr):
    if len(arr) <= 1:
        return arr
    else:
        pivot = arr[0]
        left = []
        right = []
        for i in range(1, len(arr)):
            if arr[i] < pivot:
                left.append(arr[i])
            else:
                right.append(arr[i])
        return quick_sort(left) + [pivot] + quick_sort(right)

```

This function takes an array of integers as input. Its time complexity is O(n^2) in the worst case and O(n log n) in the best case.
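As a quick check of the sample answer above, here is a minimal sketch that repeats the function so the snippet runs on its own and calls it on a small list. The input values are an arbitrary illustration and are not part of the original card.

```python
def quick_sort(arr):
    # Lists of length 0 or 1 are already sorted.
    if len(arr) <= 1:
        return arr
    pivot = arr[0]
    left = []   # elements strictly smaller than the pivot
    right = []  # elements greater than or equal to the pivot
    for value in arr[1:]:
        if value < pivot:
            left.append(value)
        else:
            right.append(value)
    return quick_sort(left) + [pivot] + quick_sort(right)


unsorted = [5, 2, 9, 1, 5, 6]  # hypothetical example input
result = quick_sort(unsorted)
print(result)
```

Because the pivot is always the first element, an already sorted input is the case that triggers the O(n^2) behaviour mentioned above.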

- How can I improve my time management skills? (original prompt: 如何提高我的时间管理技能?)

```

以下是一些提高时间管理技能的建议:

1. 制定计划:每天早上或每天开始时,制定一个详细的计划,包括每天需要完成的任务和目标。

2. 优先级排序:将任务按照优先级排序,先完成重要任务。

3. 集中注意力:集中注意力,将注意力集中在当前的任务上,避免分心。

4. 避免拖延:尽可能避免拖延,尽快完成任务,避免任务过期。

5. 避免多任务:多任务会增加任务的时间,所以最好只专注一个任务,直到完成它。

6. 休息:休息是恢复和提高生产力的关键,因此定期休息,放松身心。

7. 调整时间:如果发现自己的时间管理有问题,可以调整时间,例如延长休息时间或缩短任务时间。

8. 学习新技能:学习新的技能可以帮助提高工作效率,例如使用工具或软件来管理任务。

9. 寻求帮助:如果你发现自己难以管理时间,可以寻求家人、朋友或同事的帮助。

希望这些建议可以帮助您提高时间管理技能。

```

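- What are the main differences between the Python and JavaScript programming languages? (original prompt: Python和JavaScript编程语言的主要区别是什么?)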

```

Python和JavaScript是两个不同的编程语言,它们有以下主要区别:

1. 数据类型:Python是一种动态类型的编程语言,它无法指定数据类型,而是根据变量中使用的值自动确定数据类型。JavaScript是一种动态类型编程语言,它使用字面量来指定变量的数据类型。

2. 语法:Python和JavaScript的语法有一些相似之处,但也有一些不同之处。例如,Python使用缩进来定义代码块,而JavaScript使用花括号。

3. 执行环境:Python通常运行在本地计算机的Python解释器中运行,而JavaScript通常运行在浏览器中运行。这意味着Python通常用于开发桌面应用程序和Web服务器端应用程序,而JavaScript主要用于开发Web应用程序。

4. 编程语言特性:Python具有更高的可读性和可维护性,而JavaScript具有更好的可扩展性和可维护性。

总之,Python和JavaScript是两种不同的编程语言,具有不同的特点和应用场景。选择哪种编程语言取决于项目的具体需求和开发者的技能水平。

```

- What are the main differences between plant protein and animal protein? (original prompt: 植物蛋白和动物蛋白的主要区别是什么?)

```

植物蛋白和动物蛋白的主要区别在于来源和氨基酸成分。

来源:

植物蛋白主要来自植物组织,如豆类、种子、果实、叶子等。而动物蛋白主要来自动物的身体部位,如肌肉、内脏、鱼类、蛋类等。

氨基酸成分:

植物蛋白中的主要氨基酸是植物性氨基酸,如氨基酸,植物性必需氨基酸(如硫胺素和植酸)等。而动物蛋白中的主要氨基酸是动物性氨基酸,如肌肉蛋白、乳糖蛋白等。

因此,植物蛋白和动物蛋白的营养价值是不同的。植物蛋白富含植物性必需氨基酸,如硫胺素、植酸等,而动物蛋白则含有更多的动物性必需氨基酸,如肌肉蛋白、乳糖蛋白等。植物蛋白通常比动物蛋白低热量,但也有些植物蛋白的蛋白质质量较低。动物蛋白通常比植物蛋白高蛋白质质量,但同样含有较多的饱和脂肪和胆固醇等有害物质。

```

- What are the main factors that influence consumer behavior? (original prompt: 影响消费者行为的主要因素是什么?)

```

影响消费者行为的因素有很多,以下是其中几个主要的因素:

1. 消费需求:消费者的需求是影响消费者行为的主要因素之一。消费者的需求是根据个人利益和价值观而产生的。例如,如果一个消费者喜欢时尚的服装,那么他们可能会对购买这些服装的商场和品牌的选择更有吸引力。

2. 购买力:消费者购买力的限制是一个影响消费者行为的因素。消费者可能会因为预算限制而选择购买某些商品。

3. 价格:价格也是影响消费者行为的因素之一。消费者通常会比较不同商品的价格,并根据价格选择购买哪种商品。

4. 品牌知名度:品牌知名度是影响消费者行为的因素之一。消费者通常会选择有信誉的品牌,因为他们相信这些品牌能够提供高质量的产品和服务。

5. 社交媒体:社交媒体在影响消费者行为中扮演越来越重要的作用。许多消费者会在社交媒体上了解商品和品牌的信息,并选择购买这些商品。

6. 社交媒体:社交媒体在影响消费者行为中扮演越来越重要的角色。许多消费者会在社交媒体上了解商品和品牌的信息,并选择购买这些商品。

总之,影响消费者行为的主要因素有很多,这些因素往往是相互关联的。企业可以通过了解这些因素,来制定有效的营销策略,吸引和留住消费者。

```

|

HyperbeeAI/Tulpar-7b-v0 | HyperbeeAI | 2023-09-13T19:04:10Z | 1,634 | 22 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"en",

"license:llama2",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-08-23T10:13:55Z | ---

license: llama2

language:

- en

library_name: transformers

thumbnail: "https://huggingface.co/HyperbeeAI/Tulpar-7b-v0/resolve/main/tulpar.png"

---

<p align="center">

<img src="https://huggingface.co/HyperbeeAI/Tulpar-7b-v0/resolve/main/tulpar.png" width="360" height="360" >

</p>

# Model Description

Tulpar-7b is a Llama2-7b-based model trained by HyperbeeAI. It was trained on a filtered and preprocessed instruction-finetuning dataset that includes GPT-4-generated and generally curated datasets such as Airoboros and Platypus.

# Example Usage

Loading the model:

```python

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("HyperbeeAI/Tulpar-7b-v0")

model = AutoModelForCausalLM.from_pretrained("HyperbeeAI/Tulpar-7b-v0", device_map="auto")

```

You can run inference with either of the following prompt formats:

```python

input_text="What is deep learning?"

prompt = f"### User: {input_text}\n\n### Assistant:\n"

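# Move the inputs to the model's device, since device_map="auto" may place the model on a GPU.
inputs = tokenizer(prompt, return_tensors="pt").to(model.device)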

output = model.generate(**inputs, do_sample=True, top_p=0.95, top_k=0, max_new_tokens=512)

print(tokenizer.decode(output[0]))

```

```python

input_text="What is deep learning?"

prompt = f"Question: {input_text}\n\nAnswer:"

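# Move the inputs to the model's device, since device_map="auto" may place the model on a GPU.
inputs = tokenizer(prompt, return_tensors="pt").to(model.device)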

output = model.generate(**inputs, do_sample=True, top_p=0.95, top_k=0, max_new_tokens=512)

print(tokenizer.decode(output[0]))

```
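The two prompt formats above can be wrapped in one small helper. This is only a sketch: the function name `build_tulpar_prompt` and the `style` labels are hypothetical, and only the two template strings come from the card.

```python
def build_tulpar_prompt(input_text: str, style: str = "chat") -> str:
    """Return a prompt string in one of the two formats shown in the card."""
    if style == "chat":
        # "### User / ### Assistant" format from the first example.
        return f"### User: {input_text}\n\n### Assistant:\n"
    if style == "qa":
        # "Question / Answer" format from the second example.
        return f"Question: {input_text}\n\nAnswer:"
    raise ValueError(f"unknown style: {style!r}")


chat_prompt = build_tulpar_prompt("What is deep learning?")
qa_prompt = build_tulpar_prompt("What is deep learning?", style="qa")
print(chat_prompt)
print(qa_prompt)
```

The returned string can be passed to the tokenizer exactly like `prompt` in the snippets above.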

# Evaluation

Our offline HF Leaderboard evaluation results:

|Task|Metric|Value|
|:------:|:--------:|:-------:|
|*arc_challenge*|acc_norm|0.5614|
|*hellaswag*|acc_norm|0.7901|
|*mmlu*|acc_norm|0.5242|
|*truthfulqa_mc*|mc2|0.5160|
|**Average**|-|**0.5979**|

Other GPT4All evaluation results:

|Task|Metric|Value|
|:------:|:--------:|:-------:|
|boolq|acc |0.8306|
|piqa|acc |0.7905|
| |acc_norm|0.7884|
|winogrande|acc |0.7159|
|openbookqa|acc |0.356|
| |acc_norm|0.448|
|**Average** (including HF leaderboard datasets) | | **0.6468** |
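The two reported averages can be reproduced from the table values. The sketch below assumes that the combined average uses `acc_norm` for piqa and openbookqa (where it is reported) and `acc` otherwise. That choice is an inference from the arithmetic and is not stated in the card.

```python
# Values copied from the two tables above.
hf_leaderboard = {
    "arc_challenge": 0.5614,
    "hellaswag": 0.7901,
    "mmlu": 0.5242,
    "truthfulqa_mc": 0.5160,
}
# Assumption: acc_norm is used where available, acc otherwise.
gpt4all = {
    "boolq": 0.8306,
    "piqa": 0.7884,        # acc_norm
    "winogrande": 0.7159,
    "openbookqa": 0.448,   # acc_norm
}

hf_average = sum(hf_leaderboard.values()) / len(hf_leaderboard)
combined = {**hf_leaderboard, **gpt4all}
combined_average = sum(combined.values()) / len(combined)

print(round(hf_average, 4), round(combined_average, 4))
```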

BigBenchHard results:

|Task|Metric|Value|
|:------:|:--------:|:-------:|
|bigbench_causal_judgement |multiple_choice_grade|0.6105|
|bigbench_date_understanding |multiple_choice_grade|0.6423|
|bigbench_disambiguation_qa |multiple_choice_grade|0.3643|
|bigbench_dyck_languages |multiple_choice_grade|0.2000|
|bigbench_formal_fallacies_syllogisms_negation |multiple_choice_grade|0.5002|
|bigbench_geometric_shapes |multiple_choice_grade|0.0000|
| |exact_str_match |0.0000|
|bigbench_hyperbaton |multiple_choice_grade|0.6754|
|bigbench_logical_deduction_five_objects |multiple_choice_grade|0.2700|
|bigbench_logical_deduction_seven_objects |multiple_choice_grade|0.1929|
|bigbench_logical_deduction_three_objects |multiple_choice_grade|0.4133|
|bigbench_movie_recommendation |multiple_choice_grade|0.3000|
|bigbench_navigate |multiple_choice_grade|0.5000|
|bigbench_reasoning_about_colored_objects |multiple_choice_grade|0.5750|
|bigbench_ruin_names |multiple_choice_grade|0.3281|
|bigbench_salient_translation_error_detection |multiple_choice_grade|0.2976|
|bigbench_snarks |multiple_choice_grade|0.6022|
|bigbench_sports_understanding |multiple_choice_grade|0.5122|
|bigbench_temporal_sequences |multiple_choice_grade|0.1450|
|bigbench_tracking_shuffled_objects_five_objects |multiple_choice_grade|0.1976|
|bigbench_tracking_shuffled_objects_seven_objects|multiple_choice_grade|0.1440|
|bigbench_tracking_shuffled_objects_three_objects|multiple_choice_grade|0.4133|
|**Average**| |**0.3754**|

# Ethical Considerations and Limitations

Tulpar is a technology with potential risks and limitations. This model is fine-tuned only on English data, so not all language-related scenarios are covered. As HyperbeeAI, we neither guarantee ethical, accurate, unbiased, or objective responses nor endorse the model's outputs. Before deploying this model, you are advised to run safety tests for your use case. |

Sao10K/Medusa-13b | Sao10K | 2023-11-18T04:33:07Z | 1,634 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"en",

"license:llama2",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-08-28T14:22:41Z | ---

license: llama2

language:

- en

---

<img src="https://s1.zerochan.net/Lancer.%28Medusa%29.600.2885725.jpg" style="width: 50%; min-width: 200px; display: block; margin: auto;">

<br>My first ever attempt at model merging. I have zero experience, like zero, none. I don't know how to code or use Linux. Getting the terminal to work on Runpod was a nightmare.

<br>Experimental model I posted. Usable in RPs, but it is not the best compared to top-dog models like Mythomax.

<br> Just proof that a clueless guy like me can work on models.

<br> I'm still learning the basics of fine-tuning and training models.

<br> I am seriously reading up on various model merging methods, and what models are best to use. I'll leave this here as my first work.

Base Model: [Llama-2-13B-fp16](https://huggingface.co/TheBloke/Llama-2-13B-fp16)

<br> ---**Merged with**

<br> ----- [chronos-13b-v2](https://huggingface.co/elinas/chronos-13b-v2)

<br> ----- [Nous-Hermes-Llama2-13b](https://huggingface.co/NousResearch/Nous-Hermes-Llama2-13b)

<br> ----- [airoboros-l2-13b-gpt4-m2.0](https://huggingface.co/jondurbin/airoboros-l2-13b-gpt4-m2.0)

<br> ----- [OpenOrca-Platypus2-13B](https://huggingface.co/Open-Orca/OpenOrca-Platypus2-13B)

<br> ----- [Kimiko-13B](https://huggingface.co/TheBloke/Kimiko-13B-fp16)

<br> ----- [StableBeluga-13B](https://huggingface.co/stabilityai/StableBeluga-13B)

Rationale:

<br> Chronos - Used Chronoboros L1 so I put them here

<br> Nous-Hermes - First ever L2 Model I used (づ ᴗ _ᴗ)づ♡

<br> Airoboros - Used Chronoboros L1 so I put them here

<br> OpenOrca-Platypus - platypus.

<br> Kimiko - Cute sounding name

<br> StableBeluga - favourite animal

<br> Prompt Format? No clue. I've been using Alpaca and it's been working fine.

<br>Script used to Merge [here](https://github.com/cg123/ties-merge)

<br>Thank you for the easy to set up script, [Chargoddard](https://huggingface.co/chargoddard) !

<br>Command used below:

```

python ties_merge.py TheBloke/Llama-2-13B-fp16 ./Medusa-13b --merge elinas/chronos-13b-v2 --merge NousResearch/Nous-Hermes-Llama2-13b --merge jondurbin/airoboros-l2-13b-gpt4-m2.0 --merge Open-Orca/OpenOrca-Platypus2-13B --merge TheBloke/Kimiko-13B-fp16 --merge stabilityai/StableBeluga-13B --cuda

```

<br>Do not let me cook!

```

Image Credit: https://www.pixiv.net/en/artworks/80169912

Artist: TEL-O

```

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_Sao10K__Medusa-13b)

| Metric | Value |

|-----------------------|---------------------------|

| Avg. | 49.85 |

| ARC (25-shot) | 58.19 |

| HellaSwag (10-shot) | 81.35 |

| MMLU (5-shot) | 57.39 |

| TruthfulQA (0-shot) | 51.24 |

| Winogrande (5-shot) | 73.32 |

| GSM8K (5-shot) | 6.82 |

| DROP (3-shot) | 20.61 |

|

uukuguy/speechless-llama2-luban-orca-platypus-13b | uukuguy | 2023-09-01T06:28:52Z | 1,634 | 3 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"facebook",

"meta",

"pytorch",

"llama-2",

"en",

"dataset:garage-bAInd/Open-Platypus",

"arxiv:2307.09288",

"autotrain_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-09-01T02:43:40Z | ---

extra_gated_heading: Access Llama 2 on Hugging Face

extra_gated_description: >-

This is a form to enable access to Llama 2 on Hugging Face after you have been

granted access from Meta. Please visit the [Meta website](https://ai.meta.com/resources/models-and-libraries/llama-downloads) and accept our

license terms and acceptable use policy before submitting this form. Requests

will be processed in 1-2 days.

extra_gated_prompt: "**Your Hugging Face account email address MUST match the email you provide on the Meta website, or your request will not be approved.**"

extra_gated_button_content: Submit

extra_gated_fields:

I agree to share my name, email address and username with Meta and confirm that I have already been granted download access on the Meta website: checkbox

language:

- en

datasets:

- garage-bAInd/Open-Platypus

library_name: transformers

pipeline_tag: text-generation

inference: false

tags:

- facebook

- meta

- pytorch

- llama

- llama-2

---

<p><h1> speechless-llama2-orca-platypus-13b </h1></p>

speechless-llama2-orca-platypus-13b is a merge of AIDC-ai-business/Luban-13B and Open-Orca/OpenOrca-Platypus2-13B.

| Metric | Value |

| --- | --- |

| ARC | 62.54 |

| HellaSwag | 82.76 |

| MMLU | 59.23 |

| TruthfulQA | 54.66 |

| Average | 64.80 |

# **Llama 2**

Llama 2 is a collection of pretrained and fine-tuned generative text models ranging in scale from 7 billion to 70 billion parameters. This is the repository for the 13B pretrained model, converted for the Hugging Face Transformers format. Links to other models can be found in the index at the bottom.

## Model Details

*Note: Use of this model is governed by the Meta license. In order to download the model weights and tokenizer, please visit the [website](https://ai.meta.com/resources/models-and-libraries/llama-downloads/) and accept our License before requesting access here.*

Meta developed and publicly released the Llama 2 family of large language models (LLMs), a collection of pretrained and fine-tuned generative text models ranging in scale from 7 billion to 70 billion parameters. Our fine-tuned LLMs, called Llama-2-Chat, are optimized for dialogue use cases. Llama-2-Chat models outperform open-source chat models on most benchmarks we tested, and in our human evaluations for helpfulness and safety, are on par with some popular closed-source models like ChatGPT and PaLM.

**Model Developers** Meta

**Variations** Llama 2 comes in a range of parameter sizes — 7B, 13B, and 70B — as well as pretrained and fine-tuned variations.

**Input** Models input text only.

**Output** Models generate text only.

**Model Architecture** Llama 2 is an auto-regressive language model that uses an optimized transformer architecture. The tuned versions use supervised fine-tuning (SFT) and reinforcement learning with human feedback (RLHF) to align to human preferences for helpfulness and safety.

||Training Data|Params|Content Length|GQA|Tokens|LR|

|---|---|---|---|---|---|---|

|Llama 2|*A new mix of publicly available online data*|7B|4k|✗|2.0T|3.0 x 10<sup>-4</sup>|

|Llama 2|*A new mix of publicly available online data*|13B|4k|✗|2.0T|3.0 x 10<sup>-4</sup>|

|Llama 2|*A new mix of publicly available online data*|70B|4k|✔|2.0T|1.5 x 10<sup>-4</sup>|

*Llama 2 family of models.* Token counts refer to pretraining data only. All models are trained with a global batch size of 4M tokens. The bigger model (70B) uses Grouped-Query Attention (GQA) for improved inference scalability.

**Model Dates** Llama 2 was trained between January 2023 and July 2023.

**Status** This is a static model trained on an offline dataset. Future versions of the tuned models will be released as we improve model safety with community feedback.

**License** A custom commercial license is available at: [https://ai.meta.com/resources/models-and-libraries/llama-downloads/](https://ai.meta.com/resources/models-and-libraries/llama-downloads/)

**Research Paper** ["Llama-2: Open Foundation and Fine-tuned Chat Models"](https://arxiv.org/abs/2307.09288)

## Intended Use

**Intended Use Cases** Llama 2 is intended for commercial and research use in English. Tuned models are intended for assistant-like chat, whereas pretrained models can be adapted for a variety of natural language generation tasks.

To get the expected features and performance for the chat versions, a specific formatting needs to be followed, including the `INST` and `<<SYS>>` tags, `BOS` and `EOS` tokens, and the whitespaces and breaklines in between (we recommend calling `strip()` on inputs to avoid double-spaces). See our reference code in github for details: [`chat_completion`](https://github.com/facebookresearch/llama/blob/main/llama/generation.py#L212).
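The formatting described above can be sketched for a single-turn prompt as follows. This is a hedged illustration rather than Meta's reference code: the helper name `build_llama2_chat_prompt` is hypothetical, the tag constants follow the layout used in the referenced `chat_completion` code as far as we know, and the `BOS`/`EOS` tokens are left to the tokenizer. It applies to the Llama-2-Chat variants and may not match the prompt format expected by the merged model in this repository.

```python
from typing import Optional

# Tag strings for the Llama-2-Chat format (assumed to match the reference code).
B_INST, E_INST = "[INST]", "[/INST]"
B_SYS, E_SYS = "<<SYS>>\n", "\n<</SYS>>\n\n"


def build_llama2_chat_prompt(user_message: str, system_prompt: Optional[str] = None) -> str:
    """Build a single-turn Llama-2-Chat prompt (without BOS/EOS tokens)."""
    # strip() avoids the double spaces mentioned in the text above.
    content = user_message.strip()
    if system_prompt:
        content = B_SYS + system_prompt.strip() + E_SYS + content
    return f"{B_INST} {content} {E_INST}"


prompt = build_llama2_chat_prompt("  What is a llama?  ", system_prompt="Answer briefly.")
print(prompt)
```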

**Out-of-scope Uses** Use in any manner that violates applicable laws or regulations (including trade compliance laws). Use in languages other than English. Use in any other way that is prohibited by the Acceptable Use Policy and Licensing Agreement for Llama 2.

## Hardware and Software

**Training Factors** We used custom training libraries, Meta's Research Super Cluster, and production clusters for pretraining. Fine-tuning, annotation, and evaluation were also performed on third-party cloud compute.

**Carbon Footprint** Pretraining utilized a cumulative 3.3M GPU hours of computation on hardware of type A100-80GB (TDP of 350-400W). Estimated total emissions were 539 tCO2eq, 100% of which were offset by Meta’s sustainability program.

||Time (GPU hours)|Power Consumption (W)|Carbon Emitted(tCO<sub>2</sub>eq)|

|---|---|---|---|

|Llama 2 7B|184320|400|31.22|

|Llama 2 13B|368640|400|62.44|

|Llama 2 70B|1720320|400|291.42|

|Total|3311616||539.00|

**CO<sub>2</sub> emissions during pretraining.** Time: total GPU time required for training each model. Power Consumption: peak power capacity per GPU device for the GPUs used adjusted for power usage efficiency. 100% of the emissions are directly offset by Meta's sustainability program, and because we are openly releasing these models, the pretraining costs do not need to be incurred by others.

## Training Data

**Overview** Llama 2 was pretrained on 2 trillion tokens of data from publicly available sources. The fine-tuning data includes publicly available instruction datasets, as well as over one million new human-annotated examples. Neither the pretraining nor the fine-tuning datasets include Meta user data.

**Data Freshness** The pretraining data has a cutoff of September 2022, but some tuning data is more recent, up to July 2023.

## Evaluation Results

In this section, we report the results for the Llama 1 and Llama 2 models on standard academic benchmarks. For all the evaluations, we use our internal evaluations library.

|Model|Size|Code|Commonsense Reasoning|World Knowledge|Reading Comprehension|Math|MMLU|BBH|AGI Eval|

|---|---|---|---|---|---|---|---|---|---|

|Llama 1|7B|14.1|60.8|46.2|58.5|6.95|35.1|30.3|23.9|

|Llama 1|13B|18.9|66.1|52.6|62.3|10.9|46.9|37.0|33.9|

|Llama 1|33B|26.0|70.0|58.4|67.6|21.4|57.8|39.8|41.7|

|Llama 1|65B|30.7|70.7|60.5|68.6|30.8|63.4|43.5|47.6|

|Llama 2|7B|16.8|63.9|48.9|61.3|14.6|45.3|32.6|29.3|

|Llama 2|13B|24.5|66.9|55.4|65.8|28.7|54.8|39.4|39.1|

|Llama 2|70B|**37.5**|**71.9**|**63.6**|**69.4**|**35.2**|**68.9**|**51.2**|**54.2**|

**Overall performance on grouped academic benchmarks.** *Code:* We report the average pass@1 scores of our models on HumanEval and MBPP. *Commonsense Reasoning:* We report the average of PIQA, SIQA, HellaSwag, WinoGrande, ARC easy and challenge, OpenBookQA, and CommonsenseQA. We report 7-shot results for CommonSenseQA and 0-shot results for all other benchmarks. *World Knowledge:* We evaluate the 5-shot performance on NaturalQuestions and TriviaQA and report the average. *Reading Comprehension:* For reading comprehension, we report the 0-shot average on SQuAD, QuAC, and BoolQ. *MATH:* We report the average of the GSM8K (8 shot) and MATH (4 shot) benchmarks at top 1.

|||TruthfulQA|Toxigen|

|---|---|---|---|

|Llama 1|7B|27.42|23.00|

|Llama 1|13B|41.74|23.08|

|Llama 1|33B|44.19|22.57|

|Llama 1|65B|48.71|21.77|

|Llama 2|7B|33.29|**21.25**|

|Llama 2|13B|41.86|26.10|

|Llama 2|70B|**50.18**|24.60|

**Evaluation of pretrained LLMs on automatic safety benchmarks.** For TruthfulQA, we present the percentage of generations that are both truthful and informative (the higher the better). For ToxiGen, we present the percentage of toxic generations (the smaller the better).

|||TruthfulQA|Toxigen|

|---|---|---|---|

|Llama-2-Chat|7B|57.04|**0.00**|

|Llama-2-Chat|13B|62.18|**0.00**|

|Llama-2-Chat|70B|**64.14**|0.01|

**Evaluation of fine-tuned LLMs on different safety datasets.** Same metric definitions as above.

## Ethical Considerations and Limitations

Llama 2 is a new technology that carries risks with use. Testing conducted to date has been in English, and has not covered, nor could it cover all scenarios. For these reasons, as with all LLMs, Llama 2’s potential outputs cannot be predicted in advance, and the model may in some instances produce inaccurate, biased or other objectionable responses to user prompts. Therefore, before deploying any applications of Llama 2, developers should perform safety testing and tuning tailored to their specific applications of the model.

Please see the Responsible Use Guide available at [https://ai.meta.com/llama/responsible-use-guide/](https://ai.meta.com/llama/responsible-use-guide)

## Reporting Issues

Please report any software “bug,” or other problems with the models through one of the following means:

- Reporting issues with the model: [github.com/facebookresearch/llama](http://github.com/facebookresearch/llama)

- Reporting problematic content generated by the model: [developers.facebook.com/llama_output_feedback](http://developers.facebook.com/llama_output_feedback)

- Reporting bugs and security concerns: [facebook.com/whitehat/info](http://facebook.com/whitehat/info)

## Llama Model Index

|Model|Llama2|Llama2-hf|Llama2-chat|Llama2-chat-hf|

|---|---|---|---|---|

|7B| [Link](https://huggingface.co/llamaste/Llama-2-7b) | [Link](https://huggingface.co/llamaste/Llama-2-7b-hf) | [Link](https://huggingface.co/llamaste/Llama-2-7b-chat) | [Link](https://huggingface.co/llamaste/Llama-2-7b-chat-hf)|

|13B| [Link](https://huggingface.co/llamaste/Llama-2-13b) | [Link](https://huggingface.co/llamaste/Llama-2-13b-hf) | [Link](https://huggingface.co/llamaste/Llama-2-13b-chat) | [Link](https://huggingface.co/llamaste/Llama-2-13b-chat-hf)|

|70B| [Link](https://huggingface.co/llamaste/Llama-2-70b) | [Link](https://huggingface.co/llamaste/Llama-2-70b-hf) | [Link](https://huggingface.co/llamaste/Llama-2-70b-chat) | [Link](https://huggingface.co/llamaste/Llama-2-70b-chat-hf)|

|

Faradaylab/ARIA-70B-V3 | Faradaylab | 2023-10-10T14:02:44Z | 1,634 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-09-11T09:34:47Z | ---

license: other

---

ARIA V3 has been trained on over 100,000 high-quality French-language samples, with a focus on data bias, grammar, and the overall language and writing capabilities of the model.

Training was done on Nvidia GPUs in the cloud with Amazon SageMaker.

Base Model : Llama2-70B-Chat-HF

Dataset : private dataset.

Added value : French Language / Writing / Content Creation / Data bias reduction

Feel free to reach out to us ! [email protected]

|

CHIH-HUNG/llama-2-13b-FINETUNE3_3.3w-r4-gate_up_down | CHIH-HUNG | 2023-09-19T22:24:10Z | 1,634 | 0 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-09-19T22:09:24Z | Entry not found |

amazingvince/zephyr-smol_llama-100m-sft-full | amazingvince | 2023-11-20T17:27:19Z | 1,634 | 1 | transformers | [

"transformers",

"tensorboard",

"safetensors",

"llama",

"text-generation",

"generated_from_trainer",

"conversational",

"base_model:BEE-spoke-data/smol_llama-101M-GQA",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-11-20T16:50:31Z | ---

license: apache-2.0

base_model: BEE-spoke-data/smol_llama-101M-GQA

tags:

- generated_from_trainer

model-index:

- name: zephyr-smol_llama-100m-sft-full

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# zephyr-smol_llama-100m-sft-full

This model is a fine-tuned version of [BEE-spoke-data/smol_llama-101M-GQA](https://huggingface.co/BEE-spoke-data/smol_llama-101M-GQA) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.9579

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- distributed_type: multi-GPU

- num_devices: 2

- gradient_accumulation_steps: 4

- total_train_batch_size: 128

- total_eval_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- num_epochs: 1
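The total batch sizes listed above follow from the per-device values. A minimal sketch of the arithmetic, assuming the usual convention that gradient accumulation applies only to training:

```python
# Per-device values copied from the hyperparameter list above.
train_batch_size = 16
eval_batch_size = 16
num_devices = 2
gradient_accumulation_steps = 4

# Effective training batch: per-device batch x devices x accumulation steps.
total_train_batch_size = train_batch_size * num_devices * gradient_accumulation_steps
# Evaluation does not accumulate gradients, so only the device count multiplies.
total_eval_batch_size = eval_batch_size * num_devices

print(total_train_batch_size, total_eval_batch_size)
```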

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 1.9642 | 0.7 | 1141 | 1.9578 |

### Framework versions

- Transformers 4.35.0

- Pytorch 2.1.0

- Datasets 2.14.6

- Tokenizers 0.14.1

|

BreadAi/gpt-YA-1-1_70M | BreadAi | 2023-11-18T10:45:28Z | 1,633 | 0 | transformers | [

"transformers",

"pytorch",

"gpt_neox",

"text-generation",

"dataset:breadlicker45/1m-YA-dataset",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-02-01T20:09:00Z | ---

datasets:

- breadlicker45/1m-YA-dataset

---

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_BreadAi__gpt-YA-1-1_70M)

| Metric | Value |

|-----------------------|---------------------------|

| Avg. | 25.64 |

| ARC (25-shot) | 22.53 |

| HellaSwag (10-shot) | 27.37 |

| MMLU (5-shot) | 25.38 |

| TruthfulQA (0-shot) | 47.09 |

| Winogrande (5-shot) | 50.91 |

| GSM8K (5-shot) | 0.0 |

| DROP (3-shot) | 6.18 |

|

h2oai/h2ogpt-gm-oasst1-en-1024-12b | h2oai | 2023-05-02T19:15:21Z | 1,633 | 5 | transformers | [

"transformers",

"pytorch",

"gpt_neox",

"text-generation",

"gpt",

"llm",

"large language model",

"h2o-llmstudio",

"en",

"dataset:OpenAssistant/oasst1",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-05-02T12:02:19Z | ---

language:

- en

library_name: transformers

tags:

- gpt

- llm

- large language model

- h2o-llmstudio

inference: false

thumbnail: >-

https://h2o.ai/etc.clientlibs/h2o/clientlibs/clientlib-site/resources/images/favicon.ico

license: apache-2.0

datasets:

- OpenAssistant/oasst1

---

# Model Card

## Summary

This model was trained using [H2O LLM Studio](https://github.com/h2oai/h2o-llmstudio).

- Base model: [EleutherAI/pythia-12b-deduped](https://huggingface.co/EleutherAI/pythia-12b-deduped)

- Dataset preparation: [OpenAssistant/oasst1](https://github.com/h2oai/h2o-llmstudio/blob/1935d84d9caafed3ee686ad2733eb02d2abfce57/app_utils/utils.py#LL1896C5-L1896C28)

## Usage

To use the model with the `transformers` library on a machine with GPUs, first make sure you have the `transformers` and `torch` libraries installed.

```bash

pip install transformers==4.28.1

pip install torch==2.0.0

```

```python

import torch
from transformers import pipeline

# Load the text-generation pipeline in half precision on the first GPU.
generate_text = pipeline(
    model="h2oai/h2ogpt-gm-oasst1-en-1024-12b",
    torch_dtype=torch.float16,
    trust_remote_code=True,
    device_map={"": "cuda:0"},
)

res = generate_text(
    "Why is drinking water so healthy?",
    min_new_tokens=2,
    max_new_tokens=256,
    do_sample=False,
    num_beams=2,
    temperature=float(0.3),
    repetition_penalty=float(1.2),
)
print(res[0]["generated_text"])

```

You can print a sample prompt after the preprocessing step to see how it is fed to the tokenizer:

```python

print(generate_text.preprocess("Why is drinking water so healthy?")["prompt_text"])

```

```bash

<|prompt|>Why is drinking water so healthy?<|endoftext|><|answer|>

```
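If you build prompts by hand instead of relying on the pipeline's preprocessing, the same template can be reproduced with a small helper. This is a sketch: the function name `build_h2ogpt_prompt` is hypothetical, and the template string is taken from the printed sample above.

```python
def build_h2ogpt_prompt(question: str) -> str:
    """Wrap a question in the prompt template shown in the sample output above."""
    return f"<|prompt|>{question}<|endoftext|><|answer|>"


prompt = build_h2ogpt_prompt("Why is drinking water so healthy?")
print(prompt)
```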

Alternatively, if you prefer to not use `trust_remote_code=True` you can download [h2oai_pipeline.py](h2oai_pipeline.py), store it alongside your notebook, and construct the pipeline yourself from the loaded model and tokenizer:

```python

import torch
from h2oai_pipeline import H2OTextGenerationPipeline
from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained(
    "h2oai/h2ogpt-gm-oasst1-en-1024-12b",
    padding_side="left"
)
model = AutoModelForCausalLM.from_pretrained(
    "h2oai/h2ogpt-gm-oasst1-en-1024-12b",
    torch_dtype=torch.float16,
    device_map={"": "cuda:0"}
)
generate_text = H2OTextGenerationPipeline(model=model, tokenizer=tokenizer)

res = generate_text(
    "Why is drinking water so healthy?",
    min_new_tokens=2,
    max_new_tokens=256,
    do_sample=False,
    num_beams=2,
    temperature=float(0.3),
    repetition_penalty=float(1.2),
)
print(res[0]["generated_text"])

```

You may also construct the pipeline from the loaded model and tokenizer yourself and consider the preprocessing steps:

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "h2oai/h2ogpt-gm-oasst1-en-1024-12b" # either local folder or huggingface model name

# Important: The prompt needs to be in the same format the model was trained with.

# You can find an example prompt in the experiment logs.

prompt = "<|prompt|>How are you?<|endoftext|><|answer|>"

tokenizer = AutoTokenizer.from_pretrained(model_name)

model = AutoModelForCausalLM.from_pretrained(model_name)

model.cuda().eval()

inputs = tokenizer(prompt, return_tensors="pt", add_special_tokens=False).to("cuda")

# generate configuration can be modified to your needs

tokens = model.generate(

**inputs,

min_new_tokens=2,

max_new_tokens=256,

do_sample=False,

num_beams=2,

temperature=float(0.3),

repetition_penalty=float(1.2),

)[0]

tokens = tokens[inputs["input_ids"].shape[1]:]

answer = tokenizer.decode(tokens, skip_special_tokens=True)

print(answer)

```

## Model Architecture

```

GPTNeoXForCausalLM(

(gpt_neox): GPTNeoXModel(

(embed_in): Embedding(50688, 5120)

(layers): ModuleList(

(0-35): 36 x GPTNeoXLayer(

(input_layernorm): LayerNorm((5120,), eps=1e-05, elementwise_affine=True)

(post_attention_layernorm): LayerNorm((5120,), eps=1e-05, elementwise_affine=True)

(attention): GPTNeoXAttention(

(rotary_emb): RotaryEmbedding()

(query_key_value): Linear(in_features=5120, out_features=15360, bias=True)

(dense): Linear(in_features=5120, out_features=5120, bias=True)

)

(mlp): GPTNeoXMLP(

(dense_h_to_4h): Linear(in_features=5120, out_features=20480, bias=True)

(dense_4h_to_h): Linear(in_features=20480, out_features=5120, bias=True)

(act): GELUActivation()

)

)

)

(final_layer_norm): LayerNorm((5120,), eps=1e-05, elementwise_affine=True)

)

(embed_out): Linear(in_features=5120, out_features=50688, bias=False)

)

```
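The module shapes printed above are enough to estimate the model size by hand. The sketch below adds up the weights and biases of each listed module; the helper names are illustrative, and it assumes that `RotaryEmbedding` and `GELUActivation` hold no trainable parameters.

```python
def linear_params(in_features: int, out_features: int, bias: bool = True) -> int:
    # Weight matrix plus optional bias vector.
    return in_features * out_features + (out_features if bias else 0)


def layernorm_params(dim: int) -> int:
    # elementwise_affine=True gives one scale and one shift per feature.
    return 2 * dim


# Values read from the architecture printout above.
VOCAB, HIDDEN, LAYERS = 50688, 5120, 36

per_layer = (
    2 * layernorm_params(HIDDEN)           # input_layernorm + post_attention_layernorm
    + linear_params(HIDDEN, 3 * HIDDEN)    # attention.query_key_value
    + linear_params(HIDDEN, HIDDEN)        # attention.dense
    + linear_params(HIDDEN, 4 * HIDDEN)    # mlp.dense_h_to_4h
    + linear_params(4 * HIDDEN, HIDDEN)    # mlp.dense_4h_to_h
)

total = (
    VOCAB * HIDDEN                         # embed_in
    + LAYERS * per_layer                   # 36 transformer layers
    + layernorm_params(HIDDEN)             # final_layer_norm
    + linear_params(HIDDEN, VOCAB, bias=False)  # embed_out
)

print(f"{total:,} parameters")
```

The total comes to roughly 11.8 billion parameters, which is consistent with the `12b` suffix in the model name.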

## Model Configuration

This model was trained using H2O LLM Studio and with the configuration in [cfg.yaml](cfg.yaml). Visit [H2O LLM Studio](https://github.com/h2oai/h2o-llmstudio) to learn how to train your own large language models.

## Model Validation

Model validation results were obtained with the [EleutherAI lm-evaluation-harness](https://github.com/EleutherAI/lm-evaluation-harness):

```bash

CUDA_VISIBLE_DEVICES=0 python main.py --model hf-causal-experimental --model_args pretrained=h2oai/h2ogpt-gm-oasst1-en-1024-12b --tasks openbookqa,arc_easy,winogrande,hellaswag,arc_challenge,piqa,boolq --device cuda &> eval.log

```

| Task |Version| Metric |Value | |Stderr|
|-------------|------:|--------|-----:|---|-----:|
|arc_challenge| 0|acc |0.3345|± |0.0138|
| | |acc_norm|0.3754|± |0.0142|
|arc_easy | 0|acc |0.6435|± |0.0098|
| | |acc_norm|0.5800|± |0.0101|
|boolq | 1|acc |0.5098|± |0.0087|
|hellaswag | 0|acc |0.5150|± |0.0050|
| | |acc_norm|0.6951|± |0.0046|
|openbookqa | 0|acc |0.3080|± |0.0207|
| | |acc_norm|0.3980|± |0.0219|
|piqa | 0|acc |0.7704|± |0.0098|
| | |acc_norm|0.7704|± |0.0098|
|winogrande | 0|acc |0.6622|± |0.0133|

## Disclaimer

Please read this disclaimer carefully before using the large language model provided in this repository. Your use of the model signifies your agreement to the following terms and conditions.

- Biases and Offensiveness: The large language model is trained on a diverse range of internet text data, which may contain biased, racist, offensive, or otherwise inappropriate content. By using this model, you acknowledge and accept that the generated content may sometimes exhibit biases or produce content that is offensive or inappropriate. The developers of this repository do not endorse, support, or promote any such content or viewpoints.

- Limitations: The large language model is an AI-based tool and not a human. It may produce incorrect, nonsensical, or irrelevant responses. It is the user's responsibility to critically evaluate the generated content and use it at their discretion.

- Use at Your Own Risk: Users of this large language model must assume full responsibility for any consequences that may arise from their use of the tool. The developers and contributors of this repository shall not be held liable for any damages, losses, or harm resulting from the use or misuse of the provided model.

- Ethical Considerations: Users are encouraged to use the large language model responsibly and ethically. By using this model, you agree not to use it for purposes that promote hate speech, discrimination, harassment, or any form of illegal or harmful activities.

- Reporting Issues: If you encounter any biased, offensive, or otherwise inappropriate content generated by the large language model, please report it to the repository maintainers through the provided channels. Your feedback will help improve the model and mitigate potential issues.

- Changes to this Disclaimer: The developers of this repository reserve the right to modify or update this disclaimer at any time without prior notice. It is the user's responsibility to periodically review the disclaimer to stay informed about any changes.

By using the large language model provided in this repository, you agree to accept and comply with the terms and conditions outlined in this disclaimer. If you do not agree with any part of this disclaimer, you should refrain from using the model and any content generated by it. |

Rallio67/7B-redpajama-conditional-alpha | Rallio67 | 2023-05-14T18:30:16Z | 1,633 | 7 | transformers | [

"transformers",

"pytorch",

"gpt_neox",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-05-09T01:30:27Z | # Conditional Pretraining of Large Language Models

Large language models (LLMs), such as OpenAI's ChatGPT and similar chatbot products from other organizations, have recently gained widespread adoption. These models can extend text or respond to instructions in a natural and helpful manner. Despite the core technologies behind LLMs, namely the transformer architecture and the GPT decoder-only causal language model, remaining relatively unchanged for over five years, the surge in popularity of ChatGPT can be largely attributed to recent approaches that better align the output of LLMs with users' and service providers' intentions.

## Primary Approaches for Aligning LLMs with Human Expectations

1. **Supervised finetuning (SFT) on natural instructions**

2. **Reinforcement learning from human feedback (RLHF)**

## Conditional Pretraining: A Third Approach

- Utilizes a large number of pretraining examples tagged with human-understandable classifiers

- Leverages content tagging found in many online environments

- Examples of commonly used tags:

  - Suitable for work (SFW) and not suitable for work (NSFW)

  - G, PG, PG-13, and R for television and movie content

## Converting Existing Pretraining Data into Conditional Pretraining Data

- Traditional pretraining involves predicting the subsequent word in minimally processed text.

- Conditional pretraining prepends training examples with descriptive tags and a brief synopsis.
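The conversion described above can be sketched in a few lines. This is an illustration only: `make_conditional_example` is a hypothetical helper, and the layout (a bracketed tag list, then a `#` synopsis line, then the document body) is an assumption modelled on the tagged example shown elsewhere in this card, not a confirmed description of the training pipeline.

```python
from typing import Sequence


def make_conditional_example(tags: Sequence[str], synopsis: str, body: str) -> str:
    """Prepend descriptive tags and a brief synopsis to a raw training document.

    Assumed layout (not confirmed by the card):
        [ tag1, tag2, ...]
        # synopsis
        body text
    """
    tag_line = "[ " + ", ".join(tags) + "]"
    return f"{tag_line}\n# {synopsis}\n{body}"


example = make_conditional_example(
    tags=["artificial intelligence", "open source"],
    synopsis="This article provides information about LAION.",
    body="(article text goes here)",
)
print(example)
```

With this layout the model is still trained on ordinary next-token prediction; the only change is that each document begins with its tags and synopsis, so the same prefix can later be supplied at inference time to steer generation.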

## Transparency and Accountability

- Current LLMs have proprietary instructions and reward models, which can hinder public review and discussions on sensitive topics.

- Conditional pretraining tags are transparent and easily understood by auditors or end users.

## Conditional pretraining example

Below is an example output from this conditional tagging model for a recent news article about LAION ([article here](https://www.forbes.com/sites/hessiejones/2023/04/19/amid-growing-call-to-pause-ai-research-laion-petitions-governments-to-keep-agi-research-open-active-and-responsible/)). Only text from the body of the article was used to generate these document tags.

```

[ artificial intelligence, open source, ai, open letter, open source ai, ai research]

# This article explains the importance of a CERN-like organization to coordinate efforts on the transparency of large-scale AI research and provides information about LAION.

```

## How to use the model