modelId

stringlengths 5

122

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC] | downloads

int64 0

738M

| likes

int64 0

11k

| library_name

stringclasses 245

values | tags

sequencelengths 1

4.05k

| pipeline_tag

stringclasses 48

values | createdAt

timestamp[us, tz=UTC] | card

stringlengths 1

901k

|

|---|---|---|---|---|---|---|---|---|---|

eclipsemint/kollama2-7b-v0.1 | eclipsemint | 2023-10-31T10:32:27Z | 1,342 | 0 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-10-31T10:21:23Z | Entry not found |

BM-K/llama-2-ko-7b-it-v1.0.0 | BM-K | 2023-11-15T11:33:58Z | 1,342 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-11-15T11:22:14Z | Entry not found |

chargoddard/mixtralmerge-8x7B-rebalanced-test | chargoddard | 2024-01-05T05:48:53Z | 1,342 | 0 | transformers | [

"transformers",

"pytorch",

"safetensors",

"mixtral",

"text-generation",

"merge",

"mergekit",

"conversational",

"dataset:Open-Orca/SlimOrca",

"license:cc-by-nc-4.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2024-01-04T07:49:22Z | ---

license: cc-by-nc-4.0

tags:

- merge

- mergekit

datasets:

- Open-Orca/SlimOrca

---

This is a dumb experiment - don't expect it to be good!

I merged a few Mixtral models together then tuned *only the routing parameters*. There was a pretty steep drop in loss with only a bit of training - went from ~0.99 to ~.7 over about ten million tokens.

I'm hoping this after-the-fact balancing will have reduced some of the nasty behavior typical of current tunes. But maybe it just made it even dumber! We'll see.

Uses ChatML format.

Will update with more details if it turns out promising. |

flemmingmiguel/Mistrality-7B | flemmingmiguel | 2024-01-11T08:31:26Z | 1,342 | 0 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"merge",

"mergekit",

"lazymergekit",

"argilla/distilabeled-Hermes-2.5-Mistral-7B",

"EmbeddedLLM/Mistral-7B-Merge-14-v0.4",

"conversational",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2024-01-11T08:27:38Z | ---

license: apache-2.0

tags:

- merge

- mergekit

- lazymergekit

- argilla/distilabeled-Hermes-2.5-Mistral-7B

- EmbeddedLLM/Mistral-7B-Merge-14-v0.4

---

# Mistrality-7B

Mistrality-7B is a merge of the following models using [LazyMergekit](https://colab.research.google.com/drive/1obulZ1ROXHjYLn6PPZJwRR6GzgQogxxb?usp=sharing):

* [argilla/distilabeled-Hermes-2.5-Mistral-7B](https://huggingface.co/argilla/distilabeled-Hermes-2.5-Mistral-7B)

* [EmbeddedLLM/Mistral-7B-Merge-14-v0.4](https://huggingface.co/EmbeddedLLM/Mistral-7B-Merge-14-v0.4)

## 🧩 Configuration

```yaml

slices:

- sources:

- model: argilla/distilabeled-Hermes-2.5-Mistral-7B

layer_range: [0, 32]

- model: EmbeddedLLM/Mistral-7B-Merge-14-v0.4

layer_range: [0, 32]

merge_method: slerp

base_model: argilla/distilabeled-Hermes-2.5-Mistral-7B

parameters:

t:

- filter: self_attn

value: [0, 0.5, 0.3, 0.7, 1]

- filter: mlp

value: [1, 0.5, 0.7, 0.3, 0]

- value: 0.5

dtype: bfloat16

```

## 💻 Usage

```python

!pip install -qU transformers accelerate

from transformers import AutoTokenizer

import transformers

import torch

model = "flemmingmiguel/Mistrality-7B"

messages = [{"role": "user", "content": "What is a large language model?"}]

tokenizer = AutoTokenizer.from_pretrained(model)

prompt = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

pipeline = transformers.pipeline(

"text-generation",

model=model,

torch_dtype=torch.float16,

device_map="auto",

)

outputs = pipeline(prompt, max_new_tokens=256, do_sample=True, temperature=0.7, top_k=50, top_p=0.95)

print(outputs[0]["generated_text"])

``` |

macadeliccc/Orca-SOLAR-4x10.7b | macadeliccc | 2024-03-04T19:20:54Z | 1,342 | 0 | transformers | [

"transformers",

"safetensors",

"mixtral",

"text-generation",

"code",

"conversational",

"en",

"dataset:Intel/orca_dpo_pairs",

"arxiv:2312.15166",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2024-01-13T18:48:26Z | ---

language:

- en

license: apache-2.0

library_name: transformers

tags:

- code

datasets:

- Intel/orca_dpo_pairs

model-index:

- name: Orca-SOLAR-4x10.7b

results:

- task:

type: text-generation

name: Text Generation

dataset:

name: AI2 Reasoning Challenge (25-Shot)

type: ai2_arc

config: ARC-Challenge

split: test

args:

num_few_shot: 25

metrics:

- type: acc_norm

value: 68.52

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/Orca-SOLAR-4x10.7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: HellaSwag (10-Shot)

type: hellaswag

split: validation

args:

num_few_shot: 10

metrics:

- type: acc_norm

value: 86.78

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/Orca-SOLAR-4x10.7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MMLU (5-Shot)

type: cais/mmlu

config: all

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 67.03

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/Orca-SOLAR-4x10.7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: TruthfulQA (0-shot)

type: truthful_qa

config: multiple_choice

split: validation

args:

num_few_shot: 0

metrics:

- type: mc2

value: 64.54

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/Orca-SOLAR-4x10.7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: Winogrande (5-shot)

type: winogrande

config: winogrande_xl

split: validation

args:

num_few_shot: 5

metrics:

- type: acc

value: 83.9

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/Orca-SOLAR-4x10.7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: GSM8k (5-shot)

type: gsm8k

config: main

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 68.23

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/Orca-SOLAR-4x10.7b

name: Open LLM Leaderboard

---

# 🌞🚀 Orca-SOLAR-4x10.7_36B

Merge of four Solar-10.7B instruct finetunes.

## 🌟 Usage

This SOLAR model _loves_ to code. In my experience, if you ask it a code question it will use almost all of the available token limit to complete the code.

However, this can also be to its own detriment. If the request is complex it may not finish the code in a given time period. This behavior is not because of an eos token, as it finishes sentences quite normally if its a non code question.

Your mileage may vary.

## 🌎 HF Spaces

This 36B parameter model is capabale of running on free tier hardware (CPU only - GGUF)

+ Try the model [here](https://huggingface.co/spaces/macadeliccc/Orca-SOLAR-4x10.7b-chat-GGUF)

## 🌅 Code Example

Example also available in [colab](https://colab.research.google.com/drive/10FWCLODU_EFclVOFOlxNYMmSiLilGMBZ?usp=sharing)

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

def generate_response(prompt):

"""

Generate a response from the model based on the input prompt.

Args:

prompt (str): Prompt for the model.

Returns:

str: The generated response from the model.

"""

# Tokenize the input prompt

inputs = tokenizer(prompt, return_tensors="pt")

# Generate output tokens

outputs = model.generate(**inputs, max_new_tokens=512, eos_token_id=tokenizer.eos_token_id, pad_token_id=tokenizer.pad_token_id)

# Decode the generated tokens to a string

response = tokenizer.decode(outputs[0], skip_special_tokens=True)

return response

# Load the model and tokenizer

model_id = "macadeliccc/Orca-SOLAR-4x10.7b"

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForCausalLM.from_pretrained(model_id, load_in_4bit=True)

prompt = "Explain the proof of Fermat's Last Theorem and its implications in number theory."

print("Response:")

print(generate_response(prompt), "\n")

```

## Llama.cpp

GGUF Quants available [here](https://huggingface.co/macadeliccc/Orca-SOLAR-4x10.7b-GGUF)

## Evaluations

https://huggingface.co/datasets/open-llm-leaderboard/details_macadeliccc__Orca-SOLAR-4x10.7b

### 📚 Citations

```bibtex

@misc{kim2023solar,

title={SOLAR 10.7B: Scaling Large Language Models with Simple yet Effective Depth Up-Scaling},

author={Dahyun Kim and Chanjun Park and Sanghoon Kim and Wonsung Lee and Wonho Song and Yunsu Kim and Hyeonwoo Kim and Yungi Kim and Hyeonju Lee and Jihoo Kim and Changbae Ahn and Seonghoon Yang and Sukyung Lee and Hyunbyung Park and Gyoungjin Gim and Mikyoung Cha and Hwalsuk Lee and Sunghun Kim},

year={2023},

eprint={2312.15166},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_macadeliccc__Orca-SOLAR-4x10.7b)

| Metric |Value|

|---------------------------------|----:|

|Avg. |73.17|

|AI2 Reasoning Challenge (25-Shot)|68.52|

|HellaSwag (10-Shot) |86.78|

|MMLU (5-Shot) |67.03|

|TruthfulQA (0-shot) |64.54|

|Winogrande (5-shot) |83.90|

|GSM8k (5-shot) |68.23|

|

adamo1139/yi-34b-200k-rawrr-dpo-1 | adamo1139 | 2024-05-27T21:29:39Z | 1,342 | 2 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"finetune",

"fine-tune",

"dataset:adamo1139/rawrr_v1",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2024-01-15T15:06:21Z | ---

license: apache-2.0

tags:

- finetune

- fine-tune

datasets:

- adamo1139/rawrr_v1

---

NEW STRONGER RAWRR FINETUNE COMING SOON!

This model is Yi-34B-200K fine-tuned using DPO on rawrr_v1 dataset using QLoRA at ctx 200, lora_r 4 and lora_alpha 8. I then merged the adapter with base model.

This model is akin to raw LLaMa 65B, it's not meant to follow instructions but instead should be useful as base for further fine-tuning.

Rawrr_v1 dataset made it so that this model issue less refusals, especially for benign topics, and is moreso completion focused rather than instruct focused.

Base yi-34B-200k suffers from contamination on instruct and refusal datasets, i am attempting to fix that by training base models with DPO on rawrr dataset, making them more raw.

License:

yi-license + non-commercial use only |

macadeliccc/piccolo-8x7b | macadeliccc | 2024-03-04T16:33:35Z | 1,342 | 1 | transformers | [

"transformers",

"safetensors",

"mixtral",

"text-generation",

"license:cc-by-4.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2024-01-16T19:41:13Z | ---

license: cc-by-4.0

model-index:

- name: piccolo-8x7b

results:

- task:

type: text-generation

name: Text Generation

dataset:

name: AI2 Reasoning Challenge (25-Shot)

type: ai2_arc

config: ARC-Challenge

split: test

args:

num_few_shot: 25

metrics:

- type: acc_norm

value: 69.62

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/piccolo-8x7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: HellaSwag (10-Shot)

type: hellaswag

split: validation

args:

num_few_shot: 10

metrics:

- type: acc_norm

value: 86.98

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/piccolo-8x7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MMLU (5-Shot)

type: cais/mmlu

config: all

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 64.13

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/piccolo-8x7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: TruthfulQA (0-shot)

type: truthful_qa

config: multiple_choice

split: validation

args:

num_few_shot: 0

metrics:

- type: mc2

value: 64.17

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/piccolo-8x7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: Winogrande (5-shot)

type: winogrande

config: winogrande_xl

split: validation

args:

num_few_shot: 5

metrics:

- type: acc

value: 79.87

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/piccolo-8x7b

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: GSM8k (5-shot)

type: gsm8k

config: main

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 72.02

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=macadeliccc/piccolo-8x7b

name: Open LLM Leaderboard

---

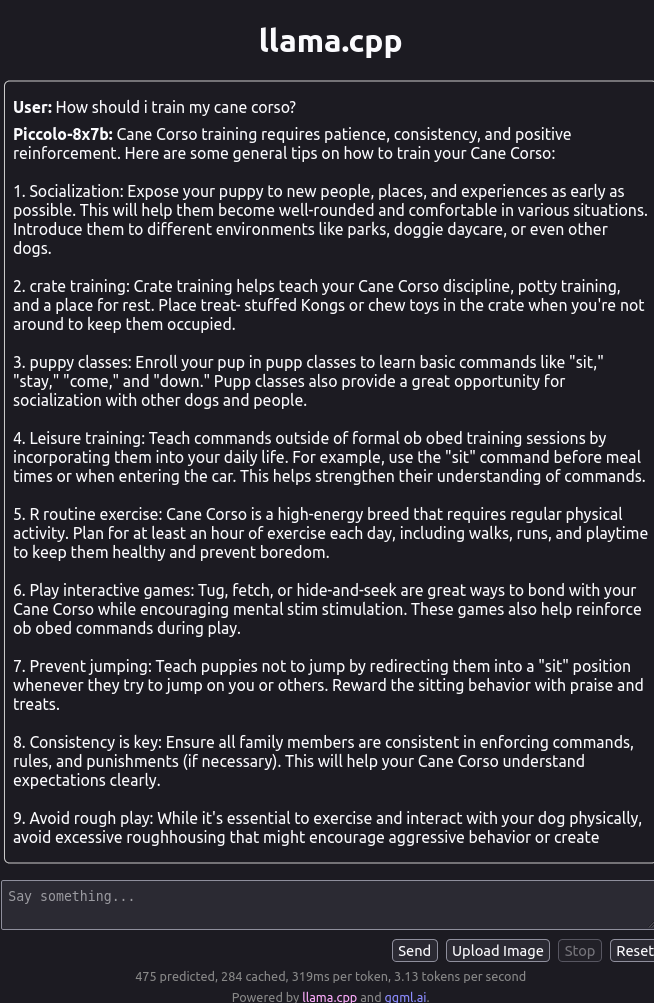

# Piccolo-8x7b

**In loving memory of my dog Klaus (Piccolo)**

_~ Piccolo (Italian): the little one ~_

Based on mlabonne/NeuralBeagle-7b

Quants are available [here](https://huggingface.co/macadeliccc/piccolo-8x7b-GGUF)

# Code Example

Inference and Evaluation colab available [here](https://colab.research.google.com/drive/1ZqLNvVvtFHC_4v2CgcMVh7pP9Fvx0SbI?usp=sharing)

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

def generate_response(prompt):

"""

Generate a response from the model based on the input prompt.

Args:

prompt (str): Prompt for the model.

Returns:

str: The generated response from the model.

"""

inputs = tokenizer(prompt, return_tensors="pt")

outputs = model.generate(**inputs, max_new_tokens=256, eos_token_id=tokenizer.eos_token_id, pad_token_id=tokenizer.pad_token_id)

response = tokenizer.decode(outputs[0], skip_special_tokens=True)

return response

model_id = "macadeliccc/piccolo-8x7b"

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForCausalLM.from_pretrained(model_id,load_in_4bit=True)

prompt = "What is the best way to train Cane Corsos?"

print("Response:")

print(generate_response(prompt), "\n")

```

The model is capable of quality code, math, and logical reasoning. Try whatever questions you think of.

## Example output

# Evaluations

https://huggingface.co/datasets/open-llm-leaderboard/details_macadeliccc__piccolo-8x7b

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_macadeliccc__piccolo-8x7b)

| Metric |Value|

|---------------------------------|----:|

|Avg. |72.80|

|AI2 Reasoning Challenge (25-Shot)|69.62|

|HellaSwag (10-Shot) |86.98|

|MMLU (5-Shot) |64.13|

|TruthfulQA (0-shot) |64.17|

|Winogrande (5-shot) |79.87|

|GSM8k (5-shot) |72.02|

|

h2m/mhm-8x7B-FrankenMoE-v1.0 | h2m | 2024-01-24T05:03:35Z | 1,342 | 2 | transformers | [

"transformers",

"safetensors",

"mixtral",

"text-generation",

"merge",

"moe",

"en",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2024-01-17T13:07:05Z | ---

license: apache-2.0

language:

- en

tags:

- merge

- moe

---

## Recipe for a Beautiful Frankenstein

In the laboratory of the mind, where thoughts entwine,

MHM and MOE, a potion for a unique design.

With stitches of curiosity and bolts of creativity,

8 times 7, the magic number, a poetic proclivity.

### Ingredients:

- **MHM:** A dash of mystery, a sprinkle of hum,

Blend with a melody, let the heartstrings strum.

Murmurs in the shadows, whispers in the light,

Stir the concoction gently, make the emotions ignite.

- **MOE:** Essence of the moment, like dew on a rose,

Capture the now, before time swiftly goes.

Colors of experience, a palette so divine,

Mix with MHM, let the fusion entwine.

### Directions:

1. **Take 8 parts MHM,** elusive and profound,

Let it dance in your thoughts, on imagination's ground.

Blend it with the echoes, the silent undertones,

A symphony of ideas, where inspiration condones.

2. **Add 7 parts MOE,** the fleeting embrace,

Seize the seconds, let them leave a trace.

Infuse it with memories, both bitter and sweet,

The tapestry of time, where moments and dreams meet.

3. **Stir the potion with wonder,** a wand of delight,

Let the sparks fly, in the dark of the night.

Watch as the alchemy unfolds its grand design,

MHM and MOE, a beautiful Frankenstein.

### Conclusion:

In the laboratory of life, where dreams come alive,

MHM and MOE, the recipe to thrive.

A creation so poetic, a fusion so divine,

8 times 7, a symphony of time.

As the echoes resonate, and the moments blend,

A masterpiece unfolds, where beginnings and ends,

MHM and MOE, a concoction so rare,

A beautiful Frankenstein, beyond compare.

---

MoE model build with:

1. https://github.com/cg123/mergekit/tree/mixtral

2. Mistral models, latest merges and fine tunes.

3. Expert prompts heavily inspired by https://huggingface.co/Kquant03/Eukaryote-8x7B-bf16

For details check model files, there is config yaml I used to create that model.

Come back later for more details. |

fierysurf/Kan-LLaMA-7B-SFT-v0.1-sharded | fierysurf | 2024-01-18T08:47:22Z | 1,342 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"kn",

"en",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2024-01-18T08:38:44Z | ---

license: mit

language:

- kn

- en

---

# Kannada LLaMA 7B

Welcome to the repository dedicated to the Kannada LLaMA 7B model. This repository is specifically tailored to offer users a sharded version of the original Kannada LLaMA 7B model, which was initially developed and released by Tensoic. The model in question is a significant development in the field of language processing and machine learning, specifically tailored for the Kannada language.

The original model, titled "Kan-LLaMA-7B-base", is available on Hugging Face, a popular platform for hosting machine learning models. You can access and explore the original model by visiting the Hugging Face website at this link: [Tensoic/Kan-LLaMA-7B-SFT-v0.1](https://huggingface.co/Tensoic/Kan-LLaMA-7B-SFT-v0.1). This link will direct you to the model's page where you can find detailed information about its architecture, usage, and capabilities.

For those who are interested in a deeper understanding of the Kannada LLaMA 7B model, including its development process, applications, and technical specifications, Tensoic has published an extensive blog post. This blog post provides valuable insights into the model's creation and its potential impact on natural language processing tasks involving the Kannada language. To read this informative and detailed blog post, please follow this link: [Tensoic's Kannada LLaMA blog post](https://www.tensoic.com/blog/kannada-llama/).

The blog is an excellent resource for anyone looking to gain a comprehensive understanding of the model, whether you are a student, researcher, or a professional in the field of machine learning and language processing.

In summary, this repository serves as a gateway to accessing the sharded version of the Kannada LLaMA 7B model and provides links to the original model and an informative blog post for a more in-depth exploration. We encourage all interested parties to explore these resources to fully appreciate the capabilities and advancements represented by the Kannada LLaMA 7B model. |

gokaygokay/paligemma-rich-captions | gokaygokay | 2024-06-15T10:53:55Z | 1,342 | 8 | transformers | [

"transformers",

"safetensors",

"paligemma",

"pretraining",

"image-text-to-text",

"en",

"dataset:google/docci",

"license:apache-2.0",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | image-text-to-text | 2024-05-17T04:54:32Z | ---

license: apache-2.0

datasets:

- google/docci

language:

- en

library_name: transformers

pipeline_tag: image-text-to-text

---

Fine tuned version of [PaliGemma](https://huggingface.co/google/paligemma-3b-pt-224-jax) model on [google/docci](https://huggingface.co/datasets/google/docci) dataset with middle size captions between 200 and 350 characters. This model has less halucinations.

```

pip install git+https://github.com/huggingface/transformers

```

```python

from transformers import AutoProcessor, PaliGemmaForConditionalGeneration

from PIL import Image

import requests

import torch

model_id = "gokaygokay/paligemma-rich-captions"

url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/tasks/car.jpg?download=true"

image = Image.open(requests.get(url, stream=True).raw)

model = PaliGemmaForConditionalGeneration.from_pretrained(model_id).to('cuda').eval()

processor = AutoProcessor.from_pretrained(model_id)

## prefix

prompt = "caption en"

model_inputs = processor(text=prompt, images=image, return_tensors="pt").to('cuda')

input_len = model_inputs["input_ids"].shape[-1]

with torch.inference_mode():

generation = model.generate(**model_inputs, max_new_tokens=256, do_sample=False)

generation = generation[0][input_len:]

decoded = processor.decode(generation, skip_special_tokens=True)

print(decoded)

``` |

digiplay/CCTV2.5d_v1 | digiplay | 2024-05-05T00:44:50Z | 1,341 | 4 | diffusers | [

"diffusers",

"safetensors",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | 2023-06-22T22:30:21Z | ---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

Model info:

https://civitai.com/models/93854/cctv25d

Sample image I made :

|

daekeun-ml/Llama-2-ko-DPO-13B | daekeun-ml | 2023-10-31T13:19:37Z | 1,341 | 19 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"llama-2",

"dpo",

"ko",

"license:llama2",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-10-31T08:44:53Z | ---

language:

- ko

tags:

- llama-2

- dpo

pipeline_tag: text-generation

license: llama2

---

# Llama-2-ko-DPO-13B

Based on the changed criteria from Open-AI-LLM leaderboard, the evaluation metric exceeded 50 percent for the first time. I am pretty proud of myself, even though this score will soon fade into the background as I'm simply testing a hypothesis rather than competing, and there are a lot of great models coming out of 7B.

Since my day job is technical support, not R&D, I could not spend a lot of time on it, so I only processed about 1000 samples and tuned them with DPO (Direct Preference Optimization) to reduce hallucination. The infrastructure was the same as before, using AWS g5.12xlarge, and no additional prompts were given.

I think the potential of the base LLM model is enormous, seeing how much hallucination are reduced with very little data and without much effort. When I meet with customers, many of them have difficulty implementing GenAI features. But it does not take much effort to implement them since many template codes/APIs are well done. It is a world where anyone who is willing to process data can easily and quickly create their own quality model.

### Model Details

- Base Model: [Llama-2-ko-instruct-13B](https://huggingface.co/daekeun-ml/Llama-2-ko-instruct-13B)

### Datasets

- 1,000 samples generated by myself

- Sentences generated by Amazon Bedrock Claude-2 were adopted as chosen, and sentences generated by the Llama-2-13B model fine-tuned with SFT were adopted as rejected.

### Benchmark

- This is the first Korean LLM model to exceed the average metric of 50 percent.

- SOTA model as of October 31, 2023 (https://huggingface.co/spaces/upstage/open-ko-llm-leaderboard).

| Model | Average |Ko-ARC | Ko-HellaSwag | Ko-MMLU | Ko-TruthfulQA | Ko-CommonGen V2 |

| --- | --- | --- | --- | --- | --- | --- |

| **daekeun-ml/Llama-2-ko-DPO-13B (Ours)** | **51.03** | 47.53 | 58.28 | 43.59 | 51.91 | 53.84 |

| [daekeun-ml/Llama-2-ko-instruct-13B](https://huggingface.co/daekeun-ml/Llama-2-ko-instruct-13B) | 49.52 | 46.5 | 56.9 | 43.76 | 42 | 58.44 |

| [kyujinpy/Korean-OpenOrca-13B](https://huggingface.co/kyujinpy/KO-Platypus2-13B) | 48.79 | 43.09 | 54.13 | 40.24 | 45.22 | 61.28 |

### License

- Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International Public License, under LLAMA 2 COMMUNITY LICENSE AGREEMENT

This model was created as a personal experiment, unrelated to the organization I work for. |

Minirecord/Mini_synatra_7b_02 | Minirecord | 2023-11-24T07:12:23Z | 1,341 | 3 | transformers | [

"transformers",

"pytorch",

"mistral",

"text-generation",

"conversational",

"dataset:hwanhe/Mini_orca",

"base_model:maywell/Synatra-7B-v0.3-dpo",

"license:cc-by-sa-4.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-11-22T01:38:35Z | ---

license: cc-by-sa-4.0

base_model: maywell/Synatra-7B-v0.3-dpo

datasets:

- hwanhe/Mini_orca

pipeline_tag: text-generation

---

# Mini_synatra_7b_02

# (주)Minirecord에서 파인튜닝한 모델입니다.

<img src = "https://cdn-uploads.huggingface.co/production/uploads/64c1e30e2bac49787a998397/47NT3IZ6y-oNnB96_oJPJ.png" width="30%" height="30%">

The license is cc-by-sa-4.0

## Model Details

### input

models input text only.

### output

models output text only.

### Base Model

[maywell/Synatra-7B-v0.3-dpo](https://huggingface.co/maywell/Synatra-7B-v0.3-dpo)

## Training Details

### Training Data

hwanhe/Mini_orca (private)

직접 손번역, 검수한 7만개의 Orca 데이터셋을 이용 하였습니다.

추가 훈련 정보는 계속 업데이트 하겠습니다.

|

brucethemoose/CaPlatTessDolXaBoros-Yi-34B-200K-DARE-Ties-ExtremeDensity | brucethemoose | 2024-03-11T20:09:17Z | 1,341 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"merge",

"license:other",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-12-09T16:39:14Z | ---

license: other

tags:

- merge

license_name: yi-license

license_link: https://huggingface.co/01-ai/Yi-34B-200K/blob/main/LICENSE

model-index:

- name: CaPlatTessDolXaBoros-Yi-34B-200K-DARE-Ties-ExtremeDensity

results:

- task:

type: text-generation

name: Text Generation

dataset:

name: AI2 Reasoning Challenge (25-Shot)

type: ai2_arc

config: ARC-Challenge

split: test

args:

num_few_shot: 25

metrics:

- type: acc_norm

value: 66.89

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=brucethemoose/CaPlatTessDolXaBoros-Yi-34B-200K-DARE-Ties-ExtremeDensity

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: HellaSwag (10-Shot)

type: hellaswag

split: validation

args:

num_few_shot: 10

metrics:

- type: acc_norm

value: 85.69

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=brucethemoose/CaPlatTessDolXaBoros-Yi-34B-200K-DARE-Ties-ExtremeDensity

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MMLU (5-Shot)

type: cais/mmlu

config: all

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 77.35

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=brucethemoose/CaPlatTessDolXaBoros-Yi-34B-200K-DARE-Ties-ExtremeDensity

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: TruthfulQA (0-shot)

type: truthful_qa

config: multiple_choice

split: validation

args:

num_few_shot: 0

metrics:

- type: mc2

value: 57.63

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=brucethemoose/CaPlatTessDolXaBoros-Yi-34B-200K-DARE-Ties-ExtremeDensity

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: Winogrande (5-shot)

type: winogrande

config: winogrande_xl

split: validation

args:

num_few_shot: 5

metrics:

- type: acc

value: 82.0

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=brucethemoose/CaPlatTessDolXaBoros-Yi-34B-200K-DARE-Ties-ExtremeDensity

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: GSM8k (5-shot)

type: gsm8k

config: main

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 59.82

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=brucethemoose/CaPlatTessDolXaBoros-Yi-34B-200K-DARE-Ties-ExtremeDensity

name: Open LLM Leaderboard

---

Just a test of a very high density DARE ties merge, for benchmarking on the open llm leaderboard.

You probably shouldn't use this model, use this one instead: https://huggingface.co/brucethemoose/CaPlatTessDolXaBoros-Yi-34B-200K-DARE-Ties-HighDensity

mergekit config:

```

models:

- model: /home/alpha/Storage/Models/Raw/chargoddard_Yi-34B-200K-Llama

# no parameters necessary for base model

- model: /home/alpha/Storage/Models/Raw/migtissera_Tess-34B-v1.4

parameters:

weight: 0.19

density: 0.83

- model: /home/alpha//Storage/Models/Raw/bhenrym14_airoboros-3_1-yi-34b-200k

parameters:

weight: 0.14

density: 0.6

- model: /home/alpha/Storage/Models/Raw/Nous-Capybara-34B

parameters:

weight: 0.19

density: 0.83

- model: /home/alpha/Storage/Models/Raw/kyujinpy_PlatYi-34B-200K-Q

parameters:

weight: 0.14

density: 0.6

- model: /home/alpha/FastModels/ehartford_dolphin-2.2-yi-34b-200k

parameters:

weight: 0.19

density: 0.83

- model: /home/alpha/FastModels/fblgit_una-xaberius-34b-v1beta

parameters:

weight: 0.15

density: 0.08

merge_method: dare_ties

base_model: /home/alpha/Storage/Models/Raw/chargoddard_Yi-34B-200K-Llama

parameters:

int8_mask: true

dtype: bfloat16

```

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_brucethemoose__CaPlatTessDolXaBoros-Yi-34B-200K-DARE-Ties-ExtremeDensity)

| Metric |Value|

|---------------------------------|----:|

|Avg. |71.57|

|AI2 Reasoning Challenge (25-Shot)|66.89|

|HellaSwag (10-Shot) |85.69|

|MMLU (5-Shot) |77.35|

|TruthfulQA (0-shot) |57.63|

|Winogrande (5-shot) |82.00|

|GSM8k (5-shot) |59.82|

|

beberik/Nyxene-v3-11B | beberik | 2024-03-04T16:16:13Z | 1,341 | 10 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"merge",

"license:cc-by-nc-4.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-12-12T22:45:39Z | ---

license: cc-by-nc-4.0

tags:

- merge

model-index:

- name: Nyxene-v3-11B

results:

- task:

type: text-generation

name: Text Generation

dataset:

name: AI2 Reasoning Challenge (25-Shot)

type: ai2_arc

config: ARC-Challenge

split: test

args:

num_few_shot: 25

metrics:

- type: acc_norm

value: 69.62

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=beberik/Nyxene-v3-11B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: HellaSwag (10-Shot)

type: hellaswag

split: validation

args:

num_few_shot: 10

metrics:

- type: acc_norm

value: 85.33

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=beberik/Nyxene-v3-11B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MMLU (5-Shot)

type: cais/mmlu

config: all

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 64.75

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=beberik/Nyxene-v3-11B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: TruthfulQA (0-shot)

type: truthful_qa

config: multiple_choice

split: validation

args:

num_few_shot: 0

metrics:

- type: mc2

value: 60.91

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=beberik/Nyxene-v3-11B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: Winogrande (5-shot)

type: winogrande

config: winogrande_xl

split: validation

args:

num_few_shot: 5

metrics:

- type: acc

value: 80.19

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=beberik/Nyxene-v3-11B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: GSM8k (5-shot)

type: gsm8k

config: main

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 63.53

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=beberik/Nyxene-v3-11B

name: Open LLM Leaderboard

---

## Description

This repo contains bf16 files of Nyxene-v1-11B. Just new version with some new things.

## Model used

- [Intel/neural-chat-7b-v3-3-Slerp](https://huggingface.co/Intel/neural-chat-7b-v3-3-Slerp)

- [AIDC-ai-business/Marcoroni-7B-v3](https://huggingface.co/AIDC-ai-business/Marcoroni-7B-v3)

- [rwitz/go-bruins-v2](https://huggingface.co/rwitz/go-bruins-v2)

- [chargoddard/loyal-piano-m7-cdpo](https://huggingface.co/chargoddard/loyal-piano-m7-cdpo)

## Prompt template

Just use chatml.

## The secret sauce

go-bruins-loyal-piano-11B :

```

slices:

- sources:

- model: rwitz/go-bruins-v2

layer_range: [0, 24]

- sources:

- model: chargoddard/loyal-piano-m7-cdpo

layer_range: [8, 32]

merge_method: passthrough

dtype: bfloat16

```

neural-marcoroni-11B :

```

slices:

- sources:

- model: AIDC-ai-business/Marcoroni-7B-v3

layer_range: [0, 24]

- sources:

- model: Intel/neural-chat-7b-v3-3-Slerp

layer_range: [8, 32]

merge_method: passthrough

dtype: bfloat16

```

Nyxene-11B :

```

slices:

- sources:

- model: "./go-bruins-loyal-piano-11B"

layer_range: [0, 48]

- model: "./neural-marcoroni-11B"

layer_range: [0, 48]

merge_method: slerp

base_model: "./go-bruins-loyal-piano-11B"

parameters:

t:

- filter: lm_head

value: [0.5]

- filter: embed_tokens

value: [0.75]

- filter: self_attn

value: [0.75, 0.25]

- filter: mlp

value: [0.25, 0.75]

- filter: layernorm

value: [0.5, 0.5]

- filter: modelnorm

value: [0.5]

- value: 0.5 # fallback for rest of tensors

dtype: bfloat16

```

I use [mergekit](https://github.com/cg123/mergekit) for all the manipulation told here.

Thanks to the [Undi95](https://huggingface.co/Undi95) for the original [11B mistral merge](https://huggingface.co/Undi95/Mistral-11B-OmniMix) recipe.

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_beberik__Nyxene-v3-11B)

| Metric |Value|

|---------------------------------|----:|

|Avg. |70.72|

|AI2 Reasoning Challenge (25-Shot)|69.62|

|HellaSwag (10-Shot) |85.33|

|MMLU (5-Shot) |64.75|

|TruthfulQA (0-shot) |60.91|

|Winogrande (5-shot) |80.19|

|GSM8k (5-shot) |63.53|

|

xformAI/facebook-opt-125m-qcqa-ub-6-best-for-q-loss | xformAI | 2024-01-23T14:18:19Z | 1,341 | 0 | transformers | [

"transformers",

"pytorch",

"opt",

"text-generation",

"en",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2024-01-23T14:15:41Z | ---

license: mit

language:

- en

library_name: transformers

---

This is a QCQA version of the original model facebook/opt-125m. In this version, the original MHA architecture is preserved but instead of having a single K/V head, different K/V heads corresponding to the same group have the same mean-pooled K or V values. It has upto 6 groups of KV heads per layer instead of original 12 KV heads in the MHA implementation. This implementation is supposed to more efficient than corresponding GQA one. This has been optimized for quality loss. |

easybits/ProteusV0.4.fp16 | easybits | 2024-03-21T12:46:05Z | 1,341 | 2 | diffusers | [

"diffusers",

"safetensors",

"text-to-image",

"license:gpl-3.0",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionXLPipeline",

"region:us"

] | text-to-image | 2024-02-23T08:09:02Z | ---

pipeline_tag: text-to-image

widget:

- text: 3 fish in a fish tank wearing adorable outfits, best quality, hd

- text: >-

a woman sitting in a wooden chair in the middle of a grass field on a farm,

moonlight, best quality, hd, anime art

- text: 'Masterpiece, glitch, holy holy holy, fog, by DarkIncursio '

- text: >-

jpeg Full Body Photo of a weird imaginary Female creatures captured on

celluloid film, (((ghost))),heavy rain, thunder, snow, water's surface,

night, expressionless, Blood, Japan God,(school), Ultra Realistic,

((Scary)),looking at camera, screem, plaintive cries, Long claws, fangs,

scales,8k, HDR, 500px, mysterious and ornate digital art, photic, intricate,

fantasy aesthetic.

- text: >-

The divine tree of knowledge, an interplay between purple and gold, floats

in the void of the sea of quanta, the tree is made of crystal, the void is

made of nothingness, strong contrast, dim lighting, beautiful and surreal

scene. wide shot

- text: >-

The image features an older man, a long white beard and mustache, He has a

stern expression, giving the impression of a wise and experienced

individual. The mans beard and mustache are prominent, adding to his

distinguished appearance. The close-up shot of the mans face emphasizes his

facial features and the intensity of his gaze.

- text: 'Ghost in the Shell Stand Alone Complex '

- text: >-

(impressionistic realism by csybgh), a 50 something male, working in

banking, very short dyed dark curly balding hair, Afro-Asiatic ancestry,

talks a lot but listens poorly, stuck in the past, wearing a suit, he has a

certain charm, bronze skintone, sitting in a bar at night, he is smoking and

feeling cool, drunk on plum wine, masterpiece, 8k, hyper detailed, smokey

ambiance, perfect hands AND fingers

- text: >-

black fluffy gorgeous dangerous cat animal creature, large orange eyes, big

fluffy ears, piercing gaze, full moon, dark ambiance, best quality,

extremely detailed

license: gpl-3.0

library_name: diffusers

---

<Gallery />

# fp16 Fork or [dataautogpt3/ProteusV0.4](https://huggingface.co/dataautogpt3/ProteusV0.4)

## ProteusV0.4: The Style Update

This update enhances stylistic capabilities, similar to Midjourney's approach, rather than advancing prompt comprehension. Methods used do not infringe on any copyrighted material.

## Proteus

Proteus serves as a sophisticated enhancement over OpenDalleV1.1, leveraging its core functionalities to deliver superior outcomes. Key areas of advancement include heightened responsiveness to prompts and augmented creative capacities. To achieve this, it was fine-tuned using approximately 220,000 GPTV captioned images from copyright-free stock images (with some anime included), which were then normalized. Additionally, DPO (Direct Preference Optimization) was employed through a collection of 10,000 carefully selected high-quality, AI-generated image pairs.

In pursuit of optimal performance, numerous LORA (Low-Rank Adaptation) models are trained independently before being selectively incorporated into the principal model via dynamic application methods. These techniques involve targeting particular segments within the model while avoiding interference with other areas during the learning phase. Consequently, Proteus exhibits marked improvements in portraying intricate facial characteristics and lifelike skin textures, all while sustaining commendable proficiency across various aesthetic domains, notably surrealism, anime, and cartoon-style visualizations.

finetuned/trained on a total of 400k+ images at this point.

## Settings for ProteusV0.4

Use these settings for the best results with ProteusV0.4:

CFG Scale: Use a CFG scale of 4 to 6

Steps: 20 to 60 steps for more detail, 20 steps for faster results.

Sampler: DPM++ 2M SDE

Scheduler: Karras

Resolution: 1280x1280 or 1024x1024

please also consider using these keep words to improve your prompts:

best quality, HD, `~*~aesthetic~*~`.

if you are having trouble coming up with prompts you can use this GPT I put together to help you refine the prompt. https://chat.openai.com/g/g-RziQNoydR-diffusion-master

## Use it with 🧨 diffusers

```python

import torch

from diffusers import (

StableDiffusionXLPipeline,

KDPM2AncestralDiscreteScheduler,

AutoencoderKL

)

# Load VAE component

vae = AutoencoderKL.from_pretrained(

"madebyollin/sdxl-vae-fp16-fix",

torch_dtype=torch.float16

)

# Configure the pipeline

pipe = StableDiffusionXLPipeline.from_pretrained(

"dataautogpt3/ProteusV0.4",

vae=vae,

torch_dtype=torch.float16

)

pipe.scheduler = KDPM2AncestralDiscreteScheduler.from_config(pipe.scheduler.config)

pipe.to('cuda')

# Define prompts and generate image

prompt = "black fluffy gorgeous dangerous cat animal creature, large orange eyes, big fluffy ears, piercing gaze, full moon, dark ambiance, best quality, extremely detailed"

negative_prompt = "nsfw, bad quality, bad anatomy, worst quality, low quality, low resolutions, extra fingers, blur, blurry, ugly, wrongs proportions, watermark, image artifacts, lowres, ugly, jpeg artifacts, deformed, noisy image"

image = pipe(

prompt,

negative_prompt=negative_prompt,

width=1024,

height=1024,

guidance_scale=4,

num_inference_steps=20

).images[0]

```

please support the work I do through donating to me on:

https://www.buymeacoffee.com/DataVoid

or following me on

https://twitter.com/DataPlusEngine |

MoritzLaurer/roberta-base-zeroshot-v2.0-c | MoritzLaurer | 2024-04-04T07:04:03Z | 1,341 | 3 | transformers | [

"transformers",

"safetensors",

"roberta",

"text-classification",

"zero-shot-classification",

"en",

"arxiv:2312.17543",

"base_model:facebookai/roberta-base",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | zero-shot-classification | 2024-03-22T15:11:35Z | ---

language:

- en

tags:

- text-classification

- zero-shot-classification

base_model: facebookai/roberta-base

pipeline_tag: zero-shot-classification

library_name: transformers

license: mit

---

# Model description: roberta-base-zeroshot-v2.0-c

## zeroshot-v2.0 series of models

Models in this series are designed for efficient zeroshot classification with the Hugging Face pipeline.

These models can do classification without training data and run on both GPUs and CPUs.

An overview of the latest zeroshot classifiers is available in my [Zeroshot Classifier Collection](https://huggingface.co/collections/MoritzLaurer/zeroshot-classifiers-6548b4ff407bb19ff5c3ad6f).

The main update of this `zeroshot-v2.0` series of models is that several models are trained on fully commercially-friendly data for users with strict license requirements.

These models can do one universal classification task: determine whether a hypothesis is "true" or "not true" given a text

(`entailment` vs. `not_entailment`).

This task format is based on the Natural Language Inference task (NLI).

The task is so universal that any classification task can be reformulated into this task by the Hugging Face pipeline.

## Training data

Models with a "`-c`" in the name are trained on two types of fully commercially-friendly data:

1. Synthetic data generated with [Mixtral-8x7B-Instruct-v0.1](https://huggingface.co/mistralai/Mixtral-8x7B-Instruct-v0.1).

I first created a list of 500+ diverse text classification tasks for 25 professions in conversations with Mistral-large. The data was manually curated.

I then used this as seed data to generate several hundred thousand texts for these tasks with Mixtral-8x7B-Instruct-v0.1.

The final dataset used is available in the [synthetic_zeroshot_mixtral_v0.1](https://huggingface.co/datasets/MoritzLaurer/synthetic_zeroshot_mixtral_v0.1) dataset

in the subset `mixtral_written_text_for_tasks_v4`. Data curation was done in multiple iterations and will be improved in future iterations.

2. Two commercially-friendly NLI datasets: ([MNLI](https://huggingface.co/datasets/nyu-mll/multi_nli), [FEVER-NLI](https://huggingface.co/datasets/fever)).

These datasets were added to increase generalization.

3. Models without a "`-c`" in the name also included a broader mix of training data with a broader mix of licenses: ANLI, WANLI, LingNLI,

and all datasets in [this list](https://github.com/MoritzLaurer/zeroshot-classifier/blob/7f82e4ab88d7aa82a4776f161b368cc9fa778001/v1_human_data/datasets_overview.csv)

where `used_in_v1.1==True`.

## How to use the models

```python

#!pip install transformers[sentencepiece]

from transformers import pipeline

text = "Angela Merkel is a politician in Germany and leader of the CDU"

hypothesis_template = "This text is about {}"

classes_verbalized = ["politics", "economy", "entertainment", "environment"]

zeroshot_classifier = pipeline("zero-shot-classification", model="MoritzLaurer/deberta-v3-large-zeroshot-v2.0") # change the model identifier here

output = zeroshot_classifier(text, classes_verbalized, hypothesis_template=hypothesis_template, multi_label=False)

print(output)

```

`multi_label=False` forces the model to decide on only one class. `multi_label=True` enables the model to choose multiple classes.

## Metrics

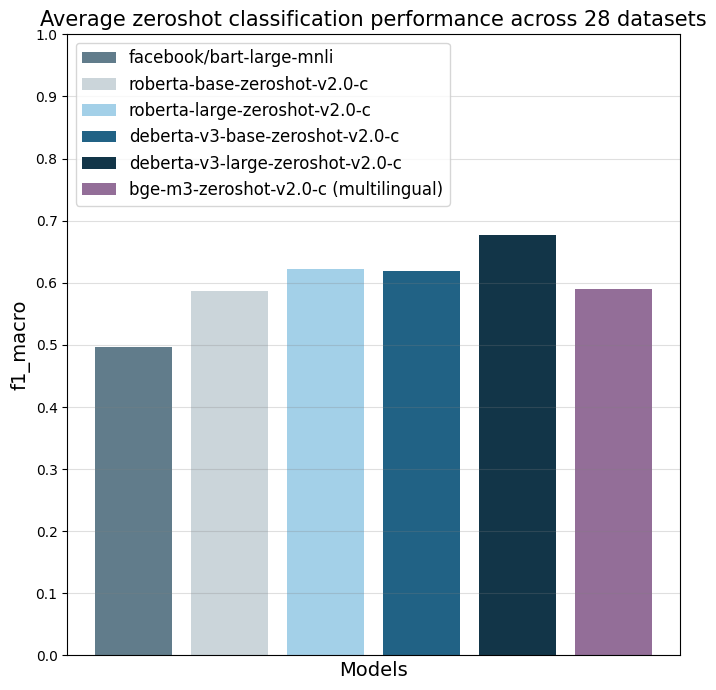

The models were evaluated on 28 different text classification tasks with the [f1_macro](https://scikit-learn.org/stable/modules/generated/sklearn.metrics.f1_score.html) metric.

The main reference point is `facebook/bart-large-mnli` which is, at the time of writing (03.04.24), the most used commercially-friendly 0-shot classifier.

| | facebook/bart-large-mnli | roberta-base-zeroshot-v2.0-c | roberta-large-zeroshot-v2.0-c | deberta-v3-base-zeroshot-v2.0-c | deberta-v3-base-zeroshot-v2.0 (fewshot) | deberta-v3-large-zeroshot-v2.0-c | deberta-v3-large-zeroshot-v2.0 (fewshot) | bge-m3-zeroshot-v2.0-c | bge-m3-zeroshot-v2.0 (fewshot) |

|:---------------------------|---------------------------:|-----------------------------:|------------------------------:|--------------------------------:|-----------------------------------:|---------------------------------:|------------------------------------:|-----------------------:|--------------------------:|

| all datasets mean | 0.497 | 0.587 | 0.622 | 0.619 | 0.643 (0.834) | 0.676 | 0.673 (0.846) | 0.59 | (0.803) |

| amazonpolarity (2) | 0.937 | 0.924 | 0.951 | 0.937 | 0.943 (0.961) | 0.952 | 0.956 (0.968) | 0.942 | (0.951) |

| imdb (2) | 0.892 | 0.871 | 0.904 | 0.893 | 0.899 (0.936) | 0.923 | 0.918 (0.958) | 0.873 | (0.917) |

| appreviews (2) | 0.934 | 0.913 | 0.937 | 0.938 | 0.945 (0.948) | 0.943 | 0.949 (0.962) | 0.932 | (0.954) |

| yelpreviews (2) | 0.948 | 0.953 | 0.977 | 0.979 | 0.975 (0.989) | 0.988 | 0.985 (0.994) | 0.973 | (0.978) |

| rottentomatoes (2) | 0.83 | 0.802 | 0.841 | 0.84 | 0.86 (0.902) | 0.869 | 0.868 (0.908) | 0.813 | (0.866) |

| emotiondair (6) | 0.455 | 0.482 | 0.486 | 0.459 | 0.495 (0.748) | 0.499 | 0.484 (0.688) | 0.453 | (0.697) |

| emocontext (4) | 0.497 | 0.555 | 0.63 | 0.59 | 0.592 (0.799) | 0.699 | 0.676 (0.81) | 0.61 | (0.798) |

| empathetic (32) | 0.371 | 0.374 | 0.404 | 0.378 | 0.405 (0.53) | 0.447 | 0.478 (0.555) | 0.387 | (0.455) |

| financialphrasebank (3) | 0.465 | 0.562 | 0.455 | 0.714 | 0.669 (0.906) | 0.691 | 0.582 (0.913) | 0.504 | (0.895) |

| banking77 (72) | 0.312 | 0.124 | 0.29 | 0.421 | 0.446 (0.751) | 0.513 | 0.567 (0.766) | 0.387 | (0.715) |

| massive (59) | 0.43 | 0.428 | 0.543 | 0.512 | 0.52 (0.755) | 0.526 | 0.518 (0.789) | 0.414 | (0.692) |

| wikitoxic_toxicaggreg (2) | 0.547 | 0.751 | 0.766 | 0.751 | 0.769 (0.904) | 0.741 | 0.787 (0.911) | 0.736 | (0.9) |

| wikitoxic_obscene (2) | 0.713 | 0.817 | 0.854 | 0.853 | 0.869 (0.922) | 0.883 | 0.893 (0.933) | 0.783 | (0.914) |

| wikitoxic_threat (2) | 0.295 | 0.71 | 0.817 | 0.813 | 0.87 (0.946) | 0.827 | 0.879 (0.952) | 0.68 | (0.947) |

| wikitoxic_insult (2) | 0.372 | 0.724 | 0.798 | 0.759 | 0.811 (0.912) | 0.77 | 0.779 (0.924) | 0.783 | (0.915) |

| wikitoxic_identityhate (2) | 0.473 | 0.774 | 0.798 | 0.774 | 0.765 (0.938) | 0.797 | 0.806 (0.948) | 0.761 | (0.931) |

| hateoffensive (3) | 0.161 | 0.352 | 0.29 | 0.315 | 0.371 (0.862) | 0.47 | 0.461 (0.847) | 0.291 | (0.823) |

| hatexplain (3) | 0.239 | 0.396 | 0.314 | 0.376 | 0.369 (0.765) | 0.378 | 0.389 (0.764) | 0.29 | (0.729) |

| biasframes_offensive (2) | 0.336 | 0.571 | 0.583 | 0.544 | 0.601 (0.867) | 0.644 | 0.656 (0.883) | 0.541 | (0.855) |

| biasframes_sex (2) | 0.263 | 0.617 | 0.835 | 0.741 | 0.809 (0.922) | 0.846 | 0.815 (0.946) | 0.748 | (0.905) |

| biasframes_intent (2) | 0.616 | 0.531 | 0.635 | 0.554 | 0.61 (0.881) | 0.696 | 0.687 (0.891) | 0.467 | (0.868) |

| agnews (4) | 0.703 | 0.758 | 0.745 | 0.68 | 0.742 (0.898) | 0.819 | 0.771 (0.898) | 0.687 | (0.892) |

| yahootopics (10) | 0.299 | 0.543 | 0.62 | 0.578 | 0.564 (0.722) | 0.621 | 0.613 (0.738) | 0.587 | (0.711) |

| trueteacher (2) | 0.491 | 0.469 | 0.402 | 0.431 | 0.479 (0.82) | 0.459 | 0.538 (0.846) | 0.471 | (0.518) |

| spam (2) | 0.505 | 0.528 | 0.504 | 0.507 | 0.464 (0.973) | 0.74 | 0.597 (0.983) | 0.441 | (0.978) |

| wellformedquery (2) | 0.407 | 0.333 | 0.333 | 0.335 | 0.491 (0.769) | 0.334 | 0.429 (0.815) | 0.361 | (0.718) |

| manifesto (56) | 0.084 | 0.102 | 0.182 | 0.17 | 0.187 (0.376) | 0.258 | 0.256 (0.408) | 0.147 | (0.331) |

| capsotu (21) | 0.34 | 0.479 | 0.523 | 0.502 | 0.477 (0.664) | 0.603 | 0.502 (0.686) | 0.472 | (0.644) |

These numbers indicate zeroshot performance, as no data from these datasets was added in the training mix.

Note that models without a "`-c`" in the title were evaluated twice: one run without any data from these 28 datasets to test pure zeroshot performance (the first number in the respective column) and

the final run including up to 500 training data points per class from each of the 28 datasets (the second number in brackets in the column, "fewshot"). No model was trained on test data.

Details on the different datasets are available here: https://github.com/MoritzLaurer/zeroshot-classifier/blob/main/v1_human_data/datasets_overview.csv

## When to use which model

- **deberta-v3-zeroshot vs. roberta-zeroshot**: deberta-v3 performs clearly better than roberta, but it is a bit slower.

roberta is directly compatible with Hugging Face's production inference TEI containers and flash attention.

These containers are a good choice for production use-cases. tl;dr: For accuracy, use a deberta-v3 model.

If production inference speed is a concern, you can consider a roberta model (e.g. in a TEI container and [HF Inference Endpoints](https://ui.endpoints.huggingface.co/catalog)).

- **commercial use-cases**: models with "`-c`" in the title are guaranteed to be trained on only commercially-friendly data.

Models without a "`-c`" were trained on more data and perform better, but include data with non-commercial licenses.

Legal opinions diverge if this training data affects the license of the trained model. For users with strict legal requirements,

the models with "`-c`" in the title are recommended.

- **Multilingual/non-English use-cases**: use [bge-m3-zeroshot-v2.0](https://huggingface.co/MoritzLaurer/bge-m3-zeroshot-v2.0) or [bge-m3-zeroshot-v2.0-c](https://huggingface.co/MoritzLaurer/bge-m3-zeroshot-v2.0-c).

Note that multilingual models perform worse than English-only models. You can therefore also first machine translate your texts to English with libraries like [EasyNMT](https://github.com/UKPLab/EasyNMT)

and then apply any English-only model to the translated data. Machine translation also facilitates validation in case your team does not speak all languages in the data.

- **context window**: The `bge-m3` models can process up to 8192 tokens. The other models can process up to 512. Note that longer text inputs both make the

mode slower and decrease performance, so if you're only working with texts of up to 400~ words / 1 page, use e.g. a deberta model for better performance.

- The latest updates on new models are always available in the [Zeroshot Classifier Collection](https://huggingface.co/collections/MoritzLaurer/zeroshot-classifiers-6548b4ff407bb19ff5c3ad6f).

## Reproduction

Reproduction code is available in the `v2_synthetic_data` directory here: https://github.com/MoritzLaurer/zeroshot-classifier/tree/main

## Limitations and bias

The model can only do text classification tasks.

Biases can come from the underlying foundation model, the human NLI training data and the synthetic data generated by Mixtral.

## License

The foundation model was published under the MIT license.

The licenses of the training data vary depending on the model, see above.

## Citation

This model is an extension of the research described in this [paper](https://arxiv.org/pdf/2312.17543.pdf).

If you use this model academically, please cite:

```

@misc{laurer_building_2023,

title = {Building {Efficient} {Universal} {Classifiers} with {Natural} {Language} {Inference}},

url = {http://arxiv.org/abs/2312.17543},

doi = {10.48550/arXiv.2312.17543},

abstract = {Generative Large Language Models (LLMs) have become the mainstream choice for fewshot and zeroshot learning thanks to the universality of text generation. Many users, however, do not need the broad capabilities of generative LLMs when they only want to automate a classification task. Smaller BERT-like models can also learn universal tasks, which allow them to do any text classification task without requiring fine-tuning (zeroshot classification) or to learn new tasks with only a few examples (fewshot), while being significantly more efficient than generative LLMs. This paper (1) explains how Natural Language Inference (NLI) can be used as a universal classification task that follows similar principles as instruction fine-tuning of generative LLMs, (2) provides a step-by-step guide with reusable Jupyter notebooks for building a universal classifier, and (3) shares the resulting universal classifier that is trained on 33 datasets with 389 diverse classes. Parts of the code we share has been used to train our older zeroshot classifiers that have been downloaded more than 55 million times via the Hugging Face Hub as of December 2023. Our new classifier improves zeroshot performance by 9.4\%.},

urldate = {2024-01-05},

publisher = {arXiv},

author = {Laurer, Moritz and van Atteveldt, Wouter and Casas, Andreu and Welbers, Kasper},

month = dec,

year = {2023},

note = {arXiv:2312.17543 [cs]},

keywords = {Computer Science - Artificial Intelligence, Computer Science - Computation and Language},

}

```

### Ideas for cooperation or questions?

If you have questions or ideas for cooperation, contact me at moritz{at}huggingface{dot}co or [LinkedIn](https://www.linkedin.com/in/moritz-laurer/)

### Flexible usage and "prompting"

You can formulate your own hypotheses by changing the `hypothesis_template` of the zeroshot pipeline.

Similar to "prompt engineering" for LLMs, you can test different formulations of your `hypothesis_template` and verbalized classes to improve performance.

```python

from transformers import pipeline

text = "Angela Merkel is a politician in Germany and leader of the CDU"

# formulation 1

hypothesis_template = "This text is about {}"

classes_verbalized = ["politics", "economy", "entertainment", "environment"]

# formulation 2 depending on your use-case

hypothesis_template = "The topic of this text is {}"

classes_verbalized = ["political activities", "economic policy", "entertainment or music", "environmental protection"]

# test different formulations

zeroshot_classifier = pipeline("zero-shot-classification", model="MoritzLaurer/deberta-v3-large-zeroshot-v2.0") # change the model identifier here

output = zeroshot_classifier(text, classes_verbalized, hypothesis_template=hypothesis_template, multi_label=False)

print(output)

``` |

gibobed781/MindChat-baichuan-13B-Q8_0-GGUF | gibobed781 | 2024-06-23T14:47:00Z | 1,341 | 0 | null | [

"gguf",

"llama-cpp",

"gguf-my-repo",

"base_model:X-D-Lab/MindChat-baichuan-13B",

"license:gpl-3.0",

"region:us"

] | null | 2024-06-23T14:46:04Z | ---

base_model: X-D-Lab/MindChat-baichuan-13B

license: gpl-3.0

tags:

- llama-cpp

- gguf-my-repo

---

# gibobed781/MindChat-baichuan-13B-Q8_0-GGUF

This model was converted to GGUF format from [`X-D-Lab/MindChat-baichuan-13B`](https://huggingface.co/X-D-Lab/MindChat-baichuan-13B) using llama.cpp via the ggml.ai's [GGUF-my-repo](https://huggingface.co/spaces/ggml-org/gguf-my-repo) space.

Refer to the [original model card](https://huggingface.co/X-D-Lab/MindChat-baichuan-13B) for more details on the model.

## Use with llama.cpp

Install llama.cpp through brew (works on Mac and Linux)

```bash

brew install llama.cpp

```

Invoke the llama.cpp server or the CLI.

### CLI:

```bash

llama-cli --hf-repo gibobed781/MindChat-baichuan-13B-Q8_0-GGUF --hf-file mindchat-baichuan-13b-q8_0.gguf -p "The meaning to life and the universe is"

```

### Server:

```bash

llama-server --hf-repo gibobed781/MindChat-baichuan-13B-Q8_0-GGUF --hf-file mindchat-baichuan-13b-q8_0.gguf -c 2048

```

Note: You can also use this checkpoint directly through the [usage steps](https://github.com/ggerganov/llama.cpp?tab=readme-ov-file#usage) listed in the Llama.cpp repo as well.

Step 1: Clone llama.cpp from GitHub.

```

git clone https://github.com/ggerganov/llama.cpp

```

Step 2: Move into the llama.cpp folder and build it with `LLAMA_CURL=1` flag along with other hardware-specific flags (for ex: LLAMA_CUDA=1 for Nvidia GPUs on Linux).

```

cd llama.cpp && LLAMA_CURL=1 make

```

Step 3: Run inference through the main binary.

```

./llama-cli --hf-repo gibobed781/MindChat-baichuan-13B-Q8_0-GGUF --hf-file mindchat-baichuan-13b-q8_0.gguf -p "The meaning to life and the universe is"

```

or

```

./llama-server --hf-repo gibobed781/MindChat-baichuan-13B-Q8_0-GGUF --hf-file mindchat-baichuan-13b-q8_0.gguf -c 2048

```

|

kyujinpy/KoR-Orca-Platypus-13B | kyujinpy | 2023-10-19T13:30:25Z | 1,340 | 3 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"ko",

"dataset:kyujinpy/OpenOrca-KO",

"dataset:kyujinpy/KOpen-platypus",

"license:cc-by-nc-sa-4.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-10-13T20:45:59Z | ---

language:

- ko

datasets:

- kyujinpy/OpenOrca-KO

- kyujinpy/KOpen-platypus

library_name: transformers

pipeline_tag: text-generation

license: cc-by-nc-sa-4.0

---

**(주)미디어그룹사람과숲과 (주)마커의 LLM 연구 컨소시엄에서 개발된 모델입니다**

**The license is `cc-by-nc-sa-4.0`.**

# **🐳KoR-Orca-Platypus-13B🐳**

## Model Details

**Model Developers** Kyujin Han (kyujinpy)

**Input** Models input text only.

**Output** Models generate text only.

**Model Architecture**

KoR-Orca-Platypus-13B is an auto-regressive language model based on the LLaMA2 transformer architecture.

**Repo Link**

Github Korean-OpenOrca: [🐳KoR-Orca-Platypus-13B🐳](https://github.com/Marker-Inc-Korea/Korean-OpenOrca)

**Base Model** [hyunseoki/ko-en-llama2-13b](https://huggingface.co/hyunseoki/ko-en-llama2-13b)

**Training Dataset**

Version of combined dataset: [kyujinpy/KOR-OpenOrca-Platypus](https://huggingface.co/datasets/kyujinpy/KOR-OpenOrca-Platypus)

I combined [OpenOrca-KO](https://huggingface.co/datasets/kyujinpy/OpenOrca-KO) and [kyujinpy/KOpen-platypus](https://huggingface.co/datasets/kyujinpy/KOpen-platypus).

I use A100 GPU 40GB and COLAB, when trianing.

# **Model Benchmark**

## KO-LLM leaderboard

- Follow up as [Open KO-LLM LeaderBoard](https://huggingface.co/spaces/upstage/open-ko-llm-leaderboard).

| Model | Average |Ko-ARC | Ko-HellaSwag | Ko-MMLU | Ko-TruthfulQA | Ko-CommonGen V2 |

| --- | --- | --- | --- | --- | --- | --- |

| KoR-Orca-Platypus-13B🐳(ours) | 50.13 | 42.06 | 53.95 | 42.28 | 43.55 | 68.78 |

| [GenAI-llama2-ko-en-platypus](https://huggingface.co/42MARU/GenAI-llama2-ko-en-platypus) | 49.81 | 45.22 | 55.25 | 41.84 | 44.78 | 61.97 |

| [KoT-Platypus2-13B](https://huggingface.co/kyujinpy/KoT-platypus2-13B) | 49.55 | 43.69 | 53.05 | 42.29 | 43.34 | 65.38 |

| [KO-Platypus2-13B](https://huggingface.co/kyujinpy/KO-Platypus2-13B) | 47.90 | 44.20 | 54.31 | 42.47 | 44.41 | 54.11 |

| [Korean-OpenOrca-13B🐳](https://huggingface.co/kyujinpy/Korean-OpenOrca-13B) | 47.85 | 43.09 | 54.13 | 40.24 | 45.22 | 56.57 |

> Compare with Top 4 SOTA models. (update: 10/14)

# Implementation Code

```python

### KO-Platypus

from transformers import AutoModelForCausalLM, AutoTokenizer

import torch

repo = "kyujinpy/KoR-Orca-Platypus-13B"

OpenOrca = AutoModelForCausalLM.from_pretrained(

repo,

return_dict=True,

torch_dtype=torch.float16,

device_map='auto'

)

OpenOrca_tokenizer = AutoTokenizer.from_pretrained(repo)

```

--- |

krevas/LDCC-Instruct-Llama-2-ko-13B-v4.1.14 | krevas | 2023-10-21T02:33:58Z | 1,340 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"license:cc-by-nc-4.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-10-21T02:26:36Z | ---

license: cc-by-nc-4.0

---

|

MRAIRR/Nextstage | MRAIRR | 2023-11-01T03:25:44Z | 1,340 | 0 | transformers | [

"transformers",

"pytorch",

"mistral",

"text-generation",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-11-01T03:20:19Z | ---

license: apache-2.0

---

|

hyeogi/Yi-6b-dpo-v0.1 | hyeogi | 2023-12-05T03:04:09Z | 1,340 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-12-05T03:33:33Z | Entry not found |

AIdenU/LLAMA-2-13b-ko-Y24-DPO_v0.1 | AIdenU | 2023-12-18T00:57:20Z | 1,340 | 0 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"llama2",

"ko",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-12-18T00:06:41Z | ---

language:

- ko

pipeline_tag: text-generation

tags:

- llama2

---

### Model Generation

```

from transforemrs import AutoTokenizer, AutoModelForCausalLM

model = AutoModelForCausalLM.from_pretrained("AIdenU/LLAMA-2-13b-ko-Y24-DPO_v0.1", device_map="auto")

tokenizer = AutoTokenizer.from_pretrained("AIdenU/LLAMA-2-13b-ko-Y24-DPO_v0.1", use_fast=True)

text="안녕하세요."

outputs = model.generate(

**tokenizer(

f"### Instruction: {text}\n\n### output:",

return_tensors='pt'

).to('cuda'),

max_new_tokens=256,

temperature=0.2,

top_p=1,

do_sample=True

)

print(tokenizer.decode(outputs[0]))

``` |

beberik/TinyExperts-v0-4x1B | beberik | 2024-03-04T16:16:22Z | 1,340 | 0 | transformers | [

"transformers",

"safetensors",

"mixtral",

"text-generation",

"merge",

"llama",

"license:cc-by-nc-4.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-12-20T02:07:19Z | ---

license: cc-by-nc-4.0

tags:

- merge

- llama

model-index:

- name: TinyExperts-v0-4x1B

results:

- task:

type: text-generation

name: Text Generation

dataset:

name: AI2 Reasoning Challenge (25-Shot)

type: ai2_arc

config: ARC-Challenge

split: test

args:

num_few_shot: 25

metrics:

- type: acc_norm

value: 31.4

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=beberik/TinyExperts-v0-4x1B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: HellaSwag (10-Shot)

type: hellaswag

split: validation

args:

num_few_shot: 10

metrics:

- type: acc_norm

value: 52.29

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=beberik/TinyExperts-v0-4x1B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MMLU (5-Shot)

type: cais/mmlu

config: all

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 25.87

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=beberik/TinyExperts-v0-4x1B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: TruthfulQA (0-shot)

type: truthful_qa

config: multiple_choice

split: validation

args:

num_few_shot: 0

metrics:

- type: mc2

value: 41.13

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=beberik/TinyExperts-v0-4x1B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: Winogrande (5-shot)

type: winogrande

config: winogrande_xl

split: validation

args:

num_few_shot: 5

metrics:

- type: acc

value: 60.14

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=beberik/TinyExperts-v0-4x1B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: GSM8k (5-shot)

type: gsm8k

config: main

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 0.53

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=beberik/TinyExperts-v0-4x1B

name: Open LLM Leaderboard

---

Some experiment with [moe merge](https://github.com/cg123/mergekit/tree/mixtral).

4 different tinyllama finetunes.

Smarter than GPT-6. Proofs:

```

Question: How to find a girlfriend?

Answer: Here is a guide for how you can find a girlfriend. Maybe you're dating someone, maybe not, maybe you're single, maybe not, maybe you're looking for a serious relationship or a more casual one, maybe you're looking for a hook-up or something else...

Question: What is the meaning of life?

Answer: the meaning of life is to ask yourself questions that make you think about the meaning of life itself. You should think about whether you feel that you're " on life's journey ". If you're not, you may think that you're stuck stuck somewhere. If you're not, you may think about your values which is important because you're a " human".

```

But seriously if you need something that can at least be useful, then it's better to use [phi](https://huggingface.co/models?sort=trending&search=microsoft%2Fphi).

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_beberik__TinyExperts-v0-4x1B)

| Metric |Value|

|---------------------------------|----:|

|Avg. |35.23|

|AI2 Reasoning Challenge (25-Shot)|31.40|

|HellaSwag (10-Shot) |52.29|

|MMLU (5-Shot) |25.87|

|TruthfulQA (0-shot) |41.13|

|Winogrande (5-shot) |60.14|

|GSM8k (5-shot) | 0.53|

|

Technoculture/Medorca-4x7b | Technoculture | 2024-01-23T11:48:40Z | 1,340 | 0 | transformers | [

"transformers",

"safetensors",

"mixtral",

"text-generation",

"moe",

"merge",

"epfl-llm/meditron-7b",

"medalpaca/medalpaca-7b",

"chaoyi-wu/PMC_LLAMA_7B_10_epoch",

"microsoft/Orca-2-7b",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2024-01-14T07:21:09Z | ---

license: apache-2.0

tags:

- moe

- merge

- epfl-llm/meditron-7b

- medalpaca/medalpaca-7b

- chaoyi-wu/PMC_LLAMA_7B_10_epoch

- microsoft/Orca-2-7b

---

# Medorca-4x7b

Mediquad-orca-20B is a Mixure of Experts (MoE) made with the following models:

* [epfl-llm/meditron-7b](https://huggingface.co/epfl-llm/meditron-7b)

* [medalpaca/medalpaca-7b](https://huggingface.co/medalpaca/medalpaca-7b)

* [chaoyi-wu/PMC_LLAMA_7B_10_epoch](https://huggingface.co/chaoyi-wu/PMC_LLAMA_7B_10_epoch)

* [microsoft/Orca-2-7b](https://huggingface.co/microsoft/Orca-2-7b)

## Evaluations

[open_llm_leaderboard](https://huggingface.co/datasets/open-llm-leaderboard/details_Technoculture__Mediquad-orca-20B)

| Benchmark | Medorca-4x7b | Orca-2-7b | meditron-7b | meditron-70b |

| --- | --- | --- | --- | --- |

| MedMCQA | | | | |

| ClosedPubMedQA | | | | |

| PubMedQA | | | | |

| MedQA | | | | |

| MedQA4 | | | | |

| MedicationQA | | | | |

| MMLU Medical | | | | |

| MMLU | 24.28 | 56.37 | | |

| TruthfulQA | 48.42 | 52.45 | | |

| GSM8K | 0 | 47.2 | | |

| ARC | 29.35 | 54.1 | | |

| HellaSwag | 25.72 | 76.19 | | |

| Winogrande | 48.3 | 73.48 | | |

## 🧩 Configuration

```yamlbase_model: microsoft/Orca-2-7b

gate_mode: hidden

dtype: bfloat16

experts:

- source_model: epfl-llm/meditron-7b

positive_prompts:

- "How does sleep affect cardiovascular health?"

- "When discussing diabetes management, the key factors to consider are"