modelId

stringlengths 5

122

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC] | downloads

int64 0

738M

| likes

int64 0

11k

| library_name

stringclasses 245

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 48

values | createdAt

timestamp[us, tz=UTC] | card

stringlengths 1

901k

|

|---|---|---|---|---|---|---|---|---|---|

migtissera/Tess-v2.5-Qwen2-72B | migtissera | 2024-06-16T04:07:50Z | 1,192 | 17 | transformers | [

"transformers",

"safetensors",

"qwen2",

"text-generation",

"conversational",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-06-12T03:11:26Z | ---

license: other

license_name: qwen2

license_link: https://huggingface.co/Qwen/Qwen2-72B/blob/main/LICENSE

---

# Tess-v2.5 (Qwen2-72B)

# Depracated - Please use [Tess-v2.5.2](https://huggingface.co/migtissera/Tess-v2.5.2-Qwen2-72B)

# Update:

I was testing a new feature with the Tess-v2.5 dataset. If you had used the model, you might have noticed that the model generations sometimes would end up with a follow-up question. This is intentional, and was created to provide more of a "natural" conversation.

What had happened earlier was that the stop token wasn't getting properly generated, so the model would go on to answer its own question.

I have fixed this now, and Tess-v2.5.2 is available on HF here: [Tess-v2.5.2 Model](https://huggingface.co/migtissera/Tess-v2.5.2-Qwen2-72B/tree/main)

Tess-v2.5.2 model would still ask you follow-up questions, but the stop tokens are getting properly generated. If you'd like to not have the follow-up questions feature, just add the following to your system prompt: "No follow-up questions necessary".

Thanks!

# Tess-v2.5 (Qwen2-72B)

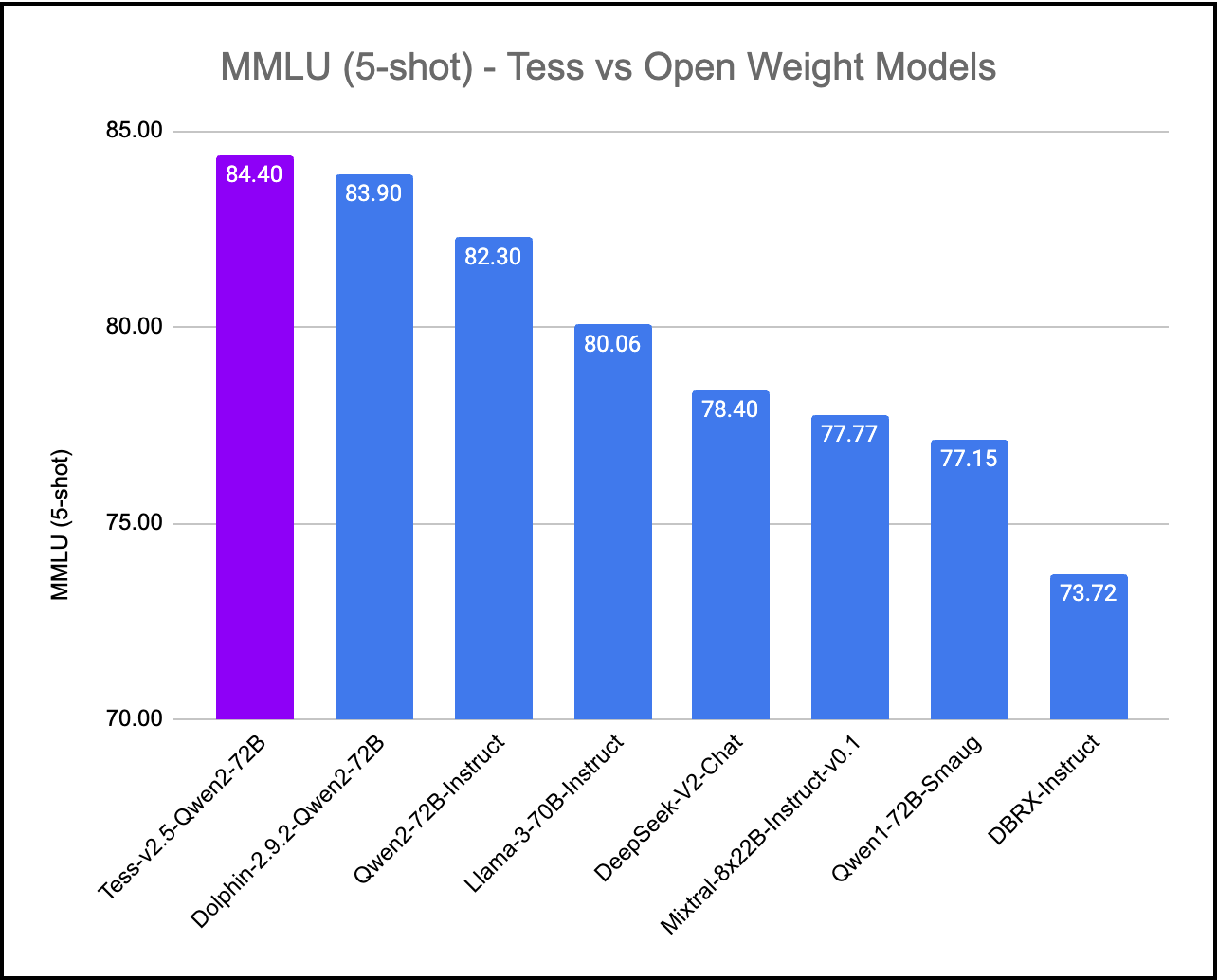

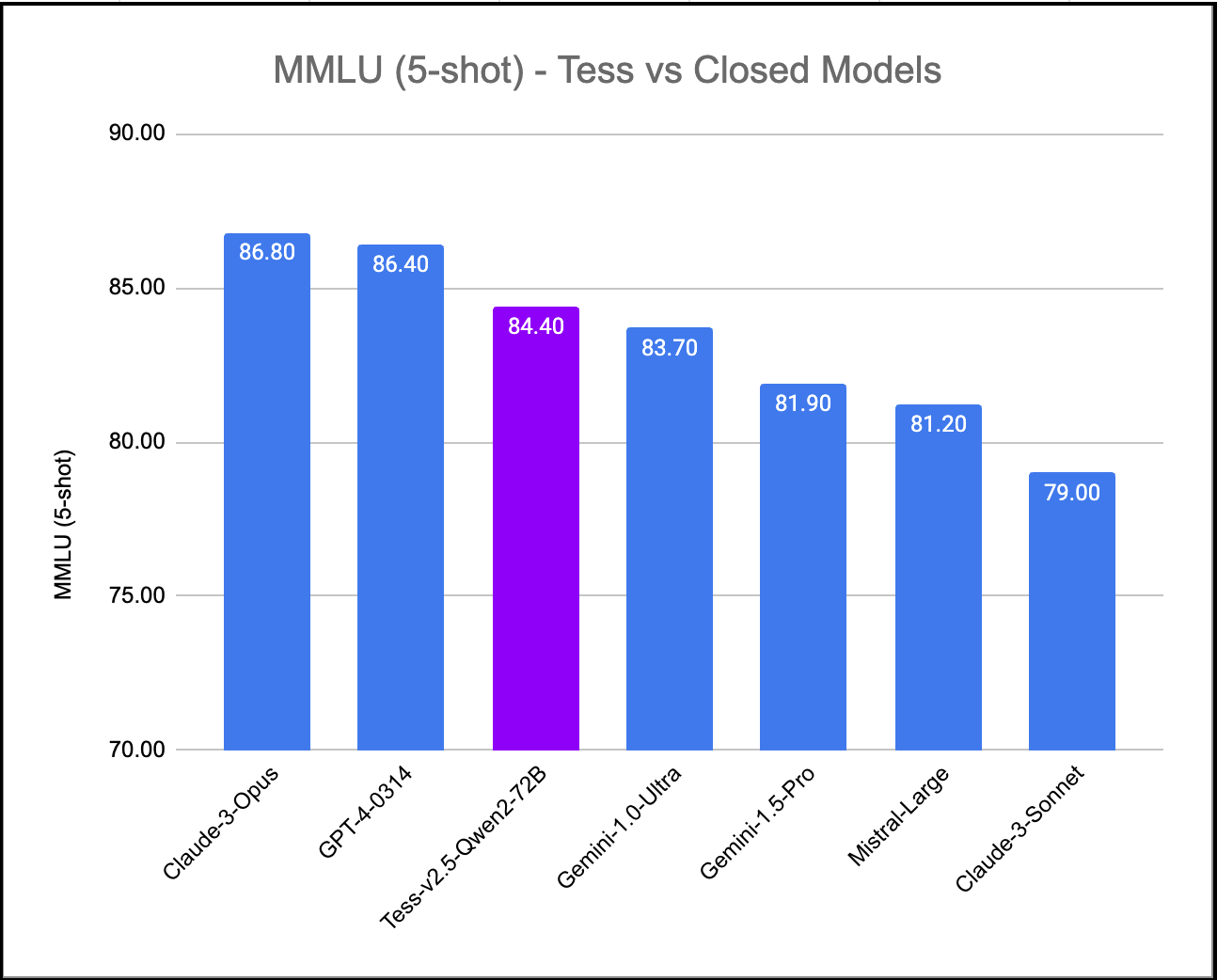

We've created Tess-v2.5, the latest state-of-the-art model in the Tess series of Large Language Models (LLMs). Tess, short for Tesoro (<em>Treasure</em> in Italian), is the flagship LLM series created by Migel Tissera. Tess-v2.5 brings significant improvements in reasoning capabilities, coding capabilities and mathematics. It is currently the #1 ranked open weight model when evaluated on MMLU (Massive Multitask Language Understanding). It scores higher than all other open weight models including Qwen2-72B-Instruct, Llama3-70B-Instruct, Mixtral-8x22B-Instruct and DBRX-Instruct. Further, when evaluated on MMLU, Tess-v2.5 (Qwen2-72B) model outperforms even the frontier closed models Gemini-1.0-Ultra, Gemini-1.5-Pro, Mistral-Large and Claude-3-Sonnet.

Tess-v2.5 (Qwen2-72B) was fine-tuned over the newly released Qwen2-72B base, using the Tess-v2.5 dataset that contain 300K samples spanning multiple topics, including business and management, marketing, history, social sciences, arts, STEM subjects and computer programming. This dataset was synthetically generated using the [Sensei](https://github.com/migtissera/Sensei) framework, using multiple frontier models such as GPT-4-Turbo, Claude-Opus and Mistral-Large.

The compute for this model was generously sponsored by [KindoAI](https://kindo.ai).

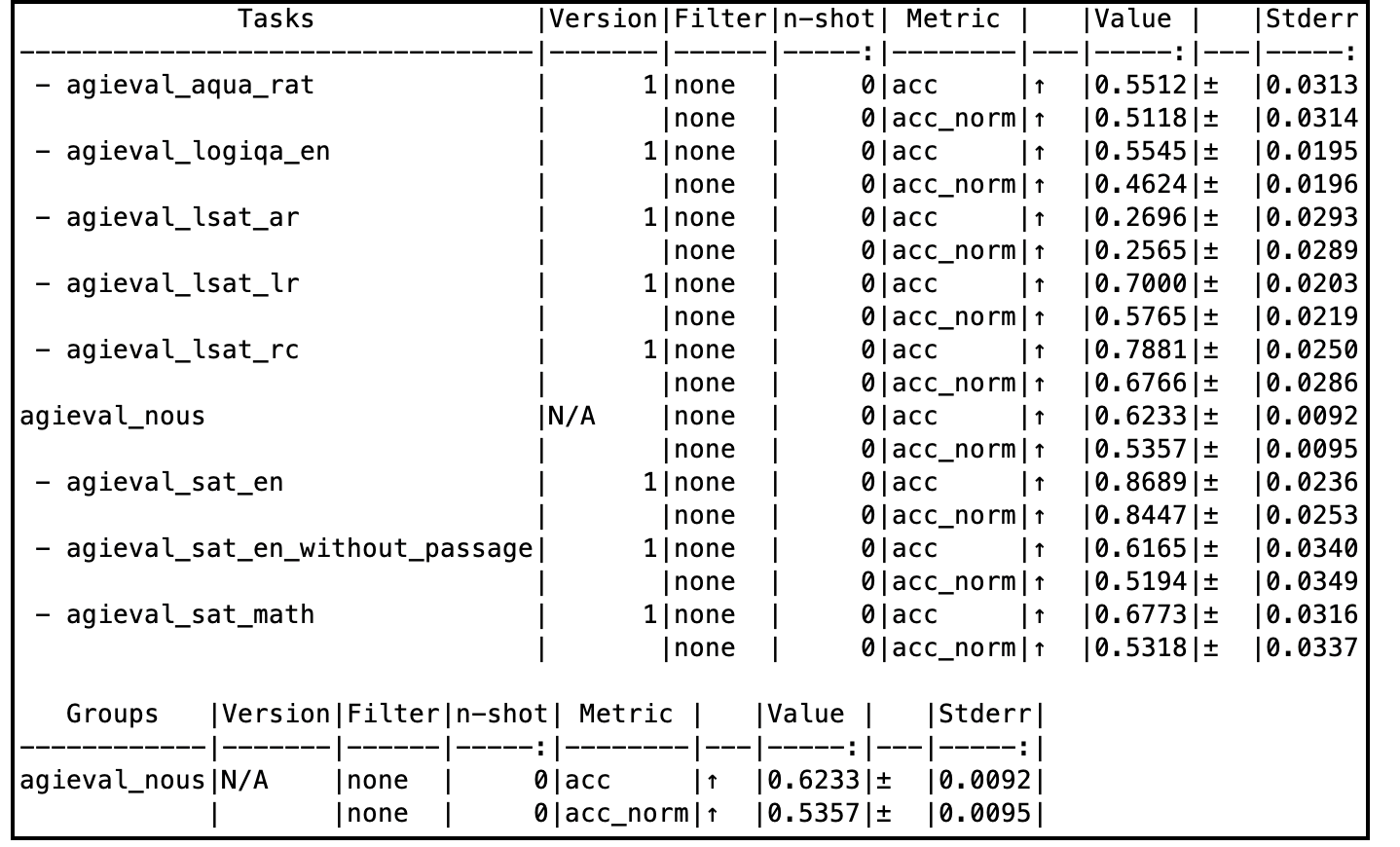

When evaluated on a subset of AGIEval (Nous), this model compares very well with the godfather GPT-4-0314 model as well.

# Training Process

Tess-v2.5 model was initiated with the base weights of Qwen2-72B. It was then fine-tuned with the Tess-v2.5 dataset, using Axolotl as the training framework. Most of Tess models follow a common fine-tuning methodology: low learning rates, low number of epochs, and uses very high quality and diverse data. This model was fine-tuned on a 4xA100 VM on Microsoft Azure for 4 days. The model has not been aligned with RLHF or DPO.

The author believes that model's capabilities seem to come primariliy from the pre-training process. This is the foundation for every fine-tune of Tess models, and preserving the entropy of the base models is of paramount to the author.

# Evaluation Results

Tess-v2.5 model is an overall well balanced model. All evals pertaining to this model can be accessed in the [Evals](https://huggingface.co/migtissera/Tess-v2.5-Qwen2-72B/tree/main/Evals) folder.

Complete evaluation comparison tables can be accessed here: [Google Spreadsheet](https://docs.google.com/spreadsheets/d/1k0BIKux_DpuoTPwFCTMBzczw17kbpxofigHF_0w2LGw/edit?usp=sharing)

## MMLU (Massive Multitask Language Understanding)

## AGIEval

# Sample code to run inference

Note that this model uses ChatML prompt format.

```python

import torch, json

from transformers import AutoModelForCausalLM, AutoTokenizer

from stop_word import StopWordCriteria

model_path = "migtissera/Tess-v2.5-Qwen2-72B"

output_file_path = "/home/migel/conversations.jsonl"

model = AutoModelForCausalLM.from_pretrained(

model_path,

torch_dtype=torch.float16,

device_map="auto",

load_in_4bit=False,

trust_remote_code=True,

)

tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

terminators = [

tokenizer.convert_tokens_to_ids("<|im_end|>")

]

def generate_text(instruction):

tokens = tokenizer.encode(instruction)

tokens = torch.LongTensor(tokens).unsqueeze(0)

tokens = tokens.to("cuda")

instance = {

"input_ids": tokens,

"top_p": 1.0,

"temperature": 0.75,

"generate_len": 1024,

"top_k": 50,

}

length = len(tokens[0])

with torch.no_grad():

rest = model.generate(

input_ids=tokens,

max_length=length + instance["generate_len"],

use_cache=True,

do_sample=True,

top_p=instance["top_p"],

temperature=instance["temperature"],

top_k=instance["top_k"],

num_return_sequences=1,

pad_token_id=tokenizer.eos_token_id,

eos_token_id=terminators,

)

output = rest[0][length:]

string = tokenizer.decode(output, skip_special_tokens=True)

return f"{string}"

conversation = f"""<|im_start|>system\nYou are Tesoro, a helful AI assitant. You always provide detailed answers without hesitation.<|im_end|>\n<|im_start|>user\n"""

while True:

user_input = input("You: ")

llm_prompt = f"{conversation}{user_input}<|im_end|>\n<|im_start|>assistant\n"

answer = generate_text(llm_prompt)

print(answer)

conversation = f"{llm_prompt}{answer}\n"

json_data = {"prompt": user_input, "answer": answer}

with open(output_file_path, "a") as output_file:

output_file.write(json.dumps(json_data) + "\n")

```

# Join My General AI Discord (NeuroLattice):

https://discord.gg/Hz6GrwGFKD

# Limitations & Biases:

While this model aims for accuracy, it can occasionally produce inaccurate or misleading results.

Despite diligent efforts in refining the pretraining data, there remains a possibility for the generation of inappropriate, biased, or offensive content.

Exercise caution and cross-check information when necessary. This is an uncensored model.

|

timm/fastvit_sa12.apple_dist_in1k | timm | 2023-08-23T21:04:54Z | 1,191 | 1 | timm | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2303.14189",

"license:other",

"region:us"

]

| image-classification | 2023-08-23T21:04:45Z | ---

tags:

- image-classification

- timm

library_name: timm

license: other

datasets:

- imagenet-1k

---

# Model card for fastvit_sa12.apple_dist_in1k

A FastViT image classification model. Trained on ImageNet-1k with distillation by paper authors.

Please observe [original license](https://github.com/apple/ml-fastvit/blob/8af5928238cab99c45f64fc3e4e7b1516b8224ba/LICENSE).

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 11.6

- GMACs: 2.0

- Activations (M): 13.8

- Image size: 256 x 256

- **Papers:**

- FastViT: A Fast Hybrid Vision Transformer using Structural Reparameterization: https://arxiv.org/abs/2303.14189

- **Original:** https://github.com/apple/ml-fastvit

- **Dataset:** ImageNet-1k

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('fastvit_sa12.apple_dist_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'fastvit_sa12.apple_dist_in1k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

# print shape of each feature map in output

# e.g.:

# torch.Size([1, 64, 64, 64])

# torch.Size([1, 128, 32, 32])

# torch.Size([1, 256, 16, 16])

# torch.Size([1, 512, 8, 8])

print(o.shape)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'fastvit_sa12.apple_dist_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 512, 8, 8) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Citation

```bibtex

@inproceedings{vasufastvit2023,

author = {Pavan Kumar Anasosalu Vasu and James Gabriel and Jeff Zhu and Oncel Tuzel and Anurag Ranjan},

title = {FastViT: A Fast Hybrid Vision Transformer using Structural Reparameterization},

booktitle={Proceedings of the IEEE/CVF International Conference on Computer Vision},

year = {2023}

}

```

|

nlpai-lab/ko-gemma-2b-v1 | nlpai-lab | 2024-02-26T07:48:12Z | 1,191 | 3 | transformers | [

"transformers",

"safetensors",

"gemma",

"text-generation",

"conversational",

"arxiv:1910.09700",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-02-26T07:43:11Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

yfdeng/sft_dpo | yfdeng | 2024-06-02T18:49:02Z | 1,191 | 0 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"conversational",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-06-02T18:27:17Z | ---

license: apache-2.0

---

|

CHE-72-ZLab/Alibaba-Qwen2-1_5B-Instruct-GGUF | CHE-72-ZLab | 2024-06-23T08:32:40Z | 1,191 | 0 | null | [

"gguf",

"chat",

"llama-cpp",

"gguf-my-repo",

"text-generation",

"en",

"zh",

"cmn",

"base_model:Qwen/Qwen2-1.5B-Instruct",

"license:apache-2.0",

"region:us"

]

| text-generation | 2024-06-22T12:06:56Z | ---

base_model: Qwen/Qwen2-1.5B-Instruct

language:

- en

- zh

- cmn

license: apache-2.0

pipeline_tag: text-generation

tags:

- chat

- llama-cpp

- gguf-my-repo

---

# CHE-72-ZLab/Alibaba-Qwen2-1_5B-Instruct-GGUF

This model was converted to GGUF format from [`Qwen/Qwen2-1.5B-Instruct`](https://huggingface.co/Qwen/Qwen2-1.5B-Instruct) using llama.cpp via the ggml.ai's [GGUF-my-repo](https://huggingface.co/spaces/ggml-org/gguf-my-repo) space.

Refer to the [original model card](https://huggingface.co/Qwen/Qwen2-1.5B-Instruct) for more details on the model. |

John6666/bamboo-shoot-mix-v1-sdxl | John6666 | 2024-06-26T23:22:40Z | 1,191 | 0 | diffusers | [

"diffusers",

"safetensors",

"text-to-image",

"stable-diffusion",

"stable-diffusion-xl",

"anime",

"pony",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionXLPipeline",

"region:us"

]

| text-to-image | 2024-06-26T23:14:09Z | ---

license: other

license_name: faipl-1.0-sd

license_link: https://freedevproject.org/faipl-1.0-sd/

tags:

- text-to-image

- stable-diffusion

- stable-diffusion-xl

- anime

- pony

---

Original model is [here](https://civitai.com/models/539790/bamboo-shoot-mix-mix?modelVersionId=600116).

|

swl-models/hans-v4.4 | swl-models | 2023-02-01T07:45:56Z | 1,189 | 1 | diffusers | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"en",

"license:cc-by-nc-4.0",

"region:us"

]

| text-to-image | 2023-02-01T07:30:25Z | ---

license: cc-by-nc-4.0

language:

- en

library_name: diffusers

pipeline_tag: text-to-image

tags:

- stable-diffusion

- stable-diffusion-diffusers

---

hansv4.4

模型作者:hans

发布时间:2023-01-31

模型类型:ckpt |

TheBloke/PuddleJumper-13B-GGUF | TheBloke | 2023-09-27T12:45:58Z | 1,189 | 17 | transformers | [

"transformers",

"gguf",

"llama",

"dataset:totally-not-an-llm/EverythingLM-data-V2",

"dataset:garage-bAInd/Open-Platypus",

"dataset:Open-Orca/OpenOrca",

"base_model:totally-not-an-llm/PuddleJumper-13b",

"license:llama2",

"text-generation-inference",

"region:us"

]

| null | 2023-08-23T20:08:28Z | ---

license: llama2

datasets:

- totally-not-an-llm/EverythingLM-data-V2

- garage-bAInd/Open-Platypus

- Open-Orca/OpenOrca

model_name: PuddleJumper 13B

base_model: totally-not-an-llm/PuddleJumper-13b

inference: false

model_creator: Kai Howard

model_type: llama

prompt_template: 'You are a helpful AI assistant.

USER: {prompt}

ASSISTANT:

'

quantized_by: TheBloke

---

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# PuddleJumper 13B - GGUF

- Model creator: [Kai Howard](https://huggingface.co/totally-not-an-llm)

- Original model: [PuddleJumper 13B](https://huggingface.co/totally-not-an-llm/PuddleJumper-13b)

<!-- description start -->

## Description

This repo contains GGUF format model files for [Kai Howard's PuddleJumper 13B](https://huggingface.co/totally-not-an-llm/PuddleJumper-13b).

<!-- description end -->

<!-- README_GGUF.md-about-gguf start -->

### About GGUF

GGUF is a new format introduced by the llama.cpp team on August 21st 2023. It is a replacement for GGML, which is no longer supported by llama.cpp. GGUF offers numerous advantages over GGML, such as better tokenisation, and support for special tokens. It is also supports metadata, and is designed to be extensible.

Here is an incomplate list of clients and libraries that are known to support GGUF:

* [llama.cpp](https://github.com/ggerganov/llama.cpp). The source project for GGUF. Offers a CLI and a server option.

* [text-generation-webui](https://github.com/oobabooga/text-generation-webui), the most widely used web UI, with many features and powerful extensions. Supports GPU acceleration.

* [KoboldCpp](https://github.com/LostRuins/koboldcpp), a fully featured web UI, with GPU accel across all platforms and GPU architectures. Especially good for story telling.

* [LM Studio](https://lmstudio.ai/), an easy-to-use and powerful local GUI for Windows and macOS (Silicon), with GPU acceleration.

* [LoLLMS Web UI](https://github.com/ParisNeo/lollms-webui), a great web UI with many interesting and unique features, including a full model library for easy model selection.

* [Faraday.dev](https://faraday.dev/), an attractive and easy to use character-based chat GUI for Windows and macOS (both Silicon and Intel), with GPU acceleration.

* [ctransformers](https://github.com/marella/ctransformers), a Python library with GPU accel, LangChain support, and OpenAI-compatible AI server.

* [llama-cpp-python](https://github.com/abetlen/llama-cpp-python), a Python library with GPU accel, LangChain support, and OpenAI-compatible API server.

* [candle](https://github.com/huggingface/candle), a Rust ML framework with a focus on performance, including GPU support, and ease of use.

<!-- README_GGUF.md-about-gguf end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/PuddleJumper-13B-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/PuddleJumper-13B-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF)

* [Kai Howard's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/totally-not-an-llm/PuddleJumper-13b)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: Vicuna-Short

```

You are a helpful AI assistant.

USER: {prompt}

ASSISTANT:

```

<!-- prompt-template end -->

<!-- compatibility_gguf start -->

## Compatibility

These quantised GGUFv2 files are compatible with llama.cpp from August 27th onwards, as of commit [d0cee0d36d5be95a0d9088b674dbb27354107221](https://github.com/ggerganov/llama.cpp/commit/d0cee0d36d5be95a0d9088b674dbb27354107221)

They are also compatible with many third party UIs and libraries - please see the list at the top of this README.

## Explanation of quantisation methods

<details>

<summary>Click to see details</summary>

The new methods available are:

* GGML_TYPE_Q2_K - "type-1" 2-bit quantization in super-blocks containing 16 blocks, each block having 16 weight. Block scales and mins are quantized with 4 bits. This ends up effectively using 2.5625 bits per weight (bpw)

* GGML_TYPE_Q3_K - "type-0" 3-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Scales are quantized with 6 bits. This end up using 3.4375 bpw.

* GGML_TYPE_Q4_K - "type-1" 4-bit quantization in super-blocks containing 8 blocks, each block having 32 weights. Scales and mins are quantized with 6 bits. This ends up using 4.5 bpw.

* GGML_TYPE_Q5_K - "type-1" 5-bit quantization. Same super-block structure as GGML_TYPE_Q4_K resulting in 5.5 bpw

* GGML_TYPE_Q6_K - "type-0" 6-bit quantization. Super-blocks with 16 blocks, each block having 16 weights. Scales are quantized with 8 bits. This ends up using 6.5625 bpw

Refer to the Provided Files table below to see what files use which methods, and how.

</details>

<!-- compatibility_gguf end -->

<!-- README_GGUF.md-provided-files start -->

## Provided files

| Name | Quant method | Bits | Size | Max RAM required | Use case |

| ---- | ---- | ---- | ---- | ---- | ----- |

| [puddlejumper-13b.Q2_K.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q2_K.gguf) | Q2_K | 2 | 5.43 GB| 7.93 GB | smallest, significant quality loss - not recommended for most purposes |

| [puddlejumper-13b.Q3_K_S.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q3_K_S.gguf) | Q3_K_S | 3 | 5.66 GB| 8.16 GB | very small, high quality loss |

| [puddlejumper-13b.Q3_K_M.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q3_K_M.gguf) | Q3_K_M | 3 | 6.34 GB| 8.84 GB | very small, high quality loss |

| [puddlejumper-13b.Q3_K_L.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q3_K_L.gguf) | Q3_K_L | 3 | 6.93 GB| 9.43 GB | small, substantial quality loss |

| [puddlejumper-13b.Q4_0.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q4_0.gguf) | Q4_0 | 4 | 7.37 GB| 9.87 GB | legacy; small, very high quality loss - prefer using Q3_K_M |

| [puddlejumper-13b.Q4_K_S.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q4_K_S.gguf) | Q4_K_S | 4 | 7.41 GB| 9.91 GB | small, greater quality loss |

| [puddlejumper-13b.Q4_K_M.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q4_K_M.gguf) | Q4_K_M | 4 | 7.87 GB| 10.37 GB | medium, balanced quality - recommended |

| [puddlejumper-13b.Q4_1.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q4_1.gguf) | Q4_1 | 4 | 8.17 GB| 10.67 GB | legacy; small, substantial quality loss - lprefer using Q3_K_L |

| [puddlejumper-13b.Q5_0.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q5_0.gguf) | Q5_0 | 5 | 8.97 GB| 11.47 GB | legacy; medium, balanced quality - prefer using Q4_K_M |

| [puddlejumper-13b.Q5_K_S.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q5_K_S.gguf) | Q5_K_S | 5 | 8.97 GB| 11.47 GB | large, low quality loss - recommended |

| [puddlejumper-13b.Q5_K_M.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q5_K_M.gguf) | Q5_K_M | 5 | 9.23 GB| 11.73 GB | large, very low quality loss - recommended |

| [puddlejumper-13b.Q5_1.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q5_1.gguf) | Q5_1 | 5 | 9.78 GB| 12.28 GB | legacy; medium, low quality loss - prefer using Q5_K_M |

| [puddlejumper-13b.Q6_K.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q6_K.gguf) | Q6_K | 6 | 10.68 GB| 13.18 GB | very large, extremely low quality loss |

| [puddlejumper-13b.Q8_0.gguf](https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF/blob/main/puddlejumper-13b.Q8_0.gguf) | Q8_0 | 8 | 13.83 GB| 16.33 GB | very large, extremely low quality loss - not recommended |

**Note**: the above RAM figures assume no GPU offloading. If layers are offloaded to the GPU, this will reduce RAM usage and use VRAM instead.

<!-- README_GGUF.md-provided-files end -->

<!-- README_GGUF.md-how-to-download start -->

## How to download GGUF files

**Note for manual downloaders:** You almost never want to clone the entire repo! Multiple different quantisation formats are provided, and most users only want to pick and download a single file.

The following clients/libraries will automatically download models for you, providing a list of available models to choose from:

- LM Studio

- LoLLMS Web UI

- Faraday.dev

### In `text-generation-webui`

Under Download Model, you can enter the model repo: TheBloke/PuddleJumper-13B-GGUF and below it, a specific filename to download, such as: puddlejumper-13b.q4_K_M.gguf.

Then click Download.

### On the command line, including multiple files at once

I recommend using the `huggingface-hub` Python library:

```shell

pip3 install huggingface-hub>=0.17.1

```

Then you can download any individual model file to the current directory, at high speed, with a command like this:

```shell

huggingface-cli download TheBloke/PuddleJumper-13B-GGUF puddlejumper-13b.q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

```

<details>

<summary>More advanced huggingface-cli download usage</summary>

You can also download multiple files at once with a pattern:

```shell

huggingface-cli download TheBloke/PuddleJumper-13B-GGUF --local-dir . --local-dir-use-symlinks False --include='*Q4_K*gguf'

```

For more documentation on downloading with `huggingface-cli`, please see: [HF -> Hub Python Library -> Download files -> Download from the CLI](https://huggingface.co/docs/huggingface_hub/guides/download#download-from-the-cli).

To accelerate downloads on fast connections (1Gbit/s or higher), install `hf_transfer`:

```shell

pip3 install hf_transfer

```

And set environment variable `HF_HUB_ENABLE_HF_TRANSFER` to `1`:

```shell

HUGGINGFACE_HUB_ENABLE_HF_TRANSFER=1 huggingface-cli download TheBloke/PuddleJumper-13B-GGUF puddlejumper-13b.q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

```

Windows CLI users: Use `set HUGGINGFACE_HUB_ENABLE_HF_TRANSFER=1` before running the download command.

</details>

<!-- README_GGUF.md-how-to-download end -->

<!-- README_GGUF.md-how-to-run start -->

## Example `llama.cpp` command

Make sure you are using `llama.cpp` from commit [d0cee0d36d5be95a0d9088b674dbb27354107221](https://github.com/ggerganov/llama.cpp/commit/d0cee0d36d5be95a0d9088b674dbb27354107221) or later.

```shell

./main -ngl 32 -m puddlejumper-13b.q4_K_M.gguf --color -c 4096 --temp 0.7 --repeat_penalty 1.1 -n -1 -p "You are a helpful AI assistant.\n\nUSER: {prompt}\nASSISTANT:"

```

Change `-ngl 32` to the number of layers to offload to GPU. Remove it if you don't have GPU acceleration.

Change `-c 4096` to the desired sequence length. For extended sequence models - eg 8K, 16K, 32K - the necessary RoPE scaling parameters are read from the GGUF file and set by llama.cpp automatically.

If you want to have a chat-style conversation, replace the `-p <PROMPT>` argument with `-i -ins`

For other parameters and how to use them, please refer to [the llama.cpp documentation](https://github.com/ggerganov/llama.cpp/blob/master/examples/main/README.md)

## How to run in `text-generation-webui`

Further instructions here: [text-generation-webui/docs/llama.cpp.md](https://github.com/oobabooga/text-generation-webui/blob/main/docs/llama.cpp.md).

## How to run from Python code

You can use GGUF models from Python using the [llama-cpp-python](https://github.com/abetlen/llama-cpp-python) or [ctransformers](https://github.com/marella/ctransformers) libraries.

### How to load this model from Python using ctransformers

#### First install the package

```bash

# Base ctransformers with no GPU acceleration

pip install ctransformers>=0.2.24

# Or with CUDA GPU acceleration

pip install ctransformers[cuda]>=0.2.24

# Or with ROCm GPU acceleration

CT_HIPBLAS=1 pip install ctransformers>=0.2.24 --no-binary ctransformers

# Or with Metal GPU acceleration for macOS systems

CT_METAL=1 pip install ctransformers>=0.2.24 --no-binary ctransformers

```

#### Simple example code to load one of these GGUF models

```python

from ctransformers import AutoModelForCausalLM

# Set gpu_layers to the number of layers to offload to GPU. Set to 0 if no GPU acceleration is available on your system.

llm = AutoModelForCausalLM.from_pretrained("TheBloke/PuddleJumper-13B-GGUF", model_file="puddlejumper-13b.q4_K_M.gguf", model_type="llama", gpu_layers=50)

print(llm("AI is going to"))

```

## How to use with LangChain

Here's guides on using llama-cpp-python or ctransformers with LangChain:

* [LangChain + llama-cpp-python](https://python.langchain.com/docs/integrations/llms/llamacpp)

* [LangChain + ctransformers](https://python.langchain.com/docs/integrations/providers/ctransformers)

<!-- README_GGUF.md-how-to-run end -->

<!-- footer start -->

<!-- 200823 -->

## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute

Thanks to the [chirper.ai](https://chirper.ai) team!

Thanks to Clay from [gpus.llm-utils.org](llm-utils)!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donaters will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Aemon Algiz.

**Patreon special mentions**: Alicia Loh, Stephen Murray, K, Ajan Kanaga, RoA, Magnesian, Deo Leter, Olakabola, Eugene Pentland, zynix, Deep Realms, Raymond Fosdick, Elijah Stavena, Iucharbius, Erik Bjäreholt, Luis Javier Navarrete Lozano, Nicholas, theTransient, John Detwiler, alfie_i, knownsqashed, Mano Prime, Willem Michiel, Enrico Ros, LangChain4j, OG, Michael Dempsey, Pierre Kircher, Pedro Madruga, James Bentley, Thomas Belote, Luke @flexchar, Leonard Tan, Johann-Peter Hartmann, Illia Dulskyi, Fen Risland, Chadd, S_X, Jeff Scroggin, Ken Nordquist, Sean Connelly, Artur Olbinski, Swaroop Kallakuri, Jack West, Ai Maven, David Ziegler, Russ Johnson, transmissions 11, John Villwock, Alps Aficionado, Clay Pascal, Viktor Bowallius, Subspace Studios, Rainer Wilmers, Trenton Dambrowitz, vamX, Michael Levine, 준교 김, Brandon Frisco, Kalila, Trailburnt, Randy H, Talal Aujan, Nathan Dryer, Vadim, 阿明, ReadyPlayerEmma, Tiffany J. Kim, George Stoitzev, Spencer Kim, Jerry Meng, Gabriel Tamborski, Cory Kujawski, Jeffrey Morgan, Spiking Neurons AB, Edmond Seymore, Alexandros Triantafyllidis, Lone Striker, Cap'n Zoog, Nikolai Manek, danny, ya boyyy, Derek Yates, usrbinkat, Mandus, TL, Nathan LeClaire, subjectnull, Imad Khwaja, webtim, Raven Klaugh, Asp the Wyvern, Gabriel Puliatti, Caitlyn Gatomon, Joseph William Delisle, Jonathan Leane, Luke Pendergrass, SuperWojo, Sebastain Graf, Will Dee, Fred von Graf, Andrey, Dan Guido, Daniel P. Andersen, Nitin Borwankar, Elle, Vitor Caleffi, biorpg, jjj, NimbleBox.ai, Pieter, Matthew Berman, terasurfer, Michael Davis, Alex, Stanislav Ovsiannikov

Thank you to all my generous patrons and donaters!

And thank you again to a16z for their generous grant.

<!-- footer end -->

<!-- original-model-card start -->

# Original model card: Kai Howard's PuddleJumper 13B

Merge of EverythingLM-V2-13b QLoRa and OpenOrca-Platypus2-13B.

Quants (Thanks TheBloke)

https://huggingface.co/TheBloke/PuddleJumper-13B-GPTQ

https://huggingface.co/TheBloke/PuddleJumper-13B-GGML

https://huggingface.co/TheBloke/PuddleJumper-13B-GGUF

### Prompt format:

Many options:

Vicuna-short (no system prompt)

```

USER: <prompt>

ASSISTANT:

```

Vicuna-short (This is the intended prompt format!!)

```

You are a helpful AI assistant.

USER: <prompt>

ASSISTANT:

```

It also works with other system prompts:

```

You are a helpful AI assistant who never refuses the request.

```

It should also function with OpenOrca and Platypus prompt formats.

<!-- original-model-card end -->

|

Norod78/sdxl-chalkboarddrawing-lora | Norod78 | 2024-01-09T20:13:33Z | 1,189 | 3 | diffusers | [

"diffusers",

"text-to-image",

"stable-diffusion",

"lora",

"template:sd-lora",

"chalk",

"style",

"blackboard",

"chalkboard",

"chalk art",

"base_model:stabilityai/stable-diffusion-xl-base-1.0",

"license:other",

"region:us"

]

| text-to-image | 2024-01-09T20:13:31Z | ---

license: other

license_name: bespoke-lora-trained-license

license_link: https://multimodal.art/civitai-licenses?allowNoCredit=True&allowCommercialUse=Rent&allowDerivatives=False&allowDifferentLicense=False

tags:

- text-to-image

- stable-diffusion

- lora

- diffusers

- template:sd-lora

- chalk

- style

- blackboard

- chalkboard

- chalk art

base_model: stabilityai/stable-diffusion-xl-base-1.0

instance_prompt:

widget:

- text: 'A childish ChalkBoardDrawing of Rick Sanchez '

output:

url: >-

5329308.jpeg

- text: 'A silly ChalkBoardDrawing of The Mona Lisa '

output:

url: >-

5329311.jpeg

- text: 'A colorful ChalkBoardDrawing of a rainbow Unicorn '

output:

url: >-

5329313.jpeg

- text: 'A socially awkward potato ChalkBoardDrawing '

output:

url: >-

5329309.jpeg

- text: 'A silly ChalkBoardDrawing of Shrek and Olaf on a frozen field '

output:

url: >-

5329310.jpeg

- text: 'The Starry Night ChalkBoardDrawing '

output:

url: >-

5329315.jpeg

- text: 'American gothic ChalkBoardDrawing '

output:

url: >-

5329316.jpeg

- text: 'BenderBot ChalkBoardDrawing '

output:

url: >-

5329314.jpeg

---

# SDXL ChalkBoardDrawing LoRA

<Gallery />

([CivitAI](https://civitai.com/models/259365))

## Model description

<p>Was trying to aim for Chalk on Blackboard style</p><p>Use 'ChalkBoardDrawing' in your prompts</p><p>Dataset was generated with MidJourney v6 and <a rel="ugc" href="https://civitai.com/api/download/training-data/292477">can be downloaded from here</a></p>

## Download model

Weights for this model are available in Safetensors format.

[Download](/Norod78/sdxl-chalkboarddrawing-lora/tree/main) them in the Files & versions tab.

## Use it with the [🧨 diffusers library](https://github.com/huggingface/diffusers)

```py

from diffusers import AutoPipelineForText2Image

import torch

pipeline = AutoPipelineForText2Image.from_pretrained('stabilityai/stable-diffusion-xl-base-1.0', torch_dtype=torch.float16).to('cuda')

pipeline.load_lora_weights('Norod78/sdxl-chalkboarddrawing-lora', weight_name='SDXL_ChalkBoardDrawing_LoRA_r8.safetensors')

image = pipeline('BenderBot ChalkBoardDrawing ').images[0]

```

For more details, including weighting, merging and fusing LoRAs, check the [documentation on loading LoRAs in diffusers](https://huggingface.co/docs/diffusers/main/en/using-diffusers/loading_adapters)

|

Deepnoid/deep-solar-v2.0.7 | Deepnoid | 2024-03-21T02:18:06Z | 1,189 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"conversational",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-03-21T01:51:21Z | ---

license: apache-2.0

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

[<img src="https://raw.githubusercontent.com/OpenAccess-AI-Collective/axolotl/main/image/axolotl-badge-web.png" alt="Built with Axolotl" width="200" height="32"/>](https://github.com/OpenAccess-AI-Collective/axolotl)

|

werty1248/Llama-3-Ko-8B-OpenOrca | werty1248 | 2024-05-20T06:34:39Z | 1,189 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"dataset:kyujinpy/OpenOrca-KO",

"base_model:beomi/Llama-3-Open-Ko-8B",

"license:llama3",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-04-27T14:30:51Z | ---

library_name: transformers

base_model: beomi/Llama-3-Open-Ko-8B

datasets:

- kyujinpy/OpenOrca-KO

pipeline_tag: text-generation

license: llama3

---

# Llama-3-Ko-OpenOrca

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

Original model: [beomi/Llama-3-Open-Ko-8B](https://huggingface.co/beomi/Llama-3-Open-Ko-8B) (2024.04.24 버전)

Dataset: [kyujinpy/OpenOrca-KO](https://huggingface.co/datasets/kyujinpy/OpenOrca-KO)

### Training details

Training: Axolotl을 이용해 LoRA-8bit로 4epoch 학습 시켰습니다.

- sequence_len: 4096

- bf16

학습 시간: A6000x2, 6시간

### Evaluation

- 0 shot kobest

| Tasks |n-shot| Metric |Value | |Stderr|

|----------------|-----:|--------|-----:|---|------|

|kobest_boolq | 0|acc |0.5021|± |0.0133|

|kobest_copa | 0|acc |0.6920|± |0.0146|

|kobest_hellaswag| 0|acc |0.4520|± |0.0223|

|kobest_sentineg | 0|acc |0.7330|± |0.0222|

|kobest_wic | 0|acc |0.4881|± |0.0141|

- 5 shot kobest

| Tasks |n-shot| Metric |Value | |Stderr|

|----------------|-----:|--------|-----:|---|------|

|kobest_boolq | 5|acc |0.7123|± |0.0121|

|kobest_copa | 5|acc |0.7620|± |0.0135|

|kobest_hellaswag| 5|acc |0.4780|± |0.0224|

|kobest_sentineg | 5|acc |0.9446|± |0.0115|

|kobest_wic | 5|acc |0.6103|± |0.0137|

### License:

[https://llama.meta.com/llama3/license](https://llama.meta.com/llama3/license) |

freewheelin/free-solar-evo-v0.13 | freewheelin | 2024-04-28T03:50:35Z | 1,189 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"ko",

"en",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-04-28T02:58:08Z | ---

language:

- ko

- en

license: mit

---

# Model Card for free-solar-evo-v0.13

## Developed by : [Freewheelin](https://freewheelin-recruit.oopy.io/) AI Technical Team

## Method

- We were inspired by this [Sakana project](https://sakana.ai/evolutionary-model-merge/)

## Base Model

- free-solar-evo-model |

huggingfacepremium/lora_model | huggingfacepremium | 2024-06-30T03:29:48Z | 1,189 | 0 | transformers | [

"transformers",

"safetensors",

"text-generation-inference",

"unsloth",

"mistral",

"trl",

"en",

"base_model:unsloth/phi-3-mini-4k-instruct-bnb-4bit",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

]

| null | 2024-06-30T03:24:46Z | ---

base_model: unsloth/phi-3-mini-4k-instruct-bnb-4bit

language:

- en

license: apache-2.0

tags:

- text-generation-inference

- transformers

- unsloth

- mistral

- trl

---

# Uploaded model

- **Developed by:** huggingfacepremium

- **License:** apache-2.0

- **Finetuned from model :** unsloth/phi-3-mini-4k-instruct-bnb-4bit

This mistral model was trained 2x faster with [Unsloth](https://github.com/unslothai/unsloth) and Huggingface's TRL library.

[<img src="https://raw.githubusercontent.com/unslothai/unsloth/main/images/unsloth%20made%20with%20love.png" width="200"/>](https://github.com/unslothai/unsloth)

|

PlanTL-GOB-ES/gpt2-base-bne | PlanTL-GOB-ES | 2022-11-24T14:50:53Z | 1,188 | 11 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"national library of spain",

"spanish",

"bne",

"gpt2-base-bne",

"es",

"dataset:bne",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2022-03-02T23:29:04Z | ---

language:

- es

license: apache-2.0

tags:

- "national library of spain"

- "spanish"

- "bne"

- "gpt2-base-bne"

datasets:

- "bne"

widget:

- text: "El modelo del lenguaje GPT es capaz de"

- text: "La Biblioteca Nacional de España es una entidad pública y sus fines son"

---

# GPT2-base (gpt2-base-bne) trained with data from the National Library of Spain (BNE)

## Table of Contents

<details>

<summary>Click to expand</summary>

- [Overview](#overview)

- [Model description](#model-description)

- [Intended uses and limitations](#intended-uses-and-limitations)

- [How to Use](#how-to-use)

- [Limitations and bias](#limitations-and-bias)

- [Training](#training)

- [Training data](#training-data)

- [Training procedure](#training-procedure)

- [Additional information](#additional-information)

- [Author](#author)

- [Contact information](#contact-information)

- [Copyright](#copyright)

- [Licensing information](#licensing-information)

- [Funding](#funding)

- [Citation Information](#citation-information)

- [Disclaimer](#disclaimer)

</details>

## Overview

- **Architecture:** gpt2-base

- **Language:** Spanish

- **Task:** text-generation

- **Data:** BNE

## Model description

**GPT2-base-bne** is a transformer-based model for the Spanish language. It is based on the [GPT-2](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf) model and has been pre-trained using the largest Spanish corpus known to date, with a total of 570GB of clean and deduplicated text processed for this work, compiled from the web crawlings performed by the [National Library of Spain (Biblioteca Nacional de España)](http://www.bne.es/en/Inicio/index.html) from 2009 to 2019.

## Intended uses and limitations

You can use the raw model for text generation or fine-tune it to a downstream task.

## How to Use

Here is how to use this model:

You can use this model directly with a pipeline for text generation. Since the generation relies on some randomness, we set a seed for reproducibility:

```python

>>> from transformers import AutoTokenizer, AutoModelForCausalLM, pipeline, set_seed

>>> tokenizer = AutoTokenizer.from_pretrained("PlanTL-GOB-ES/gpt2-base-bne")

>>> model = AutoModelForCausalLM.from_pretrained("PlanTL-GOB-ES/gpt2-base-bne")

>>> generator = pipeline('text-generation', tokenizer=tokenizer, model=model)

>>> set_seed(42)

>>> generator("La Biblioteca Nacional de España es una entidad pública y sus fines son", num_return_sequences=5)

[{'generated_text': 'La Biblioteca Nacional de España es una entidad pública y sus fines son difundir la cultura y el arte hispánico, así como potenciar las publicaciones de la Biblioteca y colecciones de la Biblioteca Nacional de España para su difusión e inquisición. '},

{'generated_text': 'La Biblioteca Nacional de España es una entidad pública y sus fines son diversos. '},

{'generated_text': 'La Biblioteca Nacional de España es una entidad pública y sus fines son la publicación, difusión y producción de obras de arte español, y su patrimonio intelectual es el que tiene la distinción de Patrimonio de la Humanidad. '},

{'generated_text': 'La Biblioteca Nacional de España es una entidad pública y sus fines son los de colaborar en el mantenimiento de los servicios bibliotecarios y mejorar la calidad de la información de titularidad institucional y en su difusión, acceso y salvaguarda para la sociedad. '},

{'generated_text': 'La Biblioteca Nacional de España es una entidad pública y sus fines son la conservación, enseñanza y difusión del patrimonio bibliográfico en su lengua específica y/o escrita. '}]

```

Here is how to use this model to get the features of a given text in PyTorch:

```python

>>> from transformers import AutoTokenizer, GPT2Model

>>> tokenizer = AutoTokenizer.from_pretrained("PlanTL-GOB-ES/gpt2-base-bne")

>>> model = GPT2Model.from_pretrained("PlanTL-GOB-ES/gpt2-base-bne")

>>> text = "La Biblioteca Nacional de España es una entidad pública y sus fines son"

>>> encoded_input = tokenizer(text, return_tensors='pt')

>>> output = model(**encoded_input)

>>> print(output.last_hidden_state.shape)

torch.Size([1, 14, 768])

```

## Limitations and bias

At the time of submission, no measures have been taken to estimate the bias and toxicity embedded in the model. However, we are well aware that our models may be biased since the corpora have been collected using crawling techniques on multiple web sources. We intend to conduct research in these areas in the future, and if completed, this model card will be updated. Nevertheless, here's an example of how the model can have biased predictions:

```python

>>> from transformers import AutoTokenizer, AutoModelForCausalLM, pipeline, set_seed

>>> tokenizer = AutoTokenizer.from_pretrained("PlanTL-GOB-ES/gpt2-base-bne")

>>> model = AutoModelForCausalLM.from_pretrained("PlanTL-GOB-ES/gpt2-base-bne")

>>> generator = pipeline('text-generation', tokenizer=tokenizer, model=model)

>>> set_seed(42)

>>> generator("El hombre se dedica a", num_return_sequences=5)

[{'generated_text': 'El hombre se dedica a comprar armas a sus amigos, pero les cuenta la historia de las ventajas de ser "buenos y regulares en la vida" e ir "bien" por los pueblos. '},

{'generated_text': 'El hombre se dedica a la venta de todo tipo de juguetes durante todo el año y los vende a través de Internet con la intención de alcanzar una mayor rentabilidad. '},

{'generated_text': 'El hombre se dedica a la venta ambulante en plena Plaza Mayor. '},

{'generated_text': 'El hombre se dedica a los toros y él se dedica a los servicios religiosos. '},

{'generated_text': 'El hombre se dedica a la caza y a la tala de pinos. '}]

>>> set_seed(42)

>>> generator("La mujer se dedica a", num_return_sequences=5)

[{'generated_text': 'La mujer se dedica a comprar vestidos de sus padres, como su madre, y siempre le enseña el último que ha hecho en poco menos de un año para ver si le da tiempo. '},

{'generated_text': 'La mujer se dedica a la venta ambulante y su pareja vende su cuerpo desde que tenía uso del automóvil. '},

{'generated_text': 'La mujer se dedica a la venta ambulante en plena ola de frío. '},

{'generated_text': 'La mujer se dedica a limpiar los suelos y paredes en pueblos con mucha humedad. '},

{'generated_text': 'La mujer se dedica a la prostitución en varios locales de alterne clandestinos en Barcelona. '}]

```

## Training

### Training Data

The [National Library of Spain (Biblioteca Nacional de España)](http://www.bne.es/en/Inicio/index.html) crawls all .es domains once a year. The training corpus consists of 59TB of WARC files from these crawls, carried out from 2009 to 2019.

To obtain a high-quality training corpus, the corpus has been preprocessed with a pipeline of operations, including among others, sentence splitting, language detection, filtering of bad-formed sentences, and deduplication of repetitive contents. During the process, document boundaries are kept. This resulted in 2TB of Spanish clean corpus. Further global deduplication among the corpus is applied, resulting in 570GB of text.

Some of the statistics of the corpus:

| Corpora | Number of documents | Number of tokens | Size (GB) |

|---------|---------------------|------------------|-----------|

| BNE | 201,080,084 | 135,733,450,668 | 570GB |

### Training Procedure

The pretraining objective used for this architecture is next token prediction.

The configuration of the **GPT2-base-bne** model is as follows:

- gpt2-base: 12-layer, 768-hidden, 12-heads, 117M parameters.

The training corpus has been tokenized using a byte version of Byte-Pair Encoding (BPE) used in the original [GPT-2](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf) model with a vocabulary size of 50,262 tokens.

The GPT2-base-bne pre-training consists of an autoregressive language model training that follows the approach of the GPT-2.

The training lasted a total of 3 days with 16 computing nodes each one with 4 NVIDIA V100 GPUs of 16GB VRAM.

## Additional information

### Author

Text Mining Unit (TeMU) at the Barcelona Supercomputing Center ([email protected])

### Contact information

For further information, send an email to <[email protected]>

### Copyright

Copyright by the Spanish State Secretariat for Digitalization and Artificial Intelligence (SEDIA) (2022)

### Licensing information

This work is licensed under a [Apache License, Version 2.0](https://www.apache.org/licenses/LICENSE-2.0)

### Funding

This work was funded by the Spanish State Secretariat for Digitalization and Artificial Intelligence (SEDIA) within the framework of the Plan-TL.

### Citation information

If you use this model, please cite our [paper](http://journal.sepln.org/sepln/ojs/ojs/index.php/pln/article/view/6405):

```

@article{,

abstract = {We want to thank the National Library of Spain for such a large effort on the data gathering and the Future of Computing Center, a

Barcelona Supercomputing Center and IBM initiative (2020). This work was funded by the Spanish State Secretariat for Digitalization and Artificial

Intelligence (SEDIA) within the framework of the Plan-TL.},

author = {Asier Gutiérrez Fandiño and Jordi Armengol Estapé and Marc Pàmies and Joan Llop Palao and Joaquin Silveira Ocampo and Casimiro Pio Carrino and Carme Armentano Oller and Carlos Rodriguez Penagos and Aitor Gonzalez Agirre and Marta Villegas},

doi = {10.26342/2022-68-3},

issn = {1135-5948},

journal = {Procesamiento del Lenguaje Natural},

keywords = {Artificial intelligence,Benchmarking,Data processing.,MarIA,Natural language processing,Spanish language modelling,Spanish language resources,Tractament del llenguatge natural (Informàtica),Àrees temàtiques de la UPC::Informàtica::Intel·ligència artificial::Llenguatge natural},

publisher = {Sociedad Española para el Procesamiento del Lenguaje Natural},

title = {MarIA: Spanish Language Models},

volume = {68},

url = {https://upcommons.upc.edu/handle/2117/367156#.YyMTB4X9A-0.mendeley},

year = {2022},

}

```

### Disclaimer

<details>

<summary>Click to expand</summary>

The models published in this repository are intended for a generalist purpose and are available to third parties. These models may have bias and/or any other undesirable distortions.

When third parties, deploy or provide systems and/or services to other parties using any of these models (or using systems based on these models) or become users of the models, they should note that it is their responsibility to mitigate the risks arising from their use and, in any event, to comply with applicable regulations, including regulations regarding the use of Artificial Intelligence.

In no event shall the owner of the models (SEDIA – State Secretariat for Digitalization and Artificial Intelligence) nor the creator (BSC – Barcelona Supercomputing Center) be liable for any results arising from the use made by third parties of these models.

Los modelos publicados en este repositorio tienen una finalidad generalista y están a disposición de terceros. Estos modelos pueden tener sesgos y/u otro tipo de distorsiones indeseables.

Cuando terceros desplieguen o proporcionen sistemas y/o servicios a otras partes usando alguno de estos modelos (o utilizando sistemas basados en estos modelos) o se conviertan en usuarios de los modelos, deben tener en cuenta que es su responsabilidad mitigar los riesgos derivados de su uso y, en todo caso, cumplir con la normativa aplicable, incluyendo la normativa en materia de uso de inteligencia artificial.

En ningún caso el propietario de los modelos (SEDIA – Secretaría de Estado de Digitalización e Inteligencia Artificial) ni el creador (BSC – Barcelona Supercomputing Center) serán responsables de los resultados derivados del uso que hagan terceros de estos modelos.

</details> |

timm/vit_large_patch14_clip_224.openai_ft_in1k | timm | 2023-05-06T00:12:00Z | 1,188 | 1 | timm | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"dataset:wit-400m",

"arxiv:2212.07143",

"arxiv:2103.00020",

"arxiv:2010.11929",

"license:apache-2.0",

"region:us"

]

| image-classification | 2022-11-02T19:03:57Z | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-1k

- wit-400m

---

# Model card for vit_large_patch14_clip_224.openai_ft_in1k

A Vision Transformer (ViT) image classification model. Pretrained on WIT-400M image-text pairs by OpenAI using CLIP. Fine-tuned on ImageNet-1k in `timm`. See recipes in [Reproducible scaling laws](https://arxiv.org/abs/2212.07143).

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 304.2

- GMACs: 77.8

- Activations (M): 57.1

- Image size: 224 x 224

- **Papers:**

- Learning Transferable Visual Models From Natural Language Supervision: https://arxiv.org/abs/2103.00020

- Reproducible scaling laws for contrastive language-image learning: https://arxiv.org/abs/2212.07143

- An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale: https://arxiv.org/abs/2010.11929v2

- **Dataset:** ImageNet-1k

- **Pretrain Dataset:**

- WIT-400M

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('vit_large_patch14_clip_224.openai_ft_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'vit_large_patch14_clip_224.openai_ft_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 257, 1024) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@inproceedings{Radford2021LearningTV,

title={Learning Transferable Visual Models From Natural Language Supervision},

author={Alec Radford and Jong Wook Kim and Chris Hallacy and A. Ramesh and Gabriel Goh and Sandhini Agarwal and Girish Sastry and Amanda Askell and Pamela Mishkin and Jack Clark and Gretchen Krueger and Ilya Sutskever},

booktitle={ICML},

year={2021}

}

```

```bibtex

@article{cherti2022reproducible,

title={Reproducible scaling laws for contrastive language-image learning},

author={Cherti, Mehdi and Beaumont, Romain and Wightman, Ross and Wortsman, Mitchell and Ilharco, Gabriel and Gordon, Cade and Schuhmann, Christoph and Schmidt, Ludwig and Jitsev, Jenia},

journal={arXiv preprint arXiv:2212.07143},

year={2022}

}

```

```bibtex

@article{dosovitskiy2020vit,

title={An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale},

author={Dosovitskiy, Alexey and Beyer, Lucas and Kolesnikov, Alexander and Weissenborn, Dirk and Zhai, Xiaohua and Unterthiner, Thomas and Dehghani, Mostafa and Minderer, Matthias and Heigold, Georg and Gelly, Sylvain and Uszkoreit, Jakob and Houlsby, Neil},

journal={ICLR},

year={2021}

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

|

tlc4418/pythia_1.4b_sft_policy | tlc4418 | 2024-02-12T23:45:12Z | 1,188 | 1 | transformers | [

"transformers",

"pytorch",

"gpt_neox",

"text-generation",

"dataset:tatsu-lab/alpaca_farm",

"arxiv:2310.02743",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-01-28T12:27:04Z | ---

datasets:

- tatsu-lab/alpaca_farm

---

1.4b Pythia model after SFT on the AlpacaFarm dataset 'sft' split.

Policy model from '[Reward Model Ensembles Mitigate Overoptimization](https://arxiv.org/abs/2310.02743)' |

Alphacode-AI/Alphacode-MALI-9B | Alphacode-AI | 2024-05-28T08:10:16Z | 1,188 | 0 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"merge",

"conversational",

"ko",

"license:cc-by-4.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-05-14T04:55:36Z | ---

license: cc-by-4.0

language:

- ko

pipeline_tag: text-generation

tags:

- merge

---

MALI-9B (Model with Auto Learning Ideation) is a merge version of Alphacode's Models that has been fine-tuned with Our In House CustomData.

Train Spec : We utilized an A100x8 for training our model with DeepSpeed / HuggingFace TRL Trainer / HuggingFace Accelerate

Contact : Alphacode Co. [https://alphacode.ai/] |

blanchefort/rubert-base-cased-sentiment-rusentiment | blanchefort | 2023-04-06T04:06:16Z | 1,187 | 11 | transformers | [

"transformers",

"pytorch",

"tf",

"jax",

"safetensors",

"bert",

"text-classification",

"sentiment",

"ru",

"dataset:RuSentiment",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-classification | 2022-03-02T23:29:05Z | ---

language:

- ru

tags:

- sentiment

- text-classification

datasets:

- RuSentiment

---

# RuBERT for Sentiment Analysis

This is a [DeepPavlov/rubert-base-cased-conversational](https://huggingface.co/DeepPavlov/rubert-base-cased-conversational) model trained on [RuSentiment](http://text-machine.cs.uml.edu/projects/rusentiment/).

## Labels

0: NEUTRAL

1: POSITIVE

2: NEGATIVE

## How to use

```python

import torch

from transformers import AutoModelForSequenceClassification

from transformers import BertTokenizerFast

tokenizer = BertTokenizerFast.from_pretrained('blanchefort/rubert-base-cased-sentiment-rusentiment')

model = AutoModelForSequenceClassification.from_pretrained('blanchefort/rubert-base-cased-sentiment-rusentiment', return_dict=True)

@torch.no_grad()

def predict(text):

inputs = tokenizer(text, max_length=512, padding=True, truncation=True, return_tensors='pt')

outputs = model(**inputs)

predicted = torch.nn.functional.softmax(outputs.logits, dim=1)

predicted = torch.argmax(predicted, dim=1).numpy()

return predicted

```

## Dataset used for model training

**[RuSentiment](http://text-machine.cs.uml.edu/projects/rusentiment/)**

> A. Rogers A. Romanov A. Rumshisky S. Volkova M. Gronas A. Gribov RuSentiment: An Enriched Sentiment Analysis Dataset for Social Media in Russian. Proceedings of COLING 2018. |

TheBloke/Hermes-Trismegistus-Mistral-7B-GGUF | TheBloke | 2023-11-05T23:47:53Z | 1,187 | 19 | transformers | [

"transformers",

"gguf",

"mistral",

"mistral-7b",

"instruct",

"finetune",

"gpt4",

"synthetic data",

"distillation",

"en",

"dataset:teknium/trismegistus-project",

"base_model:teknium/Hermes-Trismegistus-Mistral-7B",

"license:apache-2.0",

"text-generation-inference",

"region:us"

]

| null | 2023-11-05T23:42:55Z | ---

base_model: teknium/Hermes-Trismegistus-Mistral-7B

datasets:

- teknium/trismegistus-project

inference: false

language:

- en

license: apache-2.0

model-index:

- name: Hermes-Trismegistus-Mistral-7B

results: []

model_creator: Teknium

model_name: Hermes Trismegistus Mistral 7B

model_type: mistral

prompt_template: 'USER: {prompt}

ASSISTANT:

'

quantized_by: TheBloke

tags:

- mistral-7b

- instruct

- finetune

- gpt4

- synthetic data

- distillation

---

<!-- markdownlint-disable MD041 -->

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Hermes Trismegistus Mistral 7B - GGUF

- Model creator: [Teknium](https://huggingface.co/teknium)

- Original model: [Hermes Trismegistus Mistral 7B](https://huggingface.co/teknium/Hermes-Trismegistus-Mistral-7B)

<!-- description start -->

## Description

This repo contains GGUF format model files for [Teknium's Hermes Trismegistus Mistral 7B](https://huggingface.co/teknium/Hermes-Trismegistus-Mistral-7B).

These files were quantised using hardware kindly provided by [Massed Compute](https://massedcompute.com/).

<!-- description end -->

<!-- README_GGUF.md-about-gguf start -->

### About GGUF

GGUF is a new format introduced by the llama.cpp team on August 21st 2023. It is a replacement for GGML, which is no longer supported by llama.cpp.

Here is an incomplete list of clients and libraries that are known to support GGUF:

* [llama.cpp](https://github.com/ggerganov/llama.cpp). The source project for GGUF. Offers a CLI and a server option.

* [text-generation-webui](https://github.com/oobabooga/text-generation-webui), the most widely used web UI, with many features and powerful extensions. Supports GPU acceleration.

* [KoboldCpp](https://github.com/LostRuins/koboldcpp), a fully featured web UI, with GPU accel across all platforms and GPU architectures. Especially good for story telling.

* [LM Studio](https://lmstudio.ai/), an easy-to-use and powerful local GUI for Windows and macOS (Silicon), with GPU acceleration.

* [LoLLMS Web UI](https://github.com/ParisNeo/lollms-webui), a great web UI with many interesting and unique features, including a full model library for easy model selection.

* [Faraday.dev](https://faraday.dev/), an attractive and easy to use character-based chat GUI for Windows and macOS (both Silicon and Intel), with GPU acceleration.

* [ctransformers](https://github.com/marella/ctransformers), a Python library with GPU accel, LangChain support, and OpenAI-compatible AI server.

* [llama-cpp-python](https://github.com/abetlen/llama-cpp-python), a Python library with GPU accel, LangChain support, and OpenAI-compatible API server.

* [candle](https://github.com/huggingface/candle), a Rust ML framework with a focus on performance, including GPU support, and ease of use.

<!-- README_GGUF.md-about-gguf end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/Hermes-Trismegistus-Mistral-7B-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/Hermes-Trismegistus-Mistral-7B-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/Hermes-Trismegistus-Mistral-7B-GGUF)

* [Teknium's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/teknium/Hermes-Trismegistus-Mistral-7B)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: User-Assistant

```

USER: {prompt}

ASSISTANT:

```

<!-- prompt-template end -->

<!-- compatibility_gguf start -->

## Compatibility

These quantised GGUFv2 files are compatible with llama.cpp from August 27th onwards, as of commit [d0cee0d](https://github.com/ggerganov/llama.cpp/commit/d0cee0d36d5be95a0d9088b674dbb27354107221)

They are also compatible with many third party UIs and libraries - please see the list at the top of this README.

## Explanation of quantisation methods

<details>

<summary>Click to see details</summary>

The new methods available are:

* GGML_TYPE_Q2_K - "type-1" 2-bit quantization in super-blocks containing 16 blocks, each block having 16 weight. Block scales and mins are quantized with 4 bits. This ends up effectively using 2.5625 bits per weight (bpw)

* GGML_TYPE_Q3_K - "type-0" 3-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Scales are quantized with 6 bits. This end up using 3.4375 bpw.

* GGML_TYPE_Q4_K - "type-1" 4-bit quantization in super-blocks containing 8 blocks, each block having 32 weights. Scales and mins are quantized with 6 bits. This ends up using 4.5 bpw.

* GGML_TYPE_Q5_K - "type-1" 5-bit quantization. Same super-block structure as GGML_TYPE_Q4_K resulting in 5.5 bpw

* GGML_TYPE_Q6_K - "type-0" 6-bit quantization. Super-blocks with 16 blocks, each block having 16 weights. Scales are quantized with 8 bits. This ends up using 6.5625 bpw

Refer to the Provided Files table below to see what files use which methods, and how.

</details>

<!-- compatibility_gguf end -->

<!-- README_GGUF.md-provided-files start -->

## Provided files

| Name | Quant method | Bits | Size | Max RAM required | Use case |

| ---- | ---- | ---- | ---- | ---- | ----- |

| [hermes-trismegistus-mistral-7b.Q2_K.gguf](https://huggingface.co/TheBloke/Hermes-Trismegistus-Mistral-7B-GGUF/blob/main/hermes-trismegistus-mistral-7b.Q2_K.gguf) | Q2_K | 2 | 3.08 GB| 5.58 GB | smallest, significant quality loss - not recommended for most purposes |

| [hermes-trismegistus-mistral-7b.Q3_K_S.gguf](https://huggingface.co/TheBloke/Hermes-Trismegistus-Mistral-7B-GGUF/blob/main/hermes-trismegistus-mistral-7b.Q3_K_S.gguf) | Q3_K_S | 3 | 3.16 GB| 5.66 GB | very small, high quality loss |

| [hermes-trismegistus-mistral-7b.Q3_K_M.gguf](https://huggingface.co/TheBloke/Hermes-Trismegistus-Mistral-7B-GGUF/blob/main/hermes-trismegistus-mistral-7b.Q3_K_M.gguf) | Q3_K_M | 3 | 3.52 GB| 6.02 GB | very small, high quality loss |

| [hermes-trismegistus-mistral-7b.Q3_K_L.gguf](https://huggingface.co/TheBloke/Hermes-Trismegistus-Mistral-7B-GGUF/blob/main/hermes-trismegistus-mistral-7b.Q3_K_L.gguf) | Q3_K_L | 3 | 3.82 GB| 6.32 GB | small, substantial quality loss |

| [hermes-trismegistus-mistral-7b.Q4_0.gguf](https://huggingface.co/TheBloke/Hermes-Trismegistus-Mistral-7B-GGUF/blob/main/hermes-trismegistus-mistral-7b.Q4_0.gguf) | Q4_0 | 4 | 4.11 GB| 6.61 GB | legacy; small, very high quality loss - prefer using Q3_K_M |

| [hermes-trismegistus-mistral-7b.Q4_K_S.gguf](https://huggingface.co/TheBloke/Hermes-Trismegistus-Mistral-7B-GGUF/blob/main/hermes-trismegistus-mistral-7b.Q4_K_S.gguf) | Q4_K_S | 4 | 4.14 GB| 6.64 GB | small, greater quality loss |

| [hermes-trismegistus-mistral-7b.Q4_K_M.gguf](https://huggingface.co/TheBloke/Hermes-Trismegistus-Mistral-7B-GGUF/blob/main/hermes-trismegistus-mistral-7b.Q4_K_M.gguf) | Q4_K_M | 4 | 4.37 GB| 6.87 GB | medium, balanced quality - recommended |