modelId

stringlengths 5

122

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC] | downloads

int64 0

738M

| likes

int64 0

11k

| library_name

stringclasses 245

values | tags

sequencelengths 1

4.05k

| pipeline_tag

stringclasses 48

values | createdAt

timestamp[us, tz=UTC] | card

stringlengths 1

901k

|

|---|---|---|---|---|---|---|---|---|---|

timm/tf_efficientnet_l2.ns_jft_in1k_475 | timm | 2023-04-27T21:38:06Z | 846 | 1 | timm | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:1905.11946",

"arxiv:1911.04252",

"license:apache-2.0",

"region:us"

] | image-classification | 2022-12-13T00:11:28Z | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-1k

---

# Model card for tf_efficientnet_l2.ns_jft_in1k_475

An EfficientNet image classification model. Trained on ImageNet-1k and unlabeled JFT-300m using Noisy Student semi-supervised learning in TensorFlow by the paper authors, ported to PyTorch by Ross Wightman.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 480.3

- GMACs: 172.1

- Activations (M): 609.9

- Image size: 475 x 475

- **Papers:**

- EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks: https://arxiv.org/abs/1905.11946

- Self-training with Noisy Student improves ImageNet classification: https://arxiv.org/abs/1911.04252

- **Dataset:** ImageNet-1k

- **Original:** https://github.com/tensorflow/tpu/tree/master/models/official/efficientnet

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

import torch

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('tf_efficientnet_l2.ns_jft_in1k_475', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```
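The last line of the snippet turns the raw logits into percentages with a softmax and keeps the five largest. As a rough, dependency-free illustration of that arithmetic (the helper name `top_k_percent` and the toy logits are made up for this sketch and are not part of timm or PyTorch):

```python
import math


def top_k_percent(logits, k=5):
    """Return (percentages, indices) of the k largest softmax scores.

    Hypothetical pure-Python stand-in for
    torch.topk(output.softmax(dim=1) * 100, k=k) on a single row of logits.
    """
    # Subtract the max before exponentiating for numerical stability.
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    percents = [100.0 * e / total for e in exps]
    # Sort class indices by descending percentage and keep the first k.
    order = sorted(range(len(percents)), key=lambda i: percents[i], reverse=True)[:k]
    return [percents[i] for i in order], order


# Toy logits for a 4-class problem (illustrative values only).
probs, indices = top_k_percent([0.5, 2.0, -1.0, 1.0], k=2)
print(indices, [round(p, 1) for p in probs])
```

In the real snippet, `top5_class_indices` holds indices into the model's ImageNet-1k classes, and `top5_probabilities` holds the matching percentages.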

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'tf_efficientnet_l2.ns_jft_in1k_475',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

    # print shape of each feature map in output

    # e.g.:

    #  torch.Size([1, 72, 238, 238])

    #  torch.Size([1, 104, 119, 119])

    #  torch.Size([1, 176, 60, 60])

    #  torch.Size([1, 480, 30, 30])

    #  torch.Size([1, 1376, 15, 15])

    print(o.shape)

```
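The spatial sizes in the example output are consistent with each feature level halving the resolution and rounding up. A small sketch of that arithmetic, assuming feature strides of 2, 4, 8, 16 and 32 and ceiling rounding (both inferred from the shapes above rather than stated by the model card):

```python
import math

IMAGE_SIZE = 475  # input resolution listed under Model Stats
ASSUMED_STRIDES = (2, 4, 8, 16, 32)  # assumption: one feature map per stride


def feature_map_sizes(image_size, strides=ASSUMED_STRIDES):
    """Spatial side length of each feature map, rounding up at every stride."""
    return [math.ceil(image_size / s) for s in strides]


sizes = feature_map_sizes(IMAGE_SIZE)
print(sizes)  # → [238, 119, 60, 30, 15]
```

The result matches the last two dimensions of each `torch.Size` shown in the comments; the channel counts (72, 104, ...) are properties of the architecture and cannot be derived this way.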

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'tf_efficientnet_l2.ns_jft_in1k_475',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 5504, 15, 15) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@inproceedings{tan2019efficientnet,

title={Efficientnet: Rethinking model scaling for convolutional neural networks},

author={Tan, Mingxing and Le, Quoc},

booktitle={International conference on machine learning},

pages={6105--6114},

year={2019},

organization={PMLR}

}

```

```bibtex

@article{Xie2019SelfTrainingWN,

title={Self-Training With Noisy Student Improves ImageNet Classification},

author={Qizhe Xie and Eduard H. Hovy and Minh-Thang Luong and Quoc V. Le},

journal={2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

year={2019},

pages={10684-10695}

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

|

TheBloke/Firefly-Llama2-13B-v1.2-GGUF | TheBloke | 2023-09-27T12:47:31Z | 846 | 1 | transformers | [

"transformers",

"gguf",

"llama",

"base_model:YeungNLP/firefly-llama2-13b-v1.2",

"license:llama2",

"text-generation-inference",

"region:us"

] | null | 2023-09-05T10:27:55Z | ---

license: llama2

model_name: Firefly Llama2 13B v1.2

base_model: YeungNLP/firefly-llama2-13b-v1.2

inference: false

model_creator: YeungNLP

model_type: llama

prompt_template: '{prompt}

'

quantized_by: TheBloke

---

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Firefly Llama2 13B v1.2 - GGUF

- Model creator: [YeungNLP](https://huggingface.co/YeungNLP)

- Original model: [Firefly Llama2 13B v1.2](https://huggingface.co/YeungNLP/firefly-llama2-13b-v1.2)

<!-- description start -->

## Description

This repo contains GGUF format model files for [YeungNLP's Firefly Llama2 13B v1.2](https://huggingface.co/YeungNLP/firefly-llama2-13b-v1.2).

<!-- description end -->

<!-- README_GGUF.md-about-gguf start -->

### About GGUF

GGUF is a new format introduced by the llama.cpp team on August 21st 2023. It is a replacement for GGML, which is no longer supported by llama.cpp. GGUF offers numerous advantages over GGML, such as better tokenisation and support for special tokens. It also supports metadata, and is designed to be extensible.

Here is an incomplete list of clients and libraries that are known to support GGUF:

* [llama.cpp](https://github.com/ggerganov/llama.cpp). The source project for GGUF. Offers a CLI and a server option.

* [text-generation-webui](https://github.com/oobabooga/text-generation-webui), the most widely used web UI, with many features and powerful extensions. Supports GPU acceleration.

* [KoboldCpp](https://github.com/LostRuins/koboldcpp), a fully featured web UI, with GPU accel across all platforms and GPU architectures. Especially good for story telling.

* [LM Studio](https://lmstudio.ai/), an easy-to-use and powerful local GUI for Windows and macOS (Silicon), with GPU acceleration.

* [LoLLMS Web UI](https://github.com/ParisNeo/lollms-webui), a great web UI with many interesting and unique features, including a full model library for easy model selection.

* [Faraday.dev](https://faraday.dev/), an attractive and easy to use character-based chat GUI for Windows and macOS (both Silicon and Intel), with GPU acceleration.

* [ctransformers](https://github.com/marella/ctransformers), a Python library with GPU accel, LangChain support, and OpenAI-compatible API server.

* [llama-cpp-python](https://github.com/abetlen/llama-cpp-python), a Python library with GPU accel, LangChain support, and OpenAI-compatible API server.

* [candle](https://github.com/huggingface/candle), a Rust ML framework with a focus on performance, including GPU support, and ease of use.

<!-- README_GGUF.md-about-gguf end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF)

* [YeungNLP's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/YeungNLP/firefly-llama2-13b-v1.2)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: Unknown

```

{prompt}

```

<!-- prompt-template end -->

<!-- compatibility_gguf start -->

## Compatibility

These quantised GGUFv2 files are compatible with llama.cpp from August 27th onwards, as of commit [d0cee0d36d5be95a0d9088b674dbb27354107221](https://github.com/ggerganov/llama.cpp/commit/d0cee0d36d5be95a0d9088b674dbb27354107221)

They are also compatible with many third party UIs and libraries - please see the list at the top of this README.

## Explanation of quantisation methods

<details>

<summary>Click to see details</summary>

The new methods available are:

* GGML_TYPE_Q2_K - "type-1" 2-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Block scales and mins are quantized with 4 bits. This ends up effectively using 2.5625 bits per weight (bpw)

* GGML_TYPE_Q3_K - "type-0" 3-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Scales are quantized with 6 bits. This ends up using 3.4375 bpw.

* GGML_TYPE_Q4_K - "type-1" 4-bit quantization in super-blocks containing 8 blocks, each block having 32 weights. Scales and mins are quantized with 6 bits. This ends up using 4.5 bpw.

* GGML_TYPE_Q5_K - "type-1" 5-bit quantization. Same super-block structure as GGML_TYPE_Q4_K resulting in 5.5 bpw

* GGML_TYPE_Q6_K - "type-0" 6-bit quantization. Super-blocks with 16 blocks, each block having 16 weights. Scales are quantized with 8 bits. This ends up using 6.5625 bpw

Refer to the Provided Files table below to see what files use which methods, and how.

</details>
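The bits-per-weight figures quoted above can largely be reproduced from the block layout. The sketch below assumes every super-block also stores one 16-bit scale, plus one 16-bit minimum for the "type-1" formats. That per-super-block overhead is an assumption of this example, not something stated in the list, and the function name is illustrative.

```python
def bits_per_weight(weight_bits, blocks, weights_per_block, scale_bits, has_mins):
    """Effective bits per weight for one k-quant super-block.

    Assumption (not from the text above): each super-block carries one
    16-bit scale, and "type-1" formats carry an extra 16-bit minimum.
    """
    n_weights = blocks * weights_per_block
    per_block_meta = scale_bits * (2 if has_mins else 1)  # scale (+ min) per block
    super_block_meta = 16 * (2 if has_mins else 1)        # assumed 16-bit fields
    total_bits = n_weights * weight_bits + blocks * per_block_meta + super_block_meta
    return total_bits / n_weights


q3_k = bits_per_weight(3, blocks=16, weights_per_block=16, scale_bits=6, has_mins=False)
q4_k = bits_per_weight(4, blocks=8, weights_per_block=32, scale_bits=6, has_mins=True)
q5_k = bits_per_weight(5, blocks=8, weights_per_block=32, scale_bits=6, has_mins=True)
q6_k = bits_per_weight(6, blocks=16, weights_per_block=16, scale_bits=8, has_mins=False)
print(q3_k, q4_k, q5_k, q6_k)  # → 3.4375 4.5 5.5 6.5625
```

The same assumptions give 2.625 for Q2_K rather than the 2.5625 listed, so the sketch should be read as an approximation rather than the exact on-disk layout.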

<!-- compatibility_gguf end -->

<!-- README_GGUF.md-provided-files start -->

## Provided files

| Name | Quant method | Bits | Size | Max RAM required | Use case |

| ---- | ---- | ---- | ---- | ---- | ----- |

| [firefly-llama2-13b-v1.2.Q2_K.gguf](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF/blob/main/firefly-llama2-13b-v1.2.Q2_K.gguf) | Q2_K | 2 | 5.43 GB| 7.93 GB | smallest, significant quality loss - not recommended for most purposes |

| [firefly-llama2-13b-v1.2.Q3_K_S.gguf](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF/blob/main/firefly-llama2-13b-v1.2.Q3_K_S.gguf) | Q3_K_S | 3 | 5.66 GB| 8.16 GB | very small, high quality loss |

| [firefly-llama2-13b-v1.2.Q3_K_M.gguf](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF/blob/main/firefly-llama2-13b-v1.2.Q3_K_M.gguf) | Q3_K_M | 3 | 6.34 GB| 8.84 GB | very small, high quality loss |

| [firefly-llama2-13b-v1.2.Q3_K_L.gguf](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF/blob/main/firefly-llama2-13b-v1.2.Q3_K_L.gguf) | Q3_K_L | 3 | 6.93 GB| 9.43 GB | small, substantial quality loss |

| [firefly-llama2-13b-v1.2.Q4_0.gguf](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF/blob/main/firefly-llama2-13b-v1.2.Q4_0.gguf) | Q4_0 | 4 | 7.37 GB| 9.87 GB | legacy; small, very high quality loss - prefer using Q3_K_M |

| [firefly-llama2-13b-v1.2.Q4_K_S.gguf](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF/blob/main/firefly-llama2-13b-v1.2.Q4_K_S.gguf) | Q4_K_S | 4 | 7.41 GB| 9.91 GB | small, greater quality loss |

| [firefly-llama2-13b-v1.2.Q4_K_M.gguf](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF/blob/main/firefly-llama2-13b-v1.2.Q4_K_M.gguf) | Q4_K_M | 4 | 7.87 GB| 10.37 GB | medium, balanced quality - recommended |

| [firefly-llama2-13b-v1.2.Q5_0.gguf](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF/blob/main/firefly-llama2-13b-v1.2.Q5_0.gguf) | Q5_0 | 5 | 8.97 GB| 11.47 GB | legacy; medium, balanced quality - prefer using Q4_K_M |

| [firefly-llama2-13b-v1.2.Q5_K_S.gguf](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF/blob/main/firefly-llama2-13b-v1.2.Q5_K_S.gguf) | Q5_K_S | 5 | 8.97 GB| 11.47 GB | large, low quality loss - recommended |

| [firefly-llama2-13b-v1.2.Q5_K_M.gguf](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF/blob/main/firefly-llama2-13b-v1.2.Q5_K_M.gguf) | Q5_K_M | 5 | 9.23 GB| 11.73 GB | large, very low quality loss - recommended |

| [firefly-llama2-13b-v1.2.Q6_K.gguf](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF/blob/main/firefly-llama2-13b-v1.2.Q6_K.gguf) | Q6_K | 6 | 10.68 GB| 13.18 GB | very large, extremely low quality loss |

| [firefly-llama2-13b-v1.2.Q8_0.gguf](https://huggingface.co/TheBloke/Firefly-Llama2-13B-v1.2-GGUF/blob/main/firefly-llama2-13b-v1.2.Q8_0.gguf) | Q8_0 | 8 | 13.83 GB| 16.33 GB | very large, extremely low quality loss - not recommended |

**Note**: the above RAM figures assume no GPU offloading. If layers are offloaded to the GPU, this will reduce RAM usage and use VRAM instead.
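In the table above, every "Max RAM required" value is the file size plus 2.50 GB. A minimal sketch of using that pattern to pick the largest file that fits a given RAM budget follows. The 2.5 GB overhead is read off the table, while the helper names and the 12 GB budget are illustrative assumptions.

```python
# File sizes in GB, copied from the "Provided files" table.
FILE_SIZES_GB = {
    "Q2_K": 5.43, "Q3_K_S": 5.66, "Q3_K_M": 6.34, "Q3_K_L": 6.93,
    "Q4_0": 7.37, "Q4_K_S": 7.41, "Q4_K_M": 7.87, "Q5_0": 8.97,
    "Q5_K_S": 8.97, "Q5_K_M": 9.23, "Q6_K": 10.68, "Q8_0": 13.83,
}
OVERHEAD_GB = 2.5  # difference between "Max RAM required" and "Size" in the table


def max_ram_gb(quant):
    """Estimated peak RAM with no GPU offloading, following the table's pattern."""
    return round(FILE_SIZES_GB[quant] + OVERHEAD_GB, 2)


def largest_fitting_quant(ram_budget_gb):
    """Largest quantisation whose estimated RAM fits the budget, or None."""
    fitting = [q for q in FILE_SIZES_GB if max_ram_gb(q) <= ram_budget_gb]
    if not fitting:
        return None
    return max(fitting, key=lambda q: FILE_SIZES_GB[q])


print(largest_fitting_quant(12.0))  # → Q5_K_M
```

Size is only a proxy for quality here; the "Use case" column still applies, for example the legacy Q4_0 and Q5_0 files are not preferred even when they fit.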

<!-- README_GGUF.md-provided-files end -->

<!-- README_GGUF.md-how-to-download start -->

## How to download GGUF files

**Note for manual downloaders:** You almost never want to clone the entire repo! Multiple different quantisation formats are provided, and most users only want to pick and download a single file.

The following clients/libraries will automatically download models for you, providing a list of available models to choose from:

- LM Studio

- LoLLMS Web UI

- Faraday.dev

### In `text-generation-webui`

Under Download Model, you can enter the model repo: TheBloke/Firefly-Llama2-13B-v1.2-GGUF and below it, a specific filename to download, such as: firefly-llama2-13b-v1.2.Q4_K_M.gguf.

Then click Download.

### On the command line, including multiple files at once

I recommend using the `huggingface-hub` Python library:

```shell

pip3 install "huggingface-hub>=0.17.1"

```

Then you can download any individual model file to the current directory, at high speed, with a command like this:

```shell

huggingface-cli download TheBloke/Firefly-Llama2-13B-v1.2-GGUF firefly-llama2-13b-v1.2.Q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

```

<details>

<summary>More advanced huggingface-cli download usage</summary>

You can also download multiple files at once with a pattern:

```shell

huggingface-cli download TheBloke/Firefly-Llama2-13B-v1.2-GGUF --local-dir . --local-dir-use-symlinks False --include='*Q4_K*gguf'

```

For more documentation on downloading with `huggingface-cli`, please see: [HF -> Hub Python Library -> Download files -> Download from the CLI](https://huggingface.co/docs/huggingface_hub/guides/download#download-from-the-cli).

To accelerate downloads on fast connections (1Gbit/s or higher), install `hf_transfer`:

```shell

pip3 install hf_transfer

```

And set environment variable `HF_HUB_ENABLE_HF_TRANSFER` to `1`:

```shell

HF_HUB_ENABLE_HF_TRANSFER=1 huggingface-cli download TheBloke/Firefly-Llama2-13B-v1.2-GGUF firefly-llama2-13b-v1.2.Q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

```

Windows CLI users: Use `set HF_HUB_ENABLE_HF_TRANSFER=1` before running the download command.

</details>
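To preview which files a pattern such as `*Q4_K*gguf` would select, the matching can be approximated locally. This sketch assumes shell-style, case-sensitive wildcard matching (Python's `fnmatch.fnmatchcase`). The file list is copied from the Provided files table and the helper name is illustrative.

```python
from fnmatch import fnmatchcase

# File names as listed in the "Provided files" table.
QUANTS = ("Q2_K", "Q3_K_S", "Q3_K_M", "Q3_K_L", "Q4_0", "Q4_K_S",
          "Q4_K_M", "Q5_0", "Q5_K_S", "Q5_K_M", "Q6_K", "Q8_0")
REPO_FILES = [f"firefly-llama2-13b-v1.2.{q}.gguf" for q in QUANTS]


def select_files(pattern, files=REPO_FILES):
    """Return the file names matching a shell-style pattern (case-sensitive)."""
    return [name for name in files if fnmatchcase(name, pattern)]


matches = select_files("*Q4_K*gguf")
print(matches)
```

Under this assumption the pattern selects only the Q4_K_S and Q4_K_M files, and a lower-case pattern such as `*q4_k*gguf` selects nothing, so patterns and file names should use the same case as the table.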

<!-- README_GGUF.md-how-to-download end -->

<!-- README_GGUF.md-how-to-run start -->

## Example `llama.cpp` command

Make sure you are using `llama.cpp` from commit [d0cee0d36d5be95a0d9088b674dbb27354107221](https://github.com/ggerganov/llama.cpp/commit/d0cee0d36d5be95a0d9088b674dbb27354107221) or later.

```shell

./main -ngl 32 -m firefly-llama2-13b-v1.2.Q4_K_M.gguf --color -c 4096 --temp 0.7 --repeat_penalty 1.1 -n -1 -p "{prompt}"

```

Change `-ngl 32` to the number of layers to offload to GPU. Remove it if you don't have GPU acceleration.

Change `-c 4096` to the desired sequence length. For extended sequence models - eg 8K, 16K, 32K - the necessary RoPE scaling parameters are read from the GGUF file and set by llama.cpp automatically.

If you want to have a chat-style conversation, replace the `-p <PROMPT>` argument with `-i -ins`

For other parameters and how to use them, please refer to [the llama.cpp documentation](https://github.com/ggerganov/llama.cpp/blob/master/examples/main/README.md)
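The flag substitutions described above can be scripted. A minimal sketch that assembles the same `./main` invocation, dropping `-ngl` when no GPU layers are requested. The function name and defaults are illustrative, and only flags that appear in the example command are used.

```python
import shlex


def build_llama_cpp_command(model_path, prompt, n_gpu_layers=0, context_size=4096):
    """Assemble the example ./main command as an argument list."""
    cmd = ["./main"]
    if n_gpu_layers > 0:
        # Only pass -ngl when layers should be offloaded to the GPU.
        cmd += ["-ngl", str(n_gpu_layers)]
    cmd += [
        "-m", model_path,
        "--color",
        "-c", str(context_size),   # sequence length
        "--temp", "0.7",
        "--repeat_penalty", "1.1",
        "-n", "-1",
        "-p", prompt,
    ]
    return cmd


cmd = build_llama_cpp_command(
    "firefly-llama2-13b-v1.2.Q4_K_M.gguf", "AI is going to", n_gpu_layers=32
)
print(shlex.join(cmd))
```

The list form can be passed directly to `subprocess.run`, which avoids shell-quoting problems when the prompt contains spaces or quotes.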

## How to run in `text-generation-webui`

Further instructions here: [text-generation-webui/docs/llama.cpp.md](https://github.com/oobabooga/text-generation-webui/blob/main/docs/llama.cpp.md).

## How to run from Python code

You can use GGUF models from Python using the [llama-cpp-python](https://github.com/abetlen/llama-cpp-python) or [ctransformers](https://github.com/marella/ctransformers) libraries.

### How to load this model from Python using ctransformers

#### First install the package

```bash

# Base ctransformers with no GPU acceleration

pip install "ctransformers>=0.2.24"

# Or with CUDA GPU acceleration

pip install "ctransformers[cuda]>=0.2.24"

# Or with ROCm GPU acceleration

CT_HIPBLAS=1 pip install "ctransformers>=0.2.24" --no-binary ctransformers

# Or with Metal GPU acceleration for macOS systems

CT_METAL=1 pip install "ctransformers>=0.2.24" --no-binary ctransformers

```

#### Simple example code to load one of these GGUF models

```python

from ctransformers import AutoModelForCausalLM

# Set gpu_layers to the number of layers to offload to GPU. Set to 0 if no GPU acceleration is available on your system.

llm = AutoModelForCausalLM.from_pretrained("TheBloke/Firefly-Llama2-13B-v1.2-GGUF", model_file="firefly-llama2-13b-v1.2.Q4_K_M.gguf", model_type="llama", gpu_layers=50)

print(llm("AI is going to"))

```

## How to use with LangChain

Here are guides on using llama-cpp-python or ctransformers with LangChain:

* [LangChain + llama-cpp-python](https://python.langchain.com/docs/integrations/llms/llamacpp)

* [LangChain + ctransformers](https://python.langchain.com/docs/integrations/providers/ctransformers)

<!-- README_GGUF.md-how-to-run end -->

<!-- footer start -->

<!-- 200823 -->

## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute

Thanks to the [chirper.ai](https://chirper.ai) team!

Thanks to Clay from [gpus.llm-utils.org](llm-utils)!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donaters will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Aemon Algiz.

**Patreon special mentions**: Alicia Loh, Stephen Murray, K, Ajan Kanaga, RoA, Magnesian, Deo Leter, Olakabola, Eugene Pentland, zynix, Deep Realms, Raymond Fosdick, Elijah Stavena, Iucharbius, Erik Bjäreholt, Luis Javier Navarrete Lozano, Nicholas, theTransient, John Detwiler, alfie_i, knownsqashed, Mano Prime, Willem Michiel, Enrico Ros, LangChain4j, OG, Michael Dempsey, Pierre Kircher, Pedro Madruga, James Bentley, Thomas Belote, Luke @flexchar, Leonard Tan, Johann-Peter Hartmann, Illia Dulskyi, Fen Risland, Chadd, S_X, Jeff Scroggin, Ken Nordquist, Sean Connelly, Artur Olbinski, Swaroop Kallakuri, Jack West, Ai Maven, David Ziegler, Russ Johnson, transmissions 11, John Villwock, Alps Aficionado, Clay Pascal, Viktor Bowallius, Subspace Studios, Rainer Wilmers, Trenton Dambrowitz, vamX, Michael Levine, 준교 김, Brandon Frisco, Kalila, Trailburnt, Randy H, Talal Aujan, Nathan Dryer, Vadim, 阿明, ReadyPlayerEmma, Tiffany J. Kim, George Stoitzev, Spencer Kim, Jerry Meng, Gabriel Tamborski, Cory Kujawski, Jeffrey Morgan, Spiking Neurons AB, Edmond Seymore, Alexandros Triantafyllidis, Lone Striker, Cap'n Zoog, Nikolai Manek, danny, ya boyyy, Derek Yates, usrbinkat, Mandus, TL, Nathan LeClaire, subjectnull, Imad Khwaja, webtim, Raven Klaugh, Asp the Wyvern, Gabriel Puliatti, Caitlyn Gatomon, Joseph William Delisle, Jonathan Leane, Luke Pendergrass, SuperWojo, Sebastain Graf, Will Dee, Fred von Graf, Andrey, Dan Guido, Daniel P. Andersen, Nitin Borwankar, Elle, Vitor Caleffi, biorpg, jjj, NimbleBox.ai, Pieter, Matthew Berman, terasurfer, Michael Davis, Alex, Stanislav Ovsiannikov

Thank you to all my generous patrons and donaters!

And thank you again to a16z for their generous grant.

<!-- footer end -->

<!-- original-model-card start -->

# Original model card: YeungNLP's Firefly Llama2 13B v1.2

No original model card was available.

<!-- original-model-card end -->

|

shaowenchen/vicuna-33b-v1.3-gguf | shaowenchen | 2023-09-27T02:16:12Z | 846 | 5 | null | [

"gguf",

"vicuna",

"chinese",

"text-generation",

"zh",

"license:other",

"region:us"

] | text-generation | 2023-09-13T22:30:57Z | ---

inference: false

language:

- zh

license: other

model_creator: lmsys

model_link: https://huggingface.co/lmsys/vicuna-33b-v1.3

model_name: vicuna-33b-v1.3

model_type: vicuna

pipeline_tag: text-generation

quantized_by: shaowenchen

tasks:

- text2text-generation

tags:

- gguf

- vicuna

- chinese

---

## Provided files

| Name | Quant method | Size |

| ----------------------------- | ------------ | ----- |

| vicuna-33b-v1.3.Q2_K.gguf | Q2_K | 13 GB |

| vicuna-33b-v1.3.Q3_K.gguf | Q3_K | 15 GB |

| vicuna-33b-v1.3.Q3_K_L.gguf | Q3_K_L | 16 GB |

| vicuna-33b-v1.3.Q3_K_S.gguf | Q3_K_S | 13 GB |

| vicuna-33b-v1.3.Q4_0.gguf | Q4_0 | 17 GB |

| vicuna-33b-v1.3.Q4_1.gguf | Q4_1 | 19 GB |

| vicuna-33b-v1.3.Q4_K.gguf | Q4_K | 18 GB |

| vicuna-33b-v1.3.Q4_K_S.gguf | Q4_K_S | 17 GB |

| vicuna-33b-v1.3.Q5_0.gguf | Q5_0 | 21 GB |

| vicuna-33b-v1.3.Q5_1.gguf | Q5_1 | 23 GB |

| vicuna-33b-v1.3.Q5_K.gguf | Q5_K | 21 GB |

| vicuna-33b-v1.3.Q5_K_S.gguf | Q5_K_S | 21 GB |

| vicuna-33b-v1.3.Q6_K.gguf | Q6_K | 25 GB |

| vicuna-33b-v1.3.Q8_0.gguf | Q8_0 | 32 GB |

Usage:

```bash

docker run --rm -it -p 8000:8000 -v /path/to/models:/models -e MODEL=/models/gguf-model-name.gguf hubimage/llama-cpp-python:latest

```

## Provided images

| Name | Quant method | Compressed Size |

| --------------------------------------- | ------------ | --------------- |

| `shaowenchen/vicuna-33b-v1.3-gguf:Q2_K` | Q2_K | 12.78 GB |

| `shaowenchen/vicuna-33b-v1.3-gguf:Q3_K` | Q3_K | 14.81 GB |

| `shaowenchen/vicuna-33b-v1.3-gguf:Q4_K` | Q4_K | 18.24 GB |

| `shaowenchen/vicuna-33b-v1.3-gguf:Q5_K` | Q5_K | 21.72 GB |

| `shaowenchen/vicuna-33b-v1.3-gguf:Q6_K` | Q6_K | 25.05 GB |

| `shaowenchen/vicuna-33b-v1.3-gguf:Q8_0` | Q8_0 | 31.34 GB |

| `shaowenchen/vicuna-33b-v1.3-gguf:full` | full | 56.07 GB |

Usage:

```

docker run --rm -p 8000:8000 shaowenchen/vicuna-33b-v1.3-gguf:Q2_K

```

Then open http://localhost:8000/docs to view the Swagger UI.
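Once the container is running, the server can also be called programmatically. The sketch below builds a request for an OpenAI-style `/v1/completions` endpoint using only the standard library. The endpoint path and the JSON fields (`prompt`, `max_tokens`) are assumptions based on the OpenAI-compatible API that the llama-cpp-python server exposes, so check the Swagger UI for the exact schema.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000"  # port published by the docker commands above


def build_completion_request(prompt, max_tokens=64, base_url=BASE_URL):
    """Build (but do not send) a POST request for a text completion."""
    payload = json.dumps({"prompt": prompt, "max_tokens": max_tokens}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/v1/completions",  # assumed OpenAI-style endpoint
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt, **kwargs):
    """Send the request and return the parsed JSON; needs the container running."""
    with urllib.request.urlopen(build_completion_request(prompt, **kwargs)) as resp:
        return json.load(resp)


request = build_completion_request("Hello")
print(request.get_method(), request.full_url)
```

Calling `complete("Hello")` sends the request. It is left out of the snippet's top level so the example can be read and run without a live server.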

|

01-ai/Yi-6B-Chat-4bits | 01-ai | 2024-06-26T10:25:32Z | 846 | 18 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"conversational",

"arxiv:2403.04652",

"arxiv:2311.16502",

"arxiv:2401.11944",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"4-bit",

"awq",

"region:us"

] | text-generation | 2023-11-22T09:55:46Z | ---

license: apache-2.0

widget:

- example_title: "Yi-34B-Chat"

text: "hi"

output:

text: " Hello! How can I assist you today?"

- example_title: "Yi-34B"

text: "There's a place where time stands still. A place of breath taking wonder, but also"

output:

text: " an eerie sense that something is just not right…\nBetween the two worlds lies The Forgotten Kingdom - home to creatures long since thought extinct and ancient magic so strong it defies belief! Only here can you find what has been lost for centuries: An Elixir Of Life which will restore youth and vitality if only those who seek its power are brave enough to face up against all manner of dangers lurking in this mysterious land! But beware; some say there may even exist powerful entities beyond our comprehension whose intentions towards humanity remain unclear at best ---- they might want nothing more than destruction itself rather then anything else from their quest after immortality (and maybe someone should tell them about modern medicine)? In any event though – one thing remains true regardless : whether or not success comes easy depends entirely upon how much effort we put into conquering whatever challenges lie ahead along with having faith deep down inside ourselves too ;) So let’s get started now shall We?"

pipeline_tag: text-generation

---

<div align="center">

<picture>

<source media="(prefers-color-scheme: dark)" srcset="https://raw.githubusercontent.com/01-ai/Yi/main/assets/img/Yi_logo_icon_dark.svg" width="200px">

<source media="(prefers-color-scheme: light)" srcset="https://raw.githubusercontent.com/01-ai/Yi/main/assets/img/Yi_logo_icon_light.svg" width="200px">

<img alt="specify theme context for images" src="https://raw.githubusercontent.com/01-ai/Yi/main/assets/img/Yi_logo_icon_light.svg">

</picture>

</br>

</br>

<div style="display: inline-block;">

<a href="https://github.com/01-ai/Yi/actions/workflows/build_docker_image.yml">

<img src="https://github.com/01-ai/Yi/actions/workflows/build_docker_image.yml/badge.svg">

</a>

</div>

<div style="display: inline-block;">

<a href="mailto:[email protected]">

<img src="https://img.shields.io/badge/✉️[email protected]">

</a>

</div>

</div>

<div align="center">

<h3 align="center">Building the Next Generation of Open-Source and Bilingual LLMs</h3>

</div>

<p align="center">

🤗 <a href="https://huggingface.co/01-ai" target="_blank">Hugging Face</a> • 🤖 <a href="https://www.modelscope.cn/organization/01ai/" target="_blank">ModelScope</a> • ✡️ <a href="https://wisemodel.cn/organization/01.AI" target="_blank">WiseModel</a>

</p>

<p align="center">

👩🚀 Ask questions or discuss ideas on <a href="https://github.com/01-ai/Yi/discussions" target="_blank"> GitHub </a>

</p>

<p align="center">

👋 Join us on <a href="https://discord.gg/hYUwWddeAu" target="_blank"> 👾 Discord </a> or <a href="https://github.com/01-ai/Yi/issues/43" target="_blank"> 💬 WeChat </a>

</p>

<p align="center">

📝 Check out <a href="https://arxiv.org/abs/2403.04652"> Yi Tech Report </a>

</p>

<p align="center">

📚 Grow at <a href="#learning-hub"> Yi Learning Hub </a>

</p>

<!-- DO NOT REMOVE ME -->

<hr>

<details open>

<summary><b>📕 Table of Contents</b></summary>

- [What is Yi?](#what-is-yi)

- [Introduction](#introduction)

- [Models](#models)

- [Chat models](#chat-models)

- [Base models](#base-models)

- [Model info](#model-info)

- [News](#news)

- [How to use Yi?](#how-to-use-yi)

- [Quick start](#quick-start)

- [Choose your path](#choose-your-path)

- [pip](#quick-start---pip)

- [docker](#quick-start---docker)

- [llama.cpp](#quick-start---llamacpp)

- [conda-lock](#quick-start---conda-lock)

- [Web demo](#web-demo)

- [Fine-tuning](#fine-tuning)

- [Quantization](#quantization)

- [Deployment](#deployment)

- [FAQ](#faq)

- [Learning hub](#learning-hub)

- [Why Yi?](#why-yi)

- [Ecosystem](#ecosystem)

- [Upstream](#upstream)

- [Downstream](#downstream)

- [Serving](#serving)

- [Quantization](#quantization-1)

- [Fine-tuning](#fine-tuning-1)

- [API](#api)

- [Benchmarks](#benchmarks)

- [Base model performance](#base-model-performance)

- [Chat model performance](#chat-model-performance)

- [Tech report](#tech-report)

- [Citation](#citation)

- [Who can use Yi?](#who-can-use-yi)

- [Misc.](#misc)

- [Acknowledgements](#acknowledgments)

- [Disclaimer](#disclaimer)

- [License](#license)

</details>

<hr>

# What is Yi?

## Introduction

- 🤖 The Yi series models are the next generation of open-source large language models trained from scratch by [01.AI](https://01.ai/).

- 🙌 Targeted as a bilingual language model and trained on a 3T-token multilingual corpus, the Yi series models are among the strongest LLMs worldwide, showing promise in language understanding, commonsense reasoning, reading comprehension, and more. For example,

- Yi-34B-Chat model **landed in second place (following GPT-4 Turbo)**, outperforming other LLMs (such as GPT-4, Mixtral, Claude) on the AlpacaEval Leaderboard (based on data available up to January 2024).

- Yi-34B model **ranked first among all existing open-source models** (such as Falcon-180B, Llama-70B, Claude) in **both English and Chinese** on various benchmarks, including Hugging Face Open LLM Leaderboard (pre-trained) and C-Eval (based on data available up to November 2023).

- 🙏 (Credits to Llama) Thanks to the Transformer and Llama open-source communities, as they reduce the efforts required to build from scratch and enable the utilization of the same tools within the AI ecosystem.

<details style="display: inline;"><summary> If you're interested in Yi's adoption of Llama architecture and license usage policy, see <span style="color: green;">Yi's relation with Llama.</span> ⬇️</summary> <ul> <br>

> 💡 TL;DR

>

> The Yi series models adopt the same model architecture as Llama but are **NOT** derivatives of Llama.

- Both Yi and Llama are based on the Transformer structure, which has been the standard architecture for large language models since 2018.

- Grounded in the Transformer architecture, Llama has become a new cornerstone for the majority of state-of-the-art open-source models due to its excellent stability, reliable convergence, and robust compatibility. This positions Llama as the recognized foundational framework for models including Yi.

- Thanks to the Transformer and Llama architectures, other models can leverage their power, reducing the effort required to build from scratch and enabling the utilization of the same tools within their ecosystems.

- However, the Yi series models are NOT derivatives of Llama, as they do not use Llama's weights.

- As Llama's structure is employed by the majority of open-source models, the key factors determining model performance are training datasets, training pipelines, and training infrastructure.

- Developing in a unique and proprietary way, Yi has independently created its own high-quality training datasets, efficient training pipelines, and robust training infrastructure entirely from the ground up. This effort has led to excellent performance with Yi series models ranking just behind GPT4 and surpassing Llama on the [Alpaca Leaderboard in Dec 2023](https://tatsu-lab.github.io/alpaca_eval/).

</ul>

</details>

<p align="right"> [

<a href="#top">Back to top ⬆️ </a> ]

</p>

## News

<details>

<summary>🎯 <b>2024-05-13</b>: The <a href="https://github.com/01-ai/Yi-1.5">Yi-1.5 series models </a> are open-sourced, further improving coding, math, reasoning, and instruction-following abilities.</summary>

</details>

<details>

<summary>🎯 <b>2024-03-16</b>: The <code>Yi-9B-200K</code> is open-sourced and available to the public.</summary>

</details>

<details>

<summary>🎯 <b>2024-03-08</b>: <a href="https://arxiv.org/abs/2403.04652">Yi Tech Report</a> is published! </summary>

</details>

<details open>

<summary>🔔 <b>2024-03-07</b>: The long text capability of the Yi-34B-200K has been enhanced. </summary>

<br>

In the "Needle-in-a-Haystack" test, the Yi-34B-200K's performance improved by 10.5 percentage points, rising from 89.3% to 99.8%. We continued pre-training the model on a 5B-token long-context data mixture and achieved near-all-green performance.

</details>

<details open>

<summary>🎯 <b>2024-03-06</b>: The <code>Yi-9B</code> is open-sourced and available to the public.</summary>

<br>

<code>Yi-9B</code> stands out as the top performer among a range of similar-sized open-source models (including Mistral-7B, SOLAR-10.7B, Gemma-7B, DeepSeek-Coder-7B-Base-v1.5 and more), particularly excelling in code, math, common-sense reasoning, and reading comprehension.

</details>

<details open>

<summary>🎯 <b>2024-01-23</b>: The Yi-VL models, <code><a href="https://huggingface.co/01-ai/Yi-VL-34B">Yi-VL-34B</a></code> and <code><a href="https://huggingface.co/01-ai/Yi-VL-6B">Yi-VL-6B</a></code>, are open-sourced and available to the public.</summary>

<br>

<code><a href="https://huggingface.co/01-ai/Yi-VL-34B">Yi-VL-34B</a></code> has ranked <strong>first</strong> among all existing open-source models in the latest benchmarks, including <a href="https://arxiv.org/abs/2311.16502">MMMU</a> and <a href="https://arxiv.org/abs/2401.11944">CMMMU</a> (based on data available up to January 2024).

</details>

<details>

<summary>🎯 <b>2023-11-23</b>: <a href="#chat-models">Chat models</a> are open-sourced and available to the public.</summary>

<br>This release contains two chat models based on previously released base models, two 8-bit models quantized by GPTQ, and two 4-bit models quantized by AWQ.

- `Yi-34B-Chat`

- `Yi-34B-Chat-4bits`

- `Yi-34B-Chat-8bits`

- `Yi-6B-Chat`

- `Yi-6B-Chat-4bits`

- `Yi-6B-Chat-8bits`

You can try some of them interactively at:

- [Hugging Face](https://huggingface.co/spaces/01-ai/Yi-34B-Chat)

- [Replicate](https://replicate.com/01-ai)

</details>

<details>

<summary>🔔 <b>2023-11-23</b>: The Yi Series Models Community License Agreement is updated to <a href="https://github.com/01-ai/Yi/blob/main/MODEL_LICENSE_AGREEMENT.txt">v2.1</a>.</summary>

</details>

<details>

<summary>🔥 <b>2023-11-08</b>: Invited test of Yi-34B chat model.</summary>

<br>Application form:

- [English](https://cn.mikecrm.com/l91ODJf)

- [Chinese](https://cn.mikecrm.com/gnEZjiQ)

</details>

<details>

<summary>🎯 <b>2023-11-05</b>: <a href="#base-models">The base models, </a><code>Yi-6B-200K</code> and <code>Yi-34B-200K</code>, are open-sourced and available to the public.</summary>

<br>This release contains two base models with the same parameter sizes as the previous

release, except that the context window is extended to 200K.

</details>

<details>

<summary>🎯 <b>2023-11-02</b>: <a href="#base-models">The base models, </a><code>Yi-6B</code> and <code>Yi-34B</code>, are open-sourced and available to the public.</summary>

<br>The first public release contains two bilingual (English/Chinese) base models

with the parameter sizes of 6B and 34B. Both of them are trained with 4K

sequence length and can be extended to 32K during inference time.

</details>

<p align="right"> [

<a href="#top">Back to top ⬆️ </a> ]

</p>

## Models

Yi models come in multiple sizes and cater to different use cases. You can also fine-tune Yi models to meet your specific requirements.

If you want to deploy Yi models, make sure you meet the [software and hardware requirements](#deployment).

### Chat models

| Model | Download |

|---|---|

|Yi-34B-Chat | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B-Chat) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B-Chat/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-34B-Chat) |

|Yi-34B-Chat-4bits | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B-Chat-4bits) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B-Chat-4bits/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-34B-Chat-4bits) |

|Yi-34B-Chat-8bits | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B-Chat-8bits) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B-Chat-8bits/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-34B-Chat-8bits) |

|Yi-6B-Chat| • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B-Chat) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B-Chat/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat) |

|Yi-6B-Chat-4bits | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B-Chat-4bits) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B-Chat-4bits/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-4bits) |

|Yi-6B-Chat-8bits | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B-Chat-8bits) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B-Chat-8bits/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) |

<sub><sup> - 4-bit series models are quantized by AWQ. <br> - 8-bit series models are quantized by GPTQ <br> - All quantized models have a low barrier to use since they can be deployed on consumer-grade GPUs (e.g., 3090, 4090). </sup></sub>

### Base models

| Model | Download |

|---|---|

|Yi-34B| • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) |

|Yi-34B-200K|• [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-34B-200K) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-34B-200K/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits)|

|Yi-9B|• [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-9B) • [🤖 ModelScope](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-9B)|

|Yi-9B-200K | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-9B-200K) • [🤖 ModelScope](https://wisemodel.cn/models/01.AI/Yi-9B-200K) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) |

|Yi-6B| • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) |

|Yi-6B-200K | • [🤗 Hugging Face](https://huggingface.co/01-ai/Yi-6B-200K) • [🤖 ModelScope](https://www.modelscope.cn/models/01ai/Yi-6B-200K/summary) • [🟣 wisemodel](https://wisemodel.cn/models/01.AI/Yi-6B-Chat-8bits) |

<sub><sup> - 200k is roughly equivalent to 400,000 Chinese characters. <br> - If you want to use the previous version of the Yi-34B-200K (released on Nov 5, 2023), run `git checkout 069cd341d60f4ce4b07ec394e82b79e94f656cf` to download the weight. </sup></sub>

### Model info

- For chat and base models

<table>

<thead>

<tr>

<th>Model</th>

<th>Intro</th>

<th>Default context window</th>

<th>Pretrained tokens</th>

<th>Training Data Date</th>

</tr>

</thead>

<tbody><tr>

<td>6B series models</td>

<td>They are suitable for personal and academic use.</td>

<td rowspan="3">4K</td>

<td>3T</td>

<td rowspan="3">Up to June 2023</td>

</tr>

<tr>

<td>9B series models</td>

<td>It is the best at coding and math in the Yi series models.</td>

<td>Yi-9B is continuously trained based on Yi-6B, using 0.8T tokens.</td>

</tr>

<tr>

<td>34B series models</td>

<td>They are suitable for personal, academic, and commercial (particularly for small and medium-sized enterprises) purposes. They offer a cost-effective solution with emergent abilities.</td>

<td>3T</td>

</tr>

</tbody></table>

- For chat models

<details style="display: inline;"><summary>For chat model limitations, see the explanations below. ⬇️</summary>

<ul>

<br>The released chat models were trained exclusively with Supervised Fine-Tuning (SFT). Compared to other standard chat models, our models produce more diverse responses, making them suitable for various downstream tasks, such as creative scenarios. This diversity is also expected to increase the likelihood of generating higher-quality responses, which will be advantageous for subsequent Reinforcement Learning (RL) training.

<br>However, this higher diversity might amplify certain existing issues, including:

<li>Hallucination: This refers to the model generating factually incorrect or nonsensical information. With more varied responses, there is a higher chance of hallucinations that are not based on accurate data or logical reasoning.</li>

<li>Non-determinism in re-generation: When attempting to regenerate or sample responses, inconsistencies in the outcomes may occur. The increased diversity can lead to varying results even under similar input conditions.</li>

<li>Cumulative error: This occurs when errors in the model's responses compound over time. As the model generates more diverse responses, the likelihood of small inaccuracies building up into larger errors increases, especially in complex tasks such as extended reasoning and mathematical problem-solving.</li>

<li>To achieve more coherent and consistent responses, it is advisable to adjust generation configuration parameters such as temperature, top_p, or top_k. These adjustments can help balance creativity and coherence in the model's outputs.</li>

</ul>

</details>
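
The effect of `temperature`, `top_k`, and `top_p` mentioned above can be illustrated with a small, library-free sketch. This is not how Yi or any inference library implements sampling; the helper name `filter_distribution` and the toy logits are hypothetical. It only shows how lowering these values concentrates probability on fewer candidate tokens, which is what makes responses more consistent.

```python
import math

def filter_distribution(logits, temperature=1.0, top_k=0, top_p=1.0):
    """Return {token: probability} after temperature, top-k, and top-p filtering.

    Hypothetical helper for illustration; real decoders operate on tensors.
    """
    # Temperature rescales logits: values below 1 sharpen the distribution.
    scaled = {tok: value / temperature for tok, value in logits.items()}
    peak = max(scaled.values())
    weights = {tok: math.exp(value - peak) for tok, value in scaled.items()}
    total = sum(weights.values())
    ranked = sorted(((tok, w / total) for tok, w in weights.items()),
                    key=lambda item: item[1], reverse=True)

    # Top-k keeps only the k most likely tokens (0 disables the filter).
    if top_k > 0:
        ranked = ranked[:top_k]

    # Top-p keeps the smallest prefix whose cumulative probability reaches top_p.
    kept, cumulative = [], 0.0
    for tok, prob in ranked:
        kept.append((tok, prob))
        cumulative += prob
        if cumulative >= top_p:
            break

    # Renormalize so the surviving probabilities sum to 1.
    norm = sum(prob for _, prob in kept)
    return {tok: prob / norm for tok, prob in kept}

# Toy logits for four candidate next tokens (made-up values).
toy_logits = {"Hello": 2.0, "Hi": 1.5, "Hey": 0.5, "Greetings": -1.0}
print(filter_distribution(toy_logits))
print(filter_distribution(toy_logits, temperature=0.5, top_k=3, top_p=0.9))
```

With the stricter settings, fewer tokens survive and the most likely token takes a larger share, so repeated generations vary less.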

<p align="right"> [

<a href="#top">Back to top ⬆️ </a> ]

</p>

# How to use Yi?

- [Quick start](#quick-start)

- [Choose your path](#choose-your-path)

- [pip](#quick-start---pip)

- [docker](#quick-start---docker)

- [conda-lock](#quick-start---conda-lock)

- [llama.cpp](#quick-start---llamacpp)

- [Web demo](#web-demo)

- [Fine-tuning](#fine-tuning)

- [Quantization](#quantization)

- [Deployment](#deployment)

- [FAQ](#faq)

- [Learning hub](#learning-hub)

## Quick start

Getting up and running with Yi models is simple with multiple choices available.

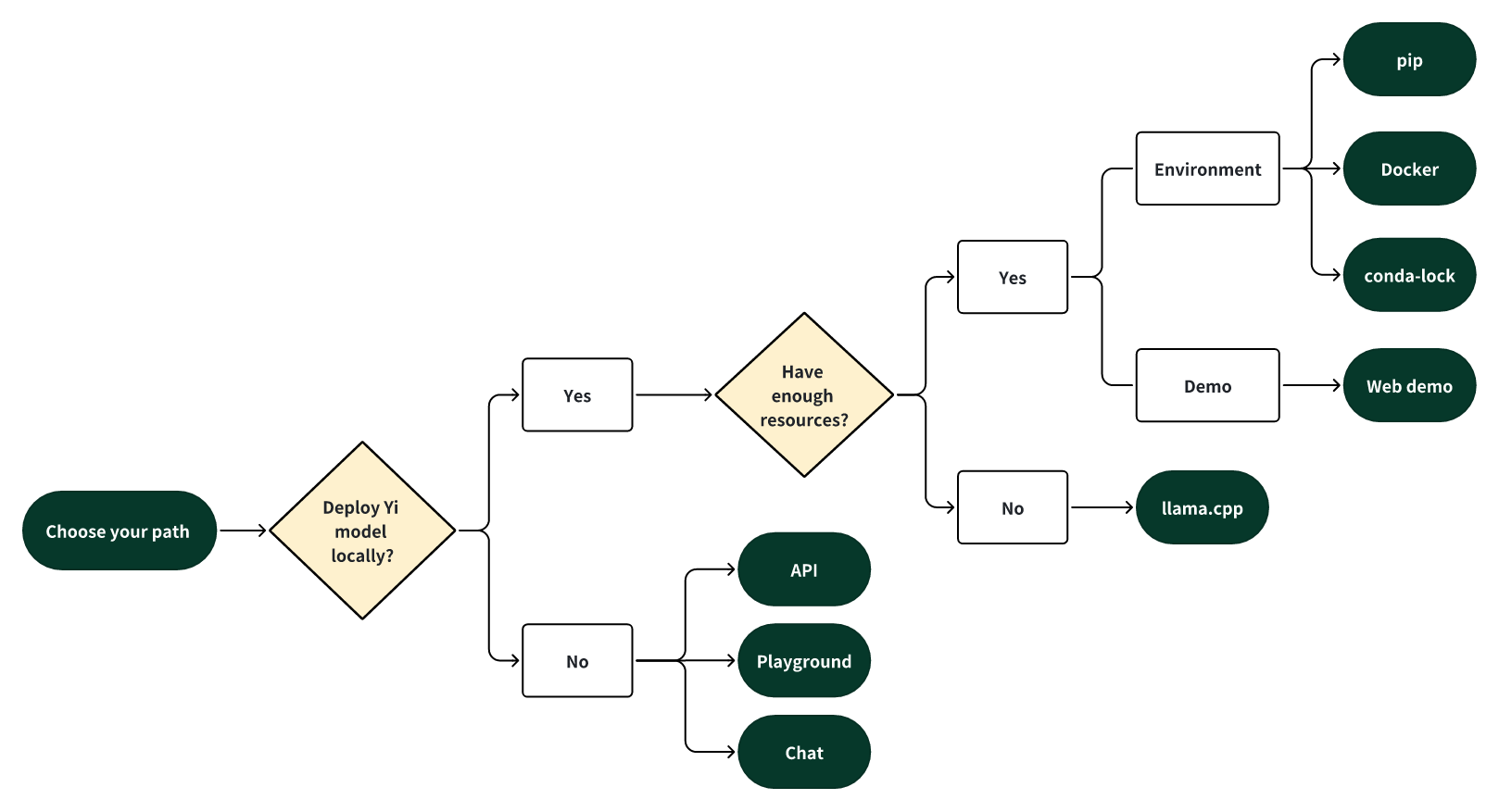

### Choose your path

Select one of the following paths to begin your journey with Yi!

#### 🎯 Deploy Yi locally

If you prefer to deploy Yi models locally,

- 🙋♀️ and you have **sufficient** resources (for example, NVIDIA A800 80GB), you can choose one of the following methods:

  - [pip](#quick-start---pip)

  - [Docker](#quick-start---docker)

  - [conda-lock](#quick-start---conda-lock)

- 🙋♀️ and you have **limited** resources (for example, a MacBook Pro), you can use [llama.cpp](#quick-start---llamacpp).

#### 🎯 Not to deploy Yi locally

If you prefer not to deploy Yi models locally, you can explore Yi's capabilities using any of the following options.

##### 🙋♀️ Run Yi with APIs

If you want to explore more features of Yi, you can adopt one of these methods:

- Yi APIs (Yi official)

  - [Early access has been granted](https://x.com/01AI_Yi/status/1735728934560600536?s=20) to some applicants. Stay tuned for the next round of access!

- [Yi APIs](https://replicate.com/01-ai/yi-34b-chat/api?tab=nodejs) (Replicate)

##### 🙋♀️ Run Yi in playground

If you want to chat with Yi with more customizable options (e.g., system prompt, temperature, repetition penalty, etc.), you can try one of the following options:

- [Yi-34B-Chat-Playground](https://platform.lingyiwanwu.com/prompt/playground) (Yi official)

  - Access is granted through a whitelist. To apply, fill out the form in [English](https://cn.mikecrm.com/l91ODJf) or [Chinese](https://cn.mikecrm.com/gnEZjiQ).

- [Yi-34B-Chat-Playground](https://replicate.com/01-ai/yi-34b-chat) (Replicate)

##### 🙋♀️ Chat with Yi

If you want to chat with Yi, you can use one of these online services, which offer a similar user experience:

- [Yi-34B-Chat](https://huggingface.co/spaces/01-ai/Yi-34B-Chat) (Yi official on Hugging Face)

  - No registration is required.

- [Yi-34B-Chat](https://platform.lingyiwanwu.com/) (Yi official beta)

  - Access is granted through a whitelist. To apply, fill out the form in [English](https://cn.mikecrm.com/l91ODJf) or [Chinese](https://cn.mikecrm.com/gnEZjiQ).

<p align="right"> [

<a href="#top">Back to top ⬆️ </a> ]

</p>

### Quick start - pip

This tutorial guides you through every step of running **Yi-34B-Chat locally on an A800 (80G)** and then performing inference.

#### Step 0: Prerequisites

- Make sure Python 3.10 or a later version is installed.

- If you want to run other Yi models, see [software and hardware requirements](#deployment).

#### Step 1: Prepare your environment

To set up the environment and install the required packages, execute the following command.

```bash

git clone https://github.com/01-ai/Yi.git

cd Yi

pip install -r requirements.txt

```

#### Step 2: Download the Yi model

You can download the weights and tokenizer of Yi models from the following sources:

- [Hugging Face](https://huggingface.co/01-ai)

- [ModelScope](https://www.modelscope.cn/organization/01ai/)

- [WiseModel](https://wisemodel.cn/organization/01.AI)

#### Step 3: Perform inference

You can perform inference with Yi chat or base models as below.

##### Perform inference with Yi chat model

1. Create a file named `quick_start.py` and copy the following content to it.

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

model_path = '<your-model-path>'

tokenizer = AutoTokenizer.from_pretrained(model_path, use_fast=False)

# Since transformers 4.35.0, the GPT-Q/AWQ model can be loaded using AutoModelForCausalLM.
model = AutoModelForCausalLM.from_pretrained(
    model_path,
    device_map="auto",
    torch_dtype='auto'
).eval()

# Prompt content: "hi"
messages = [
    {"role": "user", "content": "hi"}
]

input_ids = tokenizer.apply_chat_template(conversation=messages, tokenize=True, add_generation_prompt=True, return_tensors='pt')
output_ids = model.generate(input_ids.to('cuda'))
response = tokenizer.decode(output_ids[0][input_ids.shape[1]:], skip_special_tokens=True)

# Model response: "Hello! How can I assist you today?"
print(response)

```

2. Run `quick_start.py`.

```bash

python quick_start.py

```

Then you can see an output similar to the one below. 🥳

```bash

Hello! How can I assist you today?

```
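
To make the `apply_chat_template` step above less opaque, here is a minimal sketch of what a chat template does to the `messages` list before tokenization. It assumes a ChatML-style layout with `<|im_start|>` and `<|im_end|>` markers; that layout and the helper name `render_chatml` are assumptions for illustration, and the template shipped with the model's tokenizer remains the authoritative source.

```python
def render_chatml(messages, add_generation_prompt=True):
    """Render chat messages as ChatML-style text (assumed layout, for illustration)."""
    parts = []
    for message in messages:
        # Each turn is wrapped in start/end markers together with its role.
        parts.append(f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n")
    if add_generation_prompt:
        # Open an assistant turn so the model continues with its reply.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)

prompt = render_chatml([{"role": "user", "content": "hi"}])
print(prompt)
```

In practice, prefer `tokenizer.apply_chat_template` as shown in `quick_start.py`, since it always matches the template the model was trained with.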

##### Perform inference with Yi base model

- Yi-34B

The steps are similar to [pip - Perform inference with Yi chat model](#perform-inference-with-yi-chat-model).

You can use the existing file [`text_generation.py`](https://github.com/01-ai/Yi/tree/main/demo).

```bash

python demo/text_generation.py --model <your-model-path>

```

Then you can see an output similar to the one below. 🥳

<details>

<summary>Output. ⬇️ </summary>

<br>

**Prompt**: Let me tell you an interesting story about cat Tom and mouse Jerry,

**Generation**: Let me tell you an interesting story about cat Tom and mouse Jerry, which happened in my childhood. My father had a big house with two cats living inside it to kill mice. One day when I was playing at home alone, I found one of the tomcats lying on his back near our kitchen door, looking very much like he wanted something from us but couldn’t get up because there were too many people around him! He kept trying for several minutes before finally giving up...

</details>

- Yi-9B

Input

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

MODEL_DIR = "01-ai/Yi-9B"

model = AutoModelForCausalLM.from_pretrained(MODEL_DIR, torch_dtype="auto")

tokenizer = AutoTokenizer.from_pretrained(MODEL_DIR, use_fast=False)

input_text = "# write the quick sort algorithm"

inputs = tokenizer(input_text, return_tensors="pt").to(model.device)

outputs = model.generate(**inputs, max_length=256)

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

```

Output

```python
# write the quick sort algorithm
def quick_sort(arr):
    if len(arr) <= 1:
        return arr
    pivot = arr[len(arr) // 2]
    left = [x for x in arr if x < pivot]
    middle = [x for x in arr if x == pivot]
    right = [x for x in arr if x > pivot]
    return quick_sort(left) + middle + quick_sort(right)

# test the quick sort algorithm
print(quick_sort([3, 6, 8, 10, 1, 2, 1]))

```

<p align="right"> [

<a href="#top">Back to top ⬆️ </a> ]

</p>

### Quick start - Docker

<details>

<summary> Run Yi-34B-chat locally with Docker: a step-by-step guide. ⬇️</summary>

<br>This tutorial guides you through every step of running <strong>Yi-34B-Chat on an A800 GPU</strong> or <strong>4*4090</strong> locally and then performing inference.

<h4>Step 0: Prerequisites</h4>

<p>Make sure you've installed <a href="https://docs.docker.com/engine/install/?open_in_browser=true">Docker</a> and <a href="https://docs.nvidia.com/datacenter/cloud-native/container-toolkit/latest/install-guide.html">nvidia-container-toolkit</a>.</p>

<h4> Step 1: Start Docker </h4>

<pre><code>docker run -it --gpus all \
  -v &lt;your-model-path&gt;:/models \
  ghcr.io/01-ai/yi:latest

</code></pre>

<p>Alternatively, you can pull the Yi Docker image from <code>registry.lingyiwanwu.com/ci/01-ai/yi:latest</code>.</p>

<h4>Step 2: Perform inference</h4>

<p>You can perform inference with Yi chat or base models as below.</p>

<h5>Perform inference with Yi chat model</h5>

<p>The steps are similar to <a href="#perform-inference-with-yi-chat-model">pip - Perform inference with Yi chat model</a>.</p>

<p><strong>Note</strong> that the only difference is to set <code>model_path = '&lt;your-model-mount-path&gt;'</code> instead of <code>model_path = '&lt;your-model-path&gt;'</code>.</p>

<h5>Perform inference with Yi base model</h5>

<p>The steps are similar to <a href="#perform-inference-with-yi-base-model">pip - Perform inference with Yi base model</a>.</p>

<p><strong>Note</strong> that the only difference is to set <code>--model &lt;your-model-mount-path&gt;</code> instead of <code>--model &lt;your-model-path&gt;</code>.</p>

</details>

### Quick start - conda-lock

<details>

<summary>You can use <code><a href="https://github.com/conda/conda-lock">conda-lock</a></code> to generate fully reproducible lock files for conda environments. ⬇️</summary>

<br>

You can refer to <a href="https://github.com/01-ai/Yi/blob/ebba23451d780f35e74a780987ad377553134f68/conda-lock.yml">conda-lock.yml</a> for the exact versions of the dependencies. Additionally, you can utilize <code><a href="https://mamba.readthedocs.io/en/latest/user_guide/micromamba.html">micromamba</a></code> for installing these dependencies.

<br>

To install the dependencies, follow these steps:

1. Install micromamba by following the instructions available <a href="https://mamba.readthedocs.io/en/latest/installation/micromamba-installation.html">here</a>.

2. Execute <code>micromamba install -y -n yi -f conda-lock.yml</code> to create a conda environment named <code>yi</code> and install the necessary dependencies.

</details>

### Quick start - llama.cpp

<a href="https://github.com/01-ai/Yi/blob/main/docs/README_llama.cpp.md">The following tutorial </a> will guide you through every step of running a quantized model (<a href="https://huggingface.co/XeIaso/yi-chat-6B-GGUF/tree/main">Yi-chat-6B-2bits</a>) locally and then performing inference.

<details>

<summary> Run Yi-chat-6B-2bits locally with llama.cpp: a step-by-step guide. ⬇️</summary>

<br><a href="https://github.com/01-ai/Yi/blob/main/docs/README_llama.cpp.md">This tutorial</a> guides you through every step of running a quantized model (<a href="https://huggingface.co/XeIaso/yi-chat-6B-GGUF/tree/main">Yi-chat-6B-2bits</a>) locally and then performing inference.

- [Step 0: Prerequisites](#step-0-prerequisites)

- [Step 1: Download llama.cpp](#step-1-download-llamacpp)

- [Step 2: Download Yi model](#step-2-download-yi-model)

- [Step 3: Perform inference](#step-3-perform-inference)

#### Step 0: Prerequisites

- This tutorial assumes you use a MacBook Pro with 16GB of memory and an Apple M2 Pro chip.

- Make sure [`git-lfs`](https://git-lfs.com/) is installed on your machine.

#### Step 1: Download `llama.cpp`

To clone the [`llama.cpp`](https://github.com/ggerganov/llama.cpp) repository, run the following command.

```bash

git clone git@github.com:ggerganov/llama.cpp.git

```

#### Step 2: Download Yi model

2.1 To clone [XeIaso/yi-chat-6B-GGUF](https://huggingface.co/XeIaso/yi-chat-6B-GGUF/tree/main) with just pointers, run the following command.

```bash

GIT_LFS_SKIP_SMUDGE=1 git clone https://huggingface.co/XeIaso/yi-chat-6B-GGUF

```

2.2 To download a quantized Yi model ([yi-chat-6b.Q2_K.gguf](https://huggingface.co/XeIaso/yi-chat-6B-GGUF/blob/main/yi-chat-6b.Q2_K.gguf)), run the following command.

```bash

git-lfs pull --include yi-chat-6b.Q2_K.gguf

```

#### Step 3: Perform inference

To perform inference with the Yi model, you can use one of the following methods.

- [Method 1: Perform inference in terminal](#method-1-perform-inference-in-terminal)

- [Method 2: Perform inference in web](#method-2-perform-inference-in-web)

##### Method 1: Perform inference in terminal

To compile `llama.cpp` using 4 threads and then conduct inference, navigate to the `llama.cpp` directory, and run the following command.

> ##### Tips

>

> - Replace `/Users/yu/yi-chat-6B-GGUF/yi-chat-6b.Q2_K.gguf` with the actual path of your model.

>

> - By default, the model operates in completion mode.

>

> - For additional output customization options (for example, system prompt, temperature, repetition penalty, etc.), run `./main -h` to check detailed descriptions and usage.

```bash

make -j4 && ./main -m /Users/yu/yi-chat-6B-GGUF/yi-chat-6b.Q2_K.gguf -p "How do you feed your pet fox? Please answer this question in 6 simple steps:\nStep 1:" -n 384 -e

...

How do you feed your pet fox? Please answer this question in 6 simple steps:

Step 1: Select the appropriate food for your pet fox. You should choose high-quality, balanced prey items that are suitable for their unique dietary needs. These could include live or frozen mice, rats, pigeons, or other small mammals, as well as fresh fruits and vegetables.

Step 2: Feed your pet fox once or twice a day, depending on the species and its individual preferences. Always ensure that they have access to fresh water throughout the day.

Step 3: Provide an appropriate environment for your pet fox. Ensure it has a comfortable place to rest, plenty of space to move around, and opportunities to play and exercise.

Step 4: Socialize your pet with other animals if possible. Interactions with other creatures can help them develop social skills and prevent boredom or stress.

Step 5: Regularly check for signs of illness or discomfort in your fox. Be prepared to provide veterinary care as needed, especially for common issues such as parasites, dental health problems, or infections.

Step 6: Educate yourself about the needs of your pet fox and be aware of any potential risks or concerns that could affect their well-being. Regularly consult with a veterinarian to ensure you are providing the best care.

...

```

Now you have successfully asked a question to the Yi model and got an answer! 🥳

##### Method 2: Perform inference in web

1. To initialize a lightweight and swift chatbot, run the following command.

```bash

cd llama.cpp

./server --ctx-size 2048 --host 0.0.0.0 --n-gpu-layers 64 --model /Users/yu/yi-chat-6B-GGUF/yi-chat-6b.Q2_K.gguf

```

Then you can get an output like this:

```bash

...

llama_new_context_with_model: n_ctx = 2048

llama_new_context_with_model: freq_base = 5000000.0

llama_new_context_with_model: freq_scale = 1

ggml_metal_init: allocating

ggml_metal_init: found device: Apple M2 Pro

ggml_metal_init: picking default device: Apple M2 Pro

ggml_metal_init: ggml.metallib not found, loading from source

ggml_metal_init: GGML_METAL_PATH_RESOURCES = nil

ggml_metal_init: loading '/Users/yu/llama.cpp/ggml-metal.metal'

ggml_metal_init: GPU name: Apple M2 Pro

ggml_metal_init: GPU family: MTLGPUFamilyApple8 (1008)

ggml_metal_init: hasUnifiedMemory = true

ggml_metal_init: recommendedMaxWorkingSetSize = 11453.25 MB

ggml_metal_init: maxTransferRate = built-in GPU

ggml_backend_metal_buffer_type_alloc_buffer: allocated buffer, size = 128.00 MiB, ( 2629.44 / 10922.67)

llama_new_context_with_model: KV self size = 128.00 MiB, K (f16): 64.00 MiB, V (f16): 64.00 MiB

ggml_backend_metal_buffer_type_alloc_buffer: allocated buffer, size = 0.02 MiB, ( 2629.45 / 10922.67)

llama_build_graph: non-view tensors processed: 676/676

llama_new_context_with_model: compute buffer total size = 159.19 MiB

ggml_backend_metal_buffer_type_alloc_buffer: allocated buffer, size = 156.02 MiB, ( 2785.45 / 10922.67)

Available slots:

-> Slot 0 - max context: 2048

llama server listening at http://0.0.0.0:8080

```

2. To access the chatbot interface, open your web browser and enter `http://0.0.0.0:8080` into the address bar.

3. Enter a question, such as "How do you feed your pet fox? Please answer this question in 6 simple steps" into the prompt window, and you will receive a corresponding answer.

</ul>

</details>
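
Besides the browser UI, the server started in Method 2 can also be queried from a script. The sketch below assumes the llama.cpp server's `/completion` endpoint, which accepts a JSON body with `prompt` and `n_predict` and returns the generated text in a `content` field, and it assumes the default port 8080 seen in the log above. The helper names are hypothetical.

```python
import json
import urllib.request

def build_completion_request(prompt, n_predict=128, base_url="http://localhost:8080"):
    """Build a POST request for the llama.cpp server's /completion endpoint."""
    payload = json.dumps({"prompt": prompt, "n_predict": n_predict}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/completion",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def complete(prompt, **kwargs):
    """Send the request and return the generated text (requires a running server)."""
    with urllib.request.urlopen(build_completion_request(prompt, **kwargs)) as response:
        return json.loads(response.read().decode("utf-8"))["content"]

# Building the request does not touch the network; complete() does.
request = build_completion_request("How do you feed your pet fox?", n_predict=64)
print(request.full_url, request.get_method())
```

Once the server is running, calling `complete("How do you feed your pet fox?")` should return the model's answer as a string.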

<p align="right"> [

<a href="#top">Back to top ⬆️ </a> ]

</p>

### Web demo

You can build a web UI demo for Yi **chat** models (note that Yi base models are not supported in this scenario).

[Step 1: Prepare your environment](#step-1-prepare-your-environment).

[Step 2: Download the Yi model](#step-2-download-the-yi-model).

Step 3. To start a web service locally, run the following command.

```bash

python demo/web_demo.py -c <your-model-path>

```

You can access the web UI by entering the address provided in the console into your browser.

<p align="right"> [

<a href="#top">Back to top ⬆️ </a> ]

</p>

### Fine-tuning

```bash

bash finetune/scripts/run_sft_Yi_6b.sh

```

Once finished, you can compare the finetuned model and the base model with the following command:

```bash

bash finetune/scripts/run_eval.sh

```

<details style="display: inline;"><summary>For advanced usage (like fine-tuning based on your custom data), see the explanations below. ⬇️ </summary> <ul>

### Finetune code for Yi 6B and 34B

#### Preparation

##### From Image

By default, we use a small dataset from [BAAI/COIG](https://huggingface.co/datasets/BAAI/COIG) to finetune the base model.

You can also prepare your customized dataset in the following `jsonl` format:

```json

{ "prompt": "Human: Who are you? Assistant:", "chosen": "I'm Yi." }

```

And then mount them in the container to replace the default ones:

```bash

docker run -it \

-v /path/to/save/finetuned/model/:/finetuned-model \

-v /path/to/train.jsonl:/yi/finetune/data/train.json \

-v /path/to/eval.jsonl:/yi/finetune/data/eval.json \

ghcr.io/01-ai/yi:latest \

bash finetune/scripts/run_sft_Yi_6b.sh

```
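
A customized dataset in the `jsonl` format described above can be produced with a few lines of Python. This is a minimal sketch rather than part of the Yi tooling: the helper names are hypothetical, the second record is made-up sample data, and the only assumption taken from the text is that each line is a JSON object with `prompt` and `chosen` keys.

```python
import json
import os
import tempfile

REQUIRED_KEYS = ("prompt", "chosen")

def write_jsonl(path, records):
    """Write one JSON object per line, checking the expected keys first."""
    with open(path, "w", encoding="utf-8") as handle:
        for index, record in enumerate(records):
            missing = [key for key in REQUIRED_KEYS if key not in record]
            if missing:
                raise ValueError(f"record {index} is missing keys: {missing}")
            handle.write(json.dumps(record, ensure_ascii=False) + "\n")

def read_jsonl(path):
    """Load a jsonl file back into a list of dictionaries."""
    with open(path, encoding="utf-8") as handle:
        return [json.loads(line) for line in handle if line.strip()]

records = [
    {"prompt": "Human: Who are you? Assistant:", "chosen": "I'm Yi."},
    # Hypothetical extra sample, only to show a multi-line file.
    {"prompt": "Human: What can you do? Assistant:", "chosen": "I can answer questions."},
]
train_path = os.path.join(tempfile.mkdtemp(), "train.jsonl")
write_jsonl(train_path, records)
print(read_jsonl(train_path) == records)
```

Validating keys before writing catches malformed records early, before a fine-tuning run is started on the mounted file.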

##### From Local Server

Make sure conda is installed. If it is not, install Miniconda with the following commands:

```bash

mkdir -p ~/miniconda3

wget https://repo.anaconda.com/miniconda/Miniconda3-latest-Linux-x86_64.sh -O ~/miniconda3/miniconda.sh

bash ~/miniconda3/miniconda.sh -b -u -p ~/miniconda3

rm -rf ~/miniconda3/miniconda.sh

~/miniconda3/bin/conda init bash

source ~/.bashrc

```

Then, create a conda env:

```bash

conda create -n dev_env python=3.10 -y

conda activate dev_env

pip install torch==2.0.1 deepspeed==0.10 tensorboard transformers datasets sentencepiece accelerate ray==2.7

```

#### Hardware Setup

For the Yi-6B model, a node with 4 GPUs, each with GPU memory larger than 60GB, is recommended.

For the Yi-34B model, the zero-offload technique consumes a lot of CPU memory, so limit the number of GPUs used for 34B finetuning by setting `CUDA_VISIBLE_DEVICES` (as shown in `scripts/run_sft_Yi_34b.sh`).

A typical hardware setup for finetuning the 34B model is a node with 8 GPUs (limited to 4 at run time by `CUDA_VISIBLE_DEVICES=0,1,2,3`), each with GPU memory larger than 80GB, and total CPU memory larger than 900GB.

#### Quick Start

Download an LLM base model (6B or 34B) to MODEL_PATH. A typical model folder looks like this:

```bash

|-- $MODEL_PATH

| |-- config.json

| |-- pytorch_model-00001-of-00002.bin

| |-- pytorch_model-00002-of-00002.bin

| |-- pytorch_model.bin.index.json

| |-- tokenizer_config.json

| |-- tokenizer.model

| |-- ...

```

Download a dataset from huggingface to local storage DATA_PATH, e.g. Dahoas/rm-static.

```bash

|-- $DATA_PATH

| |-- data

| | |-- train-00000-of-00001-2a1df75c6bce91ab.parquet

| | |-- test-00000-of-00001-8c7c51afc6d45980.parquet

| |-- dataset_infos.json

| |-- README.md

```

`finetune/yi_example_dataset` has example datasets, which are modified from [BAAI/COIG](https://huggingface.co/datasets/BAAI/COIG)

```bash

|-- $DATA_PATH
    |-- data
        |-- train.jsonl
        |-- eval.jsonl

```

`cd` into the scripts folder, copy and paste the script, and run. For example:

```bash

cd finetune/scripts

bash run_sft_Yi_6b.sh

```

For the Yi-6B base model, setting training_debug_steps=20 and num_train_epochs=4 can output a chat model, which takes about 20 minutes.

For the Yi-34B base model, it takes a relatively long time for initialization. Please be patient.

#### Evaluation

```bash

cd finetune/scripts

bash run_eval.sh

```

Then you'll see the answer from both the base model and the finetuned model.

</ul>

</details>

<p align="right"> [

<a href="#top">Back to top ⬆️ </a> ]

</p>

### Quantization

#### GPT-Q

```bash

python quantization/gptq/quant_autogptq.py \

--model /base_model \

--output_dir /quantized_model \

--trust_remote_code

```

Once finished, you can then evaluate the resulting model as follows:

```bash

python quantization/gptq/eval_quantized_model.py \

--model /quantized_model \

--trust_remote_code

```

<details style="display: inline;"><summary>For details, see the explanations below. ⬇️</summary> <ul>

#### GPT-Q quantization

[GPT-Q](https://github.com/IST-DASLab/gptq) is a PTQ (Post-Training Quantization)

method. It saves memory and provides potential speedups while retaining the accuracy

of the model.

Yi models can be GPT-Q quantized without much effort.

We provide a step-by-step tutorial below.

To run GPT-Q, we will use [AutoGPTQ](https://github.com/PanQiWei/AutoGPTQ) and

[exllama](https://github.com/turboderp/exllama).

Hugging Face Transformers has also integrated Optimum and AutoGPTQ to perform GPTQ quantization on language models.

##### Do Quantization

The `quant_autogptq.py` script is provided for you to perform GPT-Q quantization:

```bash

python quant_autogptq.py --model /base_model \

--output_dir /quantized_model --bits 4 --group_size 128 --trust_remote_code

```

##### Run Quantized Model

You can run a quantized model using the `eval_quantized_model.py`:

```bash

python eval_quantized_model.py --model /quantized_model --trust_remote_code

```

</ul>

</details>

#### AWQ

```bash

python quantization/awq/quant_autoawq.py \

--model /base_model \

--output_dir /quantized_model \

--trust_remote_code

```

Once finished, you can then evaluate the resulting model as follows:

```bash

python quantization/awq/eval_quantized_model.py \

--model /quantized_model \

--trust_remote_code

```

<details style="display: inline;"><summary>For details, see the explanations below. ⬇️</summary> <ul>

#### AWQ quantization

[AWQ](https://github.com/mit-han-lab/llm-awq) is a PTQ (Post-Training Quantization)

method. It's an efficient and accurate low-bit weight quantization (INT3/4) for LLMs.

Yi models can be AWQ quantized without much effort.

We provide a step-by-step tutorial below.

To run AWQ, we will use [AutoAWQ](https://github.com/casper-hansen/AutoAWQ).
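For orientation, the sketch below follows the basic quantization flow shown in the AutoAWQ README. It is an assumption-laden illustration rather than the contents of `quant_autoawq.py`: the helper names `awq_settings` and `quantize_with_autoawq` are hypothetical, and running the second function requires the `autoawq` package, `transformers`, and a CUDA GPU.

```python
def awq_settings(bits=4, group_size=128):
    """Build the quant_config dictionary expected by AutoAWQ (hypothetical helper)."""
    return {
        "zero_point": True,          # asymmetric quantization with a zero point
        "q_group_size": group_size,  # weights per shared scale
        "w_bit": bits,               # bits per weight
        "version": "GEMM",           # kernel variant used in the AutoAWQ README
    }


def quantize_with_autoawq(model_path, output_dir, **overrides):
    """Quantize a causal LM with AutoAWQ and save the result."""
    # Imported lazily so awq_settings() works without the heavy dependencies.
    from awq import AutoAWQForCausalLM
    from transformers import AutoTokenizer

    model = AutoAWQForCausalLM.from_pretrained(model_path)
    tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

    # Calibrates on AutoAWQ's default calibration data, then quantizes in place.
    model.quantize(tokenizer, quant_config=awq_settings(**overrides))
    model.save_quantized(output_dir)
    tokenizer.save_pretrained(output_dir)


# Example call (assumed paths, matching the commands in this section):
# quantize_with_autoawq("/base_model", "/quantized_model")
```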

##### Do Quantization

The `quant_autoawq.py` script is provided for you to perform AWQ quantization:

```bash
python quant_autoawq.py --model /base_model \
  --output_dir /quantized_model --bits 4 --group_size 128 --trust_remote_code
```

##### Run Quantized Model

You can run a quantized model using `eval_quantized_model.py`:

```bash
python eval_quantized_model.py --model /quantized_model --trust_remote_code
```

</ul>

</details>

<p align="right"> [

<a href="#top">Back to top ⬆️ </a> ]

</p>

### Deployment

If you want to deploy Yi models, make sure you meet the software and hardware requirements.

#### Software requirements

Before using Yi quantized models, make sure you've installed the correct software listed below.

| Model | Software |
|---|---|
| Yi 4-bit quantized models | [AWQ and CUDA](https://github.com/casper-hansen/AutoAWQ?tab=readme-ov-file#install-from-pypi) |
| Yi 8-bit quantized models | [GPTQ and CUDA](https://github.com/PanQiWei/AutoGPTQ?tab=readme-ov-file#quick-installation) |

#### Hardware requirements

Before deploying Yi in your environment, make sure your hardware meets the following requirements.

##### Chat models

| Model | Minimum VRAM | Recommended GPU Example |
|:----------------------|:--------------|:-------------------------------------:|
| Yi-6B-Chat | 15 GB | 1 x RTX 3090 (24 GB) <br> 1 x RTX 4090 (24 GB) <br> 1 x A10 (24 GB) <br> 1 x A30 (24 GB) |
| Yi-6B-Chat-4bits | 4 GB | 1 x RTX 3060 (12 GB) <br> 1 x RTX 4060 (8 GB) |
| Yi-6B-Chat-8bits | 8 GB | 1 x RTX 3070 (8 GB) <br> 1 x RTX 4060 (8 GB) |
| Yi-34B-Chat | 72 GB | 4 x RTX 4090 (24 GB) <br> 1 x A800 (80 GB) |
| Yi-34B-Chat-4bits | 20 GB | 1 x RTX 3090 (24 GB) <br> 1 x RTX 4090 (24 GB) <br> 1 x A10 (24 GB) <br> 1 x A30 (24 GB) <br> 1 x A100 (40 GB) |
| Yi-34B-Chat-8bits | 38 GB | 2 x RTX 3090 (24 GB) <br> 2 x RTX 4090 (24 GB) <br> 1 x A800 (40 GB) |

Below are detailed minimum VRAM requirements under different batch use cases.

| Model | batch=1 | batch=4 | batch=16 | batch=32 |
| ----------------------- | ------- | ------- | -------- | -------- |
| Yi-6B-Chat | 12 GB | 13 GB | 15 GB | 18 GB |
| Yi-6B-Chat-4bits | 4 GB | 5 GB | 7 GB | 10 GB |
| Yi-6B-Chat-8bits | 7 GB | 8 GB | 10 GB | 14 GB |
| Yi-34B-Chat | 65 GB | 68 GB | 76 GB | > 80 GB |
| Yi-34B-Chat-4bits | 19 GB | 20 GB | 30 GB | 40 GB |
| Yi-34B-Chat-8bits | 35 GB | 37 GB | 46 GB | 58 GB |
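The batch=1 figures above can be sanity-checked with simple arithmetic: the weights alone take roughly parameters x bits / 8 bytes, and the remainder is runtime overhead such as activations and the KV cache. The sketch below encodes the table and checks which chat variants fit in a given amount of VRAM. It is only an illustration: the function names are hypothetical, the nominal parameter counts (6 and 34 billion) are read off the model names, and the "> 80 GB" cell is treated as never fitting.

```python
import math


def weight_memory_gb(params_billion, bits):
    """Approximate memory for the weights alone, in decimal GB."""
    return params_billion * bits / 8


# Minimum VRAM in GB per batch size, copied from the table above.
# The "> 80 GB" entry is stored as infinity (an assumption: it never fits).
MIN_VRAM_GB = {
    "Yi-6B-Chat":        {1: 12, 4: 13, 16: 15, 32: 18},
    "Yi-6B-Chat-4bits":  {1: 4,  4: 5,  16: 7,  32: 10},
    "Yi-6B-Chat-8bits":  {1: 7,  4: 8,  16: 10, 32: 14},
    "Yi-34B-Chat":       {1: 65, 4: 68, 16: 76, 32: math.inf},
    "Yi-34B-Chat-4bits": {1: 19, 4: 20, 16: 30, 32: 40},
    "Yi-34B-Chat-8bits": {1: 35, 4: 37, 16: 46, 32: 58},
}


def variants_that_fit(vram_gb, batch):
    """List the chat variants whose table entry fits in vram_gb.

    batch must be one of the tabulated sizes: 1, 4, 16, or 32.
    """
    return [name for name, row in MIN_VRAM_GB.items() if row[batch] <= vram_gb]
```

For example, `weight_memory_gb(34, 4)` gives 17 GB against the 19 GB listed for Yi-34B-Chat-4bits at batch=1, and `variants_that_fit(24, 1)` returns the three 6B variants plus Yi-34B-Chat-4bits.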

##### Base models

| Model | Minimum VRAM | Recommended GPU Example |
|----------------------|--------------|:-------------------------------------:|
| Yi-6B | 15 GB | 1 x RTX 3090 (24 GB) <br> 1 x RTX 4090 (24 GB) <br> 1 x A10 (24 GB) <br> 1 x A30 (24 GB) |
| Yi-6B-200K | 50 GB | 1 x A800 (80 GB) |
| Yi-9B | 20 GB | 1 x RTX 4090 (24 GB) |
| Yi-34B | 72 GB | 4 x RTX 4090 (24 GB) <br> 1 x A800 (80 GB) |
| Yi-34B-200K | 200 GB | 4 x A800 (80 GB) |

<p align="right"> [

<a href="#top">Back to top ⬆️ </a> ]

</p>

### FAQ

<details>

<summary> If you have any questions while using the Yi series models, the answers provided below could serve as a helpful reference for you. ⬇️</summary>

<br>

#### 💡Fine-tuning

- <strong>Base model or Chat model - which to fine-tune?</strong>

<br>The choice of pre-trained language model for fine-tuning hinges on the computational resources you have at your disposal and the particular demands of your task.

- If you are working with a substantial volume of fine-tuning data (say, over 10,000 samples), the Base model could be your go-to choice.

- On the other hand, if your fine-tuning data is not quite as extensive, opting for the Chat model might be a more fitting choice.

- It is generally advisable to fine-tune both the Base and Chat models, compare their performance, and then pick the model that best aligns with your specific requirements.

- <strong>Yi-34B versus Yi-34B-Chat for full-scale fine-tuning - what is the difference?</strong>

<br>

The key distinction between full-scale fine-tuning on `Yi-34B` and `Yi-34B-Chat` comes down to the fine-tuning approach and outcomes.

- Yi-34B-Chat employs a Supervised Fine-Tuning (SFT) method, resulting in responses that mirror human conversation style more closely.

- The Base model's fine-tuning is more versatile, with a relatively high performance potential.

- If you are confident in the quality of your data, fine-tuning with `Yi-34B` could be your go-to.

- If you are aiming for model-generated responses that better mimic human conversational style, or if you have doubts about your data quality, `Yi-34B-Chat` might be your best bet.

#### 💡Quantization

- <strong>Quantized model versus original model - what is the performance gap?</strong>

- The performance gap depends largely on the quantization method used and on the specific use case. For instance, for the officially provided AWQ models, quantization might result in a minor drop of a few percentage points on benchmarks.

- Subjectively speaking, in tasks such as logical reasoning, even a 1% performance shift could affect the accuracy of the output.

#### 💡General

- <strong>Where can I source fine-tuning question answering datasets?</strong>

- You can find fine-tuning question answering datasets on platforms like Hugging Face, with datasets like [m-a-p/COIG-CQIA](https://huggingface.co/datasets/m-a-p/COIG-CQIA) readily available.

- Additionally, GitHub hosts fine-tuning frameworks, such as [hiyouga/LLaMA-Factory](https://github.com/hiyouga/LLaMA-Factory), which integrate pre-made datasets.

- <strong>What is the GPU memory requirement for fine-tuning Yi-34B FP16?</strong>

<br>

The GPU memory needed for fine-tuning Yi-34B in FP16 depends on the fine-tuning method. For full-parameter fine-tuning, you'll need 8 GPUs with 80 GB each; more economical methods such as LoRA require less. For more details, check out [hiyouga/LLaMA-Factory](https://github.com/hiyouga/LLaMA-Factory). Also, consider using BF16 instead of FP16 for fine-tuning to optimize performance.

- <strong>Are there any third-party platforms that support chat functionality for the Yi-34b-200k model?</strong>

<br>

If you're looking for third-party chat platforms, options include [fireworks.ai](https://fireworks.ai/login?callbackURL=https://fireworks.ai/models/fireworks/yi-34b-chat).
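As a rough plausibility check on the 8 x 80 GB figure given above for full-parameter fine-tuning of Yi-34B, the sketch below applies a common rule of thumb for mixed-precision training with Adam: about 16 bytes per parameter (2 for half-precision weights, 2 for gradients, 12 for FP32 master weights and the two optimizer moments). The rule of thumb, the nominal 34-billion parameter count, and the function name are assumptions, not figures from the Yi authors, and activations are not included.

```python
def full_finetune_state_gb(params_billion, bytes_per_param=16):
    """Estimate weight, gradient, and optimizer state memory in decimal GB.

    Assumes mixed-precision Adam at 16 bytes per parameter; activations
    and framework overhead come on top of this.
    """
    return params_billion * bytes_per_param


needed_gb = full_finetune_state_gb(34)   # model states for a 34B model
available_gb = 8 * 80                    # eight GPUs with 80 GB each
headroom_gb = available_gb - needed_gb   # left for activations and overhead
```

Under these assumptions the model states come to 544 GB of the 640 GB available, which is consistent with needing all eight GPUs once activations are added.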

</details>

### Learning hub

<details>

<summary> If you want to learn Yi, you can find a wealth of helpful educational resources here. ⬇️</summary>

<br>

Welcome to the Yi learning hub!

Whether you're a seasoned developer or a newcomer, you can find a wealth of helpful educational resources to enhance your understanding and skills with Yi models, including insightful blog posts, comprehensive video tutorials, hands-on guides, and more.

The content you find here has been generously contributed by knowledgeable Yi experts and passionate enthusiasts. We extend our heartfelt gratitude for your invaluable contributions!

At the same time, we also warmly invite you to join our collaborative effort by contributing to Yi. If you have already made contributions to Yi, please don't hesitate to showcase your remarkable work in the table below.

With all these resources at your fingertips, you're ready to start your exciting journey with Yi. Happy learning! 🥳

#### Tutorials

##### Blog tutorials

| Deliverable | Date | Author |

| ------------------------------------------------------------ | ---------- | ------------------------------------------------------------ |

| [Building the simplest RAG application with Dify, Meilisearch, and 01.AI models (Part 3): AI movie recommendations](https://mp.weixin.qq.com/s/Ri2ap9_5EMzdfiBhSSL_MQ) | 2024-05-20 | [苏洋](https://github.com/soulteary) |

| [Running the Yi-34B-Chat-int4 model on an A40 GPU on an AutoDL server, accelerated with vLLM: 42 GB of VRAM, 18 words/s](https://blog.csdn.net/freewebsys/article/details/134698597?ops_request_misc=%7B%22request%5Fid%22%3A%22171636168816800227489911%22%2C%22scm%22%3A%2220140713.130102334.pc%5Fblog.%22%7D&request_id=171636168816800227489911&biz_id=0&utm_medium=distribute.pc_search_result.none-task-blog-2~blog~first_rank_ecpm_v1~times_rank-17-134698597-null-null.nonecase&utm_term=Yi大模型&spm=1018.2226.3001.4450) | 2024-05-20 | [fly-iot](https://gitee.com/fly-iot) |

| [Yi-VL best practices](https://modelscope.cn/docs/yi-vl最佳实践) | 2024-05-20 | [ModelScope](https://github.com/modelscope) |

| [Run 01.AI's newly released Yi-1.5-9B-Chat model with one click](https://mp.weixin.qq.com/s/ntMs2G_XdWeM3I6RUOBJrA) | 2024-05-13 | [Second State](https://github.com/second-state) |

| [01.AI open-sources the Yi-1.5 series of large models](https://mp.weixin.qq.com/s/d-ogq4hcFbsuL348ExJxpA) | 2024-05-13 | [刘聪](https://github.com/liucongg) |

| [01.AI releases and open-sources the Yi-1.5 model series in 34B, 9B, and 6B sizes: ModelScope community best-practice tutorial for inference and fine-tuning](https://mp.weixin.qq.com/s/3wD-0dCgXB646r720o8JAg) | 2024-05-13 | [ModelScope](https://github.com/modelscope) |

| [A simple test of local Yi-34B deployment](https://blog.csdn.net/arkohut/article/details/135331469?ops_request_misc=%7B%22request%5Fid%22%3A%22171636390616800185813639%22%2C%22scm%22%3A%2220140713.130102334.pc%5Fblog.%22%7D&request_id=171636390616800185813639&biz_id=0&utm_medium=distribute.pc_search_result.none-task-blog-2~blog~first_rank_ecpm_v1~times_rank-10-135331469-null-null.nonecase&utm_term=Yi大模型&spm=1018.2226.3001.4450) | 2024-05-13 | [漆妮妮](https://space.bilibili.com/1262370256) |