Lynx 2B (micro)

Model Details

Model Description

This is the first release in a series of Swedish large language models we call "Lynx". Micro is a small model (2 billion parameters), but it punches well above its weight!

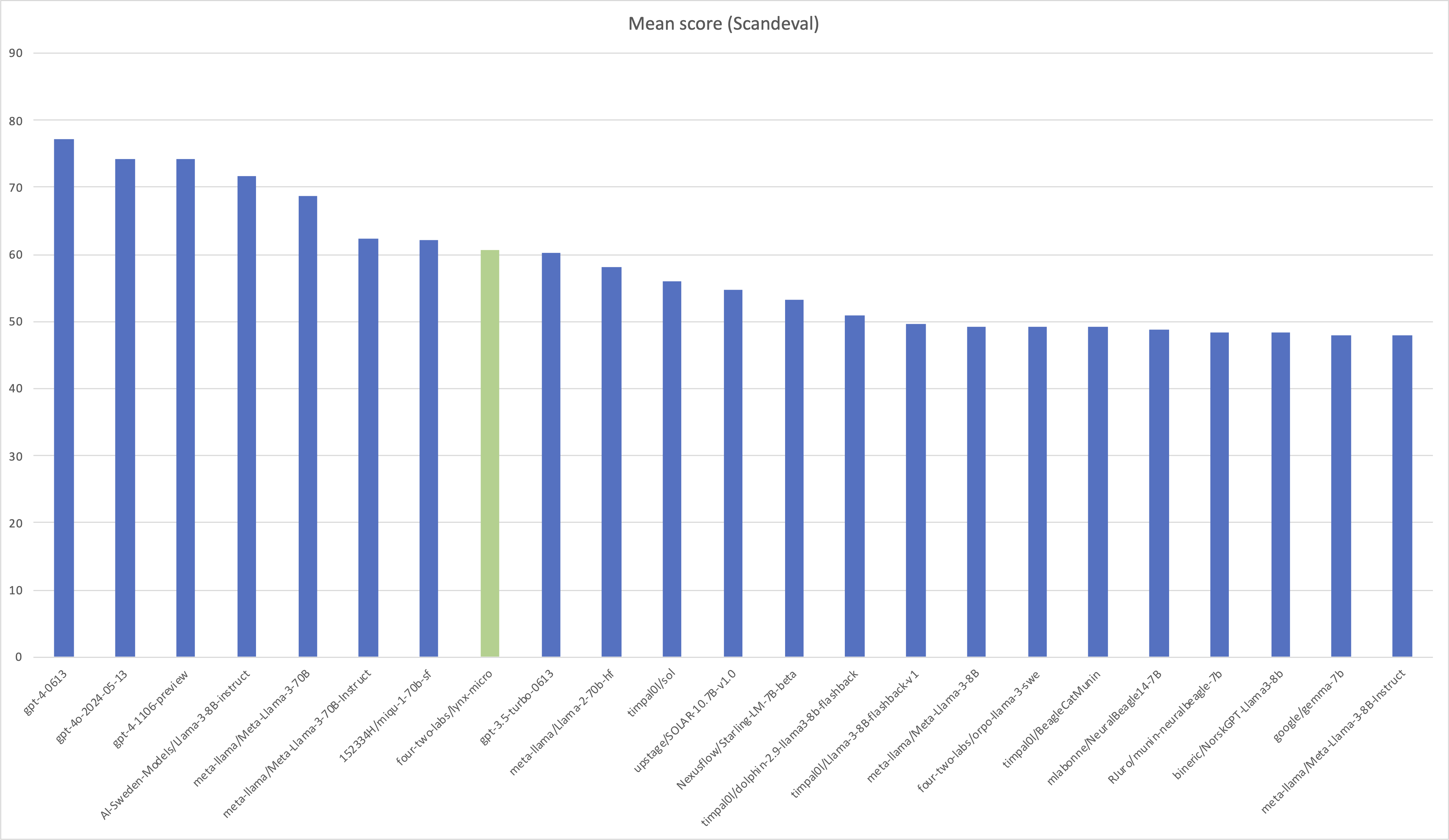

Lynx micro is a fine-tune of Google DeepMind's Gemma 2B and scores just below GPT-3.5 Turbo on ScandEval. In fact, the only non-OpenAI model currently topping the Swedish NLG board on ScandEval is a fine-tune of Llama-3 by AI Sweden, based on our data recipe.

We believe that this is a really capable model (for its size), but keep in mind that it is still a small model and hasn't memorized as much as larger models tend to do.

- Funded, developed and shared by: 42 Labs

- Model type: Auto-regressive transformer

- Language(s) (NLP): Swedish and English

- License: Gemma terms of use

- Finetuned from model: Gemma 2B, 1.1 instruct

How to Get Started with the Model

```python
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer, TextStreamer, pipeline

model_name = 'four-two-labs/lynx-micro'

tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    device_map='cuda',
    torch_dtype=torch.bfloat16,
    attn_implementation='flash_attention_2',  # Remove if flash attention isn't available
)

# The streamer prints tokens to stdout as they are generated.
pipe = pipeline(
    'text-generation',
    model=model,
    tokenizer=tokenizer,
    streamer=TextStreamer(tokenizer=tokenizer),
)

# English news excerpt shared by the translation and summarization prompts.
article = (
    'Hayashi, the Japanese government spokesperson, said Monday that Tokyo is answering the Chinese presence around the islands with vessels of its own.\n\n'
    '“We ensure a comprehensive security system for territorial waters by deploying Coast Guard patrol vessels that are consistently superior to other party’s capacity,” Hayashi said.\n\n'
    'Any Japanese-Chinese incident in the Senkakus raises the risk of a wider conflict, analysts note, due to Japan’s mutual defense treaty with the United States.\n\n'
    'Washington has made clear on numerous occasions that it considers the Senkakus to be covered by the mutual defense pact.'
)

# Use one prompt at a time; the alternatives are left commented out.
messages = [
    # "Solve the equation 2x^2-5 = 9"
    #{'role': 'user', 'content': 'Lös ekvationen 2x^2-5 = 9'},
    # "What is wrong with this sentence?" (the Swedish word order is deliberately scrambled)
    #{'role': 'user', 'content': 'Vad är fel med denna mening: "Hej! Jag idag bra mår."'},
    # "Translate to Swedish: <article>"
    #{'role': 'user', 'content': 'Översätt till svenska: ' + article},
    # "What is the text about? <article>"
    #{'role': 'user', 'content': 'Vad handlar texten om?\n\n' + article},
    # "Write a sci-fi short story that takes place over millennia on a planet orbiting a binary star system."
    #{'role': 'user', 'content': 'Skriv en sci-fi novell som utspelar sig över millenium på en planet runt ett binärt stjärnsystem.'},
    # "How many helicopters can a human eat in one sitting?"
    {'role': 'user', 'content': 'Hur många helikoptrar kan en människa äta på en gång?'},
]

r = pipe(
    messages,
    max_length=4096,
    do_sample=False,
    # Stop at the end of the model's turn or at the regular EOS token.
    eos_token_id=[tokenizer.convert_tokens_to_ids('<end_of_turn>'), tokenizer.eos_token_id],
)
```
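The `eos_token_id` list includes `<end_of_turn>` because Gemma-style chat prompts close every turn with that marker. As a rough illustration of the text the pipeline hands to the model, the sketch below builds such a prompt by hand. It assumes Lynx micro keeps the stock Gemma turn format, which this card does not state, and `format_gemma_prompt` is a hypothetical helper written for illustration only.

```python
from typing import Dict, List


def format_gemma_prompt(messages: List[Dict[str, str]]) -> str:
    """Render chat messages in the stock Gemma turn format (assumed, not confirmed for Lynx)."""
    parts = ['<bos>']
    for message in messages:
        # Gemma's template names the assistant role "model".
        role = 'model' if message['role'] == 'assistant' else message['role']
        parts.append(f"<start_of_turn>{role}\n{message['content'].strip()}<end_of_turn>\n")
    # Open a model turn so that generation continues as the assistant.
    parts.append('<start_of_turn>model\n')
    return ''.join(parts)


prompt = format_gemma_prompt([
    {'role': 'user', 'content': 'Hur många helikoptrar kan en människa äta på en gång?'},
])
print(prompt)
```

In real use, `tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)` is the safer choice, since it reads the template shipped with the model instead of a hand-written copy.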

Training Details

Training Data

The model has been trained on a proprietary dataset of ~1.35M examples consisting of:

- High-quality Swedish instruct data

  - Single turn

  - Multi-turn

- High-quality Swedish <-> English translations

Training Procedure

For training we used Hugging Face Accelerate and TRL.

Preprocessing

For efficiency, we packed all the examples into 8K context windows, reducing the number of examples to ~12% of the original count.
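To make the packing step concrete, here is a minimal sketch of a generic concatenate-and-chunk scheme. The card does not describe the exact procedure, so `pack_examples`, the toy token IDs, the separator ID and the tiny window size are all illustrative assumptions, not the actual training code.

```python
from typing import Iterable, List


def pack_examples(tokenized: Iterable[List[int]], window: int, eos_id: int) -> List[List[int]]:
    """Concatenate token lists, each followed by eos_id, and cut the stream into fixed-size windows."""
    buffer: List[int] = []
    packed: List[List[int]] = []
    for tokens in tokenized:
        buffer.extend(tokens)
        buffer.append(eos_id)  # separator marking the end of one example
        while len(buffer) >= window:
            packed.append(buffer[:window])
            buffer = buffer[window:]
    if buffer:
        packed.append(buffer)  # final window, possibly shorter than `window`
    return packed


# Toy data: four short "examples" packed into windows of 8 tokens (8K in the real setup).
examples = [[1, 2, 3], [4, 5], [6, 7, 8, 9], [10]]
packed = pack_examples(examples, window=8, eos_id=0)
print(len(examples), '->', len(packed), 'sequences')

# Back-of-the-envelope count from the figures in this card (~1.35M examples, ~12%).
approx_packed_sequences = round(1_350_000 * 0.12)
print(approx_packed_sequences)
```

Note that this scheme can split an example across two windows. Packing variants that keep examples whole trade a little padding for cleaner boundaries.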

Training Hyperparameters

- Training regime:

[More Information Needed]

Evaluation

The model has been evaluated on the Swedish subset of ScandEval.

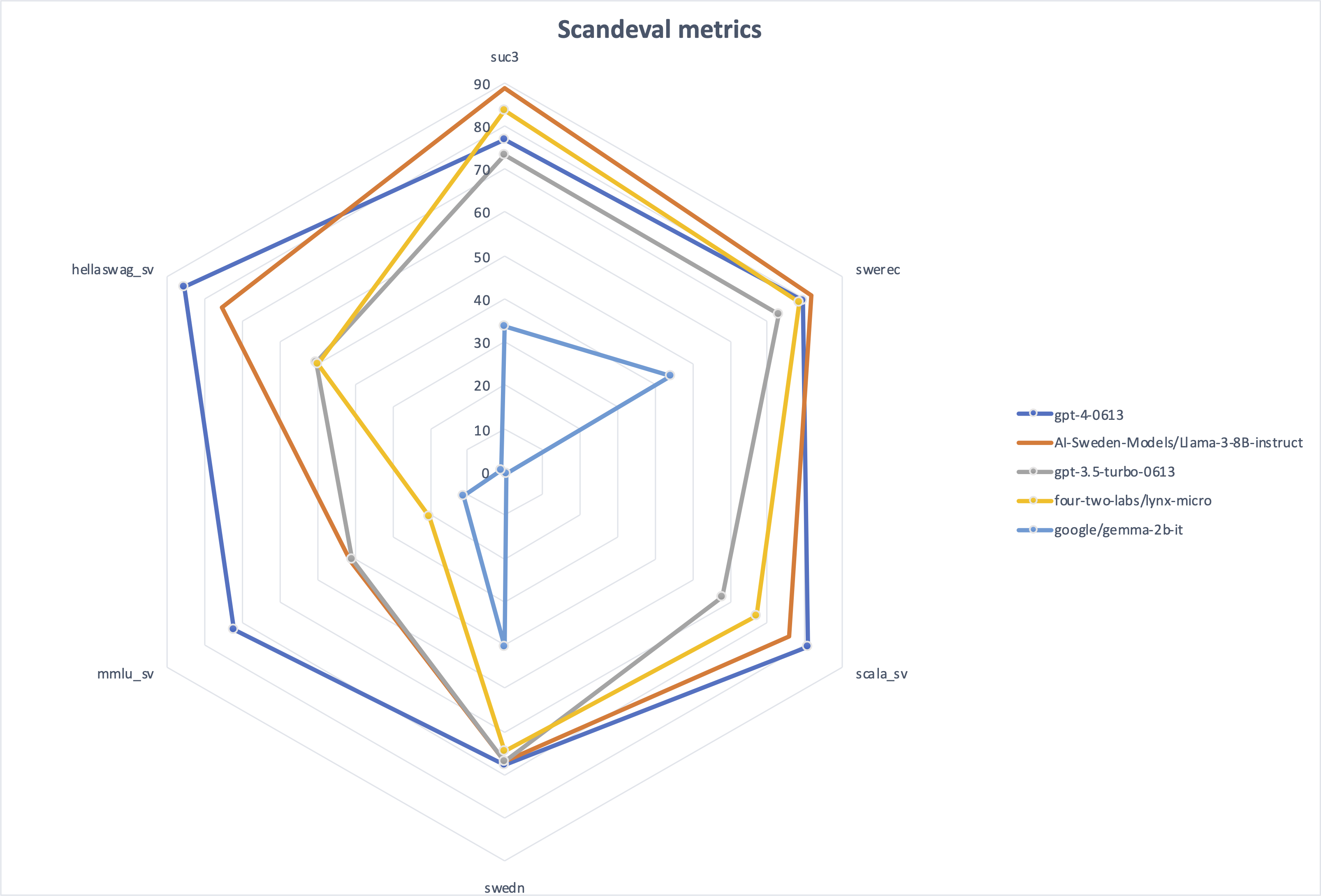

The results for the individual metrics, compared with other top-scoring models.

The mean score of all metrics compared to other models in the Swedish NLG category.

Environmental Impact

- Hardware Type: 8xH100

- Hours used: ~96 GPU hours

- Cloud Provider: runpod.io

- Compute Region: Canada

- Carbon Emitted: Minimal
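For a sense of scale, the figures above can be turned into a rough wall-clock and energy estimate. This is a sketch under stated assumptions: it treats all 8 GPUs as busy for the whole run and uses 700 W per GPU (the maximum board power of an H100 SXM) as a stand-in, since the card reports neither the GPU variant nor the measured power draw. It covers GPU energy only and makes no claim about the resulting emissions.

```python
NUM_GPUS = 8                 # from "8xH100"
GPU_HOURS = 96               # from "~96 GPU hours"
ASSUMED_GPU_POWER_KW = 0.7   # assumption: 700 W per GPU; actual draw is not reported

# Wall-clock time if all GPUs ran for the full duration.
wall_clock_hours = GPU_HOURS / NUM_GPUS

# GPU-only energy estimate, ignoring CPUs, cooling and other overhead.
gpu_energy_kwh = GPU_HOURS * ASSUMED_GPU_POWER_KW

print(f'{wall_clock_hours:.0f} h wall-clock, ~{gpu_energy_kwh:.1f} kWh GPU energy')
```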
