license: apache-2.0

tags:

- contrastive learning

- CLAP

- audio classification

- zero-shot classification

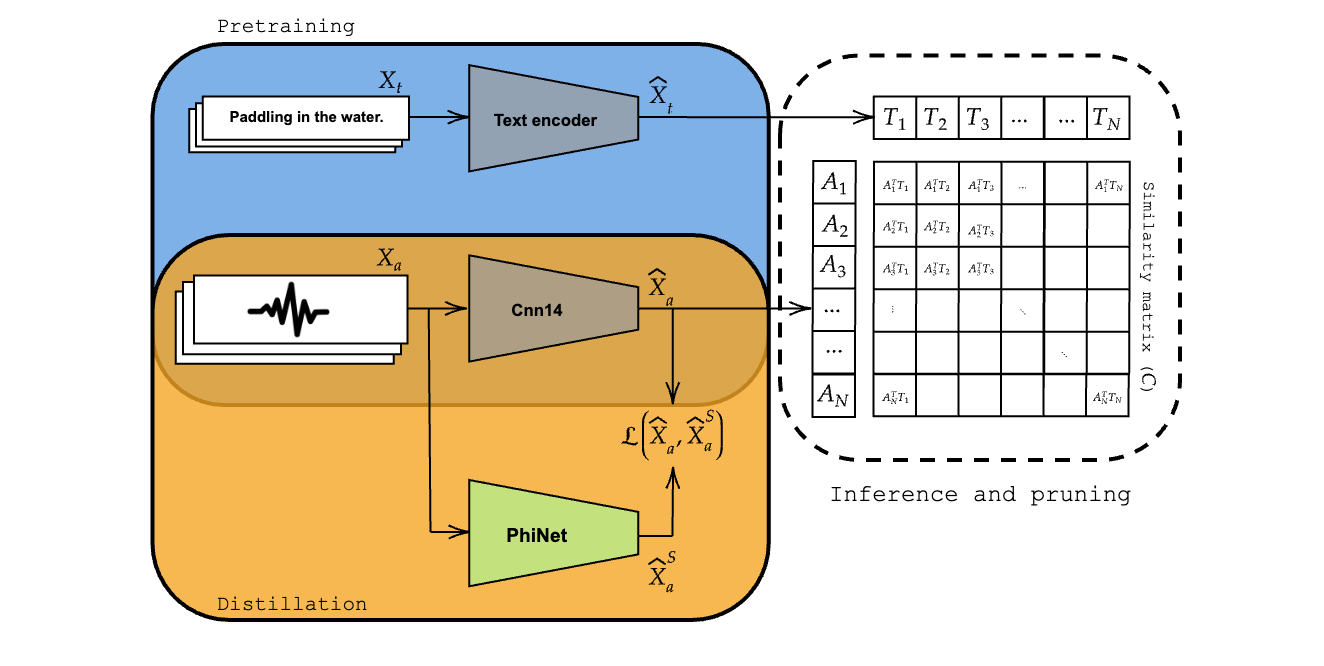

tinyCLAP: Distilling Contrastive Language-Audio Pretrained models

This repository contains the official implementation of tinyCLAP. To access the project website, use this link.

Requirements

To clone the repo and install requirements:

git clone https://github.com/fpaissan/tinyCLAP & cd tinyCLAP

pip install -r extra_requirements.txt

Training

To train the model(s) in the paper, run this command:

MODEL_NAME=phinet_alpha_1.50_beta_0.75_t0_6_N_7

./run_tinyCLAP.sh $MODEL_NAME

Note that MODEL_NAME is formatted such that the script will automatically parse the configuration for the student model.

You can change parameters by changing the model name.

Please note:

- To use the original CLAP encoder in the distillation setting, replace the model name with

Cnn14; - To reproduce the variants of PhiNet from the manuscript, refer to the hyperparameters listed in Table 1.

Evaluation

The command to evaluate the model on each dataset varies slightly among datasets. Below are listed all the necessary commands.

ESC50

python train_clap.py hparams/distill_clap.yaml --experiment_name tinyCLAP_$MODEL_NAME --zs_eval True --esc_folder $PATH_TO_ESC

UrbanSound8K

python train_clap.py hparams/distill_clap.yaml --experiment_name tinyCLAP_$MODEL_NAME --zs_eval True --us8k_folder $PATH_TO_US8K

TUT17

python train_clap.py hparams/distill_clap.yaml --experiment_name tinyCLAP_$MODEL_NAME --zs_eval True --tut17_folder $PATH_TO_TUT17

Pre-trained Models

You can download pretrained models from the tinyCLAP HF.

Note: The checkpoints on HF contain the entire CLAP module (complete of text encoder and teacher encoder).

To run inference using the pretrained models, please use:

python train_clap.py hparams/distill_clap.yaml --pretrained_clap fpaissan/tinyCLAP/$MODEL_NAME.ckpt --zs_eval True --tut17_folder $PATH_TO_TUT17

This command will automatically download the checkpoint, if present in the zoo of pretrained models. Make sure to change the dataset configuration file based on the evaluation.

A list of available models with their computational cost is described in the follwing table:

| audioenc_name_student | Params [M] | ESC-50 | UrbanSound8K | TUT17 |

|---|---|---|---|---|

| MSFT CLAP | 82.8 | 80.7% | 72.1% | 25.2% |

| Cnn14 | 82.8 | 81.3% | 72.3% | 23.7% |

| phinet_alpha_1.50_beta_0.75_t0_6_N_7 | 4.4 | 77.3% | 69.7% | 21.9% |

The original paper's checkpoints are available through this link.

Citing tinyCLAP

@inproceedings{paissan24_interspeech,

title = {tinyCLAP: Distilling Constrastive Language-Audio Pretrained Models},

author = {Francesco Paissan and Elisabetta Farella},

year = {2024},

booktitle = {Interspeech 2024},

pages = {1685--1689},

doi = {10.21437/Interspeech.2024-193},

issn = {2958-1796},

}