license: apache-2.0

language:

- multilingual

library_name: gliner

datasets:

- urchade/pile-mistral-v0.1

- numind/NuNER

- knowledgator/GLINER-multi-task-synthetic-data

pipeline_tag: token-classification

tags:

- NER

- GLiNER

- information extraction

- encoder

- entity recognition

About

GLiNER is a Named Entity Recognition (NER) model capable of identifying any entity type using a bidirectional transformer encoder (BERT-like). It provides a practical alternative to traditional NER models, which are limited to predefined entity types, and to Large Language Models (LLMs), which, despite their flexibility, are too large and costly for resource-constrained scenarios.

This version uses a bi-encoder architecture: the text encoder is ModernBERT-base and the entity label encoder is the sentence transformer BGE-small-en.

This architecture has several advantages over uni-encoder GLiNER:

- An unlimited number of entity types can be recognized at once;

- Inference is faster when entity embeddings are precomputed;

- Better generalization to unseen entities.

Using ModernBERT gives up to 3 times better efficiency than DeBERTa-based models and a context length of up to 8,192 tokens, with comparable results.

However, the bi-encoder architecture has drawbacks, such as the lack of inter-label interactions, which makes it hard for the model to disambiguate semantically similar but contextually different entities.
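The bi-encoder idea described above can be illustrated with a toy sketch. This is not GLiNER's actual scoring code: the two-dimensional vectors are made up, and the dot product followed by a sigmoid is an assumed stand-in for the real matching layer. It only shows that label embeddings are computed independently of the text (so they can be cached) and that each label is scored on its own, without seeing the other labels.

```python
import math


def sigmoid(x):
    """Map a raw similarity to a score between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def score_spans(span_embeddings, label_embeddings, threshold=0.5):
    """Return (span, label, score) triples whose score exceeds the threshold.

    Each (span, label) pair is scored independently, which mirrors the
    absence of inter-label interactions in a bi-encoder.
    """
    matches = []
    for span, span_vec in span_embeddings.items():
        for label, label_vec in label_embeddings.items():
            score = sigmoid(dot(span_vec, label_vec))
            if score > threshold:
                matches.append((span, label, round(score, 3)))
    return matches


# Hypothetical embeddings: in a real model these come from the two encoders.
label_embeddings = {"person": [2.0, -1.0], "team": [-1.0, 2.0]}
span_embeddings = {"Cristiano Ronaldo": [1.5, -0.5], "Al Nassr": [-0.5, 1.5]}

matches = score_spans(span_embeddings, label_embeddings)
for span, label, score in matches:
    print(span, "=>", label, score)
```

Because the label side never depends on the text, adding more labels only adds more vectors to compare against, which is why the label set is not bounded by the text encoder's input length.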

Installation & Usage

Install or update the gliner package:

pip install gliner -U

You need to install the latest version of transformers to use this model:

pip install git+https://github.com/huggingface/transformers.git

Once the gliner package is installed, import the GLiNER class, load this model with GLiNER.from_pretrained, and predict entities with predict_entities.

from gliner import GLiNER

model = GLiNER.from_pretrained("knowledgator/modern-gliner-bi-base-v1.0")

text = """

Cristiano Ronaldo dos Santos Aveiro (Portuguese pronunciation: [kɾiʃˈtjɐnu ʁɔˈnaldu]; born 5 February 1985) is a Portuguese professional footballer who plays as a forward for and captains both Saudi Pro League club Al Nassr and the Portugal national team. Widely regarded as one of the greatest players of all time, Ronaldo has won five Ballon d'Or awards,[note 3] a record three UEFA Men's Player of the Year Awards, and four European Golden Shoes, the most by a European player. He has won 33 trophies in his career, including seven league titles, five UEFA Champions Leagues, the UEFA European Championship and the UEFA Nations League. Ronaldo holds the records for most appearances (183), goals (140) and assists (42) in the Champions League, goals in the European Championship (14), international goals (128) and international appearances (205). He is one of the few players to have made over 1,200 professional career appearances, the most by an outfield player, and has scored over 850 official senior career goals for club and country, making him the top goalscorer of all time.

"""

labels = ["person", "award", "date", "competitions", "teams"]

entities = model.predict_entities(text, labels, threshold=0.3)

for entity in entities:
    print(entity["text"], "=>", entity["label"])

Cristiano Ronaldo dos Santos Aveiro => person

5 February 1985 => date

Al Nassr => teams

Portugal national team => teams

Ballon d'Or => award

UEFA Men's Player of the Year Awards => award

European Golden Shoes => award

UEFA Champions Leagues => competitions

UEFA European Championship => competitions

UEFA Nations League => competitions

Champions League => competitions

European Championship => competitions
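For downstream use it is often handier to group the predictions by label. The sketch below relies only on the "text" and "label" keys used in the example above; the helper name group_by_label is hypothetical, and the sample list is a hand-written stand-in for real model output.

```python
from collections import defaultdict


def group_by_label(entities):
    """Group entity texts by label, dropping repeated mentions."""
    grouped = defaultdict(list)
    for entity in entities:
        texts = grouped[entity["label"]]
        if entity["text"] not in texts:
            texts.append(entity["text"])
    return dict(grouped)


# Hand-written sample in the same shape as the predict_entities output.
sample = [
    {"text": "Cristiano Ronaldo dos Santos Aveiro", "label": "person"},
    {"text": "Al Nassr", "label": "teams"},
    {"text": "Portugal national team", "label": "teams"},
    {"text": "Al Nassr", "label": "teams"},
]

grouped = group_by_label(sample)
for label, texts in grouped.items():
    print(label, "=>", texts)
```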

To use flash attention or increase the sequence length, first install the flash-attn and triton packages:

pip install flash-attn triton

Then load the model with the attention implementation and maximum length set:

model = GLiNER.from_pretrained(
    "knowledgator/modern-gliner-bi-base-v1.0",
    _attn_implementation="flash_attention_2",
    max_len=2048,
).to("cuda:0")
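If the same script has to run on machines with and without flash attention, the keyword arguments for the loading call above can be built conditionally. This is a minimal sketch: the helper name build_load_kwargs is hypothetical, and it only checks whether the flash_attn package is importable, not whether a compatible GPU is present.

```python
import importlib.util


def build_load_kwargs(max_len=2048):
    """Build keyword arguments for GLiNER.from_pretrained.

    Flash attention is requested only when the flash_attn package is installed.
    """
    kwargs = {"max_len": max_len}
    if importlib.util.find_spec("flash_attn") is not None:
        kwargs["_attn_implementation"] = "flash_attention_2"
    return kwargs


load_kwargs = build_load_kwargs()
print(load_kwargs)

# Intended use (requires the gliner package and a model download):
# model = GLiNER.from_pretrained("knowledgator/modern-gliner-bi-base-v1.0", **load_kwargs)
```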

If you have a large number of entity labels and want to pre-embed them, refer to the following code snippet:

labels = ["your entities"]

texts = ["your texts"]

entity_embeddings = model.encode_labels(labels, batch_size = 8)

outputs = model.batch_predict_with_embeds(texts, entity_embeddings, labels)
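The benefit of pre-embedding shows up when many texts are processed against the same label set: the labels are encoded once and reused for every chunk of texts. The sketch below wraps the two calls from the snippet above. The helper name predict_in_chunks and the chunk_size parameter are hypothetical, and it assumes batch_predict_with_embeds returns one result per input text. StubModel is a stand-in so the example runs without downloading a model.

```python
def predict_in_chunks(model, texts, labels, chunk_size=16, label_batch_size=8):
    """Encode the labels once, then predict over the texts chunk by chunk."""
    entity_embeddings = model.encode_labels(labels, batch_size=label_batch_size)
    outputs = []
    for start in range(0, len(texts), chunk_size):
        chunk = texts[start:start + chunk_size]
        # Assumption: one list of entities is returned per text in the chunk.
        outputs.extend(model.batch_predict_with_embeds(chunk, entity_embeddings, labels))
    return outputs


class StubModel:
    """Stand-in with the same two method names, used only for this demo."""

    def __init__(self):
        self.encode_calls = 0

    def encode_labels(self, labels, batch_size=8):
        self.encode_calls += 1
        return [[float(len(label))] for label in labels]

    def batch_predict_with_embeds(self, texts, entity_embeddings, labels):
        return [[] for _ in texts]


stub = StubModel()
results = predict_in_chunks(stub, ["text one", "text two", "text three"], ["person"], chunk_size=2)
print(len(results), "results;", stub.encode_calls, "label encoding pass")
```

With a real GLiNER model in place of the stub, the label encoder would run once regardless of how many chunks of text follow.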

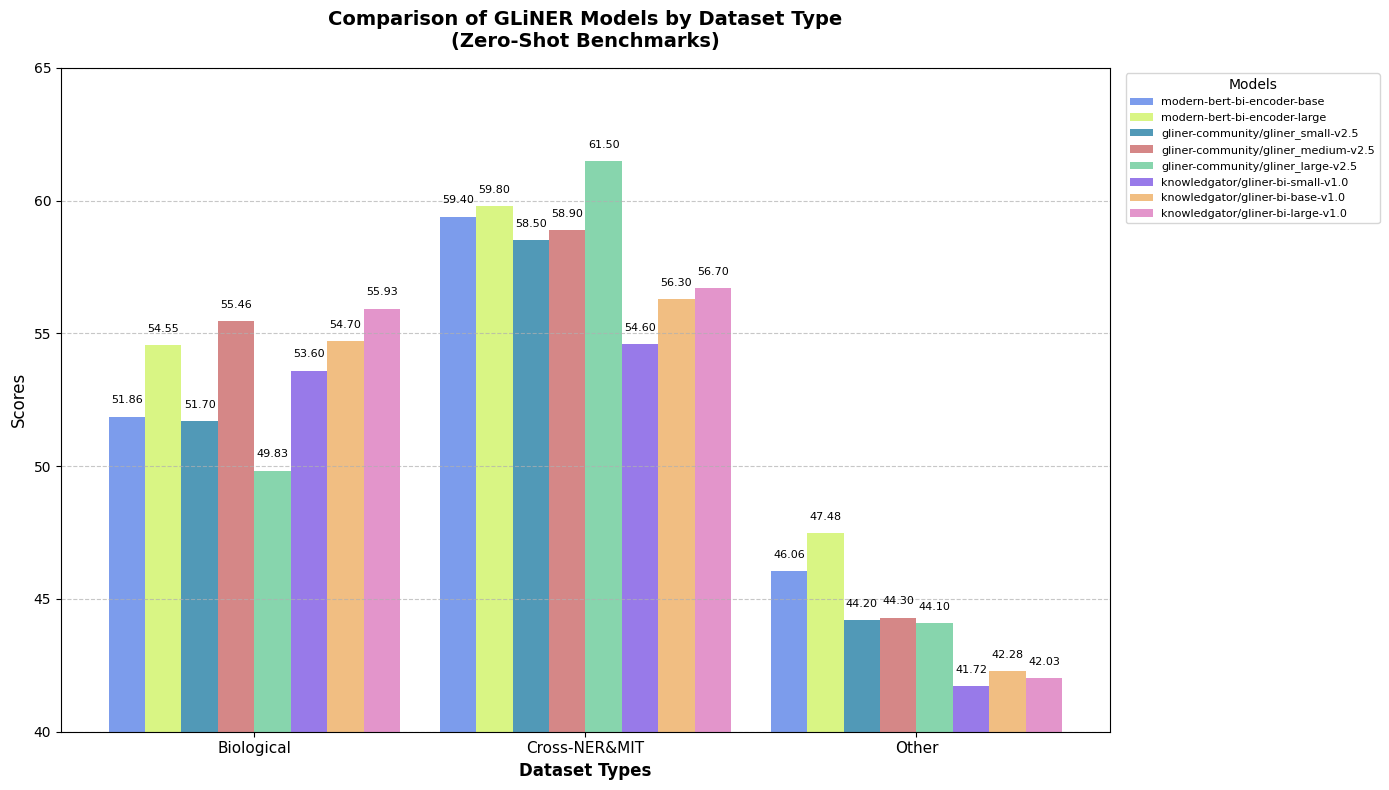

Benchmarks

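The table below shows benchmarking results on various named entity recognition datasets. The Average row covers the 19 datasets listed above it, and the zero-shot average covers the seven CrossNER and MIT datasets: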

| Dataset | Score |

|---|---|

| ACE 2004 | 29.5% |

| ACE 2005 | 25.5% |

| AnatEM | 39.9% |

| Broad Tweet Corpus | 70.9% |

| CoNLL 2003 | 65.8% |

| FabNER | 22.8% |

| FindVehicle | 41.8% |

| GENIA_NER | 46.8% |

| HarveyNER | 15.2% |

| MultiNERD | 70.9% |

| Ontonotes | 34.9% |

| PolyglotNER | 47.6% |

| TweetNER7 | 38.2% |

| WikiANN en | 54.2% |

| WikiNeural | 81.6% |

| bc2gm | 50.7% |

| bc4chemd | 49.6% |

| bc5cdr | 65.0% |

| ncbi | 58.9% |

| Average | 47.9% |

| CrossNER_AI | 57.4% |

| CrossNER_literature | 59.4% |

| CrossNER_music | 71.1% |

| CrossNER_politics | 73.8% |

| CrossNER_science | 65.5% |

| mit-movie | 48.6% |

| mit-restaurant | 39.7% |

| Average (zero-shot benchmark) | 59.4% |

Join Our Discord

Connect with our community on Discord for news, support, and discussion about our models.

Citation

If you use this model in your work, please cite:

@misc{modernbert,

title={Smarter, Better, Faster, Longer: A Modern Bidirectional Encoder for Fast, Memory Efficient, and Long Context Finetuning and Inference},

author={Benjamin Warner and Antoine Chaffin and Benjamin Clavié and Orion Weller and Oskar Hallström and Said Taghadouini and Alexis Gallagher and Raja Biswas and Faisal Ladhak and Tom Aarsen and Nathan Cooper and Griffin Adams and Jeremy Howard and Iacopo Poli},

year={2024},

eprint={2412.13663},

archivePrefix={arXiv},

primaryClass={cs.CL},

url={https://arxiv.org/abs/2412.13663},

}

@misc{zaratiana2023gliner,

title={GLiNER: Generalist Model for Named Entity Recognition using Bidirectional Transformer},

author={Urchade Zaratiana and Nadi Tomeh and Pierre Holat and Thierry Charnois},

year={2023},

eprint={2311.08526},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

@misc{stepanov2024gliner,

title={GLiNER multi-task: Generalist Lightweight Model for Various Information Extraction Tasks},

author={Ihor Stepanov and Mykhailo Shtopko},

year={2024},

eprint={2406.12925},

archivePrefix={arXiv},

primaryClass={cs.LG}

}