---
license: apache-2.0
base_model:
- HuggingFaceTB/SmolLM3-3B
pipeline_tag: text-generation
library_name: optimum-executorch
tags:
- executorch
- transformers
- optimum-executorch
- smollm
---

[HuggingFaceTB/SmolLM3-3B](https://huggingface.co/HuggingFaceTB/SmolLM3-3B) is quantized using [torchao](https://huggingface.co/docs/transformers/main/en/quantization/torchao) with 8-bit embeddings and 8-bit dynamic activations with 4-bit weights for linear layers (`8da4w`). It is then lowered to [ExecuTorch](https://github.com/pytorch/executorch) with several optimizations (custom SDPA, custom KV cache, and parallel prefill) to achieve high performance on the CPU backend, making it well-suited for mobile deployment.

We provide the [.pte file](https://huggingface.co/pytorch/SmolLM3-3B-8da4w/blob/main/smollm3-3b-8da4w.pte) for direct use in ExecuTorch. *(The provided pte file is exported with the default max_seq_length/max_context_length of 2k.)*

# Running in a mobile app

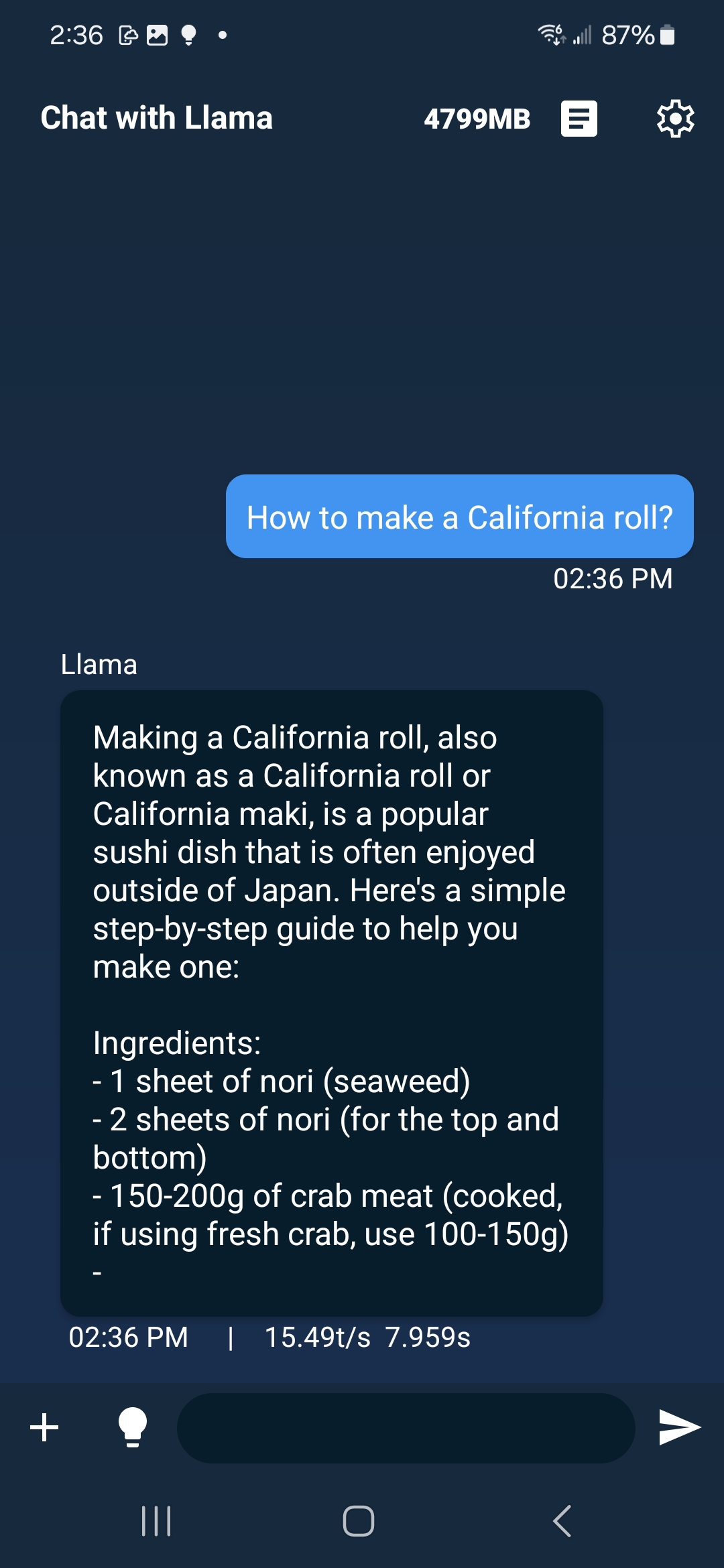

The [.pte file](https://huggingface.co/pytorch/SmolLM3-3B-8da4w/blob/main/smollm3-3b-8da4w.pte) can be run with ExecuTorch on a mobile phone. See the instructions for [iOS](https://pytorch.org/executorch/main/llm/llama-demo-ios.html) and [Android](https://docs.pytorch.org/executorch/main/llm/llama-demo-android.html). On a Samsung Galaxy S22, the model runs at 15.5 tokens/s.

# Running with ExecuTorch’s sample runner

You can also run this model using ExecuTorch’s sample runner by following [Steps 3 and 4 of these instructions](https://github.com/pytorch/executorch/blob/main/examples/models/llama/README.md#step-3-run-on-your-computer-to-validate).

# Export Recipe

You can re-create the `.pte` file from eager source using this export recipe.

First, install `optimum-executorch` by following these [instructions](https://github.com/huggingface/optimum-executorch?tab=readme-ov-file#-quick-installation). Then use `optimum-cli` to export the model to ExecuTorch:

```Shell
optimum-cli export executorch \
  --model HuggingFaceTB/SmolLM3-3B \
  --task text-generation \
  --recipe xnnpack \
  --use_custom_sdpa \
  --use_custom_kv_cache \
  --qlinear 8da4w \
  --qembedding 8w \
  --output_dir ./smollm3_3b
```

# Quantization Recipe

First, install the required packages:

```Shell
pip install git+https://github.com/huggingface/transformers@main
pip install torchao
```

## Untie Embedding Weights

We want to quantize the embedding and lm_head differently. Since those layers are tied, we first need to untie the model:

```Py
from transformers import (
    AutoModelForCausalLM,
    AutoProcessor,
    AutoTokenizer,
)
import torch

model_id = "HuggingFaceTB/SmolLM3-3B"
untied_model = AutoModelForCausalLM.from_pretrained(model_id, torch_dtype="auto", device_map="auto")
tokenizer = AutoTokenizer.from_pretrained(model_id)

print(untied_model)

from transformers.modeling_utils import find_tied_parameters

# Before untying: embed_tokens and lm_head should be reported as tied
print("tied weights:", find_tied_parameters(untied_model))

# Turn off weight tying in the config so it is not re-applied on reload
if getattr(untied_model.config.get_text_config(decoder=True), "tie_word_embeddings"):
    setattr(untied_model.config.get_text_config(decoder=True), "tie_word_embeddings", False)

# Give lm_head its own copy of the weight instead of sharing the embedding tensor
untied_model._tied_weights_keys = []
untied_model.lm_head.weight = torch.nn.Parameter(untied_model.lm_head.weight.clone())

# After untying: no tied weights should be reported
print("tied weights:", find_tied_parameters(untied_model))

USER_ID = "YOUR_USER_ID"
MODEL_NAME = model_id.split("/")[-1]
save_to = f"{USER_ID}/{MODEL_NAME}-untied-weights"

untied_model.push_to_hub(save_to)
tokenizer.push_to_hub(save_to)

# or save locally
save_to_local_path = f"{MODEL_NAME}-untied-weights"
untied_model.save_pretrained(save_to_local_path)
tokenizer.save_pretrained(save_to_local_path)
```

Note: to `push_to_hub` you need to run

```Shell
pip install -U "huggingface_hub[cli]"
huggingface-cli login
```

and use a token with write access, from https://huggingface.co/settings/tokens
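
As a framework-free illustration of what untying means, the sketch below models tied weights as two attributes pointing at the same object and untying as replacing one of them with a copy. `TinyLM`, `is_tied`, and `untie` are hypothetical names used only here; the actual recipe operates on `torch.nn.Parameter` objects.

```python
# Framework-free sketch of weight tying; TinyLM and its fields are hypothetical.
import copy


class TinyLM:
    """Toy stand-in for a language model with tied input/output weights."""

    def __init__(self):
        weight = [[0.1, 0.2], [0.3, 0.4]]
        self.embed_tokens = weight  # input embedding table
        self.lm_head = weight       # tied: the very same object


def is_tied(model):
    # Tied parameters share storage, so identity (not equality) is the test.
    return model.embed_tokens is model.lm_head


def untie(model):
    # Give lm_head its own copy, analogous to `weight.clone()` in the recipe.
    model.lm_head = copy.deepcopy(model.lm_head)


model = TinyLM()
tied_before = is_tied(model)
untie(model)
tied_after = is_tied(model)

# After untying, changing one table no longer affects the other.
model.embed_tokens[0][0] = 9.9
print(tied_before, tied_after, model.lm_head[0][0])
```

Once the two tables are separate objects, each can be given its own quantization scheme without affecting the other.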

## Quantization

We used the following code to produce the quantized model:

```Py
from transformers import (
    AutoModelForCausalLM,
    AutoProcessor,
    AutoTokenizer,
    TorchAoConfig,
)
from torchao.quantization.quant_api import (
    IntxWeightOnlyConfig,
    Int8DynamicActivationIntxWeightConfig,
    ModuleFqnToConfig,
    quantize_,
)
from torchao.quantization.granularity import PerGroup, PerAxis
import torch

# we start from the model with untied weights
model_id = "HuggingFaceTB/SmolLM3-3B"
USER_ID = "YOUR_USER_ID"
MODEL_NAME = model_id.split("/")[-1]
untied_model_id = f"{USER_ID}/{MODEL_NAME}-untied-weights"
untied_model_local_path = f"{MODEL_NAME}-untied-weights"

embedding_config = IntxWeightOnlyConfig(
    weight_dtype=torch.int8,
    granularity=PerAxis(0),
)
linear_config = Int8DynamicActivationIntxWeightConfig(
    weight_dtype=torch.int4,
    weight_granularity=PerGroup(32),
    weight_scale_dtype=torch.bfloat16,
)
quant_config = ModuleFqnToConfig({"_default": linear_config, "model.embed_tokens": embedding_config})
quantization_config = TorchAoConfig(quant_type=quant_config, include_embedding=True, untie_embedding_weights=True, modules_to_not_convert=[])

# either use `untied_model_id` or `untied_model_local_path`
quantized_model = AutoModelForCausalLM.from_pretrained(untied_model_id, torch_dtype=torch.float32, device_map="auto", quantization_config=quantization_config)
tokenizer = AutoTokenizer.from_pretrained(model_id)

# Push to hub
MODEL_NAME = model_id.split("/")[-1]
save_to = f"{USER_ID}/{MODEL_NAME}-8da4w"
quantized_model.push_to_hub(save_to, safe_serialization=False)
tokenizer.push_to_hub(save_to)

# Manual testing
prompt = "Hey, are you conscious? Can you talk to me?"
messages = [
    {
        "role": "system",
        "content": "",
    },
    {"role": "user", "content": prompt},
]
templated_prompt = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True,
)
print("Prompt:", prompt)
print("Templated prompt:", templated_prompt)
inputs = tokenizer(
    templated_prompt,
    return_tensors="pt",
).to("cuda")
generated_ids = quantized_model.generate(**inputs, max_new_tokens=128)
output_text = tokenizer.batch_decode(
    generated_ids, skip_special_tokens=True, clean_up_tokenization_spaces=False
)
print("Response:", output_text[0][len(prompt):])
```

The response from the manual testing is:

```
Okay, the user is asking if I can talk to them. First, I need to clarify that I can't communicate like a human because I don't have consciousness or emotions. I'm an AI model created by Hugging Face.
```
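
To make the `weight_dtype=torch.int4` and `PerGroup(32)` settings in the quantization recipe more concrete, here is a minimal plain-Python sketch of symmetric per-group 4-bit quantization. It is an illustration under simplifying assumptions (the scale is chosen as `max_abs / 7`, and scales are kept in full precision); torchao's actual scale selection, rounding, and packing may differ, and the helper names are hypothetical.

```python
# Illustrative only: symmetric per-group int4 quantization in plain Python.
# torchao's real kernels and rounding details may differ.

GROUP_SIZE = 32      # matches PerGroup(32) in the recipe
QMIN, QMAX = -8, 7   # signed 4-bit integer range


def quantize_group(values):
    """Return (int4 codes, scale) for one group of weights."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / QMAX if max_abs > 0 else 1.0
    codes = [max(QMIN, min(QMAX, round(v / scale))) for v in values]
    return codes, scale


def quantize_row(row, group_size=GROUP_SIZE):
    """Quantize one weight row group by group, one scale per group."""
    groups = [row[i:i + group_size] for i in range(0, len(row), group_size)]
    return [quantize_group(g) for g in groups]


def dequantize_row(quantized):
    """Map int4 codes back to approximate float weights."""
    return [c * scale for codes, scale in quantized for c in codes]


# A hypothetical 64-element weight row: two groups with very different ranges.
row = [0.01 * (i - 16) for i in range(32)] + [1.0 * (i - 16) for i in range(32)]
quantized = quantize_row(row)
restored = dequantize_row(quantized)
max_error = max(abs(a - b) for a, b in zip(row, restored))
print(len(quantized), [scale for _, scale in quantized], max_error)
```

Because each group of 32 weights gets its own scale, the small-magnitude group is not forced onto the coarse grid needed by the large-magnitude group, which is the motivation for group-wise rather than per-tensor scales.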

# Model Quality

| Benchmark                        | SmolLM3-3B     | SmolLM3-3B-8da4w          |
|----------------------------------|----------------|---------------------------|
| **Popular aggregated benchmark** |                |                           |
| mmlu                             | 59.29          | 55.52                     |
| **Reasoning**                    |                |                           |
| hellaswag                        | 56.53          | 54.39                     |
| gpqa_main_zeroshot               | 32.37          | 27.46                     |
| **Multilingual**                 |                |                           |
| mgsm_en_cot_en                   | 66.80          | 40.40                     |
| **Math**                         |                |                           |
| gsm8k                            | 72.71          | 58.08                     |
| leaderboard_math_hard (v3)       | 27.87          | 19.94                     |
| **Overall**                      | 52.60          | 42.63                     |
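
The short script below, using only the numbers from the table above, computes the per-benchmark drop and the fraction of the baseline score retained after quantization. It also recomputes the Overall row on the assumption that it is the unweighted mean of the six benchmark scores, which matches the table to within rounding.

```python
# Scores copied from the Model Quality table: (baseline, 8da4w).
scores = {
    "mmlu": (59.29, 55.52),
    "hellaswag": (56.53, 54.39),
    "gpqa_main_zeroshot": (32.37, 27.46),
    "mgsm_en_cot_en": (66.80, 40.40),
    "gsm8k": (72.71, 58.08),
    "leaderboard_math_hard": (27.87, 19.94),
}


def mean(values):
    values = list(values)
    return sum(values) / len(values)


# Assumption: "Overall" is the unweighted mean across the six benchmarks.
baseline_overall = mean(base for base, _ in scores.values())
quantized_overall = mean(quant for _, quant in scores.values())

for name, (base, quant) in scores.items():
    print(f"{name:24s} drop={base - quant:6.2f}  retained={quant / base:6.1%}")

largest_drop = max(scores, key=lambda name: scores[name][0] - scores[name][1])
print(f"overall: {baseline_overall:.2f} -> {quantized_overall:.2f}")
print("largest drop:", largest_drop)
```

The drop is not uniform: mmlu and hellaswag lose a few points, while mgsm_en_cot_en and gsm8k lose considerably more.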

<details>

<summary> Reproduce Model Quality Results </summary>

We rely on [lm-evaluation-harness](https://github.com/EleutherAI/lm-evaluation-harness) to evaluate the quality of the quantized model.

Install lm-eval from source by following https://github.com/EleutherAI/lm-evaluation-harness#install

## baseline

```Shell
lm_eval --model hf --model_args pretrained=HuggingFaceTB/SmolLM3-3B --tasks mmlu --device cuda:0 --batch_size auto
```

## int8 dynamic activation and int4 weight quantization (8da4w)

```Shell
lm_eval --model hf --model_args pretrained=pytorch/SmolLM3-3B-8da4w --tasks mmlu --device cuda:0 --batch_size auto
```
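
The commands above evaluate only `mmlu`. As a sketch of how the remaining rows of the table might be covered, the script below builds the same `lm_eval` invocation for both models with a comma-separated task list. The task names are taken from the table and are assumed to be valid lm-eval task identifiers; the few-shot settings and other options used for the reported numbers are not stated in this card, so results may not match exactly. `build_lm_eval_command` is a hypothetical helper.

```python
import shlex

# Task names as they appear in the Model Quality table (assumed to be valid
# lm-eval task identifiers).
TASKS = [
    "mmlu",
    "hellaswag",
    "gpqa_main_zeroshot",
    "mgsm_en_cot_en",
    "gsm8k",
    "leaderboard_math_hard",
]


def build_lm_eval_command(model_id, tasks, device="cuda:0"):
    """Build the argument list for one lm_eval run, mirroring the commands above."""
    return [
        "lm_eval",
        "--model", "hf",
        "--model_args", f"pretrained={model_id}",
        "--tasks", ",".join(tasks),
        "--device", device,
        "--batch_size", "auto",
    ]


for model_id in ("HuggingFaceTB/SmolLM3-3B", "pytorch/SmolLM3-3B-8da4w"):
    command = build_lm_eval_command(model_id, TASKS)
    # Print the command; to execute it, pass `command` to subprocess.run(..., check=True).
    print(shlex.join(command))
```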

</details>

# Paper: TorchAO: PyTorch-Native Training-to-Serving Model Optimization

The model's quantization is powered by **TorchAO**, a framework presented in the paper [TorchAO: PyTorch-Native Training-to-Serving Model Optimization](https://huggingface.co/papers/2507.16099).

**Abstract:** We present TorchAO, a PyTorch-native model optimization framework leveraging quantization and sparsity to provide an end-to-end, training-to-serving workflow for AI models. TorchAO supports a variety of popular model optimization techniques, including FP8 quantized training, quantization-aware training (QAT), post-training quantization (PTQ), and 2:4 sparsity, and leverages a novel tensor subclass abstraction to represent a variety of widely-used, backend agnostic low precision data types, including INT4, INT8, FP8, MXFP4, MXFP6, and MXFP8. TorchAO integrates closely with the broader ecosystem at each step of the model optimization pipeline, from pre-training (TorchTitan) to fine-tuning (TorchTune, Axolotl) to serving (HuggingFace, vLLM, SGLang, ExecuTorch), connecting an otherwise fragmented space in a single, unified workflow. TorchAO has enabled recent launches of the quantized Llama 3.2 1B/3B and LlamaGuard3-8B models and is open-source at https://github.com/pytorch/ao.

# Resources

* **Official TorchAO GitHub Repository:** [https://github.com/pytorch/ao](https://github.com/pytorch/ao)

* **TorchAO Documentation:** [https://docs.pytorch.org/ao/stable/index.html](https://docs.pytorch.org/ao/stable/index.html)

# Disclaimer

PyTorch has not performed safety evaluations or red teamed the quantized models. Performance characteristics, outputs, and behaviors may differ from the original models. Users are solely responsible for selecting appropriate use cases, evaluating and mitigating for accuracy, safety, and fairness, ensuring security, and complying with all applicable laws and regulations.

Nothing contained in this Model Card should be interpreted as or deemed a restriction or modification to the licenses the models are released under, including any limitations of liability or disclaimers of warranties provided therein.