---
title: README
emoji: 👁
colorFrom: purple
colorTo: green
sdk: static
pinned: false
---

HuggingFaceTB

This is the home for smol models (SmolLM) and high-quality pre-training datasets. We released:

- FineWeb-Edu: a version of the FineWeb dataset filtered for educational content. Paper available here.

- Cosmopedia: the largest open synthetic dataset, with 25B tokens and 30M samples. It contains synthetic textbooks, blog posts, and stories generated by Mixtral. Blog post available here.

- SmolLM-Corpus: the pre-training corpus of SmolLM, made up of Cosmopedia v0.2, FineWeb-Edu dedup and Python-Edu. Blog post available here.

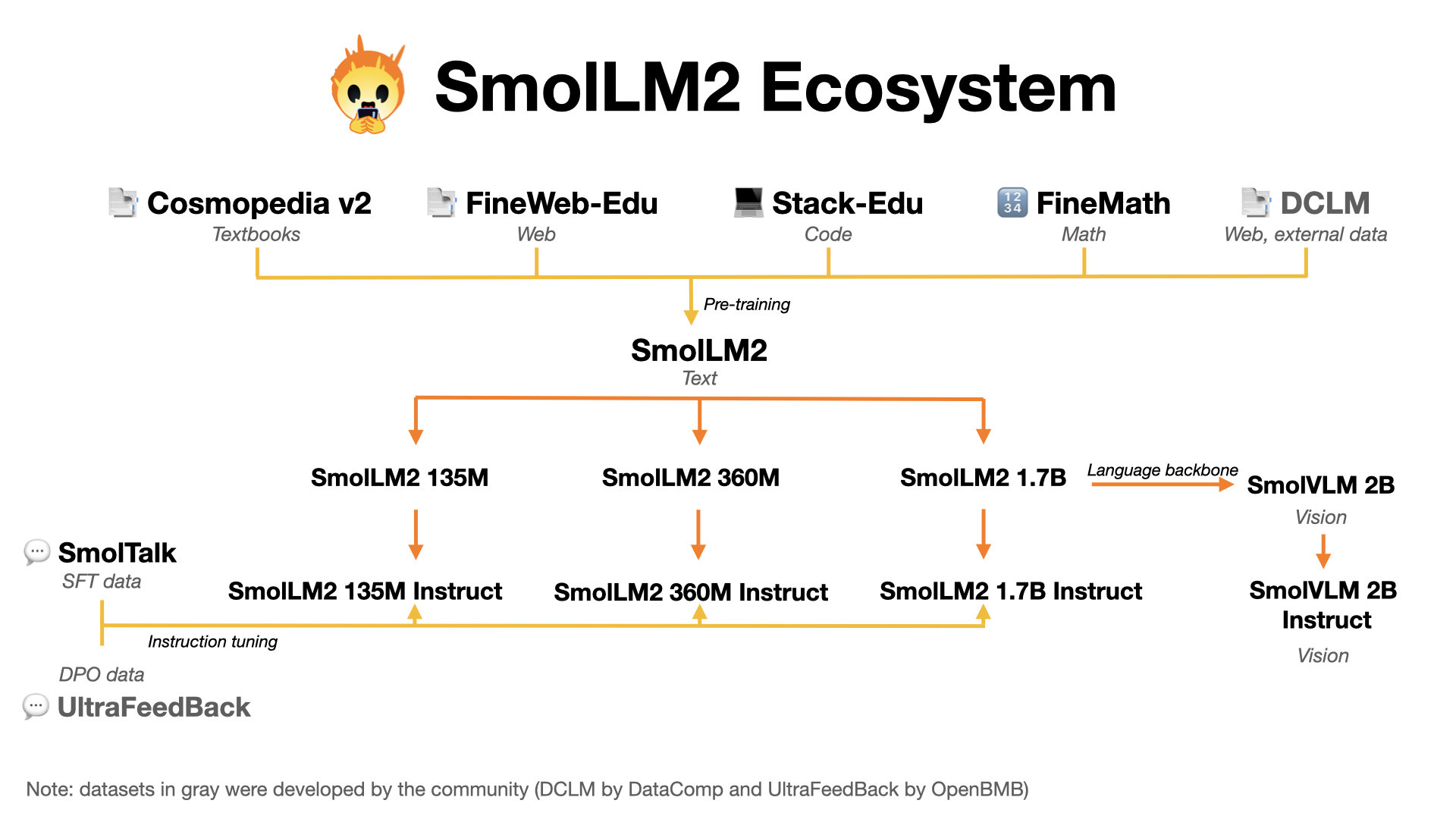

- SmolLM2 models: a series of strong small models in three sizes: 135M, 360M and 1.7B parameters.

- SmolVLM: a 2-billion-parameter Vision Language Model (VLM) built for on-device inference. It uses SmolLM2-1.7B as its language backbone. Blog post available here.

- FineMath: the best public math pre-training dataset, with 50B tokens of mathematical and problem-solving data.

News 🗞️

- FineMath: the best public math pre-training dataset, with 50B tokens of mathematical and problem-solving data: https://huggingface.co/datasets/HuggingFaceTB/finemath
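
For readers who want to look at FineMath without downloading all of it, here is a minimal sketch that streams a few records with the `datasets` library. The repo ID comes from the URL above. The subset name `finemath-4plus` and the helper names `preview` and `stream_finemath` are assumptions made for illustration, so check the dataset card for the subsets actually available.

```python
from itertools import islice

# Repo ID taken from the dataset URL in the news item above.
FINEMATH_REPO = "HuggingFaceTB/finemath"


def preview(stream, n=3):
    """Return the first n items of any iterable as a list."""
    return list(islice(stream, n))


def stream_finemath(subset="finemath-4plus"):
    """Open FineMath in streaming mode (hypothetical helper).

    Assumption: `subset` is a config name listed on the dataset card;
    "finemath-4plus" is used here only as an example value.
    """
    # Imported lazily so `preview` works even without `datasets` installed.
    from datasets import load_dataset

    # streaming=True yields records on demand instead of downloading the full split.
    return load_dataset(FINEMATH_REPO, subset, split="train", streaming=True)
```

With network access and `datasets` installed, `preview(stream_finemath())` should return the first three records as dictionaries. Inspecting their keys is a safer way to find the column names than hard-coding them.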