Spaces:

Runtime error

A newer version of the Gradio SDK is available:

5.9.1

AdaFace-Animate

This folder contains the preliminary implementation of AdaFace-Animate. It is a zero-shot subject-guided animation generator conditioned with human subject images, by combining AnimateDiff, ID-Animator and AdaFace. The ID-Animator provides AnimateDiff with rough subject characteristics, and AdaFace provides refined and more authentic subject facial details.

Please refer to our NeurIPS 2024 submission for more details about AdaFace:

AdaFace: A Versatile Face Encoder for Zero-Shot Diffusion Model Personalization

This pipeline uses 4 pretrained models: Stable Diffusion V1.5, AnimateDiff v3, ID-Animator and AdaFace.

AnimateDiff uses a SD-1.5 type checkpoint, referred to as a "DreamBooth" model. The DreamBooth model we use is an average of three SD-1.5 models named as "SAR": the original Stable Diffusion V1.5, AbsoluteReality V1.8.1, and RealisticVision V4.0. In our experiments, this average model performs better than any of the individual models.

Procedures of Generation

We find that using an initial image helps stablize the animation sequence and improve the quality. When generating each example video, an initial image is first generated by AdaFace with the same prompt as used to generate the video. This image is blended with multiple frames of random noises with weights decreasing with $t$. The multi-frame blended noises are converted to a 1-second animation with AnimateDiff, conditioned by both AdaFace and ID-Animator embeddings.

Gallery

Gallery contains 100 subject videos generated by us. They belong to 10 celebrities, each with 10 different prompts. The (shortened) prompts are: "Armor Suit", "Iron Man Costume", "Superman Costume", "Wielding a Lightsaber", "Walking on the beach", "Cooking", "Dancing", "Playing Guitar", "Reading", and "Running".

Some example videos are shown below. The full set of videos can be found in Gallery.

(Hint: use the horizontal scroll bar at the bottom of the table to view the full table)

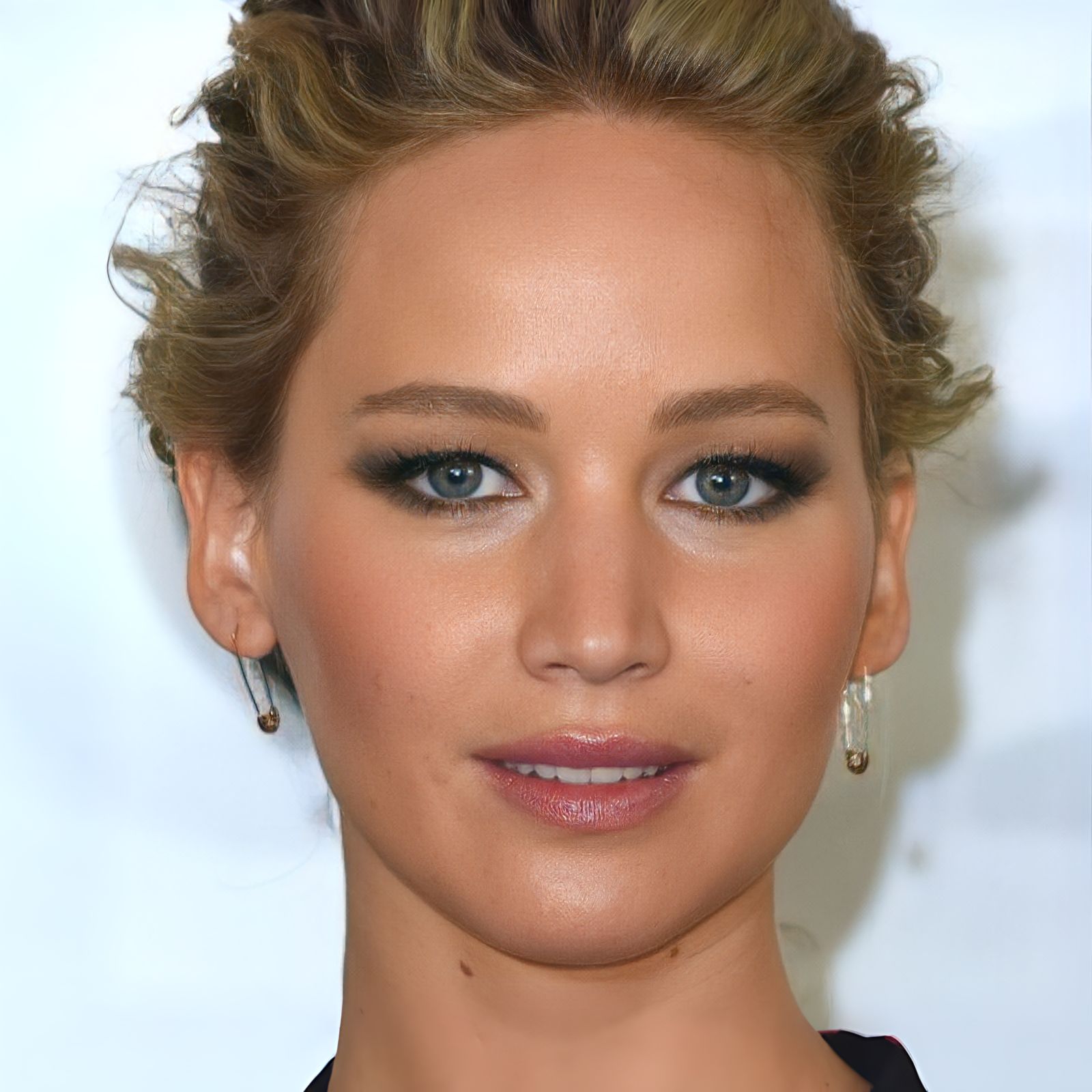

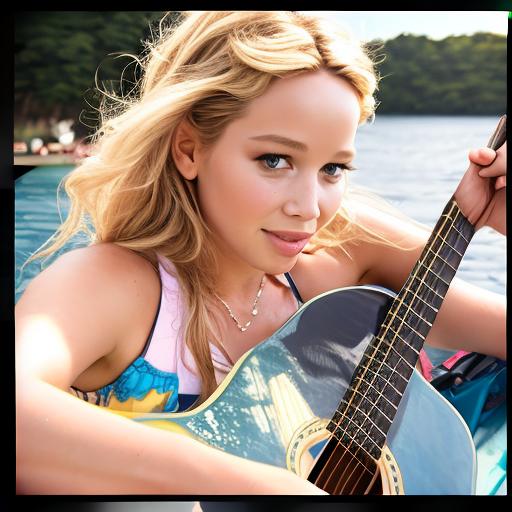

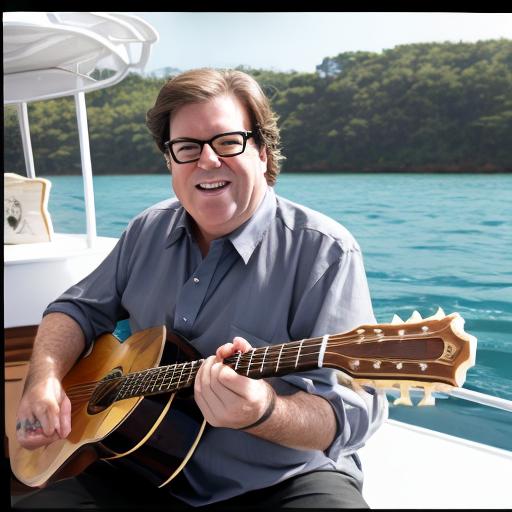

| Input (Celebrities) | Animation 1: Playing Guitar | Animation 2: Cooking | Animation 3: Dancing |

|

|||

|

|||

|

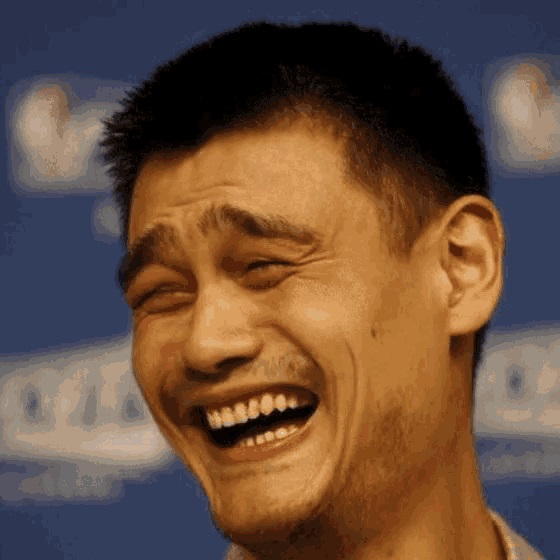

To illustrate the wide range of applications of our method, we animated 8 internet memes. 4 of them are shown in the table below. The full gallery can be found in memes.

| Input (Memes) | Animation | Input | Animation |

| Yao Ming Laugh | Girl Burning House | ||

|

|

||

| Girl with a Pearl Earring | Great Gatsby | ||

|

|

Comparison with ID-Animator, with AdaFace Initial Images

To compare with the baseline method "ID-Animator", for each video, we disable AdaFace, and generate the corresponding video with ID-Animator, using otherwise identical settings: the same subject image(s) and initial image, and the same random seed and prompt. The table below compares some of these videos side-by-side with the AdaFace-Animate videos. The full set of ID-Animator videos can be found in each subject folder in Gallery, named as "* orig.mp4".

NOTE Since ID-Animator videos utilize initial images generated by AdaFace, this gives ID-Animator an advantage over the original ID-Animator.

(Hint: use the horizontal scroll bar at the bottom of the table to view the full table)

| Initial Image: Playing Guitar | ID-Animator: Playing Guitar | AdaFace-Animate: Playing Guitar | Initial Image Dancing | ID-Animator: Dancing | AdaFace-Animate: Dancing |

|

|

||||

|

|

||||

|

|

The table below compares the animated internet memes. The initial image for each video is the meme image itself. For "Yao Ming laughing" and "Great Gatsby", 2~3 extra portrait photos of the subject are included as the subject images to enhance the facial fidelity. For other memes, the subject image is only the meme image. The full set of ID-Animator meme videos can be found in memes, named as "* orig.mp4".

| Input (Memes) | ID-Animator | AdaFace-Animate |

|

||

|

We can see that the subjects in AdaFace-Animate videos have more authentic facial features and better preserve the facial expressions, while the subjects in ID-Animator videos are less authentic and faithful to the original images.

Comparison with ID-Animator, without AdaFace Initial Images

To exclude the effects of AdaFace, we generate a subset of videos with AdaFace-Animate / ID-Animator without initial images. These videos were generated under the same settings as above, except not using initial images. The table below shows a selection of the videos. The complete set of such videos can be found in no-init. It can be seen that without the help of AdaFace initial images, the compositionality, or the overall layout deteriorates on some prompts. In particular, some background objects are suppressed by over-expressed facial features. Moreover, the performance discrepancy between AdaFace-Animate and ID-Animator becomes more pronounced.

(Hint: use the horizontal scroll bar at the bottom of the table to view the full table)

| Input (Celebrities) | ID-Animator: Playing Guitar | AdaFace-Animate: Playing Guitar | ID-Animator: Dancing | AdaFace-Animate: Dancing |

|

||||

|

||||

|

Installation

Manually Download Model Checkpoints

Download Stable Diffusion V1.5 into

animatediff/sd:git clone https://huggingface.co/runwayml/stable-diffusion-v1-5 animatediff/sdDownload AnimateDiff motion module into

models/v3_sd15_mm.ckpt: https://huggingface.co/guoyww/animatediff/blob/main/v3_sd15_mm.ckptDownload Animatediff adapter into

models/v3_adapter_sd_v15.ckpt: https://huggingface.co/guoyww/animatediff/blob/main/v3_sd15_adapter.ckptDownload ID-Animator checkpoint into

models/animator.ckptfrom: https://huggingface.co/spaces/ID-Animator/ID-Animator/blob/main/animator.ckptDownload CLIP Image encoder into

models/image_encoder/from: https://huggingface.co/spaces/ID-Animator/ID-Animator/tree/main/image_encoderDownload AdaFace checkpoint into

models/adaface/from: https://huggingface.co/adaface-neurips/adaface/tree/main/subjects-celebrity2024-05-16T17-22-46_zero3-ada-30000.pt

Prepare the SAR Model

Manually download the three .safetensors models: the original Stable Diffusion V1.5, AbsoluteReality V1.8.1, and RealisticVision V4.0. Save them to models/sar.

Run the following command to generate an average of the three models:

python3 scripts/avg_models.py --input models/sar/absolutereality_v181.safetensors models/sar/realisticVisionV40_v40VAE.safetensors models/sar/v1-5-pruned.safetensors --output models/sar/sar.safetensors

[Optional Improvement]

- You can replace the VAE of the SAR model with the MSE-840000 finetuned VAE for slightly better video details:

python3 scripts/repl_vae.py --base_ckpt models/sar/sar.safetensors --vae_ckpt models/sar/vae-ft-mse-840000-ema-pruned.ckpt --out_ckpt models/sar/sar-vae.safetensors

mv models/sar/sar-vae.safetensors models/sar/sar.safetensors

- You can replace the text encoder of the SAR model with the text encoder of DreamShaper V8 for slightly more authentic facial features:

python3 scripts/repl_textencoder.py --base_ckpt models/sar/sar.safetensors --te_ckpt models/sar/dreamshaper_8.safetensors --out_ckpt models/sar/sar2.safetensors

mv models/sar/sar2.safetensors models/sar/sar.safetensors

Inference

Run the demo inference scripts:

python3 app.py

Then connect to the Gradio interface at local-ip-address:7860 or https://*.gradio.live shown in the terminal.

Use of Initial Image

The use of an initial image is optional. It usually helps stabilize the animation sequence and improve the quality.

You can generate 3 initial images in one go by clicking "Generate 3 new init images". The images will be based on the same prompt as the video generation. You can also use different prompts for the initial images and the video generation. Select the desired initial image by clicking on the image, and then click "Generate Video". If none of the initial images are good enough, you can generate again by clicking "Generate 3 new init images" again.

Common Issues

- Defocus. This is the biggest possible issue. When the subject is far from the camera, the model may not be able to generate a clear face and control the subject's facial details. In this situation, consider to increase the weights of "Image Embedding Scale", "Attention Processor Scale" and "AdaFace Embedding ID CFG Scale". You can also add a prefix "face portrait of" to the prompt to help the model focus on the face.

- Motion Degeneration. When the subject is too close to the camera, the model may not be able to generate correct motions and poses, and only generate the face. In this situation, consider to decrease the weights of "Image Embedding Scale", "Attention Processor Scale" and "AdaFace Embedding ID CFG Scale". You can also adjust the prompt slightly to let it focus on the whole body.

- Lesser Facial Characteristics. If the subject's facial characteristics is not so distinctive, you can increase the weights of "AdaFace Embedding ID CFG Scale".

- Unstable Motions. If the generated video has unstable motions, this is probably due to the limitations of AnimateDiff. Nonetheless, you can make it more stable by using a carefully selected initial image, and optionally increase the "Init Image Strength" and "Final Weight of the Init Image". Note that when "Final Weight of the Init Image" is larger, the motion in the generated video will be less dynamic.

Disclaimer

This project is intended for academic purposes only. We do not accept responsibility for user-generated content. Users are solely responsible for their own actions. The contributors to this project are not legally affiliated with, nor are they liable for, the actions of users. Please use this generative model responsibly, in accordance with ethical and legal standards.