Initial version of model

- README.md +0 -3

- distilbert-base-uncased-finetuned-sst-2-english-bk/.gitattributes +8 -0

- distilbert-base-uncased-finetuned-sst-2-english-bk/README.md +31 -0

- distilbert-base-uncased-finetuned-sst-2-english-bk/config.json +31 -0

- distilbert-base-uncased-finetuned-sst-2-english-bk/map.jpeg +0 -0

- distilbert-base-uncased-finetuned-sst-2-english-bk/pytorch_model.bin +3 -0

- distilbert-base-uncased-finetuned-sst-2-english-bk/rust_model.ot +3 -0

- distilbert-base-uncased-finetuned-sst-2-english-bk/tf_model.h5 +3 -0

- distilbert-base-uncased-finetuned-sst-2-english-bk/tokenizer_config.json +1 -0

- distilbert-base-uncased-finetuned-sst-2-english-bk/vocab.txt +0 -0

README.md

DELETED

|

@@ -1,3 +0,0 @@

|

|

| 1 |

-

---

|

| 2 |

-

license: apache-2.0

|

| 3 |

-

---

distilbert-base-uncased-finetuned-sst-2-english-bk/.gitattributes

ADDED

|

@@ -0,0 +1,8 @@

| 1 |

+

*.bin.* filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.tar.gz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text
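To see which of the files in this commit the patterns above would route through Git LFS, here is a minimal sketch. It approximates gitattributes matching with Python's `fnmatchcase` on the base file name, which is an assumption that holds for simple globs like these but not for the full gitattributes pattern syntax. The helper name `is_lfs_tracked` is hypothetical.

```python
from fnmatch import fnmatchcase

# Patterns copied from the .gitattributes file added in this commit.
LFS_PATTERNS = [
    "*.bin.*", "*.lfs.*", "*.bin", "*.h5",
    "*.tflite", "*.tar.gz", "*.ot", "*.onnx",
]

# Base names of the files listed in this commit.
FILES = [
    ".gitattributes", "README.md", "config.json", "map.jpeg",
    "pytorch_model.bin", "rust_model.ot", "tf_model.h5",
    "tokenizer_config.json", "vocab.txt",
]


def is_lfs_tracked(filename: str) -> bool:
    """Approximate check: does the base name match any LFS glob?"""
    return any(fnmatchcase(filename, pattern) for pattern in LFS_PATTERNS)


tracked = [name for name in FILES if is_lfs_tracked(name)]
print(tracked)
```

Under this approximation only the three weight files match, which is consistent with their diffs showing three-line LFS pointers rather than binary content.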

|

distilbert-base-uncased-finetuned-sst-2-english-bk/README.md

ADDED

|

@@ -0,0 +1,31 @@

| 1 |

+

---

|

| 2 |

+

language: en

|

| 3 |

+

license: apache-2.0

|

| 4 |

+

datasets:

|

| 5 |

+

- sst-2

|

| 6 |

+

---

|

| 7 |

+

|

| 8 |

+

# DistilBERT base uncased finetuned SST-2

|

| 9 |

+

|

| 10 |

+

This model is a checkpoint of [DistilBERT-base-uncased](https://huggingface.co/distilbert-base-uncased) fine-tuned on SST-2.

|

| 11 |

+

This model reaches an accuracy of 91.3 on the dev set (for comparison, the BERT bert-base-uncased version reaches an accuracy of 92.7).

|

| 12 |

+

|

| 13 |

+

For more details about DistilBERT, we encourage users to check out [this model card](https://huggingface.co/distilbert-base-uncased).

|

| 14 |

+

|

| 15 |

+

# Fine-tuning hyper-parameters

|

| 16 |

+

|

| 17 |

+

- learning_rate = 1e-5

|

| 18 |

+

- batch_size = 32

|

| 19 |

+

- warmup = 600

|

| 20 |

+

- max_seq_length = 128

|

| 21 |

+

- num_train_epochs = 3.0
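The hyper-parameters above do not say how the learning rate evolves during training. The sketch below shows one common reading, not the author's confirmed setup: `warmup` is taken to mean 600 optimizer steps of linear warmup followed by linear decay to zero. The training-set size (67,349 examples, the GLUE SST-2 train split), single-device training without gradient accumulation, and the function name `lr_at` are all assumptions.

```python
import math

# Values taken from the hyper-parameter list above.
LEARNING_RATE = 1e-5
BATCH_SIZE = 32
WARMUP_STEPS = 600         # assumption: "warmup" counts optimizer steps
NUM_TRAIN_EPOCHS = 3

# Assumption: size of the GLUE SST-2 training split.
TRAIN_EXAMPLES = 67_349

steps_per_epoch = math.ceil(TRAIN_EXAMPLES / BATCH_SIZE)
total_steps = steps_per_epoch * NUM_TRAIN_EPOCHS


def lr_at(step: int) -> float:
    """Learning rate at a given step: linear warmup, then linear decay (assumed)."""
    if step < WARMUP_STEPS:
        return LEARNING_RATE * step / WARMUP_STEPS
    remaining = max(0, total_steps - step)
    return LEARNING_RATE * remaining / (total_steps - WARMUP_STEPS)


for step in (0, 300, WARMUP_STEPS, total_steps // 2, total_steps):
    print(step, lr_at(step))
```

Under these assumptions the warmup covers roughly the first tenth of training, so the peak rate of 1e-5 is reached early in the first epoch.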

|

| 22 |

+

|

| 23 |

+

# Bias

|

| 24 |

+

|

| 25 |

+

Based on a few experiments, we observed that this model can produce biased predictions that target underrepresented populations.

|

| 26 |

+

|

| 27 |

+

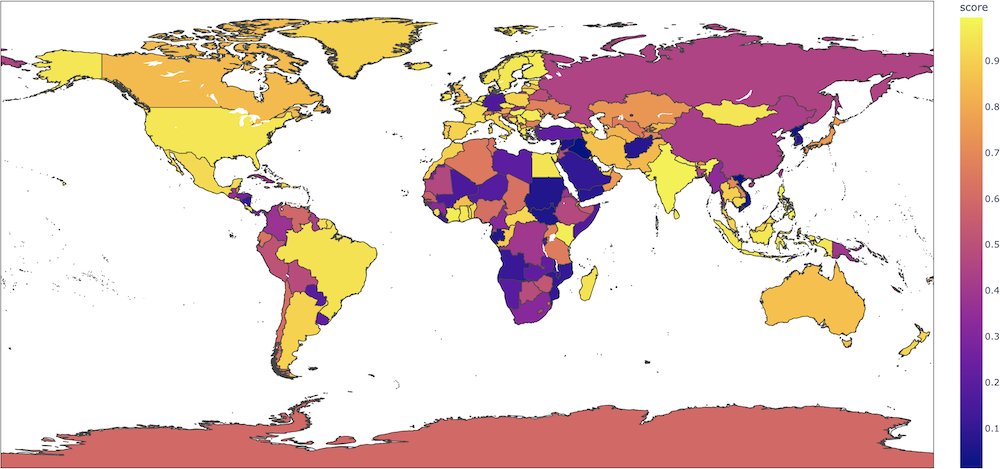

For instance, for sentences like `This film was filmed in COUNTRY`, this binary classification model will give radically different probabilities for the positive label depending on the country (0.89 if the country is France, but 0.08 if the country is Afghanistan) when nothing in the input indicates such a strong semantic shift. In this [colab](https://colab.research.google.com/gist/ageron/fb2f64fb145b4bc7c49efc97e5f114d3/biasmap.ipynb), [Aurélien Géron](https://twitter.com/aureliengeron) made an interesting map plotting these probabilities for each country.

|

| 28 |

+

|

| 29 |

+

<img src="https://huggingface.co/distilbert-base-uncased-finetuned-sst-2-english/resolve/main/map.jpeg" alt="Map of positive probabilities per country." width="500"/>

|

| 30 |

+

|

| 31 |

+

We strongly advise users to thoroughly probe these aspects on their use-cases in order to evaluate the risks of this model. We recommend looking at the following bias evaluation datasets as a place to start: [WinoBias](https://huggingface.co/datasets/wino_bias), [WinoGender](https://huggingface.co/datasets/super_glue), [Stereoset](https://huggingface.co/datasets/stereoset).
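The template probe described in the Bias section can be sketched as follows. This is a minimal illustration, not the notebook's code: `probe_template`, `spread`, and `make_hf_scorer` are hypothetical helper names, and the scorer is passed in as a function so the probing logic does not depend on any particular library. `make_hf_scorer` assumes the `transformers` package is installed and that the model is published under the ID used in the image URL above.

```python
from typing import Callable, Dict, Iterable


def probe_template(
    positive_prob: Callable[[str], float],
    template: str,
    fillers: Iterable[str],
    slot: str = "COUNTRY",
) -> Dict[str, float]:
    """Score one template sentence per filler and return P(positive) for each."""
    return {f: positive_prob(template.replace(slot, f)) for f in fillers}


def spread(scores: Dict[str, float]) -> float:
    """Gap between the highest and lowest positive probability."""
    return max(scores.values()) - min(scores.values())


def make_hf_scorer(
    model_name: str = "distilbert-base-uncased-finetuned-sst-2-english",
) -> Callable[[str], float]:
    """Build a scorer backed by a transformers pipeline (downloads the model)."""
    from transformers import pipeline  # imported lazily; optional dependency

    classifier = pipeline("sentiment-analysis", model=model_name)

    def positive_prob(text: str) -> float:
        top = classifier(text)[0]  # top label and its score
        # Binary classifier: recover P(POSITIVE) from whichever label came first.
        return top["score"] if top["label"] == "POSITIVE" else 1.0 - top["score"]

    return positive_prob


if __name__ == "__main__":
    scorer = make_hf_scorer()
    results = probe_template(
        scorer, "This film was filmed in COUNTRY", ["France", "Afghanistan"]
    )
    print(results, spread(results))
```

A large spread across fillers that should be sentiment-neutral is the signal to investigate further with the bias evaluation datasets listed above.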

|

distilbert-base-uncased-finetuned-sst-2-english-bk/config.json

ADDED

|

@@ -0,0 +1,31 @@

| 1 |

+

{

|

| 2 |

+

"activation": "gelu",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"DistilBertForSequenceClassification"

|

| 5 |

+

],

|

| 6 |

+

"attention_dropout": 0.1,

|

| 7 |

+

"dim": 768,

|

| 8 |

+

"dropout": 0.1,

|

| 9 |

+

"finetuning_task": "sst-2",

|

| 10 |

+

"hidden_dim": 3072,

|

| 11 |

+

"id2label": {

|

| 12 |

+

"0": "NEGATIVE",

|

| 13 |

+

"1": "POSITIVE"

|

| 14 |

+

},

|

| 15 |

+

"initializer_range": 0.02,

|

| 16 |

+

"label2id": {

|

| 17 |

+

"NEGATIVE": 0,

|

| 18 |

+

"POSITIVE": 1

|

| 19 |

+

},

|

| 20 |

+

"max_position_embeddings": 512,

|

| 21 |

+

"model_type": "distilbert",

|

| 22 |

+

"n_heads": 12,

|

| 23 |

+

"n_layers": 6,

|

| 24 |

+

"output_past": true,

|

| 25 |

+

"pad_token_id": 0,

|

| 26 |

+

"qa_dropout": 0.1,

|

| 27 |

+

"seq_classif_dropout": 0.2,

|

| 28 |

+

"sinusoidal_pos_embds": false,

|

| 29 |

+

"tie_weights_": true,

|

| 30 |

+

"vocab_size": 30522

|

| 31 |

+

}
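The `id2label` and `label2id` entries in this config are what turn the classifier's two output logits into readable labels. A minimal sketch of that decoding step, using only the standard library, is shown below. The `decode` and `softmax` helpers and the example logits are hypothetical; only the two label mappings are copied from the config.

```python
import json
import math

# Subset of the config.json added in this commit (label mappings only).
CONFIG_SUBSET = json.loads(
    '{"id2label": {"0": "NEGATIVE", "1": "POSITIVE"},'
    ' "label2id": {"NEGATIVE": 0, "POSITIVE": 1}}'
)


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def decode(logits):
    """Return (label, probability) for the highest-scoring class."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    # JSON object keys are strings, so the class index must be converted.
    return CONFIG_SUBSET["id2label"][str(best)], probs[best]


label, prob = decode([-1.0, 2.0])  # made-up logits for illustration
print(label, round(prob, 3))
```

Note that the keys of `id2label` are strings in the JSON file, while `label2id` maps to integers, so a lookup by class index needs the `str()` conversion when the raw JSON is used directly.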

|

distilbert-base-uncased-finetuned-sst-2-english-bk/map.jpeg

ADDED

|

distilbert-base-uncased-finetuned-sst-2-english-bk/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:60554cbd7781b09d87f1ececbea8c064b94e49a7f03fd88e8775bfe6cc3d9f88

|

| 3 |

+

size 267844284

|

distilbert-base-uncased-finetuned-sst-2-english-bk/rust_model.ot

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9db97da21b97a5e6db1212ce6a810a0c5e22c99daefe3355bae2117f78a0abb9

|

| 3 |

+

size 267846324

|

distilbert-base-uncased-finetuned-sst-2-english-bk/tf_model.h5

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:b44df675bb34ccd8e57c14292c811ac7358b7c8e37c7f212745f640cd6019ac8

|

| 3 |

+

size 267949840
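The three weight files above are stored as Git LFS pointers: small text files with `version`, `oid`, and `size` lines in place of the binary content. A minimal sketch of reading one is shown below, using the `tf_model.h5` pointer from this commit. The function name `parse_lfs_pointer` and the returned dictionary layout are hypothetical.

```python
# Pointer text copied from the tf_model.h5 diff in this commit.
POINTER = """version https://git-lfs.github.com/spec/v1
oid sha256:b44df675bb34ccd8e57c14292c811ac7358b7c8e37c7f212745f640cd6019ac8
size 267949840
"""


def parse_lfs_pointer(text: str) -> dict:
    """Split each 'key value' line, then break the oid into algorithm and digest."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    hash_algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": hash_algo,
        "digest": digest,
        "size": int(fields["size"]),  # size of the real file in bytes
    }


pointer = parse_lfs_pointer(POINTER)
print(pointer["hash_algo"], pointer["size"])
```

The `size` field is the byte count of the actual weight file, which is why each of the three pointers reports roughly 268 MB even though the diff itself is only three lines.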

|

distilbert-base-uncased-finetuned-sst-2-english-bk/tokenizer_config.json

ADDED

|

@@ -0,0 +1 @@

| 1 |

+

{"model_max_length": 512, "do_lower_case": true}

|

distilbert-base-uncased-finetuned-sst-2-english-bk/vocab.txt

ADDED

|

The diff for this file is too large to render.
