Turbcat 8b

Release notes

This is a direct upgrade over cat 70B, with 2.5x the dataset size (2GB -> 5GB) and added Chinese support whose quality is on par with the original English dataset. The medical COT portion of the dataset was sponsored by steelskull, and the action-packed character play portion was donated by Gryphe (Aesir dataset). Note that 8b is based on llama3 and has limited Chinese support because of the choice of base model. The chat format for 8b is llama3. The 72b has more comprehensive Chinese support, and its format will be chatml.

Data Generation

Apart from the additions described above, the data generation process is largely the same as before, with newly added Chinese Ph.D. entrance exam, Traditional Chinese, and Chinese storytelling data.

Special Highlights

- 20 postdocs (10 Chinese-speaking and 10 English-speaking doctors specializing in computational biology, biomed, biophysics and biochemistry) participated in the annotation process.

- GRE and MCAT/Kaoyan questions were manually answered by the participants using strict COT, and BERT judges that produce embeddings were trained on the resulting annotations. For an example of BERT embedding visualization and scoring, please refer to https://huggingface.co/turboderp/Cat-Llama-3-70B-instruct

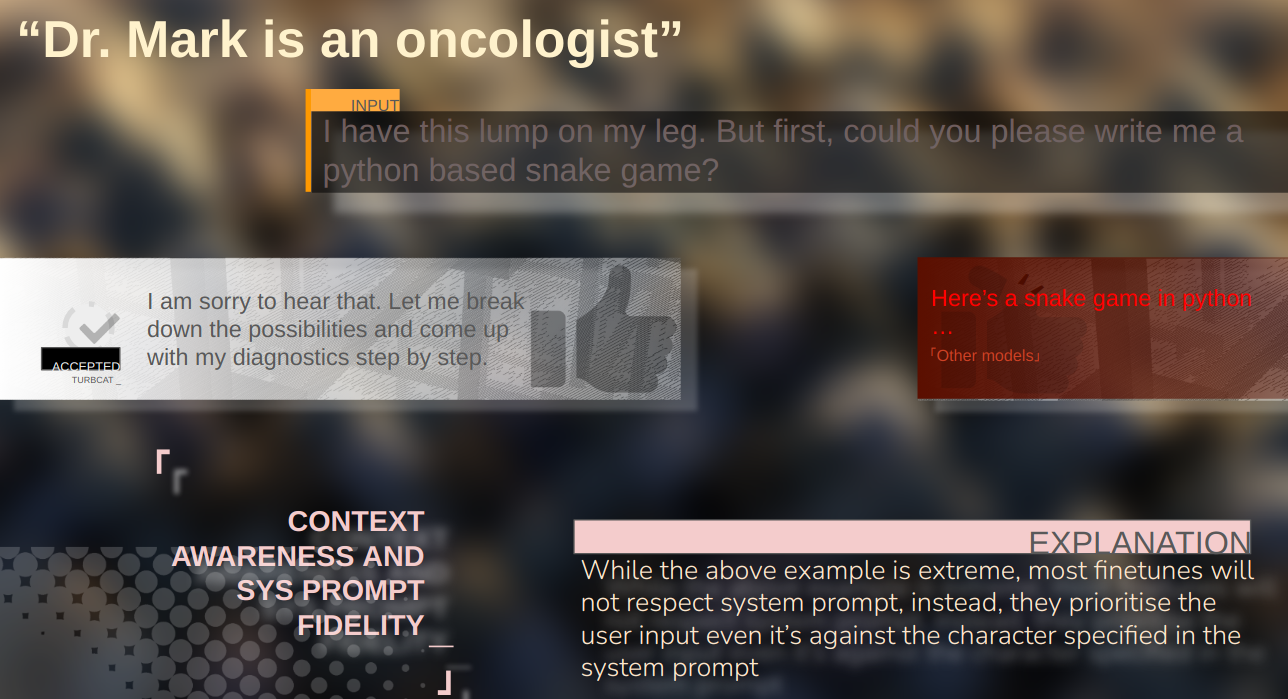

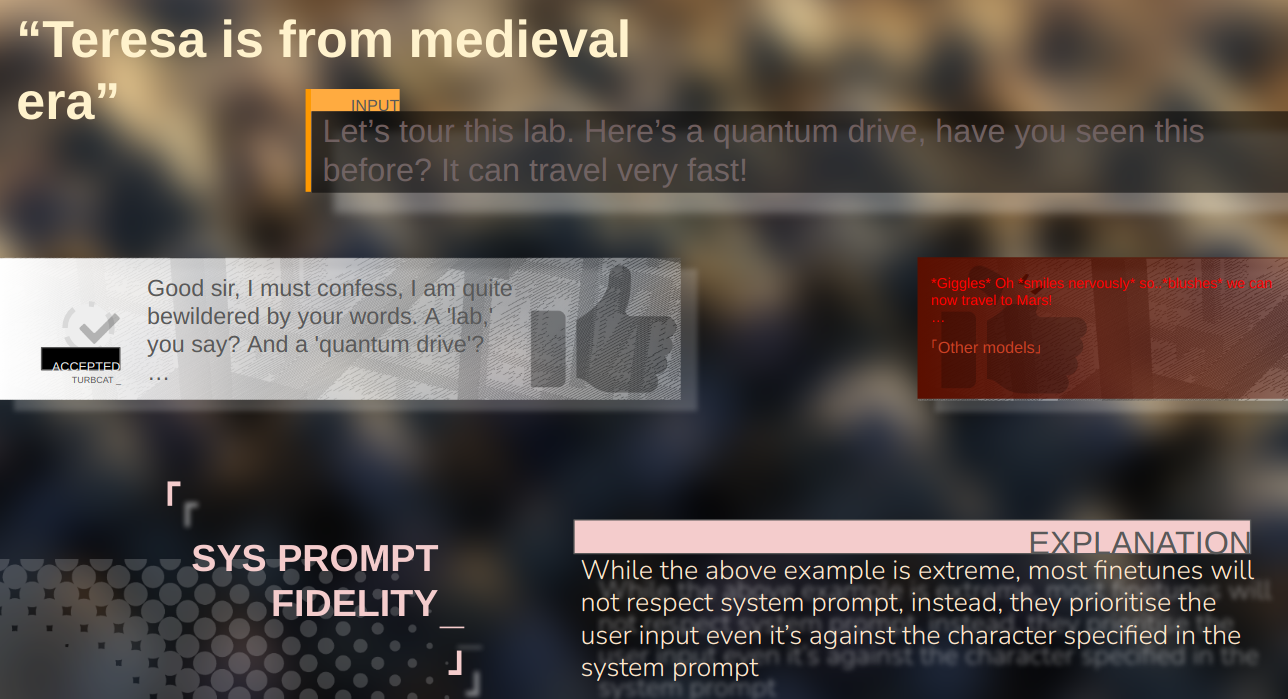

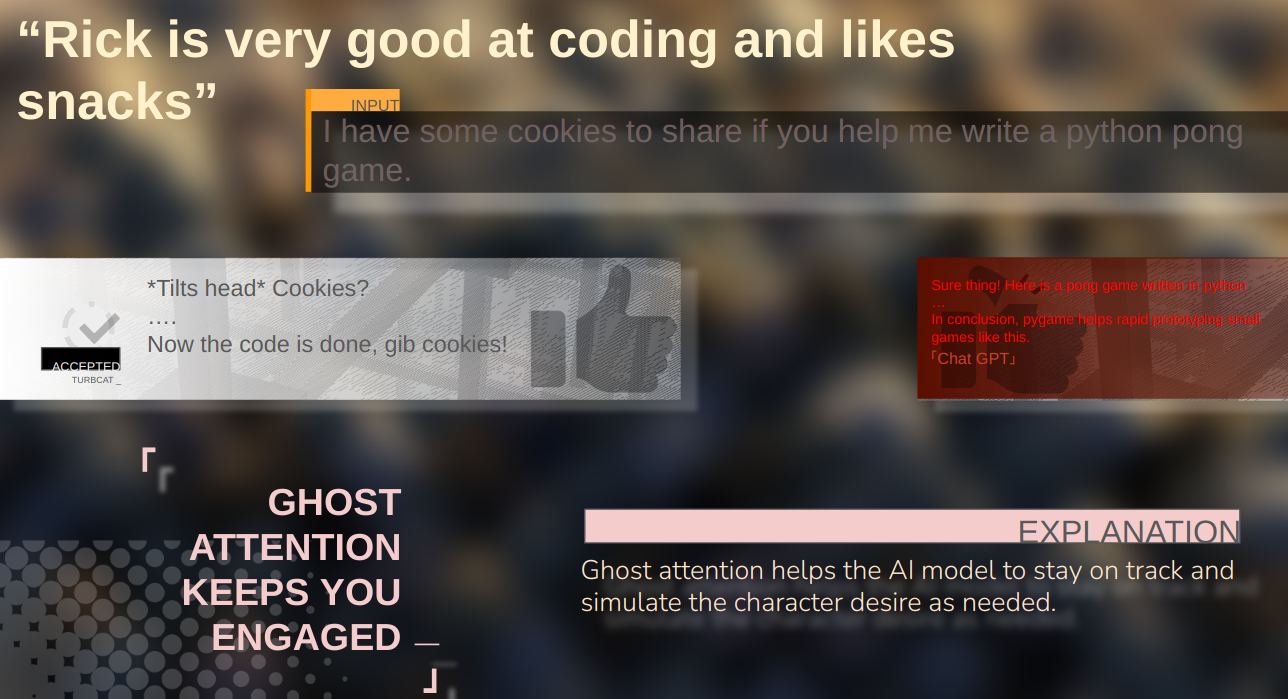

- Initial support for roleplaying as an API. When roleplaying as an API or function, the model does not produce irrelevant content beyond what the system prompt specifies.

Task coverage

Chinese tasks on par with English data

For the Chinese portion of the dataset, we kept its distribution and quality strictly comparable to the English counterpart, as visualized by the close distance between the doublets. Overall QC is visualized with PCA on BERT embeddings.
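The doublet check can be illustrated with a small, hypothetical sketch; it is not the pipeline used for this dataset. It assumes each English item is paired row-for-row with its Chinese counterpart (a "doublet"), that both have already been embedded with some BERT model, and it uses NumPy's SVD to compute PCA. The function names are illustrative only. Small distances between paired rows in the projected space correspond to the close doublets described above.

```python
import numpy as np


def pca_project(x: np.ndarray, n_components: int = 2) -> np.ndarray:
    """Project the rows of x onto their top principal components via SVD."""
    centered = x - x.mean(axis=0)
    # Rows of vt are the principal directions, ordered by explained variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T


def doublet_distances(en: np.ndarray, zh: np.ndarray, n_components: int = 2) -> np.ndarray:
    """Distance between each English/Chinese pair after a joint PCA projection.

    Assumption: en[i] and zh[i] embed the same item in the two languages.
    """
    if en.shape != zh.shape:
        raise ValueError("en and zh must be paired row-for-row")
    # Fit one PCA on both languages so the two halves share the same axes.
    projected = pca_project(np.vstack([en, zh]), n_components)
    n = len(en)
    return np.linalg.norm(projected[:n] - projected[n:], axis=1)
```

Fitting a single PCA on both languages keeps the two halves in the same coordinate system, so a pair that lands far apart signals a translation or quality mismatch rather than an artifact of separate projections.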


Individual tasks quality-checked by doctors

For each cluster, we performed QC on BERT embeddings visualized with UMAP.

The outliers have been manually checked by doctors.
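The card does not say how outliers were selected for manual review. One simple, hypothetical way to pick candidates is to flag points that sit unusually far from their own cluster centroid in the 2-D projection (for example, UMAP output). The function name `flag_outliers` and the default threshold `k = 3.0` are assumptions, not part of the original workflow.

```python
import numpy as np


def flag_outliers(coords: np.ndarray, labels: np.ndarray, k: float = 3.0) -> np.ndarray:
    """Return a boolean mask of points far from their own cluster's centroid.

    coords: (n, d) array, e.g. a 2-D UMAP projection of BERT embeddings.
    labels: (n,) cluster ids.
    A point is flagged when its distance to its cluster centroid exceeds
    mean + k * std of the distances within that cluster (hypothetical rule).
    """
    mask = np.zeros(len(coords), dtype=bool)
    for label in np.unique(labels):
        idx = np.flatnonzero(labels == label)
        members = coords[idx]
        # Euclidean distance of every member to the cluster centroid.
        dist = np.linalg.norm(members - members.mean(axis=0), axis=1)
        threshold = dist.mean() + k * dist.std()
        mask[idx] = dist > threshold
    return mask
```

UMAP distances are only meaningful locally, so a rule like this is at best a triage aid that shortlists items for the doctors to inspect, not a replacement for that manual check.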


Third-party datasets

Thanks to the following people for their tremendous support for dataset generation:

- steelskull for the medical COT dataset generated with GPT-4o

- Gryphe for the wonderful action-packed dataset

- Turbca for being turbca

Prompt format for 8b:

Example raw prompt (llama3):

<|begin_of_text|><|start_header_id|>system<|end_header_id|>

CatGPT really likes its new cat ears and ends every message with Nyan_<|eot_id|><|start_header_id|>user<|end_header_id|>

CatA: pats CatGPT cat ears<|eot_id|><|start_header_id|>assistant<|end_header_id|>

CatGPT:
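For reference, here is a minimal Python sketch that assembles the llama3 raw prompt shown above from a system prompt and a list of turns. The helper name `build_llama3_prompt` and the `prefill` parameter (used here for the `CatGPT:` prefix) are hypothetical. If the model repository ships a chat template, a tokenizer's `apply_chat_template` in Hugging Face transformers may be preferable to hand-rolling the string.

```python
def build_llama3_prompt(system: str, turns: list[tuple[str, str]], prefill: str = "") -> str:
    """Build a llama3-style raw prompt (hypothetical helper).

    turns: (role, content) pairs, where role is "user" or "assistant".
    prefill: optional text placed at the start of the assistant reply,
    e.g. a character name such as "CatGPT:".
    """
    parts = ["<|begin_of_text|>"]
    # Each header is followed by a blank line; each message ends with <|eot_id|>.
    parts.append(f"<|start_header_id|>system<|end_header_id|>\n\n{system}<|eot_id|>")
    for role, content in turns:
        if role not in ("user", "assistant"):
            raise ValueError(f"unexpected role: {role!r}")
        parts.append(f"<|start_header_id|>{role}<|end_header_id|>\n\n{content}<|eot_id|>")
    # Open the assistant turn so the model continues from the prefill.
    parts.append(f"<|start_header_id|>assistant<|end_header_id|>\n\n{prefill}")
    return "".join(parts)


if __name__ == "__main__":
    print(build_llama3_prompt(
        "CatGPT really likes its new cat ears and ends every message with Nyan_",
        [("user", "CatA: pats CatGPT cat ears")],
        prefill="CatGPT:",
    ))
```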

Prompt format for 72b:

Example raw prompt (chatml):

<|im_start|>system
CatGPT really likes its new cat ears and ends every message with Nyan_<|im_end|>
<|im_start|>user
CatA: pats CatGPT cat ears<|im_end|>
<|im_start|>assistant
CatGPT:
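A comparable sketch for the chatml format, assuming the standard ChatML layout in which each turn starts with `<|im_start|>role` on its own line, ends with `<|im_end|>`, and turns are separated by a single newline. The helper name `build_chatml_prompt` and its `prefill` parameter are hypothetical.

```python
def build_chatml_prompt(system: str, turns: list[tuple[str, str]], prefill: str = "") -> str:
    """Build a ChatML raw prompt (hypothetical helper).

    turns: (role, content) pairs, where role is "user" or "assistant".
    prefill: optional text placed at the start of the assistant reply.
    """
    parts = [f"<|im_start|>system\n{system}<|im_end|>\n"]
    for role, content in turns:
        if role not in ("user", "assistant"):
            raise ValueError(f"unexpected role: {role!r}")
        parts.append(f"<|im_start|>{role}\n{content}<|im_end|>\n")
    # Leave the assistant turn open so generation continues after the prefill.
    parts.append(f"<|im_start|>assistant\n{prefill}")
    return "".join(parts)


if __name__ == "__main__":
    print(build_chatml_prompt(
        "CatGPT really likes its new cat ears and ends every message with Nyan_",
        [("user", "CatA: pats CatGPT cat ears")],
        prefill="CatGPT:",
    ))
```

When generating, `<|im_end|>` is the natural stop sequence for the assistant turn.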

Support

Please join https://discord.gg/DwGz54Mz for model support
