|

|

--- |

|

|

pipeline_tag: text-generation |

|

|

library_name: transformers |

|

|

license: cc-by-nc-4.0 |

|

|

tags: |

|

|

- text-to-sql |

|

|

- reinforcement-learning |

|

|

- qwen |

|

|

--- |

|

|

|

|

|

# CSC-SQL: Corrective Self-Consistency in Text-to-SQL via Reinforcement Learning |

|

|

|

|

|

This repository contains the `CscSQL-Grpo-Qwen2.5-Coder-7B-Instruct` model, a key component of the CSC-SQL framework, as presented in the paper [CSC-SQL: Corrective Self-Consistency in Text-to-SQL via Reinforcement Learning](https://huggingface.co/papers/2505.13271). |

|

|

|

|

|

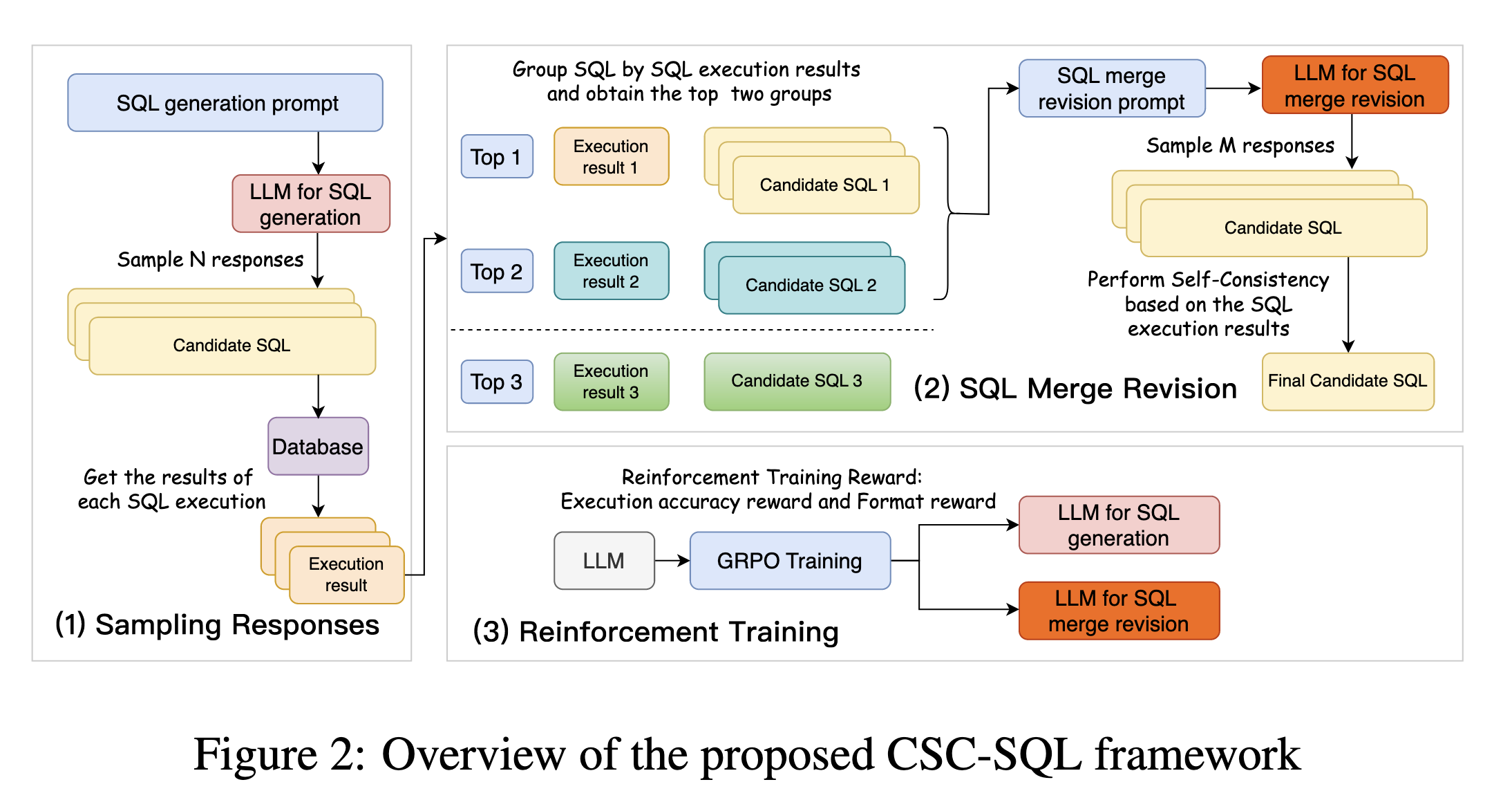

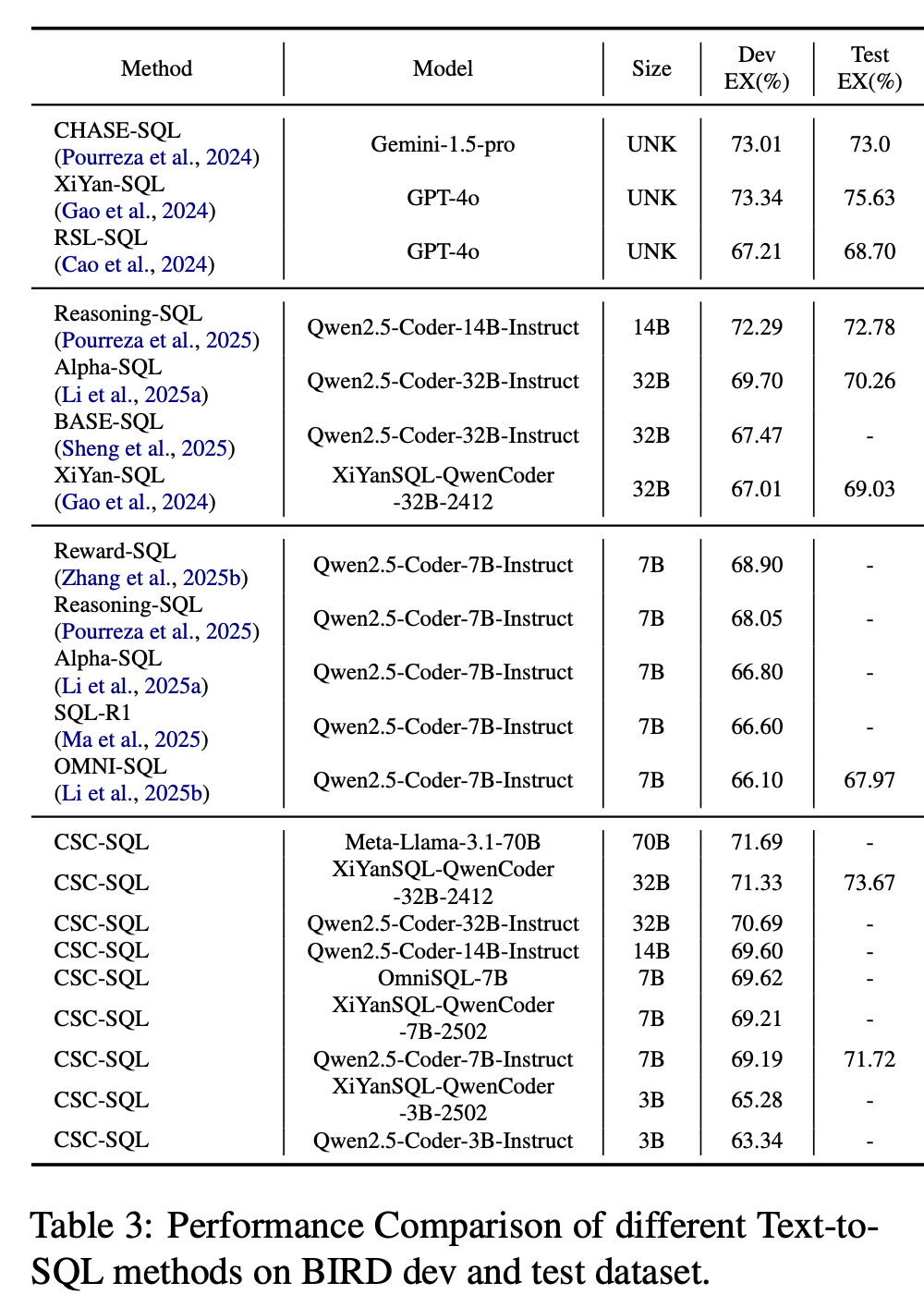

## Abstract |

|

|

Large language models (LLMs) have demonstrated strong capabilities in translating natural language questions about relational databases into SQL queries. In particular, test-time scaling techniques such as Self-Consistency and Self-Correction can enhance SQL generation accuracy by increasing computational effort during inference. However, these methods have notable limitations: Self-Consistency may select suboptimal outputs despite majority votes, while Self-Correction typically addresses only syntactic errors. To leverage the strengths of both approaches, we propose CSC-SQL, a novel method that integrates Self-Consistency and Self-Correction. CSC-SQL selects the two most frequently occurring outputs from parallel sampling and feeds them into a merge revision model for correction. Additionally, we employ the Group Relative Policy Optimization (GRPO) algorithm to fine-tune both the SQL generation and revision models via reinforcement learning, significantly enhancing output quality. Experimental results confirm the effectiveness and generalizability of CSC-SQL. On the BIRD private test set, our 7B model achieves 71.72% execution accuracy, while the 32B model achieves 73.67%. |

|

|

|

|

|

For more details, refer to the [paper](https://huggingface.co/papers/2505.13271) and the [official GitHub repository](https://github.com/CycloneBoy/csc_sql). |

|

|

|

|

|

## Framework Overview |

|

|

|

|

|

|

|

|

## Code |

|

|

The official code repository for CSC-SQL is available on GitHub: [https://github.com/CycloneBoy/csc_sql](https://github.com/CycloneBoy/csc_sql) |

|

|

|

|

|

## Main Results |

|

|

Performance comparison of different Text-to-SQL methods on BIRD dev and test dataset: |

|

|

|

|

|

<img src="https://raw.githubusercontent.com/CycloneBoy/csc_sql/main/data/image/csc_sql_result_main.png" height="500" alt="csc_sql_result main"> |

|

|

|

|

|

## Model Checkpoints |

|

|

This model is part of a collection of checkpoints related to CSC-SQL, also available on Hugging Face: |

|

|

|

|

|

| **Model** | HuggingFace | |

|

|

|-------------------------------|--------------------------------------------------------------------------------------------| |

|

|

| CscSQL-Merge-Qwen2.5-Coder-3B-Instruct | [🤗 HuggingFace](https://huggingface.co/cycloneboy/CscSQL-Merge-Qwen2.5-Coder-3B-Instruct) | |

|

|

| CscSQL-Merge-Qwen2.5-Coder-7B-Instruct | [🤗 HuggingFace](https://huggingface.co/cycloneboy/CscSQL-Merge-Qwen2.5-Coder-7B-Instruct) | |

|

|

| CscSQL-Grpo-Qwen2.5-Coder-3B-Instruct | [🤗 HuggingFace](https://huggingface.co/cycloneboy/CscSQL-Grpo-Qwen2.5-Coder-3B-Instruct) | |

|

|

| CscSQL-Grpo-XiYanSQL-QwenCoder-3B-2502 | [🤗 HuggingFace](https://huggingface.co/cycloneboy/CscSQL-Grpo-XiYanSQL-QwenCoder-3B-2502) | |

|

|

| CscSQL-Grpo-Qwen2.5-Coder-7B-Instruct | [🤗 HuggingFace](https://huggingface.co/cycloneboy/CscSQL-Grpo-Qwen2.5-Coder-7B-Instruct) | |

|

|

| CscSQL-Grpo-XiYanSQL-QwenCoder-7B-2502 | [🤗 HuggingFace](https://huggingface.co/cycloneboy/CscSQL-Grpo-XiYanSQL-QwenCoder-7B-2502) | |

|

|

|

|

|

## Usage |

|

|

You can load this model using the `transformers` library. Here's a basic example for inference: |

|

|

|

|

|

```python |

|

|

import torch |

|

|

from transformers import AutoTokenizer, AutoModelForCausalLM, GenerationConfig |

|

|

|

|

|

model_name = "cycloneboy/CscSQL-Grpo-Qwen2.5-Coder-7B-Instruct" |

|

|

|

|

|

# Load model and tokenizer |

|

|

tokenizer = AutoTokenizer.from_pretrained(model_name) |

|

|

model = AutoModelForCausalLM.from_pretrained( |

|

|

model_name, |

|

|

torch_dtype=torch.bfloat16, # Or torch.float16 depending on your hardware |

|

|

device_map="auto" |

|

|

) |

|

|

model.eval() |

|

|

|

|

|

# Example prompt for text-to-SQL generation |

|

|

# Note: The prompt format might need to align with the model's specific training |

|

|

# and database schema format for optimal text-to-SQL performance. |

|

|

prompt = "Translate the following question to SQL: 'What are the names of all employees?'" |

|

|

|

|

|

# Encode the prompt |

|

|

input_ids = tokenizer.encode(prompt, return_tensors="pt").to(model.device) |

|

|

|

|

|

# Set generation configuration based on the model's generation_config.json |

|

|

generation_config = GenerationConfig( |

|

|

bos_token_id=tokenizer.bos_token_id, |

|

|

eos_token_id=[tokenizer.eos_token_id, 151643], # Include <|endoftext|> as eos_token_id |

|

|

pad_token_id=tokenizer.bos_token_id, # Or use tokenizer.pad_token_id if different |

|

|

temperature=0.7, |

|

|

max_new_tokens=512, |

|

|

do_sample=True, |

|

|

top_p=0.8, |

|

|

repetition_penalty=1.1, |

|

|

top_k=20, |

|

|

) |

|

|

|

|

|

# Generate SQL query |

|

|

output_ids = model.generate( |

|

|

input_ids, |

|

|

generation_config=generation_config |

|

|

) |

|

|

|

|

|

# Decode the generated SQL |

|

|

generated_sql = tokenizer.decode(output_ids[0], skip_special_tokens=True) |

|

|

print(generated_sql) |

|

|

|

|

|

# For detailed usage, including how to integrate with the full CSC-SQL framework |

|

|

# for improved accuracy via reinforcement learning, please refer to the |

|

|

# official GitHub repository: https://github.com/CycloneBoy/csc_sql |

|

|

``` |

|

|

|

|

|

## Citation |

|

|

If you find this work helpful or inspiring, please feel free to cite it: |

|

|

|

|

|

```bibtex |

|

|

@misc{sheng2025cscsqlcorrectiveselfconsistencytexttosql, |

|

|

title={CSC-SQL: Corrective Self-Consistency in Text-to-SQL via Reinforcement Learning}, |

|

|

author={Lei Sheng and Shuai-Shuai Xu}, |

|

|

year={2025}, |

|

|

eprint={2505.13271}, |

|

|

archivePrefix={arXiv}, |

|

|

primaryClass={cs.CL}, |

|

|

url={https://arxiv.org/abs/2505.13271}, |

|

|

} |

|

|

``` |