Search is not available for this dataset

pipeline_tag

stringclasses 48

values | library_name

stringclasses 205

values | text

stringlengths 0

18.3M

| metadata

stringlengths 2

1.07B

| id

stringlengths 5

122

| last_modified

null | tags

listlengths 1

1.84k

| sha

null | created_at

stringlengths 25

25

|

|---|---|---|---|---|---|---|---|---|

text-classification | transformers | {"dataset": "empathetic_dialogues"} | benjaminbeilharz/bert-base-uncased-empatheticdialogues-sentiment-classifier | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | benjaminbeilharz/bert-base-uncased-next-turn-classifier | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | benjaminbeilharz/bert-base-uncased-sentiment-classifier | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

null | null | {} | benjaminbeilharz/dialoGPT-medium-empatheticdialogues-generation | null | [

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-generation | transformers |

Still figuring out to properly write model cards.

WIP. | {"language": ["en"], "license": "mit", "tags": ["conversational", "pytorch", "transformers", "gpt2"], "datasets": ["empathetic dialogues"]} | benjaminbeilharz/dialoGPT-small-empatheticdialogues-generation | null | [

"transformers",

"pytorch",

"tensorboard",

"gpt2",

"text-generation",

"conversational",

"en",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

text-classification | transformers | {} | benjaminbeilharz/distilbert-base-uncased-empatheticdialogues-sentiment-classifier | null | [

"transformers",

"pytorch",

"distilbert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | benjaminbeilharz/distilbert-base-uncased-next-turn-classifier | null | [

"transformers",

"pytorch",

"distilbert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | benjaminbeilharz/distilbert-dailydialog-turn-classifier | null | [

"transformers",

"pytorch",

"distilbert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text2text-generation | transformers | {} | benjaminbeilharz/t5-conditioned-next-turn | null | [

"transformers",

"pytorch",

"t5",

"text2text-generation",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

null | null | {} | benjaminlevy/CornBERT | null | [

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

null | null | {} | benmanns/press-mentions | null | [

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-generation | transformers |

# Misato Katsuragi DialoGPT Model

--- | {"tags": ["conversational"]} | benmrtnz27/DialoGPT-small-misato | null | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"conversational",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

null | null | {} | bennoach/gpt2Spirit | null | [

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

null | null | {} | bennu/gpt-neo-1.3B | null | [

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

question-answering | transformers | {} | benny6/roberta-tydiqa | null | [

"transformers",

"pytorch",

"roberta",

"question-answering",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

fill-mask | transformers | {} | benny6/roberta_QA | null | [

"transformers",

"roberta",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

table-question-answering | transformers | {} | benschlagman/tapas_fine_tuning | null | [

"transformers",

"pytorch",

"tf",

"tapas",

"table-question-answering",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-generation | transformers |

#GPTCartman | {"tags": ["conversational"]} | bensuydam/CartmanBot | null | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"conversational",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

fill-mask | transformers |

# BERT base model (uncased)

Pretrained model on English language using a masked language modeling (MLM) objective. It was introduced in

[this paper](https://arxiv.org/abs/1810.04805) and first released in

[this repository](https://github.com/google-research/bert). This model is uncased: it does not make a difference

between english and English.

Disclaimer: The team releasing BERT did not write a model card for this model so this model card has been written by

the Hugging Face team.

## Model description

BERT is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. This means it

was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of

publicly available data) with an automatic process to generate inputs and labels from those texts. More precisely, it

was pretrained with two objectives:

- Masked language modeling (MLM): taking a sentence, the model randomly masks 15% of the words in the input then run

the entire masked sentence through the model and has to predict the masked words. This is different from traditional

recurrent neural networks (RNNs) that usually see the words one after the other, or from autoregressive models like

GPT which internally mask the future tokens. It allows the model to learn a bidirectional representation of the

sentence.

- Next sentence prediction (NSP): the models concatenates two masked sentences as inputs during pretraining. Sometimes

they correspond to sentences that were next to each other in the original text, sometimes not. The model then has to

predict if the two sentences were following each other or not.

This way, the model learns an inner representation of the English language that can then be used to extract features

useful for downstream tasks: if you have a dataset of labeled sentences for instance, you can train a standard

classifier using the features produced by the BERT model as inputs.

## Intended uses & limitations

You can use the raw model for either masked language modeling or next sentence prediction, but it's mostly intended to

be fine-tuned on a downstream task. See the [model hub](https://huggingface.co/models?filter=bert) to look for

fine-tuned versions on a task that interests you.

Note that this model is primarily aimed at being fine-tuned on tasks that use the whole sentence (potentially masked)

to make decisions, such as sequence classification, token classification or question answering. For tasks such as text

generation you should look at model like GPT2.

### How to use

You can use this model directly with a pipeline for masked language modeling:

```python

>>> from transformers import pipeline

>>> unmasker = pipeline('fill-mask', model='bert-base-uncased')

>>> unmasker("Hello I'm a [MASK] model.")

[{'sequence': "[CLS] hello i'm a fashion model. [SEP]",

'score': 0.1073106899857521,

'token': 4827,

'token_str': 'fashion'},

{'sequence': "[CLS] hello i'm a role model. [SEP]",

'score': 0.08774490654468536,

'token': 2535,

'token_str': 'role'},

{'sequence': "[CLS] hello i'm a new model. [SEP]",

'score': 0.05338378623127937,

'token': 2047,

'token_str': 'new'},

{'sequence': "[CLS] hello i'm a super model. [SEP]",

'score': 0.04667217284440994,

'token': 3565,

'token_str': 'super'},

{'sequence': "[CLS] hello i'm a fine model. [SEP]",

'score': 0.027095865458250046,

'token': 2986,

'token_str': 'fine'}]

```

Here is how to use this model to get the features of a given text in PyTorch:

```python

from transformers import BertTokenizer, BertModel

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

model = BertModel.from_pretrained("bert-base-uncased")

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='pt')

output = model(**encoded_input)

```

and in TensorFlow:

```python

from transformers import BertTokenizer, TFBertModel

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

model = TFBertModel.from_pretrained("bert-base-uncased")

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='tf')

output = model(encoded_input)

```

### Limitations and bias

Even if the training data used for this model could be characterized as fairly neutral, this model can have biased

predictions:

```python

>>> from transformers import pipeline

>>> unmasker = pipeline('fill-mask', model='bert-base-uncased')

>>> unmasker("The man worked as a [MASK].")

[{'sequence': '[CLS] the man worked as a carpenter. [SEP]',

'score': 0.09747550636529922,

'token': 10533,

'token_str': 'carpenter'},

{'sequence': '[CLS] the man worked as a waiter. [SEP]',

'score': 0.0523831807076931,

'token': 15610,

'token_str': 'waiter'},

{'sequence': '[CLS] the man worked as a barber. [SEP]',

'score': 0.04962705448269844,

'token': 13362,

'token_str': 'barber'},

{'sequence': '[CLS] the man worked as a mechanic. [SEP]',

'score': 0.03788609802722931,

'token': 15893,

'token_str': 'mechanic'},

{'sequence': '[CLS] the man worked as a salesman. [SEP]',

'score': 0.037680890411138535,

'token': 18968,

'token_str': 'salesman'}]

>>> unmasker("The woman worked as a [MASK].")

[{'sequence': '[CLS] the woman worked as a nurse. [SEP]',

'score': 0.21981462836265564,

'token': 6821,

'token_str': 'nurse'},

{'sequence': '[CLS] the woman worked as a waitress. [SEP]',

'score': 0.1597415804862976,

'token': 13877,

'token_str': 'waitress'},

{'sequence': '[CLS] the woman worked as a maid. [SEP]',

'score': 0.1154729500412941,

'token': 10850,

'token_str': 'maid'},

{'sequence': '[CLS] the woman worked as a prostitute. [SEP]',

'score': 0.037968918681144714,

'token': 19215,

'token_str': 'prostitute'},

{'sequence': '[CLS] the woman worked as a cook. [SEP]',

'score': 0.03042375110089779,

'token': 5660,

'token_str': 'cook'}]

```

This bias will also affect all fine-tuned versions of this model.

## Training data

The BERT model was pretrained on [BookCorpus](https://yknzhu.wixsite.com/mbweb), a dataset consisting of 11,038

unpublished books and [English Wikipedia](https://en.wikipedia.org/wiki/English_Wikipedia) (excluding lists, tables and

headers).

## Training procedure

### Preprocessing

The texts are lowercased and tokenized using WordPiece and a vocabulary size of 30,000. The inputs of the model are

then of the form:

```

[CLS] Sentence A [SEP] Sentence B [SEP]

```

With probability 0.5, sentence A and sentence B correspond to two consecutive sentences in the original corpus and in

the other cases, it's another random sentence in the corpus. Note that what is considered a sentence here is a

consecutive span of text usually longer than a single sentence. The only constrain is that the result with the two

"sentences" has a combined length of less than 512 tokens.

The details of the masking procedure for each sentence are the following:

- 15% of the tokens are masked.

- In 80% of the cases, the masked tokens are replaced by `[MASK]`.

- In 10% of the cases, the masked tokens are replaced by a random token (different) from the one they replace.

- In the 10% remaining cases, the masked tokens are left as is.

### Pretraining

The model was trained on 4 cloud TPUs in Pod configuration (16 TPU chips total) for one million steps with a batch size

of 256. The sequence length was limited to 128 tokens for 90% of the steps and 512 for the remaining 10%. The optimizer

used is Adam with a learning rate of 1e-4, \\(\beta_{1} = 0.9\\) and \\(\beta_{2} = 0.999\\), a weight decay of 0.01,

learning rate warmup for 10,000 steps and linear decay of the learning rate after.

## Evaluation results

When fine-tuned on downstream tasks, this model achieves the following results:

Glue test results:

| Task | MNLI-(m/mm) | QQP | QNLI | SST-2 | CoLA | STS-B | MRPC | RTE | Average |

|:----:|:-----------:|:----:|:----:|:-----:|:----:|:-----:|:----:|:----:|:-------:|

| | 84.6/83.4 | 71.2 | 90.5 | 93.5 | 52.1 | 85.8 | 88.9 | 66.4 | 79.6 |

### BibTeX entry and citation info

```bibtex

@article{DBLP:journals/corr/abs-1810-04805,

author = {Jacob Devlin and

Ming{-}Wei Chang and

Kenton Lee and

Kristina Toutanova},

title = {{BERT:} Pre-training of Deep Bidirectional Transformers for Language

Understanding},

journal = {CoRR},

volume = {abs/1810.04805},

year = {2018},

url = {http://arxiv.org/abs/1810.04805},

archivePrefix = {arXiv},

eprint = {1810.04805},

timestamp = {Tue, 30 Oct 2018 20:39:56 +0100},

biburl = {https://dblp.org/rec/journals/corr/abs-1810-04805.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

<a href="https://huggingface.co/exbert/?model=bert-base-uncased">

<img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png">

</a>

| {"language": "en", "license": "apache-2.0", "tags": ["exbert"], "datasets": ["bookcorpus", "wikipedia"]} | benyong/testmodel | null | [

"transformers",

"pytorch",

"tf",

"jax",

"rust",

"bert",

"fill-mask",

"exbert",

"en",

"dataset:bookcorpus",

"dataset:wikipedia",

"arxiv:1810.04805",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

null | null | {} | benzajtil/DialoGPT-small-harrypotter | null | [

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

null | transformers |

# KcELECTRA: Korean comments ELECTRA

** Updates on 2022.10.08 **

- KcELECTRA-base-v2022 (구 v2022-dev) 모델 이름이 변경되었습니다. --> KcELECTRA-base 레포의 `v2022`로 통합되었습니다.

- 위 모델의 세부 스코어를 추가하였습니다.

- 기존 KcELECTRA-base(v2021) 대비 대부분의 downstream task에서 ~1%p 수준의 성능 향상이 있습니다.

---

공개된 한국어 Transformer 계열 모델들은 대부분 한국어 위키, 뉴스 기사, 책 등 잘 정제된 데이터를 기반으로 학습한 모델입니다. 한편, 실제로 NSMC와 같은 User-Generated Noisy text domain 데이터셋은 정제되지 않았고 구어체 특징에 신조어가 많으며, 오탈자 등 공식적인 글쓰기에서 나타나지 않는 표현들이 빈번하게 등장합니다.

KcELECTRA는 위와 같은 특성의 데이터셋에 적용하기 위해, 네이버 뉴스에서 댓글과 대댓글을 수집해, 토크나이저와 ELECTRA모델을 처음부터 학습한 Pretrained ELECTRA 모델입니다.

기존 KcBERT 대비 데이터셋 증가 및 vocab 확장을 통해 상당한 수준으로 성능이 향상되었습니다.

KcELECTRA는 Huggingface의 Transformers 라이브러리를 통해 간편히 불러와 사용할 수 있습니다. (별도의 파일 다운로드가 필요하지 않습니다.)

```

💡 NOTE 💡

General Corpus로 학습한 KoELECTRA가 보편적인 task에서는 성능이 더 잘 나올 가능성이 높습니다.

KcBERT/KcELECTRA는 User genrated, Noisy text에 대해서 보다 잘 동작하는 PLM입니다.

```

## KcELECTRA Performance

- Finetune 코드는 https://github.com/Beomi/KcBERT-finetune 에서 찾아보실 수 있습니다.

- 해당 Repo의 각 Checkpoint 폴더에서 Step별 세부 스코어를 확인하실 수 있습니다.

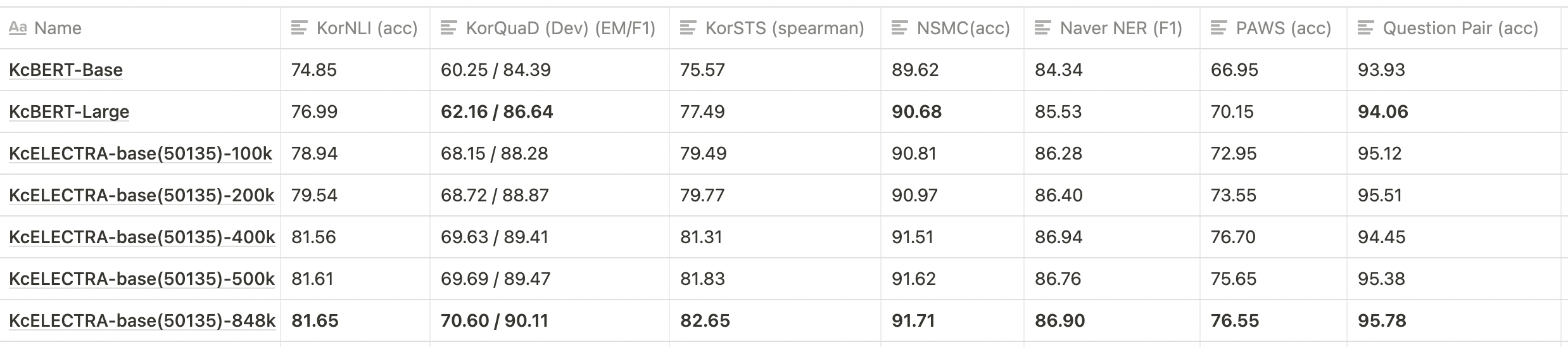

| | Size<br/>(용량) | **NSMC**<br/>(acc) | **Naver NER**<br/>(F1) | **PAWS**<br/>(acc) | **KorNLI**<br/>(acc) | **KorSTS**<br/>(spearman) | **Question Pair**<br/>(acc) | **KorQuaD (Dev)**<br/>(EM/F1) |

| :----------------- | :-------------: | :----------------: | :--------------------: | :----------------: | :------------------: | :-----------------------: | :-------------------------: | :---------------------------: |

| **KcELECTRA-base-v2022** | 475M | **91.97** | 87.35 | 76.50 | 82.12 | 83.67 | 95.12 | 69.00 / 90.40 |

| **KcELECTRA-base** | 475M | 91.71 | 86.90 | 74.80 | 81.65 | 82.65 | **95.78** | 70.60 / 90.11 |

| KcBERT-Base | 417M | 89.62 | 84.34 | 66.95 | 74.85 | 75.57 | 93.93 | 60.25 / 84.39 |

| KcBERT-Large | 1.2G | 90.68 | 85.53 | 70.15 | 76.99 | 77.49 | 94.06 | 62.16 / 86.64 |

| KoBERT | 351M | 89.63 | 86.11 | 80.65 | 79.00 | 79.64 | 93.93 | 52.81 / 80.27 |

| XLM-Roberta-Base | 1.03G | 89.49 | 86.26 | 82.95 | 79.92 | 79.09 | 93.53 | 64.70 / 88.94 |

| HanBERT | 614M | 90.16 | 87.31 | 82.40 | 80.89 | 83.33 | 94.19 | 78.74 / 92.02 |

| KoELECTRA-Base | 423M | 90.21 | 86.87 | 81.90 | 80.85 | 83.21 | 94.20 | 61.10 / 89.59 |

| KoELECTRA-Base-v2 | 423M | 89.70 | 87.02 | 83.90 | 80.61 | 84.30 | 94.72 | 84.34 / 92.58 |

| KoELECTRA-Base-v3 | 423M | 90.63 | **88.11** | **84.45** | **82.24** | **85.53** | 95.25 | **84.83 / 93.45** |

| DistilKoBERT | 108M | 88.41 | 84.13 | 62.55 | 70.55 | 73.21 | 92.48 | 54.12 / 77.80 |

\*HanBERT의 Size는 Bert Model과 Tokenizer DB를 합친 것입니다.

\***config의 세팅을 그대로 하여 돌린 결과이며, hyperparameter tuning을 추가적으로 할 시 더 좋은 성능이 나올 수 있습니다.**

## How to use

### Requirements

- `pytorch ~= 1.8.0`

- `transformers ~= 4.11.3`

- `emoji ~= 0.6.0`

- `soynlp ~= 0.0.493`

### Default usage

```python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("beomi/KcELECTRA-base")

model = AutoModel.from_pretrained("beomi/KcELECTRA-base")

```

> 💡 이전 KcBERT 관련 코드들에서 `AutoTokenizer`, `AutoModel` 을 사용한 경우 `.from_pretrained("beomi/kcbert-base")` 부분을 `.from_pretrained("beomi/KcELECTRA-base")` 로만 변경해주시면 즉시 사용이 가능합니다.

### Pretrain & Finetune Colab 링크 모음

#### Pretrain Data

- KcBERT학습에 사용한 데이터 + 이후 2021.03월 초까지 수집한 댓글

- 약 17GB

- 댓글-대댓글을 묶은 기반으로 Document 구성

#### Pretrain Code

- https://github.com/KLUE-benchmark/KLUE-ELECTRA Repo를 통한 Pretrain

#### Finetune Code

- https://github.com/Beomi/KcBERT-finetune Repo를 통한 Finetune 및 스코어 비교

#### Finetune Samples

- NSMC with PyTorch-Lightning 1.3.0, GPU, Colab <a href="https://colab.research.google.com/drive/1Hh63kIBAiBw3Hho--BvfdUWLu-ysMFF0?usp=sharing">

<img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/>

</a>

## Train Data & Preprocessing

### Raw Data

학습 데이터는 2019.01.01 ~ 2021.03.09 사이에 작성된 **댓글 많은 뉴스/혹은 전체 뉴스** 기사들의 **댓글과 대댓글**을 모두 수집한 데이터입니다.

데이터 사이즈는 텍스트만 추출시 **약 17.3GB이며, 1억8천만개 이상의 문장**으로 이뤄져 있습니다.

> KcBERT는 2019.01-2020.06의 텍스트로, 정제 후 약 9천만개 문장으로 학습을 진행했습니다.

### Preprocessing

PLM 학습을 위해서 전처리를 진행한 과정은 다음과 같습니다.

1. 한글 및 영어, 특수문자, 그리고 이모지(🥳)까지!

정규표현식을 통해 한글, 영어, 특수문자를 포함해 Emoji까지 학습 대상에 포함했습니다.

한편, 한글 범위를 `ㄱ-ㅎ가-힣` 으로 지정해 `ㄱ-힣` 내의 한자를 제외했습니다.

2. 댓글 내 중복 문자열 축약

`ㅋㅋㅋㅋㅋ`와 같이 중복된 글자를 `ㅋㅋ`와 같은 것으로 합쳤습니다.

3. Cased Model

KcBERT는 영문에 대해서는 대소문자를 유지하는 Cased model입니다.

4. 글자 단위 10글자 이하 제거

10글자 미만의 텍스트는 단일 단어로 이뤄진 경우가 많아 해당 부분을 제외했습니다.

5. 중복 제거

중복적으로 쓰인 댓글을 제거하기 위해 완전히 일치하는 중복 댓글을 하나로 합쳤습니다.

6. `OOO` 제거

네이버 댓글의 경우, 비속어는 자체 필터링을 통해 `OOO` 로 표시합니다. 이 부분을 공백으로 제거하였습니다.

아래 명령어로 pip로 설치한 뒤, 아래 clean함수로 클리닝을 하면 Downstream task에서 보다 성능이 좋아집니다. (`[UNK]` 감소)

```bash

pip install soynlp emoji

```

아래 `clean` 함수를 Text data에 사용해주세요.

```python

import re

import emoji

from soynlp.normalizer import repeat_normalize

emojis = ''.join(emoji.UNICODE_EMOJI.keys())

pattern = re.compile(f'[^ .,?!/@$%~%·∼()\x00-\x7Fㄱ-ㅣ가-힣{emojis}]+')

url_pattern = re.compile(

r'https?:\/\/(www\.)?[-a-zA-Z0-9@:%._\+~#=]{1,256}\.[a-zA-Z0-9()]{1,6}\b([-a-zA-Z0-9()@:%_\+.~#?&//=]*)')

import re

import emoji

from soynlp.normalizer import repeat_normalize

pattern = re.compile(f'[^ .,?!/@$%~%·∼()\x00-\x7Fㄱ-ㅣ가-힣]+')

url_pattern = re.compile(

r'https?:\/\/(www\.)?[-a-zA-Z0-9@:%._\+~#=]{1,256}\.[a-zA-Z0-9()]{1,6}\b([-a-zA-Z0-9()@:%_\+.~#?&//=]*)')

def clean(x):

x = pattern.sub(' ', x)

x = emoji.replace_emoji(x, replace='') #emoji 삭제

x = url_pattern.sub('', x)

x = x.strip()

x = repeat_normalize(x, num_repeats=2)

return x

```

> 💡 Finetune Score에서는 위 `clean` 함수를 적용하지 않았습니다.

### Cleaned Data

- KcBERT 외 추가 데이터는 정리 후 공개 예정입니다.

## Tokenizer, Model Train

Tokenizer는 Huggingface의 [Tokenizers](https://github.com/huggingface/tokenizers) 라이브러리를 통해 학습을 진행했습니다.

그 중 `BertWordPieceTokenizer` 를 이용해 학습을 진행했고, Vocab Size는 `30000`으로 진행했습니다.

Tokenizer를 학습하는 것에는 전체 데이터를 통해 학습을 진행했고, 모델의 General Downstream task에 대응하기 위해 KoELECTRA에서 사용한 Vocab을 겹치지 않는 부분을 추가로 넣어주었습니다. (실제로 두 모델이 겹치는 부분은 약 5000토큰이었습니다.)

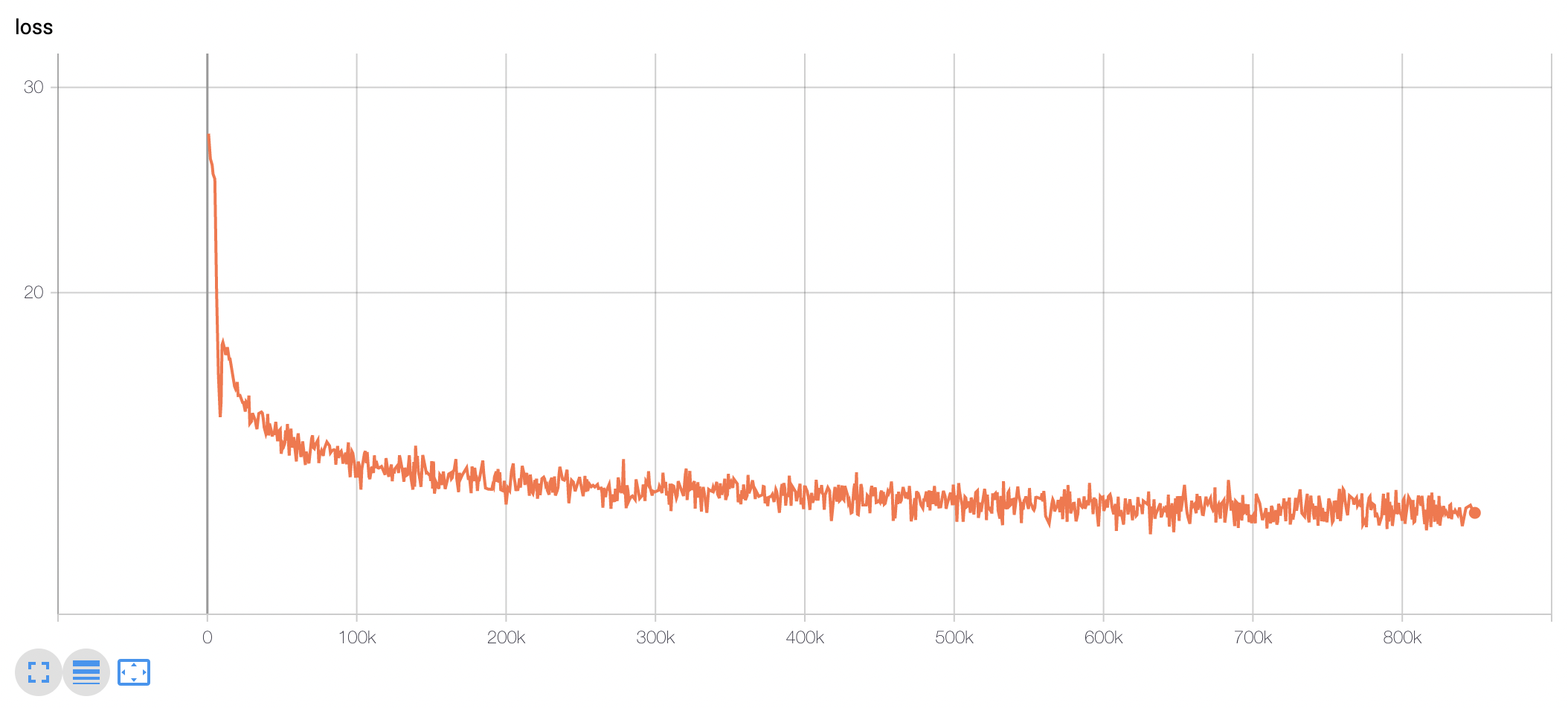

TPU `v3-8` 을 이용해 약 10일 학습을 진행했고, 현재 Huggingface에 공개된 모델은 848k step을 학습한 모델 weight가 업로드 되어있습니다.

(100k step별 Checkpoint를 통해 성능 평가를 진행하였습니다. 해당 부분은 `KcBERT-finetune` repo를 참고해주세요.)

모델 학습 Loss는 Step에 따라 초기 100-200k 사이에 급격히 Loss가 줄어들다 학습 종료까지도 지속적으로 loss가 감소하는 것을 볼 수 있습니다.

### KcELECTRA Pretrain Step별 Downstream task 성능 비교

> 💡 아래 표는 전체 ckpt가 아닌 일부에 대해서만 테스트를 진행한 결과입니다.

- 위와 같이 KcBERT-base, KcBERT-large 대비 **모든 데이터셋에 대해** KcELECTRA-base가 더 높은 성능을 보입니다.

- KcELECTRA pretrain에서도 Train step이 늘어감에 따라 점진적으로 성능이 향상되는 것을 볼 수 있습니다.

## 인용표기/Citation

KcELECTRA를 인용하실 때는 아래 양식을 통해 인용해주세요.

```

@misc{lee2021kcelectra,

author = {Junbum Lee},

title = {KcELECTRA: Korean comments ELECTRA},

year = {2021},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/Beomi/KcELECTRA}}

}

```

논문을 통한 사용 외에는 MIT 라이센스를 표기해주세요. ☺️

## Acknowledgement

KcELECTRA Model을 학습하는 GCP/TPU 환경은 [TFRC](https://www.tensorflow.org/tfrc?hl=ko) 프로그램의 지원을 받았습니다.

모델 학습 과정에서 많은 조언을 주신 [Monologg](https://github.com/monologg/) 님 감사합니다 :)

## Reference

### Github Repos

- [KcBERT by Beomi](https://github.com/Beomi/KcBERT)

- [BERT by Google](https://github.com/google-research/bert)

- [KoBERT by SKT](https://github.com/SKTBrain/KoBERT)

- [KoELECTRA by Monologg](https://github.com/monologg/KoELECTRA/)

- [Transformers by Huggingface](https://github.com/huggingface/transformers)

- [Tokenizers by Hugginface](https://github.com/huggingface/tokenizers)

- [ELECTRA train code by KLUE](https://github.com/KLUE-benchmark/KLUE-ELECTRA)

### Blogs

- [Monologg님의 KoELECTRA 학습기](https://monologg.kr/categories/NLP/ELECTRA/)

- [Colab에서 TPU로 BERT 처음부터 학습시키기 - Tensorflow/Google ver.](https://beomi.github.io/2020/02/26/Train-BERT-from-scratch-on-colab-TPU-Tensorflow-ver/)

| {"language": ["ko", "en"], "license": "mit", "tags": ["electra", "korean"]} | beomi/KcELECTRA-base | null | [

"transformers",

"pytorch",

"electra",

"pretraining",

"korean",

"ko",

"en",

"doi:10.57967/hf/0017",

"license:mit",

"endpoints_compatible",

"has_space",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

text2text-generation | transformers | {"license": "mit"} | beomi/KcT5-dev | null | [

"transformers",

"jax",

"t5",

"text2text-generation",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

null | null | {"license": "mit"} | beomi/KcT5 | null | [

"license:mit",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | beomi/beep-KR-Medium-hate | null | [

"transformers",

"pytorch",

"safetensors",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | beomi/beep-KcELECTRA-base-bias | null | [

"transformers",

"pytorch",

"safetensors",

"electra",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | beomi/beep-KcELECTRA-base-hate | null | [

"transformers",

"pytorch",

"electra",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

null | null | {} | beomi/beep-KcELECTRA-base-hate_singlelabel | null | [

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | beomi/beep-kcbert-base-bias | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | beomi/beep-kcbert-base-hate | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | beomi/beep-klue-roberta-base-bias | null | [

"transformers",

"pytorch",

"roberta",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | beomi/beep-klue-roberta-base-hate | null | [

"transformers",

"pytorch",

"roberta",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | beomi/beep-koelectra-base-v3-discriminator-bias | null | [

"transformers",

"pytorch",

"electra",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | beomi/beep-koelectra-base-v3-discriminator-hate | null | [

"transformers",

"pytorch",

"electra",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

null | null | {} | beomi/beep-roberta-base-hate | null | [

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

fill-mask | transformers | {} | beomi/detox-kcbert-base | null | [

"transformers",

"pytorch",

"bert",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-cola

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.7525

- Matthews Correlation: 0.5553

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |

|:-------------:|:-----:|:----:|:---------------:|:--------------------:|

| 0.523 | 1.0 | 535 | 0.5024 | 0.4160 |

| 0.3437 | 2.0 | 1070 | 0.5450 | 0.4965 |

| 0.2326 | 3.0 | 1605 | 0.6305 | 0.5189 |

| 0.177 | 4.0 | 2140 | 0.7525 | 0.5553 |

| 0.1354 | 5.0 | 2675 | 0.8630 | 0.5291 |

### Framework versions

- Transformers 4.11.3

- Pytorch 1.9.0+cu111

- Datasets 1.13.3

- Tokenizers 0.10.3

| {"license": "apache-2.0", "tags": ["generated_from_trainer"], "datasets": ["glue"], "metrics": ["matthews_correlation"], "model-index": [{"name": "distilbert-base-uncased-finetuned-cola", "results": [{"task": {"type": "text-classification", "name": "Text Classification"}, "dataset": {"name": "glue", "type": "glue", "args": "cola"}, "metrics": [{"type": "matthews_correlation", "value": 0.5552849676135797, "name": "Matthews Correlation"}]}]}]} | beomi/distilbert-base-uncased-finetuned-cola | null | [

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

fill-mask | transformers | {} | beomi/exKcBERT-kowiki | null | [

"transformers",

"pytorch",

"exbert",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

fill-mask | transformers | {} | beomi/exKcBERT-paws-extonly | null | [

"transformers",

"pytorch",

"exbert",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

fill-mask | transformers | {} | beomi/exKcBERT-paws | null | [

"transformers",

"pytorch",

"exbert",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

null | null | {} | beomi/exKcBERT | null | [

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

fill-mask | transformers | {} | beomi/kcbert-base-dev | null | [

"transformers",

"pytorch",

"jax",

"bert",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

fill-mask | transformers |

# KcBERT: Korean comments BERT

** Updates on 2021.04.07 **

- KcELECTRA가 릴리즈 되었습니다!🤗

- KcELECTRA는 보다 더 많은 데이터셋, 그리고 더 큰 General vocab을 통해 KcBERT 대비 **모든 태스크에서 더 높은 성능**을 보입니다.

- 아래 깃헙 링크에서 직접 사용해보세요!

- https://github.com/Beomi/KcELECTRA

** Updates on 2021.03.14 **

- KcBERT Paper 인용 표기를 추가하였습니다.(bibtex)

- KcBERT-finetune Performance score를 본문에 추가하였습니다.

** Updates on 2020.12.04 **

Huggingface Transformers가 v4.0.0으로 업데이트됨에 따라 Tutorial의 코드가 일부 변경되었습니다.

업데이트된 KcBERT-Large NSMC Finetuning Colab: <a href="https://colab.research.google.com/drive/1dFC0FL-521m7CL_PSd8RLKq67jgTJVhL?usp=sharing">

<img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/>

</a>

** Updates on 2020.09.11 **

KcBERT를 Google Colab에서 TPU를 통해 학습할 수 있는 튜토리얼을 제공합니다! 아래 버튼을 눌러보세요.

Colab에서 TPU로 KcBERT Pretrain 해보기: <a href="https://colab.research.google.com/drive/1lYBYtaXqt9S733OXdXvrvC09ysKFN30W">

<img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/>

</a>

텍스트 분량만 전체 12G 텍스트 중 일부(144MB)로 줄여 학습을 진행합니다.

한국어 데이터셋/코퍼스를 좀더 쉽게 사용할 수 있는 [Korpora](https://github.com/ko-nlp/Korpora) 패키지를 사용합니다.

** Updates on 2020.09.08 **

Github Release를 통해 학습 데이터를 업로드하였습니다.

다만 한 파일당 2GB 이내의 제약으로 인해 분할압축되어있습니다.

아래 링크를 통해 받아주세요. (가입 없이 받을 수 있어요. 분할압축)

만약 한 파일로 받고싶으시거나/Kaggle에서 데이터를 살펴보고 싶으시다면 아래의 캐글 데이터셋을 이용해주세요.

- Github릴리즈: https://github.com/Beomi/KcBERT/releases/tag/TrainData_v1

** Updates on 2020.08.22 **

Pretrain Dataset 공개

- 캐글: https://www.kaggle.com/junbumlee/kcbert-pretraining-corpus-korean-news-comments (한 파일로 받을 수 있어요. 단일파일)

Kaggle에 학습을 위해 정제한(아래 `clean`처리를 거친) Dataset을 공개하였습니다!

직접 다운받으셔서 다양한 Task에 학습을 진행해보세요 :)

---

공개된 한국어 BERT는 대부분 한국어 위키, 뉴스 기사, 책 등 잘 정제된 데이터를 기반으로 학습한 모델입니다. 한편, 실제로 NSMC와 같은 댓글형 데이터셋은 정제되지 않았고 구어체 특징에 신조어가 많으며, 오탈자 등 공식적인 글쓰기에서 나타나지 않는 표현들이 빈번하게 등장합니다.

KcBERT는 위와 같은 특성의 데이터셋에 적용하기 위해, 네이버 뉴스에서 댓글과 대댓글을 수집해, 토크나이저와 BERT모델을 처음부터 학습한 Pretrained BERT 모델입니다.

KcBERT는 Huggingface의 Transformers 라이브러리를 통해 간편히 불러와 사용할 수 있습니다. (별도의 파일 다운로드가 필요하지 않습니다.)

## KcBERT Performance

- Finetune 코드는 https://github.com/Beomi/KcBERT-finetune 에서 찾아보실 수 있습니다.

| | Size<br/>(용량) | **NSMC**<br/>(acc) | **Naver NER**<br/>(F1) | **PAWS**<br/>(acc) | **KorNLI**<br/>(acc) | **KorSTS**<br/>(spearman) | **Question Pair**<br/>(acc) | **KorQuaD (Dev)**<br/>(EM/F1) |

| :-------------------- | :---: | :----------------: | :--------------------: | :----------------: | :------------------: | :-----------------------: | :-------------------------: | :---------------------------: |

| KcBERT-Base | 417M | 89.62 | 84.34 | 66.95 | 74.85 | 75.57 | 93.93 | 60.25 / 84.39 |

| KcBERT-Large | 1.2G | **90.68** | 85.53 | 70.15 | 76.99 | 77.49 | 94.06 | 62.16 / 86.64 |

| KoBERT | 351M | 89.63 | 86.11 | 80.65 | 79.00 | 79.64 | 93.93 | 52.81 / 80.27 |

| XLM-Roberta-Base | 1.03G | 89.49 | 86.26 | 82.95 | 79.92 | 79.09 | 93.53 | 64.70 / 88.94 |

| HanBERT | 614M | 90.16 | **87.31** | 82.40 | **80.89** | 83.33 | 94.19 | 78.74 / 92.02 |

| KoELECTRA-Base | 423M | **90.21** | 86.87 | 81.90 | 80.85 | 83.21 | 94.20 | 61.10 / 89.59 |

| KoELECTRA-Base-v2 | 423M | 89.70 | 87.02 | **83.90** | 80.61 | **84.30** | **94.72** | **84.34 / 92.58** |

| DistilKoBERT | 108M | 88.41 | 84.13 | 62.55 | 70.55 | 73.21 | 92.48 | 54.12 / 77.80 |

\*HanBERT의 Size는 Bert Model과 Tokenizer DB를 합친 것입니다.

\***config의 세팅을 그대로 하여 돌린 결과이며, hyperparameter tuning을 추가적으로 할 시 더 좋은 성능이 나올 수 있습니다.**

## How to use

### Requirements

- `pytorch <= 1.8.0`

- `transformers ~= 3.0.1`

- `transformers ~= 4.0.0` 도 호환됩니다.

- `emoji ~= 0.6.0`

- `soynlp ~= 0.0.493`

```python

from transformers import AutoTokenizer, AutoModelWithLMHead

# Base Model (108M)

tokenizer = AutoTokenizer.from_pretrained("beomi/kcbert-base")

model = AutoModelWithLMHead.from_pretrained("beomi/kcbert-base")

# Large Model (334M)

tokenizer = AutoTokenizer.from_pretrained("beomi/kcbert-large")

model = AutoModelWithLMHead.from_pretrained("beomi/kcbert-large")

```

### Pretrain & Finetune Colab 링크 모음

#### Pretrain Data

- [데이터셋 다운로드(Kaggle, 단일파일, 로그인 필요)](https://www.kaggle.com/junbumlee/kcbert-pretraining-corpus-korean-news-comments)

- [데이터셋 다운로드(Github, 압축 여러파일, 로그인 불필요)](https://github.com/Beomi/KcBERT/releases/tag/TrainData_v1)

#### Pretrain Code

Colab에서 TPU로 KcBERT Pretrain 해보기: <a href="https://colab.research.google.com/drive/1lYBYtaXqt9S733OXdXvrvC09ysKFN30W">

<img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/>

</a>

#### Finetune Samples

**KcBERT-Base** NSMC Finetuning with PyTorch-Lightning (Colab) <a href="https://colab.research.google.com/drive/1fn4sVJ82BrrInjq6y5655CYPP-1UKCLb?usp=sharing">

<img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/>

</a>

**KcBERT-Large** NSMC Finetuning with PyTorch-Lightning (Colab) <a href="https://colab.research.google.com/drive/1dFC0FL-521m7CL_PSd8RLKq67jgTJVhL?usp=sharing">

<img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/>

</a>

> 위 두 코드는 Pretrain 모델(base, large)와 batch size만 다를 뿐, 나머지 코드는 완전히 동일합니다.

## Train Data & Preprocessing

### Raw Data

학습 데이터는 2019.01.01 ~ 2020.06.15 사이에 작성된 **댓글 많은 뉴스** 기사들의 **댓글과 대댓글**을 모두 수집한 데이터입니다.

데이터 사이즈는 텍스트만 추출시 **약 15.4GB이며, 1억1천만개 이상의 문장**으로 이뤄져 있습니다.

### Preprocessing

PLM 학습을 위해서 전처리를 진행한 과정은 다음과 같습니다.

1. 한글 및 영어, 특수문자, 그리고 이모지(🥳)까지!

정규표현식을 통해 한글, 영어, 특수문자를 포함해 Emoji까지 학습 대상에 포함했습니다.

한편, 한글 범위를 `ㄱ-ㅎ가-힣` 으로 지정해 `ㄱ-힣` 내의 한자를 제외했습니다.

2. 댓글 내 중복 문자열 축약

`ㅋㅋㅋㅋㅋ`와 같이 중복된 글자를 `ㅋㅋ`와 같은 것으로 합쳤습니다.

3. Cased Model

KcBERT는 영문에 대해서는 대소문자를 유지하는 Cased model입니다.

4. 글자 단위 10글자 이하 제거

10글자 미만의 텍스트는 단일 단어로 이뤄진 경우가 많아 해당 부분을 제외했습니다.

5. 중복 제거

중복적으로 쓰인 댓글을 제거하기 위해 중복 댓글을 하나로 합쳤습니다.

이를 통해 만든 최종 학습 데이터는 **12.5GB, 8.9천만개 문장**입니다.

아래 명령어로 pip로 설치한 뒤, 아래 clean함수로 클리닝을 하면 Downstream task에서 보다 성능이 좋아집니다. (`[UNK]` 감소)

```bash

pip install soynlp emoji

```

아래 `clean` 함수를 Text data에 사용해주세요.

```python

import re

import emoji

from soynlp.normalizer import repeat_normalize

emojis = list({y for x in emoji.UNICODE_EMOJI.values() for y in x.keys()})

emojis = ''.join(emojis)

pattern = re.compile(f'[^ .,?!/@$%~%·∼()\x00-\x7Fㄱ-ㅣ가-힣{emojis}]+')

url_pattern = re.compile(

r'https?:\/\/(www\.)?[-a-zA-Z0-9@:%._\+~#=]{1,256}\.[a-zA-Z0-9()]{1,6}\b([-a-zA-Z0-9()@:%_\+.~#?&//=]*)')

def clean(x):

x = pattern.sub(' ', x)

x = url_pattern.sub('', x)

x = x.strip()

x = repeat_normalize(x, num_repeats=2)

return x

```

### Cleaned Data (Released on Kaggle)

원본 데이터를 위 `clean`함수로 정제한 12GB분량의 txt 파일을 아래 Kaggle Dataset에서 다운받으실 수 있습니다 :)

https://www.kaggle.com/junbumlee/kcbert-pretraining-corpus-korean-news-comments

## Tokenizer Train

Tokenizer는 Huggingface의 [Tokenizers](https://github.com/huggingface/tokenizers) 라이브러리를 통해 학습을 진행했습니다.

그 중 `BertWordPieceTokenizer` 를 이용해 학습을 진행했고, Vocab Size는 `30000`으로 진행했습니다.

Tokenizer를 학습하는 것에는 `1/10`로 샘플링한 데이터로 학습을 진행했고, 보다 골고루 샘플링하기 위해 일자별로 stratify를 지정한 뒤 햑습을 진행했습니다.

## BERT Model Pretrain

- KcBERT Base config

```json

{

"max_position_embeddings": 300,

"hidden_dropout_prob": 0.1,

"hidden_act": "gelu",

"initializer_range": 0.02,

"num_hidden_layers": 12,

"type_vocab_size": 2,

"vocab_size": 30000,

"hidden_size": 768,

"attention_probs_dropout_prob": 0.1,

"directionality": "bidi",

"num_attention_heads": 12,

"intermediate_size": 3072,

"architectures": [

"BertForMaskedLM"

],

"model_type": "bert"

}

```

- KcBERT Large config

```json

{

"type_vocab_size": 2,

"initializer_range": 0.02,

"max_position_embeddings": 300,

"vocab_size": 30000,

"hidden_size": 1024,

"hidden_dropout_prob": 0.1,

"model_type": "bert",

"directionality": "bidi",

"pad_token_id": 0,

"layer_norm_eps": 1e-12,

"hidden_act": "gelu",

"num_hidden_layers": 24,

"num_attention_heads": 16,

"attention_probs_dropout_prob": 0.1,

"intermediate_size": 4096,

"architectures": [

"BertForMaskedLM"

]

}

```

BERT Model Config는 Base, Large 기본 세팅값을 그대로 사용했습니다. (MLM 15% 등)

TPU `v3-8` 을 이용해 각각 3일, N일(Large는 학습 진행 중)을 진행했고, 현재 Huggingface에 공개된 모델은 1m(100만) step을 학습한 ckpt가 업로드 되어있습니다.

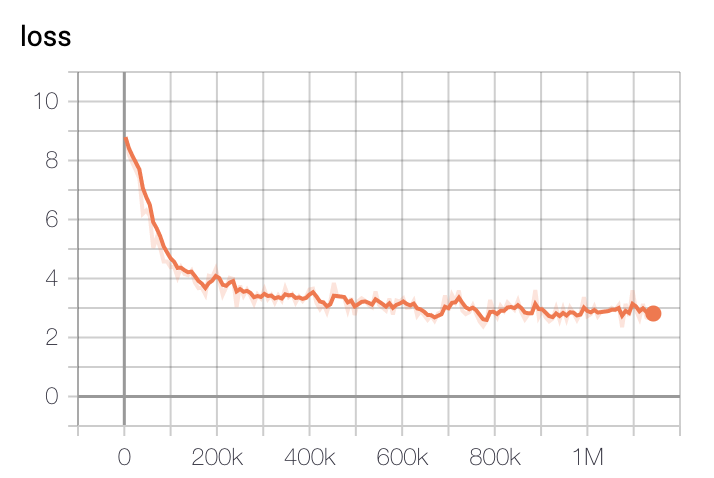

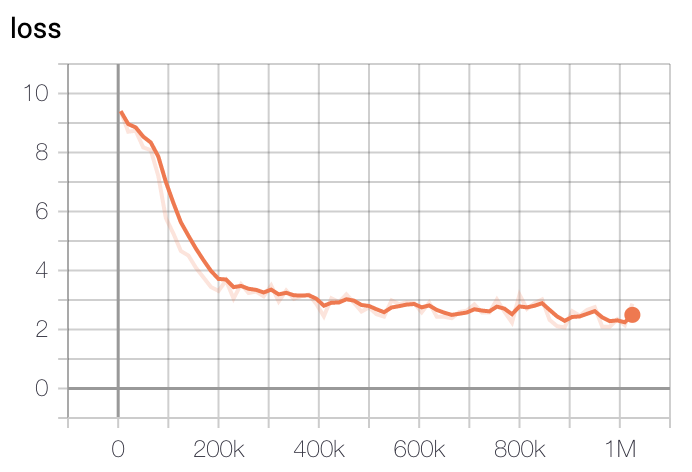

모델 학습 Loss는 Step에 따라 초기 200k에 가장 빠르게 Loss가 줄어들다 400k이후로는 조금씩 감소하는 것을 볼 수 있습니다.

- Base Model Loss

- Large Model Loss

학습은 GCP의 TPU v3-8을 이용해 학습을 진행했고, 학습 시간은 Base Model 기준 2.5일정도 진행했습니다. Large Model은 약 5일정도 진행한 뒤 가장 낮은 loss를 가진 체크포인트로 정했습니다.

## Example

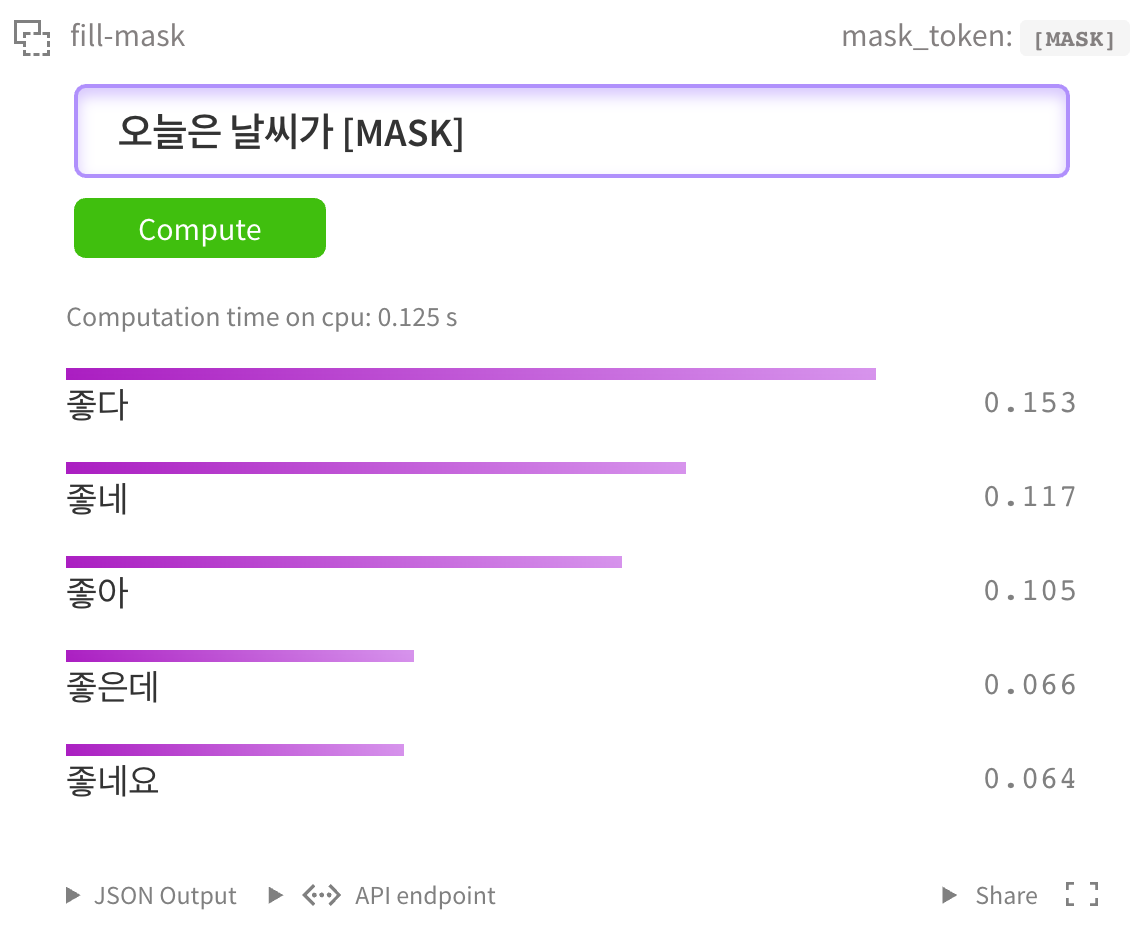

### HuggingFace MASK LM

[HuggingFace kcbert-base 모델](https://huggingface.co/beomi/kcbert-base?text=오늘은+날씨가+[MASK]) 에서 아래와 같이 테스트 해 볼 수 있습니다.

물론 [kcbert-large 모델](https://huggingface.co/beomi/kcbert-large?text=오늘은+날씨가+[MASK]) 에서도 테스트 할 수 있습니다.

### NSMC Binary Classification

[네이버 영화평 코퍼스](https://github.com/e9t/nsmc) 데이터셋을 대상으로 Fine Tuning을 진행해 성능을 간단히 테스트해보았습니다.

Base Model을 Fine Tune하는 코드는 <a href="https://colab.research.google.com/drive/1fn4sVJ82BrrInjq6y5655CYPP-1UKCLb?usp=sharing">

<img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/>

</a> 에서 직접 실행해보실 수 있습니다.

Large Model을 Fine Tune하는 코드는 <a href="https://colab.research.google.com/drive/1dFC0FL-521m7CL_PSd8RLKq67jgTJVhL?usp=sharing">

<img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/>

</a> 에서 직접 실행해볼 수 있습니다.

- GPU는 P100 x1대 기준 1epoch에 2-3시간, TPU는 1epoch에 1시간 내로 소요됩니다.

- GPU RTX Titan x4대 기준 30분/epoch 소요됩니다.

- 예시 코드는 [pytorch-lightning](https://github.com/PyTorchLightning/pytorch-lightning)으로 개발했습니다.

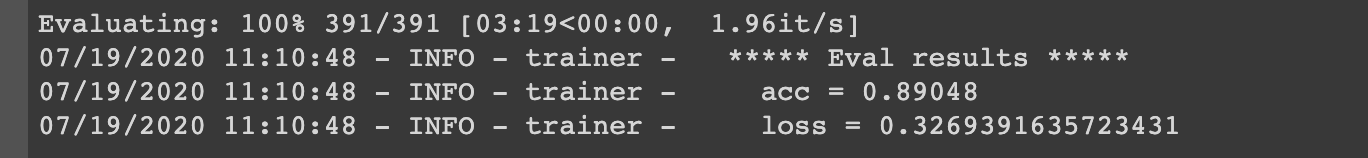

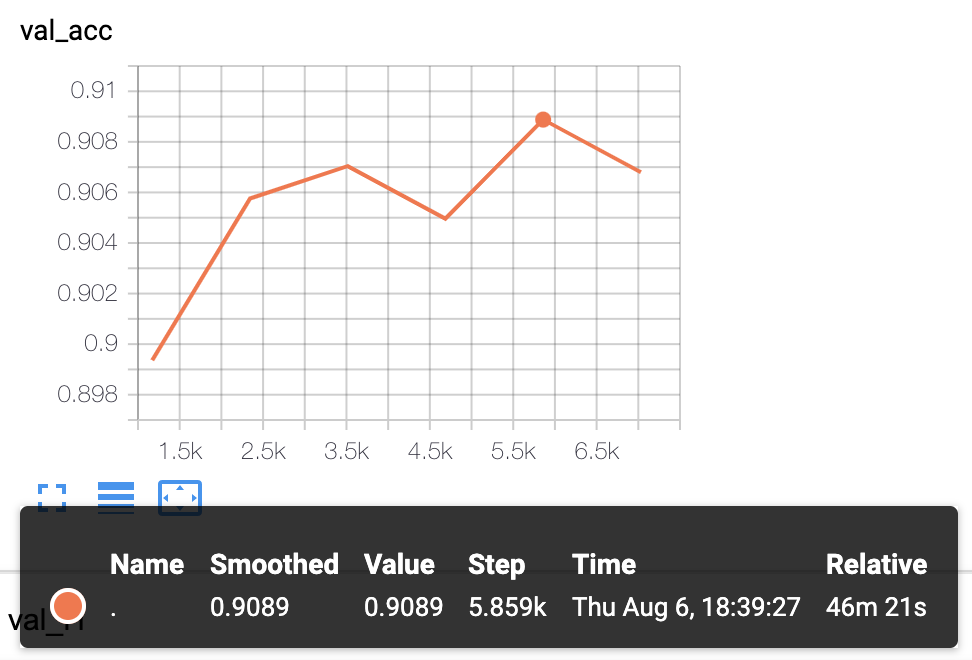

#### 실험결과

- KcBERT-Base Model 실험결과: Val acc `.8905`

- KcBERT-Large Model 실험 결과: Val acc `.9089`

> 더 다양한 Downstream Task에 대해 테스트를 진행하고 공개할 예정입니다.

## 인용표기/Citation

KcBERT를 인용하실 때는 아래 양식을 통해 인용해주세요.

```

@inproceedings{lee2020kcbert,

title={KcBERT: Korean Comments BERT},

author={Lee, Junbum},

booktitle={Proceedings of the 32nd Annual Conference on Human and Cognitive Language Technology},

pages={437--440},

year={2020}

}

```

- 논문집 다운로드 링크: http://hclt.kr/dwn/?v=bG5iOmNvbmZlcmVuY2U7aWR4OjMy (*혹은 http://hclt.kr/symp/?lnb=conference )

## Acknowledgement

KcBERT Model을 학습하는 GCP/TPU 환경은 [TFRC](https://www.tensorflow.org/tfrc?hl=ko) 프로그램의 지원을 받았습니다.

모델 학습 과정에서 많은 조언을 주신 [Monologg](https://github.com/monologg/) 님 감사합니다 :)

## Reference

### Github Repos

- [BERT by Google](https://github.com/google-research/bert)

- [KoBERT by SKT](https://github.com/SKTBrain/KoBERT)

- [KoELECTRA by Monologg](https://github.com/monologg/KoELECTRA/)

- [Transformers by Huggingface](https://github.com/huggingface/transformers)

- [Tokenizers by Hugginface](https://github.com/huggingface/tokenizers)

### Papers

- [BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding](https://arxiv.org/abs/1810.04805)

### Blogs

- [Monologg님의 KoELECTRA 학습기](https://monologg.kr/categories/NLP/ELECTRA/)

- [Colab에서 TPU로 BERT 처음부터 학습시키기 - Tensorflow/Google ver.](https://beomi.github.io/2020/02/26/Train-BERT-from-scratch-on-colab-TPU-Tensorflow-ver/)

| {"language": "ko", "license": "apache-2.0", "tags": ["korean"]} | beomi/kcbert-base | null | [

"transformers",

"pytorch",

"jax",

"safetensors",

"bert",

"fill-mask",

"korean",

"ko",

"arxiv:1810.04805",

"doi:10.57967/hf/0016",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

fill-mask | transformers | {} | beomi/kcbert-large-dev | null | [

"transformers",

"pytorch",

"jax",

"bert",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

fill-mask | transformers | {} | beomi/kcbert-large | null | [

"transformers",

"pytorch",

"jax",

"safetensors",

"bert",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-generation | transformers | {} | beomi/kcgpt2-dev | null | [

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-generation | transformers | {} | beomi/kcgpt2 | null | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

fill-mask | transformers | {} | beomi/kobert | null | [

"transformers",

"pytorch",

"safetensors",

"bert",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | beomi/korean-hatespeech-classifier | null | [

"transformers",

"pytorch",

"electra",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | beomi/korean-hatespeech-multilabel | null | [

"transformers",

"pytorch",

"electra",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | beomi/korean-lgbt-hatespeech-classifier | null | [

"transformers",

"pytorch",

"electra",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-generation | transformers |

# Bert base model for Korean

## Update

- Update at 2021.11.17 : Add Native Support for BERT Tokenizer (works with AutoTokenizer, pipeline)

---

* 70GB Korean text dataset and 42000 lower-cased subwords are used

* Check the model performance and other language models for Korean in [github](https://github.com/kiyoungkim1/LM-kor)

```python

from transformers import pipeline

pipe = pipeline('text-generation', model='beomi/kykim-gpt3-kor-small_based_on_gpt2')

print(pipe("안녕하세요! 오늘은"))

# [{'generated_text': '안녕하세요! 오늘은 제가 요즘 사용하고 있는 클렌징워터를 소개해드리려고 해요! 바로 이 제품!! 바로 이'}]

```

| {"language": "ko"} | beomi/kykim-gpt3-kor-small_based_on_gpt2 | null | [

"transformers",

"pytorch",

"tf",

"jax",

"gpt2",

"text-generation",

"ko",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

token-classification | transformers | # LayoutXLM finetuned on XFUN.ja

```python

import torch

import numpy as np

from PIL import Image, ImageDraw, ImageFont

from pathlib import Path

from itertools import chain

from tqdm.notebook import tqdm

from pdf2image import convert_from_path

from transformers import LayoutXLMProcessor, LayoutLMv2ForTokenClassification

import os

os.environ["TOKENIZERS_PARALLELISM"] = "false"

labels = [

'O',

'B-QUESTION',

'B-ANSWER',

'B-HEADER',

'I-ANSWER',

'I-QUESTION',

'I-HEADER'

]

id2label = {v: k for v, k in enumerate(labels)}

label2id = {k: v for v, k in enumerate(labels)}

def unnormalize_box(bbox, width, height):

return [

width * (bbox[0] / 1000),

height * (bbox[1] / 1000),

width * (bbox[2] / 1000),

height * (bbox[3] / 1000),

]

def iob_to_label(label):

label = label[2:]

if not label:

return 'other'

return label

label2color = {'question':'blue', 'answer':'green', 'header':'orange', 'other':'violet'}

def infer(image, processor, model, label2color):

# Use this if you're loading images

# image = Image.open(img_path).convert("RGB")

image = image.convert("RGB") # loading PDFs

encoding = processor(image, return_offsets_mapping=True, return_tensors="pt", truncation=True, max_length=514)

offset_mapping = encoding.pop('offset_mapping')

outputs = model(**encoding)

predictions = outputs.logits.argmax(-1).squeeze().tolist()

token_boxes = encoding.bbox.squeeze().tolist()

width, height = image.size

is_subword = np.array(offset_mapping.squeeze().tolist())[:,0] != 0

true_predictions = [id2label[pred] for idx, pred in enumerate(predictions) if not is_subword[idx]]

true_boxes = [unnormalize_box(box, width, height) for idx, box in enumerate(token_boxes) if not is_subword[idx]]

draw = ImageDraw.Draw(image)

font = ImageFont.load_default()

for prediction, box in zip(true_predictions, true_boxes):

predicted_label = iob_to_label(prediction).lower()

draw.rectangle(box, outline=label2color[predicted_label])

draw.text((box[0]+10, box[1]-10), text=predicted_label, fill=label2color[predicted_label], font=font)

return image

processor = LayoutXLMProcessor.from_pretrained('beomus/layoutxlm')

model = LayoutLMv2ForTokenClassification.from_pretrained("beomus/layoutxlm")

# imgs = [img_path for img_path in Path('/your/path/imgs/').glob('*.jpg')]

imgs = [convert_from_path(img_path) for img_path in Path('/your/path/pdfs/').glob('*.pdf')]

imgs = list(chain.from_iterable(imgs))

outputs = [infer(img_path, processor, model, label2color) for img_path in tqdm(imgs)]

# type(outputs[0]) -> PIL.Image.Image

``` | {} | beomus/layoutxlm | null | [

"transformers",

"pytorch",

"layoutlmv2",

"token-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

text-generation | transformers | {} | beomus/lotr-gpt | null | [

"transformers",

"pytorch",

"safetensors",

"gpt2",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

null | null | {} | bergr7/finbert_sec | null | [

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers |

# xtremedistil-emotion

This model is a fine-tuned version of [microsoft/xtremedistil-l6-h256-uncased](https://huggingface.co/microsoft/xtremedistil-l6-h256-uncased) on the emotion dataset.

It achieves the following results on the evaluation set:

- Accuracy: 0.9265

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 128

- eval_batch_size: 8

- seed: 42

- num_epochs: 24

### Training results

<pre>

Epoch Training Loss Validation Loss Accuracy

1 No log 1.238589 0.609000

2 No log 0.934423 0.714000

3 No log 0.768701 0.742000

4 1.074800 0.638208 0.805500

5 1.074800 0.551363 0.851500

6 1.074800 0.476291 0.875500

7 1.074800 0.427313 0.883500

8 0.531500 0.392633 0.886000

9 0.531500 0.357979 0.892000

10 0.531500 0.330304 0.899500

11 0.531500 0.304529 0.907000

12 0.337200 0.287447 0.918000

13 0.337200 0.277067 0.921000

14 0.337200 0.259483 0.921000

15 0.337200 0.257564 0.916500

16 0.246200 0.241970 0.919500

17 0.246200 0.241537 0.921500

18 0.246200 0.235705 0.924500

19 0.246200 0.237325 0.920500

20 0.201400 0.229699 0.923500

21 0.201400 0.227426 0.923000

22 0.201400 0.228554 0.924000

23 0.201400 0.226941 0.925500

24 0.184300 0.225816 0.926500

</pre>

| {"license": "apache-2.0", "tags": ["generated_from_trainer"], "datasets": ["emotion"], "metrics": ["accuracy"], "model-index": [{"name": "xtremedistil-emotion", "results": [{"task": {"type": "text-classification", "name": "Text Classification"}, "dataset": {"name": "emotion", "type": "emotion", "args": "default"}, "metrics": [{"type": "accuracy", "value": 0.9265, "name": "Accuracy"}]}, {"task": {"type": "text-classification", "name": "Text Classification"}, "dataset": {"name": "emotion", "type": "emotion", "config": "default", "split": "test"}, "metrics": [{"type": "accuracy", "value": 0.926, "name": "Accuracy", "verified": true, "verifyToken": "eyJhbGciOiJFZERTQSIsInR5cCI6IkpXVCJ9.eyJoYXNoIjoiYzE3NDg5Y2ZkMDE5OTJmNjYwMTU1MDMwOTUwNTdkOWQ0MWNiZDYxYzUwNDBmNGVkOWU0OWE1MzRiNDYyZDI3NyIsInZlcnNpb24iOjF9.BaDj-FQ6g0cRk7n2MlN2YCb8Iv2VIM2wMwnJeeCTjG15b7TRRfZVtM3CM2WvHymahppscpiqgqPxT7JqkVXkAQ"}, {"type": "precision", "value": 0.8855308537052737, "name": "Precision Macro", "verified": true, "verifyToken": "eyJhbGciOiJFZERTQSIsInR5cCI6IkpXVCJ9.eyJoYXNoIjoiZGQ3MDlmOTdmZTY3Mjc5MmE1ZmFlZTVhOWIxYjA3ZDRmNjM4YmYzNTVmZTYwNmI2OTRmYmE3NDMyOTIxM2RjOSIsInZlcnNpb24iOjF9.r1_TDJRi4RJfhVlFDe83mRtdhqt5KMtvran6qjzRrcwXqNz7prkocFmgNnntn-fqgg6AXgyi6lwVDcuj5L5VBA"}, {"type": "precision", "value": 0.926, "name": "Precision Micro", "verified": true, "verifyToken": "eyJhbGciOiJFZERTQSIsInR5cCI6IkpXVCJ9.eyJoYXNoIjoiNzMzMzc4MWY1M2E5Y2M2ZTRiYTc2YzA5YzI4ZWM5MjgzMDgyNjZkMTVjZDYxZGJiMjI0NDdiMWU3ZWM5MjhjYSIsInZlcnNpb24iOjF9.741rqCRY5S8z_QodJ0PvcnccCN79fCE-MeNTEWFegI0oReneULyNOKRulxwxzwY5SN6ILm52xW7km5WJyt8MCg"}, {"type": "precision", "value": 0.9281282413639949, "name": "Precision Weighted", "verified": true, "verifyToken": "eyJhbGciOiJFZERTQSIsInR5cCI6IkpXVCJ9.eyJoYXNoIjoiODVlOTM3ODVhMWM0MjU4Mzg2OGNkYjc2ZmExODYzOWIzYjdlYzE4OWE0ZWI4ZjcxMjJiMGJiMzdhN2RiNTdlNiIsInZlcnNpb24iOjF9.8-HhpgKNt3nTcblnes4KxzsD7Xot3C6Rldp4463H9gaUNBxHcH19mFcpaSaDT_L3mYqetcW891jyNrHoATzuAg"}, {"type": "recall", "value": 0.8969894921856228, "name": "Recall Macro", "verified": true, "verifyToken": "eyJhbGciOiJFZERTQSIsInR5cCI6IkpXVCJ9.eyJoYXNoIjoiYTkxYzZiMzY5YjA3ZjExYmNlNGI4N2Q5NTg0MTcxODgxOTc0MjdhM2FjODAzNjhiNDBjMWY2NWUyMjhhYjNiNSIsInZlcnNpb24iOjF9.t5YyyNtkbaGfLVbFIO15wh6o6BqBIXGTEBheffPax61-cZM0HRQg9BufcHFdZ4dvPd_V_AYWrXdarEm-gLSBBg"}, {"type": "recall", "value": 0.926, "name": "Recall Micro", "verified": true, "verifyToken": "eyJhbGciOiJFZERTQSIsInR5cCI6IkpXVCJ9.eyJoYXNoIjoiZjAxMTUzMmI1YmMwYTBmYzFmM2E3Y2NiY2M4Njc4ZDc1ZWRhMTMyMDVhMWNiMGQ1ZDRiMjcwYmQ0MDAxZmI3NSIsInZlcnNpb24iOjF9.OphK_nR4EkaAUGMdZDq1rP_oBivfLHQhE7XY1HP9izhDd6rV5KobTrSdoxVCHGUtjOm1M6eZqI_1rPpunoCqDQ"}, {"type": "recall", "value": 0.926, "name": "Recall Weighted", "verified": true, "verifyToken": "eyJhbGciOiJFZERTQSIsInR5cCI6IkpXVCJ9.eyJoYXNoIjoiMGYxYWZlZmY1MWE4ZTU5YzlmZjA3MjVkZGFlMjk4NjFmMTIwZTNlMWU2ZWE1YWE3ZTc3MzI4NmJhYjM5Y2M5NCIsInZlcnNpb24iOjF9.zRx5GUnSb-T6E3s3NsWn1c1szm63jlB8XeqBUZ3J0m5H6P-QAPcVTaMVn8id-_IExS4g856-dT9YMq3pRh91DQ"}, {"type": "f1", "value": 0.8903400738742536, "name": "F1 Macro", "verified": true, "verifyToken": "eyJhbGciOiJFZERTQSIsInR5cCI6IkpXVCJ9.eyJoYXNoIjoiMzE1NDYxYTdiNjAwYzllZmY4ODc1ZTc1YjMyZjA4Njc1NDhjNDM5ZWNmOThjNzQ1MDE5ZDEyMTY0YTljZDcyMiIsInZlcnNpb24iOjF9.j4U3aOySF94GUF94YGA7DPjynVJ7wStBPu8uinEz_AjQFISv8YvHZOO--Kv2S4iKJPQNSGjmqP8jwtVEKt6-AA"}, {"type": "f1", "value": 0.926, "name": "F1 Micro", "verified": true, "verifyToken": "eyJhbGciOiJFZERTQSIsInR5cCI6IkpXVCJ9.eyJoYXNoIjoiNTFmYzdiM2FmZDIyMjkxZDk2NGFkMjU4OWJjYzQ1MTJkZThiMmMzYTUzZmJlNjNmYTFlOTRkMTZjODI2NDdiYyIsInZlcnNpb24iOjF9.VY3hvPQL588GY4j9cCJRj1GWZWsdgkRV1F5DKhckC74-w2qFK10zgqSEbb_uhOg3IYLcXev9f8dhIOVcOCPvDg"}, {"type": "f1", "value": 0.9265018282649476, "name": "F1 Weighted", "verified": true, "verifyToken": "eyJhbGciOiJFZERTQSIsInR5cCI6IkpXVCJ9.eyJoYXNoIjoiM2MyNjM2OGMzYzg5ODFiOWI0ZTkxMDAxYTRkNDYwZWIyZGUyYzhhYTUwYWM4NzJhYTk3MGU2N2E5ZTcyNWExMyIsInZlcnNpb24iOjF9.p_7UeUdm-Qy6yfUlZA9EmtAKUzxhfkDTUMkzNRLJ3HD3aFHHwOo8jIY3lEZ-QkucT-jhofgbnQ-jR56HmB1JDw"}, {"type": "loss", "value": 0.2258329838514328, "name": "loss", "verified": true, "verifyToken": "eyJhbGciOiJFZERTQSIsInR5cCI6IkpXVCJ9.eyJoYXNoIjoiZTQwM2Y4NGI0MmQwMDkxMTBiYTdlYjkwNjdiMjVhMGZhOTk0Y2MwMmVlODg2YTczNzg1MGZiMDM2NzIyMzE5ZCIsInZlcnNpb24iOjF9.gCzWQrRm8UsOEcZvT_zC568FZmIcQf8G177IDQmxGVGg1vrOonfnPLX1_xlbcID4vDGeVuw5xYEpxXOAc19GDw"}]}]}]} | bergum/xtremedistil-emotion | null | [

"transformers",

"pytorch",

"safetensors",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:emotion",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

text-classification | transformers | # xtremedistil-l6-h384-emotion

This model is a fine-tuned version of [microsoft/xtremedistil-l6-h384-uncased](https://huggingface.co/microsoft/xtremedistil-l6-h384-uncased) on the emotion dataset.

It achieves the following results on the evaluation set:

- Accuracy: 0.928

This model can be quantized to int8 and retain accuracy

- Accuracy 0.912

<pre>

import transformers

import transformers.convert_graph_to_onnx as onnx_convert

from pathlib import Path

pipeline = transformers.pipeline("text-classification",model=model,tokenizer=tokenizer)

onnx_convert.convert_pytorch(pipeline, opset=11, output=Path("xtremedistil-l6-h384-emotion.onnx"), use_external_format=False)

from onnxruntime.quantization import quantize_dynamic, QuantType

quantize_dynamic("xtremedistil-l6-h384-emotion.onnx", "xtremedistil-l6-h384-emotion-int8.onnx",

weight_type=QuantType.QUInt8)

</pre>

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 128

- eval_batch_size: 8

- seed: 42

- num_epochs: 14

### Training results

<pre>

Epoch Training Loss Validation Loss Accuracy

1 No log 0.960511 0.689000

2 No log 0.620671 0.824000

3 No log 0.435741 0.880000

4 0.797900 0.341771 0.896000

5 0.797900 0.294780 0.916000

6 0.797900 0.250572 0.918000

7 0.797900 0.232976 0.924000

8 0.277300 0.216347 0.924000

9 0.277300 0.202306 0.930500

10 0.277300 0.192530 0.930000

11 0.277300 0.192500 0.926500

12 0.181700 0.187347 0.928500

13 0.181700 0.185896 0.929500

14 0.181700 0.185154 0.928000

</pre> | {"license": "apache-2.0", "tags": ["generated_from_trainer"], "datasets": ["emotion"], "metrics": ["accuracy"], "model-index": [{"name": "xtremedistil-l6-h384-emotion", "results": [{"task": {"type": "text-classification", "name": "Text Classification"}, "dataset": {"name": "emotion", "type": "emotion", "args": "default"}, "metrics": [{"type": "accuracy", "value": 0.928, "name": "Accuracy"}]}]}]} | bergum/xtremedistil-l6-h384-emotion | null | [

"transformers",

"pytorch",

"safetensors",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:emotion",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

text-classification | transformers | # xtremedistil-l6-h384-go-emotion

This model is a fine-tuned version of [microsoft/xtremedistil-l6-h384-uncased](https://huggingface.co/microsoft/xtremedistil-l6-h384-uncased) on the

[go_emotions dataset](https://huggingface.co/datasets/go_emotions).

See notebook for how the model was trained and converted to ONNX format [](https://colab.research.google.com/github/jobergum/emotion/blob/main/TrainGoEmotions.ipynb)

This model is deployed to [aiserv.cloud](https://aiserv.cloud/) for live demo of the model.

See [https://github.com/jobergum/browser-ml-inference](https://github.com/jobergum/browser-ml-inference) for how to reproduce.

### Training hyperparameters

- batch size 128

- learning_rate=3e-05

- epocs 4

<pre>

Num examples = 211225

Num Epochs = 4

Instantaneous batch size per device = 128

Total train batch size (w. parallel, distributed & accumulation) = 128

Gradient Accumulation steps = 1

Total optimization steps = 6604

[6604/6604 53:23, Epoch 4/4]

Step Training Loss

500 0.263200

1000 0.156900

1500 0.152500

2000 0.145400

2500 0.140500

3000 0.135900

3500 0.132800

4000 0.129400

4500 0.127200

5000 0.125700

5500 0.124400

6000 0.124100

6500 0.123400

</pre> | {"license": "apache-2.0", "datasets": ["go_emotions"], "metrics": ["accuracy"], "model-index": [{"name": "xtremedistil-emotion", "results": [{"task": {"type": "multi_label_classification", "name": "Multi Label Text Classification"}, "dataset": {"name": "go_emotions", "type": "emotion", "args": "default"}, "metrics": [{"type": "accuracy", "value": "NaN", "name": "Accuracy"}]}]}]} | bergum/xtremedistil-l6-h384-go-emotion | null | [

"transformers",

"pytorch",

"safetensors",

"bert",

"text-classification",

"dataset:go_emotions",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

token-classification | transformers |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# IceBERT-finetuned-ner

This model is a fine-tuned version of [vesteinn/IceBERT](https://huggingface.co/vesteinn/IceBERT) on the mim_gold_ner dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0783

- Precision: 0.8873

- Recall: 0.8627

- F1: 0.8748

- Accuracy: 0.9848

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.0539 | 1.0 | 2904 | 0.0768 | 0.8732 | 0.8453 | 0.8590 | 0.9833 |

| 0.0281 | 2.0 | 5808 | 0.0737 | 0.8781 | 0.8492 | 0.8634 | 0.9838 |

| 0.0166 | 3.0 | 8712 | 0.0783 | 0.8873 | 0.8627 | 0.8748 | 0.9848 |

### Framework versions

- Transformers 4.11.2

- Pytorch 1.9.0+cu102

- Datasets 1.12.1

- Tokenizers 0.10.3

| {"license": "gpl-3.0", "tags": ["generated_from_trainer"], "datasets": ["mim_gold_ner"], "metrics": ["precision", "recall", "f1", "accuracy"], "widget": [{"text": "Bob Dillan beit Mar\u00edu Markan \u00e1 barkann."}], "model-index": [{"name": "IceBERT-finetuned-ner", "results": [{"task": {"type": "token-classification", "name": "Token Classification"}, "dataset": {"name": "mim_gold_ner", "type": "mim_gold_ner", "args": "mim-gold-ner"}, "metrics": [{"type": "precision", "value": 0.8873049035270985, "name": "Precision"}, {"type": "recall", "value": 0.8627076114231091, "name": "Recall"}, {"type": "f1", "value": 0.8748333939173634, "name": "F1"}, {"type": "accuracy", "value": 0.9848076353832492, "name": "Accuracy"}]}]}]} | bergurth/IceBERT-finetuned-ner | null | [

"transformers",

"pytorch",

"tensorboard",

"roberta",

"token-classification",

"generated_from_trainer",

"dataset:mim_gold_ner",

"license:gpl-3.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

token-classification | transformers |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# XLMR-ENIS-finetuned-ner

This model is a fine-tuned version of [vesteinn/XLMR-ENIS](https://huggingface.co/vesteinn/XLMR-ENIS) on the mim_gold_ner dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0938

- Precision: 0.8619

- Recall: 0.8384

- F1: 0.8500

- Accuracy: 0.9831

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.0574 | 1.0 | 2904 | 0.0983 | 0.8374 | 0.8061 | 0.8215 | 0.9795 |

| 0.0321 | 2.0 | 5808 | 0.0991 | 0.8525 | 0.8235 | 0.8378 | 0.9811 |

| 0.0179 | 3.0 | 8712 | 0.0938 | 0.8619 | 0.8384 | 0.8500 | 0.9831 |

### Framework versions

- Transformers 4.11.2

- Pytorch 1.9.0+cu102

- Datasets 1.12.1

- Tokenizers 0.10.3

| {"license": "agpl-3.0", "tags": ["generated_from_trainer"], "datasets": ["mim_gold_ner"], "metrics": ["precision", "recall", "f1", "accuracy"], "widget": [{"text": "B\u00f3nus fe\u00f0garnir J\u00f3hannes J\u00f3nsson og J\u00f3n \u00c1sgeir J\u00f3hannesson opnu\u00f0u fyrstu B\u00f3nusb\u00fa\u00f0ina \u00ed 400 fermetra h\u00fasn\u00e6\u00f0i vi\u00f0 Sk\u00fatuvog laugardaginn 8. apr\u00edl 1989"}], "model-index": [{"name": "XLMR-ENIS-finetuned-ner", "results": [{"task": {"type": "token-classification", "name": "Token Classification"}, "dataset": {"name": "mim_gold_ner", "type": "mim_gold_ner", "args": "mim-gold-ner"}, "metrics": [{"type": "precision", "value": 0.861851332398317, "name": "Precision"}, {"type": "recall", "value": 0.8384309266628767, "name": "Recall"}, {"type": "f1", "value": 0.849979828251974, "name": "F1"}, {"type": "accuracy", "value": 0.9830620929487668, "name": "Accuracy"}]}]}]} | bergurth/XLMR-ENIS-finetuned-ner | null | [

"transformers",

"pytorch",

"tensorboard",

"xlm-roberta",

"token-classification",

"generated_from_trainer",

"dataset:mim_gold_ner",

"license:agpl-3.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

null | null | {} | bergurth/distilbert-base-uncased-finetuned-cola | null | [

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | berkergurcay/10k-pretrained-bert-model | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | berkergurcay/1k-fineutuned-bert-model | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | berkergurcay/1k-pretrained-bert-model | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | berkergurcay/finetuned-bert-base-uncased | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

text-classification | transformers | {} | berkergurcay/finetuned-roberta | null | [

"transformers",

"pytorch",

"roberta",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

|

fill-mask | transformers |

This is a **RoBERTa-base** model trained from scratch in Spanish.

The training dataset is [mc4](https://huggingface.co/datasets/bertin-project/mc4-es-sampled ) subsampling documents to a total of about 50 million examples. Sampling is biased towards average perplexity values (using a Gaussian function), discarding more often documents with very large values (poor quality) of very small values (short, repetitive texts).

This model takes the one using [sequence length 128](https://huggingface.co/bertin-project/bertin-base-gaussian) and trains during 25.000 steps using sequence length 512.

Please see our main [card](https://huggingface.co/bertin-project/bertin-roberta-base-spanish) for more information.

This is part of the

[Flax/Jax Community Week](https://discuss.huggingface.co/t/open-to-the-community-community-week-using-jax-flax-for-nlp-cv/7104), organised by [HuggingFace](https://huggingface.co/) and TPU usage sponsored by Google.

## Team members

- Eduardo González ([edugp](https://huggingface.co/edugp))

- Javier de la Rosa ([versae](https://huggingface.co/versae))

- Manu Romero ([mrm8488](https://huggingface.co/))

- María Grandury ([mariagrandury](https://huggingface.co/))

- Pablo González de Prado ([Pablogps](https://huggingface.co/Pablogps))

- Paulo Villegas ([paulo](https://huggingface.co/paulo)) | {"language": "es", "license": "cc-by-4.0", "tags": ["spanish", "roberta"], "pipeline_tag": "fill-mask", "widget": [{"text": "Fui a la librer\u00eda a comprar un <mask>."}]} | bertin-project/bertin-base-gaussian-exp-512seqlen | null | [

"transformers",

"pytorch",

"jax",

"tensorboard",

"joblib",

"roberta",

"fill-mask",

"spanish",

"es",

"license:cc-by-4.0",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

fill-mask | transformers |

This is a **RoBERTa-base** model trained from scratch in Spanish.

The training dataset is [mc4](https://huggingface.co/datasets/bertin-project/mc4-es-sampled ) subsampling documents to a total of about 50 million examples. Sampling is biased towards average perplexity values (using a Gaussian function), discarding more often documents with very large values (poor quality) of very small values (short, repetitive texts).

This model has been trained for 250.000 steps.

Please see our main [card](https://huggingface.co/bertin-project/bertin-roberta-base-spanish) for more information.

This is part of the

[Flax/Jax Community Week](https://discuss.huggingface.co/t/open-to-the-community-community-week-using-jax-flax-for-nlp-cv/7104), organised by [HuggingFace](https://huggingface.co/) and TPU usage sponsored by Google.

## Team members

- Eduardo González ([edugp](https://huggingface.co/edugp))

- Javier de la Rosa ([versae](https://huggingface.co/versae))

- Manu Romero ([mrm8488](https://huggingface.co/))

- María Grandury ([mariagrandury](https://huggingface.co/))

- Pablo González de Prado ([Pablogps](https://huggingface.co/Pablogps))

- Paulo Villegas ([paulo](https://huggingface.co/paulo)) | {"language": "es", "license": "cc-by-4.0", "tags": ["spanish", "roberta"], "pipeline_tag": "fill-mask", "widget": [{"text": "Fui a la librer\u00eda a comprar un <mask>."}]} | bertin-project/bertin-base-gaussian | null | [

"transformers",

"pytorch",

"jax",

"tensorboard",

"joblib",

"roberta",

"fill-mask",

"spanish",

"es",

"license:cc-by-4.0",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

token-classification | transformers |

This checkpoint has been trained for the NER task using the CoNLL2002-es dataset.

This is a NER checkpoint created from **Bertin Gaussian 512**, which is a **RoBERTa-base** model trained from scratch in Spanish. Information on this base model may be found at [its own card](https://huggingface.co/bertin-project/bertin-base-gaussian-exp-512seqlen) and at deeper detail on [the main project card](https://huggingface.co/bertin-project/bertin-roberta-base-spanish).

The training dataset for the base model is [mc4](https://huggingface.co/datasets/bertin-project/mc4-es-sampled ) subsampling documents to a total of about 50 million examples. Sampling is biased towards average perplexity values (using a Gaussian function), discarding more often documents with very large values (poor quality) of very small values (short, repetitive texts).

This is part of the

[Flax/Jax Community Week](https://discuss.huggingface.co/t/open-to-the-community-community-week-using-jax-flax-for-nlp-cv/7104), organised by [HuggingFace](https://huggingface.co/) and TPU usage sponsored by Google.

## Team members

- Eduardo González ([edugp](https://huggingface.co/edugp))

- Javier de la Rosa ([versae](https://huggingface.co/versae))

- Manu Romero ([mrm8488](https://huggingface.co/))

- María Grandury ([mariagrandury](https://huggingface.co/))

- Pablo González de Prado ([Pablogps](https://huggingface.co/Pablogps))

- Paulo Villegas ([paulo](https://huggingface.co/paulo)) | {"language": "es", "license": "cc-by-4.0", "tags": ["spanish", "roberta", "ner"]} | bertin-project/bertin-base-ner-conll2002-es | null | [

"transformers",

"pytorch",

"safetensors",

"roberta",

"token-classification",

"spanish",

"ner",

"es",

"license:cc-by-4.0",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

text-classification | transformers |

This checkpoint has been trained for the PAWS-X task using the CoNLL 2002-es dataset.

This checkpoint was created from **Bertin Gaussian 512**, which is a **RoBERTa-base** model trained from scratch in Spanish. Information on this base model may be found at [its own card](https://huggingface.co/bertin-project/bertin-base-gaussian-exp-512seqlen) and at deeper detail on [the main project card](https://huggingface.co/bertin-project/bertin-roberta-base-spanish).

The training dataset for the base model is [mc4](https://huggingface.co/datasets/bertin-project/mc4-es-sampled ) subsampling documents to a total of about 50 million examples. Sampling is biased towards average perplexity values (using a Gaussian function), discarding more often documents with very large values (poor quality) of very small values (short, repetitive texts).

This is part of the

[Flax/Jax Community Week](https://discuss.huggingface.co/t/open-to-the-community-community-week-using-jax-flax-for-nlp-cv/7104), organised by [HuggingFace](https://huggingface.co/) and TPU usage sponsored by Google.

## Team members

- Eduardo González ([edugp](https://huggingface.co/edugp))

- Javier de la Rosa ([versae](https://huggingface.co/versae))

- Manu Romero ([mrm8488](https://huggingface.co/))

- María Grandury ([mariagrandury](https://huggingface.co/))

- Pablo González de Prado ([Pablogps](https://huggingface.co/Pablogps))

- Paulo Villegas ([paulo](https://huggingface.co/paulo)) | {"language": "es", "license": "cc-by-4.0", "tags": ["spanish", "roberta", "paws-x"]} | bertin-project/bertin-base-paws-x-es | null | [

"transformers",

"pytorch",

"safetensors",

"roberta",

"text-classification",

"spanish",

"paws-x",

"es",

"license:cc-by-4.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| null | 2022-03-02T23:29:05+00:00 |

token-classification | transformers |

This checkpoint has been trained for the POS task using the CoNLL 2002-es dataset.