modelId

stringlengths 4

81

| tags

list | pipeline_tag

stringclasses 17

values | config

dict | downloads

int64 0

59.7M

| first_commit

timestamp[ns, tz=UTC] | card

stringlengths 51

438k

|

|---|---|---|---|---|---|---|

DevsIA/Devs_IA | []

| null | {

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 0 | null | ---

tags:

- Question(s) Generation

metrics:

- rouge

model-index:

- name: consciousAI/question-generation-auto-t5-v1-base-s-q-c

results: []

---

# Auto Question Generation

The model is intended to be used for Auto Question Generation task i.e. no hint are required as input. The model is expected to produce one or possibly more than one question from the provided context.

[Live Demo: Question Generation](https://huggingface.co/spaces/consciousAI/question_generation)

Including this there are four models trained with different training sets, demo provide comparison to all in one go. However, you can reach individual projects at below links:

[Auto Question Generation v1](https://huggingface.co/consciousAI/question-generation-auto-t5-v1-base-s)

[Auto Question Generation v2](https://huggingface.co/consciousAI/question-generation-auto-t5-v1-base-s-q)

[Auto/Hints based Question Generation v1](https://huggingface.co/consciousAI/question-generation-auto-hints-t5-v1-base-s-q)

[Auto/Hints based Question Generation v2](https://huggingface.co/consciousAI/question-generation-auto-hints-t5-v1-base-s-q-c)

This model can be used as below:

```

from transformers import (

AutoModelForSeq2SeqLM,

AutoTokenizer

)

model_checkpoint = "consciousAI/question-generation-auto-t5-v1-base-s-q-c"

model = AutoModelForSeq2SeqLM.from_pretrained(model_checkpoint)

tokenizer = AutoTokenizer.from_pretrained(model_checkpoint)

## Input with prompt

context="question_context: <context>"

encodings = tokenizer.encode(context, return_tensors='pt', truncation=True, padding='max_length').to(device)

## You can play with many hyperparams to condition the output, look at demo

output = model.generate(encodings,

#max_length=300,

#min_length=20,

#length_penalty=2.0,

num_beams=4,

#early_stopping=True,

#do_sample=True,

#temperature=1.1

)

## Multiple questions are expected to be delimited by '?' You can write a small wrapper to elegantly format. Look at the demo.

questions = [tokenizer.decode(id, clean_up_tokenization_spaces=False, skip_special_tokens=False) for id in output]

```

## Training and evaluation data

Squad & QNLi combo.

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 6

- eval_batch_size: 6

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum |

|:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|:---------:|

| 1.8732 | 1.0 | 942 | 1.4330 | 0.5558 | 0.3857 | 0.5219 | 0.5231 |

| 1.2361 | 2.0 | 1884 | 1.3720 | 0.5649 | 0.4042 | 0.5332 | 0.5338 |

| 0.9515 | 3.0 | 2826 | 1.3703 | 0.5699 | 0.4044 | 0.5373 | 0.5385 |

| 0.7383 | 4.0 | 3768 | 1.4039 | 0.5753 | 0.4159 | 0.5414 | 0.5426 |

| 0.6291 | 5.0 | 4710 | 1.4661 | 0.5809 | 0.4227 | 0.5488 | 0.5498 |

### Framework versions

- Transformers 4.23.0.dev0

- Pytorch 1.12.1+cu113

- Datasets 2.5.2

- Tokenizers 0.13.0

|

Doohae/roberta | [

"pytorch",

"roberta",

"question-answering",

"transformers",

"autotrain_compatible"

]

| question-answering | {

"architectures": [

"RobertaForQuestionAnswering"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 3 | null | ---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-Taxi-v3

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

metrics:

- type: mean_reward

value: 7.56 +/- 2.71

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3** .

## Usage

```python

model = load_from_hub(repo_id="Shri3/q-Taxi-v3", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

evaluate_agent(env, model["max_steps"], model["n_eval_episodes"], model["qtable"], model["eval_seed"])

```

|

DoyyingFace/bert-asian-hate-tweets-asian-unclean-warmup-25 | [

"pytorch",

"bert",

"text-classification",

"transformers"

]

| text-classification | {

"architectures": [

"BertForSequenceClassification"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 30 | 2022-10-27T15:07:07Z | ---

tags:

- flair

- token-classification

- sequence-tagger-model

---

### Demo: How to use in Flair

Requires:

- **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python

from flair.data import Sentence

from flair.models import SequenceTagger

# load tagger

tagger = SequenceTagger.load("alanakbik/test-push-public")

# make example sentence

sentence = Sentence("On September 1st George won 1 dollar while watching Game of Thrones.")

# predict NER tags

tagger.predict(sentence)

# print sentence

print(sentence)

# print predicted NER spans

print('The following NER tags are found:')

# iterate over entities and print

for entity in sentence.get_spans('ner'):

print(entity)

``` |

DoyyingFace/bert-asian-hate-tweets-concat-clean-with-unclean-valid | [

"pytorch",

"bert",

"text-classification",

"transformers"

]

| text-classification | {

"architectures": [

"BertForSequenceClassification"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 25 | null | ---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: trialzz

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# trialzz

This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 2.1097

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| No log | 1.0 | 113 | 2.2090 |

| No log | 2.0 | 226 | 2.1168 |

| No log | 3.0 | 339 | 2.1097 |

### Framework versions

- Transformers 4.20.1

- Pytorch 1.12.1

- Datasets 2.5.1

- Tokenizers 0.12.1

|

bert-base-uncased | [

"pytorch",

"tf",

"jax",

"rust",

"safetensors",

"bert",

"fill-mask",

"en",

"dataset:bookcorpus",

"dataset:wikipedia",

"arxiv:1810.04805",

"transformers",

"exbert",

"license:apache-2.0",

"autotrain_compatible",

"has_space"

]

| fill-mask | {

"architectures": [

"BertForMaskedLM"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 59,663,489 | 2022-10-27T16:58:41Z | ---

license: apache-2.0

datasets:

- food101

tags:

- openvino

- int8

---

## [Vision Transformer (ViT)](https://huggingface.co/juliensimon/autotrain-food101-1471154050) quantized and exported to the OpenVINO IR.

## Model Details

**Model Description:** This ViT model fine-tuned on Food-101 was statically quantized and exported to the OpenVINO IR using [optimum](https://huggingface.co/docs/optimum/intel/optimization_ov).

## Usage example

You can use this model with Transformers *pipeline*.

```python

from transformers import pipeline, AutoFeatureExtractor

from optimum.intel.openvino import OVModelForImageClassification

model_id = "echarlaix/vit-food101-int8"

model = OVModelForImageClassification.from_pretrained(model_id)

feature_extractor = AutoFeatureExtractor.from_pretrained(model_id)

pipe = pipeline("image-classification", model=model, feature_extractor=feature_extractor)

outputs = pipe("http://farm2.staticflickr.com/1375/1394861946_171ea43524_z.jpg")

``` |

bert-large-cased-whole-word-masking-finetuned-squad | [

"pytorch",

"tf",

"jax",

"rust",

"safetensors",

"bert",

"question-answering",

"en",

"dataset:bookcorpus",

"dataset:wikipedia",

"arxiv:1810.04805",

"transformers",

"license:apache-2.0",

"autotrain_compatible",

"has_space"

]

| question-answering | {

"architectures": [

"BertForQuestionAnswering"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 8,214 | 2022-10-27T17:15:40Z | ---

language:

- en

thumbnail: "https://huggingface.co/DGSpitzer/Cyberpunk-Anime-Diffusion/resolve/main/img/thumbnail.png"

tags:

- cyberpunk

- anime

- waifu-diffusion

- stable-diffusion

- aiart

- text-to-image

license: creativeml-openrail-m

---

<center><img src="https://huggingface.co/DGSpitzer/Cyberpunk-Anime-Diffusion/resolve/main/img/5.jpg" width="512" height="512"/></center>

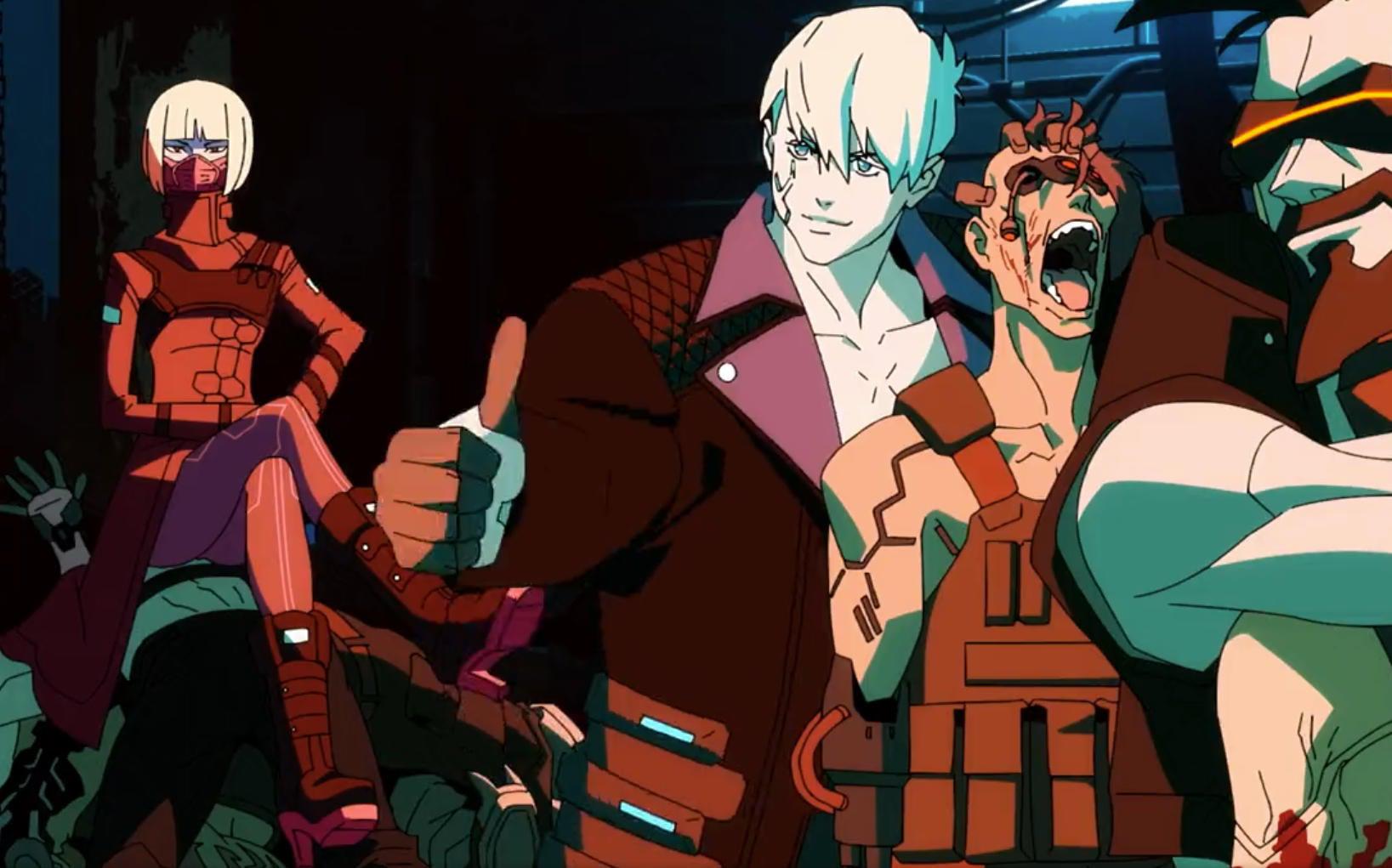

# Cyberpunk Anime Diffusion

An AI model that generates cyberpunk anime characters!~

Based of a finetuned Waifu Diffusion V1.3 Model with Stable Diffusion V1.5 New Vae, training in Dreambooth

by [DGSpitzer](https://www.youtube.com/channel/UCzzsYBF4qwtMwJaPJZ5SuPg)

### 🧨 Diffusers

This repo contains both .ckpt and Diffuser model files. It's compatible to be used as any Stable Diffusion model, using standard [Stable Diffusion Pipelines](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion).

You can convert this model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or [FLAX/JAX](https://huggingface.co/blog/stable_diffusion_jax).

```python example for loading the Diffuser

#!pip install diffusers transformers scipy torch

from diffusers import StableDiffusionPipeline

import torch

model_id = "DGSpitzer/Cyberpunk-Anime-Diffusion"

pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)

pipe = pipe.to("cuda")

prompt = "a beautiful perfect face girl in dgs illustration style, Anime fine details portrait of school girl in front of modern tokyo city landscape on the background deep bokeh, anime masterpiece, 8k, sharp high quality anime"

image = pipe(prompt).images[0]

image.save("./cyberpunk_girl.png")

```

# Online Demo

You can try the Online Web UI demo build with [Gradio](https://github.com/gradio-app/gradio), or use Colab Notebook at here:

*My Online Space Demo*

[](https://huggingface.co/spaces/DGSpitzer/DGS-Diffusion-Space)

*Finetuned Diffusion WebUI Demo by anzorq*

[](https://huggingface.co/spaces/anzorq/finetuned_diffusion)

*Colab Notebook*

[](https://colab.research.google.com/github/HelixNGC7293/cyberpunk-anime-diffusion/blob/main/cyberpunk_anime_diffusion.ipynb)[](https://github.com/HelixNGC7293/cyberpunk-anime-diffusion)

*Buy me a coffee if you like this project ;P ♥*

[](https://www.buymeacoffee.com/dgspitzer)

<center><img src="https://huggingface.co/DGSpitzer/Cyberpunk-Anime-Diffusion/resolve/main/img/1.jpg" width="512" height="512"/></center>

# **👇Model👇**

AI Model Weights available at huggingface: https://huggingface.co/DGSpitzer/Cyberpunk-Anime-Diffusion

<center><img src="https://huggingface.co/DGSpitzer/Cyberpunk-Anime-Diffusion/resolve/main/img/2.jpg" width="512" height="512"/></center>

# Usage

After model loaded, use keyword **dgs** in your prompt, with **illustration style** to get even better results.

For sampler, use **Euler A** for the best result (**DDIM** kinda works too), CFG Scale 7, steps 20 should be fine

**Example 1:**

```

portrait of a girl in dgs illustration style, Anime girl, female soldier working in a cyberpunk city, cleavage, ((perfect femine face)), intricate, 8k, highly detailed, shy, digital painting, intense, sharp focus

```

For cyber robot male character, you can add **muscular male** to improve the output.

**Example 2:**

```

a photo of muscular beard soldier male in dgs illustration style, half-body, holding robot arms, strong chest

```

**Example 3 (with Stable Diffusion WebUI):**

If using [AUTOMATIC1111's Stable Diffusion WebUI](https://github.com/AUTOMATIC1111/stable-diffusion-webui)

You can simply use this as **prompt** with **Euler A** Sampler, CFG Scale 7, steps 20, 704 x 704px output res:

```

an anime girl in dgs illustration style

```

And set the **negative prompt** as this to get cleaner face:

```

out of focus, scary, creepy, evil, disfigured, missing limbs, ugly, gross, missing fingers

```

This will give you the exactly same style as the sample images above.

<center><img src="https://huggingface.co/DGSpitzer/Cyberpunk-Anime-Diffusion/resolve/main/img/ReadmeAddon.jpg" width="256" height="353"/></center>

---

**NOTE: usage of this model implies accpetance of stable diffusion's [CreativeML Open RAIL-M license](LICENSE)**

---

<center><img src="https://huggingface.co/DGSpitzer/Cyberpunk-Anime-Diffusion/resolve/main/img/4.jpg" width="700" height="700"/></center>

<center><img src="https://huggingface.co/DGSpitzer/Cyberpunk-Anime-Diffusion/resolve/main/img/6.jpg" width="700" height="700"/></center>

|

bert-large-cased | [

"pytorch",

"tf",

"jax",

"safetensors",

"bert",

"fill-mask",

"en",

"dataset:bookcorpus",

"dataset:wikipedia",

"arxiv:1810.04805",

"transformers",

"license:apache-2.0",

"autotrain_compatible",

"has_space"

]

| fill-mask | {

"architectures": [

"BertForMaskedLM"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 388,769 | null | Access to model brillo/Fuckyou is restricted and you are not in the authorized list. Visit https://huggingface.co/brillo/Fuckyou to ask for access. |

t5-small | [

"pytorch",

"tf",

"jax",

"rust",

"safetensors",

"t5",

"text2text-generation",

"en",

"fr",

"ro",

"de",

"multilingual",

"dataset:c4",

"arxiv:1805.12471",

"arxiv:1708.00055",

"arxiv:1704.05426",

"arxiv:1606.05250",

"arxiv:1808.09121",

"arxiv:1810.12885",

"arxiv:1905.10044",

"arxiv:1910.09700",

"transformers",

"summarization",

"translation",

"license:apache-2.0",

"autotrain_compatible",

"has_space"

]

| translation | {

"architectures": [

"T5ForConditionalGeneration"

],

"model_type": "t5",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": true,

"length_penalty": 2,

"max_length": 200,

"min_length": 30,

"no_repeat_ngram_size": 3,

"num_beams": 4,

"prefix": "summarize: "

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": true,

"max_length": 300,

"num_beams": 4,

"prefix": "translate English to German: "

},

"translation_en_to_fr": {

"early_stopping": true,

"max_length": 300,

"num_beams": 4,

"prefix": "translate English to French: "

},

"translation_en_to_ro": {

"early_stopping": true,

"max_length": 300,

"num_beams": 4,

"prefix": "translate English to Romanian: "

}

}

} | 1,886,928 | 2022-10-27T19:22:09Z | ---

datasets:

- bigscience/xP3

- mc4

license: apache-2.0

language:

- af

- am

- ar

- az

- be

- bg

- bn

- ca

- ceb

- co

- cs

- cy

- da

- de

- el

- en

- eo

- es

- et

- eu

- fa

- fi

- fil

- fr

- fy

- ga

- gd

- gl

- gu

- ha

- haw

- hi

- hmn

- ht

- hu

- hy

- ig

- is

- it

- iw

- ja

- jv

- ka

- kk

- km

- kn

- ko

- ku

- ky

- la

- lb

- lo

- lt

- lv

- mg

- mi

- mk

- ml

- mn

- mr

- ms

- mt

- my

- ne

- nl

- no

- ny

- pa

- pl

- ps

- pt

- ro

- ru

- sd

- si

- sk

- sl

- sm

- sn

- so

- sq

- sr

- st

- su

- sv

- sw

- ta

- te

- tg

- th

- tr

- uk

- und

- ur

- uz

- vi

- xh

- yi

- yo

- zh

- zu

pipeline_tag: text2text-generation

widget:

- text: "一个传奇的开端,一个不灭的神话,这不仅仅是一部电影,而是作为一个走进新时代的标签,永远彪炳史册。Would you rate the previous review as positive, neutral or negative?"

example_title: "zh-en sentiment"

- text: "一个传奇的开端,一个不灭的神话,这不仅仅是一部电影,而是作为一个走进新时代的标签,永远彪炳史册。你认为这句话的立场是赞扬、中立还是批评?"

example_title: "zh-zh sentiment"

- text: "Suggest at least five related search terms to \"Mạng neural nhân tạo\"."

example_title: "vi-en query"

- text: "Proposez au moins cinq mots clés concernant «Réseau de neurones artificiels»."

example_title: "fr-fr query"

- text: "Explain in a sentence in Telugu what is backpropagation in neural networks."

example_title: "te-en qa"

- text: "Why is the sky blue?"

example_title: "en-en qa"

- text: "Write a fairy tale about a troll saving a princess from a dangerous dragon. The fairy tale is a masterpiece that has achieved praise worldwide and its moral is \"Heroes Come in All Shapes and Sizes\". Story (in Spanish):"

example_title: "es-en fable"

- text: "Write a fable about wood elves living in a forest that is suddenly invaded by ogres. The fable is a masterpiece that has achieved praise worldwide and its moral is \"Violence is the last refuge of the incompetent\". Fable (in Hindi):"

example_title: "hi-en fable"

model-index:

- name: mt0-small

results:

- task:

type: Coreference resolution

dataset:

type: winogrande

name: Winogrande XL (xl)

config: xl

split: validation

revision: a80f460359d1e9a67c006011c94de42a8759430c

metrics:

- type: Accuracy

value: 50.51

- task:

type: Coreference resolution

dataset:

type: Muennighoff/xwinograd

name: XWinograd (en)

config: en

split: test

revision: 9dd5ea5505fad86b7bedad667955577815300cee

metrics:

- type: Accuracy

value: 51.31

- task:

type: Coreference resolution

dataset:

type: Muennighoff/xwinograd

name: XWinograd (fr)

config: fr

split: test

revision: 9dd5ea5505fad86b7bedad667955577815300cee

metrics:

- type: Accuracy

value: 54.22

- task:

type: Coreference resolution

dataset:

type: Muennighoff/xwinograd

name: XWinograd (jp)

config: jp

split: test

revision: 9dd5ea5505fad86b7bedad667955577815300cee

metrics:

- type: Accuracy

value: 52.45

- task:

type: Coreference resolution

dataset:

type: Muennighoff/xwinograd

name: XWinograd (pt)

config: pt

split: test

revision: 9dd5ea5505fad86b7bedad667955577815300cee

metrics:

- type: Accuracy

value: 51.71

- task:

type: Coreference resolution

dataset:

type: Muennighoff/xwinograd

name: XWinograd (ru)

config: ru

split: test

revision: 9dd5ea5505fad86b7bedad667955577815300cee

metrics:

- type: Accuracy

value: 54.29

- task:

type: Coreference resolution

dataset:

type: Muennighoff/xwinograd

name: XWinograd (zh)

config: zh

split: test

revision: 9dd5ea5505fad86b7bedad667955577815300cee

metrics:

- type: Accuracy

value: 54.17

- task:

type: Natural language inference

dataset:

type: anli

name: ANLI (r1)

config: r1

split: validation

revision: 9dbd830a06fea8b1c49d6e5ef2004a08d9f45094

metrics:

- type: Accuracy

value: 34.7

- task:

type: Natural language inference

dataset:

type: anli

name: ANLI (r2)

config: r2

split: validation

revision: 9dbd830a06fea8b1c49d6e5ef2004a08d9f45094

metrics:

- type: Accuracy

value: 34.0

- task:

type: Natural language inference

dataset:

type: anli

name: ANLI (r3)

config: r3

split: validation

revision: 9dbd830a06fea8b1c49d6e5ef2004a08d9f45094

metrics:

- type: Accuracy

value: 33.83

- task:

type: Natural language inference

dataset:

type: super_glue

name: SuperGLUE (cb)

config: cb

split: validation

revision: 9e12063561e7e6c79099feb6d5a493142584e9e2

metrics:

- type: Accuracy

value: 50.0

- task:

type: Natural language inference

dataset:

type: super_glue

name: SuperGLUE (rte)

config: rte

split: validation

revision: 9e12063561e7e6c79099feb6d5a493142584e9e2

metrics:

- type: Accuracy

value: 61.01

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (ar)

config: ar

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 37.43

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (bg)

config: bg

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 37.55

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (de)

config: de

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 35.78

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (el)

config: el

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 37.43

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (en)

config: en

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 38.47

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (es)

config: es

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 36.75

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (fr)

config: fr

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 37.15

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (hi)

config: hi

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 35.38

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (ru)

config: ru

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 37.35

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (sw)

config: sw

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 35.18

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (th)

config: th

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 37.55

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (tr)

config: tr

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 36.51

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (ur)

config: ur

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 35.78

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (vi)

config: vi

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 36.95

- task:

type: Natural language inference

dataset:

type: xnli

name: XNLI (zh)

config: zh

split: validation

revision: a5a45e4ff92d5d3f34de70aaf4b72c3bdf9f7f16

metrics:

- type: Accuracy

value: 37.07

- task:

type: Sentence completion

dataset:

type: story_cloze

name: StoryCloze (2016)

config: "2016"

split: validation

revision: e724c6f8cdf7c7a2fb229d862226e15b023ee4db

metrics:

- type: Accuracy

value: 54.36

- task:

type: Sentence completion

dataset:

type: super_glue

name: SuperGLUE (copa)

config: copa

split: validation

revision: 9e12063561e7e6c79099feb6d5a493142584e9e2

metrics:

- type: Accuracy

value: 57.0

- task:

type: Sentence completion

dataset:

type: xcopa

name: XCOPA (et)

config: et

split: validation

revision: 37f73c60fb123111fa5af5f9b705d0b3747fd187

metrics:

- type: Accuracy

value: 57.0

- task:

type: Sentence completion

dataset:

type: xcopa

name: XCOPA (ht)

config: ht

split: validation

revision: 37f73c60fb123111fa5af5f9b705d0b3747fd187

metrics:

- type: Accuracy

value: 60.0

- task:

type: Sentence completion

dataset:

type: xcopa

name: XCOPA (id)

config: id

split: validation

revision: 37f73c60fb123111fa5af5f9b705d0b3747fd187

metrics:

- type: Accuracy

value: 59.0

- task:

type: Sentence completion

dataset:

type: xcopa

name: XCOPA (it)

config: it

split: validation

revision: 37f73c60fb123111fa5af5f9b705d0b3747fd187

metrics:

- type: Accuracy

value: 59.0

- task:

type: Sentence completion

dataset:

type: xcopa

name: XCOPA (qu)

config: qu

split: validation

revision: 37f73c60fb123111fa5af5f9b705d0b3747fd187

metrics:

- type: Accuracy

value: 54.0

- task:

type: Sentence completion

dataset:

type: xcopa

name: XCOPA (sw)

config: sw

split: validation

revision: 37f73c60fb123111fa5af5f9b705d0b3747fd187

metrics:

- type: Accuracy

value: 55.0

- task:

type: Sentence completion

dataset:

type: xcopa

name: XCOPA (ta)

config: ta

split: validation

revision: 37f73c60fb123111fa5af5f9b705d0b3747fd187

metrics:

- type: Accuracy

value: 59.0

- task:

type: Sentence completion

dataset:

type: xcopa

name: XCOPA (th)

config: th

split: validation

revision: 37f73c60fb123111fa5af5f9b705d0b3747fd187

metrics:

- type: Accuracy

value: 65.0

- task:

type: Sentence completion

dataset:

type: xcopa

name: XCOPA (tr)

config: tr

split: validation

revision: 37f73c60fb123111fa5af5f9b705d0b3747fd187

metrics:

- type: Accuracy

value: 58.0

- task:

type: Sentence completion

dataset:

type: xcopa

name: XCOPA (vi)

config: vi

split: validation

revision: 37f73c60fb123111fa5af5f9b705d0b3747fd187

metrics:

- type: Accuracy

value: 54.0

- task:

type: Sentence completion

dataset:

type: xcopa

name: XCOPA (zh)

config: zh

split: validation

revision: 37f73c60fb123111fa5af5f9b705d0b3747fd187

metrics:

- type: Accuracy

value: 56.0

- task:

type: Sentence completion

dataset:

type: Muennighoff/xstory_cloze

name: XStoryCloze (ar)

config: ar

split: validation

revision: 8bb76e594b68147f1a430e86829d07189622b90d

metrics:

- type: Accuracy

value: 48.78

- task:

type: Sentence completion

dataset:

type: Muennighoff/xstory_cloze

name: XStoryCloze (es)

config: es

split: validation

revision: 8bb76e594b68147f1a430e86829d07189622b90d

metrics:

- type: Accuracy

value: 55.2

- task:

type: Sentence completion

dataset:

type: Muennighoff/xstory_cloze

name: XStoryCloze (eu)

config: eu

split: validation

revision: 8bb76e594b68147f1a430e86829d07189622b90d

metrics:

- type: Accuracy

value: 52.95

- task:

type: Sentence completion

dataset:

type: Muennighoff/xstory_cloze

name: XStoryCloze (hi)

config: hi

split: validation

revision: 8bb76e594b68147f1a430e86829d07189622b90d

metrics:

- type: Accuracy

value: 53.01

- task:

type: Sentence completion

dataset:

type: Muennighoff/xstory_cloze

name: XStoryCloze (id)

config: id

split: validation

revision: 8bb76e594b68147f1a430e86829d07189622b90d

metrics:

- type: Accuracy

value: 53.08

- task:

type: Sentence completion

dataset:

type: Muennighoff/xstory_cloze

name: XStoryCloze (my)

config: my

split: validation

revision: 8bb76e594b68147f1a430e86829d07189622b90d

metrics:

- type: Accuracy

value: 51.82

- task:

type: Sentence completion

dataset:

type: Muennighoff/xstory_cloze

name: XStoryCloze (ru)

config: ru

split: validation

revision: 8bb76e594b68147f1a430e86829d07189622b90d

metrics:

- type: Accuracy

value: 49.7

- task:

type: Sentence completion

dataset:

type: Muennighoff/xstory_cloze

name: XStoryCloze (sw)

config: sw

split: validation

revision: 8bb76e594b68147f1a430e86829d07189622b90d

metrics:

- type: Accuracy

value: 54.53

- task:

type: Sentence completion

dataset:

type: Muennighoff/xstory_cloze

name: XStoryCloze (te)

config: te

split: validation

revision: 8bb76e594b68147f1a430e86829d07189622b90d

metrics:

- type: Accuracy

value: 53.67

- task:

type: Sentence completion

dataset:

type: Muennighoff/xstory_cloze

name: XStoryCloze (zh)

config: zh

split: validation

revision: 8bb76e594b68147f1a430e86829d07189622b90d

metrics:

- type: Accuracy

value: 57.78

---

# Table of Contents

1. [Model Summary](#model-summary)

2. [Use](#use)

3. [Limitations](#limitations)

4. [Training](#training)

5. [Evaluation](#evaluation)

7. [Citation](#citation)

# Model Summary

> We present BLOOMZ & mT0, a family of models capable of following human instructions in dozens of languages zero-shot. We finetune BLOOM & mT5 pretrained multilingual language models on our crosslingual task mixture (xP3) and find our resulting models capable of crosslingual generalization to unseen tasks & languages.

- **Repository:** [bigscience-workshop/xmtf](https://github.com/bigscience-workshop/xmtf)

- **Paper:** [Crosslingual Generalization through Multitask Finetuning](https://arxiv.org/abs/2211.01786)

- **Point of Contact:** [Niklas Muennighoff](mailto:[email protected])

- **Languages:** Refer to [mc4](https://huggingface.co/datasets/mc4) for pretraining & [xP3](https://huggingface.co/datasets/bigscience/xP3) for finetuning language proportions. It understands both pretraining & finetuning languages.

- **BLOOMZ & mT0 Model Family:**

<div class="max-w-full overflow-auto">

<table>

<tr>

<th colspan="12">Multitask finetuned on <a style="font-weight:bold" href=https://huggingface.co/datasets/bigscience/xP3>xP3</a>. Recommended for prompting in English.

</tr>

<tr>

<td>Parameters</td>

<td>300M</td>

<td>580M</td>

<td>1.2B</td>

<td>3.7B</td>

<td>13B</td>

<td>560M</td>

<td>1.1B</td>

<td>1.7B</td>

<td>3B</td>

<td>7.1B</td>

<td>176B</td>

</tr>

<tr>

<td>Finetuned Model</td>

<td><a href=https://huggingface.co/bigscience/mt0-small>mt0-small</a></td>

<td><a href=https://huggingface.co/bigscience/mt0-base>mt0-base</a></td>

<td><a href=https://huggingface.co/bigscience/mt0-large>mt0-large</a></td>

<td><a href=https://huggingface.co/bigscience/mt0-xl>mt0-xl</a></td>

<td><a href=https://huggingface.co/bigscience/mt0-xxl>mt0-xxl</a></td>

<td><a href=https://huggingface.co/bigscience/bloomz-560m>bloomz-560m</a></td>

<td><a href=https://huggingface.co/bigscience/bloomz-1b1>bloomz-1b1</a></td>

<td><a href=https://huggingface.co/bigscience/bloomz-1b7>bloomz-1b7</a></td>

<td><a href=https://huggingface.co/bigscience/bloomz-3b>bloomz-3b</a></td>

<td><a href=https://huggingface.co/bigscience/bloomz-7b1>bloomz-7b1</a></td>

<td><a href=https://huggingface.co/bigscience/bloomz>bloomz</a></td>

</tr>

</tr>

<tr>

<th colspan="12">Multitask finetuned on <a style="font-weight:bold" href=https://huggingface.co/datasets/bigscience/xP3mt>xP3mt</a>. Recommended for prompting in non-English.</th>

</tr>

<tr>

<td>Finetuned Model</td>

<td></td>

<td></td>

<td></td>

<td></td>

<td><a href=https://huggingface.co/bigscience/mt0-xxl-mt>mt0-xxl-mt</a></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td><a href=https://huggingface.co/bigscience/bloomz-7b1-mt>bloomz-7b1-mt</a></td>

<td><a href=https://huggingface.co/bigscience/bloomz-mt>bloomz-mt</a></td>

</tr>

<th colspan="12">Multitask finetuned on <a style="font-weight:bold" href=https://huggingface.co/datasets/Muennighoff/P3>P3</a>. Released for research purposes only. Strictly inferior to above models!</th>

</tr>

<tr>

<td>Finetuned Model</td>

<td></td>

<td></td>

<td></td>

<td></td>

<td><a href=https://huggingface.co/bigscience/mt0-xxl-p3>mt0-xxl-p3</a></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td><a href=https://huggingface.co/bigscience/bloomz-7b1-p3>bloomz-7b1-p3</a></td>

<td><a href=https://huggingface.co/bigscience/bloomz-p3>bloomz-p3</a></td>

</tr>

<th colspan="12">Original pretrained checkpoints. Not recommended.</th>

<tr>

<td>Pretrained Model</td>

<td><a href=https://huggingface.co/google/mt5-small>mt5-small</a></td>

<td><a href=https://huggingface.co/google/mt5-base>mt5-base</a></td>

<td><a href=https://huggingface.co/google/mt5-large>mt5-large</a></td>

<td><a href=https://huggingface.co/google/mt5-xl>mt5-xl</a></td>

<td><a href=https://huggingface.co/google/mt5-xxl>mt5-xxl</a></td>

<td><a href=https://huggingface.co/bigscience/bloom-560m>bloom-560m</a></td>

<td><a href=https://huggingface.co/bigscience/bloom-1b1>bloom-1b1</a></td>

<td><a href=https://huggingface.co/bigscience/bloom-1b7>bloom-1b7</a></td>

<td><a href=https://huggingface.co/bigscience/bloom-3b>bloom-3b</a></td>

<td><a href=https://huggingface.co/bigscience/bloom-7b1>bloom-7b1</a></td>

<td><a href=https://huggingface.co/bigscience/bloom>bloom</a></td>

</tr>

</table>

</div>

# Use

## Intended use

We recommend using the model to perform tasks expressed in natural language. For example, given the prompt "*Translate to English: Je t’aime.*", the model will most likely answer "*I love you.*". Some prompt ideas from our paper:

- 一个传奇的开端,一个不灭的神话,这不仅仅是一部电影,而是作为一个走进新时代的标签,永远彪炳史册。你认为这句话的立场是赞扬、中立还是批评?

- Suggest at least five related search terms to "Mạng neural nhân tạo".

- Write a fairy tale about a troll saving a princess from a dangerous dragon. The fairy tale is a masterpiece that has achieved praise worldwide and its moral is "Heroes Come in All Shapes and Sizes". Story (in Spanish):

- Explain in a sentence in Telugu what is backpropagation in neural networks.

**Feel free to share your generations in the Community tab!**

## How to use

### CPU

<details>

<summary> Click to expand </summary>

```python

# pip install -q transformers

from transformers import AutoModelForSeq2SeqLM, AutoTokenizer

checkpoint = "bigscience/mt0-small"

tokenizer = AutoTokenizer.from_pretrained(checkpoint)

model = AutoModelForSeq2SeqLM.from_pretrained(checkpoint)

inputs = tokenizer.encode("Translate to English: Je t’aime.", return_tensors="pt")

outputs = model.generate(inputs)

print(tokenizer.decode(outputs[0]))

```

</details>

### GPU

<details>

<summary> Click to expand </summary>

```python

# pip install -q transformers accelerate

from transformers import AutoModelForSeq2SeqLM, AutoTokenizer

checkpoint = "bigscience/mt0-small"

tokenizer = AutoTokenizer.from_pretrained(checkpoint)

model = AutoModelForSeq2SeqLM.from_pretrained(checkpoint, torch_dtype="auto", device_map="auto")

inputs = tokenizer.encode("Translate to English: Je t’aime.", return_tensors="pt").to("cuda")

outputs = model.generate(inputs)

print(tokenizer.decode(outputs[0]))

```

</details>

### GPU in 8bit

<details>

<summary> Click to expand </summary>

```python

# pip install -q transformers accelerate bitsandbytes

from transformers import AutoModelForSeq2SeqLM, AutoTokenizer

checkpoint = "bigscience/mt0-small"

tokenizer = AutoTokenizer.from_pretrained(checkpoint)

model = AutoModelForSeq2SeqLM.from_pretrained(checkpoint, device_map="auto", load_in_8bit=True)

inputs = tokenizer.encode("Translate to English: Je t’aime.", return_tensors="pt").to("cuda")

outputs = model.generate(inputs)

print(tokenizer.decode(outputs[0]))

```

</details>

<!-- Necessary for whitespace -->

###

# Limitations

**Prompt Engineering:** The performance may vary depending on the prompt. For BLOOMZ models, we recommend making it very clear when the input stops to avoid the model trying to continue it. For example, the prompt "*Translate to English: Je t'aime*" without the full stop (.) at the end, may result in the model trying to continue the French sentence. Better prompts are e.g. "*Translate to English: Je t'aime.*", "*Translate to English: Je t'aime. Translation:*" "*What is "Je t'aime." in English?*", where it is clear for the model when it should answer. Further, we recommend providing the model as much context as possible. For example, if you want it to answer in Telugu, then tell the model, e.g. "*Explain in a sentence in Telugu what is backpropagation in neural networks.*".

# Training

## Model

- **Architecture:** Same as [mt5-small](https://huggingface.co/google/mt5-small), also refer to the `config.json` file

- **Finetuning steps:** 25000

- **Finetuning tokens:** 4.62 billion

- **Precision:** bfloat16

## Hardware

- **TPUs:** TPUv4-64

## Software

- **Orchestration:** [T5X](https://github.com/google-research/t5x)

- **Neural networks:** [Jax](https://github.com/google/jax)

# Evaluation

We refer to Table 7 from our [paper](https://arxiv.org/abs/2211.01786) & [bigscience/evaluation-results](https://huggingface.co/datasets/bigscience/evaluation-results) for zero-shot results on unseen tasks. The sidebar reports zero-shot performance of the best prompt per dataset config.

# Citation

```bibtex

@misc{muennighoff2022crosslingual,

title={Crosslingual Generalization through Multitask Finetuning},

author={Niklas Muennighoff and Thomas Wang and Lintang Sutawika and Adam Roberts and Stella Biderman and Teven Le Scao and M Saiful Bari and Sheng Shen and Zheng-Xin Yong and Hailey Schoelkopf and Xiangru Tang and Dragomir Radev and Alham Fikri Aji and Khalid Almubarak and Samuel Albanie and Zaid Alyafeai and Albert Webson and Edward Raff and Colin Raffel},

year={2022},

eprint={2211.01786},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

``` |

A-bhimany-u08/bert-base-cased-qqp | [

"pytorch",

"bert",

"text-classification",

"dataset:qqp",

"transformers"

]

| text-classification | {

"architectures": [

"BertForSequenceClassification"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 138 | 2022-10-28T01:27:38Z | ---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: languagemodel

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# languagemodel

This model is a fine-tuned version of [monideep2255/XLRS-torgo](https://huggingface.co/monideep2255/XLRS-torgo) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: inf

- Wer: 1.1173

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 30

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 2.3015 | 3.12 | 400 | inf | 1.3984 |

| 0.6892 | 6.25 | 800 | inf | 1.1059 |

| 0.5069 | 9.37 | 1200 | inf | 1.0300 |

| 0.3596 | 12.5 | 1600 | inf | 1.0830 |

| 0.2571 | 15.62 | 2000 | inf | 1.1981 |

| 0.198 | 18.75 | 2400 | inf | 1.1009 |

| 0.1523 | 21.87 | 2800 | inf | 1.1803 |

| 0.1112 | 25.0 | 3200 | inf | 1.0429 |

| 0.08 | 28.12 | 3600 | inf | 1.1173 |

### Framework versions

- Transformers 4.23.1

- Pytorch 1.10.0+cu113

- Datasets 1.18.3

- Tokenizers 0.13.1

|

AWTStress/stress_score | [

"tf",

"distilbert",

"text-classification",

"transformers",

"generated_from_keras_callback"

]

| text-classification | {

"architectures": [

"DistilBertForSequenceClassification"

],

"model_type": "distilbert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 14 | null | ---

pipeline_tag: sentence-similarity

tags:

- feature-extraction

- sentence-similarity

- transformers

---

# CoT-MAE MS-Marco Passage Retriever

CoT-MAE is a transformers based Mask Auto-Encoder pretraining architecture designed for Dense Passage Retrieval.

**CoT-MAE MS-Marco Passage Retriever** is a retriever trained with BM25 hard negatives and CoT-MAE retriever mined MS-Marco hard negatives using [Tevatron](github.com/texttron/tevatron) toolkit. Specifically, we trained a stage-one retriever using BM25 HN, using stage-one retriever to mine HN, then trained a stage-two retriever using both BM25 HN & stage-one retriever mined hn. The release is the stage-two retriever.

Details can be found in our paper and codes.

Paper: [ConTextual Mask Auto-Encoder for Dense Passage Retrieval](https://arxiv.org/abs/2208.07670).

Code: [caskcsg/ir/cotmae](https://github.com/caskcsg/ir/tree/main/cotmae)

## Scores

### MS-Marco Passage full-ranking

| MRR @10 | recall@1 | recall@50 | recall@1k | QueriesRanked |

|----------|----------|-----------|-----------|----------------|

| 0.394431 | 0.265903 | 0.870344 | 0.986676 | 6980 |

## Citations

If you find our work useful, please cite our paper.

```bibtex

@misc{https://doi.org/10.48550/arxiv.2208.07670,

doi = {10.48550/ARXIV.2208.07670},

url = {https://arxiv.org/abs/2208.07670},

author = {Wu, Xing and Ma, Guangyuan and Lin, Meng and Lin, Zijia and Wang, Zhongyuan and Hu, Songlin},

keywords = {Computation and Language (cs.CL), Artificial Intelligence (cs.AI), FOS: Computer and information sciences, FOS: Computer and information sciences},

title = {ConTextual Mask Auto-Encoder for Dense Passage Retrieval},

publisher = {arXiv},

year = {2022},

copyright = {arXiv.org perpetual, non-exclusive license}

}

```

|

Aarav/MeanMadCrazy_HarryPotterBot | []

| null | {

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 0 | null | ---

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: DNABert_K6_G_quad_3

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# DNABert_K6_G_quad_3

This model is a fine-tuned version of [armheb/DNA_bert_6](https://huggingface.co/armheb/DNA_bert_6) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0722

- Accuracy: 0.9761

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.0912 | 1.0 | 9375 | 0.0883 | 0.9707 |

| 0.0668 | 2.0 | 18750 | 0.0723 | 0.9757 |

| 0.0598 | 3.0 | 28125 | 0.0722 | 0.9761 |

### Framework versions

- Transformers 4.22.1

- Pytorch 1.12.1

- Datasets 2.4.0

- Tokenizers 0.12.1

|

AethiQs-Max/AethiQs_GemBERT_bertje_50k | [

"pytorch",

"bert",

"fill-mask",

"transformers",

"autotrain_compatible"

]

| fill-mask | {

"architectures": [

"BertForMaskedLM"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 11 | null | ---

license: mit

---

### Edgerunners Style on Stable Diffusion

This is the `<edgerunners-style-av>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

Ajteks/Chatbot | []

| null | {

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 0 | null | ---

tags:

- generated_from_trainer

datasets:

- snli

model-index:

- name: bigbird-roberta-large-snli

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bigbird-roberta-large-snli

This model was trained from scratch on the snli dataset.

It achieves the following results on the evaluation set:

- eval_loss: 0.2437

- eval_p: 0.9216

- eval_r: 0.9214

- eval_f1: 0.9215

- eval_runtime: 22.8545

- eval_samples_per_second: 429.849

- eval_steps_per_second: 26.866

- step: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- num_epochs: 4

### Framework versions

- Transformers 4.21.1

- Pytorch 1.12.1

- Datasets 2.6.1

- Tokenizers 0.12.1

|

Akash7897/bert-base-cased-wikitext2 | [

"pytorch",

"tensorboard",

"bert",

"fill-mask",

"transformers",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible"

]

| fill-mask | {

"architectures": [

"BertForMaskedLM"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 8 | null | csv files can be downloaded [here](https://www.kaggle.com/datasets/oddrationale/mnist-in-csv/download?datasetVersionNumber=2)

|

Akashpb13/Hausa_xlsr | [

"pytorch",

"wav2vec2",

"automatic-speech-recognition",

"ha",

"dataset:mozilla-foundation/common_voice_8_0",

"transformers",

"generated_from_trainer",

"hf-asr-leaderboard",

"model_for_talk",

"mozilla-foundation/common_voice_8_0",

"robust-speech-event",

"license:apache-2.0",

"model-index",

"has_space"

]

| automatic-speech-recognition | {

"architectures": [

"Wav2Vec2ForCTC"

],

"model_type": "wav2vec2",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 31 | null | ---

tags:

- generated_from_trainer

model-index:

- name: bert-base-chinese-finetuned-own

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-chinese-finetuned-own

This model is a fine-tuned version of [bert-base-chinese](https://huggingface.co/bert-base-chinese) on the Myown Car_information dataset.

It achieves the following results on the evaluation set:

- Loss: 1.6957

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| No log | 1.0 | 120 | 1.7141 |

| No log | 2.0 | 240 | 1.6677 |

| No log | 3.0 | 360 | 1.7976 |

### Framework versions

- Transformers 4.23.1

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

|

Akbarariza/Anjar | []

| null | {

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 0 | null | ---

license: creativeml-openrail-m

---

**Borderlands Diffusion**

This is a fine-tuned Stable Diffusion model trained on screenshots from the video game series Borderlands.

Use the token **_brld_** in your prompts for the style effect.

#### Prompt and settings for portraits:

**brld harrison ford**

_Steps: 50, Sampler: Euler a, CFG scale: 7, Seed: 3940025417

**brld morgan freeman**

_Steps: 50, Sampler: Euler a, CFG scale: 7, Seed: 3940025417

## License

This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage.

The CreativeML OpenRAIL License specifies:

1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

2. The authors claims no rights on the outputs you generate, you are free to use them and are accountable for their use which must not go against the provisions set in the license

3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully)

[Please read the full license here](https://huggingface.co/spaces/CompVis/stable-diffusion-license) |

AkshatSurolia/ViT-FaceMask-Finetuned | [

"pytorch",

"safetensors",

"vit",

"image-classification",

"dataset:Face-Mask18K",

"transformers",

"license:apache-2.0",

"autotrain_compatible"

]

| image-classification | {

"architectures": [

"ViTForImageClassification"

],

"model_type": "vit",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 40 | null | ---

license: apache-2.0

---

# DiffAb

This repository contains trained weights of the DiffAb model.

Code: https://github.com/luost26/diffab

Paper: https://www.biorxiv.org/content/10.1101/2022.07.10.499510.abstract

Demo: https://huggingface.co/spaces/luost26/DiffAb

|

AkshaySg/GrammarCorrection | []

| null | {

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 0 | null | ---

license: apache-2.0

widget:

- text: "Ayer dormí la siesta durante 3 horas"

- text: "Recuerda tu cita con el médico el lunes a las 8 de la tarde"

- text: "Recuerda tomar la medicación cada noche"

tags:

- LABEL-0 = NONE

- LABEL-1 = B-DATE

- LABEL-2 = I-DATE

- LABEL-3 = B-TIME

- LABEL-4 = I-TIME

- LABEL-5 = B-DURATION

- LABEL-6 = B-DURATION

- LABEL-7 = B-SET

- LABEL-8 = B-SET

metrics:

- precision

- recall

- f1

- accuracy

datasets:

- Spanish-Timebank

model-index:

- name: roberta-base-biomedical-clinical-es-finetuned-ner-timebank

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# roberta-base-biomedical-clinical-es-finetuned-ner-timebank

This model is a fine-tuned version of [PlanTL-GOB-ES/roberta-base-biomedical-clinical-es](https://huggingface.co/PlanTL-GOB-ES/roberta-base-biomedical-clinical-es) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0162

- Precision: 0.8070

- Recall: 0.8854

- F1: 0.8444

- Accuracy: 0.9921

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 8e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 82

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 24

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.0658 | 1.0 | 8 | 0.0613 | 0.3704 | 0.4514 | 0.4069 | 0.9758 |

| 0.038 | 2.0 | 16 | 0.0328 | 0.4528 | 0.6667 | 0.5393 | 0.9789 |

| 0.0067 | 3.0 | 24 | 0.0212 | 0.4515 | 0.7431 | 0.5617 | 0.9776 |

| 0.0225 | 4.0 | 32 | 0.0177 | 0.4913 | 0.7847 | 0.6043 | 0.9814 |

| 0.0119 | 5.0 | 40 | 0.0170 | 0.5531 | 0.7778 | 0.6465 | 0.9848 |

| 0.0109 | 6.0 | 48 | 0.0151 | 0.7027 | 0.8125 | 0.7536 | 0.9893 |

| 0.003 | 7.0 | 56 | 0.0136 | 0.6260 | 0.8194 | 0.7098 | 0.9861 |

| 0.0059 | 8.0 | 64 | 0.0134 | 0.7164 | 0.8333 | 0.7705 | 0.9895 |

| 0.0065 | 9.0 | 72 | 0.0125 | 0.7040 | 0.8507 | 0.7704 | 0.9890 |

| 0.0017 | 10.0 | 80 | 0.0118 | 0.6177 | 0.8472 | 0.7145 | 0.9852 |

| 0.0021 | 11.0 | 88 | 0.0150 | 0.7812 | 0.8681 | 0.8224 | 0.9915 |

| 0.0041 | 12.0 | 96 | 0.0165 | 0.8078 | 0.8611 | 0.8336 | 0.9922 |

| 0.0025 | 13.0 | 104 | 0.0142 | 0.7723 | 0.8715 | 0.8189 | 0.9909 |

| 0.0024 | 14.0 | 112 | 0.0134 | 0.7440 | 0.8681 | 0.8013 | 0.9905 |

| 0.0027 | 15.0 | 120 | 0.0142 | 0.7699 | 0.8715 | 0.8176 | 0.9912 |

| 0.0019 | 16.0 | 128 | 0.0151 | 0.7918 | 0.8715 | 0.8298 | 0.9918 |

| 0.0036 | 17.0 | 136 | 0.0151 | 0.7837 | 0.8681 | 0.8237 | 0.9917 |

| 0.0011 | 18.0 | 144 | 0.0149 | 0.7730 | 0.875 | 0.8208 | 0.9914 |

| 0.0013 | 19.0 | 152 | 0.0152 | 0.7913 | 0.8819 | 0.8342 | 0.9918 |

| 0.0017 | 20.0 | 160 | 0.0159 | 0.8038 | 0.8819 | 0.8411 | 0.9922 |

| 0.0006 | 21.0 | 168 | 0.0164 | 0.8070 | 0.8854 | 0.8444 | 0.9922 |

| 0.002 | 22.0 | 176 | 0.0164 | 0.8121 | 0.8854 | 0.8472 | 0.9922 |

| 0.0021 | 23.0 | 184 | 0.0163 | 0.8121 | 0.8854 | 0.8472 | 0.9922 |

| 0.0013 | 24.0 | 192 | 0.0162 | 0.8070 | 0.8854 | 0.8444 | 0.9921 |

### Framework versions

- Transformers 4.24.0

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.2

|

Al/mymodel | []

| null | {

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 0 | null | ---

tags:

- autotrain

- summarization

language:

- en

widget:

- text: "Positivity towards meat consumption remains strong, despite evidence of negative environmental and ethical outcomes. Although awareness of these repercussions is rising, there is still public resistance to removing meat from our diets. One potential method to alleviate these effects is to produce in vitro meat: meat grown in a laboratory that does not carry the same environmental or ethical concerns. However, there is limited research examining public attitudes towards in vitro meat, thus we know little about the capacity for it be accepted by consumers. This study aimed to examine perceptions of in vitro meat and identify potential barriers that might prevent engagement. Through conducting an online survey with US participants, we identified that although most respondents were willing to try in vitro meat, only one third were definitely or probably willing to eat in vitro meat regularly or as a replacement for farmed meat. Men were more receptive to it than women, as were politically liberal respondents compared with conservative ones. Vegetarians and vegans were more likely to perceive benefits compared to farmed meat, but they were less likely to want to try it than meat eaters. The main concerns were an anticipated high price, limited taste and appeal and a concern that the product was unnatural. It is concluded that people in the USA are likely to try in vitro meat, but few believed that it would replace farmed meat in their diet."

datasets:

- vegancreativecompass/autotrain-data-scitldr-for-vegan-studies

co2_eq_emissions:

emissions: 57.779835625872906

---

# About This Model