modelId (string, lengths 4 to 81) | tags (list) | pipeline_tag (string, 17 classes) | config (dict) | downloads (int64, 0 to 59.7M) | first_commit (timestamp[ns, tz=UTC]) | card (string, lengths 51 to 438k) |

|---|---|---|---|---|---|---|

Ayham/ernie_gpt2_summarization_cnn_dailymail | [

"pytorch",

"tensorboard",

"encoder-decoder",

"text2text-generation",

"dataset:cnn_dailymail",

"transformers",

"generated_from_trainer",

"autotrain_compatible"

]

| text2text-generation | {

"architectures": [

"EncoderDecoderModel"

],

"model_type": "encoder-decoder",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 13 | null | ---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: bert-base-uncased-finetuned-imdb

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-imdb

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 3.8056

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

- mixed_precision_training: Native AMP
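
To make the `lr_scheduler_type: linear` setting concrete, here is a minimal sketch of a linear decay schedule. It assumes no warm-up steps (the card lists none) and takes the total of 250 steps from the training results reported for this run; `linear_lr` is an illustrative name, not a Transformers function.

```python
def linear_lr(step: int, base_lr: float = 1e-05, total_steps: int = 250,
              warmup_steps: int = 0) -> float:
    """Learning rate at `step` for linear warm-up followed by linear decay to 0."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Start, midpoint and end of the single training epoch
for step in (0, 125, 250):
    print(step, linear_lr(step))
```

Under these assumptions the rate starts at the configured 1e-05, is halved at the midpoint, and reaches zero on the final step.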

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| No log | 1.0 | 250 | 3.7125 |

### Framework versions

- Transformers 4.29.2

- Pytorch 1.8.1+cu101

- Datasets 2.12.0

- Tokenizers 0.13.1

|

Ayham/robertagpt2_cnn | [

"pytorch",

"tensorboard",

"encoder-decoder",

"text2text-generation",

"transformers",

"generated_from_trainer",

"autotrain_compatible"

]

| text2text-generation | {

"architectures": [

"EncoderDecoderModel"

],

"model_type": "encoder-decoder",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 4 | null | ---

license: mit

---

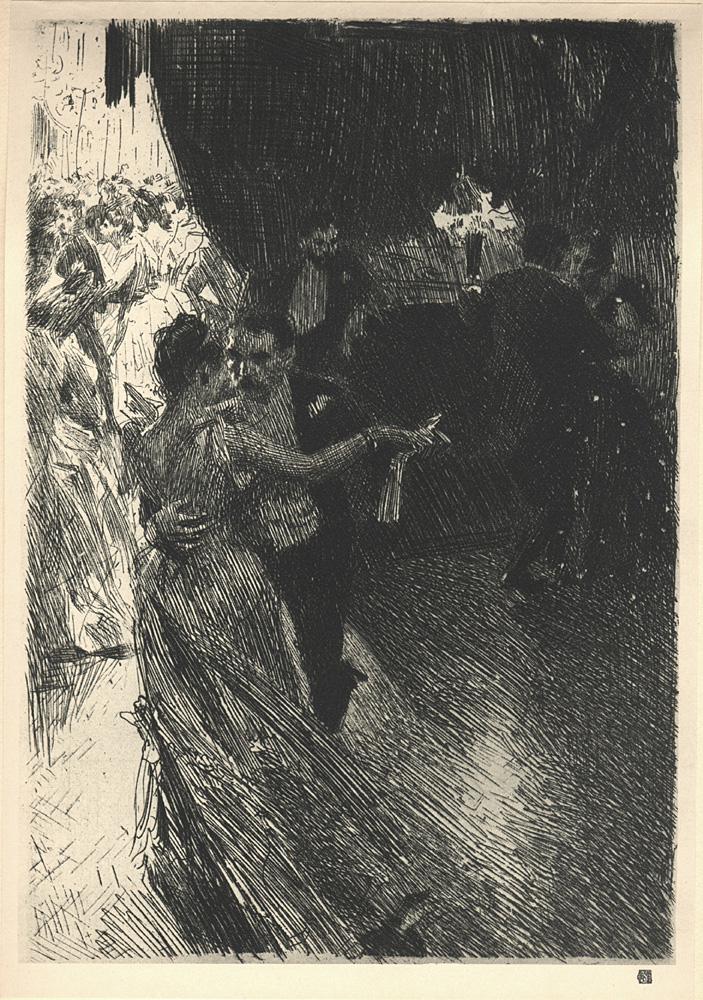

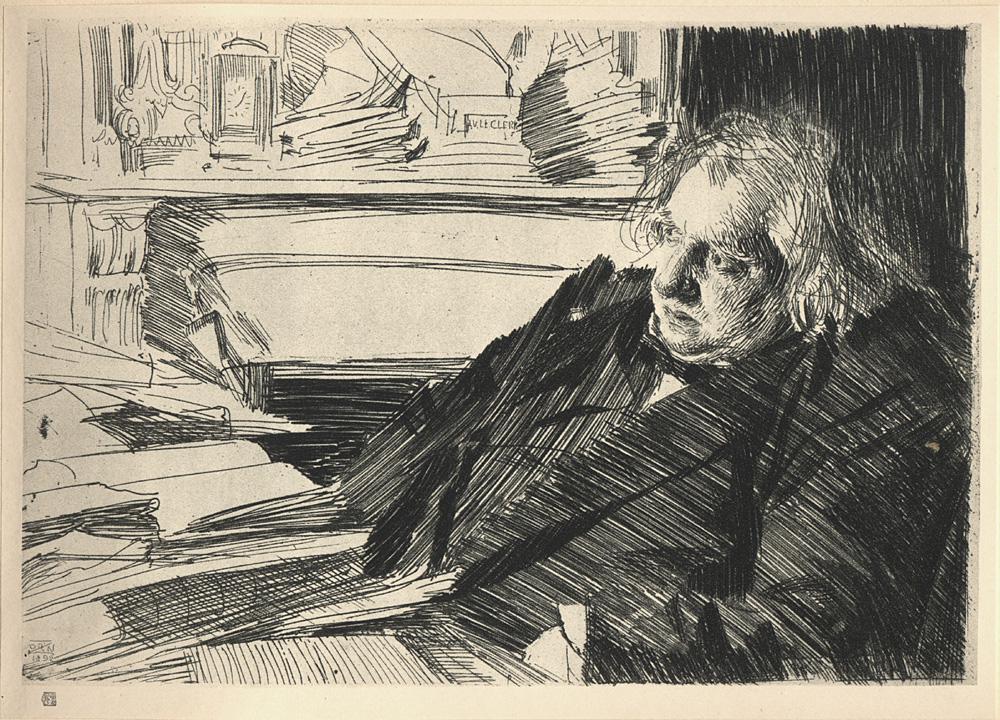

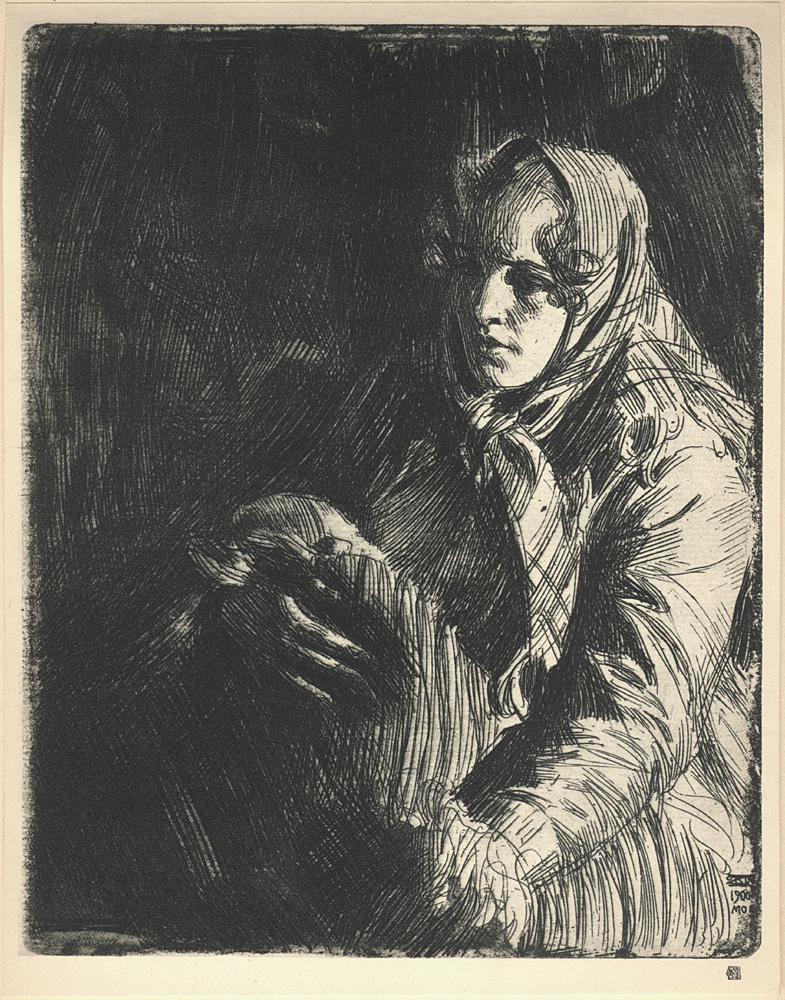

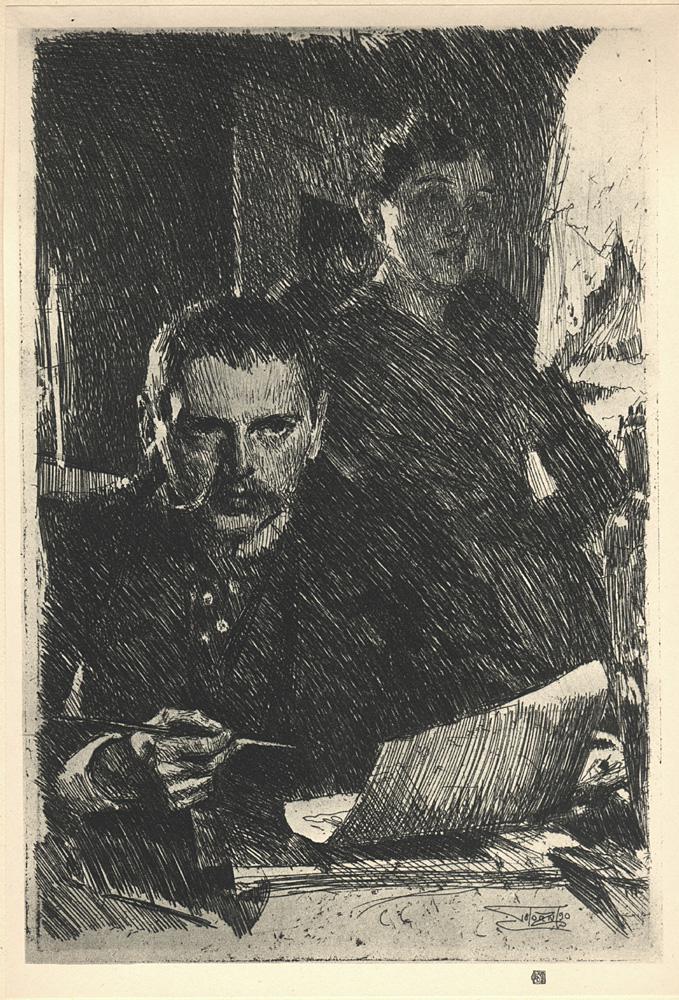

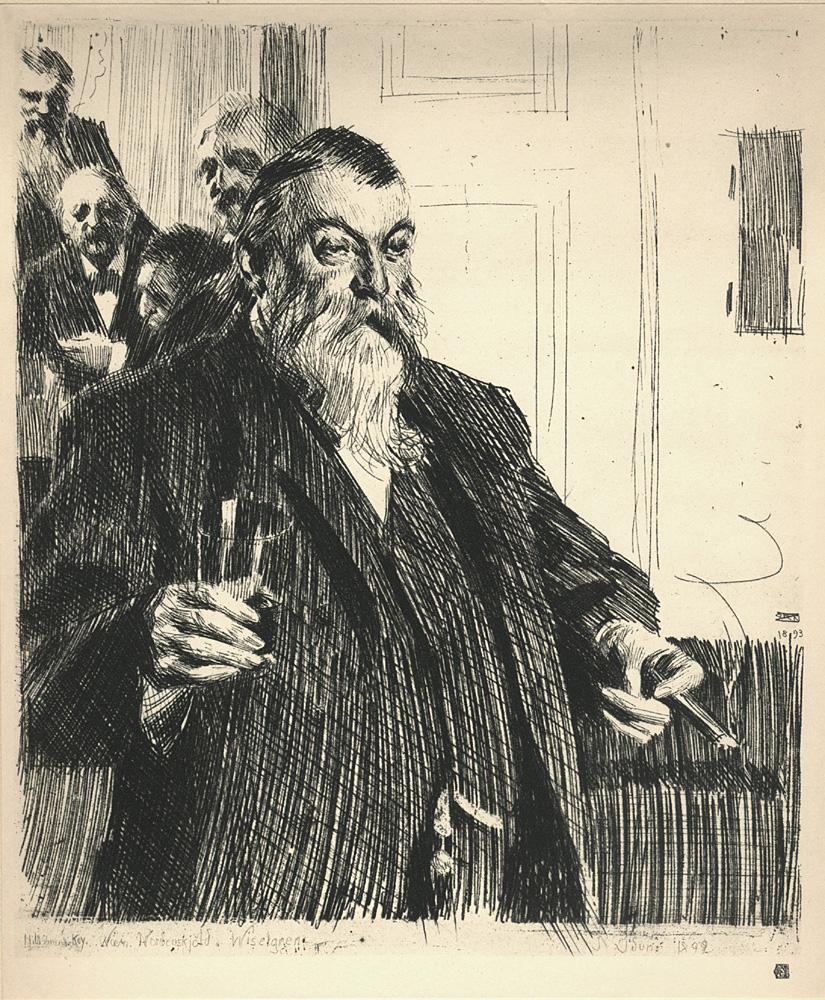

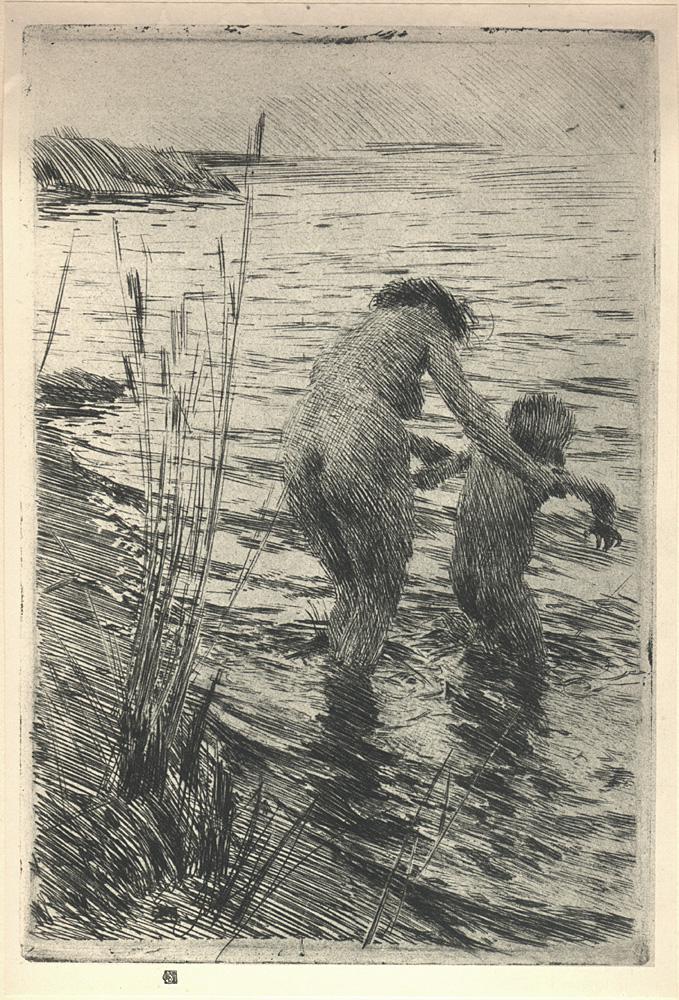

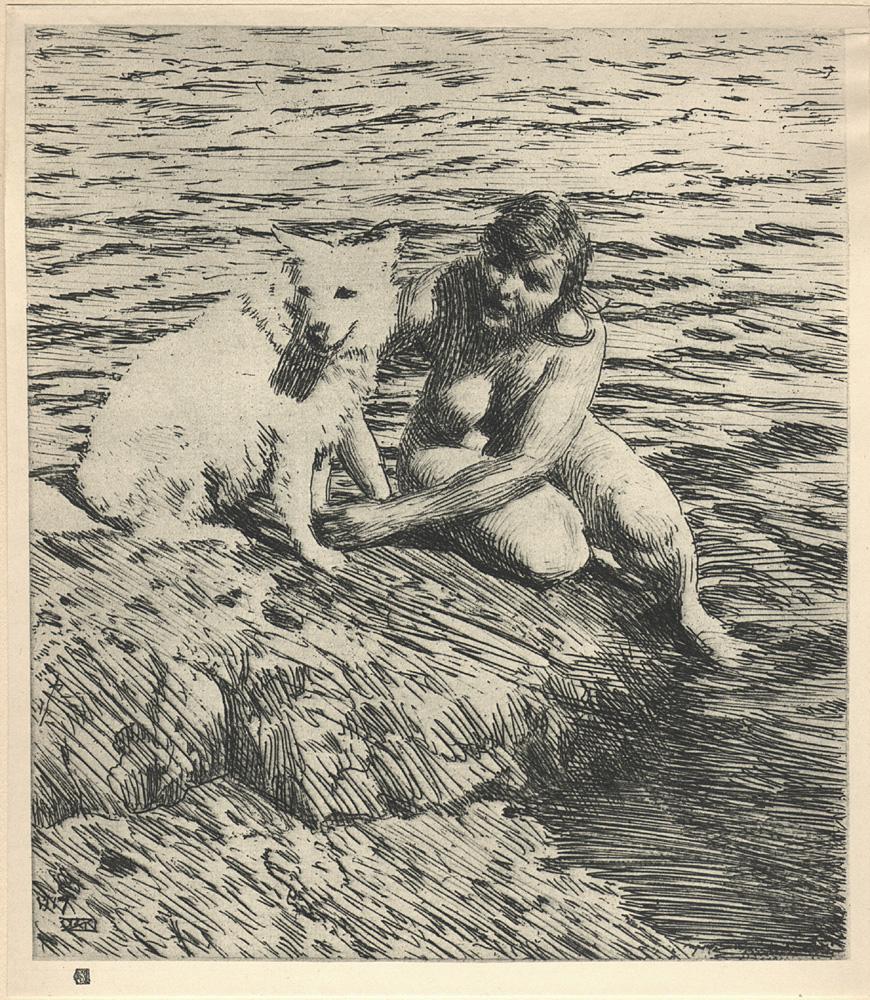

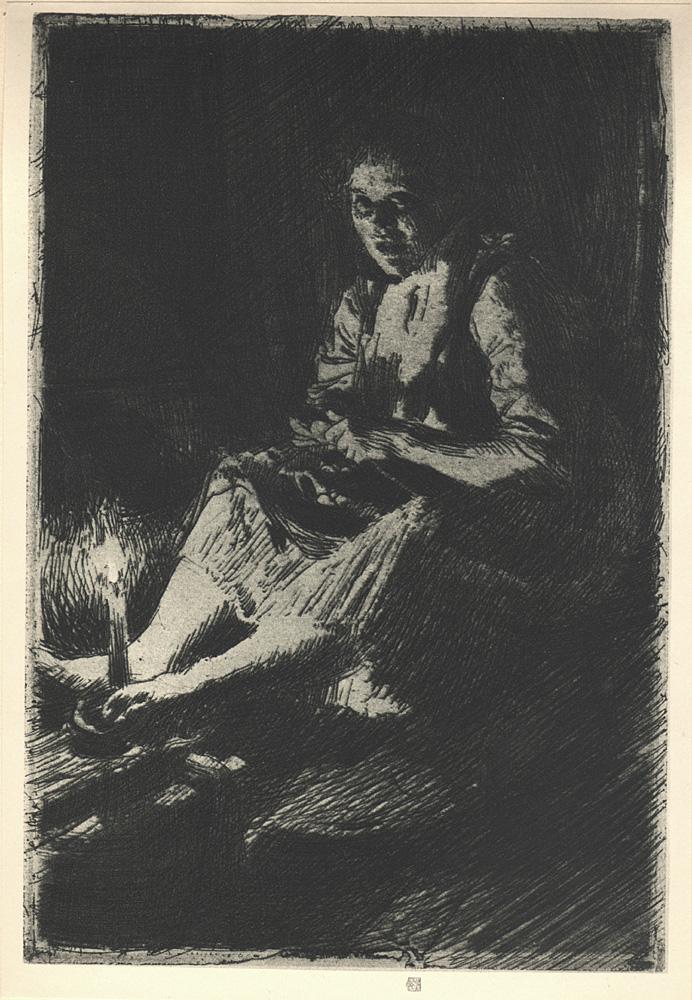

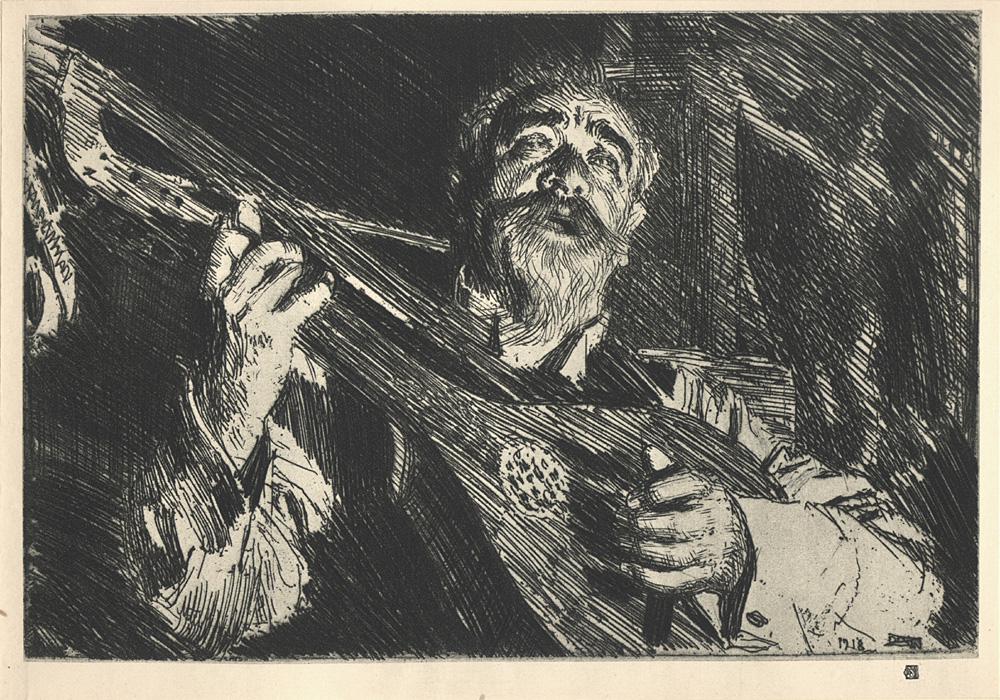

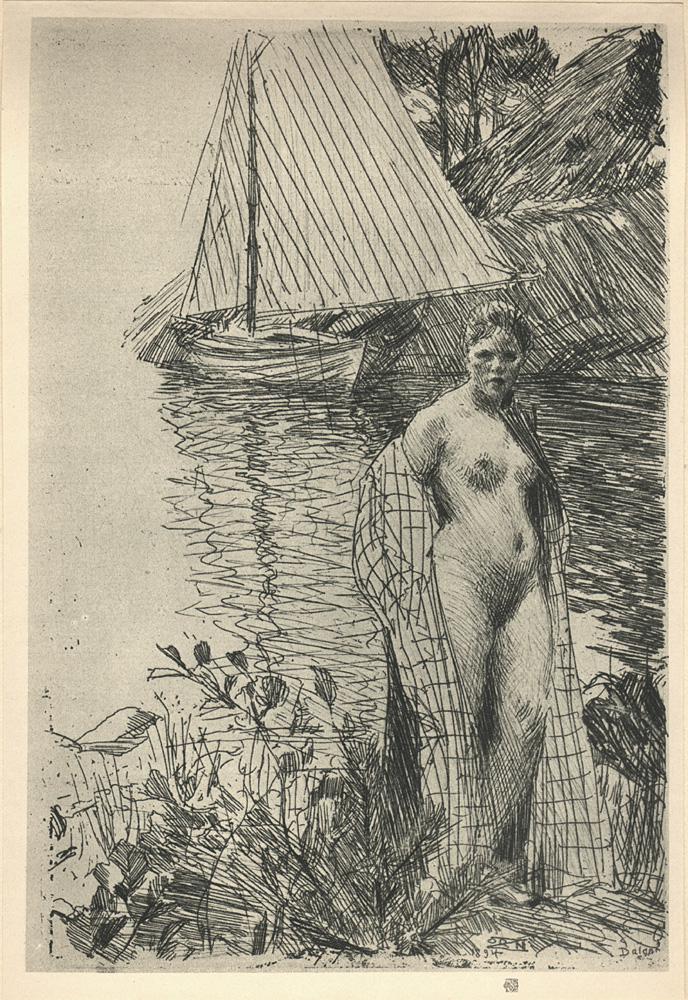

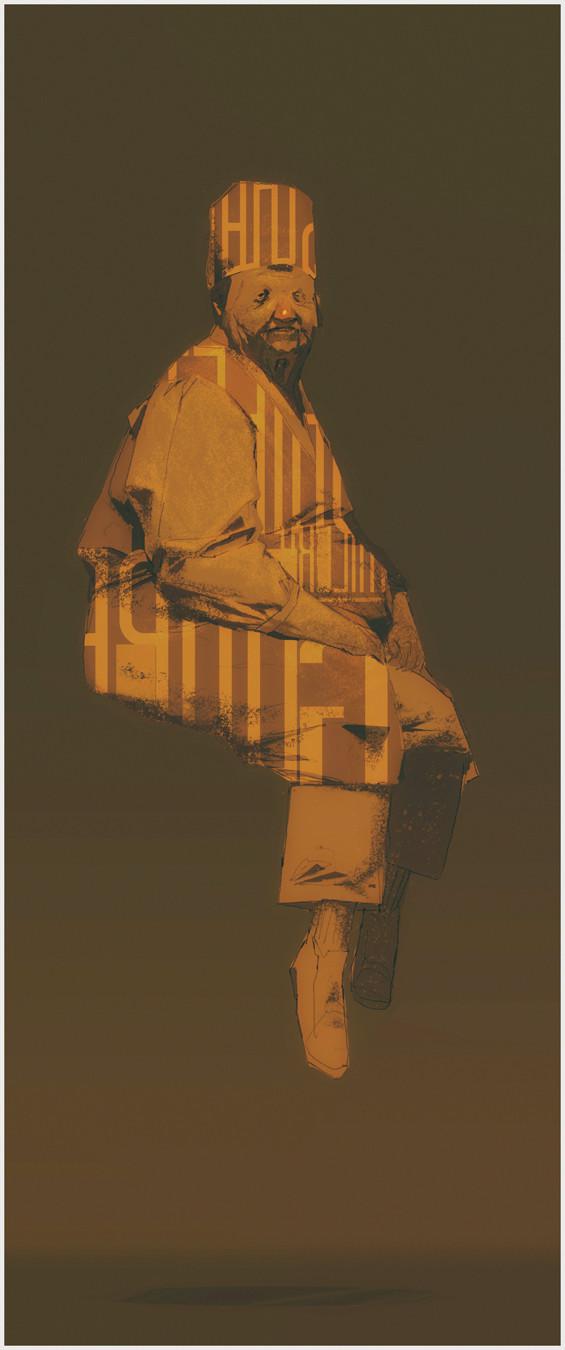

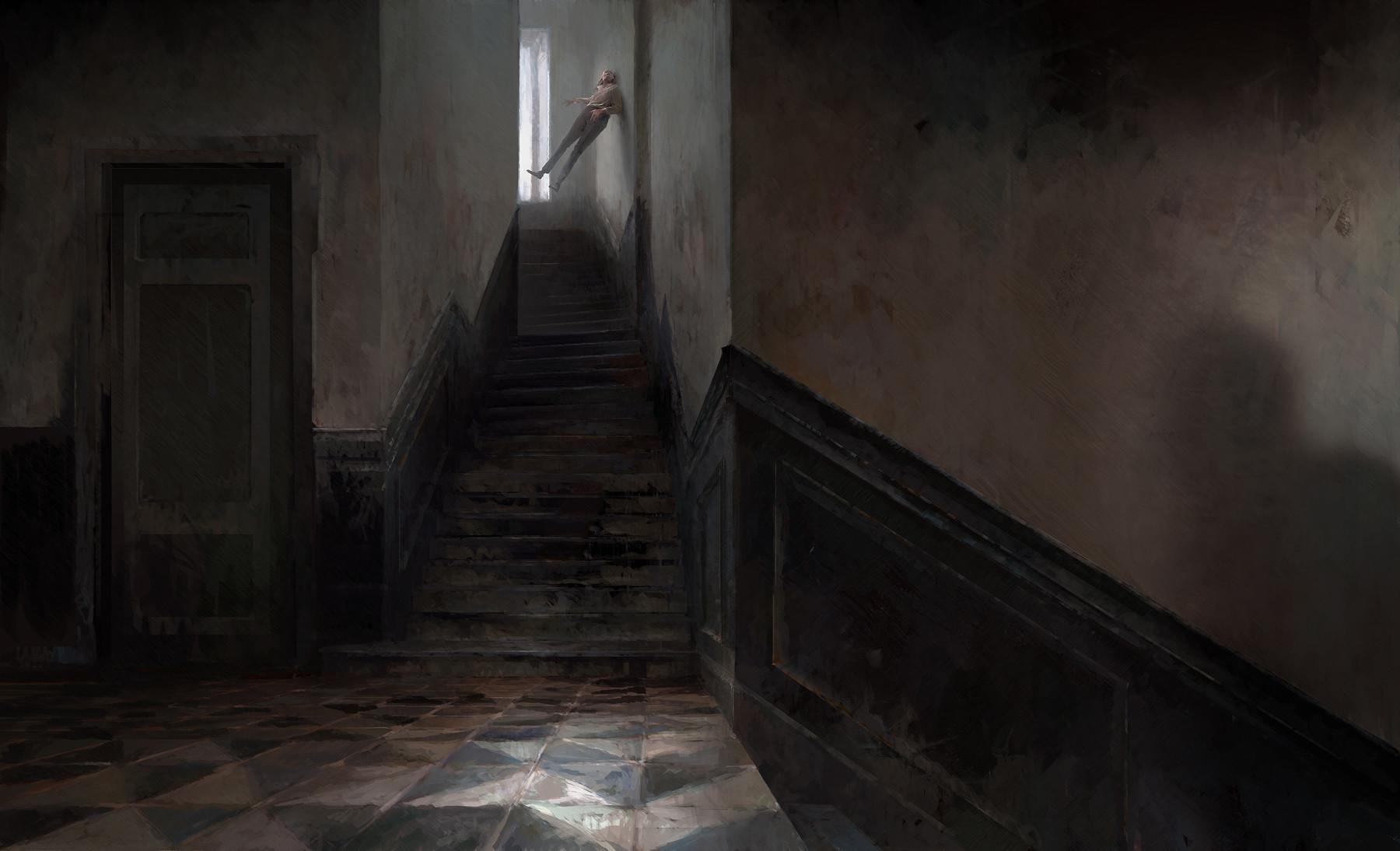

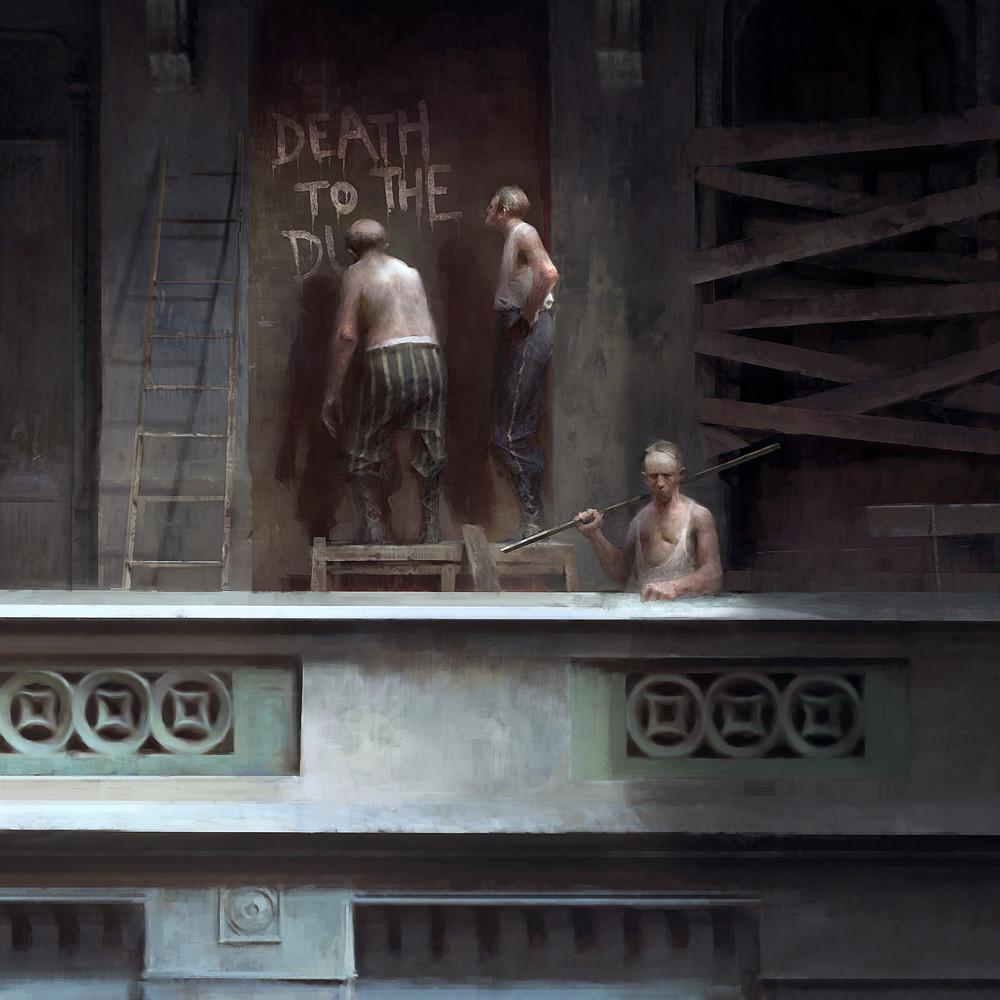

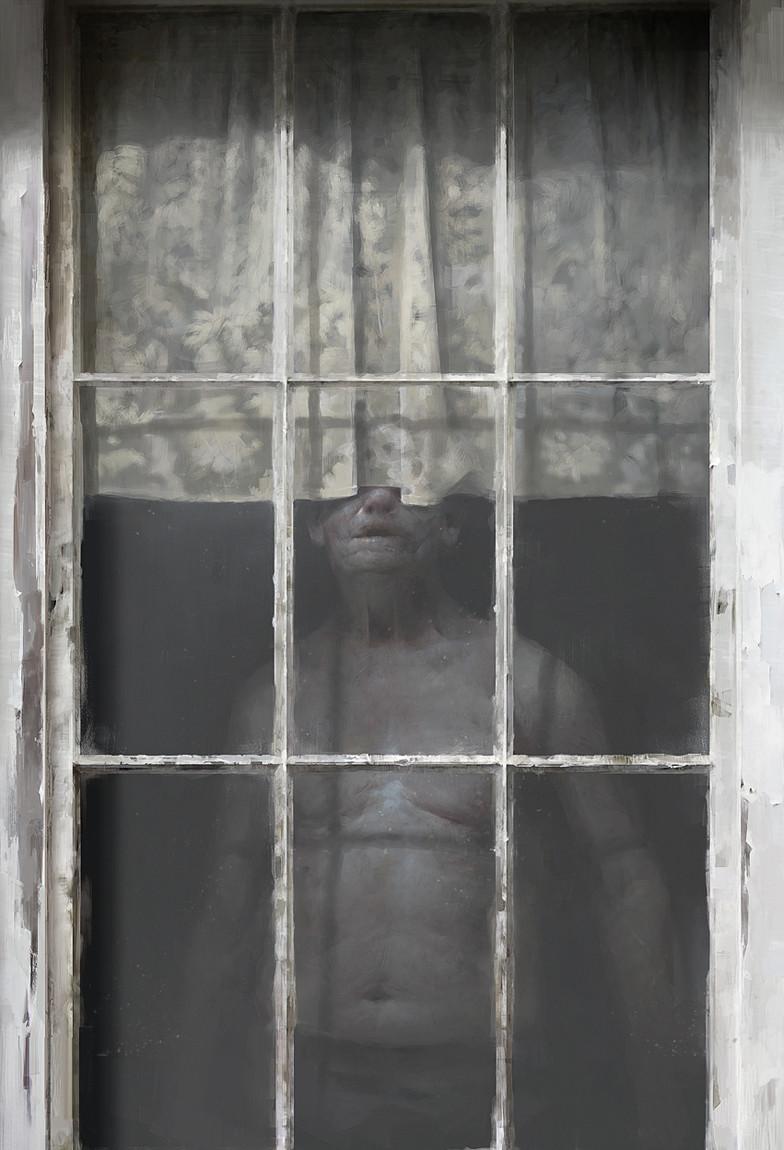

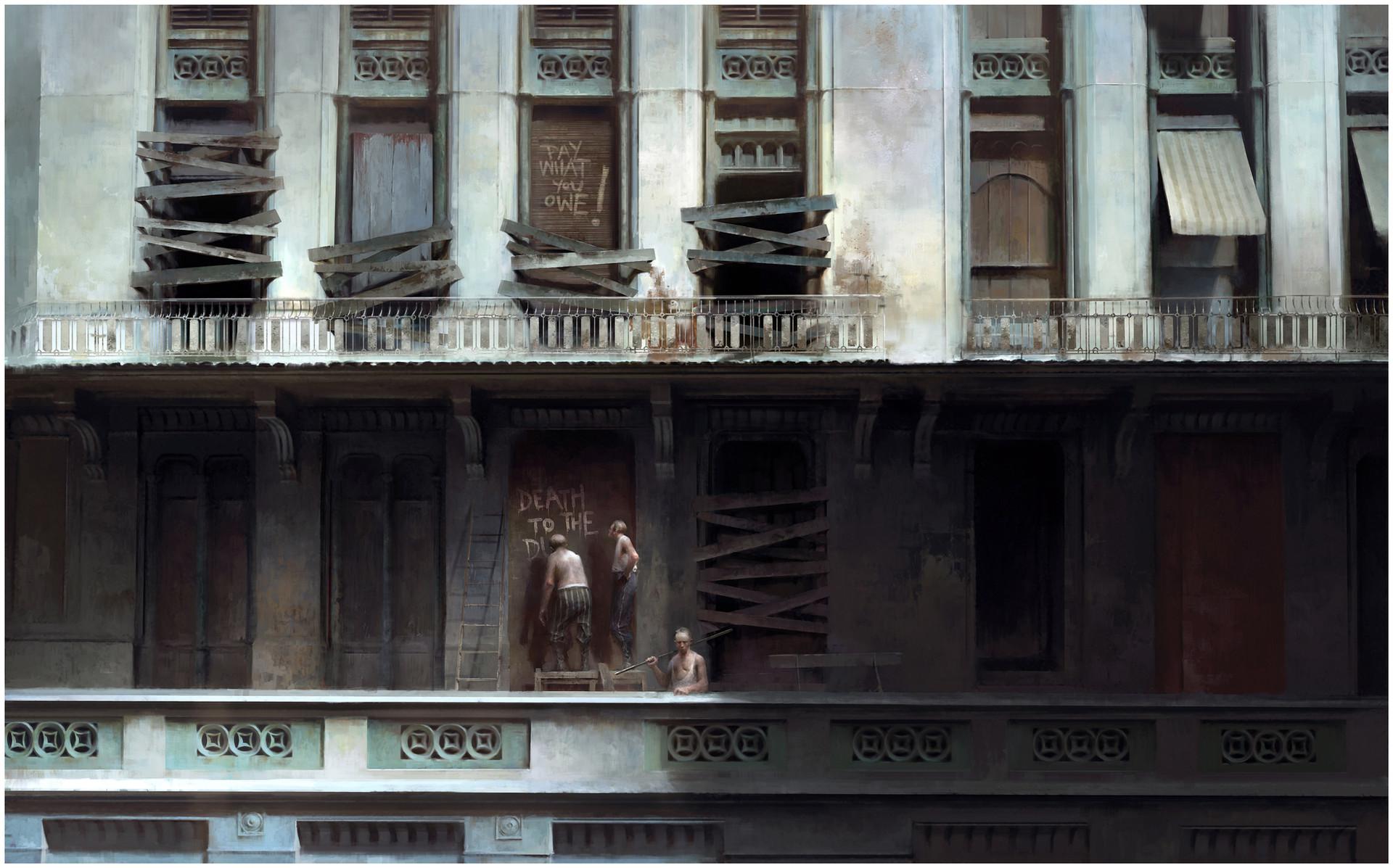

### Anders Zorn on Stable Diffusion

This is the `<anders-zorn>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

Ayham/robertagpt2_xsum2 | [

"pytorch",

"tensorboard",

"encoder-decoder",

"text2text-generation",

"transformers",

"generated_from_trainer",

"autotrain_compatible"

]

| text2text-generation | {

"architectures": [

"EncoderDecoderModel"

],

"model_type": "encoder-decoder",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 6 | null | ---

language:

- pt

thumbnail: "Portuguese BERT for the Legal Domain"

tags:

- bert

- pytorch

- tsdae

datasets:

- rufimelo/PortugueseLegalSentences-v1

license: "mit"

widget:

- text: "O advogado apresentou [MASK] ao juíz."

---

# Legal_BERTimbau

## Introduction

Legal_BERTimbau Large is a fine-tuned BERT model based on [BERTimbau](https://huggingface.co/neuralmind/bert-base-portuguese-cased) Large.

"BERTimbau Base is a pretrained BERT model for Brazilian Portuguese that achieves state-of-the-art performances on three downstream NLP tasks: Named Entity Recognition, Sentence Textual Similarity and Recognizing Textual Entailment. It is available in two sizes: Base and Large.

For further information or requests, please go to [BERTimbau repository](https://github.com/neuralmind-ai/portuguese-bert/)."

The performance of language models can change drastically when there is a domain shift between training and test data. To create a Portuguese language model adapted to the legal domain, the original BERTimbau model went through a fine-tuning stage consisting of 1 "pre-training" epoch over 50,000 cleaned documents (learning rate 2e-5, using the TSDAE technique).
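
TSDAE (Transformer-based Sequential Denoising Auto-Encoder) adapts a model without labels: each sentence is corrupted, and the model learns to reconstruct the original from the embedding of the corrupted version. The sketch below illustrates only the corruption step, using token deletion. The 0.6 deletion ratio, the whitespace tokenization, and the name `delete_tokens` are assumptions for illustration; the card does not state the noise settings that were actually used.

```python
import random


def delete_tokens(sentence: str, del_ratio: float = 0.6, seed=None) -> str:
    """Return a noisy copy of `sentence` with roughly `del_ratio` of its tokens removed."""
    rng = random.Random(seed)
    tokens = sentence.split()
    if not tokens:
        return sentence
    kept = [tok for tok in tokens if rng.random() >= del_ratio]
    if not kept:
        # Keep one random token so the encoder never sees an empty input.
        kept = [rng.choice(tokens)]
    return " ".join(kept)


original = "O advogado apresentou recurso para o juíz"
noisy = delete_tokens(original, seed=0)
# A denoising objective would train the model to rebuild `original` from `noisy`.
print(noisy, "->", original)
```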

## Available models

| Model | Arch. | #Layers | #Params |

| ---------------------------------------- | ---------- | ------- | ------- |

| `rufimelo/Legal-BERTimbau-base` | BERT-Base | 12 | 110M |

| `rufimelo/Legal-BERTimbau-large` | BERT-Large | 24 | 335M |

## Usage

```python

from transformers import AutoTokenizer, AutoModelForMaskedLM

tokenizer = AutoTokenizer.from_pretrained("rufimelo/Legal-BERTimbau-large-TSDAE")

model = AutoModelForMaskedLM.from_pretrained("rufimelo/Legal-BERTimbau-large-TSDAE")

```

### Masked language modeling prediction example

```python

from transformers import pipeline

from transformers import AutoTokenizer, AutoModelForMaskedLM

tokenizer = AutoTokenizer.from_pretrained("rufimelo/Legal-BERTimbau-large-TSDAE")

model = AutoModelForMaskedLM.from_pretrained("rufimelo/Legal-BERTimbau-large-TSDAE")

pipe = pipeline('fill-mask', model=model, tokenizer=tokenizer)

pipe('O advogado apresentou [MASK] para o juíz')

# [{'score': 0.5034703612327576,

#'token': 8190,

#'token_str': 'recurso',

#'sequence': 'O advogado apresentou recurso para o juíz'},

#{'score': 0.07347951829433441,

#'token': 21973,

#'token_str': 'petição',

#'sequence': 'O advogado apresentou petição para o juíz'},

#{'score': 0.05165359005331993,

#'token': 4299,

#'token_str': 'resposta',

#'sequence': 'O advogado apresentou resposta para o juíz'},

#{'score': 0.04611917585134506,

#'token': 5265,

#'token_str': 'exposição',

#'sequence': 'O advogado apresentou exposição para o juíz'},

#{'score': 0.04068068787455559,

#'token': 19737,

#'token_str': 'alegações',

#'sequence': 'O advogado apresentou alegações para o juíz'}]

```
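
The pipeline returns a list of dictionaries, so post-processing is plain Python. As a small sketch, the snippet below copies the scores and tokens from the example output above and filters them by score; the `min_score` threshold and the helper name `confident_tokens` are hypothetical choices, not part of the `transformers` API.

```python
# Scores and tokens copied from the fill-mask output shown above
predictions = [
    {"score": 0.5034703612327576, "token_str": "recurso"},
    {"score": 0.07347951829433441, "token_str": "petição"},
    {"score": 0.05165359005331993, "token_str": "resposta"},
    {"score": 0.04611917585134506, "token_str": "exposição"},
    {"score": 0.04068068787455559, "token_str": "alegações"},
]


def confident_tokens(preds, min_score=0.05):
    """Return the candidate tokens whose probability is at least `min_score`."""
    return [p["token_str"] for p in preds if p["score"] >= min_score]


best = max(predictions, key=lambda p: p["score"])
covered = sum(p["score"] for p in predictions)

print(best["token_str"])
print(confident_tokens(predictions))
print(f"top-5 probability mass: {covered:.3f}")
```

The five candidates together cover only part of the probability mass, which is worth keeping in mind before treating the top prediction as certain.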

### For BERT embeddings

```python

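import torch
from transformers import AutoModel, AutoTokenizer

# Load the tokenizer alongside the model so this snippet runs on its own
tokenizer = AutoTokenizer.from_pretrained('rufimelo/Legal-BERTimbau-large-TSDAE')
model = AutoModel.from_pretrained('rufimelo/Legal-BERTimbau-large-TSDAE')

input_ids = tokenizer.encode('O advogado apresentou recurso para o juíz', return_tensors='pt')

with torch.no_grad():
    outs = model(input_ids)
    # Drop the [CLS] and [SEP] positions, keeping one vector per remaining token
    encoded = outs[0][0, 1:-1]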

#tensor([[ 0.0328, -0.4292, -0.6230, ..., -0.3048, -0.5674, 0.0157],

#[-0.3569, 0.3326, 0.7013, ..., -0.7778, 0.2646, 1.1310],

#[ 0.3169, 0.4333, 0.2026, ..., 1.0517, -0.1951, 0.7050],

#...,

#[-0.3648, -0.8137, -0.4764, ..., -0.2725, -0.4879, 0.6264],

#[-0.2264, -0.1821, -0.3011, ..., -0.5428, 0.1429, 0.0509],

#[-1.4617, 0.6281, -0.0625, ..., -1.2774, -0.4491, 0.3131]])

```
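
The tensor above holds one vector per token. If a single vector per sentence is needed, one common option is mean pooling followed by cosine similarity. The sketch below shows the idea on plain Python lists with toy two-dimensional vectors; the card does not say which pooling strategy the author intended, and `mean_pool` and `cosine` are illustrative helper names.

```python
import math


def mean_pool(token_vectors):
    """Average a list of equal-length token vectors into one sentence vector."""
    count = len(token_vectors)
    dim = len(token_vectors[0])
    return [sum(vec[i] for vec in token_vectors) / count for i in range(dim)]


def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


# Toy stand-ins for the per-token embeddings of two sentences
sentence_a = [[1.0, 0.0], [0.0, 1.0]]
sentence_b = [[2.0, 2.0]]
print(cosine(mean_pool(sentence_a), mean_pool(sentence_b)))
```

With real model output, `encoded.tolist()` would provide the nested list expected by `mean_pool`.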

## Citation

If you use this work, please cite BERTimbau's work:

```bibtex

@inproceedings{souza2020bertimbau,

author = {F{\'a}bio Souza and

Rodrigo Nogueira and

Roberto Lotufo},

title = {{BERT}imbau: pretrained {BERT} models for {B}razilian {P}ortuguese},

booktitle = {9th Brazilian Conference on Intelligent Systems, {BRACIS}, Rio Grande do Sul, Brazil, October 20-23 (to appear)},

year = {2020}

}

```

|

Ayham/xlnet_distilgpt2_summarization_cnn_dailymail | [

"pytorch",

"tensorboard",

"encoder-decoder",

"text2text-generation",

"dataset:cnn_dailymail",

"transformers",

"generated_from_trainer",

"autotrain_compatible"

]

| text2text-generation | {

"architectures": [

"EncoderDecoderModel"

],

"model_type": "encoder-decoder",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 13 | 2022-11-01T01:14:08Z | ---

license: mit

tags:

- generated_from_keras_callback

model-index:

- name: gabrielgmendonca/bert-base-portuguese-cased-finetuned-enjoei

results: []

---

# gabrielgmendonca/bert-base-portuguese-cased-finetuned-enjoei

This model is a fine-tuned version of [neuralmind/bert-base-portuguese-cased](https://huggingface.co/neuralmind/bert-base-portuguese-cased)

on a teaching dataset extracted from https://www.enjoei.com.br/.

It achieves the following results on the evaluation set:

- Train Loss: 6.0784

- Validation Loss: 5.2882

- Epoch: 2

## Intended uses & limitations

This model is intended for **educational purposes**.

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'WarmUp', 'config': {'initial_learning_rate': 2e-05, 'decay_schedule_fn': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': -985, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, '__passive_serialization__': True}, 'warmup_steps': 1000, 'power': 1.0, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32
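
The logged optimizer configuration is easier to read with numbers plugged in. The sketch below reproduces only the warm-up part of it (`initial_learning_rate` 2e-05, `warmup_steps` 1000, `power` 1.0). It assumes that `decay_steps` was computed as total training steps minus warm-up steps; under that assumption a value of -985 would correspond to about 15 optimizer steps in total. `warmup_lr` is an illustrative name, not a library function.

```python
def warmup_lr(step: int, initial_lr: float = 2e-05, warmup_steps: int = 1000,
              power: float = 1.0) -> float:
    """Learning rate during the warm-up phase (only valid for step < warmup_steps)."""
    if not 0 <= step < warmup_steps:
        raise ValueError("this sketch only covers the warm-up phase")
    return initial_lr * (step / warmup_steps) ** power


# Assumption: decay_steps = total_steps - warmup_steps, so total_steps = 1000 + (-985)
total_steps = 1000 + (-985)
for step in range(0, total_steps, 5):
    print(step, warmup_lr(step))
print("last step:", warmup_lr(total_steps - 1))
```

If the assumption holds, training ended while the schedule was still warming up, and the learning rate stayed far below the configured 2e-05.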

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 6.3618 | 5.7723 | 0 |

| 6.3353 | 5.4076 | 1 |

| 6.0784 | 5.2882 | 2 |

### Framework versions

- Transformers 4.23.1

- TensorFlow 2.9.2

- Datasets 2.6.1

- Tokenizers 0.13.1

|

Ayta/Haha | []

| null | {

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 0 | null | ---

tags:

- conversational

---

# Marina DialoGPT Model |

Ayumi/Jovana | []

| null | {

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 0 | null | ---

language: en

thumbnail: http://www.huggingtweets.com/_electricviews_/1667270688148/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1585640841099743233/NrT5Y7dh_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">ElectricViews</div>

<div style="text-align: center; font-size: 14px;">@_electricviews_</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from ElectricViews.

| Data | ElectricViews |

| --- | --- |

| Tweets downloaded | 863 |

| Retweets | 79 |

| Short tweets | 121 |

| Tweets kept | 663 |
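
The rows of the table are consistent with a simple filter: tweets kept equals tweets downloaded minus retweets minus short tweets. The check below uses only the numbers from the table; that the filtering consists of exactly these two exclusions is an assumption based on the arithmetic.

```python
downloaded = 863
retweets = 79
short_tweets = 121

kept = downloaded - retweets - short_tweets
print(kept)  # 663, matching the "Tweets kept" row
print(f"{kept / downloaded:.1%} of downloaded tweets were kept")
```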

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/jeu1b88j/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @_electricviews_'s tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/1mrestz4) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/1mrestz4/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/_electricviews_')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the [project repository](https://github.com/borisdayma/huggingtweets).

|

AyushPJ/ai-club-inductions-21-nlp-XLNet | [

"pytorch",

"xlnet",

"question-answering",

"transformers",

"generated_from_trainer",

"autotrain_compatible"

]

| question-answering | {

"architectures": [

"XLNetForQuestionAnsweringSimple"

],

"model_type": "xlnet",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": true,

"max_length": 250

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 9 | null | data: https://github.com/BigSalmon2/InformalToFormalDataset

Text Generation Informal Formal

```

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("BigSalmon/InformalToFormalLincoln88Paraphrase")

model = AutoModelForCausalLM.from_pretrained("BigSalmon/InformalToFormalLincoln88Paraphrase")

```

```

Demo:

https://huggingface.co/spaces/BigSalmon/FormalInformalConciseWordy

```

```

prompt = """informal english: corn fields are all across illinois, visible once you leave chicago.\nTranslated into the Style of Abraham Lincoln:"""

input_ids = tokenizer.encode(prompt, return_tensors='pt')

outputs = model.generate(input_ids=input_ids,

max_length=10 + len(prompt),

temperature=1.0,

top_k=50,

top_p=0.95,

do_sample=True,

num_return_sequences=5,

early_stopping=True)

for i in range(5):
    print(tokenizer.decode(outputs[i]))

```

Most likely outputs (Disclaimer: I highly recommend using this over just generating):

```

prompt = """informal english: corn fields are all across illinois, visible once you leave chicago.\nTranslated into the Style of Abraham Lincoln:"""

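import torch

# Run on GPU when available; the model and the inputs must share a device
device = "cuda" if torch.cuda.is_available() else "cpu"
model = model.to(device)

text = tokenizer.encode(prompt)
myinput, past_key_values = torch.tensor([text]), None
myinput = myinput.to(device)

# return_dict=False makes the model return a (logits, past_key_values) tuple
logits, past_key_values = model(myinput, past_key_values=past_key_values, return_dict=False)
logits = logits[0, -1]  # logits for the next token only
probabilities = torch.nn.functional.softmax(logits, dim=-1)

# The 250 most likely next tokens, most likely first
best_logits, best_indices = logits.topk(250)
best_words = [tokenizer.decode([idx.item()]) for idx in best_indices]
text.append(best_indices[0].item())
best_probabilities = probabilities[best_indices].tolist()

print(best_words)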

```
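
For readers who want to see what the snippet above computes without loading a model, here is a dependency-free sketch of the same two steps: a softmax over next-token logits, then a top-k ranking. The toy vocabulary and logits are made up for illustration, and `softmax` and `top_k` are plain helper functions, not library calls.

```python
import math


def softmax(logits):
    """Convert raw logits into probabilities that sum to 1."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(logits, vocab, k):
    """Return the k most likely (token, probability) pairs, most likely first."""
    probs = softmax(logits)
    ranked = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    return [(vocab[i], probs[i]) for i in ranked]


# Hypothetical next-token candidates and scores
toy_vocab = [" corn", " fields", " the", " illinois"]
toy_logits = [2.0, 0.5, 1.0, -1.0]
for word, prob in top_k(toy_logits, toy_vocab, k=2):
    print(repr(word), round(prob, 3))
```

Ranking by logits or by probabilities gives the same order, because softmax is monotonic.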

```

How To Make Prompt:

informal english: i am very ready to do that just that.

Translated into the Style of Abraham Lincoln: you can assure yourself of my readiness to work toward this end.

Translated into the Style of Abraham Lincoln: please be assured that i am most ready to undertake this laborious task.

***

informal english: space is huge and needs to be explored.

Translated into the Style of Abraham Lincoln: space awaits traversal, a new world whose boundaries are endless.

Translated into the Style of Abraham Lincoln: space is a ( limitless / boundless ) expanse, a vast virgin domain awaiting exploration.

***

informal english: corn fields are all across illinois, visible once you leave chicago.

Translated into the Style of Abraham Lincoln: corn fields ( permeate illinois / span the state of illinois / ( occupy / persist in ) all corners of illinois / line the horizon of illinois / envelop the landscape of illinois ), manifesting themselves visibly as one ventures beyond chicago.

informal english:

```
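
The prompt format above is regular enough to assemble programmatically. The helper below is a minimal sketch that joins example pairs with the `***` separator and ends with the target label, so the model continues from there. `build_prompt` and its argument layout are assumptions for illustration, not part of the model's tooling.

```python
SEPARATOR = "***"
TARGET_LABEL = "Translated into the Style of Abraham Lincoln:"


def build_prompt(examples, query):
    """Build a few-shot prompt.

    examples: list of (informal sentence, list of formal rewrites) pairs.
    query: the new informal sentence the model should rewrite.
    """
    blocks = []
    for informal, rewrites in examples:
        lines = [f"informal english: {informal}"]
        lines += [f"{TARGET_LABEL} {rewrite}" for rewrite in rewrites]
        blocks.append("\n".join(lines))
    # Leave the final label open so generation starts right after it
    blocks.append(f"informal english: {query}\n{TARGET_LABEL}")
    return f"\n{SEPARATOR}\n".join(blocks)


prompt = build_prompt(
    [("space is huge and needs to be explored.",
      ["space awaits traversal, a new world whose boundaries are endless."])],
    "corn fields are all across illinois, visible once you leave chicago.",
)
print(prompt)
```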

```

original: microsoft word's [MASK] pricing invites competition.

Translated into the Style of Abraham Lincoln: microsoft word's unconscionable pricing invites competition.

***

original: the library’s quiet atmosphere encourages visitors to [blank] in their work.

Translated into the Style of Abraham Lincoln: the library’s quiet atmosphere encourages visitors to immerse themselves in their work.

```

```

Essay Intro (Warriors vs. Rockets in Game 7):

text: eagerly anticipated by fans, game 7's are the highlight of the post-season.

text: ever-building in suspense, game 7's have the crowd captivated.

***

Essay Intro (South Korean TV Is Becoming Popular):

text: maturing into a bona fide paragon of programming, south korean television ( has much to offer / entertains without fail / never disappoints ).

text: increasingly held in critical esteem, south korean television continues to impress.

text: at the forefront of quality content, south korea is quickly achieving celebrity status.

***

Essay Intro (

```

```

Search: What is the definition of Checks and Balances?

https://en.wikipedia.org/wiki/Checks_and_balances

Checks and Balances is the idea of having a system where each and every action in government should be subject to one or more checks that would not allow one branch or the other to overly dominate.

https://www.harvard.edu/glossary/Checks_and_Balances

Checks and Balances is a system that allows each branch of government to limit the powers of the other branches in order to prevent abuse of power

https://www.law.cornell.edu/library/constitution/Checks_and_Balances

Checks and Balances is a system of separation through which branches of government can control the other, thus preventing excess power.

***

Search: What is the definition of Separation of Powers?

https://en.wikipedia.org/wiki/Separation_of_powers

The separation of powers is a principle in government, whereby governmental powers are separated into different branches, each with its own set of powers, which prevents one branch from aggregating too much power.

https://www.yale.edu/tcf/Separation_of_Powers.html

Separation of Powers is the division of governmental functions between the executive, legislative and judicial branches, clearly demarcating each branch's authority, in the interest of ensuring that individual liberty or security is not undermined.

***

Search: What is the definition of Connection of Powers?

https://en.wikipedia.org/wiki/Connection_of_powers

Connection of Powers is a feature of some parliamentary forms of government where different branches of government are intermingled, typically the executive and legislative branches.

https://simple.wikipedia.org/wiki/Connection_of_powers

The term Connection of Powers describes a system of government in which there is overlap between different parts of the government.

***

Search: What is the definition of

```

```

Search: What are phrase synonyms for "second-guess"?

https://www.powerthesaurus.org/second-guess/synonyms

Shortest to Longest:

- feel dubious about

- raise an eyebrow at

- wrinkle their noses at

- cast a jaundiced eye at

- teeter on the fence about

***

Search: What are phrase synonyms for "mean to newbies"?

https://www.powerthesaurus.org/mean_to_newbies/synonyms

Shortest to Longest:

- readiness to balk at rookies

- absence of tolerance for novices

- hostile attitude toward newcomers

***

Search: What are phrase synonyms for "make use of"?

https://www.powerthesaurus.org/make_use_of/synonyms

Shortest to Longest:

- call upon

- glean value from

- reap benefits from

- derive utility from

- seize on the merits of

- draw on the strength of

- tap into the potential of

***

Search: What are phrase synonyms for "hurting itself"?

https://www.powerthesaurus.org/hurting_itself/synonyms

Shortest to Longest:

- erring

- slighting itself

- forfeiting its integrity

- doing itself a disservice

- evincing a lack of backbone

***

Search: What are phrase synonyms for "

```

```

- nebraska

- unicameral legislature

- different from federal house and senate

text: featuring a unicameral legislature, nebraska's political system stands in stark contrast to the federal model, comprised of a house and senate.

***

- penny has practically no value

- should be taken out of circulation

- just as other coins have been in us history

- lost use

- value not enough

- to make environmental consequences worthy

text: all but valueless, the penny should be retired. as with other coins in american history, it has become defunct. too minute to warrant the environmental consequences of its production, it has outlived its usefulness.

***

-

```

```

original: sports teams are profitable for owners. [MASK], their valuations experience a dramatic uptick.

infill: sports teams are profitable for owners. ( accumulating vast sums / stockpiling treasure / realizing benefits / cashing in / registering robust financials / scoring on balance sheets ), their valuations experience a dramatic uptick.

***

original:

```

```

wordy: classical music is becoming less popular more and more.

Translate into Concise Text: interest in classical music is fading.

***

wordy:

```

```

sweet: savvy voters ousted him.

longer: voters who were informed delivered his defeat.

***

sweet:

```

```

1: commercial space company spacex plans to launch a whopping 52 flights in 2022.

2: spacex, a commercial space company, intends to undertake a total of 52 flights in 2022.

3: in 2022, commercial space company spacex has its sights set on undertaking 52 flights.

4: 52 flights are in the pipeline for 2022, according to spacex, a commercial space company.

5: a commercial space company, spacex aims to conduct 52 flights in 2022.

***

1:

```

Keywords to sentences or sentence.

```

ngos are characterized by:

□ voluntary citizens' group that is organized on a local, national or international level

□ encourage political participation

□ often serve humanitarian functions

□ work for social, economic, or environmental change

***

what are the drawbacks of living near an airbnb?

□ noise

□ parking

□ traffic

□ security

□ strangers

***

```

```

original: musicals generally use spoken dialogue as well as songs to convey the story. operas are usually fully sung.

adapted: musicals generally use spoken dialogue as well as songs to convey the story. ( in a stark departure / on the other hand / in contrast / by comparison / at odds with this practice / far from being alike / in defiance of this standard / running counter to this convention ), operas are usually fully sung.

***

original: akoya and tahitian are types of pearls. akoya pearls are mostly white, and tahitian pearls are naturally dark.

adapted: akoya and tahitian are types of pearls. ( a far cry from being indistinguishable / easily distinguished / on closer inspection / setting them apart / not to be mistaken for one another / hardly an instance of mere synonymy / differentiating the two ), akoya pearls are mostly white, and tahitian pearls are naturally dark.

***

original:

```

```

original: had trouble deciding.

translated into journalism speak: wrestled with the question, agonized over the matter, furrowed their brows in contemplation.

***

original:

```

```

input: not loyal

1800s english: ( two-faced / inimical / perfidious / duplicitous / mendacious / double-dealing / shifty ).

***

input:

```

```

first: ( was complicit in / was involved in ).

antonym: ( was blameless / was not an accomplice to / had no hand in / was uninvolved in ).

***

first: ( have no qualms about / see no issue with ).

antonym: ( are deeply troubled by / harbor grave reservations about / have a visceral aversion to / take ( umbrage at / exception to ) / are wary of ).

***

first: ( do not see eye to eye / disagree often ).

antonym: ( are in sync / are united / have excellent rapport / are like-minded / are in step / are of one mind / are in lockstep / operate in perfect harmony / march in lockstep ).

***

first:

```

```

stiff with competition, law school {A} is the launching pad for countless careers, {B} is a crowded field, {C} ranks among the most sought-after professional degrees, {D} is a professional proving ground.

***

languishing in viewership, saturday night live {A} is due for a creative renaissance, {B} is no longer a ratings juggernaut, {C} has been eclipsed by its imitators, {D} can still find its mojo.

***

dubbed the "manhattan of the south," atlanta {A} is a bustling metropolis, {B} is known for its vibrant downtown, {C} is a city of rich history, {D} is the pride of georgia.

***

embattled by scandal, harvard {A} is feeling the heat, {B} cannot escape the media glare, {C} is facing its most intense scrutiny yet, {D} is in the spotlight for all the wrong reasons.

```

Infill / Infilling / Masking / Phrase Masking (Works pretty decently actually, especially when you use logprobs code from above):

```

his contention [blank] by the evidence [sep] was refuted [answer]

***

few sights are as [blank] new york city as the colorful, flashing signage of its bodegas [sep] synonymous with [answer]

***

when rick won the lottery, all of his distant relatives [blank] his winnings [sep] clamored for [answer]

***

the library’s quiet atmosphere encourages visitors to [blank] in their work [sep] immerse themselves [answer]

***

the joy of sport is that no two games are alike. for every exhilarating experience, however, there is an interminable one. the national pastime, unfortunately, has a penchant for the latter. what begins as a summer evening at the ballpark can quickly devolve into a game of tedium. the primary culprit is the [blank] of play. from batters readjusting their gloves to fielders spitting on their mitts, the action is [blank] unnecessary interruptions. the sport's future is [blank] if these tendencies are not addressed [sep] plodding pace [answer] riddled with [answer] bleak [answer]

***

microsoft word's [blank] pricing [blank] competition [sep] unconscionable [answer] invites [answer]

***

```
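
In the infill format above, everything before `[sep]` is the text with `[blank]` slots, and everything after it lists the fillers in order, each terminated by `[answer]`. The sketch below turns one such line back into a complete sentence; `fill_blanks` is a hypothetical helper written for this illustration.

```python
def fill_blanks(example: str) -> str:
    """Substitute each [blank] with the matching answer listed after [sep]."""
    text, _, answer_part = example.partition("[sep]")
    answers = [a.strip() for a in answer_part.split("[answer]") if a.strip()]
    if text.count("[blank]") != len(answers):
        raise ValueError("number of blanks and answers differ")
    for answer in answers:
        # Replace one blank at a time, left to right
        text = text.replace("[blank]", answer, 1)
    return text.strip()


example = ("microsoft word's [blank] pricing [blank] competition "
           "[sep] unconscionable [answer] invites [answer]")
print(fill_blanks(example))
```

The same function can be applied to model output: generate after `[sep]`, then pass the full line through `fill_blanks` to read the completed text.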

Backwards

```

Essay Intro (National Parks):

text: tourists are at ease in the national parks, ( swept up in the beauty of their natural splendor ).

***

Essay Intro (D.C. Statehood):

washington, d.c. is a city of outsize significance, ( ground zero for the nation's political life / center stage for the nation's political machinations ).

```

```

topic: the Golden State Warriors.

characterization 1: the reigning kings of the NBA.

characterization 2: possessed of a remarkable cohesion.

characterization 3: helmed by superstar Stephen Curry.

characterization 4: perched atop the league’s hierarchy.

characterization 5: boasting a litany of hall-of-famers.

***

topic: emojis.

characterization 1: shorthand for a digital generation.

characterization 2: more versatile than words.

characterization 3: the latest frontier in language.

characterization 4: a form of self-expression.

characterization 5: quintessentially millennial.

characterization 6: reflective of a tech-centric world.

***

topic:

```

```

regular: illinois went against the census' population-loss prediction by getting more residents.

VBG: defying the census' prediction of population loss, illinois experienced growth.

***

regular: microsoft word’s high pricing increases the likelihood of competition.

VBG: extortionately priced, microsoft word is inviting competition.

***

regular:

```

```

source: badminton should be more popular in the US.

QUERY: Based on the given topic, can you develop a story outline?

target: (1) games played with racquets are popular, (2) just look at tennis and ping pong, (3) but badminton underappreciated, (4) fun, fast-paced, competitive, (5) needs to be marketed more

text: the sporting arena is dominated by games that are played with racquets. tennis and ping pong, in particular, are immensely popular. somewhat curiously, however, badminton is absent from this pantheon. exciting, fast-paced, and competitive, it is an underappreciated pastime. all that it lacks is more effective marketing.

***

source: movies in theaters should be free.

QUERY: Based on the given topic, can you develop a story outline?

target: (1) movies provide vital life lessons, (2) many venues charge admission, (3) those without much money

text: the lessons that movies impart are far from trivial. the vast catalogue of cinematic classics is replete with inspiring sagas of friendship, bravery, and tenacity. it is regrettable, then, that admission to theaters is not free. in their current form, the doors of this most vital of institutions are closed to those who lack the means to pay.

***

source:

```

```

in the private sector, { transparency } is vital to the business’s credibility. the { disclosure of information } can be the difference between success and failure.

***

the labor market is changing, with { remote work } now the norm. this { flexible employment } allows the individual to design their own schedule.

***

the { cubicle } is the locus of countless grievances. many complain that the { enclosed workspace } restricts their freedom of movement.

***

```

```

it would be natural to assume that americans, as a people whose ancestors { immigrated to this country }, would be sympathetic to those seeking to do likewise.

question: what does “do likewise” mean in the above context?

(a) make the same journey

(b) share in the promise of the american dream

(c) start anew in the land of opportunity

(d) make landfall on the united states

***

in the private sector, { transparency } is vital to the business’s credibility. this orientation can be the difference between success and failure.

question: what does “this orientation” mean in the above context?

(a) visible business practices

(b) candor with the public

(c) open, honest communication

(d) culture of accountability

```

```

example: suppose you are a teacher. further suppose you want to tell an accurate telling of history. then suppose a parent takes offense. they do so in the name of their kid. this happens a lot.

text: educators' responsibility to remain true to the historical record often clashes with the parent's desire to shelter their child from uncomfortable realities.

***

example: suppose you are a student at college. now suppose you have to buy textbooks. that is going to be worth hundreds of dollars. given how much you already spend on tuition, that is going to be a hard cost to bear.

text: the exorbitant cost of textbooks, which often reaches hundreds of dollars, imposes a sizable financial burden on the already-strapped college student.

```

```

<Prefix> the atlanta hawks may attribute <Prefix> <Suffix> trae young <Suffix> <Middle> their robust season to <Middle>

***

<Prefix> the nobel prize in literature <Prefix> <Suffix> honor <Suffix> <Middle> is a singularly prestigious <Middle>

```

```

accustomed to having its name uttered ______, harvard university is weathering a rare spell of reputational tumult

(a) in reverential tones

(b) with great affection

(c) in adulatory fashion

(d) in glowing terms

```

```

clarify: international ( {working together} / cooperation ) is called for when ( {issue go beyond lots of borders} / an issue transcends borders / a given matter has transnational implications ).

```

```

description: when someone thinks that their view is the only right one.

synonyms: intolerant, opinionated, narrow-minded, insular, self-righteous.

***

description: when you put something off.

synonyms: shelve, defer, table, postpone.

```

```

organic sentence: crowdfunding is about winner of best ideas and it can test an entrepreneur’s idea.

rewrite phrases: meritocratic, viability, vision

rewritten with phrases: the meritocratic nature of crowdfunding empowers entrepreneurs to test their vision's viability.

```

*Note* Of all the masking techniques, this one works the best.

```

<Prefix> the atlanta hawks may attribute <Prefix> <Suffix> trae young <Suffix> <Middle> their robust season to <Middle>

***

<Prefix> the nobel prize in literature <Prefix> <Suffix> honor <Suffix> <Middle> is a singularly prestigious <Middle>

```

```

essence: when someone's views are keeping within reasonable.

refine: the senator's voting record is ( moderate / centrist / pragmatic / balanced / fair-minded / even-handed ).

***

essence: when things are worked through in a petty way.

refine: the propensity of the u.s. congress to settle every dispute by way of ( mudslinging / bickering / demagoguery / name-calling / finger-pointing / vilification ) is appalling.

```

```

music before bedtime [makes for being able to relax] -> is a recipe for relaxation.

```

```

[people wanting entertainment love traveling new york city] -> travelers flock to new york city in droves, drawn to its iconic entertainment scene. [cannot blame them] -> one cannot fault them [broadway so fun] -> when it is home to such thrilling fare as Broadway.

```

```

in their ( ‖ when you are rushing because you want to get there on time ‖ / haste to arrive punctually / mad dash to be timely ), morning commuters are too rushed to whip up their own meal.

***

politicians prefer to author vague plans rather than ( ‖ when you can make a plan without many unknowns ‖ / actionable policies / concrete solutions ).

```

```

Q: What is whistleblower protection?

A: Whistleblower protection is a form of legal immunity granted to employees who expose the unethical practices of their employer.

Q: Why are whistleblower protections important?

A: Absent whistleblower protections, employees would be deterred from exposing their employer’s wrongdoing for fear of retribution.

Q: Why would an employer engage in retribution?

A: An employer who has acted unethically stands to suffer severe financial and reputational damage were their transgressions to become public. To safeguard themselves from these consequences, they might seek to dissuade employees from exposing their wrongdoing.

```

```

original: the meritocratic nature of crowdfunding [MASK] into their vision's viability.

infill: the meritocratic nature of crowdfunding [gives investors idea of how successful] -> ( offers entrepreneurs a window ) into their vision's viability.

```

```

Leadership | Lecture 17: Worker Morale

What Workers Look for in Companies:

• Benefits

o Tuition reimbursement

o Paid parental leave

o 401K matching

o Profit sharing

o Pension plans

o Free meals

• Social responsibility

o Environmental stewardship

o Charitable contributions

o Diversity

• Work-life balance

o Telecommuting

o Paid holidays and vacation

o Casual dress

• Growth opportunities

• Job security

• Competitive compensation

• Recognition

o Open-door policies

o Whistleblower protection

o Employee-of-the-month awards

o Positive performance reviews

o Bonuses

``` |

AyushPJ/ai-club-inductions-21-nlp-roBERTa-base-squad-v2 | [

"pytorch",

"roberta",

"question-answering",

"transformers",

"generated_from_trainer",

"autotrain_compatible"

]

| question-answering | {

"architectures": [

"RobertaForQuestionAnswering"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 8 | 2022-11-01T03:39:16Z | ---

language: en

thumbnail: http://www.huggingtweets.com/fienddddddd/1667274315870/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1429983882741489668/TQAnTzje_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Golden Boy Noah</div>

<div style="text-align: center; font-size: 14px;">@fienddddddd</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Golden Boy Noah.

| Data | Golden Boy Noah |

| --- | --- |

| Tweets downloaded | 158 |

| Retweets | 30 |

| Short tweets | 12 |

| Tweets kept | 116 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/25q0d5x3/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @fienddddddd's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/3ob718th) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/3ob718th/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/fienddddddd')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[huggingtweets on GitHub](https://github.com/borisdayma/huggingtweets)

|

AyushPJ/test-squad-trained-finetuned-squad | [

"pytorch",

"tensorboard",

"distilbert",

"question-answering",

"dataset:squad",

"transformers",

"generated_from_trainer",

"autotrain_compatible"

]

| question-answering | {

"architectures": [

"DistilBertForQuestionAnswering"

],

"model_type": "distilbert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 8 | null | ---

language: en

thumbnail: http://www.huggingtweets.com/codeinecucumber-fienddddddd/1667275198553/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1579203041764442116/RSLookYD_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1429983882741489668/TQAnTzje_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI CYBORG 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Gutted & Golden Boy Noah</div>

<div style="text-align: center; font-size: 14px;">@codeinecucumber-fienddddddd</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Gutted & Golden Boy Noah.

| Data | Gutted | Golden Boy Noah |

| --- | --- | --- |

| Tweets downloaded | 1588 | 163 |

| Retweets | 234 | 30 |

| Short tweets | 298 | 12 |

| Tweets kept | 1056 | 121 |
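
The "Tweets kept" row appears to be what remains once retweets and short tweets are dropped. A minimal sketch of that arithmetic, assuming the filter is a plain subtraction (the function name `tweets_kept` is illustrative and not part of huggingtweets):

```python
def tweets_kept(downloaded: int, retweets: int, short: int) -> int:
    """Tweets remaining after removing retweets and short tweets."""
    return downloaded - retweets - short


# Counts copied from the table above: (downloaded, retweets, short tweets).
counts = {
    "Gutted": (1588, 234, 298),
    "Golden Boy Noah": (163, 30, 12),
}

for user, (downloaded, retweets, short) in counts.items():
    print(user, tweets_kept(downloaded, retweets, short))
```

Both columns of the table are consistent with this subtraction.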

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/1jm5zshq/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @codeinecucumber-fienddddddd's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/1wp79eh4) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/1wp79eh4/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/codeinecucumber-fienddddddd')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[huggingtweets on GitHub](https://github.com/borisdayma/huggingtweets)

|

BJTK2/model_name | []

| null | {

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 0 | 2022-11-01T05:59:36Z | ---

language: "en"

tags:

- style-transfer

- text2text-generation

- seq2seq

inference: true

---

# Formality Style Transfer

## Model description

T5 Model for Formality Style Transfer. Trained on the GYAFC dataset.

## How to use

PyTorch model available.

```python

import torch
from transformers import AutoTokenizer, AutoModelForSeq2SeqLM

# Use the GPU when one is available; otherwise fall back to the CPU.
device = "cuda" if torch.cuda.is_available() else "cpu"

tokenizer = AutoTokenizer.from_pretrained("Isotonic/informal_to_formal")
model = AutoModelForSeq2SeqLM.from_pretrained("Isotonic/informal_to_formal").to(device)

sentence = "will you look into these two deals and let me know"
text = "Make the following sentence Formal: " + sentence + " </s>"

# Tokenize the prompt and move the tensors to the same device as the model.
encoding = tokenizer(text, return_tensors="pt")
input_ids = encoding["input_ids"].to(device)
attention_mask = encoding["attention_mask"].to(device)

# Sample five candidate rewrites.
outputs = model.generate(
    input_ids=input_ids,
    attention_mask=attention_mask,
    max_length=256,
    do_sample=True,
    top_k=120,
    top_p=0.95,
    early_stopping=True,
    num_return_sequences=5,
)

for output in outputs:
    line = tokenizer.decode(output, skip_special_tokens=True, clean_up_tokenization_spaces=True)
    print(line)

# Example output: "Would you look into the two deals in question, then let me know?"

``` |

BSen/wav2vec2-base-timit-demo-colab | [

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"transformers",

"generated_from_trainer",

"license:apache-2.0"

]

| automatic-speech-recognition | {

"architectures": [

"Wav2Vec2ForCTC"

],

"model_type": "wav2vec2",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 4 | 2022-11-01T07:39:34Z | ---

tags:

- generated_from_trainer

datasets:

- common_voice

metrics:

- wer

model-index:

- name: outputs

results:

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: common_voice

type: common_voice

config: tr

split: train+validation

args: tr

metrics:

- name: Wer

type: wer

value: 0.35818608926565215

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# outputs

This model was trained from scratch on the common_voice dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3878

- Wer: 0.3582
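
For readers unfamiliar with the metric, word error rate (WER) is the word-level edit distance between a reference transcript and the model's transcript, divided by the number of reference words. The sketch below is a plain-Python illustration of that standard definition; the card does not say which implementation produced the figure above, and the function name `word_error_rate` is illustrative.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] is the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            ))
        prev = curr
    return prev[-1] / len(ref)


print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

A WER of 1.0 therefore means that the number of word errors equals the number of reference words, and lower is better.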

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 64

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 5

- num_epochs: 1.0

- mixed_precision_training: Native AMP
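
The `total_train_batch_size` above follows from the per-device batch size and the gradient accumulation steps. A small sketch of that relationship, assuming a single device (the card does not state the device count, and the helper name is illustrative):

```python
def total_train_batch_size(per_device_batch_size: int,
                           gradient_accumulation_steps: int,
                           num_devices: int = 1) -> int:
    """Number of examples contributing to each optimizer step."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


# Values from the hyperparameter list above; num_devices=1 is an assumption.
print(total_train_batch_size(64, 2))
```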

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 3.7391 | 0.92 | 100 | 3.5760 | 1.0 |

| 2.927 | 1.83 | 200 | 3.0796 | 0.9999 |

| 0.9009 | 2.75 | 300 | 0.9278 | 0.8226 |

| 0.6529 | 3.67 | 400 | 0.5926 | 0.6367 |

| 0.3623 | 4.59 | 500 | 0.5372 | 0.5692 |

| 0.2888 | 5.5 | 600 | 0.4407 | 0.4838 |

| 0.285 | 6.42 | 700 | 0.4341 | 0.4694 |

| 0.0842 | 7.34 | 800 | 0.4153 | 0.4302 |

| 0.1415 | 8.26 | 900 | 0.4317 | 0.4136 |

| 0.1552 | 9.17 | 1000 | 0.4145 | 0.4013 |

| 0.1184 | 10.09 | 1100 | 0.4115 | 0.3844 |

| 0.0556 | 11.01 | 1200 | 0.4182 | 0.3862 |

| 0.0851 | 11.93 | 1300 | 0.3985 | 0.3688 |

| 0.0961 | 12.84 | 1400 | 0.4030 | 0.3665 |

| 0.0596 | 13.76 | 1500 | 0.3880 | 0.3631 |

| 0.0917 | 14.68 | 1600 | 0.3878 | 0.3582 |

### Framework versions

- Transformers 4.25.0.dev0

- Pytorch 1.11.0+cu102

- Datasets 2.6.1

- Tokenizers 0.13.1

|

BSen/wav2vec2-large-xls-r-300m-turkish-colab | [

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"dataset:common_voice",

"transformers",

"generated_from_trainer",

"license:apache-2.0"

]

| automatic-speech-recognition | {

"architectures": [

"Wav2Vec2ForCTC"

],

"model_type": "wav2vec2",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 6 | null | ---

tags:

- generated_from_trainer

model-index:

- name: gpt2-gpt2-mc-weight0.25-epoch15-new

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# gpt2-gpt2-mc-weight0.25-epoch15-new

This model is a fine-tuned version of an unspecified base model on an unspecified dataset (the auto-generated card left both fields empty).

It achieves the following results on the evaluation set:

- Loss: 4.7276

- Cls loss: 3.0579

- Lm loss: 3.9626

- Cls Accuracy: 0.6110

- Cls F1: 0.6054

- Cls Precision: 0.6054

- Cls Recall: 0.6110

- Perplexity: 52.59
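
The reported perplexity is consistent with the exponential of the language-modeling loss. The sketch below checks that, and also checks a guess about the overall loss: the model name contains `mc-weight0.25`, so the code assumes the total is the LM loss plus 0.25 times the classification loss. That weighting is an inference from the name and the numbers, not something the card states, and both function names are illustrative.

```python
import math


def perplexity(lm_loss: float) -> float:
    """Perplexity as the exponential of the mean cross-entropy loss."""
    return math.exp(lm_loss)


def combined_loss(lm_loss: float, cls_loss: float, cls_weight: float = 0.25) -> float:
    """Assumed multi-task objective: LM loss plus a weighted classification loss."""
    return lm_loss + cls_weight * cls_loss


# Values from the evaluation results above.
print(round(perplexity(3.9626), 2))
print(round(combined_loss(3.9626, 3.0579), 4))
```

Under that assumption the combined value lands within about 0.001 of the reported loss; the small gap could come from rounding or per-batch averaging.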

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 15

### Training results

| Training Loss | Epoch | Step | Validation Loss | Cls loss | Lm loss | Cls Accuracy | Cls F1 | Cls Precision | Cls Recall | Perplexity |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|:-------:|:------------:|:------:|:-------------:|:----------:|:----------:|

| 4.674 | 1.0 | 3470 | 4.4372 | 1.5961 | 4.0380 | 0.5487 | 0.5279 | 0.5643 | 0.5487 | 56.71 |

| 4.3809 | 2.0 | 6940 | 4.3629 | 1.4483 | 4.0006 | 0.6023 | 0.5950 | 0.6174 | 0.6023 | 54.63 |

| 4.2522 | 3.0 | 10410 | 4.3721 | 1.5476 | 3.9849 | 0.6012 | 0.5981 | 0.6186 | 0.6012 | 53.78 |

| 4.1478 | 4.0 | 13880 | 4.3892 | 1.6429 | 3.9782 | 0.6081 | 0.6019 | 0.6128 | 0.6081 | 53.42 |

| 4.0491 | 5.0 | 17350 | 4.4182 | 1.8093 | 3.9656 | 0.6156 | 0.6091 | 0.6163 | 0.6156 | 52.75 |

| 3.9624 | 6.0 | 20820 | 4.4757 | 2.0348 | 3.9666 | 0.6121 | 0.6048 | 0.6189 | 0.6121 | 52.81 |

| 3.8954 | 7.0 | 24290 | 4.4969 | 2.1327 | 3.9634 | 0.6092 | 0.6028 | 0.6087 | 0.6092 | 52.64 |

| 3.846 | 8.0 | 27760 | 4.5632 | 2.4063 | 3.9613 | 0.6017 | 0.5972 | 0.6014 | 0.6017 | 52.52 |

| 3.8036 | 9.0 | 31230 | 4.6068 | 2.5888 | 3.9592 | 0.6052 | 0.5988 | 0.6026 | 0.6052 | 52.41 |

| 3.7724 | 10.0 | 34700 | 4.6175 | 2.6197 | 3.9621 | 0.6052 | 0.6006 | 0.6009 | 0.6052 | 52.57 |

| 3.7484 | 11.0 | 38170 | 4.6745 | 2.8470 | 3.9622 | 0.6046 | 0.5996 | 0.6034 | 0.6046 | 52.57 |

| 3.7291 | 12.0 | 41640 | 4.6854 | 2.8950 | 3.9611 | 0.6110 | 0.6056 | 0.6049 | 0.6110 | 52.52 |

| 3.7148 | 13.0 | 45110 | 4.7103 | 2.9919 | 3.9618 | 0.6063 | 0.6002 | 0.6029 | 0.6063 | 52.55 |

| 3.703 | 14.0 | 48580 | 4.7226 | 3.0417 | 3.9616 | 0.6081 | 0.6027 | 0.6021 | 0.6081 | 52.54 |

| 3.6968 | 15.0 | 52050 | 4.7276 | 3.0579 | 3.9626 | 0.6110 | 0.6054 | 0.6054 | 0.6110 | 52.59 |

### Framework versions

- Transformers 4.21.2

- Pytorch 1.12.1

- Datasets 2.4.0

- Tokenizers 0.12.1 |

Bagus/wav2vec2-xlsr-greek-speech-emotion-recognition | [

"pytorch",

"tensorboard",

"wav2vec2",

"el",

"dataset:aesdd",

"transformers",

"audio",

"audio-classification",

"speech",

"license:apache-2.0"

]

| audio-classification | {

"architectures": [

"Wav2Vec2ForSpeechClassification"

],

"model_type": "wav2vec2",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 21 | null | ---

language:

- tr

license: apache-2.0

tags:

- automatic-speech-recognition

- common_voice

- generated_from_trainer

datasets:

- common_voice

model-index:

- name: wav2vec2-common_voice-tr-demo

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-common_voice-tr-demo

This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the COMMON_VOICE - TR dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5682

- Wer: 0.5739

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 64

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 15.0

- mixed_precision_training: Native AMP
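
A `linear` scheduler with warmup typically ramps the learning rate from zero to its peak over the warmup steps and then decays it linearly to zero. The function below is a plain-Python sketch of that shape, not the Trainer's own code; `total_steps=2000` is a hypothetical value chosen only for illustration.

```python
def linear_schedule_lr(step: int, base_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Learning rate at `step` for linear warmup followed by linear decay."""
    if step < warmup_steps:
        # Warmup: scale linearly from 0 up to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay: scale linearly from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# learning_rate and warmup steps come from the list above; total_steps is hypothetical.
for step in (0, 250, 500, 1250, 2000):
    print(step, linear_schedule_lr(step, base_lr=0.0003, warmup_steps=500, total_steps=2000))
```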

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| No log | 3.69 | 100 | 3.5365 | 1.0 |

| No log | 7.4 | 200 | 2.9341 | 0.9999 |

| No log | 11.11 | 300 | 0.6994 | 0.6841 |

| No log | 14.8 | 400 | 0.5623 | 0.5792 |

### Framework versions

- Transformers 4.23.1

- Pytorch 1.11.0+cu113

- Datasets 2.6.1

- Tokenizers 0.12.1

|

BatuhanYilmaz/mlm-finetuned-imdb | []

| null | {

"architectures": null,

"model_type": null,

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 0 | null | ---

language: en

thumbnail: https://github.com/borisdayma/huggingtweets/blob/master/img/logo.png?raw=true

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1586447193900204035/fLZqjQLG_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">MIGUEL GARGALLO ⚪️</div>

<div style="text-align: center; font-size: 14px;">@miguelgargallo</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from MIGUEL GARGALLO ⚪️.

| Data | MIGUEL GARGALLO ⚪️ |

| --- | --- |

| Tweets downloaded | 3242 |

| Retweets | 1884 |

| Short tweets | 231 |

| Tweets kept | 1127 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/1ybplkw5/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @miguelgargallo's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/5p9jhuq5) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/5p9jhuq5/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

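```python
from transformers import pipeline

# Load the fine-tuned model into a text-generation pipeline
generator = pipeline('text-generation',
                     model='miguelgargallo/huggingtweets')

# Generate five continuations of the prompt
generator("My dream is", num_return_sequences=5)
```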

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the [project repository](https://github.com/borisdayma/huggingtweets).

|

BeIR/query-gen-msmarco-t5-large-v1 | [

"pytorch",

"jax",

"t5",

"text2text-generation",

"transformers",

"autotrain_compatible"

]

| text2text-generation | {

"architectures": [

"T5ForConditionalGeneration"

],

"model_type": "t5",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": true,

"length_penalty": 2,

"max_length": 200,

"min_length": 30,

"no_repeat_ngram_size": 3,

"num_beams": 4,

"prefix": "summarize: "

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": true,

"max_length": 300,

"num_beams": 4,

"prefix": "translate English to German: "

},

"translation_en_to_fr": {

"early_stopping": true,

"max_length": 300,

"num_beams": 4,

"prefix": "translate English to French: "

},

"translation_en_to_ro": {

"early_stopping": true,

"max_length": 300,

"num_beams": 4,

"prefix": "translate English to Romanian: "

}

}

} | 1,225 | null | ---

license: other

tags:

- stable-diffusion

- text-to-image

---

# Currently being edited. Model files are already available.

---

# ProjectTurn8

<img src="https://i.imgur.com/WiS93wx.png" width="1000" height="">

●What is this?

This repository publishes a variety of merge models that turned out well.

●How to use

Put the downloaded model file into `stable-diffusion-webui\models\Stable-diffusion`.

Using the `bad_prompt_version2` Textual Inversion embedding is recommended, although it may change the painting style significantly.
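The installation step can also be scripted. The sketch below copies a downloaded checkpoint into the web UI's model folder. The function name `install_checkpoint` and the file name in the usage note are hypothetical, and the folder layout is assumed to match the path given above.

```python
import shutil
from pathlib import Path


def install_checkpoint(checkpoint: Path, webui_root: Path) -> Path:
    """Copy a downloaded checkpoint into the web UI's model folder.

    Assumes the layout <webui_root>/models/Stable-diffusion described above.
    """
    target_dir = webui_root / "models" / "Stable-diffusion"
    target_dir.mkdir(parents=True, exist_ok=True)  # create the folder if it is missing
    target = target_dir / checkpoint.name
    shutil.copy2(checkpoint, target)  # copy the file along with its metadata
    return target
```

For example, `install_checkpoint(Path("Downloads/model.safetensors"), Path("stable-diffusion-webui"))` would place a (hypothetically named) `model.safetensors` where the web UI looks for checkpoints.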

●Comparison of the published models

<img src="https://i.imgur.com/j1lmHAQ.jpg" width="1000" height="">

```text

straw hat, (white sundress:1.2), 1 girl,loli, standing, blond hair, yellow eyes, medium breasts, outdoor, beach, cowboy shot, from outside, looking at viewer, perfect anatomy, sunlight

Negative prompt: (worst quality:1.4), (low quality:1.4),(monochrome:1.1),(bad_prompt:0.5),(swimsuit:1.2)

Steps: 36, Sampler: DPM++ SDE Karras, CFG scale: 6.5, Seed: 123, Size: 512x768, Model hash: 40ab3495, Eta: 0.67, Clip skip: 2, ENSD: 31337

```
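The last line of the block above is a comma-separated list of `Key: value` settings. If those values need to be reused programmatically, a small parser along the following lines may help. It is only a sketch: `parse_settings` is a hypothetical helper, not part of any web UI API, and it assumes that no value contains a comma.

```python
def parse_settings(line: str) -> dict[str, str]:
    """Split a 'Key: value, Key: value' settings line into a dictionary."""
    settings = {}
    for field in line.split(","):
        # Split on the first colon only; assumes values contain no commas.
        key, _, value = field.partition(":")
        settings[key.strip()] = value.strip()
    return settings


line = ("Steps: 36, Sampler: DPM++ SDE Karras, CFG scale: 6.5, Seed: 123, "
        "Size: 512x768, Model hash: 40ab3495, Eta: 0.67, Clip skip: 2, ENSD: 31337")
settings = parse_settings(line)
print(settings["Sampler"], settings["CFG scale"], settings["Size"])
# DPM++ SDE Karras 6.5 512x768
```

All values are kept as strings; convert them (for example `float(settings["CFG scale"])`) where a numeric type is needed.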

----

## ProjectTurn8-Jupiter

●What is this?

This model is a merge of Stella and basil_mix using the extension sdweb-merge-block-weighted-gui.

Compared to Earth, skin and clothing textures are more realistic.

However, this look is lost unless Hires. fix is enabled.

●Recommended setting

CFG Scale : 9±3

Clip skip : 2

Hires. fix : Upscale by 2

●Samples

<img src="https://i.imgur.com/zIn6hkC.png" width="400" height="">

----

## ProjectTurn8-Stella

<img src="https://i.imgur.com/qUTbReP.png" width="1000" height="">

●What is this?

This is a merged model based on anything+everything ver2.

It is mainly suited to drawing cute 2D girls.

The other models are generally built on this one.

●Recommended setting

CFG Scale : 8±3

Clip skip : 2

----

## ProjectTurn8-Earth

<img src="https://i.imgur.com/efIyvTu.png" width="1000" height="">

●What is this?

This model was created using the extension sdweb-merge-block-weighted-gui.

It can produce more realistic illustrations than Stella.

●Recommended setting

CFG Scale : 6±1

Sampling method : DPM++ SDE Karras

----

## ProjectTurn8-Luna

<img src="https://i.imgur.com/pnVSdat.png" width="1000" height="">

●What is this?

This model is a cross between Earth and Stella.

●Recommended setting

CFG Scale : 6±1

Sampling method : DPM++ SDE Karras

|

BeIR/sparta-msmarco-distilbert-base-v1 | [

"pytorch",

"distilbert",

"feature-extraction",

"arxiv:2009.13013",

"arxiv:2104.08663",

"transformers"

]

| feature-extraction | {

"architectures": [

"DistilBertModel"

],

"model_type": "distilbert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 106 | 2022-11-01T11:41:26Z | ---

tags:

- conversational

---

# Melody DialoGPT Model |

BearThreat/distilbert-base-uncased-finetuned-cola | [

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"dataset:glue",

"transformers",

"generated_from_trainer",

"license:apache-2.0",

"model-index"

]

| text-classification | {

"architectures": [

"DistilBertForSequenceClassification"

],

"model_type": "distilbert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,